pdf_oxide

The fastest PDF library for Python and Rust. Text extraction, image extraction, Markdown conversion, PDF creation and editing. 0.8ms mean per document, 5× faster than PyMuPDF, 100% pass rate on 3,830 PDFs. MIT/Apache-2.0.

Stars: 97

PDF Oxide is a fast PDF library for Python and Rust that offers text extraction, image extraction, Markdown conversion, and PDF creation and editing. It is built on a Rust core with Python bindings and reports a mean processing time of 0.8ms per document, 5× faster than PyMuPDF and 15× faster than pypdf, with a 100% pass rate on 3,830 real-world PDFs. PDF Oxide is dual-licensed under MIT or Apache-2.0, so it can be used freely in both commercial and open-source projects.

README:

The fastest Python PDF library for text extraction, image extraction, and Markdown conversion. Built on a Rust core: 0.8ms mean per document, 5× faster than PyMuPDF, 15× faster than pypdf. 100% pass rate on 3,830 real-world PDFs. MIT / Apache-2.0 licensed.

from pdf_oxide import PdfDocument

doc = PdfDocument("paper.pdf")
text = doc.extract_text(0)
chars = doc.extract_chars(0)
markdown = doc.to_markdown(0, detect_headings=True)

pip install pdf_oxide

use pdf_oxide::PdfDocument;

let mut doc = PdfDocument::open("paper.pdf")?;
let text = doc.extract_text(0)?;
let images = doc.extract_images(0)?;
let markdown = doc.to_markdown(0, Default::default())?;

[dependencies]
pdf_oxide = "0.3"

- Fast: 0.8ms mean per document, 5× faster than PyMuPDF, 15× faster than pypdf, 29× faster than pdfplumber
- Reliable: 100% pass rate on 3,830 test PDFs, zero panics, zero timeouts
- Complete: text extraction, image extraction, PDF creation, and editing in one library
- Dual-language: first-class Rust API and Python bindings via PyO3
- Permissive license: MIT / Apache-2.0, free to use in commercial and open-source projects

Benchmarked on 3,830 PDFs from three independent public test suites (veraPDF, Mozilla pdf.js, DARPA SafeDocs). Text extraction libraries only (no OCR). Single-thread, 60s timeout, no warm-up.

| Library | Mean | p99 | Pass Rate | License |
|---|---|---|---|---|
| PDF Oxide | 0.8ms | 9ms | 100% | MIT |
| PyMuPDF | 4.6ms | 28ms | 99.3% | AGPL-3.0 |
| pypdfium2 | 4.1ms | 42ms | 99.2% | Apache-2.0 |
| pymupdf4llm | 55.5ms | 280ms | 99.1% | AGPL-3.0 |
| pdftext | 7.3ms | 82ms | 99.0% | GPL-3.0 |
| pdfminer | 16.8ms | 124ms | 98.8% | MIT |
| pdfplumber | 23.2ms | 189ms | 98.8% | MIT |
| markitdown | 108.8ms | 378ms | 98.6% | MIT |
| pypdf | 12.1ms | 97ms | 98.4% | BSD-3 |
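The speed multipliers quoted earlier can be reproduced by dividing each library's mean time by PDF Oxide's mean. The sketch below uses only the Mean column from the table above; the names `MEAN_MS` and `speedup_over` are illustrative and not part of pdf_oxide.

```python
# Mean per-document times in milliseconds, copied from the table above.
MEAN_MS = {
    "PDF Oxide": 0.8,
    "PyMuPDF": 4.6,
    "pypdfium2": 4.1,
    "pymupdf4llm": 55.5,
    "pdftext": 7.3,
    "pdfminer": 16.8,
    "pdfplumber": 23.2,
    "markitdown": 108.8,
    "pypdf": 12.1,
}


def speedup_over(baseline, means):
    """Return, for every other library, its mean time divided by the baseline's."""
    base = means[baseline]
    return {name: ms / base for name, ms in means.items() if name != baseline}


if __name__ == "__main__":
    ratios = speedup_over("PDF Oxide", MEAN_MS)
    # Print from the smallest gap to the largest.
    for name, ratio in sorted(ratios.items(), key=lambda item: item[1]):
        print(f"{name}: about {ratio:.1f}x the mean time of PDF Oxide")
```

PyMuPDF comes out at roughly 5.75, pypdf at roughly 15.1, and pdfplumber at roughly 29, which is in line with the 5×, 15×, and 29× figures quoted above. These ratios compare means only; the p99 column gives a different picture of tail latency.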

| Library | Mean | p99 | Pass Rate | Text Extraction |
|---|---|---|---|---|
| PDF Oxide | 0.8ms | 9ms | 100% | Built-in |
| oxidize_pdf | 13.5ms | 11ms | 99.1% | Basic |
| unpdf | 2.8ms | 10ms | 95.1% | Basic |
| pdf_extract | 4.08ms | 37ms | 91.5% | Basic |
| lopdf | 0.3ms | 2ms | 80.2% | No built-in extraction |

99.5% text parity with PyMuPDF and pypdfium2 across the full corpus. Against each competitor, the "hard" files that PDF Oxide extracts and the competitor does not outnumber the reverse case by 7–10×.

| Suite | PDFs | Pass Rate |
|---|---|---|
| veraPDF (PDF/A compliance) | 2,907 | 100% |
| Mozilla pdf.js | 897 | 99.2% |
| SafeDocs (targeted edge cases) | 26 | 100% |
| Total | 3,830 | 100% |

100% pass rate on all valid PDFs — the 7 non-passing files across the corpus are intentionally broken test fixtures (missing PDF header, fuzz-corrupted catalogs, invalid xref streams).
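The per-suite and total figures can be checked with a few lines of arithmetic. The sketch below assumes that all 7 non-passing files belong to the Mozilla pdf.js suite, since that is the only suite reported below 100%; the README does not state the per-suite failure counts, and the names used here are illustrative.

```python
# (total PDFs, non-passing PDFs) per suite.
# Totals come from the table above; placing all 7 failures in the
# pdf.js suite is an assumption, not something the README states.
SUITES = {
    "veraPDF": (2907, 0),
    "Mozilla pdf.js": (897, 7),
    "SafeDocs": (26, 0),
}


def pass_rate(total, failed):
    """Percentage of files that passed."""
    return 100.0 * (total - failed) / total


def corpus_totals(suites):
    """Return (total files, non-passing files) across all suites."""
    total = sum(count for count, _ in suites.values())
    failed = sum(fails for _, fails in suites.values())
    return total, failed


if __name__ == "__main__":
    for name, (count, fails) in SUITES.items():
        print(f"{name}: {pass_rate(count, fails):.1f}%")
    total, failed = corpus_totals(SUITES)
    print(f"All files: {pass_rate(total, failed):.1f}% of {total}")
    # Dropping the intentionally broken fixtures leaves only valid PDFs.
    print(f"Valid files only: {pass_rate(total - failed, 0):.1f}% of {total - failed}")
```

Under that assumption the pdf.js suite works out to 99.2%, the raw corpus to 99.8%, and the valid-only corpus to 100%, which is the sense in which the Total row reports 100%.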

| Extract | Create | Edit |
|---|---|---|
| Text & Layout | Documents | Annotations |
| Images | Tables | Form Fields |
| Forms | Graphics | Bookmarks |
| Annotations | Templates | Links |
| Bookmarks | Images | Content |

from pdf_oxide import PdfDocument

doc = PdfDocument("report.pdf")
print(f"Pages: {doc.page_count}")
print(f"Version: {doc.version}")

# Extract text from each page
for i in range(doc.page_count):
    text = doc.extract_text(i)
    print(f"Page {i}: {len(text)} chars")

# Character-level extraction with positions
chars = doc.extract_chars(0)
for ch in chars:
    print(f"'{ch.char}' at ({ch.x:.1f}, {ch.y:.1f})")

# Password-protected PDFs
doc = PdfDocument("encrypted.pdf")
doc.authenticate("password")
text = doc.extract_text(0)

use pdf_oxide::PdfDocument;

fn main() -> Result<(), Box<dyn std::error::Error>> {
    let mut doc = PdfDocument::open("paper.pdf")?;

    // Extract text
    let text = doc.extract_text(0)?;

    // Character-level extraction
    let chars = doc.extract_chars(0)?;

    // Extract images
    let images = doc.extract_images(0)?;

    // Vector graphics
    let paths = doc.extract_paths(0)?;

    Ok(())
}

pip install pdf_oxide

Wheels available for Linux, macOS, and Windows. Python 3.8–3.14.

[dependencies]
pdf_oxide = "0.3"

# Clone and build
git clone https://github.com/yfedoseev/pdf_oxide
cd pdf_oxide
cargo build --release

# Run tests
cargo test

# Build Python bindings
maturin develop

- Getting Started (Rust) - Complete Rust guide

- Getting Started (Python) - Complete Python guide

- API Docs - Full Rust API reference

- Full Documentation - Complete documentation site

- Performance Benchmarks - Full benchmark methodology and results

- RAG / LLM pipelines — Convert PDFs to clean Markdown for retrieval-augmented generation with LangChain, LlamaIndex, or any framework

- Document processing at scale — Extract text, images, and metadata from thousands of PDFs in seconds

- Data extraction — Pull structured data from forms, tables, and layouts

- Academic research — Parse papers, extract citations, and process large corpora

- PDF generation — Create invoices, reports, certificates, and templated documents programmatically

- PyMuPDF alternative — MIT licensed, 5× faster, no AGPL restrictions
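For the RAG use case above, one possible pre-processing step is to convert each page to Markdown and pack paragraphs into size-limited chunks before embedding. The sketch below relies only on `page_count` and `to_markdown(index, detect_headings=True)` as shown earlier in this README; the chunking strategy, the function names, and `StubDocument` are illustrative assumptions and not part of pdf_oxide. Frameworks such as LangChain or LlamaIndex ship their own splitters, which may be a better fit.

```python
from typing import Iterator, List


def page_markdown(doc) -> Iterator[str]:
    """Yield Markdown for each page, using the calls shown in this README."""
    for index in range(doc.page_count):
        yield doc.to_markdown(index, detect_headings=True)


def chunk_paragraphs(markdown: str, max_chars: int = 1000) -> List[str]:
    """Greedily pack blank-line-separated paragraphs into chunks.

    A chunk is closed when adding the next paragraph would exceed max_chars.
    A single paragraph longer than max_chars is kept whole, not split.
    """
    chunks: List[str] = []
    current = ""
    for paragraph in markdown.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = paragraph
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


def document_chunks(doc, max_chars: int = 1000) -> List[str]:
    """Chunk every page of a document; chunks never span a page boundary."""
    chunks: List[str] = []
    for markdown in page_markdown(doc):
        chunks.extend(chunk_paragraphs(markdown, max_chars))
    return chunks


class StubDocument:
    """Hypothetical stand-in so the sketch runs without a PDF on disk."""

    def __init__(self, pages: List[str]):
        self._pages = pages
        self.page_count = len(pages)

    def to_markdown(self, index: int, detect_headings: bool = False) -> str:
        return self._pages[index]


if __name__ == "__main__":
    stub = StubDocument(
        ["# Title\n\nFirst paragraph.\n\nSecond paragraph.", "Page two."]
    )
    for chunk in document_chunks(stub, max_chars=30):
        print(repr(chunk))
```

With a real file, `StubDocument(...)` would be replaced by `PdfDocument("paper.pdf")` from `pdf_oxide`. Keeping chunks within page boundaries is a simplification; paragraphs that continue across pages would need extra handling.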

Dual-licensed under MIT or Apache-2.0 at your option. Unlike AGPL-licensed alternatives, pdf_oxide can be used freely in any project — commercial or open-source — with no copyleft restrictions.

We welcome contributions! See CONTRIBUTING.md for guidelines.

cargo build && cargo test && cargo fmt && cargo clippy -- -D warnings

@software{pdf_oxide,
  title = {PDF Oxide: Fast PDF Toolkit for Rust and Python},
  author = {Yury Fedoseev},
  year = {2025},
  url = {https://github.com/yfedoseev/pdf_oxide}
}

Rust + Python | MIT/Apache-2.0 | 100% pass rate on 3,830 PDFs | 0.8ms mean | 5× faster than PyMuPDF | v0.3.9

Alternative AI tools for pdf_oxide

Similar Open Source Tools

Open-dLLM

Open-dLLM is the most open release of a diffusion-based large language model, providing pretraining, evaluation, inference, and checkpoints. It introduces Open-dCoder, the code-generation variant of Open-dLLM. The repo offers a complete stack for diffusion LLMs, enabling users to go from raw data to training, checkpoints, evaluation, and inference in one place. It includes a pretraining pipeline with open datasets, inference scripts for easy sampling and generation, an evaluation suite with various metrics, weights and checkpoints on Hugging Face, and transparent configs for full reproducibility.

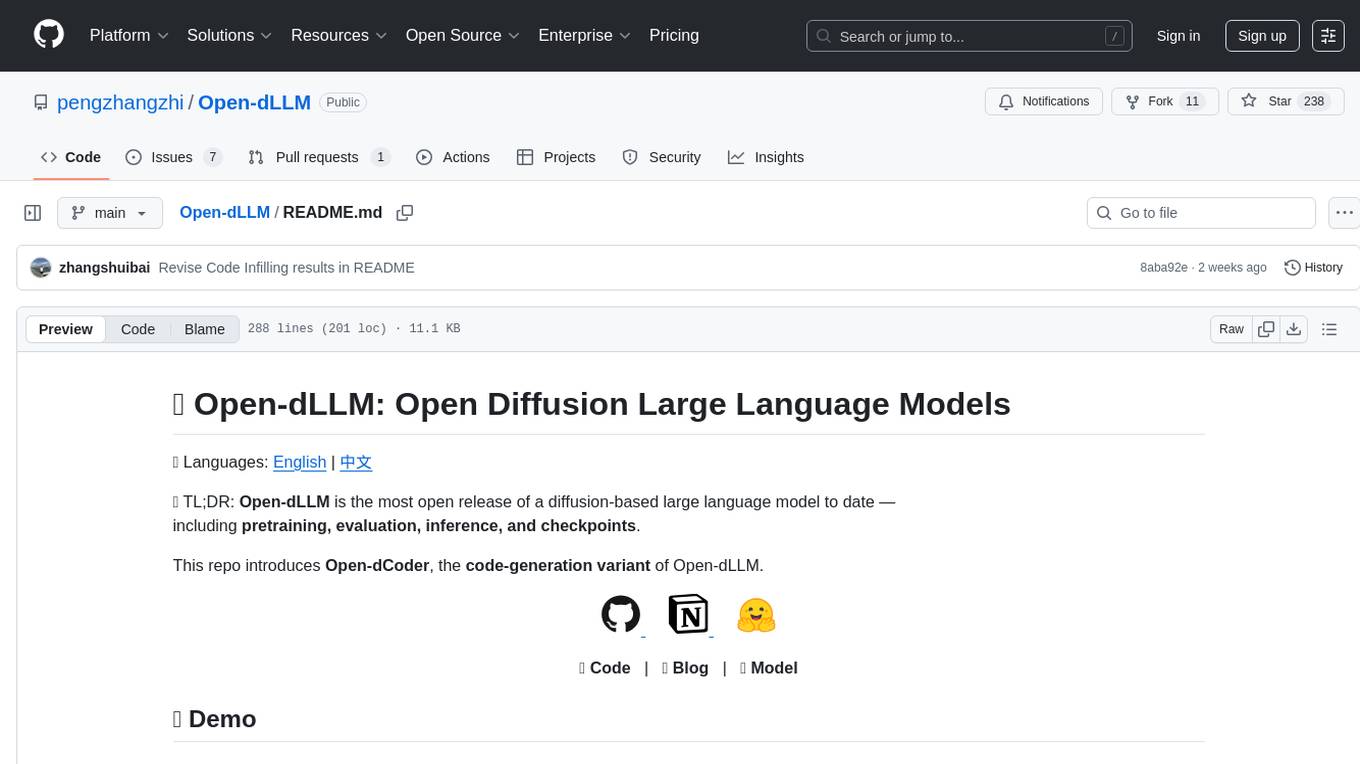

DeepRetrieval

DeepRetrieval is a tool designed to enhance search engines and retrievers using Large Language Models (LLMs) and Reinforcement Learning (RL). It allows LLMs to learn how to search effectively by integrating with search engine APIs and customizing reward functions. The tool provides functionalities for data preparation, training, evaluation, and monitoring search performance. DeepRetrieval aims to improve information retrieval tasks by leveraging advanced AI techniques.

ReGraph

ReGraph is a decentralized AI compute marketplace that connects hardware providers with developers who need inference and training resources. It democratizes access to AI computing power by creating a global network of distributed compute nodes. It is cost-effective, decentralized, easy to integrate, supports multiple models, and offers pay-as-you-go pricing.

Athena-Public

Project Athena is a Linux OS designed for AI Agents, providing memory, persistence, scheduling, and governance for AI models. It offers a comprehensive memory layer that survives across sessions, models, and IDEs, allowing users to own their data and port it anywhere. The system is built bottom-up through 1,079+ sessions, focusing on depth and compounding knowledge. Athena features a trilateral feedback loop for cross-model validation, a Model Context Protocol server with 9 tools, and a robust security model with data residency options. The repository structure includes an SDK package, examples for quickstart, scripts, protocols, workflows, and deep documentation. Key concepts cover architecture, knowledge graph, semantic memory, and adaptive latency. Workflows include booting, reasoning modes, planning, research, and iteration. The project has seen significant content expansion, viral validation, and metrics improvements.

EVE

EVE is an official PyTorch implementation of Unveiling Encoder-Free Vision-Language Models. The project aims to explore the removal of vision encoders from Vision-Language Models (VLMs) and transfer LLMs to encoder-free VLMs efficiently. It also focuses on bridging the performance gap between encoder-free and encoder-based VLMs. EVE offers a superior capability with arbitrary image aspect ratio, data efficiency by utilizing publicly available data for pre-training, and training efficiency with a transparent and practical strategy for developing a pure decoder-only architecture across modalities.

neurolink

NeuroLink is an Enterprise AI SDK for Production Applications that serves as a universal AI integration platform unifying 13 major AI providers and 100+ models under one consistent API. It offers production-ready tooling, including a TypeScript SDK and a professional CLI, for teams to quickly build, operate, and iterate on AI features. NeuroLink enables switching providers with a single parameter change, provides 64+ built-in tools and MCP servers, supports enterprise features like Redis memory and multi-provider failover, and optimizes costs automatically with intelligent routing. It is designed for the future of AI with edge-first execution and continuous streaming architectures.

nncase

nncase is a neural network compiler for AI accelerators that supports multiple inputs and outputs, static memory allocation, operators fusion and optimizations, float and quantized uint8 inference, post quantization from float model with calibration dataset, and flat model with zero copy loading. It can be installed via pip and supports TFLite, Caffe, and ONNX ops. Users can compile nncase from source using Ninja or make. The tool is suitable for tasks like image classification, object detection, image segmentation, pose estimation, and more.

ai-dev-kit

The AI Dev Kit is a comprehensive toolkit designed to enhance AI-driven development on Databricks. It provides trusted sources for AI coding assistants like Claude Code and Cursor to build faster and smarter on Databricks. The kit includes features such as Spark Declarative Pipelines, Databricks Jobs, AI/BI Dashboards, Unity Catalog, Genie Spaces, Knowledge Assistants, MLflow Experiments, Model Serving, Databricks Apps, and more. Users can choose from different adventures like installing the kit, using the visual builder app, teaching AI assistants Databricks patterns, executing Databricks actions, or building custom integrations with the core library. The kit also includes components like databricks-tools-core, databricks-mcp-server, databricks-skills, databricks-builder-app, and ai-dev-project.

microgpt-c

MicroGPT-C is a project that focuses on tiny specialist models working together to outperform monoliths on specific tasks. It is a C port of a Python GPT model, rewritten in pure C99 with zero dependencies. The project explores the concept of coordinated intelligence through 'organelles' that differentiate based on training data, resulting in improved performance across logic games and real-world data experiments.

paiml-mcp-agent-toolkit

PAIML MCP Agent Toolkit (PMAT) is a zero-configuration AI context generation system with extreme quality enforcement and Toyota Way standards. It allows users to analyze any codebase instantly through CLI, MCP, or HTTP interfaces. The toolkit provides features such as technical debt analysis, advanced monitoring, metrics aggregation, performance profiling, bottleneck detection, alert system, multi-format export, storage flexibility, and more. It also offers AI-powered intelligence for smart recommendations, polyglot analysis, repository showcase, and integration points. PMAT enforces quality standards like complexity ≤20, zero SATD comments, test coverage >80%, no lint warnings, and synchronized documentation with commits. The toolkit follows Toyota Way development principles for iterative improvement, direct AST traversal, automated quality gates, and zero SATD policy.

Botright

Botright is a tool designed for browser automation that focuses on stealth and captcha solving. It uses a real Chromium-based browser for enhanced stealth and offers features like browser fingerprinting and AI-powered captcha solving. The tool is suitable for developers looking to automate browser tasks while maintaining anonymity and bypassing captchas. Botright is available in async mode and can be easily integrated with existing Playwright code. It provides solutions for various captchas such as hCaptcha, reCaptcha, and GeeTest, with high success rates. Additionally, Botright offers browser stealth techniques and supports different browser functionalities for seamless automation.

rag-web-ui

RAG Web UI is an intelligent dialogue system based on RAG (Retrieval-Augmented Generation) technology. It helps enterprises and individuals build intelligent Q&A systems based on their own knowledge bases. By combining document retrieval and large language models, it delivers accurate and reliable knowledge-based question-answering services. The system is designed with features like intelligent document management, advanced dialogue engine, and a robust architecture. It supports multiple document formats, async document processing, multi-turn contextual dialogue, and reference citations in conversations. The architecture includes a backend stack with Python FastAPI, MySQL + ChromaDB, MinIO, Langchain, JWT + OAuth2 for authentication, and a frontend stack with Next.js, TypeScript, Tailwind CSS, Shadcn/UI, and Vercel AI SDK for AI integration. Performance optimization includes incremental document processing, streaming responses, vector database performance tuning, and distributed task processing. The project is licensed under the Apache-2.0 License and is intended for learning and sharing RAG knowledge only, not for commercial purposes.

spark-nlp

Spark NLP is a state-of-the-art Natural Language Processing library built on top of Apache Spark. It provides simple, performant, and accurate NLP annotations for machine learning pipelines that scale easily in a distributed environment. Spark NLP comes with 36,000+ pretrained pipelines and models in more than 200 languages. It offers tasks such as Tokenization, Word Segmentation, Part-of-Speech Tagging, Named Entity Recognition, Dependency Parsing, Spell Checking, Text Classification, Sentiment Analysis, Token Classification, Machine Translation, Summarization, Question Answering, Table Question Answering, Text Generation, Image Classification, Image to Text (captioning), Automatic Speech Recognition, Zero-Shot Learning, and many more NLP tasks. Spark NLP is the only open-source NLP library in production that offers state-of-the-art transformers such as BERT, CamemBERT, ALBERT, ELECTRA, XLNet, DistilBERT, RoBERTa, DeBERTa, XLM-RoBERTa, Longformer, ELMO, Universal Sentence Encoder, Llama-2, M2M100, BART, Instructor, E5, Google T5, MarianMT, OpenAI GPT2, Vision Transformers (ViT), OpenAI Whisper, and many more, not only to Python and R but also to the JVM ecosystem (Java, Scala, and Kotlin), at scale by extending Apache Spark natively.

MooER

MooER (摩耳) is an LLM-based speech recognition and translation model developed by Moore Threads. It allows users to transcribe speech into text (ASR) and translate speech into other languages (AST) in an end-to-end manner. The model was trained using 5K hours of data and is now also available with an 80K hours version. MooER is the first LLM-based speech model trained and inferred using domestic GPUs. The repository includes pretrained models, inference code, and a Gradio demo for a better user experience.

Liger-Kernel

Liger Kernel is a collection of Triton kernels designed for LLM training, increasing training throughput by 20% and reducing memory usage by 60%. It includes Hugging Face Compatible modules like RMSNorm, RoPE, SwiGLU, CrossEntropy, and FusedLinearCrossEntropy. The tool works with Flash Attention, PyTorch FSDP, and Microsoft DeepSpeed, aiming to enhance model efficiency and performance for researchers, ML practitioners, and curious novices.

For similar tasks

firecrawl

Firecrawl is an API service that takes a URL, crawls it, and converts it into clean markdown. It crawls all accessible subpages and provides clean markdown for each, without requiring a sitemap. The API is easy to use and can be self-hosted. It also integrates with Langchain and Llama Index. The Python SDK makes it easy to crawl and scrape websites in Python code.

sosumi.ai

sosumi.ai provides Apple Developer documentation in an AI-readable format by converting JavaScript-rendered pages into Markdown. It offers an HTTP API to access Apple docs, supports external Swift-DocC sites, integrates with MCP server, and provides tools like searchAppleDocumentation and fetchAppleDocumentation. The project can be self-hosted and is currently hosted on Cloudflare Workers. It is built with Hono and supports various runtimes. The application is designed for accessibility-first, on-demand rendering of Apple Developer pages to Markdown.

doc-scraper

A configurable, concurrent, and resumable web crawler written in Go, specifically designed to scrape technical documentation websites, extract core content, convert it cleanly to Markdown format suitable for ingestion by Large Language Models (LLMs), and save the results locally. The tool is built for LLM training and RAG systems, preserving documentation structure, offering production-ready features like resumable crawls and rate limiting, and using Go's concurrency model for efficient parallel processing. It automates the process of gathering and cleaning web-based documentation for use with Large Language Models, providing a dataset that is text-focused, structured, cleaned, and locally accessible.

awesome-llm-web-ui

Curating the best Large Language Model (LLM) Web User Interfaces that facilitate interaction with powerful AI models. Explore and catalogue intuitive, feature-rich, and innovative web interfaces for interacting with LLMs, ranging from simple chatbots to comprehensive platforms equipped with functionalities like PDF generation and web search.

imgcompress

ImgCompress is a versatile tool for offline image compression, conversion, and AI background removal for Docker homelabs. It supports over 70 image formats and provides a local AI background remover for privacy and speed. The tool aims to simplify tasks like converting PSD and HEIC files, creating PDFs from screenshots, and resizing images, all in one place without the need for multiple apps or online converters. Deployed via Docker, ImgCompress offers a clean and convenient solution for managing various image-related tasks.

markdrop

Markdrop is a Python package that facilitates the conversion of PDFs to markdown format while extracting images and tables. It also generates descriptive text descriptions for extracted tables and images using various LLM clients. The tool offers additional functionalities such as PDF URL support, AI-powered image and table descriptions, interactive HTML output with downloadable Excel tables, customizable image resolution and UI elements, and a comprehensive logging system. Markdrop aims to simplify the process of handling PDF documents and enhancing their content with AI-generated descriptions.

MegaParse

MegaParse is a powerful and versatile parser designed to handle various types of documents such as text, PDFs, Powerpoint presentations, and Word documents with no information loss. It is fast, efficient, and open source, supporting a wide range of file formats. MegaParse ensures compatibility with tables, table of contents, headers, footers, and images, making it a comprehensive solution for document parsing.

For similar jobs

Perplexica

Perplexica is an open-source AI-powered search engine that utilizes advanced machine learning algorithms to provide clear answers with sources cited. It offers various modes like Copilot Mode, Normal Mode, and Focus Modes for specific types of questions. Perplexica ensures up-to-date information by using the SearxNG metasearch engine. It also features image and video search capabilities. Upcoming work includes finalizing Copilot Mode and adding Discover and History Saving features.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

MMMU

MMMU is a benchmark designed to evaluate multimodal models on college-level subject knowledge tasks, covering 30 subjects and 183 subfields with 11.5K questions. It focuses on advanced perception and reasoning with domain-specific knowledge, challenging models to perform tasks akin to those faced by experts. The evaluation of various models highlights substantial challenges, with room for improvement to stimulate the community towards expert artificial general intelligence (AGI).

1filellm

1filellm is a command-line data aggregation tool designed for LLM ingestion. It aggregates and preprocesses data from various sources into a single text file, facilitating the creation of information-dense prompts for large language models. The tool supports automatic source type detection, handling of multiple file formats, web crawling functionality, integration with Sci-Hub for research paper downloads, text preprocessing, and token count reporting. Users can input local files, directories, GitHub repositories, pull requests, issues, ArXiv papers, YouTube transcripts, web pages, Sci-Hub papers via DOI or PMID. The tool provides uncompressed and compressed text outputs, with the uncompressed text automatically copied to the clipboard for easy pasting into LLMs.

gpt-researcher

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

ChatTTS

ChatTTS is a generative speech model optimized for dialogue scenarios, providing natural and expressive speech synthesis with fine-grained control over prosodic features. It supports multiple speakers and surpasses most open-source TTS models in terms of prosody. The model is trained with 100,000+ hours of Chinese and English audio data, and the open-source version on HuggingFace is a 40,000-hour pre-trained model without SFT. The roadmap includes open-sourcing additional features like VQ encoder, multi-emotion control, and streaming audio generation. The tool is intended for academic and research use only, with precautions taken to limit potential misuse.

HebTTS

HebTTS is a language-modeling approach to diacritic-free Hebrew text-to-speech (TTS). It addresses the challenge of accurately mapping text to speech in Hebrew by proposing a language model that operates on discrete speech representations and is conditioned on a word-piece tokenizer. The system is optimized using weakly supervised recordings and outperforms diacritic-based Hebrew TTS systems in terms of content preservation and naturalness of generated speech.

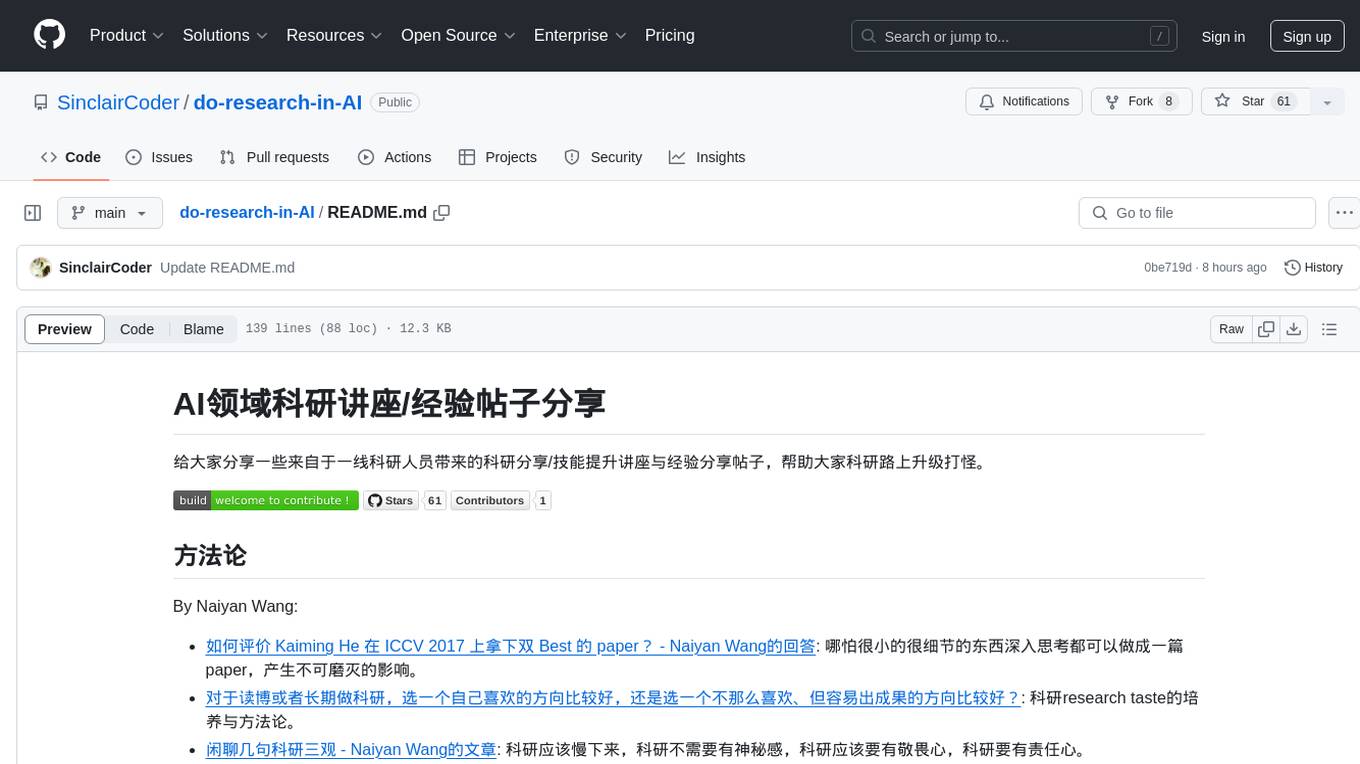

do-research-in-AI

This repository is a collection of research lectures and experience sharing posts from frontline researchers in the field of AI. It aims to help individuals upgrade their research skills and knowledge through insightful talks and experiences shared by experts. The content covers various topics such as evaluating research papers, choosing research directions, research methodologies, and tips for writing high-quality scientific papers. The repository also includes discussions on academic career paths, research ethics, and the emotional aspects of research work. Overall, it serves as a valuable resource for individuals interested in advancing their research capabilities in the field of AI.