rag-web-ui

Stars: 1963

RAG Web UI is an intelligent dialogue system based on RAG (Retrieval-Augmented Generation) technology. It helps enterprises and individuals build intelligent Q&A systems on top of their own knowledge bases. By combining document retrieval with large language models, it delivers accurate and reliable knowledge-based question answering. Its main features are intelligent document management, an advanced dialogue engine, and a robust architecture. It supports multiple document formats, async document processing, multi-turn contextual dialogue, and reference citations in conversations. The backend stack consists of Python FastAPI, MySQL + ChromaDB, MinIO, Langchain, and JWT + OAuth2 for authentication; the frontend stack consists of Next.js, TypeScript, Tailwind CSS, Shadcn/UI, and the Vercel AI SDK for AI integration. Performance optimizations include incremental document processing, streaming responses, vector database performance tuning, and distributed task processing. The project is licensed under the Apache-2.0 License and is intended for learning and sharing RAG knowledge only, not for commercial purposes.

README:

Knowledge Base Management Based on RAG (Retrieval-Augmented Generation)

RAG Web UI is an intelligent dialogue system based on RAG (Retrieval-Augmented Generation) technology that helps you build intelligent Q&A systems on top of your own knowledge base. By combining document retrieval with large language models, it provides accurate and reliable knowledge-based question answering.

The system supports multiple LLM deployment options, including cloud services like OpenAI and DeepSeek, as well as local model deployment through Ollama, meeting privacy and cost requirements in different scenarios.

It also provides OpenAPI interfaces for convenient knowledge base access via API calls.
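
The retrieve-then-generate idea described above can be illustrated with a minimal sketch. It is not the project's implementation: the `Chunk` type, the toy three-dimensional vectors, and the prompt wording are all assumptions made for illustration. It ranks stored chunks by cosine similarity to a query vector and assembles a numbered context so that an LLM could cite its sources.

```python
import math
from dataclasses import dataclass


@dataclass
class Chunk:
    """Hypothetical record for one stored text chunk."""
    doc_name: str        # source document, used for the citation
    text: str
    vector: list[float]  # precomputed embedding (toy values in this sketch)


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec: list[float], chunks: list[Chunk], top_k: int = 2) -> list[Chunk]:
    """Return the top_k chunks most similar to the query vector."""
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, c.vector), reverse=True)
    return ranked[:top_k]


def build_prompt(question: str, hits: list[Chunk]) -> str:
    """Number each retrieved chunk so the answer can reference it as [1], [2], ..."""
    context = "\n".join(
        f"[{i}] ({c.doc_name}) {c.text}" for i, c in enumerate(hits, start=1)
    )
    return (
        "Answer using only the context and cite sources.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


chunks = [
    Chunk("handbook.pdf", "Refunds are issued within 14 days.", [1.0, 0.0, 0.0]),
    Chunk("faq.md", "Support is available on weekdays.", [0.0, 1.0, 0.0]),
    Chunk("policy.docx", "Refund requests need a receipt.", [0.9, 0.1, 0.0]),
]
hits = retrieve([1.0, 0.0, 0.0], chunks, top_k=2)
prompt = build_prompt("How do refunds work?", hits)
```

In a real deployment the vectors would come from the configured embedding provider and the prompt would be sent to the configured LLM; both steps are left out here.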

- 📚 Intelligent Document Management
  - Support for multiple document formats (PDF, DOCX, Markdown, Text)
  - Automatic document chunking and vectorization
  - Support for async document processing and incremental updates

- 🤖 Advanced Dialogue Engine
  - Precise retrieval and generation based on RAG
  - Support for multi-turn contextual dialogue
  - Support for reference citations in conversations

- 🎯 Robust Architecture
  - Frontend-backend separation design
  - Distributed file storage
  - High-performance vector database: support for ChromaDB and Qdrant, with easy switching through the Factory pattern
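
The Factory pattern mentioned in the last item can be sketched as follows. This is an illustration under stated assumptions, not the project's code: the `VectorStore` interface, the class names, and `create_vector_store` are hypothetical, and the backends are in-memory stand-ins rather than real ChromaDB or Qdrant clients.

```python
from abc import ABC, abstractmethod


class VectorStore(ABC):
    """Hypothetical minimal interface shared by every backend in this sketch."""

    @abstractmethod
    def add(self, doc_id: str, vector: list[float]) -> None: ...

    @abstractmethod
    def count(self) -> int: ...


class InMemoryStore(VectorStore):
    """Stand-in backend; a real one would wrap the ChromaDB or Qdrant client."""

    def __init__(self, url: str) -> None:
        self.url = url
        self._vectors: dict[str, list[float]] = {}

    def add(self, doc_id: str, vector: list[float]) -> None:
        self._vectors[doc_id] = vector

    def count(self) -> int:
        return len(self._vectors)


class ChromaStore(InMemoryStore):
    """Placeholder for a ChromaDB-backed store."""


class QdrantStore(InMemoryStore):
    """Placeholder for a Qdrant-backed store."""


# Registry mapping a configuration value to the class that implements it.
_REGISTRY: dict[str, type[VectorStore]] = {
    "chroma": ChromaStore,
    "qdrant": QdrantStore,
}


def create_vector_store(store_type: str, url: str) -> VectorStore:
    """Build the backend named by store_type, or fail with a clear error."""
    try:
        cls = _REGISTRY[store_type.lower()]
    except KeyError:
        raise ValueError(f"Unsupported vector store type: {store_type!r}") from None
    return cls(url)


store = create_vector_store("qdrant", "http://localhost:6333")
store.add("doc-1", [0.1, 0.2])
```

Because callers depend only on the `VectorStore` interface, switching backends becomes a configuration change rather than a code change.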

Knowledge Base Management Dashboard

Document Processing Dashboard

Document List

Intelligent Chat Interface with References

API Key Management

API Reference

graph TB

%% Role Definitions

client["Caller/User"]

open_api["Open API"]

subgraph import_process["Document Ingestion Process"]

direction TB

%% File Storage and Document Processing Flow

docs["Document Input<br/>(PDF/MD/TXT/DOCX)"]

job_id["Return Job ID"]

nfs["NFS"]

subgraph async_process["Asynchronous Document Processing"]

direction TB

preprocess["Document Preprocessing<br/>(Text Extraction/Cleaning)"]

split["Text Splitting<br/>(Segmentation/Overlap)"]

subgraph embedding_process["Embedding Service"]

direction LR

embedding_api["Embedding API"] --> embedding_server["Embedding Server"]

end

store[(Vector Database)]

%% Internal Flow of Asynchronous Processing

preprocess --> split

split --> embedding_api

embedding_server --> store

end

subgraph job_query["Job Status Query"]

direction TB

job_status["Job Status<br/>(Processing/Completed/Failed)"]

end

end

%% Query Service Flow

subgraph query_process["Query Service"]

direction LR

user_history["User History"] --> query["User Query<br/>(Based on User History)"]

query --> query_embed["Query Embedding"]

query_embed --> retrieve["Vector Retrieval"]

retrieve --> rerank["Re-ranking<br/>(Cross-Encoder)"]

rerank --> context["Context Assembly"]

context --> llm["LLM Generation"]

llm --> response["Final Response"]

query -.-> rerank

end

%% Main Flow Connections

client --> |"1.Upload Document"| docs

docs --> |"2.Generate"| job_id

docs --> |"3a.Trigger"| async_process

job_id --> |"3b.Return"| client

docs --> nfs

nfs --> preprocess

%% Open API Retrieval Flow

open_api --> |"Retrieve Context"| retrieval_service["Retrieval Service"]

retrieval_service --> |"Access"| store

retrieval_service --> |"Return Context"| open_api

%% Status Query Flow

client --> |"4.Poll"| job_status

job_status --> |"5.Return Progress"| client

%% Database connects to Query Service

store --> retrieve

%% Style Definitions (Adjusted to match GitHub theme colors)

classDef process fill:#d1ecf1,stroke:#0077b6,stroke-width:1px

classDef database fill:#e2eafc,stroke:#003566,stroke-width:1px

classDef input fill:#caf0f8,stroke:#0077b6,stroke-width:1px

classDef output fill:#ffc8dd,stroke:#d00000,stroke-width:1px

classDef rerank fill:#cdb4db,stroke:#5a189a,stroke-width:1px

classDef async fill:#f8edeb,stroke:#7f5539,stroke-width:1px,stroke-dasharray: 5 5

classDef actor fill:#fefae0,stroke:#606c38,stroke-width:1px

classDef jobQuery fill:#ffedd8,stroke:#ca6702,stroke-width:1px

classDef queryProcess fill:#d8f3dc,stroke:#40916c,stroke-width:1px

classDef embeddingService fill:#ffe5d9,stroke:#9d0208,stroke-width:1px

classDef importProcess fill:#e5e5e5,stroke:#495057,stroke-width:1px

%% Applying classes to nodes

class docs,query,retrieval_service input

class preprocess,split,query_embed,retrieve,context,llm process

class store,nfs database

class response,job_id,job_status output

class rerank rerank

class async_process async

class client,open_api actor

class job_query jobQuery

style query_process fill:#d8f3dc,stroke:#40916c,stroke-width:1px

style embedding_process fill:#ffe5d9,stroke:#9d0208,stroke-width:1px

style import_process fill:#e5e5e5,stroke:#495057,stroke-width:1px

style job_query fill:#ffedd8,stroke:#ca6702,stroke-width:1px

- Docker & Docker Compose v2.0+

- Node.js 18+

- Python 3.9+

- 8GB+ RAM

- Clone the repository

git clone https://github.com/rag-web-ui/rag-web-ui.git

cd rag-web-ui

- Configure environment variables

You can check the details in the configuration tables below.

cp .env.example .env

- Start services (development server)

docker compose up -d --build

Access the following URLs after service startup:

- 🌐 Frontend UI: http://127.0.0.1.nip.io

- 📚 API Documentation: http://127.0.0.1.nip.io/redoc

- 💾 MinIO Console: http://127.0.0.1.nip.io:9001

- 🐍 Python FastAPI: High-performance async web framework

- 🗄️ MySQL + ChromaDB: Relational + Vector databases

- 📦 MinIO: Distributed object storage

- 🔗 Langchain: LLM application framework

- 🔒 JWT + OAuth2: Authentication

- ⚛️ Next.js 14: React framework

- 📘 TypeScript: Type safety

- 🎨 Tailwind CSS: Utility-first CSS

- 🎯 Shadcn/UI: High-quality components

- 🤖 Vercel AI SDK: AI integration

The system is optimized in the following aspects:

- ⚡️ Incremental document processing and async chunking

- 🔄 Streaming responses and real-time feedback

- 📑 Vector database performance tuning

- 🎯 Distributed task processing
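
Chunking with overlap and incremental processing can be sketched as below. The source does not describe how the project implements either, so this is only one possible approach: fixed-size character windows plus content hashing to skip chunks that were already processed. The function names and parameters are hypothetical.

```python
import hashlib


def split_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into character windows of chunk_size that overlap by `overlap`."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks: list[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
        start += step
    return chunks


def chunk_id(chunk: str) -> str:
    """Stable identifier for a chunk, derived from its content."""
    return hashlib.sha256(chunk.encode("utf-8")).hexdigest()


def new_chunks(chunks: list[str], seen: set[str]) -> list[str]:
    """Return chunks whose hash is not yet in `seen`, recording them as seen."""
    fresh: list[str] = []
    for chunk in chunks:
        digest = chunk_id(chunk)
        if digest not in seen:
            seen.add(digest)
            fresh.append(chunk)
    return fresh


seen_hashes: set[str] = set()
# First upload: every chunk is new and would be embedded.
first = new_chunks(split_text("abcdefghij", chunk_size=4, overlap=2), seen_hashes)
# Updated document with two extra characters: only the changed tail is new.
second = new_chunks(split_text("abcdefghijkl", chunk_size=4, overlap=2), seen_hashes)
```

Only the chunks returned by `new_chunks` would need to be sent to the embedding service, which is what makes re-processing an edited document cheap.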

docker compose -f docker-compose.dev.yml up -d --build

| Parameter | Description | Default | Required |
|---|---|---|---|
| MYSQL_SERVER | MySQL Server Address | localhost | ✅ |
| MYSQL_USER | MySQL Username | postgres | ✅ |
| MYSQL_PASSWORD | MySQL Password | postgres | ✅ |
| MYSQL_DATABASE | MySQL Database Name | ragwebui | ✅ |
| SECRET_KEY | JWT Secret Key | - | ✅ |
| ACCESS_TOKEN_EXPIRE_MINUTES | JWT Token Expiry (minutes) | 30 | ✅ |
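
As a rough sketch of how the settings in this table might be read at startup, the snippet below loads them from an environment mapping and applies the listed defaults. The `CoreSettings` class, the `load_core_settings` function, and the `mysql+pymysql` URL prefix are assumptions for illustration and are not taken from the project.

```python
import os
from dataclasses import dataclass
from typing import Mapping
from urllib.parse import quote_plus


@dataclass(frozen=True)
class CoreSettings:
    """Hypothetical container for the core settings listed in the table."""
    mysql_server: str
    mysql_user: str
    mysql_password: str
    mysql_database: str
    secret_key: str
    access_token_expire_minutes: int

    @property
    def database_url(self) -> str:
        # The "mysql+pymysql" driver prefix is an assumption, not from the source.
        # The password is URL-encoded so special characters do not break the URL.
        return (
            f"mysql+pymysql://{self.mysql_user}:{quote_plus(self.mysql_password)}"
            f"@{self.mysql_server}/{self.mysql_database}"
        )


def load_core_settings(env: Mapping[str, str] = os.environ) -> CoreSettings:
    """Read settings from env, falling back to the defaults in the table."""
    secret = env.get("SECRET_KEY")
    if not secret:
        # The table lists no default for SECRET_KEY, so fail early.
        raise ValueError("SECRET_KEY is required and has no default")
    return CoreSettings(
        mysql_server=env.get("MYSQL_SERVER", "localhost"),
        mysql_user=env.get("MYSQL_USER", "postgres"),
        mysql_password=env.get("MYSQL_PASSWORD", "postgres"),
        mysql_database=env.get("MYSQL_DATABASE", "ragwebui"),
        secret_key=secret,
        access_token_expire_minutes=int(env.get("ACCESS_TOKEN_EXPIRE_MINUTES", "30")),
    )


settings = load_core_settings({"SECRET_KEY": "change-me", "MYSQL_SERVER": "db"})
```

Passing the mapping explicitly keeps the loader easy to test; calling `load_core_settings()` with no argument reads the real process environment.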

| Parameter | Description | Default | Applicable |
|---|---|---|---|
| CHAT_PROVIDER | LLM Service Provider | openai | ✅ |
| OPENAI_API_KEY | OpenAI API Key | - | Required for OpenAI |
| OPENAI_API_BASE | OpenAI API Base URL | https://api.openai.com/v1 | Optional for OpenAI |
| OPENAI_MODEL | OpenAI Model Name | gpt-4 | Required for OpenAI |
| DEEPSEEK_API_KEY | DeepSeek API Key | - | Required for DeepSeek |
| DEEPSEEK_API_BASE | DeepSeek API Base URL | - | Required for DeepSeek |
| DEEPSEEK_MODEL | DeepSeek Model Name | - | Required for DeepSeek |
| OLLAMA_API_BASE | Ollama API Base URL | http://localhost:11434 | Required for Ollama |
| OLLAMA_MODEL | Ollama Model Name | llama2 | Required for Ollama |
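
The "Required for ..." column can be turned into a small pre-flight check. The sketch below is hypothetical: the provider strings `deepseek` and `ollama` are assumed values for `CHAT_PROVIDER` (the table only shows the `openai` default), and `missing_llm_settings` is not a function from the project.

```python
from typing import Mapping

# Variables the table marks as required for each provider.
REQUIRED_BY_PROVIDER = {
    "openai": ["OPENAI_API_KEY", "OPENAI_MODEL"],
    "deepseek": ["DEEPSEEK_API_KEY", "DEEPSEEK_API_BASE", "DEEPSEEK_MODEL"],
    "ollama": ["OLLAMA_API_BASE", "OLLAMA_MODEL"],
}

# Defaults copied from the table; variables with "-" have no default.
DEFAULTS = {
    "CHAT_PROVIDER": "openai",
    "OPENAI_API_BASE": "https://api.openai.com/v1",
    "OPENAI_MODEL": "gpt-4",
    "OLLAMA_API_BASE": "http://localhost:11434",
    "OLLAMA_MODEL": "llama2",
}


def missing_llm_settings(env: Mapping[str, str]) -> list[str]:
    """Return required variables that are unset for the selected provider."""
    merged = {**DEFAULTS, **env}
    provider = merged["CHAT_PROVIDER"].lower()
    if provider not in REQUIRED_BY_PROVIDER:
        raise ValueError(f"Unknown CHAT_PROVIDER: {provider!r}")
    return [name for name in REQUIRED_BY_PROVIDER[provider] if not merged.get(name)]


# With an empty environment the default provider is openai, which needs a key.
print(missing_llm_settings({}))
```

Running a check like this before starting the services surfaces a missing key as a clear message instead of a failed request later on.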

| Parameter | Description | Default | Applicable |
|---|---|---|---|
| EMBEDDINGS_PROVIDER | Embedding Service Provider | openai | ✅ |
| OPENAI_API_KEY | OpenAI API Key | - | Required for OpenAI Embedding |
| OPENAI_EMBEDDINGS_MODEL | OpenAI Embedding Model | text-embedding-ada-002 | Required for OpenAI Embedding |
| DASH_SCOPE_API_KEY | DashScope API Key | - | Required for DashScope |
| DASH_SCOPE_EMBEDDINGS_MODEL | DashScope Embedding Model | - | Required for DashScope |
| OLLAMA_EMBEDDINGS_MODEL | Ollama Embedding Model | deepseek-r1:7b | Required for Ollama Embedding |

| Parameter | Description | Default | Applicable |
|---|---|---|---|
| VECTOR_STORE_TYPE | Vector Store Type | chroma | ✅ |
| CHROMA_DB_HOST | ChromaDB Server Address | localhost | Required for ChromaDB |
| CHROMA_DB_PORT | ChromaDB Port | 8000 | Required for ChromaDB |
| QDRANT_URL | Qdrant Vector Store URL | http://localhost:6333 | Required for Qdrant |
| QDRANT_PREFER_GRPC | Prefer gRPC Connection for Qdrant | true | Optional for Qdrant |

| Parameter | Description | Default | Required |
|---|---|---|---|
| MINIO_ENDPOINT | MinIO Server Address | localhost:9000 | ✅ |
| MINIO_ACCESS_KEY | MinIO Access Key | minioadmin | ✅ |
| MINIO_SECRET_KEY | MinIO Secret Key | minioadmin | ✅ |
| MINIO_BUCKET_NAME | MinIO Bucket Name | documents | ✅ |

| Parameter | Description | Default | Required |
|---|---|---|---|
| TZ | Timezone Setting | Asia/Shanghai | ❌ |

We welcome community contributions!

- Fork the repository

- Create a feature branch (git checkout -b feature/AmazingFeature)

- Commit changes (git commit -m 'Add some AmazingFeature')

- Push to branch (git push origin feature/AmazingFeature)

- Create a Pull Request

- Follow Python PEP 8 coding standards

- Follow Conventional Commits

- [x] Knowledge Base API Integration

- [ ] Workflow By Natural Language

- [ ] Multi-path Retrieval

- [x] Support Multiple Models

- [x] Support Multiple Vector Databases

For common issues and solutions, please refer to our Troubleshooting Guide.

This project is licensed under the Apache-2.0 License

This project is for learning and sharing RAG knowledge only. Please do not use it for commercial purposes. It is not ready for production use and is still under active development.

Thanks to these open source projects:

Alternative AI tools for rag-web-ui

Similar Open Source Tools

neurolink

NeuroLink is an Enterprise AI SDK for Production Applications that serves as a universal AI integration platform unifying 13 major AI providers and 100+ models under one consistent API. It offers production-ready tooling, including a TypeScript SDK and a professional CLI, for teams to quickly build, operate, and iterate on AI features. NeuroLink enables switching providers with a single parameter change, provides 64+ built-in tools and MCP servers, supports enterprise features like Redis memory and multi-provider failover, and optimizes costs automatically with intelligent routing. It is designed for the future of AI with edge-first execution and continuous streaming architectures.

new-api

New API is a next-generation large model gateway and AI asset management system. Its features include a new UI, multi-language support, online recharge, usage-quota lookup by key, compatibility with the original One API database, model billing by usage count, weighted random channel selection, a data dashboard, token grouping and model restrictions, multiple authorization login methods, Rerank model support, the OpenAI Realtime API, the Claude Messages format, reasoning effort settings, content reasoning, user-specific model rate limiting, request format conversion, and cache billing. It also supports gpts, Midjourney-Proxy, Suno API, custom channels, Dify, and more.

eko

Eko is a lightweight and flexible command-line tool for managing environment variables in your projects. It allows you to easily set, get, and delete environment variables for different environments, making it simple to manage configurations across development, staging, and production environments. With Eko, you can streamline your workflow and ensure consistency in your application settings without the need for complex setup or configuration files.

llumen

Llumen is a self-hosted interface optimized for modest hardware like Raspberry Pi, old laptops, and minimal VPS. It offers privacy without complexity, providing essential features with minimal resource demands. Users can enjoy sub-second cold starts, real-time token streaming, various chat modes, rich media support, and a universal API for OpenAI-compatible providers. The tool has a small footprint with a binary size of around 17MB and RAM usage under 128MB. Llumen aims to simplify the setup process and offer a user-friendly experience for individuals seeking a privacy-focused solution.

Athena-Public

Project Athena is a Linux OS designed for AI Agents, providing memory, persistence, scheduling, and governance for AI models. It offers a comprehensive memory layer that survives across sessions, models, and IDEs, allowing users to own their data and port it anywhere. The system is built bottom-up through 1,079+ sessions, focusing on depth and compounding knowledge. Athena features a trilateral feedback loop for cross-model validation, a Model Context Protocol server with 9 tools, and a robust security model with data residency options. The repository structure includes an SDK package, examples for quickstart, scripts, protocols, workflows, and deep documentation. Key concepts cover architecture, knowledge graph, semantic memory, and adaptive latency. Workflows include booting, reasoning modes, planning, research, and iteration. The project has seen significant content expansion, viral validation, and metrics improvements.

ReGraph

ReGraph is a decentralized AI compute marketplace that connects hardware providers with developers who need inference and training resources. It democratizes access to AI computing power by creating a global network of distributed compute nodes. It is cost-effective, decentralized, easy to integrate, supports multiple models, and offers pay-as-you-go pricing.

deepfabric

DeepFabric is a CLI tool and SDK designed for researchers and developers to generate high-quality synthetic datasets at scale using large language models. It leverages a graph and tree-based architecture to create diverse and domain-specific datasets while minimizing redundancy. The tool supports generating Chain of Thought datasets for step-by-step reasoning tasks and offers multi-provider support for using different language models. DeepFabric also allows for automatic dataset upload to Hugging Face Hub and uses YAML configuration files for flexibility in dataset generation.

PraisonAI

Praison AI is a low-code, centralised framework that simplifies the creation and orchestration of multi-agent systems for various LLM applications. It emphasizes ease of use, customization, and human-agent interaction. The tool leverages AutoGen and CrewAI frameworks to facilitate the development of AI-generated scripts and movie concepts. Users can easily create, run, test, and deploy agents for scriptwriting and movie concept development. Praison AI also provides options for full automatic mode and integration with OpenAI models for enhanced AI capabilities.

auto-dev

AutoDev Xiuper is an AI-native, multi-agent development platform built on Kotlin Multiplatform. It covers all seven phases of the software development lifecycle and runs on 8+ platforms. The platform provides a unified architecture for writing code once and running it anywhere, with specialized agents for each phase of development. It supports various devices including IntelliJ IDEA, VS Code, CLI, Web, Desktop, Android, iOS, and Server. The platform also offers features like Multi-LLM support, DevIns language for workflow automation, MCP Protocol for extensible tool ecosystem, and code intelligence for multiple programming languages.

sf-skills

sf-skills is a collection of reusable skills for Agentic Salesforce Development, enabling AI-powered code generation, validation, testing, debugging, and deployment. It includes skills for development, quality, foundation, integration, AI & automation, DevOps & tooling. The installation process is newbie-friendly and includes an installer script for various CLIs. The skills are compatible with platforms like Claude Code, OpenCode, Codex, Gemini, Amp, Droid, Cursor, and Agentforce Vibes. The repository is community-driven and aims to strengthen the Salesforce ecosystem.

auto-dev

AutoDev is an AI-powered coding wizard that supports multiple languages, including Java, Kotlin, JavaScript/TypeScript, Rust, Python, Golang, C/C++/OC, and more. It offers a range of features, including auto development mode, copilot mode, chat with AI, customization options, SDLC support, custom AI agent integration, and language features such as language support, extensions, and a DevIns language for AI agent development. AutoDev is designed to assist developers with tasks such as auto code generation, bug detection, code explanation, exception tracing, commit message generation, code review content generation, smart refactoring, Dockerfile generation, CI/CD config file generation, and custom shell/command generation. It also provides a built-in LLM fine-tune model and supports UnitEval for LLM result evaluation and UnitGen for code-LLM fine-tune data generation.

runanywhere-sdks

RunAnywhere is an on-device AI tool for mobile apps that allows users to run LLMs, speech-to-text, text-to-speech, and voice assistant features locally, ensuring privacy, offline functionality, and fast performance. The tool provides a range of AI capabilities without relying on cloud services, reducing latency and ensuring that no data leaves the device. RunAnywhere offers SDKs for Swift (iOS/macOS), Kotlin (Android), React Native, and Flutter, making it easy for developers to integrate AI features into their mobile applications. The tool supports various models for LLM, speech-to-text, and text-to-speech, with detailed documentation and installation instructions available for each platform.

ai-dev-kit

The AI Dev Kit is a comprehensive toolkit designed to enhance AI-driven development on Databricks. It provides trusted sources for AI coding assistants like Claude Code and Cursor to build faster and smarter on Databricks. The kit includes features such as Spark Declarative Pipelines, Databricks Jobs, AI/BI Dashboards, Unity Catalog, Genie Spaces, Knowledge Assistants, MLflow Experiments, Model Serving, Databricks Apps, and more. Users can choose from different adventures like installing the kit, using the visual builder app, teaching AI assistants Databricks patterns, executing Databricks actions, or building custom integrations with the core library. The kit also includes components like databricks-tools-core, databricks-mcp-server, databricks-skills, databricks-builder-app, and ai-dev-project.

pai-opencode

PAI-OpenCode is a complete port of Daniel Miessler's Personal AI Infrastructure (PAI) to OpenCode, an open-source, provider-agnostic AI coding assistant. It brings modular capabilities, dynamic multi-agent orchestration, session history, and lifecycle automation to personalize AI assistants for users. With support for 75+ AI providers, PAI-OpenCode offers dynamic per-task model routing, full PAI infrastructure, real-time session sharing, and multiple client options. The tool optimizes cost and quality with a 3-tier model strategy and a 3-tier research system, allowing users to switch presets for different routing strategies. PAI-OpenCode's architecture preserves PAI's design while adapting to OpenCode, documented through Architecture Decision Records (ADRs).

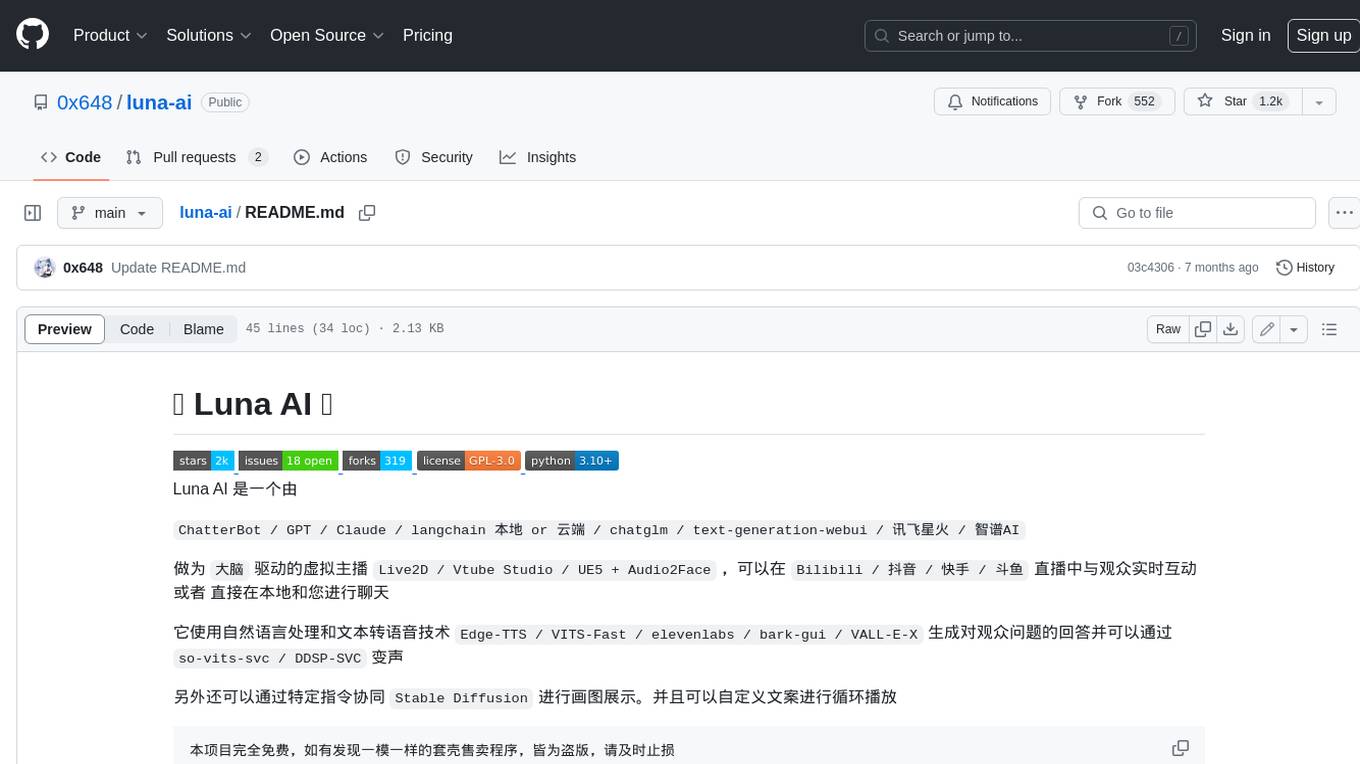

DeepRetrieval

DeepRetrieval is a tool designed to enhance search engines and retrievers using Large Language Models (LLMs) and Reinforcement Learning (RL). It allows LLMs to learn how to search effectively by integrating with search engine APIs and customizing reward functions. The tool provides functionalities for data preparation, training, evaluation, and monitoring search performance. DeepRetrieval aims to improve information retrieval tasks by leveraging advanced AI techniques.

For similar tasks

semantic-router

Semantic Router is a superfast decision-making layer for your LLMs and agents. Rather than waiting for slow LLM generations to make tool-use decisions, we use the magic of semantic vector space to make those decisions — _routing_ our requests using _semantic_ meaning.

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

luna-ai

Luna AI is a virtual streamer driven by a "brain" composed of ChatterBot, GPT, Claude, langchain, chatglm, text-generation-webui, 讯飞星火 (iFlytek Spark), and 智谱AI (Zhipu AI). It can interact with viewers in real time during live streams on platforms such as Bilibili, Douyin, Kuaishou, and Douyu, or chat with you locally. Luna AI uses natural language processing and text-to-speech technologies such as Edge-TTS, VITS-Fast, elevenlabs, bark-gui, and VALL-E-X to generate responses to viewer questions, and it can change its voice using so-vits-svc or DDSP-SVC. It can also work with Stable Diffusion to display drawings and can loop custom texts. The project is completely free; any identical copies being sold are pirated, and users are asked to help stop them.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

cria

Cria is a Python library designed for running Large Language Models with minimal configuration. It provides an easy and concise way to interact with LLMs, offering advanced features such as custom models, streams, message history management, and running multiple models in parallel. Cria simplifies the process of using LLMs by providing a straightforward API that requires only a few lines of code to get started. It also handles model installation automatically, making it efficient and user-friendly for various natural language processing tasks.

beyondllm

Beyond LLM offers an all-in-one toolkit for experimentation, evaluation, and deployment of Retrieval-Augmented Generation (RAG) systems. It simplifies the process with automated integration, customizable evaluation metrics, and support for various Large Language Models (LLMs) tailored to specific needs. The aim is to reduce LLM hallucination risks and enhance reliability.

Groma

Groma is a grounded multimodal assistant that excels in region understanding and visual grounding. It can process user-defined region inputs and generate contextually grounded long-form responses. The tool presents a unique paradigm for multimodal large language models, focusing on visual tokenization for localization. Groma achieves state-of-the-art performance in referring expression comprehension benchmarks. The tool provides pretrained model weights and instructions for data preparation, training, inference, and evaluation. Users can customize training by starting from intermediate checkpoints. Groma is designed to handle tasks related to detection pretraining, alignment pretraining, instruction finetuning, instruction following, and more.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.