gpt-researcher

LLM based autonomous agent that conducts deep local and web research on any topic and generates a long report with citations.

Stars: 23566

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

README:

GPT Researcher is an open deep research agent designed for both web and local research on any given task.

The agent produces detailed, factual, and unbiased research reports with citations. GPT Researcher provides a full suite of customization options to create tailor made and domain specific research agents. Inspired by the recent Plan-and-Solve and RAG papers, GPT Researcher addresses misinformation, speed, determinism, and reliability by offering stable performance and increased speed through parallelized agent work.

Our mission is to empower individuals and organizations with accurate, unbiased, and factual information through AI.

- Objective conclusions for manual research can take weeks, requiring vast resources and time.

- LLMs trained on outdated information can hallucinate, becoming irrelevant for current research tasks.

- Current LLMs have token limitations, insufficient for generating long research reports.

- Limited web sources in existing services lead to misinformation and shallow results.

- Selective web sources can introduce bias into research tasks.

https://github.com/user-attachments/assets/8fcaaa4c-31e5-4814-89b4-94f1433d139d

The core idea is to utilize 'planner' and 'execution' agents. The planner generates research questions, while the execution agents gather relevant information. The publisher then aggregates all findings into a comprehensive report.

Steps:

- Create a task-specific agent based on a research query.

- Generate questions that collectively form an objective opinion on the task.

- Use a crawler agent for gathering information for each question.

- Summarize and source-track each resource.

- Filter and aggregate summaries into a final research report.

- 📝 Generate detailed research reports using web and local documents.

- 🖼️ Smart image scraping and filtering for reports.

- 📜 Generate detailed reports exceeding 2,000 words.

- 🌐 Aggregate over 20 sources for objective conclusions.

- 🖥️ Frontend available in lightweight (HTML/CSS/JS) and production-ready (NextJS + Tailwind) versions.

- 🔍 JavaScript-enabled web scraping.

- 📂 Maintains memory and context throughout research.

- 📄 Export reports to PDF, Word, and other formats.

See the Documentation for:

- Installation and setup guides

- Configuration and customization options

- How-To examples

- Full API references

-

Install Python 3.11 or later. Guide.

-

Clone the project and navigate to the directory:

git clone https://github.com/assafelovic/gpt-researcher.git cd gpt-researcher -

Set up API keys by exporting them or storing them in a

.envfile.export OPENAI_API_KEY={Your OpenAI API Key here} export TAVILY_API_KEY={Your Tavily API Key here}

For custom OpenAI-compatible APIs (e.g., local models, other providers), you can also set:

export OPENAI_BASE_URL={Your custom API base URL here} -

Install dependencies and start the server:

pip install -r requirements.txt python -m uvicorn main:app --reload

Visit http://localhost:8000 to start.

For other setups (e.g., Poetry or virtual environments), check the Getting Started page.

pip install gpt-researcher

...

from gpt_researcher import GPTResearcher

query = "why is Nvidia stock going up?"

researcher = GPTResearcher(query=query)

# Conduct research on the given query

research_result = await researcher.conduct_research()

# Write the report

report = await researcher.write_report()

...For more examples and configurations, please refer to the PIP documentation page.

GPT Researcher supports MCP integration to connect with specialized data sources like GitHub repositories, databases, and custom APIs. This enables research from data sources alongside web search.

export RETRIEVER=tavily,mcp # Enable hybrid web + MCP researchfrom gpt_researcher import GPTResearcher

import asyncio

import os

async def mcp_research_example():

# Enable MCP with web search

os.environ["RETRIEVER"] = "tavily,mcp"

researcher = GPTResearcher(

query="What are the top open source web research agents?",

mcp_configs=[

{

"name": "github",

"command": "npx",

"args": ["-y", "@modelcontextprotocol/server-github"],

"env": {"GITHUB_TOKEN": os.getenv("GITHUB_TOKEN")}

}

]

)

research_result = await researcher.conduct_research()

report = await researcher.write_report()

return reportFor comprehensive MCP documentation and advanced examples, visit the MCP Integration Guide.

GPT Researcher now includes Deep Research - an advanced recursive research workflow that explores topics with agentic depth and breadth. This feature employs a tree-like exploration pattern, diving deeper into subtopics while maintaining a comprehensive view of the research subject.

- 🌳 Tree-like exploration with configurable depth and breadth

- ⚡️ Concurrent processing for faster results

- 🤝 Smart context management across research branches

- ⏱️ Takes ~5 minutes per deep research

- 💰 Costs ~$0.4 per research (using

o3-minion "high" reasoning effort)

Learn more about Deep Research in our documentation.

Step 1 - Install Docker

Step 2 - Clone the '.env.example' file, add your API Keys to the cloned file and save the file as '.env'

Step 3 - Within the docker-compose file comment out services that you don't want to run with Docker.

docker-compose up --buildIf that doesn't work, try running it without the dash:

docker compose up --buildStep 4 - By default, if you haven't uncommented anything in your docker-compose file, this flow will start 2 processes:

- the Python server running on localhost:8000

- the React app running on localhost:3000

Visit localhost:3000 on any browser and enjoy researching!

You can instruct the GPT Researcher to run research tasks based on your local documents. Currently supported file formats are: PDF, plain text, CSV, Excel, Markdown, PowerPoint, and Word documents.

Step 1: Add the env variable DOC_PATH pointing to the folder where your documents are located.

export DOC_PATH="./my-docs"Step 2:

- If you're running the frontend app on localhost:8000, simply select "My Documents" from the "Report Source" Dropdown Options.

- If you're running GPT Researcher with the PIP package, pass the

report_sourceargument as "local" when you instantiate theGPTResearcherclass code sample here.

We've moved our MCP server to a dedicated repository: gptr-mcp.

The GPT Researcher MCP Server enables AI applications like Claude to conduct deep research. While LLM apps can access web search tools with MCP, GPT Researcher MCP delivers deeper, more reliable research results.

Features:

- Deep research capabilities for AI assistants

- Higher quality information with optimized context usage

- Comprehensive results with better reasoning for LLMs

- Claude Desktop integration

For detailed installation and usage instructions, please visit the official repository.

As AI evolves from prompt engineering and RAG to multi-agent systems, we're excited to introduce our new multi-agent assistant built with LangGraph.

By using LangGraph, the research process can be significantly improved in depth and quality by leveraging multiple agents with specialized skills. Inspired by the recent STORM paper, this project showcases how a team of AI agents can work together to conduct research on a given topic, from planning to publication.

An average run generates a 5-6 page research report in multiple formats such as PDF, Docx and Markdown.

Check it out here or head over to our documentation for more information.

GPT-Researcher now features an enhanced frontend to improve the user experience and streamline the research process. The frontend offers:

- An intuitive interface for inputting research queries

- Real-time progress tracking of research tasks

- Interactive display of research findings

- Customizable settings for tailored research experiences

Two deployment options are available:

- A lightweight static frontend served by FastAPI

- A feature-rich NextJS application for advanced functionality

For detailed setup instructions and more information about the frontend features, please visit our documentation page.

We highly welcome contributions! Please check out contributing if you're interested.

Please check out our roadmap page and reach out to us via our Discord community if you're interested in joining our mission.

- Community Discord

- Author Email: [email protected]

This project, GPT Researcher, is an experimental application and is provided "as-is" without any warranty, express or implied. We are sharing codes for academic purposes under the Apache 2 license. Nothing herein is academic advice, and NOT a recommendation to use in academic or research papers.

Our view on unbiased research claims:

- The main goal of GPT Researcher is to reduce incorrect and biased facts. How? We assume that the more sites we scrape the less chances of incorrect data. By scraping multiple sites per research, and choosing the most frequent information, the chances that they are all wrong is extremely low.

- We do not aim to eliminate biases; we aim to reduce it as much as possible. We are here as a community to figure out the most effective human/llm interactions.

- In research, people also tend towards biases as most have already opinions on the topics they research about. This tool scrapes many opinions and will evenly explain diverse views that a biased person would never have read.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for gpt-researcher

Similar Open Source Tools

gpt-researcher

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

NadirClaw

NadirClaw is a powerful open-source tool designed for web scraping and data extraction. It provides a user-friendly interface for extracting data from websites with ease. With NadirClaw, users can easily scrape text, images, and other content from web pages for various purposes such as data analysis, research, and automation. The tool offers flexibility and customization options to cater to different scraping needs, making it a versatile solution for extracting data from the web. Whether you are a data scientist, researcher, or developer, NadirClaw can streamline your data extraction process and help you gather valuable insights from online sources.

taranis-ai

Taranis AI is an advanced Open-Source Intelligence (OSINT) tool that leverages Artificial Intelligence to revolutionize information gathering and situational analysis. It navigates through diverse data sources like websites to collect unstructured news articles, utilizing Natural Language Processing and Artificial Intelligence to enhance content quality. Analysts then refine these AI-augmented articles into structured reports that serve as the foundation for deliverables such as PDF files, which are ultimately published.

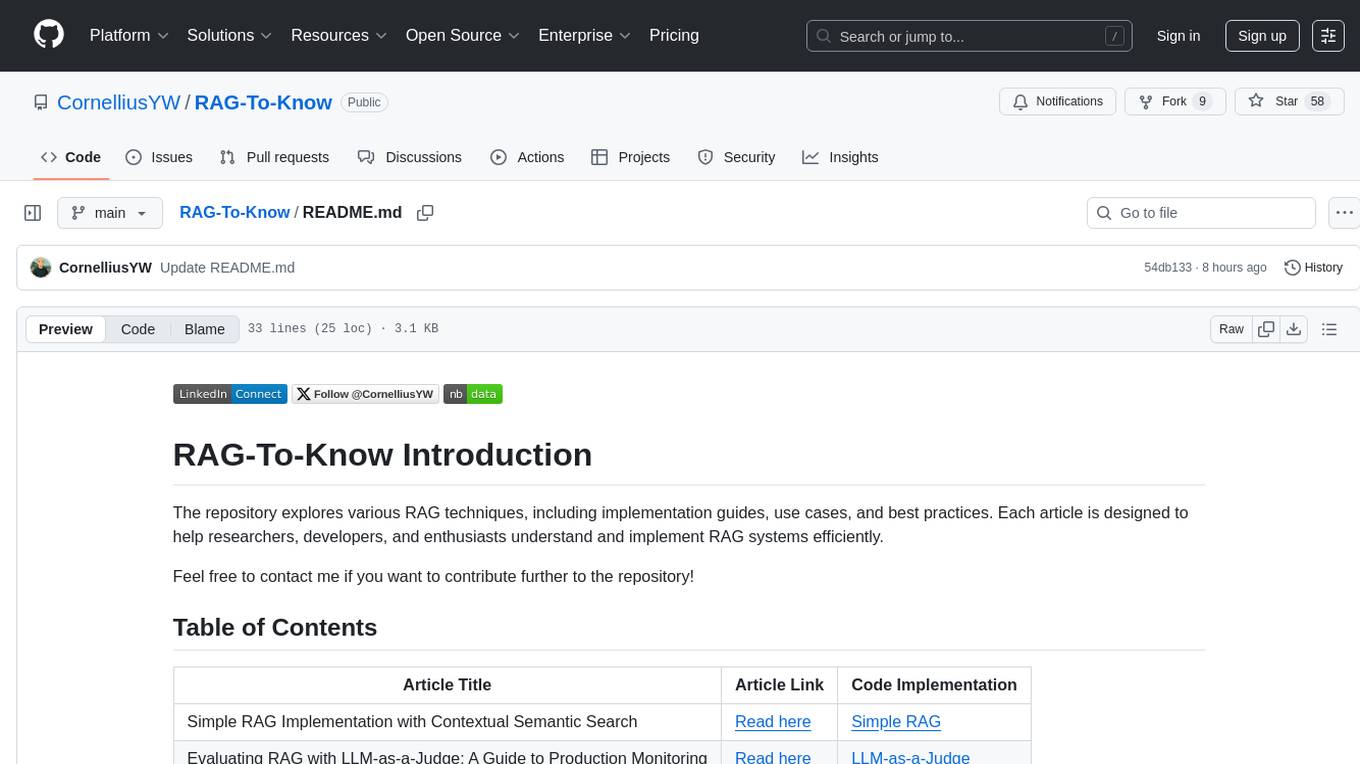

RAG-To-Know

RAG-To-Know is a versatile tool for knowledge extraction and summarization. It leverages the RAG (Retrieval-Augmented Generation) framework to provide a seamless way to retrieve and summarize information from various sources. With RAG-To-Know, users can easily extract key insights and generate concise summaries from large volumes of text data. The tool is designed to streamline the process of information retrieval and summarization, making it ideal for researchers, students, journalists, and anyone looking to quickly grasp the essence of complex information.

CrossIntelligence

CrossIntelligence is a powerful tool for data analysis and visualization. It allows users to easily connect and analyze data from multiple sources, providing valuable insights and trends. With a user-friendly interface and customizable features, CrossIntelligence is suitable for both beginners and advanced users in various industries such as marketing, finance, and research.

diffbot-kg-chatbot

This project is an end-to-end pipeline for constructing knowledge graphs from news articles using Neo4j and Diffbot. It also utilizes OpenAI LLMs to generate questions based on the knowledge graph. The application offers news monitoring capabilities, data extraction from text, and organization/personal information enrichment. Users can interact with the chatbot interface to ask questions and receive answers based on the knowledge graph.

God-Level-AI

A drill of scientific methods, processes, algorithms, and systems to build stories & models. An in-depth learning resource for humans. This repository is designed for individuals aiming to excel in the field of Data and AI, providing video sessions and text content for learning. It caters to those in leadership positions, professionals, and students, emphasizing the need for dedicated effort to achieve excellence in the tech field. The content covers various topics with a focus on practical application.

open-deep-research

Open Deep Research is a comprehensive repository that provides resources, tools, and information for deep learning research. It includes datasets, pre-trained models, code implementations, research papers, and tutorials to support researchers and developers in the field of deep learning. The repository aims to facilitate collaboration, knowledge sharing, and innovation in the deep learning community.

Aimer_WT

Aimer_WT is a web scraping tool designed to extract data from websites efficiently and accurately. It provides a user-friendly interface for users to specify the data they want to scrape and offers various customization options. With Aimer_WT, users can easily automate the process of collecting data from multiple web pages, saving time and effort. The tool is suitable for both beginners and experienced users who need to gather data for research, analysis, or other purposes. Aimer_WT supports various data formats and allows users to export the extracted data for further processing.

LLMs-in-Finance

This repository focuses on the application of Large Language Models (LLMs) in the field of finance. It provides insights and knowledge about how LLMs can be utilized in various scenarios within the finance industry, particularly in generating AI agents. The repository aims to explore the potential of LLMs to enhance financial processes and decision-making through the use of advanced natural language processing techniques.

proxyless-llm-websearch

Proxyless-LLM-WebSearch is a tool that enables users to perform large language model-based web search without the need for proxies. It leverages state-of-the-art language models to provide accurate and efficient web search results. The tool is designed to be user-friendly and accessible for individuals looking to conduct web searches at scale. With Proxyless-LLM-WebSearch, users can easily search the web using natural language queries and receive relevant results in a timely manner. This tool is particularly useful for researchers, data analysts, content creators, and anyone interested in leveraging advanced language models for web search tasks.

upgini

Upgini is an intelligent data search engine with a Python library that helps users find and add relevant features to their ML pipeline from various public, community, and premium external data sources. It automates the optimization of connected data sources by generating an optimal set of machine learning features using large language models, GraphNNs, and recurrent neural networks. The tool aims to simplify feature search and enrichment for external data to make it a standard approach in machine learning pipelines. It democratizes access to data sources for the data science community.

Daft

Daft is a lightweight and efficient tool for data analysis and visualization. It provides a user-friendly interface for exploring and manipulating datasets, making it ideal for both beginners and experienced data analysts. With Daft, you can easily import data from various sources, clean and preprocess it, perform statistical analysis, create insightful visualizations, and export your results in multiple formats. Whether you are a student, researcher, or business professional, Daft simplifies the process of analyzing data and deriving meaningful insights.

llama_index

LlamaIndex is a data framework for building LLM applications. It provides tools for ingesting, structuring, and querying data, as well as integrating with LLMs and other tools. LlamaIndex is designed to be easy to use for both beginner and advanced users, and it provides a comprehensive set of features for building LLM applications.

LLM-Project

LLM-Project is a machine learning model for sentiment analysis. It is designed to analyze text data and classify it into positive, negative, or neutral sentiments. The model uses natural language processing techniques to extract features from the text and train a classifier to make predictions. LLM-Project is suitable for researchers, developers, and data scientists who are working on sentiment analysis tasks. It provides a pre-trained model that can be easily integrated into existing projects or used for experimentation and research purposes. The codebase is well-documented and easy to understand, making it accessible to users with varying levels of expertise in machine learning and natural language processing.

context-portal

Context-portal is a versatile tool for managing and visualizing data in a collaborative environment. It provides a user-friendly interface for organizing and sharing information, making it easy for teams to work together on projects. With features such as customizable dashboards, real-time updates, and seamless integration with popular data sources, Context-portal streamlines the data management process and enhances productivity. Whether you are a data analyst, project manager, or team leader, Context-portal offers a comprehensive solution for optimizing workflows and driving better decision-making.

For similar tasks

gpt-researcher

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

lionagi

LionAGI is a robust framework for orchestrating multi-step AI operations with precise control. It allows users to bring together multiple models, advanced reasoning, tool integrations, and custom validations in a single coherent pipeline. The framework is structured, expandable, controlled, and transparent, offering features like real-time logging, message introspection, and tool usage tracking. LionAGI supports advanced multi-step reasoning with ReAct, integrates with Anthropic's Model Context Protocol, and provides observability and debugging tools. Users can seamlessly orchestrate multiple models, integrate with Claude Code CLI SDK, and leverage a fan-out fan-in pattern for orchestration. The framework also offers optional dependencies for additional functionalities like reader tools, local inference support, rich output formatting, database support, and graph visualization.

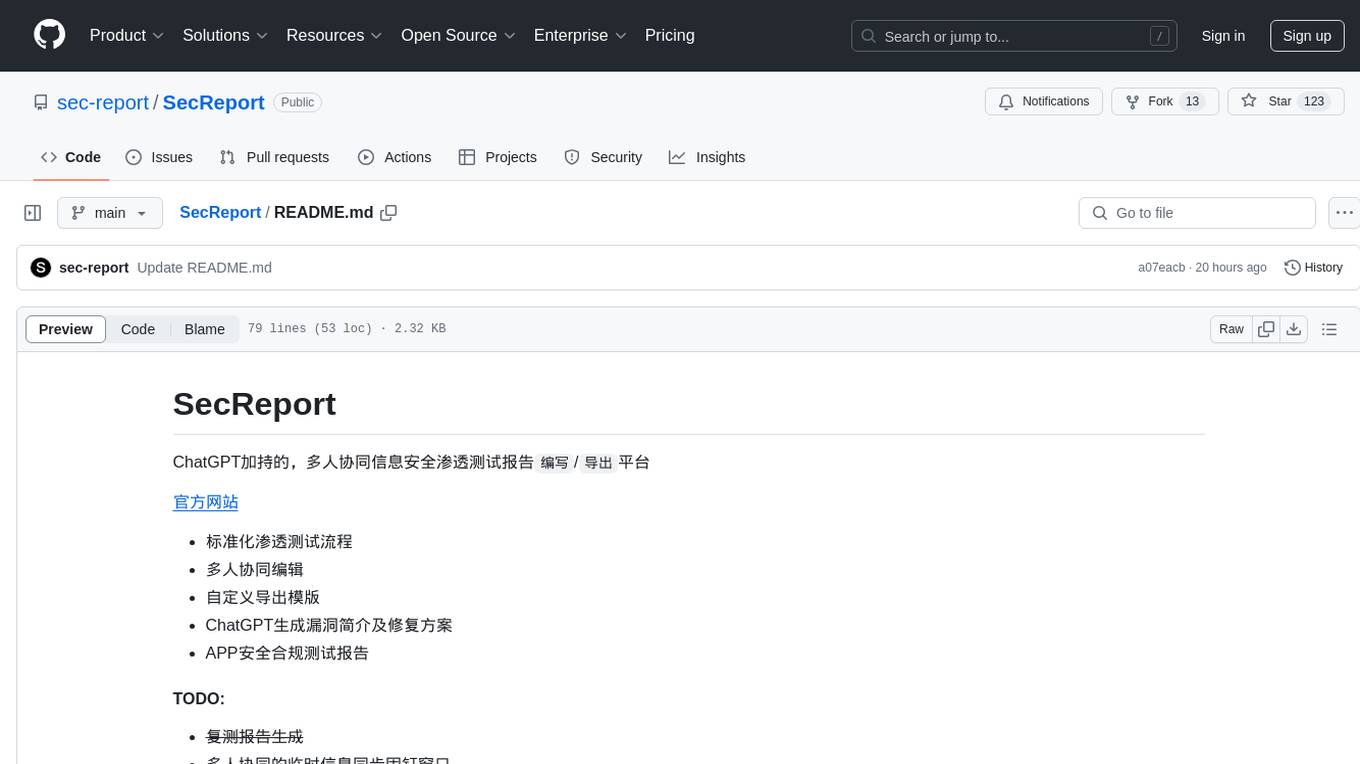

SecReport

SecReport is a platform for collaborative information security penetration testing report writing and exporting, powered by ChatGPT. It standardizes penetration testing processes, allows multiple users to edit reports, offers custom export templates, generates vulnerability summaries and fix suggestions using ChatGPT, and provides APP security compliance testing reports. The tool aims to streamline the process of creating and managing security reports for penetration testing and compliance purposes.

awesome-ai-web-search

The 'awesome-ai-web-search' repository is a curated list of AI-powered web search software that focuses on the intersection of Large Language Models (LLMs) and web search capabilities. It contains a timeline of various software supporting web search with LLM summarization, chat capabilities, and agent-driven research. The repository showcases both open-source and closed-source tools, providing a comprehensive overview of AI web search solutions available in the market.

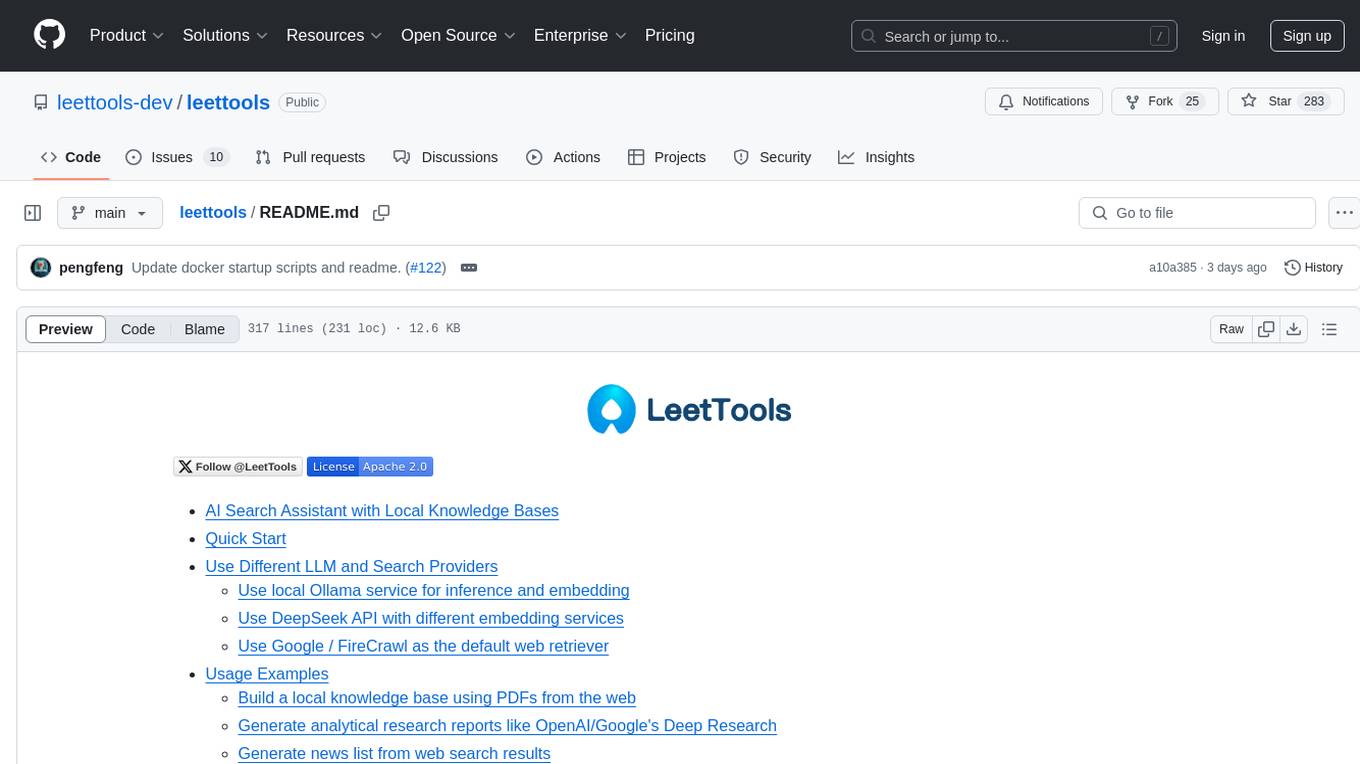

leettools

LeetTools is an AI search assistant that can perform highly customizable search workflows and generate customized format results based on both web and local knowledge bases. It provides an automated document pipeline for data ingestion, indexing, and storage, allowing users to focus on implementing workflows without worrying about infrastructure. LeetTools can run with minimal resource requirements on the command line with configurable LLM settings and supports different databases for various functions. Users can configure different functions in the same workflow to use different LLM providers and models.

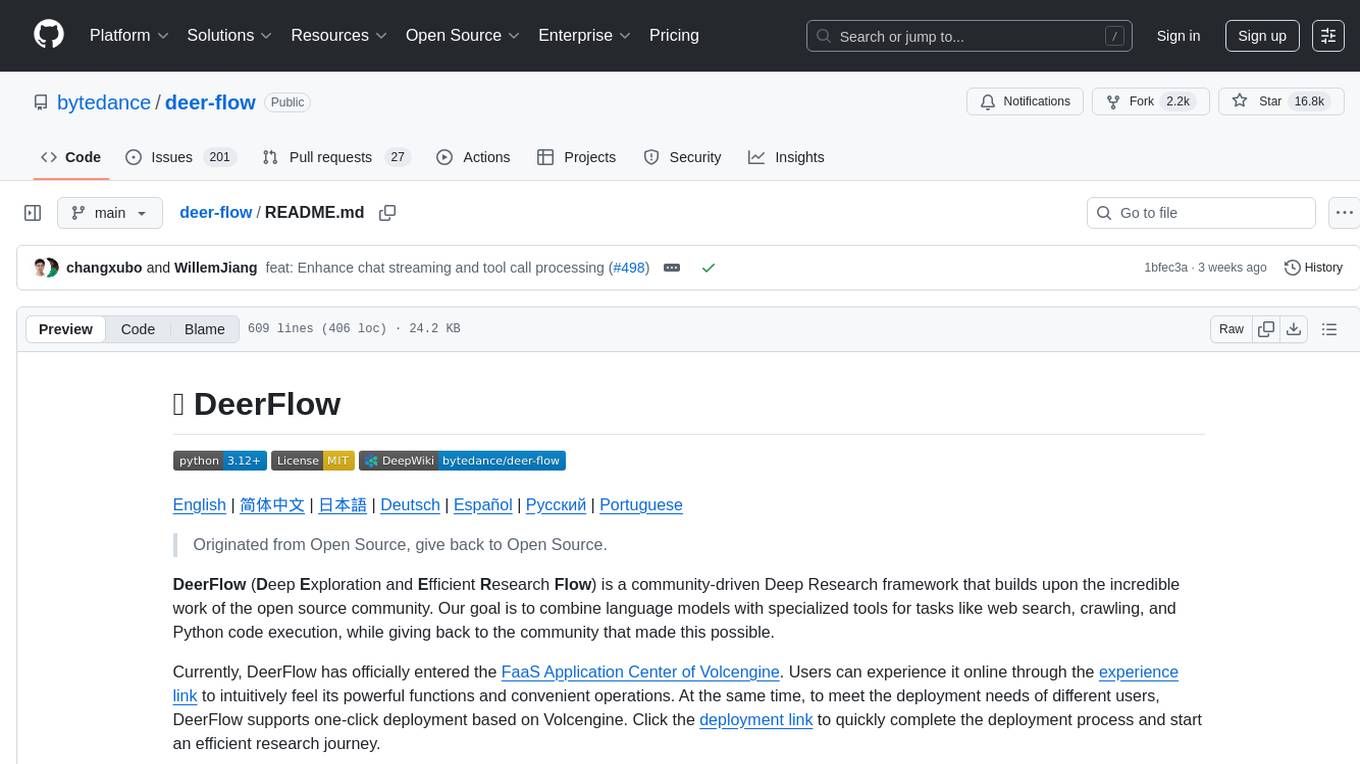

deer-flow

DeerFlow is a community-driven Deep Research framework that combines language models with specialized tools for tasks like web search, crawling, and Python code execution. It supports FaaS deployment and one-click deployment based on Volcengine. The framework includes core capabilities like LLM integration, search and retrieval, RAG integration, MCP seamless integration, human collaboration, report post-editing, and content creation. The architecture is based on a modular multi-agent system with components like Coordinator, Planner, Research Team, and Text-to-Speech integration. DeerFlow also supports interactive mode, human-in-the-loop mechanism, and command-line arguments for customization.

company-research-agent

Agentic Company Researcher is a multi-agent tool that generates comprehensive company research reports by utilizing a pipeline of AI agents to gather, curate, and synthesize information from various sources. It features multi-source research, AI-powered content filtering, real-time progress streaming, dual model architecture, modern React frontend, and modular architecture. The tool follows an agentic framework with specialized research and processing nodes, leverages separate models for content generation, uses a content curation system for relevance scoring and document processing, and implements a real-time communication system via WebSocket connections. Users can set up the tool quickly using the provided setup script or manually, and it can also be deployed using Docker and Docker Compose. The application can be used for local development and deployed to various cloud platforms like AWS Elastic Beanstalk, Docker, Heroku, and Google Cloud Run.

For similar jobs

Perplexica

Perplexica is an open-source AI-powered search engine that utilizes advanced machine learning algorithms to provide clear answers with sources cited. It offers various modes like Copilot Mode, Normal Mode, and Focus Modes for specific types of questions. Perplexica ensures up-to-date information by using SearxNG metasearch engine. It also features image and video search capabilities and upcoming features include finalizing Copilot Mode and adding Discover and History Saving features.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

MMMU

MMMU is a benchmark designed to evaluate multimodal models on college-level subject knowledge tasks, covering 30 subjects and 183 subfields with 11.5K questions. It focuses on advanced perception and reasoning with domain-specific knowledge, challenging models to perform tasks akin to those faced by experts. The evaluation of various models highlights substantial challenges, with room for improvement to stimulate the community towards expert artificial general intelligence (AGI).

1filellm

1filellm is a command-line data aggregation tool designed for LLM ingestion. It aggregates and preprocesses data from various sources into a single text file, facilitating the creation of information-dense prompts for large language models. The tool supports automatic source type detection, handling of multiple file formats, web crawling functionality, integration with Sci-Hub for research paper downloads, text preprocessing, and token count reporting. Users can input local files, directories, GitHub repositories, pull requests, issues, ArXiv papers, YouTube transcripts, web pages, Sci-Hub papers via DOI or PMID. The tool provides uncompressed and compressed text outputs, with the uncompressed text automatically copied to the clipboard for easy pasting into LLMs.

gpt-researcher

GPT Researcher is an autonomous agent designed for comprehensive online research on a variety of tasks. It can produce detailed, factual, and unbiased research reports with customization options. The tool addresses issues of speed, determinism, and reliability by leveraging parallelized agent work. The main idea involves running 'planner' and 'execution' agents to generate research questions, seek related information, and create research reports. GPT Researcher optimizes costs and completes tasks in around 3 minutes. Features include generating long research reports, aggregating web sources, an easy-to-use web interface, scraping web sources, and exporting reports to various formats.

ChatTTS

ChatTTS is a generative speech model optimized for dialogue scenarios, providing natural and expressive speech synthesis with fine-grained control over prosodic features. It supports multiple speakers and surpasses most open-source TTS models in terms of prosody. The model is trained with 100,000+ hours of Chinese and English audio data, and the open-source version on HuggingFace is a 40,000-hour pre-trained model without SFT. The roadmap includes open-sourcing additional features like VQ encoder, multi-emotion control, and streaming audio generation. The tool is intended for academic and research use only, with precautions taken to limit potential misuse.

HebTTS

HebTTS is a language modeling approach to diacritic-free Hebrew text-to-speech (TTS) system. It addresses the challenge of accurately mapping text to speech in Hebrew by proposing a language model that operates on discrete speech representations and is conditioned on a word-piece tokenizer. The system is optimized using weakly supervised recordings and outperforms diacritic-based Hebrew TTS systems in terms of content preservation and naturalness of generated speech.

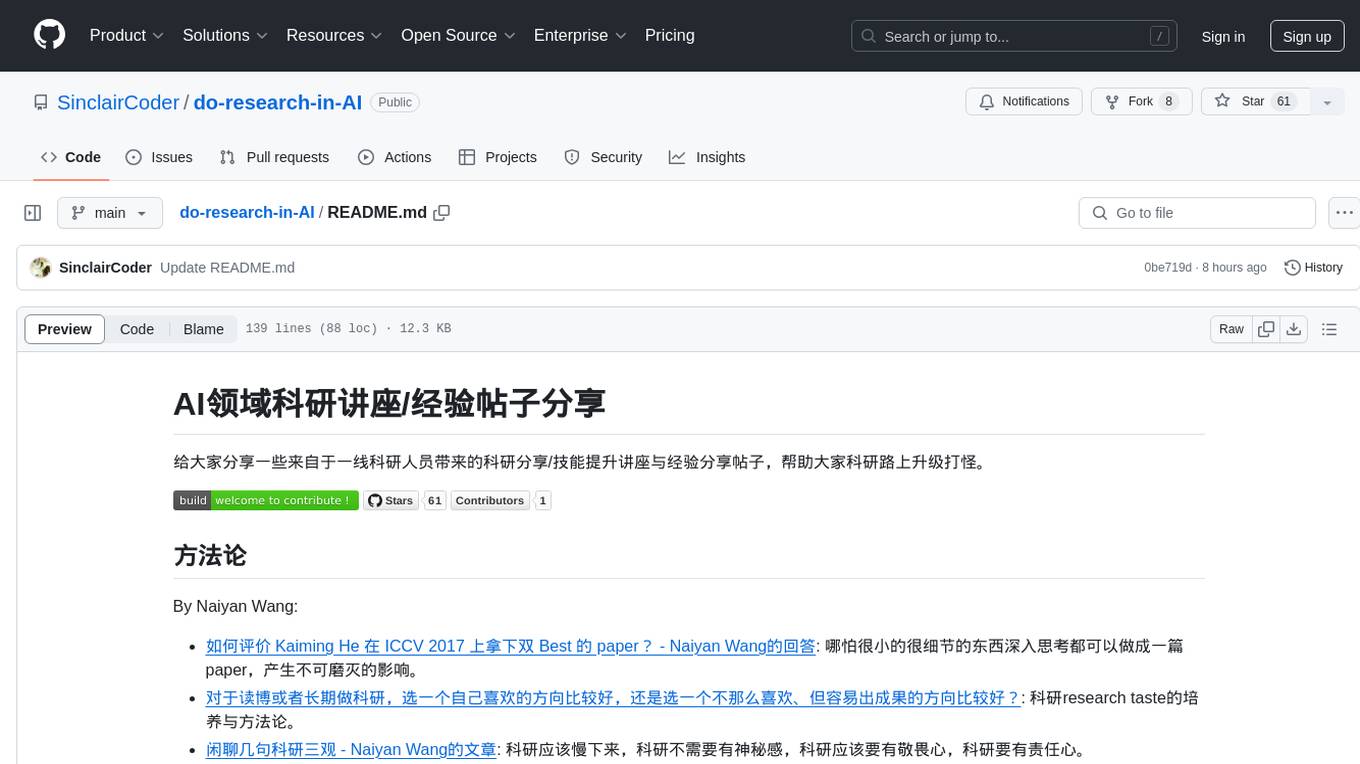

do-research-in-AI

This repository is a collection of research lectures and experience sharing posts from frontline researchers in the field of AI. It aims to help individuals upgrade their research skills and knowledge through insightful talks and experiences shared by experts. The content covers various topics such as evaluating research papers, choosing research directions, research methodologies, and tips for writing high-quality scientific papers. The repository also includes discussions on academic career paths, research ethics, and the emotional aspects of research work. Overall, it serves as a valuable resource for individuals interested in advancing their research capabilities in the field of AI.