crewAI

Framework for orchestrating role-playing, autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks.

Stars: 44305

CrewAI is a cutting-edge framework designed to orchestrate role-playing autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks. It enables AI agents to assume roles, share goals, and operate in a cohesive unit, much like a well-oiled crew. Whether you're building a smart assistant platform, an automated customer service ensemble, or a multi-agent research team, CrewAI provides the backbone for sophisticated multi-agent interactions. With features like role-based agent design, autonomous inter-agent delegation, flexible task management, and support for various LLMs, CrewAI offers a dynamic and adaptable solution for both development and production workflows.

README:


CrewAI is a lean, lightning-fast Python framework built entirely from scratch—completely independent of LangChain or other agent frameworks. It empowers developers with both high-level simplicity and precise low-level control, ideal for creating autonomous AI agents tailored to any scenario.

- CrewAI Crews: Optimize for autonomy and collaborative intelligence.

- CrewAI Flows: The enterprise and production architecture for building and deploying multi-agent systems. Flows enable granular, event-driven control and single LLM calls for precise task orchestration, and they support Crews natively.

With over 100,000 developers certified through our community courses at learn.crewai.com, CrewAI is rapidly becoming the standard for enterprise-ready AI automation.

CrewAI AMP Suite is a comprehensive bundle tailored for organizations that require secure, scalable, and easy-to-manage agent-driven automation.

You can try one part of the suite, the Crew Control Plane, for free.

- Tracing & Observability: Monitor and track your AI agents and workflows in real-time, including metrics, logs, and traces.

- Unified Control Plane: A centralized platform for managing, monitoring, and scaling your AI agents and workflows.

- Seamless Integrations: Easily connect with existing enterprise systems, data sources, and cloud infrastructure.

- Advanced Security: Built-in robust security and compliance measures ensuring safe deployment and management.

- Actionable Insights: Real-time analytics and reporting to optimize performance and decision-making.

- 24/7 Support: Dedicated enterprise support to ensure uninterrupted operation and quick resolution of issues.

- On-premise and Cloud Deployment Options: Deploy CrewAI AMP on-premise or in the cloud, depending on your security and compliance requirements.

CrewAI AMP is designed for enterprises seeking a powerful, reliable solution to transform complex business processes into efficient, intelligent automations.

- Why CrewAI?

- Getting Started

- Key Features

- Understanding Flows and Crews

- CrewAI vs LangGraph

- Examples

- Connecting Your Crew to a Model

- How CrewAI Compares

- Frequently Asked Questions (FAQ)

- Contribution

- Telemetry

- License

CrewAI unlocks the true potential of multi-agent automation, delivering the best-in-class combination of speed, flexibility, and control with either Crews of AI Agents or Flows of Events:

- Standalone Framework: Built from scratch, independent of LangChain or any other agent framework.

- High Performance: Optimized for speed and minimal resource usage, enabling faster execution.

- Flexible Low Level Customization: Complete freedom to customize at both high and low levels - from overall workflows and system architecture to granular agent behaviors, internal prompts, and execution logic.

- Ideal for Every Use Case: Proven effective for both simple tasks and highly complex, real-world, enterprise-grade scenarios.

- Robust Community: Backed by a rapidly growing community of over 100,000 certified developers offering comprehensive support and resources.

CrewAI empowers developers and enterprises to confidently build intelligent automations, bridging the gap between simplicity, flexibility, and performance.

Set up and run your first CrewAI agents by following this tutorial.

Learning Resources

Learn CrewAI through our comprehensive courses:

- Multi AI Agent Systems with CrewAI - Master the fundamentals of multi-agent systems

- Practical Multi AI Agents and Advanced Use Cases - Deep dive into advanced implementations

CrewAI offers two powerful, complementary approaches that work seamlessly together to build sophisticated AI applications:

- Crews: Teams of AI agents with true autonomy and agency, working together to accomplish complex tasks through role-based collaboration. Crews enable:

- Natural, autonomous decision-making between agents

- Dynamic task delegation and collaboration

- Specialized roles with defined goals and expertise

- Flexible problem-solving approaches

- Flows: Production-ready, event-driven workflows that deliver precise control over complex automations. Flows provide:

- Fine-grained control over execution paths for real-world scenarios

- Secure, consistent state management between tasks

- Clean integration of AI agents with production Python code

- Conditional branching for complex business logic

The true power of CrewAI emerges when combining Crews and Flows. This synergy allows you to:

- Build complex, production-grade applications

- Balance autonomy with precise control

- Handle sophisticated real-world scenarios

- Maintain clean, maintainable code structure

To get started with CrewAI, follow these simple steps:

Ensure you have Python >=3.10 <3.14 installed on your system. CrewAI uses UV for dependency management and package handling, offering a seamless setup and execution experience.
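If you are unsure whether your interpreter qualifies, a quick check against the stated range (at least 3.10, below 3.14) can be written with the standard library alone. `is_supported` is a hypothetical helper for illustration, not part of CrewAI.

```python
import sys

# Supported range stated above: Python >= 3.10 and < 3.14.
MIN_VERSION = (3, 10)
MAX_VERSION_EXCLUSIVE = (3, 14)


def is_supported(version_info=sys.version_info) -> bool:
    """Return True if the (major, minor) version lies in the supported range."""
    major_minor = tuple(version_info[:2])
    return MIN_VERSION <= major_minor < MAX_VERSION_EXCLUSIVE


if __name__ == "__main__":
    print(f"Python {sys.version.split()[0]} supported: {is_supported()}")
```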

First, install CrewAI:

```shell
uv pip install crewai
```

If you want to install the 'crewai' package along with its optional features that include additional tools for agents, you can do so by using the following command:

```shell
uv pip install 'crewai[tools]'
```

The command above installs the base package and also adds extra components that require additional dependencies.

If you encounter issues during installation or usage, here are some common solutions:

- ModuleNotFoundError: No module named 'tiktoken'
  - Install tiktoken explicitly: `uv pip install 'crewai[embeddings]'`
  - If using embedchain or other tools: `uv pip install 'crewai[tools]'`
- Failed building wheel for tiktoken
  - Ensure the Rust compiler is installed
  - For Windows: verify that Visual C++ Build Tools are installed
  - Try upgrading pip: `uv pip install --upgrade pip`
  - If issues persist, use a pre-built wheel: `uv pip install tiktoken --prefer-binary`

To create a new CrewAI project, run the following CLI (Command Line Interface) command:

```shell
crewai create crew <project_name>
```

This command creates a new project folder with the following structure:

```
my_project/
├── .gitignore
├── pyproject.toml
├── README.md
├── .env
└── src/
    └── my_project/
        ├── __init__.py
        ├── main.py
        ├── crew.py
        ├── tools/
        │   ├── custom_tool.py
        │   └── __init__.py
        └── config/
            ├── agents.yaml
            └── tasks.yaml
```

You can now start developing your crew by editing the files in the src/my_project folder. The main.py file is the entry point of the project, the crew.py file is where you define your crew, the agents.yaml file is where you define your agents, and the tasks.yaml file is where you define your tasks.

- Modify `src/my_project/config/agents.yaml` to define your agents.
- Modify `src/my_project/config/tasks.yaml` to define your tasks.
- Modify `src/my_project/crew.py` to add your own logic, tools, and specific arguments.
- Modify `src/my_project/main.py` to add custom inputs for your agents and tasks.
- Add your environment variables to the `.env` file.

Instantiate your crew:

```shell
crewai create crew latest-ai-development
```

Modify the files as needed to fit your use case:

agents.yaml

```yaml
# src/my_project/config/agents.yaml
researcher:
  role: >
    {topic} Senior Data Researcher
  goal: >
    Uncover cutting-edge developments in {topic}
  backstory: >
    You're a seasoned researcher with a knack for uncovering the latest
    developments in {topic}. Known for your ability to find the most relevant
    information and present it in a clear and concise manner.

reporting_analyst:
  role: >
    {topic} Reporting Analyst
  goal: >
    Create detailed reports based on {topic} data analysis and research findings
  backstory: >
    You're a meticulous analyst with a keen eye for detail. You're known for
    your ability to turn complex data into clear and concise reports, making
    it easy for others to understand and act on the information you provide.
```

tasks.yaml

```yaml
# src/my_project/config/tasks.yaml
research_task:
  description: >
    Conduct thorough research about {topic}.
    Make sure you find any interesting and relevant information given
    the current year is 2025.
  expected_output: >
    A list with 10 bullet points of the most relevant information about {topic}
  agent: researcher

reporting_task:
  description: >
    Review the context you got and expand each topic into a full section for a report.
    Make sure the report is detailed and contains any and all relevant information.
  expected_output: >
    A fully fledged report with the main topics, each with a full section of information.
    Formatted as markdown without '```'
  agent: reporting_analyst
  output_file: report.md
```

crew.py

```python
# src/my_project/crew.py
from crewai import Agent, Crew, Process, Task
from crewai.project import CrewBase, agent, crew, task
from crewai_tools import SerperDevTool
from crewai.agents.agent_builder.base_agent import BaseAgent
from typing import List


@CrewBase
class LatestAiDevelopmentCrew():
    """LatestAiDevelopment crew"""

    agents: List[BaseAgent]
    tasks: List[Task]

    @agent
    def researcher(self) -> Agent:
        return Agent(
            config=self.agents_config['researcher'],
            verbose=True,
            tools=[SerperDevTool()]
        )

    @agent
    def reporting_analyst(self) -> Agent:
        return Agent(
            config=self.agents_config['reporting_analyst'],
            verbose=True
        )

    @task
    def research_task(self) -> Task:
        return Task(
            config=self.tasks_config['research_task'],
        )

    @task
    def reporting_task(self) -> Task:
        return Task(
            config=self.tasks_config['reporting_task'],
            output_file='report.md'
        )

    @crew
    def crew(self) -> Crew:
        """Creates the LatestAiDevelopment crew"""
        return Crew(
            agents=self.agents,  # Automatically created by the @agent decorator
            tasks=self.tasks,  # Automatically created by the @task decorator
            process=Process.sequential,
            verbose=True,
        )
```

main.py

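```python
#!/usr/bin/env python
# src/my_project/main.py
# Adjust this import to match your project's package name
from latest_ai_development.crew import LatestAiDevelopmentCrew


def run():
    """
    Run the crew.
    """
    inputs = {
        'topic': 'AI Agents'  # Fills the {topic} placeholders in the YAML configs
    }
    LatestAiDevelopmentCrew().crew().kickoff(inputs=inputs)


# Allows running the file directly with `python src/my_project/main.py`
if __name__ == "__main__":
    run()
```

Before running your crew, make sure you have the following keys set as environment variables in your .env file: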

- An OpenAI API key (or other LLM API key): `OPENAI_API_KEY=sk-...`
- A Serper.dev API key: `SERPER_API_KEY=YOUR_KEY_HERE`

Navigate to your project directory, then lock and install the dependencies with the CLI (optional):

```shell
cd my_project
crewai install
```

To run your crew, execute the following command in the root of your project:

```shell
crewai run
```

or

```shell
python src/my_project/main.py
```

If you get an error related to Poetry, run the following command to update your crewai package:

```shell
crewai update
```

You should see the output in the console, and the report.md file should be created in the root of your project with the full final report.
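The `{topic}` placeholders in agents.yaml and tasks.yaml are filled from the `inputs` dictionary passed to `kickoff()` in main.py. The sketch below models that substitution under the assumption of simple `{name}` replacement; `fill_placeholders` is a hypothetical helper, not CrewAI's internal implementation.

```python
import re

# Matches {name} placeholders made of letters, digits, underscores, or hyphens.
PLACEHOLDER = re.compile(r"\{([A-Za-z0-9_\-]+)\}")


def fill_placeholders(template: str, inputs: dict) -> str:
    """Replace {key} with inputs[key]; leave unknown placeholders untouched."""
    def substitute(match: re.Match) -> str:
        key = match.group(1)
        return str(inputs[key]) if key in inputs else match.group(0)

    return PLACEHOLDER.sub(substitute, template)


goal = "Uncover cutting-edge developments in {topic}"
print(fill_placeholders(goal, {"topic": "AI Agents"}))
# Uncover cutting-edge developments in AI Agents
```

Leaving unknown placeholders as-is, rather than raising, is a design choice of this sketch so that stray braces in prompt text survive.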

In addition to the sequential process, you can use the hierarchical process, which automatically assigns a manager to the defined crew to coordinate the planning and execution of tasks through delegation and validation of results. See the Processes documentation for more details.
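As a rough mental model of the two processes, the toy sketch below treats each agent as a plain function. In the sequential run, each task receives the outputs of earlier tasks as context (which the reporting task's "Review the context you got" relies on); in the hierarchical run, a hypothetical `manager` callable picks the agent for each task instead of a fixed assignment. `run_sequential`, `run_hierarchical`, `keyword_manager`, and the lambda agents are illustrative names, not CrewAI APIs, and the manager's validation step is omitted.

```python
from typing import Callable, Dict, List, Tuple

# Toy model: an "agent" is a function (task description, context) -> output.
AgentFn = Callable[[str, str], str]


def run_sequential(tasks: List[Tuple[str, str]], agents: Dict[str, AgentFn]) -> List[str]:
    """Run (description, agent_name) pairs in order, feeding earlier outputs as context."""
    outputs: List[str] = []
    for description, agent_name in tasks:
        context = "\n".join(outputs)  # everything produced so far
        outputs.append(agents[agent_name](description, context))
    return outputs


def run_hierarchical(
    descriptions: List[str],
    agents: Dict[str, AgentFn],
    manager: Callable[[str, List[str]], str],
) -> List[str]:
    """Like run_sequential, but a manager chooses the agent for each task."""
    outputs: List[str] = []
    for description in descriptions:
        agent_name = manager(description, list(agents))  # delegation step
        outputs.append(agents[agent_name](description, "\n".join(outputs)))
    return outputs


agents: Dict[str, AgentFn] = {
    "researcher": lambda task, ctx: f"notes on {task}",
    "reporting_analyst": lambda task, ctx: f"report from [{ctx}]",
}

print(run_sequential([("AI Agents", "researcher"), ("write report", "reporting_analyst")], agents))
# ['notes on AI Agents', 'report from [notes on AI Agents]']


def keyword_manager(description: str, agent_names: List[str]) -> str:
    # Hypothetical delegation rule: route research-like tasks to the researcher.
    return "researcher" if "research" in description else "reporting_analyst"


print(run_hierarchical(["research AI Agents", "write report"], agents, keyword_manager))
# ['notes on research AI Agents', 'report from [notes on research AI Agents]']
```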

CrewAI stands apart as a lean, standalone, high-performance multi-AI Agent framework delivering simplicity, flexibility, and precise control—free from the complexity and limitations found in other agent frameworks.

- Standalone & Lean: Completely independent from other frameworks like LangChain, offering faster execution and lighter resource demands.

- Flexible & Precise: Easily orchestrate autonomous agents through intuitive Crews or precise Flows, achieving perfect balance for your needs.

- Seamless Integration: Effortlessly combine Crews (autonomy) and Flows (precision) to create complex, real-world automations.

- Deep Customization: Tailor every aspect—from high-level workflows down to low-level internal prompts and agent behaviors.

- Reliable Performance: Consistent results across simple tasks and complex, enterprise-level automations.

- Thriving Community: Backed by robust documentation and over 100,000 certified developers, providing exceptional support and guidance.

Choose CrewAI to easily build powerful, adaptable, and production-ready AI automations.

You can test different real-life examples of AI crews, such as trip planners, stock analysis, and job postings, in the CrewAI-examples repo.

CrewAI's power truly shines when combining Crews with Flows to create sophisticated automation pipelines.

CrewAI flows support logical operators like or_ and and_ to combine multiple conditions. This can be used with @start, @listen, or @router decorators to create complex triggering conditions.

- or_: Triggers when any of the specified conditions are met.
- and_: Triggers when all of the specified conditions are met.
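The difference between the two operators is the difference between "any" and "all". The toy model below assumes a hypothetical `completed` set holding the method names and router labels that have fired so far; it is not how CrewAI implements `or_`/`and_` internally and ignores bookkeeping such as when that state is reset.

```python
from typing import Iterable, Set


def or_trigger(conditions: Iterable[str], completed: Set[str]) -> bool:
    """True when any listed method or label has completed (or_ semantics)."""
    return any(c in completed for c in conditions)


def and_trigger(conditions: Iterable[str], completed: Set[str]) -> bool:
    """True only when every listed method or label has completed (and_ semantics)."""
    return all(c in completed for c in conditions)


completed = {"medium_confidence"}
print(or_trigger(["medium_confidence", "low_confidence"], completed))  # True
print(and_trigger(["fetch_market_data", "medium_confidence"], completed))  # False
```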

Here's how you can orchestrate multiple Crews within a Flow:

```python
from crewai.flow.flow import Flow, listen, start, router, or_
from crewai import Crew, Agent, Task, Process
from pydantic import BaseModel


# Define structured state for precise control
class MarketState(BaseModel):
    sentiment: str = "neutral"
    confidence: float = 0.0
    recommendations: list = []


class AdvancedAnalysisFlow(Flow[MarketState]):
    @start()
    def fetch_market_data(self):
        # Demonstrate low-level control with structured state
        self.state.sentiment = "analyzing"
        return {"sector": "tech", "timeframe": "1W"}  # These parameters match the task description template

    @listen(fetch_market_data)
    def analyze_with_crew(self, market_data):
        # Show crew agency through specialized roles
        analyst = Agent(
            role="Senior Market Analyst",
            goal="Conduct deep market analysis with expert insight",
            backstory="You're a veteran analyst known for identifying subtle market patterns"
        )
        researcher = Agent(
            role="Data Researcher",
            goal="Gather and validate supporting market data",
            backstory="You excel at finding and correlating multiple data sources"
        )

        analysis_task = Task(
            description="Analyze {sector} sector data for the past {timeframe}",
            expected_output="Detailed market analysis with confidence score",
            agent=analyst
        )
        research_task = Task(
            description="Find supporting data to validate the analysis",
            expected_output="Corroborating evidence and potential contradictions",
            agent=researcher
        )

        # Demonstrate crew autonomy
        analysis_crew = Crew(
            agents=[analyst, researcher],
            tasks=[analysis_task, research_task],
            process=Process.sequential,
            verbose=True
        )
        return analysis_crew.kickoff(inputs=market_data)  # Pass market_data as named inputs

    @router(analyze_with_crew)
    def determine_next_steps(self):
        # Show flow control with conditional routing.
        # Note: nothing above updates self.state.confidence, so it stays at 0.0
        # and this routes to "low_confidence" unless you set it yourself
        # (for example, from the analysis crew's output).
        if self.state.confidence > 0.8:
            return "high_confidence"
        elif self.state.confidence > 0.5:
            return "medium_confidence"
        return "low_confidence"

    @listen("high_confidence")
    def execute_strategy(self):
        # Demonstrate complex decision making
        strategist = Agent(
            role="Strategy Expert",
            goal="Develop optimal market strategy",
            backstory="You turn market analysis into concrete action plans"  # backstory is a required Agent field
        )
        strategy_crew = Crew(
            agents=[strategist],
            tasks=[
                Task(description="Create detailed strategy based on analysis",
                     expected_output="Step-by-step action plan",
                     agent=strategist)  # sequential crews need an agent on every task
            ]
        )
        return strategy_crew.kickoff()

    @listen(or_("medium_confidence", "low_confidence"))
    def request_additional_analysis(self):
        self.state.recommendations.append("Gather more data")
        return "Additional analysis required"


# Run the flow
flow = AdvancedAnalysisFlow()
flow.kickoff()
```

This example demonstrates how to:

- Use Python code for basic data operations

- Create and execute Crews as steps in your workflow

- Use Flow decorators to manage the sequence of operations

- Implement conditional branching based on Crew results
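To see the event-driven idea behind `@start`, `@listen`, and `@router` without any LLM calls, here is a toy runner in plain Python. `MiniFlow` and its `listen`/`router` methods are hypothetical and only mimic the pattern: a listener fires with the result of the step it waits on, and a router returns a label that other steps listen for. It is not CrewAI's Flow class, and the step functions are simplified stand-ins for the example above.

```python
from typing import Any, Callable, Dict, List, Optional


class MiniFlow:
    """Toy event-driven runner (hypothetical; not CrewAI's Flow class)."""

    def __init__(self) -> None:
        self.start: Optional[Callable[[], Any]] = None
        self.listeners: Dict[str, List[Callable[[Any], Any]]] = {}
        self.routers: Dict[str, Callable[[Any], str]] = {}

    def listen(self, trigger: str, fn: Callable[[Any], Any]) -> None:
        """Run fn with the trigger's result once the trigger completes."""
        self.listeners.setdefault(trigger, []).append(fn)

    def router(self, trigger: str, fn: Callable[[Any], str]) -> None:
        """After the trigger completes, fn returns a label that is emitted as an event."""
        self.routers[trigger] = fn

    def _emit(self, event: str, result: Any, log: List[str]) -> None:
        log.append(event)
        for fn in self.listeners.get(event, []):
            self._emit(fn.__name__, fn(result), log)
        if event in self.routers:
            label = self.routers[event](result)
            self._emit(label, result, log)  # listeners on the label get the same result

    def kickoff(self) -> List[str]:
        """Run from the start step; return the order in which events fired."""
        if self.start is None:
            raise ValueError("no start step registered")
        log: List[str] = []
        self._emit(self.start.__name__, self.start(), log)
        return log


def fetch_market_data():
    return {"sector": "tech", "confidence": 0.9}


def analyze(data):
    return data["confidence"]  # stand-in for a crew's analysis


def determine_next_steps(confidence):
    return "high_confidence" if confidence > 0.8 else "low_confidence"


def execute_strategy(confidence):
    return f"strategy at confidence {confidence}"


flow = MiniFlow()
flow.start = fetch_market_data
flow.listen("fetch_market_data", analyze)
flow.router("analyze", determine_next_steps)
flow.listen("high_confidence", execute_strategy)
print(flow.kickoff())
# ['fetch_market_data', 'analyze', 'high_confidence', 'execute_strategy']
```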

CrewAI supports using various LLMs through a variety of connection options. By default your agents will use the OpenAI API when querying the model. However, there are several other ways to allow your agents to connect to models. For example, you can configure your agents to use a local model via the Ollama tool.

Please refer to the Connect CrewAI to LLMs page for details on configuring your agents' connections to models.

CrewAI's Advantage: CrewAI combines autonomous agent intelligence with precise workflow control through its unique Crews and Flows architecture. The framework excels at both high-level orchestration and low-level customization, enabling complex, production-grade systems with granular control.

- LangGraph: While LangGraph provides a foundation for building agent workflows, its approach requires significant boilerplate code and complex state management patterns. The framework's tight coupling with LangChain can limit flexibility when implementing custom agent behaviors or integrating with external systems.

P.S. CrewAI reports significant performance advantages over LangGraph in certain cases: it executed 5.76x faster on a QA task example and achieved higher evaluation scores with faster completion times on certain coding tasks.

- Autogen: While Autogen excels at creating conversational agents capable of working together, it lacks an inherent concept of process. In Autogen, orchestrating agents' interactions requires additional programming, which can become complex and cumbersome as the scale of tasks grows.

- ChatDev: ChatDev introduced the idea of processes into the realm of AI agents, but its implementation is quite rigid. Customizations in ChatDev are limited and not geared towards production environments, which can hinder scalability and flexibility in real-world applications.

CrewAI is open-source and we welcome contributions. If you're looking to contribute, please:

- Fork the repository.

- Create a new branch for your feature.

- Add your feature or improvement.

- Send a pull request.

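We appreciate your input!

Common development commands:

```shell
# Install dependencies
uv lock
uv sync

# Create a virtual environment
uv venv

# Install pre-commit hooks
pre-commit install

# Run the test suite
uv run pytest .

# Run static type checks
uvx mypy src

# Build the package
uv build

# Install the built package locally
uv pip install dist/*.tar.gz
```

CrewAI uses anonymous telemetry to collect usage data with the main purpose of helping us improve the library by focusing our efforts on the most used features, integrations and tools.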

It's important to understand that no data is collected concerning prompts, task descriptions, agents' backstories or goals, usage of tools, API calls, responses, any data processed by the agents, or secrets and environment variables, except when the share_crew feature is enabled. When share_crew is enabled, detailed data including task descriptions, agents' backstories or goals, and other specific attributes are collected to provide deeper insights while respecting user privacy. Users can disable telemetry by setting the environment variable OTEL_SDK_DISABLED to true.
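From Python, one way to opt out is to set the variable at the top of your entry point. This sketch assumes the flag is read when CrewAI initializes its telemetry, so it is set before the import; exporting the variable in your shell environment is an alternative.

```python
import os

# Assumption: set this before crewai is imported, so telemetry setup sees it.
os.environ["OTEL_SDK_DISABLED"] = "true"

# import crewai  # import CrewAI only after the variable is set
```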

Data collected includes:

- Version of CrewAI
  - So we can understand how many users are using the latest version
- Version of Python
  - So we can decide which versions to better support
- General OS (e.g. number of CPUs, macOS/Windows/Linux)
  - So we know which OS to focus on and whether to build OS-specific features
- Number of agents and tasks in a crew
  - So we make sure we are testing internally with similar use cases and can educate people on best practices
- Crew process being used
  - To understand where we should focus our efforts
- Whether agents are using memory or allowing delegation
  - To understand whether to improve these features or possibly drop them
- Whether tasks are being executed in parallel or sequentially
  - To understand whether we should focus more on parallel execution
- Language model being used
  - To improve support for the most used models
- Roles of agents in a crew
  - To understand high-level use cases so we can build better tools, integrations, and examples for them
- Names of the tools available
  - To understand which of the publicly available tools are used most, so we can improve them

Users can opt-in to Further Telemetry, sharing the complete telemetry data by setting the share_crew attribute to True on their Crews. Enabling share_crew results in the collection of detailed crew and task execution data, including goal, backstory, context, and output of tasks. This enables a deeper insight into usage patterns while respecting the user's choice to share.

CrewAI is released under the MIT License.

- What exactly is CrewAI?

- How do I install CrewAI?

- Does CrewAI depend on LangChain?

- Is CrewAI open-source?

- Does CrewAI collect data from users?

- Can CrewAI handle complex use cases?

- Can I use CrewAI with local AI models?

- What makes Crews different from Flows?

- How is CrewAI better than LangChain?

- Does CrewAI support fine-tuning or training custom models?

- What additional features does CrewAI AMP offer?

- Is CrewAI AMP available for cloud and on-premise deployments?

- Can I try CrewAI AMP for free?

A: CrewAI is a standalone, lean, and fast Python framework built specifically for orchestrating autonomous AI agents. Unlike frameworks built on LangChain, CrewAI does not depend on LangChain or any other agent framework, making it leaner, faster, and simpler.

A: Install CrewAI using uv:

```shell
uv pip install crewai
```

For additional tools, use:

```shell
uv pip install 'crewai[tools]'
```

A: No. CrewAI is built entirely from the ground up, with no dependencies on LangChain or other agent frameworks. This ensures a lean, fast, and flexible experience.

A: Yes. CrewAI excels at both simple and highly complex real-world scenarios, offering deep customization options at both high and low levels, from internal prompts to sophisticated workflow orchestration.

A: Absolutely! CrewAI supports various language models, including local ones. Tools like Ollama and LM Studio allow seamless integration. Check the LLM Connections documentation for more details.

A: Crews provide autonomous agent collaboration, ideal for tasks requiring flexible decision-making and dynamic interaction. Flows offer precise, event-driven control, ideal for managing detailed execution paths and secure state management. You can seamlessly combine both for maximum effectiveness.

A: CrewAI provides simpler, more intuitive APIs, faster execution speeds, more reliable and consistent results, robust documentation, and an active community—addressing common criticisms and limitations associated with LangChain.

A: Yes, CrewAI is open-source and actively encourages community contributions and collaboration.

A: CrewAI collects anonymous telemetry data strictly for improvement purposes. Sensitive data such as prompts, tasks, or API responses are never collected unless explicitly enabled by the user.

A: Check out practical examples in the CrewAI-examples repository, covering use cases like trip planners, stock analysis, and job postings.

A: Contributions are warmly welcomed! Fork the repository, create your branch, implement your changes, and submit a pull request. See the Contribution section of the README for detailed guidelines.

A: CrewAI AMP provides advanced features such as a unified control plane, real-time observability, secure integrations, advanced security, actionable insights, and dedicated 24/7 enterprise support.

A: Yes, CrewAI AMP supports both cloud-based and on-premise deployment options, allowing enterprises to meet their specific security and compliance requirements.

A: Yes, you can explore part of the CrewAI AMP Suite by accessing the Crew Control Plane for free.

A: Yes, CrewAI can integrate with custom-trained or fine-tuned models, allowing you to enhance your agents with domain-specific knowledge and accuracy.

A: Absolutely! CrewAI agents can easily integrate with external tools, APIs, and databases, empowering them to leverage real-world data and resources.

A: Yes, CrewAI is explicitly designed with production-grade standards, ensuring reliability, stability, and scalability for enterprise deployments.

A: CrewAI is highly scalable, supporting simple automations and large-scale enterprise workflows involving numerous agents and complex tasks simultaneously.

A: Yes, CrewAI AMP includes advanced debugging, tracing, and real-time observability features, simplifying the management and troubleshooting of your automations.

A: CrewAI is primarily Python-based but easily integrates with services and APIs written in any programming language through its flexible API integration capabilities.

A: Yes, CrewAI provides extensive beginner-friendly tutorials, courses, and documentation through learn.crewai.com, supporting developers at all skill levels.

A: Yes, CrewAI fully supports human-in-the-loop workflows, allowing seamless collaboration between human experts and AI agents for enhanced decision-making.


Alternative AI tools for crewAI

Similar Open Source Tools


solace-agent-mesh

Solace Agent Mesh is an open-source framework designed for building event-driven multi-agent AI systems. It enables the creation of teams of AI agents with distinct skills and tools, facilitating communication and task delegation among agents. The framework is built on top of Solace AI Connector and Google's Agent Development Kit, providing a standardized communication layer for asynchronous, event-driven AI agent architecture. Solace Agent Mesh supports agent orchestration, flexible interfaces, extensibility, agent-to-agent communication, and dynamic embeds, making it suitable for developing complex AI applications with scalability and reliability.

AutoAgents

AutoAgents is a cutting-edge multi-agent framework built in Rust that enables the creation of intelligent, autonomous agents powered by Large Language Models (LLMs) and Ractor, and is designed for performance, safety, and scalability. AutoAgents provides a robust foundation for building complex AI systems that can reason, act, and collaborate. With AutoAgents you can create Cloud Native Agents, Edge Native Agents, and Hybrid Models as well. It is extensible enough that other ML models can be used to create complex pipelines using the Actor Framework.

ISEK

ISEK is a decentralized agent network framework that enables building intelligent, collaborative agent-to-agent systems. It integrates the Google A2A protocol and ERC-8004 contracts for identity registration, reputation building, and cooperative task-solving, creating a self-organizing, decentralized society of agents. The platform addresses challenges in the agent ecosystem by providing an incentive system for users to pay for agent services, motivating developers to build high-quality agents and fostering innovation and quality in the ecosystem. ISEK focuses on decentralized agent collaboration and coordination, allowing agents to find each other, reason together, and act as a decentralized system without central control. The platform utilizes ERC-8004 for decentralized identity, reputation, and validation registries, establishing trustless verification and reputation management.

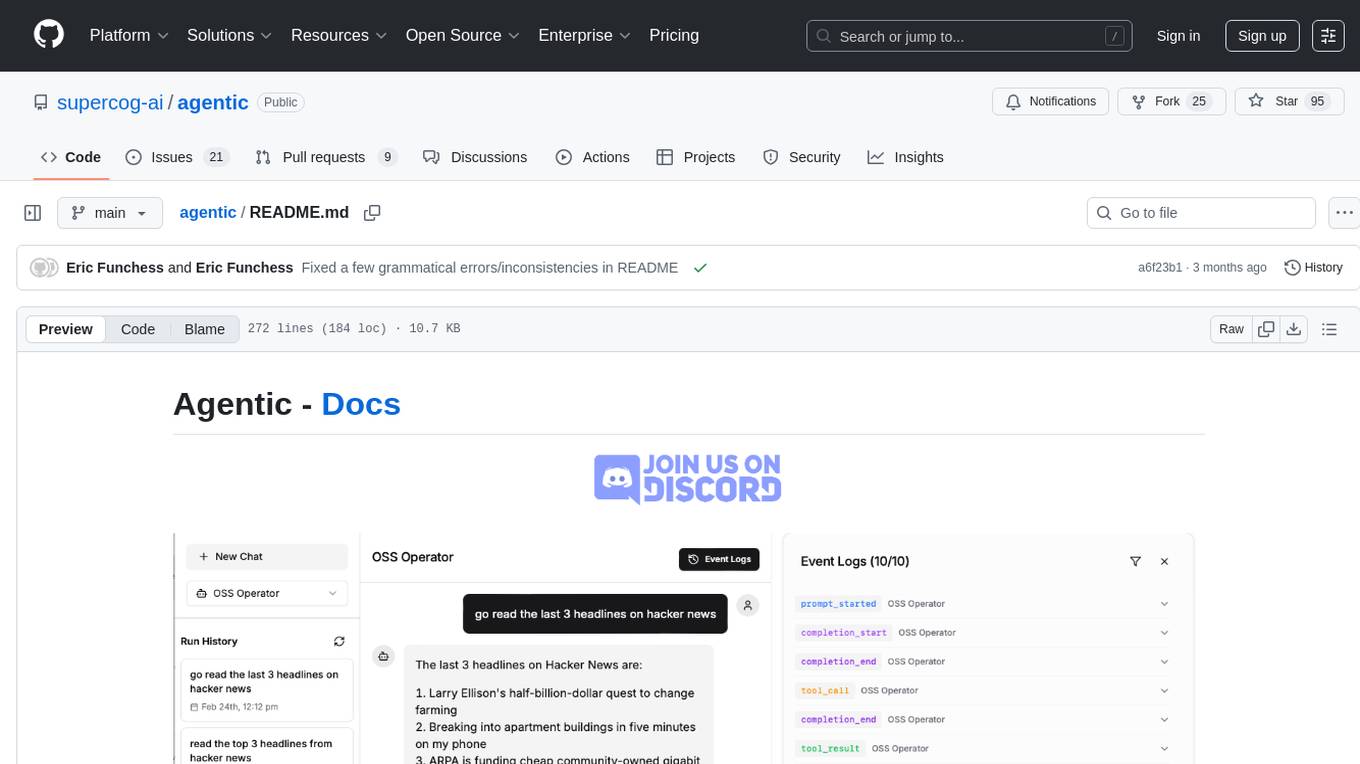

agentic

Agentic is a lightweight and flexible Python library for building multi-agent systems. It provides a simple and intuitive API for creating and managing agents, defining their behaviors, and simulating interactions in a multi-agent environment. With Agentic, users can easily design and implement complex agent-based models to study emergent behaviors, social dynamics, and decentralized decision-making processes. The library supports various agent architectures, communication protocols, and simulation scenarios, making it suitable for a wide range of research and educational applications in the fields of artificial intelligence, machine learning, social sciences, and robotics.

ms-agent

MS-Agent is a lightweight framework designed to empower agents with autonomous exploration capabilities. It provides a flexible and extensible architecture for creating agents capable of tasks like code generation, data analysis, and tool calling with MCP support. The framework supports multi-agent interactions, deep research, code generation, and is lightweight and extensible for various applications.

learn-claude-code

Learn Claude Code is an educational project by shareAI Lab that aims to help users understand how modern AI agents work by building one from scratch. The repository provides original educational material on various topics such as the agent loop, tool design, explicit planning, context management, knowledge injection, task systems, parallel execution, team messaging, and autonomous teams. Users can follow a learning path through different versions of the project, each introducing new concepts and mechanisms. The repository also includes technical tutorials, articles, and example skills for users to explore and learn from. The project emphasizes the philosophy that the model is crucial in agent development, with code playing a supporting role.

humanlayer

HumanLayer is a Python toolkit designed to enable AI agents to interact with humans in tool-based and asynchronous workflows. By incorporating humans-in-the-loop, agentic tools can access more powerful and meaningful tasks. The toolkit provides features like requiring human approval for function calls, human as a tool for contacting humans, omni-channel contact capabilities, granular routing, and support for various LLMs and orchestration frameworks. HumanLayer aims to ensure human oversight of high-stakes function calls, making AI agents more reliable and safe in executing impactful tasks.

langchain

LangChain is a framework for building LLM-powered applications that simplifies AI application development by chaining together interoperable components and third-party integrations. It helps developers connect LLMs to diverse data sources, swap models easily, and future-proof decisions as technology evolves. LangChain's ecosystem includes tools like LangSmith for agent evals, LangGraph for complex task handling, and LangGraph Platform for deployment and scaling. Additional resources include tutorials, how-to guides, conceptual guides, a forum, API reference, and chat support.

context-portal

Context-portal is a versatile tool for managing and visualizing data in a collaborative environment. It provides a user-friendly interface for organizing and sharing information, making it easy for teams to work together on projects. With features such as customizable dashboards, real-time updates, and seamless integration with popular data sources, Context-portal streamlines the data management process and enhances productivity. Whether you are a data analyst, project manager, or team leader, Context-portal offers a comprehensive solution for optimizing workflows and driving better decision-making.

trae-agent

Trae-agent is an LLM-based agent for general-purpose software engineering tasks. It provides a command-line interface that accepts natural-language instructions and carries out complex software engineering workflows using a set of tools and a choice of LLM providers.

sdk-python

Strands Agents is a lightweight, flexible SDK that takes a model-driven approach to building and running AI agents. It supports multiple model providers, offers advanced capabilities such as multi-agent systems and streaming, and includes built-in support for MCP servers. Users can define tools with Python decorators, connect MCP servers, and use different model providers for different AI tasks. The SDK is designed to scale from simple conversational assistants to complex autonomous workflows, which makes it suitable for a wide range of AI development needs.
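Decorator-based tool definition usually works by reading the function's name, docstring, and signature and recording them in a registry that the agent can describe to the model. The sketch below shows that mechanism with the standard `inspect` module; it is not the Strands API, and `register_tool` and `TOOL_REGISTRY` are hypothetical names.

```python
import inspect
from typing import Any, Callable

TOOL_REGISTRY: dict[str, dict[str, Any]] = {}


def register_tool(fn: Callable) -> Callable:
    """Hypothetical decorator: record fn's name, docstring, and parameter names."""
    TOOL_REGISTRY[fn.__name__] = {
        "description": inspect.getdoc(fn) or "",
        "parameters": list(inspect.signature(fn).parameters),
        "fn": fn,
    }
    return fn  # the function itself is returned unchanged


@register_tool
def word_count(text: str) -> int:
    """Count the words in a piece of text."""
    return len(text.split())


spec = TOOL_REGISTRY["word_count"]
print(spec["description"])                # Count the words in a piece of text.
print(spec["parameters"])                 # ['text']
print(spec["fn"]("model driven agents"))  # 3
```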

deer-flow

DeerFlow is a community-driven Deep Research framework that combines language models with specialized tools for tasks such as web search, crawling, and Python code execution. It supports FaaS deployment and one-click deployment on Volcengine. Its core capabilities include LLM integration, search and retrieval, RAG integration, seamless MCP integration, human collaboration, report post-editing, and content creation. The architecture is a modular multi-agent system with components such as a Coordinator, a Planner, and a Research Team, along with Text-to-Speech integration. DeerFlow also supports an interactive mode, a human-in-the-loop mechanism, and command-line arguments for customization.
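The Coordinator, Planner, and Research Team division of labor, with a human-in-the-loop checkpoint, can be sketched as plain functions. This is an illustrative sketch of the pattern, not DeerFlow's code: `planner`, `research_team`, `write_report`, and `coordinator` are hypothetical, and each stub marks where a real system would call an LLM or a tool.

```python
from typing import Callable


def planner(question: str) -> list[str]:
    # Hypothetical planner: a real system would ask an LLM to draft these steps.
    return [f"search the web for {question}", f"summarize sources on {question}"]


def research_team(step: str) -> str:
    # Hypothetical worker: a real system would call search, crawl, or code tools here.
    return f"notes from '{step}'"


def write_report(question: str, notes: list[str]) -> str:
    return f"Report: {question}\n" + "\n".join(f"- {note}" for note in notes)


def coordinator(question: str,
                approve_plan: Callable[[list[str]], bool] = lambda plan: True) -> str:
    plan = planner(question)
    if not approve_plan(plan):  # human-in-the-loop checkpoint before research starts
        return "plan rejected"
    notes = [research_team(step) for step in plan]
    return write_report(question, notes)


print(coordinator("solid-state batteries"))
```

Putting the approval check between planning and research lets a person correct the plan before any search or crawl work is spent on it.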

MeeseeksAI

MeeseeksAI is a framework for orchestrating AI agents using a mermaid graph and networkx. It provides a structured way to manage and coordinate multiple AI agents within a system: users define the interactions and dependencies between agents as a visual mermaid graph, which makes the system's behavior easier to understand and modify. MeeseeksAI uses networkx for the underlying graph computations, and its flexible architecture aims to simplify building and deploying complex multi-agent systems.
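The graph-driven orchestration described above comes down to two steps: turn the diagram's edges into a dependency map, then run each agent only after all of its upstream agents have finished. The sketch uses the standard library's `graphlib.TopologicalSorter` in place of networkx so that it has no dependencies. It parses only plain `A --> B` mermaid lines (an assumption), and the agent names and lambdas are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable

# Illustrative mermaid flowchart (assumption): only plain "A --> B" edges are parsed.
MERMAID = """graph TD
    planner --> researcher
    researcher --> writer
    writer --> reviewer"""


def parse_edges(src: str) -> dict[str, set[str]]:
    """Map each node to the set of nodes that must run before it."""
    deps: dict[str, set[str]] = {}
    for line in src.splitlines()[1:]:  # skip the "graph TD" header
        source, target = (part.strip() for part in line.split("-->"))
        deps.setdefault(target, set()).add(source)
    return deps


def run_graph(deps: dict[str, set[str]],
              agents: dict[str, Callable[[list[str]], str]]) -> dict[str, str]:
    outputs: dict[str, str] = {}
    # static_order() yields every node after all of its predecessors.
    for name in TopologicalSorter(deps).static_order():
        upstream = [outputs[d] for d in sorted(deps.get(name, set()))]
        outputs[name] = agents[name](upstream)
    return outputs


agents = {
    "planner": lambda up: "plan",
    "researcher": lambda up: f"research({up[0]})",
    "writer": lambda up: f"draft({up[0]})",
    "reviewer": lambda up: f"review({up[0]})",
}
print(run_graph(parse_edges(MERMAID), agents)["reviewer"])  # review(draft(research(plan)))
```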

MaIN.NET

MaIN.NET (Modular Artificial Intelligence Network) is a versatile .NET package designed to streamline the integration of large language models (LLMs) into advanced AI workflows. It offers a flexible and robust foundation for developing chatbots, automating processes, and exploring innovative AI techniques. The package connects diverse AI methods into one unified ecosystem, empowering developers with a low-code philosophy to create powerful AI applications with ease.

awesome-ai-agent-papers

This repository contains a curated list of papers related to artificial intelligence agents. It includes research papers, articles, and resources covering various aspects of AI agents, such as reinforcement learning, multi-agent systems, natural language processing, and more. Whether you are a researcher, student, or practitioner in the field of AI, this collection of papers can serve as a valuable reference to stay updated with the latest advancements and trends in AI agent technologies.

For similar tasks

Awesome-Segment-Anything

Awesome-Segment-Anything is a powerful tool for segmenting and extracting information from various types of data. It provides a user-friendly interface to easily define segmentation rules and apply them to text, images, and other data formats. The tool supports both supervised and unsupervised segmentation methods, allowing users to customize the segmentation process based on their specific needs. With its versatile functionality and intuitive design, Awesome-Segment-Anything is ideal for data analysts, researchers, content creators, and anyone looking to efficiently extract valuable insights from complex datasets.

Time-LLM

Time-LLM is a reprogramming framework that repurposes large language models (LLMs) for time series forecasting. It treats time series analysis as a 'language task' so that pre-trained LLMs can be used for forecasting: time series data is reprogrammed into text representations, and declarative prompts guide the LLM's reasoning. Time-LLM supports various backbone models, such as Llama-7B, GPT-2, and BERT, which gives flexibility in model selection.

crewAI

CrewAI is a cutting-edge framework designed to orchestrate role-playing autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks. It enables AI agents to assume roles, share goals, and operate in a cohesive unit, much like a well-oiled crew. Whether you're building a smart assistant platform, an automated customer service ensemble, or a multi-agent research team, CrewAI provides the backbone for sophisticated multi-agent interactions. With features like role-based agent design, autonomous inter-agent delegation, flexible task management, and support for various LLMs, CrewAI offers a dynamic and adaptable solution for both development and production workflows.

Transformers_And_LLM_Are_What_You_Dont_Need

Transformers_And_LLM_Are_What_You_Dont_Need is a repository that explores the limitations of transformers in time series forecasting. It contains a collection of papers, articles, and theses discussing the effectiveness of transformers and LLMs in this domain. The repository aims to provide insights into why transformers may not be the best choice for time series forecasting tasks.

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package for time series forecasting with state-of-the-art network architectures. It offers a high-level API for training networks on pandas DataFrames and uses PyTorch Lightning for scalable training on GPUs and CPUs. The package aims to simplify time series forecasting with neural networks by providing a flexible API for professionals and default settings for beginners. It includes a time series dataset class, a base model class, multiple neural network architectures, multi-horizon time series metrics, and hyperparameter tuning with Optuna.
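A multi-horizon metric reports the error separately for each step ahead, rather than as one number, which shows how accuracy decays as the forecast reaches further out. The sketch computes a per-step mean absolute error in plain Python. It illustrates the concept only and does not use the package's metric classes; `mae_per_horizon` is a hypothetical helper and the numbers are made up.

```python
def mae_per_horizon(actuals: list[list[float]],
                    predictions: list[list[float]]) -> list[float]:
    """Mean absolute error at each forecast step, averaged over all forecast windows."""
    horizon = len(actuals[0])
    totals = [0.0] * horizon
    for actual, predicted in zip(actuals, predictions):
        for h in range(horizon):
            totals[h] += abs(actual[h] - predicted[h])
    return [total / len(actuals) for total in totals]


# Two illustrative 3-step forecast windows (made-up numbers).
actuals = [[10, 12, 14], [20, 21, 22]]
predictions = [[11, 12, 10], [20, 23, 25]]
print(mae_per_horizon(actuals, predictions))  # [0.5, 1.0, 3.5]
```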

spider

Spider is a high-performance web crawler and indexer designed to handle data curation workloads efficiently. It offers features such as concurrency, streaming, decentralization, headless Chrome rendering, HTTP proxies, cron jobs, subscriptions, smart mode, blacklisting, whitelisting, depth budgeting, dynamic AI prompt scripting, CSS scraping, and more. Users can get started with the hosted Spider Cloud service or set up a local installation with spider-cli. The tool also integrates with Node.js and Python for additional flexibility. With its focus on speed and scalability, Spider is well suited to extracting and organizing data from the web.
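Depth budgeting and blacklisting can be illustrated together with a breadth-first crawl that stops following links once a page sits at the maximum depth and skips any URL that matches a blacklist pattern. This sketch is not Spider's API: `crawl` is hypothetical, and an in-memory link map stands in for fetching real pages.

```python
import re
from collections import deque


def crawl(start: str, links: dict[str, list[str]], max_depth: int,
          blacklist: tuple[str, ...] = ()) -> list[str]:
    """Breadth-first crawl that stops expanding at max_depth and skips blacklisted URLs."""
    patterns = [re.compile(p) for p in blacklist]
    seen = {start}
    visited = []
    queue = deque([(start, 0)])
    while queue:
        url, depth = queue.popleft()
        visited.append(url)  # a real crawler would fetch and index the page here
        if depth == max_depth:
            continue  # depth budget spent: do not follow this page's links
        for nxt in links.get(url, []):
            if nxt in seen or any(p.search(nxt) for p in patterns):
                continue
            seen.add(nxt)
            queue.append((nxt, depth + 1))
    return visited


# Illustrative in-memory link graph standing in for fetched pages.
SITE = {
    "/": ["/docs", "/blog", "/admin"],
    "/docs": ["/docs/api", "/"],
    "/docs/api": ["/docs/api/v1"],
}
print(crawl("/", SITE, max_depth=2, blacklist=(r"^/admin",)))
# ['/', '/docs', '/blog', '/docs/api']
```

The `seen` set keeps the cycle between `/` and `/docs` from being followed twice.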

AI_for_Science_paper_collection

AI for Science paper collection is an initiative by the AI for Science Community to collect papers in AI for Science areas and categorize them by subject, year, venue, and keyword. The repository contains `.csv` files of paper lists labeled with keys such as `Title`, `Conference`, `Type`, `Application`, `MLTech`, and `OpenReviewLink`, and it covers top conferences such as ICML, NeurIPS, and ICLR. Volunteers can contribute by updating existing `.csv` files or adding new ones for conferences or years not yet covered. The initiative aims to track the growing number of AI for Science papers and to analyze trends across applications.
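Because the paper lists are `.csv` files with named columns, trend questions such as "how many papers per venue?" take only a few lines with the standard `csv` module. The sketch assumes a header row using the keys listed above; `papers_per_conference` is a hypothetical helper, and the sample rows are invented placeholders rather than real papers. For a real file, pass `open(path, newline="")` to `csv.DictReader` instead of the `StringIO` wrapper.

```python
import csv
import io
from collections import Counter
from typing import Optional


def papers_per_conference(csv_text: str, application: Optional[str] = None) -> Counter:
    """Count rows per `Conference`, optionally keeping only one `Application`."""
    counts: Counter = Counter()
    for row in csv.DictReader(io.StringIO(csv_text)):
        if application is None or row["Application"] == application:
            counts[row["Conference"]] += 1
    return counts


# Invented rows for illustration; the column names follow the repository's keys.
SAMPLE = """Title,Conference,Type,Application,MLTech,OpenReviewLink
Paper A,ICML,Poster,Chemistry,GNN,
Paper B,NeurIPS,Oral,Biology,Diffusion,
Paper C,ICML,Poster,Biology,Transformer,
"""
print(papers_per_conference(SAMPLE))  # Counter({'ICML': 2, 'NeurIPS': 1})
print(papers_per_conference(SAMPLE, application="Biology"))
```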

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package designed for state-of-the-art timeseries forecasting using deep learning architectures. It offers a high-level API and leverages PyTorch Lightning for efficient training on GPU or CPU with automatic logging. The package aims to simplify timeseries forecasting tasks by providing a flexible API for professionals and user-friendly defaults for beginners. It includes features such as a timeseries dataset class for handling data transformations, missing values, and subsampling, various neural network architectures optimized for real-world deployment, multi-horizon timeseries metrics, and hyperparameter tuning with optuna. Built on pytorch-lightning, it supports training on CPUs, single GPUs, and multiple GPUs out-of-the-box.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.
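Logging the inputs, outputs, and traces of language model calls is often done by wrapping each operation in a decorator that records a trace entry. The sketch below shows that pattern with an in-memory list. It is not Weave's API: `traced`, `TRACE`, and `fake_llm` are hypothetical, and a real toolkit would persist and organize these records instead of keeping them in a Python list.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # in-memory trace log (assumption)


def traced(fn: Callable) -> Callable:
    """Hypothetical decorator: record the inputs, output, and latency of each call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        result = fn(*args, **kwargs)
        TRACE.append({
            "op": fn.__name__,
            "inputs": {"args": args, "kwargs": kwargs},
            "output": result,
            "seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


@traced
def fake_llm(prompt: str) -> str:
    return prompt[::-1]  # stand-in for a model call (assumption)


fake_llm("abc")
print(TRACE[-1]["op"], TRACE[-1]["output"])  # fake_llm cba
```

As written, a call that raises an exception is not recorded. Capturing failures as well would need a `try`/`finally` around the wrapped call.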

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API that provides access to over 100 different AI models, covering media from images to sound.

kaito

Kaito is an operator that automates the deployment of AI/ML inference models in a Kubernetes cluster. It manages large model files using container images, provides preset configurations so that deployment parameters do not have to be tuned to fit the GPU hardware, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) when the license allows. Kaito greatly simplifies the workflow of onboarding large AI inference models into Kubernetes.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI red teaming tasks so that operators can focus on more complicated and time-consuming work, and it can identify security harms such as misuse (e.g., malware generation, jailbreaking) and privacy harms (e.g., identity theft). The goal is to give researchers a baseline of how well their model and entire inference pipeline perform against different harm categories, and to let them compare that baseline with future iterations of their model. This gives them empirical data on how well their model performs today and lets them detect any degradation in later iterations.
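Comparing a baseline with a later iteration can be as simple as computing an attack success rate for each harm category and flagging the categories that got worse. This sketch shows only that bookkeeping and is not PyRIT's API: `success_rate` and `find_regressions` are hypothetical helpers, and all rates and outcomes below are illustrative numbers, not real measurements.

```python
def success_rate(outcomes: list[bool]) -> float:
    """Fraction of attack attempts that succeeded (True = harmful output produced)."""
    return sum(outcomes) / len(outcomes) if outcomes else 0.0


def find_regressions(baseline: dict[str, float], current: dict[str, float],
                     tolerance: float = 0.0) -> dict[str, tuple[float, float]]:
    """Harm categories whose success rate rose more than `tolerance` above baseline."""
    return {
        category: (baseline[category], rate)
        for category, rate in current.items()
        if category in baseline and rate > baseline[category] + tolerance
    }


# Illustrative numbers only, not real measurements.
baseline = {"jailbreak": 0.10, "malware": 0.02, "privacy": 0.05}
current = {
    "jailbreak": success_rate([True] + [False] * 9),       # 1 of 10 attempts
    "malware": success_rate([True, True] + [False] * 18),  # 2 of 20 attempts
    "privacy": 0.05,
}
print(find_regressions(baseline, current, tolerance=0.01))  # {'malware': (0.02, 0.1)}
```

The `tolerance` parameter keeps small run-to-run noise from being reported as a regression.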

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:
- Self-contained, with no need for a DBMS or cloud service.
- OpenAPI interface, easy to integrate with existing infrastructure (e.g., a Cloud IDE).
- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.