moon-bot

WhatsApp Bot use Baileys

Stars: 51

Moon Bot is a free script that utilizes the AlyaChan-APIs. It requires a server with specific specifications, NodeJS, FFMPEG, WhatsApp, and an API key. The script can be deployed on platforms like Heroku, VPS/RDP DigitalOcean, VPS NAT HostData, and Panel Optiklink. It supports databases like MongoDB, PostgreSQL Supabase, and PostgreSQL/MongoDB Railway for testing. Users can configure the script through .env, config.json, and config.js files. Installation and running instructions are provided for different environments. The script supports plugins and events, and external session management is possible. Moon Bot is under development and receives regular updates.

README:

This script is 100% free, which uses the api from AlyaChan-APIs

Group : Community

- [x] Server vCPU/RAM 1/1GB (Min)

- [x] NodeJS

- [x] FFMPEG

- [x] WhatsApp

- [x] Apikey

- [x] Heroku (Recommended)

- [x] VPS/RDP DigitalOcean

- [x] VPS NAT HostData

- [x] Panel Optiklink

Note :

- Do not use fake panels, usually have bocil JB, use trusted panels, there is a price there is quality

- Jangan menggunakan panel abal abal, biasanya punya bocil JB, gunakanlah panel yang terpercaya, ada harga ada kualitas

There are 3 files that can be changed .env, config.json and config.js

DATABASE_URL = ''

API_ENDPOINT = 'https://api.alyachan.dev/'

API_KEY = 'yourkey'{

"owner": "6281252848955",

"owner_name": "Contact Support",

"limit": "10",

"multiplier": "250",

"min_reward": 5000,

"max_reward": 20000,

"ram_limit": "1.2GB",

"max_upload": 150,

"max_upload_free": 40,

"timer": 120000,

"timeout": 1800000,

"evaluate_chars": ["=>", ">", "$", "~>", "!", "+", "/", "#", "."],

"pairing": {

"state": false,

"number": 62000

}

}global.header = `moon-bot v${require('../../package.json').version}`

global.footer = Func.Styles('simple whatsapp bot made by moon')Install buildpack

- Heroku/Nodejs

- Heroku/Python

- https://github.com/jonathanong/heroku-buildpack-ffmpeg-latest.git

- https://github.com/DuckyTeam/heroku-buildpack-imagemagick.git

- Download and install Git here

- Download and install NodeJS here

- Download and install FFMPEG here

- Download and install ImageMagick here

$ bash install.sh

$ git clone https://github.com/rifnd/moon-bot

$ cd moon-bot

$ npm install

$ node .$ pm2 start index.js --name "mybot"{

"pairing": {

"state": false, /** true to enable, false to disable */

"number": 62000 /** here's the bot number */

},

}Note :

- Works with old and new plugins

- Bisa untuk plugin lama dan plugin baru

module.exports = {

help: ['display'],

tags: ['category'],

command: ['command'],

run: async (m, {

conn,

usedPrefix,

prefixes,

command,

args,

text,

body,

plugins,

Scraper,

Func

}) => {

const raimu = require('jancok')

console.log(raimu.asu)

},

limit: Boolean,

premium: Boolean,

group: Boolean,

private: Boolean,

owner: Boolean,

admin: Boolean,

botAdmin: Boolean,

register: Boolean

}module.exports = {

async before(m, {

conn,

Scraper,

Func

}) {

try {

const raimu = require('jancok')

console.log(raimu.ancok)

} catch (e) {

console.log(e)

}

return true

}

}Install depedency

$ npm install session@github:neoxr/session#mongodb in main.js

const { useMongoAuthState } = require('session')

const conn = new Connection({

directory: 'plugins',

session: useMongoAuthState(process.env.DATABASE_URL, 'session'),

online: false,

browser: ['Ubuntu', 'Chrome', '20.0.04']

})This script is still in development and will continue to be updated, keep an eye on this repository, don't forget to give stars and forks

Adios.....

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for moon-bot

Similar Open Source Tools

moon-bot

Moon Bot is a free script that utilizes the AlyaChan-APIs. It requires a server with specific specifications, NodeJS, FFMPEG, WhatsApp, and an API key. The script can be deployed on platforms like Heroku, VPS/RDP DigitalOcean, VPS NAT HostData, and Panel Optiklink. It supports databases like MongoDB, PostgreSQL Supabase, and PostgreSQL/MongoDB Railway for testing. Users can configure the script through .env, config.json, and config.js files. Installation and running instructions are provided for different environments. The script supports plugins and events, and external session management is possible. Moon Bot is under development and receives regular updates.

aio-scrapy

Aio-scrapy is an asyncio-based web crawling and web scraping framework inspired by Scrapy. It supports distributed crawling/scraping, implements compatibility with scrapyd, and provides options for using redis queue and rabbitmq queue. The framework is designed for fast extraction of structured data from websites. Aio-scrapy requires Python 3.9+ and is compatible with Linux, Windows, macOS, and BSD systems.

klavis

Klavis AI is a production-ready solution for managing Multiple Communication Protocol (MCP) servers. It offers self-hosted solutions and a hosted service with enterprise OAuth support. With Klavis AI, users can easily deploy and manage over 50 MCP servers for various services like GitHub, Gmail, Google Sheets, YouTube, Slack, and more. The tool provides instant access to MCP servers, seamless authentication, and integration with AI frameworks, making it ideal for individuals and businesses looking to streamline their communication and data management workflows.

langgraph-redis

LangGraph Redis is a repository containing Redis implementations for LangGraph, offering Checkpoint Savers and Stores functionality. It consists of Redis Checkpoint Savers for storing and managing checkpoints, Redis Stores for key-value stores with vector search capabilities, and Redis Middleware for semantic caching, tool caching, and conversation memory. The project has dependencies on Python packages like redis, redisvl, and langgraph-checkpoint. It requires Redis with RedisJSON and RediSearch modules for search and indexing capabilities. The repository provides detailed instructions for installation, usage of Redis Checkpoint Savers, Async Implementation, Shallow Implementations, Redis Checkpoint TTL Support, Redis Stores, and Redis Middleware for LangChain Agents. It also includes example notebooks for various use cases and implementation details on Redis module usage, indexing, TTL implementation, and contribution guidelines.

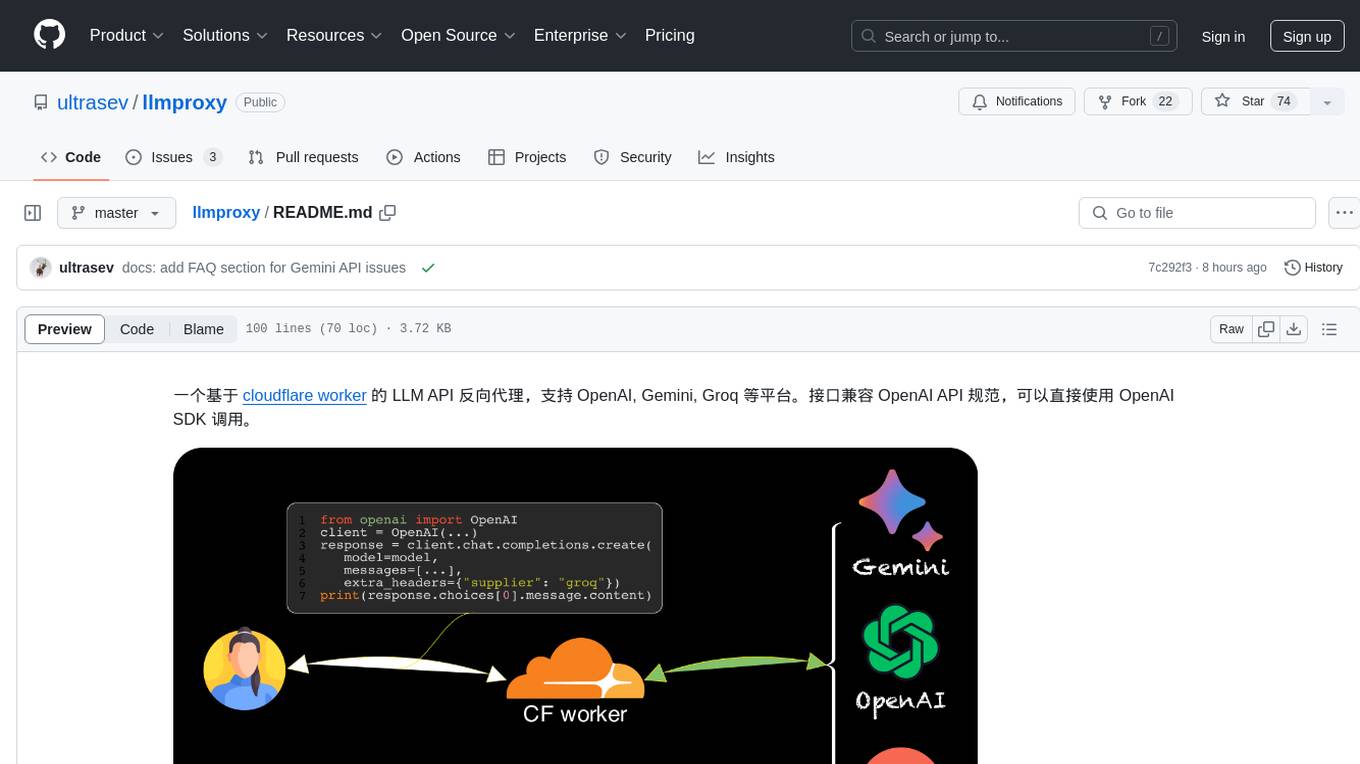

llmproxy

llmproxy is a reverse proxy for LLM API based on Cloudflare Worker, supporting platforms like OpenAI, Gemini, and Groq. The interface is compatible with the OpenAI API specification and can be directly accessed using the OpenAI SDK. It provides a convenient way to interact with various AI platforms through a unified API endpoint, enabling seamless integration and usage in different applications.

mcp-framework

MCP-Framework is a TypeScript framework for building Model Context Protocol (MCP) servers with automatic directory-based discovery for tools, resources, and prompts. It provides powerful abstractions, simple server setup, and a CLI for rapid development and project scaffolding.

acte

Acte is a framework designed to build GUI-like tools for AI Agents. It aims to address the issues of cognitive load and freedom degrees when interacting with multiple APIs in complex scenarios. By providing a graphical user interface (GUI) for Agents, Acte helps reduce cognitive load and constraints interaction, similar to how humans interact with computers through GUIs. The tool offers APIs for starting new sessions, executing actions, and displaying screens, accessible via HTTP requests or the SessionManager class.

firecrawl

Firecrawl is an API service that empowers AI applications with clean data from any website. It features advanced scraping, crawling, and data extraction capabilities. The repository is still in development, integrating custom modules into the mono repo. Users can run it locally but it's not fully ready for self-hosted deployment yet. Firecrawl offers powerful capabilities like scraping, crawling, mapping, searching, and extracting structured data from single pages, multiple pages, or entire websites with AI. It supports various formats, actions, and batch scraping. The tool is designed to handle proxies, anti-bot mechanisms, dynamic content, media parsing, change tracking, and more. Firecrawl is available as an open-source project under the AGPL-3.0 license, with additional features offered in the cloud version.

superagent

Superagent is an open-source AI assistant framework and API that allows developers to add powerful AI assistants to their applications. These assistants use large language models (LLMs), retrieval augmented generation (RAG), and generative AI to help users with a variety of tasks, including question answering, chatbot development, content generation, data aggregation, and workflow automation. Superagent is backed by Y Combinator and is part of YC W24.

mcp-hub

MCP Hub is a centralized manager for Model Context Protocol (MCP) servers, offering dynamic server management and monitoring, REST API for tool execution and resource access, MCP Server marketplace integration, real-time server status tracking, client connection management, and process lifecycle handling. It acts as a central management server connecting to and managing multiple MCP servers, providing unified API endpoints for client access, handling server lifecycle and health monitoring, and routing requests between clients and MCP servers.

python-utcp

The Universal Tool Calling Protocol (UTCP) is a secure and scalable standard for defining and interacting with tools across various communication protocols. UTCP emphasizes scalability, extensibility, interoperability, and ease of use. It offers a modular core with a plugin-based architecture, making it extensible, testable, and easy to package. The repository contains the complete UTCP Python implementation with core components and protocol-specific plugins for HTTP, CLI, Model Context Protocol, file-based tools, and more.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

ai-wechat-bot

Gewechat is a project based on the Gewechat project to implement a personal WeChat channel, using the iPad protocol for login. It can obtain wxid and send voice messages, which is more stable than the itchat protocol. The project provides documentation for the API. Users can deploy the Gewechat service and use the ai-wechat-bot project to interface with it. Configuration parameters for Gewechat and ai-wechat-bot need to be set in the config.json file. Gewechat supports sending voice messages, with limitations on the duration of received voice messages. The project has restrictions such as requiring the server to be in the same province as the device logging into WeChat, limited file download support, and support only for text and image messages.

airtable

A simple Golang package to access the Airtable API. It provides functionalities to interact with Airtable such as initializing client, getting tables, listing records, adding records, updating records, deleting records, and bulk deleting records. The package is compatible with Go 1.13 and above.

gp.nvim

Gp.nvim (GPT prompt) Neovim AI plugin provides a seamless integration of GPT models into Neovim, offering features like streaming responses, extensibility via hook functions, minimal dependencies, ChatGPT-like sessions, instructable text/code operations, speech-to-text support, and image generation directly within Neovim. The plugin aims to enhance the Neovim experience by leveraging the power of AI models in a user-friendly and native way.

Bindu

Bindu is an operating layer for AI agents that provides identity, communication, and payment capabilities. It delivers a production-ready service with a convenient API to connect, authenticate, and orchestrate agents across distributed systems using open protocols: A2A, AP2, and X402. Built with a distributed architecture, Bindu makes it fast to develop and easy to integrate with any AI framework. Transform any agent framework into a fully interoperable service for communication, collaboration, and commerce in the Internet of Agents.

For similar tasks

Forza-Mods-AIO

Forza Mods AIO is a free and open-source tool that enhances the gaming experience in Forza Horizon 4 and 5. It offers a range of time-saving and quality-of-life features, making gameplay more enjoyable and efficient. The tool is designed to streamline various aspects of the game, improving user satisfaction and overall enjoyment.

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

crawl4ai

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

MaterialSearch

MaterialSearch is a tool for searching local images and videos using natural language. It provides functionalities such as text search for images, image search for images, text search for videos (providing matching video clips), image search for videos (searching for the segment in a video through a screenshot), image-text similarity calculation, and Pexels video search. The tool can be deployed through the source code or Docker image, and it supports GPU acceleration. Users can configure the tool through environment variables or a .env file. The tool is still under development, and configurations may change frequently. Users can report issues or suggest improvements through issues or pull requests.

tenere

Tenere is a TUI interface for Language Model Libraries (LLMs) written in Rust. It provides syntax highlighting, chat history, saving chats to files, Vim keybindings, copying text from/to clipboard, and supports multiple backends. Users can configure Tenere using a TOML configuration file, set key bindings, and use different LLMs such as ChatGPT, llama.cpp, and ollama. Tenere offers default key bindings for global and prompt modes, with features like starting a new chat, saving chats, scrolling, showing chat history, and quitting the app. Users can interact with the prompt in different modes like Normal, Visual, and Insert, with various key bindings for navigation, editing, and text manipulation.

openkore

OpenKore is a custom client and intelligent automated assistant for Ragnarok Online. It is a free, open source, and cross-platform program (Linux, Windows, and MacOS are supported). To run OpenKore, you need to download and extract it or clone the repository using Git. Configure OpenKore according to the documentation and run openkore.pl to start. The tool provides a FAQ section for troubleshooting, guidelines for reporting issues, and information about botting status on official servers. OpenKore is developed by a global team, and contributions are welcome through pull requests. Various community resources are available for support and communication. Users are advised to comply with the GNU General Public License when using and distributing the software.

QA-Pilot

QA-Pilot is an interactive chat project that leverages online/local LLM for rapid understanding and navigation of GitHub code repository. It allows users to chat with GitHub public repositories using a git clone approach, store chat history, configure settings easily, manage multiple chat sessions, and quickly locate sessions with a search function. The tool integrates with `codegraph` to view Python files and supports various LLM models such as ollama, openai, mistralai, and localai. The project is continuously updated with new features and improvements, such as converting from `flask` to `fastapi`, adding `localai` API support, and upgrading dependencies like `langchain` and `Streamlit` to enhance performance.

extension-gen-ai

The Looker GenAI Extension provides code examples and resources for building a Looker Extension that integrates with Vertex AI Large Language Models (LLMs). Users can leverage the power of LLMs to enhance data exploration and analysis within Looker. The extension offers generative explore functionality to ask natural language questions about data and generative insights on dashboards to analyze data by asking questions. It leverages components like BQML Remote Models, BQML Remote UDF with Vertex AI, and Custom Fine Tune Model for different integration options. Deployment involves setting up infrastructure with Terraform and deploying the Looker Extension by creating a Looker project, copying extension files, configuring BigQuery connection, connecting to Git, and testing the extension. Users can save example prompts and configure user settings for the extension. Development of the Looker Extension environment includes installing dependencies, starting the development server, and building for production.

For similar jobs

ChatFAQ

ChatFAQ is an open-source comprehensive platform for creating a wide variety of chatbots: generic ones, business-trained, or even capable of redirecting requests to human operators. It includes a specialized NLP/NLG engine based on a RAG architecture and customized chat widgets, ensuring a tailored experience for users and avoiding vendor lock-in.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

anything-llm

AnythingLLM is a full-stack application that enables you to turn any document, resource, or piece of content into context that any LLM can use as references during chatting. This application allows you to pick and choose which LLM or Vector Database you want to use as well as supporting multi-user management and permissions.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.

glide

Glide is a cloud-native LLM gateway that provides a unified REST API for accessing various large language models (LLMs) from different providers. It handles LLMOps tasks such as model failover, caching, key management, and more, making it easy to integrate LLMs into applications. Glide supports popular LLM providers like OpenAI, Anthropic, Azure OpenAI, AWS Bedrock (Titan), Cohere, Google Gemini, OctoML, and Ollama. It offers high availability, performance, and observability, and provides SDKs for Python and NodeJS to simplify integration.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

onnxruntime-genai

ONNX Runtime Generative AI is a library that provides the generative AI loop for ONNX models, including inference with ONNX Runtime, logits processing, search and sampling, and KV cache management. Users can call a high level `generate()` method, or run each iteration of the model in a loop. It supports greedy/beam search and TopP, TopK sampling to generate token sequences, has built in logits processing like repetition penalties, and allows for easy custom scoring.