crawl4ai

🚀🤖 Crawl4AI: Open-source LLM Friendly Web Crawler & Scraper. Don't be shy, join here: https://discord.gg/jP8KfhDhyN

Stars: 60426

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

README:

Reliable, large-scale web extraction, now built to be drastically more cost-effective than any of the existing solutions.

👉 Apply here for early access

We’ll be onboarding in phases and working closely with early users.

Limited slots.

Crawl4AI turns the web into clean, LLM ready Markdown for RAG, agents, and data pipelines. Fast, controllable, battle tested by a 50k+ star community.

✨ Check out latest update v0.8.0

✨ New in v0.8.0: Crash Recovery & Prefetch Mode! Deep crawl crash recovery with resume_state and on_state_change callbacks for long-running crawls. New prefetch=True mode for 5-10x faster URL discovery. Critical security fixes for Docker API (hooks disabled by default, file:// URLs blocked). Release notes →

✨ Recent v0.7.8: Stability & Bug Fix Release! 11 bug fixes addressing Docker API issues, LLM extraction improvements, URL handling fixes, and dependency updates. Release notes →

✨ Previous v0.7.7: Complete Self-Hosting Platform with Real-time Monitoring! Enterprise-grade monitoring dashboard, comprehensive REST API, WebSocket streaming, and smart browser pool management. Release notes →

🤓 My Personal Story

I grew up on an Amstrad, thanks to my dad, and never stopped building. In grad school I specialized in NLP and built crawlers for research. That’s where I learned how much extraction matters.

In 2023, I needed web-to-Markdown. The “open source” option wanted an account, API token, and $16, and still under-delivered. I went turbo anger mode, built Crawl4AI in days, and it went viral. Now it’s the most-starred crawler on GitHub.

I made it open source for availability: anyone can use it without a gate. Now I’m building the platform for affordability: anyone can run serious crawls without breaking the bank. If that resonates, join in, send feedback, or just crawl something amazing.

Why developers pick Crawl4AI

- LLM ready output, smart Markdown with headings, tables, code, citation hints

- Fast in practice, async browser pool, caching, minimal hops

- Full control, sessions, proxies, cookies, user scripts, hooks

- Adaptive intelligence, learns site patterns, explores only what matters

- Deploy anywhere, zero keys, CLI and Docker, cloud friendly

- Install Crawl4AI:

# Install the package

pip install -U crawl4ai

# For pre release versions

pip install crawl4ai --pre

# Run post-installation setup

crawl4ai-setup

# Verify your installation

crawl4ai-doctor

If you encounter any browser-related issues, you can install the browser manually:

python -m playwright install --with-deps chromium

- Run a simple web crawl with Python:

import asyncio
from crawl4ai import *

async def main():
    async with AsyncWebCrawler() as crawler:
        result = await crawler.arun(
            url="https://www.nbcnews.com/business",
        )
        print(result.markdown)

if __name__ == "__main__":
    asyncio.run(main())

- Or use the new command-line interface:

# Basic crawl with markdown output

crwl https://www.nbcnews.com/business -o markdown

# Deep crawl with BFS strategy, max 10 pages

crwl https://docs.crawl4ai.com --deep-crawl bfs --max-pages 10

# Use LLM extraction with a specific question

crwl https://www.example.com/products -q "Extract all product prices"

🎉 Sponsorship Program Now Open! After powering 51K+ developers and 1 year of growth, Crawl4AI is launching dedicated support for startups and enterprises. Be among the first 50 Founding Sponsors for permanent recognition in our Hall of Fame.

Crawl4AI is the #1 trending open-source web crawler on GitHub. Your support keeps it independent, innovative, and free for the community — while giving you direct access to premium benefits.

- 🌱 Believer ($5/mo) — Join the movement for data democratization

- 🚀 Builder ($50/mo) — Priority support & early access to features

- 💼 Growing Team ($500/mo) — Bi-weekly syncs & optimization help

- 🏢 Data Infrastructure Partner ($2000/mo) — Full partnership with dedicated support

Custom arrangements available - see SPONSORS.md for details & contact

Why sponsor?

No rate-limited APIs. No lock-in. Build and own your data pipeline with direct guidance from the creator of Crawl4AI.

📝 Markdown Generation

- 🧹 Clean Markdown: Generates clean, structured Markdown with accurate formatting.

- 🎯 Fit Markdown: Heuristic-based filtering to remove noise and irrelevant parts for AI-friendly processing.

- 🔗 Citations and References: Converts page links into a numbered reference list with clean citations.

- 🛠️ Custom Strategies: Users can create their own Markdown generation strategies tailored to specific needs.

- 📚 BM25 Algorithm: Employs BM25-based filtering for extracting core information and removing irrelevant content.
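To make the BM25-based filtering idea above concrete, here is a minimal, standard-library sketch of Okapi BM25 scoring over text chunks. It is an illustration of the general technique only, not Crawl4AI's implementation; the names `tokenize`, `bm25_scores`, and `filter_chunks`, and the defaults `k1=1.5`, `b=0.75`, are assumptions made for this example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and keep alphanumeric runs as tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_scores(query: str, chunks: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each chunk against the query with the Okapi BM25 formula."""
    if not chunks:
        return []
    docs = [tokenize(c) for c in chunks]
    n = len(docs)
    avg_len = (sum(len(d) for d in docs) / n) or 1.0
    # Document frequency: number of chunks that contain each term.
    df = Counter(term for d in docs for term in set(d))
    query_terms = tokenize(query)
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for term in query_terms:
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append(score)
    return scores


def filter_chunks(query: str, chunks: list[str], threshold: float = 1.0) -> list[str]:
    """Keep only the chunks whose BM25 score reaches the threshold."""
    return [c for c, s in zip(chunks, bm25_scores(query, chunks)) if s >= threshold]
```

Chunks that share no terms with the query score zero and are dropped, which is the "remove irrelevant content" behavior in miniature; a higher threshold makes the filter stricter.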

📊 Structured Data Extraction

- 🤖 LLM-Driven Extraction: Supports all LLMs (open-source and proprietary) for structured data extraction.

- 🧱 Chunking Strategies: Implements chunking (topic-based, regex, sentence-level) for targeted content processing.

- 🌌 Cosine Similarity: Find relevant content chunks based on user queries for semantic extraction.

- 🔎 CSS-Based Extraction: Fast schema-based data extraction using XPath and CSS selectors.

- 🔧 Schema Definition: Define custom schemas for extracting structured JSON from repetitive patterns.
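The chunking and cosine-similarity items above can be sketched with the standard library alone. This is a simplified stand-in, assuming bag-of-words vectors rather than learned embeddings, and it is not how Crawl4AI implements its strategies; `sentence_chunks`, `cosine_similarity`, and `most_relevant` are hypothetical helper names.

```python
import math
import re
from collections import Counter


def sentence_chunks(text: str) -> list[str]:
    """Split text into sentence-level chunks after '.', '!' or '?'."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between the word-count vectors of two strings."""
    va = Counter(re.findall(r"\w+", a.lower()))
    vb = Counter(re.findall(r"\w+", b.lower()))
    dot = sum(va[t] * vb[t] for t in va.keys() & vb.keys())
    norm = math.sqrt(sum(v * v for v in va.values())) * math.sqrt(sum(v * v for v in vb.values()))
    return dot / norm if norm else 0.0


def most_relevant(query: str, text: str, top_k: int = 1) -> list[str]:
    """Return the top_k sentence chunks ranked by similarity to the query."""
    chunks = sentence_chunks(text)
    ranked = sorted(chunks, key=lambda c: cosine_similarity(query, c), reverse=True)
    return ranked[:top_k]
```

For example, `most_relevant("plan costs per month", page_text)` returns the sentence that shares the most query words relative to its length.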

🌐 Browser Integration

- 🖥️ Managed Browser: Use user-owned browsers with full control, avoiding bot detection.

- 🔄 Remote Browser Control: Connect to Chrome Developer Tools Protocol for remote, large-scale data extraction.

- 👤 Browser Profiler: Create and manage persistent profiles with saved authentication states, cookies, and settings.

- 🔒 Session Management: Preserve browser states and reuse them for multi-step crawling.

- 🧩 Proxy Support: Seamlessly connect to proxies with authentication for secure access.

- ⚙️ Full Browser Control: Modify headers, cookies, user agents, and more for tailored crawling setups.

- 🌍 Multi-Browser Support: Compatible with Chromium, Firefox, and WebKit.

- 📐 Dynamic Viewport Adjustment: Automatically adjusts the browser viewport to match page content, ensuring complete rendering and capturing of all elements.

🔎 Crawling & Scraping

- 🖼️ Media Support: Extract images, audio, videos, and responsive image formats like `srcset` and `picture`.

- 🚀 Dynamic Crawling: Execute JS and wait for async or sync for dynamic content extraction.

- 📸 Screenshots: Capture page screenshots during crawling for debugging or analysis.

- 📂 Raw Data Crawling: Directly process raw HTML (`raw:`) or local files (`file://`).

- 🔗 Comprehensive Link Extraction: Extracts internal, external links, and embedded iframe content.

- 🛠️ Customizable Hooks: Define hooks at every step to customize crawling behavior (supports both string and function-based APIs).

- 💾 Caching: Cache data for improved speed and to avoid redundant fetches.

- 📄 Metadata Extraction: Retrieve structured metadata from web pages.

- 📡 IFrame Content Extraction: Seamless extraction from embedded iframe content.

- 🕵️ Lazy Load Handling: Waits for images to fully load, ensuring no content is missed due to lazy loading.

- 🔄 Full-Page Scanning: Simulates scrolling to load and capture all dynamic content, perfect for infinite scroll pages.
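As a small illustration of what handling responsive image formats like `srcset` involves, the sketch below parses a `srcset` attribute value into its candidates. It is a naive example (it assumes image URLs contain no commas) and is not taken from Crawl4AI; `parse_srcset` and `widest` are hypothetical names.

```python
def parse_srcset(srcset: str) -> list[dict]:
    """Parse an HTML srcset value into {"url", "descriptor"} candidates."""
    candidates = []
    for part in srcset.split(","):
        tokens = part.split()
        if not tokens:
            continue
        # A candidate without a descriptor is treated as density "1x".
        descriptor = tokens[1] if len(tokens) > 1 else "1x"
        candidates.append({"url": tokens[0], "descriptor": descriptor})
    return candidates


def widest(candidates: list[dict]):
    """Return the URL with the largest width descriptor (e.g. "640w"), or None."""
    widths = [
        (int(c["descriptor"][:-1]), c["url"])
        for c in candidates
        if c["descriptor"].endswith("w") and c["descriptor"][:-1].isdigit()
    ]
    return max(widths)[1] if widths else None
```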

🚀 Deployment

- 🐳 Dockerized Setup: Optimized Docker image with FastAPI server for easy deployment.

- 🔑 Secure Authentication: Built-in JWT token authentication for API security.

- 🔄 API Gateway: One-click deployment with secure token authentication for API-based workflows.

- 🌐 Scalable Architecture: Designed for mass-scale production and optimized server performance.

- ☁️ Cloud Deployment: Ready-to-deploy configurations for major cloud platforms.

🎯 Additional Features

- 🕶️ Stealth Mode: Avoid bot detection by mimicking real users.

- 🏷️ Tag-Based Content Extraction: Refine crawling based on custom tags, headers, or metadata.

- 🔗 Link Analysis: Extract and analyze all links for detailed data exploration.

- 🛡️ Error Handling: Robust error management for seamless execution.

- 🔐 CORS & Static Serving: Supports filesystem-based caching and cross-origin requests.

- 📖 Clear Documentation: Simplified and updated guides for onboarding and advanced usage.

- 🙌 Community Recognition: Acknowledges contributors and pull requests for transparency.

✨ Visit our Documentation Website

Crawl4AI offers flexible installation options to suit various use cases. You can install it as a Python package or use Docker.

🐍 Using pip

Choose the installation option that best fits your needs:

For basic web crawling and scraping tasks:

pip install crawl4ai

crawl4ai-setup # Set up the browser

By default, this will install the asynchronous version of Crawl4AI, using Playwright for web crawling.

👉 Note: When you install Crawl4AI, the `crawl4ai-setup` command should automatically install and set up Playwright. However, if you encounter any Playwright-related errors, you can install it manually using one of these methods:

- Through the command line:

  playwright install

- If the above doesn't work, try this more specific command:

  python -m playwright install chromium

This second method has proven to be more reliable in some cases.

The sync version is deprecated and will be removed in future versions. If you need the synchronous version using Selenium:

pip install crawl4ai[sync]

For contributors who plan to modify the source code:

git clone https://github.com/unclecode/crawl4ai.git
cd crawl4ai
pip install -e . # Basic installation in editable mode

Install optional features:

pip install -e ".[torch]" # With PyTorch features
pip install -e ".[transformer]" # With Transformer features
pip install -e ".[cosine]" # With cosine similarity features
pip install -e ".[sync]" # With synchronous crawling (Selenium)
pip install -e ".[all]" # Install all optional features

🐳 Docker Deployment

🚀 Now Available! Our completely redesigned Docker implementation is here! This new solution makes deployment more efficient and seamless than ever.

The new Docker implementation includes:

- Real-time Monitoring Dashboard with live system metrics and browser pool visibility

- Browser pooling with page pre-warming for faster response times

- Interactive playground to test and generate request code

- MCP integration for direct connection to AI tools like Claude Code

- Comprehensive API endpoints including HTML extraction, screenshots, PDF generation, and JavaScript execution

- Multi-architecture support with automatic detection (AMD64/ARM64)

- Optimized resources with improved memory management

# Pull and run the latest release

docker pull unclecode/crawl4ai:latest

docker run -d -p 11235:11235 --name crawl4ai --shm-size=1g unclecode/crawl4ai:latest

# Visit the monitoring dashboard at http://localhost:11235/dashboard

# Or the playground at http://localhost:11235/playground

Run a quick test (works for both Docker options):

import requests

# Submit a crawl job
response = requests.post(
    "http://localhost:11235/crawl",
    json={"urls": ["https://example.com"], "priority": 10}
)
if response.status_code == 200:
    print("Crawl job submitted successfully.")

if "results" in response.json():
    results = response.json()["results"]
    print("Crawl job completed. Results:")
    for result in results:
        print(result)
else:
    task_id = response.json()["task_id"]
    print(f"Crawl job submitted. Task ID: {task_id}")
    result = requests.get(f"http://localhost:11235/task/{task_id}")

For more examples, see our Docker Examples. For advanced configuration, monitoring features, and production deployment, see our Self-Hosting Guide.

The docs/examples directory contains a variety of examples; a few popular ones are shown here.

📝 Heuristic Markdown Generation with Clean and Fit Markdown

import asyncio
from crawl4ai import AsyncWebCrawler, BrowserConfig, CrawlerRunConfig, CacheMode
from crawl4ai.content_filter_strategy import PruningContentFilter, BM25ContentFilter
from crawl4ai.markdown_generation_strategy import DefaultMarkdownGenerator

async def main():
    browser_config = BrowserConfig(
        headless=True,
        verbose=True,
    )
    run_config = CrawlerRunConfig(
        cache_mode=CacheMode.ENABLED,
        markdown_generator=DefaultMarkdownGenerator(
            content_filter=PruningContentFilter(threshold=0.48, threshold_type="fixed", min_word_threshold=0)
        ),
        # markdown_generator=DefaultMarkdownGenerator(
        #     content_filter=BM25ContentFilter(user_query="WHEN_WE_FOCUS_BASED_ON_A_USER_QUERY", bm25_threshold=1.0)
        # ),
    )

    async with AsyncWebCrawler(config=browser_config) as crawler:
        result = await crawler.arun(
            url="https://docs.micronaut.io/4.9.9/guide/",
            config=run_config
        )
        print(len(result.markdown.raw_markdown))
        print(len(result.markdown.fit_markdown))

if __name__ == "__main__":
    asyncio.run(main())

🖥️ Executing JavaScript & Extract Structured Data without LLMs

import asyncio
import json
from crawl4ai import AsyncWebCrawler, BrowserConfig, CrawlerRunConfig, CacheMode
from crawl4ai import JsonCssExtractionStrategy

async def main():
    # Each element matched by baseSelector becomes one JSON object with these fields
    schema = {
        "name": "KidoCode Courses",
        "baseSelector": "section.charge-methodology .w-tab-content > div",
        "fields": [
            {
                "name": "section_title",
                "selector": "h3.heading-50",
                "type": "text",
            },
            {
                "name": "section_description",
                "selector": ".charge-content",
                "type": "text",
            },
            {
                "name": "course_name",
                "selector": ".text-block-93",
                "type": "text",
            },
            {
                "name": "course_description",
                "selector": ".course-content-text",
                "type": "text",
            },
            {
                "name": "course_icon",
                "selector": ".image-92",
                "type": "attribute",
                "attribute": "src"
            }
        ]
    }

    extraction_strategy = JsonCssExtractionStrategy(schema, verbose=True)

    browser_config = BrowserConfig(
        headless=False,
        verbose=True
    )
    run_config = CrawlerRunConfig(
        extraction_strategy=extraction_strategy,
        # Click through every tab so that all tab content is rendered before extraction
        js_code=["""(async () => {const tabs = document.querySelectorAll("section.charge-methodology .tabs-menu-3 > div");for(let tab of tabs) {tab.scrollIntoView();tab.click();await new Promise(r => setTimeout(r, 500));}})();"""],
        cache_mode=CacheMode.BYPASS
    )

    async with AsyncWebCrawler(config=browser_config) as crawler:
        result = await crawler.arun(
            url="https://www.kidocode.com/degrees/technology",
            config=run_config
        )

        courses = json.loads(result.extracted_content)
        print(f"Successfully extracted {len(courses)} courses")
        print(json.dumps(courses[0], indent=2))

if __name__ == "__main__":
    asyncio.run(main())

📚 Extracting Structured Data with LLMs

import os
import asyncio
from crawl4ai import AsyncWebCrawler, BrowserConfig, CrawlerRunConfig, CacheMode, LLMConfig
from crawl4ai import LLMExtractionStrategy
from pydantic import BaseModel, Field

class OpenAIModelFee(BaseModel):
    model_name: str = Field(..., description="Name of the OpenAI model.")
    input_fee: str = Field(..., description="Fee for input token for the OpenAI model.")
    output_fee: str = Field(..., description="Fee for output token for the OpenAI model.")

async def main():
    browser_config = BrowserConfig(verbose=True)
    run_config = CrawlerRunConfig(
        word_count_threshold=1,
        extraction_strategy=LLMExtractionStrategy(
            # Here you can use any provider that Litellm library supports, for instance: ollama/qwen2
            # provider="ollama/qwen2", api_token="no-token",
            llm_config=LLMConfig(provider="openai/gpt-4o", api_token=os.getenv('OPENAI_API_KEY')),
            schema=OpenAIModelFee.schema(),
            extraction_type="schema",
            instruction="""From the crawled content, extract all mentioned model names along with their fees for input and output tokens.
            Do not miss any models in the entire content. One extracted model JSON format should look like this:
            {"model_name": "GPT-4", "input_fee": "US$10.00 / 1M tokens", "output_fee": "US$30.00 / 1M tokens"}."""
        ),
        cache_mode=CacheMode.BYPASS,
    )

    async with AsyncWebCrawler(config=browser_config) as crawler:
        result = await crawler.arun(
            url='https://openai.com/api/pricing/',
            config=run_config
        )
        print(result.extracted_content)

if __name__ == "__main__":
    asyncio.run(main())

🤖 Using Your own Browser with Custom User Profile

import os, sys
from pathlib import Path
import asyncio, time
from crawl4ai import AsyncWebCrawler, BrowserConfig, CrawlerRunConfig, CacheMode

async def test_news_crawl():
    # Create a persistent user data directory
    user_data_dir = os.path.join(Path.home(), ".crawl4ai", "browser_profile")
    os.makedirs(user_data_dir, exist_ok=True)

    browser_config = BrowserConfig(
        verbose=True,
        headless=True,
        user_data_dir=user_data_dir,
        use_persistent_context=True,
    )
    run_config = CrawlerRunConfig(
        cache_mode=CacheMode.BYPASS
    )

    async with AsyncWebCrawler(config=browser_config) as crawler:
        url = "ADDRESS_OF_A_CHALLENGING_WEBSITE"

        result = await crawler.arun(
            url,
            config=run_config,
            magic=True,
        )

        print(f"Successfully crawled {url}")
        print(f"Content length: {len(result.markdown)}")

💡 Tip: Some websites may use CAPTCHA-based verification mechanisms to prevent automated access. If your workflow encounters such challenges, you may optionally integrate a third-party CAPTCHA-handling service such as CapSolver. They support reCAPTCHA v2/v3, Cloudflare Turnstile, Challenge, AWS WAF, and more. Please ensure that your usage complies with the target website’s terms of service and applicable laws.

Version 0.8.0 Release Highlights - Crash Recovery & Prefetch Mode

This release introduces crash recovery for deep crawls, a new prefetch mode for fast URL discovery, and critical security fixes for Docker deployments.

- 🔄 Deep Crawl Crash Recovery:

  - `on_state_change` callback fires after each URL for real-time state persistence
  - `resume_state` parameter to continue from a saved checkpoint
  - JSON-serializable state for Redis/database storage
  - Works with BFS, DFS, and Best-First strategies

    from crawl4ai.deep_crawling import BFSDeepCrawlStrategy

    strategy = BFSDeepCrawlStrategy(
        max_depth=3,
        resume_state=saved_state,  # Continue from checkpoint
        on_state_change=save_to_redis,  # Called after each URL
    )

- ⚡ Prefetch Mode for Fast URL Discovery:

  - `prefetch=True` skips markdown, extraction, and media processing
  - 5-10x faster than full processing
  - Perfect for two-phase crawling: discover first, process selectively

    config = CrawlerRunConfig(prefetch=True)
    result = await crawler.arun("https://example.com", config=config)
    # Returns HTML and links only - no markdown generation

- 🔒 Security Fixes (Docker API):

  - Hooks disabled by default (`CRAWL4AI_HOOKS_ENABLED=false`)
  - `file://` URLs blocked on API endpoints to prevent LFI
  - `__import__` removed from hook execution sandbox
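To show how an `on_state_change` callback and a `resume_state` checkpoint can fit together, here is a self-contained sketch of a resumable breadth-first crawl over a tiny in-memory link graph. It only mirrors the idea described above and is not Crawl4AI's implementation: the `LINKS` graph, the `bfs_crawl` function, the state layout, and the `stop_after` crash simulation are all assumptions made for this example.

```python
import json
from collections import deque

# Hypothetical link graph standing in for real pages.
LINKS = {
    "/": ["/a", "/b"],
    "/a": ["/c"],
    "/b": ["/c", "/d"],
    "/c": [],
    "/d": [],
}


def bfs_crawl(start, max_depth, resume_state=None, on_state_change=None, stop_after=None):
    """Breadth-first crawl that can checkpoint after each URL and resume later."""
    if resume_state:
        visited = list(resume_state["visited"])
        queue = deque((url, depth) for url, depth in resume_state["queue"])
    else:
        visited = []
        queue = deque([(start, 0)])
    seen = set(visited) | {url for url, _ in queue}
    processed = 0
    while queue:
        if stop_after is not None and processed >= stop_after:
            break  # simulate a crash partway through the crawl
        url, depth = queue.popleft()
        visited.append(url)
        if depth < max_depth:
            for link in LINKS.get(url, []):
                if link not in seen:
                    seen.add(link)
                    queue.append((link, depth + 1))
        processed += 1
        if on_state_change:
            # Pass a JSON-serializable snapshot, not the live objects.
            on_state_change({"visited": list(visited), "queue": [list(item) for item in queue]})
    return visited


if __name__ == "__main__":
    checkpoint = {}
    bfs_crawl("/", 3, on_state_change=lambda s: checkpoint.update(json=json.dumps(s)), stop_after=2)
    print(bfs_crawl("/", 3, resume_state=json.loads(checkpoint["json"])))
```

Because the snapshot is plain lists and strings, it survives a round trip through `json.dumps` and could equally be written to Redis or a database row, which is the point of keeping the state JSON-serializable.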

Version 0.7.8 Release Highlights - Stability & Bug Fix Release

This release focuses on stability with 11 bug fixes addressing issues reported by the community. No new features, but significant improvements to reliability.

- 🐳 Docker API Fixes:

  - Fixed `ContentRelevanceFilter` deserialization in deep crawl requests (#1642)
  - Fixed `ProxyConfig` JSON serialization in `BrowserConfig.to_dict()` (#1629)
  - Fixed `.cache` folder permissions in Docker image (#1638)

- 🤖 LLM Extraction Improvements:

  - Configurable rate limiter backoff with new `LLMConfig` parameters (#1269):

    from crawl4ai import LLMConfig

    config = LLMConfig(
        provider="openai/gpt-4o-mini",
        backoff_base_delay=5,  # Wait 5s on first retry
        backoff_max_attempts=5,  # Try up to 5 times
        backoff_exponential_factor=3  # Multiply delay by 3 each attempt
    )

  - HTML input format support for `LLMExtractionStrategy` (#1178):

    from crawl4ai import LLMExtractionStrategy

    strategy = LLMExtractionStrategy(
        llm_config=config,
        instruction="Extract table data",
        input_format="html"  # Now supports: "html", "markdown", "fit_markdown"
    )

  - Fixed raw HTML URL variable - extraction strategies now receive `"Raw HTML"` instead of HTML blob (#1116)

- 🔗 URL Handling:

  - Fixed relative URL resolution after JavaScript redirects (#1268)
  - Fixed import statement formatting in extracted code (#1181)

- 📦 Dependency Updates:

  - Replaced deprecated PyPDF2 with pypdf (#1412)
  - Pydantic v2 ConfigDict compatibility - no more deprecation warnings (#678)

- 🧠 AdaptiveCrawler:

  - Fixed query expansion to actually use LLM instead of hardcoded mock data (#1621)
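The backoff parameters in the LLM extraction item above (base delay 5, factor 3, up to 5 attempts) imply a geometric schedule of waits. The sketch below works that arithmetic out and wraps it in a generic retry helper. It assumes the delay before retry `i` is `base * factor**i` and that no wait follows the final attempt; the source does not spell out the exact schedule, and `backoff_delays` and `call_with_backoff` are hypothetical names, not Crawl4AI functions.

```python
import time


def backoff_delays(base_delay, max_attempts, exponential_factor):
    """Delays for successive retries: base, base*factor, base*factor**2, ..."""
    return [base_delay * exponential_factor ** i for i in range(max_attempts)]


def call_with_backoff(fn, base_delay=5, max_attempts=5, exponential_factor=3, sleep=time.sleep):
    """Call fn until it succeeds, sleeping between failed attempts."""
    delays = backoff_delays(base_delay, max_attempts, exponential_factor)
    for attempt, delay in enumerate(delays, start=1):
        try:
            return fn()
        except Exception:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error
            sleep(delay)
```

With the values from the example configuration, the waits between five attempts would be 5 s, 15 s, 45 s, and 135 s under these assumptions. Passing a custom `sleep` callable makes the helper easy to exercise without actually waiting.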

Version 0.7.7 Release Highlights - The Self-Hosting & Monitoring Update

- 📊 Real-time Monitoring Dashboard: Interactive web UI with live system metrics and browser pool visibility

    # Access the monitoring dashboard
    # Visit: http://localhost:11235/dashboard
    # Real-time metrics include:
    # - System health (CPU, memory, network, uptime)
    # - Active and completed request tracking
    # - Browser pool management (permanent/hot/cold)
    # - Janitor cleanup events
    # - Error monitoring with full context

- 🔌 Comprehensive Monitor API: Complete REST API for programmatic access to all monitoring data

    import httpx

    async with httpx.AsyncClient() as client:
        # System health
        health = await client.get("http://localhost:11235/monitor/health")
        # Request tracking
        requests = await client.get("http://localhost:11235/monitor/requests")
        # Browser pool status
        browsers = await client.get("http://localhost:11235/monitor/browsers")
        # Endpoint statistics
        stats = await client.get("http://localhost:11235/monitor/endpoints/stats")

- ⚡ WebSocket Streaming: Real-time updates every 2 seconds for custom dashboards

- 🔥 Smart Browser Pool: 3-tier architecture (permanent/hot/cold) with automatic promotion and cleanup

- 🧹 Janitor System: Automatic resource management with event logging

- 🎮 Control Actions: Manual browser management (kill, restart, cleanup) via API

- 📈 Production Metrics: 6 critical metrics for operational excellence with Prometheus integration

- 🐛 Critical Bug Fixes:

  - Fixed async LLM extraction blocking issue (#1055)
  - Enhanced DFS deep crawl strategy (#1607)
  - Fixed sitemap parsing in AsyncUrlSeeder (#1598)
  - Resolved browser viewport configuration (#1495)
  - Fixed CDP timing with exponential backoff (#1528)
  - Security update for pyOpenSSL (>=25.3.0)

Version 0.7.5 Release Highlights - The Docker Hooks & Security Update

- 🔧 Docker Hooks System: Complete pipeline customization with user-provided Python functions at 8 key points

- ✨ Function-Based Hooks API (NEW): Write hooks as regular Python functions with full IDE support:

    from crawl4ai import hooks_to_string
    from crawl4ai.docker_client import Crawl4aiDockerClient

    # Define hooks as regular Python functions
    async def on_page_context_created(page, context, **kwargs):
        """Block images to speed up crawling"""
        await context.route("**/*.{png,jpg,jpeg,gif,webp}", lambda route: route.abort())
        await page.set_viewport_size({"width": 1920, "height": 1080})
        return page

    async def before_goto(page, context, url, **kwargs):
        """Add custom headers"""
        await page.set_extra_http_headers({'X-Crawl4AI': 'v0.7.5'})
        return page

    # Option 1: Use hooks_to_string() utility for REST API
    hooks_code = hooks_to_string({
        "on_page_context_created": on_page_context_created,
        "before_goto": before_goto
    })

    # Option 2: Docker client with automatic conversion (Recommended)
    client = Crawl4aiDockerClient(base_url="http://localhost:11235")
    results = await client.crawl(
        urls=["https://httpbin.org/html"],
        hooks={
            "on_page_context_created": on_page_context_created,
            "before_goto": before_goto
        }
    )
    # ✓ Full IDE support, type checking, and reusability!

- 🤖 Enhanced LLM Integration: Custom providers with temperature control and base_url configuration

- 🔒 HTTPS Preservation: Secure internal link handling with `preserve_https_for_internal_links=True`

- 🐍 Python 3.10+ Support: Modern language features and enhanced performance

- 🛠️ Bug Fixes: Resolved multiple community-reported issues including URL processing, JWT authentication, and proxy configuration

Version 0.7.4 Release Highlights - The Intelligent Table Extraction & Performance Update

- 🚀 LLMTableExtraction: Revolutionary table extraction with intelligent chunking for massive tables:

    from crawl4ai import LLMTableExtraction, LLMConfig

    # Configure intelligent table extraction
    table_strategy = LLMTableExtraction(
        llm_config=LLMConfig(provider="openai/gpt-4.1-mini"),
        enable_chunking=True,  # Handle massive tables
        chunk_token_threshold=5000,  # Smart chunking threshold
        overlap_threshold=100,  # Maintain context between chunks
        extraction_type="structured"  # Get structured data output
    )

    config = CrawlerRunConfig(table_extraction_strategy=table_strategy)
    result = await crawler.arun("https://complex-tables-site.com", config=config)

    # Tables are automatically chunked, processed, and merged
    for table in result.tables:
        print(f"Extracted table: {len(table['data'])} rows")

- ⚡ Dispatcher Bug Fix: Fixed sequential processing bottleneck in `arun_many` for fast-completing tasks

- 🧹 Memory Management Refactor: Consolidated memory utilities into main utils module for cleaner architecture

- 🔧 Browser Manager Fixes: Resolved race conditions in concurrent page creation with thread-safe locking

- 🔗 Advanced URL Processing: Better handling of raw:// URLs and base tag link resolution

- 🛡️ Enhanced Proxy Support: Flexible proxy configuration supporting both dict and string formats

Version 0.7.3 Release Highlights - The Multi-Config Intelligence Update

- 🕵️ Undetected Browser Support: Bypass sophisticated bot detection systems:

    from crawl4ai import AsyncWebCrawler, BrowserConfig

    browser_config = BrowserConfig(
        browser_type="undetected",  # Use undetected Chrome
        headless=True,  # Can run headless with stealth
        extra_args=[
            "--disable-blink-features=AutomationControlled",
            "--disable-web-security"
        ]
    )

    async with AsyncWebCrawler(config=browser_config) as crawler:
        result = await crawler.arun("https://protected-site.com")
        # Successfully bypass Cloudflare, Akamai, and custom bot detection

- 🎨 Multi-URL Configuration: Different strategies for different URL patterns in one batch:

    from crawl4ai import CrawlerRunConfig, MatchMode

    configs = [
        # Documentation sites - aggressive caching
        CrawlerRunConfig(
            url_matcher=["*docs*", "*documentation*"],
            cache_mode="write",
            markdown_generator_options={"include_links": True}
        ),
        # News/blog sites - fresh content
        CrawlerRunConfig(
            url_matcher=lambda url: 'blog' in url or 'news' in url,
            cache_mode="bypass"
        ),
        # Fallback for everything else
        CrawlerRunConfig()
    ]

    results = await crawler.arun_many(urls, config=configs)
    # Each URL gets the perfect configuration automatically

- 🧠 Memory Monitoring: Track and optimize memory usage during crawling:

    from crawl4ai.memory_utils import MemoryMonitor

    monitor = MemoryMonitor()
    monitor.start_monitoring()
    results = await crawler.arun_many(large_url_list)
    report = monitor.get_report()
    print(f"Peak memory: {report['peak_mb']:.1f} MB")
    print(f"Efficiency: {report['efficiency']:.1f}%")
    # Get optimization recommendations

- 📊 Enhanced Table Extraction: Direct DataFrame conversion from web tables:

    result = await crawler.arun("https://site-with-tables.com")

    # New way - direct table access
    if result.tables:
        import pandas as pd
        for table in result.tables:
            df = pd.DataFrame(table['data'])
            print(f"Table: {df.shape[0]} rows × {df.shape[1]} columns")

- 💰 GitHub Sponsors: 4-tier sponsorship system for project sustainability

- 🐳 Docker LLM Flexibility: Configure providers via environment variables
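The Multi-URL Configuration item above pairs each config with a matcher (glob patterns, a callable, or nothing for a fallback). A plausible way to reason about the selection is "first matching entry wins", sketched below with the standard library. This is an assumption for illustration, not Crawl4AI's documented matching logic (which also has a `MatchMode` not modelled here); `matches` and `pick_config` are hypothetical names, and config objects are replaced by plain labels.

```python
from fnmatch import fnmatchcase


def matches(matcher, url: str) -> bool:
    """True if the URL satisfies a glob string, a list of globs, a callable, or None."""
    if matcher is None:
        return True  # no matcher: acts as the fallback entry
    if callable(matcher):
        return bool(matcher(url))
    patterns = [matcher] if isinstance(matcher, str) else matcher
    # Assumption: a list of patterns matches when ANY pattern matches.
    return any(fnmatchcase(url, p) for p in patterns)


def pick_config(url: str, configs):
    """Return the label of the first (label, matcher) pair whose matcher accepts the URL."""
    for label, matcher in configs:
        if matches(matcher, url):
            return label
    return None


CONFIGS = [
    ("docs", ["*docs*", "*documentation*"]),
    ("news", lambda url: "blog" in url or "news" in url),
    ("default", None),
]
```

Under this reading, order matters: putting the fallback entry first would shadow every other configuration.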

Version 0.7.0 Release Highlights - The Adaptive Intelligence Update

- 🧠 Adaptive Crawling: Your crawler now learns and adapts to website patterns automatically:

    config = AdaptiveConfig(
        confidence_threshold=0.7,  # Min confidence to stop crawling
        max_depth=5,  # Maximum crawl depth
        max_pages=20,  # Maximum number of pages to crawl
        strategy="statistical"
    )

    async with AsyncWebCrawler() as crawler:
        adaptive_crawler = AdaptiveCrawler(crawler, config)
        state = await adaptive_crawler.digest(
            start_url="https://news.example.com",
            query="latest news content"
        )
        # Crawler learns patterns and improves extraction over time

- 🌊 Virtual Scroll Support: Complete content extraction from infinite scroll pages:

    scroll_config = VirtualScrollConfig(
        container_selector="[data-testid='feed']",
        scroll_count=20,
        scroll_by="container_height",
        wait_after_scroll=1.0
    )

    result = await crawler.arun(url, config=CrawlerRunConfig(
        virtual_scroll_config=scroll_config
    ))

- 🔗 Intelligent Link Analysis: 3-layer scoring system for smart link prioritization:

    link_config = LinkPreviewConfig(
        query="machine learning tutorials",
        score_threshold=0.3,
        concurrent_requests=10
    )

    result = await crawler.arun(url, config=CrawlerRunConfig(
        link_preview_config=link_config,
        score_links=True
    ))
    # Links ranked by relevance and quality

- 🎣 Async URL Seeder: Discover thousands of URLs in seconds:

    seeder = AsyncUrlSeeder(SeedingConfig(
        source="sitemap+cc",
        pattern="*/blog/*",
        query="python tutorials",
        score_threshold=0.4
    ))

    urls = await seeder.discover("https://example.com")

- ⚡ Performance Boost: Up to 3x faster with optimized resource handling and memory efficiency

Read the full details in our 0.7.0 Release Notes or check the CHANGELOG.

Crawl4AI follows standard Python version numbering conventions (PEP 440) to help users understand the stability and features of each release.

📈 Version Numbers Explained

Our version numbers follow this pattern: MAJOR.MINOR.PATCH (e.g., 0.4.3)

We use different suffixes to indicate development stages:

- `dev` (0.4.3dev1): Development versions, unstable
- `a` (0.4.3a1): Alpha releases, experimental features
- `b` (0.4.3b1): Beta releases, feature complete but needs testing
- `rc` (0.4.3rc1): Release candidates, potential final version

- Regular installation (stable version):

  pip install -U crawl4ai

- Install pre-release versions:

  pip install crawl4ai --pre

- Install specific version:

  pip install crawl4ai==0.4.3b1

We use pre-releases to:

- Test new features in real-world scenarios

- Gather feedback before final releases

- Ensure stability for production users

- Allow early adopters to try new features

For production environments, we recommend using the stable version. For testing new features, you can opt-in to pre-releases using the --pre flag.
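The ordering implied by the suffixes above (dev, then alpha, beta, release candidate, then the final release) can be made explicit with a small sort key. The sketch below handles only the `MAJOR.MINOR.PATCH` plus single-suffix shapes shown in this section; it is a simplified subset of PEP 440, and for real projects `packaging.version.Version` is the more robust choice. `version_key` and `is_prerelease` are hypothetical helper names.

```python
import re

# Lower number = earlier in the release cycle; "" marks a final release.
_STAGE_ORDER = {"dev": 0, "a": 1, "b": 2, "rc": 3, "": 4}
_VERSION_RE = re.compile(r"^(\d+)\.(\d+)\.(\d+)(?:\.?(dev|a|b|rc)(\d+))?$")


def version_key(version: str) -> tuple:
    """Sortable key for versions such as 0.4.3dev1, 0.4.3a1, 0.4.3rc1, 0.4.3."""
    match = _VERSION_RE.match(version)
    if not match:
        raise ValueError(f"unsupported version format: {version!r}")
    major, minor, patch, stage, number = match.groups()
    return (int(major), int(minor), int(patch), _STAGE_ORDER[stage or ""], int(number or 0))


def is_prerelease(version: str) -> bool:
    """True for dev, alpha, beta, and release-candidate versions."""
    return version_key(version)[3] < _STAGE_ORDER[""]
```

Sorting a list of versions with `key=version_key` places every pre-release of 0.4.3 after 0.4.2 and before the final 0.4.3, which matches why `pip` skips them unless `--pre` is given.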

🚨 Documentation Update Alert: We're undertaking a major documentation overhaul next week to reflect recent updates and improvements. Stay tuned for a more comprehensive and up-to-date guide!

For current documentation, including installation instructions, advanced features, and API reference, visit our Documentation Website.

To check our development plans and upcoming features, visit our Roadmap.

📈 Development TODOs

- [x] 0. Graph Crawler: Smart website traversal using graph search algorithms for comprehensive nested page extraction

- [x] 1. Question-Based Crawler: Natural language driven web discovery and content extraction

- [x] 2. Knowledge-Optimal Crawler: Smart crawling that maximizes knowledge while minimizing data extraction

- [x] 3. Agentic Crawler: Autonomous system for complex multi-step crawling operations

- [x] 4. Automated Schema Generator: Convert natural language to extraction schemas

- [x] 5. Domain-Specific Scrapers: Pre-configured extractors for common platforms (academic, e-commerce)

- [x] 6. Web Embedding Index: Semantic search infrastructure for crawled content

- [x] 7. Interactive Playground: Web UI for testing, comparing strategies with AI assistance

- [x] 8. Performance Monitor: Real-time insights into crawler operations

- [ ] 9. Cloud Integration: One-click deployment solutions across cloud providers

- [x] 10. Sponsorship Program: Structured support system with tiered benefits

- [ ] 11. Educational Content: "How to Crawl" video series and interactive tutorials

We welcome contributions from the open-source community. Check out our contribution guidelines for more information.

This project is licensed under the Apache License 2.0; attribution is recommended via the badges below. See the Apache 2.0 License file for details.

When using Crawl4AI, you must include one of the following attribution methods:

📈 1. Badge Attribution (Recommended)

Add one of these badges to your README, documentation, or website:

| Theme | Badge |
|---|---|
| Disco Theme (Animated) | |
| Night Theme (Dark with Neon) | |
| Dark Theme (Classic) | |
| Light Theme (Classic) | |

HTML code for adding the badges:

<!-- Disco Theme (Animated) -->

<a href="https://github.com/unclecode/crawl4ai">

<img src="https://raw.githubusercontent.com/unclecode/crawl4ai/main/docs/assets/powered-by-disco.svg" alt="Powered by Crawl4AI" width="200"/>

</a>

<!-- Night Theme (Dark with Neon) -->

<a href="https://github.com/unclecode/crawl4ai">

<img src="https://raw.githubusercontent.com/unclecode/crawl4ai/main/docs/assets/powered-by-night.svg" alt="Powered by Crawl4AI" width="200"/>

</a>

<!-- Dark Theme (Classic) -->

<a href="https://github.com/unclecode/crawl4ai">

<img src="https://raw.githubusercontent.com/unclecode/crawl4ai/main/docs/assets/powered-by-dark.svg" alt="Powered by Crawl4AI" width="200"/>

</a>

<!-- Light Theme (Classic) -->

<a href="https://github.com/unclecode/crawl4ai">

<img src="https://raw.githubusercontent.com/unclecode/crawl4ai/main/docs/assets/powered-by-light.svg" alt="Powered by Crawl4AI" width="200"/>

</a>

<!-- Simple Shield Badge -->

<a href="https://github.com/unclecode/crawl4ai">

<img src="https://img.shields.io/badge/Powered%20by-Crawl4AI-blue?style=flat-square" alt="Powered by Crawl4AI"/>

</a>

📖 2. Text Attribution

Add this line to your documentation:

```
This project uses Crawl4AI (https://github.com/unclecode/crawl4ai) for web data extraction.
```

If you use Crawl4AI in your research or project, please cite:

@software{crawl4ai2024,

author = {UncleCode},

title = {Crawl4AI: Open-source LLM Friendly Web Crawler & Scraper},

year = {2024},

publisher = {GitHub},

journal = {GitHub Repository},

howpublished = {\url{https://github.com/unclecode/crawl4ai}},

commit = {Please use the commit hash you're working with}

}

Text citation format:

UncleCode. (2024). Crawl4AI: Open-source LLM Friendly Web Crawler & Scraper [Computer software].

GitHub. https://github.com/unclecode/crawl4ai

For questions, suggestions, or feedback, feel free to reach out:

- GitHub: unclecode

- Twitter: @unclecode

- Website: crawl4ai.com

Happy Crawling! 🕸️🚀

Our mission is to unlock the value of personal and enterprise data by transforming digital footprints into structured, tradeable assets. Crawl4AI empowers individuals and organizations with open-source tools to extract and structure data, fostering a shared data economy.

We envision a future where AI is powered by real human knowledge, ensuring data creators directly benefit from their contributions. By democratizing data and enabling ethical sharing, we are laying the foundation for authentic AI advancement.

🔑 Key Opportunities

- Data Capitalization: Transform digital footprints into measurable, valuable assets.

- Authentic AI Data: Provide AI systems with real human insights.

- Shared Economy: Create a fair data marketplace that benefits data creators.

🚀 Development Pathway

- Open-Source Tools: Community-driven platforms for transparent data extraction.

- Digital Asset Structuring: Tools to organize and value digital knowledge.

- Ethical Data Marketplace: A secure, fair platform for exchanging structured data.

For more details, see our full mission statement.

Our enterprise sponsors and technology partners help scale Crawl4AI to power production-grade data pipelines.

| Company | About | Sponsorship Tier |
|---|---|---|
| Thordata | Leveraging Thordata ensures seamless compatibility with any AI/ML workflows and data infrastructure, massively accessing web data with 99.9% uptime, backed by one-on-one customer support. | 🥈 Silver |
| NstProxy | NstProxy is a trusted proxy provider with over 110M+ real residential IPs, city-level targeting, 99.99% uptime, and low pricing at $0.1/GB, it delivers unmatched stability, scale, and cost-efficiency. | 🥈 Silver |
| Scrapeless | Scrapeless provides production-grade infrastructure for Crawling, Automation, and AI Agents, offering Scraping Browser, 4 Proxy Types and Universal Scraping API. | 🥈 Silver |
| | AI-powered Captcha solving service. Supports all major Captcha types, including reCAPTCHA, Cloudflare, and more | 🥉 Bronze |
| | Helps engineers and buyers find, compare, and source electronic & industrial parts in seconds, with specs, pricing, lead times & alternatives. | 🥇 Gold |
| KidoCode | Kidocode is a hybrid technology and entrepreneurship school for kids aged 5–18, offering both online and on-campus education. | 🥇 Gold |
| Aleph Null | Singapore-based Aleph Null is Asia’s leading edtech hub, dedicated to student-centric, AI-driven education, empowering learners with the tools to thrive in a fast-changing world. | 🥇 Gold |

A heartfelt thanks to our individual supporters! Every contribution helps us keep our open-source mission alive and thriving!

Want to join them? Sponsor Crawl4AI →

Alternative AI tools for crawl4ai

Similar Open Source Tools

firecrawl

Firecrawl is an API service that empowers AI applications with clean data from any website. It features advanced scraping, crawling, and data extraction capabilities. The repository is still in development, integrating custom modules into the mono repo. Users can run it locally but it's not fully ready for self-hosted deployment yet. Firecrawl offers powerful capabilities like scraping, crawling, mapping, searching, and extracting structured data from single pages, multiple pages, or entire websites with AI. It supports various formats, actions, and batch scraping. The tool is designed to handle proxies, anti-bot mechanisms, dynamic content, media parsing, change tracking, and more. Firecrawl is available as an open-source project under the AGPL-3.0 license, with additional features offered in the cloud version.

onlook

Onlook is a web scraping tool that allows users to extract data from websites easily and efficiently. It provides a user-friendly interface for creating web scraping scripts and supports various data formats for exporting the extracted data. With Onlook, users can automate the process of collecting information from multiple websites, saving time and effort. The tool is designed to be flexible and customizable, making it suitable for a wide range of web scraping tasks.

NadirClaw

NadirClaw is a powerful open-source tool designed for web scraping and data extraction. It provides a user-friendly interface for extracting data from websites with ease. With NadirClaw, users can easily scrape text, images, and other content from web pages for various purposes such as data analysis, research, and automation. The tool offers flexibility and customization options to cater to different scraping needs, making it a versatile solution for extracting data from the web. Whether you are a data scientist, researcher, or developer, NadirClaw can streamline your data extraction process and help you gather valuable insights from online sources.

llms

llms.py is a lightweight CLI, API, and ChatGPT-like alternative to Open WebUI for accessing multiple LLMs. It operates entirely offline, ensuring all data is kept private in browser storage. The tool provides a convenient way to interact with various LLM models without the need for an internet connection, prioritizing user privacy and data security.

waidrin

Waidrin is a powerful web scraping tool that allows users to easily extract data from websites. It provides a user-friendly interface for creating custom web scraping scripts and supports various data formats for exporting the extracted data. With Waidrin, users can automate the process of collecting information from multiple websites, saving time and effort. The tool is designed to be flexible and scalable, making it suitable for both beginners and advanced users in the field of web scraping.

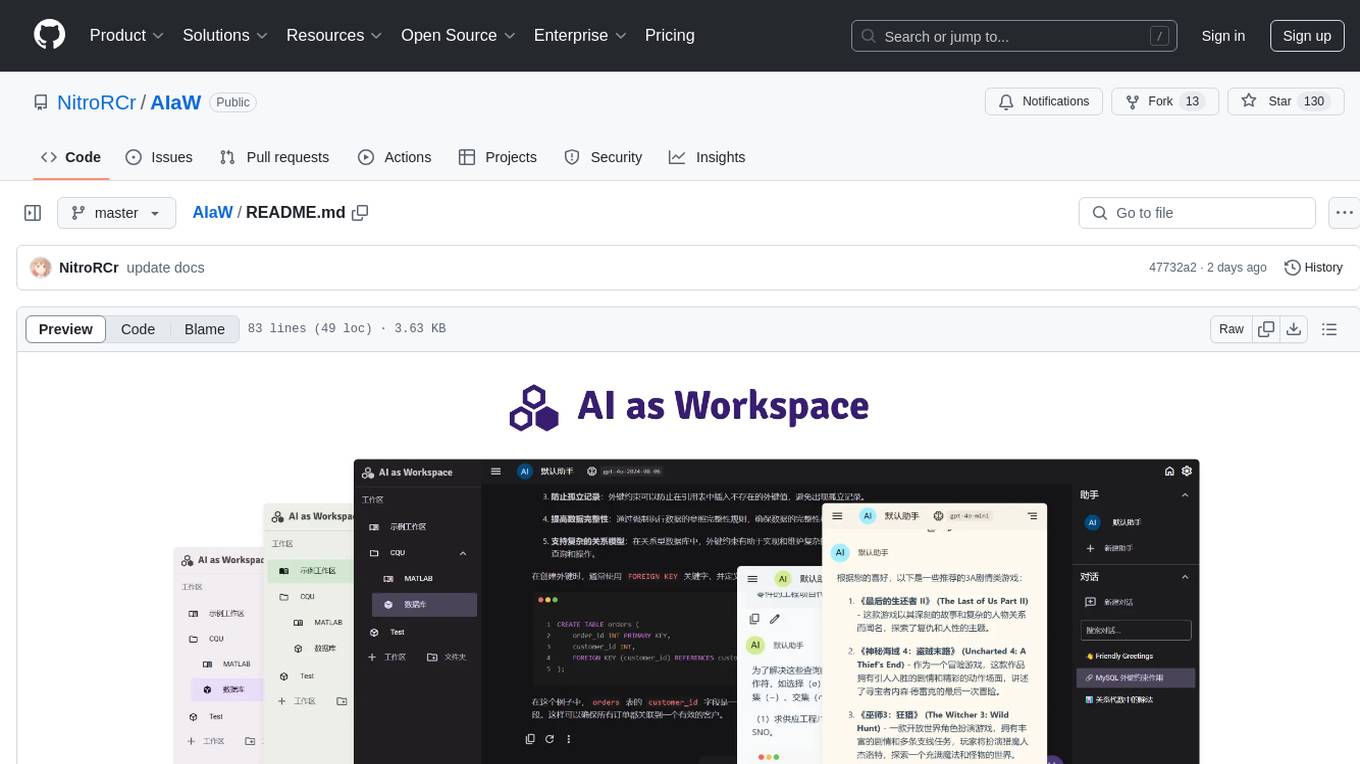

AIaW

AIaW is a next-generation LLM client with full functionality, lightweight, and extensible. It supports various basic functions such as streaming transfer, image uploading, and latex formulas. The tool is cross-platform with a responsive interface design. It supports multiple service providers like OpenAI, Anthropic, and Google. Users can modify questions, regenerate in a forked manner, and visualize conversations in a tree structure. Additionally, it offers features like file parsing, video parsing, plugin system, assistant market, local storage with real-time cloud sync, and customizable interface themes. Users can create multiple workspaces, use dynamic prompt word variables, extend plugins, and benefit from detailed design elements like real-time content preview, optimized code pasting, and support for various file types.

databerry

Chaindesk is a no-code platform that allows users to easily set up a semantic search system for personal data without technical knowledge. It supports loading data from various sources such as raw text, web pages, files (Word, Excel, PowerPoint, PDF, Markdown, Plain Text), and upcoming support for web sites, Notion, and Airtable. The platform offers a user-friendly interface for managing datastores, querying data via a secure API endpoint, and auto-generating ChatGPT Plugins for each datastore. Chaindesk utilizes a Vector Database (Qdrant), OpenAI's text-embedding-ada-002 for embeddings, and has a chunk size of 1024 tokens. The technology stack includes Next.js, Joy UI, LangchainJS, PostgreSQL, Prisma, and Qdrant, inspired by the ChatGPT Retrieval Plugin.

Website-Crawler

Website-Crawler is a tool designed to extract data from websites in an automated manner. It allows users to scrape information such as text, images, links, and more from web pages. The tool provides functionalities to navigate through websites, handle different types of content, and store extracted data for further analysis. Website-Crawler is useful for tasks like web scraping, data collection, content aggregation, and competitive analysis. It can be customized to extract specific data elements based on user requirements, making it a versatile tool for various web data extraction needs.

generator

ctx is a tool designed to automatically generate organized context files from code files, GitHub repositories, Git commits, web pages, and plain text. It aims to efficiently provide necessary context to AI language models like ChatGPT and Claude, enabling users to streamline code refactoring, multiple iteration development, documentation generation, and seamless AI integration. With ctx, users can create structured markdown documents, save context files, and serve context through an MCP server for real-time assistance. The tool simplifies the process of sharing project information with AI assistants, making AI conversations smarter and easier.

oramacore

OramaCore is a database designed for AI projects, answer engines, copilots, and search functionalities. It offers features such as a full-text search engine, vector database, LLM interface, and various utilities. The tool is currently under active development and not recommended for production use due to potential API changes. OramaCore aims to provide a comprehensive solution for managing data and enabling advanced search capabilities in AI applications.

airstore

Airstore is a filesystem for AI agents that adds any source of data into a virtual filesystem, allowing users to connect services like Gmail, GitHub, Linear, and more, and describe data needs in plain English. Results are presented as files that can be read by Claude Code. Features include smart folders for natural language queries, integrations with various services, executable MCP servers, team workspaces, and local mode operation on user infrastructure. Users can sign up, connect integrations, create smart folders, install the CLI, mount the filesystem, and use with Claude Code to perform tasks like summarizing invoices, identifying unpaid invoices, and extracting data into CSV format.

Aimer_WT

Aimer_WT is a web scraping tool designed to extract data from websites efficiently and accurately. It provides a user-friendly interface for users to specify the data they want to scrape and offers various customization options. With Aimer_WT, users can easily automate the process of collecting data from multiple web pages, saving time and effort. The tool is suitable for both beginners and experienced users who need to gather data for research, analysis, or other purposes. Aimer_WT supports various data formats and allows users to export the extracted data for further processing.

wtffmpeg

wtffmpeg is a command-line tool that uses a Large Language Model (LLM) to translate plain-English descriptions of video or audio tasks into actual, executable ffmpeg commands. It aims to streamline the process of generating ffmpeg commands by allowing users to describe what they want to do in natural language, review the generated command, optionally edit it, and then decide whether to run it. The tool provides an interactive REPL interface where users can input their commands, retain conversational context, and history, and control the level of interactivity. wtffmpeg is designed to assist users in efficiently working with ffmpeg commands, reducing the need to search for solutions, read lengthy explanations, and manually adjust commands.

OllamaSharp

OllamaSharp is a .NET binding for the Ollama API, providing an intuitive API client to interact with Ollama. It offers support for all Ollama API endpoints, real-time streaming, progress reporting, and an API console for remote management. Users can easily set up the client, list models, pull models with progress feedback, stream completions, and build interactive chats. The project includes a demo console for exploring and managing the Ollama host.

ollama4j

Ollama4j is a Java library that serves as a wrapper or binding for the Ollama server. It allows users to communicate with the Ollama server and manage models for various deployment scenarios. The library provides APIs for interacting with Ollama, generating fake data, testing UI interactions, translating messages, and building web UIs. Users can easily integrate Ollama4j into their Java projects to leverage the functionalities offered by the Ollama server.

For similar tasks

Forza-Mods-AIO

Forza Mods AIO is a free and open-source tool that enhances the gaming experience in Forza Horizon 4 and 5. It offers a range of time-saving and quality-of-life features, making gameplay more enjoyable and efficient. The tool is designed to streamline various aspects of the game, improving user satisfaction and overall enjoyment.

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

MaterialSearch

MaterialSearch is a tool for searching local images and videos using natural language. It provides functionalities such as text search for images, image search for images, text search for videos (providing matching video clips), image search for videos (searching for the segment in a video through a screenshot), image-text similarity calculation, and Pexels video search. The tool can be deployed through the source code or Docker image, and it supports GPU acceleration. Users can configure the tool through environment variables or a .env file. The tool is still under development, and configurations may change frequently. Users can report issues or suggest improvements through issues or pull requests.

tenere

Tenere is a TUI for Large Language Models (LLMs) written in Rust. It provides syntax highlighting, chat history, saving chats to files, Vim keybindings, copying text from/to clipboard, and supports multiple backends. Users can configure Tenere using a TOML configuration file, set key bindings, and use different LLMs such as ChatGPT, llama.cpp, and ollama. Tenere offers default key bindings for global and prompt modes, with features like starting a new chat, saving chats, scrolling, showing chat history, and quitting the app. Users can interact with the prompt in different modes like Normal, Visual, and Insert, with various key bindings for navigation, editing, and text manipulation.

openkore

OpenKore is a custom client and intelligent automated assistant for Ragnarok Online. It is a free, open source, and cross-platform program (Linux, Windows, and MacOS are supported). To run OpenKore, you need to download and extract it or clone the repository using Git. Configure OpenKore according to the documentation and run openkore.pl to start. The tool provides a FAQ section for troubleshooting, guidelines for reporting issues, and information about botting status on official servers. OpenKore is developed by a global team, and contributions are welcome through pull requests. Various community resources are available for support and communication. Users are advised to comply with the GNU General Public License when using and distributing the software.

QA-Pilot

QA-Pilot is an interactive chat project that leverages online or local LLMs for rapid understanding and navigation of GitHub code repositories. It allows users to chat with public GitHub repositories using a git clone approach, store chat history, configure settings easily, manage multiple chat sessions, and quickly locate sessions with a search function. The tool integrates with `codegraph` to view Python files and supports various LLM providers such as ollama, openai, mistralai, and localai. The project is continuously updated with new features and improvements, such as converting from `flask` to `fastapi`, adding `localai` API support, and upgrading dependencies like `langchain` and `Streamlit` to enhance performance.

extension-gen-ai

The Looker GenAI Extension provides code examples and resources for building a Looker Extension that integrates with Vertex AI Large Language Models (LLMs). Users can leverage the power of LLMs to enhance data exploration and analysis within Looker. The extension offers generative explore functionality to ask natural language questions about data and generative insights on dashboards to analyze data by asking questions. It leverages components like BQML Remote Models, BQML Remote UDF with Vertex AI, and Custom Fine Tune Model for different integration options. Deployment involves setting up infrastructure with Terraform and deploying the Looker Extension by creating a Looker project, copying extension files, configuring BigQuery connection, connecting to Git, and testing the extension. Users can save example prompts and configure user settings for the extension. Development of the Looker Extension environment includes installing dependencies, starting the development server, and building for production.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.