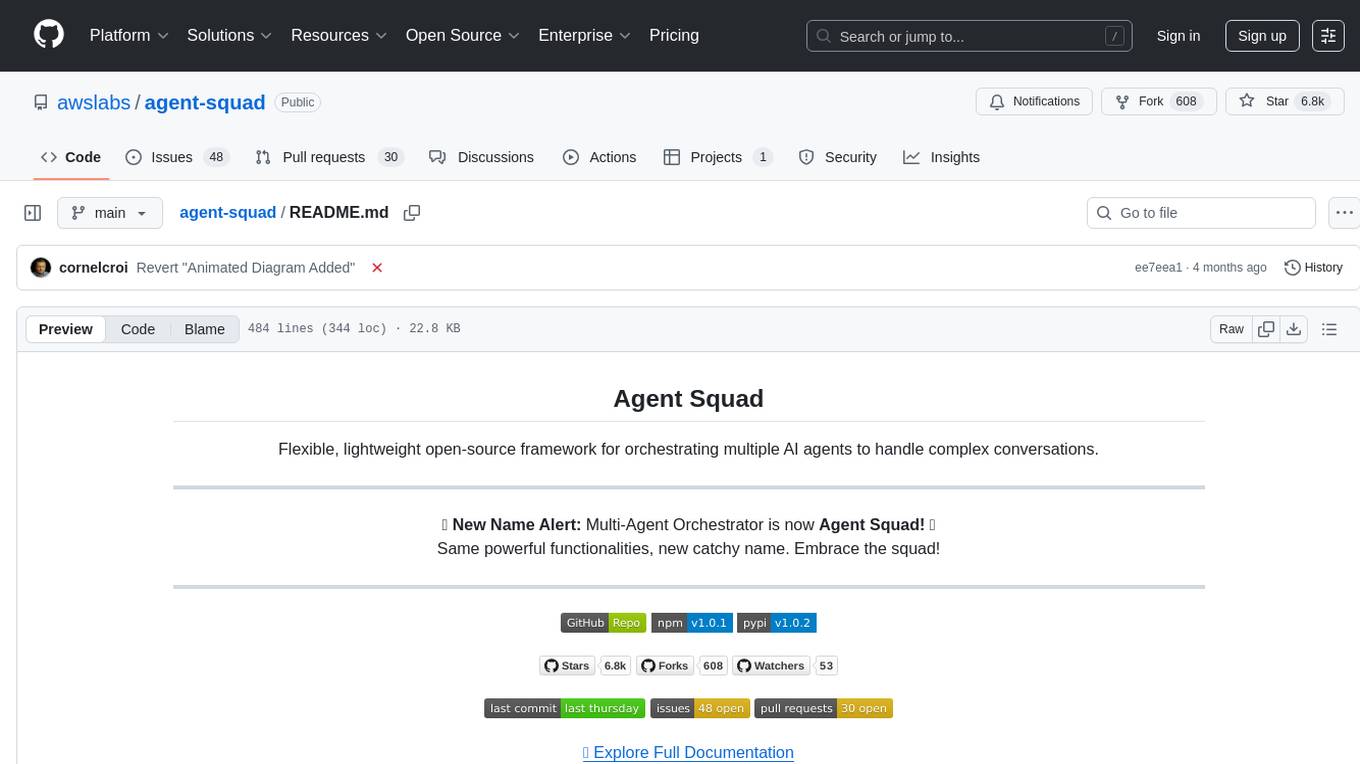

agent-squad

Flexible and powerful framework for managing multiple AI agents and handling complex conversations

Stars: 6833

Agent Squad is a flexible, lightweight open-source framework for orchestrating multiple AI agents to handle complex conversations. It intelligently routes queries, maintains context across interactions, and offers pre-built components for quick deployment. The system allows easy integration of custom agents and conversation messages storage solutions, making it suitable for various applications from simple chatbots to sophisticated AI systems, scaling efficiently.

README:

Flexible, lightweight open-source framework for orchestrating multiple AI agents to handle complex conversations.

📢 New Name Alert: Multi-Agent Orchestrator is now Agent Squad! 🎉

Same powerful functionalities, new catchy name. Embrace the squad!

- 🧠 Intelligent intent classification — Dynamically route queries to the most suitable agent based on context and content.

- 🔤 Dual language support — Fully implemented in both Python and TypeScript.

- 🌊 Flexible agent responses — Support for both streaming and non-streaming responses from different agents.

- 📚 Context management — Maintain and utilize conversation context across multiple agents for coherent interactions.

- 🔧 Extensible architecture — Easily integrate new agents or customize existing ones to fit your specific needs.

- 🌐 Universal deployment — Run anywhere - from AWS Lambda to your local environment or any cloud platform.

- 📦 Pre-built agents and classifiers — A variety of ready-to-use agents and multiple classifier implementations available.

The Agent Squad is a flexible framework for managing multiple AI agents and handling complex conversations. It intelligently routes queries and maintains context across interactions.

The system offers pre-built components for quick deployment, while also allowing easy integration of custom agents and conversation messages storage solutions.

This adaptability makes it suitable for a wide range of applications, from simple chatbots to sophisticated AI systems, accommodating diverse requirements and scaling efficiently.

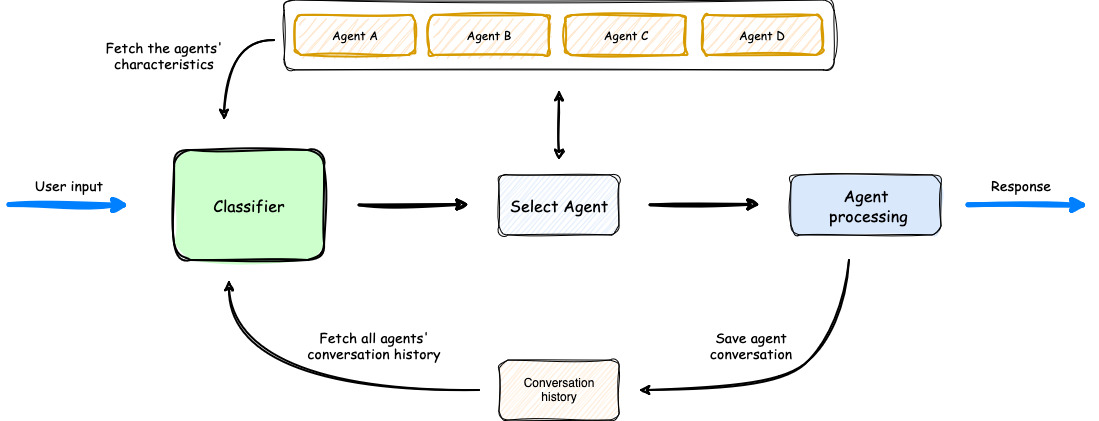

- The process begins with user input, which is analyzed by a Classifier.

- The Classifier leverages both Agents' Characteristics and Agents' Conversation history to select the most appropriate agent for the task.

- Once an agent is selected, it processes the user input.

- The orchestrator then saves the conversation, updating the Agents' Conversation history, before delivering the response back to the user.
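The flow above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the Agent Squad implementation: `ToyAgent`, `ToyOrchestrator`, and the word-overlap scoring are hypothetical stand-ins for the framework's agents and classifiers, and the sketch scores on agent descriptions only.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAgent:
    """Hypothetical stand-in for an agent: a name plus a description."""
    name: str
    description: str

    def process(self, user_input: str, history: list[str]) -> str:
        # A real agent would call a model or a bot here.
        return f"[{self.name}] handled: {user_input}"


@dataclass
class ToyOrchestrator:
    agents: list[ToyAgent]
    # Conversation history keyed by (user_id, session_id).
    history: dict[tuple[str, str], list[str]] = field(default_factory=dict)

    def classify(self, user_input: str) -> ToyAgent:
        """Pick the agent whose description shares the most words with the input."""
        words = set(user_input.lower().split())
        return max(
            self.agents,
            key=lambda a: len(words & set(a.description.lower().split())),
        )

    def route_request(self, user_input: str, user_id: str, session_id: str) -> str:
        past = self.history.setdefault((user_id, session_id), [])
        agent = self.classify(user_input)        # classifier selects an agent
        reply = agent.process(user_input, past)  # selected agent processes the input
        past.extend([user_input, reply])         # conversation is saved
        return reply                             # response goes back to the user
```

Calling `route_request("book a flight", "user123", "session456")` on an orchestrator holding a travel-flavored and a weather-flavored `ToyAgent` would select the travel one and append both the input and the reply to that session's history.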

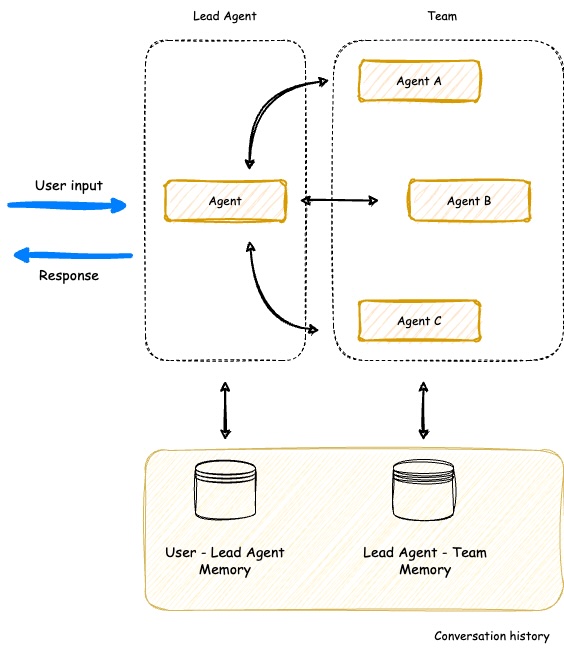

The Agent Squad now includes a powerful new SupervisorAgent that enables sophisticated team coordination between multiple specialized agents. This new component implements an "agent-as-tools" architecture, allowing a lead agent to coordinate a team of specialized agents in parallel, maintaining context and delivering coherent responses.

Key capabilities:

- 🤝 Team Coordination - Coordinate multiple specialized agents working together on complex tasks

- ⚡ Parallel Processing - Execute multiple agent queries simultaneously

- 🧠 Smart Context Management - Maintain conversation history across all team members

- 🔄 Dynamic Delegation - Intelligently distribute subtasks to appropriate team members

- 🤖 Agent Compatibility - Works with all agent types (Bedrock, Anthropic, Lex, etc.)

The SupervisorAgent can be used in two powerful ways:

- Direct Usage - Call it directly when you need dedicated team coordination for specific tasks

- Classifier Integration - Add it as an agent within the classifier to build complex hierarchical systems with multiple specialized teams
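To make the parallel "agent-as-tools" idea concrete, here is a small sketch using only `asyncio`. It does not use the SupervisorAgent API; `flight_agent`, `hotel_agent`, `supervise`, and `run_team` are hypothetical names, and the team members are plain coroutines standing in for real agents.

```python
import asyncio


async def flight_agent(query: str) -> str:
    await asyncio.sleep(0.01)  # simulate I/O latency of a real agent call
    return f"flights for '{query}'"


async def hotel_agent(query: str) -> str:
    await asyncio.sleep(0.01)
    return f"hotels for '{query}'"


async def supervise(query: str, team: dict) -> dict:
    """Send the query to every team member concurrently and collect replies by name."""
    names = list(team)
    # gather() runs the coroutines concurrently and preserves argument order.
    replies = await asyncio.gather(*(team[name](query) for name in names))
    return dict(zip(names, replies))


def run_team(query: str) -> dict:
    team = {"flights": flight_agent, "hotels": hotel_agent}
    return asyncio.run(supervise(query, team))
```

A lead agent would then merge the per-member replies into one answer; that step is left out here because it depends on the model in use.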

Here are just a few examples where this agent can be used:

- Customer Support Teams with specialized sub-teams

- AI Movie Production Studios

- Travel Planning Services

- Product Development Teams

- Healthcare Coordination Systems

Learn more about SupervisorAgent →

In the screen recording below, we demonstrate an extended version of the demo app that uses 6 specialized agents:

- Travel Agent: Powered by an Amazon Lex Bot

- Weather Agent: Utilizes a Bedrock LLM Agent with a tool to query the open-meteo API

- Restaurant Agent: Implemented as an Amazon Bedrock Agent

- Math Agent: Utilizes a Bedrock LLM Agent with two tools for executing mathematical operations

- Tech Agent: A Bedrock LLM Agent designed to answer questions on technical topics

- Health Agent: A Bedrock LLM Agent focused on addressing health-related queries

Watch as the system seamlessly switches context between diverse topics, from booking flights to checking weather, solving math problems, and providing health information. Notice how the appropriate agent is selected for each query, maintaining coherence even with brief follow-up inputs.

The demo highlights the system's ability to handle complex, multi-turn conversations while preserving context and leveraging specialized agents across various domains.
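One way to picture how conversation history keeps brief follow-ups on track is a fallback rule: when the new input matches no agent description, stay with the agent that handled the previous turn. The sketch below is an assumption-laden illustration (`pick_agent` and the word-overlap score are hypothetical), not how the framework's classifiers work internally.

```python
from typing import Dict, Optional


def pick_agent(
    user_input: str,
    descriptions: Dict[str, str],
    last_agent: Optional[str],
) -> Optional[str]:
    """Choose an agent by word overlap; fall back to the previous agent on no match."""
    words = set(user_input.lower().split())
    scores = {
        name: len(words & set(text.lower().split()))
        for name, text in descriptions.items()
    }
    best = max(scores, key=scores.get)
    if scores[best] == 0:
        # Nothing in the input points to a specific agent (e.g. "tomorrow morning"),
        # so keep the conversation with whoever answered last.
        return last_agent
    return best
```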

Get hands-on experience with the Agent Squad through our diverse set of examples:

- Demo Applications:

  - Streamlit Global Demo: A single Streamlit application showcasing multiple demos, including:

    - AI Movie Production Studio

    - AI Travel Planner

  - Chat Demo App:

    - Explore multiple specialized agents handling various domains like travel, weather, math, and health

  - E-commerce Support Simulator: Experience AI-powered customer support with:

    - Automated response generation for common queries

    - Intelligent routing of complex issues to human support

    - Real-time chat and email-style communication

    - Human-in-the-loop interactions for complex cases

- Sample Projects: Explore our example implementations in the `examples` folder:

  - chat-demo-app: Web-based chat interface with multiple specialized agents

  - ecommerce-support-simulator: AI-powered customer support system

  - chat-chainlit-app: Chat application built with Chainlit

  - fast-api-streaming: FastAPI implementation with streaming support

  - text-2-structured-output: Natural Language to Structured Data

  - bedrock-inline-agents: Bedrock Inline Agents sample

  - bedrock-prompt-routing: Bedrock Prompt Routing sample code

Examples are available in both Python and TypeScript. Check out our documentation for comprehensive guides on setting up and using the Agent Squad framework!

Discover creative implementations and diverse applications of the Agent Squad:

- From 'Bonjour' to 'Boarding Pass': Multilingual AI Chatbot for Flight Reservations

  This article demonstrates how to build a multilingual chatbot using the Agent Squad framework. The article explains how to use an Amazon Lex bot as an agent, along with 2 other new agents to make it work in many languages with just a few lines of code.

- Beyond Auto-Replies: Building an AI-Powered E-commerce Support system

  This article demonstrates how to build an AI-driven multi-agent system for automated e-commerce customer email support. It covers the architecture and setup of specialized AI agents using the Agent Squad framework, integrating automated processing with human-in-the-loop oversight. The guide explores email ingestion, intelligent routing, automated response generation, and human verification, providing a comprehensive approach to balancing AI efficiency with human expertise in customer support.

- Speak Up, AI: Voicing Your Agents with Amazon Connect, Lex, and Bedrock

  This article demonstrates how to build an AI customer call center. It covers the architecture and setup of specialized AI agents using the Agent Squad framework interacting with voice via Amazon Connect and Amazon Lex.

- Unlock Bedrock InvokeInlineAgent API's Hidden Potential

  Learn how to scale Amazon Bedrock Agents beyond knowledge base limitations using the Agent Squad framework and InvokeInlineAgent API. This article demonstrates dynamic agent creation and knowledge base selection for enterprise-scale AI applications.

- Supercharging Amazon Bedrock Flows

  Learn how to enhance Amazon Bedrock Flows with conversation memory and multi-flow orchestration using the Agent Squad framework. This guide shows how to overcome Bedrock Flows' limitations to build more sophisticated AI workflows with persistent memory and intelligent routing between flows.

- 🇫🇷 Podcast (French): L'orchestrateur multi-agents : Un orchestrateur open source pour vos agents IA

- 🇬🇧 Podcast (English): An Orchestrator for Your AI Agents

🔄 `multi-agent-orchestrator` becomes `agent-squad`

    npm install agent-squad

The following example demonstrates how to use the Agent Squad with two different types of agents: a Bedrock LLM Agent with Converse API support and a Lex Bot Agent. This showcases the flexibility of the system in integrating various AI services.

    import { AgentSquad, BedrockLLMAgent, LexBotAgent } from "agent-squad";

    const orchestrator = new AgentSquad();

    // Add a Bedrock LLM Agent with Converse API support
    orchestrator.addAgent(
      new BedrockLLMAgent({
        name: "Tech Agent",
        description:
          "Specializes in technology areas including software development, hardware, AI, cybersecurity, blockchain, cloud computing, emerging tech innovations, and pricing/costs related to technology products and services.",
        streaming: true
      })
    );

    // Add a Lex Bot Agent for handling travel-related queries
    orchestrator.addAgent(
      new LexBotAgent({
        name: "Travel Agent",
        description: "Helps users book and manage their flight reservations",
        botId: process.env.LEX_BOT_ID,
        botAliasId: process.env.LEX_BOT_ALIAS_ID,
        localeId: "en_US",
      })
    );

    // Example usage
    const response = await orchestrator.routeRequest(
      "I want to book a flight",
      'user123',
      'session456'
    );

    // Handle the response (streaming or non-streaming)
    if (response.streaming == true) {
      console.log("\n** RESPONSE STREAMING ** \n");
      // Send metadata immediately
      console.log(`> Agent ID: ${response.metadata.agentId}`);
      console.log(`> Agent Name: ${response.metadata.agentName}`);
      console.log(`> User Input: ${response.metadata.userInput}`);
      console.log(`> User ID: ${response.metadata.userId}`);
      console.log(`> Session ID: ${response.metadata.sessionId}`);
      console.log(
        `> Additional Parameters:`,
        response.metadata.additionalParams
      );
      console.log(`\n> Response: `);

      // Stream the content
      for await (const chunk of response.output) {
        if (typeof chunk === "string") {
          process.stdout.write(chunk);
        } else {
          console.error("Received unexpected chunk type:", typeof chunk);
        }
      }
    } else {
      // Handle non-streaming response (AgentProcessingResult)
      console.log("\n** RESPONSE ** \n");
      console.log(`> Agent ID: ${response.metadata.agentId}`);
      console.log(`> Agent Name: ${response.metadata.agentName}`);
      console.log(`> User Input: ${response.metadata.userInput}`);
      console.log(`> User ID: ${response.metadata.userId}`);
      console.log(`> Session ID: ${response.metadata.sessionId}`);
      console.log(
        `> Additional Parameters:`,
        response.metadata.additionalParams
      );
      console.log(`\n> Response: ${response.output}`);
    }

🔄 `multi-agent-orchestrator` becomes `agent-squad`

    # Optional: Set up a virtual environment
    python -m venv venv
    source venv/bin/activate  # On Windows use `venv\Scripts\activate`
    pip install "agent-squad[aws]"

Here's a comparable Python example demonstrating the use of the Agent Squad with two Bedrock LLM Agents (a Tech Agent and a Health Agent):

    import asyncio
    import sys

    from agent_squad.orchestrator import AgentSquad
    from agent_squad.agents import BedrockLLMAgent, BedrockLLMAgentOptions, AgentStreamResponse

    orchestrator = AgentSquad()

    tech_agent = BedrockLLMAgent(BedrockLLMAgentOptions(
        name="Tech Agent",
        streaming=True,
        description=(
            "Specializes in technology areas including software development, hardware, AI, "
            "cybersecurity, blockchain, cloud computing, emerging tech innovations, and pricing/costs "
            "related to technology products and services."
        ),
        model_id="anthropic.claude-3-sonnet-20240229-v1:0",
    ))
    orchestrator.add_agent(tech_agent)

    health_agent = BedrockLLMAgent(BedrockLLMAgentOptions(
        name="Health Agent",
        streaming=True,
        description="Specializes in health and well being",
    ))
    orchestrator.add_agent(health_agent)

    async def main():
        # Example usage
        response = await orchestrator.route_request(
            "What is AWS Lambda?",
            'user123',
            'session456',
            {},
            True
        )

        # Handle the response (streaming or non-streaming)
        if response.streaming:
            print("\n** RESPONSE STREAMING ** \n")
            # Send metadata immediately
            print(f"> Agent ID: {response.metadata.agent_id}")
            print(f"> Agent Name: {response.metadata.agent_name}")
            print(f"> User Input: {response.metadata.user_input}")
            print(f"> User ID: {response.metadata.user_id}")
            print(f"> Session ID: {response.metadata.session_id}")
            print(f"> Additional Parameters: {response.metadata.additional_params}")
            print("\n> Response: ")

            # Stream the content
            async for chunk in response.output:
                if isinstance(chunk, AgentStreamResponse):
                    print(chunk.text, end='', flush=True)
                else:
                    print(f"Received unexpected chunk type: {type(chunk)}", file=sys.stderr)
        else:
            # Handle non-streaming response (AgentProcessingResult)
            print("\n** RESPONSE ** \n")
            print(f"> Agent ID: {response.metadata.agent_id}")
            print(f"> Agent Name: {response.metadata.agent_name}")
            print(f"> User Input: {response.metadata.user_input}")
            print(f"> User ID: {response.metadata.user_id}")
            print(f"> Session ID: {response.metadata.session_id}")
            print(f"> Additional Parameters: {response.metadata.additional_params}")
            print(f"\n> Response: {response.output.content}")

    if __name__ == "__main__":
        asyncio.run(main())

These examples showcase:

- The use of a Bedrock LLM Agent with Converse API support, allowing for multi-turn conversations.

- Integration of a Lex Bot Agent for specialized tasks (in this case, travel-related queries).

- The orchestrator's ability to route requests to the most appropriate agent based on the input.

- Handling of both streaming and non-streaming responses from different types of agents.
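The streaming versus non-streaming branch in the examples can be reduced to one helper that accepts either shape of output. The sketch below is self-contained and does not import the framework: `fake_stream` and `collect_output` are hypothetical names, and plain strings stand in for the framework's chunk and result types.

```python
import asyncio
from typing import AsyncIterator, Union


async def fake_stream() -> AsyncIterator[str]:
    """Stand-in for a streaming agent output: yields text chunks one by one."""
    for chunk in ["AWS ", "Lambda ", "is serverless."]:
        yield chunk


async def collect_output(output: Union[str, AsyncIterator[str]]) -> str:
    """Return the full text whether `output` is a plain string or an async stream."""
    if isinstance(output, str):
        return output  # non-streaming: the text is already complete
    parts = []
    async for chunk in output:  # streaming: consume chunks as they arrive
        parts.append(chunk)
    return "".join(parts)
```

In a real application the streaming branch would usually forward each chunk to the client as it arrives instead of buffering the whole reply.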

The Agent Squad is designed with a modular architecture, allowing you to install only the components you need while ensuring you always get the core functionality.

1. AWS Integration:

       pip install "agent-squad[aws]"

   Includes core orchestration functionality with comprehensive AWS service integrations (BedrockLLMAgent, AmazonBedrockAgent, LambdaAgent, etc.)

2. Anthropic Integration:

       pip install "agent-squad[anthropic]"

3. OpenAI Integration:

       pip install "agent-squad[openai]"

   Adds OpenAI's GPT models for agents and classification, along with core packages.

4. Full Installation:

       pip install "agent-squad[all]"

   Includes all optional dependencies for maximum flexibility.
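With optional extras, it can help to check at startup which integrations are actually importable. The sketch below uses only the standard library. The mapping from extra name to module (`boto3`, `anthropic`, `openai`) is an assumption made for illustration and is not taken from the package metadata; `available_extras` is a hypothetical helper.

```python
from importlib.util import find_spec
from typing import Dict

# Assumed mapping: extra name -> a top-level module that extra would make importable.
ASSUMED_EXTRA_MODULES = {
    "aws": "boto3",
    "anthropic": "anthropic",
    "openai": "openai",
}


def available_extras(modules: Dict[str, str] = ASSUMED_EXTRA_MODULES) -> Dict[str, bool]:
    """Report, per extra, whether its module can be found without importing it."""
    return {extra: find_spec(module) is not None for extra, module in modules.items()}
```

An application could use the result to register only the agents whose dependencies are present.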

Have something to share, discuss, or brainstorm? We’d love to connect with you and hear about your journey with the Agent Squad framework. Here’s how you can get involved:

- 🙌 Show & Tell: Got a success story, cool project, or creative implementation? Share it with us in the Show and Tell section. Your work might inspire the entire community! 🎉

- 💬 General Discussion: Have questions, feedback, or suggestions? Join the conversation in our General Discussions section. It’s the perfect place to connect with other users and contributors.

- 💡 Ideas: Thinking of a new feature or improvement? Share your thoughts in the Ideas section. We’re always open to exploring innovative ways to make the orchestrator even better!

Let’s collaborate, learn from each other, and build something incredible together! 🚀

This repository follows an Issue-First policy:

- Every pull request must be linked to an existing issue

- If there isn't an issue for the changes you want to make, please create one first

- Use the issue to discuss proposed changes before investing time in implementation

When creating a pull request, you must link it to an issue using one of these methods:

- Include a reference in the PR description using keywords:

  - Fixes #123

  - Resolves #123

  - Closes #123

- Manually link the PR to an issue through GitHub's UI:

  - On the right sidebar of your PR, click "Development" and then "Link an issue"

We use GitHub Actions to automatically verify that each PR is linked to an issue. PRs without linked issues will not pass required checks and cannot be merged.
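To check a PR description locally before opening the pull request, a small regular expression over GitHub's closing keywords is enough. This is a sketch of the idea only; it is not the repository's actual workflow, and `linked_issues` is a hypothetical helper.

```python
import re
from typing import List

# GitHub closing keywords (close/closes/closed, fix/fixes/fixed,
# resolve/resolves/resolved) followed by an issue reference such as "#123".
LINK_PATTERN = re.compile(
    r"\b(?:close[sd]?|fix(?:e[sd])?|resolve[sd]?)\s+#(\d+)",
    re.IGNORECASE,
)


def linked_issues(pr_description: str) -> List[int]:
    """Return the issue numbers referenced with a closing keyword."""
    return [int(number) for number in LINK_PATTERN.findall(pr_description)]
```

An empty result means the description contains no keyword-style link, so the PR would need to be linked manually through the GitHub UI.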

This policy helps us:

- Maintain clear documentation of changes and their purposes

- Ensure community discussion before implementation

- Keep a structured development process

- Make project history more traceable and understandable

Once your proposal is approved, here are the next steps:

- 📚 Review our Contributing Guide

- 💡 Create a GitHub Issue

- 🔨 Submit a pull request

✅ Follow existing project structure and include documentation for new features.

🌟 Stay Updated: Star the repository to be notified about new features, improvements, and exciting developments in the Agent Squad framework!

Big shout out to our awesome contributors! Thank you for making this project better! 🌟 ⭐ 🚀

Please see our contributing guide for guidelines on how to propose bugfixes and improvements.

This project is licensed under the Apache 2.0 license - see the LICENSE file for details.

This project uses the JetBrainsMono NF font, licensed under the SIL Open Font License 1.1. For full license details, see FONT-LICENSE.md.


Alternative AI tools for agent-squad

Similar Open Source Tools


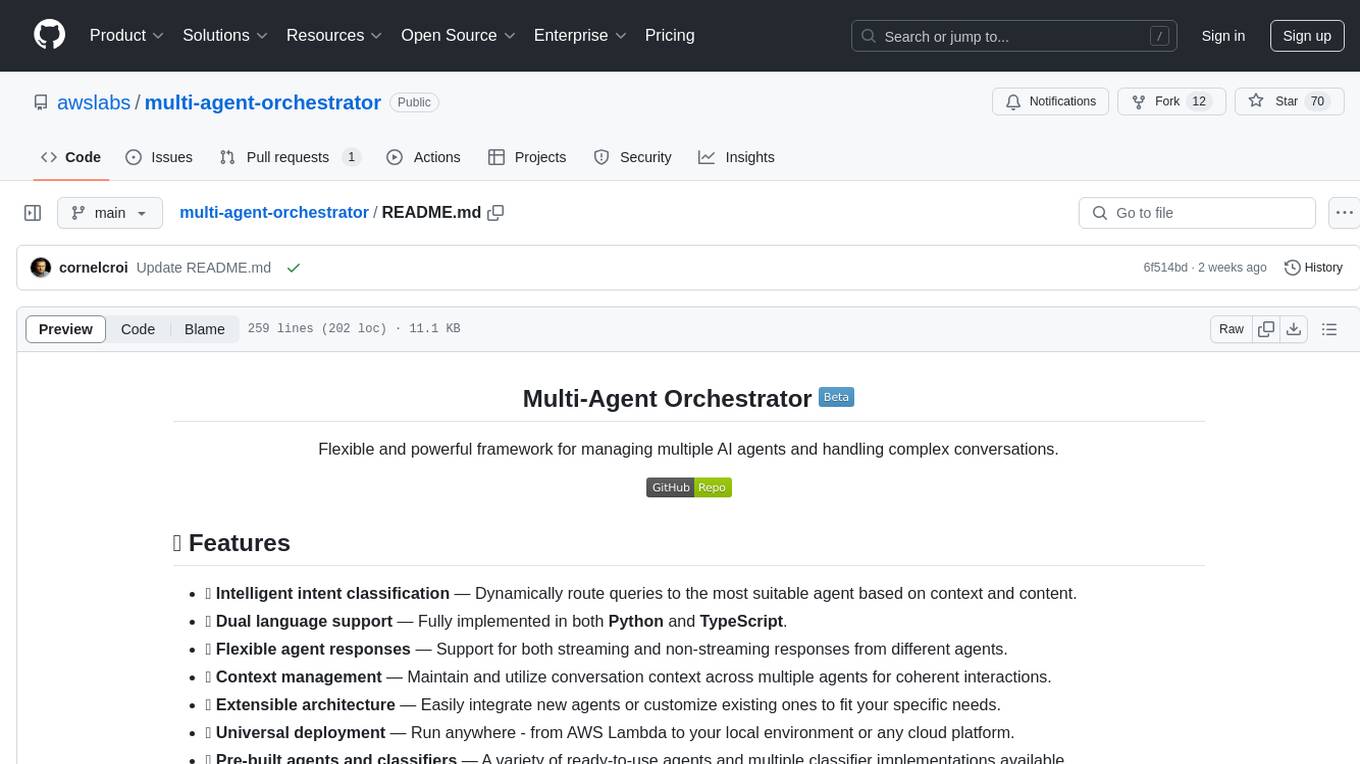

multi-agent-orchestrator

Multi-Agent Orchestrator is a flexible and powerful framework for managing multiple AI agents and handling complex conversations. It intelligently routes queries to the most suitable agent based on context and content, supports dual language implementation in Python and TypeScript, offers flexible agent responses, context management across agents, extensible architecture for customization, universal deployment options, and pre-built agents and classifiers. It is suitable for various applications, from simple chatbots to sophisticated AI systems, accommodating diverse requirements and scaling efficiently.

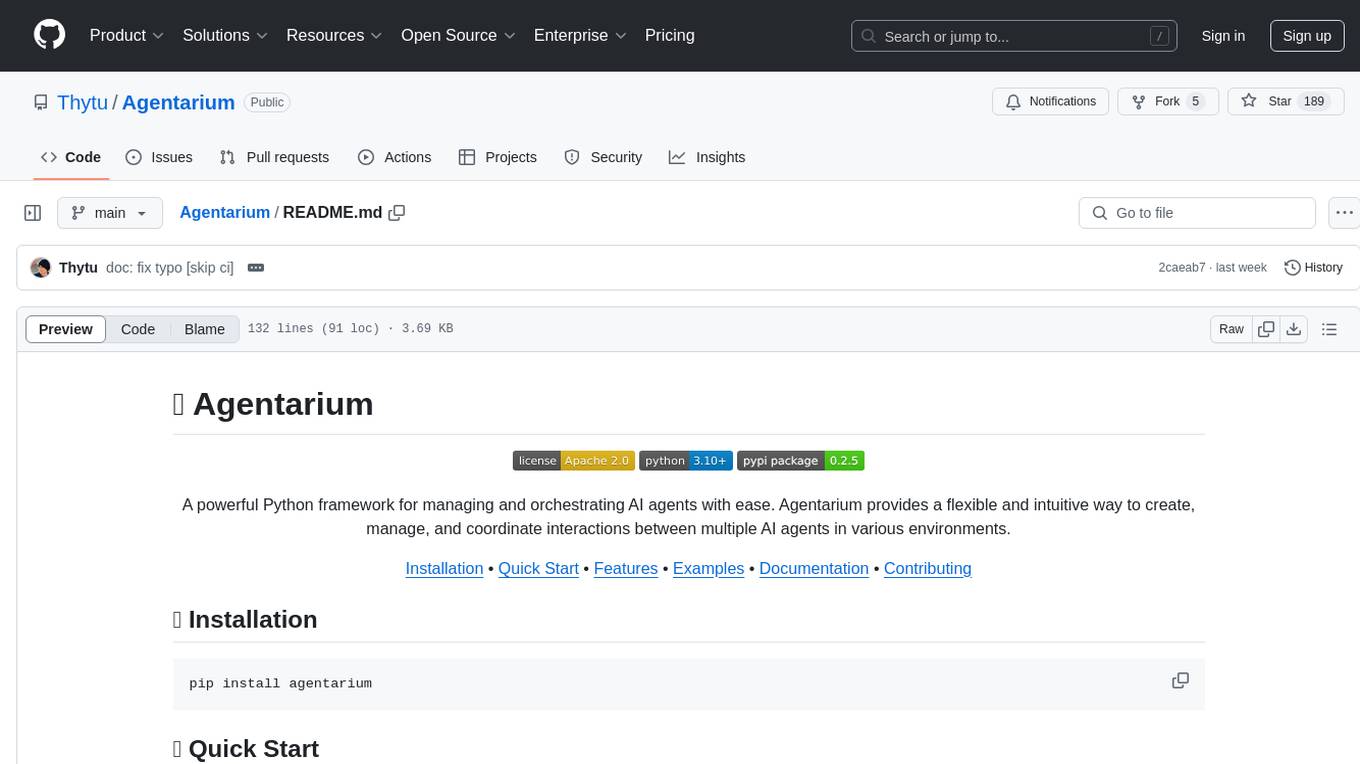

Agentarium

Agentarium is a powerful Python framework for managing and orchestrating AI agents with ease. It provides a flexible and intuitive way to create, manage, and coordinate interactions between multiple AI agents in various environments. The framework offers advanced agent management, robust interaction management, a checkpoint system for saving and restoring agent states, data generation through agent interactions, performance optimization, flexible environment configuration, and an extensible architecture for customization.

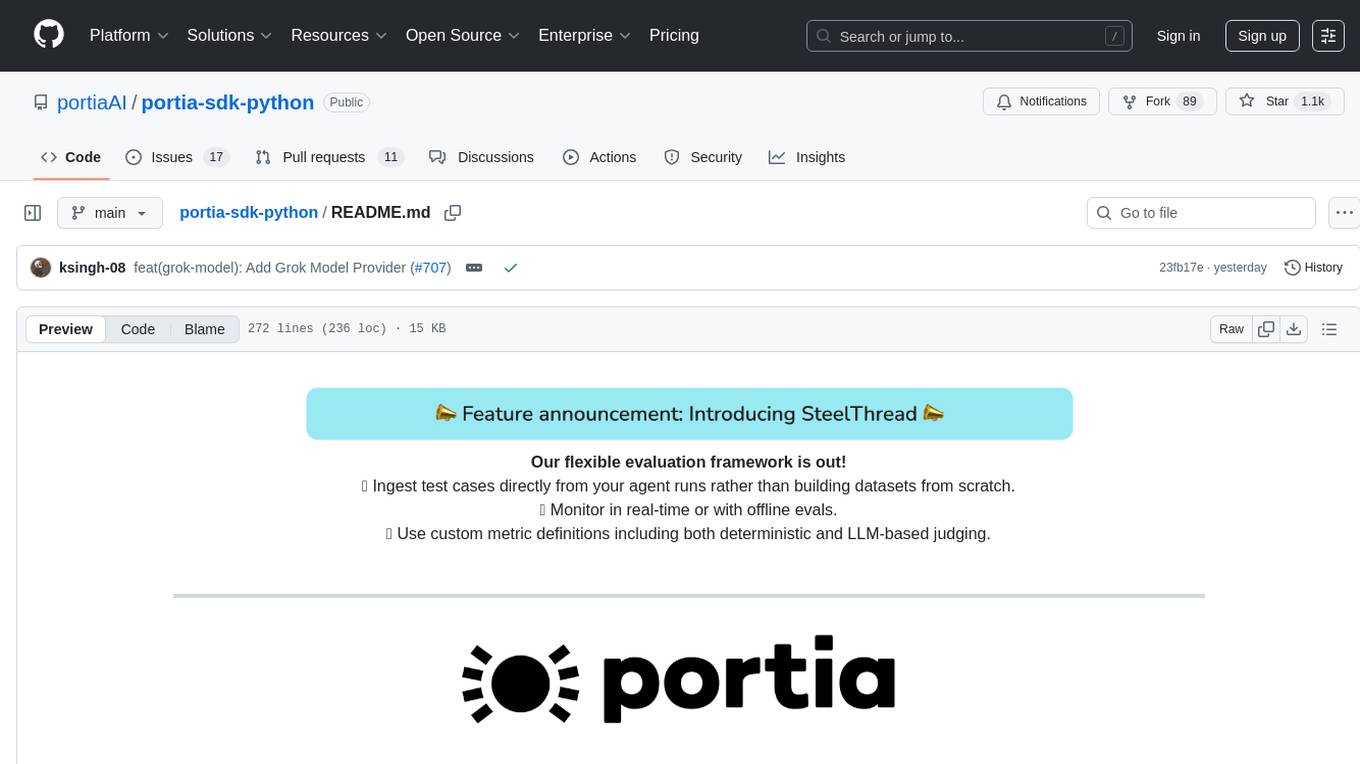

portia-sdk-python

Portia AI is an open source developer framework for predictable, stateful, authenticated agentic workflows. It allows developers to have oversight over their multi-agent deployments and focuses on production readiness. The framework supports iterating on agents' reasoning, extensive tool support including MCP support, authentication for API and web agents, and is production-ready with features like attribute multi-agent runs, large inputs and outputs storage, and connecting any LLM. Portia AI aims to provide a flexible and reliable platform for developing AI agents with tools, authentication, and smart control.

TaskingAI

TaskingAI brings Firebase's simplicity to **AI-native app development**. The platform enables the creation of GPTs-like multi-tenant applications using a wide range of LLMs from various providers. It features distinct, modular functions such as Inference, Retrieval, Assistant, and Tool, seamlessly integrated to enhance the development process. TaskingAI’s cohesive design ensures an efficient, intelligent, and user-friendly experience in AI application development.

DevoxxGenieIDEAPlugin

Devoxx Genie is a Java-based IntelliJ IDEA plugin that integrates with local and cloud-based LLM providers to aid in reviewing, testing, and explaining project code. It supports features like code highlighting, chat conversations, and adding files/code snippets to context. Users can modify REST endpoints and LLM parameters in settings, including support for cloud-based LLMs. The plugin requires IntelliJ version 2023.3.4 and JDK 17. Building and publishing the plugin is done using Gradle tasks. Users can select an LLM provider, choose code, and use commands like review, explain, or generate unit tests for code analysis.

sre

SmythOS is an operating system designed for building, deploying, and managing intelligent AI agents at scale. It provides a unified SDK and resource abstraction layer for various AI services, making it easy to scale and flexible. With an agent-first design, developer-friendly SDK, modular architecture, and enterprise security features, SmythOS offers a robust foundation for AI workloads. The system is built with a philosophy inspired by traditional operating system kernels, ensuring autonomy, control, and security for AI agents. SmythOS aims to make shipping production-ready AI agents accessible and open for everyone in the coming Internet of Agents era.

Mira

Mira is an agentic AI library designed for automating company research by gathering information from various sources like company websites, LinkedIn profiles, and Google Search. It utilizes a multi-agent architecture to collect and merge data points into a structured profile with confidence scores and clear source attribution. The core library is framework-agnostic and can be integrated into applications, pipelines, or custom workflows. Mira offers features such as real-time progress events, confidence scoring, company criteria matching, and built-in services for data gathering. The tool is suitable for users looking to streamline company research processes and enhance data collection efficiency.

agent-framework

Microsoft Agent Framework is a comprehensive multi-language framework for building, orchestrating, and deploying AI agents with support for both .NET and Python implementations. It provides everything from simple chat agents to complex multi-agent workflows with graph-based orchestration. The framework offers features like graph-based workflows, AF Labs for experimental packages, DevUI for interactive developer UI, support for Python and C#/.NET, observability with OpenTelemetry integration, multiple agent provider support, and flexible middleware system. Users can find documentation, tutorials, and user guides to get started with building agents and workflows. The framework also supports various LLM providers and offers contributor resources like a contributing guide, Python development guide, design documents, and architectural decision records.

beeai-platform

BeeAI is an open-source platform that simplifies the discovery, running, and sharing of AI agents across different frameworks. It addresses challenges such as framework fragmentation, deployment complexity, and discovery issues by providing a standardized platform for individuals and teams to access agents easily. With features like a centralized agent catalog, framework-agnostic interfaces, containerized agents, and consistent user experiences, BeeAI aims to streamline the process of working with AI agents for both developers and teams.

trip_planner_agent

VacAIgent is an AI tool that automates and enhances trip planning by leveraging the CrewAI framework. It integrates a user-friendly Streamlit interface for interactive travel planning. Users can input preferences and receive tailored travel plans with the help of autonomous AI agents. The tool allows for collaborative decision-making on cities and crafting complete itineraries based on specified preferences, all accessible via a streamlined Streamlit user interface. VacAIgent can be customized to use different AI models like GPT-3.5 or local models like Ollama for enhanced privacy and customization.

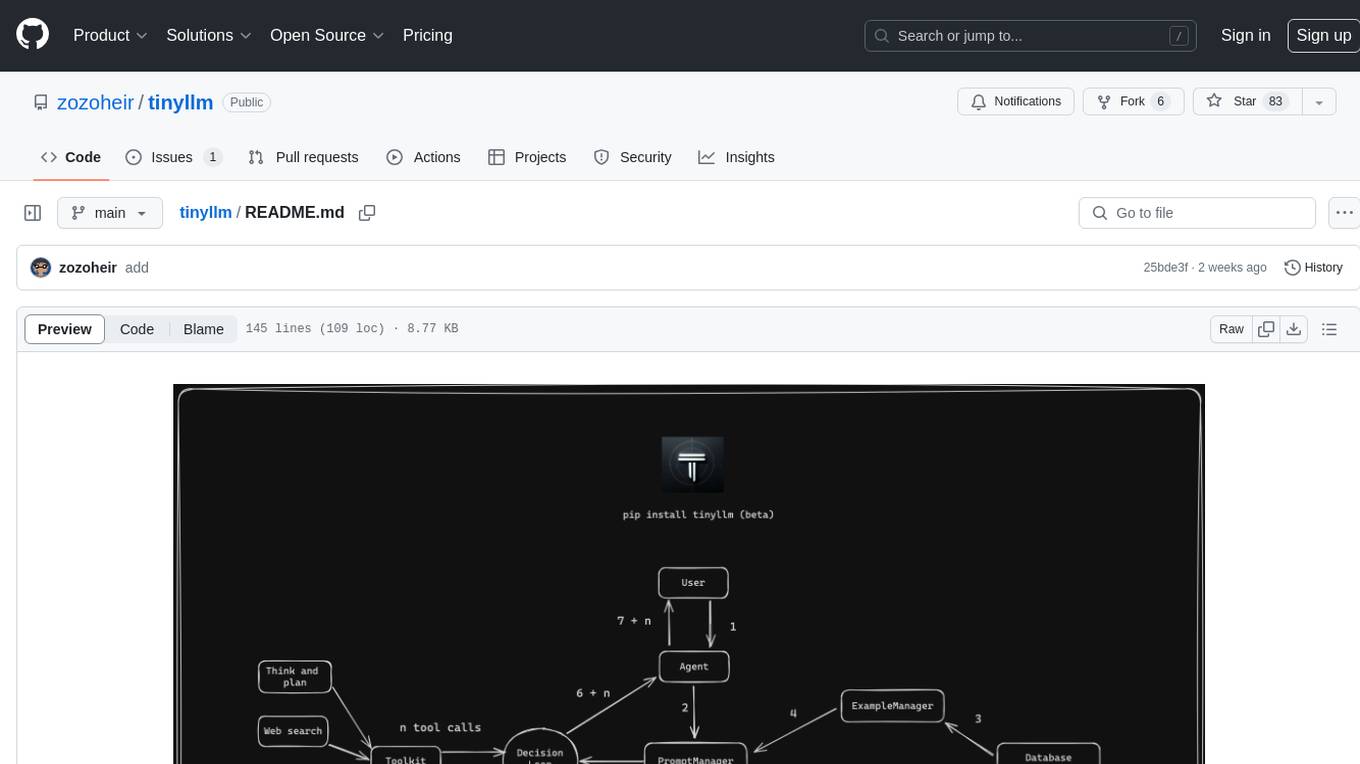

tinyllm

tinyllm is a lightweight framework designed for developing, debugging, and monitoring LLM and Agent powered applications at scale. It aims to simplify code while enabling users to create complex agents or LLM workflows in production. The core classes, Function and FunctionStream, standardize and control LLM, ToolStore, and relevant calls for scalable production use. It offers structured handling of function execution, including input/output validation, error handling, evaluation, and more, all while maintaining code readability. Users can create chains with prompts, LLM models, and evaluators in a single file without the need for extensive class definitions or spaghetti code. Additionally, tinyllm integrates with various libraries like Langfuse and provides tools for prompt engineering, observability, logging, and finite state machine design.

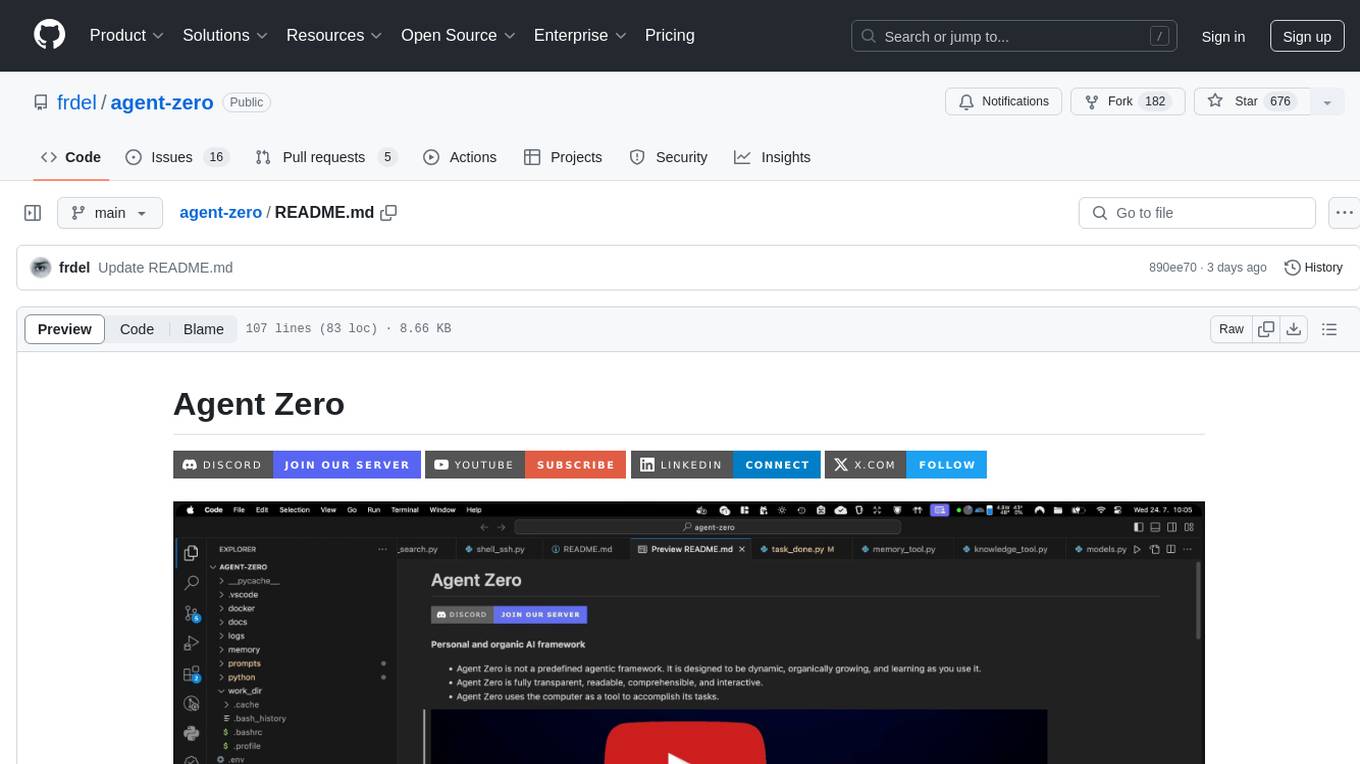

agent-zero

Agent Zero is a personal and organic AI framework designed to be dynamic, organically growing, and learning as you use it. It is fully transparent, readable, comprehensible, customizable, and interactive. The framework uses the computer as a tool to accomplish tasks, with no single-purpose tools pre-programmed. It emphasizes multi-agent cooperation, complete customization, and extensibility. Communication is key in this framework, allowing users to give proper system prompts and instructions to achieve desired outcomes. Agent Zero is capable of dangerous actions and should be run in an isolated environment. The framework is prompt-based, highly customizable, and requires a specific environment to run effectively.

embodied-agents

Embodied Agents is a toolkit for integrating large multi-modal models into existing robot stacks with just a few lines of code. It provides consistency, reliability, scalability, and is configurable to any observation and action space. The toolkit is designed to reduce complexities involved in setting up inference endpoints, converting between different model formats, and collecting/storing datasets. It aims to facilitate data collection and sharing among roboticists by providing Python-first abstractions that are modular, extensible, and applicable to a wide range of tasks. The toolkit supports asynchronous and remote thread-safe agent execution for maximal responsiveness and scalability, and is compatible with various APIs like HuggingFace Spaces, Datasets, Gymnasium Spaces, Ollama, and OpenAI. It also offers automatic dataset recording and optional uploads to the HuggingFace hub.

Director

Director is a framework to build video agents that can reason through complex video tasks like search, editing, compilation, generation, etc. It enables users to summarize videos, search for specific moments, create clips instantly, integrate GenAI projects and APIs, add overlays, generate thumbnails, and more. Built on VideoDB's 'video-as-data' infrastructure, Director is perfect for developers, creators, and teams looking to simplify media workflows and unlock new possibilities.

ai_automation_suggester

An integration for Home Assistant that leverages AI models to understand your unique home environment and propose intelligent automations. By analyzing your entities, devices, areas, and existing automations, the AI Automation Suggester helps you discover new, context-aware use cases you might not have considered, ultimately streamlining your home management and improving efficiency, comfort, and convenience. The tool acts as a personal automation consultant, providing actionable YAML-based automations that can save energy, improve security, enhance comfort, and reduce manual intervention. It turns the complexity of a large Home Assistant environment into actionable insights and tangible benefits.

For similar tasks


FDAbench

FDABench is a benchmark tool designed for evaluating data agents' reasoning ability over heterogeneous data in analytical scenarios. It offers 2,007 tasks across various data sources, domains, difficulty levels, and task types. The tool provides ready-to-use data agent implementations, a DAG-based evaluation system, and a framework for agent-expert collaboration in dataset generation. Key features include data agent implementations, comprehensive evaluation metrics, multi-database support, different task types, extensible framework for custom agent integration, and cost tracking. Users can set up the environment using Python 3.10+ on Linux, macOS, or Windows. FDABench can be installed with a one-command setup or manually. The tool supports API configuration for LLM access and offers quick start guides for database download, dataset loading, and running examples. It also includes features like dataset generation using the PUDDING framework, custom agent integration, evaluation metrics like accuracy and rubric score, and a directory structure for easy navigation.

superagent-py

Superagent is an open-source framework that enables developers to integrate production-ready AI assistants into any application quickly and easily. It provides a Python SDK for interacting with the Superagent API, allowing developers to create, manage, and invoke AI agents. The SDK simplifies the process of building AI-powered applications, making it accessible to developers of all skill levels.

AGiXT

AGiXT is a dynamic Artificial Intelligence Automation Platform engineered to orchestrate efficient AI instruction management and task execution across a multitude of providers. Our solution infuses adaptive memory handling with a broad spectrum of commands to enhance AI's understanding and responsiveness, leading to improved task completion. The platform's smart features, like Smart Instruct and Smart Chat, seamlessly integrate web search, planning strategies, and conversation continuity, transforming the interaction between users and AI. By leveraging a powerful plugin system that includes web browsing and command execution, AGiXT stands as a versatile bridge between AI models and users. With an expanding roster of AI providers, code evaluation capabilities, comprehensive chain management, and platform interoperability, AGiXT is consistently evolving to drive a multitude of applications, affirming its place at the forefront of AI technology.

infra

E2B Infra is a cloud runtime for AI agents. It provides SDKs and CLI to customize and manage environments and run AI agents in the cloud. The infrastructure is deployed using Terraform and is currently only deployable on GCP. The main components of the infrastructure are the API server, daemon running inside instances (sandboxes), Nomad driver for managing instances (sandboxes), and Nomad driver for building environments (templates).

Awesome-European-Tech

Awesome European Tech is an up-to-date list of recommended European projects and companies curated by the community to support and strengthen the European tech ecosystem. It focuses on privacy and sustainability, showcasing companies that adhere to GDPR compliance and sustainability standards. The project aims to highlight and support European startups and projects excelling in privacy, sustainability, and innovation to contribute to a more diverse, resilient, and interconnected global tech landscape.

LarAgent

LarAgent is a framework designed to simplify the creation and management of AI agents within Laravel projects. It offers an Eloquent-like syntax for creating and managing AI agents, Laravel-style artisan commands, flexible agent configuration, structured output handling, image input support, and extensibility. LarAgent supports multiple chat history storage options, custom tool creation, an event system for agent interactions, and multiple providers, and it can be used both in Laravel and in standalone environments. The framework is constantly evolving to improve developer experience, AI capabilities, and security and storage features, and to enable advanced integrations such as a provider fallback system, Laravel Actions integration, and voice chat support.

mcp-gateway-registry

The MCP Gateway & Registry is a unified, enterprise-ready platform that centralizes access to both MCP Servers and AI Agents using the Model Context Protocol (MCP). It serves as a Unified MCP Server Gateway, MCP Servers Registry, and Agent Registry & A2A Communication Hub. The platform integrates with external registries, providing a single control plane for tool access, agent orchestration, and communication patterns. It transforms the chaos of managing individual MCP server configurations into an organized approach with secure, governed access to curated servers and registered agents. The platform supports dynamic tool discovery, autonomous agent communication, and unified policies for server and agent access.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PII

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.