gemini-coder

The free 2M context AI coding assistant

Stars: 67

Gemini Coder is a free 2M context AI coding assistant that allows users to conveniently copy folders and files for chatbots. It provides FIM completions, file refactoring, and AI-suggested changes. The extension is versatile, private, and lightweight, offering unmatched accuracy, speed, and cost in AI assistance. Users have full control over the context and coding conventions included, ensuring high performance and signal to noise ratio. Gemini Coder supports various chatbots and provides quick start guides for chat and FIM completions. It also offers commands for FIM completions, refactoring, applying changes, chat, and context copying. Users can set up custom model providers for API features and contribute to the project through pull requests or discussions. The tool is licensed under the MIT License.

README:

Copy folders and files for chatbots or initialize them hands-free!

Use the free Gemini API for FIM completions, file refactoring and applying AI-suggested changes.

Gemini Coder lets you conveniently copy folders and files for chatbots. With the Connector browser extension you can initialize them hands-free!

The extension uses the same context for built-in API features: Fill-In-the-Middle (FIM) completions and file refactoring. Use Apply Changes to integrate AI responses into your codebase with a single click.

- 100% free & open source: MIT License

- Versatile: Not limited to Gemini or AI Studio

- Private: Does not collect any usage data

- Local: Talks with the web browser via WebSockets

- One of a kind: Lets you use any website for context

- Lightweight: Unpacked build is just ~1MB

Other AI coding tools try to "guess" what context matters, often getting it wrong. Gemini Coder works differently:

- You select which folders and files provide relevant context

- You control what examples of coding conventions to include

- You know how many tokens are used in web chats and FIM/refactoring requests

The result? AI assistance unmatched in accuracy, speed and cost.

Too many tokens fighting for attention may decrease performance due to being too "distracting", diffusing attention too broadly and decreasing a signal to noise ratio in the features. ~Andrej Karpathy

Gemini Coder works with many popular chatbots:

- AI Studio - fully supported (model, temperature, system instructions)

- Gemini

- ChatGPT

- Claude

- GitHub Copilot

- Grok

- DeepSeek

- Mistral

- HuggingChat

- Open WebUI (localhost)

Quick start for chat:

- Open the new Gemini Coder view from the activity bar (sparkles icon).

- Select files/folders for the context.

- Click the copy icon in the toolbar.

- (Optional) Install the browser integration for hands-free initializations.

Quick start for FIM completions:

- Get your API key from Google AI Studio.

- Open VS Code and navigate to settings.

- Search for "Gemini Coder" and paste your API key.

- Open the Command Palette (Ctrl/Cmd + Shift + P) and type "FIM Completion".

- Bind the command to a keyboard shortcut by opening Keyboard Shortcuts (Ctrl/Cmd+K Ctrl/Cmd+S), searching for `Gemini Coder: FIM Completion`, clicking the + icon, and pressing your preferred key combination (e.g. Ctrl/Cmd+I).
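For readers new to fill-in-the-middle, the idea can be sketched in a few lines: the text before and after the cursor is sent to the model, and the reply is inserted between the two halves. The sketch below is an illustration only. The names `FimRequest`, `split_at_cursor`, `build_fim_prompt`, `apply_completion`, the `<missing>` marker, and the prompt wording are all hypothetical and are not taken from the extension's source.

```python
from dataclasses import dataclass


@dataclass
class FimRequest:
    prefix: str  # text before the cursor
    suffix: str  # text after the cursor


def split_at_cursor(document: str, cursor: int) -> FimRequest:
    """Split a document into the parts before and after the cursor offset."""
    if not 0 <= cursor <= len(document):
        raise ValueError("cursor is outside the document")
    return FimRequest(prefix=document[:cursor], suffix=document[cursor:])


def build_fim_prompt(request: FimRequest, context: str = "") -> str:
    """Assemble a chat-style FIM prompt (hypothetical wording and marker)."""
    return (
        f"{context}\n"
        "Fill in the code that belongs at <missing>. "
        "Reply with the missing code only.\n"
        f"{request.prefix}<missing>{request.suffix}"
    )


def apply_completion(request: FimRequest, completion: str) -> str:
    """Insert the model's completion between the prefix and the suffix."""
    return request.prefix + completion + request.suffix
```

The `context` argument stands in for the selected files and folders, which the README says are shared between chat and the API features.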

Commands:

- `Gemini Coder: FIM Completion` - Get fill-in-the-middle completion using default model.

- `Gemini Coder: FIM Completion with...` - Get fill-in-the-middle completion with model selection.

- `Gemini Coder: FIM Completion to Clipboard` - Copy FIM completion content to clipboard.

- `Gemini Coder: Change Default FIM Model` - Change default AI model for FIM completions.

- `Gemini Coder: Refactor this File` - Apply changes based on refactoring instruction.

- `Gemini Coder: Refactor this File with...` - Refactor with model selection.

- `Gemini Coder: Refactor to Clipboard` - Copy refactoring content to clipboard.

- `Gemini Coder: Change Default Refactoring Model` - Change default AI model for refactoring.

- `Gemini Coder: Apply Changes` - Apply changes suggested by AI using clipboard content.

- `Gemini Coder: Apply Changes with...` - Apply changes with model selection.

- `Gemini Coder: Apply Changes to Clipboard` - Copy apply changes content to clipboard.

- `Gemini Coder: Change Default Apply Changes Model` - Change default AI model for applying changes.

- `Gemini Coder: Web Chat` - Enter instructions and open web chat hands-free.

- `Gemini Coder: Chat to Clipboard` - Enter instructions and copy to clipboard.

- `Gemini Coder: Copy Context` - Copy selected files as XML context.
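The README does not show what the copied XML context looks like. As a rough illustration of the idea, selected files could be wrapped like this. The `<files>`/`<file path="...">` layout and the function name `files_to_xml_context` are assumptions made for this sketch, not the extension's actual output format.

```python
from pathlib import Path
from xml.sax.saxutils import quoteattr


def files_to_xml_context(paths, root="."):
    """Wrap the given files (relative to root) in a simple XML envelope.

    Hypothetical layout: one <file path="..."> element per file, with the
    file body stored in a CDATA section so source code needs no escaping.
    """
    root_path = Path(root)
    parts = ["<files>"]
    for path in paths:
        file_path = Path(path)
        text = (root_path / file_path).read_text(encoding="utf-8")
        # A literal "]]>" would end the CDATA section early, so split it.
        safe = text.replace("]]>", "]]]]><![CDATA[>")
        parts.append(f"<file path={quoteattr(file_path.as_posix())}>")
        parts.append(f"<![CDATA[\n{safe}\n]]>")
        parts.append("</file>")
    parts.append("</files>")
    return "\n".join(parts)
```

Calling `files_to_xml_context(["src/main.py", "README.md"])` from a project root would return one string ready to paste into a chat, with each file labeled by its relative path.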

The extension supports OpenAI API-compatible model providers for API features.

    "geminiCoder.providers": [
      {
        "name": "DeepSeek",
        "endpointUrl": "https://api.deepseek.com/v1/chat/completions",
        "bearerToken": "[API KEY]",
        "model": "deepseek-chat",
        "temperature": 0,
        "instruction": ""
      },
      {
        "name": "Mistral Large Latest",
        "endpointUrl": "https://api.mistral.ai/v1/chat/completions",
        "bearerToken": "[API KEY]",
        "model": "mistral-large-latest",
        "temperature": 0,
        "instruction": ""
      },
    ],

All contributions are welcome. Feel free to submit pull requests or create issues and discussions.
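As an illustration of the provider settings above, the sketch below shows how one entry could be turned into an OpenAI-style chat completions request. The field names (`endpointUrl`, `bearerToken`, `model`, `temperature`, `instruction`) come from the example configuration. The function name `build_chat_request` and the choice to send a non-empty `instruction` as a system message are assumptions, not the extension's confirmed behavior.

```python
import json


def build_chat_request(provider, user_message):
    """Return (url, headers, body) for an OpenAI-style chat completions call.

    `provider` is one entry of the providers list. Mapping `instruction`
    to a system message is an assumption made for this sketch.
    """
    messages = []
    if provider.get("instruction"):
        messages.append({"role": "system", "content": provider["instruction"]})
    messages.append({"role": "user", "content": user_message})

    headers = {
        "Authorization": f"Bearer {provider['bearerToken']}",
        "Content-Type": "application/json",
    }
    body = json.dumps(
        {
            "model": provider["model"],
            "temperature": provider["temperature"],
            "messages": messages,
        }
    )
    return provider["endpointUrl"], headers, body
```

The returned triple can be passed to any HTTP client as a POST request. Nothing is sent by the sketch itself, so it can be tried without a real API key.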

Copyright (c) 2025 Robert Piosik. MIT License.

Alternative AI tools for gemini-coder

Similar Open Source Tools

refact-lsp

Refact Agent is a small executable written in Rust as part of the Refact Agent project. It lives inside your IDE to keep AST and VecDB indexes up to date, supporting connection graphs between definitions and usages in popular programming languages. It functions as an LSP server, offering code completion, chat functionality, and integration with various tools like browsers, databases, and debuggers. Users can interact with it through a Text UI in the command line.

chunkr

Chunkr is an open-source document intelligence API that provides a production-ready service for document layout analysis, OCR, and semantic chunking. It allows users to convert PDFs, PPTs, Word docs, and images into RAG/LLM-ready chunks. The API offers features such as layout analysis, OCR with bounding boxes, structured HTML and markdown output, and VLM processing controls. Users can interact with Chunkr through a Python SDK, enabling them to upload documents, process them, and export results in various formats. The tool also supports self-hosted deployment options using Docker Compose or Kubernetes, with configurations for different AI models like OpenAI, Google AI Studio, and OpenRouter. Chunkr is dual-licensed under the GNU Affero General Public License v3.0 (AGPL-3.0) and a commercial license, providing flexibility for different usage scenarios.

crawl4ai

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

DesktopCommanderMCP

Desktop Commander MCP is a server that allows the Claude desktop app to execute long-running terminal commands on your computer and manage processes through Model Context Protocol (MCP). It is built on top of MCP Filesystem Server to provide additional search and replace file editing capabilities. The tool enables users to execute terminal commands with output streaming, manage processes, perform full filesystem operations, and edit code with surgical text replacements or full file rewrites. It also supports vscode-ripgrep based recursive code or text search in folders.

better-chatbot

Better Chatbot is an open-source AI chatbot designed for individuals and teams, inspired by various AI models. It integrates major LLMs, offers powerful tools like MCP protocol and data visualization, supports automation with custom agents and visual workflows, enables collaboration by sharing configurations, provides a voice assistant feature, and ensures an intuitive user experience. The platform is built with Vercel AI SDK and Next.js, combining leading AI services into one platform for enhanced chatbot capabilities.

AiR

AiR is an AI tool built entirely in Rust that delivers blazing speed and efficiency. It features accurate translation and seamless text rewriting to supercharge productivity. AiR is designed to assist non-native speakers by automatically fixing errors and polishing language to sound like a native speaker. The tool is under heavy development with more features on the horizon.

openai-kotlin

OpenAI Kotlin API client is a Kotlin client for OpenAI's API with multiplatform and coroutines capabilities. It allows users to interact with OpenAI's API using Kotlin programming language. The client supports various features such as models, chat, images, embeddings, files, fine-tuning, moderations, audio, assistants, threads, messages, and runs. It also provides guides on getting started, chat & function call, file source guide, and assistants. Sample apps are available for reference, and troubleshooting guides are provided for common issues. The project is open-source and licensed under the MIT license, allowing contributions from the community.

bytebot

Bytebot is an open-source AI desktop agent that provides a virtual employee with its own computer to complete tasks for users. It can use various applications, download and organize files, log into websites, process documents, and perform complex multi-step workflows. By giving AI access to a complete desktop environment, Bytebot unlocks capabilities not possible with browser-only agents or API integrations, enabling complete task autonomy, document processing, and usage of real applications.

LEANN

LEANN is an innovative vector database that democratizes personal AI, transforming your laptop into a powerful RAG system that can index and search through millions of documents using 97% less storage than traditional solutions without accuracy loss. It achieves this through graph-based selective recomputation and high-degree preserving pruning, computing embeddings on-demand instead of storing them all. LEANN allows semantic search of file system, emails, browser history, chat history, codebase, or external knowledge bases on your laptop with zero cloud costs and complete privacy. It is a drop-in semantic search MCP service fully compatible with Claude Code, enabling intelligent retrieval without changing your workflow.

VibeSurf

VibeSurf is an open-source AI agentic browser that combines workflow automation with intelligent AI agents, offering faster, cheaper, and smarter browser automation. It allows users to create revolutionary browser workflows, run multiple AI agents in parallel, perform intelligent AI automation tasks, maintain privacy with local LLM support, and seamlessly integrate as a Chrome extension. Users can save on token costs, achieve efficiency gains, and enjoy deterministic workflows for consistent and accurate results. VibeSurf also provides a Docker image for easy deployment and offers pre-built workflow templates for common tasks.

browser-use

Browser Use is a tool designed to make websites accessible for AI agents. It provides an easy way to connect AI agents with the browser, enabling users to perform tasks such as extracting vision and HTML elements, managing multiple tabs, and executing custom actions. The tool supports various language models and allows users to parallelize multiple agents for efficient processing. With features like self-correction and the ability to register custom actions, Browser Use offers a versatile solution for interacting with web content using AI technology.

Groqqle

Groqqle 2.1 is a revolutionary, free AI web search and API that instantly returns ORIGINAL content derived from source articles, websites, videos, and even foreign language sources, for ANY target market of ANY reading comprehension level! It combines the power of large language models with advanced web and news search capabilities, offering a user-friendly web interface, a robust API, and now a powerful Groqqle_web_tool for seamless integration into your projects. Developers can instantly incorporate Groqqle into their applications, providing a powerful tool for content generation, research, and analysis across various domains and languages.

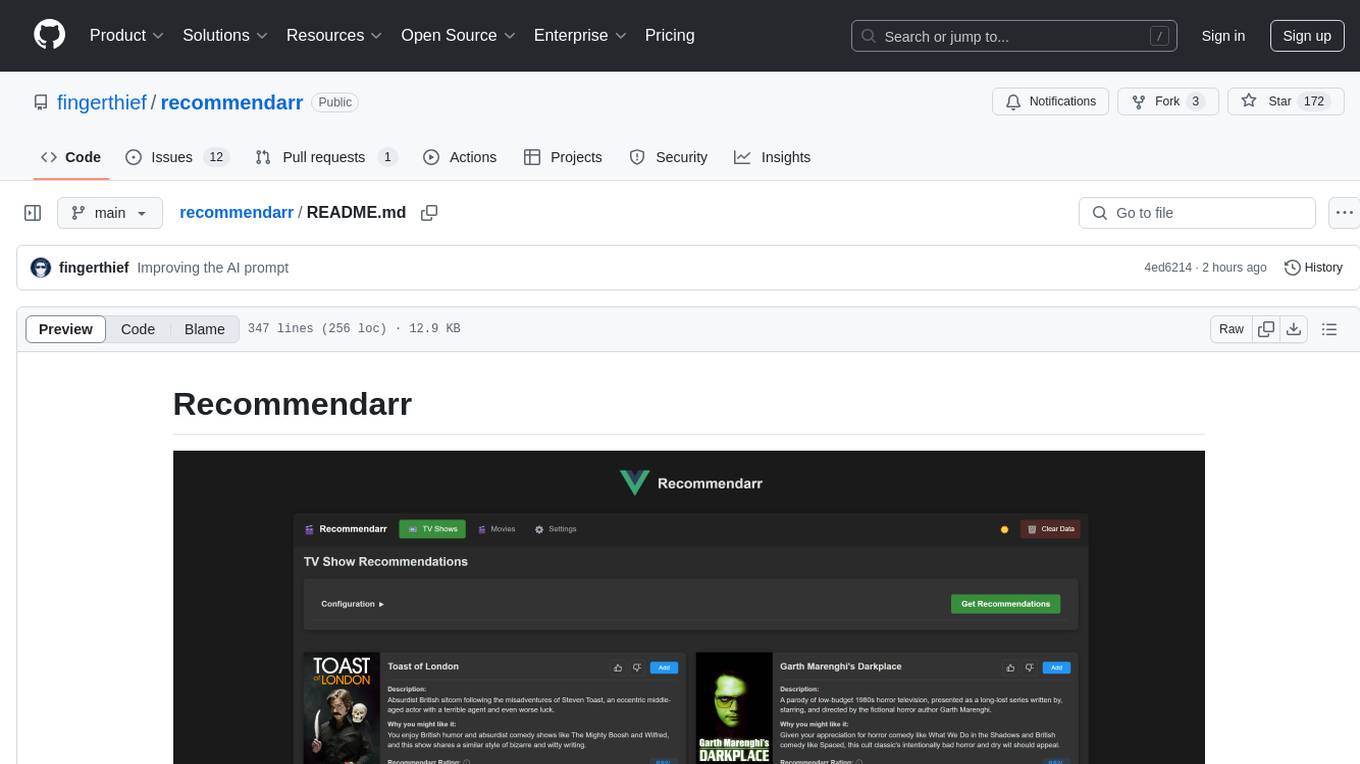

recommendarr

Recommendarr is a tool that generates personalized TV show and movie recommendations based on your Sonarr, Radarr, Plex, and Jellyfin libraries using AI. It offers AI-powered recommendations, media server integration, flexible AI support, watch history analysis, customization options, and dark/light mode toggle. Users can connect their media libraries and watch history services, configure AI service settings, and get personalized recommendations based on genre, language, and mood/vibe preferences. The tool works with any OpenAI-compatible API and offers various recommended models for different cost options and performance levels. It provides personalized suggestions, detailed information, filter options, watch history analysis, and one-click adding of recommended content to Sonarr/Radarr.

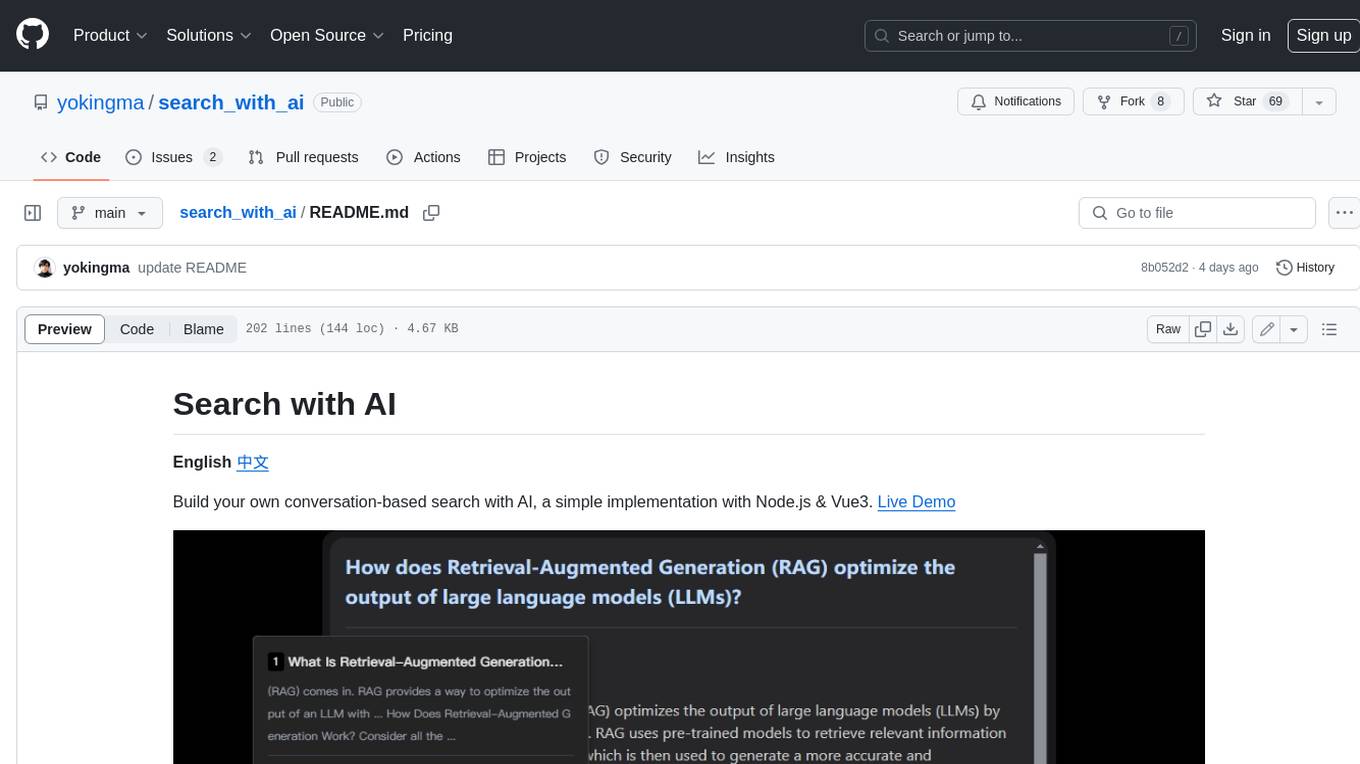

search_with_ai

Build your own conversation-based search with AI, a simple implementation with Node.js & Vue3. Features:

- Built-in support for LLM: OpenAI, Google, Lepton, Ollama (free)

- Built-in support for search engine: Bing, Sogou, Google, SearXNG (free)

- Customizable pretty UI interface

- Support dark mode

- Support mobile display

- Support local LLM with Ollama

- Support i18n

- Support Continue Q&A with contexts

g4f.dev

G4f.dev is the official documentation hub for GPT4Free, a free and convenient AI tool with endpoints that can be integrated directly into apps, scripts, and web browsers. The documentation provides clear overviews, quick examples, and deeper insights into the major features of GPT4Free, including text and image generation. Users can choose between Python and JavaScript for installation and setup, and can access various API endpoints, providers, models, and client options for different tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.