listen

Solana Swiss Army Knife

Stars: 884

Listen is a Solana Swiss-Knife toolkit for algorithmic trading, offering real-time transaction monitoring, multi-DEX swap execution, fast transactions with Jito MEV bundles, price tracking, token management utilities, and performance monitoring. It includes tools for grabbing data from unofficial APIs and works with the $arc rig framework for AI Agents to interact with the Solana blockchain. The repository provides miscellaneous tools for analysis and data retrieval, with the core functionality in the `src` directory.

README:

listen started sa Solana Swiss-Knife toolkit for algorithmic trading, its mission is to become the go-to framework for AI portfolio management agents

It powers the Listen App, check it out to see what listen framework is capable of

graph TB

subgraph "Rig Agent Kit by Listen"

RAK[RIG Agent Kit]

RAK_MT[Multi-tenant Stream Manager]

RAK_WALLET[Delegated Wallet Manager]

RAK --> RAK_MT

RAK --> RAK_WALLET

end

subgraph "Listen Trading Engine"

LTE[Trading Engine]

ORDER_COL[Order Collector]

PIPE[Pipeline Executor]

EXEC[Order Executor]

ORDER_COL --> PIPE

PIPE --> EXEC

LTE --> ORDER_COL

end

subgraph "Listen Data Service"

LDS[Data Service]

SUB[Substreams Indexer]

DB[(Clickhouse OLAP)]

PRICE[Price Stream]

SUB -->|Index Solana Slots| DB

LDS --> PRICE

LDS --> DB

end

%% External Systems

MOBILE[Mobile App]

CHAIN((Blockchain))

PRIVY((Privy))

WALLET[(Solana/EVM Wallets)]

%% Connections

RAK -->|Tool Calls| CHAIN

RAK -->|Execute Trades| LTE

LDS -->|Pricing Updates| LTE

LDS -->|Enriched Data| MOBILE

MOBILE -->|User Intents| RAK

LTE -->|Sign & Send Tx| CHAIN

DB -->|Query Data| RAK

RAK_WALLET -->|Integration| PRIVY

PRIVY --> WALLET

- 🔍 Real-time transaction monitoring

- 💱 Multi-DEX swap execution (Pump.fun, Jupiter V6 API or Raydium)

- 🚀 Blazingly fast transactions thanks to Jito MEV bundles

- 📊 Price tracking and metrics

- 🧰 Token management utilities

- 📈 Performance monitoring with Prometheus integration

And more!

It works plug'n'play with $arc rig framework framework allowing AI Agents interact with the Solana blockchain, see example: src/agent.rs and the output image.

For complete rundown of features, check out the CLI output of cargo run or the

documentation.

To play around with listen-rs, you can use the UI

Fill in the .env.example and ./dashboard/.env.example, copy over to .env and ./dashboard/.env.example, then

docker compose up

You can then access the dashboard over http://localhost:4173

[!WARNING] listen-rs is undergoing rapid iterations, some things might not work and there could be breaking changes

-

System Dependencies

- Rust (with nightly toolchain)

protocbuild-essentialpkg-configlibssl-dev

-

Configuration

- Copy

.env.exampleto.env - Set up

auth.jsonfor JITO authentication (optional, gRPC HTTP/2.0 searcher client) - Populate

fund.json

- Copy

Both keypairs are in solana-keygen format, array of 64 bytes, 32 bytes

private key and 32 bytes public key.

# Install dependencies

sudo apt install protoc build-essential pkg-config libssl-dev

# Build

cargo build --release

# Run services

./run-systemd-services.shcargo run -- listen \

--worker-count [COUNT] \

--buffer-size [SIZE]cargo run -- swap \

--input-mint sol \

--output-mint EPjFWdd5AufqSSqeM2qN1xzybapC8G4wEGGkZwyTDt1v \

--amount 10000000[!WARNING] Default configuration is set for mainnet with small transactions. Ensure proper configuration for testnet usage and carefully review code before execution.

Listen includes built-in metrics exposed at localhost:3030/metrics. To visualize:

- Start Prometheus:

prometheus --config=prometheus.yml- Access metrics at

localhost:3030/metrics

Grafana should show something like this

The stackcollapse.pl can be installed through

gh repo clone brendangregg/FlameGraph && \

sudo cp FlameGraph/stackcollapse.pl /usr/local/bin && \

sudo cp FlameGraph/flamegraph.pl /usr/local/binProfile swap performance using DTrace to produce a flamegraph:

./hack/profile-swap.shFor Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for listen

Similar Open Source Tools

listen

Listen is a Solana Swiss-Knife toolkit for algorithmic trading, offering real-time transaction monitoring, multi-DEX swap execution, fast transactions with Jito MEV bundles, price tracking, token management utilities, and performance monitoring. It includes tools for grabbing data from unofficial APIs and works with the $arc rig framework for AI Agents to interact with the Solana blockchain. The repository provides miscellaneous tools for analysis and data retrieval, with the core functionality in the `src` directory.

QA-Pilot

QA-Pilot is an interactive chat project that leverages online/local LLM for rapid understanding and navigation of GitHub code repository. It allows users to chat with GitHub public repositories using a git clone approach, store chat history, configure settings easily, manage multiple chat sessions, and quickly locate sessions with a search function. The tool integrates with `codegraph` to view Python files and supports various LLM models such as ollama, openai, mistralai, and localai. The project is continuously updated with new features and improvements, such as converting from `flask` to `fastapi`, adding `localai` API support, and upgrading dependencies like `langchain` and `Streamlit` to enhance performance.

k8sgpt

K8sGPT is a tool for scanning your Kubernetes clusters, diagnosing, and triaging issues in simple English. It has SRE experience codified into its analyzers and helps to pull out the most relevant information to enrich it with AI.

forge

Forge is a powerful open-source tool for building modern web applications. It provides a simple and intuitive interface for developers to quickly scaffold and deploy projects. With Forge, you can easily create custom components, manage dependencies, and streamline your development workflow. Whether you are a beginner or an experienced developer, Forge offers a flexible and efficient solution for your web development needs.

ChatSim

ChatSim is a tool designed for editable scene simulation for autonomous driving via LLM-Agent collaboration. It provides functionalities for setting up the environment, installing necessary dependencies like McNeRF and Inpainting tools, and preparing data for simulation. Users can train models, simulate scenes, and track trajectories for smoother and more realistic results. The tool integrates with Blender software and offers options for training McNeRF models and McLight's skydome estimation network. It also includes a trajectory tracking module for improved trajectory tracking. ChatSim aims to facilitate the simulation of autonomous driving scenarios with collaborative LLM-Agents.

shell-pilot

Shell-pilot is a simple, lightweight shell script designed to interact with various AI models such as OpenAI, Ollama, Mistral AI, LocalAI, ZhipuAI, Anthropic, Moonshot, and Novita AI from the terminal. It enhances intelligent system management without any dependencies, offering features like setting up a local LLM repository, using official models and APIs, viewing history and session persistence, passing input prompts with pipe/redirector, listing available models, setting request parameters, generating and running commands in the terminal, easy configuration setup, system package version checking, and managing system aliases.

pentagi

PentAGI is an innovative tool for automated security testing that leverages cutting-edge artificial intelligence technologies. It is designed for information security professionals, researchers, and enthusiasts who need a powerful and flexible solution for conducting penetration tests. The tool provides secure and isolated operations in a sandboxed Docker environment, fully autonomous AI-powered agent for penetration testing steps, a suite of 20+ professional security tools, smart memory system for storing research results, web intelligence for gathering information, integration with external search systems, team delegation system, comprehensive monitoring and reporting, modern interface, API integration, persistent storage, scalable architecture, self-hosted solution, flexible authentication, and quick deployment through Docker Compose.

aira-dojo

aira-dojo is a scalable and customizable framework for AI research agents, designed to accelerate hill-climbing on research capabilities toward a fully automated AI research scientist. The framework provides a general abstraction for tasks and agents, implements the MLE-bench task, and includes state-of-the-art agents. It features an isolated code execution environment that integrates smoothly with job schedulers like Slurm, enabling large-scale experiments and rapid iteration across a portfolio of tasks and solvers.

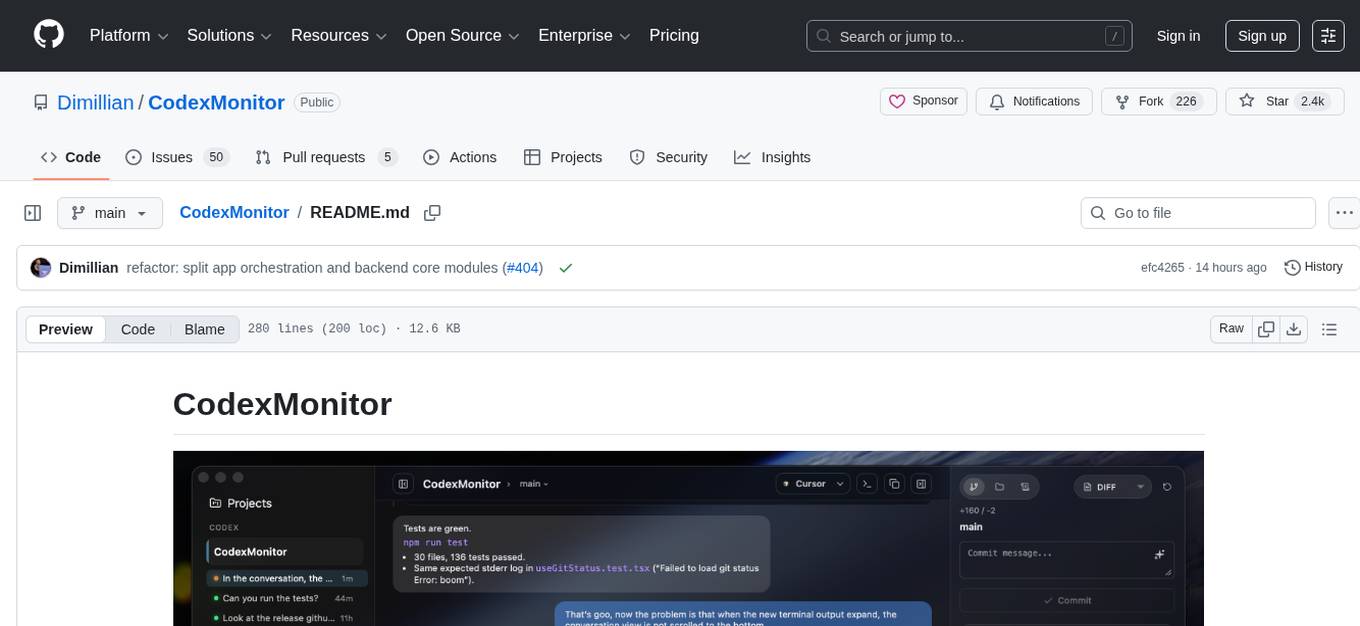

CodexMonitor

CodexMonitor is a Tauri app designed for managing multiple Codex agents in local workspaces. It offers features such as workspace and thread management, composer and agent controls, Git and GitHub integration, file and prompt handling, as well as a user-friendly UI and experience. The tool requires Node.js, Rust toolchain, CMake, LLVM/Clang, Codex CLI, Git CLI, and optionally GitHub CLI. It supports iOS with Tailscale setup, and provides instructions for iOS support, prerequisites, simulator usage, USB device deployment, and release builds. The project structure includes frontend and backend components, with persistent data storage, settings, and feature configurations. Tauri IPC surface enables various functionalities like settings management, workspace operations, thread handling, Git/GitHub interactions, prompts management, terminal/dictation/notifications, and remote backend helpers.

agent-device

CLI tool for controlling iOS and Android devices for AI agents, with core commands like open, back, home, press, and more. It supports minimal dependencies, TypeScript execution on Node 22+, and is in early development. The tool allows for automation flows, session management, semantic finding, assertions, replay updates, and settings helpers for simulators. It also includes backends for iOS snapshots, app resolution, iOS-specific notes, testing, and building. Contributions are welcome, and the project is maintained by Callstack, a group of React and React Native enthusiasts.

olah

Olah is a self-hosted lightweight Huggingface mirror service that implements mirroring feature for Huggingface resources at file block level, enhancing download speeds and saving bandwidth. It offers cache control policies and allows administrators to configure accessible repositories. Users can install Olah with pip or from source, set up the mirror site, and download models and datasets using huggingface-cli. Olah provides additional configurations through a configuration file for basic setup and accessibility restrictions. Future work includes implementing an administrator and user system, OOS backend support, and mirror update schedule task. Olah is released under the MIT License.

trickPrompt-engine

This repository contains a vulnerability mining engine based on GPT technology. The engine is designed to identify logic vulnerabilities in code by utilizing task-driven prompts. It does not require prior knowledge or fine-tuning and focuses on prompt design rather than model design. The tool is effective in real-world projects and should not be used for academic vulnerability testing. It supports scanning projects in various languages, with current support for Solidity. The engine is configured through prompts and environment settings, enabling users to scan for vulnerabilities in their codebase. Future updates aim to optimize code structure, add more language support, and enhance usability through command line mode. The tool has received a significant audit bounty of $50,000+ as of May 2024.

llama.vim

llama.vim is a plugin that provides local LLM-assisted text completion for Vim users. It offers features such as auto-suggest on cursor movement, manual suggestion toggling, suggestion acceptance with Tab and Shift+Tab, control over text generation time, context configuration, ring context with chunks from open and edited files, and performance stats display. The plugin requires a llama.cpp server instance to be running and supports FIM-compatible models. It aims to be simple, lightweight, and provide high-quality and performant local FIM completions even on consumer-grade hardware.

holon

Holon is a tool that runs AI coding agents headlessly to automate the process of turning issues into PR-ready patches and summaries. It provides a sandboxed execution environment with standardized artifacts, allowing for deterministic and repeatable runs. Users can easily create or update PRs, manage state persistence, and customize agent bundles. Holon can be used locally or in CI environments, offering seamless integration with GitHub Actions.

cheating-based-prompt-engine

This is a vulnerability mining engine purely based on GPT, requiring no prior knowledge base, no fine-tuning, yet its effectiveness can overwhelmingly surpass most of the current related research. The core idea revolves around being task-driven, not question-driven, driven by prompts, not by code, and focused on prompt design, not model design. The essence is encapsulated in one word: deception. It is a type of code understanding logic vulnerability mining that fully stimulates the capabilities of GPT, suitable for real actual projects.

chat-ui

A chat interface using open source models, eg OpenAssistant or Llama. It is a SvelteKit app and it powers the HuggingChat app on hf.co/chat.

For similar tasks

listen

Listen is a Solana Swiss-Knife toolkit for algorithmic trading, offering real-time transaction monitoring, multi-DEX swap execution, fast transactions with Jito MEV bundles, price tracking, token management utilities, and performance monitoring. It includes tools for grabbing data from unofficial APIs and works with the $arc rig framework for AI Agents to interact with the Solana blockchain. The repository provides miscellaneous tools for analysis and data retrieval, with the core functionality in the `src` directory.

SunoApi

SunoAPI is an unofficial client for Suno AI, built on Python and Streamlit. It supports functions like generating music and obtaining music information. Users can set up multiple account information to be saved for use. The tool also features built-in maintenance and activation functions for tokens, eliminating concerns about token expiration. It supports multiple languages and allows users to upload pictures for generating songs based on image content analysis.

PSAI

PSAI is a PowerShell module that empowers scripts with the intelligence of OpenAI, bridging the gap between PowerShell and AI. It enables seamless integration for tasks like file searches and data analysis, revolutionizing automation possibilities with just a few lines of code. The module supports the latest OpenAI API changes, offering features like improved file search, vector store objects, token usage control, message limits, tool choice parameter, custom conversation histories, and model configuration parameters.

aiges

AIGES is a core component of the Athena Serving Framework, designed as a universal encapsulation tool for AI developers to deploy AI algorithm models and engines quickly. By integrating AIGES, you can deploy AI algorithm models and engines rapidly and host them on the Athena Serving Framework, utilizing supporting auxiliary systems for networking, distribution strategies, data processing, etc. The Athena Serving Framework aims to accelerate the cloud service of AI algorithm models and engines, providing multiple guarantees for cloud service stability through cloud-native architecture. You can efficiently and securely deploy, upgrade, scale, operate, and monitor models and engines without focusing on underlying infrastructure and service-related development, governance, and operations.

holoinsight

HoloInsight is a cloud-native observability platform that provides low-cost and high-performance monitoring services for cloud-native applications. It offers deep insights through real-time log analysis and AI integration. The platform is designed to help users gain a comprehensive understanding of their applications' performance and behavior in the cloud environment. HoloInsight is easy to deploy using Docker and Kubernetes, making it a versatile tool for monitoring and optimizing cloud-native applications. With a focus on scalability and efficiency, HoloInsight is suitable for organizations looking to enhance their observability and monitoring capabilities in the cloud.

awesome-AIOps

awesome-AIOps is a curated list of academic researches and industrial materials related to Artificial Intelligence for IT Operations (AIOps). It includes resources such as competitions, white papers, blogs, tutorials, benchmarks, tools, companies, academic materials, talks, workshops, papers, and courses covering various aspects of AIOps like anomaly detection, root cause analysis, incident management, microservices, dependency tracing, and more.

OpenLLM

OpenLLM is a platform that helps developers run any open-source Large Language Models (LLMs) as OpenAI-compatible API endpoints, locally and in the cloud. It supports a wide range of LLMs, provides state-of-the-art serving and inference performance, and simplifies cloud deployment via BentoML. Users can fine-tune, serve, deploy, and monitor any LLMs with ease using OpenLLM. The platform also supports various quantization techniques, serving fine-tuning layers, and multiple runtime implementations. OpenLLM seamlessly integrates with other tools like OpenAI Compatible Endpoints, LlamaIndex, LangChain, and Transformers Agents. It offers deployment options through Docker containers, BentoCloud, and provides a community for collaboration and contributions.

laravel-slower

Laravel Slower is a powerful package designed for Laravel developers to optimize the performance of their applications by identifying slow database queries and providing AI-driven suggestions for optimal indexing strategies and performance improvements. It offers actionable insights for debugging and monitoring database interactions, enhancing efficiency and scalability.

For similar jobs

qlib

Qlib is an open-source, AI-oriented quantitative investment platform that supports diverse machine learning modeling paradigms, including supervised learning, market dynamics modeling, and reinforcement learning. It covers the entire chain of quantitative investment, from alpha seeking to order execution. The platform empowers researchers to explore ideas and implement productions using AI technologies in quantitative investment. Qlib collaboratively solves key challenges in quantitative investment by releasing state-of-the-art research works in various paradigms. It provides a full ML pipeline for data processing, model training, and back-testing, enabling users to perform tasks such as forecasting market patterns, adapting to market dynamics, and modeling continuous investment decisions.

jupyter-quant

Jupyter Quant is a dockerized environment tailored for quantitative research, equipped with essential tools like statsmodels, pymc, arch, py_vollib, zipline-reloaded, PyPortfolioOpt, numpy, pandas, sci-py, scikit-learn, yellowbricks, shap, optuna, ib_insync, Cython, Numba, bottleneck, numexpr, jedi language server, jupyterlab-lsp, black, isort, and more. It does not include conda/mamba and relies on pip for package installation. The image is optimized for size, includes common command line utilities, supports apt cache, and allows for the installation of additional packages. It is designed for ephemeral containers, ensuring data persistence, and offers volumes for data, configuration, and notebooks. Common tasks include setting up the server, managing configurations, setting passwords, listing installed packages, passing parameters to jupyter-lab, running commands in the container, building wheels outside the container, installing dotfiles and SSH keys, and creating SSH tunnels.

FinRobot

FinRobot is an open-source AI agent platform designed for financial applications using large language models. It transcends the scope of FinGPT, offering a comprehensive solution that integrates a diverse array of AI technologies. The platform's versatility and adaptability cater to the multifaceted needs of the financial industry. FinRobot's ecosystem is organized into four layers, including Financial AI Agents Layer, Financial LLMs Algorithms Layer, LLMOps and DataOps Layers, and Multi-source LLM Foundation Models Layer. The platform's agent workflow involves Perception, Brain, and Action modules to capture, process, and execute financial data and insights. The Smart Scheduler optimizes model diversity and selection for tasks, managed by components like Director Agent, Agent Registration, Agent Adaptor, and Task Manager. The tool provides a structured file organization with subfolders for agents, data sources, and functional modules, along with installation instructions and hands-on tutorials.

hands-on-lab-neo4j-and-vertex-ai

This repository provides a hands-on lab for learning about Neo4j and Google Cloud Vertex AI. It is intended for data scientists and data engineers to deploy Neo4j and Vertex AI in a Google Cloud account, work with real-world datasets, apply generative AI, build a chatbot over a knowledge graph, and use vector search and index functionality for semantic search. The lab focuses on analyzing quarterly filings of asset managers with $100m+ assets under management, exploring relationships using Neo4j Browser and Cypher query language, and discussing potential applications in capital markets such as algorithmic trading and securities master data management.

jupyter-quant

Jupyter Quant is a dockerized environment tailored for quantitative research, equipped with essential tools like statsmodels, pymc, arch, py_vollib, zipline-reloaded, PyPortfolioOpt, numpy, pandas, sci-py, scikit-learn, yellowbricks, shap, optuna, and more. It provides Interactive Broker connectivity via ib_async and includes major Python packages for statistical and time series analysis. The image is optimized for size, includes jedi language server, jupyterlab-lsp, and common command line utilities. Users can install new packages with sudo, leverage apt cache, and bring their own dot files and SSH keys. The tool is designed for ephemeral containers, ensuring data persistence and flexibility for quantitative analysis tasks.

Qbot

Qbot is an AI-oriented automated quantitative investment platform that supports diverse machine learning modeling paradigms, including supervised learning, market dynamics modeling, and reinforcement learning. It provides a full closed-loop process from data acquisition, strategy development, backtesting, simulation trading to live trading. The platform emphasizes AI strategies such as machine learning, reinforcement learning, and deep learning, combined with multi-factor models to enhance returns. Users with some Python knowledge and trading experience can easily utilize the platform to address trading pain points and gaps in the market.

FinMem-LLM-StockTrading

This repository contains the Python source code for FINMEM, a Performance-Enhanced Large Language Model Trading Agent with Layered Memory and Character Design. It introduces FinMem, a novel LLM-based agent framework devised for financial decision-making, encompassing three core modules: Profiling, Memory with layered processing, and Decision-making. FinMem's memory module aligns closely with the cognitive structure of human traders, offering robust interpretability and real-time tuning. The framework enables the agent to self-evolve its professional knowledge, react agilely to new investment cues, and continuously refine trading decisions in the volatile financial environment. It presents a cutting-edge LLM agent framework for automated trading, boosting cumulative investment returns.

LLMs-in-Finance

This repository focuses on the application of Large Language Models (LLMs) in the field of finance. It provides insights and knowledge about how LLMs can be utilized in various scenarios within the finance industry, particularly in generating AI agents. The repository aims to explore the potential of LLMs to enhance financial processes and decision-making through the use of advanced natural language processing techniques.