LLM-LieDetector

Code for the ICLR 2024 paper "How to catch an AI liar: Lie detection in black-box LLMs by asking unrelated questions"

Stars: 54

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. Rerunning the experiments requires access to GPUs (for the open-source models) and OpenAI API calls (for the OpenAI models). Precomputed results are stored in the repository for reproducibility.

README:


This repository contains code for reproducing the experiments in the paper "How to catch an AI liar: Lie detection in black-box LLMs by asking unrelated questions".

The main contributions of the paper are:

- a collection of Q/A datasets, prompts and fine-tuning datasets to generate lies with language models;

- lie detectors that ask a set of binary "elicitation questions" after a model is suspected to have lied, in order to diagnose whether the model actually lied.

For a quick tour of the essential functionalities of this repository, check tutorial.ipynb.

NB: this repository makes it possible, in principle, to reproduce all results. Doing so, however, would incur a large cost in OpenAI API calls and require access to GPUs (for the open-source models). For this reason, the repository includes all the results needed to train and test the lie detectors, in `data/processed_questions/*json`, `finetuning_dataset_validation_original_with_results.json` and a few other files in `results`.

- `data` contains the Q/A datasets which are used to generate lies. These are a set of 10 existing datasets plus one which we introduce. `data/raw_questions` contains the datasets in their original format, while `data/processed_questions` contains the datasets transformed to a common format (see `tutorial.ipynb` for details on how this is done).

- `lllm` contains the source code powering all the rest of the code. In particular, `questions_loader.py` contains the classes handling the data loading (one class per dataset), including methods for computing the lying rate and double-down rate.

- `lying_rate_double_down_rate_probes.ipynb` prompts GPT-3.5 to lie and evaluates its lying rate and double-down rate over the different datasets.

- `finetuning` contains datasets for fine-tuning Llama and GPT-3 to lie, together with code to do so and to evaluate the resulting lying rate and double-down rate. See `finetuning/README.md`.

- `experiments_alpaca_vicuna` contains code to prompt the open-source models Alpaca and Vicuna to lie and evaluate their lying and double-down rates. See `experiments_alpaca_vicuna/README.md` for more details.

- `lllm/questions_loader.py` contains source code to ask the set of "elicitation questions" in `data/probes.csv` after a model is suspected to have lied. This relies on the classes defined in `dialogue_classes.py`.

- `lying_rate_double_down_rate_probes.ipynb` asks these elicitation questions to GPT-3.5 after it has lied and stores the results in `data/processed_questions/*json` (one file per Q/A dataset).

- `classification_notebooks` contains most of the experiments on lie detection. In particular, `classification_notebooks/train_classifiers_on_prompted_GPT_3.5.ipynb` trains a set of detectors (for different groups of elicitation questions, and considering both binary and logprob responses to the elicitation questions) on the answers provided by GPT-3.5; these detectors are then tested in other experiments. The trained lie detectors are stored in the `results/trained_classifiers` folder.

- The generalization of the lie detectors is studied in multiple places:

  - `classification_notebooks` further contains generalization experiments involving GPT-3.5; see details in `classification_notebooks/README.md`. Some of the model answers to elicitation questions with different prompting modalities are stored in `results`.

  - Generalization experiments to other models are contained in `experiments_alpaca_vicuna`, `finetuning/llama` and `finetuning/davinci`. The former involves instruction-finetuned models, while the latter two involve models which are finetuned to lie by us. See the `README.md` file in those folders for more details. Those folders also contain code to ask the elicitation questions to the finetuned or open-source models.

- `lllm` contains additional utilities that are used throughout.

- `imgs` contains a few images present in the paper and a notebook to generate them.

- `other` contains utility notebooks to explore the model answers when instructed to lie and to add and test elicitation questions.

To use this code, create a clean Python environment and then run

pip install .

To run experiments with the open-source models, you need access to a computing cluster with GPUs and to install the deepspeed_llama repository on that cluster. You'll need to change the source code of that repository to point to the cluster directory where the weights for the open-source models are stored. experiments_alpaca_vicuna and finetuning/llama contain a few *.sh example scripts for clusters using slurm.

There are also a few other things that need to be changed in lllm/llama_utils.py according to the paths of your cluster. Moreover, finetuning/llama/llama_ft_folder.json maps the different fine-tuning setups for Llama to a specific path on the cluster we used, so this needs to be changed too.

Finally, to run experiments on the OpenAI models, you'll need to store your OpenAI API key in a .env file in the root of this directory, with the format:

OPENAI_API_KEY=sk-<your key>

Running experiments with the OpenAI API will incur a monetary cost. Some of our experiments are extensive and, as such, the costs will be substantial. However, our results are already stored in this repository and, by default, most of our code will load them instead of querying the API. Of course, you can overwrite our results by specifying the corresponding argument to the various functions and methods.
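As a minimal sketch of the `.env` convention described above, the snippet below parses `KEY=value` lines using only the standard library and exposes `OPENAI_API_KEY` through the environment. The helper name `load_env_file` is hypothetical and not part of this repository, and the key shown is a placeholder; the repository's own code may load the file differently.

```python
import os
import tempfile
from pathlib import Path


def load_env_file(path):
    """Parse simple KEY=value lines from a .env file into a dict.

    Hypothetical helper for illustration only. Blank lines and lines
    starting with '#' are skipped; surrounding quotes are removed.
    """
    values = {}
    for raw_line in Path(path).read_text().splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


# Demonstration with a throwaway file and a placeholder (not a real key).
with tempfile.TemporaryDirectory() as tmp_dir:
    env_path = Path(tmp_dir) / ".env"
    env_path.write_text("# OpenAI credentials\nOPENAI_API_KEY=sk-placeholder\n")
    env_values = load_env_file(env_path)

# Expose the key to code that reads it from the environment,
# without overwriting a value that is already set.
os.environ.setdefault("OPENAI_API_KEY", env_values["OPENAI_API_KEY"])
```

In a real checkout, the path passed to the helper would be the `.env` file in the root of the repository.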

Q: Which probes should I be using for binary responses vs. logprobs?

A: The same probing questions are used in both cases, but the trained classifiers have different coefficients.

Q: For the classifier predictions, what do 1/0 correspond to?

A: 1 corresponds to truth while 0 corresponds to lie.

Q: For the logprob classifier, paper Section 5.2 says the inputs are the “difference in logprobabilities assigned to yes/no”. Does this mean logprob(yes) - logprob(no) or logprob(no) - logprob(yes)?

A: logprob(yes) - logprob(no)
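The answers above can be illustrated with a small sketch. It builds logprob-difference features as logprob(yes) - logprob(no) and maps a prediction to the 1 = truth, 0 = lie convention. The logistic-regression-style scoring, the coefficient values and the function names are assumptions made for illustration; the trained detectors stored in the repository should be used for real experiments.

```python
import math

# Label convention stated in the FAQ: 1 is truth, 0 is lie.
LABELS = {1: "truth", 0: "lie"}


def logprob_difference_features(logprob_yes, logprob_no):
    """One feature per elicitation question: logprob(yes) - logprob(no)."""
    return [ly - ln for ly, ln in zip(logprob_yes, logprob_no)]


def predict(features, coefficients, intercept):
    """Hypothetical linear classifier with a logistic link (illustrative only)."""
    score = intercept + sum(c * f for c, f in zip(coefficients, features))
    p_truth = 1.0 / (1.0 + math.exp(-score))
    return 1 if p_truth >= 0.5 else 0


# Made-up logprobs for three elicitation questions.
logprob_yes = [-0.1, -2.3, -0.7]
logprob_no = [-2.4, -0.1, -0.7]
features = logprob_difference_features(logprob_yes, logprob_no)

# Made-up coefficients; the binary and logprob detectors each have their own.
coefficients = [1.0, -0.5, 0.2]
intercept = -0.5

prediction = predict(features, coefficients, intercept)
print(prediction, LABELS[prediction])
```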

Q: How are logprob(yes) and logprob(no) computed?

A: We look at the first position in the model response and compute the log probability of the "yes" and "no" tokens (and synonyms) among the top 5 most plausible tokens (as those are what you get from the OpenAI API). If no "yes" or "no" token is among the top 5 most plausible tokens, the log probability is upper bounded by considering the probability of the other tokens (see the corresponding function in the source code).
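The procedure described in this answer can be sketched as follows. This is not the repository's function: the synonym sets, the function name `group_logprob` and the exact form of the upper bound (the probability mass left over after the top 5 tokens) are assumptions chosen to match the description.

```python
import math

# Hypothetical synonym sets; the repository's actual token lists may differ.
YES_TOKENS = {"yes", "sure", "absolutely"}
NO_TOKENS = {"no", "never", "nope"}


def group_logprob(top_logprobs, token_set):
    """Log probability of a token group at the first response position.

    top_logprobs maps each of the top-k tokens to its log probability.
    If tokens from the group are present, their probabilities are summed.
    Otherwise an upper bound is returned: absent tokens can share at most
    the probability mass not taken by the listed tokens (an assumption
    about how the bound is formed).
    """
    matched = [
        math.exp(logprob)
        for token, logprob in top_logprobs.items()
        if token.strip().lower() in token_set
    ]
    if matched:
        return math.log(sum(matched))
    remaining = 1.0 - sum(math.exp(logprob) for logprob in top_logprobs.values())
    return math.log(max(remaining, 1e-10))  # floor avoids log(0)


# Case 1: both groups appear among the top 5 tokens.
top5 = {
    " Yes": math.log(0.7),
    "yes": math.log(0.1),
    " No": math.log(0.1),
    " I": math.log(0.05),
    " The": math.log(0.02),
}
difference = group_logprob(top5, YES_TOKENS) - group_logprob(top5, NO_TOKENS)

# Case 2: no "no"-like token in the top 5, so only an upper bound is available.
top5_without_no = {
    " Yes": math.log(0.9),
    " I": math.log(0.04),
    " The": math.log(0.03),
    " It": math.log(0.01),
    " Well": math.log(0.01),
}
no_upper_bound = group_logprob(top5_without_no, NO_TOKENS)
```

In the first case the feature is log(0.8) - log(0.1); in the second, logprob(no) is bounded by the log of the leftover mass, 0.01.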

Q: Where are the generated lies?

A: You can find GPT-3.5 generated lies in the false_statement column of each Q/A dataset. Those do not exactly correspond to the answers given by GPT-3.5 when it was prompted to answer the elicitation questions, as the answers were resampled with T=0.7, but they are close.

Q: How to get the indices that correspond to the different elicitation question groups?

A:

    import numpy as np
    import pandas as pd

    # load the full list of elicitation questions ("probes")
    probes = pd.read_csv("../../data/probes.csv")["probe"].tolist()

    # load the indices of each elicitation question group
    no_lie_indices = np.load("../../results/probes_groups/no_lie_indices.npy")
    lie_indices = np.load("../../results/probes_groups/lie_indices.npy")
    knowable_indices = np.load("../../results/probes_groups/knowable_indices.npy")

    # union of the three groups
    subsets_union_indices = np.concatenate([no_lie_indices, lie_indices, knowable_indices])

These indices index both the relevant probing questions, as well as the precomputed logprobs.
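To make the last sentence concrete, here is a toy sketch of how one group's indices select both the questions and the matching logprob columns. All values are made up, plain Python lists stand in for the CSV column and the `.npy` arrays, and the layout of one row per sample and one column per probe is an assumption.

```python
# Toy stand-ins for the probe list and one saved index group (made-up values).
probes = [f"probe {i}" for i in range(6)]
lie_indices = [0, 2]

# Assumed layout: one row per sample, one column per probe.
logprob_rows = [
    [0.5, -1.0, 2.0, 0.1, -0.3, 1.5],
    [-0.2, 0.4, -1.1, 0.9, 0.0, -2.0],
]


def select(values, indices):
    """Pick the entries of values at the given positions, in order."""
    return [values[i] for i in indices]


# The same indices pick the questions and the matching feature columns.
lie_probes = select(probes, lie_indices)
lie_features = [select(row, lie_indices) for row in logprob_rows]
```

With NumPy arrays the same selection is usually written with integer-array indexing, for example `logprobs[:, lie_indices]`.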

- While we worked on this project, we used the term `probes` instead of `elicitation questions`, which is the term now used in the paper. The previous term stuck in the repository, which still uses it.

- In the paper, we say that we use 48 elicitation questions. However, we originally defined 65 elicitation questions, some of which were later discarded because they did not satisfy some of the requirements we posed for elicitation questions (for instance, they did not instruct the model to answer yes/no). Most of the experiments had already been run with the full set of 65 probes, which are therefore still present in this repository. The lie detector experiments, however, do not use the discarded questions, as the probe groups (specified in `results/probes_groups`) do not involve all elicitation questions.

If you use this software please cite as follows:

```
@inproceedings{
pacchiardi2024how,
title={How to Catch an {AI} Liar: Lie Detection in Black-Box {LLM}s by Asking Unrelated Questions},
author={Lorenzo Pacchiardi and Alex James Chan and S{\"o}ren Mindermann and Ilan Moscovitz and Alexa Yue Pan and Yarin Gal and Owain Evans and Jan M. Brauner},
booktitle={The Twelfth International Conference on Learning Representations},
year={2024},
url={https://openreview.net/forum?id=567BjxgaTp}
}
```

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for LLM-LieDetector

Similar Open Source Tools

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. It requires access to GPUs and OpenAI API calls for running experiments with open-source models. Results are stored in the repository for reproducibility.

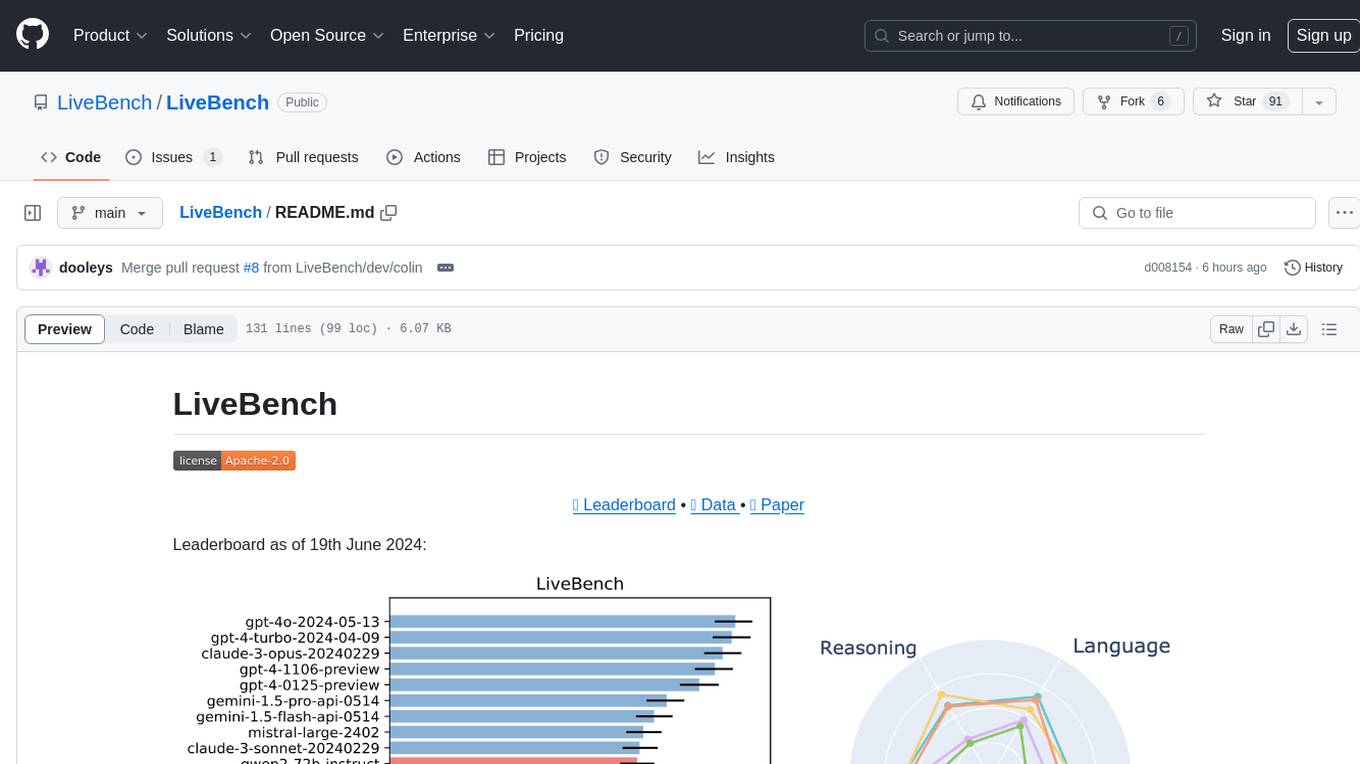

LiveBench

LiveBench is a benchmark tool designed for Language Model Models (LLMs) with a focus on limiting contamination through monthly new questions based on recent datasets, arXiv papers, news articles, and IMDb movie synopses. It provides verifiable, objective ground-truth answers for accurate scoring without an LLM judge. The tool offers 18 diverse tasks across 6 categories and promises to release more challenging tasks over time. LiveBench is built on FastChat's llm_judge module and incorporates code from LiveCodeBench and IFEval.

OlympicArena

OlympicArena is a comprehensive benchmark designed to evaluate advanced AI capabilities across various disciplines. It aims to push AI towards superintelligence by tackling complex challenges in science and beyond. The repository provides detailed data for different disciplines, allows users to run inference and evaluation locally, and offers a submission platform for testing models on the test set. Additionally, it includes an annotation interface and encourages users to cite their paper if they find the code or dataset helpful.

llms

The 'llms' repository is a comprehensive guide on Large Language Models (LLMs), covering topics such as language modeling, applications of LLMs, statistical language modeling, neural language models, conditional language models, evaluation methods, transformer-based language models, practical LLMs like GPT and BERT, prompt engineering, fine-tuning LLMs, retrieval augmented generation, AI agents, and LLMs for computer vision. The repository provides detailed explanations, examples, and tools for working with LLMs.

RAGMeUp

RAG Me Up is a generic framework that enables users to perform Retrieve and Generate (RAG) on their own dataset easily. It consists of a small server and UIs for communication. Best run on GPU with 16GB vRAM. Users can combine RAG with fine-tuning using LLaMa2Lang repository. The tool allows configuration for LLM, data, LLM parameters, prompt, and document splitting. Funding is sought to democratize AI and advance its applications.

MultiPL-E

MultiPL-E is a system for translating unit test-driven neural code generation benchmarks to new languages. It is part of the BigCode Code Generation LM Harness and allows for evaluating Code LLMs using various benchmarks. The tool supports multiple versions with improvements and new language additions, providing a scalable and polyglot approach to benchmarking neural code generation. Users can access a tutorial for direct usage and explore the dataset of translated prompts on the Hugging Face Hub.

RAGMeUp

RAG Me Up is a generic framework that enables users to perform Retrieve, Answer, Generate (RAG) on their own dataset easily. It consists of a small server and UIs for communication. The tool can run on CPU but is optimized for GPUs with at least 16GB of vRAM. Users can combine RAG with fine-tuning using the LLaMa2Lang repository. The tool provides a configurable RAG pipeline without the need for coding, utilizing indexing and inference steps to accurately answer user queries.

FigStep

FigStep is a black-box jailbreaking algorithm against large vision-language models (VLMs). It feeds harmful instructions through the image channel and uses benign text prompts to induce VLMs to output contents that violate common AI safety policies. The tool highlights the vulnerability of VLMs to jailbreaking attacks, emphasizing the need for safety alignments between visual and textual modalities.

rag-experiment-accelerator

The RAG Experiment Accelerator is a versatile tool that helps you conduct experiments and evaluations using Azure AI Search and RAG pattern. It offers a rich set of features, including experiment setup, integration with Azure AI Search, Azure Machine Learning, MLFlow, and Azure OpenAI, multiple document chunking strategies, query generation, multiple search types, sub-querying, re-ranking, metrics and evaluation, report generation, and multi-lingual support. The tool is designed to make it easier and faster to run experiments and evaluations of search queries and quality of response from OpenAI, and is useful for researchers, data scientists, and developers who want to test the performance of different search and OpenAI related hyperparameters, compare the effectiveness of various search strategies, fine-tune and optimize parameters, find the best combination of hyperparameters, and generate detailed reports and visualizations from experiment results.

eval-dev-quality

DevQualityEval is an evaluation benchmark and framework designed to compare and improve the quality of code generation of Language Model Models (LLMs). It provides developers with a standardized benchmark to enhance real-world usage in software development and offers users metrics and comparisons to assess the usefulness of LLMs for their tasks. The tool evaluates LLMs' performance in solving software development tasks and measures the quality of their results through a point-based system. Users can run specific tasks, such as test generation, across different programming languages to evaluate LLMs' language understanding and code generation capabilities.

curategpt

CurateGPT is a prototype web application and framework designed for general purpose AI-guided curation and curation-related operations over collections of objects. It provides functionalities for loading example data, building indexes, interacting with knowledge bases, and performing tasks such as chatting with a knowledge base, querying Pubmed, interacting with a GitHub issue tracker, term autocompletion, and all-by-all comparisons. The tool is built to work best with the OpenAI gpt-4 model and OpenAI ada-text-embedding-002 for embedding, but also supports alternative models through a plugin architecture.

LongRAG

This repository contains the code for LongRAG, a framework that enhances retrieval-augmented generation with long-context LLMs. LongRAG introduces a 'long retriever' and a 'long reader' to improve performance by using a 4K-token retrieval unit, offering insights into combining RAG with long-context LLMs. The repo provides instructions for installation, quick start, corpus preparation, long retriever, and long reader.

curate-gpt

CurateGPT is a prototype web application and framework for performing general purpose AI-guided curation and curation-related operations over collections of objects. It allows users to load JSON, YAML, or CSV data, build vector database indexes for ontologies, and interact with various data sources like GitHub, Google Drives, Google Sheets, and more. The tool supports ontology curation, knowledge base querying, term autocompletion, and all-by-all comparisons for objects in a collection.

ChatAFL

ChatAFL is a protocol fuzzer guided by large language models (LLMs) that extracts machine-readable grammar for protocol mutation, increases message diversity, and breaks coverage plateaus. It integrates with ProfuzzBench for stateful fuzzing of network protocols, providing smooth integration. The artifact includes modified versions of AFLNet and ProfuzzBench, source code for ChatAFL with proposed strategies, and scripts for setup, execution, analysis, and cleanup. Users can analyze data, construct plots, examine LLM-generated grammars, enriched seeds, and state-stall responses, and reproduce results with downsized experiments. Customization options include modifying fuzzers, tuning parameters, adding new subjects, troubleshooting, and working on GPT-4. Limitations include interaction with OpenAI's Large Language Models and a hard limit of 150,000 tokens per minute.

chronon

Chronon is a platform that simplifies and improves ML workflows by providing a central place to define features, ensuring point-in-time correctness for backfills, simplifying orchestration for batch and streaming pipelines, offering easy endpoints for feature fetching, and guaranteeing and measuring consistency. It offers benefits over other approaches by enabling the use of a broad set of data for training, handling large aggregations and other computationally intensive transformations, and abstracting away the infrastructure complexity of data plumbing.

strwythura

Strwythura is a library and tutorial focused on constructing a knowledge graph from unstructured data sources using state-of-the-art models for named entity recognition. It implements an enhanced GraphRAG approach and curates semantics for optimizing AI application outcomes within a specific domain. The tutorial emphasizes the use of sophisticated NLP pipelines based on spaCy, GLiNER, TextRank, and related libraries to provide better/faster/cheaper results with more control over the intentional arrangement of the knowledge graph. It leverages neurosymbolic AI methods and combines practices from natural language processing, graph data science, entity resolution, ontology pipeline, context engineering, and human-in-the-loop processes.

For similar tasks

llm-compression-intelligence

This repository presents the findings of the paper "Compression Represents Intelligence Linearly". The study reveals a strong linear correlation between the intelligence of LLMs, as measured by benchmark scores, and their ability to compress external text corpora. Compression efficiency, derived from raw text corpora, serves as a reliable evaluation metric that is linearly associated with model capabilities. The repository includes the compression corpora used in the paper, code for computing compression efficiency, and data collection and processing pipelines.

edsl

The Expected Parrot Domain-Specific Language (EDSL) package enables users to conduct computational social science and market research with AI. It facilitates designing surveys and experiments, simulating responses using large language models, and performing data labeling and other research tasks. EDSL includes built-in methods for analyzing, visualizing, and sharing research results. It is compatible with Python 3.9 - 3.11 and requires API keys for LLMs stored in a `.env` file.

fast-stable-diffusion

Fast-stable-diffusion is a project that offers notebooks for RunPod, Paperspace, and Colab Pro adaptations with AUTOMATIC1111 Webui and Dreambooth. It provides tools for running and implementing Dreambooth, a stable diffusion project. The project includes implementations by XavierXiao and is sponsored by Runpod, Paperspace, and Colab Pro.

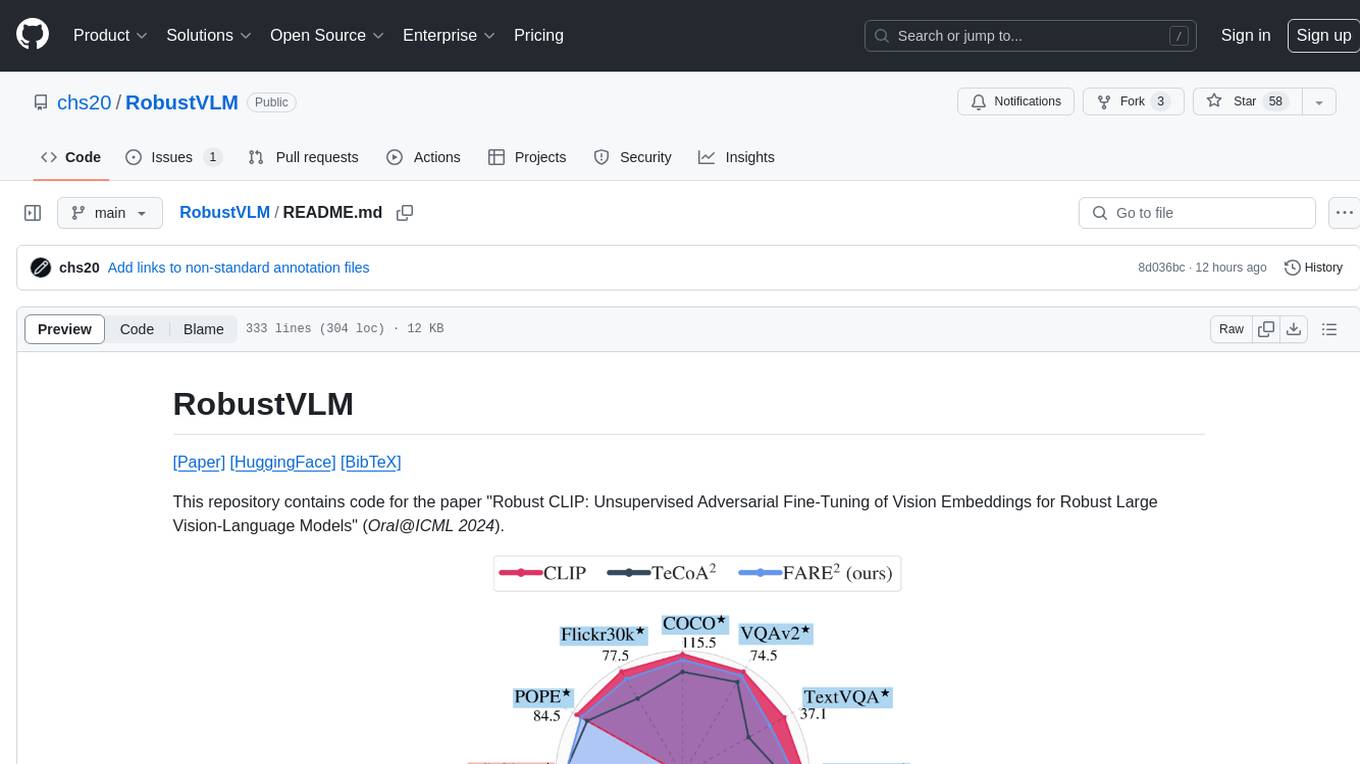

RobustVLM

This repository contains code for the paper 'Robust CLIP: Unsupervised Adversarial Fine-Tuning of Vision Embeddings for Robust Large Vision-Language Models'. It focuses on fine-tuning CLIP in an unsupervised manner to enhance its robustness against visual adversarial attacks. By replacing the vision encoder of large vision-language models with the fine-tuned CLIP models, it achieves state-of-the-art adversarial robustness on various vision-language tasks. The repository provides adversarially fine-tuned ViT-L/14 CLIP models and offers insights into zero-shot classification settings and clean accuracy improvements.

TempCompass

TempCompass is a benchmark designed to evaluate the temporal perception ability of Video LLMs. It encompasses a diverse set of temporal aspects and task formats to comprehensively assess the capability of Video LLMs in understanding videos. The benchmark includes conflicting videos to prevent models from relying on single-frame bias and language priors. Users can clone the repository, install required packages, prepare data, run inference using examples like Video-LLaVA and Gemini, and evaluate the performance of their models across different tasks such as Multi-Choice QA, Yes/No QA, Caption Matching, and Caption Generation.

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. It requires access to GPUs and OpenAI API calls for running experiments with open-source models. Results are stored in the repository for reproducibility.

bigcodebench

BigCodeBench is an easy-to-use benchmark for code generation with practical and challenging programming tasks. It aims to evaluate the true programming capabilities of large language models (LLMs) in a more realistic setting. The benchmark is designed for HumanEval-like function-level code generation tasks, but with much more complex instructions and diverse function calls. BigCodeBench focuses on the evaluation of LLM4Code with diverse function calls and complex instructions, providing precise evaluation & ranking and pre-generated samples to accelerate code intelligence research. It inherits the design of the EvalPlus framework but differs in terms of execution environment and test evaluation.

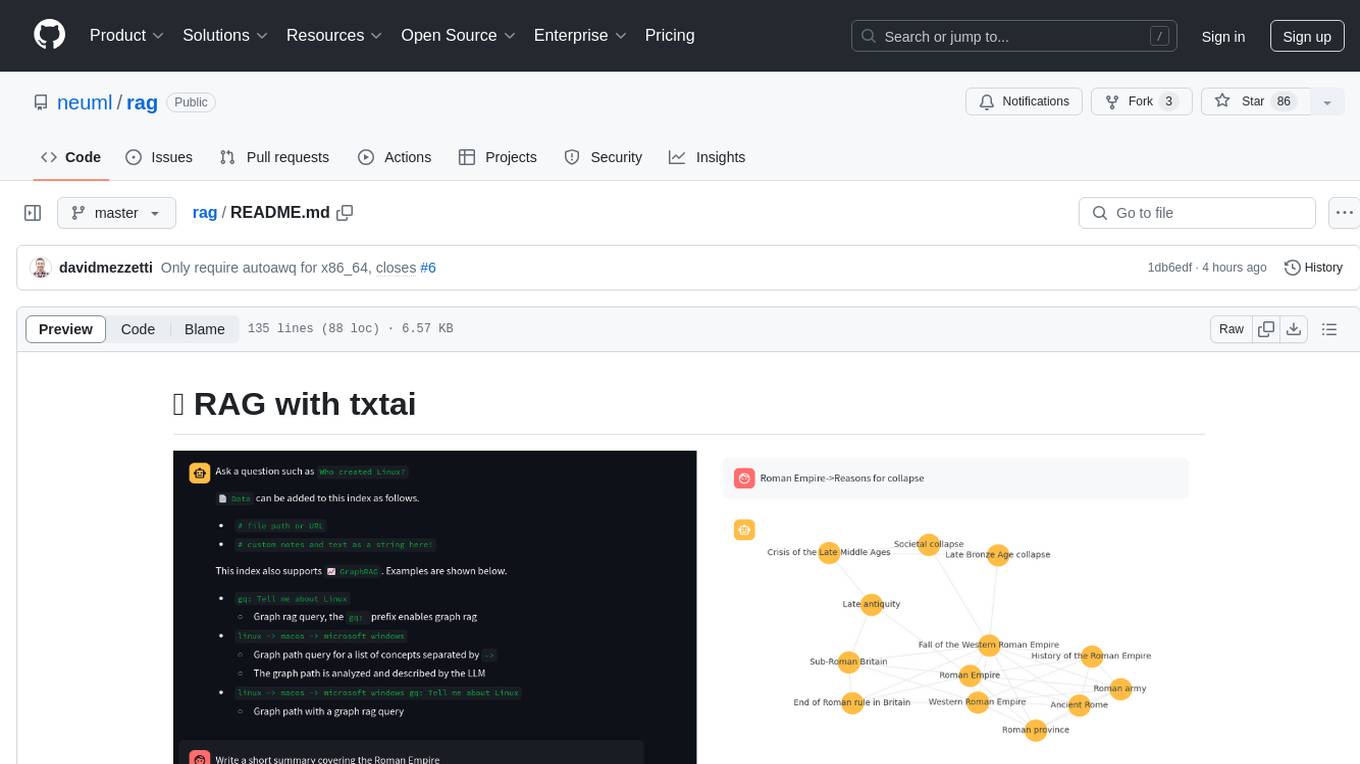

rag

RAG with txtai is a Retrieval Augmented Generation (RAG) Streamlit application that helps generate factually correct content by limiting the context in which a Large Language Model (LLM) can generate answers. It supports two categories of RAG: Vector RAG, where context is supplied via a vector search query, and Graph RAG, where context is supplied via a graph path traversal query. The application allows users to run queries, add data to the index, and configure various parameters to control its behavior.

For similar jobs

LLM-and-Law

This repository is dedicated to summarizing papers related to large language models with the field of law. It includes applications of large language models in legal tasks, legal agents, legal problems of large language models, data resources for large language models in law, law LLMs, and evaluation of large language models in the legal domain.

start-llms

This repository is a comprehensive guide for individuals looking to start and improve their skills in Large Language Models (LLMs) without an advanced background in the field. It provides free resources, online courses, books, articles, and practical tips to become an expert in machine learning. The guide covers topics such as terminology, transformers, prompting, retrieval augmented generation (RAG), and more. It also includes recommendations for podcasts, YouTube videos, and communities to stay updated with the latest news in AI and LLMs.

aiverify

AI Verify is an AI governance testing framework and software toolkit that validates the performance of AI systems against internationally recognised principles through standardised tests. It offers a new API Connector feature to bypass size limitations, test various AI frameworks, and configure connection settings for batch requests. The toolkit operates within an enterprise environment, conducting technical tests on common supervised learning models for tabular and image datasets. It does not define AI ethical standards or guarantee complete safety from risks or biases.

Awesome-LLM-Watermark

This repository contains a collection of research papers related to watermarking techniques for text and images, specifically focusing on large language models (LLMs). The papers cover various aspects of watermarking LLM-generated content, including robustness, statistical understanding, topic-based watermarks, quality-detection trade-offs, dual watermarks, watermark collision, and more. Researchers have explored different methods and frameworks for watermarking LLMs to protect intellectual property, detect machine-generated text, improve generation quality, and evaluate watermarking techniques. The repository serves as a valuable resource for those interested in the field of watermarking for LLMs.

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors rely on asking binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. It requires access to GPUs and OpenAI API calls for running experiments with open-source models. Results are stored in the repository for reproducibility.

graphrag

The GraphRAG project is a data pipeline and transformation suite designed to extract meaningful, structured data from unstructured text using LLMs. It enhances LLMs' ability to reason about private data. The repository provides guidance on using knowledge graph memory structures to enhance LLM outputs, with a warning about the potential costs of GraphRAG indexing. It offers contribution guidelines, development resources, and encourages prompt tuning for optimal results. The Responsible AI FAQ addresses GraphRAG's capabilities, intended uses, evaluation metrics, limitations, and operational factors for effective and responsible use.

langtest

LangTest is a comprehensive evaluation library for custom LLM and NLP models. It aims to deliver safe and effective language models by providing tools to test model quality, augment training data, and support popular NLP frameworks. LangTest comes with benchmark datasets to challenge and enhance language models, ensuring peak performance in various linguistic tasks. The tool offers more than 60 distinct types of tests with just one line of code, covering aspects like robustness, bias, representation, fairness, and accuracy. It supports testing LLMS for question answering, toxicity, clinical tests, legal support, factuality, sycophancy, and summarization.

Awesome-Jailbreak-on-LLMs

Awesome-Jailbreak-on-LLMs is a collection of state-of-the-art, novel, and exciting jailbreak methods on Large Language Models (LLMs). The repository contains papers, codes, datasets, evaluations, and analyses related to jailbreak attacks on LLMs. It serves as a comprehensive resource for researchers and practitioners interested in exploring various jailbreak techniques and defenses in the context of LLMs. Contributions such as additional jailbreak-related content, pull requests, and issue reports are welcome, and contributors are acknowledged. For any inquiries or issues, contact [email protected]. If you find this repository useful for your research or work, consider starring it to show appreciation.