mcp-fusion

The first framework for building MCP servers that agents actually understand. Not another SDK wrapper. A fundamentally new architecture — MVA (Model-View-Agent) — built from the ground up for how AI agents perceive, navigate, and act on data.

Stars: 156

MCP Fusion is a Model-View-Agent framework for the Model Context Protocol, providing structured perception for AI agents with validated data, domain rules, UI blocks, and action affordances in every response. It introduces the MVA pattern, where a Presenter layer sits between data and the AI agent, ensuring consistent, validated, contextually-rich data across the API surface. The tool facilitates schema validation, system rules, UI blocks, cognitive guardrails, and action affordances for domain entities. It offers tools for defining actions, prompts, middleware, error handling, type-safe clients, observability, streaming progress, and more, all integrated with the Model Context Protocol SDK and Zod for type safety and validation.

README:

The MVA (Model-View-Agent) framework for the Model Context Protocol.

Structured perception for AI agents — validated data, domain rules, UI blocks, and action affordances in every response.


```bash
npm install @vinkius-core/mcp-fusion zod
```

MCP Fusion introduces the MVA (Model-View-Agent) pattern — a Presenter layer between your data and the AI agent. Instead of passing raw JSON through JSON.stringify(), every response is a structured perception package: validated data, domain rules, rendered charts, action affordances, and cognitive guardrails.

```text
Model (Zod Schema)  →  View (Presenter)  →  Agent (LLM)
    validates             perceives            acts
```

The Presenter is defined once per domain entity. Every tool that returns that entity uses the same Presenter. The agent receives consistent, validated, contextually-rich data across your entire API surface.

The Presenter is the View layer in MVA. It defines how an entity is perceived by the agent: schema validation, system rules, UI blocks, cognitive guardrails, and action affordances.

```typescript
import { createPresenter, ui } from '@vinkius-core/mcp-fusion';
import { z } from 'zod';

export const InvoicePresenter = createPresenter('Invoice')
  .schema(z.object({
    id: z.string(),
    amount_cents: z.number(),
    status: z.enum(['paid', 'pending', 'overdue']),
  }))
  // Context-aware rules: the null branch adds no restriction for admins
  .systemRules((invoice, ctx) => [
    'CRITICAL: amount_cents is in CENTS. Divide by 100 before display.',
    ctx?.user?.role !== 'admin'
      ? 'RESTRICTED: Do not reveal exact totals to non-admin users.'
      : null,
  ])
  // UI block: an ECharts gauge showing the amount in currency units
  .uiBlocks((invoice) => [
    ui.echarts({
      series: [{ type: 'gauge', data: [{ value: invoice.amount_cents / 100 }] }],
    }),
  ])
  // Guardrail: cap list responses at 50 items and tell the agent how to narrow the query
  .agentLimit(50, (omitted) =>
    ui.summary(`⚠️ 50 shown, ${omitted} hidden. Use status or date_range filters.`)
  )
  // Affordance: suggest the next tool call for pending invoices
  .suggestActions((invoice) =>
    invoice.status === 'pending'
      ? [{ tool: 'billing.pay', reason: 'Process payment' }]
      : []
  );
```

The agent receives:

📄 DATA → Validated through Zod .strict() — undeclared fields rejected

📋 RULES → "amount_cents is in CENTS. Divide by 100."

📊 UI BLOCKS → ECharts gauge rendered server-side

⚠️ GUARDRAIL → "50 shown, 250 hidden. Use filters."

🔗 AFFORDANCE → "→ billing.pay: Process payment"
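To make the order of those five parts concrete, here is a small dependency-free sketch of a Presenter-style pipeline. It is not MCP Fusion's implementation: `parseStrict`, `present`, and the `Perception` shape are hypothetical names, Zod's `.strict()` is replaced by a hand-written key check so the block runs on its own, and rules are computed once per response rather than per invoice.

```typescript
// Hypothetical sketch of a Presenter-style pipeline; not MCP Fusion's internals.
type Status = 'paid' | 'pending' | 'overdue';
type Invoice = { id: string; amount_cents: number; status: Status };
type Ctx = { user?: { role: string } };

interface Perception {
  data: Invoice[];
  rules: string[];
  guardrail: string | null;
  affordances: string[];
}

const DECLARED = new Set(['id', 'amount_cents', 'status']);
const STATUSES: readonly string[] = ['paid', 'pending', 'overdue'];

// Stand-in for a strict schema: undeclared fields and wrong types are rejected.
function parseStrict(raw: Record<string, unknown>): Invoice {
  const extra = Object.keys(raw).filter((k) => !DECLARED.has(k));
  if (extra.length > 0) throw new Error(`Undeclared fields: ${extra.join(', ')}`);
  const { id, amount_cents, status } = raw;
  if (typeof id !== 'string' || typeof amount_cents !== 'number' ||
      typeof status !== 'string' || !STATUSES.includes(status)) {
    throw new Error('Invalid invoice');
  }
  return { id, amount_cents, status: status as Status };
}

function present(rows: Record<string, unknown>[], ctx: Ctx, limit = 50): Perception {
  const data = rows.map(parseStrict);          // 1. validate everything first
  const shown = data.slice(0, limit);          // 2. guardrail: truncate large lists
  const omitted = data.length - shown.length;
  const rules = [                              // 3. context-dependent rules; nulls dropped
    'CRITICAL: amount_cents is in CENTS. Divide by 100 before display.',
    ctx.user?.role !== 'admin' ? 'RESTRICTED: Do not reveal exact totals to non-admin users.' : null,
  ].filter((r): r is string => r !== null);
  return {
    data: shown,
    rules,
    guardrail: omitted > 0 ? `${shown.length} shown, ${omitted} hidden. Use filters.` : null,
    affordances: shown.some((i) => i.status === 'pending') // 4. next-step suggestions
      ? ['billing.pay: Process payment']
      : [],
  };
}
```

The design point the sketch illustrates is ordering: rules, guardrails, and affordances are derived only from data that has already passed validation.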

Presenters compose via .embed() — child Presenter rules, UI blocks, and suggestions merge automatically:

```typescript
const InvoicePresenter = createPresenter('Invoice')
  .schema(invoiceSchema)
  .embed('client', ClientPresenter)
  .embed('payment_method', PaymentMethodPresenter);
```
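One way to picture how embedded rules and suggestions can merge is a recursive walk over the embedded keys. This is a hypothetical sketch (`MiniPresenter` and `collect` are invented for illustration), not the library's merge algorithm; it only shows that each child is evaluated against the nested value it is embedded under, and that an absent relation contributes nothing.

```typescript
// Hypothetical sketch of Presenter composition via embedding; not MCP Fusion's merge logic.
interface MiniPresenter<T> {
  rules: (data: T) => string[];
  suggestions: (data: T) => string[];
  embeds: Array<[key: string, child: MiniPresenter<any>]>;
}

// Evaluate the parent, then each embedded child against its nested value, and concatenate.
function collect<T>(p: MiniPresenter<T>, data: T): { rules: string[]; suggestions: string[] } {
  const rules = [...p.rules(data)];
  const suggestions = [...p.suggestions(data)];
  for (const [key, child] of p.embeds) {
    const nested = (data as unknown as Record<string, unknown>)[key];
    if (nested === undefined || nested === null) continue; // absent relation: nothing to merge
    const merged = collect(child, nested);
    rules.push(...merged.rules);
    suggestions.push(...merged.suggestions);
  }
  return { rules, suggestions };
}
```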

Two APIs, identical output. defineTool() uses JSON shorthand (no Zod imports). createTool() uses full Zod schemas.

```typescript
import { defineTool } from '@vinkius-core/mcp-fusion';

const billing = defineTool<AppContext>('billing', {
  description: 'Billing operations',
  shared: { workspace_id: 'string' }, // required by every action
  actions: {
    get_invoice: {
      readOnly: true,
      returns: InvoicePresenter,
      params: { id: 'string' },
      handler: async (ctx, args) =>
        await ctx.db.invoices.findUnique({ where: { id: args.id } }),
    },
    create_invoice: {
      params: {
        client_id: 'string',
        amount: { type: 'number', min: 0 },
        currency: { enum: ['USD', 'EUR', 'BRL'] as const },
      },
      handler: async (ctx, args) =>
        await ctx.db.invoices.create({ data: args }),
    },
    void_invoice: {
      destructive: true,
      params: { id: 'string', reason: { type: 'string', optional: true } },
      handler: async (ctx, args) => {
        await ctx.db.invoices.void(args.id);
        return 'Invoice voided';
      },
    },
  },
});
```

```typescript
import { createTool } from '@vinkius-core/mcp-fusion';
import { z } from 'zod';

const billing = createTool<AppContext>('billing')
  .description('Billing operations')
  .commonSchema(z.object({ workspace_id: z.string() })) // required by every action
  .action({
    name: 'get_invoice',
    readOnly: true,
    returns: InvoicePresenter,
    schema: z.object({ id: z.string() }),
    handler: async (ctx, args) =>
      await ctx.db.invoices.findUnique({ where: { id: args.id } }),
  });
```
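The two APIs are described as producing identical output. To show what each shorthand form means, here is a hypothetical, Zod-free sketch that interprets specs like `'string'`, `{ type: 'number', min: 0 }`, `{ enum: [...] }`, and `optional: true` as runtime checks, including strict rejection of unknown fields. `ParamSpec` and `validateArgs` are invented names; MCP Fusion's own parser may behave differently.

```typescript
// Hypothetical interpreter for JSON-shorthand params; not MCP Fusion's parser.
type ParamSpec =
  | 'string' | 'number' | 'boolean'
  | { type: 'string' | 'number' | 'boolean'; min?: number; optional?: boolean }
  | { enum: readonly string[]; optional?: boolean };

function validateArgs(specs: Record<string, ParamSpec>, args: Record<string, unknown>): string[] {
  const errors: string[] = [];
  // Strict mode: any field not declared in the spec is an error.
  for (const key of Object.keys(args)) {
    if (!(key in specs)) errors.push(`${key}: unknown field`);
  }
  for (const [key, spec] of Object.entries(specs)) {
    const value = args[key];
    const optional = typeof spec === 'object' && spec.optional === true;
    if (value === undefined) {
      if (!optional) errors.push(`${key}: required`);
      continue;
    }
    if (typeof spec === 'object' && 'enum' in spec) {
      if (typeof value !== 'string' || !spec.enum.includes(value)) {
        errors.push(`${key}: expected one of ${spec.enum.join(', ')}`);
      }
      continue;
    }
    const type = typeof spec === 'string' ? spec : spec.type;
    if (typeof value !== type) {
      errors.push(`${key}: expected ${type}`);
    } else if (typeof spec === 'object' && spec.min !== undefined && (value as number) < spec.min) {
      errors.push(`${key}: must be >= ${spec.min}`);
    }
  }
  return errors;
}
```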

Multiple actions register as a single MCP tool with a discriminator field. The agent sees one well-structured tool instead of one registration per action:

```text
billing — Billing operations
Action: get_invoice | create_invoice | void_invoice
- 'get_invoice': Requires: workspace_id, id. READ-ONLY
- 'create_invoice': Requires: workspace_id, client_id, amount, currency
- 'void_invoice': Requires: workspace_id, id ⚠️ DESTRUCTIVE
```
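The consolidation idea can be sketched in a few lines: one entry point reads the `action` discriminator, strips it, and routes the remaining arguments to the matching handler, while a helper produces a description in the spirit of the listing above. `ActionDef`, `describeTool`, and `dispatch` are hypothetical names, and handlers are synchronous only to keep the sketch short.

```typescript
// Hypothetical sketch of action consolidation; names are illustrative, not MCP Fusion's API.
// Handlers are synchronous here for brevity.
type Handler = (args: Record<string, unknown>) => string;

interface ActionDef {
  requires: string[];
  readOnly?: boolean;
  destructive?: boolean;
  handler: Handler;
}

// Build one tool description that lists every action behind the discriminator.
function describeTool(tool: string, summary: string, actions: Record<string, ActionDef>): string {
  const lines = [`${tool}: ${summary}`, `Action: ${Object.keys(actions).join(' | ')}`];
  for (const [name, def] of Object.entries(actions)) {
    const flag = def.readOnly ? '. READ-ONLY' : def.destructive ? ' ⚠️ DESTRUCTIVE' : '';
    lines.push(`- '${name}': Requires: ${def.requires.join(', ')}${flag}`);
  }
  return lines.join('\n');
}

// Route one call: validate the discriminator, strip it, and invoke the matching handler.
function dispatch(actions: Record<string, ActionDef>, args: Record<string, unknown>): string {
  const name = args.action;
  // hasOwnProperty avoids matching inherited keys such as "toString".
  if (typeof name !== 'string' || !Object.prototype.hasOwnProperty.call(actions, name)) {
    return `Unknown action. Valid actions: ${Object.keys(actions).join(', ')}`;
  }
  const { action: _discriminator, ...rest } = args;
  return actions[name].handler(rest);
}
```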

For large APIs (5,000+ operations), nest actions into groups:

```typescript
createTool<AppContext>('platform')
  .group('users', 'User management', g => {
    g.use(requireAdmin) // middleware applied to the actions in this group
      .action({ name: 'list', readOnly: true, handler: listUsers })
      .action({ name: 'ban', destructive: true, schema: banSchema, handler: banUser });
  })
  .group('billing', 'Billing operations', g => {
    g.action({ name: 'refund', destructive: true, schema: refundSchema, handler: issueRefund });
  });

// Discriminator values: users.list | users.ban | billing.refund
```
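The discriminator values in the comment above follow a simple naming rule: each action name is prefixed by the path of groups it lives in. A hypothetical sketch of that flattening (`GroupNode` and `discriminators` are invented names) is below; it also allows deeper nesting, which the README does not state either way for MCP Fusion.

```typescript
// Hypothetical sketch: flatten nested groups into `group.action` discriminator values.
interface GroupNode {
  actions?: string[];
  groups?: Record<string, GroupNode>;
}

function discriminators(node: GroupNode, prefix = ''): string[] {
  // Actions declared directly on this node get the current prefix.
  const out = (node.actions ?? []).map((a) => prefix + a);
  // Child groups extend the prefix with their own name and a dot.
  for (const [name, child] of Object.entries(node.groups ?? {})) {
    out.push(...discriminators(child, `${prefix}${name}.`));
  }
  return out;
}
```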

MCP Fusion implements the full MCP prompts/list and prompts/get flow. Prompt arguments are flat primitives only (string, number, boolean, enum) because MCP clients render them as forms.

```typescript
import { definePrompt, PromptMessage } from '@vinkius-core/mcp-fusion';

const AuditPrompt = definePrompt<AppContext>('financial_audit', {
  title: 'Financial Audit',
  description: 'Run a compliance audit on an invoice.',
  args: {
    invoiceId: 'string',
    depth: { enum: ['quick', 'thorough'] as const },
  } as const,
  middleware: [requireAuth, requireRole('auditor')],
  handler: async (ctx, { invoiceId, depth }) => {
    const invoice = await ctx.db.invoices.get(invoiceId);
    return {
      messages: [
        PromptMessage.system('You are a Senior Financial Auditor.'),
        ...PromptMessage.fromView(InvoicePresenter.make(invoice, ctx)),
        PromptMessage.user(`Perform a ${depth} audit on this invoice.`),
      ],
    };
  },
});
```
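The flat-arguments constraint can be enforced with a small check at definition time. The following is a hypothetical sketch (`FlatArg` and `assertFlatArgs` are invented names), not MCP Fusion's validator: it accepts primitive type names, `{ type: ... }` wrappers around them, and string enums, and rejects anything nested that a form renderer could not display.

```typescript
// Hypothetical check that prompt arguments stay flat, i.e. renderable as form fields.
type FlatArg =
  | 'string' | 'number' | 'boolean'
  | { enum: readonly string[] }
  | { type: 'string' | 'number' | 'boolean'; optional?: boolean };

const PRIMITIVES = new Set(['string', 'number', 'boolean']);

function assertFlatArgs(args: Record<string, unknown>): asserts args is Record<string, FlatArg> {
  for (const [name, spec] of Object.entries(args)) {
    if (typeof spec === 'string' && PRIMITIVES.has(spec)) continue;
    if (typeof spec === 'object' && spec !== null) {
      const s = spec as Record<string, unknown>;
      if (Array.isArray(s.enum) && s.enum.every((v) => typeof v === 'string')) continue;
      if (typeof s.type === 'string' && PRIMITIVES.has(s.type)) continue;
    }
    throw new Error(`Prompt argument '${name}' must be a flat primitive (string, number, boolean, enum)`);
  }
}
```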

PromptMessage.fromView() decomposes a ResponseBuilder (returned by Presenter.make()) into XML-tagged prompt messages. The Presenter's rules, data, UI blocks, and action suggestions are extracted into semantically separated blocks, drawn from the same source of truth as the tool response with zero duplication:

```text
Presenter.make(data, ctx) → ResponseBuilder
│
├─ <domain_rules>     → system role │ Presenter's systemRules()
├─ <dataset>          → user role   │ Validated JSON
├─ <visual_context>   → user role   │ UI blocks (ECharts, Mermaid, tables)
└─ <system_guidance>  → system role │ Hints + HATEOAS action suggestions
```
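The mapping in that diagram can be sketched as a function from a perception package to role-tagged messages. `PerceptionPackage`, `Message`, and `fromView` here are hypothetical stand-ins that follow the tag and role assignments shown above; the real PromptMessage.fromView() may format its content differently, and empty sections are simply skipped in this sketch.

```typescript
// Hypothetical sketch of splitting a perception package into XML-tagged prompt messages.
interface PerceptionPackage {
  rules: string[];
  data: unknown;
  uiBlocks: string[];
  suggestions: string[];
}

interface Message { role: 'system' | 'user'; content: string }

const wrap = (tag: string, body: string) => `<${tag}>\n${body}\n</${tag}>`;

function fromView(view: PerceptionPackage): Message[] {
  const messages: Message[] = [];
  if (view.rules.length) messages.push({ role: 'system', content: wrap('domain_rules', view.rules.join('\n')) });
  messages.push({ role: 'user', content: wrap('dataset', JSON.stringify(view.data)) });
  if (view.uiBlocks.length) messages.push({ role: 'user', content: wrap('visual_context', view.uiBlocks.join('\n')) });
  if (view.suggestions.length) {
    messages.push({ role: 'system', content: wrap('system_guidance', view.suggestions.join('\n')) });
  }
  return messages;
}
```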

tRPC-style context derivation with pre-compiled chains:

```typescript
import { defineMiddleware } from '@vinkius-core/mcp-fusion';

const requireAuth = defineMiddleware(async (ctx: { token: string }) => {
  const user = await db.getUser(ctx.token);
  if (!user) throw new Error('Unauthorized');
  return { user }; // ← merged into ctx, TS infers { user: User }
});

// Apply globally or per-action
defineTool<AppContext>('projects', {
  middleware: [requireAuth, requireRole('editor')],
  actions: { /* ... */ },
});
```
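The core of tRPC-style derivation is that each middleware returns an object that is merged into the context, and the merged type flows into the next step. The following is a hypothetical two-step sketch (`Middleware` and `derive` are invented names) in which the chain is composed once and then reused per request; it is not how MCP Fusion compiles its chains.

```typescript
// Hypothetical sketch of typed context derivation; not MCP Fusion's implementation.
type Middleware<In, Out> = (ctx: In) => Promise<Out>;

// Compose once: `first` fixes A and B, then `second` sees A & B and adds C.
function derive<A extends object, B extends object>(first: Middleware<A, B>) {
  return <C extends object>(second: Middleware<A & B, C>) =>
    async (ctx: A): Promise<A & B & C> => {
      // Each step's result is merged over the incoming context.
      const withFirst = Object.assign({}, ctx, await first(ctx)) as unknown as A & B;
      return Object.assign({}, withFirst, await second(withFirst)) as unknown as A & B & C;
    };
}
```

Usage with two hypothetical middlewares mirroring the snippet above:

```typescript
const requireAuth = async (ctx: { token: string }) => {
  if (ctx.token !== 'secret') throw new Error('Unauthorized');
  return { user: { name: 'ada', role: 'editor' } };
};
const requireRole = (role: string) => async (ctx: { user: { role: string } }) => {
  if (ctx.user.role !== role) throw new Error('Forbidden');
  return { canEdit: true };
};
const run = derive(requireAuth)(requireRole('editor')); // ctx type: token + user + canEdit
```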

Structured errors with recovery instructions. The agent receives the error code, a suggestion, and a list of valid actions to try:

```typescript
import { toolError } from '@vinkius-core/mcp-fusion';

// Inside an action handler, where `id` is the requested project ID:
return toolError('ProjectNotFound', {
  message: `Project '${id}' does not exist.`,
  suggestion: 'Call projects.list first to get valid IDs.',
  availableActions: ['projects.list'],
});
```

The agent receives:

```xml
<tool_error code="ProjectNotFound">
  <message>Project 'xyz' does not exist.</message>
  <recovery>Call projects.list first to get valid IDs.</recovery>
  <available_actions>projects.list</available_actions>
</tool_error>
```
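Rendering such an error is mostly a matter of escaping user-supplied text so it cannot break the markup. Below is a hypothetical serializer (`renderToolError`, `escapeText`, and `escapeAttr` are invented names) that reproduces the shape above without indentation; joining several available actions with ", " is an assumption, since the example lists only one.

```typescript
// Hypothetical sketch of serializing a structured tool error; not MCP Fusion's serializer.
interface ToolErrorInfo {
  message: string;
  suggestion?: string;
  availableActions?: string[];
}

// Text content needs &, <, > escaped; attribute values also need double quotes escaped.
const escapeText = (s: string) => s.replace(/&/g, '&amp;').replace(/</g, '&lt;').replace(/>/g, '&gt;');
const escapeAttr = (s: string) => escapeText(s).replace(/"/g, '&quot;');

function renderToolError(code: string, info: ToolErrorInfo): string {
  const lines = [`<tool_error code="${escapeAttr(code)}">`, `<message>${escapeText(info.message)}</message>`];
  if (info.suggestion) lines.push(`<recovery>${escapeText(info.suggestion)}</recovery>`);
  if (info.availableActions?.length) {
    lines.push(`<available_actions>${info.availableActions.map((a) => escapeText(a)).join(', ')}</available_actions>`);
  }
  lines.push('</tool_error>');
  return lines.join('\n');
}
```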

End-to-end type inference from server to client — autocomplete for action names and typed arguments:

```typescript
import { createFusionClient } from '@vinkius-core/mcp-fusion/client';
import type { AppRouter } from './server';

const client = createFusionClient<AppRouter>(transport);

const result = await client.execute(
  'billing.get_invoice',                  // ← action name autocompletes
  { workspace_id: 'ws_1', id: 'inv_42' }, // ← arguments are typed
);
```
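The type-level mechanism behind this kind of client can be shown without any library: a router type maps each action name to its argument and result types, and a generic `execute` indexes into it. `RouterShape`, `BillingRouter`, `Transport`, and `createTypedClient` are hypothetical names; note that the cast on the transport result trusts the server and performs no runtime validation.

```typescript
// Hypothetical sketch of end-to-end typing via a router type map.
type RouterShape = Record<string, { args: object; result: unknown }>;

type BillingRouter = {
  'billing.get_invoice': { args: { workspace_id: string; id: string }; result: { id: string; amount_cents: number } };
  'billing.void_invoice': { args: { workspace_id: string; id: string; reason?: string }; result: string };
};

type Transport = (name: string, args: object) => Promise<unknown>;

function createTypedClient<R extends RouterShape>(transport: Transport) {
  return {
    // K is constrained to the router's keys (autocomplete); args must match R[K]['args'].
    execute<K extends keyof R & string>(name: K, args: R[K]['args']): Promise<R[K]['result']> {
      return transport(name, args) as Promise<R[K]['result']>; // unchecked at runtime
    },
  };
}
```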

Tools and prompts are collected in registries and then attached to an MCP server:

```typescript
import { ToolRegistry, PromptRegistry } from '@vinkius-core/mcp-fusion';

const tools = new ToolRegistry<AppContext>();
tools.register(billing);
tools.register(projects);

const prompts = new PromptRegistry<AppContext>();
prompts.register(AuditPrompt);
prompts.register(SummarizePrompt);

// Attach to an MCP server (works with both Server and McpServer; duck-typed)
tools.attachToServer(server, {
  contextFactory: (extra) => createAppContext(extra),
  filter: { tags: ['public'] },  // Tag-based context gating
  toolExposition: 'flat',        // 'flat' or 'grouped' wire format
  stateSync: {                   // RFC 7234-inspired cache signals
    defaults: { cacheControl: 'no-store' },
    policies: [
      { match: 'sprints.update', invalidates: ['sprints.*'] },
      { match: 'countries.*', cacheControl: 'immutable' },
    ],
  },
});

prompts.attachToServer(server, {
  contextFactory: (extra) => createAppContext(extra),
});
```
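To illustrate how `stateSync` policies like those above could be resolved for a given action, here is a hypothetical sketch. The semantics are assumptions, not documented MCP Fusion behavior: `*` matches any run of characters, the first matching policy wins, and a policy without `cacheControl` falls back to the default. `Policy`, `globToRegExp`, and `resolveSignals` are invented names.

```typescript
// Hypothetical sketch of resolving cache signals from match policies; semantics assumed.
interface Policy { match: string; cacheControl?: 'no-store' | 'immutable'; invalidates?: string[] }

// Escape regex metacharacters, then let '*' match any run of characters.
function globToRegExp(pattern: string): RegExp {
  const escaped = pattern.replace(/[.+?^${}()|[\]\\]/g, '\\$&').replace(/\*/g, '.*');
  return new RegExp(`^${escaped}$`);
}

function resolveSignals(action: string, defaults: { cacheControl: string }, policies: Policy[]) {
  const hit = policies.find((p) => globToRegExp(p.match).test(action)); // first match wins
  return {
    cacheControl: hit?.cacheControl ?? defaults.cacheControl,
    invalidates: hit?.invalidates ?? [],
  };
}
```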

Generator handlers yield progress events — automatically forwarded as MCP notifications/progress when the client provides a progressToken:

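```typescript
// progress() and success() are assumed to be exported from the package root
import { progress, success } from '@vinkius-core/mcp-fusion';

// Generator handler inside an action definition
handler: async function* (ctx, args) {
  yield progress(10, 'Cloning repository...');
  yield progress(50, 'Building AST...');
  yield progress(90, 'Running analysis...');
  return success(analysisResult);
}
```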
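The forwarding behavior described above can be sketched as a loop that drains the generator: every yielded event is passed to a notifier (only if one exists, i.e. the client supplied a progressToken), and the generator's `return` value becomes the result. `ProgressEvent`, `makeProgress`, and `runWithProgress` are hypothetical names; this is not MCP Fusion's executor.

```typescript
// Hypothetical sketch of driving a generator handler; not MCP Fusion's executor.
interface ProgressEvent { kind: 'progress'; percent: number; message: string }

const makeProgress = (percent: number, message: string): ProgressEvent => ({ kind: 'progress', percent, message });

async function runWithProgress<T>(
  gen: AsyncGenerator<ProgressEvent, T, void>,
  notify?: (e: ProgressEvent) => void, // present only when the client sent a progressToken
): Promise<T> {
  while (true) {
    const step = await gen.next();
    if (step.done) return step.value; // the generator's `return` value is the final result
    notify?.(step.value);             // forward as a progress notification, or drop it
  }
}
```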

Zero-overhead typed event system. Debug observers attach per-tool or globally:

```typescript
import { createDebugObserver } from '@vinkius-core/mcp-fusion';

// Per-tool
billing.debug(createDebugObserver());

// Global: propagates to all registered tools
tools.enableDebug(createDebugObserver((event) => {
  opentelemetry.addEvent(event.type, event);
}));
```

OpenTelemetry-compatible tracing with structural subtyping (no @opentelemetry/api dependency required):

```typescript
tools.enableTracing(tracer);
// Span attributes: mcp.tool, mcp.action, mcp.durationMs, mcp.isError, mcp.tags
```
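Structural subtyping means the framework only needs a tracer-shaped object, not the OpenTelemetry package itself. A hypothetical sketch: `SpanLike`, `TracerLike`, and `traced` are invented names, and the span name format is an assumption. A real `@opentelemetry/api` Tracer should satisfy `TracerLike` structurally, since its `startSpan`, `setAttribute`, and `end` signatures are compatible with these narrower ones.

```typescript
// Hypothetical sketch: structurally-typed tracing, no OpenTelemetry import required.
interface SpanLike {
  setAttribute(key: string, value: string | number | boolean): void;
  end(): void;
}
interface TracerLike { startSpan(name: string): SpanLike }

async function traced<T>(tracer: TracerLike, tool: string, action: string, fn: () => Promise<T>): Promise<T> {
  const span = tracer.startSpan(`${tool}.${action}`); // span name format is an assumption
  const start = Date.now();
  span.setAttribute('mcp.tool', tool);
  span.setAttribute('mcp.action', action);
  try {
    const result = await fn();
    span.setAttribute('mcp.isError', false);
    return result;
  } catch (err) {
    span.setAttribute('mcp.isError', true);
    throw err;
  } finally {
    // Always record duration and close the span, on success or failure.
    span.setAttribute('mcp.durationMs', Date.now() - start);
    span.end();
  }
}
```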

| Capability | Mechanism |
|---|---|
| Presenter | Domain-level View layer: .schema(), .systemRules(), .uiBlocks(), .suggestActions(), .embed() |
| Cognitive Guardrails | .agentLimit(max, onTruncate): truncates arrays, injects filter guidance |
| Action Consolidation | Multiple actions → single MCP tool with discriminator enum |
| Hierarchical Groups | .group(): namespace 5,000+ actions as module.action |
| Prompt Engine | definePrompt() with flat schema constraint, middleware, lifecycle sync |
| MVA-Driven Prompts | PromptMessage.fromView(): Presenter → XML-tagged prompt messages |
| Context Derivation | defineMiddleware(): tRPC-style typed context merging |
| Self-Healing Errors | toolError(): structured recovery with action suggestions |
| Type-Safe Client | createFusionClient<T>(): full inference from server to client |
| Streaming Progress | yield progress() → MCP notifications/progress |
| State Sync | RFC 7234 cache-control signals: invalidates, no-store, immutable |
| Tool Exposition | 'flat' or 'grouped' wire format: same handlers, different topology |
| Tag Filtering | RBAC context gating: { tags: ['core'] } / { exclude: ['internal'] } |
| Observability | Zero-overhead debug observers + OpenTelemetry-compatible tracing |
| TOON Encoding | Token-Optimized Object Notation: ~40% fewer tokens |
| Validation | Zod .merge().strict(): unknown fields rejected with actionable errors |
| Introspection | Runtime metadata via fusion://manifest.json MCP resource |
| Immutability | Object.freeze() after buildToolDefinition(): no post-registration mutation |

| Guide | Covers |
|---|---|
| MVA Architecture | The MVA pattern: why and how |
| Quickstart | Build a Fusion server from zero |
| Presenter | Schema, rules, UI blocks, affordances, composition |
| Prompt Engine | definePrompt(), PromptMessage.fromView(), registry |
| Middleware | Context derivation, authentication, chains |
| State Sync | Cache-control signals, causal invalidation |
| Observability | Debug observers, tracing |
| Tool Exposition | Flat vs grouped wire strategies |
| Cookbook | Real-world patterns |
| API Reference | Complete typings |
| Cost & Hallucination | Token reduction analysis |

Requirements:

- Node.js 18+
- TypeScript 5.7+
- @modelcontextprotocol/sdk ^1.12.1 (peer dependency)
- zod ^3.25.1 || ^4.0.0 (peer dependency)

Alternative AI tools for mcp-fusion

Similar Open Source Tools

Code

A3S Code is an embeddable AI coding agent framework in Rust that allows users to build agents capable of reading, writing, and executing code with tool access, planning, and safety controls. It is production-ready with features like permission system, HITL confirmation, skill-based tool restrictions, and error recovery. The framework is extensible with 19 trait-based extension points and supports lane-based priority queue for scalable multi-machine task distribution.

SwiftAgent

A type-safe, declarative framework for building AI agents in Swift, SwiftAgent is built on Apple FoundationModels. It allows users to compose agents by combining Steps in a declarative syntax similar to SwiftUI. The framework ensures compile-time checked input/output types, native Apple AI integration, structured output generation, and built-in security features like permission, sandbox, and guardrail systems. SwiftAgent is extensible with MCP integration, distributed agents, and a skills system. Users can install SwiftAgent with Swift 6.2+ on iOS 26+, macOS 26+, or Xcode 26+ using Swift Package Manager.

openai-scala-client

This is a no-nonsense async Scala client for OpenAI API supporting all the available endpoints and params including streaming, chat completion, vision, and voice routines. It provides a single service called OpenAIService that supports various calls such as Models, Completions, Chat Completions, Edits, Images, Embeddings, Batches, Audio, Files, Fine-tunes, Moderations, Assistants, Threads, Thread Messages, Runs, Run Steps, Vector Stores, Vector Store Files, and Vector Store File Batches. The library aims to be self-contained with minimal dependencies and supports API-compatible providers like Azure OpenAI, Azure AI, Anthropic, Google Vertex AI, Groq, Grok, Fireworks AI, OctoAI, TogetherAI, Cerebras, Mistral, Deepseek, Ollama, FastChat, and more.

opencode.nvim

Opencode.nvim is a Neovim plugin that provides a simple and efficient way to browse, search, and open files in a project. It enhances the file navigation experience by offering features like fuzzy finding, file preview, and quick access to frequently used files. With Opencode.nvim, users can easily navigate through their project files, jump to specific locations, and manage their workflow more effectively. The plugin is designed to improve productivity and streamline the development process by simplifying file handling tasks within Neovim.

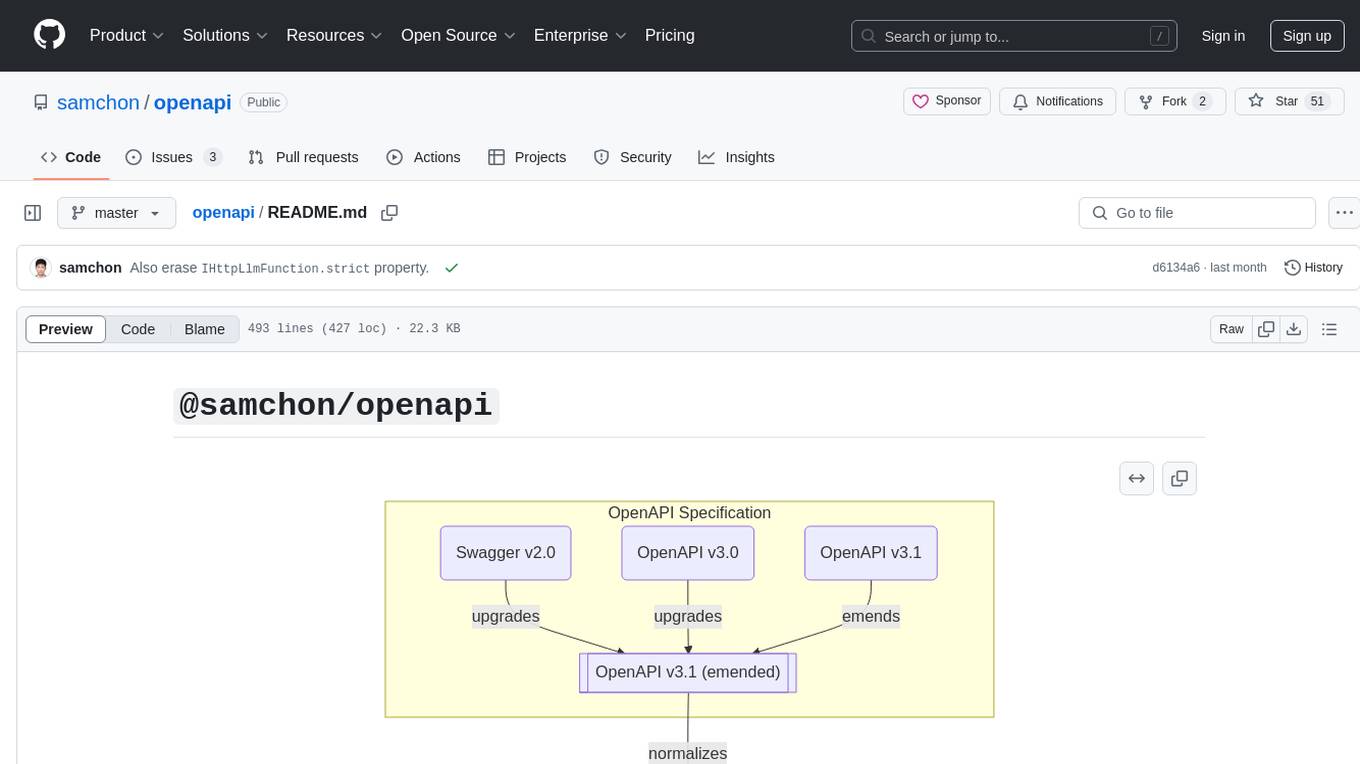

openapi

The `@samchon/openapi` repository is a collection of OpenAPI types and converters for various versions of OpenAPI specifications. It includes an 'emended' OpenAPI v3.1 specification that enhances clarity by removing ambiguous and duplicated expressions. The repository also provides an application composer for LLM (Large Language Model) function calling from OpenAPI documents, allowing users to easily perform LLM function calls based on the Swagger document. Conversions to different versions of OpenAPI documents are also supported, all based on the emended OpenAPI v3.1 specification. Users can validate their OpenAPI documents using the `typia` library with `@samchon/openapi` types, ensuring compliance with standard specifications.

island-ai

island-ai is a TypeScript toolkit tailored for developers engaging with structured outputs from Large Language Models. It offers streamlined processes for handling, parsing, streaming, and leveraging AI-generated data across various applications. The toolkit includes packages like zod-stream for interfacing with LLM streams, stream-hooks for integrating streaming JSON data into React applications, and schema-stream for JSON streaming parsing based on Zod schemas. Additionally, related packages like @instructor-ai/instructor-js focus on data validation and retry mechanisms, enhancing the reliability of data processing workflows.

agents-flex

Agents-Flex is an LLM application framework, similar to LangChain, based on Java. It provides a set of tools and components for building LLM applications, including LLM Visit, Prompt and Prompt Template Loader, Function Calling Definer, Invoker and Running, Memory, Embedding, Vector Storage, Resource Loaders, Document, Splitter, Loader, Parser, LLMs Chain, and Agents Chain.

MCP-Chinese-Getting-Started-Guide

The Model Context Protocol (MCP) is an innovative open-source protocol that redefines the interaction between large language models (LLMs) and the external world. MCP provides a standardized approach for any large language model to easily connect to various data sources and tools, enabling seamless access and processing of information. MCP acts as a USB-C interface for AI applications, offering a standardized way for AI models to connect to different data sources and tools. The core functionalities of MCP include Resources, Prompts, Tools, Sampling, Roots, and Transports. This guide focuses on developing an MCP server for network search using Python and uv management. It covers initializing the project, installing dependencies, creating a server, implementing tool execution methods, and running the server. Additionally, it explains how to debug the MCP server using the Inspector tool, how to call tools from the server, and how to connect multiple MCP servers. The guide also introduces the Sampling feature, which allows pre- and post-tool execution operations, and demonstrates how to integrate MCP servers into LangChain for AI applications.

react-native-rag

React Native RAG is a library that enables private, local RAGs to supercharge LLMs with a custom knowledge base. It offers modular and extensible components like `LLM`, `Embeddings`, `VectorStore`, and `TextSplitter`, with multiple integration options. The library supports on-device inference, vector store persistence, and semantic search implementation. Users can easily generate text responses, manage documents, and utilize custom components for advanced use cases.

node-sdk

The ChatBotKit Node SDK is a JavaScript-based platform for building conversational AI bots and agents. It offers easy setup, serverless compatibility, modern framework support, customizability, and multi-platform deployment. With capabilities like multi-modal and multi-language support, conversation management, chat history review, custom datasets, and various integrations, this SDK enables users to create advanced chatbots for websites, mobile apps, and messaging platforms.

smithers

Smithers is a tool for declarative AI workflow orchestration using React components. It allows users to define complex multi-agent workflows as component trees, ensuring composability, durability, and error handling. The tool leverages React's re-rendering mechanism to persist outputs to SQLite, enabling crashed workflows to resume seamlessly. Users can define schemas for task outputs, create workflow instances, define agents, build workflow trees, and run workflows programmatically or via CLI. Smithers supports components for pipeline stages, structured output validation with Zod, MDX prompts, validation loops with Ralph, dynamic branching, and various built-in tools like read, edit, bash, grep, and write. The tool follows a clear workflow execution process involving defining, rendering, executing, re-rendering, and repeating tasks until completion, all while storing task results in SQLite for fault tolerance.

lagent

Lagent is a lightweight open-source framework that allows users to efficiently build large language model (LLM)-based agents. It also provides some typical tools to augment LLMs.

agent-prism

AgentPrism is an open source library of React components designed for visualizing traces from AI agents. It helps in turning complex JSON data into clear and visual diagrams for debugging AI agents. By plugging in OpenTelemetry data, users can visualize LLM calls, tool executions, and agent workflows in a hierarchical timeline. The library is currently in alpha release and under active development, with APIs subject to change. Users can try out AgentPrism live at agent-prism.evilmartians.io to visualize and debug their own agent traces.

Conduit

Conduit is a unified Swift 6.2 SDK for local and cloud LLM inference, providing a single Swift-native API that can target Anthropic, OpenRouter, Ollama, MLX, HuggingFace, and Apple’s Foundation Models without rewriting your prompt pipeline. It allows switching between local, cloud, and system providers with minimal code changes, supports downloading models from HuggingFace Hub for local MLX inference, generates Swift types directly from LLM responses, offers privacy-first options for on-device running, and is built with Swift 6.2 concurrency features like actors, Sendable types, and AsyncSequence.

mediapipe-rs

MediaPipe-rs is a Rust library designed for MediaPipe tasks on WasmEdge WASI-NN. It offers easy-to-use low-code APIs similar to mediapipe-python, with low overhead and flexibility for custom media input. The library supports various tasks like object detection, image classification, gesture recognition, and more, including TfLite models, TF Hub models, and custom models. Users can create task instances, run sessions for pre-processing, inference, and post-processing, and speed up processing by reusing sessions. The library also provides support for audio tasks using audio data from symphonia, ffmpeg, or raw audio. Users can choose between CPU, GPU, or TPU devices for processing.

For similar tasks

vulcan-sql

VulcanSQL is an Analytical Data API Framework for AI agents and data apps. It aims to help data professionals deliver RESTful APIs from databases, data warehouses or data lakes much easier and secure. It turns your SQL into APIs in no time!

lassxToolkit

lassxToolkit is a versatile tool designed for file processing tasks. It allows users to manipulate files and folders based on specified configurations in a strict .json format. The tool supports various AI models for tasks such as image upscaling and denoising. Users can customize settings like input/output paths, error handling, file selection, and plugin integration. lassxToolkit provides detailed instructions on configuration options, default values, and model selection. It also offers features like tree restoration, recursive processing, and regex-based file filtering. The tool is suitable for users looking to automate file processing tasks with AI capabilities.

gemini-ai

Gemini AI is a Ruby Gem designed to provide low-level access to Google's generative AI services through Vertex AI, Generative Language API, or AI Studio. It allows users to interact with Gemini to build abstractions on top of it. The Gem provides functionalities for tasks such as generating content, embeddings, predictions, and more. It supports streaming capabilities, server-sent events, safety settings, system instructions, JSON format responses, and tools (functions) calling. The Gem also includes error handling, development setup, publishing to RubyGems, updating the README, and references to resources for further learning.

llm-interface

LLM Interface is an npm module that streamlines interactions with various Large Language Model (LLM) providers in Node.js applications. It offers a unified interface for switching between providers and models, supporting 36 providers and hundreds of models. Features include chat completion, streaming, error handling, extensibility, response caching, retries, JSON output, and repair. The package relies on npm packages like axios, @google/generative-ai, dotenv, jsonrepair, and loglevel. Installation is done via npm, and usage involves sending prompts to LLM providers. Tests can be run using npm test. Contributions are welcome under the MIT License.

partial-json-parser-js

Partial JSON Parser is a lightweight and customizable library for parsing partial JSON strings. It allows users to parse incomplete JSON data and stream it to the user. The library provides options to specify what types of partialness are allowed during parsing, such as strings, objects, arrays, special values, and more. It helps handle malformed JSON and returns the parsed JavaScript value. Partial JSON Parser is implemented purely in JavaScript and offers both commonjs and esm builds.

premsql

PremSQL is an open-source library designed to help developers create secure, fully local Text-to-SQL solutions using small language models. It provides essential tools for building and deploying end-to-end Text-to-SQL pipelines with customizable components, ideal for secure, autonomous AI-powered data analysis. The library offers features like Local-First approach, Customizable Datasets, Robust Executors and Evaluators, Advanced Generators, Error Handling and Self-Correction, Fine-Tuning Support, and End-to-End Pipelines. Users can fine-tune models, generate SQL queries from natural language inputs, handle errors, and evaluate model performance against predefined metrics. PremSQL is extendible for customization and private data usage.

ai21-python

The AI21 Labs Python SDK is a comprehensive tool for interacting with the AI21 API. It provides functionalities for chat completions, conversational RAG, token counting, error handling, and support for various cloud providers like AWS, Azure, and Vertex. The SDK offers both synchronous and asynchronous usage, along with detailed examples and documentation. Users can quickly get started with the SDK to leverage AI21's powerful models for various natural language processing tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud-native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any production LLM use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per-user and per-organization basis
- Block or redact requests containing PII
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.