nncf

Neural Network Compression Framework for enhanced OpenVINO™ inference

Stars: 1083

Neural Network Compression Framework (NNCF) provides a suite of post-training and training-time algorithms for optimizing inference of neural networks in OpenVINO™ with a minimal accuracy drop. It is designed to work with models from PyTorch, TorchFX, TensorFlow, ONNX, and OpenVINO™. NNCF offers samples demonstrating compression algorithms for various use cases and models, with the ability to add different compression algorithms easily. It supports GPU-accelerated layers, distributed training, and seamless combination of pruning, sparsity, and quantization algorithms. NNCF allows exporting compressed models to ONNX or TensorFlow formats for use with OpenVINO™ toolkit, and supports Accuracy-Aware model training pipelines via Adaptive Compression Level Training and Early Exit Training.

README:


Neural Network Compression Framework (NNCF) provides a suite of post-training and training-time algorithms for optimizing inference of neural networks in OpenVINO™ with a minimal accuracy drop.

NNCF is designed to work with models from PyTorch, TorchFX, TensorFlow, ONNX and OpenVINO™.

NNCF provides samples that demonstrate the usage of compression algorithms for different use cases and models. See compression results achievable with the NNCF-powered samples on the NNCF Model Zoo page.

The framework is organized as a Python* package that can be built and used in a standalone mode. The framework architecture is unified to make it easy to add different compression algorithms for both PyTorch and TensorFlow deep learning frameworks.

| Compression algorithm | OpenVINO | PyTorch | TorchFX | TensorFlow | ONNX |
|---|---|---|---|---|---|
| Post-Training Quantization | Supported | Supported | Experimental | Supported | Supported |
| Weights Compression | Supported | Supported | Experimental | Not supported | Not supported |
| Activation Sparsity | Not supported | Experimental | Not supported | Not supported | Not supported |

| Compression algorithm | PyTorch | TensorFlow |
|---|---|---|
| Quantization Aware Training | Supported | Supported |
| Weight-Only Quantization Aware Training with LoRA and NLS | Supported | Not supported |
| Mixed-Precision Quantization | Supported | Not supported |
| Sparsity | Supported | Supported |
| Filter pruning | Supported | Supported |
| Movement pruning | Experimental | Not supported |

- Automatic, configurable model graph transformation to obtain the compressed model.

NOTE: Limited support for TensorFlow models. Only models created using Sequential or Keras Functional API are supported.

- Common interface for compression methods.

- GPU-accelerated layers for faster compressed model fine-tuning.

- Distributed training support.

- A Git patch for a prominent third-party repository (huggingface-transformers) that demonstrates how to integrate NNCF into custom training pipelines.

- Exporting PyTorch compressed models to ONNX* checkpoints and TensorFlow compressed models to SavedModel or Frozen Graph format, ready to use with OpenVINO™ toolkit.

- Support for Accuracy-Aware model training pipelines via the Adaptive Compression Level Training and Early Exit Training.
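The Early Exit Training idea mentioned in the last item can be pictured with a small loop: fine-tune the compressed model until its accuracy drop relative to the uncompressed baseline falls within a budget, then stop. The sketch below is only an illustration of that idea under stated assumptions; `early_exit_training`, `ToyModel`, and all parameter names are hypothetical and are not part of the NNCF API.

```python
def early_exit_training(train_one_epoch, evaluate, baseline_accuracy,
                        max_accuracy_drop=1.0, max_epochs=10):
    """Fine-tune until the accuracy drop is within budget or epochs run out.

    Returns (epoch at which training stopped or None, accuracy history).
    """
    history = []
    for epoch in range(1, max_epochs + 1):
        train_one_epoch()
        accuracy = evaluate()
        history.append(accuracy)
        # Stop as soon as the compressed model is close enough to the baseline.
        if baseline_accuracy - accuracy <= max_accuracy_drop:
            return epoch, history
    return None, history


class ToyModel:
    """Hypothetical stand-in for a compressed model that gains 1.5 points per epoch."""

    def __init__(self):
        self.accuracy = 70.0

    def train_one_epoch(self):
        self.accuracy += 1.5

    def evaluate(self):
        return self.accuracy


toy = ToyModel()
stopped_at, history = early_exit_training(
    toy.train_one_epoch, toy.evaluate, baseline_accuracy=76.0, max_accuracy_drop=1.0
)
print(stopped_at, history)
```

In this toy run the loop stops at the first epoch whose accuracy is within one point of the baseline; a real pipeline would plug in its own training and validation functions.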

This documentation covers detailed information about the NNCF algorithms and functions needed to contribute to NNCF.

The latest user documentation for NNCF is available here.

NNCF API documentation can be found here.

NNCF Post-Training Quantization (PTQ) is the simplest way to apply 8-bit quantization. To run the algorithm, you only need your model and a small calibration dataset (about 300 samples).

OpenVINO is the preferred backend to run PTQ with, while PyTorch, TensorFlow, and ONNX are also supported.
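To make the role of the calibration dataset concrete, here is a minimal, framework-free sketch of 8-bit quantization: value ranges are collected from roughly 300 calibration samples, and a scale and zero point are derived from them. This is a simplified min/max scheme chosen for illustration, not the algorithm NNCF actually runs; all function names below are hypothetical.

```python
import random


def calibrate_range(samples):
    """Collect min/max statistics over all values in the calibration samples."""
    lo = min(min(sample) for sample in samples)
    hi = max(max(sample) for sample in samples)
    # Include zero in the range so that 0.0 is exactly representable.
    return min(lo, 0.0), max(hi, 0.0)


def quantization_params(lo, hi, num_bits=8):
    """Derive scale and zero point for unsigned asymmetric quantization."""
    levels = 2 ** num_bits - 1  # 255 for 8 bits
    scale = (hi - lo) / levels if hi > lo else 1.0
    zero_point = round(-lo / scale)
    return scale, zero_point


def quantize(x, scale, zero_point, num_bits=8):
    """Map a float to an integer code, clipping to the representable range."""
    q = round(x / scale) + zero_point
    return max(0, min(2 ** num_bits - 1, q))


def dequantize(q, scale, zero_point):
    """Map an integer code back to an approximate float value."""
    return (q - zero_point) * scale


random.seed(0)
# About 300 calibration samples, each a small vector of activations.
calibration_samples = [[random.uniform(-1.0, 3.0) for _ in range(16)] for _ in range(300)]
lo, hi = calibrate_range(calibration_samples)
scale, zero_point = quantization_params(lo, hi)

value = 1.2345
restored = dequantize(quantize(value, scale, zero_point), scale, zero_point)
print(f"scale={scale:.5f} zero_point={zero_point} error={abs(restored - value):.5f}")
```

For values inside the calibrated range, the round-trip error is bounded by half the scale, which is why a representative calibration set matters: a range that is too wide wastes precision, and one that is too narrow clips values.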

OpenVINO

import nncf
import openvino as ov
import torch
from torchvision import datasets, transforms

# Instantiate your uncompressed model
model = ov.Core().read_model("/model_path")

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
dataset_loader = torch.utils.data.DataLoader(val_dataset, batch_size=1)

# Step 1: Initialize transformation function
def transform_fn(data_item):
    images, _ = data_item
    return images

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(dataset_loader, transform_fn)

# Step 3: Run the quantization pipeline
quantized_model = nncf.quantize(model, calibration_dataset)

PyTorch

import nncf
import torch
from torchvision import datasets, models, transforms

# Instantiate your uncompressed model
model = models.mobilenet_v2()

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
dataset_loader = torch.utils.data.DataLoader(val_dataset)

# Step 1: Initialize the transformation function
def transform_fn(data_item):
    images, _ = data_item
    return images

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(dataset_loader, transform_fn)

# Step 3: Run the quantization pipeline
quantized_model = nncf.quantize(model, calibration_dataset)

NOTE: If the Post-Training Quantization algorithm does not meet quality requirements, you can fine-tune the quantized PyTorch model. You can find an example of the Quantization-Aware Training pipeline for a PyTorch model here.

TorchFX

import nncf
import torch.fx
from torchvision import datasets, models, transforms

# Instantiate your uncompressed model
model = models.mobilenet_v2()

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
dataset_loader = torch.utils.data.DataLoader(val_dataset)

# Step 1: Initialize the transformation function
def transform_fn(data_item):
    images, _ = data_item
    return images

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(dataset_loader, transform_fn)

# Step 3: Export model to TorchFX
input_shape = (1, 3, 224, 224)
# Example input used only for tracing (an all-ones tensor is assumed here)
ex_input = torch.ones(input_shape)
fx_model = torch.export.export_for_training(model, args=(ex_input,)).module()
# or
# fx_model = torch.export.export(model, args=(ex_input,)).module()

# Step 4: Run the quantization pipeline
quantized_fx_model = nncf.quantize(fx_model, calibration_dataset)

TensorFlow

import nncf
import tensorflow as tf
import tensorflow_datasets as tfds

# Instantiate your uncompressed model
model = tf.keras.applications.MobileNetV2()

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = tfds.load("/path", split="validation",
                        shuffle_files=False, as_supervised=True)

# Step 1: Initialize transformation function
def transform_fn(data_item):
    images, _ = data_item
    return images

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(val_dataset, transform_fn)

# Step 3: Run the quantization pipeline
quantized_model = nncf.quantize(model, calibration_dataset)

ONNX

import onnx
import nncf
import torch
from torchvision import datasets, transforms

# Instantiate your uncompressed model
onnx_model = onnx.load_model("/model_path")

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
dataset_loader = torch.utils.data.DataLoader(val_dataset, batch_size=1)

# Step 1: Initialize transformation function
input_name = onnx_model.graph.input[0].name
def transform_fn(data_item):
    images, _ = data_item
    # ONNX models take a mapping from input name to NumPy array
    return {input_name: images.numpy()}

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(dataset_loader, transform_fn)

# Step 3: Run the quantization pipeline
quantized_model = nncf.quantize(onnx_model, calibration_dataset)

Here is an example of an Accuracy Aware Quantization pipeline where model weights and compression parameters may be fine-tuned to achieve a higher accuracy.

PyTorch

import nncf
import nncf.torch
import torch
from torchvision import datasets, models, transforms

# Instantiate your uncompressed model
model = models.mobilenet_v2()

# Provide validation part of the dataset to collect statistics needed for the compression algorithm
val_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
dataset_loader = torch.utils.data.DataLoader(val_dataset)

# Step 1: Initialize the transformation function
def transform_fn(data_item):
    images, _ = data_item
    return images

# Step 2: Initialize NNCF Dataset
calibration_dataset = nncf.Dataset(dataset_loader, transform_fn)

# Step 3: Run the quantization pipeline
quantized_model = nncf.quantize(model, calibration_dataset)

# Now use quantized_model as a usual torch.nn.Module
# to fine-tune compression parameters along with the model weights

# Save quantization modules and the quantized model parameters
# (path_to_checkpoint and example_input below are placeholders you define yourself)
checkpoint = {
    'state_dict': quantized_model.state_dict(),
    'nncf_config': nncf.torch.get_config(quantized_model),
    # ... the rest of the user-defined objects to save
}
torch.save(checkpoint, path_to_checkpoint)

# ...

# Load quantization modules and the quantized model parameters
resuming_checkpoint = torch.load(path_to_checkpoint)
nncf_config = resuming_checkpoint['nncf_config']
state_dict = resuming_checkpoint['state_dict']

quantized_model = nncf.torch.load_from_config(model, nncf_config, example_input)
quantized_model.load_state_dict(state_dict)
# ... the rest of the usual PyTorch-powered training pipeline

Here is an example of an Accuracy Aware RB Sparsification pipeline where model weights and compression parameters may be fine-tuned to achieve a higher accuracy.

PyTorch

import torch
import nncf.torch  # Important - must be imported before any other external package that depends on torch

from nncf import NNCFConfig
from nncf.torch import create_compressed_model, register_default_init_args

# Instantiate your uncompressed model
from torchvision.models.resnet import resnet50
model = resnet50()

# Load a configuration file to specify compression
nncf_config = NNCFConfig.from_json("resnet50_imagenet_rb_sparsity.json")

# Provide data loaders for compression algorithm initialization, if necessary
import torchvision.datasets as datasets
import torchvision.transforms as transforms
representative_dataset = datasets.ImageFolder("/path", transform=transforms.Compose([transforms.ToTensor()]))
init_loader = torch.utils.data.DataLoader(representative_dataset)
nncf_config = register_default_init_args(nncf_config, init_loader)

# Apply the specified compression algorithms to the model
compression_ctrl, compressed_model = create_compressed_model(model, nncf_config)

# Now use compressed_model as a usual torch.nn.Module
# to fine-tune compression parameters along with the model weights

# ... the rest of the usual PyTorch-powered training pipeline

# Export to ONNX or .pth when done fine-tuning
compression_ctrl.export_model("compressed_model.onnx")
torch.save(compressed_model.state_dict(), "compressed_model.pth")

NOTE (PyTorch): Due to the way NNCF works within the PyTorch backend, import nncf must be done before any other import of torch in your package or in third-party packages that your code utilizes. Otherwise, the compression may be applied incompletely.

TensorFlow

import tensorflow as tf

from nncf import NNCFConfig
from nncf.tensorflow import create_compressed_model, register_default_init_args

# Instantiate your uncompressed model
from tensorflow.keras.applications import ResNet50
model = ResNet50()

# Load a configuration file to specify compression
nncf_config = NNCFConfig.from_json("resnet50_imagenet_rb_sparsity.json")

# Provide dataset for compression algorithm initialization
representative_dataset = tf.data.Dataset.list_files("/path/*.jpeg")
nncf_config = register_default_init_args(nncf_config, representative_dataset, batch_size=1)

# Apply the specified compression algorithms to the model
compression_ctrl, compressed_model = create_compressed_model(model, nncf_config)

# Now use compressed_model as a usual Keras model
# to fine-tune compression parameters along with the model weights

# ... the rest of the usual TensorFlow-powered training pipeline

# Export to Frozen Graph, TensorFlow SavedModel or .h5 when done fine-tuning
compression_ctrl.export_model("compressed_model.pb", save_format="frozen_graph")

For a more detailed description of NNCF usage in your training code, see this tutorial.

For a quicker start with NNCF-powered compression, try sample notebooks and scripts presented below.

Ready-to-run Jupyter* notebook tutorials and demos are available to explain and display NNCF compression algorithms for optimizing models for inference with the OpenVINO Toolkit:

| Notebook Tutorial Name | Compression Algorithm | Backend | Domain |
|---|---|---|---|
| BERT Quantization | Post-Training Quantization | OpenVINO | NLP |
| MONAI Segmentation Model Quantization | Post-Training Quantization | OpenVINO | Segmentation |
| PyTorch Model Quantization | Post-Training Quantization | PyTorch | Image Classification |
| YOLOv11 Quantization with Accuracy Control | Post-Training Quantization with Accuracy Control | OpenVINO | Speech-to-Text, Object Detection |
| PyTorch Training-Time Compression | Training-Time Compression | PyTorch | Image Classification |
| TensorFlow Training-Time Compression | Training-Time Compression | TensorFlow | Image Classification |
| Joint Pruning, Quantization and Distillation for BERT | Joint Pruning, Quantization and Distillation | OpenVINO | NLP |

A list of notebooks demonstrating OpenVINO conversion and inference together with NNCF compression for models from various domains:

| Demo Model | Compression Algorithm | Backend | Domain |
|---|---|---|---|
| YOLOv8 | Post-Training Quantization | OpenVINO | Object Detection, KeyPoint Detection, Instance Segmentation |
| EfficientSAM | Post-Training Quantization | OpenVINO | Image Segmentation |
| Segment Anything Model | Post-Training Quantization | OpenVINO | Image Segmentation |
| OneFormer | Post-Training Quantization | OpenVINO | Image Segmentation |
| InstructPix2Pix | Post-Training Quantization | OpenVINO | Image-to-Image |
| CLIP | Post-Training Quantization | OpenVINO | Image-to-Text |
| BLIP | Post-Training Quantization | OpenVINO | Image-to-Text |
| Latent Consistency Model | Post-Training Quantization | OpenVINO | Text-to-Image |
| ControlNet QR Code Monster | Post-Training Quantization | OpenVINO | Text-to-Image |
| SDXL-turbo | Post-Training Quantization | OpenVINO | Text-to-Image, Image-to-Image |
| Distil-Whisper | Post-Training Quantization | OpenVINO | Speech-to-Text |
| Whisper | Post-Training Quantization | OpenVINO | Speech-to-Text |
| MMS Speech Recognition | Post-Training Quantization | OpenVINO | Speech-to-Text |
| Grammar Error Correction | Post-Training Quantization | OpenVINO | NLP, Grammar Correction |
| LLM Instruction Following | Weight Compression | OpenVINO | NLP, Instruction Following |
| LLM Chat Bots | Weight Compression | OpenVINO | NLP, Chat Bot |

Compact scripts demonstrating quantization and corresponding inference speed boost:

| Example Name | Compression Algorithm | Backend | Domain |
|---|---|---|---|
| OpenVINO MobileNetV2 | Post-Training Quantization | OpenVINO | Image Classification |
| OpenVINO YOLOv8 | Post-Training Quantization | OpenVINO | Object Detection |
| OpenVINO YOLOv8 QwAC | Post-Training Quantization with Accuracy Control | OpenVINO | Object Detection |
| OpenVINO Anomaly Classification | Post-Training Quantization with Accuracy Control | OpenVINO | Anomaly Classification |
| PyTorch MobileNetV2 | Post-Training Quantization | PyTorch | Image Classification |
| PyTorch SSD | Post-Training Quantization | PyTorch | Object Detection |
| TorchFX Resnet18 | Post-Training Quantization | TorchFX | Image Classification |
| TensorFlow MobileNetV2 | Post-Training Quantization | TensorFlow | Image Classification |
| ONNX MobileNetV2 | Post-Training Quantization | ONNX | Image Classification |

| Example Name | Compression Algorithm | Backend | Domain |
|---|---|---|---|
| PyTorch Resnet18 | Quantization-Aware Training | PyTorch | Image Classification |
| PyTorch Anomalib | Quantization-Aware Training | PyTorch | Anomaly Detection |

NNCF may be easily integrated into training/evaluation pipelines of third-party repositories.

- NNCF is used as a compression backend within the renowned transformers repository in HuggingFace Optimum Intel. For instance, the command below exports the Llama-3.2-3B-Instruct model to OpenVINO format with INT4-quantized weights:

  optimum-cli export openvino -m meta-llama/Llama-3.2-3B-Instruct --weight-format int4 ./Llama-3.2-3B-Instruct-int4

- NNCF is integrated into the Intel OpenVINO export pipeline, enabling quantization for the exported models.

- NNCF is used as the primary quantization framework for the ExecuTorch OpenVINO integration.

- NNCF is used as the primary quantization framework for the torch.compile OpenVINO integration.

- NNCF is integrated into OpenVINO Training Extensions as a model optimization backend. You can train, optimize, and export new models based on available model templates as well as run the exported models with OpenVINO.
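As a rough intuition for what INT4-quantized weights mean in the first integration above, the following framework-free sketch stores each small group of weights as 4-bit integer codes plus one shared floating-point scale. It is a simplified symmetric scheme written for illustration only; it is not the algorithm used by NNCF or Optimum Intel, and the function names and the group size of 4 are hypothetical choices.

```python
def quantize_int4_group(weights):
    """Symmetric INT4 quantization of one group of weights with a shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude to code 7; fall back to 1.0 for an all-zero group.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def compress_weights_int4(weights, group_size=4):
    """Split a flat weight list into groups and quantize each group separately."""
    return [
        quantize_int4_group(weights[start:start + group_size])
        for start in range(0, len(weights), group_size)
    ]


def decompress(groups):
    """Rebuild approximate float weights from (codes, scale) pairs."""
    return [code * scale for codes, scale in groups for code in codes]


weights = [0.12, -0.50, 0.33, 0.07, 2.10, -1.40, 0.90, 0.05]
groups = compress_weights_int4(weights, group_size=4)
restored = decompress(groups)
for original, approx in zip(weights, restored):
    print(f"{original:+.3f} -> {approx:+.3f}")
```

Using a separate scale per group keeps a single large weight from degrading the precision of every other weight, at the cost of storing one extra scale per group.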

For detailed installation instructions, refer to the Installation guide.

NNCF can be installed as a regular PyPI package via pip:

pip install nncf

NNCF is also available via conda:

conda install -c conda-forge nncf

NNCF system requirements depend on the backend used. System requirements for each backend and the matrix of corresponding versions can be found in installation.md.
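After installing, a quick check from Python can confirm which version ended up in the active environment. The snippet below is a minimal sketch that relies only on the standard library; the helper name `installed_version` is hypothetical, and it assumes the distribution name matches the import name `nncf`.

```python
from importlib import metadata, util


def installed_version(package_name):
    """Return the installed version string of a package, or None if it is absent."""
    if util.find_spec(package_name) is None:
        return None
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


version = installed_version("nncf")
print("nncf is not installed" if version is None else f"nncf {version} is installed")
```

The reported version can then be compared against the backend version matrix mentioned above.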

A list of models and their compression results can be found on the NNCF Model Zoo page.

@article{kozlov2020neural,
    title =   {Neural network compression framework for fast model inference},
    author =  {Kozlov, Alexander and Lazarevich, Ivan and Shamporov, Vasily and Lyalyushkin, Nikolay and Gorbachev, Yury},
    journal = {arXiv preprint arXiv:2002.08679},
    year =    {2020}
}

Refer to the CONTRIBUTING.md file for guidelines on contributions to the NNCF repository.

- Documentation

- Examples

- FAQ

- Notebooks

- HuggingFace Optimum Intel

- OpenVINO Model Optimization Guide

- OpenVINO Hugging Face page

- OpenVINO Performance Benchmarks page

NNCF as part of the OpenVINO™ toolkit collects anonymous usage data for the purpose of improving OpenVINO™ tools. You can opt-out at any time by running the following command in the Python environment where you have NNCF installed:

opt_in_out --opt_out

More information is available on OpenVINO telemetry.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for nncf

Similar Open Source Tools

nncf

Neural Network Compression Framework (NNCF) provides a suite of post-training and training-time algorithms for optimizing inference of neural networks in OpenVINO™ with a minimal accuracy drop. It is designed to work with models from PyTorch, TorchFX, TensorFlow, ONNX, and OpenVINO™. NNCF offers samples demonstrating compression algorithms for various use cases and models, with the ability to add different compression algorithms easily. It supports GPU-accelerated layers, distributed training, and seamless combination of pruning, sparsity, and quantization algorithms. NNCF allows exporting compressed models to ONNX or TensorFlow formats for use with OpenVINO™ toolkit, and supports Accuracy-Aware model training pipelines via Adaptive Compression Level Training and Early Exit Training.

dl_model_infer

This project is a c++ version of the AI reasoning library that supports the reasoning of tensorrt models. It provides accelerated deployment cases of deep learning CV popular models and supports dynamic-batch image processing, inference, decode, and NMS. The project has been updated with various models and provides tutorials for model exports. It also includes a producer-consumer inference model for specific tasks. The project directory includes implementations for model inference applications, backend reasoning classes, post-processing, pre-processing, and target detection and tracking. Speed tests have been conducted on various models, and onnx downloads are available for different models.

AQLM

AQLM is the official PyTorch implementation for Extreme Compression of Large Language Models via Additive Quantization. It includes prequantized AQLM models without PV-Tuning and PV-Tuned models for LLaMA, Mistral, and Mixtral families. The repository provides inference examples, model details, and quantization setups. Users can run prequantized models using Google Colab examples, work with different model families, and install the necessary inference library. The repository also offers detailed instructions for quantization, fine-tuning, and model evaluation. AQLM quantization involves calibrating models for compression, and users can improve model accuracy through finetuning. Additionally, the repository includes information on preparing models for inference and contributing guidelines.

SimpleAICV_pytorch_training_examples

SimpleAICV_pytorch_training_examples is a repository that provides simple training and testing examples for various computer vision tasks such as image classification, object detection, semantic segmentation, instance segmentation, knowledge distillation, contrastive learning, masked image modeling, OCR text detection, OCR text recognition, human matting, salient object detection, interactive segmentation, image inpainting, and diffusion model tasks. The repository includes support for multiple datasets and networks, along with instructions on how to prepare datasets, train and test models, and use gradio demos. It also offers pretrained models and experiment records for download from huggingface or Baidu-Netdisk. The repository requires specific environments and package installations to run effectively.

floneum

Floneum is a graph editor that makes it easy to develop your own AI workflows. It uses large language models (LLMs) to run AI models locally, without any external dependencies or even a GPU. This makes it easy to use LLMs with your own data, without worrying about privacy. Floneum also has a plugin system that allows you to improve the performance of LLMs and make them work better for your specific use case. Plugins can be used in any language that supports web assembly, and they can control the output of LLMs with a process similar to JSONformer or guidance.

cambrian

Cambrian-1 is a fully open project focused on exploring multimodal Large Language Models (LLMs) with a vision-centric approach. It offers competitive performance across various benchmarks with models at different parameter levels. The project includes training configurations, model weights, instruction tuning data, and evaluation details. Users can interact with Cambrian-1 through a Gradio web interface for inference. The project is inspired by LLaVA and incorporates contributions from Vicuna, LLaMA, and Yi. Cambrian-1 is licensed under Apache 2.0 and utilizes datasets and checkpoints subject to their respective original licenses.

imodels

Python package for concise, transparent, and accurate predictive modeling. All sklearn-compatible and easy to use. _For interpretability in NLP, check out our new package:imodelsX _

deepfabric

DeepFabric is a CLI tool and SDK designed for researchers and developers to generate high-quality synthetic datasets at scale using large language models. It leverages a graph and tree-based architecture to create diverse and domain-specific datasets while minimizing redundancy. The tool supports generating Chain of Thought datasets for step-by-step reasoning tasks and offers multi-provider support for using different language models. DeepFabric also allows for automatic dataset upload to Hugging Face Hub and uses YAML configuration files for flexibility in dataset generation.

Groma

Groma is a grounded multimodal assistant that excels in region understanding and visual grounding. It can process user-defined region inputs and generate contextually grounded long-form responses. The tool presents a unique paradigm for multimodal large language models, focusing on visual tokenization for localization. Groma achieves state-of-the-art performance in referring expression comprehension benchmarks. The tool provides pretrained model weights and instructions for data preparation, training, inference, and evaluation. Users can customize training by starting from intermediate checkpoints. Groma is designed to handle tasks related to detection pretraining, alignment pretraining, instruction finetuning, instruction following, and more.

aimet

AIMET is a library that provides advanced model quantization and compression techniques for trained neural network models. It provides features that have been proven to improve run-time performance of deep learning neural network models with lower compute and memory requirements and minimal impact to task accuracy. AIMET is designed to work with PyTorch, TensorFlow and ONNX models. We also host the AIMET Model Zoo - a collection of popular neural network models optimized for 8-bit inference. We also provide recipes for users to quantize floating point models using AIMET.

langchain_dart

LangChain.dart is a Dart port of the popular LangChain Python framework created by Harrison Chase. LangChain provides a set of ready-to-use components for working with language models and a standard interface for chaining them together to formulate more advanced use cases (e.g. chatbots, Q&A with RAG, agents, summarization, extraction, etc.). The components can be grouped into a few core modules: * **Model I/O:** LangChain offers a unified API for interacting with various LLM providers (e.g. OpenAI, Google, Mistral, Ollama, etc.), allowing developers to switch between them with ease. Additionally, it provides tools for managing model inputs (prompt templates and example selectors) and parsing the resulting model outputs (output parsers). * **Retrieval:** assists in loading user data (via document loaders), transforming it (with text splitters), extracting its meaning (using embedding models), storing (in vector stores) and retrieving it (through retrievers) so that it can be used to ground the model's responses (i.e. Retrieval-Augmented Generation or RAG). * **Agents:** "bots" that leverage LLMs to make informed decisions about which available tools (such as web search, calculators, database lookup, etc.) to use to accomplish the designated task. The different components can be composed together using the LangChain Expression Language (LCEL).

llm-graph-builder

Knowledge Graph Builder App is a tool designed to convert PDF documents into a structured knowledge graph stored in Neo4j. It utilizes OpenAI's GPT/Diffbot LLM to extract nodes, relationships, and properties from PDF text content. Users can upload files from local machine or S3 bucket, choose LLM model, and create a knowledge graph. The app integrates with Neo4j for easy visualization and querying of extracted information.

llm4ad

LLM4AD is an open-source Python-based platform leveraging Large Language Models (LLMs) for Automatic Algorithm Design (AD). It provides unified interfaces for methods, tasks, and LLMs, along with features like evaluation acceleration, secure evaluation, logs, GUI support, and more. The platform was originally developed for optimization tasks but is versatile enough to be used in other areas such as machine learning, science discovery, game theory, and engineering design. It offers various search methods and algorithm design tasks across different domains. LLM4AD supports remote LLM API, local HuggingFace LLM deployment, and custom LLM interfaces. The project is licensed under the MIT License and welcomes contributions, collaborations, and issue reports.

cocoindex

CocoIndex is the world's first open-source engine that supports both custom transformation logic and incremental updates specialized for data indexing. Users declare the transformation, CocoIndex creates & maintains an index, and keeps the derived index up to date based on source update, with minimal computation and changes. It provides a Python library for data indexing with features like text embedding, code embedding, PDF parsing, and more. The tool is designed to simplify the process of indexing data for semantic search and structured information extraction.

SimAI

SimAI is the industry's first full-stack, high-precision simulator for AI large-scale training. It provides detailed modeling and simulation of the entire LLM training process, encompassing framework, collective communication, network layers, and more. This comprehensive approach offers end-to-end performance data, enabling researchers to analyze training process details, evaluate time consumption of AI tasks under specific conditions, and assess performance gains from various algorithmic optimizations.

vscode-unify-chat-provider

The 'vscode-unify-chat-provider' repository is a tool that integrates multiple LLM API providers into VS Code's GitHub Copilot Chat using the Language Model API. It offers free tier access to mainstream models, perfect compatibility with major LLM API formats, deep adaptation to API features, best performance with built-in parameters, out-of-the-box configuration, import/export support, great UX, and one-click use of various models. The tool simplifies model setup, migration, and configuration for users, providing a seamless experience within VS Code for utilizing different language models.

For similar tasks

nncf

Neural Network Compression Framework (NNCF) provides a suite of post-training and training-time algorithms for optimizing inference of neural networks in OpenVINO™ with a minimal accuracy drop. It is designed to work with models from PyTorch, TorchFX, TensorFlow, ONNX, and OpenVINO™. NNCF offers samples demonstrating compression algorithms for various use cases and models, with the ability to add different compression algorithms easily. It supports GPU-accelerated layers, distributed training, and seamless combination of pruning, sparsity, and quantization algorithms. NNCF allows exporting compressed models to ONNX or TensorFlow formats for use with OpenVINO™ toolkit, and supports Accuracy-Aware model training pipelines via Adaptive Compression Level Training and Early Exit Training.

ai-algorithms

This repository is a work in progress that contains first-principle implementations of groundbreaking AI algorithms using various deep learning frameworks. Each implementation is accompanied by supporting research papers, aiming to provide comprehensive educational resources for understanding and implementing foundational AI algorithms from scratch.

edgen

Edgen is a local GenAI API server that serves as a drop-in replacement for OpenAI's API. It provides multi-endpoint support for chat completions and speech-to-text, is model agnostic, offers optimized inference, and features model caching. Built in Rust, Edgen is natively compiled for Windows, MacOS, and Linux, eliminating the need for Docker. It allows users to utilize GenAI locally on their devices for free and with data privacy. With features like session caching, GPU support, and support for various endpoints, Edgen offers a scalable, reliable, and cost-effective solution for running GenAI applications locally.

easydist

EasyDist is an automated parallelization system and infrastructure designed for multiple ecosystems. It offers usability by making parallelizing training or inference code effortless with just a single line of change. It ensures ecological compatibility by serving as a centralized source of truth for SPMD rules at the operator-level for various machine learning frameworks. EasyDist decouples auto-parallel algorithms from specific frameworks and IRs, allowing for the development and benchmarking of different auto-parallel algorithms in a flexible manner. The architecture includes MetaOp, MetaIR, and the ShardCombine Algorithm for SPMD sharding rules without manual annotations.

Awesome_LLM_System-PaperList

Since the emergence of ChatGPT in 2022, accelerating Large Language Models (LLMs) has become increasingly important. Here is a list of papers on LLM inference and serving.

LLM-Viewer

LLM-Viewer is a tool for visualizing Large Language Models (LLMs) and analyzing their performance on different hardware platforms. It enables network-wise analysis, considering factors such as peak memory consumption and total inference time. With LLM-Viewer, users can gain insight into LLM inference and performance optimization. The tool can be used in a web browser or through a command line interface (CLI) for easy configuration and visualization. The ongoing project aims to add features such as showing tensor shapes, expanding hardware platform compatibility, and supporting more LLMs with manual model graph configuration.

ServerlessLLM

ServerlessLLM is a fast, affordable, and easy-to-use library designed for multi-LLM serving, optimized for environments with limited GPU resources. It supports loading various leading LLM inference libraries, achieving fast load times, and reducing model switching overhead. The library facilitates easy deployment via Ray Cluster and Kubernetes, integrates with the OpenAI Query API, and is actively maintained by contributors.

sarathi-serve

Sarathi-Serve is the official OSDI'24 artifact submission for paper #444, focusing on 'Taming Throughput-Latency Tradeoff in LLM Inference'. It is a research prototype built on top of CUDA 12.1, designed to optimize throughput-latency tradeoff in Large Language Models (LLM) inference. The tool provides a Python environment for users to install and reproduce results from the associated experiments. Users can refer to specific folders for individual figures and are encouraged to cite the paper if they use the tool in their work.

For similar jobs

Qwen-TensorRT-LLM

Qwen-TensorRT-LLM is a project developed for the NVIDIA TensorRT Hackathon 2023, focusing on accelerating inference for the Qwen-7B-Chat model using TRT-LLM. The project offers FP16/BF16 support, INT8 and INT4 quantization options, Tensor Parallel for multi-GPU parallelism, a web demo built with Gradio, Triton API deployment for maximum throughput/concurrency, FastAPI integration for OpenAI requests, CLI interaction, and LangChain support. It supports models like qwen2, qwen, and qwen-vl for both base and chat models. The project also provides tutorials on Bilibili and blogs for adapting Qwen models in NVIDIA TensorRT-LLM, along with hardware requirements and quick start guides for different model types and quantization methods.

dl_model_infer

This project is a C++ inference library for TensorRT models. It provides accelerated deployment examples for popular deep learning CV models and supports dynamic-batch image processing, inference, decoding, and NMS. The project has been updated with various models and provides tutorials for model export. It also includes a producer-consumer inference model for specific tasks. The project directory includes implementations for model inference applications, backend inference classes, post-processing, pre-processing, and object detection and tracking. Speed tests have been conducted on various models, and ONNX downloads are available for different models.

joliGEN

JoliGEN is an integrated framework for training custom generative AI image-to-image models. It implements GAN, Diffusion, and Consistency models for various image translation tasks, including domain and style adaptation with conservation of semantics. The tool is designed for real-world applications such as Controlled Image Generation, Augmented Reality, Dataset Smart Augmentation, and Synthetic to Real transforms. JoliGEN allows for fast and stable training with a REST API server for simplified deployment. It offers a wide range of options and parameters with detailed documentation available for models, dataset formats, and data augmentation.

ai-edge-torch

AI Edge Torch is a Python library that supports converting PyTorch models into a .tflite format for on-device applications on Android, iOS, and IoT devices. It offers broad CPU coverage with initial GPU and NPU support, closely integrating with PyTorch and providing good coverage of Core ATen operators. The library includes a PyTorch converter for model conversion and a Generative API for authoring mobile-optimized PyTorch Transformer models, enabling easy deployment of Large Language Models (LLMs) on mobile devices.

awesome-RK3588

RK3588 is a flagship 8K SoC by Rockchip, integrating Cortex-A76 and Cortex-A55 cores with a NEON coprocessor and an 8K video codec. This repository curates resources for developing with RK3588, including official resources, RKNN models, projects, development boards, documentation, tools, and sample code.

cl-waffe2

cl-waffe2 is an experimental deep learning framework in Common Lisp, providing fast, systematic, and customizable matrix operations, reverse mode tape-based Automatic Differentiation, and neural network model building and training features accelerated by a JIT Compiler. It offers abstraction layers, extensibility, inlining, graph-level optimization, visualization, debugging, systematic nodes, and symbolic differentiation. Users can easily write extensions and optimize their networks without overheads. The framework is designed to eliminate barriers between users and developers, allowing for easy customization and extension.

TensorRT-Model-Optimizer

The NVIDIA TensorRT Model Optimizer is a library designed to quantize and compress deep learning models for optimized inference on GPUs. It offers state-of-the-art model optimization techniques including quantization and sparsity to reduce inference costs for generative AI models. Users can easily stack different optimization techniques to produce quantized checkpoints from torch or ONNX models. The quantized checkpoints are ready for deployment in inference frameworks like TensorRT-LLM or TensorRT, with planned integrations for NVIDIA NeMo and Megatron-LM. The tool also supports 8-bit quantization with Stable Diffusion for enterprise users on NVIDIA NIM. Model Optimizer is available for free on NVIDIA PyPI, and this repository serves as a platform for sharing examples, GPU-optimized recipes, and collecting community feedback.

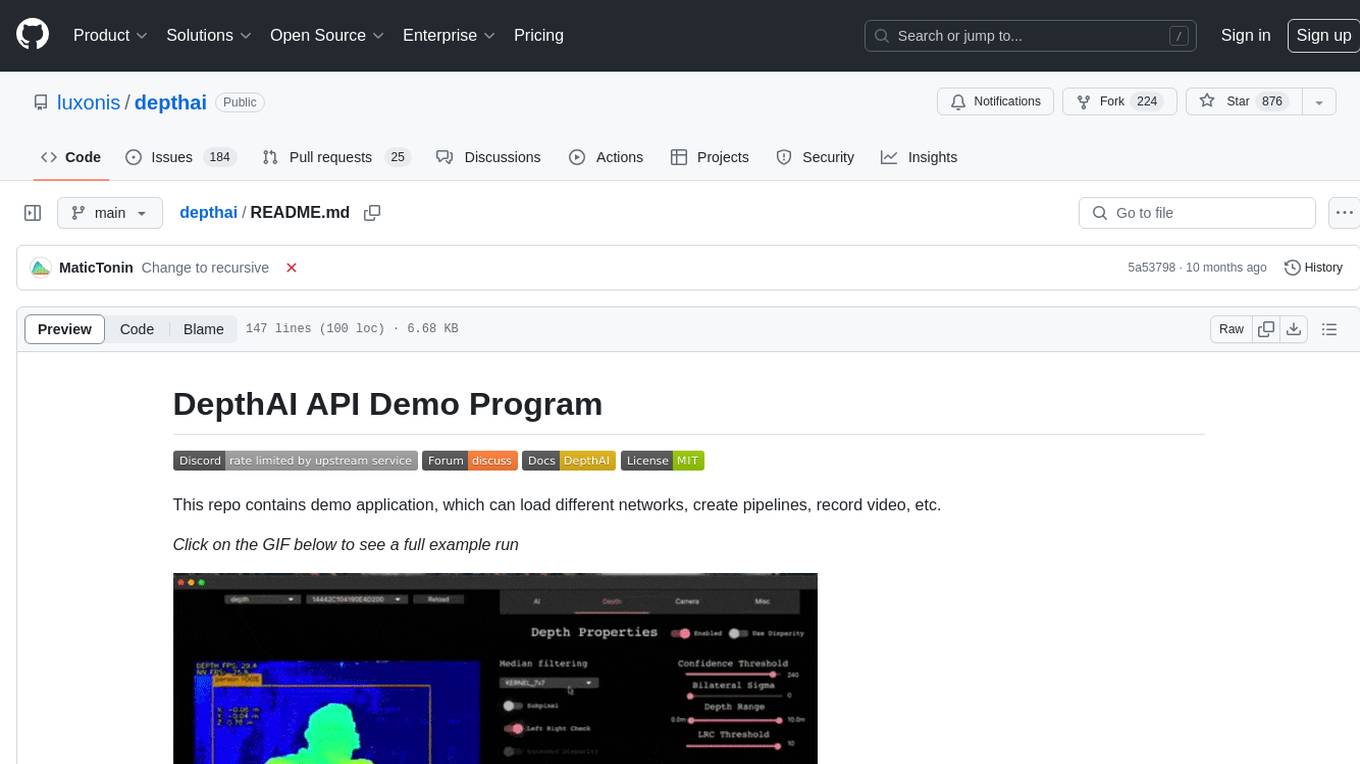

depthai

This repository contains a demo application for DepthAI, a tool that can load different networks, create pipelines, record video, and more. It provides documentation for installation and usage, including running programs through Docker. Users can explore DepthAI features via command line arguments or a clickable QT interface. Supported models include various AI models for tasks like face detection, human pose estimation, and object detection. The tool collects anonymous usage statistics by default, which can be disabled. Users can report issues to the development team for support and troubleshooting.