jido

🤖 Autonomous agent framework for Elixir. Built for distributed, autonomous behavior and dynamic workflows.

Stars: 900

Jido is a toolkit for building autonomous, distributed agent systems in Elixir. It provides the foundation for creating smart, composable workflows that can evolve and respond to their environment. Geared towards Agent builders, it contains core state primitives, composable actions, agent data structures, real-time sensors, signal system, skills, and testing tools. Jido is designed for multi-node Elixir clusters and offers rich helpers for unit and property-based testing.

README:

Pure functional agents and OTP runtime for building autonomous multi-agent workflows in Elixir.

The name "Jido" (自動) comes from the Japanese word meaning "automatic" or "automated", where 自 (ji) means "self" and 動 (dō) means "movement".

Learn more about Jido at agentjido.xyz.

With Jido, your agents are immutable data structures with a single command function:

    defmodule MyAgent do
      use Jido.Agent,
        name: "my_agent",
        description: "My custom agent",
        schema: [
          count: [type: :integer, default: 0]
        ]
    end

    {agent, directives} = MyAgent.cmd(agent, action)

State changes are pure data transformations; side effects are described as directives and executed by an OTP runtime. You get deterministic agent logic, testability without processes, and a clear path to running those agents in production.
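To make the shape of that contract concrete, here is a minimal plain-Elixir sketch that does not use Jido at all. The module `Sketch.Counter`, the `{:increment, amount}` action tuple, and the `{:emit, ...}` directive tuple are hypothetical stand-ins, chosen only to show state changes returned as data and effects returned as descriptions.

```elixir
defmodule Sketch.Counter do
  # Hypothetical stand-in for an agent: a plain struct, no process involved.
  defstruct count: 0

  # cmd/2 takes the current agent and an action and returns
  # {updated_agent, directives}. Nothing is sent, logged, or stored here.
  def cmd(%__MODULE__{} = agent, {:increment, amount}) when is_integer(amount) do
    updated = %{agent | count: agent.count + amount}
    # The directive only describes an effect; a runtime would perform it later.
    directives = [{:emit, "counter.incremented", %{count: updated.count}}]
    {updated, directives}
  end
end

{agent, directives} = Sketch.Counter.cmd(%Sketch.Counter{}, {:increment, 5})
IO.inspect(agent.count)
# => 5
IO.inspect(directives)
# => [{:emit, "counter.incremented", %{count: 5}}]
```

Because `cmd/2` here touches no process or I/O, it can be exercised in a unit test with nothing more than a struct and an action.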

Jido is the core package of the Jido ecosystem. The ecosystem is built around the core Jido Agent behavior and offers several opt-in packages that extend it.

| Package | Description |
|---|---|
| req_llm | HTTP client for LLM APIs |
| jido_action | Composable, validated actions with AI tool integration |
| jido_signal | CloudEvents-based message envelope and supporting utilities for routing and pub/sub messaging |
| jido | Core agent framework with state management, directives, and runtime |
| jido_ai | AI/LLM integration for agents |

For demos and examples of what you can build with the Jido Ecosystem, see https://agentjido.xyz.

OTP primitives are excellent. You can build agent systems with raw GenServer. But when building multiple cooperating agents, you'll reinvent:

| Raw OTP | Jido Formalizes |
|---|---|
| Ad-hoc message shapes per GenServer | Signals as standard envelope |
| Business logic mixed in callbacks | Actions as reusable command pattern |
| Implicit effects scattered in code | Directives as typed effect descriptions |
| Custom child tracking per server | Built-in parent/child hierarchy |
| Process exit = completion | State-based completion semantics |

Jido isn't "better GenServer" - it's a formalized agent pattern built on GenServer.
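For contrast, the sketch below is a counter written as a raw GenServer, using only the standard library. The module name `Sketch.RawCounter` and its message shape are hypothetical; the point is only to illustrate the left-hand column of the table, where the message format is ad hoc and the business logic and side effect share one callback.

```elixir
defmodule Sketch.RawCounter do
  use GenServer

  # Client API
  def start_link(initial), do: GenServer.start_link(__MODULE__, initial)
  def increment(pid, amount), do: GenServer.call(pid, {:increment, amount})

  # Server callbacks
  @impl true
  def init(initial), do: {:ok, %{count: initial}}

  @impl true
  def handle_call({:increment, amount}, _from, state) do
    # The state change and the side effect (printing) live in the same callback.
    new_state = %{state | count: state.count + amount}
    IO.puts("count is now #{new_state.count}")
    {:reply, new_state.count, new_state}
  end
end

{:ok, pid} = Sketch.RawCounter.start_link(0)
Sketch.RawCounter.increment(pid, 5)
```

Testing this logic requires starting a process and capturing output, which is the friction the pure `cmd/2` pattern is meant to avoid.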

- Pure functional agent design inspired by Elm/Redux

- `cmd/2` as the core operation: actions in, updated agent + directives out

- Schema-validated state with NimbleOptions or Zoi

- Actions transform state; directives describe external effects

- Built-in directives: Emit, Spawn, SpawnAgent, StopChild, Schedule, Stop

- Protocol-based extensibility for custom directives

- GenServer-based AgentServer for production deployment

- Parent-child agent hierarchies with lifecycle management

- Signal routing with configurable strategies

- Instance-scoped supervision for multi-tenant deployments

- Reusable capability modules that extend agents

- State isolation per plugin with automatic schema merging

- Lifecycle hooks for initialization and signal handling

- Direct execution for simple workflows

- FSM (Finite State Machine) strategy for state-driven workflows

- Extensible strategy protocol for custom execution patterns

- Multi-agent workflows with configurable strategies

- Plan-based orchestration for complex workflows

- Extensible strategy protocol for custom execution patterns
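The FSM item in the list above can be pictured with a small transition table. This is a plain-Elixir sketch of the general finite state machine idea, not Jido's FSM strategy; the module `Sketch.OrderFsm` and its states and events are hypothetical.

```elixir
defmodule Sketch.OrderFsm do
  # Hypothetical transition table: {current_state, event} => next_state.
  @transitions %{
    {:idle, :start} => :processing,
    {:processing, :finish} => :completed,
    {:processing, :fail} => :failed
  }

  # Returns {:ok, next_state} for a permitted transition,
  # otherwise an error tuple naming the rejected pair.
  def transition(state, event) do
    case Map.fetch(@transitions, {state, event}) do
      {:ok, next} -> {:ok, next}
      :error -> {:error, {:invalid_transition, state, event}}
    end
  end
end

IO.inspect(Sketch.OrderFsm.transition(:idle, :start))
# => {:ok, :processing}
IO.inspect(Sketch.OrderFsm.transition(:completed, :start))
# => {:error, {:invalid_transition, :completed, :start}}
```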

The fastest way to get started is with Igniter:

    mix igniter.install jido

This automatically:

- Adds Jido to your dependencies

- Creates configuration in `config/config.exs`

- Adds `Jido.Bus.InMemory` to your supervision tree

Generate an example agent to get started:

    mix igniter.install jido --example

To install manually instead, add `jido` to your list of dependencies in `mix.exs`:

    def deps do
      [
        {:jido, "~> 2.0"}
      ]
    end

Then define a Jido instance module and add it to your supervision tree:

    # In lib/my_app/jido.ex
    defmodule MyApp.Jido do
      use Jido, otp_app: :my_app
    end

    # In config/config.exs
    config :my_app, MyApp.Jido,
      max_tasks: 1000,
      agent_pools: []

    # In your application.ex
    children = [
      MyApp.Jido
    ]

    Supervisor.start_link(children, strategy: :one_for_one)

Define an agent:

    defmodule MyApp.CounterAgent do
      use Jido.Agent,
        name: "counter",
        description: "A simple counter agent",
        schema: [
          count: [type: :integer, default: 0]
        ]
    end

Define an action:

    defmodule MyApp.Actions.Increment do
      use Jido.Action,
        name: "increment",
        description: "Increments the counter by a given amount",
        schema: [
          amount: [type: :integer, default: 1]
        ]

      def run(params, context) do
        current = context.state[:count] || 0
        {:ok, %{count: current + params.amount}}
      end
    end

Execute a command against the agent:

    # Create an agent
    agent = MyApp.CounterAgent.new()

    # Execute an action - returns updated agent + directives
    {agent, directives} = MyApp.CounterAgent.cmd(agent, {MyApp.Actions.Increment, %{amount: 5}})

    # Check the state
    agent.state.count
    # => 5

Run the agent under the runtime:

    # Start the agent server
    {:ok, pid} = MyApp.Jido.start_agent(MyApp.CounterAgent, id: "counter-1")

    # Send signals to the running agent (synchronous)
    {:ok, agent} = Jido.AgentServer.call(pid, Jido.Signal.new!("increment", %{amount: 10}, source: "/user"))

    # Look up the agent by ID
    pid = MyApp.Jido.whereis("counter-1")

    # List all running agents
    agents = MyApp.Jido.list_agents()

The fundamental operation in Jido:

    {agent, directives} = MyAgent.cmd(agent, action)

Key invariants:

- The returned `agent` is always complete - no "apply directives" step needed

- `directives` describe external effects only - they never modify agent state

- `cmd/2` is a pure function - same inputs always produce same outputs
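One way to see these invariants at work is to thread an agent through several actions and check that a replay gives the identical result. The sketch below is plain Elixir and does not use Jido; `Sketch.Tally`, its `{:add, n}` action, and the `{:log, ...}` directive are hypothetical names.

```elixir
defmodule Sketch.Tally do
  defstruct total: 0

  # Pure command function: no process, clock, or I/O is consulted.
  def cmd(%__MODULE__{} = agent, {:add, n}) when is_integer(n) do
    {%{agent | total: agent.total + n}, [{:log, "added #{n}"}]}
  end

  # Threads the agent through a list of actions and collects directives in order.
  # The agent returned at each step is already complete, so it feeds the next step directly.
  def run_all(agent, actions) do
    Enum.reduce(actions, {agent, []}, fn action, {acc, directives} ->
      {next, new_directives} = cmd(acc, action)
      {next, directives ++ new_directives}
    end)
  end
end

actions = [{:add, 2}, {:add, 3}]
first = Sketch.Tally.run_all(%Sketch.Tally{}, actions)
second = Sketch.Tally.run_all(%Sketch.Tally{}, actions)

# Same inputs, same outputs: the two runs are equal.
IO.inspect(first == second)
# => true
```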

| Actions | Directives | State Operations |
|---|---|---|
| Transform state, may perform side effects | Describe external effects | Describe internal state changes |
| Executed by `cmd/2`, update `agent.state` | Bare structs emitted by agents | Applied by strategy layer |
| Can call APIs, read files, query databases | Runtime (AgentServer) interprets them | Never leave the strategy |

State operations are internal state transitions handled by the strategy layer during cmd/2. Unlike directives, they never reach the runtime.

| StateOp | Purpose |
|---|---|
| `SetState` | Deep merge attributes into state |
| `ReplaceState` | Replace state wholesale |
| `DeleteKeys` | Remove top-level keys |
| `SetPath` | Set value at nested path |
| `DeletePath` | Delete value at nested path |
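The five operations above map closely onto ordinary map manipulation. The following is a minimal sketch using only the Elixir standard library; the tagged tuples and the `Sketch.StateOps` module are hypothetical stand-ins, not Jido's structs or its implementation.

```elixir
defmodule Sketch.StateOps do
  # Deep merge: nested maps are merged key by key, other values are overwritten.
  def apply_op(state, {:set_state, attrs}), do: deep_merge(state, attrs)
  # Replace the whole state.
  def apply_op(_state, {:replace_state, new_state}), do: new_state
  # Remove top-level keys.
  def apply_op(state, {:delete_keys, keys}), do: Map.drop(state, keys)
  # Set a value at a nested path; missing intermediate maps are created.
  def apply_op(state, {:set_path, path, value}),
    do: put_in(state, Enum.map(path, &Access.key(&1, %{})), value)
  # Delete the value at a nested path.
  def apply_op(state, {:delete_path, path}) do
    {_removed, rest} = pop_in(state, path)
    rest
  end

  defp deep_merge(left, right) do
    Map.merge(left, right, fn
      _key, %{} = l, %{} = r -> deep_merge(l, r)
      _key, _l, r -> r
    end)
  end
end

ops = [
  {:set_state, %{user: %{name: "Ada"}}},
  {:set_path, [:user, :visits], 1},
  {:delete_keys, [:tmp]}
]

# Apply the operations in order, starting from an initial state.
state =
  Enum.reduce(ops, %{tmp: true, user: %{id: 7}}, fn op, acc ->
    Sketch.StateOps.apply_op(acc, op)
  end)

IO.inspect(state == %{user: %{id: 7, name: "Ada", visits: 1}})
# => true
```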

| Directive | Purpose |
|---|---|
| `Emit` | Dispatch a signal via configured adapters |
| `Error` | Signal an error from `cmd/2` |
| `Spawn` | Spawn a generic BEAM child process |
| `SpawnAgent` | Spawn a child Jido agent with hierarchy tracking |
| `StopChild` | Gracefully stop a tracked child agent |
| `Schedule` | Schedule a delayed message |
| `Stop` | Stop the agent process |
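The split between describing an effect and performing it can be sketched with structs and a protocol. Everything below is hypothetical and uses only the standard library: `Sketch.Emit`, `Sketch.Schedule`, and the `Sketch.Exec` protocol are illustrative names, not Jido's directive structs or its runtime, and delivery is reduced to `send/2` and `Process.send_after/3`.

```elixir
defmodule Sketch.Emit do
  # Hypothetical directive: deliver `message` to the process `to`.
  defstruct [:to, :message]
end

defmodule Sketch.Schedule do
  # Hypothetical directive: deliver `message` to `to` after `delay_ms` milliseconds.
  defstruct [:to, :message, :delay_ms]
end

defprotocol Sketch.Exec do
  @doc "Performs the effect described by a directive struct."
  def run(directive)
end

defimpl Sketch.Exec, for: Sketch.Emit do
  def run(%{to: to, message: message}), do: send(to, message)
end

defimpl Sketch.Exec, for: Sketch.Schedule do
  def run(%{to: to, message: message, delay_ms: delay_ms}),
    do: Process.send_after(to, message, delay_ms)
end

# The directives are inert data until something interprets them.
directives = [
  %Sketch.Emit{to: self(), message: :emitted},
  %Sketch.Schedule{to: self(), message: :scheduled, delay_ms: 10}
]

Enum.each(directives, &Sketch.Exec.run/1)
```

Adding a new kind of effect in this sketch means defining another struct and another `defimpl`, without touching the code that produces directives.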

Start here:

- Quick Start - Build your first agent in 5 minutes

- Core Loop - Understand the mental model

Guides:

- Building Agents - Agent definitions and state management

- Signals & Routing - Signal-based communication

- Agent Directives - Effect descriptions for the runtime

- Runtime and AgentServer - Process-based agent execution

- Persistence & Storage - Hibernate, thaw, and InstanceManager lifecycle

- Scheduling - Declarative and dynamic cron scheduling

- Plugins - Composable capability bundles

- Strategies - Execution strategies (Direct, FSM)

Advanced:

- FSM Strategy Deep Dive - State machine workflows

- Worker Pools - Pre-warmed agent pools for throughput

- Testing Agents - Testing patterns and best practices

API Reference: hexdocs.pm/jido

- Elixir 1.17+

- Erlang/OTP 26+

Run the test suite and quality checks with:

    mix test
    mix quality  # Runs formatter, dialyzer, and credo

We welcome contributions! Please see our Contributing Guide for details on:

- Setting up your development environment

- Running tests and quality checks

- Submitting pull requests

- Code style guidelines

Copyright 2024-2025 Mike Hostetler

Licensed under the Apache License, Version 2.0. See LICENSE for details.

- Documentation: https://hexdocs.pm/jido

- GitHub: https://github.com/agentjido/jido

- AgentJido: https://agentjido.xyz

- Jido Workbench: https://github.com/agentjido/jido_workbench


factorio-learning-environment

Factorio Learning Environment is an open source framework designed for developing and evaluating LLM agents in the game of Factorio. It provides two settings: Lab-play with structured tasks and Open-play for building large factories. Results show limitations in spatial reasoning and automation strategies. Agents interact with the environment through code synthesis, observation, action, and feedback. Tools are provided for game actions and state representation. Agents operate in episodes with observation, planning, and action execution. Tasks specify agent goals and are implemented in JSON files. The project structure includes directories for agents, environment, cluster, data, docs, eval, and more. A database is used for checkpointing agent steps. Benchmarks show performance metrics for different configurations.

open-responses

OpenResponses API provides enterprise-grade AI capabilities through a powerful API, simplifying development and deployment while ensuring complete data control. It offers automated tracing, integrated RAG for contextual information retrieval, pre-built tool integrations, self-hosted architecture, and an OpenAI-compatible interface. The toolkit addresses development challenges like feature gaps and integration complexity, as well as operational concerns such as data privacy and operational control. Engineering teams can benefit from improved productivity, production readiness, compliance confidence, and simplified architecture by choosing OpenResponses.

skyvern

Skyvern automates browser-based workflows using LLMs and computer vision. It provides a simple API endpoint to fully automate manual workflows, replacing brittle or unreliable automation solutions. Traditional approaches to browser automations required writing custom scripts for websites, often relying on DOM parsing and XPath-based interactions which would break whenever the website layouts changed. Instead of only relying on code-defined XPath interactions, Skyvern adds computer vision and LLMs to the mix to parse items in the viewport in real-time, create a plan for interaction and interact with them. This approach gives us a few advantages: 1. Skyvern can operate on websites it’s never seen before, as it’s able to map visual elements to actions necessary to complete a workflow, without any customized code 2. Skyvern is resistant to website layout changes, as there are no pre-determined XPaths or other selectors our system is looking for while trying to navigate 3. Skyvern leverages LLMs to reason through interactions to ensure we can cover complex situations. Examples include: 1. If you wanted to get an auto insurance quote from Geico, the answer to a common question “Were you eligible to drive at 18?” could be inferred from the driver receiving their license at age 16 2. If you were doing competitor analysis, it’s understanding that an Arnold Palmer 22 oz can at 7/11 is almost definitely the same product as a 23 oz can at Gopuff (even though the sizes are slightly different, which could be a rounding error!) Want to see examples of Skyvern in action? Jump to #real-world-examples-of- skyvern

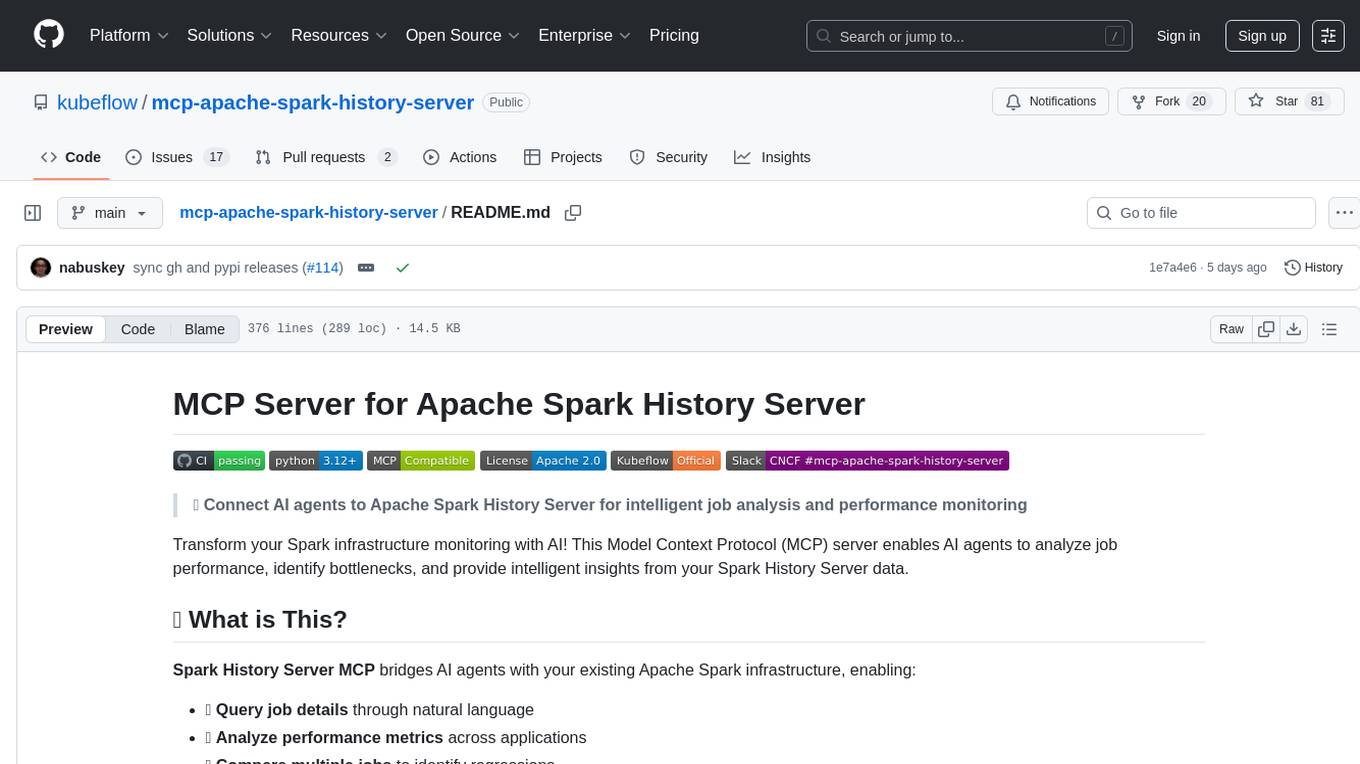

mcp-apache-spark-history-server

The MCP Server for Apache Spark History Server is a tool that connects AI agents to Apache Spark History Server for intelligent job analysis and performance monitoring. It enables AI agents to analyze job performance, identify bottlenecks, and provide insights from Spark History Server data. The server bridges AI agents with existing Apache Spark infrastructure, allowing users to query job details, analyze performance metrics, compare multiple jobs, investigate failures, and generate insights from historical execution data.

evalchemy

Evalchemy is a unified and easy-to-use toolkit for evaluating language models, focusing on post-trained models. It integrates multiple existing benchmarks such as RepoBench, AlpacaEval, and ZeroEval. Key features include unified installation, parallel evaluation, simplified usage, and results management. Users can run various benchmarks with a consistent command-line interface and track results locally or integrate with a database for systematic tracking and leaderboard submission.

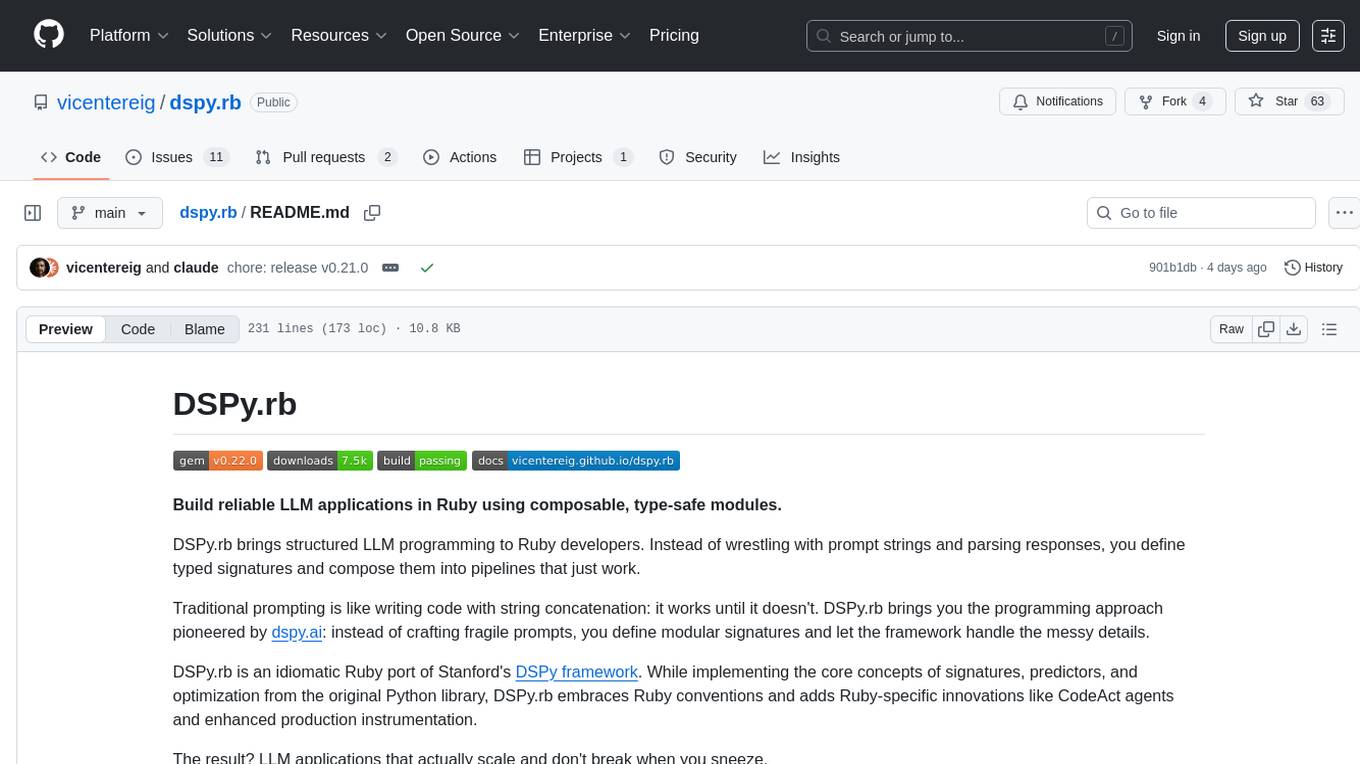

dspy.rb

DSPy.rb is a Ruby framework for building reliable LLM applications using composable, type-safe modules. It enables developers to define typed signatures and compose them into pipelines, offering a more structured approach compared to traditional prompting. The framework embraces Ruby conventions and adds innovations like CodeAct agents and enhanced production instrumentation, resulting in scalable LLM applications that are robust and efficient. DSPy.rb is actively developed, with a focus on stability and real-world feedback through the 0.x series before reaching a stable v1.0 API.

pr-pilot

PR Pilot is an AI-powered tool designed to assist users in their daily workflow by delegating routine work to AI with confidence and predictability. It integrates seamlessly with popular development tools and allows users to interact with it through a Command-Line Interface, Python SDK, REST API, and Smart Workflows. Users can automate tasks such as generating PR titles and descriptions, summarizing and posting issues, and formatting README files. The tool aims to save time and enhance productivity by providing AI-powered solutions for common development tasks.

sec-code-bench

SecCodeBench is a benchmark suite for evaluating the security of AI-generated code, specifically designed for modern Agentic Coding Tools. It addresses challenges in existing security benchmarks by ensuring test case quality, employing precise evaluation methods, and covering Agentic Coding Tools. The suite includes 98 test cases across 5 programming languages, focusing on functionality-first evaluation and dynamic execution-based validation. It offers a highly extensible testing framework for end-to-end automated evaluation of agentic coding tools, generating comprehensive reports and logs for analysis and improvement.

EasySteer

EasySteer is a unified framework built on vLLM for high-performance LLM steering. It offers fast, flexible, and easy-to-use steering capabilities with features like high performance, modular design, fine-grained control, pre-computed steering vectors, and an interactive demo. Users can interactively configure models, adjust steering parameters, and test interventions without writing code. The tool supports OpenAI-compatible APIs and provides modules for hidden states extraction, analysis-based steering, learning-based steering, and a frontend web interface for interactive steering and ReFT interventions.

Consistency_LLM

Consistency Large Language Models (CLLMs) is a family of efficient parallel decoders that reduce inference latency by efficiently decoding multiple tokens in parallel. The models are trained to perform efficient Jacobi decoding, mapping any randomly initialized token sequence to the same result as auto-regressive decoding in as few steps as possible. CLLMs have shown significant improvements in generation speed on various tasks, achieving up to 3.4 times faster generation. The tool provides a seamless integration with other techniques for efficient Large Language Model (LLM) inference, without the need for draft models or architectural modifications.

manim-generator

The 'manim-generator' repository focuses on automatic video generation using an agentic LLM flow combined with the manim python library. It experiments with automated Manim video creation by delegating code drafting and validation to specific roles, reducing render failures, and improving visual consistency through iterative feedback and vision inputs. The project also includes 'Manim Bench' for comparing AI models on full Manim video generation.

call-center-ai

Call Center AI is an AI-powered call center solution leveraging Azure and OpenAI GPT. It allows for AI agent-initiated phone calls or direct calls to the bot from a configured phone number. The bot is customizable for various industries like insurance, IT support, and customer service, with features such as accessing claim information, conversation history, language change, SMS sending, and more. The project is a proof of concept showcasing the integration of Azure Communication Services, Azure Cognitive Services, and Azure OpenAI for an automated call center solution.

curator

Bespoke Curator is an open-source tool for data curation and structured data extraction. It provides a Python library for generating synthetic data at scale, with features like programmability, performance optimization, caching, and integration with HuggingFace Datasets. The tool includes a Curator Viewer for dataset visualization and offers a rich set of functionalities for creating and refining data generation strategies.

AutoGPTQ

AutoGPTQ is an easy-to-use LLM quantization package with user-friendly APIs, based on GPTQ algorithm (weight-only quantization). It provides a simple and efficient way to quantize large language models (LLMs) to reduce their size and computational cost while maintaining their performance. AutoGPTQ supports a wide range of LLM models, including GPT-2, GPT-J, OPT, and BLOOM. It also supports various evaluation tasks, such as language modeling, sequence classification, and text summarization. With AutoGPTQ, users can easily quantize their LLM models and deploy them on resource-constrained devices, such as mobile phones and embedded systems.

CrackSQL

CrackSQL is a powerful SQL dialect translation tool that integrates rule-based strategies with large language models (LLMs) for high accuracy. It enables seamless conversion between dialects (e.g., PostgreSQL → MySQL) with flexible access through Python API, command line, and web interface. The tool supports extensive dialect compatibility, precision & advanced processing, and versatile access & integration. It offers three modes for dialect translation and demonstrates high translation accuracy over collected benchmarks. Users can deploy CrackSQL using PyPI package installation or source code installation methods. The tool can be extended to support additional syntax, new dialects, and improve translation efficiency. The project is actively maintained and welcomes contributions from the community.

For similar tasks

OpenAGI

OpenAGI is an AI agent creation package designed for researchers and developers to create intelligent agents using advanced machine learning techniques. The package provides tools and resources for building and training AI models, enabling users to develop sophisticated AI applications. With a focus on collaboration and community engagement, OpenAGI aims to facilitate the integration of AI technologies into various domains, fostering innovation and knowledge sharing among experts and enthusiasts.

GPTSwarm

GPTSwarm is a graph-based framework for LLM-based agents that enables the creation of LLM-based agents from graphs and facilitates the customized and automatic self-organization of agent swarms with self-improvement capabilities. The library includes components for domain-specific operations, graph-related functions, LLM backend selection, memory management, and optimization algorithms to enhance agent performance and swarm efficiency. Users can quickly run predefined swarms or utilize tools like the file analyzer. GPTSwarm supports local LM inference via LM Studio, allowing users to run with a local LLM model. The framework has been accepted by ICML2024 and offers advanced features for experimentation and customization.

AgentForge

AgentForge is a low-code framework tailored for the rapid development, testing, and iteration of AI-powered autonomous agents and Cognitive Architectures. It is compatible with a range of LLM models and offers flexibility to run different models for different agents based on specific needs. The framework is designed for seamless extensibility and database-flexibility, making it an ideal playground for various AI projects. AgentForge is a beta-testing ground and future-proof hub for crafting intelligent, model-agnostic autonomous agents.

atomic_agents

Atomic Agents is a modular and extensible framework designed for creating powerful applications. It follows the principles of Atomic Design, emphasizing small and single-purpose components. Leveraging Pydantic for data validation and serialization, the framework offers a set of tools and agents that can be combined to build AI applications. It depends on the Instructor package and supports various APIs like OpenAI, Cohere, Anthropic, and Gemini. Atomic Agents is suitable for developers looking to create AI agents with a focus on modularity and flexibility.

LongRoPE

LongRoPE is a method to extend the context window of large language models (LLMs) beyond 2 million tokens. It identifies and exploits non-uniformities in positional embeddings to enable 8x context extension without fine-tuning. The method utilizes a progressive extension strategy with 256k fine-tuning to reach a 2048k context. It adjusts embeddings for shorter contexts to maintain performance within the original window size. LongRoPE has been shown to be effective in maintaining performance across various tasks from 4k to 2048k context lengths.

ax

Ax is a Typescript library that allows users to build intelligent agents inspired by agentic workflows and the Stanford DSP paper. It seamlessly integrates with multiple Large Language Models (LLMs) and VectorDBs to create RAG pipelines or collaborative agents capable of solving complex problems. The library offers advanced features such as streaming validation, multi-modal DSP, and automatic prompt tuning using optimizers. Users can easily convert documents of any format to text, perform smart chunking, embedding, and querying, and ensure output validation while streaming. Ax is production-ready, written in Typescript, and has zero dependencies.

Awesome-AI-Agents

Awesome-AI-Agents is a curated list of projects, frameworks, benchmarks, platforms, and related resources focused on autonomous AI agents powered by Large Language Models (LLMs). The repository showcases a wide range of applications, multi-agent task solver projects, agent society simulations, and advanced components for building and customizing AI agents. It also includes frameworks for orchestrating role-playing, evaluating LLM-as-Agent performance, and connecting LLMs with real-world applications through platforms and APIs. Additionally, the repository features surveys, paper lists, and blogs related to LLM-based autonomous agents, making it a valuable resource for researchers, developers, and enthusiasts in the field of AI.

CodeFuse-muAgent

CodeFuse-muAgent is a Multi-Agent framework designed to streamline Standard Operating Procedure (SOP) orchestration for agents. It integrates toolkits, code libraries, knowledge bases, and sandbox environments for rapid construction of complex Multi-Agent interactive applications. The framework enables efficient execution and handling of multi-layered and multi-dimensional tasks.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.