positronic

Python-native stack for real-life ML robotics

Stars: 60

Positronic is an end-to-end toolkit for building ML-driven robotics systems, aiming to simplify data collection, messy data handling, and complex deployment in the field of robotics. It provides a Python-native stack for real-life ML robotics, covering hardware integration, dataset curation, policy training, deployment, and monitoring. The toolkit is designed to make professional-grade ML robotics approachable, without the need for ROS. Positronic offers solutions for data ops, hardware drivers, unified inference API, and iteration workflows, enabling teams to focus on developing manipulation systems for robots.

README:

AI promises to transform robotics: teach robots through demonstrations instead of code. ML-driven approaches can unlock capabilities traditional analytical control can't reach.

The field is early. The ecosystem lacks dedicated instruments to make development simple, repeatable, and accessible:

- Data collection is expensive: Hardware integration, teleoperation setup, dataset curation all require specialized expertise

- Data is messy: Multi-rate sensors, format fragmentation, re-recording for each framework, datasets thrown away when you try different state/action representations

- Deployment is complex: Vendor-specific APIs, hardware compatibility issues, monitoring infrastructure from scratch

Positronic solves these operational challenges so teams building manipulation systems can focus on what their robots should do, not how to make the infrastructure work.

Positronic is an end-to-end toolkit for building ML-driven robotics systems.

It covers the full lifecycle: bring new hardware online, capture and curate datasets, train and evaluate policies, deploy inference, monitor performance, and iterate when behaviour drifts.

Every subsystem is implemented in plain Python. No ROS required. Compatible with LeRobot training and foundation models like OpenPI and GR00T.

Our goal is to make professional-grade ML robotics approachable. Join the conversation on the Positronic Discord to share feedback, showcase projects, and get help from the community.

Positronic is under heavy development and in alpha stage. APIs, interfaces, and workflows may change significantly between releases.

Positronic builds on the robotics ML ecosystem:

- LeRobot/HuggingFace for training scripts and workflows

- MuJoCo for physics simulation

- Rerun.io for visualization

- Foundation model builders: Physical Intelligence and NVIDIA

We focus on what's missing: the plumbing, hardware integration, and operational lifecycle that production systems need.

The ecosystem provides: Training frameworks, foundation models, simulation engines, model research Positronic adds: Data ops, hardware drivers, unified inference API, iteration workflows, deployment infrastructure

Problem solved: Stop re-recording datasets for each framework AND stop throwing away datasets when you want different state/action formats.

The Positronic dataset library provides raw data storage and a unified API for plumbing, preprocessing, and backward compatibility. Codecs apply lazy transforms to convert one dataset into LeRobot, GR00T, or OpenPI format without re-recording.

Try different state representations (joint space vs end-effector space), action formats (absolute vs delta), observation encodings, all from the same raw data. Immutable storage, composable transforms, infinite uses.

Problem solved: Vendor lock-in and API fragmentation.

The offboard inference system provides a single WebSocket protocol (v1) across all vendors. The RemotePolicy client works interchangeably with LeRobot, GR00T, and OpenPI servers.

Built-in status streaming handles long model loads (120-300s) gracefully. Swap models without changing hardware code.

pimm wires sensors, controllers, inference, and GUIs without ROS launch files or bespoke DSLs. Control loops stay testable and readable. See the Pimm README for details.

Positronic supports state-of-the-art foundation models with first-class workflows:

| Model | Capability | Training | Inference | Best For |

|---|---|---|---|---|

| OpenPI (π₀.₅) | Most capable, generalist | Capable GPU (~78GB, LoRA) | Capable GPU (~62GB) | Complex multi-task manipulation |

| GR00T | Generalist robot policy | Capable GPU (~50GB) | Smaller GPU (~7.5GB) | Logistics and industry applications |

| LeRobot ACT | Single-task, efficient | Consumer GPU | Consumer GPU | Specific manipulation tasks |

Recommendation: Start with ACT if you want something quick and low-cost. Progress to GR00T or OpenPI if you need more capable models. Positronic makes switching easy.

Our goal is to democratize ML/AI in robotics. You shouldn't be locked to a single vendor or architecture.

Positronic's plug-and-play structure means:

- Same dataset format — Record once, train on any model

- Same inference API — Swap models without changing hardware code

- Easy experimentation — Try all models with your data, pick what works best

- Future-proof — We'll keep adding foundation models as they emerge

See Model Selection Guide for detailed comparison and decision criteria.

Clone the repository and set up a local uv environment.

Prerequisites: Python 3.11, uv, libturbojpeg, and FFmpeg

sudo apt install libturbojpeg ffmpeg portaudio19-dev # Linux

brew install jpeg-turbo ffmpeg # macOS

git clone [email protected]:Positronic-Robotics/positronic.git

cd positronic

uv venv -p 3.11 # optional but keeps the interpreter isolated

source .venv/bin/activate # activate the venv if you created one

uv sync --frozen --extra dev # install core + dev toolingInstall hardware extras only when you need physical robot drivers (Linux only):

uv sync --frozen --extra hardwareAfter installation, the following command-line scripts will be available:

-

positronic-data-collection: Collect demonstrations in simulation or on hardware -

positronic-server: Browse and inspect datasets -

positronic-to-lerobot: Convert datasets to model format -

positronic-inference: Run trained policies in simulation or on hardware

All commands work both inside an activated virtual environment and with uv run prefix (e.g., uv run positronic-server).

For training and inference servers, use vendor-specific Docker services (see Training Workflow).

uv run positronic-data-collection sim \

--output_dir=~/datasets/stack_cubes_raw \

--sound=None --webxr=.iphoneOpens MuJoCo simulation with phone-based teleoperation. Record demonstrations by moving your phone to control the robot arm and using the on-screen controls to open/close the gripper and start/stop recording.

Then browse your episodes:

uv run positronic-server --dataset.path=~/datasets/stack_cubes_raw --port=5001Visit http://localhost:5001 to view episodes. Continue to full workflow below.

The usual loop is: collect demonstrations → review and curate → train → validate and iterate.

Use the data collection script for both simulation and hardware captures.

Quick start in simulation:

uv run positronic-data-collection sim \

--output_dir=~/datasets/stack_cubes_raw \

--sound=None --webxr=.iphone --operator_position=.BACKLoads the MuJoCo scene, starts the DearPyGui UI, and records episodes into the local dataset.

Teleoperation:

- Phone (iPhone/Android) or VR headset (Oculus) control the robot in 6-DOF

- Browser shows AR interface with Track, Record, Reset buttons

- See Data Collection Guide for complete setup

Physical robots:

uv run positronic-data-collection real --output_dir=~/datasets/franka_kitchen

uv run positronic-data-collection so101 --output_dir=~/datasets/so101_runs

uv run positronic-data-collection droid --output_dir=~/datasets/droid_runsBrowse datasets with the positronic-server:

uv run positronic-server \

--dataset.path=~/datasets/stack_cubes_raw \

--port=5001Visit http://localhost:5001 to view episodes. The viewer is read-only for now: mark low-quality runs while watching, then rename or remove the corresponding episode directories manually.

To preview exactly what the training will see, pass the same codec configuration you'll use for conversion:

uv run positronic-server \

[email protected]_all \

--dataset.path=~/datasets/stack_cubes_raw \

[email protected] \

--port=5001Convert curated runs using a codec:

cd docker && docker compose run --rm positronic-to-lerobot convert \

--dataset.dataset=.local \

--dataset.dataset.path=~/datasets/stack_cubes_raw \

[email protected] \

--output_dir=~/datasets/lerobot/stack_cubes \

--task="pick up the green cube and place it on the red cube" \

--fps=30Train using vendor-specific workflows:

Training is handled through Docker services. Example with ACT (fastest baseline):

cd docker && docker compose run --rm lerobot-train \

--input_path=~/datasets/lerobot/stack_cubes \

--exp_name=stack_cubes_act \

--output_dir=~/checkpoints/lerobot/Progress to OpenPI or GR00T when you need more capable models. See:

Run trained policies through the inference script:

uv run positronic-inference sim \

[email protected]_absolute \

--policy.base.checkpoints_dir=~/checkpoints/openpi/<run_id> \

--driver.simulation_time=60 \

--driver.show_gui=True \

--output_dir=~/datasets/inference_logs/stack_cubes_pi0Remote inference (run policy on a different machine):

# On inference server:

cd docker && docker compose run --rm --service-ports lerobot-server \

--checkpoints_dir=~/checkpoints/lerobot/<run_id> \

[email protected]_absolute

# On robot:

uv run positronic-inference sim \

--policy=.remote \

--policy.host=<server-ip>Monitor performance, collect edge cases, and iterate. See Inference Guide for details.

Core Concepts:

- Dataset Library — Storage, codecs, transforms

- Pimm Runtime — Immediate-mode control systems

- Offboard Inference — Unified protocol

Model Workflows:

- OpenPI (π₀.₅) — Recommended for most tasks

- GR00T — NVIDIA's generalist policy

- LeRobot ACT — Single-task transformer

Guides:

Hardware:

- Drivers — Robot arms, cameras, grippers

- Hardware Configs — Franka, Kinova, SO101, DROID

Install development dependencies first:

uv sync --frozen --extra dev # install core + dev toolingInstall pre-commit hooks (one-time setup):

pre-commit install --hook-type pre-commit --hook-type commit-msgRun tests and linters from the root directory:

uv run pytest --no-cov

uv run ruff check .

uv run ruff format .Use uv add / uv remove to modify dependencies and uv lock to refresh the lockfile.

We welcome contributions from the community! Before submitting a pull request, please:

- Read our CONTRIBUTING.md for detailed guidelines

- Sign your commits cryptographically (SSH or GPG signing)

- Install and use pre-commit hooks for automated checks

- Follow our code style guidelines (enforced by Ruff)

For questions or to discuss ideas before sending a PR, hop into the Discord server.

If you want to explore ML robotics, prototype policies, or learn the basics, use LeRobot. It shines for teaching and fast experiments with imitation/reinforcement learning and public datasets.

If you need to build and operate real applications, use Positronic. Beyond training, it provides the runtime, data tooling, teleoperation, and hardware integrations required to put policies on robots, monitor them, and iterate safely.

- LeRobot: Training-centric; quick demos and learning on reference robots and open datasets

- Positronic: Lifecycle-centric; immediate-mode middleware (Pimm), first-class data ops (Dataset Library), and hardware-first operations (Drivers, WebXR, inference)

We use LeRobot's training infrastructure and build on their excellent work. Positronic adds the operational layer that production systems need.

Our plans evolve with your feedback. Highlights for the next milestones:

-

Delivered

- Policy presets for π₀.₅ and GR00T. Full support for both architectures.

- Remote inference primitives. Run policies on different machines via unified WebSocket API.

-

Batch evaluation harness.

utilities/validate_server.pyfor automated checkpoint scoring.

-

Short term

- Richer Positronic Server. Surface metadata fields, annotation, and filtering flows for rapid triage.

- Direct Positronic Dataset integration. Native adapter for training scripts to stream tensors directly from Positronic datasets.

-

Medium term

- SO101 leader support. Promote SO101 from follower mode to first-class leader arm.

- New operator inputs. Keyboard and gamepad controllers for quick teleop.

- Streaming datasets. Cloud-ready dataset backend for long-running collection jobs.

- Community hardware. Continue adding camera, gripper, and arm drivers requested by adopters.

-

Long term

-

Distributed scheduling. Cross-machine orchestration on

pimmfor coordinating collectors, trainers, and inference nodes. - Hybrid cloud workflows. Episode ingestion into object storage with local curation and optional cloud inference.

-

Distributed scheduling. Cross-machine orchestration on

Let us know what you need on our Discord server, drop us a line at [email protected] or open a feature request at GitHub.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for positronic

Similar Open Source Tools

positronic

Positronic is an end-to-end toolkit for building ML-driven robotics systems, aiming to simplify data collection, messy data handling, and complex deployment in the field of robotics. It provides a Python-native stack for real-life ML robotics, covering hardware integration, dataset curation, policy training, deployment, and monitoring. The toolkit is designed to make professional-grade ML robotics approachable, without the need for ROS. Positronic offers solutions for data ops, hardware drivers, unified inference API, and iteration workflows, enabling teams to focus on developing manipulation systems for robots.

UltraRAG

The UltraRAG framework is a researcher and developer-friendly RAG system solution that simplifies the process from data construction to model fine-tuning in domain adaptation. It introduces an automated knowledge adaptation technology system, supporting no-code programming, one-click synthesis and fine-tuning, multidimensional evaluation, and research-friendly exploration work integration. The architecture consists of Frontend, Service, and Backend components, offering flexibility in customization and optimization. Performance evaluation in the legal field shows improved results compared to VanillaRAG, with specific metrics provided. The repository is licensed under Apache-2.0 and encourages citation for support.

cosdata

Cosdata is a cutting-edge AI data platform designed to power the next generation search pipelines. It features immutability, version control, and excels in semantic search, structured knowledge graphs, hybrid search capabilities, real-time search at scale, and ML pipeline integration. The platform is customizable, scalable, efficient, enterprise-grade, easy to use, and can manage multi-modal data. It offers high performance, indexing, low latency, and high requests per second. Cosdata is designed to meet the demands of modern search applications, empowering businesses to harness the full potential of their data.

Upsonic

Upsonic offers a cutting-edge enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides reliable systems, scalability, and a task-oriented structure for real-world cases. Key features include production-ready scalability, task-centric design, MCP server support, tool-calling server, computer use integration, and easy addition of custom tools. The framework supports client-server architecture and allows seamless deployment on AWS, GCP, or locally using Docker.

sail

Sail is a tool designed to unify stream processing, batch processing, and compute-intensive workloads, serving as a drop-in replacement for Spark SQL and the Spark DataFrame API in single-process settings. It aims to streamline data processing tasks and facilitate AI workloads.

plandex

Plandex is an open source, terminal-based AI coding engine designed for complex tasks. It uses long-running agents to break up large tasks into smaller subtasks, helping users work through backlogs, navigate unfamiliar technologies, and save time on repetitive tasks. Plandex supports various AI models, including OpenAI, Anthropic Claude, Google Gemini, and more. It allows users to manage context efficiently in the terminal, experiment with different approaches using branches, and review changes before applying them. The tool is platform-independent and runs from a single binary with no dependencies.

deer-flow

DeerFlow is a community-driven Deep Research framework that combines language models with specialized tools for tasks like web search, crawling, and Python code execution. It supports FaaS deployment and one-click deployment based on Volcengine. The framework includes core capabilities like LLM integration, search and retrieval, RAG integration, MCP seamless integration, human collaboration, report post-editing, and content creation. The architecture is based on a modular multi-agent system with components like Coordinator, Planner, Research Team, and Text-to-Speech integration. DeerFlow also supports interactive mode, human-in-the-loop mechanism, and command-line arguments for customization.

cline-based-code-generator

HAI Code Generator is a cutting-edge tool designed to simplify and automate task execution while enhancing code generation workflows. Leveraging Specif AI, it streamlines processes like task execution, file identification, and code documentation through intelligent automation and AI-driven capabilities. Built on Cline's powerful foundation for AI-assisted development, HAI Code Generator boosts productivity and precision by automating task execution and integrating file management capabilities. It combines intelligent file indexing, context generation, and LLM-driven automation to minimize manual effort and ensure task accuracy. Perfect for developers and teams aiming to enhance their workflows.

eole

EOLE is an open language modeling toolkit based on PyTorch. It aims to provide a research-friendly approach with a comprehensive yet compact and modular codebase for experimenting with various types of language models. The toolkit includes features such as versatile training and inference, dynamic data transforms, comprehensive large language model support, advanced quantization, efficient finetuning, flexible inference, and tensor parallelism. EOLE is a work in progress with ongoing enhancements in configuration management, command line entry points, reproducible recipes, core API simplification, and plans for further simplification, refactoring, inference server development, additional recipes, documentation enhancement, test coverage improvement, logging enhancements, and broader model support.

langmanus

LangManus is a community-driven AI automation framework that combines language models with specialized tools for tasks like web search, crawling, and Python code execution. It implements a hierarchical multi-agent system with agents like Coordinator, Planner, Supervisor, Researcher, Coder, Browser, and Reporter. The framework supports LLM integration, search and retrieval tools, Python integration, workflow management, and visualization. LangManus aims to give back to the open-source community and welcomes contributions in various forms.

agent-zero

Agent Zero is a personal, organic agentic framework designed to be dynamic, transparent, customizable, and interactive. It uses the computer as a tool to accomplish tasks, with features like general-purpose assistant, computer as a tool, multi-agent cooperation, customizable and extensible framework, and communication skills. The tool is fully Dockerized, with Speech-to-Text and TTS capabilities, and offers real-world use cases like financial analysis, Excel automation, API integration, server monitoring, and project isolation. Agent Zero can be dangerous if not used properly and is prompt-based, guided by the prompts folder. The tool is extensively documented and has a changelog highlighting various updates and improvements.

amazon-bedrock-agentcore-samples

Amazon Bedrock AgentCore Samples repository provides examples and tutorials to deploy and operate AI agents securely at scale using any framework and model. It is framework-agnostic and model-agnostic, allowing flexibility in deployment. The repository includes tutorials, end-to-end applications, integration guides, deployment automation, and full-stack reference applications for developers to understand and implement Amazon Bedrock AgentCore capabilities into their applications.

DevoxxGenieIDEAPlugin

Devoxx Genie is a Java-based IntelliJ IDEA plugin that integrates with local and cloud-based LLM providers to aid in reviewing, testing, and explaining project code. It supports features like code highlighting, chat conversations, and adding files/code snippets to context. Users can modify REST endpoints and LLM parameters in settings, including support for cloud-based LLMs. The plugin requires IntelliJ version 2023.3.4 and JDK 17. Building and publishing the plugin is done using Gradle tasks. Users can select an LLM provider, choose code, and use commands like review, explain, or generate unit tests for code analysis.

llm-d-inference-sim

The `llm-d-inference-sim` is a lightweight, configurable, and real-time simulator designed to mimic the behavior of vLLM without the need for GPUs or running heavy models. It operates as an OpenAI-compliant server, allowing developers to test clients, schedulers, and infrastructure using realistic request-response cycles, token streaming, and latency patterns. The simulator offers modes of operation, response generation from predefined text or real datasets, latency simulation, tokenization options, LoRA management, KV cache simulation, failure injection, and deployment options for standalone or Kubernetes testing. It supports a subset of standard vLLM Prometheus metrics for observability.

postgresml

PostgresML is a powerful Postgres extension that seamlessly combines data storage and machine learning inference within your database. It enables running machine learning and AI operations directly within PostgreSQL, leveraging GPU acceleration for faster computations, integrating state-of-the-art large language models, providing built-in functions for text processing, enabling efficient similarity search, offering diverse ML algorithms, ensuring high performance, scalability, and security, supporting a wide range of NLP tasks, and seamlessly integrating with existing PostgreSQL tools and client libraries.

qdrant

Qdrant is a vector similarity search engine and vector database. It is written in Rust, which makes it fast and reliable even under high load. Qdrant can be used for a variety of applications, including: * Semantic search * Image search * Product recommendations * Chatbots * Anomaly detection Qdrant offers a variety of features, including: * Payload storage and filtering * Hybrid search with sparse vectors * Vector quantization and on-disk storage * Distributed deployment * Highlighted features such as query planning, payload indexes, SIMD hardware acceleration, async I/O, and write-ahead logging Qdrant is available as a fully managed cloud service or as an open-source software that can be deployed on-premises.

For similar tasks

morgana-form

MorGana Form is a full-stack form builder project developed using Next.js, React, TypeScript, Ant Design, PostgreSQL, and other technologies. It allows users to quickly create and collect data through survey forms. The project structure includes components, hooks, utilities, pages, constants, Redux store, themes, types, server-side code, and component packages. Environment variables are required for database settings, NextAuth login configuration, and file upload services. Additionally, the project integrates an AI model for form generation using the Ali Qianwen model API.

PC-Agent

PC Agent introduces a novel framework to empower autonomous digital agents through human cognition transfer. It consists of PC Tracker for data collection, Cognition Completion for transforming raw data, and a multi-agent system for decision-making and visual grounding. Users can set up the tool in Python environment, customize data collection with PC Tracker, process data into cognitive trajectories, and run the multi-agent system. The tool aims to enable AI to work autonomously while users sleep, providing a cognitive journey into the digital world.

RoboMatrix

RoboMatrix is a skill-centric hierarchical framework for scalable robot task planning and execution in an open-world environment. It provides a structured approach to robot task execution using a combination of hardware components, environment configuration, installation procedures, and data collection methods. The framework is developed using the ROS2 framework on Ubuntu and supports robots from DJI's RoboMaster series. Users can follow the provided installation guidance to set up RoboMatrix and utilize it for various tasks such as data collection, task execution, and dataset construction. The framework also includes a supervised fine-tuning dataset and aims to optimize communication and release additional components in the future.

LLM-Engineers-Handbook

The LLM Engineer's Handbook is an official repository containing a comprehensive guide on creating an end-to-end LLM-based system using best practices. It covers data collection & generation, LLM training pipeline, a simple RAG system, production-ready AWS deployment, comprehensive monitoring, and testing and evaluation framework. The repository includes detailed instructions on setting up local and cloud dependencies, project structure, installation steps, infrastructure setup, pipelines for data processing, training, and inference, as well as QA, tests, and running the project end-to-end.

qiaoqiaoyun

Qiaoqiaoyun is a new generation zero-code product that combines an AI application development platform, AI knowledge base, and zero-code platform, helping enterprises quickly build personalized business applications in an AI way. Users can build personalized applications that meet business needs without any code. Qiaoqiaoyun has comprehensive application building capabilities, form engine, workflow engine, and dashboard engine, meeting enterprise's normal requirements. It is also an AI application development platform based on LLM large language model and RAG open-source knowledge base question-answering system.

ds-ml-bootcamp

The DS-ML Bootcamp repository is a comprehensive resource for a one-month intensive bootcamp that covers the full machine learning workflow. It includes lessons, code examples, and resources to take participants from zero to hands-on projects. The goal is to move from unreal to real, from unimaginable to imaginable, by practicing the entire data science/machine learning journey step by step in just one month.

positronic

Positronic is an end-to-end toolkit for building ML-driven robotics systems, aiming to simplify data collection, messy data handling, and complex deployment in the field of robotics. It provides a Python-native stack for real-life ML robotics, covering hardware integration, dataset curation, policy training, deployment, and monitoring. The toolkit is designed to make professional-grade ML robotics approachable, without the need for ROS. Positronic offers solutions for data ops, hardware drivers, unified inference API, and iteration workflows, enabling teams to focus on developing manipulation systems for robots.

paper-reading

This repository is a collection of tools and resources for deep learning infrastructure, covering programming languages, algorithms, acceleration techniques, and engineering aspects. It provides information on various online tools for chip architecture, CPU and GPU benchmarks, and code analysis. Additionally, it includes content on AI compilers, deep learning models, high-performance computing, Docker and Kubernetes tutorials, Protobuf and gRPC guides, and programming languages such as C++, Python, and Shell. The repository aims to bridge the gap between algorithm understanding and engineering implementation in the fields of AI and deep learning.

For similar jobs

LitServe

LitServe is a high-throughput serving engine designed for deploying AI models at scale. It generates an API endpoint for models, handles batching, streaming, and autoscaling across CPU/GPUs. LitServe is built for enterprise scale with a focus on minimal, hackable code-base without bloat. It supports various model types like LLMs, vision, time-series, and works with frameworks like PyTorch, JAX, Tensorflow, and more. The tool allows users to focus on model performance rather than serving boilerplate, providing full control and flexibility.

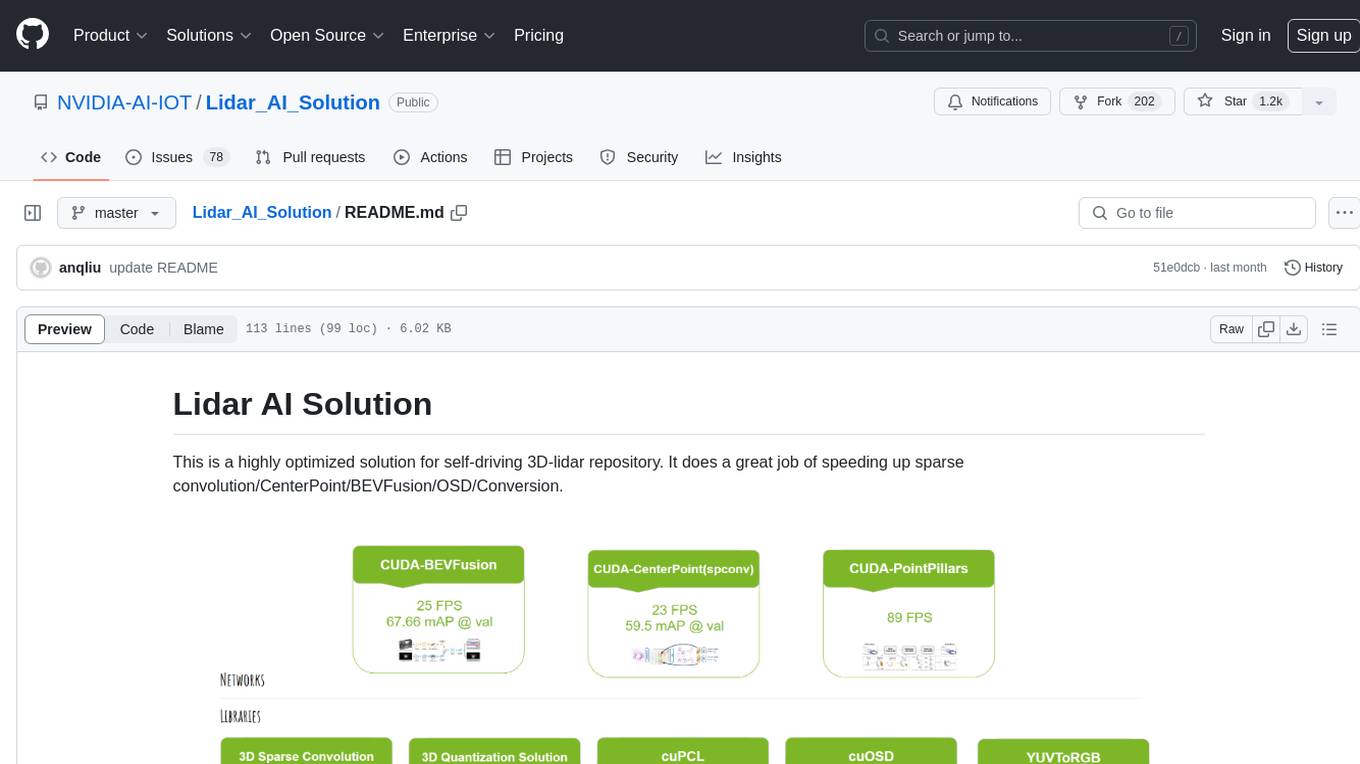

Lidar_AI_Solution

Lidar AI Solution is a highly optimized repository for self-driving 3D lidar, providing solutions for sparse convolution, BEVFusion, CenterPoint, OSD, and Conversion. It includes CUDA and TensorRT implementations for various tasks such as 3D sparse convolution, BEVFusion, CenterPoint, PointPillars, V2XFusion, cuOSD, cuPCL, and YUV to RGB conversion. The repository offers easy-to-use solutions, high accuracy, low memory usage, and quantization options for different tasks related to self-driving technology.

generative-ai-sagemaker-cdk-demo

This repository showcases how to deploy generative AI models from Amazon SageMaker JumpStart using the AWS CDK. Generative AI is a type of AI that can create new content and ideas, such as conversations, stories, images, videos, and music. The repository provides a detailed guide on deploying image and text generative AI models, utilizing pre-trained models from SageMaker JumpStart. The web application is built on Streamlit and hosted on Amazon ECS with Fargate. It interacts with the SageMaker model endpoints through Lambda functions and Amazon API Gateway. The repository also includes instructions on setting up the AWS CDK application, deploying the stacks, using the models, and viewing the deployed resources on the AWS Management Console.

cake

cake is a pure Rust implementation of the llama3 LLM distributed inference based on Candle. The project aims to enable running large models on consumer hardware clusters of iOS, macOS, Linux, and Windows devices by sharding transformer blocks. It allows running inferences on models that wouldn't fit in a single device's GPU memory by batching contiguous transformer blocks on the same worker to minimize latency. The tool provides a way to optimize memory and disk space by splitting the model into smaller bundles for workers, ensuring they only have the necessary data. cake supports various OS, architectures, and accelerations, with different statuses for each configuration.

Awesome-Robotics-3D

Awesome-Robotics-3D is a curated list of 3D Vision papers related to Robotics domain, focusing on large models like LLMs/VLMs. It includes papers on Policy Learning, Pretraining, VLM and LLM, Representations, and Simulations, Datasets, and Benchmarks. The repository is maintained by Zubair Irshad and welcomes contributions and suggestions for adding papers. It serves as a valuable resource for researchers and practitioners in the field of Robotics and Computer Vision.

tensorzero

TensorZero is an open-source platform that helps LLM applications graduate from API wrappers into defensible AI products. It enables a data & learning flywheel for LLMs by unifying inference, observability, optimization, and experimentation. The platform includes a high-performance model gateway, structured schema-based inference, observability, experimentation, and data warehouse for analytics. TensorZero Recipes optimize prompts and models, and the platform supports experimentation features and GitOps orchestration for deployment.

vector-inference

This repository provides an easy-to-use solution for running inference servers on Slurm-managed computing clusters using vLLM. All scripts in this repository run natively on the Vector Institute cluster environment. Users can deploy models as Slurm jobs, check server status and performance metrics, and shut down models. The repository also supports launching custom models with specific configurations. Additionally, users can send inference requests and set up an SSH tunnel to run inference from a local device.

rhesis

Rhesis is a comprehensive test management platform designed for Gen AI teams, offering tools to create, manage, and execute test cases for generative AI applications. It ensures the robustness, reliability, and compliance of AI systems through features like test set management, automated test generation, edge case discovery, compliance validation, integration capabilities, and performance tracking. The platform is open source, emphasizing community-driven development, transparency, extensible architecture, and democratizing AI safety. It includes components such as backend services, frontend applications, SDK for developers, worker services, chatbot applications, and Polyphemus for uncensored LLM service. Rhesis enables users to address challenges unique to testing generative AI applications, such as non-deterministic outputs, hallucinations, edge cases, ethical concerns, and compliance requirements.