postgresml

Postgres with GPUs for ML/AI apps.

Stars: 6101

PostgresML is a powerful Postgres extension that seamlessly combines data storage and machine learning inference within your database. It enables running machine learning and AI operations directly within PostgreSQL, leveraging GPU acceleration for faster computations, integrating state-of-the-art large language models, providing built-in functions for text processing, enabling efficient similarity search, offering diverse ML algorithms, ensuring high performance, scalability, and security, supporting a wide range of NLP tasks, and seamlessly integrating with existing PostgreSQL tools and client libraries.

README:

Postgres + GPUs for ML/AI applications.

Why do ML/AI in Postgres?

Data for ML & AI systems is inherently larger and more dynamic than the models. It's more efficient, manageable and reliable to move models to the database, rather than constantly moving data to the models.

- In-Database ML/AI: Run machine learning and AI operations directly within PostgreSQL

- GPU Acceleration: Leverage GPU power for faster computations and model inference

- Large Language Models: Integrate and use state-of-the-art LLMs from Hugging Face

- RAG Pipeline: Built-in functions for chunking, embedding, ranking, and transforming text

- Vector Search: Efficient similarity search using pgvector integration

- Diverse ML Algorithms: 47+ classification and regression algorithms available

- High Performance: 8-40X faster inference compared to HTTP-based model serving

- Scalability: Support for millions of transactions per second and horizontal scaling

- NLP Tasks: Wide range of natural language processing capabilities

- Security: Enhanced data privacy by keeping models and data together

- Seamless Integration: Works with existing PostgreSQL tools and client libraries

The only prerequisite for using PostgresML is a Postgres database with our open-source pgml extension installed.

Our serverless cloud is the easiest and recommended way to get started.

Sign up for a free PostgresML account. You'll get a free database in seconds, with access to GPUs and state of the art LLMs.

If you don't want to use our cloud, you can self-host PostgresML.

docker run \
    -it \
    -v postgresml_data:/var/lib/postgresql \
    -p 5433:5432 \
    -p 8000:8000 \
    ghcr.io/postgresml/postgresml:2.7.12 \
    sudo -u postgresml psql -d postgresml

For more details, take a look at our Quick Start with Docker documentation.

We have a number of other tools and libraries that are specifically designed to work with PostgresML. Remember, PostgresML is a Postgres extension running inside of Postgres, so you can connect with psql and use any of your favorite tooling and client libraries, like psycopg, to connect and run queries.
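
As a rough sketch of the point above, the following Python snippet uses psycopg (version 3) to connect and check whether the pgml extension is installed. The host, port, user, and database name are assumptions taken from the Docker command in this README (host port 5433, user and database postgresml); the helper names are hypothetical, and a password may be needed depending on your setup.

```python
def build_conninfo(host="localhost", port=5433, user="postgresml", dbname="postgresml"):
    """Build a libpq-style connection string (the defaults are assumptions)."""
    return f"host={host} port={port} user={user} dbname={dbname}"


# Standard catalog lookup: one row with the installed version, if any.
EXTENSION_QUERY = "SELECT extversion FROM pg_extension WHERE extname = %s"


def pgml_extension_version(conninfo):
    """Return the installed pgml extension version, or None if it is absent."""
    import psycopg  # imported lazily so build_conninfo works without the driver

    with psycopg.connect(conninfo) as conn:
        row = conn.execute(EXTENSION_QUERY, ("pgml",)).fetchone()
        return row[0] if row else None
```

Calling `pgml_extension_version(build_conninfo())` against a running instance should return a version string; any other Postgres client library could run the same query.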

PostgresML Specific Client Libraries:

- Korvus - Korvus is a Python, JavaScript, Rust and C search SDK that unifies the entire RAG pipeline in a single database query.

- postgresml-django - postgresml-django is a Python module that integrates PostgresML with Django ORM.

Recommended Postgres Poolers:

- pgcat - pgcat is a PostgreSQL pooler with sharding, load balancing and failover support.

PostgresML brings models directly to your data, eliminating the need for costly and time-consuming data transfers. This approach significantly enhances performance, security, and scalability for AI-driven applications.

By running models within the database, PostgresML enables:

- Reduced latency and improved query performance

- Enhanced data privacy and security

- Simplified infrastructure management

- Seamless integration with existing database operations

PostgresML supports a wide range of state-of-the-art deep learning architectures available on the Hugging Face model hub. This integration allows you to:

- Access thousands of pre-trained models

- Utilize cutting-edge NLP, computer vision, and other AI models

- Easily experiment with different architectures

While cloud-based LLM providers offer powerful capabilities, making API calls from within the database can introduce latency, security risks, and potential compliance issues. Currently, PostgresML does not directly support integration with remote LLM providers like OpenAI.

PostgresML transforms your PostgreSQL database into a powerful vector database for Retrieval-Augmented Generation (RAG) applications. It leverages pgvector for efficient storage and retrieval of embeddings.
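
To make "similarity search" concrete, here is a minimal Python sketch of ranking stored embeddings by cosine distance, the quantity that pgvector's `<=>` operator computes. The toy vectors and function names are illustrative assumptions; in the database, an index would normally avoid scanning every row.

```python
import math


def cosine_distance(a, b):
    """Cosine distance = 1 - cosine similarity (0 means same direction).

    Assumes neither vector is all zeros.
    """
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def nearest(query, rows, k=2):
    """Return the ids of the k rows whose embedding is closest to the query."""
    ranked = sorted(rows, key=lambda row: cosine_distance(query, row[1]))
    return [row_id for row_id, _ in ranked[:k]]


# Toy 3-dimensional embeddings; real models produce hundreds of dimensions.
rows = [
    ("doc_a", [1.0, 0.0, 0.0]),
    ("doc_b", [0.0, 1.0, 0.0]),
    ("doc_c", [0.9, 0.1, 0.0]),
]
print(nearest([1.0, 0.0, 0.0], rows))  # ['doc_a', 'doc_c']
```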

Our RAG implementation is built on four key SQL functions:

- Chunk: Splits text into manageable segments

- Embed: Generates vector embeddings from text using pre-trained models

- Rank: Performs similarity search on embeddings

- Transform: Applies language models for text generation or transformation

For more information on using RAG with PostgresML see our guide on Unified RAG.

The pgml.chunk function chunks documents using the specified splitter. This is typically done before embedding.

pgml.chunk(
    splitter TEXT, -- splitter name
    text TEXT,     -- text to chunk
    kwargs JSON    -- optional arguments (see below)
)

See pgml.chunk docs for more information.
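
For intuition about what chunking does, here is a minimal Python sketch of a fixed-size character splitter with overlap. This is an illustration of the general idea only, not the splitter pgml.chunk uses; the function name and parameters are hypothetical, and real splitters usually also respect separators such as paragraphs or sentences.

```python
def chunk_text(text, chunk_size=20, overlap=5):
    """Split text into windows of chunk_size characters that overlap by overlap."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


print(chunk_text("abcdefghij", chunk_size=4, overlap=1))  # ['abcd', 'defg', 'ghij']
```

The overlap keeps some shared context between neighboring chunks, so a sentence cut at a boundary still appears whole in at least one of them when the overlap is large enough.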

The pgml.embed function generates embeddings from text using in-database models.

pgml.embed(
    transformer TEXT,
    "text" TEXT,
    kwargs JSONB
)

See pgml.embed docs for more information.

The pgml.rank function uses Cross-Encoders to score sentence pairs.

This is typically used as a re-ranking step when performing search.

pgml.rank(
    transformer TEXT,
    query TEXT,
    documents TEXT[],
    kwargs JSONB
)

Docs coming soon.
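
The re-ranking step can be sketched in Python as follows: every candidate from a first-pass search is scored together with the query, and the best-scoring ones are kept. The `token_overlap` scorer is a toy stand-in for a cross-encoder model and all names are hypothetical; this shows the shape of the step, not how pgml.rank is implemented.

```python
def rerank(query, documents, score_pair, top_k=2):
    """Score each (query, document) pair and return the top_k documents."""
    scored = [(score_pair(query, doc), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def token_overlap(query, document):
    """Toy stand-in for a cross-encoder: share of query words found in the document."""
    query_words = set(query.lower().split())
    if not query_words:
        return 0.0
    doc_words = set(document.lower().split())
    return len(query_words & doc_words) / len(query_words)


candidates = [
    "Postgres is a relational database",
    "GPUs accelerate model inference",
    "Run model inference inside Postgres",
]
print(rerank("model inference in postgres", candidates, token_overlap))
# ['Run model inference inside Postgres', 'GPUs accelerate model inference']
```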

The pgml.transform function can be used to generate text.

SELECT pgml.transform(
    task => TEXT OR JSONB,       -- Pipeline initializer arguments
    inputs => TEXT[] OR BYTEA[], -- inputs for inference
    args => JSONB                -- (optional) arguments to the pipeline.
)

See pgml.transform docs for more information.

See our Text Generation guide for details on generating text.
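
As a sketch of how a client might assemble such a call, the Python helper below builds a parameterized pgml.transform query from the three arguments in the signature above. The helper name is hypothetical, and the `"task"` key and `max_new_tokens` argument are assumptions that follow Hugging Face pipeline conventions; check the pgml.transform docs for the options your model accepts.

```python
import json


def build_transform_call(task, inputs, args=None):
    """Return (sql, params) for a parameterized pgml.transform call."""
    sql = (
        "SELECT pgml.transform("
        "task => %s::jsonb, "
        "inputs => %s::text[], "
        "args => %s::jsonb)"
    )
    # JSON values are sent as text and cast to jsonb on the server side.
    params = (json.dumps(task), list(inputs), json.dumps(args or {}))
    return sql, params


sql, params = build_transform_call(
    task={"task": "text-generation"},  # assumed pipeline initializer
    inputs=["PostgresML is"],
    args={"max_new_tokens": 32},  # assumed generation argument
)
print(sql)
```

The resulting pair can be passed to a driver such as psycopg, for example `conn.execute(sql, params)`, which keeps the input text out of the SQL string itself.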

Some highlights:

- 47+ classification and regression algorithms

- 8-40X faster inference than HTTP-based model serving

- Millions of transactions per second

- Horizontal scalability

Training a classification model

Training

SELECT * FROM pgml.train(
    'Handwritten Digit Image Classifier',
    'classification',
    'pgml.digits',
    'target',
    algorithm => 'xgboost' -- named arguments must come after positional ones
);

Inference

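-- The project name must match a project trained with pgml.train,
-- and the array needs one value per feature the model was trained on.
SELECT pgml.predict(
    'My Classification Project',
    ARRAY[0.1, 2.0, 5.0]
) AS prediction;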

The pgml.transform function exposes a number of available NLP tasks.

Available tasks are:

Alternative AI tools for postgresml

Similar Open Source Tools

cosdata

Cosdata is a cutting-edge AI data platform designed to power the next generation search pipelines. It features immutability, version control, and excels in semantic search, structured knowledge graphs, hybrid search capabilities, real-time search at scale, and ML pipeline integration. The platform is customizable, scalable, efficient, enterprise-grade, easy to use, and can manage multi-modal data. It offers high performance, indexing, low latency, and high requests per second. Cosdata is designed to meet the demands of modern search applications, empowering businesses to harness the full potential of their data.

Upsonic

Upsonic offers a cutting-edge enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides reliable systems, scalability, and a task-oriented structure for real-world cases. Key features include production-ready scalability, task-centric design, MCP server support, tool-calling server, computer use integration, and easy addition of custom tools. The framework supports client-server architecture and allows seamless deployment on AWS, GCP, or locally using Docker.

llm-on-ray

LLM-on-Ray is a comprehensive solution for building, customizing, and deploying Large Language Models (LLMs). It simplifies complex processes into manageable steps by leveraging the power of Ray for distributed computing. The tool supports pretraining, finetuning, and serving LLMs across various hardware setups, incorporating industry and Intel optimizations for performance. It offers modular workflows with intuitive configurations, robust fault tolerance, and scalability. Additionally, it provides an Interactive Web UI for enhanced usability, including a chatbot application for testing and refining models.

openvino

OpenVINO™ is an open-source toolkit for optimizing and deploying AI inference. It provides a common API to deliver inference solutions on various platforms, including CPU, GPU, NPU, and heterogeneous devices. OpenVINO™ supports pre-trained models from Open Model Zoo and popular frameworks like TensorFlow, PyTorch, and ONNX. Key components of OpenVINO™ include the OpenVINO™ Runtime, plugins for different hardware devices, frontends for reading models from native framework formats, and the OpenVINO Model Converter (OVC) for adjusting models for optimal execution on target devices.

greenmask

Greenmask is a powerful open-source utility designed for logical database backup dumping, anonymization, synthetic data generation, and restoration. It is highly customizable, stateless, and backward-compatible with existing PostgreSQL utilities. Greenmask supports advanced subset systems, deterministic transformers, dynamic parameters, transformation conditions, and more. It is cross-platform, database type safe, extensible, and supports parallel execution and various storage options. Ideal for backup and restoration tasks, anonymization, transformation, and data masking.

trustgraph

TrustGraph is a tool that deploys private GraphRAG pipelines to build an RDF-style knowledge graph from data, enabling accurate and secure `RAG` requests compatible with cloud LLMs and open-source SLMs. It showcases the reliability and efficiencies of GraphRAG algorithms, capturing contextual language flags missed in conventional RAG approaches. The tool offers features like PDF decoding, text chunking, inference of various LMs, RDF-aligned Knowledge Graph extraction, and more. TrustGraph is designed to be modular, supporting multiple Language Models and environments, with a plug'n'play architecture for easy customization.

aistore

AIStore is a lightweight object storage system designed for AI applications. It is highly scalable, reliable, and easy to use. AIStore can be deployed on any commodity hardware, and it can be used to store and manage large datasets for deep learning and other AI applications.

RAG-FiT

RAG-FiT is a library designed to improve Language Models' ability to use external information by fine-tuning models on specially created RAG-augmented datasets. The library assists in creating training data, training models using parameter-efficient finetuning (PEFT), and evaluating performance using RAG-specific metrics. It is modular, customizable via configuration files, and facilitates fast prototyping and experimentation with various RAG settings and configurations.

RAGFoundry

RAG Foundry is a library designed to enhance Large Language Models (LLMs) by fine-tuning models on RAG-augmented datasets. It helps create training data, train models using parameter-efficient finetuning (PEFT), and measure performance using RAG-specific metrics. The library is modular, customizable using configuration files, and facilitates prototyping with various RAG settings and configurations for tasks like data processing, retrieval, training, inference, and evaluation.

kodit

Kodit is a Code Indexing MCP Server that connects AI coding assistants to external codebases, providing accurate and up-to-date code snippets. It improves AI-assisted coding by offering canonical examples, indexing local and public codebases, integrating with AI coding assistants, enabling keyword and semantic search, and supporting OpenAI-compatible or custom APIs/models. Kodit helps engineers working with AI-powered coding assistants by providing relevant examples to reduce errors and hallucinations.

ApeRAG

ApeRAG is a production-ready platform for Retrieval-Augmented Generation (RAG) that combines Graph RAG, vector search, and full-text search with advanced AI agents. It is ideal for building Knowledge Graphs, Context Engineering, and deploying intelligent AI agents for autonomous search and reasoning across knowledge bases. The platform offers features like advanced index types, intelligent AI agents with MCP support, enhanced Graph RAG with entity normalization, multimodal processing, hybrid retrieval engine, MinerU integration for document parsing, production-grade deployment with Kubernetes, enterprise management features, MCP integration, and developer-friendly tools for customization and contribution.

JamAIBase

JamAI Base is an open-source platform integrating SQLite and LanceDB databases with managed memory and RAG capabilities. It offers built-in LLM, vector embeddings, and reranker orchestration accessible through a spreadsheet-like UI and REST API. Users can transform static tables into dynamic entities, facilitate real-time interactions, manage structured data, and simplify chatbot development. The tool focuses on ease of use, scalability, flexibility, declarative paradigm, and innovative RAG techniques, making complex data operations accessible to users with varying technical expertise.

Auto-Analyst

Auto-Analyst is an AI-driven data analytics agentic system designed to simplify and enhance the data science process. By integrating various specialized AI agents, this tool aims to make complex data analysis tasks more accessible and efficient for data analysts and scientists. Auto-Analyst provides a streamlined approach to data preprocessing, statistical analysis, machine learning, and visualization, all within an interactive Streamlit interface. It offers plug and play Streamlit UI, agents with data science speciality, complete automation, LLM agnostic operation, and is built using lightweight frameworks.

fenic

fenic is an opinionated DataFrame framework from typedef.ai for building AI and agentic applications. It transforms unstructured and structured data into insights using familiar DataFrame operations enhanced with semantic intelligence. It supports markdown, transcripts, and semantic operators, plus efficient batch inference across various model providers. fenic is purpose-built for LLM inference, providing a query engine designed for AI workloads, semantic operators as first-class citizens, native unstructured data support, production-ready infrastructure, and a familiar DataFrame API.

For similar tasks

postgresml

PostgresML is a powerful Postgres extension that seamlessly combines data storage and machine learning inference within your database. It enables running machine learning and AI operations directly within PostgreSQL, leveraging GPU acceleration for faster computations, integrating state-of-the-art large language models, providing built-in functions for text processing, enabling efficient similarity search, offering diverse ML algorithms, ensuring high performance, scalability, and security, supporting a wide range of NLP tasks, and seamlessly integrating with existing PostgreSQL tools and client libraries.

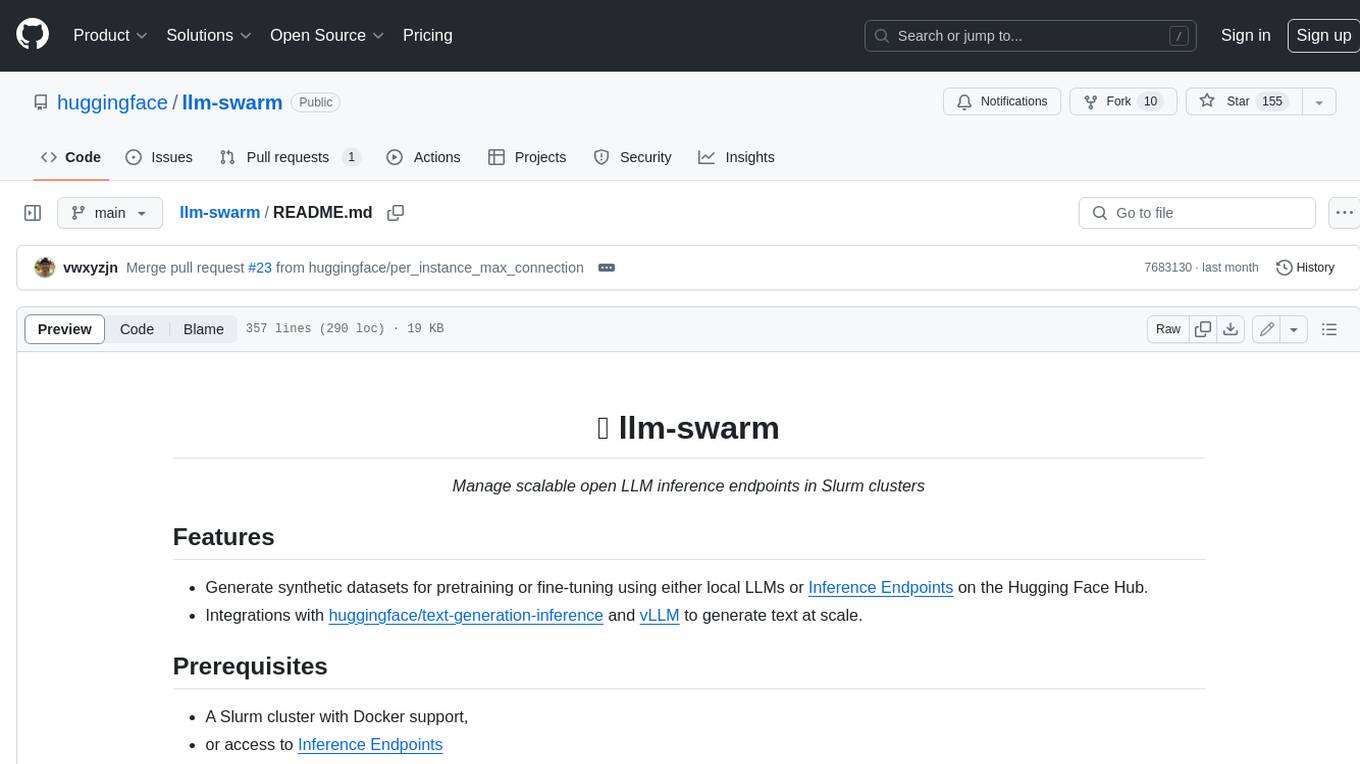

llm-swarm

llm-swarm is a tool designed to manage scalable open LLM inference endpoints in Slurm clusters. It allows users to generate synthetic datasets for pretraining or fine-tuning using local LLMs or Inference Endpoints on the Hugging Face Hub. The tool integrates with huggingface/text-generation-inference and vLLM to generate text at scale. It manages inference endpoint lifetime by automatically spinning up instances via `sbatch`, checking if they are created or connected, performing the generation job, and auto-terminating the inference endpoints to prevent idling. Additionally, it provides load balancing between multiple endpoints using a simple nginx docker for scalability. Users can create slurm files based on default configurations and inspect logs for further analysis. For users without a Slurm cluster, hosted inference endpoints are available for testing with usage limits based on registration status.

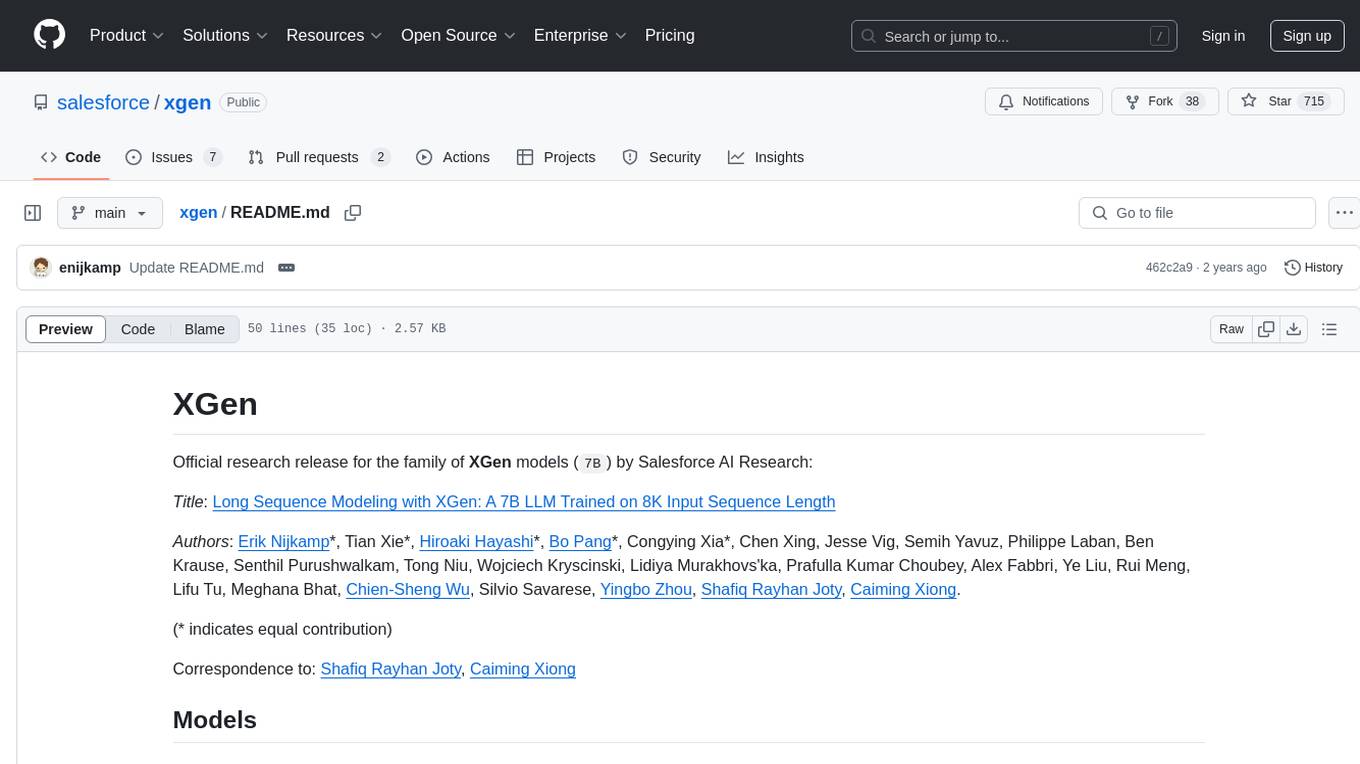

xgen

XGen is a research release for the family of XGen models (7B) by Salesforce AI Research. It includes models with support for different sequence lengths and tokenization using the OpenAI Tiktoken package. The models can be used for auto-regressive sampling in natural language generation tasks.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.