aistore

AIStore: scalable storage for AI applications

Stars: 1761

AIStore is a lightweight object storage system designed for AI applications. It is highly scalable, reliable, and easy to use. AIStore can be deployed on any commodity hardware, and it can be used to store and manage large datasets for deep learning and other AI applications.

README:

AIStore: High-Performance, Scalable Storage for AI Workloads

AIStore (AIS) is a lightweight distributed storage stack tailored for AI applications. It's an elastic cluster that can grow and shrink at runtime and can be ad-hoc deployed, with or without Kubernetes, anywhere from a single Linux machine to a bare-metal cluster of any size. Built from scratch, AIS provides linear scale-out, consistent performance, and a flexible deployment model.

AIS is a reliable storage cluster that can natively operate on both in-cluster and remote data, without treating either as a cache.

AIS consistently shows balanced I/O distribution and linear scalability across an arbitrary number of clustered nodes. The system supports fast data access, reliability, and rich customization for data transformation workloads.

- ✅ Multi-Cloud Access: Seamlessly access and manage content across multiple cloud backends (including AWS S3, GCS, Azure, and OCI), with fast-tier performance, configurable redundancy, and namespace-aware bucket identity (same-name buckets can coexist across accounts, endpoints, and providers).

- ✅ Deploy Anywhere: AIS runs on any Linux machine, virtual or physical. Deployment options range from a single Docker container and Google Colab to petascale Kubernetes clusters. There are no built-in limitations on deployment size or functionality.

- ✅ High Availability: Redundant control and data planes. Self-healing, end-to-end protection, n-way mirroring, and erasure coding. Arbitrary number of lightweight access points (AIS proxies).

- ✅ HTTP-based API: A feature-rich, native API (with user-friendly SDKs for Go and Python), and compliant Amazon S3 API for running unmodified S3 clients.

- ✅ Monitoring: Comprehensive observability with integrated Prometheus metrics, Grafana dashboards, detailed logs with configurable verbosity, and CLI-based performance tracking for complete cluster visibility and troubleshooting. See AIStore Observability for details.

- ✅ Chunked Objects: High-performance chunked object representation, with independently retrievable chunks, metadata v2, and checksum-protected manifests. Supports rechunking, parallel reads, and seamless integration with Get-Batch, blob-downloader, and multipart uploads to supported cloud backends.

- ✅ JWT Authentication and Authorization: Validates request JWTs to provide cluster- and bucket-level access control using static keys or dynamic OIDC issuer JWKS lookup.

- ✅ Secure Redirects: Configurable cryptographic signing of redirect URLs using HMAC-SHA256 with a versioned cluster key (distributed via metasync, stored in memory only).

- ✅ Load-Aware Throttling: Dynamic request throttling based on a multi-dimensional load vector (CPU, memory, disk, file descriptors, goroutines) to protect AIS clusters under stress.

- ✅ Unified Namespace: Attach AIS clusters together to provide unified access to datasets across independent clusters, allowing users to reference shared buckets with cluster-specific identifiers.

- ✅ Turn-key Cache: In addition to robust data protection features, AIS offers a per-bucket configurable LRU-based cache with eviction thresholds and storage capacity watermarks.

- ✅ ETL Offload: Execute I/O intensive data transformations close to the data, either inline (on-the-fly as part of each read request) or offline (batch processing, with the destination bucket populated with transformed results).

- ✅ Get-Batch: Retrieve multiple objects and/or archived files with a single call. Designed for ML/AI pipelines, Get-Batch fetches an entire training batch in one operation, assembling a TAR (or other supported serialization formats) that contains all requested items in the exact user-specified order.

- ✅ Data Consistency: Guaranteed consistency across all gateways, with write-through semantics in the presence of remote backends.

- ✅ Serialization & Sharding: Native, first-class support for TAR, TGZ, TAR.LZ4, and ZIP archives for efficient storage and processing of small-file datasets. Features include seamless integration with existing unmodified workflows across all APIs and subsystems.

- ✅ Kubernetes: For production, AIS runs natively on Kubernetes. The dedicated ais-k8s repository includes the AIS K8s Operator, Ansible playbooks, Helm charts, and deployment guidance.

- ✅ Batch Jobs: More than 30 cluster-wide batch operations that you can start, monitor, and otherwise control. The list currently includes:

$ ais show job --help

NAME:

archive blob-download cleanup copy-bucket copy-objects delete-objects

download dsort ec-bucket ec-get ec-put ec-resp

elect-primary etl-bucket etl-inline etl-objects evict-objects evict-remote-bucket

get-batch list lru-eviction mirror prefetch-objects promote-files

put-copies rebalance rechunk rename-bucket resilver summary

warm-up-metadata

The feature set continues to grow and also includes: blob-downloader; lightweight AuthN Service (Beta), to manage users and roles and generate JWTs; runtime management of TLS certificates; full support for adding/removing nodes at runtime; listing, copying, prefetching, and transforming virtual directories; executing presigned S3 requests; adaptive rate limiting; and more.
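To make the Get-Batch item in the feature list more concrete, here is a minimal sketch of the underlying idea: packing several named items into a single TAR stream in exactly the order the caller asked for. It uses only Python's standard tarfile module and an in-memory dict as a stand-in for a bucket. The function names assemble_batch and read_batch are hypothetical and are not part of the AIS API; AIS's own implementation may differ.

```python
import io
import tarfile
from typing import Dict, List, Tuple


def assemble_batch(store: Dict[str, bytes], names: List[str]) -> bytes:
    """Pack the requested items into one in-memory TAR, preserving request order.

    `store` is a hypothetical stand-in for a bucket (name -> payload).
    """
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for name in names:  # iterate in the caller's order, not the store's
            payload = store[name]
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            tar.addfile(info, io.BytesIO(payload))
    return buf.getvalue()


def read_batch(blob: bytes) -> List[Tuple[str, bytes]]:
    """Read the TAR back sequentially, yielding (name, payload) in stored order."""
    items = []
    with tarfile.open(fileobj=io.BytesIO(blob), mode="r") as tar:
        for member in tar:
            items.append((member.name, tar.extractfile(member).read()))
    return items
```

A training loop could then request, say, ["c.jpg", "a.jpg", "b.jpg"] and consume the members sequentially, relying on the archive order matching the request order.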

For the original white paper and design philosophy, please see AIStore Overview, which also includes a high-level block diagram, terminology, APIs, CLI, and more. For our 2024 KubeCon presentation, please see AIStore: Enhancing petascale Deep Learning across Cloud backends.

AIS includes an integrated, scriptable CLI for managing clusters, buckets, and objects, running and monitoring batch jobs, viewing and downloading logs, generating performance reports, and more:

$ ais <TAB-TAB>

advanced config get object scrub tls

alias cp help performance search wait

archive create job prefetch show

auth download log put space-cleanup

blob-download dsort ls remote-cluster start

bucket etl ml rmb stop

cluster evict mpu rmo storage

AIS runs natively on Kubernetes and features an open format, which gives you the freedom to copy or move your data from AIS at any time using familiar Linux tools such as tar(1), scp(1), and rsync(1).

For developers and data scientists, there's also:

- Go API used in CLI and benchmarking tools

- Python SDK + Reference Guide

- PyTorch integration and usage examples

- Boto3 support

- Read the Getting Started Guide for a 5-minute local install, or

- Run a minimal AIS cluster consisting of a single gateway and a single storage node, or

- Clone the repo and run make kill cli aisloader deploy, followed by ais show cluster

AIS deployment options, as well as intended (development vs. production vs. first-time) usages, are all summarized here.

Since the prerequisites essentially boil down to having Linux with a disk, the deployment options range from an all-in-one container to a petascale bare-metal cluster of any size, and from a single VM to multiple racks of high-end servers. Practical use cases require, of course, further consideration.

Some of the most popular deployment options include:

| Option | Use Case |
|---|---|
| Local playground | AIS developers or first-time users, Linux or Mac OS. Run make kill cli aisloader deploy <<< $'N\nM', where N is a number of targets, M is a number of gateways |
| Minimal production-ready deployment | This option utilizes preinstalled docker image and is targeting first-time users or researchers (who could immediately start training their models on smaller datasets) |
| Docker container | Quick testing and evaluation; single-node setup |
| GCP/GKE automated install | Developers, first-time users, AI researchers |
| Large-scale production deployment | Requires Kubernetes; provided via ais-k8s |

For performance tuning, see performance and AIS K8s Playbooks.

AIS supports multiple ingestion modes:

- ✅ On Demand: Transparent cloud access during workloads.

- ✅ PUT: Locally accessible files and directories.

- ✅ Promote: Import local target directories and/or NFS/SMB shares mounted on AIS targets.

- ✅ Copy: Full buckets, virtual subdirectories (recursively or non-recursively), lists or ranges (via Bash expansion).

- ✅ Download: HTTP(S)-accessible datasets and objects.

- ✅ Prefetch: Remote buckets or selected objects (from remote buckets), including subdirectories, lists, and/or ranges.

- ✅ Archive: Group and store related small files from an original dataset.
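The Copy and Prefetch modes above accept lists or ranges of object names written in Bash-expansion style. As a rough illustration of what such a range denotes, the sketch below expands a single numeric brace range such as shard-{0001..0003}.tar into the individual names. The helper expand_range is hypothetical and handles only one numeric range per template; it is a simplified model of Bash brace expansion, not AIS's own parser, which may behave differently.

```python
import re
from typing import List

# Matches one numeric brace range, e.g. "{0001..0003}".
_RANGE = re.compile(r"\{(\d+)\.\.(\d+)\}")


def expand_range(template: str) -> List[str]:
    """Expand the first numeric {lo..hi} range in `template` into a list of names."""
    m = _RANGE.search(template)
    if m is None:
        return [template]  # nothing to expand

    lo_s, hi_s = m.group(1), m.group(2)
    # Zero-pad only when a bound is written with a leading zero (e.g. "0001").
    padded = any(len(s) > 1 and s[0] == "0" for s in (lo_s, hi_s))
    width = max(len(lo_s), len(hi_s)) if padded else 0

    lo, hi = int(lo_s), int(hi_s)
    step = 1 if hi >= lo else -1  # descending ranges count down
    prefix, suffix = template[: m.start()], template[m.end():]
    return [prefix + str(i).zfill(width) + suffix for i in range(lo, hi + step, step)]
```

For example, expand_range("shard-{0001..0003}.tar") yields the three names shard-0001.tar, shard-0002.tar, and shard-0003.tar, while a template without a range is returned unchanged.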

You can install the CLI and benchmarking tools using:

./scripts/install_from_binaries.sh --help

The script installs aisloader and CLI from the latest or previous GitHub release and enables CLI auto-completions.

PyTorch integration is a growing set of datasets (both iterable and map-style), samplers, and dataloaders:

- Taxonomy of abstractions and API reference

- AIS plugin for PyTorch: usage examples

- Jupyter notebook examples

Let others know your project is powered by high-performance AI storage: https://github.com/NVIDIA/aistore

- Overview and Design

- Networking Model

- Getting Started

- AIS Buckets: Design and Operations

- Observability

- Technical Blog

- S3 Compatibility

- Batch Jobs

- Performance and CLI: performance

- CLI Reference

- Production Deployment: Kubernetes Operator, Ansible Playbooks, Helm Charts, Monitoring

- See Extended Index

- Use the CLI search command, e.g.: ais search copy

- Clone the repository and run git grep, e.g.: git grep -n out-of-band -- "*.md"

MIT

Alex Aizman (NVIDIA)

Alternative AI tools for aistore

Similar Open Source Tools

postgresml

PostgresML is a powerful Postgres extension that seamlessly combines data storage and machine learning inference within your database. It enables running machine learning and AI operations directly within PostgreSQL, leveraging GPU acceleration for faster computations, integrating state-of-the-art large language models, providing built-in functions for text processing, enabling efficient similarity search, offering diverse ML algorithms, ensuring high performance, scalability, and security, supporting a wide range of NLP tasks, and seamlessly integrating with existing PostgreSQL tools and client libraries.

uccl

UCCL is a command-line utility tool designed to simplify the process of converting Unix-style file paths to Windows-style file paths and vice versa. It provides a convenient way for developers and system administrators to handle file path conversions without the need for manual adjustments. With UCCL, users can easily convert file paths between different operating systems, making it a valuable tool for cross-platform development and file management tasks.

qdrant

Qdrant is a vector similarity search engine and vector database. It is written in Rust, which makes it fast and reliable even under high load. Qdrant can be used for a variety of applications, including semantic search, image search, product recommendations, chatbots, and anomaly detection. Its features include payload storage and filtering, hybrid search with sparse vectors, vector quantization and on-disk storage, and distributed deployment, along with highlighted features such as query planning, payload indexes, SIMD hardware acceleration, async I/O, and write-ahead logging. Qdrant is available as a fully managed cloud service or as open-source software that can be deployed on-premises.

sail

Sail is a tool designed to unify stream processing, batch processing, and compute-intensive workloads, serving as a drop-in replacement for Spark SQL and the Spark DataFrame API in single-process settings. It aims to streamline data processing tasks and facilitate AI workloads.

greenmask

Greenmask is a powerful open-source utility designed for logical database backup dumping, anonymization, synthetic data generation, and restoration. It is highly customizable, stateless, and backward-compatible with existing PostgreSQL utilities. Greenmask supports advanced subset systems, deterministic transformers, dynamic parameters, transformation conditions, and more. It is cross-platform, database type safe, extensible, and supports parallel execution and various storage options. Ideal for backup and restoration tasks, anonymization, transformation, and data masking.

cosdata

Cosdata is a cutting-edge AI data platform designed to power the next generation search pipelines. It features immutability, version control, and excels in semantic search, structured knowledge graphs, hybrid search capabilities, real-time search at scale, and ML pipeline integration. The platform is customizable, scalable, efficient, enterprise-grade, easy to use, and can manage multi-modal data. It offers high performance, indexing, low latency, and high requests per second. Cosdata is designed to meet the demands of modern search applications, empowering businesses to harness the full potential of their data.

comfyui_LLM_Polymath

LLM Polymath Chat Node is an advanced Chat Node for ComfyUI that integrates large language models to build text-driven applications and automate data processes, enhancing prompt responses by incorporating real-time web search, linked content extraction, and custom agent instructions. It supports both OpenAI’s GPT-like models and alternative models served via a local Ollama API. The core functionalities include Comfy Node Finder and Smart Assistant, along with additional agents like Flux Prompter, Custom Instructors, Python debugger, and scripter. The tool offers features for prompt processing, web search integration, model & API integration, custom instructions, image handling, logging & debugging, output compression, and more.

deepflow

DeepFlow is an open-source project that provides deep observability for complex cloud-native and AI applications. It offers Zero Code data collection with eBPF for metrics, distributed tracing, request logs, and function profiling. DeepFlow is integrated with SmartEncoding to achieve Full Stack correlation and efficient access to all observability data. With DeepFlow, cloud-native and AI applications automatically gain deep observability, removing the burden of developers continually instrumenting code and providing monitoring and diagnostic capabilities covering everything from code to infrastructure for DevOps/SRE teams.

positronic

Positronic is an end-to-end toolkit for building ML-driven robotics systems, aiming to simplify data collection, messy data handling, and complex deployment in the field of robotics. It provides a Python-native stack for real-life ML robotics, covering hardware integration, dataset curation, policy training, deployment, and monitoring. The toolkit is designed to make professional-grade ML robotics approachable, without the need for ROS. Positronic offers solutions for data ops, hardware drivers, unified inference API, and iteration workflows, enabling teams to focus on developing manipulation systems for robots.

agent-zero

Agent Zero is a personal, organic agentic framework designed to be dynamic, transparent, customizable, and interactive. It uses the computer as a tool to accomplish tasks, with features like general-purpose assistant, computer as a tool, multi-agent cooperation, customizable and extensible framework, and communication skills. The tool is fully Dockerized, with Speech-to-Text and TTS capabilities, and offers real-world use cases like financial analysis, Excel automation, API integration, server monitoring, and project isolation. Agent Zero can be dangerous if not used properly and is prompt-based, guided by the prompts folder. The tool is extensively documented and has a changelog highlighting various updates and improvements.

Upsonic

Upsonic offers a cutting-edge enterprise-ready framework for orchestrating LLM calls, agents, and computer use to complete tasks cost-effectively. It provides reliable systems, scalability, and a task-oriented structure for real-world cases. Key features include production-ready scalability, task-centric design, MCP server support, tool-calling server, computer use integration, and easy addition of custom tools. The framework supports client-server architecture and allows seamless deployment on AWS, GCP, or locally using Docker.

UltraRAG

The UltraRAG framework is a researcher and developer-friendly RAG system solution that simplifies the process from data construction to model fine-tuning in domain adaptation. It introduces an automated knowledge adaptation technology system, supporting no-code programming, one-click synthesis and fine-tuning, multidimensional evaluation, and research-friendly exploration work integration. The architecture consists of Frontend, Service, and Backend components, offering flexibility in customization and optimization. Performance evaluation in the legal field shows improved results compared to VanillaRAG, with specific metrics provided. The repository is licensed under Apache-2.0 and encourages citation for support.

Mooncake

Mooncake is a serving platform for Kimi, a leading LLM service provided by Moonshot AI. It features a KVCache-centric disaggregated architecture that separates prefill and decoding clusters, leveraging underutilized CPU, DRAM, and SSD resources of the GPU cluster. Mooncake's scheduler balances throughput and latency-related SLOs, with a prediction-based early rejection policy for highly overloaded scenarios. It excels in long-context scenarios, achieving up to a 525% increase in throughput while handling 75% more requests under real workloads.

higress

Higress is an open-source cloud-native API gateway built on the core of Istio and Envoy, based on Alibaba's internal practice of Envoy Gateway. It is designed as an AI-native API gateway, serving AI businesses such as Tongyi Qianwen APP, Bailian Big Model API, and Machine Learning PAI platform. Higress provides capabilities to interface with LLM model vendors, AI observability, multi-model load balancing/fallback, AI token flow control, and AI caching. It offers features for AI gateway, Kubernetes Ingress gateway, microservices gateway, and security protection gateway, with advantages in production-level scalability, stream processing, extensibility, and ease of use.

Genkit

Genkit is an open-source framework for building full-stack AI-powered applications, used in production by Google's Firebase. It provides SDKs for JavaScript/TypeScript (Stable), Go (Beta), and Python (Alpha) with unified interface for integrating AI models from providers like Google, OpenAI, Anthropic, Ollama. Rapidly build chatbots, automations, and recommendation systems using streamlined APIs for multimodal content, structured outputs, tool calling, and agentic workflows. Genkit simplifies AI integration with open-source SDK, unified APIs, and offers text and image generation, structured data generation, tool calling, prompt templating, persisted chat interfaces, AI workflows, and AI-powered data retrieval (RAG).

For similar tasks

cl-waffe2

cl-waffe2 is an experimental deep learning framework in Common Lisp, providing fast, systematic, and customizable matrix operations, reverse mode tape-based Automatic Differentiation, and neural network model building and training features accelerated by a JIT Compiler. It offers abstraction layers, extensibility, inlining, graph-level optimization, visualization, debugging, systematic nodes, and symbolic differentiation. Users can easily write extensions and optimize their networks without overheads. The framework is designed to eliminate barriers between users and developers, allowing for easy customization and extension.

aigt

AIGT is a repository containing scripts for deep learning in guided medical interventions, focusing on ultrasound imaging. It provides a complete workflow from formatting and annotations to real-time model deployment. Users can set up an Anaconda environment, run Slicer notebooks, acquire tracked ultrasound data, and process exported data for training. The repository includes tools for segmentation, image export, and annotation creation.

DeepLearing-Interview-Awesome-2024

DeepLearning-Interview-Awesome-2024 is a repository that covers various topics related to deep learning, computer vision, big models (LLMs), autonomous driving, smart healthcare, and more. It provides a collection of interview questions with detailed explanations sourced from recent academic papers and industry developments. The repository is aimed at assisting individuals in academic research, work innovation, and job interviews. It includes six major modules covering topics such as large language models (LLMs), computer vision models, common problems in computer vision and perception algorithms, deep learning basics and frameworks, as well as specific tasks like 3D object detection, medical image segmentation, and more.

PINNACLE

PINNACLE is a flexible geometric deep learning approach that trains on contextualized protein interaction networks to generate context-aware protein representations. It provides protein representations split across various cell-type contexts from different tissues and organs. The tool can be fine-tuned to study the genomic effects of drugs and nominate promising protein targets and cell-type contexts for further investigation. PINNACLE exemplifies the paradigm of incorporating context-specific effects for studying biological systems, especially the impact of disease and therapeutics.

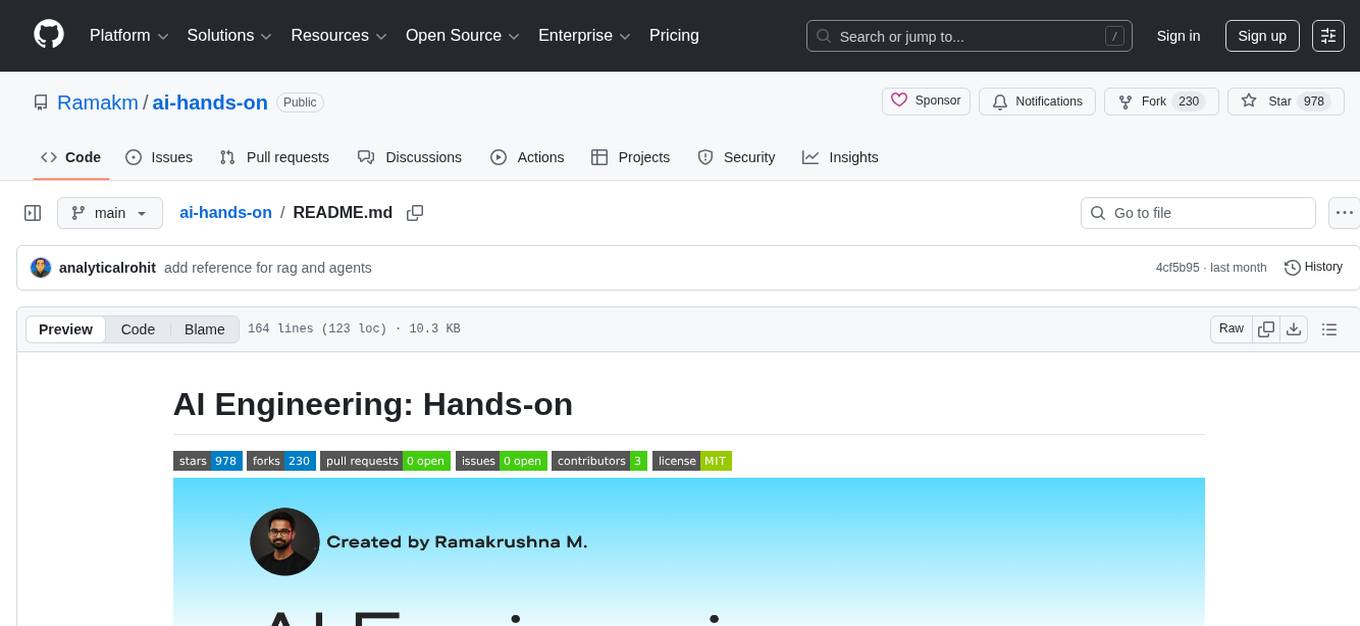

ai-hands-on

A complete, hands-on guide to becoming an AI Engineer. This repository is designed to help you learn AI from first principles, build real neural networks, and understand modern LLM systems end-to-end. Progress through math, PyTorch, deep learning, transformers, RAG, and OCR with clean, intuitive Jupyter notebooks guiding you at every step. Suitable for beginners and engineers leveling up, providing clarity, structure, and intuition to build real AI systems.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: it is self-contained, with no need for a DBMS or cloud service; it has an OpenAPI interface that is easy to integrate with existing infrastructure (e.g., Cloud IDE); and it supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.