raglite

🥤 RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with DuckDB or PostgreSQL

Stars: 1141

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with DuckDB or PostgreSQL. It lets you choose the LLM provider, the database, and the reranker. The toolkit relies only on lightweight, permissively licensed dependencies and supports hardware acceleration. RAGLite provides PDF to Markdown conversion, multi-vector chunk embedding, optimal semantic chunking, hybrid search, adaptive retrieval, and prompt formatting aimed at improved output quality. It is extensible with a built-in Model Context Protocol (MCP) server, a customizable ChatGPT-like frontend, conversion of other document types to Markdown, and evaluation tools. The README covers configuring RAGLite, inserting documents, running RAG pipelines, computing query adapters, evaluating performance, running the MCP server, and serving the frontend.

README:

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with DuckDB or PostgreSQL.

- 🧠 Choose any LLM provider with LiteLLM, including local llama-cpp-python models

- 💾 Choose either DuckDB or PostgreSQL as a keyword & vector search database

- 🥇 Choose any reranker with rerankers, including multilingual FlashRank as the default

- ❤️ Only lightweight and permissive open source dependencies (e.g., no PyTorch or LangChain)

- 🚀 Acceleration with Metal on macOS, and CUDA on Linux and Windows

- 📖 PDF to Markdown conversion on top of pdftext and pypdfium2

- 🧬 Multi-vector chunk embedding with late chunking and contextual chunk headings

- ✏️ Optimal sentence splitting with wtpsplit-lite by solving a binary integer programming problem

- ✂️ Optimal semantic chunking by solving a binary integer programming problem

- 🔍 Hybrid search with the database's native keyword & vector search (FTS+VSS; tsvector+pgvector)

- 💭 Adaptive retrieval where the LLM decides whether to and what to retrieve based on the query

- 💰 Improved cost and latency with a prompt caching-aware message array structure

- 🍰 Improved output quality with Anthropic's long-context prompt format

- 🌀 Optimal closed-form linear query adapter by solving an orthogonal Procrustes problem

- 🔌 A built-in Model Context Protocol (MCP) server that any MCP client like Claude desktop can connect with

- 💬 Optional customizable ChatGPT-like frontend for web, Slack, and Teams with Chainlit

- ✍️ Optional conversion of any input document to Markdown with Pandoc

- ✅ Optional evaluation of retrieval and generation performance with Ragas
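The query adapter feature listed above refers to the orthogonal Procrustes problem: given paired vectors, find the orthogonal matrix that maps one set as closely as possible onto the other in the least-squares sense. The sketch below is a deliberately small illustration of that idea, restricted to rotations in two dimensions so that it needs only the standard library. It is not RAGLite's implementation; the names `best_rotation_2d` and `rotate` and the toy data are assumptions made for this example. The general high-dimensional case is usually solved with a singular value decomposition.

```python
import math


def best_rotation_2d(sources, targets):
    """Angle (radians) of the rotation R minimizing sum ||R x - y||^2 over paired 2D points."""
    # Maximizing sum y . (R x) gives cos(a) * dot + sin(a) * cross, which peaks at atan2(cross, dot).
    dot = sum(x1 * y1 + x2 * y2 for (x1, x2), (y1, y2) in zip(sources, targets))
    cross = sum(x1 * y2 - x2 * y1 for (x1, x2), (y1, y2) in zip(sources, targets))
    return math.atan2(cross, dot)


def rotate(point, angle):
    """Rotate a 2D point counterclockwise by the given angle."""
    x1, x2 = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x1 - s * x2, s * x1 + c * x2)


# Toy "query embeddings" and the "target embeddings" they should align with.
queries = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (-2.0, 0.5)]
true_angle = 0.3
targets = [rotate(q, true_angle) for q in queries]

estimated_angle = best_rotation_2d(queries, targets)
adapted = [rotate(q, estimated_angle) for q in queries]
```

Because the solution is closed-form, no iterative training is needed: one pass over the paired vectors yields the adapter, which is then applied to every new query vector.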

[!TIP] 🚀 If you want to use local models, it is recommended to install an accelerated llama-cpp-python precompiled binary with:

# Configure which llama-cpp-python precompiled binary to install (⚠️ not every combination is available):
LLAMA_CPP_PYTHON_VERSION=0.3.9
PYTHON_VERSION=310|311|312
ACCELERATOR=metal|cu121|cu122|cu123|cu124
PLATFORM=macosx_11_0_arm64|linux_x86_64|win_amd64

# Install llama-cpp-python:
pip install "https://github.com/abetlen/llama-cpp-python/releases/download/v$LLAMA_CPP_PYTHON_VERSION-$ACCELERATOR/llama_cpp_python-$LLAMA_CPP_PYTHON_VERSION-cp$PYTHON_VERSION-cp$PYTHON_VERSION-$PLATFORM.whl"
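To make the URL template in the tip above concrete, here is a minimal sketch that fills it in for one choice of each variable. The helper name `llama_cpp_wheel_url` is hypothetical, and the example combination is only an illustration: as the tip notes, not every combination is published, so check that the resulting wheel exists before installing it.

```python
def llama_cpp_wheel_url(version: str, python_version: str, accelerator: str, platform: str) -> str:
    """Fill in the wheel URL template from the tip above (pick exactly one value per variable)."""
    base = "https://github.com/abetlen/llama-cpp-python/releases/download"
    tag = f"v{version}-{accelerator}"
    wheel = f"llama_cpp_python-{version}-cp{python_version}-cp{python_version}-{platform}.whl"
    return f"{base}/{tag}/{wheel}"


# Example choice: Python 3.12, CUDA 12.4, Linux x86_64 (availability not guaranteed).
url = llama_cpp_wheel_url("0.3.9", "312", "cu124", "linux_x86_64")
print(url)
```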

Install RAGLite with:

pip install raglite

To add support for a customizable ChatGPT-like frontend, use the chainlit extra:

pip install raglite[chainlit]

To add support for filetypes other than PDF, use the pandoc extra:

pip install raglite[pandoc]

To add support for evaluation, use the ragas extra:

pip install raglite[ragas]

- Configuring RAGLite

- Inserting documents

- Retrieval-Augmented Generation (RAG)

- Computing and using an optimal query adapter

- Evaluation of retrieval and generation

- Running a Model Context Protocol (MCP) server

- Serving a customizable ChatGPT-like frontend

[!TIP] 🧠 RAGLite extends LiteLLM with support for llama.cpp models using llama-cpp-python. To select a llama.cpp model (e.g., from Unsloth's collection), use a model identifier of the form "llama-cpp-python/<hugging_face_repo_id>/<filename>@<n_ctx>", where n_ctx is an optional parameter that specifies the context size of the model.

[!TIP] 💾 You can create a PostgreSQL database in a few clicks at neon.tech.
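The llama.cpp model identifier format described in the tip above can be illustrated by splitting an identifier into its parts. This is a sketch for understanding the format only, not RAGLite's or LiteLLM's own parser; the names `LlamaCppModelId` and `parse_model_id` are hypothetical, and the code assumes the Hugging Face repo id has the usual `<owner>/<name>` shape.

```python
from typing import NamedTuple, Optional


class LlamaCppModelId(NamedTuple):
    repo_id: str           # Hugging Face repo id, assumed to be "<owner>/<name>"
    filename: str          # File name or glob pattern inside the repo
    n_ctx: Optional[int]   # Optional context size; None when "@<n_ctx>" is omitted


def parse_model_id(model_id: str) -> LlamaCppModelId:
    """Split "llama-cpp-python/<hugging_face_repo_id>/<filename>@<n_ctx>" into its parts."""
    prefix = "llama-cpp-python/"
    if not model_id.startswith(prefix):
        raise ValueError(f"Expected an identifier starting with {prefix!r}")
    path, separator, n_ctx_text = model_id[len(prefix):].partition("@")
    parts = path.split("/")
    if len(parts) < 3:
        raise ValueError("Expected <owner>/<name>/<filename> after the prefix")
    n_ctx = int(n_ctx_text) if separator else None
    return LlamaCppModelId("/".join(parts[:2]), "/".join(parts[2:]), n_ctx)


parsed = parse_model_id("llama-cpp-python/unsloth/Qwen3-8B-GGUF/*Q4_K_M.gguf@8192")
print(parsed)
```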

First, configure RAGLite with your preferred DuckDB or PostgreSQL database and any LLM supported by LiteLLM:

from raglite import RAGLiteConfig

# Example 'remote' config with a PostgreSQL database and an OpenAI LLM:

my_config = RAGLiteConfig(

db_url="postgresql://my_username:my_password@my_host:5432/my_database",

llm="gpt-4o-mini", # Or any LLM supported by LiteLLM

embedder="text-embedding-3-large", # Or any embedder supported by LiteLLM

)

# Example 'local' config with a DuckDB database and a llama.cpp LLM:

my_config = RAGLiteConfig(

db_url="duckdb:///raglite.db",

llm="llama-cpp-python/unsloth/Qwen3-8B-GGUF/*Q4_K_M.gguf@8192",

embedder="llama-cpp-python/lm-kit/bge-m3-gguf/*F16.gguf@512", # More than 512 tokens degrades bge-m3's performance

)

You can also configure any reranker supported by rerankers:

from rerankers import Reranker

# Example remote API-based reranker:

my_config = RAGLiteConfig(

db_url="postgresql://my_username:my_password@my_host:5432/my_database",

reranker=Reranker("rerank-v3.5", model_type="cohere", api_key=COHERE_API_KEY, verbose=0) # Multilingual

)

# Example local cross-encoder reranker per language (this is the default):

my_config = RAGLiteConfig(

db_url="duckdb:///raglite.db",

reranker={

"en": Reranker("ms-marco-MiniLM-L-12-v2", model_type="flashrank", verbose=0), # English

"other": Reranker("ms-marco-MultiBERT-L-12", model_type="flashrank", verbose=0), # Other languages

}

)

Self-query is also supported, allowing the LLM to automatically generate and apply metadata filters to refine search results based on the user's input. To enable self-query, set self_query=True in your RAGLiteConfig:

my_config = RAGLiteConfig(

db_url="duckdb:///raglite.db",

llm="gpt-4o-mini",

embedder="text-embedding-3-large",

self_query=True, # Enable self-query

)

[!TIP] ✍️ To insert documents other than PDF, install the pandoc extra with pip install raglite[pandoc].

Next, insert some documents into the database. RAGLite will take care of the conversion to Markdown, optimal level 4 semantic chunking, and multi-vector embedding with late chunking:

# Insert documents given their file path

from pathlib import Path

from raglite import Document, insert_documents

documents = [

Document.from_path(Path("On the Measure of Intelligence.pdf")),

Document.from_path(Path("Special Relativity.pdf")),

]

insert_documents(documents, config=my_config)

# Insert documents given their text/plain or text/markdown content

content = """

# ON THE ELECTRODYNAMICS OF MOVING BODIES

## By A. EINSTEIN June 30, 1905

It is known that Maxwell...

"""

documents = [

Document.from_text(content, author="Einstein", topic="physics", year=1905)

]

insert_documents(documents, config=my_config)

[!TIP] 📝 Documents can include metadata by passing keyword arguments to Document.from_text() or Document.from_path(). This metadata can later be used for filtering during retrieval.
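As a rough mental model of metadata filtering, the sketch below keeps only records whose metadata contains every key/value pair in the filter. It is a toy over plain dictionaries, not RAGLite's query logic: the exact-match semantics, the `matches` helper, and the sample records are all assumptions made for illustration.

```python
def matches(metadata: dict, metadata_filter: dict) -> bool:
    """True when every key/value pair in the filter is present in the metadata (assumed semantics)."""
    return all(metadata.get(key) == value for key, value in metadata_filter.items())


# Toy records standing in for stored documents and their metadata.
records = [
    {"id": "doc-1", "metadata": {"author": "Einstein", "topic": "physics", "year": 1905}},
    {"id": "doc-2", "metadata": {"author": "Someone Else", "topic": "physics", "year": 2020}},
    {"id": "doc-3", "metadata": {"topic": "biology"}},
]

by_author = [r["id"] for r in records if matches(r["metadata"], {"author": "Einstein"})]
by_topic = [r["id"] for r in records if matches(r["metadata"], {"topic": "physics"})]
print(by_author, by_topic)
```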

You may also want to expand the document metadata before insertion:

from typing import Annotated, Literal

from pydantic import Field

from raglite import expand_document_metadata

# Expand the documents' metadata.

metadata_fields = {

"title": Annotated[str, Field(..., description="Document title.")],

"author": Annotated[str, Field(..., description="Primary author.")],

"topics": Annotated[list[Literal["A", "B", "C"]], Field(..., description="Key themes.")],

}

documents = list(expand_document_metadata(documents, metadata_fields, config=my_config))

# Insert documents given their text/plain or text/markdown content

insert_documents(documents, config=my_config)

Now you can run an adaptive RAG pipeline that consists of adding the user prompt to the message history and streaming the LLM response:

from raglite import rag

# Create a user message

messages = [] # Or start with an existing message history

messages.append({

"role": "user",

"content": "How is intelligence measured?"

})

# Adaptively decide whether to retrieve and then stream the response

chunk_spans = []

stream = rag(messages, on_retrieval=lambda x: chunk_spans.extend(x), config=my_config)

for update in stream:

    print(update, end="")

# Access the documents referenced in the RAG context

documents = [chunk_span.document for chunk_span in chunk_spans]

The LLM will adaptively decide whether to retrieve information based on the complexity of the user prompt. If retrieval is necessary, the LLM generates the search query and RAGLite applies hybrid search and reranking to retrieve the most relevant chunk spans (each of which is a list of consecutive chunks). The retrieval results are sent to the on_retrieval callback and are appended to the message history as a tool output. Finally, the assistant response is streamed and appended to the message history.
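The control flow described above can be sketched with stand-in functions. Everything here is hypothetical and simplified: `adaptive_rag`, `decide_query`, `search`, and `generate` are toy stubs rather than RAGLite functions, the retrieval decision is a trivial heuristic instead of an LLM call, the tool message omits fields that real chat APIs require, and the response is returned whole rather than streamed.

```python
def adaptive_rag(messages, decide_query, search, generate, on_retrieval=None):
    """Toy flow: optionally retrieve, append the results as a tool output, then answer."""
    query = decide_query(messages)  # None means "no retrieval needed"
    if query is not None:
        results = search(query)
        if on_retrieval is not None:
            on_retrieval(results)  # Hand the retrieved items to the caller
        messages.append({"role": "tool", "content": "\n".join(results)})
    answer = generate(messages)
    messages.append({"role": "assistant", "content": answer})
    return answer


def decide_query(messages):
    # Stand-in for the LLM's decision: retrieve only when the prompt is a question.
    text = messages[-1]["content"]
    return text if text.endswith("?") else None


def search(query):
    # Stand-in for hybrid search and reranking.
    return [f"(retrieved chunk span for: {query})"]


def generate(messages):
    # Stand-in for the LLM response.
    used_context = any(m["role"] == "tool" for m in messages)
    return "answer with context" if used_context else "answer without context"


retrieved = []
messages = [{"role": "user", "content": "How is intelligence measured?"}]
answer = adaptive_rag(messages, decide_query, search, generate, on_retrieval=retrieved.extend)
print([m["role"] for m in messages], answer)
```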

If you need manual control over the RAG pipeline, you can run a basic but powerful pipeline that consists of retrieving the most relevant chunk spans with hybrid search and reranking, converting the user prompt to a RAG instruction and appending it to the message history, and finally generating the RAG response:

from raglite import add_context, rag, retrieve_context, vector_search

# Choose a search method

from dataclasses import replace

my_config = replace(my_config, search_method=vector_search) # Or `hybrid_search`, `search_and_rerank_chunks`, ...

# Retrieve relevant chunk spans with the configured search method

user_prompt = "How is intelligence measured?"

chunk_spans = retrieve_context(

query=user_prompt,

num_chunks=5,

metadata_filter={"author": "Einstein"}, # Optional: filter by metadata

config=my_config

)

# Append a RAG instruction based on the user prompt and context to the message history

messages = [] # Or start with an existing message history

messages.append(add_context(user_prompt=user_prompt, context=chunk_spans, config=my_config))

# Stream the RAG response and append it to the message history

stream = rag(messages, config=my_config)

for update in stream:

    print(update, end="")

# Access the documents referenced in the RAG context

documents = [chunk_span.document for chunk_span in chunk_spans]

[!TIP] 🥇 Reranking can significantly improve the output quality of a RAG application. To add reranking to your application: first search for a larger set of 20 relevant chunks, then rerank them with a rerankers reranker, and finally keep the top 5 chunks.
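The search-wide-then-rerank pattern from the tip above can be illustrated without any model. In this sketch a simple word-overlap score stands in for a real reranker; `keyword_overlap`, `search_then_rerank`, and the toy candidate list are assumptions for illustration and do not reflect how rerankers or RAGLite score chunks.

```python
def keyword_overlap(query: str, chunk: str) -> float:
    """Toy relevance score: fraction of query words that also appear in the chunk."""
    query_words = set(query.lower().split())
    chunk_words = set(chunk.lower().split())
    return len(query_words & chunk_words) / len(query_words) if query_words else 0.0


def search_then_rerank(query, candidates, score, num_candidates=20, top_k=5):
    """Take the first num_candidates search results, rerank them, and keep the top_k."""
    shortlist = candidates[:num_candidates]
    ranked = sorted(shortlist, key=lambda chunk: score(query, chunk), reverse=True)
    return ranked[:top_k]


# Toy search results, ordered as a first-stage search might return them.
candidates = [f"filler text number {i}" for i in range(30)]
candidates[7] = "how intelligence is measured in practice"
candidates[25] = "how is intelligence measured"  # Beyond the first 20, so never reranked

top = search_then_rerank("how is intelligence measured", candidates, keyword_overlap)
print(top[0])
```

The example also shows the trade-off in the pattern: a relevant chunk that the first-stage search ranks outside the shortlist cannot be recovered by the reranker, which is why the shortlist is larger than the final selection.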

RAGLite also offers more advanced control over the individual steps of a full RAG pipeline:

- Searching for relevant chunks with keyword, vector, or hybrid search

- Retrieving the chunks from the database

- Reranking the chunks and selecting the top 5 results

- Extending the chunks with their neighbors and grouping them into chunk spans

- Converting the user prompt to a RAG instruction and appending it to the message history

- Streaming an LLM response to the message history

- Accessing the cited documents from the chunk spans
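The step of extending chunks with their neighbors and grouping them into chunk spans can be pictured with integer chunk positions. The sketch below is an assumption-laden illustration, not RAGLite's logic: it treats chunks as indices within a single document, uses a fixed neighbor radius, and the name `group_into_spans` is hypothetical.

```python
def group_into_spans(chunk_indices, num_chunks, neighbors=1):
    """Extend each chunk index with its neighbors, then merge consecutive indices into spans."""
    extended = set()
    for index in chunk_indices:
        for neighbor in range(index - neighbors, index + neighbors + 1):
            if 0 <= neighbor < num_chunks:  # Stay within the document
                extended.add(neighbor)
    spans = []
    for index in sorted(extended):
        if spans and index == spans[-1][-1] + 1:
            spans[-1].append(index)  # Continues the current run of consecutive chunks
        else:
            spans.append([index])  # Starts a new span
    return spans


# Chunks 2, 3, and 9 were selected in a document with 10 chunks (indices 0 to 9).
spans = group_into_spans([2, 3, 9], num_chunks=10)
print(spans)  # [[1, 2, 3, 4], [8, 9]]
```

Grouping this way gives the LLM contiguous passages instead of isolated fragments, and overlapping neighborhoods collapse into one span rather than repeating text.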

A full RAG pipeline is straightforward to implement with RAGLite:

# Search for chunks

from raglite import hybrid_search, keyword_search, vector_search

user_prompt = "How is intelligence measured?"

chunk_ids_vector, _ = vector_search(user_prompt, num_results=20, config=my_config)

chunk_ids_keyword, _ = keyword_search(user_prompt, num_results=20, config=my_config)

chunk_ids_hybrid, _ = hybrid_search(

user_prompt, num_results=20, metadata_filter={"topic": "physics"}, config=my_config

) # Filter results to only include chunks from documents with topic="physics" (works with any search method)

# Retrieve chunks

from raglite import retrieve_chunks

chunks_hybrid = retrieve_chunks(chunk_ids_hybrid, config=my_config)

# Rerank chunks and keep the top 5 (optional, but recommended)

from raglite import rerank_chunks

chunks_reranked = rerank_chunks(user_prompt, chunks_hybrid, config=my_config)

chunks_reranked = chunks_reranked[:5]

# Extend chunks with their neighbors and group them into chunk spans

from raglite import retrieve_chunk_spans

chunk_spans = retrieve_chunk_spans(chunks_reranked, config=my_config)

# Append a RAG instruction based on the user prompt and context to the message history

from raglite import add_context

messages = [] # Or start with an existing message history

messages.append(add_context(user_prompt=user_prompt, context=chunk_spans, config=my_config))

# Stream the RAG response and append it to the message history

from raglite import rag

stream = rag(messages, config=my_config)

for update in stream:

    print(update, end="")

# Access the documents referenced in the RAG context

documents = [chunk_span.document for chunk_span in chunk_spans]

RAGLite can compute and apply an optimal closed-form query adapter to the prompt embedding to improve the output quality of RAG. To benefit from this, first generate a set of evals with insert_evals and then compute and store the optimal query adapter with update_query_adapter:

# Improve RAG with an optimal query adapter

from raglite import insert_evals, update_query_adapter

insert_evals(num_evals=100, config=my_config)

update_query_adapter(config=my_config)  # From here, every vector search will use the query adapter

If you installed the ragas extra, you can use RAGLite to answer the evals and then evaluate the quality of both the retrieval and generation steps of RAG using Ragas:

# Evaluate retrieval and generation

from raglite import answer_evals, evaluate, insert_evals

insert_evals(num_evals=100, config=my_config)

answered_evals_df = answer_evals(num_evals=10, config=my_config)

evaluation_df = evaluate(answered_evals_df, config=my_config)

RAGLite comes with an MCP server implemented with FastMCP that exposes a search_knowledge_base tool. To use the server:

- Install Claude desktop

- Install uv so that Claude desktop can start the server

- Configure Claude desktop to use uv to start the MCP server with:

raglite \
    --db-url duckdb:///raglite.db \
    --llm llama-cpp-python/unsloth/Qwen3-4B-GGUF/*Q4_K_M.gguf@8192 \
    --embedder llama-cpp-python/lm-kit/bge-m3-gguf/*F16.gguf@512 \
    mcp install

To use an API-based LLM, make sure to include your credentials in a .env file or supply them inline:

export OPENAI_API_KEY=sk-...
raglite \
    --llm gpt-4o-mini \
    --embedder text-embedding-3-large \
    mcp install

Now, when you start Claude desktop you should see a 🔨 icon at the bottom right of your prompt, indicating that Claude has successfully connected with the MCP server.

When relevant, Claude will suggest using the search_knowledge_base tool that the MCP server provides. You can also explicitly ask Claude to search the knowledge base if you want to be certain that it does.

If you installed the chainlit extra, you can serve a customizable ChatGPT-like frontend with:

raglite chainlit

The application is also deployable to web, Slack, and Teams.

You can specify the database URL, LLM, and embedder directly in the Chainlit frontend, or with the CLI as follows:

raglite \
    --db-url duckdb:///raglite.db \
    --llm llama-cpp-python/unsloth/Qwen3-4B-GGUF/*Q4_K_M.gguf@8192 \
    --embedder llama-cpp-python/lm-kit/bge-m3-gguf/*F16.gguf@512 \
    chainlit

To use an API-based LLM, make sure to include your credentials in a .env file or supply them inline:

OPENAI_API_KEY=sk-... raglite --llm gpt-4o-mini --embedder text-embedding-3-large chainlit

Prerequisites

- Generate an SSH key and add the SSH key to your GitHub account.

- Configure SSH to automatically load your SSH keys:

cat << EOF >> ~/.ssh/config
Host *
  AddKeysToAgent yes
  IgnoreUnknown UseKeychain
  UseKeychain yes
  ForwardAgent yes
EOF

- Install VS Code and VS Code's Dev Containers extension. Alternatively, install PyCharm.

- Optional: install a Nerd Font such as FiraCode Nerd Font and configure VS Code or PyCharm to use it.

Development environments

The following development environments are supported:

- ⭐️ GitHub Codespaces: click on Open in GitHub Codespaces to start developing in your browser.

- ⭐️ VS Code Dev Container (with container volume): click on Open in Dev Containers to clone this repository in a container volume and create a Dev Container with VS Code.

- ⭐️ uv: clone this repository and run the following from the root of the repository:

# Create and install a virtual environment
uv sync --python 3.10 --all-extras

# Activate the virtual environment
source .venv/bin/activate

# Install the pre-commit hooks
pre-commit install --install-hooks

- VS Code Dev Container: clone this repository, open it with VS Code, and run Ctrl/⌘ + ⇧ + P → Dev Containers: Reopen in Container.

- PyCharm Dev Container: clone this repository, open it with PyCharm, create a Dev Container with Mount Sources, and configure an existing Python interpreter at /opt/venv/bin/python.

Developing

- This project follows the Conventional Commits standard to automate Semantic Versioning and Keep A Changelog with Commitizen.

- Run poe from within the development environment to print a list of Poe the Poet tasks available to run on this project.

- Run uv add {package} from within the development environment to install a run time dependency and add it to pyproject.toml and uv.lock. Add --dev to install a development dependency.

- Run uv sync --upgrade from within the development environment to upgrade all dependencies to the latest versions allowed by pyproject.toml. Add --only-dev to upgrade the development dependencies only.

- Run cz bump to bump the package's version, update the CHANGELOG.md, and create a git tag. Then push the changes and the git tag with git push origin main --tags.
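For contributors new to Conventional Commits, the sketch below checks whether a commit header has the general `type(scope)!: description` shape. It is a simplified illustration rather than the project's or Commitizen's actual validation: the regular expression accepts only lowercase types and does not check the type against any configured list, and the helper name `parse_commit_header` is hypothetical.

```python
import re

# Simplified header pattern: type, optional (scope), optional "!", then ": description".
HEADER = re.compile(
    r"^(?P<type>[a-z]+)(?:\((?P<scope>[^()\s]+)\))?(?P<breaking>!)?: (?P<description>\S.*)$"
)


def parse_commit_header(header: str):
    """Return the header's parts as a dict, or None when it does not fit the pattern."""
    match = HEADER.match(header)
    return match.groupdict() if match else None


print(parse_commit_header("feat(search): add metadata filter"))
print(parse_commit_header("fix!: drop old flag"))
print(parse_commit_header("Update readme"))
```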

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for raglite

Similar Open Source Tools

raglite

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with PostgreSQL or SQLite. It offers configurable options for choosing LLM providers, database types, and rerankers. The toolkit is fast and permissive, utilizing lightweight dependencies and hardware acceleration. RAGLite provides features like PDF to Markdown conversion, multi-vector chunk embedding, optimal semantic chunking, hybrid search capabilities, adaptive retrieval, and improved output quality. It is extensible with a built-in Model Context Protocol server, customizable ChatGPT-like frontend, document conversion to Markdown, and evaluation tools. Users can configure RAGLite for various tasks like configuring, inserting documents, running RAG pipelines, computing query adapters, evaluating performance, running MCP servers, and serving frontends.

pebblo

Pebblo enables developers to safely load data and promote their Gen AI app to deployment without worrying about the organization’s compliance and security requirements. The project identifies semantic topics and entities found in the loaded data and summarizes them on the UI or a PDF report.

wllama

Wllama is a WebAssembly binding for llama.cpp, a high-performance and lightweight language model library. It enables you to run inference directly on the browser without the need for a backend or GPU. Wllama provides both high-level and low-level APIs, allowing you to perform various tasks such as completions, embeddings, tokenization, and more. It also supports model splitting, enabling you to load large models in parallel for faster download. With its Typescript support and pre-built npm package, Wllama is easy to integrate into your React Typescript projects.

lollms_legacy

Lord of Large Language Models (LoLLMs) Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications. The tool supports multiple personalities for generating text with different styles and tones, real-time text generation with WebSocket-based communication, RESTful API for listing personalities and adding new personalities, easy integration with various applications and frameworks, sending files to personalities, running on multiple nodes to provide a generation service to many outputs at once, and keeping data local even in the remote version.

trieve

Trieve is an advanced relevance API for hybrid search, recommendations, and RAG. It offers a range of features including self-hosting, semantic dense vector search, typo tolerant full-text/neural search, sub-sentence highlighting, recommendations, convenient RAG API routes, the ability to bring your own models, hybrid search with cross-encoder re-ranking, recency biasing, tunable popularity-based ranking, filtering, duplicate detection, and grouping. Trieve is designed to be flexible and customizable, allowing users to tailor it to their specific needs. It is also easy to use, with a simple API and well-documented features.

lollms

LoLLMs Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications.

fragments

Fragments is an open-source tool that leverages Anthropic's Claude Artifacts, Vercel v0, and GPT Engineer. It is powered by E2B Sandbox SDK and Code Interpreter SDK, allowing secure execution of AI-generated code. The tool is based on Next.js 14, shadcn/ui, TailwindCSS, and Vercel AI SDK. Users can stream in the UI, install packages from npm and pip, and add custom stacks and LLM providers. Fragments enables users to build web apps with Python interpreter, Next.js, Vue.js, Streamlit, and Gradio, utilizing providers like OpenAI, Anthropic, Google AI, and more.

ragflow

RAGFlow is an open-source Retrieval-Augmented Generation (RAG) engine that combines deep document understanding with Large Language Models (LLMs) to provide accurate question-answering capabilities. It offers a streamlined RAG workflow for businesses of all sizes, enabling them to extract knowledge from unstructured data in various formats, including Word documents, slides, Excel files, images, and more. RAGFlow's key features include deep document understanding, template-based chunking, grounded citations with reduced hallucinations, compatibility with heterogeneous data sources, and an automated and effortless RAG workflow. It supports multiple recall paired with fused re-ranking, configurable LLMs and embedding models, and intuitive APIs for seamless integration with business applications.

GraphRAG-SDK

Build fast and accurate GenAI applications with GraphRAG SDK, a specialized toolkit for building Graph Retrieval-Augmented Generation (GraphRAG) systems. It integrates knowledge graphs, ontology management, and state-of-the-art LLMs to deliver accurate, efficient, and customizable RAG workflows. The SDK simplifies the development process by automating ontology creation, knowledge graph agent creation, and query handling, enabling users to interact and query their knowledge graphs effectively. It supports multi-agent systems and orchestrates agents specialized in different domains. The SDK is optimized for FalkorDB, ensuring high performance and scalability for large-scale applications. By leveraging knowledge graphs, it enables semantic relationships and ontology-driven queries that go beyond standard vector similarity, enhancing retrieval-augmented generation capabilities.

ai-microcore

MicroCore is a collection of python adapters for Large Language Models and Vector Databases / Semantic Search APIs. It allows convenient communication with these services, easy switching between models & services, and separation of business logic from implementation details. Users can keep applications simple and try various models & services without changing application code. MicroCore connects MCP tools to language models easily, supports text completion and chat completion models, and provides features for configuring, installing vendor-specific packages, and using vector databases.

magika

Magika is a novel AI-powered file type detection tool that relies on deep learning to provide accurate detection. It employs a custom, highly optimized model to enable precise file identification within milliseconds. Trained on a dataset of ~100M samples across 200+ content types, achieving an average ~99% accuracy. Used at scale by Google to improve user safety by routing files to security scanners. Available as a command line tool in Rust, Python API, and bindings for Rust, JavaScript/TypeScript, and GoLang.

refact-lsp

Refact Agent is a small executable written in Rust as part of the Refact Agent project. It lives inside your IDE to keep AST and VecDB indexes up to date, supporting connection graphs between definitions and usages in popular programming languages. It functions as an LSP server, offering code completion, chat functionality, and integration with various tools like browsers, databases, and debuggers. Users can interact with it through a Text UI in the command line.

mcp-agent

mcp-agent is a simple, composable framework designed to build agents using the Model Context Protocol. It handles the lifecycle of MCP server connections and implements patterns for building production-ready AI agents in a composable way. The framework also includes OpenAI's Swarm pattern for multi-agent orchestration in a model-agnostic manner, making it the simplest way to build robust agent applications. It is purpose-built for the shared protocol MCP, lightweight, and closer to an agent pattern library than a framework. mcp-agent allows developers to focus on the core business logic of their AI applications by handling mechanics such as server connections, working with LLMs, and supporting external signals like human input.

pyomop

pyomop is a versatile tool designed as an OMOP Swiss Army Knife for working with OHDSI OMOP Common Data Model (CDM) v5.4 or v6 compliant databases using SQLAlchemy as the ORM. It supports converting query results to pandas DataFrames for machine learning pipelines and provides utilities for working with OMOP vocabularies. The tool is lightweight, easy-to-use, and can be used both as a command-line tool and as an imported library in code. It supports SQLite, PostgreSQL, and MySQL databases, LLM-based natural language queries, FHIR to OMOP conversion utilities, and executing QueryLibrary.

doc-comments-ai

doc-comments-ai is a tool designed to automatically generate code documentation using language models. It allows users to easily create documentation comment blocks for methods in various programming languages such as Python, Typescript, Javascript, Java, Rust, and more. The tool supports both OpenAI and local LLMs, ensuring data privacy and security. Users can generate documentation comments for methods in files, inline comments in method bodies, and choose from different models like GPT-3.5-Turbo, GPT-4, and Azure OpenAI. Additionally, the tool provides support for Treesitter integration and offers guidance on selecting the appropriate model for comprehensive documentation needs.

aimeos-laravel

Aimeos Laravel is a professional, full-featured, and ultra-fast Laravel ecommerce package that can be easily integrated into existing Laravel applications. It offers a wide range of features including multi-vendor, multi-channel, and multi-warehouse support, fast performance, support for various product types, subscriptions with recurring payments, multiple payment gateways, full RTL support, flexible pricing options, admin backend, REST and GraphQL APIs, modular structure, SEO optimization, multi-language support, AI-based text translation, mobile optimization, and high-quality source code. The package is highly configurable and extensible, making it suitable for e-commerce SaaS solutions, marketplaces, and online shops with millions of vendors.

For similar tasks

raglite

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with PostgreSQL or SQLite. It offers configurable options for choosing LLM providers, database types, and rerankers. The toolkit is fast and permissive, utilizing lightweight dependencies and hardware acceleration. RAGLite provides features like PDF to Markdown conversion, multi-vector chunk embedding, optimal semantic chunking, hybrid search capabilities, adaptive retrieval, and improved output quality. It is extensible with a built-in Model Context Protocol server, customizable ChatGPT-like frontend, document conversion to Markdown, and evaluation tools. Users can configure RAGLite for various tasks like configuring, inserting documents, running RAG pipelines, computing query adapters, evaluating performance, running MCP servers, and serving frontends.

Co-LLM-Agents

This repository contains code for building cooperative embodied agents modularly with large language models. The agents are trained to perform tasks in two different environments: ThreeDWorld Multi-Agent Transport (TDW-MAT) and Communicative Watch-And-Help (C-WAH). TDW-MAT is a multi-agent environment where agents must transport objects to a goal position using containers. C-WAH is an extension of the Watch-And-Help challenge, which enables agents to send messages to each other. The code in this repository can be used to train agents to perform tasks in both of these environments.

GPT4Point

GPT4Point is a unified framework for point-language understanding and generation. It aligns 3D point clouds with language, providing a comprehensive solution for tasks such as 3D captioning and controlled 3D generation. The project includes an automated point-language dataset annotation engine, a novel object-level point cloud benchmark, and a 3D multi-modality model. Users can train and evaluate models using the provided code and datasets, with a focus on improving models' understanding capabilities and facilitating the generation of 3D objects.

asreview

The ASReview project implements active learning for systematic reviews, utilizing AI-aided pipelines to assist in finding relevant texts for search tasks. It accelerates the screening of textual data with minimal human input, saving time and increasing output quality. The software offers three modes: Oracle for interactive screening, Exploration for teaching purposes, and Simulation for evaluating active learning models. ASReview LAB is designed to support decision-making in any discipline or industry by improving efficiency and transparency in screening large amounts of textual data.

Groma

Groma is a grounded multimodal assistant that excels in region understanding and visual grounding. It can process user-defined region inputs and generate contextually grounded long-form responses. The tool presents a unique paradigm for multimodal large language models, focusing on visual tokenization for localization. Groma achieves state-of-the-art performance in referring expression comprehension benchmarks. The tool provides pretrained model weights and instructions for data preparation, training, inference, and evaluation. Users can customize training by starting from intermediate checkpoints. Groma is designed to handle tasks related to detection pretraining, alignment pretraining, instruction finetuning, instruction following, and more.

amber-train

Amber is the first model in the LLM360 family, an initiative for comprehensive and fully open-sourced LLMs. It is a 7B English language model with the LLaMA architecture. The model type is a language model with the same architecture as LLaMA-7B. It is licensed under Apache 2.0. The resources available include training code, data preparation, metrics, and fully processed Amber pretraining data. The model has been trained on various datasets like Arxiv, Book, C4, Refined-Web, StarCoder, StackExchange, and Wikipedia. The hyperparameters include a total of 6.7B parameters, hidden size of 4096, intermediate size of 11008, 32 attention heads, 32 hidden layers, RMSNorm ε of 1e^-6, max sequence length of 2048, and a vocabulary size of 32000.

kan-gpt

The KAN-GPT repository is a PyTorch implementation of Generative Pre-trained Transformers (GPTs) using Kolmogorov-Arnold Networks (KANs) for language modeling. It provides a model for generating text based on prompts, with a focus on improving performance compared to traditional MLP-GPT models. The repository includes scripts for training the model, downloading datasets, and evaluating model performance. Development tasks include integrating with other libraries, testing, and documentation.

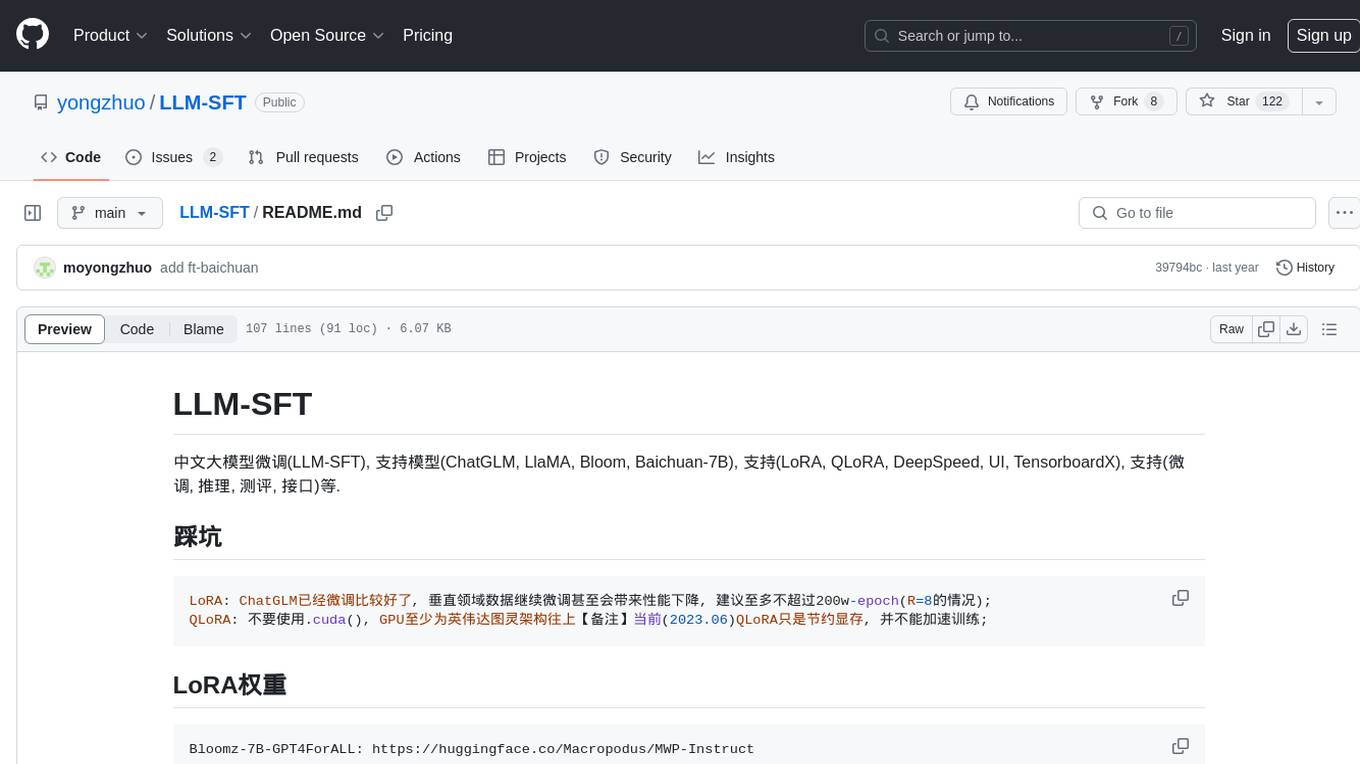

LLM-SFT

LLM-SFT is a fine-tuning tool for Chinese large language models. It supports models such as ChatGLM, LLaMA, Bloom, and Baichuan-7B, along with techniques and tooling such as LoRA, QLoRA, DeepSpeed, a UI, and TensorboardX. It facilitates tasks like fine-tuning, inference, evaluation, and API integration. The tool provides pre-trained weights for various models and datasets for Chinese language processing. It requires specific versions of libraries like transformers and torch for different functionalities.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It has several key features: it is self-contained, with no need for a DBMS or cloud service; it exposes an OpenAPI interface that is easy to integrate with existing infrastructure (e.g., a Cloud IDE); and it supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.