ichigo

Local realtime voice AI

Stars: 2126

Ichigo is a local, real-time voice AI tool that uses an early-fusion technique, inspired by Meta's Chameleon paper, to extend a text-based LLM with native "listening" ability. It is an open research experiment aimed at open-data, open-weight, on-device Siri-like functionality. Recent versions improve multi-turn handling and can refuse to process inaudible queries. Ichigo provides a self-hostable web UI demo and a Gradio demo. The v0.4 models report an MMLU score of 64.63, along with stronger context handling and better noise robustness.

README:

[!NOTE]

Update: September 30, 2024

- We have rebranded from llama3-s to 🍓 Ichigo.

- Our custom-built early-fusion speech model now has a name and a voice.

- It has improved multiturn capabilities and can now refuse to process inaudible queries.

[!WARNING]

🍓 Ichigo is an open research experiment

- Join us in the #research channel in Homebrew's Discord

- We livestream training runs in #research-livestream

🍓 Ichigo is an open, ongoing research experiment to extend a text-based LLM to have native "listening" ability. Think of it as an open data, open weight, on device Siri.

It uses an early fusion technique inspired by Meta's Chameleon paper.
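In early fusion, audio is converted into discrete tokens that share one sequence and one embedding table with the text tokens. It is not encoded by a separate model and merged in later. The sketch below shows how such a sequence could be assembled. It assumes the <|sound_start|>, <|sound_end|> and zero-padded <|sound_XXXX|> token strings from the embedding-resize script in this README. The helper names are hypothetical, and Ichigo's actual prompt template may differ.

```python
# Minimal early-fusion sketch: audio codebook indices become ordinary
# vocabulary tokens placed inline with the text prompt.
# Token strings mirror those added to the tokenizer in this README;
# the helper names and prompt layout are hypothetical illustrations.

NUM_SOUND_TOKENS = 513  # matches range(513) in the embedding-resize script


def sound_token(index: int) -> str:
    """Map one quantizer codebook index to its zero-padded token string."""
    if not 0 <= index < NUM_SOUND_TOKENS:
        raise ValueError(f"codebook index out of range: {index}")
    return f"<|sound_{index:04d}|>"


def fuse_audio_and_text(codes: list[int], text: str = "") -> str:
    """Wrap audio codes in start/end markers and append optional text."""
    audio = "<|sound_start|>" + "".join(sound_token(c) for c in codes) + "<|sound_end|>"
    return audio + text


if __name__ == "__main__":
    print(fuse_audio_and_text([7, 42, 511]))
    # <|sound_start|><|sound_0007|><|sound_0042|><|sound_0511|><|sound_end|>
```

Because the sound tokens are ordinary vocabulary entries, the same decoder reads them with no extra encoder at inference time.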

We build and train in public.

For instructions on how to self-host the Ichigo web UI demo using Docker, please visit: Ichigo demo. To try our demo, which runs on a single RTX 4090 GPU, go directly to: https://ichigo.homebrew.ltd

We offer code for users to create a web UI demo. Please follow the instructions below:

python -m venv demo

source demo/bin/activate

# First install all required packages

pip install --no-cache-dir -r ./demo/requirements.txt

Then run the command below to launch a Gradio demo locally. You can add the use-4bit or use-8bit option for quantized inference:

python -m demo.app --host 0.0.0.0 --port 7860 --max-seq-len 1024
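The use-4bit and use-8bit options trade numerical precision for memory. The sketch below is a rough back-of-the-envelope estimate. It assumes a model of about 8 billion parameters (the Llama 3.1 8B size used elsewhere in this README) and counts weights only, so the KV cache, activations and quantization overhead are all excluded.

```python
# Rough weight-memory estimate for different quantization levels.
# Assumption: ~8e9 parameters; ignores KV cache, activations and
# quantization overhead (scales/zero-points), so real usage is higher.

def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 8e9
    for label, bits in [("bf16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{label}: {weight_memory_gb(params, bits):.1f} GB")
    # bf16: 16.0 GB
    # 8-bit: 8.0 GB
    # 4-bit: 4.0 GB
```

Halving the bits per weight roughly halves the weight footprint. That is the main reason to reach for quantization on smaller GPUs.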

You can also host a demo using vLLM for faster inference, but it does not support streaming output:

python -m demo.app_vllm

Alternatively, you can easily try our demo on HuggingFace 🤗

- 11 Nov: Ichigo v0.4 models are now available. This update introduces a unified training pipeline by consolidating Phases 2 and 3, with training data enhancements that include migrating speech noise and multi-turn data to Phase 2 and adding synthetic noise-augmented multi-turn conversations. Achieving an improved MMLU score of 64.63, the model now boasts stronger context handling, advanced noise management, and enhanced multi-turn capabilities for a more robust and responsive user experience.

- 22 Oct: 📑 Research Paper Release: We are pleased to announce the publication of our research paper detailing the development and technical innovations behind the Ichigo series. The full technical details, methodology, and experimental results are available in the paper.

- 4 Oct: Ichigo v0.3 models are now available. Trained on cleaner, improved data, the model achieves an MMLU score of 63.79 and shows stronger speech instruction-following, including in multi-turn interactions. By incorporating synthetic noise data, we also trained the model to refuse to process non-speech audio input.

- 23 Aug: We’re excited to share Ichigo-llama3.1-s-instruct-v0.2, our latest multimodal checkpoint with improved speech understanding by enhancing the model's audio instruction-following capabilities through training on interleaving synthetic data.

- 17 Aug: We pre-trained our LLaMA 3.1 model on continuous speech data, tokenized using WhisperSpeechVQ. The final loss converged to approximately 1.9, resulting in our checkpoint: Ichigo-llama3.1-s-base-v0.2

- 1 Aug: Identified typo in original training recipe, causing significant degradation (MMLU: 0.6 -> 0.2), proposed fixes.

- 30 July: Presented llama3-s progress at: AI Training: From PyTorch to GPU Clusters

- 19 July: llama3-s-2024-07-19 understands synthetic voice with limited results

- 1 July: llama3-s-2024-07-08 showed converging loss (1.7) with limited data
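The changelog reports training losses of about 1.9 and 1.7. The sketch below converts them to perplexity with exp(loss). It assumes these are mean per-token cross-entropy values in nats, which is the usual convention for language-model training but is not stated in the changelog.

```python
import math

# Convert mean per-token cross-entropy (in nats) to perplexity.
# Assumption: the reported losses are natural-log cross-entropy per token.

def perplexity(loss_nats: float) -> float:
    return math.exp(loss_nats)


if __name__ == "__main__":
    for loss in (1.9, 1.7):
        print(f"loss {loss} -> perplexity {perplexity(loss):.2f}")
    # loss 1.9 -> perplexity 6.69
    # loss 1.7 -> perplexity 5.47
```

Losses measured on different data or token mixes are not directly comparable, so treat these numbers as rough orientation only.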

For detailed information on synthetic generation, please refer to the Synthetic Generation Guide.
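The v0.3 entry above describes training on synthetic noise data so that the model refuses non-speech input. The sketch below shows what one such training pair could look like: random sound tokens stand in for inaudible audio, and the target is a fixed refusal. It is hypothetical throughout. The function name, message schema and refusal text are illustrative assumptions, not the project's actual data format; the Synthetic Generation Guide describes the real pipeline.

```python
import random

# Hypothetical construction of a "refuse inaudible input" training sample.
# Random codebook indices stand in for tokenized noise; the schema, the
# function name and the refusal wording are illustrative only.

NUM_SOUND_TOKENS = 513
REFUSAL = "Sorry, I couldn't make out any speech in that audio. Could you try again?"


def make_noise_refusal_sample(length: int, rng: random.Random) -> list[dict]:
    """Build a user turn of random sound tokens paired with a refusal."""
    codes = [rng.randrange(NUM_SOUND_TOKENS) for _ in range(length)]
    audio = "<|sound_start|>" + "".join(f"<|sound_{c:04d}|>" for c in codes) + "<|sound_end|>"
    return [
        {"role": "user", "content": audio},
        {"role": "assistant", "content": REFUSAL},
    ]


if __name__ == "__main__":
    sample = make_noise_refusal_sample(length=5, rng=random.Random(0))
    print(sample[0]["content"][:40], "...")
    print(sample[1]["content"])
```

Passing in a seeded random.Random keeps generated datasets reproducible.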

- First, clone the repo from GitHub:

git clone --recurse-submodules https://github.com/homebrewltd/ichigo.git

- The folder structure is as follows:

Ichigo
├── HF_Trainer # HF training code (deprecated)
├── synthetic_data # Synthetic data generation pipeline
│ ├── configs # Audio pipeline configs
│ │ ├── audio_to_audio # Parler audio (.wav) to semantic tokens
│ │ ├── synthetic_generation_config # TTS semantic tokens
├── scripts # Setup scripts for Runpod
├── torchtune # Submodule: our fork of fsdp with checkpointing
├── model_zoo # Model checkpoints
│ ├── LLM
│ │ ├── Meta-Llama-3-8B-Instruct
│ │ ├── Meta-Llama-3-70B-Instruct
├── demo # Self-host this demo (vLLM)
├── inference # Google Colab

- Install Dependencies

python -m venv hf_trainer

chmod +x scripts/install.sh

./scripts/install.sh

Restart shell now

chmod +x scripts/setup.sh

./scripts/setup.sh

source myenv/bin/activate

- Log in to Hugging Face

huggingface-cli login --token=<token>

- Training

export CUTLASS_PATH="cutlass"

export CUDA_VISIBLE_DEVICES=0,1,2,3,4,5,6,7

accelerate launch --config_file ./accelerate_config.yaml train.py

- Install Package

python -m venv torchtune

pip install torch torchvision torchao tensorboard

mkdir model_zoo

cd ./torchtune

pip install -e .

- Log in to Hugging Face:

huggingface-cli login --token=<token>

- Download the tokenizer.model and the required model using tune in the ichigo/model_zoo directory:

tune download homebrewltd/llama3.1-s-whispervq-init --output-dir ../model_zoo/llama3.1-s-whispervq-init --ignore-patterns "original/consolidated*"

[NOTE]: If you want to use a different base model, you can upload your own model with resized embeddings to the Hugging Face Hub:

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

# base model whose token embeddings will be resized
model_name = "meta-llama/Llama-3.2-3B-Instruct"
model = AutoModelForCausalLM.from_pretrained(model_name, device_map="cpu", torch_dtype=torch.bfloat16)
tokenizer = AutoTokenizer.from_pretrained(model_name)

# 513 discrete sound tokens plus start/end marker tokens
sound_tokens = [f'<|sound_{num:04d}|>' for num in range(513)]
special_tokens = ["<|sound_start|>", "<|sound_end|>"]
num_added_tokens = tokenizer.add_special_tokens({"additional_special_tokens": special_tokens})
tokenizer.add_tokens(sound_tokens)

# grow the embedding matrix to cover the enlarged vocabulary, then upload
model.resize_token_embeddings(len(tokenizer))
model.push_to_hub("<your_hf>/Llama3.1-s-whispervq-init")
tokenizer.push_to_hub("<your_hf>/Llama3.1-s-whispervq-init")
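After resizing, each <|sound_XXXX|> string corresponds to one codebook index. When inspecting generated output, it can help to map those strings back to integers. The pure-Python sketch below does this. It assumes the zero-padded naming from the script above, and the helper name parse_sound_tokens is hypothetical.

```python
import re

# Recover codebook indices from text that contains sound tokens.
# Assumes the '<|sound_{num:04d}|>' naming used when the tokens were added;
# parse_sound_tokens is a hypothetical helper, not part of the repo.
SOUND_TOKEN_RE = re.compile(r"<\|sound_(\d{4})\|>")


def parse_sound_tokens(text: str) -> list[int]:
    """Return the codebook indices in order of appearance."""
    return [int(m) for m in SOUND_TOKEN_RE.findall(text)]


if __name__ == "__main__":
    print(parse_sound_tokens("<|sound_start|><|sound_0003|><|sound_0512|><|sound_end|>"))
    # [3, 512]
```

The start and end markers do not match the four-digit pattern, so they are skipped. The script above adds 2 special tokens plus 513 sound tokens, so len(tokenizer) should grow by 515 if none of them existed before.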

- Pretraining Multi GPU (1-8 GPUs supported)

tune run --nproc_per_node <no-gpu> full_finetune_fsdp2 --config recipes/configs/jan-llama3-1-s/pretrain/8B_full.yaml

[NOTE]: After training finishes, use this script to convert the checkpoint to a format that can be loaded by HF Transformers:

import glob

import torch
from tqdm import tqdm
from transformers import AutoModelForCausalLM, AutoTokenizer

# folder containing the checkpoint files
output_dir = "../model_zoo/llama3-1-s-base"

# merge the sharded state dicts into a single pytorch_model.bin
pt_to_merge = sorted(glob.glob(f"{output_dir}/hf_model_000*_1.pt"))
state_dicts = [torch.load(p, map_location="cpu") for p in tqdm(pt_to_merge)]
merged_state_dicts = {k: v for d in state_dicts for k, v in d.items()}
torch.save(merged_state_dicts, f"{output_dir}/pytorch_model.bin")

model = AutoModelForCausalLM.from_pretrained(output_dir, torch_dtype=torch.bfloat16)
print(model)

tokenizer_path = "homebrewltd/llama3.1-s-whispervq-init"
tokenizer = AutoTokenizer.from_pretrained(tokenizer_path)

# save the tokenizer locally, then push the model and tokenizer to the Hub
tokenizer.save_pretrained(output_dir)
model.push_to_hub("<your_hf>/Llama3.1-s-base")
tokenizer.push_to_hub("<your_hf>/Llama3.1-s-base")
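The merge step in the script above uses a dict comprehension. If two shards ever contained the same parameter name, the later one would silently win. The defensive variant below fails loudly instead. It is sketched with plain dicts so it runs without a checkpoint, and the function name merge_shards is hypothetical.

```python
from typing import Any, Mapping


def merge_shards(shards: list[Mapping[str, Any]]) -> dict[str, Any]:
    """Merge per-shard state dicts, refusing duplicate parameter names.

    Hypothetical helper: a stricter alternative to the dict comprehension
    in the conversion script.
    """
    merged: dict[str, Any] = {}
    for i, shard in enumerate(shards):
        for key, value in shard.items():
            if key in merged:
                raise KeyError(f"parameter {key!r} appears again in shard {i}")
            merged[key] = value
    return merged


if __name__ == "__main__":
    a = {"model.embed_tokens.weight": 1}
    b = {"lm_head.weight": 2}
    print(sorted(merge_shards([a, b])))
    # ['lm_head.weight', 'model.embed_tokens.weight']
```

In the conversion script, merged_state_dicts = merge_shards(state_dicts) would be a drop-in replacement.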

- Instruction Tuning

Download the checkpoint from Hugging Face using tune, or use your local pretrained checkpoint located at model_zoo/llama3-1-s-base:

tune run --nproc_per_node <no-gpu> full_finetune_fsdp2 --config recipes/configs/jan-llama3-1-s/finetune/8B_full.yaml
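Both tune run commands leave --nproc_per_node as a <no-gpu> placeholder, and pretraining is documented for 1 to 8 GPUs. The sketch below derives that value from CUDA_VISIBLE_DEVICES (as exported in the accelerate training step) and assembles the command as an argument list. The helper name build_tune_command is hypothetical, and the command is only built, not executed.

```python
import shlex

# Build a `tune run` command whose --nproc_per_node matches the GPUs listed
# in CUDA_VISIBLE_DEVICES. The 1-8 bound follows the README's stated support.
# build_tune_command is a hypothetical helper, not part of torchtune.

def build_tune_command(visible_devices: str, config: str) -> list[str]:
    gpus = [d for d in visible_devices.split(",") if d.strip()]
    if not 1 <= len(gpus) <= 8:
        raise ValueError(f"expected 1-8 GPUs, got {len(gpus)}")
    return [
        "tune", "run",
        "--nproc_per_node", str(len(gpus)),
        "full_finetune_fsdp2",
        "--config", config,
    ]


if __name__ == "__main__":
    cmd = build_tune_command(
        "0,1,2,3,4,5,6,7",
        "recipes/configs/jan-llama3-1-s/finetune/8B_full.yaml",
    )
    print(shlex.join(cmd))
```

In a real launcher, read os.environ.get("CUDA_VISIBLE_DEVICES", "0") and pass the list to subprocess.run(cmd, check=True). Using an argument list avoids shell-quoting problems with config paths.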

@misc{chameleonteam2024chameleonmixedmodalearlyfusionfoundation,

title={Chameleon: Mixed-Modal Early-Fusion Foundation Models},

author={Chameleon Team},

year={2024},

eprint={2405.09818},

archivePrefix={arXiv},

primaryClass={cs.CL},

journal={arXiv preprint}

}

@misc{zhang2024adamminiusefewerlearning,

title={Adam-mini: Use Fewer Learning Rates To Gain More},

author={Yushun Zhang and Congliang Chen and Ziniu Li and Tian Ding and Chenwei Wu and Yinyu Ye and Zhi-Quan Luo and Ruoyu Sun},

year={2024},

eprint={2406.16793},

archivePrefix={arXiv},

primaryClass={cs.LG},

journal={arXiv preprint}

}

@misc{defossez2022highfi,

title={High Fidelity Neural Audio Compression},

author={Défossez, Alexandre and Copet, Jade and Synnaeve, Gabriel and Adi, Yossi},

year={2022},

eprint={2210.13438},

archivePrefix={arXiv},

journal={arXiv preprint}

}

@misc{WhisperSpeech,

title={WhisperSpeech: An Open Source Text-to-Speech System Built by Inverting Whisper},

author={Collabora and LAION},

year={2024},

url={https://github.com/collabora/WhisperSpeech},

note={GitHub repository}

}

🍓 Ichigo is an open research project. We're looking for collaborators, and will likely move towards crowdsourcing speech datasets in the future.

- Torchtune: The codebase we built upon

- Accelerate: Library for easy use of distributed training

- WhisperSpeech: Text-to-speech model for synthetic audio generation

- Encodec: High-fidelity neural audio codec for efficient audio compression

- Llama3: the family of models we build on, with its amazing language capabilities!


Alternative AI tools for ichigo

Similar Open Source Tools


ALMA

ALMA (Advanced Language Model-based Translator) is a many-to-many LLM-based translation model that utilizes a two-step fine-tuning process on monolingual and parallel data to achieve strong translation performance. ALMA-R builds upon ALMA models with LoRA fine-tuning and Contrastive Preference Optimization (CPO) for even better performance, surpassing GPT-4 and WMT winners. The repository provides ALMA and ALMA-R models, datasets, environment setup, evaluation scripts, training guides, and data information for users to leverage these models for translation tasks.

catai

CatAI is a tool that allows users to run GGUF models on their computer with a chat UI. It serves as a local AI assistant inspired by Node-Llama-Cpp and Llama.cpp. The tool provides features such as auto-detecting programming language, showing original messages by clicking on user icons, real-time text streaming, and fast model downloads. Users can interact with the tool through a CLI that supports commands for installing, listing, setting, serving, updating, and removing models. CatAI is cross-platform and supports Windows, Linux, and Mac. It utilizes node-llama-cpp and offers a simple API for asking model questions. Additionally, developers can integrate the tool with node-llama-cpp@beta for model management and chatting. The configuration can be edited via the web UI, and contributions to the project are welcome. The tool is licensed under Llama.cpp's license.

understand-r1-zero

The 'understand-r1-zero' repository focuses on understanding R1-Zero-like training from a critical perspective. It provides insights into base models and reinforcement learning components, highlighting findings and proposing solutions for biased optimization. The repository offers a minimalist recipe for R1-Zero training, detailing the RL-tuning process and achieving state-of-the-art performance with minimal compute resources. It includes codebase, models, and paper related to R1-Zero training implemented with the Oat framework, emphasizing research-friendly and efficient LLM RL techniques.

gem

GEM is an open-source General Experience Maker designed for training Large Language Models (LLMs) in dynamic environments. Similar to OpenAI Gym for traditional Reinforcement Learning, GEM provides a variety of environments with standardized interfaces for seamless integration with existing LLM training frameworks. It offers tool integration, flexible wrappers, async vectorized environment execution, multi-environment training, and more to simplify LLM agent training.

speech-to-speech

This repository implements a speech-to-speech cascaded pipeline with consecutive parts including Voice Activity Detection (VAD), Speech to Text (STT), Language Model (LM), and Text to Speech (TTS). It aims to provide a fully open and modular approach by leveraging models available on the Transformers library via the Hugging Face hub. The code is designed for easy modification, with each component implemented as a class. Users can run the pipeline either on a server/client approach or locally, with detailed setup and usage instructions provided in the readme.

VideoTuna

VideoTuna is a codebase for text-to-video applications that integrates multiple AI video generation models for text-to-video, image-to-video, and text-to-image generation. It provides comprehensive pipelines in video generation, including pre-training, continuous training, post-training, and fine-tuning. The models in VideoTuna include U-Net and DiT architectures for visual generation tasks, with upcoming releases of a new 3D video VAE and a controllable facial video generation model.

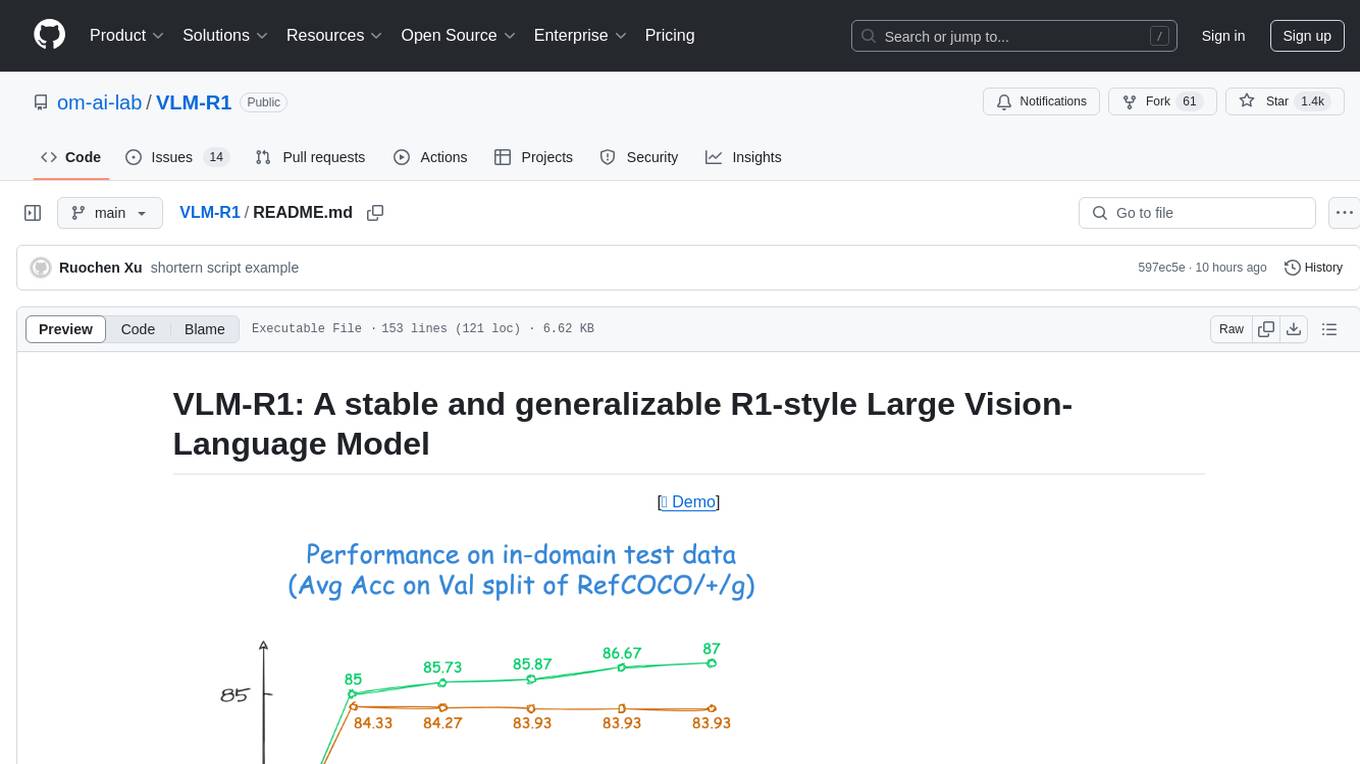

VLM-R1

VLM-R1 is a stable and generalizable R1-style Large Vision-Language Model proposed for Referring Expression Comprehension (REC) task. It compares R1 and SFT approaches, showing R1 model's steady improvement on out-of-domain test data. The project includes setup instructions, training steps for GRPO and SFT models, support for user data loading, and evaluation process. Acknowledgements to various open-source projects and resources are mentioned. The project aims to provide a reliable and versatile solution for vision-language tasks.

evalverse

Evalverse is an open-source project designed to support Large Language Model (LLM) evaluation needs. It provides a standardized and user-friendly solution for processing and managing LLM evaluations, catering to AI research engineers and scientists. Evalverse supports various evaluation methods, insightful reports, and no-code evaluation processes. Users can access unified evaluation with submodules, request evaluations without code via Slack bot, and obtain comprehensive reports with scores, rankings, and visuals. The tool allows for easy comparison of scores across different models and swift addition of new evaluation tools.

sdk-python

Strands Agents is a lightweight and flexible SDK that takes a model-driven approach to building and running AI agents. It supports various model providers, offers advanced capabilities like multi-agent systems and streaming support, and comes with built-in MCP server support. Users can easily create tools using Python decorators, integrate MCP servers seamlessly, and leverage multiple model providers for different AI tasks. The SDK is designed to scale from simple conversational assistants to complex autonomous workflows, making it suitable for a wide range of AI development needs.

scikit-llm

Scikit-LLM is a tool that seamlessly integrates powerful language models like ChatGPT into scikit-learn for enhanced text analysis tasks. It allows users to leverage large language models for various text analysis applications within the familiar scikit-learn framework. The tool simplifies the process of incorporating advanced language processing capabilities into machine learning pipelines, enabling users to benefit from the latest advancements in natural language processing.

FlashRank

FlashRank is an ultra-lite and super-fast Python library designed to add re-ranking capabilities to existing search and retrieval pipelines. It is based on state-of-the-art Language Models (LLMs) and cross-encoders, offering support for pairwise/pointwise rerankers and listwise LLM-based rerankers. The library boasts the tiniest reranking model in the world (~4MB) and runs on CPU without the need for Torch or Transformers. FlashRank is cost-conscious, with a focus on low cost per invocation and smaller package size for efficient serverless deployments. It supports various models like ms-marco-TinyBERT, ms-marco-MiniLM, rank-T5-flan, ms-marco-MultiBERT, and more, with plans for future model additions. The tool is ideal for enhancing search precision and speed in scenarios where lightweight models with competitive performance are preferred.

AgentFly

AgentFly is an extensible framework for building LLM agents with reinforcement learning. It supports multi-turn training by adapting traditional RL methods with token-level masking. It features a decorator-based interface for defining tools and reward functions, enabling seamless extension and ease of use. To support high-throughput training, it executes tool calls and reward computations asynchronously and uses a centralized resource management system for scalable environment coordination. A suite of prebuilt tools and environments is provided.

yomo

YoMo is an open-source LLM Function Calling Framework for building Geo-distributed AI applications. It is built atop QUIC Transport Protocol and Stateful Serverless architecture, making AI applications low-latency, reliable, secure, and easy. The framework focuses on providing low-latency, secure, stateful serverless functions that can be distributed geographically to bring AI inference closer to end users. It offers features such as low-latency communication, security with TLS v1.3, stateful serverless functions for faster GPU processing, geo-distributed architecture, and a faster-than-real-time codec called Y3. YoMo enables developers to create and deploy stateful serverless functions for AI inference in a distributed manner, ensuring quick responses to user queries from various locations worldwide.

graphiti

Graphiti is a framework for building and querying temporally-aware knowledge graphs, tailored for AI agents in dynamic environments. It continuously integrates user interactions, structured and unstructured data, and external information into a coherent, queryable graph. The framework supports incremental data updates, efficient retrieval, and precise historical queries without complete graph recomputation, making it suitable for developing interactive, context-aware AI applications.

Avalon-LLM

Avalon-LLM is a repository containing the official code for AvalonBench and the Avalon agent Strategist. AvalonBench evaluates Large Language Models (LLMs) playing The Resistance: Avalon, a board game requiring deductive reasoning, coordination, collaboration, and deception skills. Strategist utilizes LLMs to learn strategic skills through self-improvement, including high-level strategic evaluation and low-level execution guidance. The repository provides instructions for running AvalonBench, setting up Strategist, and conducting experiments with different agents in the game environment.

For similar tasks


blinkid-ios

BlinkID iOS is a mobile SDK that enables developers to easily integrate ID scanning and data extraction capabilities into their iOS applications. The SDK supports scanning and processing various types of identity documents, such as passports, driver's licenses, and ID cards. It provides accurate and fast data extraction, including personal information and document details. With BlinkID iOS, developers can enhance their apps with secure and reliable ID verification functionality, improving user experience and streamlining identity verification processes.

Conversational-Azure-OpenAI-Accelerator

The Conversational Azure OpenAI Accelerator is a tool designed to provide rapid, no-cost custom demos tailored to customer use cases, from internal HR/IT to external contact centers. It focuses on top use cases of GenAI conversation and summarization, plus live backend data integration. The tool automates conversations across voice and text channels, providing a valuable way to save money and improve customer and employee experience. By combining Azure OpenAI + Cognitive Search, users can efficiently deploy a ChatGPT experience using web pages, knowledge base articles, and data sources. The tool enables simultaneous deployment of conversational content to chatbots, IVR, voice assistants, and more in one click, eliminating the need for in-depth IT involvement. It leverages Microsoft's advanced AI technologies, resulting in a conversational experience that can converse in human-like dialogue, respond intelligently, and capture content for omni-channel unified analytics.

ai-apps

ai-apps is a collection of browser extensions that enhance various AI-powered services like Amazon shopping, Brave Search, ChatGPT, DuckDuckGo, and Google Search. These extensions provide functionalities such as adding AI answers to search engines, auto-clearing ChatGPT query history, auto-playing ChatGPT responses, keeping ChatGPT sessions fresh, and more. The repository offers tools to improve user experience and interaction with AI technologies across different platforms and services.

telegram-deepseek-bot

This repository contains a Telegram bot built with Golang that integrates with DeepSeek API to provide AI-powered responses. The bot supports streaming replies, making interactions feel more natural and dynamic. It offers features like AI responses, streaming output, command handling, and easy deployment. Users can configure the bot via environment variables for customization. The bot can be deployed locally or on a cloud server, and it supports custom commands and real-time responses.

ai-on-gke

This repository contains assets related to AI/ML workloads on Google Kubernetes Engine (GKE). Run optimized AI/ML workloads with GKE platform orchestration capabilities. A robust AI/ML platform considers the following layers:

- Infrastructure orchestration that supports GPUs and TPUs for training and serving workloads at scale

- Flexible integration with distributed computing and data processing frameworks

- Support for multiple teams on the same infrastructure to maximize resource utilization

ray

Ray is a unified framework for scaling AI and Python applications. It consists of a core distributed runtime and a set of AI libraries for simplifying ML compute, including Data, Train, Tune, RLlib, and Serve. Ray runs on any machine, cluster, cloud provider, and Kubernetes, and features a growing ecosystem of community integrations. With Ray, you can seamlessly scale the same code from a laptop to a cluster, making it easy to meet the compute-intensive demands of modern ML workloads.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, covering everything from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.