ALMA

State-of-the-art LLM-based translation models.

Stars: 308

ALMA (Advanced Language Model-based Translator) is a many-to-many LLM-based translation model that uses a two-step fine-tuning process on monolingual and parallel data to achieve strong translation performance. ALMA-R builds upon ALMA models with LoRA fine-tuning and Contrastive Preference Optimization (CPO) for even better performance, matching or even exceeding GPT-4 and WMT winners. The repository provides ALMA and ALMA-R models, datasets, environment setup, evaluation scripts, training guides, and data information for users who want to apply these models to translation tasks.

README:

ALMA (Advanced Language Model-based TrAnslator) is a many-to-many LLM-based translation model, which adopts a new translation model paradigm: it begins with fine-tuning on monolingual data and is further optimized using high-quality parallel data. This two-step fine-tuning process ensures strong translation performance.

ALMA-R (NEW!) builds upon ALMA models through further LoRA fine-tuning with our proposed Contrastive Preference Optimization (CPO), as opposed to the supervised fine-tuning used in ALMA. CPO fine-tuning requires our triplet preference data for preference learning. ALMA-R can now match or even exceed GPT-4 and WMT winners!

The original ALMA repository can be found here.

⭐ May.1 CPO paper has been accepted at ICML 2024!

⭐ Mar.22 2024 The CPO method is now merged into huggingface trl! See details here.

⭐ Jan.16 2024 ALMA-R is Released! Please check more details with our new paper: Contrastive Preference Optimization: Pushing the Boundaries of LLM Performance in Machine Translation.

⭐ Jan.16 2024 The ALMA paper: A Paradigm Shift in Machine Translation: Boosting Translation Performance of Large Language Models has been accepted at ICLR 2024! Check out more details here!

⭐ Supports ⭐

- AMD and Nvidia Cards

- Data Parallel Evaluation

- Also supports LLaMA-1, LLaMA-2, OPT, Falcon, BLOOM, MPT

- LoRA Fine-tuning

- Monolingual data fine-tuning, parallel data fine-tuning

We release six translation models presented in the paper:

- ALMA-7B

- ALMA-7B-LoRA

- ALMA-7B-R (NEW!): Further LoRA fine-tuning upon ALMA-7B-LoRA with contrastive preference optimization.

- ALMA-13B

- ALMA-13B-LoRA

- ALMA-13B-R (NEW!): Further LoRA fine-tuning upon ALMA-13B-LoRA with contrastive preference optimization (BEST MODEL!).

We have also provided the WMT'22 and WMT'23 translation outputs from ALMA-13B-LoRA and ALMA-13B-R in the outputs directory. These outputs also include our baseline outputs and can be directly accessed and used for subsequent evaluations.

Model checkpoints are released at huggingface:

| Models | Base Model Link | LoRA Link |
|---|---|---|
| ALMA-7B | haoranxu/ALMA-7B | - |
| ALMA-7B-LoRA | haoranxu/ALMA-7B-Pretrain | haoranxu/ALMA-7B-Pretrain-LoRA |
| ALMA-7B-R (NEW!) | haoranxu/ALMA-7B-R (LoRA merged) | - |
| ALMA-13B | haoranxu/ALMA-13B | - |
| ALMA-13B-LoRA | haoranxu/ALMA-13B-Pretrain | haoranxu/ALMA-13B-Pretrain-LoRA |
| ALMA-13B-R (NEW!) | haoranxu/ALMA-13B-R (LoRA merged) | - |

Note that ALMA-7B-Pretrain and ALMA-13B-Pretrain are NOT translation models. They have only undergone stage 1 monolingual fine-tuning (20B tokens for the 7B model and 12B tokens for the 13B model) and should be used in conjunction with their LoRA models.
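As a rough sketch of what pairing a stage 1 checkpoint with its LoRA model can look like, the function below loads a base model from the table above and attaches the matching adapter. It assumes the `peft` library is installed and that the LoRA repositories are standard PEFT adapters; the function name `load_alma_lora` is illustrative and not part of this repository.

```python
def load_alma_lora(
    base_id: str = "haoranxu/ALMA-13B-Pretrain",
    lora_id: str = "haoranxu/ALMA-13B-Pretrain-LoRA",
):
    """Load a stage 1 base model and attach its LoRA adapter (illustrative helper)."""
    # Heavy imports live inside the function so that defining it stays cheap.
    import torch
    from peft import PeftModel  # assumption: peft is installed
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Load the stage 1 (monolingual fine-tuned) base model in half precision.
    base = AutoModelForCausalLM.from_pretrained(
        base_id, torch_dtype=torch.float16, device_map="auto"
    )
    # Attach the stage 2 LoRA weights on top of the base model.
    model = PeftModel.from_pretrained(base, lora_id)
    tokenizer = AutoTokenizer.from_pretrained(base_id, padding_side="left")
    return model, tokenizer
```

Calling `load_alma_lora()` downloads the weights, so it needs network access and enough GPU memory for the chosen model size.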

Datasets used by ALMA and ALMA-R are also released at huggingface now (NEW!)

| Datasets | Train / Validation | Test |
|---|---|---|
| Human-Written Parallel Data (ALMA) | train and validation | WMT'22 |
| Triplet Preference Data | train | WMT'22 and WMT'23 |

A quick start to use our best system (ALMA-13B-R) for translation. An example of translating "我爱机器翻译。" into English:

import torch
from transformers import AutoModelForCausalLM
from transformers import AutoTokenizer

# Load the model (LoRA weights are already merged) and the tokenizer
model = AutoModelForCausalLM.from_pretrained("haoranxu/ALMA-13B-R", torch_dtype=torch.float16, device_map="auto")
tokenizer = AutoTokenizer.from_pretrained("haoranxu/ALMA-13B-R", padding_side='left')

# Add the source sentence into the prompt template
prompt="Translate this from Chinese to English:\nChinese: 我爱机器翻译。\nEnglish:"
input_ids = tokenizer(prompt, return_tensors="pt", padding=True, max_length=40, truncation=True).input_ids.cuda()

# Translation
with torch.no_grad():
    generated_ids = model.generate(input_ids=input_ids, num_beams=5, max_new_tokens=20, do_sample=True, temperature=0.6, top_p=0.9)
outputs = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)
print(outputs)
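Because a causal language model returns the prompt followed by its continuation, each decoded string in `outputs` starts with the prompt text. The helper below is a minimal sketch of one way to keep only the translation; `strip_prompt` is a hypothetical name, and it assumes the decoded text still contains the "<target language name>:" line exactly as it was written in the prompt.

```python
def strip_prompt(decoded: str, target_language: str = "English") -> str:
    """Return the text that follows the first '<target language>:' line marker."""
    marker = f"\n{target_language}:"
    # partition splits on the first occurrence, which is the marker from the prompt.
    _, found, tail = decoded.partition(marker)
    # If the marker is missing, fall back to the whole string rather than guessing.
    return tail.strip() if found else decoded.strip()


# Example with a hand-written decoded string (not real model output).
decoded_example = (
    "Translate this from Chinese to English:\n"
    "Chinese: 我爱机器翻译。\n"
    "English: I love machine translation."
)
translation = strip_prompt(decoded_example)
print(translation)
```

Applied to the quick start, this would be `[strip_prompt(o) for o in outputs]`.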

The general translation prompt is:

Translate this from <source language name> to <target language name>:

<source language name>: <source language sentence>

<target language name>:
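The template can be filled in programmatically. The sketch below uses a hypothetical helper, `build_prompt`, that is not part of the repository; its connective word follows the quick-start example ("from Chinese to English").

```python
def build_prompt(source_language: str, target_language: str, sentence: str) -> str:
    """Fill the three-line translation prompt template for one sentence."""
    return (
        f"Translate this from {source_language} to {target_language}:\n"
        f"{source_language}: {sentence}\n"
        f"{target_language}:"
    )


prompt = build_prompt("Chinese", "English", "我爱机器翻译。")
print(prompt)
```

The language arguments are full language names (for example "Chinese"), not codes such as "zh".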

conda create -n alma-r python=3.11

conda activate alma-r

If you use Nvidia GPUs, install torch with CUDA 11.8:

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118

If you use AMD GPUs, install torch with ROCm 5.6:

pip3 install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/rocm5.6

Then install other dependencies:

bash install_alma.sh

This is a quick start to evaluate our ALMA-13B-R model. To evaluate on WMT'23 instead of WMT'22, pass --override_test_data_path haoranxu/WMT23-Test (see evals/alma_13b_r_wmt23.sh for an example). To produce translation outputs for WMT'22 in both the en→cs and cs→en directions, run the following command:

accelerate launch --config_file configs/deepspeed_eval_config_bf16.yaml \
    run_llmmt.py \
    --model_name_or_path haoranxu/ALMA-13B-R \
    --do_predict \
    --low_cpu_mem_usage \
    --language_pairs en-cs,cs-en \
    --mmt_data_path ./human_written_data/ \
    --per_device_eval_batch_size 1 \
    --output_dir ./your_output_dir/ \
    --predict_with_generate \
    --max_new_tokens 256 \
    --max_source_length 256 \
    --bf16 \
    --seed 42 \
    --num_beams 5 \
    --overwrite_cache \
    --overwrite_output_dir

The generated outputs will be saved in your_output_dir. The translation file for the en→cs direction is named test-en-cs, and the file for the cs→en direction is named test-cs-en.
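To check which output files to expect for a given set of directions, the naming rule above can be written as a small helper. This is only a sketch: `expected_output_files` is a hypothetical function, and it assumes every direction follows the same `test-<src>-<tgt>` pattern shown for en-cs and cs-en.

```python
from pathlib import Path


def expected_output_files(output_dir: str, test_pairs: str) -> dict[str, Path]:
    """Map each direction in a comma-separated list to its expected output file."""
    files = {}
    for pair in test_pairs.split(","):
        pair = pair.strip()
        if pair:  # skip empty entries such as a trailing comma
            files[pair] = Path(output_dir) / f"test-{pair}"
    return files


files = expected_output_files("./your_output_dir", "en-cs,cs-en")
for pair, path in files.items():
    # Report whether the evaluation run has already produced this file.
    print(pair, path, "exists" if path.exists() else "missing")
```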

We have prepared a bash file for the user to easily run the evaluation:

bash evals/alma_13b_r.sh ${your_output_dir} ${test_pairs}

The variable ${test_pairs} denotes the translation directions you wish to evaluate. It supports testing multiple directions at once. For example, you can use de-en,en-de,en-cs,cs-en. Once the bash script completes its execution, both the BLEU scores and COMET results will be automatically displayed.

Note that this will perform data-parallel evaluation supported by deepspeed: that is, placing a single full copy of your model onto each available GPU and splitting batches across GPUs to evaluate on K GPUs K times faster than on one. For those with limited GPU memory, we offer an alternative method. The user can pass --multi_gpu_one_model to run the process by distributing a single model across multiple GPUs. Please see evaluation examples in evals/alma_13b_r.sh or evals/*no_parallel files.

To append examples to the prompt, pass the --few_shot_eval_path flag and specify the location of the shot files. As a demonstration, you can execute the following command:

bash evals/llama-2-13b-5-shot.sh ${OUTPUT_DIR} ${test_pairs}

Here we show how to

- (NEW!) run Contrastive Preference Optimization on ALMA models (ALMA→ALMA-R)

- fine-tune LLaMA-2-7B on monolingual OSCAR data (stage 1)

- fine-tune on human-written parallel data once stage 1 is completed, using either full-weight or LoRA fine-tuning (stage 2)

To run the CPO fine-tuning with our triplet preference data, run the following command:

bash runs/cpo_ft.sh ${your_output_dir}

To execute the OSCAR monolingual fine-tuning, use the following command:

bash runs/mono_ft.sh ${your_output_dir}

Once the monolingual data fine-tuning is complete, proceed to the parallel data fine-tuning using the full-weight approach. Execute the following command:

bash runs/parallel_ft.sh ${your_output_dir} ${training_pairs}

where training_pairs specifies the translation directions to train on. The default is all 10 directions: de-en,cs-en,is-en,zh-en,ru-en,en-de,en-cs,en-is,en-zh,en-ru.
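The default list is every non-English language paired with English in both directions. As an illustration, the sketch below rebuilds that comma-separated string from the five language codes; `default_training_pairs` is a hypothetical helper, not a function from the repository.

```python
LANGUAGES = ["de", "cs", "is", "zh", "ru"]  # language codes taken from the default list


def default_training_pairs(languages=LANGUAGES) -> str:
    """Build the comma-separated direction list: xx-en first, then en-xx."""
    into_english = [f"{lang}-en" for lang in languages]
    from_english = [f"en-{lang}" for lang in languages]
    return ",".join(into_english + from_english)


pairs = default_training_pairs()
print(pairs)
```

Passing a shorter list, for example `default_training_pairs(["de", "cs"])`, gives a value for training on a subset of directions.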

In Stage 2, there's also an option to employ LoRA for fine-tuning on the parallel data. To do so, execute the following command:

bash runs/parallel_ft_lora.sh ${your_output_dir} ${training_pairs}

The human-written training dataset, along with the WMT'22 test dataset, can be found in the human_written_data directory. Within this directory, there are five subfolders, each representing one of the five language pairs. Each of these subfolders contains the training, development, and test sets for its respective language pair.

-deen
    -train.de-en.json
    -valid.de-en.json
    -test.de-en.json
    -test.en-de.json
    ....
-csen
-isen
-ruen
.....

The data format in the JSON files must be:

{
  "translation":
  {
    "src(de)": "source sentence",
    "tgt(en)": "target sentence"
  }
}
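The sketch below builds one record in this shape and serializes it. It rests on an assumption that should be checked against the files in human_written_data: that "src(de)" and "tgt(en)" stand for keys named after the language codes (here "de" and "en"). The helper `make_record` and the example sentences are illustrative only.

```python
import json


def make_record(src_lang: str, tgt_lang: str, src_text: str, tgt_text: str) -> dict:
    """Build one translation record keyed by language code (assumed key naming)."""
    if src_lang == tgt_lang:
        raise ValueError("source and target language codes must differ")
    return {"translation": {src_lang: src_text, tgt_lang: tgt_text}}


record = make_record(
    "de", "en", "Ich liebe maschinelle Übersetzung.", "I love machine translation."
)
# ensure_ascii=False keeps non-ASCII characters readable in the output.
line = json.dumps(record, ensure_ascii=False)
print(line)
```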

Within this directory, there are two additional subfolders specifically designed for few-shot in-context learning:

- Filtered-5-shot: This contains the "filtered shots" as referenced in the paper.

- HW-5-shot: This contains the "randomly extracted human-written data" mentioned in the paper.

Currently, ALMA supports 10 directions: English↔German, English↔Czech, English↔Icelandic, English↔Chinese, and English↔Russian. However, it may surprise us in other directions :)

Our 7B and 13B models are trained on 20B and 12B tokens, respectively. However, as indicated in the paper, fine-tuning on 1B tokens should already boost performance substantially. The number of steps required to fine-tune on 1 billion tokens depends on your batch size. In our case, the batch size is calculated as follows: 16 GPUs * 4 (batch size per GPU) * 4 (gradient accumulation steps) = 256. With a sequence length of 512, each step covers 256*512 tokens, so training on 1 billion tokens takes 10^9 / (256*512) ≈ 7,600 steps, or roughly 8,000. However, you may choose to fine-tune for more steps to get better performance.
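The same arithmetic can be adapted to a different hardware setup. The following is a small sketch, assuming every sequence is filled to the full sequence length; the function name `steps_for_tokens` is illustrative.

```python
import math


def steps_for_tokens(
    target_tokens: int, num_gpus: int, per_gpu_batch: int, grad_accum: int, seq_len: int
) -> int:
    """Number of optimizer steps needed to see `target_tokens` tokens."""
    sequences_per_step = num_gpus * per_gpu_batch * grad_accum  # effective batch size
    tokens_per_step = sequences_per_step * seq_len
    return math.ceil(target_tokens / tokens_per_step)


# Setup described above: 16 GPUs, 4 sequences per GPU, 4 accumulation steps, length 512.
steps = steps_for_tokens(10**9, num_gpus=16, per_gpu_batch=4, grad_accum=4, seq_len=512)
print(steps)  # 7630, i.e. roughly 8,000 steps
```

With fewer GPUs, raising `grad_accum` keeps the effective batch size, and therefore the step count, unchanged.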

Please find the reasons for interleave probability selection for stage 1 in Appendix D.1 in the paper!

Please find more details for ALMA models in our paper or the summary of the paper.

@misc{xu2023paradigm,
      title={A Paradigm Shift in Machine Translation: Boosting Translation Performance of Large Language Models},
      author={Haoran Xu and Young Jin Kim and Amr Sharaf and Hany Hassan Awadalla},
      year={2023},
      eprint={2309.11674},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}

Please also find more detailed information for the ALMA-R model with Contrastive Preference Optimization in the paper.

@misc{xu2024contrastive,
      title={Contrastive Preference Optimization: Pushing the Boundaries of LLM Performance in Machine Translation},
      author={Haoran Xu and Amr Sharaf and Yunmo Chen and Weiting Tan and Lingfeng Shen and Benjamin Van Durme and Kenton Murray and Young Jin Kim},
      year={2024},
      eprint={2401.08417},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for ALMA

Similar Open Source Tools

ALMA

ALMA (Advanced Language Model-based Translator) is a many-to-many LLM-based translation model that utilizes a two-step fine-tuning process on monolingual and parallel data to achieve strong translation performance. ALMA-R builds upon ALMA models with LoRA fine-tuning and Contrastive Preference Optimization (CPO) for even better performance, surpassing GPT-4 and WMT winners. The repository provides ALMA and ALMA-R models, datasets, environment setup, evaluation scripts, training guides, and data information for users to leverage these models for translation tasks.

FlashRank

FlashRank is an ultra-lite and super-fast Python library designed to add re-ranking capabilities to existing search and retrieval pipelines. It is based on state-of-the-art Language Models (LLMs) and cross-encoders, offering support for pairwise/pointwise rerankers and listwise LLM-based rerankers. The library boasts the tiniest reranking model in the world (~4MB) and runs on CPU without the need for Torch or Transformers. FlashRank is cost-conscious, with a focus on low cost per invocation and smaller package size for efficient serverless deployments. It supports various models like ms-marco-TinyBERT, ms-marco-MiniLM, rank-T5-flan, ms-marco-MultiBERT, and more, with plans for future model additions. The tool is ideal for enhancing search precision and speed in scenarios where lightweight models with competitive performance are preferred.

OpenMusic

OpenMusic is a repository providing an implementation of QA-MDT, a Quality-Aware Masked Diffusion Transformer for music generation. The code integrates state-of-the-art models and offers training strategies for music generation. The repository includes implementations of AudioLDM, PixArt-alpha, MDT, AudioMAE, and Open-Sora. Users can train or fine-tune the model using different strategies and datasets. The model is well-pretrained and can be used for music generation tasks. The repository also includes instructions for preparing datasets, training the model, and performing inference. Contact information is provided for any questions or suggestions regarding the project.

qa-mdt

This repository provides an implementation of QA-MDT, integrating state-of-the-art models for music generation. It offers a Quality-Aware Masked Diffusion Transformer for enhanced music generation. The code is based on various repositories like AudioLDM, PixArt-alpha, MDT, AudioMAE, and Open-Sora. The implementation allows for training and fine-tuning the model with different strategies and datasets. The repository also includes instructions for preparing datasets in LMDB format and provides a script for creating a toy LMDB dataset. The model can be used for music generation tasks, with a focus on quality injection to enhance the musicality of generated music.

WildBench

WildBench is a tool designed for benchmarking Large Language Models (LLMs) with challenging tasks sourced from real users in the wild. It provides a platform for evaluating the performance of various models on a range of tasks. Users can easily add new models to the benchmark by following the provided guidelines. The tool supports models from Hugging Face and other APIs, allowing for comprehensive evaluation and comparison. WildBench facilitates running inference and evaluation scripts, enabling users to contribute to the benchmark and collaborate on improving model performance.

ichigo

Ichigo is a local real-time voice AI tool that uses an early fusion technique to extend a text-based LLM to have native 'listening' ability. It is an open research experiment with improved multiturn capabilities and the ability to refuse processing inaudible queries. The tool is designed for open data, open weight, on-device Siri-like functionality, inspired by Meta's Chameleon paper. Ichigo offers a web UI demo and Gradio web UI for users to interact with the tool. It has achieved enhanced MMLU scores, stronger context handling, advanced noise management, and improved multi-turn capabilities for a robust user experience.

bigcodebench

BigCodeBench is an easy-to-use benchmark for code generation with practical and challenging programming tasks. It aims to evaluate the true programming capabilities of large language models (LLMs) in a more realistic setting. The benchmark is designed for HumanEval-like function-level code generation tasks, but with much more complex instructions and diverse function calls. BigCodeBench focuses on the evaluation of LLM4Code with diverse function calls and complex instructions, providing precise evaluation & ranking and pre-generated samples to accelerate code intelligence research. It inherits the design of the EvalPlus framework but differs in terms of execution environment and test evaluation.

VideoTuna

VideoTuna is a codebase for text-to-video applications that integrates multiple AI video generation models for text-to-video, image-to-video, and text-to-image generation. It provides comprehensive pipelines in video generation, including pre-training, continuous training, post-training, and fine-tuning. The models in VideoTuna include U-Net and DiT architectures for visual generation tasks, with upcoming releases of a new 3D video VAE and a controllable facial video generation model.

RD-Agent

RD-Agent is a tool designed to automate critical aspects of industrial R&D processes, focusing on data-driven scenarios to streamline model and data development. It aims to propose new ideas ('R') and implement them ('D') automatically, leading to solutions of significant industrial value. The tool supports scenarios like Automated Quantitative Trading, Data Mining Agent, Research Copilot, and more, with a framework to push the boundaries of research in data science. Users can create a Conda environment, install the RDAgent package from PyPI, configure GPT model, and run various applications for tasks like quantitative trading, model evolution, medical prediction, and more. The tool is intended to enhance R&D processes and boost productivity in industrial settings.

open-chatgpt

Open-ChatGPT is an open-source library that enables users to train a hyper-personalized ChatGPT-like AI model using their own data with minimal computational resources. It provides an end-to-end training framework for ChatGPT-like models, supporting distributed training and offloading for extremely large models. The project implements RLHF (Reinforcement Learning with Human Feedback) powered by transformer library and DeepSpeed, allowing users to create high-quality ChatGPT-style models. Open-ChatGPT is designed to be user-friendly and efficient, aiming to empower users to develop their own conversational AI models easily.

evalverse

Evalverse is an open-source project designed to support Large Language Model (LLM) evaluation needs. It provides a standardized and user-friendly solution for processing and managing LLM evaluations, catering to AI research engineers and scientists. Evalverse supports various evaluation methods, insightful reports, and no-code evaluation processes. Users can access unified evaluation with submodules, request evaluations without code via Slack bot, and obtain comprehensive reports with scores, rankings, and visuals. The tool allows for easy comparison of scores across different models and swift addition of new evaluation tools.

NExT-GPT

NExT-GPT is an end-to-end multimodal large language model that can process input and generate output in various combinations of text, image, video, and audio. It leverages existing pre-trained models and diffusion models with end-to-end instruction tuning. The repository contains code, data, and model weights for NExT-GPT, allowing users to work with different modalities and perform tasks like encoding, understanding, reasoning, and generating multimodal content.

DALM

The DALM (Domain Adapted Language Modeling) toolkit is designed to unify general LLMs with vector stores to ground AI systems in efficient, factual domains. It provides developers with tools to build on top of Arcee's open source Domain Pretrained LLMs, enabling organizations to deeply tailor AI according to their unique intellectual property and worldview. The toolkit contains code for fine-tuning a fully differential Retrieval Augmented Generation (RAG-end2end) architecture, incorporating in-batch negative concept alongside RAG's marginalization for efficiency. It includes training scripts for both retriever and generator models, evaluation scripts, data processing codes, and synthetic data generation code.

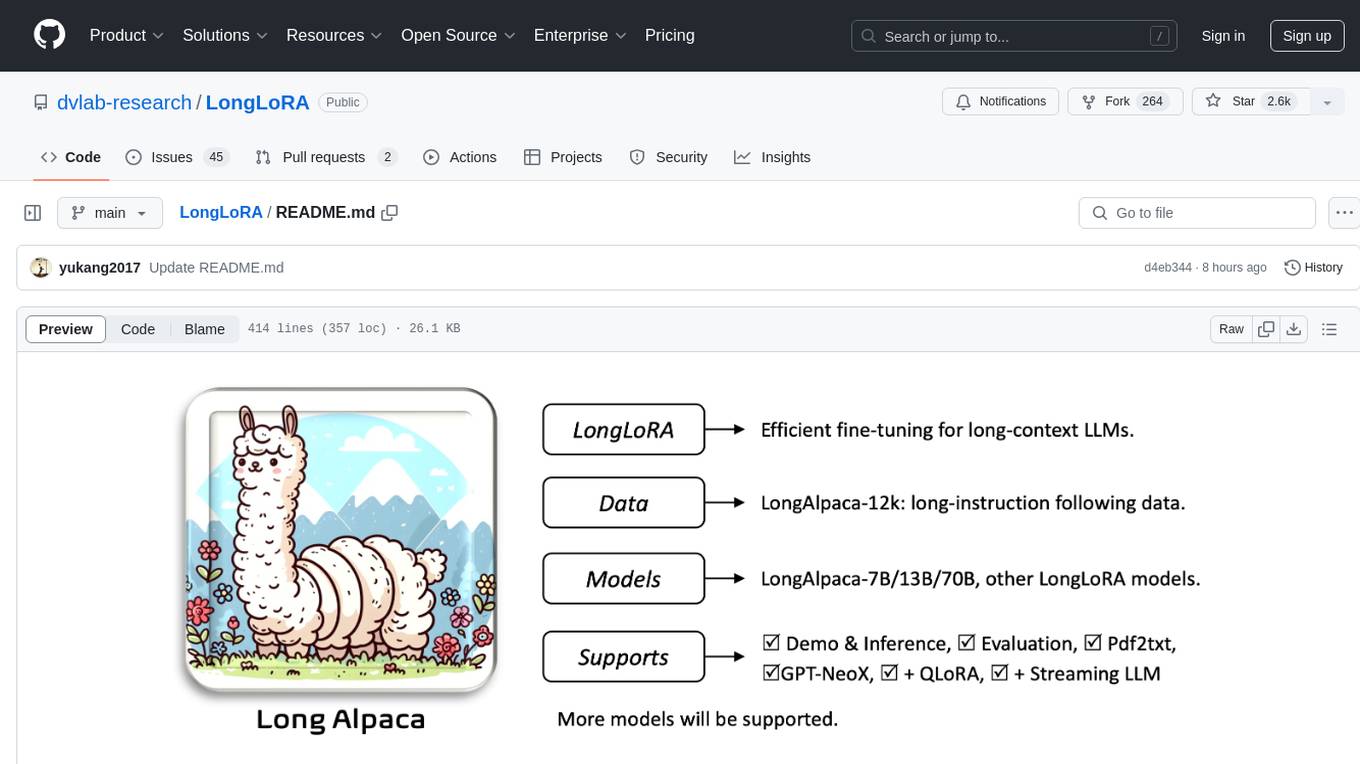

LongLoRA

LongLoRA is a tool for efficient fine-tuning of long-context large language models. It includes LongAlpaca data with long QA data collected and short QA sampled, models from 7B to 70B with context length from 8k to 100k, and support for GPTNeoX models. The tool supports supervised fine-tuning, context extension, and improved LoRA fine-tuning. It provides pre-trained weights, fine-tuning instructions, evaluation methods, local and online demos, streaming inference, and data generation via Pdf2text. LongLoRA is licensed under Apache License 2.0, while data and weights are under CC-BY-NC 4.0 License for research use only.

FlexFlow

FlexFlow Serve is an open-source compiler and distributed system for **low latency**, **high performance** LLM serving. FlexFlow Serve outperforms existing systems by 1.3-2.0x for single-node, multi-GPU inference and by 1.4-2.4x for multi-node, multi-GPU inference.

chromem-go

chromem-go is an embeddable vector database for Go with a Chroma-like interface and zero third-party dependencies. It enables retrieval augmented generation (RAG) and similar embeddings-based features in Go apps without the need for a separate database. The focus is on simplicity and performance for common use cases, allowing querying of documents with minimal memory allocations. The project is in beta and may introduce breaking changes before v1.0.0.

For similar tasks

ALMA

ALMA (Advanced Language Model-based Translator) is a many-to-many LLM-based translation model that utilizes a two-step fine-tuning process on monolingual and parallel data to achieve strong translation performance. ALMA-R builds upon ALMA models with LoRA fine-tuning and Contrastive Preference Optimization (CPO) for even better performance, surpassing GPT-4 and WMT winners. The repository provides ALMA and ALMA-R models, datasets, environment setup, evaluation scripts, training guides, and data information for users to leverage these models for translation tasks.

mindsdb

MindsDB is a platform for customizing AI from enterprise data. You can create, serve, and fine-tune models in real-time from your database, vector store, and application data. MindsDB "enhances" SQL syntax with AI capabilities to make it accessible for developers worldwide. With MindsDB’s nearly 200 integrations, any developer can create AI customized for their purpose, faster and more securely. Their AI systems will constantly improve themselves — using companies’ own data, in real-time.

training-operator

Kubeflow Training Operator is a Kubernetes-native project for fine-tuning and scalable distributed training of machine learning (ML) models created with various ML frameworks such as PyTorch, Tensorflow, XGBoost, MPI, Paddle and others. Training Operator allows you to use Kubernetes workloads to effectively train your large models via Kubernetes Custom Resources APIs or using Training Operator Python SDK. > Note: Before v1.2 release, Kubeflow Training Operator only supports TFJob on Kubernetes. * For a complete reference of the custom resource definitions, please refer to the API Definition. * TensorFlow API Definition * PyTorch API Definition * Apache MXNet API Definition * XGBoost API Definition * MPI API Definition * PaddlePaddle API Definition * For details of all-in-one operator design, please refer to the All-in-one Kubeflow Training Operator * For details on its observability, please refer to the monitoring design doc.

helix

HelixML is a private GenAI platform that allows users to deploy the best of open AI in their own data center or VPC while retaining complete data security and control. It includes support for fine-tuning models with drag-and-drop functionality. HelixML brings the best of open source AI to businesses in an ergonomic and scalable way, optimizing the tradeoff between GPU memory and latency.

nntrainer

NNtrainer is a software framework for training neural network models on devices with limited resources. It enables on-device fine-tuning of neural networks using user data for personalization. NNtrainer supports various machine learning algorithms and provides examples for tasks such as few-shot learning, ResNet, VGG, and product rating. It is optimized for embedded devices and utilizes CBLAS and CUBLAS for accelerated calculations. NNtrainer is open source and released under the Apache License version 2.0.

petals

Petals is a tool that allows users to run large language models at home in a BitTorrent-style manner. It enables fine-tuning and inference up to 10x faster than offloading. Users can generate text with distributed models like Llama 2, Falcon, and BLOOM, and fine-tune them for specific tasks directly from their desktop computer or Google Colab. Petals is a community-run system that relies on people sharing their GPUs to increase its capacity and offer a distributed network for hosting model layers.

LLaVA-pp

This repository, LLaVA++, extends the visual capabilities of the LLaVA 1.5 model by incorporating the latest LLMs, Phi-3 Mini Instruct 3.8B, and LLaMA-3 Instruct 8B. It provides various models for instruction-following LMMS and academic-task-oriented datasets, along with training scripts for Phi-3-V and LLaMA-3-V. The repository also includes installation instructions and acknowledgments to related open-source contributions.

KULLM

KULLM (구름) is a Korean Large Language Model developed by Korea University NLP & AI Lab and HIAI Research Institute. It is based on the upstage/SOLAR-10.7B-v1.0 model and has been fine-tuned for instruction. The model has been trained on 8×A100 GPUs and is capable of generating responses in Korean language. KULLM exhibits hallucination and repetition phenomena due to its decoding strategy. Users should be cautious as the model may produce inaccurate or harmful results. Performance may vary in benchmarks without a fixed system prompt.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.