TornadoVM

TornadoVM: A practical and efficient heterogeneous programming framework for managed languages

Stars: 1217

TornadoVM is a plug-in to OpenJDK and GraalVM that allows programmers to automatically run Java programs on heterogeneous hardware. TornadoVM targets OpenCL, PTX and SPIR-V compatible devices which include multi-core CPUs, dedicated GPUs (Intel, NVIDIA, AMD), integrated GPUs (Intel HD Graphics and ARM Mali), and FPGAs (Intel and Xilinx).

README:


TornadoVM has three backends that generate OpenCL C, NVIDIA CUDA PTX assembly, and SPIR-V binary. Developers can choose which backends to install and run.

Website: tornadovm.org

Documentation: https://tornadovm.readthedocs.io/en/latest/

For a quick introduction, please read the following FAQ.

Latest Release: TornadoVM 1.0.10 - 31/01/2025 : See CHANGELOG.

On Linux and macOS, TornadoVM can be installed automatically with the installation script. For example:

$ ./bin/tornadovm-installer

usage: tornadovm-installer [-h] [--version] [--jdk JDK] [--backend BACKEND] [--listJDKs] [--javaHome JAVAHOME]

TornadoVM Installer Tool. It will install all software dependencies except the GPU/FPGA drivers

optional arguments:

-h, --help show this help message and exit

--version Print version of TornadoVM

--jdk JDK Select one of the supported JDKs. Use --listJDKs option to see all supported ones.

--backend BACKEND Select the backend to install: { opencl, ptx, spirv }

--listJDKs List all JDK supported versions

--javaHome JAVAHOME Use a JDK from a user directory

NOTE Select the desired backend:

- opencl: Enables the OpenCL backend (requires OpenCL drivers)

- ptx: Enables the PTX backend (requires NVIDIA CUDA drivers)

- spirv: Enables the SPIRV backend (requires Intel Level Zero drivers)

Example of installation:

# Install the OpenCL backend with OpenJDK 21

$ ./bin/tornadovm-installer --jdk jdk21 --backend opencl

# It is also possible to combine different backends:

$ ./bin/tornadovm-installer --jdk jdk21 --backend opencl,spirv,ptx

Alternatively, TornadoVM can be installed either manually from source or by using Docker.

If you are planning to use Docker with TornadoVM on GPUs, you can also follow these guidelines.

You can also run TornadoVM on Amazon AWS CPUs, GPUs, and FPGAs following the instructions here.

TornadoVM is currently being used to accelerate machine learning and deep learning applications, computer vision, physics simulations, financial applications, computational photography, and signal processing.

Featured use-cases:

- kfusion-tornadovm: Java application for accelerating a computer-vision application using the Tornado-APIs to run on discrete and integrated GPUs.

- Java Ray-Tracer: Java application accelerated with TornadoVM for real-time ray-tracing.

We also have a set of examples that includes NBody, DFT, KMeans computation and matrix computations.

Additional Information

- General Documentation

- Benchmarks

- How TornadoVM executes reductions

- Execution Flags

- FPGA execution

- Profiler Usage

TornadoVM exposes task-level, data-level and pipeline-level parallelism to the programmer via a light Application Programming Interface (API). In addition, TornadoVM follows a single-source model, in which the code to be accelerated and the host code live in the same Java program.

Compute kernels in TornadoVM can be programmed using two different approaches (APIs): the loop-parallel API and the kernel-parallel API (Kernel API).

With the loop-parallel API, compute kernels are written in a sequential form (tasks programmed for single-thread execution). To express parallelism, TornadoVM exposes two annotations that can be used in loops and parameters: a) @Parallel for annotating parallel loops; and b) @Reduce for annotating parameters used in reductions.
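
As a small illustration of the @Reduce annotation mentioned above, the following is a minimal sketch of a sum reduction written in the same sequential style. It makes two assumptions for the sake of being self-contained: the `Parallel` and `Reduce` annotations are declared locally as stand-ins (a real application would use the ones shipped with the TornadoVM API), and plain `float[]` arrays are used instead of TornadoVM data types such as `Matrix2DFloat`. Run as-is, it simply executes sequentially on the JVM; the class name `ReductionSketch` is hypothetical.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;

// Local stand-ins so the sketch compiles without TornadoVM on the classpath.
// ASSUMPTION: in a real project these come from the TornadoVM API instead.
@Retention(RetentionPolicy.SOURCE)
@Target(ElementType.LOCAL_VARIABLE)
@interface Parallel {}

@Retention(RetentionPolicy.SOURCE)
@Target(ElementType.PARAMETER)
@interface Reduce {}

class ReductionSketch {

    // Sequential form of a sum reduction: the loop index is marked @Parallel
    // and the one-element output array is marked @Reduce.
    static void sum(float[] input, @Reduce float[] result) {
        result[0] = 0.0f;
        for (@Parallel int i = 0; i < input.length; i++) {
            result[0] += input[i];
        }
    }

    public static void main(String[] args) {
        float[] input = {1.0f, 2.0f, 3.0f, 4.0f};
        float[] result = new float[1];
        sum(input, result);
        System.out.println(result[0]); // prints 10.0
    }
}
```

The point of the sketch is the shape of the code: the method stays ordinary sequential Java, and the annotations only mark where parallelism and a reduction are intended.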

The following code snippet shows a full example that accelerates matrix multiplication using TornadoVM and the loop-parallel API:

public class Compute {

    private static void mxmLoop(Matrix2DFloat A, Matrix2DFloat B, Matrix2DFloat C, final int size) {
        for (@Parallel int i = 0; i < size; i++) {
            for (@Parallel int j = 0; j < size; j++) {
                float sum = 0.0f;
                for (int k = 0; k < size; k++) {
                    sum += A.get(i, k) * B.get(k, j);
                }
                C.set(i, j, sum);
            }
        }
    }

    public void run(Matrix2DFloat A, Matrix2DFloat B, Matrix2DFloat C, final int size) {

        // Create a task-graph with multiple tasks. Each task points to an existing Java method
        // that can be accelerated on a GPU/FPGA
        TaskGraph taskGraph = new TaskGraph("myCompute")
            .transferToDevice(DataTransferMode.FIRST_EXECUTION, A, B) // Transfer data from host to device only in the first execution
            .task("mxm", Compute::mxmLoop, A, B, C, size)             // Each task points to an existing Java method
            .transferToHost(DataTransferMode.EVERY_EXECUTION, C);     // Transfer data from device to host

        // Create an immutable task-graph
        ImmutableTaskGraph immutableTaskGraph = taskGraph.snapshot();

        // Create an execution plan from an immutable task-graph
        try (TornadoExecutionPlan executionPlan = new TornadoExecutionPlan(immutableTaskGraph)) {

            // Run the execution plan on the default device
            TornadoExecutionResult executionResult = executionPlan.execute();

        } catch (TornadoExecutionPlanException e) {
            // handle exception
            // ...
        }
    }
}

Another way to express compute kernels in TornadoVM is via the Kernel API.

To do so, TornadoVM exposes the KernelContext data structure, through which the application can directly access the thread-id, allocate memory in local memory (shared memory on NVIDIA devices), and insert barriers. This model is similar to programming compute kernels in SYCL, oneAPI, OpenCL and CUDA. Therefore, this API is more suitable for GPU/FPGA expert programmers who want more control or want to port existing CUDA/OpenCL compute kernels to TornadoVM.

The following code snippet shows the matrix multiplication example using the kernel-parallel API:

public class Compute {

    private static void mxmKernel(KernelContext context, Matrix2DFloat A, Matrix2DFloat B, Matrix2DFloat C, final int size) {
        int idx = context.globalIdx;
        int jdx = context.globalIdy;
        float sum = 0;
        for (int k = 0; k < size; k++) {
            sum += A.get(idx, k) * B.get(k, jdx);
        }
        C.set(idx, jdx, sum);
    }

    public void run(Matrix2DFloat A, Matrix2DFloat B, Matrix2DFloat C, final int size) {

        // When using the kernel-parallel API, we need to create a Grid and a Worker
        WorkerGrid workerGrid = new WorkerGrid2D(size, size);                         // Create a 2D Worker
        GridScheduler gridScheduler = new GridScheduler("myCompute.mxm", workerGrid); // Attach the worker to the Grid
        KernelContext context = new KernelContext();                                  // Create a context
        workerGrid.setLocalWork(16, 16, 1);                                           // Set the local-group size

        TaskGraph taskGraph = new TaskGraph("myCompute")
            .transferToDevice(DataTransferMode.FIRST_EXECUTION, A, B) // Transfer data from host to device only in the first execution
            .task("mxm", Compute::mxmKernel, context, A, B, C, size)  // Each task points to an existing Java method
            .transferToHost(DataTransferMode.EVERY_EXECUTION, C);     // Transfer data from device to host

        // Create an immutable task-graph
        ImmutableTaskGraph immutableTaskGraph = taskGraph.snapshot();

        // Create an execution plan from an immutable task-graph
        try (TornadoExecutionPlan executionPlan = new TornadoExecutionPlan(immutableTaskGraph)) {

            // Run the execution plan on the default device, using the grid scheduler
            TornadoExecutionResult executionResult = executionPlan
                .withGridScheduler(gridScheduler)
                .execute();

        } catch (TornadoExecutionPlanException e) {
            // handle exception
            // ...
        }
    }
}

Additionally, the two modes of expressing parallelism (kernel and loop parallelization) can be combined in the same task graph object.
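
To make the thread-id model of the kernel-parallel API more concrete, the following plain-Java sketch walks a 2D grid of work-items sequentially and derives each global id from a work-group id and a local id, following the usual OpenCL/CUDA convention (global id = group id * local size + local id). It does not use TornadoVM at all: the class `GridSketch`, its method names, the flat row-major `float[]` layout, and the requirement that the grid size be a multiple of the local size are all assumptions of this sketch.

```java
import java.util.Arrays;

class GridSketch {

    // Kernel body for one work-item: computes a single element of C = A * B.
    // gx and gy play the role of context.globalIdx and context.globalIdy.
    static void mxmWorkItem(int gx, int gy, float[] a, float[] b, float[] c, int size) {
        float sum = 0.0f;
        for (int k = 0; k < size; k++) {
            sum += a[gx * size + k] * b[k * size + gy];
        }
        c[gx * size + gy] = sum;
    }

    // Sequentially visits every work-item of a size x size grid that is split
    // into local x local work-groups. A device would run these in parallel.
    static void launch(float[] a, float[] b, float[] c, int size, int local) {
        if (local <= 0 || size % local != 0) {
            throw new IllegalArgumentException("size must be a positive multiple of local");
        }
        int groups = size / local;
        for (int groupX = 0; groupX < groups; groupX++) {
            for (int groupY = 0; groupY < groups; groupY++) {
                for (int localX = 0; localX < local; localX++) {
                    for (int localY = 0; localY < local; localY++) {
                        int gx = groupX * local + localX; // global id, first dimension
                        int gy = groupY * local + localY; // global id, second dimension
                        mxmWorkItem(gx, gy, a, b, c, size);
                    }
                }
            }
        }
    }

    public static void main(String[] args) {
        int size = 4;
        float[] a = new float[size * size];
        float[] b = new float[size * size];
        float[] c = new float[size * size];
        for (int i = 0; i < size * size; i++) {
            a[i] = i;                                   // A holds 0..15 in row-major order
            b[i] = (i % (size + 1) == 0) ? 1.0f : 0.0f; // B is the identity matrix
        }
        launch(a, b, c, size, 2);
        System.out.println(Arrays.equals(a, c)); // prints true, since A * I = A
    }
}
```

Because every work-item writes a distinct element of C, the visiting order does not matter, which is what allows a device to execute the work-items concurrently.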

Dynamic reconfiguration is the ability of TornadoVM to perform live task migration between devices: TornadoVM decides where to execute the code to increase performance (if possible). In other words, TornadoVM switches devices if it detects that a specific device can yield better performance than another.

With task migration, TornadoVM switches device only if it detects that the application can run faster there than on the CPU using the code compiled by C2 or Graal JIT; otherwise it stays on the CPU. TornadoVM can therefore be seen as a complement to the C2 and Graal JIT compilers, because no single piece of hardware executes all workloads efficiently. GPUs are very good at exploiting SIMD applications, and FPGAs are very good at exploiting pipeline applications. If your applications follow those models, TornadoVM will likely select heterogeneous hardware. Otherwise, it will stay on the CPU using the default compilers (C2 or Graal).

To use dynamic reconfiguration, execute the plan with a TornadoVM policy. For example:

// TornadoVM will execute the code in the best accelerator.
executionPlan.withDynamicReconfiguration(Policy.PERFORMANCE, DRMode.PARALLEL)
    .execute();

Further details and instructions on how to enable this feature can be found here.

- Dynamic reconfiguration: https://dl.acm.org/doi/10.1145/3313808.3313819
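
The decision rule described above (leave the CPU only when an accelerator is measurably faster) can be illustrated with a small plain-Java sketch. This is not how TornadoVM implements its policies; the class `DeviceSelectionSketch`, the device names, and the timings are hypothetical and only show the comparison against the CPU baseline.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class DeviceSelectionSketch {

    // Returns the accelerator with the lowest measured time, but only if it
    // beats the CPU baseline; otherwise the work stays on the CPU.
    static String select(long cpuNanos, Map<String, Long> acceleratorNanos) {
        String best = "cpu";
        long bestNanos = cpuNanos;
        for (Map.Entry<String, Long> entry : acceleratorNanos.entrySet()) {
            if (entry.getValue() < bestNanos) {
                best = entry.getKey();
                bestNanos = entry.getValue();
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Hypothetical measurements in nanoseconds, made up for illustration.
        Map<String, Long> measured = new LinkedHashMap<>();
        measured.put("gpu", 1_200_000L);
        measured.put("fpga", 3_500_000L);
        System.out.println(select(2_000_000L, measured)); // prints gpu
        System.out.println(select(1_000_000L, measured)); // prints cpu
    }
}
```

In the second call no accelerator beats the CPU baseline, so the sketch keeps the work on the CPU, mirroring the "otherwise it stays on the CPU" behaviour described in the text.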

To use TornadoVM, you need two components:

a) The TornadoVM jar file with the API. The API is licensed as GPLV2 with Classpath Exception.

b) The core libraries of TornadoVM along with the dynamic library for the driver code (.so files for OpenCL, PTX and/or SPIRV/Level Zero).

You can import the TornadoVM API by adding the following repository and dependencies to the Maven pom.xml file:

<repositories>
    <repository>
        <id>universityOfManchester-graal</id>
        <url>https://raw.githubusercontent.com/beehive-lab/tornado/maven-tornadovm</url>
    </repository>
</repositories>

<dependencies>
    <dependency>
        <groupId>tornado</groupId>
        <artifactId>tornado-api</artifactId>
        <version>1.0.10</version>
    </dependency>
    <dependency>
        <groupId>tornado</groupId>
        <artifactId>tornado-matrices</artifactId>
        <version>1.0.10</version>
    </dependency>
</dependencies>

To run TornadoVM, you need to either install the TornadoVM extension for GraalVM/OpenJDK, or run with our Docker images.

Here you can find videos, presentations, tech-articles and artefacts describing TornadoVM, and how to use it.

If you are using TornadoVM >= 0.2 (which includes the Dynamic Reconfiguration, the initial FPGA support and CPU/GPU reductions), please use the following citation:

@inproceedings{Fumero:DARHH:VEE:2019,

author = {Fumero, Juan and Papadimitriou, Michail and Zakkak, Foivos S. and Xekalaki, Maria and Clarkson, James and Kotselidis, Christos},

title = {{Dynamic Application Reconfiguration on Heterogeneous Hardware.}},

booktitle = {Proceedings of the 15th ACM SIGPLAN/SIGOPS International Conference on Virtual Execution Environments},

series = {VEE '19},

year = {2019},

doi = {10.1145/3313808.3313819},

publisher = {Association for Computing Machinery}

}

If you are using Tornado 0.1 (initial release), please use the following citation in your work:

@inproceedings{Clarkson:2018:EHH:3237009.3237016,

author = {Clarkson, James and Fumero, Juan and Papadimitriou, Michail and Zakkak, Foivos S. and Xekalaki, Maria and Kotselidis, Christos and Luj\'{a}n, Mikel},

title = {{Exploiting High-performance Heterogeneous Hardware for Java Programs Using Graal}},

booktitle = {Proceedings of the 15th International Conference on Managed Languages \& Runtimes},

series = {ManLang '18},

year = {2018},

isbn = {978-1-4503-6424-9},

location = {Linz, Austria},

pages = {4:1--4:13},

articleno = {4},

numpages = {13},

url = {http://doi.acm.org/10.1145/3237009.3237016},

doi = {10.1145/3237009.3237016},

acmid = {3237016},

publisher = {ACM},

address = {New York, NY, USA},

keywords = {Java, graal, heterogeneous hardware, openCL, virtual machine},

}

Selected publications can be found here.

This work is partially funded by Intel Corporation. In addition, it has been supported by the following EU & UKRI grants (most recent first):

- EU Horizon Europe & UKRI AERO 101092850.

- EU Horizon Europe & UKRI INCODE 101093069.

- EU Horizon Europe & UKRI ENCRYPT 101070670.

- EU Horizon Europe & UKRI TANGO 101070052.

- EU Horizon 2020 ELEGANT 957286.

- EU Horizon 2020 E2Data 780245.

- EU Horizon 2020 ACTiCLOUD 732366.

Furthermore, TornadoVM has been supported by the following EPSRC grants:

We welcome collaborations! Please see how to contribute to the project in the CONTRIBUTING page.

Additionally, you can open new proposals on the GitHub discussions page.

Alternatively, you can share a Google document with us.

For Academic & Industry collaborations, please contact here.

Visit our website to meet the team.

To use TornadoVM, you can link the TornadoVM API to your application which is under Apache 2.

Each Java TornadoVM module is licensed as follows:

Similar Open Source Tools

FlexFlow

FlexFlow Serve is an open-source compiler and distributed system for **low latency**, **high performance** LLM serving. FlexFlow Serve outperforms existing systems by 1.3-2.0x for single-node, multi-GPU inference and by 1.4-2.4x for multi-node, multi-GPU inference.

rl

TorchRL is an open-source Reinforcement Learning (RL) library for PyTorch. It provides pytorch and **python-first** , low and high level abstractions for RL that are intended to be **efficient** , **modular** , **documented** and properly **tested**. The code is aimed at supporting research in RL. Most of it is written in python in a highly modular way, such that researchers can easily swap components, transform them or write new ones with little effort.

catalyst

Catalyst is a C# Natural Language Processing library designed for speed, inspired by spaCy's design. It provides pre-trained models, support for training word and document embeddings, and flexible entity recognition models. The library is fast, modern, and pure-C#, supporting .NET standard 2.0. It is cross-platform, running on Windows, Linux, macOS, and ARM. Catalyst offers non-destructive tokenization, named entity recognition, part-of-speech tagging, language detection, and efficient binary serialization. It includes pre-built models for language packages and lemmatization. Users can store and load models using streams. Getting started with Catalyst involves installing its NuGet Package and setting the storage to use the online repository. The library supports lazy loading of models from disk or online. Users can take advantage of C# lazy evaluation and native multi-threading support to process documents in parallel. Training a new FastText word2vec embedding model is straightforward, and Catalyst also provides algorithms for fast embedding search and dimensionality reduction.

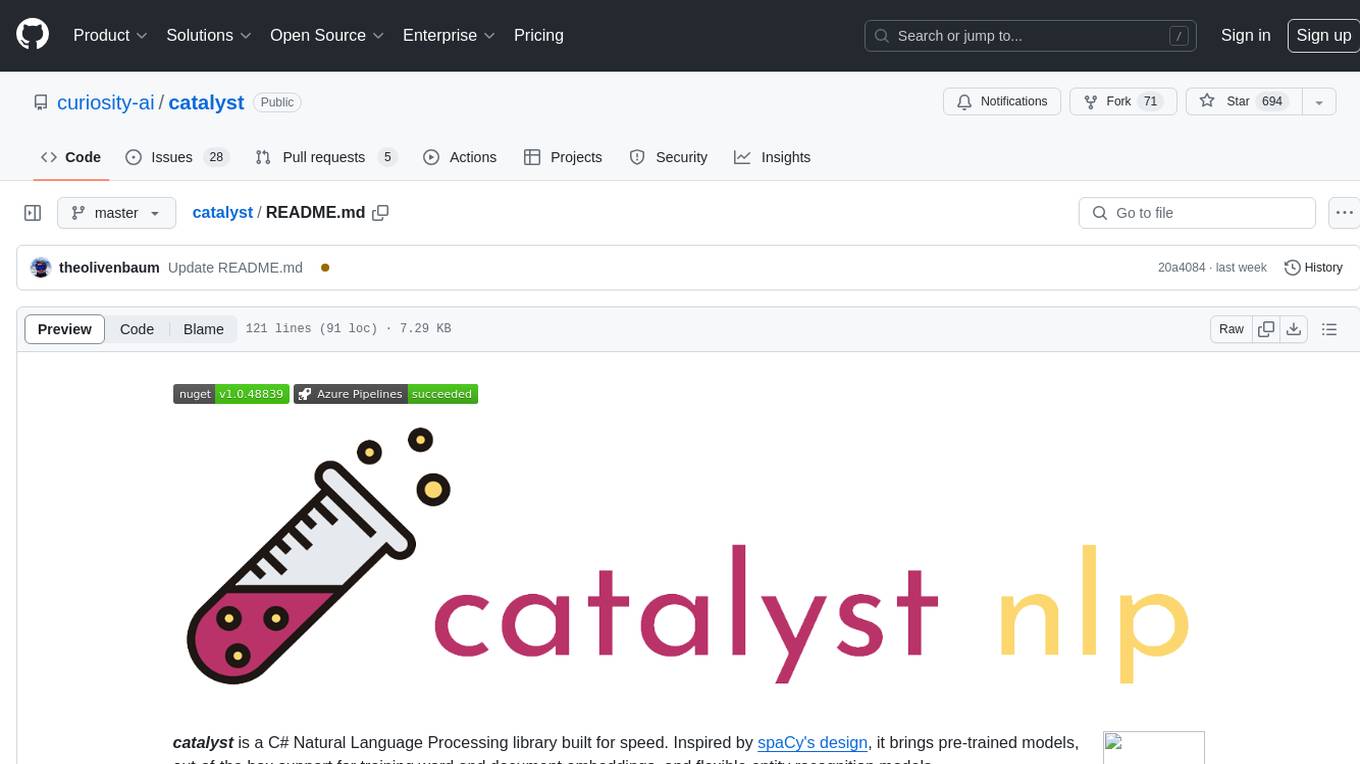

dLLM-RL

dLLM-RL is a revolutionary reinforcement learning framework designed for Diffusion Large Language Models. It supports various models with diverse structures, offers inference acceleration, RL training capabilities, and SFT functionalities. The tool introduces TraceRL for trajectory-aware RL and diffusion-based value models for optimization stability. Users can download and try models like TraDo-4B-Instruct and TraDo-8B-Instruct. The tool also provides support for multi-node setups and easy building of reinforcement learning methods. Additionally, it offers supervised fine-tuning strategies for different models and tasks.

graphiti

Graphiti is a framework for building and querying temporally-aware knowledge graphs, tailored for AI agents in dynamic environments. It continuously integrates user interactions, structured and unstructured data, and external information into a coherent, queryable graph. The framework supports incremental data updates, efficient retrieval, and precise historical queries without complete graph recomputation, making it suitable for developing interactive, context-aware AI applications.

Endia

Endia is a dynamic Array library for Scientific Computing, offering automatic differentiation of arbitrary order, complex number support, dual API with PyTorch-like imperative or JAX-like functional interface, and JIT Compilation for speeding up training and inference. It can handle complex valued functions, perform both forward and reverse-mode automatic differentiation, and has a builtin JIT compiler. Endia aims to advance AI & Scientific Computing by pushing boundaries with clear algorithms, providing high-performance open-source code that remains readable and pythonic, and prioritizing clarity and educational value over exhaustive features.

RTL-Coder

RTL-Coder is a tool designed to outperform GPT-3.5 in RTL code generation by providing a fully open-source dataset and a lightweight solution. It targets Verilog code generation and offers an automated flow to generate a large labeled dataset with over 27,000 diverse Verilog design problems and answers. The tool addresses the data availability challenge in IC design-related tasks and can be used for various applications beyond LLMs. The tool includes four RTL code generation models available on the HuggingFace platform, each with specific features and performance characteristics. Additionally, RTL-Coder introduces a new LLM training scheme based on code quality feedback to further enhance model performance and reduce GPU memory consumption.

PDEBench

PDEBench provides a diverse and comprehensive set of benchmarks for scientific machine learning, including challenging and realistic physical problems. The repository consists of code for generating datasets, uploading and downloading datasets, training and evaluating machine learning models as baselines. It features a wide range of PDEs, realistic and difficult problems, ready-to-use datasets with various conditions and parameters. PDEBench aims for extensibility and invites participation from the SciML community to improve and extend the benchmark.

Eco2AI

Eco2AI is a python library for CO2 emission tracking that monitors energy consumption of CPU & GPU devices and estimates equivalent carbon emissions based on regional emission coefficients. Users can easily integrate Eco2AI into their Python scripts by adding a few lines of code. The library records emissions data and device information in a local file, providing detailed session logs with project names, experiment descriptions, start times, durations, power consumption, CO2 emissions, CPU and GPU names, operating systems, and countries.

tinystruct

Tinystruct is a simple Java framework designed for easy development with better performance. It offers a modern approach with features like CLI and web integration, built-in lightweight HTTP server, minimal configuration philosophy, annotation-based routing, and performance-first architecture. Developers can focus on real business logic without dealing with unnecessary complexities, making it transparent, predictable, and extensible.

KIVI

KIVI is a plug-and-play 2bit KV cache quantization algorithm optimizing memory usage by quantizing key cache per-channel and value cache per-token to 2bit. It enables LLMs to maintain quality while reducing memory usage, allowing larger batch sizes and increasing throughput in real LLM inference workloads.

MInference

MInference is a tool designed to accelerate pre-filling for long-context Language Models (LLMs) by leveraging dynamic sparse attention. It achieves up to a 10x speedup for pre-filling on an A100 while maintaining accuracy. The tool supports various decoding LLMs, including LLaMA-style models and Phi models, and provides custom kernels for attention computation. MInference is useful for researchers and developers working with large-scale language models who aim to improve efficiency without compromising accuracy.

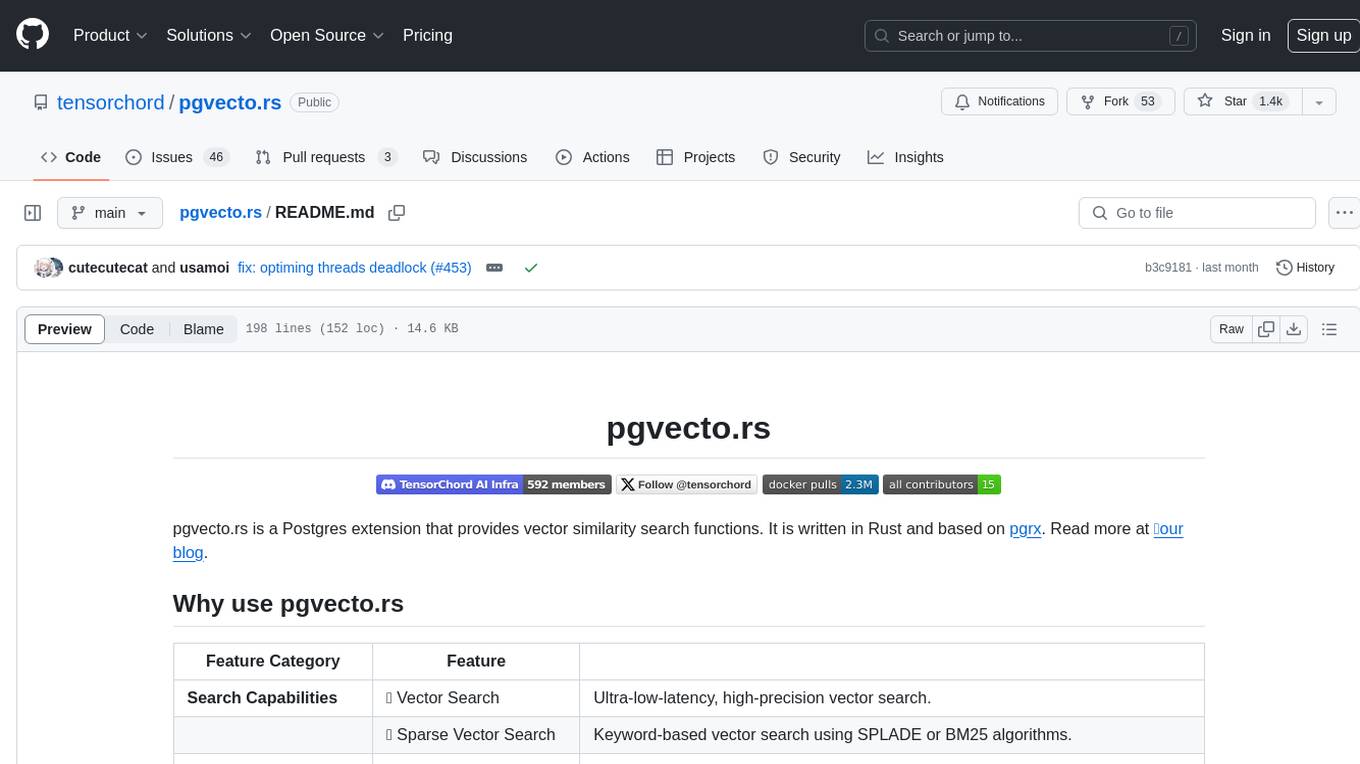

pgvecto.rs

pgvecto.rs is a Postgres extension written in Rust that provides vector similarity search functions. It offers ultra-low-latency, high-precision vector search capabilities, including sparse vector search and full-text search. With complete SQL support, async indexing, and easy data management, it simplifies data handling. The extension supports various data types like FP16/INT8, binary vectors, and Matryoshka embeddings. It ensures system performance with production-ready features, high availability, and resource efficiency. Security and permissions are managed through easy access control. The tool allows users to create tables with vector columns, insert vector data, and calculate distances between vectors using different operators. It also supports half-precision floating-point numbers for better performance and memory usage optimization.

Arcade-Learning-Environment

The Arcade Learning Environment (ALE) is a simple framework that allows researchers and hobbyists to develop AI agents for Atari 2600 games. It is built on top of the Atari 2600 emulator Stella and separates the details of emulation from agent design. The ALE currently supports three different interfaces: C++, Python, and OpenAI Gym.

ichigo

Ichigo is a local real-time voice AI tool that uses an early fusion technique to extend a text-based LLM to have native 'listening' ability. It is an open research experiment with improved multiturn capabilities and the ability to refuse processing inaudible queries. The tool is designed for open data, open weight, on-device Siri-like functionality, inspired by Meta's Chameleon paper. Ichigo offers a web UI demo and Gradio web UI for users to interact with the tool. It has achieved enhanced MMLU scores, stronger context handling, advanced noise management, and improved multi-turn capabilities for a robust user experience.

For similar jobs

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

openvino

OpenVINO™ is an open-source toolkit for optimizing and deploying AI inference. It provides a common API to deliver inference solutions on various platforms, including CPU, GPU, NPU, and heterogeneous devices. OpenVINO™ supports pre-trained models from Open Model Zoo and popular frameworks like TensorFlow, PyTorch, and ONNX. Key components of OpenVINO™ include the OpenVINO™ Runtime, plugins for different hardware devices, frontends for reading models from native framework formats, and the OpenVINO Model Converter (OVC) for adjusting models for optimal execution on target devices.

peft

PEFT (Parameter-Efficient Fine-Tuning) is a collection of state-of-the-art methods that enable efficient adaptation of large pretrained models to various downstream applications. By only fine-tuning a small number of extra model parameters instead of all the model's parameters, PEFT significantly decreases the computational and storage costs while achieving performance comparable to fully fine-tuned models.

jetson-generative-ai-playground

This repo hosts tutorial documentation for running generative AI models on NVIDIA Jetson devices. The documentation is auto-generated and hosted on GitHub Pages using their CI/CD feature to automatically generate/update the HTML documentation site upon new commits.

emgucv

Emgu CV is a cross-platform .Net wrapper for the OpenCV image-processing library. It allows OpenCV functions to be called from .NET compatible languages. The wrapper can be compiled by Visual Studio, Unity, and "dotnet" command, and it can run on Windows, Mac OS, Linux, iOS, and Android.

MMStar

MMStar is an elite vision-indispensable multi-modal benchmark comprising 1,500 challenge samples meticulously selected by humans. It addresses two key issues in current LLM evaluation: the unnecessary use of visual content in many samples and the existence of unintentional data leakage in LLM and LVLM training. MMStar evaluates 6 core capabilities across 18 detailed axes, ensuring a balanced distribution of samples across all dimensions.

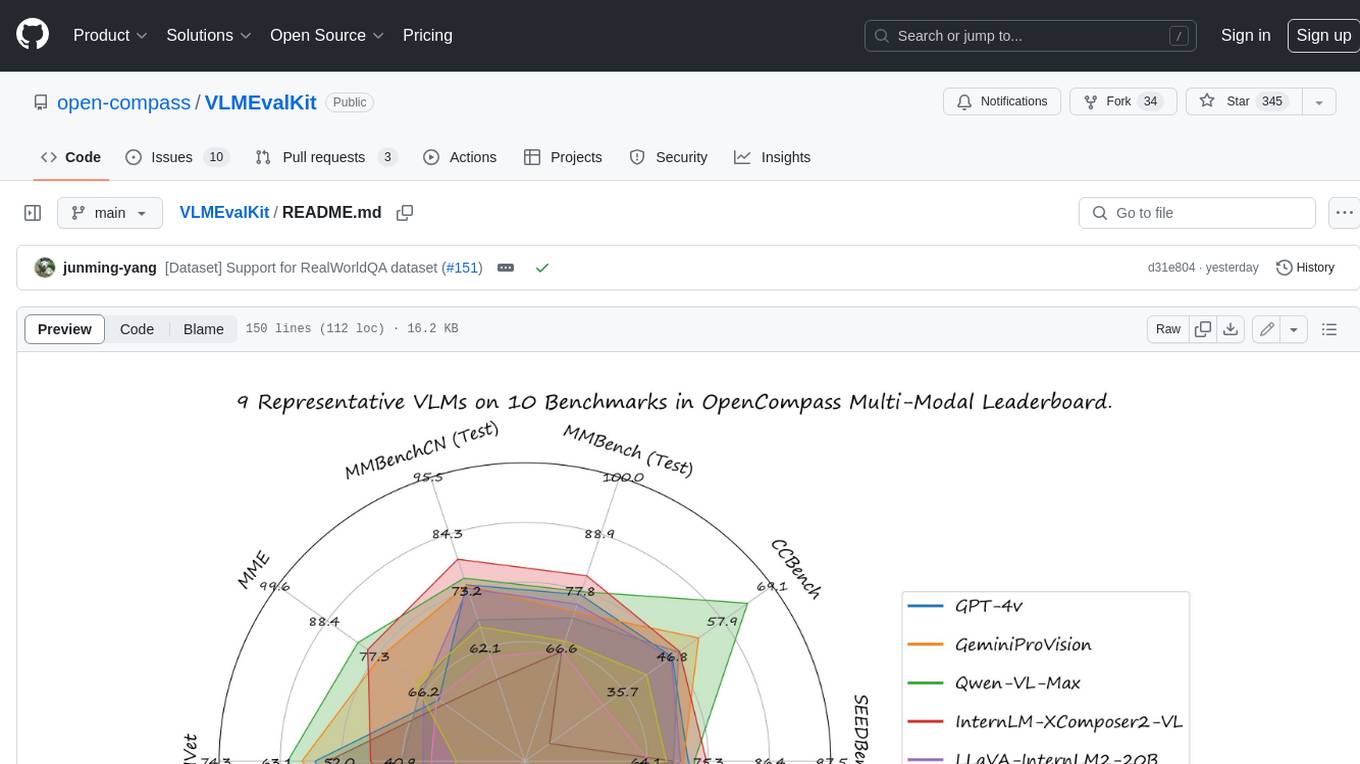

VLMEvalKit

VLMEvalKit is an open-source evaluation toolkit of large vision-language models (LVLMs). It enables one-command evaluation of LVLMs on various benchmarks, without the heavy workload of data preparation under multiple repositories. In VLMEvalKit, we adopt generation-based evaluation for all LVLMs, and provide the evaluation results obtained with both exact matching and LLM-based answer extraction.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.