Best AI tools for "Accelerate Deep Learning"

20 - AI Tool Sites

Cirrascale Cloud Services

Cirrascale Cloud Services is an AI tool that offers cloud solutions for Artificial Intelligence applications. The platform provides a range of cloud services and products tailored for AI innovation, including NVIDIA GPU Cloud, AMD Instinct Series Cloud, Qualcomm Cloud, Graphcore, Cerebras, and SambaNova. Cirrascale's AI Innovation Cloud enables users to test and deploy on leading AI accelerators in one cloud, democratizing AI by delivering high-performance AI compute and scalable deep learning solutions. The platform also offers professional and managed services, tailored multi-GPU server options, and high-throughput storage and networking solutions to accelerate development, training, and inference workloads.

4Quant

4Quant is an AI-powered medical imaging platform that uses Big Data and Deep Learning technology to accelerate the extraction of high-quality medical labels. The platform offers a range of tools for image analysis, annotation, and data analytics in the medical field. 4Quant aims to provide scalable solutions for medical imaging analysis, statistical reporting, and personalized training in image analysis. The platform is built on the Big Data framework Apache Spark and integrates with cloud computing for efficient processing of large datasets.

Edge AI and Vision Alliance

The Edge AI and Vision Alliance is a platform that provides practical technical insights and expert advice for developers building AI or vision-enabled products. It offers information on the latest vision, AI, and deep learning technologies, standards, market research, and applications. The Alliance aims to help users incorporate visual and artificial intelligence into their products effectively and efficiently.

Fifi.ai

Fifi.ai is a managed AI cloud platform that provides users with the infrastructure and tools to deploy and run AI models. The platform is designed to be easy to use, with a focus on plug-and-play functionality. Fifi.ai also offers a range of customization and fine-tuning options, allowing users to tailor the platform to their specific needs. The platform is supported by a team of experts who can provide assistance with onboarding, API integration, and troubleshooting.

Paperspace

Paperspace is an AI tool designed to develop, train, and deploy AI models of any size and complexity. It offers a cloud GPU platform for accelerated computing, with features such as GPU cloud workflows, machine learning solutions, GPU infrastructure, virtual desktops, gaming, rendering, 3D graphics, and simulation. Paperspace provides a seamless abstraction layer for individuals and organizations to focus on building AI applications, offering low-cost GPUs with per-second billing, infrastructure abstraction, job scheduling, resource provisioning, and collaboration tools.

Microsoft Tech Community

The Microsoft Tech Community is an online forum where users can connect with experts and peers to find answers, ask questions, build skills, and accelerate their digital transformation with the Microsoft Cloud. It offers a variety of resources, including discussions, blogs, events, and learning materials, on a wide range of topics related to Microsoft products and technologies.

Hailo

Hailo is a leading provider of high-performance edge AI processors for a variety of edge devices, offering generative AI accelerators, AI vision processors, and general-purpose AI accelerators. The company's technology enables high-performance deep learning applications on edge devices, serving industries such as automotive, security, industrial automation, retail, and personal computing.

Denvr DataWorks AI Cloud

Denvr DataWorks AI Cloud is a cloud-based AI platform that provides end-to-end AI solutions for businesses. It offers a range of features including high-performance GPUs, scalable infrastructure, ultra-efficient workflows, and cost efficiency. Denvr DataWorks is an NVIDIA Elite Partner for Compute, and its platform is used by leading AI companies to develop and deploy innovative AI solutions.

TractoAI

TractoAI is an AI platform that offers deep learning solutions for various industries. It provides batch inference with no rate limits and offline inference for DeepSeek models, and it helps users train open-source AI models. TractoAI simplifies training infrastructure setup, accelerates workflows with GPUs, and automates deployment and scaling for tasks such as ML training and big data processing. The platform supports model fine-tuning, sandboxed code execution, and building custom AI models with a distributed training launcher. It is developer-friendly, scalable, and efficient, and it offers a solution library and expert guidance for AI projects.

MetroMax Solutions

MetroMax Solutions is a leading provider of custom software, web, and app solutions. With a team of experienced developers and a focus on quality, MetroMax Solutions provides innovative technology-based products and services to help businesses accelerate their growth. The company offers a wide range of services, including BPO, technology services, digital marketing, virtual assistants, accounting, and pre-built technology accelerators.

HireLakeAI

HireLakeAI is an AI-powered platform that helps businesses with their hiring process. It uses AI to automate tasks such as resume screening, candidate matching, and interview scheduling. HireLakeAI also provides insights into candidate data, such as their skills, experience, and personality. This information can help businesses make better hiring decisions and improve their overall hiring process.

SambaNova Systems

SambaNova Systems offers an enterprise-grade, full-stack platform purpose-built for generative AI in the enterprise, covering everything from chips to models. It provides state-of-the-art AI and deep learning capabilities intended to help customers outcompete their peers. The platform includes the SN40L Full Stack Platform with 1T+ parameter models, Composition of Experts, and Samba Apps. SambaNova also offers resources to accelerate AI adoption, along with solutions for industries such as financial services, healthcare, and manufacturing.

FuriosaAI

FuriosaAI offers AI hardware: RNGD for LLMs and multimodal models, and WARBOY for computer vision. It provides a comprehensive developer experience through the Furiosa SDK, Model Zoo, and developer support. The company focuses on efficient AI inference, high-performance LLM and multimodal deployment, and sustainable mass adoption of AI. FuriosaAI's products feature the Tensor Contraction Processor architecture, software for streamlined LLM deployment, and robust ecosystem support. It aims to deliver powerful and efficient deep learning acceleration while ensuring future-proof programmability.

maya.ai

Crayon Data's maya.ai platform is an AI-led revenue acceleration platform for enterprises. It helps businesses unlock the value of data to increase customer engagement and revenue. The platform offers a range of capabilities, including data cleaning and enrichment, personalized recommendations, and plug-and-play APIs. Maya.ai has been used by leading global enterprises to achieve significant results, including increased revenue, improved customer engagement, and reduced time to market.

Codimite

Codimite is an AI-assisted offshore development company that provides a range of services to help businesses accelerate their software development, reduce costs, and drive innovation. Codimite's team of experienced engineers and project managers use AI-powered tools and technologies to deliver exceptional results for their clients. The company's services include AI-assisted software development, cloud modernization, and data and artificial intelligence solutions.

Anycores

Anycores is an AI tool designed to optimize the performance of deep neural networks and reduce the cost of running AI models in the cloud. Its platform provides automated tuning and inference consultation, a zoo of optimized networks, and tools for reducing AI model cost. Anycores focuses on faster execution, claiming to reduce inference time by more than 10x, and on reducing model footprint during deployment. It is device-agnostic, supporting NVIDIA and AMD GPUs; Intel, ARM, and AMD CPUs; servers; and edge devices. The tool aims to provide highly optimized, low-footprint networks tailored to specific deployment scenarios.

Gloat

Gloat is an Agile Workforce Operating System that harnesses AI-powered workforce agility to transform organizations into skill-driven powerhouses. It offers applications to accelerate business impact, build skills-based organizations, and enhance agility and speed to market. Gloat's platform enables companies to upskill and reskill at scale, restructure efficiently, accelerate innovation, retain and grow employees, and forecast capabilities needed for the future. Through deep-learning AI, Gloat's Workforce Graph provides actionable data and intelligence to support agile workforce operations.

Neural Concept

Neural Concept is an end-to-end platform for high-performance engineering teams, powered by a proprietary 3D AI core. It accelerates product development and innovation with 3D deep-learning and simulation capabilities. The platform works with various CAE and CAD software packages, offering 3D visual feedback, a collaborative environment, and LLM guidance to boost engineers' impact. Engineering companies use Neural Concept to design and deliver better products faster, with the company reporting that AI-designed products reach market up to 75% faster.

Embedl

Embedl is an AI tool that specializes in developing advanced solutions for efficient AI deployment in embedded systems. With a focus on deep learning optimization, Embedl offers a cost-effective solution that reduces energy consumption and accelerates product development cycles. The platform caters to industries such as automotive, aerospace, and IoT, providing cutting-edge AI products that drive innovation and competitive advantage.

Beacon Biosignals

Beacon Biosignals provides an EEG neurobiomarker platform that is designed to accelerate clinical trials and enable new treatments for patients with neurological and psychiatric diseases. Their platform is powered by machine learning and a world-class clinico-EEG database, which allows them to analyze existing EEG data for insights into mechanisms, PK/PD, and patient stratification. This information can be used to guide further development efforts, optimize clinical trials, and enhance understanding of treatment efficacy.

1 - Open Source AI Tools

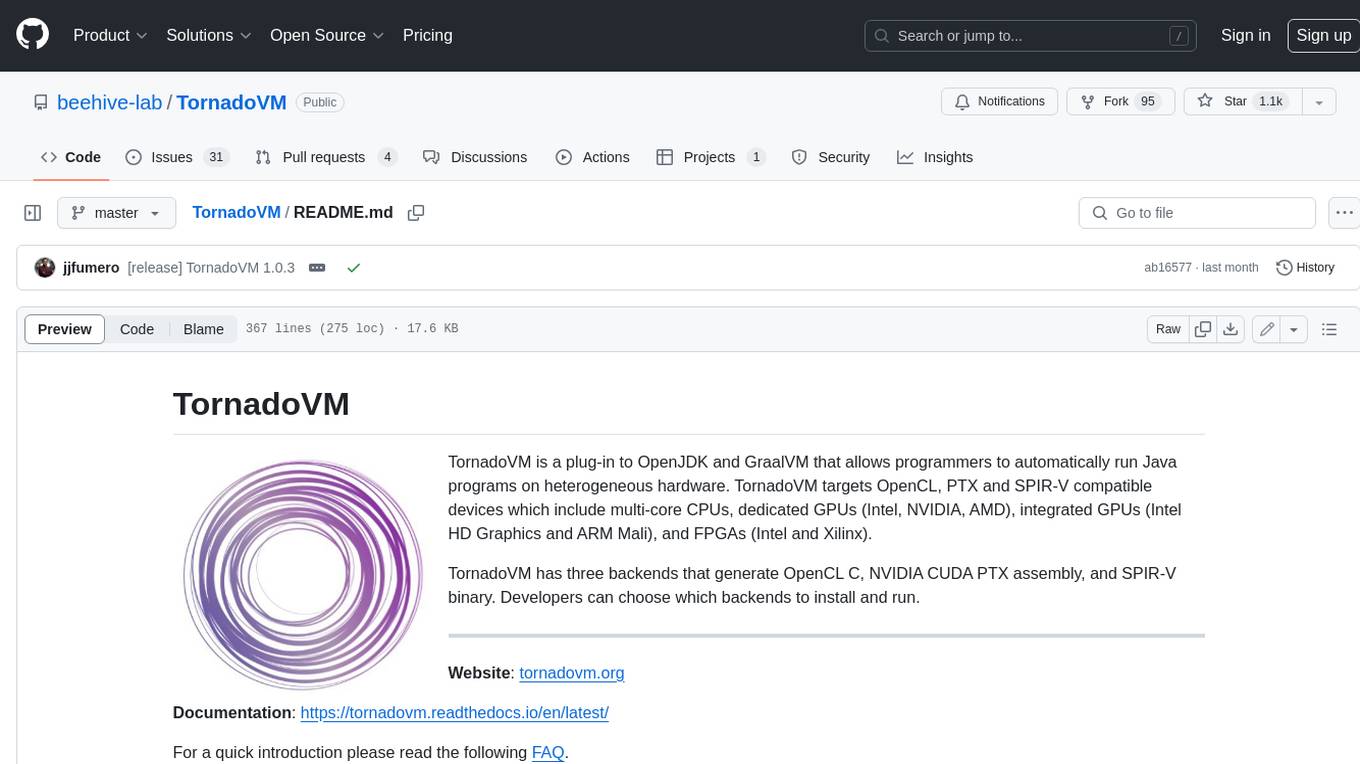

TornadoVM

TornadoVM is a plug-in to OpenJDK and GraalVM that allows programmers to automatically run Java programs on heterogeneous hardware. TornadoVM targets OpenCL, PTX and SPIR-V compatible devices which include multi-core CPUs, dedicated GPUs (Intel, NVIDIA, AMD), integrated GPUs (Intel HD Graphics and ARM Mali), and FPGAs (Intel and Xilinx).

7 - OpenAI GPTs

Material Tailwind GPT

Accelerate web app development with Material Tailwind GPT's components - 10x faster.

Tourist Language Accelerator

Accelerates the learning of key phrases and cultural norms for travelers in various languages.

Digital Entrepreneurship Accelerator Coach

The Go-To Coach for Aspiring Digital Entrepreneurs, Innovators, & Startups. Learn More at UnderdogInnovationInc.com.

24 Hour Startup Accelerator

A niche-focused startup guide that is humorous and strategic and simplifies ideas.

Backloger.ai - Product MVP Accelerator

Drop in any requirements or any text; I'll help you create an MVP with insights.

Digital Boost Lab

A guide for developing university-focused digital startup accelerator programs.