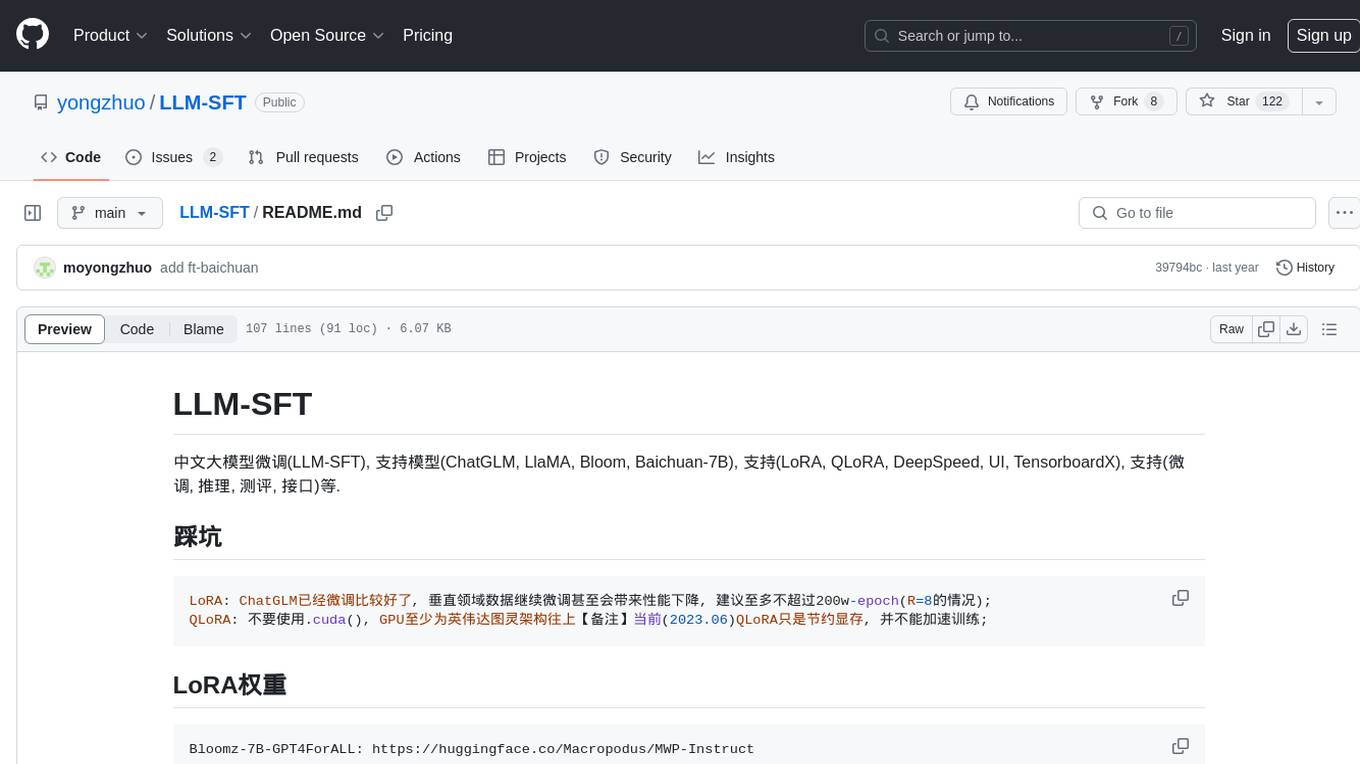

LLM-SFT

中文大模型微调(LLM-SFT), 数学指令数据集MWP-Instruct, 支持模型(ChatGLM-6B, LLaMA, Bloom-7B, baichuan-7B), 支持(LoRA, QLoRA, DeepSpeed, UI, TensorboardX), 支持(微调, 推理, 测评, 接口)等.

Stars: 122

LLM-SFT is a Chinese large model fine-tuning tool that supports models such as ChatGLM, LlaMA, Bloom, Baichuan-7B, and frameworks like LoRA, QLoRA, DeepSpeed, UI, and TensorboardX. It facilitates tasks like fine-tuning, inference, evaluation, and API integration. The tool provides pre-trained weights for various models and datasets for Chinese language processing. It requires specific versions of libraries like transformers and torch for different functionalities.

README:

中文大模型微调(LLM-SFT), 支持模型(ChatGLM, LlaMA, Bloom, Baichuan-7B), 支持(LoRA, QLoRA, DeepSpeed, UI, TensorboardX), 支持(微调, 推理, 测评, 接口)等.

LoRA: ChatGLM已经微调比较好了, 垂直领域数据继续微调甚至会带来性能下降, 建议至多不超过200w-epoch(R=8的情况);

QLoRA: 不要使用.cuda(), GPU至少为英伟达图灵架构往上【备注】当前(2023.06)QLoRA只是节约显存, 并不能加速训练;Bloomz-7B-GPT4ForALL: https://huggingface.co/Macropodus/MWP-Instruct

ChatGLM-6B-GPT4ForALL: https://huggingface.co/Macropodus/MWP-Instruct

LlaMA-7B-GPT4ForALL: https://huggingface.co/Macropodus/MWP-Instruct

ChatGLM-6B-MWP: https://huggingface.co/Macropodus/MWP-Instruct处理后的微调数据(多步计算+一/二元解方程)-MWP: https://huggingface.co/datasets/Macropodus/MWP-Instruct

- 大数加减乘除来自: https://github.com/liutiedong/goat.git

地址: llm_sft/ft_chatglm

配置: llm_sft/ft_chatglm/config.py

训练: python train.py

推理: python predict.py

验证: python evaluation.py

接口: python post_api.py

1.详见LLM-SFT/requirements.txt

transformers>=4.26.1

torch>=1.10.1

peft>=0.2.0

2.注意QLoRA需要的版本更高些, 详见LLM-SFT/llm_sft/ft_qlora/requirements.txt

transformers>=4.30.0.dev0

accelerate>=0.20.0.dev0

bitsandbytes>=0.39.0

peft>=0.4.0.dev0

torch>=1.13.1- https://huggingface.co/datasets/JosephusCheung/GuanacoDataset

- https://huggingface.co/datasets/shareAI/shareGPT_cn

- https://huggingface.co/datasets/Mutonix/RefGPT-Fact

- https://huggingface.co/datasets/BAAI/COIG

- https://github.com/Instruction-Tuning-with-GPT-4/GPT-4-LLM

- https://github.com/carbonz0/alpaca-chinese-dataset

- https://github.com/LianjiaTech/BELLE

- https://github.com/PhoebusSi/Alpaca-CoT

- https://github.com/Hello-SimpleAI/chatgpt-comparison-detection

- https://github.com/yangjianxin1/Firefly

- https://github.com/XueFuzhao/InstructionWild

- https://github.com/OpenLMLab/MOSS

- https://github.com/thu-coai/Safety-Prompts

- https://github.com/LAION-AI/Open-Assistant

- https://github.com/TigerResearch/TigerBot

- https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

- https://github.com/THUDM/ChatGLM-6B

- https://github.com/THUDM/GLM

- https://github.com/tatsu-lab/stanford_alpaca

- https://github.com/LianjiaTech/BELLE

- https://github.com/huggingface/peft

- https://github.com/mymusise/ChatGLM-Tuning

- https://github.com/huggingface/transformers

- https://github.com/bojone/bert4keras

- trl

- https://github.com/LYH-YF/MWPToolkit

- math23k

- https://github.com/ymcui/Chinese-LLaMA-Alpaca

- https://github.com/bigscience-workshop/petals

- https://github.com/facebookresearch/llama

- https://huggingface.co/spaces/multimodalart/ChatGLM-6B/tree/main

- https://huggingface.co/spaces/stabilityai/stablelm-tuned-alpha-chat/tree/main

- https://github.com/artidoro/qlora

- https://github.com/baichuan-inc/baichuan-7B

For citing this work, you can refer to the present GitHub project. For example, with BibTeX:

@misc{Keras-TextClassification,

howpublished = {\url{https://github.com/yongzhuo/LLM-SFT}},

title = {LLM-SFT},

author = {Yongzhuo Mo},

publisher = {GitHub},

year = {2023}

}

本项目相关资源仅供学术研究之用,严禁用于商业用途。 使用涉及第三方代码的部分时,请严格遵循相应的开源协议。模型生成的内容受模型计算、随机性和量化精度损失等因素影响,本项目不对其准确性作出保证。对于模型输出的任何内容,本项目不承担任何法律责任,亦不对因使用相关资源和输出结果而可能产生的任何损失承担责任。

- 大模型权重的详细协议见THUDM/chatglm-6b, bigscience/bloomz-7b1-mt, decapoda-research/llama-7b-hf

- 指令微调数据协议见GPT-4-LLM, LYH-YF/MWPToolkit, yangjianxin1/Firefly

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for LLM-SFT

Similar Open Source Tools

LLM-SFT

LLM-SFT is a Chinese large model fine-tuning tool that supports models such as ChatGLM, LlaMA, Bloom, Baichuan-7B, and frameworks like LoRA, QLoRA, DeepSpeed, UI, and TensorboardX. It facilitates tasks like fine-tuning, inference, evaluation, and API integration. The tool provides pre-trained weights for various models and datasets for Chinese language processing. It requires specific versions of libraries like transformers and torch for different functionalities.

AI-Assistant-ChatGPT

AI Assistant ChatGPT is a web client tool that allows users to create or chat using ChatGPT or Claude. It enables generating long texts and conversations with efficient control over quality and content direction. The tool supports customization of reverse proxy address, conversation management, content editing, markdown document export, JSON backup, context customization, session-topic management, role customization, dynamic content navigation, and more. Users can access the tool directly at https://eaias.com or deploy it independently. It offers features for dialogue management, assistant configuration, session configuration, and more. The tool lacks data cloud storage and synchronization but provides guidelines for independent deployment. It is a frontend project that can be deployed using Cloudflare Pages and customized with backend modifications. The project is open-source under the MIT license.

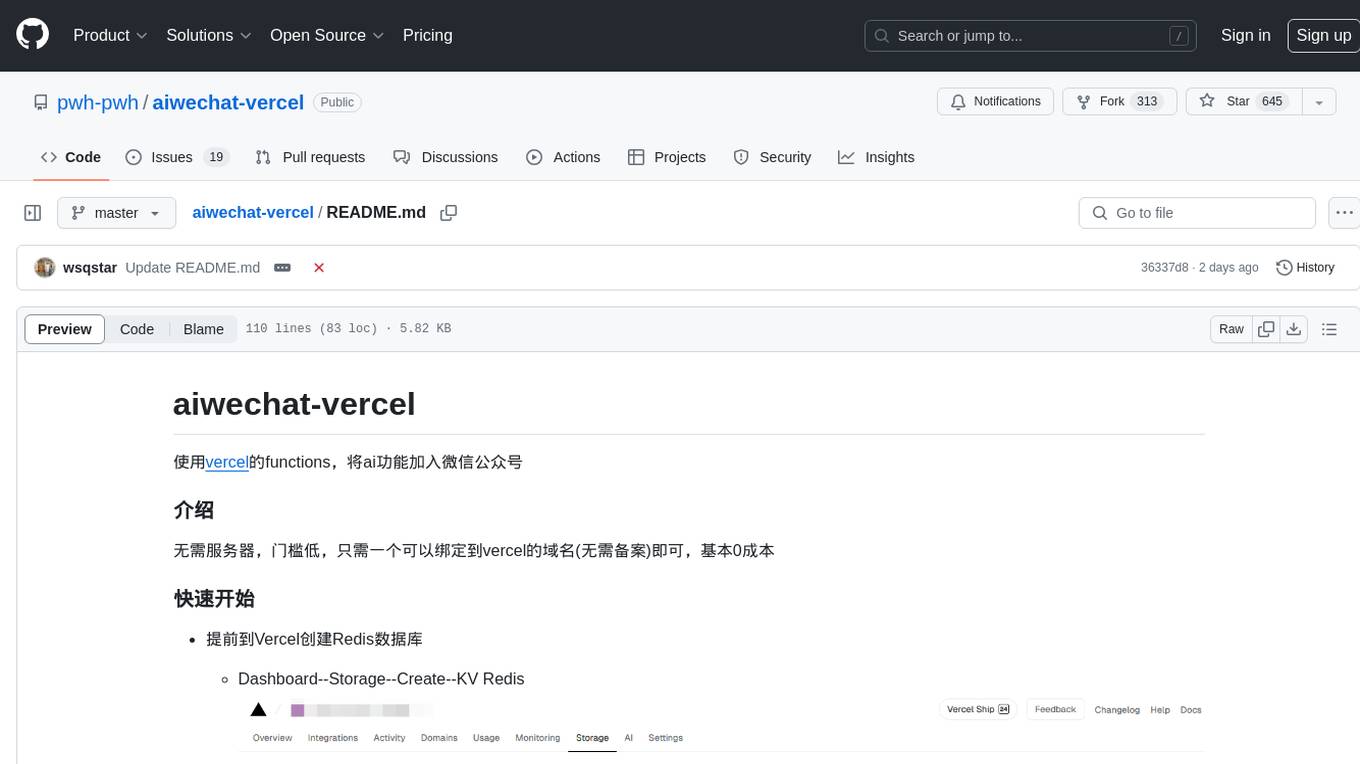

aiwechat-vercel

aiwechat-vercel is a tool that integrates AI capabilities into WeChat public accounts using Vercel functions. It requires minimal server setup, low entry barriers, and only needs a domain name that can be bound to Vercel, with almost zero cost. The tool supports various AI models, continuous Q&A sessions, chat functionality, system prompts, and custom commands. It aims to provide a platform for learning and experimentation with AI integration in WeChat public accounts.

Embodied-AI-Guide

Embodied-AI-Guide is a comprehensive guide for beginners to understand Embodied AI, focusing on the path of entry and useful information in the field. It covers topics such as Reinforcement Learning, Imitation Learning, Large Language Model for Robotics, 3D Vision, Control, Benchmarks, and provides resources for building cognitive understanding. The repository aims to help newcomers quickly establish knowledge in the field of Embodied AI.

AI-Translation-Assistant-Pro

AI Translation Assistant Pro is a powerful AI-driven platform for multilingual translation and content processing. It offers features such as text translation, image recognition, PDF processing, speech recognition, and video processing. The platform includes a subscription system with different membership levels, user management functionalities, quota management, and real-time usage statistics. It utilizes technologies like Next.js, React, TypeScript for the frontend, Node.js, PostgreSQL for the backend, NextAuth.js for authentication, Stripe for payments, and integrates with cloud services like Aliyun OSS and Tencent Cloud for AI services.

StarWhisper

StarWhisper is a multi-modal model repository developed under the support of the National Astronomical Observatory-Zhijiang Laboratory. It includes language models, temporal models, and multi-modal models ranging from 7B to 72B. The repository provides pre-trained models and technical reports for tasks such as pulsar identification, light curve classification, and telescope control. It aims to integrate astronomical knowledge using large models and explore the possibilities of solving specific astronomical problems through multi-modal approaches.

hdu-cs-wiki

The HDU Computer Science Lecture Notes is a comprehensive guide designed to help students navigate through various challenges in the field of computer science. It covers topics such as programming languages, artificial intelligence, software development, and more. The notes provide insights on how to effectively utilize university time, balance grades with project experience, and make informed decisions regarding career paths. Created by a collaborative effort involving students, teachers, and industry experts, the lecture notes aim to serve as a guiding tool for individuals seeking guidance in the computer science domain.

Avalonia-Assistant

Avalonia-Assistant is an open-source desktop intelligent assistant that aims to provide a user-friendly interactive experience based on the Avalonia UI framework and the integration of Semantic Kernel with OpenAI or other large LLM models. By utilizing Avalonia-Assistant, you can perform various desktop operations through text or voice commands, enhancing your productivity and daily office experience.

agentica

Agentica is a human-centric framework for building large language model agents. It provides functionalities for planning, memory management, tool usage, and supports features like reflection, planning and execution, RAG, multi-agent, multi-role, and workflow. The tool allows users to quickly code and orchestrate agents, customize prompts, and make API calls to various services. It supports API calls to OpenAI, Azure, Deepseek, Moonshot, Claude, Ollama, and Together. Agentica aims to simplify the process of building AI agents by providing a user-friendly interface and a range of functionalities for agent development.

LearnPrompt

LearnPrompt is a permanent, free, open-source AIGC course platform that currently supports various tools like ChatGPT, Agent, Midjourney, Runway, Stable Diffusion, AI digital humans, AI voice & music, and large model fine-tuning. The platform offers features such as multilingual support, comment sections, daily selections, and submissions. Users can explore different modules, including sound cloning, RAG, GPT-SoVits, and OpenAI Sora world model. The platform aims to continuously update and provide tutorials, examples, and knowledge systems related to AI technologies.

lmdeploy

LMDeploy is a toolkit for compressing, deploying, and serving LLM, developed by the MMRazor and MMDeploy teams. It has the following core features: * **Efficient Inference** : LMDeploy delivers up to 1.8x higher request throughput than vLLM, by introducing key features like persistent batch(a.k.a. continuous batching), blocked KV cache, dynamic split&fuse, tensor parallelism, high-performance CUDA kernels and so on. * **Effective Quantization** : LMDeploy supports weight-only and k/v quantization, and the 4-bit inference performance is 2.4x higher than FP16. The quantization quality has been confirmed via OpenCompass evaluation. * **Effortless Distribution Server** : Leveraging the request distribution service, LMDeploy facilitates an easy and efficient deployment of multi-model services across multiple machines and cards. * **Interactive Inference Mode** : By caching the k/v of attention during multi-round dialogue processes, the engine remembers dialogue history, thus avoiding repetitive processing of historical sessions.

agents-flex

Agents-Flex is a LLM Application Framework like LangChain base on Java. It provides a set of tools and components for building LLM applications, including LLM Visit, Prompt and Prompt Template Loader, Function Calling Definer, Invoker and Running, Memory, Embedding, Vector Storage, Resource Loaders, Document, Splitter, Loader, Parser, LLMs Chain, and Agents Chain.

promptMinder

PromptMinder is a professional prompt word management platform that simplifies and enhances AI prompt word management. It features prompt word version control with support for version tracking and history viewing, diff comparison similar to Git for quick identification of prompt word updates, customizable tagging for quick categorization and retrieval, support for private and public prompt words, integration of AI models for intelligent prompt word generation, team collaboration with team creation, member management, and permission control, community contribution feature with audit and publishing process. The platform also offers a responsive design for mobile devices, internationalization support for Chinese and English languages, modern interface based on Shadcn UI, intelligent search and filtering functionality, and convenient copy and share features. It is built for high performance using Next.js 16 + React 19, with security authentication provided by Clerk, reliable storage using Supabase + PostgreSQL database, and easy deployment supporting Vercel and Zeabur one-click deployment.

AI-LLM-ML-CS-Quant-Overview

AI-LLM-ML-CS-Quant-Overview is a repository providing overview notes on AI, Large Language Models (LLM), Machine Learning (ML), Computer Science (CS), and Quantitative Finance. It covers various topics such as LangGraph & Cursor AI, DeepSeek, MoE (Mixture of Experts), NVIDIA GTC, LLM Essentials, System Design, Computer Systems, Big Data and AI in Finance, Econometrics and Statistics Conference, C++ Design Patterns and Derivatives Pricing, High-Frequency Finance, Machine Learning for Algorithmic Trading, Stochastic Volatility Modeling, Quant Job Interview Questions, Distributed Systems, Language Models, Designing Machine Learning Systems, Designing Data-Intensive Applications (DDIA), Distributed Machine Learning, and The Elements of Quantitative Investing.

paper-ai

Paper-ai is a tool that helps you write papers using artificial intelligence. It provides features such as AI writing assistance, reference searching, and editing and formatting tools. With Paper-ai, you can quickly and easily create high-quality papers.

jd_scripts

jd_scripts is a repository containing scripts for automating tasks related to JD (Jingdong) platform. The scripts provide functionalities such as asset notifications, account management, and task automation for various JD services. Users can utilize these scripts to streamline their interactions with the JD platform, receive asset notifications, and perform tasks efficiently.

For similar tasks

Co-LLM-Agents

This repository contains code for building cooperative embodied agents modularly with large language models. The agents are trained to perform tasks in two different environments: ThreeDWorld Multi-Agent Transport (TDW-MAT) and Communicative Watch-And-Help (C-WAH). TDW-MAT is a multi-agent environment where agents must transport objects to a goal position using containers. C-WAH is an extension of the Watch-And-Help challenge, which enables agents to send messages to each other. The code in this repository can be used to train agents to perform tasks in both of these environments.

GPT4Point

GPT4Point is a unified framework for point-language understanding and generation. It aligns 3D point clouds with language, providing a comprehensive solution for tasks such as 3D captioning and controlled 3D generation. The project includes an automated point-language dataset annotation engine, a novel object-level point cloud benchmark, and a 3D multi-modality model. Users can train and evaluate models using the provided code and datasets, with a focus on improving models' understanding capabilities and facilitating the generation of 3D objects.

asreview

The ASReview project implements active learning for systematic reviews, utilizing AI-aided pipelines to assist in finding relevant texts for search tasks. It accelerates the screening of textual data with minimal human input, saving time and increasing output quality. The software offers three modes: Oracle for interactive screening, Exploration for teaching purposes, and Simulation for evaluating active learning models. ASReview LAB is designed to support decision-making in any discipline or industry by improving efficiency and transparency in screening large amounts of textual data.

Groma

Groma is a grounded multimodal assistant that excels in region understanding and visual grounding. It can process user-defined region inputs and generate contextually grounded long-form responses. The tool presents a unique paradigm for multimodal large language models, focusing on visual tokenization for localization. Groma achieves state-of-the-art performance in referring expression comprehension benchmarks. The tool provides pretrained model weights and instructions for data preparation, training, inference, and evaluation. Users can customize training by starting from intermediate checkpoints. Groma is designed to handle tasks related to detection pretraining, alignment pretraining, instruction finetuning, instruction following, and more.

amber-train

Amber is the first model in the LLM360 family, an initiative for comprehensive and fully open-sourced LLMs. It is a 7B English language model with the LLaMA architecture. The model type is a language model with the same architecture as LLaMA-7B. It is licensed under Apache 2.0. The resources available include training code, data preparation, metrics, and fully processed Amber pretraining data. The model has been trained on various datasets like Arxiv, Book, C4, Refined-Web, StarCoder, StackExchange, and Wikipedia. The hyperparameters include a total of 6.7B parameters, hidden size of 4096, intermediate size of 11008, 32 attention heads, 32 hidden layers, RMSNorm ε of 1e^-6, max sequence length of 2048, and a vocabulary size of 32000.

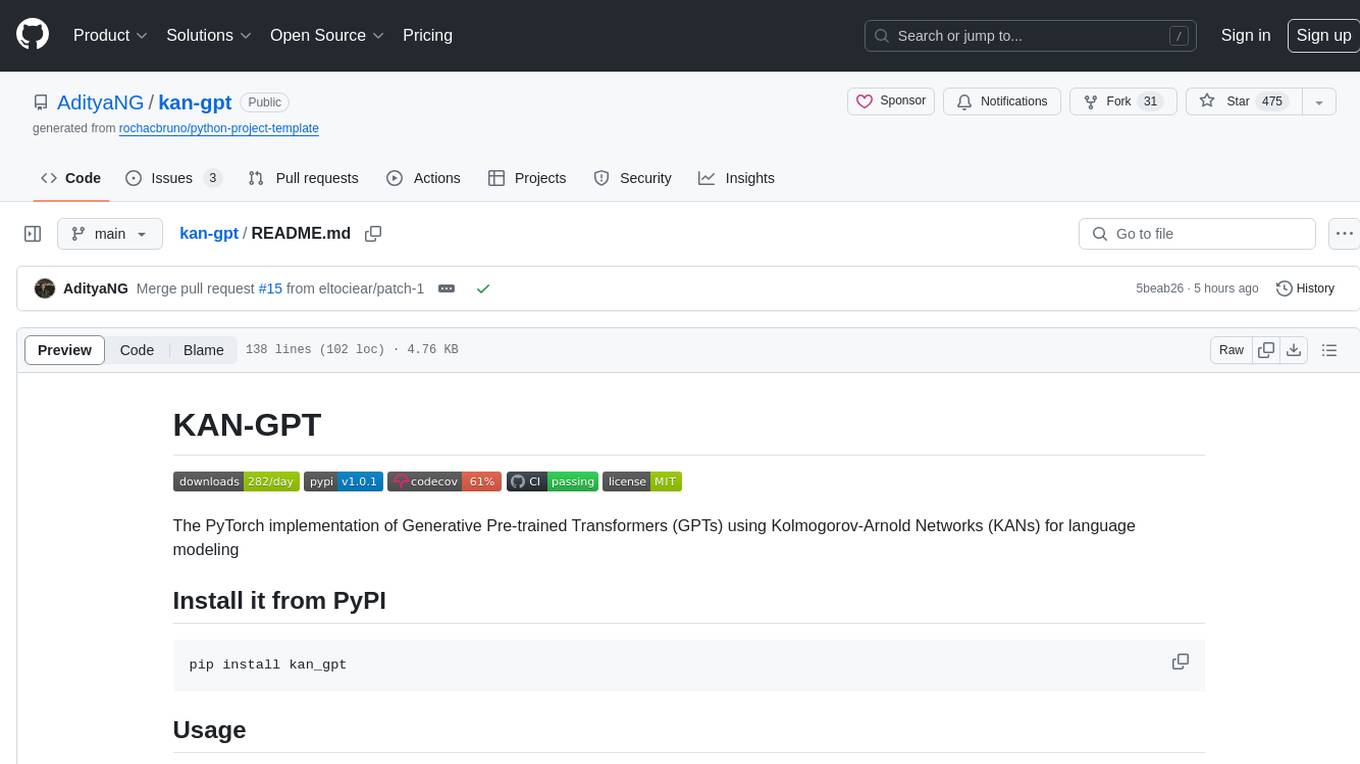

kan-gpt

The KAN-GPT repository is a PyTorch implementation of Generative Pre-trained Transformers (GPTs) using Kolmogorov-Arnold Networks (KANs) for language modeling. It provides a model for generating text based on prompts, with a focus on improving performance compared to traditional MLP-GPT models. The repository includes scripts for training the model, downloading datasets, and evaluating model performance. Development tasks include integrating with other libraries, testing, and documentation.

LLM-SFT

LLM-SFT is a Chinese large model fine-tuning tool that supports models such as ChatGLM, LlaMA, Bloom, Baichuan-7B, and frameworks like LoRA, QLoRA, DeepSpeed, UI, and TensorboardX. It facilitates tasks like fine-tuning, inference, evaluation, and API integration. The tool provides pre-trained weights for various models and datasets for Chinese language processing. It requires specific versions of libraries like transformers and torch for different functionalities.

zshot

Zshot is a highly customizable framework for performing Zero and Few shot named entity and relationships recognition. It can be used for mentions extraction, wikification, zero and few shot named entity recognition, zero and few shot named relationship recognition, and visualization of zero-shot NER and RE extraction. The framework consists of two main components: the mentions extractor and the linker. There are multiple mentions extractors and linkers available, each serving a specific purpose. Zshot also includes a relations extractor and a knowledge extractor for extracting relations among entities and performing entity classification. The tool requires Python 3.6+ and dependencies like spacy, torch, transformers, evaluate, and datasets for evaluation over datasets like OntoNotes. Optional dependencies include flair and blink for additional functionalities. Zshot provides examples, tutorials, and evaluation methods to assess the performance of the components.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.