sparrow

Structured data extraction and instruction calling with ML, LLM and Vision LLM

Stars: 5001

Sparrow is an open-source solution for extracting and processing data from documents and images. It handles forms, invoices, receipts, and other unstructured data sources. Sparrow has a modular architecture that offers independent services and pipelines, each optimized for robust performance.

A key feature of Sparrow is its pluggable architecture: you can integrate and run data extraction pipelines using tools and frameworks such as LlamaIndex, Haystack, or Unstructured. Sparrow supports local LLM data extraction pipelines through Ollama or Apple MLX. The Sparrow API processes and transforms your data into structured output, ready to be integrated with custom workflows.

Sparrow Agents: with Sparrow you can build independent LLM agents and invoke them from your system through the API.

**List of available agents:**

* **llamaindex** - RAG pipeline with LlamaIndex for PDF processing

* **vllamaindex** - RAG pipeline with LlamaIndex multimodal for image processing

* **vprocessor** - RAG pipeline with OCR and LlamaIndex for image processing

* **haystack** - RAG pipeline with Haystack for PDF processing

* **fcall** - Function call pipeline

* **unstructured-light** - RAG pipeline with Unstructured and LangChain, supports PDF and image processing

* **unstructured** - RAG pipeline with Weaviate vector DB query, Unstructured and LangChain, supports PDF and image processing

* **instructor** - RAG pipeline with Unstructured and Instructor libraries, supports PDF and image processing. Works well for JSON response generation

README:


🚀 Try Sparrow Online | 📖 Quick Start | 🛠️ Installation | 📚 Examples | 🤖 Agents

Interactive web interface for document processing.

Visit sparrow.katanaml.io for a live demo running on a Mac Mini M4 Pro.

- Drag & Drop: Upload documents directly

- Real-time Processing: See results instantly

- Data Query: JSON-based schema for querying data

- Structured Output: JSON structured output

- Result Annotation: View bounding boxes

- ✨ Key Features

- 🏗️ Architecture

- 🚀 Quickstart

- 🛠️ Installation

- 📚 Examples

- 💻 CLI Usage

- 🌐 API Usage

- 🤖 Sparrow Agent

- 📊 Dashboard

- 🔧 Pipeline Comparison

- ⚡ Performance Tips

- 🔍 Troubleshooting

- ⭐ Star History

- 📜 License

🎯 Universal Document Processing: Handle invoices, receipts, forms, bank statements, tables

🔧 Pluggable Architecture: Mix and match different pipelines (Sparrow Parse, Instructor, Agents)

🖥️ Multiple Backends: MLX (Apple Silicon), Ollama, vLLM, Docker, Hugging Face Cloud GPU

📱 Multi-format Support: Images (PNG, JPG) and multi-page PDFs

🎨 Schema Validation: JSON schema-based extraction with automatic validation

🌐 API-First Design: RESTful APIs for easy integration

💬 Instruction Calling: Beyond document extraction - text processing, validation, decision making

📊 Visual Monitoring: Built-in dashboard and agent workflow tracking

🔒 Enterprise Ready: Rate limiting, usage analytics, commercial licensing available

🚀 Local Vision LLMs: Mistral Small, QwenVL, olmOCR, Gemma3

| Component | Purpose | Use Case |

|---|---|---|

| Sparrow ML LLM | Main API engine | Document processing pipelines |

| Sparrow Parse | Vision LLM library | Structured JSON extraction |

| Sparrow Agents | Workflow orchestration | Complex multi-step processing |

| Sparrow OCR | Text recognition | OCR preprocessing |

| Sparrow UI | Web interface | Interactive document processing |

- Python 3.12.10+ (use pyenv for version management)

- macOS (for MLX backend) or Linux/Windows (for other backends)

- GPU (make sure the GPU has enough memory to run the selected Vision LLM)

# 1. Install pyenv and Python 3.12.10

pyenv install 3.12.10

pyenv global 3.12.10

# 2. Create virtual environment

python -m venv .env_sparrow_parse

source .env_sparrow_parse/bin/activate # Linux/Mac

# or .env_sparrow_parse\Scripts\activate # Windows

# 3. Install Sparrow Parse pipeline

git clone https://github.com/katanaml/sparrow.git

cd sparrow/sparrow-ml/llm

pip install -r requirements_sparrow_parse.txt

# 4. For macOS: Install poppler for PDF processing

brew install poppler

# 5. Start the API server

python api.py

Before running pip install -r requirements_sparrow_parse.txt, check your platform. If you are on macOS and want to run the MLX backend, open requirements_sparrow_parse.txt and make sure the sparrow-parse[mlx] library reference is defined. If you are running Sparrow on Linux/Windows, use the sparrow-parse library reference instead; this skips the MLX-related libraries.

# Extract data from a bonds table

./sparrow.sh '[{"instrument_name":"str", "valuation":0}]' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "data/bonds_table.png"

Result:

{

"data": [

{"instrument_name": "UNITS BLACKROCK...", "valuation": 19049},

{"instrument_name": "UNITS ISHARES...", "valuation": 83488}

],

"valid": "true"

}

Use --options mlx for the MLX backend or --options ollama for the Ollama backend. Provide the correct Vision LLM model name, and download the model separately with MLX or Ollama first.
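The query above doubles as a type template: "str" marks a text field and 0 marks a numeric one. The following is a minimal client-side sketch of checking returned rows against such a template. The helper names (`matches_placeholder`, `check_rows`) are hypothetical, the placeholder semantics are an assumption inferred from the examples in this README, and this is not Sparrow's own validation code.

```python
import json


# Hypothetical helper, not part of Sparrow: compares one value with a
# placeholder taken from the query ("str", 0, 0.0, "str or null").
def matches_placeholder(value, placeholder):
    if isinstance(placeholder, str):
        allow_null = placeholder.endswith(" or null")
        if value is None:
            return allow_null
        return isinstance(value, str)
    if isinstance(placeholder, bool):
        return isinstance(value, bool)
    if isinstance(placeholder, (int, float)):
        # Assumption: any JSON number satisfies a numeric placeholder.
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    return False


def check_rows(query, rows):
    """Return (row_index, field) pairs that are missing or mistyped."""
    template = json.loads(query)[0]  # the query is a one-element list
    problems = []
    for index, row in enumerate(rows):
        for field, placeholder in template.items():
            if field not in row or not matches_placeholder(row[field], placeholder):
                problems.append((index, field))
    return problems


query = '[{"instrument_name":"str", "valuation":0}]'
response = {
    "data": [
        {"instrument_name": "UNITS BLACKROCK...", "valuation": 19049},
        {"instrument_name": "UNITS ISHARES...", "valuation": 83488},
    ],
    "valid": "true",
}
print(check_rows(query, response["data"]))  # prints []
```

An empty list means every row carries every field from the query with a compatible type. `json.loads` also raises an error early if the query itself is not valid JSON.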

# 1. Clone repository

git clone https://github.com/katanaml/sparrow.git

cd sparrow

📖 For complete installation instructions, see our detailed environment setup guide.

- Python Environment: Install Python 3.12.10 using pyenv

- Virtual Environments: Create separate environments for different pipelines:

  - .env_sparrow_parse - for Sparrow Parse (Vision LLM)

  - .env_instructor - for Instructor (Text LLM)

  - .env_ocr - for OCR service (optional)

- System Dependencies: Install poppler for PDF processing

- Requirements: Install pipeline-specific dependencies, for example:

pip install -r requirements_sparrow_parse.txt

macOS:

brew install poppler # Required for PDF processing

Ubuntu/Debian:

sudo apt-get install poppler-utils libpoppler-cpp-dev

Apple Silicon: MLX backend available for optimal performance

NVIDIA/AMD GPU: Use Ollama backend

CPU Only: Use smaller models or Hugging Face cloud backend

# Test installation

python api.py --port 8002

# Visit http://localhost:8002/api/v1/sparrow-llm/docs

# Extract all data from bank statement

./sparrow.sh "*" \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "data/bank_statement.pdf"

📄 View Complete JSON Output

{

"bank": "First Platypus Bank",

"address": "1234 Kings St., New York, NY 12123",

"account_holder": "Mary G. Orta",

"account_number": "1234567890123",

"statement_date": "3/1/2022",

"period_covered": "2/1/2022 - 3/1/2022",

"account_summary": {

"balance_on_march_1": "$25,032.23",

"total_money_in": "$10,234.23",

"total_money_out": "$10,532.51"

},

"transactions": [

{

"date": "02/01",

"description": "PGD EasyPay Debit",

"withdrawal": "203.24",

"deposit": "",

"balance": "22,098.23"

},

{

"date": "02/02",

"description": "AB&B Online Payment*****",

"withdrawal": "71.23",

"deposit": "",

"balance": "22,027.00"

},

{

"date": "02/04",

"description": "Check No. 2345",

"withdrawal": "",

"deposit": "450.00",

"balance": "22,477.00"

},

{

"date": "02/05",

"description": "Payroll Direct Dep 23422342 Giants",

"withdrawal": "",

"deposit": "2,534.65",

"balance": "25,011.65"

},

{

"date": "02/06",

"description": "Signature POS Debit - TJP",

"withdrawal": "84.50",

"deposit": "",

"balance": "24,927.15"

},

{

"date": "02/07",

"description": "Check No. 234",

"withdrawal": "1,400.00",

"deposit": "",

"balance": "23,527.15"

},

{

"date": "02/08",

"description": "Check No. 342",

"withdrawal": "",

"deposit": "25.00",

"balance": "23,552.15"

},

{

"date": "02/09",

"description": "FPB AutoPay***** Credit Card",

"withdrawal": "456.02",

"deposit": "",

"balance": "23,096.13"

},

{

"date": "02/08",

"description": "Check No. 123",

"withdrawal": "",

"deposit": "25.00",

"balance": "23,552.15"

},

{

"date": "02/09",

"description": "FPB AutoPay***** Credit Card",

"withdrawal": "156.02",

"deposit": "",

"balance": "23,096.13"

},

{

"date": "02/08",

"description": "Cash Deposit",

"withdrawal": "",

"deposit": "25.00",

"balance": "23,552.15"

}

],

"valid": "true"

}

# Extract structured data from financial table

./sparrow.sh '[{"instrument_name":"str", "valuation":0}]' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "data/bonds_table.png"

📄 View JSON Output

{

"data": [

{

"instrument_name": "UNITS BLACKROCK FIX INC DUB FDS PLC ISHS EUR INV GRD CP BD IDX/INST/E",

"valuation": 19049

},

{

"instrument_name": "UNITS ISHARES III PLC CORE EUR GOVT BOND UCITS ETF/EUR",

"valuation": 83488

},

{

"instrument_name": "UNITS ISHARES III PLC EUR CORP BOND 1-5YR UCITS ETF/EUR",

"valuation": 213030

},

{

"instrument_name": "UNIT ISHARES VI PLC/JP MORGAN USD E BOND EUR HED UCITS ETF DIST/HDGD/",

"valuation": 32774

},

{

"instrument_name": "UNITS XTRACKERS II SICAV/EUR HY CORP BOND UCITS ETF/-1D-/DISTR.",

"valuation": 23643

}

],

"valid": "true"

}

# Extract invoice with cropping for better accuracy

./sparrow.sh "*" \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--crop-size 60 \

--file-path "data/invoice.pdf"

📄 View Complete JSON Output

{

"invoice_number": "61356291",

"date_of_issue": "09/06/2012",

"seller": {

"name": "Chapman, Kim and Green",

"address": "64731 James Branch, Smithmouth, NC 26872",

"tax_id": "949-84-9105",

"iban": "GB50ACIE59715038217063"

},

"client": {

"name": "Rodriguez-Stevens",

"address": "2280 Angela Plain, Hortonshire, MS 93248",

"tax_id": "939-98-8477"

},

"items": [

{

"description": "Wine Glasses Goblets Pair Clear",

"quantity": 5,

"unit": "each",

"net_price": 12.0,

"net_worth": 60.0,

"vat_percentage": 10,

"gross_worth": 66.0

},

{

"description": "With Hooks Stemware Storage Multiple Uses Iron Wine Rack Hanging",

"quantity": 4,

"unit": "each",

"net_price": 28.08,

"net_worth": 112.32,

"vat_percentage": 10,

"gross_worth": 123.55

},

{

"description": "Replacement Corkscrew Parts Spiral Worm Wine Opener Bottle Houdini",

"quantity": 1,

"unit": "each",

"net_price": 7.5,

"net_worth": 7.5,

"vat_percentage": 10,

"gross_worth": 8.25

},

{

"description": "HOME ESSENTIALS GRADIENT STEMLESS WINE GLASSES SET OF 4 20 FL OZ (591 ml) NEW",

"quantity": 1,

"unit": "each",

"net_price": 12.99,

"net_worth": 12.99,

"vat_percentage": 10,

"gross_worth": 14.29

}

],

"summary": {

"total_net_worth": 192.81,

"total_vat": 19.28,

"total_gross_worth": 212.09

}

}

# Process multi-page PDF with structured output per page

./sparrow.sh '{"table": [{"description": "str", "latest_amount": 0, "previous_amount": 0}]}' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "data/financial_report.pdf" \

--debug-dir "debug/"

📄 View JSON Output

[

{

"table": [

{

"description": "Revenues",

"latest_amount": 12453,

"previous_amount": 11445

},

{

"description": "Operating expenses",

"latest_amount": 9157,

"previous_amount": 8822

}

],

"valid": "true",

"page": 1

},

{

"table": [

{

"description": "Revenues",

"latest_amount": 12453,

"previous_amount": 11445

},

{

"description": "Operating expenses",

"latest_amount": 9157,

"previous_amount": 8822

}

],

"valid": "true",

"page": 2

}

]

# Instruction-based processing

./sparrow.sh "instruction: do arithmetic operation, payload: 2+2=" \

--pipeline "sparrow-instructor" \

--options mlx \

--options lmstudio-community/Mistral-Small-3.2-24B-Instruct-2506-8bit

# Instruction processing with document input

./sparrow.sh "check if business entity Chapman, Kim and Green is invoice issuing party" \

--pipeline "sparrow-parse" \

--instruction \

--options mlx --options lmstudio-community/Mistral-Small-3.2-24B-Instruct-2506-8bit \

--file-path "invoice_1.jpg"

JSON Output:

The result of 2 + 2 is:

4

# Function calling example

./sparrow.sh assistant --pipeline "stocks" --query "Oracle"

JSON Output:

{

"company": "Oracle Corporation",

"ticker": "ORCL"

}

Additional Output:

The stock price of the Oracle Corporation is 186.3699951171875. USD

./sparrow.sh "<JSON_SCHEMA>" --pipeline "<PIPELINE>" [OPTIONS] --file-path "<FILE>"

| Argument | Type | Description | Example |

|---|---|---|---|

| query | JSON/String | Schema or instruction | '[{"field":"str"}]' |

| --pipeline | String | Pipeline to use | sparrow-parse |

| --file-path | Path | Input document | data/invoice.pdf |

| --options | String | Backend configuration | mlx,model-name |

| --instruction | Boolean | Sparrow query will be used as instruction | --instruction |

| --validation | Boolean | Sparrow query will be used for field validation | --validation |

| --crop-size | Integer | Border cropping pixels | 60 |

| --page-type | String | Page classification | financial_table |

| --debug | Boolean | Enable debug mode | --debug |

| --debug-dir | Path | Debug output folder | ./debug/ |
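When sparrow.sh is driven from a script, the arguments listed above can be assembled as a list so that the JSON query never needs manual shell quoting. The sketch below is an illustration only: `build_sparrow_command` is a hypothetical helper, not part of Sparrow, and it assumes that repeated values are passed by repeating the flag, as the CLI examples in this README do.

```python
import shlex


# Hypothetical helper, not part of Sparrow: builds the argument list for
# ./sparrow.sh from the flags documented above.
def build_sparrow_command(query, pipeline, options=(), file_path=None,
                          crop_size=None, page_types=(), debug_dir=None,
                          instruction=False, validation=False):
    args = ["./sparrow.sh", query, "--pipeline", pipeline]
    if instruction:
        args.append("--instruction")
    if validation:
        args.append("--validation")
    for option in options:  # --options is repeated once per value
        args += ["--options", option]
    for page_type in page_types:  # --page-type may also be repeated
        args += ["--page-type", page_type]
    if crop_size is not None:
        args += ["--crop-size", str(crop_size)]
    if debug_dir is not None:
        args += ["--debug-dir", debug_dir]
    if file_path is not None:
        args += ["--file-path", file_path]
    return args


command = build_sparrow_command(
    '[{"instrument_name":"str", "valuation":0}]',
    "sparrow-parse",
    options=["mlx", "mlx-community/Qwen2.5-VL-72B-Instruct-4bit"],
    file_path="data/bonds_table.png",
)
# shlex.join renders a copy-pasteable, correctly quoted command line.
print(shlex.join(command))
```

Passing the list to `subprocess.run(command, check=True)` from the sparrow/sparrow-ml/llm directory would execute it without going through a shell.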

# MLX Backend (Apple Silicon)

./sparrow.sh '[{"instrument_name":"str", "valuation":0}]' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "data/bonds_table.png"

# Hugging Face Cloud GPU

--options huggingface --options your-space/model-name

# Additional flags

--options tables_only # Extract only tables

--options validation_off # Disable schema validation

--options apply_annotation # Include bounding boxes

--page-type financial_table # Classify page type

# Instruction-based processing

./sparrow.sh "instruction: do arithmetic operation, payload: 2+2=" \

--pipeline "sparrow-instructor" \

--options mlx \

--options lmstudio-community/Mistral-Small-3.2-24B-Instruct-2506-8bit

# Multi-page PDF with page classification

./sparrow.sh "*" \

--page-type invoice \

--page-type table \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "multi_page.pdf"

# Handle missing fields with null values

./sparrow.sh '[{"required_field":"str", "optional_field":"str or null"}]' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--file-path "document.png"

# Table extraction with cropping

./sparrow.sh '*' \

--pipeline "sparrow-parse" \

--options mlx \

--options mlx-community/Qwen2.5-VL-72B-Instruct-4bit \

--options tables_only \

--crop-size 100 \

--file-path "scan.pdf"

# Instruction execution

./sparrow.sh "check if business entity Chapman, Kim and Green is invoice issuing party" \

--pipeline "sparrow-parse" \

--instruction \

--options mlx --options lmstudio-community/Mistral-Small-3.2-24B-Instruct-2506-8bit \

--file-path "invoice_1.jpg"

# Field validation

./sparrow.sh "tax_id,shipment_code,total_gross_worth" \

--pipeline "sparrow-parse" \

--validation \

--options mlx --options lmstudio-community/Mistral-Small-3.2-24B-Instruct-2506-8bit \

--file-path "invoice_1.jpg"

{

"tax_id": true,

"shipment_code": false,

"total_gross_worth": true

}

# Default port (8002)

python api.py

# Custom port

python api.py --port 8001

# Multiple instances

python api.py --port 8002 & # Sparrow Parse

python api.py --port 8003 & # Instructor

curl -X POST 'http://localhost:8002/api/v1/sparrow-llm/inference' \

-H 'Content-Type: multipart/form-data' \

-F 'query=[{"field_name":"str", "amount":0}]' \

-F 'pipeline=sparrow-parse' \

-F 'options=mlx,mlx-community/Qwen2.5-VL-72B-Instruct-4bit' \

-F '[email protected]'

curl -X POST 'http://localhost:8002/api/v1/sparrow-llm/instruction-inference' \

-H 'Content-Type: application/x-www-form-urlencoded' \

-d 'query=instruction: analyze data, payload: {...}' \

-d 'pipeline=sparrow-instructor' \

-d 'options=mlx,mlx-community/Qwen2.5-VL-72B-Instruct-4bit'

Visit http://localhost:8002/api/v1/sparrow-llm/docs for interactive Swagger documentation.
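The instruction-inference call above can also be prepared from Python with the standard library alone. This is a minimal sketch that mirrors the curl example; `build_instruction_request` is a hypothetical helper, and the endpoint path and form fields (`query`, `pipeline`, `options`) are taken from that curl command rather than from a verified API reference. The request is only built here, not sent, since sending needs a running server.

```python
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Base URL as used in the curl examples above (default port 8002).
BASE_URL = "http://localhost:8002/api/v1/sparrow-llm"


# Hypothetical helper, not part of Sparrow.
def build_instruction_request(query, pipeline, options):
    """Build a form-encoded POST request for the instruction-inference endpoint."""
    body = urlencode({
        "query": query,
        "pipeline": pipeline,
        "options": ",".join(options),  # the curl example passes options comma-separated
    }).encode("utf-8")
    return Request(
        f"{BASE_URL}/instruction-inference",
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


request = build_instruction_request(
    "instruction: do arithmetic operation, payload: 2+2=",
    "sparrow-instructor",
    ["mlx", "mlx-community/Qwen2.5-VL-72B-Instruct-4bit"],
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))

# With the API server running, the request could be sent like this:
# with urlopen(request, timeout=600) as response:
#     print(response.read().decode("utf-8"))
```

`urlencode` takes care of escaping spaces, colons and the `=` in the payload, which is easy to get wrong when building the body by hand.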

Orchestrate complex document processing workflows with visual monitoring powered by Prefect.

- Multi-step Workflows: Chain classification, extraction, and validation

- Visual Monitoring: Real-time pipeline tracking

- Error Handling: Robust failure recovery

- Extensible: Custom agents for specific use cases

# Start agent server

cd sparrow-ml/agents

python api.py --port 8001

# Process medical prescriptions

curl -X POST 'http://localhost:8001/api/v1/sparrow-agents/execute/file' \

-F 'agent_name=medical_prescriptions' \

-F 'extraction_params={"sparrow_key":"123456"}' \

-F '[email protected]'

Built-in analytics and monitoring dashboard at sparrow.katanaml.io. It is part of Sparrow UI and requires a local Oracle Database 23ai Free instance.

- Usage Analytics: Track API calls, success rates, performance

- Geographic Distribution: See usage by country

- Model Performance: Compare different model performance

- Real-time Monitoring: Live processing statistics

| Feature | Sparrow Parse | Sparrow Instructor | Sparrow Agents |

|---|---|---|---|

| Input | Documents + JSON schema | Text instructions | Complex workflows |

| Output | Structured JSON | Free-form text | Multi-step results |

| Use Cases | Data extraction, forms | Summarization, analysis | Enterprise workflows |

| Validation | Schema-based | Manual | Custom rules |

| Complexity | Simple | Medium | High |

| Best For | Invoices, tables, forms | Text processing | Multi-document flows |

Sparrow Parse: Use for structured data extraction from documents

Sparrow Instructor: Use for text analysis, summarization, Q&A

Sparrow Agents: Use for complex multi-step document processing workflows

Apple Silicon (MLX)

- ✅ Best performance with unified memory

- ✅ Models: Mistral-Small-3.2-24B, Qwen2.5-VL-72B

⚠️ Requires macOS with Apple Silicon

NVIDIA GPU

- ✅ Use Ollama backends

- ✅ Recommended: Nvidia DGX Spark with 12GB+ VRAM or AMD GPU

⚠️ Requires CUDA setup

CPU Only

⚠️ Significantly slower

- ✅ Use smaller models (7B parameters max)

- ✅ Consider Hugging Face cloud backend

# Reduce memory usage

--crop-size 100 # Crop large images

--options tables_only # Process only tables

# For large PDFs

--debug-dir ./temp # Monitor processing

# Split large PDFs manually if needed

| Use Case | Recommended Model | Memory | Speed |

|---|---|---|---|

| Forms/Invoices | Mistral-Small-3.2-24B | 35GB | Fast |

| Complex Tables | Qwen2.5-VL-72B | 50GB | Slower |

| Quick Testing | Qwen2.5-VL-7B | 20GB | Fastest |
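The table can be turned into a small lookup when a script has to pick a model for a given machine. The sketch below copies the three rows above; `models_that_fit` is a hypothetical helper, and treating the Memory column as a hard upper bound is an assumption made for illustration.

```python
# Rows copied from the table above: (use case, model, memory in GB).
MODELS = [
    ("Forms/Invoices", "Mistral-Small-3.2-24B", 35),
    ("Complex Tables", "Qwen2.5-VL-72B", 50),
    ("Quick Testing", "Qwen2.5-VL-7B", 20),
]


# Hypothetical helper, not part of Sparrow.
def models_that_fit(available_gb):
    """Return table rows whose listed memory fits in available_gb, largest first."""
    fitting = [row for row in MODELS if row[2] <= available_gb]
    return sorted(fitting, key=lambda row: row[2], reverse=True)


for use_case, model, memory_gb in models_that_fit(36):
    print(f"{model} ({use_case}, {memory_gb}GB)")
```

With 36GB available, the 72B model is filtered out and the two smaller models remain, largest first.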

🚫 Installation Problems

Python Version Issues:

# Verify Python version

python --version # Should be 3.12.10+

# Fix with pyenv

pyenv install 3.12.10

pyenv global 3.12.10

MLX Installation (Apple Silicon):

# If MLX fails to install

pip install --upgrade pip

pip install mlx-vlm --no-cache-dir

# If pip install command throws AttributeError: 'NoneType' object has no attribute 'get'

# POTENTIAL SECURITY RISK - SSL verification is bypassed. Apply if you know what you are doing

pip install mlx-vlm --trusted-host pypi.org --trusted-host pypi.python.org --trusted-host files.pythonhosted.org

Poppler Missing:

# macOS

brew install poppler

# Ubuntu/Debian

sudo apt-get install poppler-utils

# Verify installation

pdftoppm -h

🔧 Runtime Issues

Memory Errors:

- Use smaller models (7B instead of 72B)

- Enable image cropping: --crop-size 100

- Process single pages instead of entire PDFs
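When pages are processed one at a time, the results can be merged afterwards. The sketch below assumes each result has the per-page shape shown in the multi-page example earlier in this README (`table`, `valid` and `page` keys); `merge_page_tables` is a hypothetical helper, not part of Sparrow.

```python
# Hypothetical helper, not part of Sparrow.
def merge_page_tables(pages):
    """Flatten per-page results into one list of rows tagged with their page."""
    rows = []
    for page in sorted(pages, key=lambda p: p["page"]):
        # The examples in this README report validity as the string "true".
        if page.get("valid") != "true":
            continue
        for row in page.get("table", []):
            rows.append({**row, "page": page["page"]})
    return rows


pages = [
    {
        "table": [{"description": "Operating expenses", "latest_amount": 9157, "previous_amount": 8822}],
        "valid": "true",
        "page": 2,
    },
    {
        "table": [{"description": "Revenues", "latest_amount": 12453, "previous_amount": 11445}],
        "valid": "true",
        "page": 1,
    },
]
for row in merge_page_tables(pages):
    print(row["page"], row["description"], row["latest_amount"])
```

Pages that did not pass validation are skipped rather than merged, so a failed page can be retried on its own.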

Model Loading Fails:

# Clear model cache

rm -rf ~/.cache/huggingface/

rm -rf ~/.mlx/

# Redownload models

python -c "from mlx_vlm import load; load('model-name')"

API Connection Issues:

# Check if server is running

curl http://localhost:8002/health

# Check logs

python api.py --debug

📄 Document Processing Issues

Poor Extraction Quality:

- Try image cropping: --crop-size 60

- Use --options tables_only for table documents

- Ensure image resolution is adequate (300+ DPI)

- Use schema validation: avoid --options validation_off
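One inexpensive way to spot a poor extraction is an arithmetic cross-check on the returned JSON. The sketch below uses the line-item and summary values from the invoice example earlier in this README and checks that the items add up to the summary totals. `totals_match` is a hypothetical helper and the field names follow that example output; other documents may use different keys.

```python
from decimal import Decimal


# Hypothetical helper, not part of Sparrow.
def totals_match(invoice):
    """Compare summed line items with the invoice summary block."""
    items = invoice["items"]
    # Decimal(str(...)) avoids binary floating-point rounding in the sums.
    net = sum(Decimal(str(item["net_worth"])) for item in items)
    gross = sum(Decimal(str(item["gross_worth"])) for item in items)
    summary = invoice["summary"]
    return (net == Decimal(str(summary["total_net_worth"]))
            and gross == Decimal(str(summary["total_gross_worth"])))


# Values copied from the invoice example output.
invoice = {
    "items": [
        {"net_worth": 60.0, "gross_worth": 66.0},
        {"net_worth": 112.32, "gross_worth": 123.55},
        {"net_worth": 7.5, "gross_worth": 8.25},
        {"net_worth": 12.99, "gross_worth": 14.29},
    ],
    "summary": {"total_net_worth": 192.81, "total_gross_worth": 212.09},
}
print(totals_match(invoice))  # prints True
```

A mismatch does not say which field is wrong, but it is a useful signal to retry with a different crop size or model.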

PDF Processing Fails:

# Test PDF manually

pdftoppm -png input.pdf output

# Check page count

python -c "

import pypdf

with open('file.pdf', 'rb') as f:

    reader = pypdf.PdfReader(f)

    print(f'Pages: {len(reader.pages)}')

"

JSON Schema Errors:

- Validate JSON syntax: Use jsonlint.com

- Use proper field types: "str", 0, 0.0, "str or null"

- Test with simple schema first

- 📖 Check Documentation: Review this README and component docs

- 🐛 Search Issues: GitHub Issues

- 💬 Create Issue: Provide logs, system info, minimal example

- 📧 Commercial Support: [email protected]

Open Source: Licensed under GPL 3.0. Free for open source projects and organizations under $5M revenue.

Commercial: Dual licensing available for proprietary use, enterprise features, and dedicated support.

Contact: [email protected] for commercial licensing and consulting.

- Katana ML - AI/ML consulting and solutions

- Andrej Baranovskij - Lead developer

⭐ Star us on GitHub if Sparrow is useful for your projects!

github.com/katanaml/sparrow


Alternative AI tools for sparrow

Similar Open Source Tools


Bindu

Bindu is an operating layer for AI agents that provides identity, communication, and payment capabilities. It delivers a production-ready service with a convenient API to connect, authenticate, and orchestrate agents across distributed systems using open protocols: A2A, AP2, and X402. Built with a distributed architecture, Bindu makes it fast to develop and easy to integrate with any AI framework. Transform any agent framework into a fully interoperable service for communication, collaboration, and commerce in the Internet of Agents.

superagent

Superagent is an open-source AI assistant framework and API that allows developers to add powerful AI assistants to their applications. These assistants use large language models (LLMs), retrieval augmented generation (RAG), and generative AI to help users with a variety of tasks, including question answering, chatbot development, content generation, data aggregation, and workflow automation. Superagent is backed by Y Combinator and is part of YC W24.

python-utcp

The Universal Tool Calling Protocol (UTCP) is a secure and scalable standard for defining and interacting with tools across various communication protocols. UTCP emphasizes scalability, extensibility, interoperability, and ease of use. It offers a modular core with a plugin-based architecture, making it extensible, testable, and easy to package. The repository contains the complete UTCP Python implementation with core components and protocol-specific plugins for HTTP, CLI, Model Context Protocol, file-based tools, and more.

firecrawl

Firecrawl is an API service that empowers AI applications with clean data from any website. It features advanced scraping, crawling, and data extraction capabilities. The repository is still in development, integrating custom modules into the mono repo. Users can run it locally but it's not fully ready for self-hosted deployment yet. Firecrawl offers powerful capabilities like scraping, crawling, mapping, searching, and extracting structured data from single pages, multiple pages, or entire websites with AI. It supports various formats, actions, and batch scraping. The tool is designed to handle proxies, anti-bot mechanisms, dynamic content, media parsing, change tracking, and more. Firecrawl is available as an open-source project under the AGPL-3.0 license, with additional features offered in the cloud version.

mlx-vlm

MLX-VLM is a package designed for running Vision LLMs on Mac systems using MLX. It provides a convenient way to install and utilize the package for processing large language models related to vision tasks. The tool simplifies the process of running LLMs on Mac computers, offering a seamless experience for users interested in leveraging MLX for vision-related projects.

jambo

Jambo is a Python package that automatically converts JSON Schema definitions into Pydantic models. It streamlines schema validation and enforces type safety using Pydantic's validation features. The tool supports various JSON Schema features like strings, integers, floats, booleans, arrays, nested objects, and more. It enforces constraints such as minLength, maxLength, pattern, minimum, maximum, uniqueItems, and provides a zero-config approach for generating models. Jambo is designed to simplify the process of dynamically generating Pydantic models for AI frameworks.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

step-free-api

The StepChat Free service provides high-speed streaming output, multi-turn dialogue support, online search support, long document interpretation, and image parsing. It offers zero-configuration deployment, multi-token support, and automatic session trace cleaning. It is fully compatible with the ChatGPT interface. Additionally, it provides seven other free APIs for various services. The repository includes a disclaimer about using reverse APIs and encourages users to avoid commercial use to prevent service pressure on the official platform. It offers online testing links, showcases different demos, and provides deployment guides for Docker, Docker-compose, Render, Vercel, and native deployments. The repository also includes information on using multiple accounts, optimizing Nginx reverse proxy, and checking the liveliness of refresh tokens.

z-ai-sdk-python

Z.ai Open Platform Python SDK is the official Python SDK for Z.ai's large model open interface, providing developers with easy access to Z.ai's open APIs. The SDK offers core features like chat completions, embeddings, video generation, audio processing, assistant API, and advanced tools. It supports various functionalities such as speech transcription, text-to-video generation, image understanding, and structured conversation handling. Developers can customize client behavior, configure API keys, and handle errors efficiently. The SDK is designed to simplify AI interactions and enhance AI capabilities for developers.

vlmrun-hub

VLM Run Hub is a repository of pre-defined Pydantic schemas for extracting structured data from unstructured visual content such as images, videos, and documents. The schemas are designed to be used with Vision Language Models (VLMs) and cover a range of industry-specific document types.

ramalama

The Ramalama project simplifies working with AI by utilizing OCI containers. It automatically detects GPU support, pulls necessary software in a container, and runs AI models. Users can list, pull, run, and serve models easily. The tool aims to support various GPUs and platforms in the future, making AI setup hassle-free.

nexus

Nexus is a tool that acts as a unified gateway for multiple LLM providers and MCP servers. It allows users to aggregate, govern, and control their AI stack by connecting multiple servers and providers through a single endpoint. Nexus provides features like MCP Server Aggregation, LLM Provider Routing, Context-Aware Tool Search, Protocol Support, Flexible Configuration, Security features, Rate Limiting, and Docker readiness. It supports tool calling, tool discovery, and error handling for STDIO servers. Nexus also integrates with AI assistants, Cursor, Claude Code, and LangChain for seamless usage.

mcp-hub

MCP Hub is a centralized manager for Model Context Protocol (MCP) servers, offering dynamic server management and monitoring, REST API for tool execution and resource access, MCP Server marketplace integration, real-time server status tracking, client connection management, and process lifecycle handling. It acts as a central management server connecting to and managing multiple MCP servers, providing unified API endpoints for client access, handling server lifecycle and health monitoring, and routing requests between clients and MCP servers.

exstruct

ExStruct is an Excel structured extraction engine that reads Excel workbooks and outputs structured data as JSON, including cells, table candidates, shapes, charts, smartart, merged cell ranges, print areas/views, auto page-break areas, and hyperlinks. It offers different output modes, formula map extraction, table detection tuning, CLI rendering options, and graceful fallback in case Excel COM is unavailable. The tool is designed to fit LLM/RAG pipelines and provides benchmark reports for accuracy and utility. It supports various formats like JSON, YAML, and TOON, with optional extras for rendering and full extraction targeting Windows + Excel environments.

headroom

Headroom is a tool designed to optimize the context layer for Large Language Models (LLMs) applications by compressing redundant boilerplate outputs. It intercepts context from tool outputs, logs, search results, and intermediate agent steps, stabilizes dynamic content like timestamps and UUIDs, removes low-signal content, and preserves original data for retrieval only when needed by the LLM. It ensures provider caching works efficiently by aligning prompts for cache hits. The tool works as a transparent proxy with zero code changes, offering significant savings in token count and enabling reversible compression for various types of content like code, logs, JSON, and images. Headroom integrates seamlessly with frameworks like LangChain, Agno, and MCP, supporting features like memory, retrievers, agents, and more.

For similar tasks

skyvern

Skyvern automates browser-based workflows using LLMs and computer vision. It provides a simple API endpoint to fully automate manual workflows, replacing brittle or unreliable automation solutions. Traditional approaches to browser automation required writing custom scripts for websites, often relying on DOM parsing and XPath-based interactions, which would break whenever the website layout changed. Instead of relying only on code-defined XPath interactions, Skyvern adds computer vision and LLMs to the mix to parse items in the viewport in real time, create a plan for interaction, and interact with them. This approach gives us a few advantages:

1. Skyvern can operate on websites it has never seen before, as it is able to map visual elements to the actions necessary to complete a workflow, without any customized code.
2. Skyvern is resistant to website layout changes, as there are no predetermined XPaths or other selectors our system is looking for while trying to navigate.
3. Skyvern leverages LLMs to reason through interactions so that it can cover complex situations. Examples include:
   1. If you wanted to get an auto insurance quote from Geico, the answer to a common question, “Were you eligible to drive at 18?”, could be inferred from the driver receiving their license at age 16.
   2. If you were doing competitor analysis, it understands that an Arnold Palmer 22 oz can at 7/11 is almost definitely the same product as a 23 oz can at Gopuff (even though the sizes are slightly different, which could be a rounding error).

airbyte-connectors

This repository contains Airbyte connectors used in Faros and Faros Community Edition platforms as well as Airbyte Connector Development Kit (CDK) for JavaScript/TypeScript.

open-parse

Open Parse is a Python library for visually discerning document layouts and chunking them effectively. It is designed to fill the gap in open-source libraries for handling complex documents. Unlike text splitting, which converts a file to raw text and slices it up, Open Parse visually analyzes documents for superior LLM input. It also supports basic markdown for parsing headings, bold, and italics, and has high-precision table support, extracting tables into clean Markdown formats with accuracy that surpasses traditional tools. Open Parse is extensible, allowing users to easily implement their own post-processing steps. It is also intuitive, with great editor support and completion everywhere, making it easy to use and learn.
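The text-splitting approach that Open Parse is contrasted with can be sketched in a few lines. This is a minimal illustration of naive fixed-size slicing and does not use Open Parse itself. The `split_fixed` helper and the sample table are hypothetical.

```python
def split_fixed(text, size):
    """Naive splitter: cut raw text every `size` characters, ignoring layout."""
    return [text[i:i + size] for i in range(0, len(text), size)]


# A small table flattened to raw text, as a plain text extractor might emit it.
doc = (
    "Item | Qty | Price\n"
    "Widget | 2 | 9.99\n"
    "Gadget | 1 | 24.50\n"
)

chunks = split_fixed(doc, 30)
for chunk in chunks:
    print(repr(chunk))
```

The first chunk ends in the middle of the second table row, so the row's price lands in a different chunk from its item name. A layout-aware chunker avoids this kind of cut by treating the table as a single unit.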

unstract

Unstract is a no-code platform that enables users to launch APIs and ETL pipelines to structure unstructured documents. With Unstract, users can go beyond co-pilots by enabling machine-to-machine automation. Unstract's Prompt Studio provides a simple, no-code approach to creating prompts for LLMs, vector databases, embedding models, and text extractors. Users can then configure Prompt Studio projects as API deployments or ETL pipelines to automate critical business processes that involve complex documents. Unstract supports a wide range of LLM providers, vector databases, embeddings, text extractors, ETL sources, and ETL destinations, providing users with the flexibility to choose the best tools for their needs.

Dot

Dot is a standalone, open-source application designed for seamless interaction with documents and files using local LLMs and Retrieval Augmented Generation (RAG). It is inspired by solutions like Nvidia's Chat with RTX, providing a user-friendly interface for those without a programming background. Pre-packaged with Mistral 7B, Dot ensures accessibility and simplicity right out of the box. Dot allows you to load multiple documents into an LLM and interact with them in a fully local environment. Supported document types include PDF, DOCX, PPTX, XLSX, and Markdown. Users can also engage with Big Dot for inquiries not directly related to their documents, similar to interacting with ChatGPT. Built with Electron JS, Dot encapsulates a comprehensive Python environment that includes all necessary libraries. The application leverages libraries such as FAISS for creating local vector stores, Langchain, llama.cpp & Huggingface for setting up conversation chains, and additional tools for document management and interaction.

instructor

Instructor is a Python library that makes it a breeze to work with structured outputs from large language models (LLMs). Built on top of Pydantic, it provides a simple, transparent, and user-friendly API to manage validation, retries, and streaming responses. Get ready to supercharge your LLM workflows!
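The validate-and-retry pattern described above can be sketched with the standard library alone. This is a minimal illustration under stated assumptions and does not use Instructor's or Pydantic's API: the `Invoice` schema, `parse_invoice`, `extract_with_retries`, and the `fake_model` stand-in are all hypothetical.

```python
import json
from dataclasses import dataclass


@dataclass
class Invoice:
    # Hypothetical target schema for the structured output.
    number: str
    total: float


def parse_invoice(raw):
    """Validate raw model output; raise ValueError describing the problem."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if not isinstance(data.get("number"), str):
        raise ValueError("'number' must be a string")
    total = data.get("total")
    if isinstance(total, bool) or not isinstance(total, (int, float)):
        raise ValueError("'total' must be a number")
    return Invoice(number=data["number"], total=float(total))


def extract_with_retries(call_model, prompt, max_retries=2):
    """Call the model, validate the answer, and re-ask with the error on failure."""
    feedback = ""
    for _ in range(max_retries + 1):
        raw = call_model(prompt + feedback)
        try:
            return parse_invoice(raw)
        except ValueError as exc:
            feedback = f"\nPrevious answer was rejected: {exc}. Return corrected JSON."
    raise RuntimeError("model output never passed validation")


def fake_model(prompt):
    # Stand-in for an LLM call: answers badly first, then correctly after feedback.
    if "rejected" in prompt:
        return '{"number": "INV-7", "total": 120.5}'
    return '{"number": "INV-7", "total": "a lot"}'


invoice = extract_with_retries(fake_model, "Extract the invoice as JSON.")
```

Feeding the validation error back into the next prompt is the design choice that makes the retry useful: the model sees why its answer was rejected rather than being asked the same question again.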

sparrow

Sparrow is an open-source solution for efficient data extraction and processing from various documents and images. It handles forms, invoices, receipts, and other unstructured data sources. Sparrow has a modular architecture, offering independent services and pipelines optimized for robust performance. One of Sparrow's critical features is its pluggable architecture: you can integrate and run data extraction pipelines using tools and frameworks like LlamaIndex, Haystack, or Unstructured. Sparrow enables local LLM data extraction pipelines through Ollama or Apple MLX. Sparrow also provides an API that helps process and transform your data into structured output, ready to be integrated with custom workflows. With Sparrow Agents, you can build independent LLM agents and use the API to invoke them from your system.

**List of available agents:**

* **llamaindex** - RAG pipeline with LlamaIndex for PDF processing
* **vllamaindex** - RAG pipeline with LlamaIndex multimodal for image processing
* **vprocessor** - RAG pipeline with OCR and LlamaIndex for image processing
* **haystack** - RAG pipeline with Haystack for PDF processing
* **fcall** - Function call pipeline
* **unstructured-light** - RAG pipeline with Unstructured and LangChain, supports PDF and image processing
* **unstructured** - RAG pipeline with Weaviate vector DB query, Unstructured and LangChain, supports PDF and image processing
* **instructor** - RAG pipeline with Unstructured and Instructor libraries, supports PDF and image processing. Works great for JSON response generation

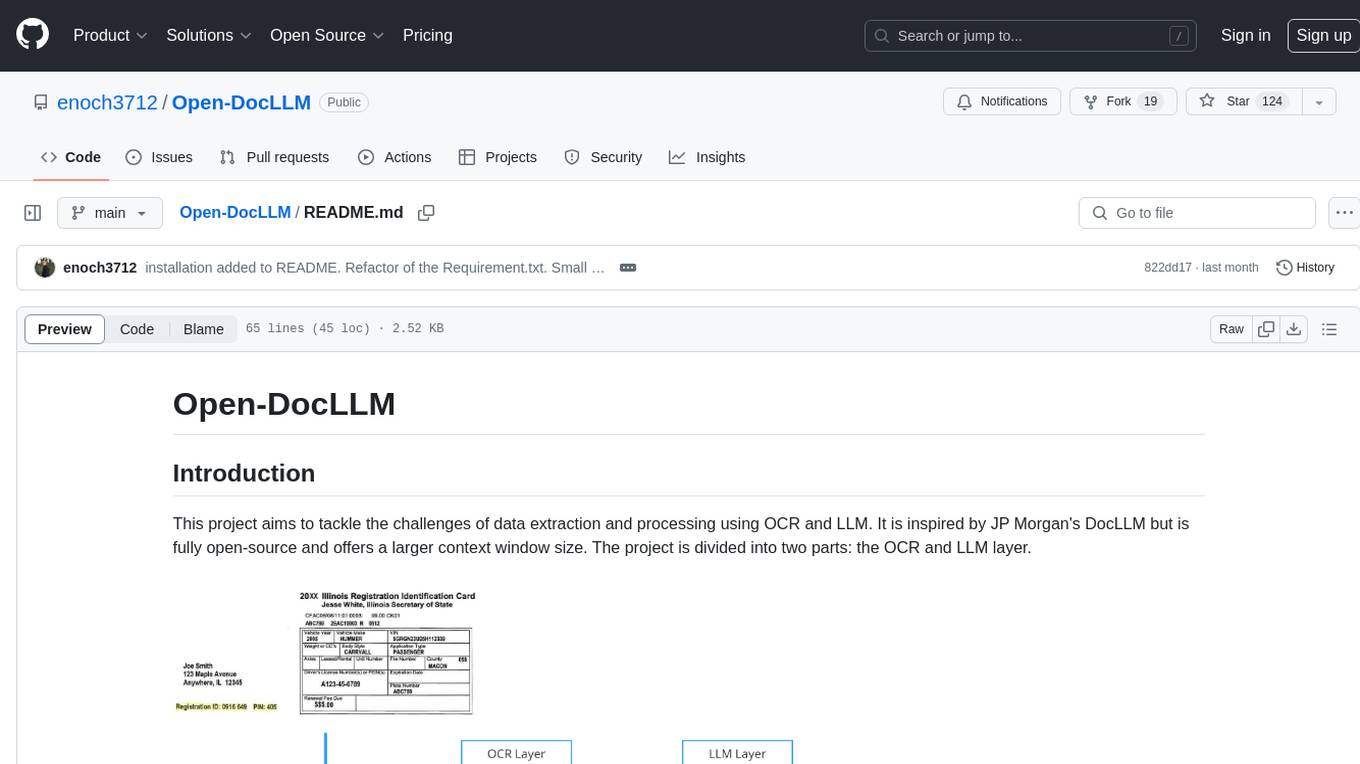

Open-DocLLM

Open-DocLLM is an open-source project that addresses data extraction and processing challenges using OCR and LLM technologies. It consists of two main layers: OCR for reading document content and LLM for extracting specific content in a structured manner. The project offers a larger context window size compared to JP Morgan's DocLLM and integrates tools like Tesseract OCR and Mistral for efficient data analysis. Users can run the models on-premises using LLM studio or Ollama, and the project includes a FastAPI app for testing purposes.

For similar jobs

crawlee

Crawlee is a web scraping and browser automation library that helps you build reliable scrapers quickly. Your crawlers will appear human-like and fly under the radar of modern bot protections even with the default configuration. Crawlee gives you the tools to crawl the web for links, scrape data, and store it to disk or cloud while staying configurable to suit your project's needs.

open-parse

Open Parse is a Python library for visually discerning document layouts and chunking them effectively. It is designed to fill the gap in open-source libraries for handling complex documents. Unlike text splitting, which converts a file to raw text and slices it up, Open Parse visually analyzes documents for superior LLM input. It also supports basic markdown for parsing headings, bold, and italics, and has high-precision table support, extracting tables into clean Markdown formats with accuracy that surpasses traditional tools. Open Parse is extensible, allowing users to easily implement their own post-processing steps. It is also intuitive, with great editor support and completion everywhere, making it easy to use and learn.
