rust-snake-ai-ratatui

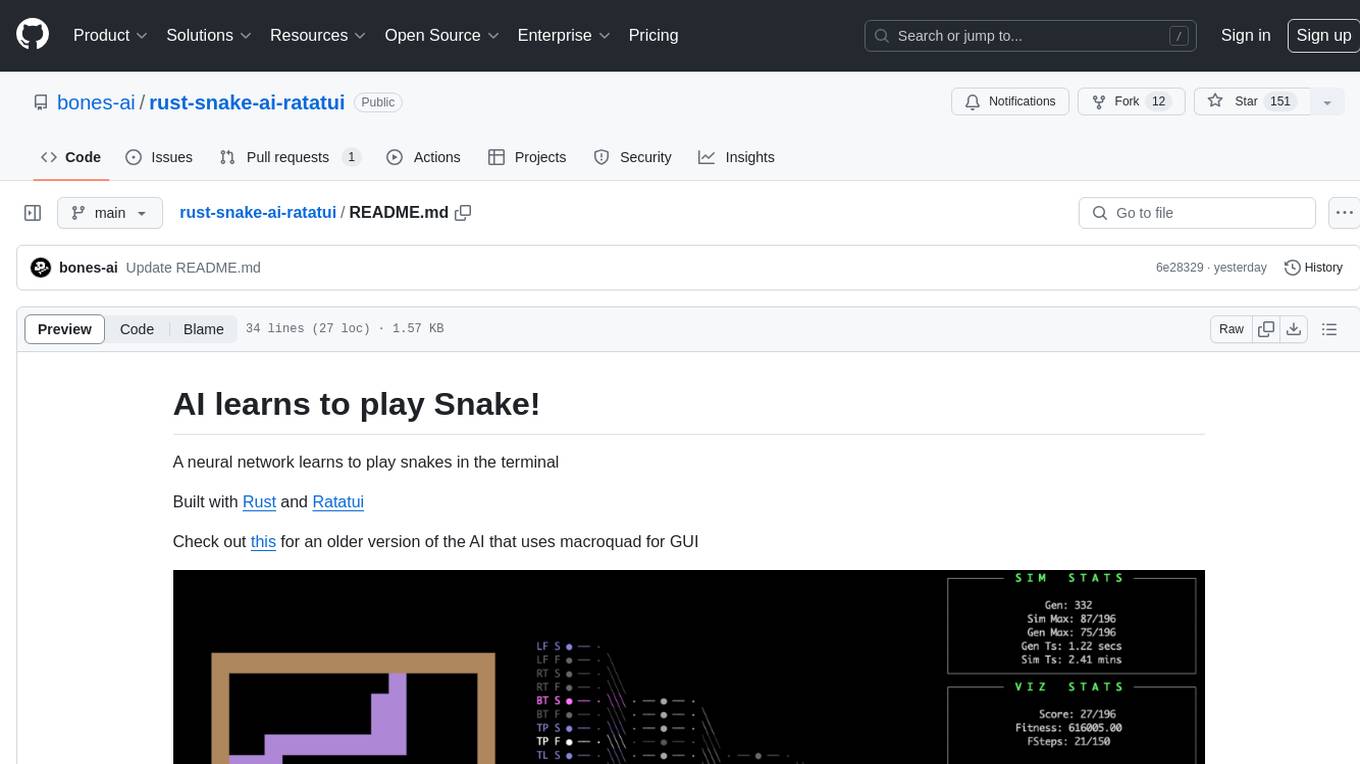

Neural network learns to play snake in a terminal, built in Rust with Ratatui

Stars: 331

This repository contains an AI implementation that learns to play the classic game Snake in the terminal. The AI is built using Rust and Ratatui. Users can clone the repo, run the simulation, and configure various settings to customize the AI's behavior. The project also provides options for minimal UI, training custom networks, and watching the AI complete the game on different board sizes. The developer shares updates and insights about the project on Twitter and plans to create a detailed blog post explaining the AI's workings.

README:

A neural network learns to play snakes in the terminal

It was built from scratch using Rust and Ratatui

Check out this for an older version of the AI that uses macroquad for GUI

- Clone the repo

git clone [email protected]:bones-ai/rust-snake-ai-ratatui.git

cd rust-snake-ai-ratatui- Run the simulation

cargo run --release- The project configs file is located at

src/configs.rs - Update

IS_LOW_DETAIL_MODEfor a minimal UI, it runs faster - Set

IS_SAVE_BEST_NETto train your own network. Networks are saved indata/net.json, once saved you can use the trained network by settingIS_LOAD_SAVED_NET - Use

data/net-100.jsonto watch the AI complete the game for 15x15 board size - Set

USE_GAME_CANVASto true and updateVIZ_GAME_SCALEto scale the game if needed.

Here are some excellent resources to learn more about genetic algorithms:

- Video Series: Genetic Algorithm by The Coding Train.

- Book: Nature of Code by Daniel Shiffman for those who prefer reading.

- I highly recommend checking out Joshka's fork for more idiomatic Rust code.

-

Initialization:

- The simulation begins at

Generation 0. - A new population of snakes is created, each with a neural network initialized with random weights and biases.

- The simulation begins at

-

Game Update:

- Each step, every game is updated by passing vision inputs to the neural network to decide the snake's action.

- A game is flagged as complete if:

- The snake collides with walls or itself.

- The snake fails to eat food within a certain number of steps, preventing indefinite looping.

-

Generation Completion:

- The generation continues updating each game until all games are complete.

-

Fitness Evaluation:

- At the end of each generation, snakes are ranked based on their performance.

-

Parent Selection:

- Parents for the next generation are chosen based on rankings. Higher-ranked snakes have a higher probability of being selected as parents.

-

Reproduction:

- Techniques such as roulette wheel selection, elitism, and other methods are used to generate children for the next generation.

-

New Generation:

- A new population is created, and the process repeats from step 2 until the simulation is manually stopped.

This iterative process leads to snakes fine-tuning their strategies, resulting in longer snakes over time.

- I mostly post about my projects on - Twitter - @BonesaiDev

- Video of snake completing the game

- Check out my other projects on github https://github.com/bones-ai

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for rust-snake-ai-ratatui

Similar Open Source Tools

rust-snake-ai-ratatui

This repository contains an AI implementation that learns to play the classic game Snake in the terminal. The AI is built using Rust and Ratatui. Users can clone the repo, run the simulation, and configure various settings to customize the AI's behavior. The project also provides options for minimal UI, training custom networks, and watching the AI complete the game on different board sizes. The developer shares updates and insights about the project on Twitter and plans to create a detailed blog post explaining the AI's workings.

among-llms

Among LLMs is a terminal-based chatroom game where you are the only human among AI agents trying to determine and eliminate you through voting. Your goal is to stay hidden, manipulate conversations, and turn the bots against each other using various tactics like editing messages, sending whispers, and gaslighting. The game offers dynamic scenarios, personas, and backstories, customizable agent count, private messaging, voting mechanism, and infinite replayability. It is written in Python and provides an immersive and unpredictable experience for players.

EdgeChains

EdgeChains is an open-source chain-of-thought engineering framework tailored for Large Language Models (LLMs)- like OpenAI GPT, LLama2, Falcon, etc. - With a focus on enterprise-grade deployability and scalability. EdgeChains is specifically designed to **orchestrate** such applications. At EdgeChains, we take a unique approach to Generative AI - we think Generative AI is a deployment and configuration management challenge rather than a UI and library design pattern challenge. We build on top of a tech that has solved this problem in a different domain - Kubernetes Config Management - and bring that to Generative AI. Edgechains is built on top of jsonnet, originally built by Google based on their experience managing a vast amount of configuration code in the Borg infrastructure.

tank-royale

Robocode Tank Royale is a programming game where the goal is to code a bot in the form of a virtual tank to compete against other bots in a virtual battle arena. The player is the programmer of a bot, who will have no direct influence on the game him/herself. Instead, the player must write a program with the logic for the brain of the bot. The program contains instructions to the bot about how it should move, scan for opponent bots, fire its gun, and how it should react to various events occurring during a battle. The name **Robocode** is short for "Robot code," which originates from the original/first version of the game. **Robocode Tank Royale** is the next evolution/version of the game, where bots can participate via the Internet/network. All bots run over a web socket. The game aims to help you learn how to program and improve your programming skills, and have fun while doing it. Robocode is also useful when studying or improving machine learning in a fast-running real-time game. Robocode's battles take place on a "battlefield," where bots fight it out until only one is left, like a Battle Royale game. Hence the name **Tank Royale**. Note that Robocode contains no gore, blood, people, and politics. The battles are simply for the excitement of the competition we appreciate so much.

ai_rpg

AI RPG is a Node.js application that transforms an OpenAI-compatible language model into a solo tabletop game master. It tracks players, locations, regions, and items; renders structured prompts with Nunjucks; and supports ComfyUI image pipeline. The tool offers a rich region and location generation system, NPC and item tracking, party management, levels, classes, stats, skills, abilities, and more. It allows users to create their own world settings, offers detailed NPC memory system, skill checks with real RNG, modifiable needs system, and under-the-hood disposition system. The tool also provides event processing, context compression, image uploading, and support for loading SillyTavern lorebooks.

llm.c

LLM training in simple, pure C/CUDA. There is no need for 245MB of PyTorch or 107MB of cPython. For example, training GPT-2 (CPU, fp32) is ~1,000 lines of clean code in a single file. It compiles and runs instantly, and exactly matches the PyTorch reference implementation. I chose GPT-2 as the first working example because it is the grand-daddy of LLMs, the first time the modern stack was put together.

CodeProject.AI-Server

CodeProject.AI Server is a standalone, self-hosted, fast, free, and open-source Artificial Intelligence microserver designed for any platform and language. It can be installed locally without the need for off-device or out-of-network data transfer, providing an easy-to-use solution for developers interested in AI programming. The server includes a HTTP REST API server, backend analysis services, and the source code, enabling users to perform various AI tasks locally without relying on external services or cloud computing. Current capabilities include object detection, face detection, scene recognition, sentiment analysis, and more, with ongoing feature expansions planned. The project aims to promote AI development, simplify AI implementation, focus on core use-cases, and leverage the expertise of the developer community.

AI-Scientist

The AI Scientist is a comprehensive system for fully automatic scientific discovery, enabling Foundation Models to perform research independently. It aims to tackle the grand challenge of developing agents capable of conducting scientific research and discovering new knowledge. The tool generates papers on various topics using Large Language Models (LLMs) and provides a platform for exploring new research ideas. Users can create their own templates for specific areas of study and run experiments to generate papers. However, caution is advised as the codebase executes LLM-written code, which may pose risks such as the use of potentially dangerous packages and web access.

tetris-ai

A bot that plays Tetris using deep reinforcement learning. The agent learns to play by training itself with a neural network and Q Learning algorithm. It explores different 'paths' to achieve higher scores and makes decisions based on predicted scores for possible moves. The game state includes attributes like lines cleared, holes, bumpiness, and total height. The agent is implemented in Python using Keras framework with a deep neural network structure. Training involves a replay queue, random sampling, and optimization techniques. Results show the agent's progress in achieving higher scores over episodes.

OpenDAN-Personal-AI-OS

OpenDAN is an open source Personal AI OS that consolidates various AI modules for personal use. It empowers users to create powerful AI agents like assistants, tutors, and companions. The OS allows agents to collaborate, integrate with services, and control smart devices. OpenDAN offers features like rapid installation, AI agent customization, connectivity via Telegram/Email, building a local knowledge base, distributed AI computing, and more. It aims to simplify life by putting AI in users' hands. The project is in early stages with ongoing development and future plans for user and kernel mode separation, home IoT device control, and an official OpenDAN SDK release.

MARS5-TTS

MARS5 is a novel English speech model (TTS) developed by CAMB.AI, featuring a two-stage AR-NAR pipeline with a unique NAR component. The model can generate speech for various scenarios like sports commentary and anime with just 5 seconds of audio and a text snippet. It allows steering prosody using punctuation and capitalization in the transcript. Speaker identity is specified using an audio reference file, enabling 'deep clone' for improved quality. The model can be used via torch.hub or HuggingFace, supporting both shallow and deep cloning for inference. Checkpoints are provided for AR and NAR models, with hardware requirements of 750M+450M params on GPU. Contributions to improve model stability, performance, and reference audio selection are welcome.

local_multimodal_ai_chat

Local Multimodal AI Chat is a hands-on project that teaches you how to build a multimodal chat application. It integrates different AI models to handle audio, images, and PDFs in a single chat interface. This project is perfect for anyone interested in AI and software development who wants to gain practical experience with these technologies.

chatgpt-universe

ChatGPT is a large language model that can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in a conversational way. It is trained on a massive amount of text data, and it is able to understand and respond to a wide range of natural language prompts. Here are 5 jobs suitable for this tool, in lowercase letters: 1. content writer 2. chatbot assistant 3. language translator 4. creative writer 5. researcher

ai-goat

AI Goat is a tool designed to help users learn about AI security through a series of vulnerable LLM CTF challenges. It allows users to run everything locally on their system without the need for sign-ups or cloud fees. The tool focuses on exploring security risks associated with large language models (LLMs) like ChatGPT, providing practical experience for security researchers to understand vulnerabilities and exploitation techniques. AI Goat uses the Vicuna LLM, derived from Meta's LLaMA and ChatGPT's response data, to create challenges that involve prompt injections, insecure output handling, and other LLM security threats. The tool also includes a prebuilt Docker image, ai-base, containing all necessary libraries to run the LLM and challenges, along with an optional CTFd container for challenge management and flag submission.

wingman-ai

Wingman AI allows you to use your voice to talk to various AI providers and LLMs, process your conversations, and ultimately trigger actions such as pressing buttons or reading answers. Our _Wingmen_ are like characters and your interface to this world, and you can easily control their behavior and characteristics, even if you're not a developer. AI is complex and it scares people. It's also **not just ChatGPT**. We want to make it as easy as possible for you to get started. That's what _Wingman AI_ is all about. It's a **framework** that allows you to build your own Wingmen and use them in your games and programs. The idea is simple, but the possibilities are endless. For example, you could: * **Role play** with an AI while playing for more immersion. Have air traffic control (ATC) in _Star Citizen_ or _Flight Simulator_. Talk to Shadowheart in Baldur's Gate 3 and have her respond in her own (cloned) voice. * Get live data such as trade information, build guides, or wiki content and have it read to you in-game by a _character_ and voice you control. * Execute keystrokes in games/applications and create complex macros. Trigger them in natural conversations with **no need for exact phrases.** The AI understands the context of your dialog and is quite _smart_ in recognizing your intent. Say _"It's raining! I can't see a thing!"_ and have it trigger a command you simply named _WipeVisors_. * Automate tasks on your computer * improve accessibility * ... and much more

For similar tasks

Forza-Mods-AIO

Forza Mods AIO is a free and open-source tool that enhances the gaming experience in Forza Horizon 4 and 5. It offers a range of time-saving and quality-of-life features, making gameplay more enjoyable and efficient. The tool is designed to streamline various aspects of the game, improving user satisfaction and overall enjoyment.

hass-ollama-conversation

The Ollama Conversation integration adds a conversation agent powered by Ollama in Home Assistant. This agent can be used in automations to query information provided by Home Assistant about your house, including areas, devices, and their states. Users can install the integration via HACS and configure settings such as API timeout, model selection, context size, maximum tokens, and other parameters to fine-tune the responses generated by the AI language model. Contributions to the project are welcome, and discussions can be held on the Home Assistant Community platform.

crawl4ai

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

MaterialSearch

MaterialSearch is a tool for searching local images and videos using natural language. It provides functionalities such as text search for images, image search for images, text search for videos (providing matching video clips), image search for videos (searching for the segment in a video through a screenshot), image-text similarity calculation, and Pexels video search. The tool can be deployed through the source code or Docker image, and it supports GPU acceleration. Users can configure the tool through environment variables or a .env file. The tool is still under development, and configurations may change frequently. Users can report issues or suggest improvements through issues or pull requests.

tenere

Tenere is a TUI interface for Language Model Libraries (LLMs) written in Rust. It provides syntax highlighting, chat history, saving chats to files, Vim keybindings, copying text from/to clipboard, and supports multiple backends. Users can configure Tenere using a TOML configuration file, set key bindings, and use different LLMs such as ChatGPT, llama.cpp, and ollama. Tenere offers default key bindings for global and prompt modes, with features like starting a new chat, saving chats, scrolling, showing chat history, and quitting the app. Users can interact with the prompt in different modes like Normal, Visual, and Insert, with various key bindings for navigation, editing, and text manipulation.

openkore

OpenKore is a custom client and intelligent automated assistant for Ragnarok Online. It is a free, open source, and cross-platform program (Linux, Windows, and MacOS are supported). To run OpenKore, you need to download and extract it or clone the repository using Git. Configure OpenKore according to the documentation and run openkore.pl to start. The tool provides a FAQ section for troubleshooting, guidelines for reporting issues, and information about botting status on official servers. OpenKore is developed by a global team, and contributions are welcome through pull requests. Various community resources are available for support and communication. Users are advised to comply with the GNU General Public License when using and distributing the software.

QA-Pilot

QA-Pilot is an interactive chat project that leverages online/local LLM for rapid understanding and navigation of GitHub code repository. It allows users to chat with GitHub public repositories using a git clone approach, store chat history, configure settings easily, manage multiple chat sessions, and quickly locate sessions with a search function. The tool integrates with `codegraph` to view Python files and supports various LLM models such as ollama, openai, mistralai, and localai. The project is continuously updated with new features and improvements, such as converting from `flask` to `fastapi`, adding `localai` API support, and upgrading dependencies like `langchain` and `Streamlit` to enhance performance.

extension-gen-ai

The Looker GenAI Extension provides code examples and resources for building a Looker Extension that integrates with Vertex AI Large Language Models (LLMs). Users can leverage the power of LLMs to enhance data exploration and analysis within Looker. The extension offers generative explore functionality to ask natural language questions about data and generative insights on dashboards to analyze data by asking questions. It leverages components like BQML Remote Models, BQML Remote UDF with Vertex AI, and Custom Fine Tune Model for different integration options. Deployment involves setting up infrastructure with Terraform and deploying the Looker Extension by creating a Looker project, copying extension files, configuring BigQuery connection, connecting to Git, and testing the extension. Users can save example prompts and configure user settings for the extension. Development of the Looker Extension environment includes installing dependencies, starting the development server, and building for production.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.