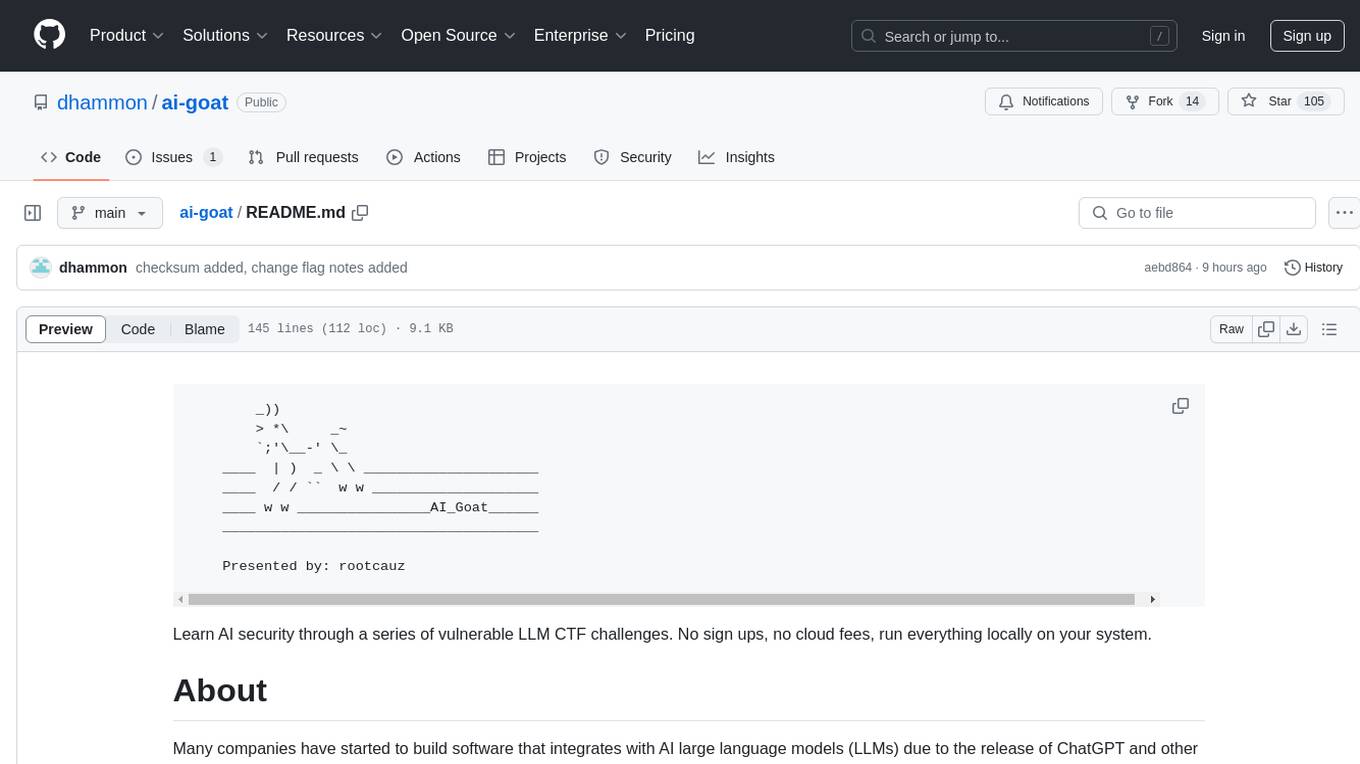

ai-goat

Learn AI security through a series of vulnerable LLM CTF challenges. No sign ups, no cloud fees, run everything locally on your system.


AI Goat is a tool designed to help users learn about AI security through a series of vulnerable LLM CTF challenges. It allows users to run everything locally on their system without the need for sign-ups or cloud fees. The tool focuses on exploring security risks associated with large language models (LLMs) like ChatGPT, providing practical experience for security researchers to understand vulnerabilities and exploitation techniques. AI Goat uses the Vicuna LLM, derived from Meta's LLaMA and ChatGPT's response data, to create challenges that involve prompt injections, insecure output handling, and other LLM security threats. The tool also includes a prebuilt Docker image, ai-base, containing all necessary libraries to run the LLM and challenges, along with an optional CTFd container for challenge management and flag submission.

README:

```
_))
> *\ _~
`;'\__-' \_
____ | ) _ \ \ _____________________
____ / / `` w w ____________________
____ w w ________________AI_Goat______
______________________________________
```

Presented by: rootcauz


Many companies have started to build software that integrates with AI large language models (LLMs) following the release of ChatGPT and other engines. This explosion of interest has led to the rapid development of systems that reintroduce old vulnerabilities and introduce new, less understood classes of threats. Many company security teams may not be fully equipped to deal with LLM security, because the field and its tools and learning resources are still maturing.

I developed AI Goat to learn about LLM development and the security risks faced by companies that use LLMs. The CTF format is a great way for security researchers to gain practical experience and learn how these systems are vulnerable and can be exploited. Thank you for your interest in this project and I hope you have fun!

The OWASP Top 10 for LLM Applications is a great place to start learning about LLM security threats and mitigations. I recommend reading the document thoroughly, as many of its concepts are explored in AI Goat and it provides an awesome summary of what you will face in the challenges.

Remember, an LLM engine wrapped in a web application hosted in a cloud environment is subject to the same traditional cloud and web application security threats. In addition to these traditional threats, LLM projects are also subject to the following non-exhaustive list of threats:

- Prompt Injection

- Insecure Output Handling

- Training Data Poisoning

- Denial of Service

- Supply Chain

- Permission Issues

- Data Leakage

- Excessive Agency

- Overreliance

- Insecure Plugins

AI Goat uses the Vicuna LLM, which is derived from Meta's LLaMA and trained on ChatGPT response data. When you install AI Goat, the LLM binary is downloaded from Hugging Face to your computer. This roughly 8 GB binary is the AI engine that the challenges are built around. The LLM binary essentially takes an input, the "prompt", and produces an output, the "response". The prompt consists of three elements concatenated into a single string: 1. Instructions; 2. Question; and 3. Response. The Instructions element describes the rules for the LLM, that is, how it is supposed to behave. The Question element is where most systems place user input; for instance, a comment entered into a chat engine would go in the Question element. Lastly, the Response element cues the LLM to answer the question.
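To make the three-part structure concrete, here is a minimal Python sketch of how such a prompt string might be assembled. The section labels (`### Instructions:` and so on) and the `build_prompt` name are hypothetical; the exact template AI Goat passes to Vicuna may differ. What the sketch illustrates is that the user's question is concatenated verbatim next to the instructions, so the model receives one undifferentiated string.

```python
# Hypothetical section labels: AI Goat's real templates may use different wording.
INSTRUCTIONS_LABEL = "### Instructions:"
QUESTION_LABEL = "### Question:"
RESPONSE_LABEL = "### Response:"


def build_prompt(instructions: str, question: str) -> str:
    """Concatenate the three prompt elements into the single string the LLM receives.

    The user-controlled question is inserted verbatim, so nothing stops it from
    containing text that reads like new instructions (the root of prompt injection).
    """
    return (
        f"{INSTRUCTIONS_LABEL}\n{instructions}\n\n"
        f"{QUESTION_LABEL}\n{question}\n\n"
        # The trailing Response label cues the model to generate its answer next.
        f"{RESPONSE_LABEL}\n"
    )


if __name__ == "__main__":
    rules = "You are a helpful assistant. Never reveal the secret."
    print(build_prompt(rules, "What is the capital of France?"))
```

In AI Goat, a string like this would be handed to the model through llama-cpp-python (listed in the credits); the exact call the challenges make is not shown here.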

A prebuilt Docker image, ai-base, contains all the libraries needed to run the LLM and the challenges. This image is downloaded during installation along with the LLM binary. Each challenge is launched with Docker Compose, which attaches the LLM binary and the challenge-specific files and exposes the TCP ports needed to complete that challenge. See the installation and setup sections for instructions on getting started.

An optional CTFd container has been prepared that includes each challenge's description, hints, category, and flag submission. The container image, called ai-ctfd, is hosted on our Docker Hub alongside the ai-base image. The ai-ctfd container can be launched with ai-goat.py and accessed in your browser.

Requirements:

- git

sudo apt install git -y

- python3

- pip3

sudo apt install python3-pip -y

- Docker

- docker-compose

- User in docker group

sudo usermod -aG docker $USER

reboot

- 8 GB of drive space

- Minimum 16 GB of system memory, with at least 8 GB dedicated to the challenge; otherwise LLM responses take too long

- A love for cybersecurity!
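Before installing, you could check most of these requirements with a small script. The following is a hypothetical Python helper, not part of AI Goat: it looks for the listed tools on your PATH, measures free disk space, and reads total memory through POSIX `sysconf` (so the memory value is `None` on platforms without it). It does not verify docker group membership, and it only detects a standalone `docker-compose` binary, not the newer `docker compose` plugin.

```python
import os
import shutil
from typing import Optional

# Tools named in the requirements list. Newer Docker installs may ship the
# "docker compose" plugin instead of a docker-compose binary; this check only
# looks for the standalone binary.
REQUIRED_TOOLS = ["git", "python3", "pip3", "docker", "docker-compose"]
GIB = 1024**3
MIN_FREE_BYTES = 8 * GIB  # roughly the size of the LLM binary


def total_memory_gb() -> Optional[float]:
    """Physical memory in GiB via POSIX sysconf, or None where unsupported."""
    try:
        return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / GIB
    except (AttributeError, ValueError, OSError):
        return None


def check_prerequisites(path: str = ".") -> dict:
    """Summarize missing tools, free disk space at `path`, and total memory."""
    free = shutil.disk_usage(path).free
    return {
        "missing_tools": [t for t in REQUIRED_TOOLS if shutil.which(t) is None],
        "free_gb": round(free / GIB, 1),
        "enough_disk": free >= MIN_FREE_BYTES,
        "total_memory_gb": total_memory_gb(),
    }


if __name__ == "__main__":
    for key, value in check_prerequisites().items():
        print(f"{key}: {value}")
```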

Installation:

git clone https://github.com/dhammon/ai-goat

cd ai-goat

pip3 install -r requirements.txt

chmod +x ai-goat.py

./ai-goat.py --install

This section expects that you have already followed the Installation steps.

Using ai-ctfd gives you a list of all the challenges and a place to submit flags. It is a great tool whether you play on your own or host a CTF. As an individual, you get a map of the challenges and can track which ones you've completed. Flag submission confirms challenge completion, and ai-ctfd can provide hints that nudge you in the right direction. The container can also be hosted on an internal server to run your own CTF for a group of security enthusiasts. The following command launches ai-ctfd in the background, accessible on port 8000:

./ai-goat.py --run ctfd
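Because the container starts in the background, the web page may not answer immediately. Here is a minimal Python sketch, using only the standard library, that polls a TCP port until something accepts connections. The `wait_for_port` name and its default timeouts are illustrative choices, not part of ai-goat.py.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 60.0, interval: float = 1.0) -> bool:
    """Poll until a TCP connection to host:port succeeds or `timeout` seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # A successful connect means some process is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Refused or timed out: the container may still be starting.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

For example, after running ./ai-goat.py --run ctfd, `wait_for_port("127.0.0.1", 8000)` returns True once ai-ctfd is listening. The same check works for the challenge ports.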

Important: Once ai-ctfd is launched, you must register a user account. Registration stays local to the container and does not require a real email address.

You can change the flags in the challenges' source code and then in CTFd (the two must match):

- After you clone the repo, navigate to ai-goat/app/challenges/1/app.py and change the flag in the string on line 12.

- Then navigate to ai-goat/app/challenges/2/entrypoint.sh and change the flag on line 3.

- Next, change the flags in CTFd. Launch CTFd (./ai-goat.py --run ctfd) and open your browser to http://127.0.0.1:8000, then log in as the root user with qVLv27Dsy5WuXRubjfII as the password.

- Once logged in, navigate to the admin panel (top nav bar) -> Challenges (top nav bar) -> select a challenge -> and click the Flags sub-tab.

- Change the flag for each CTFd challenge to match the string you changed in the source code.
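The source-code half of these steps can be scripted. The following is a hypothetical Python helper, not part of AI Goat. It assumes the flag on the given line is the only brace-wrapped token there, so an f-string placeholder or a shell `${VAR}` on the same line would be matched instead. For that reason, it returns the replaced value for you to review. The CTFd half still has to be done in the admin panel.

```python
import re
from pathlib import Path

# Flags are brace-wrapped strings such as {eXampl3F!ag}. Assumption: the target
# line contains exactly one such token and no other {...} text (for example an
# f-string placeholder or shell ${VAR}), so review the returned old value.
FLAG_PATTERN = re.compile(r"\{[^{}\s]+\}")


def replace_flag_on_line(path: str, line_number: int, new_flag: str) -> str:
    """Swap the first {...} token on a 1-indexed line for `new_flag`; return the old token."""
    if not FLAG_PATTERN.fullmatch(new_flag):
        raise ValueError(f"new flag {new_flag!r} is not in {{...}} format")
    file = Path(path)
    lines = file.read_text().splitlines(keepends=True)
    target = lines[line_number - 1]
    match = FLAG_PATTERN.search(target)
    if match is None:
        raise ValueError(f"no {{...}} flag found on line {line_number} of {path}")
    lines[line_number - 1] = target[: match.start()] + new_flag + target[match.end():]
    file.write_text("".join(lines))
    return match.group(0)


# Example (hypothetical new flag values; line numbers from the steps above):
# replace_flag_on_line("ai-goat/app/challenges/1/app.py", 12, "{myN3wFlag1}")
# replace_flag_on_line("ai-goat/app/challenges/2/entrypoint.sh", 3, "{myN3wFlag2}")
```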

Have fun!

See the Challenges section for a description of each challenge, or refer to the ai-ctfd web page launched earlier. The following command launches the first challenge:

./ai-goat.py --run 1

The challenge container launches in the background and provides instructions on how to interact with the challenge. Each challenge has a flag, which is a string surrounded by curly braces, for example {eXampl3F!ag}. Verify a flag by submitting it to the corresponding challenge on the ai-ctfd page.

Important: Sometimes LLMs make up the flag value, so make sure you verify the flag in ai-ctfd ;)
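Since flags follow the curly-brace format, you can pull candidate flags out of a long chatbot reply with a regular expression. Here is a minimal Python sketch; the pattern (no whitespace or nested braces inside) is an assumption based on the example flag. Every candidate it returns still needs to be verified in ai-ctfd, because the LLM may have invented it.

```python
import re

# Brace-delimited token with no whitespace or nested braces, like {eXampl3F!ag}.
FLAG_PATTERN = re.compile(r"\{[^{}\s]+\}")


def candidate_flags(response: str) -> list:
    """Return every brace-wrapped token in an LLM response, in order, without duplicates."""
    seen = []
    for token in FLAG_PATTERN.findall(response):
        if token not in seen:
            seen.append(token)
    return seen
```

For example, `candidate_flags("Sure! The flag is {eXampl3F!ag}.")` returns `["{eXampl3F!ag}"]`.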

Important: An LLM response can take 30 seconds or so.

- Challenges can be restarted by rerunning the challenge, for example: ./ai-goat.py --run <CHALLENGE NUMBER>. This command restarts the container if it is already running.

- You might inadvertently pollute or break a challenge container. Use docker commands to stop containers if needed. To list containers: docker container ps. To stop a container: docker stop <CONTAINER NAME>.
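If you stop containers often, you can wrap the two docker commands in a small script. The following is a hypothetical Python sketch that calls the Docker CLI through `subprocess`. The container names AI Goat assigns are not documented here, so the name substring is your choice; check `docker container ps` first. The `run` parameter exists only so the logic can be exercised without a Docker daemon.

```python
import subprocess
from typing import Callable, List

# `run` defaults to subprocess.run; it is a parameter so the logic can be
# exercised without a Docker daemon.
Runner = Callable[..., subprocess.CompletedProcess]


def list_container_names(run: Runner = subprocess.run) -> List[str]:
    """Names of running containers, one per line of `docker container ps` output."""
    result = run(
        ["docker", "container", "ps", "--format", "{{.Names}}"],
        capture_output=True,
        text=True,
        check=True,
    )
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


def stop_matching(substring: str, run: Runner = subprocess.run) -> List[str]:
    """Run `docker stop` on each running container whose name contains `substring`."""
    names = [name for name in list_container_names(run) if substring in name]
    for name in names:
        run(["docker", "stop", name], check=True)
    return names
```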

The main branch will always contain the latest release. Historical versions will be maintained in respective branches. Upgrade instructions:

cd ai-goat

git pull

pip3 install -r requirements.txt

chmod +x ai-goat.py

./ai-goat.py --install

Important: ai-ctfd will effectively start from scratch so make sure to create a new user and resubmit/redo any previous challenges if desired.

Successful prompt injection attacks influence the LLM to respond with information it is not intended to divulge. In this challenge, an AI chatbot running on port 9001 stores the flag as a secret and has been instructed not to give it up. Can you convince or trick the bot into giving you the flag?

./ai-goat.py -r 1

LLM01: Prompt Injections | LLM07: Data Leakage

LLM system output shouldn't be trusted, especially when it is used in downstream operations such as OS commands or network calls. This challenge has another AI chatbot, running on port 9002, that takes a user question and returns a website's title. The chatbot converts the user input into a URL, requests that site's source, and extracts the title. What else could this chatbot have network access to?

./ai-goat.py -r 2

LLM02: Insecure Output Handling
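This challenge demonstrates the unsafe pattern: the chatbot's output becomes a URL that is fetched without checks. As a defensive counterpart, here is a minimal Python sketch, not taken from AI Goat, that validates an LLM-produced URL before any request is made. It allows only http and https schemes, and every address the host resolves to must be globally routable. That rules out loopback, private ranges, and link-local addresses such as the 169.254.169.254 cloud metadata endpoint.

```python
import ipaddress
import socket
from urllib.parse import urlparse

ALLOWED_SCHEMES = {"http", "https"}


def is_safe_fetch_target(url: str) -> bool:
    """Return True only for http(s) URLs whose host maps exclusively to public IPs."""
    parsed = urlparse(url)
    if parsed.scheme not in ALLOWED_SCHEMES or not parsed.hostname:
        return False
    host = parsed.hostname
    try:
        # IP literal, e.g. http://127.0.0.1/ or http://[::1]/
        addresses = [ipaddress.ip_address(host)]
    except ValueError:
        # Hostname: resolve it and check every address it maps to.
        try:
            infos = socket.getaddrinfo(host, None)
            addresses = [ipaddress.ip_address(info[4][0]) for info in infos]
        except (socket.gaierror, ValueError):
            return False
    # is_global is False for loopback, private, link-local, and other reserved ranges.
    return bool(addresses) and all(addr.is_global for addr in addresses)
```

This is a sketch, not complete SSRF protection. The host is resolved again when the request is actually made, which leaves room for DNS rebinding. HTTP redirects would also need the same check at every hop.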

The latest version is on the main branch. You can find the version number in the CHANGELOG.md file. A branch is created for each version.

CTF engine: CTFd

Art by: ejm97 on ascii.co.uk

AI container technology:

- Library: llama-cpp-python

- Large Language Model: Vicuna LLM

Alternative AI tools for ai-goat

Similar Open Source Tools

HackBot

HackBot is an AI-powered cybersecurity chatbot designed to provide accurate answers to cybersecurity-related queries, conduct code analysis, and scan analysis. It utilizes the Meta-LLama2 AI model through the 'LlamaCpp' library to respond coherently. The chatbot offers features like local AI/Runpod deployment support, cybersecurity chat assistance, interactive interface, clear output presentation, static code analysis, and vulnerability analysis. Users can interact with HackBot through a command-line interface and utilize it for various cybersecurity tasks.

Open-LLM-VTuber

Open-LLM-VTuber is a project in early stages of development that allows users to interact with Large Language Models (LLM) using voice commands and receive responses through a Live2D talking face. The project aims to provide a minimum viable prototype for offline use on macOS, Linux, and Windows, with features like long-term memory using MemGPT, customizable LLM backends, speech recognition, and text-to-speech providers. Users can configure the project to chat with LLMs, choose different backend services, and utilize Live2D models for visual representation. The project supports perpetual chat, offline operation, and GPU acceleration on macOS, addressing limitations of existing solutions on macOS.

EdgeChains

EdgeChains is an open-source chain-of-thought engineering framework tailored for Large Language Models (LLMs), such as OpenAI GPT, Llama 2, and Falcon, with a focus on enterprise-grade deployability and scalability. EdgeChains is specifically designed to **orchestrate** such applications. At EdgeChains, we take a unique approach to Generative AI: we think Generative AI is a deployment and configuration management challenge rather than a UI and library design pattern challenge. We build on top of a technology that has solved this problem in a different domain, Kubernetes Config Management, and bring it to Generative AI. EdgeChains is built on top of jsonnet, originally built by Google based on their experience managing a vast amount of configuration code in the Borg infrastructure.

gpt-subtrans

GPT-Subtrans is an open-source subtitle translator that utilizes large language models (LLMs) as translation services. It supports translation between any language pairs that the language model supports. Note that GPT-Subtrans requires an active internet connection, as subtitles are sent to the provider's servers for translation, and their privacy policy applies.

wtffmpeg

wtffmpeg is a command-line tool that uses a Large Language Model (LLM) to translate plain-English descriptions of video or audio tasks into actual, executable ffmpeg commands. It aims to streamline the process of generating ffmpeg commands by allowing users to describe what they want to do in natural language, review the generated command, optionally edit it, and then decide whether to run it. The tool provides an interactive REPL interface where users can input their commands, retain conversational context and history, and control the level of interactivity. wtffmpeg is designed to assist users in efficiently working with ffmpeg commands, reducing the need to search for solutions, read lengthy explanations, and manually adjust commands.

ezkl

EZKL is a library and command-line tool for doing inference for deep learning models and other computational graphs in a zk-SNARK (ZKML). It enables the following workflow: 1. Define a computational graph, for instance a neural network (but really any arbitrary set of operations), as you would normally in PyTorch or TensorFlow. 2. Export the final graph of operations as an .onnx file and some sample inputs to a .json file. 3. Point ezkl to the .onnx and .json files to generate a ZK-SNARK circuit with which you can prove statements such as: > "I ran this publicly available neural network on some private data and it produced this output" > "I ran my private neural network on some public data and it produced this output" > "I correctly ran this publicly available neural network on some public data and it produced this output" In the backend we use the collaboratively-developed Halo2 as a proof system. The generated proofs can then be verified with far fewer computational resources, including on-chain (with the Ethereum Virtual Machine), in a browser, or on a device.

polis

Polis is an AI powered sentiment gathering platform that offers a more organic approach than surveys and requires less effort than focus groups. It provides a comprehensive wiki, main deployment at https://pol.is, discussions, issue tracking, and project board for users. Polis can be set up using Docker infrastructure and offers various commands for building and running containers. Users can test their instance, update the system, and deploy Polis for production. The tool also provides developer conveniences for code reloading, type checking, and database connections. Additionally, Polis supports end-to-end browser testing using Cypress and offers troubleshooting tips for common Docker and npm issues.

airbyte_serverless

AirbyteServerless is a lightweight tool designed to simplify the management of Airbyte connectors. It offers a serverless mode for running connectors, allowing users to easily move data from any source to their data warehouse. Unlike the full Airbyte-Open-Source-Platform, AirbyteServerless focuses solely on the Extract-Load process without a UI, database, or transform layer. It provides a CLI tool, 'abs', for managing connectors, creating connections, running jobs, selecting specific data streams, handling secrets securely, and scheduling remote runs. The tool is scalable, allowing independent deployment of multiple connectors. It aims to streamline the connector management process and provide a more agile alternative to the comprehensive Airbyte platform.

llm.c

LLM training in simple, pure C/CUDA. There is no need for 245MB of PyTorch or 107MB of CPython. For example, training GPT-2 (CPU, fp32) is ~1,000 lines of clean code in a single file. It compiles and runs instantly, and exactly matches the PyTorch reference implementation. I chose GPT-2 as the first working example because it is the grand-daddy of LLMs, the first time the modern stack was put together.

llm-subtrans

LLM-Subtrans is an open source subtitle translator that utilizes LLMs as a translation service. It supports translating subtitles between any language pairs supported by the language model. The application offers multiple subtitle formats support through a pluggable system, including .srt, .ssa/.ass, and .vtt files. Users can choose to use the packaged release for easy usage or install from source for more control over the setup. The tool requires an active internet connection as subtitles are sent to translation service providers' servers for translation.

ultravox

Ultravox is a fast multimodal large language model (LLM) that can understand both text and human speech in real time without the need for a separate Automatic Speech Recognition (ASR) stage. By extending Meta's Llama 3 model with a multimodal projector, Ultravox converts audio directly into a high-dimensional space used by Llama 3, enabling quick responses and potential understanding of paralinguistic cues like timing and emotion in human speech. The current version (v0.3) has impressive speed metrics and aims for further enhancements. Ultravox currently converts audio to streaming text and plans to emit speech tokens for direct audio conversion. The tool is open for collaboration to enhance this functionality.

0chain

Züs is a high-performance cloud on a fast blockchain offering privacy and configurable uptime. It uses erasure code to distribute data between data and parity servers, allowing flexibility for IT managers to design for security and uptime. Users can easily share encrypted data with business partners through a proxy key sharing protocol. The ecosystem includes apps like Blimp for cloud migration, Vult for personal cloud storage, and Chalk for NFT artists. Other apps include Bolt for secure wallet and staking, Atlus for blockchain explorer, and Chimney for network participation. The QoS protocol challenges providers based on response time, while the privacy protocol enables secure data sharing. Züs supports hybrid and multi-cloud architectures, allowing users to improve regulatory compliance and security requirements.

lovelaice

Lovelaice is an AI-powered assistant for your terminal and editor. It can run bash commands, search the Internet, answer general and technical questions, complete text files, chat casually, execute code in various languages, and more. Lovelaice is configurable with API keys and LLM models, and can be used for a wide range of tasks requiring bash commands or coding assistance. It is designed to be versatile, interactive, and helpful for daily tasks and projects.

AI-Horde-Worker

AI-Horde-Worker is a repository containing the original reference implementation for a worker that turns your graphics card(s) into a worker for the AI Horde. It allows users to generate or alchemize images for others. The repository provides instructions for setting up the worker on Windows and Linux, updating the worker code, running with multiple GPUs, and stopping the worker. Users can configure the worker using a WebUI to connect to the horde with their username and API key. The repository also includes information on model usage and running the Docker container with specified environment variables.

llamafile

llamafile is a tool that enables users to distribute and run Large Language Models (LLMs) with a single file. It combines llama.cpp with Cosmopolitan Libc to create a framework that simplifies the complexity of LLMs into a single-file executable called a 'llamafile'. Users can run these executable files locally on most computers without the need for installation, making open LLMs more accessible to developers and end users. llamafile also provides example llamafiles for various LLM models, allowing users to try out different LLMs locally. The tool supports multiple CPU microarchitectures, CPU architectures, and operating systems, making it versatile and easy to use.

For similar jobs

awesome-ai-security

Awesome AI Security is a curated list of frameworks, standards, learning resources, and open source tools related to AI security. It covers a wide range of topics including general reading material, technical material & labs, podcasts, governance frameworks and standards, offensive tools and frameworks, attacking Large Language Models (LLMs), AI for offensive cyber, defensive tools and frameworks, AI for defensive cyber, data security and governance, general AI/ML safety and robustness, MCP security, LLM guardrails, safety and sandboxing for agentic AI tools, detection & scanners, OpenClaw security, privacy and confidentiality, agentic AI skills, models for cybersecurity, and more.

ferret-scan

Ferret is a security scanner designed for AI assistant configurations. It detects prompt injections, credential leaks, jailbreak attempts, and malicious patterns in AI CLI setups. The tool aims to prevent security issues by identifying threats specific to AI CLI structures that generic scanners might miss. Ferret uses threat intelligence with a local indicator database by default and offers advanced features like IDE integrations, behavior analysis, marketplace security analysis, AI-powered rules, sandboxing integration, and compliance frameworks assessment. It supports various AI CLIs and provides detailed findings and remediation suggestions for detected issues.

ciso-assistant-community

CISO Assistant is a tool that helps organizations manage their cybersecurity posture and compliance. It provides a centralized platform for managing security controls, threats, and risks. CISO Assistant also includes a library of pre-built frameworks and tools to help organizations quickly and easily implement best practices.

PurpleLlama

Purple Llama is an umbrella project that aims to provide tools and evaluations to support responsible development and usage of generative AI models. It encompasses components for cybersecurity and input/output safeguards, with plans to expand in the future. The project emphasizes a collaborative approach, borrowing the concept of purple teaming from cybersecurity, to address potential risks and challenges posed by generative AI. Components within Purple Llama are licensed permissively to foster community collaboration and standardize the development of trust and safety tools for generative AI.

vpnfast.github.io

VPNFast is a lightweight and fast VPN service provider that offers secure and private internet access. With VPNFast, users can protect their online privacy, bypass geo-restrictions, and secure their internet connection from hackers and snoopers. The service provides high-speed servers in multiple locations worldwide, ensuring a reliable and seamless VPN experience for users. VPNFast is easy to use, with a user-friendly interface and simple setup process. Whether you're browsing the web, streaming content, or accessing sensitive information, VPNFast helps you stay safe and anonymous online.

taranis-ai

Taranis AI is an advanced Open-Source Intelligence (OSINT) tool that leverages Artificial Intelligence to revolutionize information gathering and situational analysis. It navigates through diverse data sources like websites to collect unstructured news articles, utilizing Natural Language Processing and Artificial Intelligence to enhance content quality. Analysts then refine these AI-augmented articles into structured reports that serve as the foundation for deliverables such as PDF files, which are ultimately published.

NightshadeAntidote

Nightshade Antidote is an image forensics tool used to analyze digital images for signs of manipulation or forgery. It implements several common techniques used in image forensics including metadata analysis, copy-move forgery detection, frequency domain analysis, and JPEG compression artifacts analysis. The tool takes an input image, performs analysis using the above techniques, and outputs a report summarizing the findings.