Best AI tools for CTF Challenge Creator


1 - AI Tool Sites

SMshrimant

SMshrimant is a personal website belonging to a Bug Bounty Hunter, Security Researcher, Penetration Tester, and Ethical Hacker. The website showcases the creator's skills and experiences in the field of cybersecurity, including bug hunting, security vulnerability reporting, open-source tool development, and participation in Capture The Flag competitions. Visitors can learn about the creator's projects, achievements, and contact information for inquiries or collaborations.

1 - Open Source Tools

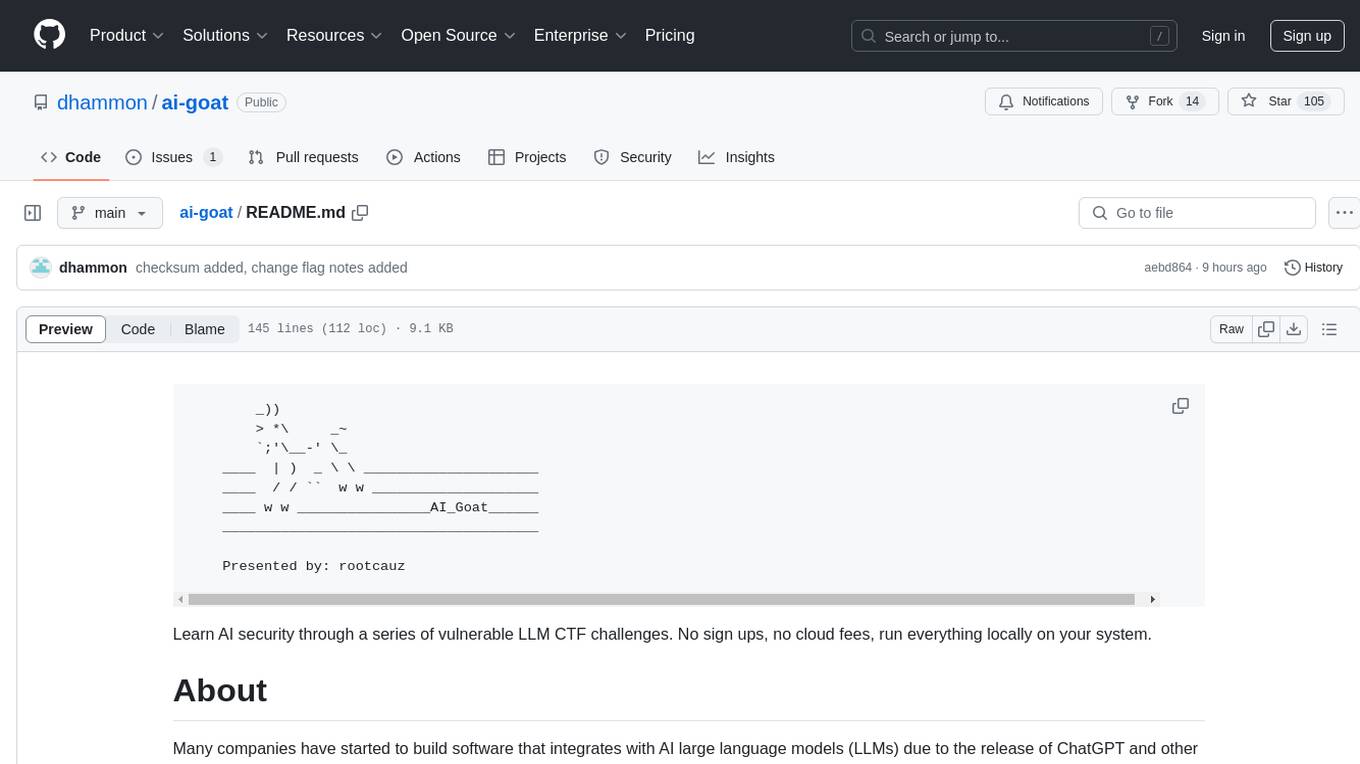

ai-goat

AI Goat is a tool for learning about AI security through a series of vulnerable LLM CTF challenges. Everything runs locally on the user's system, with no sign-ups or cloud fees. The tool explores security risks associated with large language models (LLMs) like ChatGPT, giving security researchers hands-on experience with vulnerabilities and exploitation techniques. AI Goat uses the Vicuna LLM, which is fine-tuned from Meta's LLaMA on ChatGPT response data, to build challenges involving prompt injection, insecure output handling, and other LLM security threats. It also ships a prebuilt Docker image, ai-base, containing all the libraries needed to run the LLM and the challenges, along with an optional CTFd container for challenge management and flag submission.
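To make the "insecure output handling" category concrete, here is a minimal Python sketch. It is not taken from AI Goat: the function names and the simulated model output are hypothetical, and no real LLM is called. It only illustrates the general pattern of an application trusting model output and inserting it into HTML without escaping.

```python
import html

# Hypothetical stand-in for text an LLM might return after a prompt injection.
# This is NOT output from AI Goat or any real model.
SIMULATED_LLM_OUTPUT = 'Here is your summary <script>alert("pwned")</script>'


def render_unsafe(llm_output: str) -> str:
    """Insert model output into HTML verbatim (the vulnerable pattern)."""
    return f'<div class="answer">{llm_output}</div>'


def render_safe(llm_output: str) -> str:
    """Escape model output before inserting it into HTML."""
    return f'<div class="answer">{html.escape(llm_output)}</div>'


if __name__ == "__main__":
    # The unsafe version passes the script tag through to the page.
    print(render_unsafe(SIMULATED_LLM_OUTPUT))
    # The safe version turns markup characters into HTML entities.
    print(render_safe(SIMULATED_LLM_OUTPUT))
```

In a challenge of this kind, the player would typically try to coax the model into emitting markup like the simulated string above; treating model output as untrusted input is the usual mitigation.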

5 - OpenAI GPTs

Avalanche - Reverse Engineering & CTF Assistant

Assists with reverse engineering and CTFs using write-ups and instructions for solving challenges.

HTB

A helper that provides hints when you get stuck trying to solve a machine on HTB or a CTF challenge.