awesome-gpt-security

A curated list of awesome security tools, experimental cases, and other interesting things with LLM or GPT.

Stars: 459

Awesome GPT + Security is a curated list of awesome security tools, experimental cases, and other interesting things with LLM or GPT. It includes tools for integrated security, auditing, reconnaissance, offensive security, detecting security issues, preventing security breaches, social engineering, reverse engineering, investigating security incidents, fixing security vulnerabilities, assessing security posture, and more. The list also includes experimental cases, academic research, blogs, and fun projects related to GPT security. Additionally, it provides resources on GPT security standards, bypassing security policies, bug bounty programs, cracking GPT APIs, and plugin security.

README:

Here is a nice tool to fine-tune ALL LLMs with ALL adapters on ALL platforms!

🧰

- SecGPT - Aims to advance network security with LLMs, covering penetration testing, red-blue confrontations, CTF competitions, and other areas.

- AutoAudit - An LLM for Cyber Security

- secgpt - Cyber security LLM (LoRA fine-tuned from baichuan-13B on cyber security material)

- HackerGPT-2.0 - HackerGPT is your indispensable digital companion in the world of hacking.

- SourceGPT - prompt manager and source code analyzer built on top of ChatGPT as the oracle

- ChatGPTScanner - A white-box code scanner powered by ChatGPT

- chatgpt-code-analyzer - ChatGPT Code Analyzer for Visual Studio Code

- hacker-ai - An online tool using AI to detect vulnerabilities in source code

- audit_gpt - Fine-tuning GPT for Smart Contract Auditing

- vulchatgpt - Use the IDA Pro Hex-Rays decompiler with OpenAI (ChatGPT) to find possible vulnerabilities in binaries

- Ret2GPT - Advanced AI-powered binary analysis tool built on OpenAI models and LangChain, aimed at helping CTF pwners interpret binary files and detect vulnerabilities.

- CensysGPT Beta - The tool enables users to quickly and easily gain insights into hosts on the internet, streamlining the process and allowing for more proactive threat hunting and exposure management

- GPT_Vuln-analyzer - Uses the ChatGPT API, Python-Nmap, and DNS recon modules with the GPT-3 model to create vulnerability reports from Nmap and DNS scan data. It can also perform subdomain enumeration.

- SubGPT - SubGPT looks at subdomains you have already discovered for a domain and uses BingGPT to find more.

- Navi - A QA based Reconnaissance Tool with GPT

- ChatCVE - The ChatCVE LangChain app is an AI-powered DevSecOps application 🔍 for organizations triaging and aggregating CVE (Common Vulnerabilities and Exposures) information.

- ZoomeyeGPT - A GPT-based Chrome browser extension that brings an AI-assisted search experience to ZoomEye users.

- uncover-turbo - A general-purpose natural-language engine for cyberspace mapping that bridges the last mile from natural language to mapping-engine query syntax.

- DevOpsGPT - AI-Driven Software Development Automation Solution

- PentestGPT - A GPT-empowered penetration testing tool

- burpgpt - A Burp Suite extension that integrates OpenAI's GPT to perform an additional passive scan for discovering highly bespoke vulnerabilities, and enables running traffic-based analysis of any type.

- ReconAIzer - A Burp Suite extension to add OpenAI (GPT) on Burp and help you with your Bug Bounty recon to discover endpoints, params, URLs, subdomains and more!

- CodaMOSA - Code for the paper "CodaMOSA: Escaping Coverage Plateaus in Test Generation with Pre-trained Large Language Models". It implements a fuzzer combined with the OpenAI API, aiming to alleviate coverage stagnation in traditional fuzzing.

- PassGAN - A Deep Learning Approach for Password Guessing. HomeSecurityHeroes built a product on it where you can test how long an AI would need to crack your password.

- nuclei-ai-extension - Official browser extension from the Nuclei team for rapid Nuclei template generation.

- nuclei_gpt - Submit the relevant request and response plus a description of the vulnerability to generate a Nuclei PoC.

- Nuclei Templates AI Generator - Create Nuclei templates from a textual description (e.g., vulnerability scanners from a PoC).

- hackGPT - Leverage OpenAI and ChatGPT to do hackerish things

- k8sgpt - a tool for scanning your Kubernetes clusters, diagnosing, and triaging issues in simple English.

- cloudgpt - Vulnerability scanner for AWS customer managed policies using ChatGPT

- IATelligence - A Python script that extracts the IAT of a PE file and asks GPT for more information about the imported APIs and the related ATT&CK matrix entries

- rebuff - Prompt Injection Detector.

- Callisto - An Intelligent Automated Binary Vulnerability Analysis Tool.

- LLMFuzzer - LLMFuzzer is the first open-source fuzzing framework specifically designed for Large Language Models (LLMs), especially for their integrations in applications via LLM APIs.

- Vigil - Prompt injection detection and LLM prompt security scanner

- ChatGPT-Web-Setting-Funny-Abuse - Playing with ChatGPT-Web revealed HTML rendering in the description settings.

- LLM4Decompile - Reverse Engineering: Decompiling Binary Code with Large Language Models

- Gepetto - IDA plugin that queries OpenAI's gpt-3.5-turbo language model to speed up reverse engineering

- gpt-wpre - Whole-Program Reverse Engineering with GPT-3

- G-3PO - A Script that Solicits GPT-3 for Comments on Decompiled Code

- beelzebub - Go-Based Low-Code Honeypot Framework with Enhanced Security, Leveraging GPT-3 for System Virtualization

- wolverine - Automatically fix the bugs in your Python scripts

- falco-gpt - AI-generated remediations for Falco audit events

- selefra - An open-source policy-as-code tool that provides analytics for multi-cloud and SaaS.

- openai-cti-summarizer - A tool for generating threat intelligence summary reports with OpenAI's GPT-3.5 and GPT-4 APIs

🌰

- Lost in ChatGPT's memories: escaping ChatGPT-3.5 memory issues to write CVE PoCs

- I built a Zero Day virus with undetectable exfiltration using only ChatGPT prompts

- Experimenting with GPT-3 for Detecting Security Vulnerabilities in Code

- We put GPT-4 in Semgrep to point out false positives & fix code

- A Practical, AI-Generated Phishing PoC With ChatGPT

- Capturing the Flag with GPT-4

- I Used GPT-3 to Find 213 Security Vulnerabilities in a Single Codebase

- Using ChatGPT to generate encoder and supporting WebShell

- Using OpenAI Chat to Generate Phishing Campaigns -- Include Phishing Platform

- Chat4GPT Experiments for Security

- GPT-3 use cases for Cybersecurity

- AI-Powered Fuzzing: Breaking the Bug Hunting Barrier

- GPT-4 Technical Report -- OpenAI's own security assessment and mitigation of the model

- Ignore Previous Prompt: Attack Techniques For Language Models -- Pioneering work of Prompt Injection

- More than you've asked for: A Comprehensive Analysis of Novel Prompt Injection Threats to Application-Integrated Large Language Models

- RealToxicityPrompts: Evaluating Neural Toxic Degeneration in Language Models

- Exploiting Programmatic Behavior of LLMs: Dual-Use Through Standard Security Attacks

- Red Teaming Language Models to Reduce Harms: Methods, Scaling Behaviors, and Lessons Learned

- Can We Generate Shellcodes via Natural Language? An Empirical Study

- Dissecting redis CVE-2023-28425 with chatGPT as assistant

- Security Code Review With ChatGPT

- ChatGPT happy to write ransomware, just really bad at it

- Create ATT&CK Groups Knowledge Base

- Model Confusion - Weaponizing ML models for red teams and bounty hunters

- Using LLMs to reverse JavaScript variable name minification

- shortest prompt that will enable GPT to protect the secret key

- A CTF-like game that teaches how to bypass LLMs using language hacks

- ai-goat - Learn AI security through a series of vulnerable LLM CTF challenges.

🚨

- ATT&CK for LLM Apps

- The OWASP Top 10 for Large Language Model Applications project

- Google AI Red Team

- PurpleLlama - Empowering developers, advancing safety, and building an open ecosystem

- Chat GPT "DAN" (and other "Jailbreaks")

- ChatGPT Prompts for Bug Bounty & Pentesting

- promptmap - automatically tests prompt injection attacks on ChatGPT instances

- Use "Typoglycemia" to Bypass the LLM's Security Policy

- Universal and Transferable Adversarial Attacks on Aligned Language Models

- promptbench - A robustness evaluation framework for large language models on adversarial prompts

- Building A Virtual Machine inside ChatGPT - deprecated but interesting

- LangChain vulnerable to code injection -- CVE-2023-29374

- gpt4free - Just APIs from some language model sites.

- EdgeGPT - Reverse engineered API of Microsoft's Bing Chat AI

- GPTs - leaked prompts of GPTs

- SecureGPT - Dynamically test the security of your ChatGPT plugin APIs (free DAST for ChatGPT plugins).

Your contributions are always welcome! Please take a look at the contribution guidelines first.

If you have any questions about this opinionated list, do not hesitate to open an issue on GitHub.

Thanks again for your contribution and for keeping this community vibrant. ❤️

Alternative AI tools for awesome-gpt-security

Similar Open Source Tools

nebula

Nebula is an advanced, AI-powered penetration testing tool designed for cybersecurity professionals, ethical hackers, and developers. It integrates state-of-the-art AI models into the command-line interface, automating vulnerability assessments and enhancing security workflows with real-time insights and automated note-taking. Nebula revolutionizes penetration testing by providing AI-driven insights, enhanced tool integration, AI-assisted note-taking, and manual note-taking features. It also supports any tool that can be invoked from the CLI, making it a versatile and powerful tool for cybersecurity tasks.

llmesh

LLM Agentic Tool Mesh is a platform by HPE Athonet that democratizes Generative Artificial Intelligence (Gen AI) by enabling users to create tools and web applications using Gen AI with Low or No Coding. The platform simplifies the integration process, focuses on key user needs, and abstracts complex libraries into easy-to-understand services. It empowers both technical and non-technical teams to develop tools related to their expertise and provides orchestration capabilities through an agentic Reasoning Engine based on Large Language Models (LLMs) to ensure seamless tool integration and enhance organizational functionality and efficiency.

watchtower

AIShield Watchtower is a tool designed to fortify the security of AI/ML models and Jupyter notebooks by automating model and notebook discoveries, conducting vulnerability scans, and categorizing risks into 'low,' 'medium,' 'high,' and 'critical' levels. It supports scanning of public GitHub repositories, Hugging Face repositories, AWS S3 buckets, and local systems. The tool generates comprehensive reports, offers a user-friendly interface, and aligns with industry standards like OWASP, MITRE, and CWE. It aims to address the security blind spots surrounding Jupyter notebooks and AI models, providing organizations with a tailored approach to enhancing their security efforts.

awesome-openvino

Awesome OpenVINO is a curated list of AI projects based on the OpenVINO toolkit, offering a rich assortment of projects, libraries, and tutorials covering various topics like model optimization, deployment, and real-world applications across industries. It serves as a valuable resource continuously updated to maximize the potential of OpenVINO in projects, featuring projects like Stable Diffusion web UI, Visioncom, FastSD CPU, OpenVINO AI Plugins for GIMP, and more.

awesome-algorand

Awesome Algorand is a curated list of resources related to the Algorand Blockchain, including official resources, wallets, blockchain explorers, portfolio trackers, learning resources, development tools, DeFi platforms, nodes & consensus participation, subscription management, security auditing services, blockchain bridges, oracles, name services, community resources, Algorand Request for Comments, metrics and analytics services, decentralized voting tools, and NFT marketplaces. The repository provides a comprehensive collection of tools, tutorials, protocols, and platforms for developers, users, and enthusiasts interested in the Algorand ecosystem.

supervisely

Supervisely is a computer vision platform that provides a range of tools and services for developing and deploying computer vision solutions. It includes a data labeling platform, a model training platform, and a marketplace for computer vision apps. Supervisely is used by a variety of organizations, including Fortune 500 companies, research institutions, and government agencies.

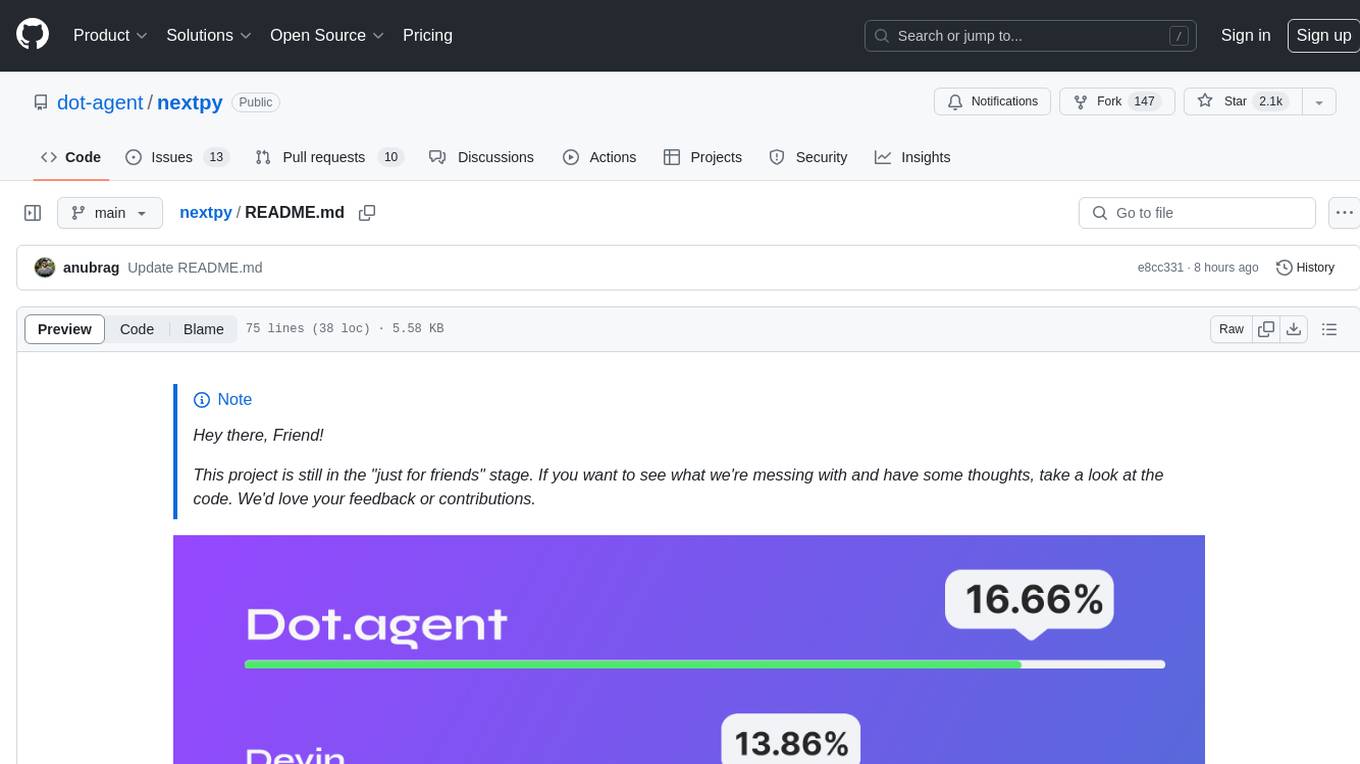

nextpy

Nextpy is a cutting-edge software development framework optimized for AI-based code generation. It provides guardrails for defining AI system boundaries, structured outputs for prompt engineering, a powerful prompt engine for efficient processing, better AI generations with precise output control, modularity for multiplatform and extensible usage, developer-first approach for transferable knowledge, and containerized & scalable deployment options. It offers 4-10x faster performance compared to Streamlit apps, with a focus on cooperation within the open-source community and integration of key components from various projects.

AgentUp

AgentUp is an active development tool that provides a developer-first agent framework for creating AI agents with enterprise-grade infrastructure. It allows developers to define agents with configuration, ensuring consistent behavior across environments. The tool offers secure design, configuration-driven architecture, extensible ecosystem for customizations, agent-to-agent discovery, asynchronous task architecture, deterministic routing, and MCP support. It supports multiple agent types like reactive agents and iterative agents, making it suitable for chatbots, interactive applications, research tasks, and more. AgentUp is built by experienced engineers from top tech companies and is designed to make AI agents production-ready, secure, and reliable.

aitour26-WRK541-real-world-code-migration-with-github-copilot-agent-mode

Microsoft AI Tour 2026 WRK541 is a workshop focused on real-world code migration using GitHub Copilot Agent Mode. The session is designed for technologists interested in applying AI pair-programming techniques to challenging tasks like migrating or translating code between different programming languages. Participants will learn advanced GitHub Copilot techniques, differences between Python and C#, JSON serialization and deserialization in C#, developing and validating endpoints, integrating Swagger/OpenAPI documentation, and writing unit tests with MSTest. The workshop aims to help users gain hands-on experience in using GitHub Copilot for real-world code migration scenarios.

azure-openai-samples

This repository provides resources to understand and utilize GPT (Generative Pre-trained Transformer) by Azure OpenAI. It includes sample solutions, use cases, and quick start guides. Users can explore various applications of GPT, such as chatbots, customer service, and content generation. The repository also offers Langchain, Semantic Kernel, and Prompt Flow samples, along with Serverless SQL GPT for natural language processing in Azure Synapse Analytics. The samples are based on GPT 3.5, with plans to update for GPT-4. Users are encouraged to contribute to keep the repository updated with the latest technologies and solutions.

Symposium2023

Symposium2023 is a project aimed at enabling Delphi users to incorporate AI technology into their applications. It provides generalized interfaces to different AI models, making them easily accessible. The project showcases AI's versatility in tasks like language translation, human-like conversations, image generation, data analysis, and more. Users can experiment with different AI models, change providers easily, and avoid vendor lock-in. The project supports various AI features like vision support and function calling, utilizing providers like Google, Microsoft Azure, Amazon, OpenAI, and more. It includes example programs demonstrating tasks such as text-to-speech, language translation, face detection, weather querying, audio transcription, voice recognition, image generation, invoice processing, and API testing. The project also hints at potential future research areas like using embeddings for data search and integrating Python AI libraries with Delphi.

agentUniverse

agentUniverse is a framework for developing multi-agent applications powered by large language models. It provides the essential components for building a single agent, along with a multi-agent collaboration mechanism for customizing collaboration patterns. Developers can easily construct multi-agent applications and share pattern practices from different fields. The framework includes pre-installed collaboration patterns like PEER and DOE for complex task breakdown and data-intensive tasks.

uusec-waf

UUSEC WAF is a free, industrial-grade, high-performance, and highly scalable web application and API security protection product that supports AI and semantic engines. It provides intelligent 0-day defense, ultimate CDN acceleration, powerful proactive defense, an advanced semantic engine, and an advanced rule engine. With features like machine learning technology, cache cleaning, dual-layer defense, semantic analysis, and Lua script rule writing, UUSEC WAF offers comprehensive website protection with three-layer defense functions at the traffic, system, and runtime layers.

vulcan-sql

VulcanSQL is an Analytical Data API Framework for AI agents and data apps. It aims to help data professionals deliver RESTful APIs from databases, data warehouses, or data lakes more easily and securely. It turns your SQL into APIs in no time!

For similar tasks

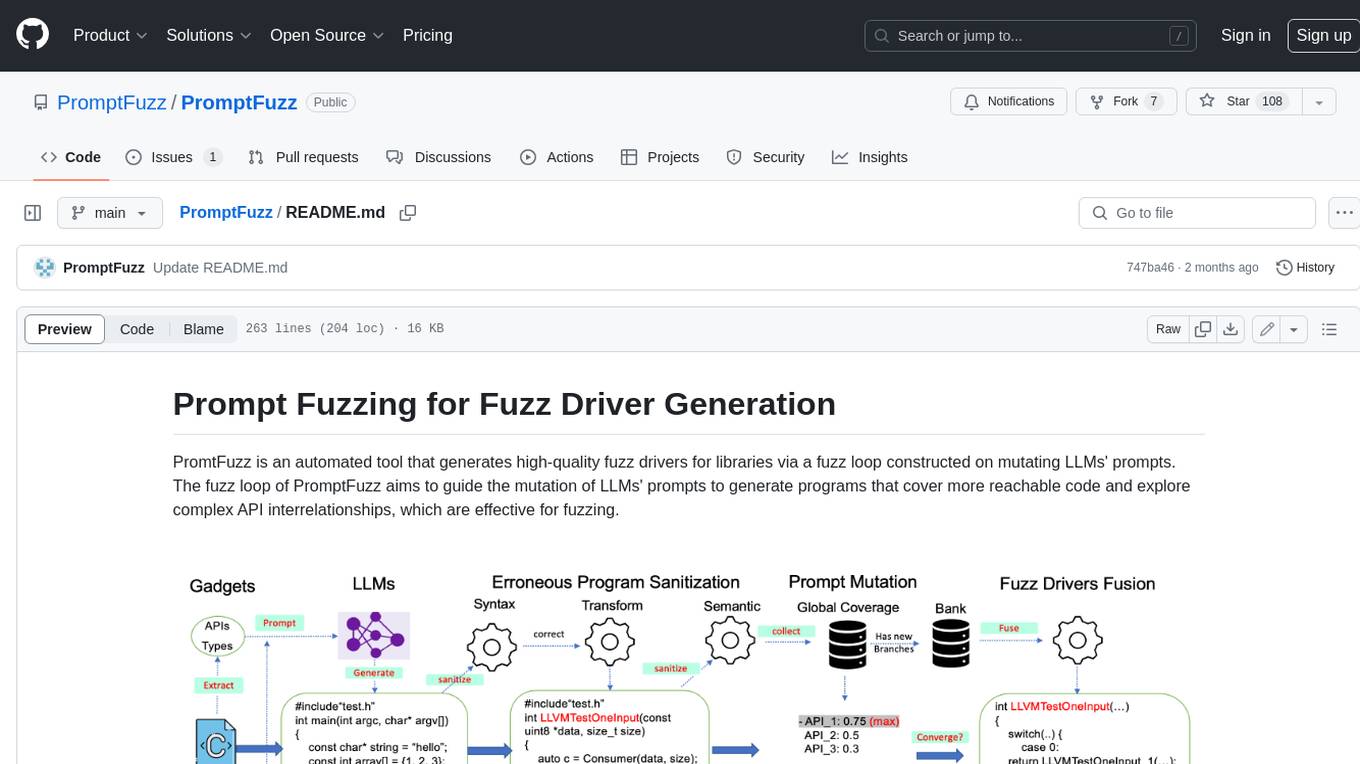

PromptFuzz

**Description:** PromptFuzz is an automated tool that generates high-quality fuzz drivers for libraries via a fuzz loop built on mutating LLM prompts. The fuzz loop guides the mutation of LLM prompts to generate programs that cover more reachable code and explore complex API interrelationships, which are effective for fuzzing.

**Features:**

- **Multiple LLM support:** Supports general LLMs: Codex, InCoder, ChatGPT, and GPT-4 (currently tested on ChatGPT).
- **Context-based prompts:** Constructs LLM prompts from automatically extracted library context.
- **Powerful sanitization:** The program's syntax, semantics, behavior, and coverage are thoroughly analyzed to sanitize problematic programs.
- **Prioritized mutation:** Prioritizes mutating the library API combinations within LLM prompts to explore complex interrelationships, guided by code coverage.
- **Fuzz driver exploitation:** Infers API constraints using statistics and extends fixed API arguments to receive random bytes from fuzzers.
- **Fuzz engine integration:** Integrates with the grey-box fuzz engine LibFuzzer.

**Benefits:**

- **High branch coverage:** The fuzz drivers generated by PromptFuzz achieved a branch coverage of 40.12% on the tested libraries, which is 1.61x greater than _OSS-Fuzz_ and 1.67x greater than _Hopper_.
- **Bug detection:** PromptFuzz detected 33 valid security bugs from 49 unique crashes.
- **Wide range of bugs:** The fuzz drivers generated by PromptFuzz can detect a wide range of bugs, most of which are security bugs.
- **Unique bugs:** PromptFuzz detects uniquely interesting bugs that other fuzzers may miss.

**Usage:**

1. Build the library using the provided build scripts.
2. Export the LLM API key if using ChatGPT or GPT-4.
3. Generate fuzz drivers using the `fuzzer` command.
4. Run the fuzz drivers using the `harness` command.
5. Deduplicate and analyze the reported crashes.

**Future work:**

- **Custom LLM support:** Support custom LLMs.
- **Closed-source libraries:** Apply PromptFuzz to closed-source libraries by fine-tuning LLMs on a private code corpus.
- **Performance:** Reduce the large time cost of eliminating erroneous programs.
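
As a rough illustration of the coverage-guided mutation idea in the PromptFuzz description, the Python sketch below mutates combinations of API names and keeps only the combinations that reach new coverage. It is a toy model, not PromptFuzz's implementation: `APIS`, `fake_coverage`, `mutate`, and `fuzz_loop` are hypothetical names, and the coverage function is a stub standing in for prompting an LLM, compiling the generated driver, and measuring real coverage.

```python
import random

# Hypothetical library API names; a real tool would extract these from headers.
APIS = ["open", "read", "write", "seek", "close"]


def fake_coverage(combo):
    """Stub for 'generate a driver from this API combination and run it'.

    Returns a set of pretend branches: one per API used, plus one per
    adjacent API pair, to mimic coverage gained from API interplay.
    """
    branches = set(combo)
    branches.update(zip(combo, combo[1:]))
    return branches


def mutate(combo, rng):
    """Replace one API in the combination, or append a new one."""
    combo = list(combo)
    if combo and rng.random() < 0.5:
        combo[rng.randrange(len(combo))] = rng.choice(APIS)
    else:
        combo.append(rng.choice(APIS))
    return tuple(combo[:4])  # cap the combination at four APIs


def fuzz_loop(rounds=200, seed=0):
    """Keep a mutated combination only if it reaches new coverage."""
    rng = random.Random(seed)
    seeds = [("open",)]
    covered = set(fake_coverage(seeds[0]))
    for _ in range(rounds):
        candidate = mutate(rng.choice(seeds), rng)
        new_branches = fake_coverage(candidate) - covered
        if new_branches:
            covered |= new_branches
            seeds.append(candidate)
    return seeds, covered


if __name__ == "__main__":
    seeds, covered = fuzz_loop()
    print(f"kept {len(seeds)} combinations, {len(covered)} branches covered")
```

In this sketch the seed pool only grows when a candidate adds coverage, which is the feedback signal that steers later mutations toward unexplored API interactions.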

SWE-agent

SWE-agent is a tool that allows language models to autonomously fix issues in GitHub repositories, perform tasks on the web, find cybersecurity vulnerabilities, and handle custom tasks. It uses configurable agent-computer interfaces (ACIs) to interact with isolated computer environments. The tool is built and maintained by researchers from Princeton University and Stanford University.

jadx-ai-mcp

JADX-AI-MCP is a plugin for the JADX decompiler that integrates with the Model Context Protocol (MCP) to provide live reverse engineering support with LLMs like Claude. It allows for quick analysis, vulnerability detection, and AI code modification, all in real time. The tool combines JADX-AI-MCP and JADX MCP SERVER to analyze Android APKs effortlessly. It offers various prompts for code understanding, vulnerability detection, reverse engineering helpers, static analysis, AI code modification, and documentation. The tool is part of the Zin MCP Suite and aims to connect all Android reverse engineering and APK modification tools with a single MCP server for easy reverse engineering of APK files.

shannon

Shannon is an AI pentester that delivers actual exploits, not just alerts. It autonomously hunts for attack vectors in your code, then uses its built-in browser to execute real exploits, such as injection attacks and auth bypass, to prove the vulnerability is actually exploitable. Shannon closes the security gap by acting as your on-demand whitebox pentester, providing concrete proof of vulnerabilities to let you ship with confidence. It is a core component of the Keygraph Security and Compliance Platform, automating the penetration testing and compliance journey. Shannon Lite achieves a 96.15% success rate on a hint-free, source-aware XBOW benchmark.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students
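
To make the "block or redact requests containing PIIs" use case concrete, here is a minimal Python sketch of redacting a prompt before it is forwarded to an LLM. It does not use BricksLLM or reflect its API: `PII_PATTERNS` and `redact_pii` are hypothetical names, and the two regular expressions are illustrative assumptions that cover only simple email addresses and US-style SSNs.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_pii(prompt):
    """Return the redacted prompt and the list of PII labels that matched."""
    found = []
    for label, pattern in PII_PATTERNS.items():
        # subn returns the rewritten string and the number of replacements.
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            found.append(label)
    return prompt, found


if __name__ == "__main__":
    text, labels = redact_pii("Mail alice@example.com, SSN 123-45-6789")
    print(text)    # Mail [EMAIL], SSN [SSN]
    print(labels)  # ['EMAIL', 'SSN']
```

A gateway could use the returned labels to decide between forwarding the redacted prompt and rejecting the request outright.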

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.