starter-monorepo

Monorepo with 🤖 AI goodies | LLM Chat | 🔥Hono + OpenAPI & RPC, Nuxt, Convex, SST Ion, Kinde Auth, Tanstack Query, Shadcn, UnoCSS, Spreadsheet I18n, Lingo.dev

Stars: 153

Starter Monorepo is a template repository for setting up a monorepo structure in your project. It provides a basic setup with configurations for managing multiple packages within a single repository. This template includes tools for package management, versioning, testing, and deployment. By using this template, you can streamline your development process, improve code sharing, and simplify dependency management across your project. Whether you are working on a small project or a large-scale application, Starter Monorepo can help you organize your codebase efficiently and enhance collaboration among team members.

README:

- starter-monorepo

This is a base monorepo starter template to kick-start your beautifully organized project, whether it's a fullstack project, a monorepo of multiple libraries and applications, or even just one API server and its related infrastructure deployment and utilities.

Out-of-the-box with the included apps, we have a fullstack project: with a frontend Nuxt 4 app, a main backend using Hono, and a backend-convex Convex app.

- General APIs, such as authentication, are handled by the main backend, which is designed to be serverless-compatible and can be deployed anywhere, allowing for the best possible latency, performance, and cost, according to your needs.

- backend-convex is a modular, add-in backend, used to power components like AI Chat.

It is recommended to use an AI Agent (Roo Code recommended) to help you set up the monorepo according to your needs; see Utilities.

⏩ This template is powered by Turborepo.

😊 Out-of-the-box, this repo is configured for an SSG frontend Nuxt app, and a backend Hono app that will be the main API, to optimize on cost and simplicity.

- The starter kit is still configured for 100% SSR support: simply change the apps/frontend build script to nuxt build to enable SSR building.

🌩️ SST Ion, an Infrastructure-as-Code solution, with powerful Live development.

- SST is 100% opt-in: you run sst CLI commands yourself, like sst dev. Simply remove the sst dependency and sst.config.ts if you want to use another solution.

- Currently only the backend app is configured, which will deploy a Lambda with Function URL enabled.

🔐 Comes with starter-kit for Kinde typescript-sdk, see: /apps/backend/api/auth

- Add your env variables, activate the auth routes, profit$

- Please note that by default backend comes with a cookie-based session manager, which has great DX and security and does not require an external database (which also means great performance). However, as the backend is decoupled from Nuxt's SSR server, it will not work well with SSR (the session/auth state is not shared).

- So if you use SSR, you could use the official Nuxt Kinde module or implement your own way to manage the session at apps/backend/src/middlewares/session.ts.

- If you have a good session manager implementation, a PR is greatly appreciated!
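To illustrate why a cookie-based session needs no external database, here is a minimal sketch of a signed session cookie. It is not the code in apps/backend/src/middlewares/session.ts: the SessionData shape, the function names, and the HMAC-SHA256 signing scheme are all assumptions made for this example.

```typescript
import { Buffer } from "node:buffer";
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical session payload; the real shape in the backend may differ.
interface SessionData {
  userId: string;
  expiresAt: number; // Unix epoch in milliseconds
}

// Sign the payload with HMAC-SHA256 so the server can detect tampering.
function sign(payload: string, secret: string): string {
  return createHmac("sha256", secret).update(payload).digest("base64url");
}

// Serialize a session into a cookie value of the form "<payload>.<signature>".
function encodeSession(data: SessionData, secret: string): string {
  const payload = Buffer.from(JSON.stringify(data)).toString("base64url");
  return `${payload}.${sign(payload, secret)}`;
}

// Return the session if the signature is valid and it has not expired, else null.
function decodeSession(cookie: string, secret: string, now: number = Date.now()): SessionData | null {
  const [payload, signature] = cookie.split(".");
  if (!payload || !signature) return null;
  const expected = Buffer.from(sign(payload, secret));
  const actual = Buffer.from(signature);
  // timingSafeEqual throws on length mismatch, so compare lengths first.
  if (expected.length !== actual.length || !timingSafeEqual(expected, actual)) return null;
  const data = JSON.parse(Buffer.from(payload, "base64url").toString("utf8")) as SessionData;
  return data.expiresAt > now ? data : null;
}
```

All state travels in the cookie, so any stateless backend instance holding the secret can verify it. The payload here is signed, not encrypted, so it should not carry secrets.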

💯 JS is always TypeScript where possible.

Work started on 2025-06-12 for the T3 Chat Cloneathon competition, with no prior AI SDK or chat stream experience, but I think I did an amazing job 🫡!

The focus of the project is broader adoption, prioritizing an easy-to-access UI/UX. Bleeding-edge features like workflows are a low priority, though advanced per-model capabilities and fine-tuning are still expected to be elegantly supported via the model's interface. #48

A super efficient and powerful, yet friendly LLM Chat system, featuring:

- Business-ready: supports a hosted provider that you can control the billing of.

- Supports other add-in BYOK providers, like OpenAI, OpenRouter,...

- Seamless authentication integration with the main backend.

- Beautiful syntax highlighting 🌈.

- Thread branching, freezing, and sharing.

- Real-time, multi-agents, multi-users support ¹.

- Invite your family and friends, and play with the Agents together in real-time.

- Or maybe invite your colleagues, and brainstorm together with the help and power of AI.

- Resumable and multi-streams ¹.

- Ask follow-up questions while the previous response isn't done; the model is able to pick up what's currently available 🍳🍳.

- Multiple users can send messages at the same time 😲😲.

- Easy and private: guest, anonymous usage supported.

- Your dad can just join and chat with just a link share 😉, no setup needed.

- Mobile-friendly.

- Fully internationalized, with AI-powered translations and smooth switching between languages.

- Blazingly fast ⚡ with local caching and optimistic updates.

- Designed to be scalable.

- Things are isolated, and common interfaces are defined and used where possible. There are no tightly coupled hacks that prevent future scaling; things just work, elegantly.

- Any AI provider that is compatible with the @ai-sdk interface can be added in a few lines of code; I just don't want to bloat the UI by adding all of them.
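The "common interface" idea behind adding providers can be sketched as a small registry. This does not use the real @ai-sdk types or the repo's actual code: ChatProvider, registerProvider, resolveModel, and the model id format are hypothetical names chosen for illustration.

```typescript
// Hypothetical stand-in for a provider definition; NOT the real AI SDK types.
interface ChatProvider {
  id: string;
  label: string;
  // Whether the user must bring their own API key (BYOK).
  requiresUserKey: boolean;
  // Builds a model identifier string for this provider.
  resolveModel: (modelName: string) => string;
}

const providers = new Map<string, ChatProvider>();

// Adding a provider is one call, as long as it satisfies the shared interface.
function registerProvider(provider: ChatProvider): void {
  if (providers.has(provider.id)) {
    throw new Error(`Provider "${provider.id}" is already registered`);
  }
  providers.set(provider.id, provider);
}

// Callers only depend on the interface, never on a specific provider.
function resolveModel(providerId: string, modelName: string): string {
  const provider = providers.get(providerId);
  if (!provider) throw new Error(`Unknown provider "${providerId}"`);
  return provider.resolveModel(modelName);
}

registerProvider({
  id: "hosted",
  label: "Hosted",
  requiresUserKey: false,
  resolveModel: name => `hosted/${name}`,
});
registerProvider({
  id: "openrouter",
  label: "OpenRouter",
  requiresUserKey: true,
  resolveModel: name => `openrouter/${name}`,
});
```

Because the rest of the app talks only to the interface, the UI can list a curated subset of registered providers without any code depending on which ones exist.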

*1: Currently, the "stream" received when resuming, or by other real-time users in the same thread, is implemented via a custom polling mechanism and not SSE. This was chosen intentionally for a more minimal infrastructure setup and wider hosting support, so smaller user groups can host their own version easily. It is still very performant and efficient.

- There is boilerplate code for SSE resume support: you can simply add a pub-sub to the backend and switch to using SSE resume in ChatInterface.
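A polling-based resume like the one described above can be sketched with a cursor: the client remembers how many chunks it has seen and asks only for what came after. This is a generic illustration, not the project's implementation; StoredStream, pollStream, followStream, and the 250 ms default interval are assumptions.

```typescript
// In-memory stand-in for a persisted stream; a real setup would read from the database.
interface StoredStream {
  chunks: string[];
  done: boolean;
}

interface PollResult {
  chunks: string[]; // chunks produced since the caller's cursor
  cursor: number; // position to send with the next poll
  done: boolean; // true once the stream finished and everything was delivered
}

// Server side: return everything after `cursor`.
function pollStream(stream: StoredStream, cursor: number): PollResult {
  // Clamp the cursor so a stale or invalid value cannot skip past the end.
  const start = Math.min(Math.max(cursor, 0), stream.chunks.length);
  const chunks = stream.chunks.slice(start);
  const next = start + chunks.length;
  return { chunks, cursor: next, done: stream.done && next === stream.chunks.length };
}

// Client side: keep polling from the last cursor until the stream is done.
// A user who joins late or reloads the page simply starts from cursor 0.
async function followStream(
  poll: (cursor: number) => Promise<PollResult>,
  onChunk: (chunk: string) => void,
  intervalMs = 250,
  startCursor = 0,
): Promise<void> {
  let cursor = startCursor;
  for (;;) {
    const result = await poll(cursor);
    result.chunks.forEach(chunk => onChunk(chunk));
    cursor = result.cursor;
    if (result.done) return;
    await new Promise(resolve => setTimeout(resolve, intervalMs));
  }
}
```

Since each poll is a plain request/response, this works on any host that can serve HTTP, which matches the stated goal of minimal infrastructure. The trade-off is latency bounded by the polling interval.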

- By default, the frontend /api/* routes are proxied to the backendUrl.

- The rpcApi plugin will call the /api/* proxy if the frontend and backend are on the same domain but different ports (e.g. 127.0.0.1). This mimics a production environment where the static frontend and the backend live on the same domain at /api, which is the most efficient configuration for CloudFront + Lambda Function URL, or Cloudflare Workers.

- If the frontend and backend are on different domains, then the backend will be called directly without the proxy.

- This can be configured in the frontend's app.config.ts.
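The proxy-or-direct decision described above can be sketched as a small helper. This is not the rpcApi plugin itself: resolveApiBase is a hypothetical name, and comparing hostnames is an assumed reading of "same domain".

```typescript
// Decide which base URL the frontend should call.
// Hypothetical helper for illustration; not the repo's rpcApi plugin.
function resolveApiBase(frontendOrigin: string, backendUrl: string): string {
  const frontend = new URL(frontendOrigin);
  const backend = new URL(backendUrl);

  // Same hostname (ports may differ): go through the frontend's /api proxy,
  // mimicking production where both live on one domain.
  if (frontend.hostname === backend.hostname) {
    return `${frontend.origin}/api`;
  }

  // Different domains: call the backend directly, without the proxy.
  return backend.href.replace(/\/+$/, "");
}

// Local development: same host, different ports, so the proxy is used.
console.log(resolveApiBase("http://127.0.0.1:3000", "http://127.0.0.1:3001"));
// Separate domains: the backend is called directly.
console.log(resolveApiBase("https://app.example.com", "https://api.example.org"));
```

Routing same-host calls through /api keeps requests same-origin, so cookies are sent without extra CORS configuration.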

- backend-convex: a Convex app.

- @local/locales: a shared central locales/i18n data library powered by spreadsheet-i18n.

- 🌐✨🤖 AUTOMATIC localization with AI, powered by lingo.dev, just pnpm run i18n.

- 🔄️ Hot-reload and automatic-reload supported, changes are reflected in apps (frontend, backend) instantly.

- @local/common: a shared library that can contain constants, functions, types.

- @local/common-vue: a shared library that can contain components, constants, functions, types for vue-based apps.

- tsconfig: tsconfig.json files used throughout the monorepo.

This Turborepo has some additional tools already set up for you:

- 🧐 ESLint + stylistic formatting rules (antfu)

- 📦📢 repo-release script: easily generates a changelog, bumps the version, creates a GitHub release, and publishes your packages to npm.

- 📚 A few more goodies like:

- lint-staged pre-commit hook

- 🤖 Initialization prompt for AI Agents to modify the monorepo according to your needs.

- To start, open the chat with your AI Agent, and include the INIT_PROMPT.md file in your prompt.

To build all apps and packages, run the following command:

pnpm run build

If you just want a quick look without having to set up anything, you can use pnpm run dev:noConvex. This skips backend-convex, which is the only component that requires initial setup, though this of course means related features are disabled.

To develop all apps and packages, run the following command:

pnpm run dev

For local development environment variables / secrets, create a copy of .env.dev to .env.dev.local.
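How the repo loads these files is not shown here. One common convention, assumed for this sketch, is that values from the untracked .env.dev.local take precedence over the committed .env.dev. The parser below handles only a simplified subset of dotenv syntax, and the variable names are made up.

```typescript
// Parse KEY=VALUE lines, ignoring blank lines and # comments.
// A simplified subset of dotenv syntax, for illustration only.
function parseEnv(text: string): Record<string, string> {
  const result: Record<string, string> = {};
  for (const rawLine of text.split(/\r?\n/)) {
    const line = rawLine.trim();
    if (!line || line.startsWith("#")) continue;
    const eq = line.indexOf("=");
    if (eq <= 0) continue; // skip lines without a key
    const key = line.slice(0, eq).trim();
    // Strip one pair of matching surrounding quotes, if present.
    const value = line.slice(eq + 1).trim().replace(/^(['"])(.*)\1$/, "$2");
    result[key] = value;
  }
  return result;
}

// Later sources win, so pass committed defaults first and local overrides last.
function mergeEnv(...sources: Record<string, string>[]): Record<string, string> {
  return Object.assign({}, ...sources);
}

// Hypothetical file contents: .env.dev holds placeholders, .env.dev.local holds secrets.
const committed = parseEnv("# defaults\nBACKEND_URL=http://127.0.0.1:3001\nAPI_KEY=\n");
const local = parseEnv('API_KEY="my-local-secret"\n');
console.log(mergeEnv(committed, local));
```

Keeping secrets only in the .local copy means the committed file can document which variables exist without exposing their values.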

Guide to set up local development for Cloudflare workerd runtime testing:

- (Optional) Run the convex dev server if you use convex.

- Configure wrangler.jsonc, specifically the vars block, so that it properly targets the local env.

- (Optional) Configure apps/frontend/.env.workerd.dev if you use convex or use a different ip/port.

- Build a new static dist for local dev:

- Start the local backend server.

- Run the build:workerdLocal script for frontend.

- Run pnpm dlx wrangler dev to start the wrangler dev server.

- workerd does not work with Alpine Linux, so if you use the included Dev Container, change the base image to some other distro.

- You can add your custom deploy instructions in the deploy script and scripts/deploy.sh in each app. It could be a full script that deploys to a platform, or the necessary actions to run before a platform integration deploys it. frontend will only start to build and deploy after all backends are deployed, to have context for SSG.

- The repo also contains some deployment preset samples:

- Action to deploy frontend to GitHub Pages

- Wrangler configured to deploy fullstack to Cloudflare: just run npx wrangler deploy, or connect and deploy it through the Cloudflare Dashboard.

- Wrangler will deploy backend and frontend at the same time, which might cause frontend to have old context for SSG; you should trigger a redeploy in such a case.

- Deploy backend to Lambda via SST

- Some more deploying notes:

- To enable deploy with Convex in production, simply rename the _deploy script to deploy in the backend-convex app, run the deploy script once manually to get Convex's production url, and set it as the NUXT_PUBLIC_CONVEX_URL env in frontend's .env.prod file or as a CI / build machine env variable.
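The rule that frontend deploys only after all backends can be pictured as a dependency ordering. The sketch below is a generic depth-first topological sort, not how Turborepo or this repo implements task scheduling; deployOrder and the dependency map are illustrative.

```typescript
// Order apps so that each one comes after everything it depends on.
// Generic depth-first topological sort; illustrative only.
function deployOrder(dependsOn: Record<string, string[]>): string[] {
  const order: string[] = [];
  const state = new Map<string, "visiting" | "done">();

  const visit = (app: string): void => {
    if (state.get(app) === "done") return;
    if (state.get(app) === "visiting") {
      throw new Error(`Dependency cycle detected at "${app}"`);
    }
    state.set(app, "visiting");
    // Deploy dependencies first; apps without an entry have none.
    for (const dep of dependsOn[app] ?? []) visit(dep);
    state.set(app, "done");
    order.push(app);
  };

  Object.keys(dependsOn).forEach(app => visit(app));
  return order;
}

// frontend needs the deployed backends as context for SSG, so it goes last.
console.log(
  deployOrder({
    "frontend": ["backend", "backend-convex"],
    "backend": [],
    "backend-convex": [],
  }),
);
```

This also shows why the Wrangler preset can leave frontend with stale SSG context: deploying everything at once skips the ordering, so a redeploy is needed to pick up the new backend state.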

Imports should not be separated by empty lines, and should be sorted automatically by eslint.

The project comes with a localcert SSL at locals/common/dev to enable HTTPS for local development, generated with mkcert. You can install mkcert, generate your own certificate and replace it, or install localcert.crt to your trusted CA to remove the untrusted SSL warning.

Turborepo can use a technique known as Remote Caching to share cache artifacts across machines, enabling you to share build caches with your team and CI/CD pipelines.

By default, Turborepo will cache locally. To enable Remote Caching you will need an account with Vercel. If you don't have an account you can create one, then enter the following commands:

npx turbo login

This will authenticate the Turborepo CLI with your Vercel account.

Next, you can link your Turborepo to your Remote Cache by running the following command from the root of your Turborepo:

npx turbo link

Learn more about the power of Turborepo:

Alternative AI tools for starter-monorepo

Similar Open Source Tools

ai-town

AI Town is a virtual town where AI characters live, chat, and socialize. This project provides a deployable starter kit for building and customizing your own version of AI Town. It features a game engine, database, vector search, auth, text model, deployment, pixel art generation, background music generation, and local inference. You can customize your own simulation by creating characters and stories, updating spritesheets, changing the background, and modifying the background music.

qrev

QRev is an open-source alternative to Salesforce, offering AI agents to scale sales organizations infinitely. It aims to provide digital workers for various sales roles or a superagent named Qai. The tech stack includes TypeScript for frontend, NodeJS for backend, MongoDB for app server database, ChromaDB for vector database, SQLite for AI server SQL relational database, and Langchain for LLM tooling. The tool allows users to run client app, app server, and AI server components. It requires Node.js and MongoDB to be installed, and provides detailed setup instructions in the README file.

agnai

Agnaistic is an AI roleplay chat tool that allows users to interact with personalized characters using their favorite AI services. It supports multiple AI services, persona schema formats, and features such as group conversations, user authentication, and memory/lore books. Agnaistic can be self-hosted or run using Docker, and it provides a range of customization options through its settings.json file. The tool is designed to be user-friendly and accessible, making it suitable for both casual users and developers.

safety-tooling

This repository, safety-tooling, is designed to be shared across various AI Safety projects. It provides an LLM API with a common interface for OpenAI, Anthropic, and Google models. The aim is to facilitate collaboration among AI Safety researchers, especially those with limited software engineering backgrounds, by offering a platform for contributing to a larger codebase. The repo can be used as a git submodule for easy collaboration and updates. It also supports pip installation for convenience. The repository includes features for installation, secrets management, linting, formatting, Redis configuration, testing, dependency management, inference, finetuning, API usage tracking, and various utilities for data processing and experimentation.

SeaGOAT

SeaGOAT is a local search tool that leverages vector embeddings to enable you to search your codebase semantically. It is designed to work on Linux, macOS, and Windows and can process files in various formats, including text, Markdown, Python, C, C++, TypeScript, JavaScript, HTML, Go, Java, PHP, and Ruby. SeaGOAT uses a vector database called ChromaDB and a local vector embedding engine to provide fast and accurate search results. It also supports regular expression/keyword-based matches. SeaGOAT is open-source and licensed under an open-source license, and users are welcome to examine the source code, raise concerns, or create pull requests to fix problems.

actions

Sema4.ai Action Server is a tool that allows users to build semantic actions in Python to connect AI agents with real-world applications. It enables users to create custom actions, skills, loaders, and plugins that securely connect any AI Assistant platform to data and applications. The tool automatically creates and exposes an API based on function declaration, type hints, and docstrings by adding '@action' to Python scripts. It provides an end-to-end stack supporting various connections between AI and user's apps and data, offering ease of use, security, and scalability.

app-agent

AppAgent is an open-source AI-first platform designed to streamline the app release process, from autonomous keyword research to ASO content generation. It offers features like autonomous keyword research, AI-powered store optimization, store synchronization with App Store Connect, and upcoming keyword tracking with self-healing. The tech stack includes Next.js, TypeScript, Tailwind CSS, Prisma ORM, PostgreSQL, NextAuth.js, PostHog, Resend, Stripe, and Vercel for hosting. Users can clone the repository, set up environment variables, install dependencies, set up the database, and run the development server to start using the tool.

robocorp

Robocorp is a platform that allows users to create, deploy, and operate Python automations and AI actions. It provides an easy way to extend the capabilities of AI agents, assistants, and copilots with custom actions written in Python. Users can create and deploy tools, skills, loaders, and plugins that securely connect any AI Assistant platform to their data and applications. The Robocorp Action Server makes Python scripts compatible with ChatGPT and LangChain by automatically creating and exposing an API based on function declaration, type hints, and docstrings. It simplifies the process of developing and deploying AI actions, enabling users to interact with AI frameworks effortlessly.

openui

OpenUI is a tool designed to simplify the process of building UI components by allowing users to describe UI using their imagination and see it rendered live. It supports converting HTML to React, Svelte, Web Components, etc. The tool is open source and aims to make UI development fun, fast, and flexible. It integrates with various AI services like OpenAI, Groq, Gemini, Anthropic, Cohere, and Mistral, providing users with the flexibility to use different models. OpenUI also supports LiteLLM for connecting to various LLM services and allows users to create custom proxy configs. The tool can be run locally using Docker or Python, and it offers a development environment for quick setup and testing.

torchchat

torchchat is a codebase showcasing the ability to run large language models (LLMs) seamlessly. It allows running LLMs using Python in various environments such as desktop, server, iOS, and Android. The tool supports running models via PyTorch, chatting, generating text, running chat in the browser, and running models on desktop/server without Python. It also provides features like AOT Inductor for faster execution, running in C++ using the runner, and deploying and running on iOS and Android. The tool supports popular hardware and OS including Linux, Mac OS, Android, and iOS, with various data types and execution modes available.

ai-starter-kit

SambaNova AI Starter Kits is a collection of open-source examples and guides designed to facilitate the deployment of AI-driven use cases for developers and enterprises. The kits cover various categories such as Data Ingestion & Preparation, Model Development & Optimization, Intelligent Information Retrieval, and Advanced AI Capabilities. Users can obtain a free API key using SambaNova Cloud or deploy models using SambaStudio. Most examples are written in Python but can be applied to any programming language. The kits provide resources for tasks like text extraction, fine-tuning embeddings, prompt engineering, question-answering, image search, post-call analysis, and more.

aider-composer

Aider Composer is a VSCode extension that integrates Aider into your development workflow. It allows users to easily add and remove files, toggle between read-only and editable modes, review code changes, use different chat modes, and reference files in the chat. The extension supports multiple models, code generation, code snippets, and settings customization. It has limitations such as lack of support for multiple workspaces, Git repository features, linting, testing, voice features, in-chat commands, and configuration options.

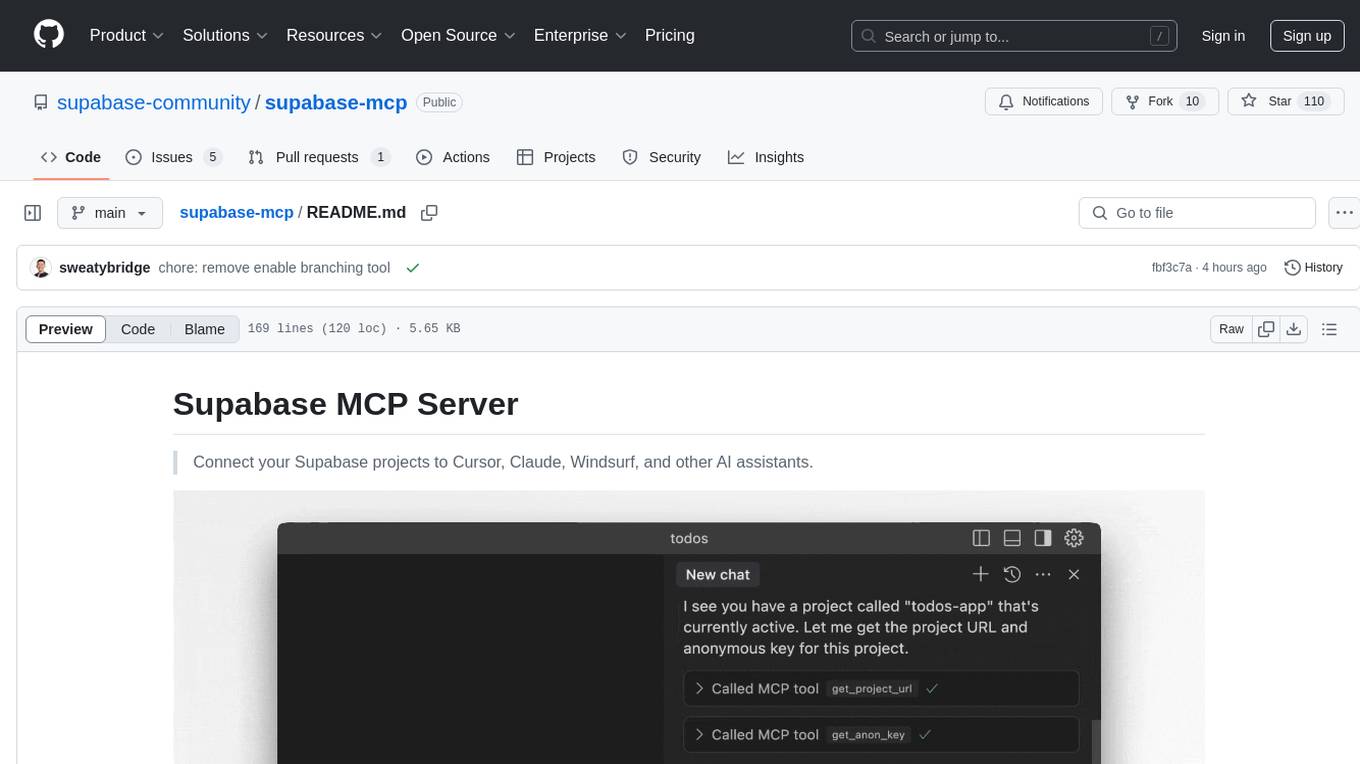

supabase-mcp

Supabase MCP Server standardizes how Large Language Models (LLMs) interact with Supabase, enabling AI assistants to manage tables, fetch config, and query data. It provides tools for project management, database operations, project configuration, branching (experimental), and development tools. The server is pre-1.0, so expect some breaking changes between versions.

unitycatalog

Unity Catalog is an open and interoperable catalog for data and AI, supporting multi-format tables, unstructured data, and AI assets. It offers plugin support for extensibility and interoperates with Delta Sharing protocol. The catalog is fully open with OpenAPI spec and OSS implementation, providing unified governance for data and AI with asset-level access control enforced through REST APIs.

aiarena-web

aiarena-web is a website designed for running the aiarena.net infrastructure. It consists of different modules such as core functionality, web API endpoints, frontend templates, and a module for linking users to their Patreon accounts. The website serves as a platform for obtaining new matches, reporting results, featuring match replays, and connecting with Patreon supporters. The project is licensed under GPLv3 in 2019.

For similar tasks

code-companion

CodeCompanion.AI is an AI coding assistant desktop app that helps with various coding tasks. It features an interactive chat interface, file system operations, web search capabilities, semantic code search, a fully functional terminal, code preview and approval, unlimited context window, dynamic context management, and more. Users can save chat conversations and set custom instructions per project.

nx

Nx is a build system optimized for monorepos, featuring AI-powered architectural awareness and advanced CI capabilities. It provides faster task scheduling, caching, and more for existing workspaces. Nx Cloud enhances CI by offering remote caching, task distribution, automated e2e test splitting, and task flakiness detection. The tool aims to scale monorepos efficiently and improve developer productivity.

Figma-Context-MCP

Figma-Context-MCP is a plugin for Figma that allows users to easily manage and switch between multiple design contexts within a single Figma file. This tool simplifies the process of working on different design variations or versions by providing a seamless way to organize and switch between them. With Figma-Context-MCP, designers can streamline their workflow and improve collaboration by keeping all design contexts in one place and easily accessible. This plugin enhances productivity and efficiency for Figma users who frequently work on multiple design iterations or versions within a project.

mcp-unity

MCP Unity is an implementation of the Model Context Protocol for Unity Editor, enabling AI assistants like Claude, Windsurf, and Cursor to interact with Unity projects. It provides tools to execute Unity menu items, select game objects, manage packages, run tests, and display messages in the Unity Editor. The package bridges Unity with a Node.js server implementing the MCP protocol, offering resources to retrieve menu items, game objects, console logs, packages, assets, and tests. Requirements include Unity 2022.3 or later, Node.js 18 or later for the server, and npm 9 or later for building. Installation involves adding the Unity MCP Server package via Unity Package Manager and installing Node.js. Configuration settings for AI clients like Cursor IDE, Claude Desktop, and Windsurf IDE are provided. Running the server requires starting the Node.js server and Unity Editor MCP Server. Debugging and troubleshooting guidelines are included for server issues. Contributions are welcome under the MIT license.

commanddash

Dash AI is an open-source coding assistant for Flutter developers. It is designed to not only write code but also run and debug it, allowing it to assist beyond code completion and automate routine tasks. Dash AI is powered by Gemini, integrated with the Dart Analyzer, and specifically tailored for Flutter engineers. The vision for Dash AI is to create a single-command assistant that can automate tedious development tasks, enabling developers to focus on creativity and innovation. It aims to assist with the entire process of engineering a feature for an app, from breaking down the task into steps to generating exploratory tests and iterating on the code until the feature is complete. To achieve this vision, Dash AI is working on providing LLMs with the same access and information that human developers have, including full contextual knowledge, the latest syntax and dependencies data, and the ability to write, run, and debug code. Dash AI welcomes contributions from the community, including feature requests, issue fixes, and participation in discussions. The project is committed to building a coding assistant that empowers all Flutter developers.

ollama4j

Ollama4j is a Java library that serves as a wrapper or binding for the Ollama server. It facilitates communication with the Ollama server and provides models for deployment. The tool requires Java 11 or higher and can be installed locally or via Docker. Users can integrate Ollama4j into Maven projects by adding the specified dependency. The tool offers API specifications and supports various development tasks such as building, running unit tests, and integration tests. Releases are automated through GitHub Actions CI workflow. Areas of improvement include adhering to Java naming conventions, updating deprecated code, implementing logging, using lombok, and enhancing request body creation. Contributions to the project are encouraged, whether reporting bugs, suggesting enhancements, or contributing code.

crewAI-tools

The crewAI Tools repository provides a guide for setting up tools for crewAI agents, enabling the creation of custom tools to enhance AI solutions. Tools play a crucial role in improving agent functionality. The guide explains how to equip agents with a range of tools and how to create new tools. Tools are designed to return strings for generating responses. There are two main methods for creating tools: subclassing BaseTool and using the tool decorator. Contributions to the toolset are encouraged, and the development setup includes steps for installing dependencies, activating the virtual environment, setting up pre-commit hooks, running tests, static type checking, packaging, and local installation. Enhance AI agent capabilities with advanced tooling.

For similar jobs

resonance

Resonance is a framework designed to facilitate interoperability and messaging between services in your infrastructure and beyond. It provides AI capabilities and takes full advantage of asynchronous PHP, built on top of Swoole. With Resonance, you can: * Chat with Open-Source LLMs: Create prompt controllers to directly answer user's prompts. LLM takes care of determining user's intention, so you can focus on taking appropriate action. * Asynchronous Where it Matters: Respond asynchronously to incoming RPC or WebSocket messages (or both combined) with little overhead. You can set up all the asynchronous features using attributes. No elaborate configuration is needed. * Simple Things Remain Simple: Writing HTTP controllers is similar to how it's done in the synchronous code. Controllers have new exciting features that take advantage of the asynchronous environment. * Consistency is Key: You can keep the same approach to writing software no matter the size of your project. There are no growing central configuration files or service dependencies registries. Every relation between code modules is local to those modules. * Promises in PHP: Resonance provides a partial implementation of Promise/A+ spec to handle various asynchronous tasks. * GraphQL Out of the Box: You can build elaborate GraphQL schemas by using just the PHP attributes. Resonance takes care of reusing SQL queries and optimizing the resources' usage. All fields can be resolved asynchronously.

aiogram_bot_template

Aiogram bot template is a boilerplate for creating Telegram bots using Aiogram framework. It provides a solid foundation for building robust and scalable bots with a focus on code organization, database integration, and localization.

pluto

Pluto is a development tool dedicated to helping developers build cloud and AI applications more conveniently, resolving issues such as the challenging deployment of AI applications and open-source models. Developers are able to write applications in familiar programming languages like Python and TypeScript, directly defining and utilizing the cloud resources necessary for the application within their code base, such as AWS SageMaker, DynamoDB, and more. Pluto automatically deduces the infrastructure resource needs of the app through static program analysis and proceeds to create these resources on the specified cloud platform, simplifying the resources creation and application deployment process.

pinecone-ts-client

The official Node.js client for Pinecone, written in TypeScript. This client library provides a high-level interface for interacting with the Pinecone vector database service. With this client, you can create and manage indexes, upsert and query vector data, and perform other operations related to vector search and retrieval. The client is designed to be easy to use and provides a consistent and idiomatic experience for Node.js developers. It supports all the features and functionality of the Pinecone API, making it a comprehensive solution for building vector-powered applications in Node.js.

aiohttp-pydantic

Aiohttp pydantic is an aiohttp view to easily parse and validate requests. You define using function annotations what your methods for handling HTTP verbs expect, and Aiohttp pydantic parses the HTTP request for you, validates the data, and injects the parameters you want. It provides features like query string, request body, URL path, and HTTP headers validation, as well as Open API Specification generation.

gcloud-aio

This repository contains shared codebase for two projects: gcloud-aio and gcloud-rest. gcloud-aio is built for Python 3's asyncio, while gcloud-rest is a threadsafe requests-based implementation. It provides clients for Google Cloud services like Auth, BigQuery, Datastore, KMS, PubSub, Storage, and Task Queue. Users can install the library using pip and refer to the documentation for usage details. Developers can contribute to the project by following the contribution guide.

aioconsole

aioconsole is a Python package that provides asynchronous console and interfaces for asyncio. It offers asynchronous equivalents to input, print, exec, and code.interact, an interactive loop running the asynchronous Python console, customization and running of command line interfaces using argparse, stream support to serve interfaces instead of using standard streams, and the apython script to access asyncio code at runtime without modifying the sources. The package requires Python version 3.8 or higher and can be installed from PyPI or GitHub. It allows users to run Python files or modules with a modified asyncio policy, replacing the default event loop with an interactive loop. aioconsole is useful for scenarios where users need to interact with asyncio code in a console environment.

aiosqlite

aiosqlite is a Python library that provides a friendly, async interface to SQLite databases. It replicates the standard sqlite3 module but with async versions of all the standard connection and cursor methods, along with context managers for automatically closing connections and cursors. It allows interaction with SQLite databases on the main AsyncIO event loop without blocking execution of other coroutines while waiting for queries or data fetches. The library also replicates most of the advanced features of sqlite3, such as row factories and total changes tracking.