pluto

Pluto provides a unified programming interface that allows you to seamlessly tap into cloud capabilities and develop your cloud and AI applications.

Stars: 90

Pluto is a development tool dedicated to helping developers **build cloud and AI applications more conveniently**, resolving issues such as the challenging deployment of AI applications and open-source models. Developers are able to write applications in familiar programming languages like **Python and TypeScript**, **directly defining and utilizing the cloud resources necessary for the application within their code base**, such as AWS SageMaker, DynamoDB, and more. Pluto automatically deduces the infrastructure resource needs of the app through **static program analysis** and proceeds to create these resources on the specified cloud platform, **simplifying the resource creation and application deployment process**.

README:


Let's develop a text generation application based on GPT2, where the user input is processed by the GPT2 model to generate and return text. Below is how the development process with Pluto looks:

AWS SageMaker serves as the model deployment platform, while AWS API Gateway and Lambda support the application's HTTP services. The deployed application architecture is shown in the top-right graphic.

In the application code (top-left graphic), defining a variable `sagemaker` with `new SageMaker()` lets you interact directly with the SageMaker model using methods like `sagemaker.invoke()` and obtain the model endpoint URL using `sagemaker.endpointUrl()`. Establishing an API Gateway requires only creating a variable `router` with `new Router()`; the functions passed as arguments to `router` methods such as `router.get()` and `router.post()` are automatically converted into Lambda functions. The same application can also be implemented in Python.
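To make this programming model concrete, here is a minimal Python sketch of the pattern. The `Router` and `SageMaker` classes below are local stand-ins written for this illustration, not the Pluto SDK: their constructor arguments, the `endpoint_url()` and `dispatch()` methods, the `/generate` path, and the echoing `invoke()` body are all assumptions. With Pluto, the handler would be packaged as a Lambda function and `invoke()` would call the deployed model.

```python
from typing import Callable, Dict, Tuple

Handler = Callable[[dict], dict]


class SageMaker:
    """Stand-in for a model endpoint resource (hypothetical, not the Pluto SDK)."""

    def __init__(self, name: str) -> None:
        self.name = name

    def invoke(self, payload: dict) -> dict:
        # A real endpoint would run GPT-2; this stub just echoes the prompt.
        return {"generated_text": payload["inputs"] + " ..."}

    def endpoint_url(self) -> str:
        # Placeholder URL; a real one is only known after deployment.
        return f"https://example.invalid/endpoints/{self.name}"


class Router:
    """Stand-in for an HTTP router resource (hypothetical, not the Pluto SDK)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.routes: Dict[Tuple[str, str], Handler] = {}

    def post(self, path: str, handler: Handler) -> None:
        # In Pluto, a function passed here would become a Lambda function.
        self.routes[("POST", path)] = handler

    def dispatch(self, method: str, path: str, request: dict) -> dict:
        # Local helper for this sketch only: call the registered handler.
        return self.routes[(method, path)](request)


sagemaker = SageMaker("gpt2")
router = Router("router")


def generate(request: dict) -> dict:
    # Business logic only: forward the user's prompt to the model.
    output = sagemaker.invoke({"inputs": request["body"]})
    return {"status_code": 200, "body": output["generated_text"]}


router.post("/generate", generate)

response = router.dispatch("POST", "/generate", {"body": "Hello"})
print(response)
```

Note that the application code contains no infrastructure configuration: resources are declared as ordinary variables and used through ordinary method calls.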

Once the application code has been written, running `pluto deploy` lets Pluto deduce the application's infrastructure needs and automatically provision around 30 cloud resources, including SageMaker, Lambda, and API Gateway instances, along with triggers, IAM roles, and policy permissions.

Finally, Pluto returns the URL of the API Gateway, which you can use to access the application directly.

Interested in exploring more examples?

- TypeScript applications:

- Python applications:

Online Experience: CodeSandbox offers an online development environment where we've set up Pluto templates in both Python and TypeScript, so you can explore Pluto directly in your browser. To create your own project, open a template and click the "Fork" button in the top right corner. The environment comes pre-configured with AWS CLI, Pulumi, and Pluto's basic dependencies; follow the template's README for usage instructions.

Alternatively, you can also enjoy an online development experience by clicking

Container Experience: We offer a container image, `plutolang/pluto:latest`, for application development. It contains essential dependencies such as AWS CLI, Pulumi, and Pluto, along with pre-configured Node.js 20.x and Python 3.10 environments. If you only want to develop TypeScript applications, you can use the `plutolang/pluto:latest-typescript` image. To start developing with Pluto inside a container, run:

docker run -it --name pluto-app plutolang/pluto:latest bash

Local Experience: For local use, follow these steps:

npm install -g @plutolang/cli

pluto new # Interactively create a new project, allowing selection of TypeScript or Python

cd <project_dir> # Enter your project directory

npm install # Download dependencies

# If it's a Python project, in addition to npm install, Python dependencies must also be installed.

pip install -r requirements.txt

pluto deploy # Deploy with one click!

- If the target platform is AWS, Pluto attempts to read your AWS configuration file to obtain the default AWS Region, or alternatively tries to fetch it from the environment variable `AWS_REGION`. Deployment will fail if neither is set.

- If the target platform is Kubernetes, Knative must first be installed in the cluster and its scale-to-zero feature must be deactivated (Pluto doesn't yet support Ingress forwarding to Knative Serving). You can configure the required Kubernetes environment by following this document.
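The AWS region lookup described above can be sketched as follows. This is an illustration of the documented behaviour, not Pluto's actual implementation: the function name `resolve_aws_region`, the precedence (config file first, then `AWS_REGION`), and the error message are assumptions. The AWS CLI normally keeps its configuration in `~/.aws/config`; the demo uses a temporary file instead so that it does not depend on your machine.

```python
import configparser
import tempfile
from pathlib import Path
from typing import Mapping, Optional


def resolve_aws_region(config_path: Path, env: Mapping[str, str]) -> str:
    """Return the default region from the config file, else from AWS_REGION."""
    parser = configparser.ConfigParser()
    parser.read(config_path)  # a missing file is silently skipped
    region: Optional[str] = None
    if parser.has_section("default"):
        region = parser.get("default", "region", fallback=None)
    region = region or env.get("AWS_REGION")
    if not region:
        raise RuntimeError(
            "No AWS region found: set it in the AWS config file or in AWS_REGION"
        )
    return region


with tempfile.TemporaryDirectory() as tmp:
    config_file = Path(tmp) / "config"
    config_file.write_text("[default]\nregion = us-east-1\n")
    # Region taken from the [default] profile of the config file.
    from_file = resolve_aws_region(config_file, {})
    # No config file present, so the environment variable is used.
    from_env = resolve_aws_region(Path(tmp) / "missing", {"AWS_REGION": "eu-west-1"})

print(from_file, from_env)
```

If neither source provides a region, the function raises an error, mirroring the failed deployment described above.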

For detailed steps, refer to the Getting Started Guide.

Currently, Pluto only supports single-file configurations. Inside each handler function, access is provided to literal constants and plain functions outside of the handler's scope; however, Python allows direct access to classes, interfaces, etc., outside of the scope, whereas TypeScript requires encapsulating these within functions for access.
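As a rough illustration of this constraint, the single-file Python sketch below shows a handler that relies only on a literal constant and a plain function defined outside its scope. The names `MAX_NEW_TOKENS`, `build_prompt`, and `handler` are hypothetical; in a Pluto application the handler would be passed to a resource method such as `router.post()`, which is left out here so the snippet runs on its own.

```python
MAX_NEW_TOKENS = 50  # literal constant defined outside the handler


def build_prompt(text: str) -> str:
    """Plain module-level function that the handler may call."""
    return f"Complete this sentence: {text.strip()}"


def handler(body: str) -> dict:
    # The handler reads a literal constant and calls a plain function,
    # both defined at module level in the same file.
    return {"inputs": build_prompt(body), "max_new_tokens": MAX_NEW_TOKENS}


print(handler("  The weather today is "))
```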

Here you can find out why Pluto was created. To put it simply, we aim to address several pain points you might often encounter:

- High learning curve: Developing a cloud application requires mastery of both business and infrastructure skills, and it often demands significant effort in testing and debugging. As a result, developers spend considerable energy on aspects beyond writing the core business logic.

- High cognitive load: With cloud service providers offering hundreds of capabilities and Kubernetes offering nearly limitless possibilities, average developers often lack a deep understanding of cloud infrastructure, making it challenging to choose the proper architecture for their particular needs.

- Poor programming experience: Developers must maintain separate codebases for infrastructure and business logic or intertwine infrastructure configuration within the business logic, leading to a sub-optimal programming experience that falls short of the simplicity of creating a local standalone program.

- Vendor lock-in: Coding for a specific cloud provider can lead to poor flexibility in the resulting code. When it becomes necessary to migrate to another cloud platform due to cost or other factors, adapting the existing code to the new environment can require substantial changes.

Pluto addresses these pain points with the following features:

- No learning curve: The programming interface is fully compatible with TypeScript and Python, and supports the majority of dependency libraries such as LangChain, LangServe, FastAPI, etc.

- Focus on pure business logic: Developers only need to write the business logic. Pluto, via static analysis, automatically deduces the infrastructure requirements of the application.

- One-click cloud deployment: The CLI provides basic capabilities such as compilation and deployment. Beyond coding and basic configuration, everything else is handled automatically by Pluto.

- Support for various runtime environments: With a unified abstraction based on the SDK, it allows developers to migrate between different runtime environments without altering the source code.

Overall, the Pluto deployment process comprises three stages—deduction, generation, and deployment:

- Deduction Phase: The deducer analyzes the application code to derive the required cloud resources and their interdependencies, resulting in an architecture reference. It also splits user business code into business modules, which, along with the dependent SDK, form the business bundle.

- Generation Phase: The generator creates IaC code that is independent of user code, guided by the architecture reference.

- Deployment Phase: Depending on the IaC code type, Pluto invokes the corresponding adapter, which, in turn, works with the respective IaC engine to execute the IaC code, managing infrastructure configuration and application deployment.

Components such as the deducer, generator, and adapter are extendable, which allows support for a broader range of programming languages and platform integration methods. Currently, Pluto provides deducers for Python and TypeScript, and a generator and adapter for Pulumi. Learn more about Pluto's processes in detail in this document.
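The three stages can be sketched in miniature with Python's standard `ast` module. This is a toy illustration under stated assumptions, not Pluto's deducer, generator, or adapter: the `RESOURCE_TYPES` set, the shape of the architecture reference, the `resource "Type" "id"` declaration format, and the stub `deploy()` function are all invented for the example. The one property it does share with the description above is that the application source is parsed, never executed.

```python
import ast
from typing import Dict, List

# Assumed set of resource class names the deducer recognizes.
RESOURCE_TYPES = {"Router", "SageMaker"}

APP_SOURCE = '''
sagemaker = SageMaker("gpt2")
router = Router("router")

def generate(req):
    return sagemaker.invoke(req)

router.post("/generate", generate)
'''


def deduce(source: str) -> dict:
    """Deduction: derive resources and their usage without running the code."""
    tree = ast.parse(source)
    resources: Dict[str, str] = {}  # variable name -> resource type
    for node in ast.walk(tree):
        # `name = ResourceType(...)` declares a resource.
        if (
            isinstance(node, ast.Assign)
            and len(node.targets) == 1
            and isinstance(node.targets[0], ast.Name)
            and isinstance(node.value, ast.Call)
            and isinstance(node.value.func, ast.Name)
            and node.value.func.id in RESOURCE_TYPES
        ):
            resources[node.targets[0].id] = node.value.func.id

    relationships: List[dict] = []
    for node in ast.walk(tree):
        # `name.method(...)` on a declared resource records how it is used.
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and isinstance(node.func.value, ast.Name)
            and node.func.value.id in resources
        ):
            relationships.append(
                {"resource": node.func.value.id, "operation": node.func.attr}
            )
    # Sort for a stable, order-independent architecture reference.
    relationships.sort(key=lambda rel: (rel["resource"], rel["operation"]))
    return {
        "resources": [{"id": n, "type": t} for n, t in sorted(resources.items())],
        "relationships": relationships,
    }


def generate_iac(arch: dict) -> List[str]:
    """Generation: emit declarations from the architecture reference alone."""
    return [f'resource "{r["type"]}" "{r["id"]}"' for r in arch["resources"]]


def deploy(iac: List[str]) -> List[str]:
    """Deployment: a stub adapter that pretends to apply each declaration."""
    return [f"created: {line}" for line in iac]


arch = deduce(APP_SOURCE)
for line in deploy(generate_iac(arch)):
    print(line)
```

Because `generate_iac()` only sees the architecture reference, the generated declarations stay independent of the user code, which is the separation the generation phase is meant to provide.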

Pluto distinguishes itself from other offerings by leveraging static program analysis techniques to infer resource dependencies directly from application code and generate infrastructure code that remains separate from business logic. This approach ensures infrastructure configuration does not intrude into business logic, providing developers with a development experience free from infrastructure concerns.

- Compared to BaaS (Backend as a Service) products like Supabase or Appwrite, Pluto assists developers in creating the necessary infrastructure environment within their own cloud account, rather than offering managed components.

- Differing from PaaS (Platform as a Service) offerings like Fly.io, Render, Heroku, or LeptonAI, Pluto does not handle application hosting. Instead, it compiles applications into fine-grained compute modules and integrates with rich cloud platform capabilities like FaaS, GPU instances, and message queues, enabling deployment to cloud platforms without requiring developers to write extra configuration.

- In contrast to scaffolding tools such as the Serverless Framework or Serverless Devs, Pluto does not impose an application programming framework specific to particular cloud providers or frameworks, but instead offers a uniform programming interface.

- Unlike IfC (Infrastructure from Code) products based purely on annotations like Klotho, Pluto infers resource dependencies directly from user code, eliminating the need for extra annotations.

- Different from other IfC products that rely on dynamic analysis, like Shuttle, Nitric, and Winglang, Pluto employs static program analysis to identify application resource dependencies, generating independent infrastructure code without having to execute user code.

You can learn more about the differences with other projects in this document.

Pluto is still in its infancy, and we warmly welcome contributions from those who are interested. Any suggestions or ideas about the issues Pluto aims to solve, the features it offers, or its code implementation can be shared and contributed to the community. Please refer to our project contribution guide for more information.

- Complete implementation of the resource static deduction process

  - 🚧 Resource type checking

  - ❌ Conversion of local variables into cloud resources

- SDK development

  - 🚧 Client SDK development

  - 🚧 Infra SDK development

  - ❌ Support for additional resources and more platforms

- Engine extension support

  - 🚧 Pulumi

  - ❌ Terraform

- 🚧 Local simulation and testing functionality

Please see the Issue list for further details.

✅: Indicates that all user-visible interfaces are available

🚧: Indicates that some of the user-visible interfaces are available

❌: Indicates not yet supported

TypeScript:

| Resource Type | AWS | Kubernetes | Alibaba Cloud | Simulation |
|---|---|---|---|---|
| Router | ✅ | 🚧 | 🚧 | 🚧 |
| Queue | ✅ | ✅ | ❌ | ✅ |
| KVStore | ✅ | ✅ | ❌ | ✅ |
| Function | ✅ | ✅ | ✅ | ✅ |
| Schedule | ✅ | ✅ | ❌ | ❌ |
| Tester | ✅ | ❌ | ❌ | ✅ |
| SageMaker | ✅ | ❌ | ❌ | ❌ |

Python:

| Resource Type | AWS | Kubernetes | Alibaba Cloud | Simulation |
|---|---|---|---|---|
| Router | ✅ | ❌ | ❌ | ❌ |
| Queue | ✅ | ❌ | ❌ | ❌ |
| KVStore | ✅ | ❌ | ❌ | ❌ |
| Function | ✅ | ❌ | ❌ | ❌ |
| Schedule | ✅ | ❌ | ❌ | ❌ |
| Tester | ❌ | ❌ | ❌ | ❌ |
| SageMaker | ✅ | ❌ | ❌ | ❌ |

Join our Slack community to communicate and contribute ideas.


Similar Open Source Tools

LazyLLM

LazyLLM is a low-code development tool for building complex AI applications with multiple agents. It assists developers in building AI applications at a low cost and continuously optimizing their performance. The tool provides a convenient workflow for application development and offers standard processes and tools for various stages of application development. Users can quickly prototype applications with LazyLLM, analyze bad cases with scenario task data, and iteratively optimize key components to enhance the overall application performance. LazyLLM aims to simplify the AI application development process and provide flexibility for both beginners and experts to create high-quality applications.

uusec-waf

UUSEC WAF is an industrial-grade, free, high-performance, and highly scalable web application and API security protection product that supports AI and semantic engines. It provides intelligent 0-day defense, ultimate CDN acceleration, powerful proactive defense, an advanced semantic engine, and an advanced rule engine. With features like machine learning technology, cache cleaning, dual-layer defense, semantic analysis, and Lua script rule writing, UUSEC WAF offers comprehensive website protection with three-layer defense functions at the traffic, system, and runtime layers.

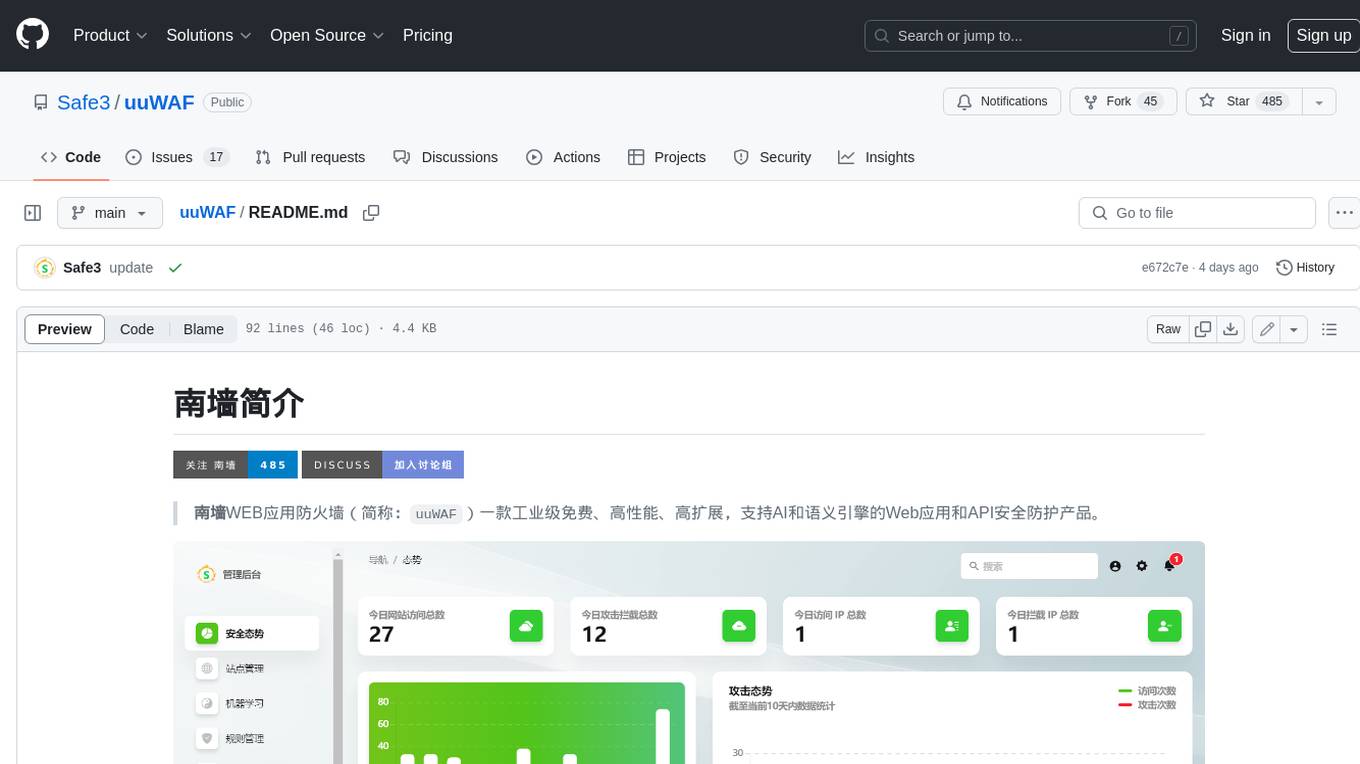

uuWAF

uuWAF is an industrial-grade, free, high-performance, highly extensible web application and API security protection product that supports AI and semantic engines.

AntSK

AntSK is an AI knowledge base/agent built with .Net8+Blazor+SemanticKernel. It features a semantic kernel for accurate natural language processing, a memory kernel for continuous learning and knowledge storage, a knowledge base for importing and querying knowledge from various document formats, a text-to-image generator integrated with StableDiffusion, GPTs generation for creating personalized GPT models, API interfaces for integrating AntSK into other applications, an open API plugin system for extending functionality, a .Net plugin system for integrating business functions, real-time information retrieval from the internet, model management for adapting and managing different models from different vendors, support for domestic models and databases for operation in a trusted environment, and planned model fine-tuning based on llamafactory.

pathway

Pathway is a Python data processing framework for analytics and AI pipelines over data streams. It's the ideal solution for real-time processing use cases like streaming ETL or RAG pipelines for unstructured data. Pathway comes with an **easy-to-use Python API**, allowing you to seamlessly integrate your favorite Python ML libraries. Pathway code is versatile and robust: **you can use it in both development and production environments, handling both batch and streaming data effectively**. The same code can be used for local development, CI/CD tests, running batch jobs, handling stream replays, and processing data streams. Pathway is powered by a **scalable Rust engine** based on Differential Dataflow and performs incremental computation. Your Pathway code, despite being written in Python, is run by the Rust engine, enabling multithreading, multiprocessing, and distributed computations. The entire pipeline is kept in memory and can be easily deployed with **Docker and Kubernetes**. You can install Pathway with pip: `pip install -U pathway`. For any questions, you will find the community and team behind the project on Discord.

fridon-ai

FridonAI is an open-source project offering AI-powered tools for cryptocurrency analysis and blockchain operations. It includes modules like FridonAnalytics for price analysis, FridonSearch for technical indicators, FridonNotifier for custom alerts, FridonBlockchain for blockchain operations, and FridonChat as a unified chat interface. The platform empowers users to create custom AI chatbots, access crypto tools, and interact effortlessly through chat. The core functionality is modular, with plugins, tools, and utilities for easy extension and development. FridonAI implements a scoring system to assess user interactions and incentivize engagement. The application uses Redis extensively for communication and includes a Nest.js backend for system operations.

CSGHub

CSGHub is an open source, trustworthy large model asset management platform that can assist users in governing the assets involved in the lifecycle of LLMs and LLM applications (datasets, model files, code, etc.). With CSGHub, users can perform operations on LLM assets, including uploading, downloading, storing, verifying, and distributing, through a web interface, the Git command line, or a natural language chatbot. Meanwhile, the platform provides microservice submodules and standardized OpenAPIs, which can be easily integrated with users' own systems. CSGHub is committed to bringing users an asset management platform that is natively designed for large models and can be deployed on-premise for fully offline operation. CSGHub offers functionalities similar to a privatized Huggingface (on-premise Huggingface), managing LLM assets in a manner akin to how OpenStack Glance manages virtual machine images, Harbor manages container images, and Sonatype Nexus manages artifacts.

reductstore

ReductStore is a high-performance time series database designed for storing and managing large amounts of unstructured blob data. It offers features such as real-time querying, batching data, and HTTP(S) API for edge computing, computer vision, and IoT applications. The database ensures data integrity, implements retention policies, and provides efficient data access, making it a cost-effective solution for applications requiring unstructured data storage and access at specific time intervals.

video-search-and-summarization

The NVIDIA AI Blueprint for Video Search and Summarization is a repository showcasing a video search and summarization agent built with NVIDIA NIM microservices. It enables industries to make better decisions faster by providing insightful, accurate, and interactive video analytics AI agents. These agents can perform tasks like video summarization and visual question-answering, unlocking new application possibilities. The repository includes software components like NIM microservices, an ingestion pipeline, and a CA-RAG module, offering a comprehensive solution for analyzing and summarizing large volumes of video data. The target audience includes video analysts, IT engineers, and GenAI developers who can benefit from the blueprint's 1-click deployment steps, easy-to-manage configurations, and customization options. The repository structure overview includes directories for deployment, source code, and training notebooks, along with documentation for detailed instructions. Hardware requirements vary based on deployment topology and dependencies like VLM and LLM, with different deployment methods such as Launchable Deployment, Docker Compose Deployment, and Helm Chart Deployment provided for various use cases.

llama_deploy

llama_deploy is an async-first framework for deploying, scaling, and productionizing agentic multi-service systems based on workflows from llama_index. It allows building workflows in llama_index and deploying them seamlessly with minimal changes to code. The system includes services endlessly processing tasks, a control plane managing state and services, an orchestrator deciding task handling, and fault tolerance mechanisms. It is designed for high-concurrency scenarios, enabling real-time and high-throughput applications.

PulsarRPA

PulsarRPA is a high-performance, distributed, open-source Robotic Process Automation (RPA) framework designed to handle large-scale RPA tasks with ease. It provides a comprehensive solution for browser automation, web content understanding, and data extraction. PulsarRPA addresses challenges of browser automation and accurate web data extraction from complex and evolving websites. It incorporates innovative technologies like browser rendering, RPA, intelligent scraping, advanced DOM parsing, and distributed architecture to ensure efficient, accurate, and scalable web data extraction. The tool is open-source, customizable, and supports cutting-edge information extraction technology, making it a preferred solution for large-scale web data extraction.

bionic-gpt

BionicGPT is an on-premise replacement for ChatGPT, offering the advantages of Generative AI while maintaining strict data confidentiality. BionicGPT can run on your laptop or scale into the data center.

LabelLLM

LabelLLM is an open-source data annotation platform designed to optimize the data annotation process for LLM development. It offers flexible configuration, multimodal data support, comprehensive task management, and AI-assisted annotation. Users can access a suite of annotation tools, enjoy a user-friendly experience, and enhance efficiency. The platform allows real-time monitoring of annotation progress and quality control, ensuring data integrity and timeliness.

stride-gpt

STRIDE GPT is an AI-powered threat modelling tool that leverages Large Language Models (LLMs) to generate threat models and attack trees for a given application based on the STRIDE methodology. Users provide application details, such as the application type, authentication methods, and whether the application is internet-facing or processes sensitive data. The model then generates its output based on the provided information. It features a simple and user-friendly interface, supports multi-modal threat modelling, generates attack trees, suggests possible mitigations for identified threats, and does not store application details. STRIDE GPT can be accessed via OpenAI API, Azure OpenAI Service, Google AI API, or Mistral API. It is available as a Docker container image for easy deployment.

For similar tasks

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.

AI-in-a-Box

AI-in-a-Box is a curated collection of solution accelerators that can help engineers establish their AI/ML environments and solutions rapidly and with minimal friction, while maintaining the highest standards of quality and efficiency. It provides essential guidance on the responsible use of AI and LLM technologies, specific security guidance for Generative AI (GenAI) applications, and best practices for scaling OpenAI applications within Azure. The available accelerators include: Azure ML Operationalization in-a-box, Edge AI in-a-box, Doc Intelligence in-a-box, Image and Video Analysis in-a-box, Cognitive Services Landing Zone in-a-box, Semantic Kernel Bot in-a-box, NLP to SQL in-a-box, Assistants API in-a-box, and Assistants API Bot in-a-box.

spring-ai

The Spring AI project provides a Spring-friendly API and abstractions for developing AI applications. It offers a portable client API for interacting with generative AI models, enabling developers to easily swap out implementations and access various models like OpenAI, Azure OpenAI, and HuggingFace. Spring AI also supports prompt engineering, providing classes and interfaces for creating and parsing prompts, as well as incorporating proprietary data into generative AI without retraining the model. This is achieved through Retrieval Augmented Generation (RAG), which involves extracting, transforming, and loading data into a vector database for use by AI models. Spring AI's VectorStore abstraction allows for seamless transitions between different vector database implementations.

ragstack-ai

RAGStack is an out-of-the-box solution simplifying Retrieval Augmented Generation (RAG) in GenAI apps. RAGStack includes the best open-source components for implementing RAG, giving developers a comprehensive GenAI stack leveraging LangChain, CassIO, and more. RAGStack leverages the LangChain ecosystem and is fully compatible with LangSmith for monitoring your AI deployments.

breadboard

Breadboard is a library for prototyping generative AI applications. It is inspired by the hardware maker community and their boundless creativity. Breadboard makes it easy to wire prototypes and share, remix, reuse, and compose them. The library emphasizes ease and flexibility of wiring, as well as modularity and composability.

cloudflare-ai-web

Cloudflare-ai-web is a lightweight and easy-to-use tool that allows you to quickly deploy a multi-modal AI platform using Cloudflare Workers AI. It supports serverless deployment, password protection, and local storage of chat logs. With a size of only ~638 kB gzip, it is a great option for building AI-powered applications without the need for a dedicated server.

app-builder

AppBuilder SDK is a one-stop development tool for AI native applications, providing basic cloud resources, AI capability engine, Qianfan large model, and related capability components to improve the development efficiency of AI native applications.

cookbook

This repository contains community-driven practical examples of building AI applications and solving various tasks with AI using open-source tools and models. Everyone is welcome to contribute, and we value everybody's contribution! There are several ways you can contribute to the Open-Source AI Cookbook: Submit an idea for a desired example/guide via GitHub Issues. Contribute a new notebook with a practical example. Improve existing examples by fixing issues/typos. Before contributing, check currently open issues and pull requests to avoid working on something that someone else is already working on.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PII

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.