AI-Gateway

APIM ❤️ AI - This repo contains experiments on Azure API Management's AI capabilities, integrating with Azure OpenAI, AI Foundry, and much more 🚀 . New workshop experience at https://aka.ms/ai-gateway/workshop

Stars: 755

The AI-Gateway repository explores the AI Gateway pattern through a series of experimental labs, focusing on Azure API Management for handling AI services APIs. The labs provide step-by-step instructions using Jupyter notebooks with Python scripts, Bicep files, and APIM policies. The goal is to accelerate experimentation of advanced use cases and pave the way for further innovation in the rapidly evolving field of AI. The repository also includes a Mock Server to mimic the behavior of the OpenAI API for testing and development purposes.

README:

🧪 AI Gateway labs

➕ AI Gateway workshop provides a comprehensive learning experience using the Azure Portal

➕ Refactor most of the labs to use the new LLM built-in logging that supports streaming completions.

➕ Realtime API (Audio and Text) with Azure OpenAI 🔥 experiments with the AOAI Realtime

➕ Realtime API (Audio and Text) with Azure OpenAI + MCP tools 🔥 experiments with the AOAI Realtime + MCP

➕ Model Context Protocol (MCP) ⚙️ experiments with the client authorization flow

➕ the FinOps Framework lab to manage AI budgets effectively 💰

➕ Agentic ✨ experiments with Model Context Protocol (MCP).

➕ Agentic ✨ experiments with OpenAI Agents SDK.

➕ Agentic ✨ experiments with AI Agent Service from Azure AI Foundry.

- 🧠 AI Gateway

- 🧪 Labs with AI Agents

- 🧪 Labs with the Inference API

- 🧪 Labs based on Azure OpenAI

- 🚀 Getting started

- 🔨 Supporting tools

- 🏛️ Well-Architected Framework

- 🥇 Other Resources

The rapid pace of AI advances demands experimentation-driven approaches for organizations to remain at the forefront of the industry. With AI steadily becoming a game-changer for an array of sectors, maintaining a fast-paced innovation trajectory is crucial for businesses aiming to leverage its full potential.

AI services are predominantly accessed via APIs, underscoring the essential need for a robust and efficient API management strategy. This strategy is instrumental for maintaining control and governance over the consumption of AI models, data and tools.

With the expanding horizons of AI services and their seamless integration with APIs, there is a considerable demand for a comprehensive AI Gateway pattern, which broadens the core principles of API management. The pattern aims to accelerate the experimentation of advanced use cases and pave the road for further innovation in this rapidly evolving field. The well-architected principles of the AI Gateway provide a framework for the confident deployment of Intelligent Apps into production.

This repo explores the AI Gateway pattern through a series of experimental labs. The AI Gateway capabilities of Azure API Management play a crucial role within these labs, handling AI services APIs with security, reliability, performance, overall operational efficiency and cost controls. The primary focus is on Azure AI Foundry models, which set the standard reference for Large Language Models (LLM). However, the same principles and design patterns could potentially be applied to any third party model.

Acknowledging the rising dominance of Python, particularly in the realm of AI, along with the powerful experimental capabilities of Jupyter notebooks, the following labs are structured around Jupyter notebooks that provide step-by-step instructions with Python scripts, Bicep files and Azure API Management policies:

Playground to experiment with the Model Context Protocol and the client authorization flow. In this flow, Azure API Management acts both as an OAuth client connecting to the Microsoft Entra ID authorization server and as an OAuth authorization server for the MCP client (MCP inspector in this lab).

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to experiment with the Model Context Protocol and Azure API Management to enable plug & play of tools to LLMs. Leverages the credential manager for managing OAuth 2.0 tokens to backend tools and client token validation to ensure end-to-end authentication and authorization.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the OpenAI Agents with Azure OpenAI models and API based tools controlled by Azure API Management.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Use this playground to explore the Azure AI Agent Service, leveraging Azure API Management to control multiple services, including Azure OpenAI models, Logic Apps Workflows, and OpenAPI-based APIs.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the OpenAI function calling feature with an Azure Functions API that is also managed by Azure API Management.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the DeepSeek R1 model via the AI Model Inference from Azure AI Foundry. This lab uses the Azure AI Model Inference API and two APIM LLM policies: llm-token-limit and llm-emit-token-metric.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

🧪 SLM self-hosting (Phi-3)

Playground to try the self-hosted Phi-3 Small Language Model (SLM) through the Azure API Management self-hosted gateway with OpenAI API compatibility.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

This playground leverages the FinOps Framework and Azure API Management to control AI costs. It uses the token limit policy for each product and integrates Azure Monitor alerts with Logic Apps to automatically disable APIM subscriptions that exceed cost quotas.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

🧪 Backend pool load balancing - Available with Bicep and Terraform

Playground to try the built-in load balancing backend pool functionality of Azure API Management to either a list of Azure OpenAI endpoints or mock servers.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook
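As a rough illustration of how a load-balanced pool might choose a target, the sketch below simulates priority-then-weight selection in plain Python. The `Backend` class, the `pick_backend` helper, and the endpoint names are hypothetical and do not reflect how Azure API Management implements the feature; it assumes that a lower priority number marks the preferred group and that weights split traffic inside a group.

```python
import random
from dataclasses import dataclass


@dataclass
class Backend:
    name: str             # hypothetical endpoint label
    priority: int         # assumption: lower number = preferred group
    weight: int           # relative share of traffic inside a priority group
    healthy: bool = True  # flipped to False when the endpoint is unavailable


def pick_backend(pool, rng=random):
    """Pick a healthy backend: best priority group first, then weighted random."""
    healthy = [b for b in pool if b.healthy]
    if not healthy:
        raise RuntimeError("no healthy backend available")
    best = min(b.priority for b in healthy)
    group = [b for b in healthy if b.priority == best]
    return rng.choices(group, weights=[b.weight for b in group], k=1)[0]


# Illustrative pool: two preferred endpoints and one fallback.
pool = [
    Backend("openai-a", priority=1, weight=50),
    Backend("openai-b", priority=1, weight=50),
    Backend("openai-c", priority=2, weight=100),
]
```

With this pool, traffic is shared between `openai-a` and `openai-b`, and `openai-c` is only chosen once both preferred endpoints are marked unhealthy.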

Playground to try the token rate limiting policy to one or more Azure OpenAI endpoints. When the token usage is exceeded, the caller receives a 429.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook
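On the caller side, a 429 is usually handled by waiting and retrying. The following is a minimal sketch of that idea, not code from the lab: `send` is a hypothetical callable returning `(status_code, headers, body)`, and it is an assumption that the gateway response carries a `Retry-After` header expressed in seconds.

```python
import time


def call_with_retry(send, max_attempts=3, sleep=time.sleep):
    """Call send() until it stops returning 429 or attempts run out.

    send is a hypothetical callable returning (status_code, headers, body).
    """
    for attempt in range(1, max_attempts + 1):
        status, headers, body = send()
        if status != 429 or attempt == max_attempts:
            return status, body
        # Assumption: Retry-After holds a delay in seconds; fall back to
        # exponential backoff when the header is missing.
        delay = float(headers.get("Retry-After", 2 ** attempt))
        sleep(delay)


# Simulated gateway: first call is throttled, second succeeds.
responses = iter([(429, {"Retry-After": "1"}, ""), (200, {}, "ok")])
waits = []  # records requested delays instead of really sleeping
result = call_with_retry(lambda: next(responses), sleep=waits.append)
```

Passing `sleep` as a parameter keeps the example fast to run and makes the wait behaviour easy to inspect.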

Playground to try the emit token metric policy. The policy sends metrics to Application Insights about consumption of large language model tokens through Azure OpenAI Service APIs.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the semantic caching policy. Uses vector proximity of the prompt to previous requests and a specified similarity score threshold.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook
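To make the idea of vector proximity with a similarity threshold concrete, here is a small in-memory sketch. The `SemanticCache` class and the toy two-dimensional embeddings are hypothetical; in this sketch a higher cosine similarity means a closer match, which may not match how the policy interprets its own threshold, so treat it only as an illustration of the concept.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache keyed by prompt embeddings."""

    def __init__(self, threshold=0.9):
        self.threshold = threshold
        self.entries = []  # list of (embedding, response) pairs

    def lookup(self, embedding):
        """Return the closest cached response, or None below the threshold."""
        best_score, best_response = 0.0, None
        for cached, response in self.entries:
            score = cosine_similarity(embedding, cached)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def store(self, embedding, response):
        self.entries.append((list(embedding), response))


cache = SemanticCache(threshold=0.9)
cache.store([1.0, 0.0], "cached answer")
hit = cache.lookup([0.99, 0.05])   # nearly the same direction
miss = cache.lookup([0.0, 1.0])    # orthogonal prompt
```

A real deployment would obtain embeddings from an embeddings model and use a vector store rather than a Python list.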

Playground to try the OAuth 2.0 authorization feature using an identity provider to enable more fine-grained access to OpenAPI APIs by particular users or clients.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to create a combination of several policies in an iterative approach. We start with load balancing, then progressively add token emitting, rate limiting, and, eventually, semantic caching. Each of these sets of policies is derived from other labs in this repo.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try routing to a backend based on Azure OpenAI model and version.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook
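Routing by model and version can be pictured as a lookup table with a per-model fallback. The sketch below is only an illustration: the `ROUTES` table, the `resolve_backend` helper, the model and version strings, and the `example.com` URLs are all hypothetical and are not taken from the lab's policy.

```python
# Hypothetical routing table: (model, version) -> backend URL.
# "*" marks the fallback used when no exact version is configured.
ROUTES = {
    ("gpt-4o", "2024-08-06"): "https://aoai-east.example.com",
    ("gpt-4o", "*"): "https://aoai-west.example.com",
    ("gpt-4o-mini", "*"): "https://aoai-mini.example.com",
}


def resolve_backend(model, version):
    """Return the backend for an exact match, else the model-level fallback."""
    for key in ((model, version), (model, "*")):
        if key in ROUTES:
            return ROUTES[key]
    raise LookupError(f"no backend configured for {model} ({version})")


exact = resolve_backend("gpt-4o", "2024-08-06")
fallback = resolve_backend("gpt-4o", "2024-05-13")
```

Trying the exact pair first and the wildcard second keeps version-specific overrides explicit while still serving unknown versions.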

Playground to try the Retrieval Augmented Generation (RAG) pattern with Azure AI Search, Azure OpenAI embeddings and Azure OpenAI completions.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the built-in LLM logging capabilities of Azure API Management. Logs requests into Azure Monitor to track details and token usage.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to test storing message details into Cosmos DB through the LLM Logging to event hub.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

Playground to try the content safety policy. The policy enforces content safety checks on any LLM prompts by transmitting them to the Azure AI Content Safety service before sending to the backend LLM API.

🦾 Bicep ➕ ⚙️ Policy ➕ 🧾 Notebook

This is a list of potential future labs to be developed.

- Logic Apps RAG

- PII handling

- Python 3.12 or later version installed

- VS Code installed with the Jupyter notebook extension enabled

- Python environment with the requirements.txt or run `pip install -r requirements.txt` in your terminal

- An Azure Subscription with Contributor + RBAC Administrator or Owner roles

- Azure CLI installed and signed in to your Azure subscription

- Clone this repo and configure your local machine with the prerequisites. Or just create a GitHub Codespace and run it in the browser or in VS Code.

- Navigate through the available labs and select one that best suits your needs. For starters we recommend the token rate limiting.

- Open the notebook and run the provided steps.

- Tailor the experiment according to your requirements. If you wish to contribute to our collective work, we would appreciate your submission of a pull request.

[!NOTE] 🪲 Please feel free to open a new issue if you find something that should be fixed or enhanced.

- Tracing - Invokes the OpenAI API with trace enabled and returns the tracing information.

- Streaming - Invokes the OpenAI API with stream enabled and returns the response in chunks.

- AI-Gateway Mock server is designed to mimic the behavior and responses of the OpenAI API, thereby creating an efficient simulation environment suitable for testing and development purposes on the integration with Azure API Management and other use cases. The app.py can be customized to tailor the Mock server to specific use cases.
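For the Streaming tool listed above, a response delivered in chunks typically arrives as server-sent event lines that the client stitches back together. The sketch below shows one way to do that in Python, assuming the OpenAI-style format of `data: {json}` lines ending with `data: [DONE]`; the `collect_stream_text` helper and the sample lines are made up for illustration and are not the repo's own tool.

```python
import json


def collect_stream_text(lines):
    """Join the text deltas from an iterable of SSE lines."""
    parts = []
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and other SSE fields
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                parts.append(text)
    return "".join(parts)


# Hand-written sample of what a chunked chat completion might look like.
sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]
print(collect_stream_text(sample))
```

Chunks without a `content` delta, such as the initial role message, are skipped rather than treated as errors.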

The Azure Well-Architected Framework is a design framework that can improve the quality of a workload. The following table maps labs with the Well-Architected Framework pillars to set you up for success through architectural experimentation.

| Lab | Security | Reliability | Performance | Operations | Costs |

|---|---|---|---|---|---|

| Access controlling | ⭐ | ||||

| Backend pool load balancing | ⭐ | ⭐ | ⭐ | ||

| Semantic caching | ⭐ | ⭐ | |||

| Token rate limiting | ⭐ | ⭐ | |||

| Built-in LLM logging | ⭐ | ||||

| FinOps framework | ⭐ | ⭐ |

[!TIP] Check the Azure Well-Architected Framework perspective on Azure OpenAI Service for additional guidance.

We believe that there may be valuable content that we are currently unaware of. We would greatly appreciate any suggestions or recommendations to enhance this list.

[!IMPORTANT] This software is provided for demonstration purposes only. It is not intended to be relied upon for any purpose. The creators of this software make no representations or warranties of any kind, express or implied, about the completeness, accuracy, reliability, suitability or availability with respect to the software or the information, products, services, or related graphics contained in the software for any purpose. Any reliance you place on such information is therefore strictly at your own risk.

Alternative AI tools for AI-Gateway

Similar Open Source Tools

agent-zero

Agent Zero is a personal, organic agentic framework designed to be dynamic, transparent, customizable, and interactive. It uses the computer as a tool to accomplish tasks, with features like general-purpose assistant, computer as a tool, multi-agent cooperation, customizable and extensible framework, and communication skills. The tool is fully Dockerized, with Speech-to-Text and TTS capabilities, and offers real-world use cases like financial analysis, Excel automation, API integration, server monitoring, and project isolation. Agent Zero can be dangerous if not used properly and is prompt-based, guided by the prompts folder. The tool is extensively documented and has a changelog highlighting various updates and improvements.

UltraRAG

The UltraRAG framework is a researcher and developer-friendly RAG system solution that simplifies the process from data construction to model fine-tuning in domain adaptation. It introduces an automated knowledge adaptation technology system, supporting no-code programming, one-click synthesis and fine-tuning, multidimensional evaluation, and research-friendly exploration work integration. The architecture consists of Frontend, Service, and Backend components, offering flexibility in customization and optimization. Performance evaluation in the legal field shows improved results compared to VanillaRAG, with specific metrics provided. The repository is licensed under Apache-2.0 and encourages citation for support.

bionic-gpt

BionicGPT is an on-premise replacement for ChatGPT, offering the advantages of Generative AI while maintaining strict data confidentiality. BionicGPT can run on your laptop or scale into the data center.

codegate

CodeGate is a local gateway that enhances the safety of AI coding assistants by ensuring AI-generated recommendations adhere to best practices, safeguarding code integrity, and protecting individual privacy. Developed by Stacklok, CodeGate allows users to confidently leverage AI in their development workflow without compromising security or productivity. It works seamlessly with coding assistants, providing real-time security analysis of AI suggestions. CodeGate is designed with privacy at its core, keeping all data on the user's machine and offering complete control over data.

qdrant

Qdrant is a vector similarity search engine and vector database. It is written in Rust, which makes it fast and reliable even under high load. Qdrant can be used for a variety of applications, including:

- Semantic search
- Image search
- Product recommendations
- Chatbots
- Anomaly detection

Qdrant offers a variety of features, including:

- Payload storage and filtering
- Hybrid search with sparse vectors
- Vector quantization and on-disk storage
- Distributed deployment
- Highlighted features such as query planning, payload indexes, SIMD hardware acceleration, async I/O, and write-ahead logging

Qdrant is available as a fully managed cloud service or as an open-source software that can be deployed on-premises.

beeai-platform

BeeAI is an open-source platform that simplifies the discovery, running, and sharing of AI agents across different frameworks. It addresses challenges such as framework fragmentation, deployment complexity, and discovery issues by providing a standardized platform for individuals and teams to access agents easily. With features like a centralized agent catalog, framework-agnostic interfaces, containerized agents, and consistent user experiences, BeeAI aims to streamline the process of working with AI agents for both developers and teams.

neuro-san-studio

Neuro SAN Studio is an open-source library for building agent networks across various industries. It simplifies the development of collaborative AI systems by enabling users to create sophisticated multi-agent applications using declarative configuration files. The tool offers features like data-driven configuration, adaptive communication protocols, safe data handling, dynamic agent network designer, flexible tool integration, robust traceability, and cloud-agnostic deployment. It has been used in various use-cases such as automated generation of multi-agent configurations, airline policy assistance, banking operations, market analysis in consumer packaged goods, insurance claims processing, intranet knowledge management, retail operations, telco network support, therapy vignette supervision, and more.

ai-platform-engineering

The AI Platform Engineering repository provides a collection of tools and resources for building and deploying AI models. It includes libraries for data preprocessing, model training, and model serving. The repository also contains example code and tutorials to help users get started with AI development. Whether you are a beginner or an experienced AI engineer, this repository offers valuable insights and best practices to streamline your AI projects.

LiteRT

LiteRT is Google's open-source high-performance runtime for on-device AI, previously known as TensorFlow Lite. The repository is currently not intended for open-source development, but aims to evolve to allow direct building and contributions. LiteRT supports Python versions 3.9, 3.10, 3.11 on Linux and MacOS. It ensures compatibility with existing .tflite file extension and format, offering conversion tools and continued active development under the name LiteRT.

neo

Neo.mjs is a revolutionary Application Engine for the web that offers true multithreading and context engineering, enabling desktop-class UI performance and AI-driven runtime mutation. It is not a framework but a complete runtime and toolchain for enterprise applications, excelling in single page apps and browser-based multi-window applications. With a pioneering Off-Main-Thread architecture, Neo.mjs ensures butter-smooth UI performance by keeping the main thread free for flawless user interactions. The latest version, v11, introduces AI-native capabilities, allowing developers to work with AI agents as first-class partners in the development process. The platform offers a suite of dedicated Model Context Protocol servers that give agents the context they need to understand, build, and reason about the code, enabling a new level of human-AI collaboration.

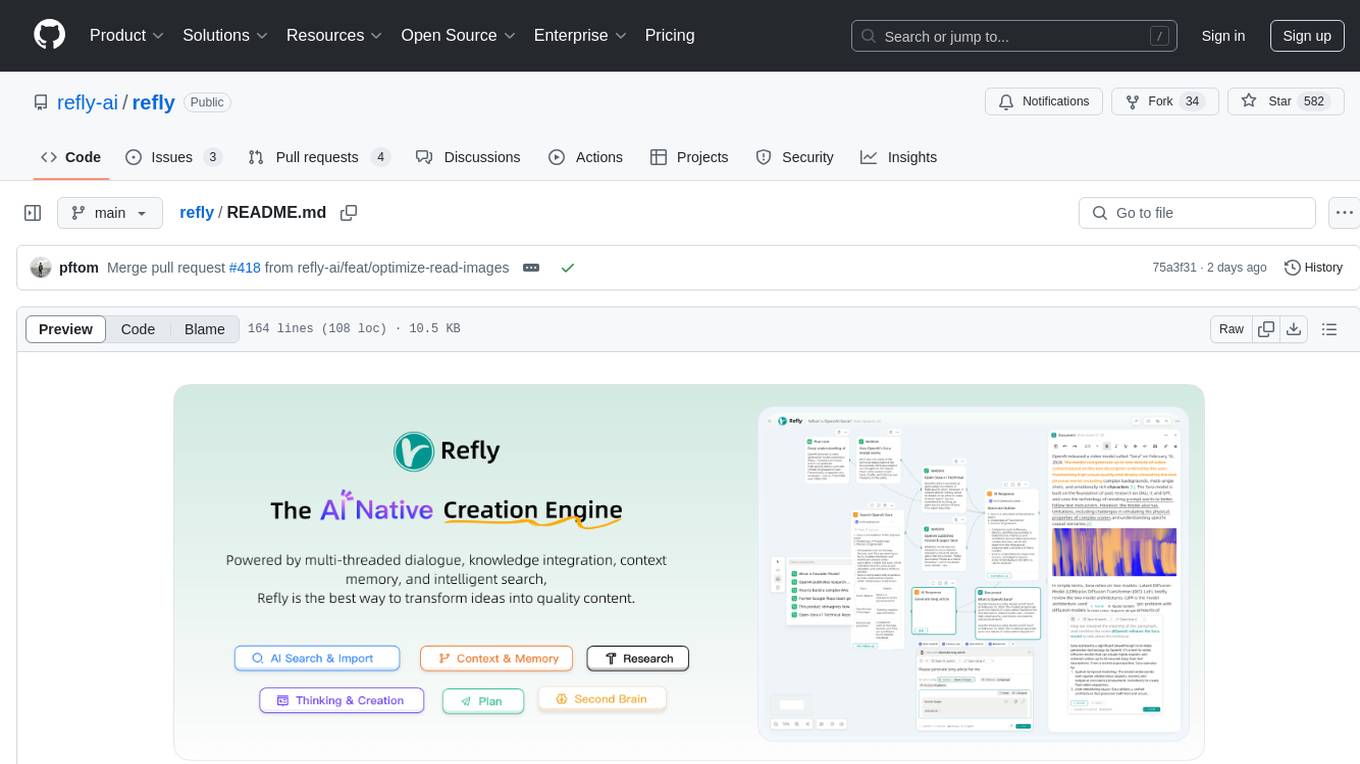

refly

Refly.AI is an open-source AI-native creation engine that empowers users to transform ideas into production-ready content. It features a free-form canvas interface with multi-threaded conversations, knowledge base integration, contextual memory, intelligent search, WYSIWYG AI editor, and more. Users can leverage AI-powered capabilities, context memory, knowledge base integration, quotes, and AI document editing to enhance their content creation process. Refly offers both cloud and self-hosting options, making it suitable for individuals, enterprises, and organizations. The tool is designed to facilitate human-AI collaboration and streamline content creation workflows.

amazon-bedrock-agentcore-samples

Amazon Bedrock AgentCore Samples repository provides examples and tutorials to deploy and operate AI agents securely at scale using any framework and model. It is framework-agnostic and model-agnostic, allowing flexibility in deployment. The repository includes tutorials, end-to-end applications, integration guides, deployment automation, and full-stack reference applications for developers to understand and implement Amazon Bedrock AgentCore capabilities into their applications.

magic

Magic is an open-source all-in-one AI productivity platform designed to help enterprises quickly build and deploy AI applications, aiming for a 100x increase in productivity. It consists of various AI products and infrastructure tools, such as Super Magic, Magic IM, Magic Flow, and more. Super Magic is a general-purpose AI Agent for complex task scenarios, while Magic Flow is a visual AI workflow orchestration system. Magic IM is an enterprise-grade AI Agent conversation system for internal knowledge management. Teamshare OS is a collaborative office platform integrating AI capabilities. The platform provides cloud services, enterprise solutions, and a self-hosted community edition for users to leverage its features.

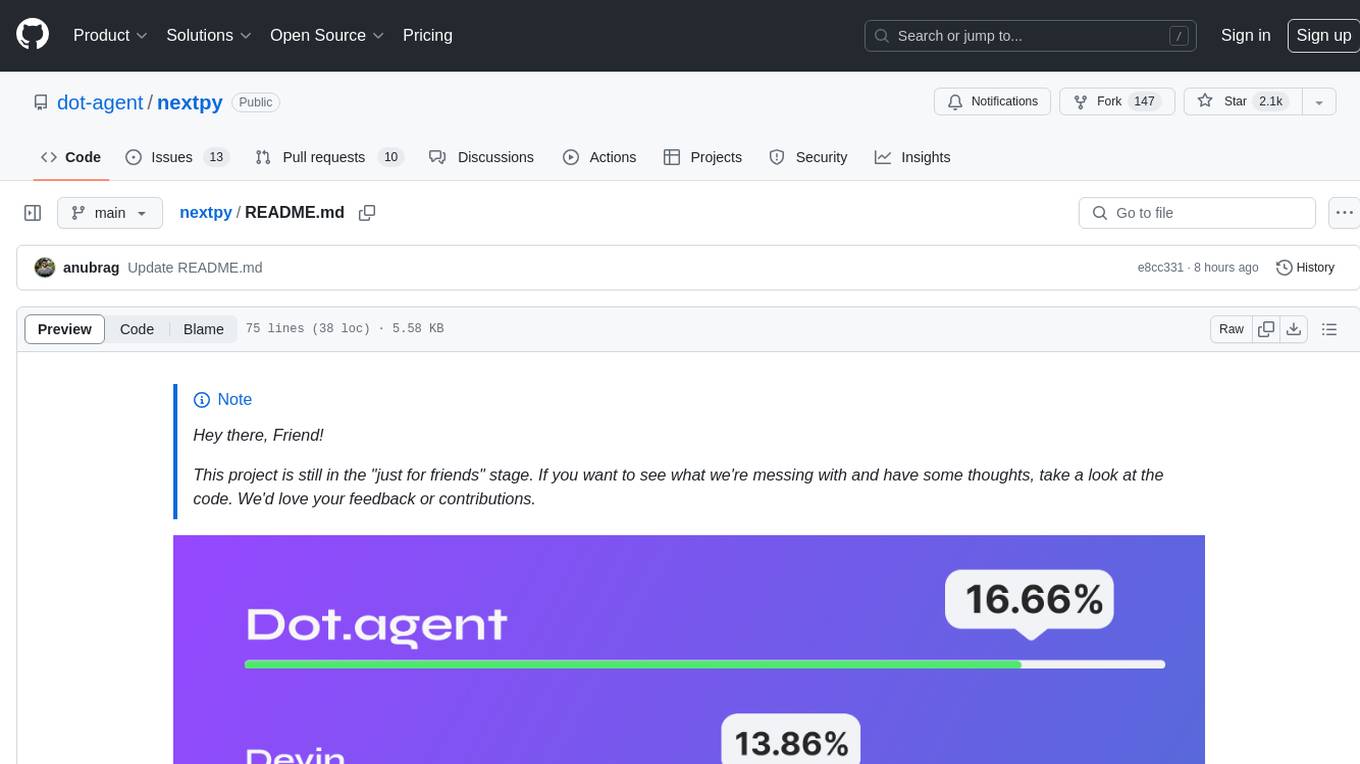

nextpy

Nextpy is a cutting-edge software development framework optimized for AI-based code generation. It provides guardrails for defining AI system boundaries, structured outputs for prompt engineering, a powerful prompt engine for efficient processing, better AI generations with precise output control, modularity for multiplatform and extensible usage, developer-first approach for transferable knowledge, and containerized & scalable deployment options. It offers 4-10x faster performance compared to Streamlit apps, with a focus on cooperation within the open-source community and integration of key components from various projects.

arbigent

Arbigent (Arbiter-Agent) is an AI agent testing framework designed to make AI agent testing practical for modern applications. It addresses challenges faced by traditional UI testing frameworks and AI agents by breaking down complex tasks into smaller, dependent scenarios. The framework is customizable for various AI providers, operating systems, and form factors, empowering users with extensive customization capabilities. Arbigent offers an intuitive UI for scenario creation and a powerful code interface for seamless test execution. It supports multiple form factors, optimizes UI for AI interaction, and is cost-effective by utilizing models like GPT-4o mini. With a flexible code interface and open-source nature, Arbigent aims to revolutionize AI agent testing in modern applications.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.