wdoc

Summarize and query from a lot of heterogeneous documents. Any LLM provider, any filetype, scalable (?), WIP

Stars: 416

wdoc is a powerful Retrieval-Augmented Generation (RAG) system designed to summarize, search, and query documents across various file types. It aims to handle large volumes of diverse document types, making it ideal for researchers, students, and professionals dealing with extensive information sources. wdoc uses LangChain to process and analyze documents, supporting tens of thousands of documents simultaneously. The system includes features like high recall and specificity, support for various Large Language Models (LLMs), advanced RAG capabilities, advanced document summaries, and support for multiple tasks. It offers markdown-formatted answers and summaries, customizable embeddings, extensive documentation, scriptability, and runtime type checking. wdoc is suitable for power users seeking document querying capabilities and AI-powered document summaries.

README:

I'm wdoc. I solve RAG problems.

- wdoc, imitating Winston "The Wolf" Wolf

wdoc is a powerful RAG (Retrieval-Augmented Generation) system designed to summarize, search, and query documents across various file types. It's particularly useful for handling large volumes of diverse document types, making it ideal for researchers, students, and professionals dealing with extensive information sources. Created by a medical student who needed a better way to search through diverse knowledge sources (lectures, Anki cards, PDFs, EPUBs, etc.), this tool was born from frustration with existing RAG solutions for querying and summarizing.

(The online documentation can be found here)

- Goal and project specifications: wdoc uses LangChain to process and analyze documents. It's capable of querying tens of thousands of documents across various file types at the same time. The project also includes a tailored summary feature to help users efficiently keep up with large amounts of information.

- Current status: Under active development

- Used daily by the developer for several months, but still in alpha. I would greatly benefit from testing by users, as it's the quickest way for me to find the many small bugs that are quick to fix.

- May have some instabilities, but issues can usually be resolved quickly

- The main branch is more stable than the dev branch, which offers more features

- Open to feature requests and pull requests

- All feedback, including reports of typos, is highly appreciated

- Please open an issue before making a PR, as there may be ongoing improvements in the pipeline

- Key Features:

- Aims to support any filetype and query from all of them at the same time (15+ are already implemented!)

- High recall and specificity: it was made to find A LOT of documents using a carefully designed embedding search, then gradually aggregate each answer using semantic batching to produce a single answer that cites its sources, pointing to the exact portion of each source document.

- Supports virtually any LLM provider, including local ones, with extra layers of security for super secret stuff.

- Uses both an expensive and a cheap LLM to make recall as high as possible, because we can afford to fetch a lot of documents per query (via embeddings).

- Finally a useful AI-powered summary: get the thought process of the author instead of nebulous takeaways.

- Extensible: this is both a tool and a library. It was even turned into an Open-WebUI Tool.

Give it to me I am in a hurry!

Note: a list of examples can be found in examples.md

link="https://situational-awareness.ai/wp-content/uploads/2024/06/situationalawareness.pdf"

wdoc --path=$link --task=query --filetype="online_pdf" --query="What does it say about alphago?" --query_retrievers='default_multiquery' --top_k=auto_200_500

This will:

- parse what's in --path as a link to a pdf to download (otherwise the url could simply be a webpage, but in most cases you can leave it to 'auto' by default as heuristics are in place to detect the most appropriate parser).

- cut the text into chunks and create embeddings for each

- Take the user query, create embeddings for it ('default') AND ask the default LLM to generate alternative queries and embed those

- Use those embeddings to search through all chunks of the text and get the 200 most appropriate documents

- Pass each of those documents to the smaller LLM (default: anthropic/claude-3-5-haiku-20241022) to tell us if the document seems appropriate given the user query

- If more than 90% of the 200 documents are appropriate, then we do another search with a higher top_k and repeat until documents start to be irrelevant OR we hit 500 documents.

- Then each relevant doc is sent to the strong LLM (by default, anthropic/claude-3-7-sonnet-20250219) to extract relevant info and give one answer.

- Then all those "intermediate" answers are 'semantic batched' (meaning we create embeddings, do hierarchical clustering, then create small batches each containing several intermediate answers) and each batch is combined into a single answer.

- Rinse and repeat the previous two steps (7 and 8) until only one answer is left, which is returned to the user.
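The adaptive top_k behaviour (auto_200_500) in the steps above can be pictured with a minimal Python sketch. This is not wdoc's actual code: `search`, `is_relevant`, and the `step` increment are hypothetical stand-ins; only the 200 and 500 bounds and the 90% threshold come from the description.

```python
from typing import Callable, List


def adaptive_retrieve(
    search: Callable[[int], List[str]],
    is_relevant: Callable[[str], bool],
    start_k: int = 200,
    max_k: int = 500,
    threshold: float = 0.9,
    step: int = 100,  # assumption: the growth increment is not given in the README
) -> List[str]:
    """Grow top_k while almost everything retrieved is still judged relevant."""
    k = start_k
    while True:
        docs = search(k)  # hypothetical embedding search returning the k best chunks
        relevant = [d for d in docs if is_relevant(d)]  # stand-in for the cheap LLM
        ratio = len(relevant) / len(docs) if docs else 0.0
        # Stop once irrelevant documents start to show up, the cap is reached,
        # or the corpus has no more documents to offer.
        if ratio <= threshold or k >= max_k or len(docs) < k:
            return relevant
        k = min(k + step, max_k)
```

The relevant documents returned here are what would then be handed, one by one, to the strong LLM.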

link="https://situational-awareness.ai/wp-content/uploads/2024/06/situationalawareness.pdf"

wdoc --path=$link --task=summarize --filetype="online_pdf"

This will:

- Split the text into chunks

- pass each chunk into the strong LLM (by default anthropic/claude-3-7-sonnet-20250219) for a very low level (=with all details) summary. The format is markdown bullet points for each idea and with logical indentation.

- When creating each new chunk, the LLM has access to the previous chunk for context.

- All summaries are then concatenated and returned to the user

- For extra large documents like books, this summary can be recursively fed to wdoc using the argument --summary_n_recursion=2 for example.
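The summary steps above can be sketched in a few lines of Python. This is a simplified illustration, not wdoc's implementation: `split_into_chunks`, `summarize_chunk`, and the character-based chunk size are hypothetical, and treating `n_recursion` as "feed the summary back this many extra times" is an assumption about what --summary_n_recursion does.

```python
from typing import Callable, List


def split_into_chunks(text: str, max_chars: int) -> List[str]:
    """Naive fixed-size splitter; a real splitter would respect sentence boundaries."""
    return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]


def summarize_document(
    text: str,
    summarize_chunk: Callable[[str, str], str],
    max_chars: int = 2000,
    n_recursion: int = 0,
) -> str:
    """Summarize chunk by chunk, giving each call the previous chunk as context."""
    current = text
    for _ in range(n_recursion + 1):
        summaries = []
        previous = ""
        for chunk in split_into_chunks(current, max_chars):
            # summarize_chunk stands in for the call to the strong LLM.
            summaries.append(summarize_chunk(chunk, previous))
            previous = chunk
        # The concatenated summaries become the input of the next pass, if any.
        current = "\n".join(summaries)
    return current
```

Passing the LLM call in as a function keeps the sketch testable with a fake summarizer.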

- Those two tasks, query and summary, can be combined with --task summarize_then_query, which will summarize the document but give you a prompt at the end to ask questions in case you want to clarify things.

- For more, you can read examples.md.

- Note that there is an official Open-WebUI Tool that is even simpler to use.

- 15+ filetypes: also supports combinations to load recursively or define a complex heterogeneous corpus, like a list of files, a list of links, a regex, youtube playlists, etc. See Supported filetypes. All filetypes can be seamlessly combined in the same index, meaning you can query your anki collection at the same time as your work PDFs. It supports removing silence from audio files and youtube videos too!

- 100+ LLMs and many embeddings: Supports any LLM by OpenAI, Mistral, Claude, Ollama, Openrouter, etc. thanks to litellm. The list of supported embedding engines can be found here but includes at least OpenAI (or any OpenAI API compatible models), Cohere, Azure, Bedrock, NVIDIA NIM, Hugging Face, Mistral, Ollama, Gemini, Vertex, Voyage.

- Local and Private LLM: takes some measures to make sure no data leaves your computer and goes to an LLM provider: no API keys are used, all api_base are user set, caches are isolated from the rest, outgoing connections are censored by overloading sockets, etc.

- Advanced RAG to query lots of diverse documents:

- The documents are retrieved using embeddings.

- Then a weak LLM ("Eve the Evaluator") is used to tell which of those documents are not relevant.

- Then the strong LLM ("Anna the Answerer") is used to answer the question using each individual remaining document.

- Then all relevant answers are combined ("Carl the Combiner") into a single short markdown-formatted answer. Before being combined, they are batched by semantic clusters and semantic order using scipy's hierarchical clustering and leaf ordering; this makes it easier for the LLM to combine the answers in a manner that makes bottom-up sense. Eve the Evaluator, Anna the Answerer and Carl the Combiner are the names given to each LLM in their system prompt; this way you can easily add specific additional instructions to a specific step. There's also Sam the Summarizer for summaries and Raphael the Rephraser to expand your query.

- Each document is identified by a unique hash and the answers are sourced, meaning you know which document each piece of information in the answer comes from.

- Supports a special syntax like "QE >>>> QA" where QE is a question used to filter the embeddings and QA is the actual question you want answered.
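The semantic batching step mentioned above can be illustrated with scipy. This is a rough sketch rather than wdoc's actual code: the function name, the choice of Ward linkage, and the fixed number of batches are all assumptions made for the example; it requires scipy to be installed.

```python
from typing import List, Sequence

from scipy.cluster.hierarchy import fcluster, leaves_list, linkage


def semantic_batches(
    answers: Sequence[str],
    embeddings: Sequence[Sequence[float]],
    n_batches: int,
) -> List[List[str]]:
    """Group intermediate answers into batches of semantically close items."""
    # Hierarchical clustering; optimal_ordering=True reorders the leaves so
    # that neighbouring leaves are as similar as possible.
    tree = linkage(embeddings, method="ward", optimal_ordering=True)
    order = leaves_list(tree)  # answer indices in semantic order
    labels = fcluster(tree, t=n_batches, criterion="maxclust")  # cluster id per answer

    batches = {}
    for idx in order:
        # Walking in leaf order keeps both the batches and their content ordered.
        batches.setdefault(int(labels[idx]), []).append(answers[int(idx)])
    return list(batches.values())
```

Each returned batch would then be handed to the combining LLM, and the process repeated on the combined answers until a single one remains.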

- Advanced summary:

- Instead of unusable "high level takeaway" points, compress the reasoning, arguments, thought process, etc. of the author into an easy-to-skim markdown file.

- The summaries are then checked again n times for correct logical indentation etc.

- The summary can be in the same language as the documents or directly translated.

- Many tasks: See Supported tasks.

- Trust but verify: The answer is sourced: wdoc keeps track of the hash of each document used in the answer, allowing you to verify each assertion.

- Markdown formatted answers and summaries: using rich.

- Sane embeddings: By default uses sophisticated embeddings like multi query retrievers, but also includes SVM, KNN, parent retriever, etc. Customizable.

- Fully documented: Lots of docstrings, lots of in-code comments, detailed --help, etc. Take a look at examples.md for a list of shell and python examples. The full help can be found in the file help.md or via python -m wdoc --help. I work hard to maintain exhaustive documentation. The complete documentation in a single page is available on the website.

- Scriptable / Extensible: You can use wdoc in other python projects using --import_mode. Take a look at the scripts below. There is even an open-webui Tool.

- Statically typed: Runtime type checking. Opt out with an environment flag: WDOC_TYPECHECKING="disabled / warn / crash" wdoc (by default: warn). Thanks to beartype it shouldn't even slow down the code!

- LLM (and embeddings) caching: speeds things up, as well as index storing and loading (handy for large collections).

- Good PDF parsing: PDF parsers are notoriously unreliable, so 15 (!) different loaders are used, and the best according to a parsing scorer is kept. Includes table support via openparse (no GPU needed by default) or via UnstructuredPDFLoader.

- Langfuse support: If you set the appropriate langfuse environment variables they will be used. See this guide or this one to learn more (Note: this is disabled if using private_mode to avoid any leaks).

- Document filtering: based on regex for document content or metadata.

- Fast: Parallel document loading, parsing, embeddings, querying, etc.

- Shell autocompletion using python-fire

- Notification callback: Can be used for example to get summaries on your phone using ntfy.sh.

- Hacker mindset: I'm a friendly dev! Just open an issue if you have a feature request or anything else.
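The "try several PDF loaders and keep the best one" idea from the list above can be sketched as follows. Everything here is illustrative: the loader callables, the scoring function, and the `good_enough` early-stop threshold are hypothetical and do not reflect wdoc's real loaders or scorer.

```python
from typing import Callable, Dict, Optional, Tuple


def pick_best_parse(
    path: str,
    loaders: Dict[str, Callable[[str], str]],
    score: Callable[[str], float],
    good_enough: float = 0.9,  # assumption: illustrative early-stop threshold
) -> Tuple[Optional[str], str]:
    """Run each loader on the file and keep the text with the highest score."""
    best_name, best_text, best_score = None, "", float("-inf")
    for name, load in loaders.items():
        try:
            text = load(path)
        except Exception:
            continue  # a loader that crashes on this file is simply skipped
        current = score(text)
        if current > best_score:
            best_name, best_text, best_score = name, text, current
        if current >= good_enough:
            break  # stop early once a parse looks clean enough
    return best_name, best_text
```

A scorer could be as simple as the share of alphanumeric characters in the extracted text; a production scorer would likely be more elaborate.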


This TODO list is maintained automatically by MdXLogseqTODOSync

-

- add more tests

- add test for the private mode

- add test for the testing models

- add test for each loader

- the logit bias is wrong for openai models: the token is specific to a given family of model

- rewrite the python API to make it more useable. (also related to https://github.com/thiswillbeyourgithub/wdoc/issues/13)

- be careful to how to use import_mode

- pay attention to how to modify the init and main.py files

- pay attention to how the --help flag works

- pay attention to how the USAGE document is structured

- make it easy to use wdoc as an openwebui pipeline (also related to https://github.com/thiswillbeyourgithub/wdoc/issues/4)

- probably by creating a server with fastapi then a quick pipeline file

- support other vector databases

- understand why it appears that in some cases the sources id is never properly parsed

- crash if source got lost + arg to disable


-

- add crawl4ai parser: https://github.com/unclecode/crawl4ai

- Way to add the title (or all metadata) of a document to its own text. Enabled by default. Because this would allow searching among many documents that don't refer to the original title (for example: material safety datasheets)

- default value is "author" "page" "title"

- pay attention to avoid including personal info (for example use relative paths instead of absolute paths)

- add a /save PATH command to save the chat and metadata to a json file

- Accept input from stdin, to for example query directly from a manpage

- make wdoc work if used with shell pipes

- add image support printing via icat or via the other lib you found last time, would be useful for summaries etc

- add an audio backend to use the subtitles from a video file directly

- store the anki images as 'imagekeys' as the idea works for other parsers too

- add an argument --whole_text to avoid chunking (this would just increase the chunk size to a super large number I guess)

- add apprise callback support

- add a filetype "custom_parser" and an argument "--custom_parser" containing a path to a python file. Must receive a docdict and a few other things and return a list of documents

- then make it work with an online search engine for technical things

- add a langchain code loader that uses aider to get the repomap

- add a pikepdf loader because it can be used to automatically decrypt pdfs

- add a query_branching_nb argument that asks an LLM to identify a list of keywords from the intermediate answers, then look again for documents using this keyword and filtering via the weak llm

- write a script that shows how to use bertopic on the documents of wdoc

- add a jina web search and async retriever https://jina.ai/news/jina-reader-for-search-grounding-to-improve-factuality-of-llms/

- add a retriever where the LLM answers without any context

- add support for readabilipy for parsing html

- add an obsidian loader

- add a /chat command to the prompt, it would enable starting an interactive session directly with the llm

- make sure to expose loaders and batch_loader to make it easy to import by others

- find a way to make it work with llm from simonw

- make images an actual filetype

-

- store the available tasks in a single var in misc.py

- check that the task search works on things other than anki

- create a custom retriever, derived from the multiquery retriever, that does actual parallel requests. Right now it's not the case (maybe in async but I don't plan on using async for now). This retriever seems to be a good part of the slowdown.

- stop using your own youtube timecode parser and instead use langchain's chunk transcript format

- implement usearch instead of faiss, it seems in all points faster, supports quantized embeddings, i trust their langchain implementation more

- Use an env var to drop_params of litellm

- add more specific exceptions for file loading error. One exception for all, one for batch and one for individual loader

- use heuristics to find the best number of clusters when doing semantic reranking

- arg to use jina v3 embeddings for semantic batching because it allows specifying tasks that seem appropriate for that

- add an env variable or arg to overload the backend url for whisper. Then set it always for you and mention it there: https://github.com/fedirz/faster-whisper-server/issues/5

- find a way to set a maximum cost above which a query crashes, probably via the price callback

- anki_profile should be able to be a path

- store wdoc's version and indexing timestamp in the metadata of the document

- arg --oneoff that does not trigger the chat after replying. This avoids hogging all the RAM if run in multiple terminals, for example through SSH

- add a (high) token threshold above which two texts are not combined but just concatenated in the semantic order. It would avoid losing context. Use a --- separator

- compute the cost of whisper and deepgram

- use a pydantic basemodel for output instead of a dict

- same for summaries, it should at least contain the method to substitute the sources and then back

- investigate storing the vectors in a sqlite3 file

- make a plugin to llm that looks like file-to-prompt from simonw

- Always bind a user metadata to litellm for langfuse etc

- Add more metadata to each request to make langfuse more informative

- add a reranker to better sort the output of the retrievers. Right now with the multiquery it returns way too many and I'm thinking it might be a bad idea to just crop at top_k as I'm doing currently

- add a status argument that just outputs the logs location and size, the cache location and size, the number of documents etc

- add the python magic of the file as a file metadata

- add an env var to specify the threshold for relevant document by the query eval llm

- find a way to return the evaluations for each document also

- move retrievers.py in an embeddings folder

- stop using lambda functions in the chains because it makes the code barely readable

- when doing recursive summary: tell the model that if it's really sure that there are no modifications to do: it should just reply "EXIT" and it would save time and money instead of waiting for it to copy back the exact content

- add image parsing as base64 metadata from pdf

- use multiple small chains instead of one large and complicated and hard to maintain

- add an arg to bypass query combine, useful for small models

- tell the llm to write a special message if the parsing failed or we got a 404 or paywall etc

- catch this text and crash

- add check that all metadata is only made of int float and str

- move the code that filters embeddings inside the embeddings.py file

- this way we can dynamically refilter using the chat prompt

- task summary then query should keep in context both the full text and the summary

- if there's only one intermediate answer, pass it as answer without trying to recombine

- filter_metadata should support an OR syntax

- add a --show_models argument to display the list of available models

- add a way to open the documents automatically, based on platform dirs etc. For ex if okular is installed, open pdfs directly at the right page

- the best way would be to create opener.py that does a bit like loader but for all filetypes and platforms

- add an image filetype: it will be either OCR'd using format and/or will be captioned using a multimodal llm, for example gpt4o mini

- nanollava is a 0.5b that probably can be used for that with proper prompting

- add a key/val arg to specify the trust we have in a doc, call this metadata context in the prompt

- add an arg to return just the dict of all documents and embeddings. Notably useful to debug documents

- use a class for the cli prompt, instead of a dumb function

- arg to disable eval llm filtering

- just answer 1 directly if no eval llm is set

- display the number of documents and tokens in the bottom toolbar

- add a demo gif

- investigate asking the LLM to add leading emojis to the bullet point for quicker reading of summaries

- see how easy or hard it is to use an async chain

- ability to cap the searched documents by a number of tokens instead of a number of documents

- for anki, allow using a query instead of loading with ankipandas

- add a "try_all" filetype that will try each filetype and keep the first that works

- add bespoke-minicheck from ollama to fact check when using RAG: https://ollama.com/library/bespoke-minicheck

- or via their API directly : https://docs.bespokelabs.ai/bespoke-minicheck/api but they don't seem to properly disclose what they do with the data

- add a way to use a binary faiss index, as it's as efficient but faster and way more compact

Supported filetypes

- auto: default, guess the filetype for you

- url: try many ways to load a webpage, with heuristics to find the best parsed one

- youtube: text is then either from the yt subtitles / translation or even better: using whisper / deepgram. Note that youtube subtitles are downloaded with the timecode (so you can ask 'when does the author talk about such and such') but at a lower sampling frequency (instead of one timecode per second, only one per 15s). Youtube chapters are also given as context to the LLM when summarizing, which probably helps it a lot.

- pdf: 15 default loaders are implemented, heuristics are used to keep the best one and stop early. Table support via openparse or UnstructuredPDFLoader. Easy to add more.

- online_pdf: via URL then treated as a pdf (see above)

- anki: any subset of an anki collection db. alt and title of images can be shown to the LLM, meaning that if you used the ankiOCR addon this information will help contextualize the note for the LLM.

- string: the cli prompts you for a text so you can easily paste something, handy for paywalled articles!

- txt: .txt, markdown, etc

- text: send a text content directly as path

- local_html: useful for website dumps

- logseq_markdown: thanks to my other project, LogseqMarkdownParser, you can use your Logseq graph

- local_audio: supports many file formats, can use either OpenAI's whisper or deepgram's Nova-3 model. Supports automatically removing silence etc. Note: audio files that are too large for whisper (usually >25mb) are automatically split into smaller files, transcribed, then combined. Also, audio transcripts are converted to text containing timestamps at regular intervals, making it possible to ask the LLM when something was said.

- local_video: extract the audio then treat it as local_audio

- online_media: use youtube_dl to try to download videos/audio; if that fails, try to intercept good url candidates using playwright to load the page. Then processed as local_audio (but works with video too).

- epub: barely tested because epub is in general a poorly defined format

- powerpoint: .ppt, .pptx, .odp, ...

- word: .doc, .docx, .odt, ...

- json_dict: a text file containing a single json dict.

Recursive types

- youtube playlists: get the link for each video then process as youtube

- recursive_paths: turns a path, a regex pattern and a filetype into all the files found recursively, treated as the specified filetype (for example many PDFs or lots of HTML files etc).

- link_file: turns a text file where each line contains a url into appropriate loader arguments. Supports any link, so for example webpages, links to pdfs and youtube links can be in the same file. Handy for summarizing lots of things!

- json_entries: takes a path to a file where each line is a json dict containing arguments to use when loading. Example: load several other recursive types. An example can be found in docs/json_entries_example.json.

- toml_entries: read a .toml file. An example can be found in docs/toml_entries_example.toml.
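To make the json_entries format more concrete, here is a small sketch of how a file with one JSON dict per line could be turned into loader arguments. The function name is hypothetical and the keys in the usage example simply mirror the --path and --filetype arguments; the real example file in docs/json_entries_example.json remains the reference.

```python
import json
from typing import Dict, Iterable, Iterator


def iter_json_entries(lines: Iterable[str]) -> Iterator[Dict]:
    """Yield one dict of loader arguments per non-empty line."""
    for line_number, line in enumerate(lines, start=1):
        line = line.strip()
        if not line:
            continue  # tolerate blank lines between entries
        entry = json.loads(line)
        if not isinstance(entry, dict):
            raise ValueError(f"line {line_number}: expected a JSON object")
        yield entry


# Usage example with two illustrative entries of different filetypes.
example = [
    '{"path": "notes/lecture.pdf", "filetype": "pdf"}',
    '{"path": "https://example.com/article", "filetype": "url"}',
]
for arguments in iter_json_entries(example):
    print(arguments["filetype"], arguments["path"])
```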

Supported tasks

- query: give documents and ask questions about them.

- search: only returns the documents and their metadata. For anki it can be used to directly open cards in the browser.

- summarize: give documents and read a summary. The summary prompt can be found in utils/prompts.py.

- summarize_then_query: summarize the document then allow you to query directly about it.

Refer to examples.md.

wdoc was mainly developed on python 3.11.7 but I'm not sure which other versions work. When in doubt, make sure that your Python version matches this one.

- To install:

- Using pip: pip install -U wdoc

- Or to get a specific git branch:

- dev branch: pip install git+https://github.com/thiswillbeyourgithub/wdoc@dev

- main branch: pip install git+https://github.com/thiswillbeyourgithub/wdoc@main

- You can also use uvx or pipx. But as I'm not experienced with them I don't know if that can cause issues with, for example, caching. Do tell me if you tested it!

- Using uvx: uvx wdoc --help

- Using pipx: pipx run wdoc --help

- In any case, it is recommended to try to install pdftotext with pip install -U wdoc[pdftotext] as well as add fasttext support with pip install -U wdoc[fasttext].

- If you plan on contributing, you will also need wdoc[dev] for the commit hooks.

- Add the API key for the backend you want as an environment variable: for example export OPENAI_API_KEY="***my_key***"

- Launch is as easy as using wdoc --task=query --path=MYDOC [ARGS]

- If for some reason this fails, maybe try with python -m wdoc. And if everything fails, try with uvx wdoc@latest, or as a last resort clone this repo and try again after cd inside it. Don't hesitate to open an issue.

- To get shell autocompletion: if you're using zsh: eval $(cat shell_completions/wdoc_completion.zsh). Also provided for bash and fish. You can generate your own with wdoc -- --completion MYSHELL > my_completion_file.

- Don't forget that if you're using a lot of documents (notably via recursive filetypes) it can take a lot of time (depending on parallel processing too, but you then might run into memory errors).

- Take a look at examples.md for a list of shell and python examples.

- To ask questions about a local document: wdoc query --path="PATH/TO/YOUR/FILE" --filetype="auto"

- If you want to reduce the startup time by directly loading the embeddings from a previous run (although the embeddings are always cached anyway): add --saveas="some/path" to the previous command to save the generated embeddings to a file, and replace it with --loadfrom "some/path" on every subsequent call.

- For more: read the documentation at wdoc --help

- More to come in the scripts folder.

- Ntfy Summarizer: automatically summarize a document from your android phone using ntfy.sh.

- TheFiche: create summaries for specific notions directly as a logseq page.

- FilteredDeckCreator: directly create an anki filtered deck from the cards found by wdoc.

- Official Open-WebUI Tool, hosted here.

FAQ

Who is this for?

- wdoc is for power users who want document querying on steroids, and in-depth AI-powered document summaries.

What's RAG?

- A RAG system (retrieval augmented generation) is basically an LLM-powered search through a text corpus.

Why make another RAG system? Can't you use any of the others?

- I'm Olicorne, a medical student who needed a tool to ask medical questions from a lot (tens of thousands) of documents, of different types (epub, pdf, anki database, Logseq, website dump, youtube videos and playlists, recorded conferences, audio files, etc). Existing solutions couldn't handle this diversity and scale of content.

Why is wdoc better than most RAG systems to ask questions on documents?

- It uses both a strong and a query_eval LLM. After finding the appropriate documents using embeddings, the query_eval LLM is used to filter out the documents that don't seem to be about the question, then the strong LLM answers the question based on each remaining document, then combines them all in neat markdown. Also, wdoc is very customizable.

Can you use wdoc on wdoc's documentation?

- Yes of course! wdoc --task=query --path https://wdoc.readthedocs.io/en/latest/all_docs.html

Why can wdoc also produce summaries?

- I have little free time so I needed a tailor-made summary feature to keep up with the news. But most summary systems are rubbish: they just try to give you the high level takeaway points and don't properly handle text chunking. So I made my own tailor-made summarizer. The summary prompts can be found in utils/prompts.py and focus on extracting the arguments, reasoning and thought process of the author, then use markdown indented bullet points to make it easy to read. It's really good! The prompts dataclass is not frozen so you can provide your own prompt if you want.

What other tasks are supported by wdoc?

- See Supported tasks.

Which LLM providers are supported by wdoc?

- wdoc supports virtually any LLM provider thanks to litellm. It even supports local LLMs and local embeddings (see examples.md). The list of supported embedding engines can be found here but includes at least OpenAI (or any OpenAI API compatible models), Cohere, Azure, Bedrock, NVIDIA NIM, Hugging Face, Mistral, Ollama, Gemini, Vertex, Voyage.

What do you use wdoc for?

- I follow heterogeneous sources to keep up with the news: youtube, websites, etc. So thanks to wdoc I can automatically create awesome markdown summaries that end up straight in my Logseq database as a bunch of TODO blocks.

- I use it to ask technical questions to my vast heterogeneous corpus of medical knowledge.

- I use it to query my personal documents using the --private argument.

- I sometimes use it to summarize a document then go straight to asking questions about it, all in the same command.

- I use it to ask questions about entire youtube playlists.

- Other use cases are the reason I made the scripts made with wdoc section.

What's up with the name?

- One of my favorite characters (and somewhat of a role model) is Winston Wolf, and after much hesitation I decided WolfDoc would be too confusing and WinstonDoc sounds like something micro$oft would do. Also wd and wdoc were free, whereas doctools was already taken. The initial name of the project was DocToolsLLM, a play on words between 'doctor' and 'tool'.

How can I improve the prompt for a specific task without coding?

- Each prompt of the query task is roleplaying as an employee working for WDOC-CORP©, either as Eve the Evaluator (the LLM that filters out irrelevant documents), Anna the Answerer (the LLM that answers the question from a filtered document) or Carl the Combiner (the LLM that combines answers from the Answerer into one). There's also Sam the Summarizer for summaries and Raphael the Rephraser to expand your query. They all receive orders from you if you talk to them in a prompt.

How can I use wdoc's parser for my own documents?

- If you are in the shell cli you can easily use wdoc parse my_file.pdf (this actually calls wdoc_parse_file my_file.pdf instead). Add --format=langchain_dict to get the text and metadata as a list of dicts, otherwise you will only get the text. Other formats exist, including --format=xml to make it LLM friendly like files-to-prompt. If you're having problems with argument parsing you can try adding the --pipe argument.

- If you want the document using python:

from wdoc import wdoc
list_of_docs = wdoc.parse_file(path=my_path)

- Another example would be to use wdoc to parse an anki deck:

wdoc_parse_file --filetype "anki" --anki_profile "Main" --anki_deck "mydeck::subdeck1" --anki_notetype "my_notetype" --anki_template "<header>\n{header}\n</header>\n<body>\n{body}\n</body>\n<personal_notes>\n{more}\n</personal_notes>\n<tags>{tags}</tags>\n{image_ocr_alt}" --anki_tag_filter "a::tag::regex::.*something.*" --format=text

What should I do if my PDF are encrypted?

- If you're on linux you can try running

qpdf --decrypt input.pdf output.pdf- I made a quick and dirty batch script for in this repo

- If you're on linux you can try running
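For a whole folder of encrypted PDFs, the `qpdf --decrypt` call can be looped over. The following is a minimal sketch and not the batch script mentioned above: it assumes `qpdf` is installed and on the `PATH`, and that the files need no user password. The names `decrypt_commands`, `decrypt_folder` and the `_decrypted` suffix are hypothetical.

```python
from pathlib import Path
import subprocess


def decrypt_commands(folder, suffix="_decrypted"):
    """Build one `qpdf --decrypt` command per PDF found in `folder`."""
    commands = []
    for pdf in sorted(Path(folder).glob("*.pdf")):
        if pdf.stem.endswith(suffix):
            continue  # skip files produced by a previous run
        output = pdf.with_name(pdf.stem + suffix + ".pdf")
        commands.append(["qpdf", "--decrypt", str(pdf), str(output)])
    return commands


def decrypt_folder(folder):
    """Run every command; requires `qpdf` to be on the PATH."""
    for cmd in decrypt_commands(folder):
        result = subprocess.run(cmd)
        # qpdf exits with 0 on success and 3 when it only emitted warnings
        if result.returncode not in (0, 3):
            raise RuntimeError(f"qpdf failed on {cmd[2]}")
```

Building the commands separately from running them makes it easy to print and review what would be executed before touching any file.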

- How can I add my own pdf parser?

  - Write a python class and add it there: `wdoc.utils.loaders.pdf_loaders['parser_name']=parser_object` then call `wdoc` with `--pdf_parsers=parser_name`.
    - The class has to take a `path` argument in `__init__`, have a `load` method taking no argument but returning a `List[Document]`. Take a look at the `OpenparseDocumentParser` class for an example.
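The interface described above (a `path` argument in `__init__`, an argument-free `load` returning a `List[Document]`) can be sketched as follows. This is only an illustration: `PlainTextPdfParser` and the `plain_text` key are hypothetical names, the text extraction is a placeholder, and the fallback `Document` dataclass is a stand-in for LangChain's class so the sketch runs without LangChain installed. It also assumes that the class itself, rather than an instance, is what gets registered.

```python
from dataclasses import dataclass, field
from pathlib import Path
from typing import List

try:
    # wdoc is built on LangChain, so a real parser would return this class
    from langchain_core.documents import Document
except ImportError:
    # Stand-in with the same two fields, used only if LangChain is absent
    @dataclass
    class Document:
        page_content: str
        metadata: dict = field(default_factory=dict)


class PlainTextPdfParser:
    """Hypothetical parser: `path` in __init__, argument-free `load`."""

    def __init__(self, path):
        self.path = Path(path)

    def load(self) -> List[Document]:
        # Placeholder extraction: a real parser would decode the PDF here.
        text = self.path.read_text(errors="ignore")
        return [Document(page_content=text, metadata={"source": str(self.path)})]


# Registration as described in the answer above (commented out: needs wdoc):
# import wdoc
# wdoc.utils.loaders.pdf_loaders["plain_text"] = PlainTextPdfParser
# ...then call wdoc with --pdf_parsers=plain_text
```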

- What should I do if I keep hitting rate limits?

  - The simplest way is to add the `debug` argument. It will disable multithreading, multiprocessing and LLM concurrency. A less harsh alternative is to set the environment variable `WDOC_LLM_MAX_CONCURRENCY` to a lower value.
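When `wdoc` is launched from a script, the lower concurrency can be passed through the child process environment. A minimal sketch, assuming the variable takes an integer and is read when `wdoc` starts; `wdoc_env` is a hypothetical helper and the value `1` is only an example:

```python
import os


def wdoc_env(max_concurrency=1):
    """Return a copy of the current environment with a lower LLM concurrency."""
    env = dict(os.environ)  # copy, so the parent process is left untouched
    env["WDOC_LLM_MAX_CONCURRENCY"] = str(max_concurrency)
    return env


# Usage (commented out: requires wdoc to be installed):
# import subprocess
# subprocess.run(["wdoc", "parse", "my_file.pdf"], env=wdoc_env(1))
```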

- How can I run the tests?

  - Try `python -m pytest tests/test_wdoc.py -v -m basic` to run the basic tests, and `python -m pytest tests/test_wdoc.py -v -m api` to run the tests that use external APIs. To install the needed packages you can do `uv pip install wdoc[dev]`.

- How can I query a text but without chunking? / How can I query a text with the full text as context?

  - If you set the environment variable `WDOC_MAX_CHUNK_SIZE` to a very high value and use a model with enough context according to litellm's metadata, then no chunking will happen and the LLM will have the full text as context.

- Is there a way to use `wdoc` with Open-WebUI?

  - Yes! I am maintaining an official Open-WebUI Tool which is hosted here.

Alternative AI tools for wdoc

Similar Open Source Tools

wdoc

wdoc is a powerful Retrieval-Augmented Generation (RAG) system designed to summarize, search, and query documents across various file types. It aims to handle large volumes of diverse document types, making it ideal for researchers, students, and professionals dealing with extensive information sources. wdoc uses LangChain to process and analyze documents, supporting tens of thousands of documents simultaneously. The system includes features like high recall and specificity, support for various Language Model Models (LLMs), advanced RAG capabilities, advanced document summaries, and support for multiple tasks. It offers markdown-formatted answers and summaries, customizable embeddings, extensive documentation, scriptability, and runtime type checking. wdoc is suitable for power users seeking document querying capabilities and AI-powered document summaries.

WDoc

WDoc is a powerful Retrieval-Augmented Generation (RAG) system designed to summarize, search, and query documents across various file types. It supports querying tens of thousands of documents simultaneously, offers tailored summaries to efficiently manage large amounts of information, and includes features like supporting multiple file types, various LLMs, local and private LLMs, advanced RAG capabilities, advanced summaries, trust verification, markdown formatted answers, sophisticated embeddings, extensive documentation, scriptability, type checking, lazy imports, caching, fast processing, shell autocompletion, notification callbacks, and more. WDoc is ideal for researchers, students, and professionals dealing with extensive information sources.

lovelaice

Lovelaice is an AI-powered assistant for your terminal and editor. It can run bash commands, search the Internet, answer general and technical questions, complete text files, chat casually, execute code in various languages, and more. Lovelaice is configurable with API keys and LLM models, and can be used for a wide range of tasks requiring bash commands or coding assistance. It is designed to be versatile, interactive, and helpful for daily tasks and projects.

feedgen

FeedGen is an open-source tool that uses Google Cloud's state-of-the-art Large Language Models (LLMs) to improve product titles, generate more comprehensive descriptions, and fill missing attributes in product feeds. It helps merchants and advertisers surface and fix quality issues in their feeds using Generative AI in a simple and configurable way. The tool relies on GCP's Vertex AI API to provide both zero-shot and few-shot inference capabilities on GCP's foundational LLMs. With few-shot prompting, users can customize the model's responses towards their own data, achieving higher quality and more consistent output. FeedGen is an Apps Script based application that runs as an HTML sidebar in Google Sheets, allowing users to optimize their feeds with ease.

noScribe

noScribe is an AI-based software designed for automated audio transcription, specifically tailored for transcribing interviews for qualitative social research or journalistic purposes. It is a free and open-source tool that runs locally on the user's computer, ensuring data privacy. The software can differentiate between speakers and supports transcription in 99 languages. It includes a user-friendly editor for reviewing and correcting transcripts. Developed by Kai Dröge, a PhD in sociology with a background in computer science, noScribe aims to streamline the transcription process and enhance the efficiency of qualitative analysis.

RAGMeUp

RAG Me Up is a generic framework that enables users to perform Retrieve and Generate (RAG) on their own dataset easily. It consists of a small server and UIs for communication. Best run on GPU with 16GB vRAM. Users can combine RAG with fine-tuning using LLaMa2Lang repository. The tool allows configuration for LLM, data, LLM parameters, prompt, and document splitting. Funding is sought to democratize AI and advance its applications.

brokk

Brokk is a code assistant designed to understand code semantically, allowing LLMs to work effectively on large codebases. It offers features like agentic search, summarizing related classes, parsing stack traces, adding source for usages, and autonomously fixing errors. Users can interact with Brokk through different panels and commands, enabling them to manipulate context, ask questions, search codebase, run shell commands, and more. Brokk helps with tasks like debugging regressions, exploring codebase, AI-powered refactoring, and working with dependencies. It is particularly useful for making complex, multi-file edits with o1pro.

RAGMeUp

RAG Me Up is a generic framework that enables users to perform Retrieve, Answer, Generate (RAG) on their own dataset easily. It consists of a small server and UIs for communication. The tool can run on CPU but is optimized for GPUs with at least 16GB of vRAM. Users can combine RAG with fine-tuning using the LLaMa2Lang repository. The tool provides a configurable RAG pipeline without the need for coding, utilizing indexing and inference steps to accurately answer user queries.

gpt-subtrans

GPT-Subtrans is an open-source subtitle translator that utilizes large language models (LLMs) as translation services. It supports translation between any language pairs that the language model supports. Note that GPT-Subtrans requires an active internet connection, as subtitles are sent to the provider's servers for translation, and their privacy policy applies.

llm-subtrans

LLM-Subtrans is an open source subtitle translator that utilizes LLMs as a translation service. It supports translating subtitles between any language pairs supported by the language model. The application offers multiple subtitle formats support through a pluggable system, including .srt, .ssa/.ass, and .vtt files. Users can choose to use the packaged release for easy usage or install from source for more control over the setup. The tool requires an active internet connection as subtitles are sent to translation service providers' servers for translation.

blurt

Blurt is a Gnome shell extension that enables accurate speech-to-text input in Linux. It is based on the command line utility NoteWhispers and supports Gnome shell version 48. Users can transcribe speech using a local whisper.cpp installation or a whisper.cpp server. The extension allows for easy setup, start/stop of speech-to-text input with key bindings or icon click, and provides visual indicators during operation. It offers convenience by enabling speech input into any window that allows text input, with the transcribed text sent to the clipboard for easy pasting.

serena

Serena is a powerful coding agent that integrates with existing LLMs to provide essential semantic code retrieval and editing tools. It is free to use and does not require API keys or subscriptions. Serena can be used for coding tasks such as analyzing, planning, and editing code directly on your codebase. It supports various programming languages and offers semantic code analysis capabilities through language servers. Serena can be integrated with different LLMs using the model context protocol (MCP) or Agno framework. The tool provides a range of functionalities for code retrieval, editing, and execution, making it a versatile coding assistant for developers.

warc-gpt

WARC-GPT is an experimental retrieval augmented generation pipeline for web archive collections. It allows users to interact with WARC files, extract text, generate text embeddings, visualize embeddings, and interact with a web UI and API. The tool is highly customizable, supporting various LLMs, providers, and embedding models. Users can configure the application using environment variables, ingest WARC files, start the server, and interact with the web UI and API to search for content and generate text completions. WARC-GPT is designed for exploration and experimentation in exploring web archives using AI.

aici

The Artificial Intelligence Controller Interface (AICI) lets you build Controllers that constrain and direct output of a Large Language Model (LLM) in real time. Controllers are flexible programs capable of implementing constrained decoding, dynamic editing of prompts and generated text, and coordinating execution across multiple, parallel generations. Controllers incorporate custom logic during the token-by-token decoding and maintain state during an LLM request. This allows diverse Controller strategies, from programmatic or query-based decoding to multi-agent conversations to execute efficiently in tight integration with the LLM itself.

MARS5-TTS

MARS5 is a novel English speech model (TTS) developed by CAMB.AI, featuring a two-stage AR-NAR pipeline with a unique NAR component. The model can generate speech for various scenarios like sports commentary and anime with just 5 seconds of audio and a text snippet. It allows steering prosody using punctuation and capitalization in the transcript. Speaker identity is specified using an audio reference file, enabling 'deep clone' for improved quality. The model can be used via torch.hub or HuggingFace, supporting both shallow and deep cloning for inference. Checkpoints are provided for AR and NAR models, with hardware requirements of 750M+450M params on GPU. Contributions to improve model stability, performance, and reference audio selection are welcome.

For similar tasks

document-ai-samples

The Google Cloud Document AI Samples repository contains code samples and Community Samples demonstrating how to analyze, classify, and search documents using Google Cloud Document AI. It includes various projects showcasing different functionalities such as integrating with Google Drive, processing documents using Python, content moderation with Dialogflow CX, fraud detection, language extraction, paper summarization, tax processing pipeline, and more. The repository also provides access to test document files stored in a publicly-accessible Google Cloud Storage Bucket. Additionally, there are codelabs available for optical character recognition (OCR), form parsing, specialized processors, and managing Document AI processors. Community samples, like the PDF Annotator Sample, are also included. Contributions are welcome, and users can seek help or report issues through the repository's issues page. Please note that this repository is not an officially supported Google product and is intended for demonstrative purposes only.

step-free-api

The StepChat Free service provides high-speed streaming output, multi-turn dialogue support, online search support, long document interpretation, and image parsing. It offers zero-configuration deployment, multi-token support, and automatic session trace cleaning. It is fully compatible with the ChatGPT interface. Additionally, it provides seven other free APIs for various services. The repository includes a disclaimer about using reverse APIs and encourages users to avoid commercial use to prevent service pressure on the official platform. It offers online testing links, showcases different demos, and provides deployment guides for Docker, Docker-compose, Render, Vercel, and native deployments. The repository also includes information on using multiple accounts, optimizing Nginx reverse proxy, and checking the liveliness of refresh tokens.

unilm

The 'unilm' repository is a collection of tools, models, and architectures for Foundation Models and General AI, focusing on tasks such as NLP, MT, Speech, Document AI, and Multimodal AI. It includes various pre-trained models, such as UniLM, InfoXLM, DeltaLM, MiniLM, AdaLM, BEiT, LayoutLM, WavLM, VALL-E, and more, designed for tasks like language understanding, generation, translation, vision, speech, and multimodal processing. The repository also features toolkits like s2s-ft for sequence-to-sequence fine-tuning and Aggressive Decoding for efficient sequence-to-sequence decoding. Additionally, it offers applications like TrOCR for OCR, LayoutReader for reading order detection, and XLM-T for multilingual NMT.

searchGPT

searchGPT is an open-source project that aims to build a search engine based on Large Language Model (LLM) technology to provide natural language answers. It supports web search with real-time results, file content search, and semantic search from sources like the Internet. The tool integrates LLM technologies such as OpenAI and GooseAI, and offers an easy-to-use frontend user interface. The project is designed to provide grounded answers by referencing real-time factual information, addressing the limitations of LLM's training data. Contributions, especially from frontend developers, are welcome under the MIT License.

LLMs-at-DoD

This repository contains tutorials for using Large Language Models (LLMs) in the U.S. Department of Defense. The tutorials utilize open-source frameworks and LLMs, allowing users to run them in their own cloud environments. The repository is maintained by the Defense Digital Service and welcomes contributions from users.

LARS

LARS is an application that enables users to run Large Language Models (LLMs) locally on their devices, upload their own documents, and engage in conversations where the LLM grounds its responses with the uploaded content. The application focuses on Retrieval Augmented Generation (RAG) to increase accuracy and reduce AI-generated inaccuracies. LARS provides advanced citations, supports various file formats, allows follow-up questions, provides full chat history, and offers customization options for LLM settings. Users can force enable or disable RAG, change system prompts, and tweak advanced LLM settings. The application also supports GPU-accelerated inferencing, multiple embedding models, and text extraction methods. LARS is open-source and aims to be the ultimate RAG-centric LLM application.

EAGLE

Eagle is a family of Vision-Centric High-Resolution Multimodal LLMs that enhance multimodal LLM perception using a mix of vision encoders and various input resolutions. The model features a channel-concatenation-based fusion for vision experts with different architectures and knowledge, supporting up to over 1K input resolution. It excels in resolution-sensitive tasks like optical character recognition and document understanding.

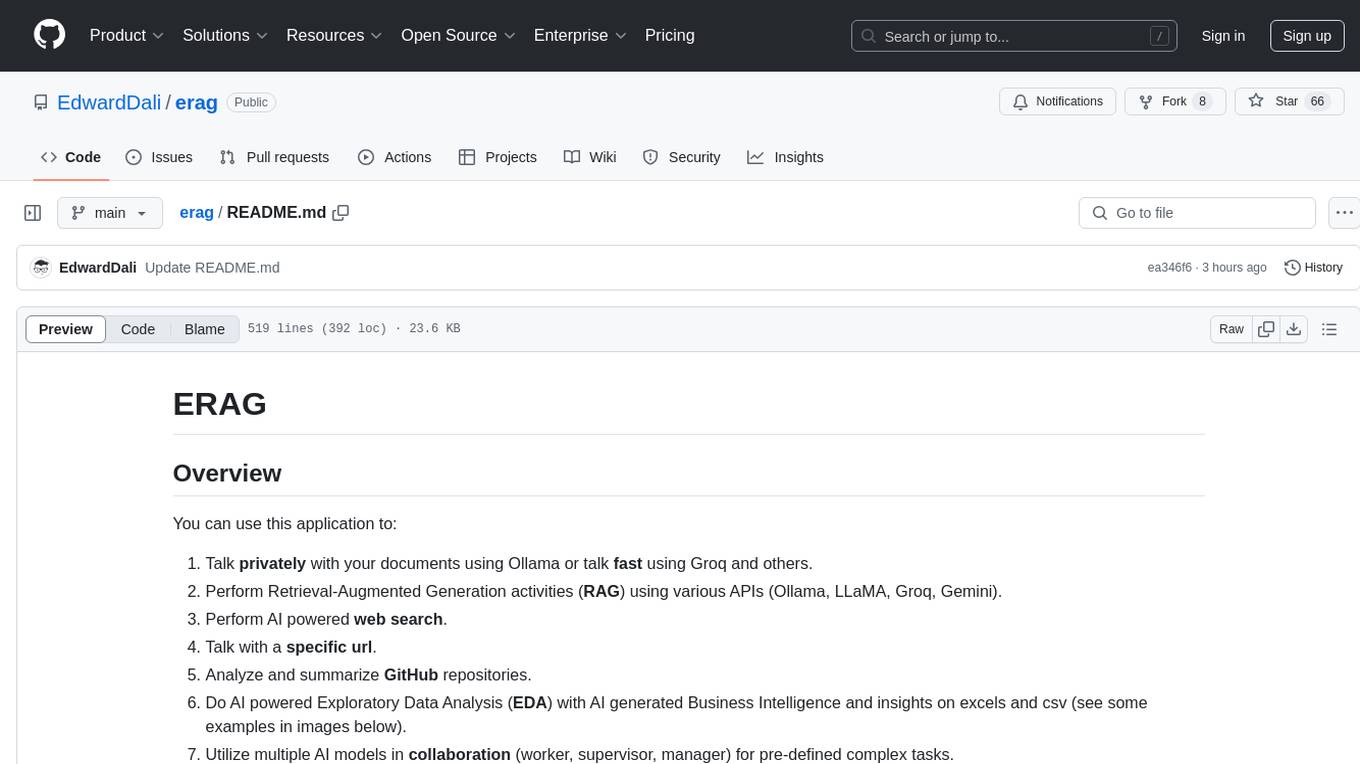

erag

ERAG is an advanced system that combines lexical, semantic, text, and knowledge graph searches with conversation context to provide accurate and contextually relevant responses. This tool processes various document types, creates embeddings, builds knowledge graphs, and uses this information to answer user queries intelligently. It includes modules for interacting with web content, GitHub repositories, and performing exploratory data analysis using various language models.

For similar jobs

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.