lance

Modern columnar data format for ML and LLMs implemented in Rust. Convert from parquet in 2 lines of code for 100x faster random access, vector index, and data versioning. Compatible with Pandas, DuckDB, Polars, Pyarrow, and PyTorch with more integrations coming..

Stars: 5425

Lance is a modern columnar data format optimized for ML workflows and datasets. It offers high-performance random access, vector search, zero-copy automatic versioning, and ecosystem integrations with Apache Arrow, Pandas, Polars, and DuckDB. Lance is designed to address the challenges of the ML development cycle, providing a unified data format for collection, exploration, analytics, feature engineering, training, evaluation, deployment, and monitoring. It aims to reduce data silos and streamline the ML development process.

README:

Modern columnar data format for ML. Convert from Parquet in 2-lines of code for 100x faster random access, zero-cost schema evolution, rich secondary indices, versioning, and more.

Compatible with Pandas, DuckDB, Polars, Pyarrow, and Ray with more integrations on the way.

Documentation • Blog • Discord • X

Lance is a modern columnar data format that is optimized for ML workflows and datasets. Lance is perfect for:

- Building search engines and feature stores.

- Large-scale ML training requiring high performance IO and shuffles.

- Storing, querying, and inspecting deeply nested data for robotics or large blobs like images, point clouds, and more.

The key features of Lance include:

-

High-performance random access: 100x faster than Parquet without sacrificing scan performance.

-

Vector search: find nearest neighbors in milliseconds and combine OLAP-queries with vector search.

-

Zero-copy, automatic versioning: manage versions of your data without needing extra infrastructure.

-

Ecosystem integrations: Apache Arrow, Pandas, Polars, DuckDB, Ray, Spark and more on the way.

[!TIP] Lance is in active development and we welcome contributions. Please see our contributing guide for more information.

Installation

pip install pylanceTo install a preview release:

pip install --pre --extra-index-url https://pypi.fury.io/lancedb/ pylance[!TIP] Preview releases are released more often than full releases and contain the latest features and bug fixes. They receive the same level of testing as full releases. We guarantee they will remain published and available for download for at least 6 months. When you want to pin to a specific version, prefer a stable release.

Converting to Lance

import lance

import pandas as pd

import pyarrow as pa

import pyarrow.dataset

df = pd.DataFrame({"a": [5], "b": [10]})

uri = "/tmp/test.parquet"

tbl = pa.Table.from_pandas(df)

pa.dataset.write_dataset(tbl, uri, format='parquet')

parquet = pa.dataset.dataset(uri, format='parquet')

lance.write_dataset(parquet, "/tmp/test.lance")Reading Lance data

dataset = lance.dataset("/tmp/test.lance")

assert isinstance(dataset, pa.dataset.Dataset)Pandas

df = dataset.to_table().to_pandas()

dfDuckDB

import duckdb

# If this segfaults, make sure you have duckdb v0.7+ installed

duckdb.query("SELECT * FROM dataset LIMIT 10").to_df()Vector search

Download the sift1m subset

wget ftp://ftp.irisa.fr/local/texmex/corpus/sift.tar.gz

tar -xzf sift.tar.gzConvert it to Lance

import lance

from lance.vector import vec_to_table

import numpy as np

import struct

nvecs = 1000000

ndims = 128

with open("sift/sift_base.fvecs", mode="rb") as fobj:

buf = fobj.read()

data = np.array(struct.unpack("<128000000f", buf[4 : 4 + 4 * nvecs * ndims])).reshape((nvecs, ndims))

dd = dict(zip(range(nvecs), data))

table = vec_to_table(dd)

uri = "vec_data.lance"

sift1m = lance.write_dataset(table, uri, max_rows_per_group=8192, max_rows_per_file=1024*1024)Build the index

sift1m.create_index("vector",

index_type="IVF_PQ",

num_partitions=256, # IVF

num_sub_vectors=16) # PQSearch the dataset

# Get top 10 similar vectors

import duckdb

dataset = lance.dataset(uri)

# Sample 100 query vectors. If this segfaults, make sure you have duckdb v0.7+ installed

sample = duckdb.query("SELECT vector FROM dataset USING SAMPLE 100").to_df()

query_vectors = np.array([np.array(x) for x in sample.vector])

# Get nearest neighbors for all of them

rs = [dataset.to_table(nearest={"column": "vector", "k": 10, "q": q})

for q in query_vectors]| Directory | Description |

|---|---|

| rust | Core Rust implementation |

| python | Python bindings (PyO3) |

| java | Java bindings (JNI) |

| docs | Documentation source |

Here we will highlight a few aspects of Lance’s design. For more details, see the full Lance design document.

Vector index: Vector index for similarity search over embedding space.

Support both CPUs (x86_64 and arm) and GPU (Nvidia (cuda) and Apple Silicon (mps)).

Encodings: To achieve both fast columnar scan and sub-linear point queries, Lance uses custom encodings and layouts.

Nested fields: Lance stores each subfield as a separate column to support efficient filters like “find images where detected objects include cats”.

Versioning: A Manifest can be used to record snapshots. Currently we support creating new versions automatically via appends, overwrites, and index creation.

Fast updates (ROADMAP): Updates will be supported via write-ahead logs.

Rich secondary indices: Support BTree, Bitmap, Full text search, Label list,

NGrams, and more.

We used the SIFT dataset to benchmark our results with 1M vectors of 128D

- For 100 randomly sampled query vectors, we get <1ms average response time (on a 2023 m2 MacBook Air)

- ANNs are always a trade-off between recall and performance

We create a Lance dataset using the Oxford Pet dataset to do some preliminary performance testing of Lance as compared to Parquet and raw image/XMLs. For analytics queries, Lance is 50-100x better than reading the raw metadata. For batched random access, Lance is 100x better than both parquet and raw files.

The machine learning development cycle involves the steps:

graph LR

A[Collection] --> B[Exploration];

B --> C[Analytics];

C --> D[Feature Engineer];

D --> E[Training];

E --> F[Evaluation];

F --> C;

E --> G[Deployment];

G --> H[Monitoring];

H --> A;People use different data representations to varying stages for the performance or limited by the tooling available. Academia mainly uses XML / JSON for annotations and zipped images/sensors data for deep learning, which is difficult to integrate into data infrastructure and slow to train over cloud storage. While industry uses data lakes (Parquet-based techniques, i.e., Delta Lake, Iceberg) or data warehouses (AWS Redshift or Google BigQuery) to collect and analyze data, they have to convert the data into training-friendly formats, such as Rikai/Petastorm or TFRecord. Multiple single-purpose data transforms, as well as syncing copies between cloud storage to local training instances have become a common practice.

While each of the existing data formats excels at the workload it was originally designed for, we need a new data format tailored for multistage ML development cycles to reduce and data silos.

A comparison of different data formats in each stage of ML development cycle.

| Lance | Parquet & ORC | JSON & XML | TFRecord | Database | Warehouse | |

|---|---|---|---|---|---|---|

| Analytics | Fast | Fast | Slow | Slow | Decent | Fast |

| Feature Engineering | Fast | Fast | Decent | Slow | Decent | Good |

| Training | Fast | Decent | Slow | Fast | N/A | N/A |

| Exploration | Fast | Slow | Fast | Slow | Fast | Decent |

| Infra Support | Rich | Rich | Decent | Limited | Rich | Rich |

Lance is currently used in production by:

- LanceDB, a serverless, low-latency vector database for ML applications

- LanceDB Enterprise, hyperscale LanceDB with enterprise SLA.

- Leading multimodal Gen AI companies for training over petabyte-scale multimodal data.

- Self-driving car company for large-scale storage, retrieval and processing of multi-modal data.

- E-commerce company for billion-scale+ vector personalized search.

- and more.

- Designing a Table Format for ML Workloads, Feb 2025.

- Transforming Multimodal Data Management with LanceDB, Ray Summit, Oct 2024.

- Lance v2: A columnar container format for modern data, Apr 2024.

- Lance Deep Dive. July 2023.

- Lance: A New Columnar Data Format, Scipy 2022, Austin, TX. July, 2022.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for lance

Similar Open Source Tools

lance

Lance is a modern columnar data format optimized for ML workflows and datasets. It offers high-performance random access, vector search, zero-copy automatic versioning, and ecosystem integrations with Apache Arrow, Pandas, Polars, and DuckDB. Lance is designed to address the challenges of the ML development cycle, providing a unified data format for collection, exploration, analytics, feature engineering, training, evaluation, deployment, and monitoring. It aims to reduce data silos and streamline the ML development process.

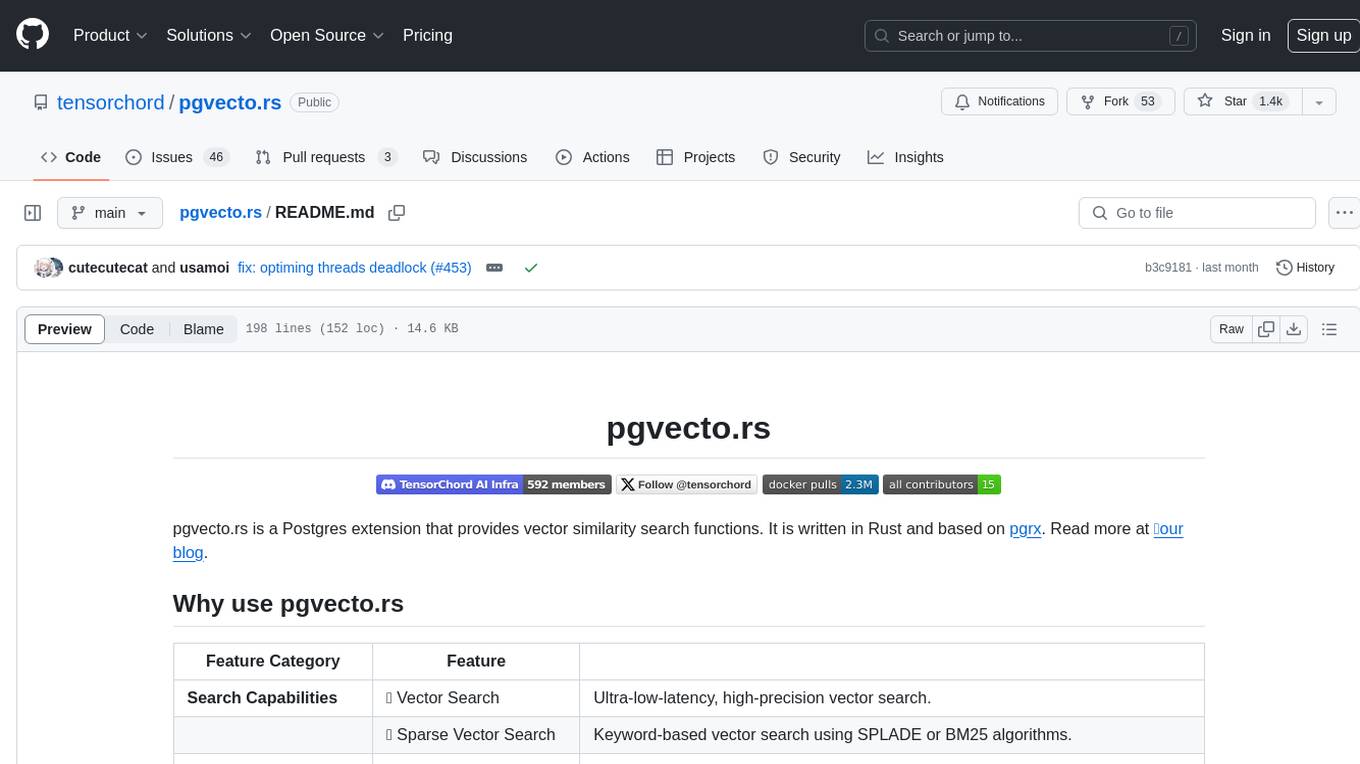

pgvecto.rs

pgvecto.rs is a Postgres extension written in Rust that provides vector similarity search functions. It offers ultra-low-latency, high-precision vector search capabilities, including sparse vector search and full-text search. With complete SQL support, async indexing, and easy data management, it simplifies data handling. The extension supports various data types like FP16/INT8, binary vectors, and Matryoshka embeddings. It ensures system performance with production-ready features, high availability, and resource efficiency. Security and permissions are managed through easy access control. The tool allows users to create tables with vector columns, insert vector data, and calculate distances between vectors using different operators. It also supports half-precision floating-point numbers for better performance and memory usage optimization.

evidently

Evidently is an open-source Python library designed for evaluating, testing, and monitoring machine learning (ML) and large language model (LLM) powered systems. It offers a wide range of functionalities, including working with tabular, text data, and embeddings, supporting predictive and generative systems, providing over 100 built-in metrics for data drift detection and LLM evaluation, allowing for custom metrics and tests, enabling both offline evaluations and live monitoring, and offering an open architecture for easy data export and integration with existing tools. Users can utilize Evidently for one-off evaluations using Reports or Test Suites in Python, or opt for real-time monitoring through the Dashboard service.

rl

TorchRL is an open-source Reinforcement Learning (RL) library for PyTorch. It provides pytorch and **python-first** , low and high level abstractions for RL that are intended to be **efficient** , **modular** , **documented** and properly **tested**. The code is aimed at supporting research in RL. Most of it is written in python in a highly modular way, such that researchers can easily swap components, transform them or write new ones with little effort.

curator

Bespoke Curator is an open-source tool for data curation and structured data extraction. It provides a Python library for generating synthetic data at scale, with features like programmability, performance optimization, caching, and integration with HuggingFace Datasets. The tool includes a Curator Viewer for dataset visualization and offers a rich set of functionalities for creating and refining data generation strategies.

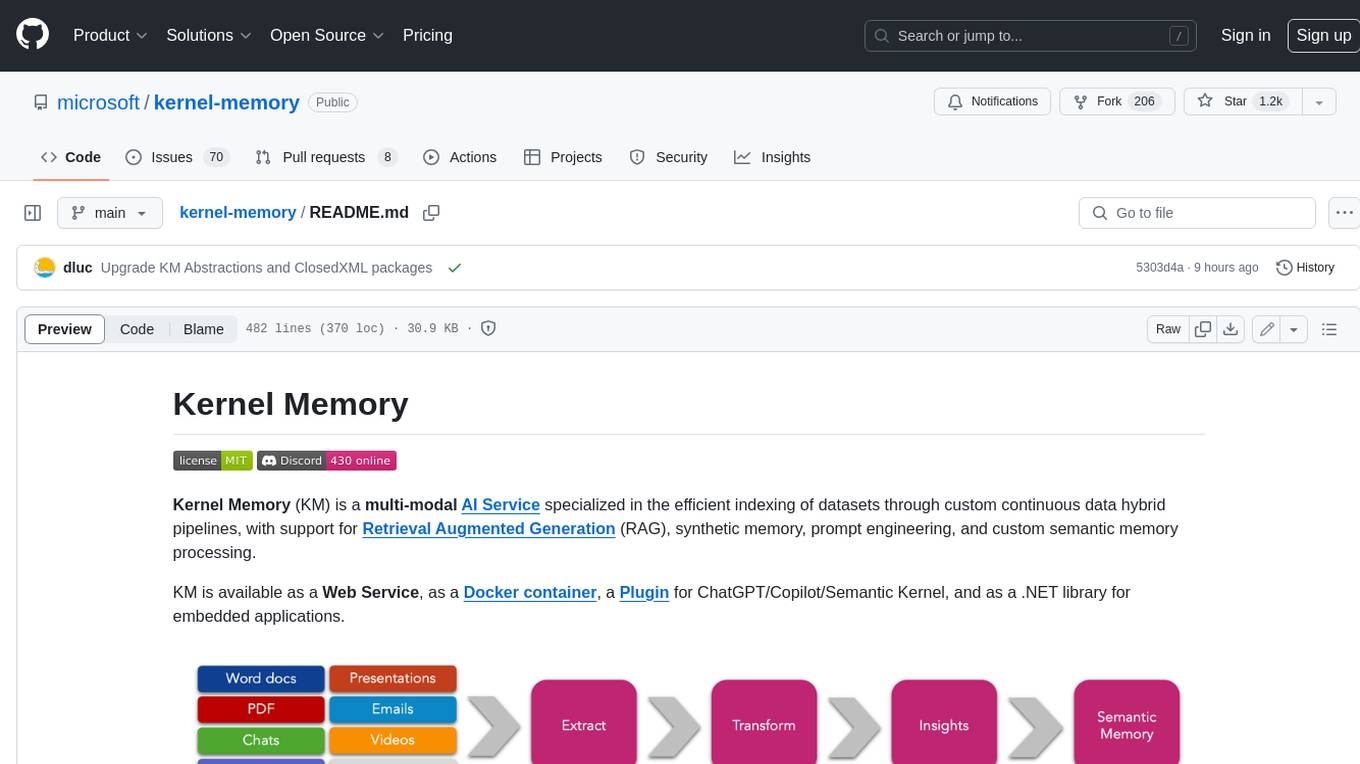

kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

catalyst

Catalyst is a C# Natural Language Processing library designed for speed, inspired by spaCy's design. It provides pre-trained models, support for training word and document embeddings, and flexible entity recognition models. The library is fast, modern, and pure-C#, supporting .NET standard 2.0. It is cross-platform, running on Windows, Linux, macOS, and ARM. Catalyst offers non-destructive tokenization, named entity recognition, part-of-speech tagging, language detection, and efficient binary serialization. It includes pre-built models for language packages and lemmatization. Users can store and load models using streams. Getting started with Catalyst involves installing its NuGet Package and setting the storage to use the online repository. The library supports lazy loading of models from disk or online. Users can take advantage of C# lazy evaluation and native multi-threading support to process documents in parallel. Training a new FastText word2vec embedding model is straightforward, and Catalyst also provides algorithms for fast embedding search and dimensionality reduction.

lmnr

Laminar is an all-in-one open-source platform designed for engineering AI products. It allows users to trace, evaluate, label, and analyze LLM data efficiently. The platform offers features such as automatic tracing of common AI frameworks and SDKs, local and online evaluations, simple UI for data labeling, dataset management, and scalability with gRPC communication. Laminar is built with a modern open-source stack including RabbitMQ, Postgres, Clickhouse, and Qdrant for semantic similarity search. It provides fast and beautiful dashboards for traces, evaluations, and labels, making it a comprehensive tool for AI product development.

CodeGeeX4

CodeGeeX4-ALL-9B is an open-source multilingual code generation model based on GLM-4-9B, offering enhanced code generation capabilities. It supports functions like code completion, code interpreter, web search, function call, and repository-level code Q&A. The model has competitive performance on benchmarks like BigCodeBench and NaturalCodeBench, outperforming larger models in terms of speed and performance.

WordLlama

WordLlama is a fast, lightweight NLP toolkit optimized for CPU hardware. It recycles components from large language models to create efficient word representations. It offers features like Matryoshka Representations, low resource requirements, binarization, and numpy-only inference. The tool is suitable for tasks like semantic matching, fuzzy deduplication, ranking, and clustering, making it a good option for NLP-lite tasks and exploratory analysis.

superlinked

Superlinked is a compute framework for information retrieval and feature engineering systems, focusing on converting complex data into vector embeddings for RAG, Search, RecSys, and Analytics stack integration. It enables custom model performance in machine learning with pre-trained model convenience. The tool allows users to build multimodal vectors, define weights at query time, and avoid postprocessing & rerank requirements. Users can explore the computational model through simple scripts and python notebooks, with a future release planned for production usage with built-in data infra and vector database integrations.

superduperdb

SuperDuperDB is a Python framework for integrating AI models, APIs, and vector search engines directly with your existing databases, including hosting of your own models, streaming inference and scalable model training/fine-tuning. Build, deploy and manage any AI application without the need for complex pipelines, infrastructure as well as specialized vector databases, and moving our data there, by integrating AI at your data's source: - Generative AI, LLMs, RAG, vector search - Standard machine learning use-cases (classification, segmentation, regression, forecasting recommendation etc.) - Custom AI use-cases involving specialized models - Even the most complex applications/workflows in which different models work together SuperDuperDB is **not** a database. Think `db = superduper(db)`: SuperDuperDB transforms your databases into an intelligent platform that allows you to leverage the full AI and Python ecosystem. A single development and deployment environment for all your AI applications in one place, fully scalable and easy to manage.

Eco2AI

Eco2AI is a python library for CO2 emission tracking that monitors energy consumption of CPU & GPU devices and estimates equivalent carbon emissions based on regional emission coefficients. Users can easily integrate Eco2AI into their Python scripts by adding a few lines of code. The library records emissions data and device information in a local file, providing detailed session logs with project names, experiment descriptions, start times, durations, power consumption, CO2 emissions, CPU and GPU names, operating systems, and countries.

HuixiangDou

HuixiangDou is a **group chat** assistant based on LLM (Large Language Model). Advantages: 1. Design a two-stage pipeline of rejection and response to cope with group chat scenario, answer user questions without message flooding, see arxiv2401.08772 2. Low cost, requiring only 1.5GB memory and no need for training 3. Offers a complete suite of Web, Android, and pipeline source code, which is industrial-grade and commercially viable Check out the scenes in which HuixiangDou are running and join WeChat Group to try AI assistant inside. If this helps you, please give it a star ⭐

swiftide

Swiftide is a fast, streaming indexing and query library tailored for Retrieval Augmented Generation (RAG) in AI applications. It is built in Rust, utilizing parallel, asynchronous streams for blazingly fast performance. With Swiftide, users can easily build AI applications from idea to production in just a few lines of code. The tool addresses frustrations around performance, stability, and ease of use encountered while working with Python-based tooling. It offers features like fast streaming indexing pipeline, experimental query pipeline, integrations with various platforms, loaders, transformers, chunkers, embedders, and more. Swiftide aims to provide a platform for data indexing and querying to advance the development of automated Large Language Model (LLM) applications.

mflux

MFLUX is a line-by-line port of the FLUX implementation in the Huggingface Diffusers library to Apple MLX. It aims to run powerful FLUX models from Black Forest Labs locally on Mac machines. The codebase is minimal and explicit, prioritizing readability over generality and performance. Models are implemented from scratch in MLX, with tokenizers from the Huggingface Transformers library. Dependencies include Numpy and Pillow for image post-processing. Installation can be done using `uv tool` or classic virtual environment setup. Command-line arguments allow for image generation with specified models, prompts, and optional parameters. Quantization options for speed and memory reduction are available. LoRA adapters can be loaded for fine-tuning image generation. Controlnet support provides more control over image generation with reference images. Current limitations include generating images one by one, lack of support for negative prompts, and some LoRA adapters not working.

For similar tasks

lance

Lance is a modern columnar data format optimized for ML workflows and datasets. It offers high-performance random access, vector search, zero-copy automatic versioning, and ecosystem integrations with Apache Arrow, Pandas, Polars, and DuckDB. Lance is designed to address the challenges of the ML development cycle, providing a unified data format for collection, exploration, analytics, feature engineering, training, evaluation, deployment, and monitoring. It aims to reduce data silos and streamline the ML development process.

ai-powered-search

AI-Powered Search provides code examples for the book 'AI-Powered Search' by Trey Grainger, Doug Turnbull, and Max Irwin. The book teaches modern machine learning techniques for building search engines that continuously learn from users and content to deliver more intelligent and domain-aware search experiences. It covers semantic search, retrieval augmented generation, question answering, summarization, fine-tuning transformer-based models, personalized search, machine-learned ranking, click models, and more. The code examples are in Python, leveraging PySpark for data processing and Apache Solr as the default search engine. The repository is open source under the Apache License, Version 2.0.

RAGHub

RAGHub is a community-driven project focused on cataloging new and emerging frameworks, projects, and resources in the Retrieval-Augmented Generation (RAG) ecosystem. It aims to help users stay ahead of changes in the field by providing a platform for the latest innovations in RAG. The repository includes information on RAG frameworks, evaluation frameworks, optimization frameworks, citation frameworks, engines, search reranker frameworks, projects, resources, and real-world use cases across industries and professions.

For similar jobs

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.