DB-GPT

An LLM-Based Diagnosis System (https://arxiv.org/pdf/2312.01454.pdf)

Stars: 480

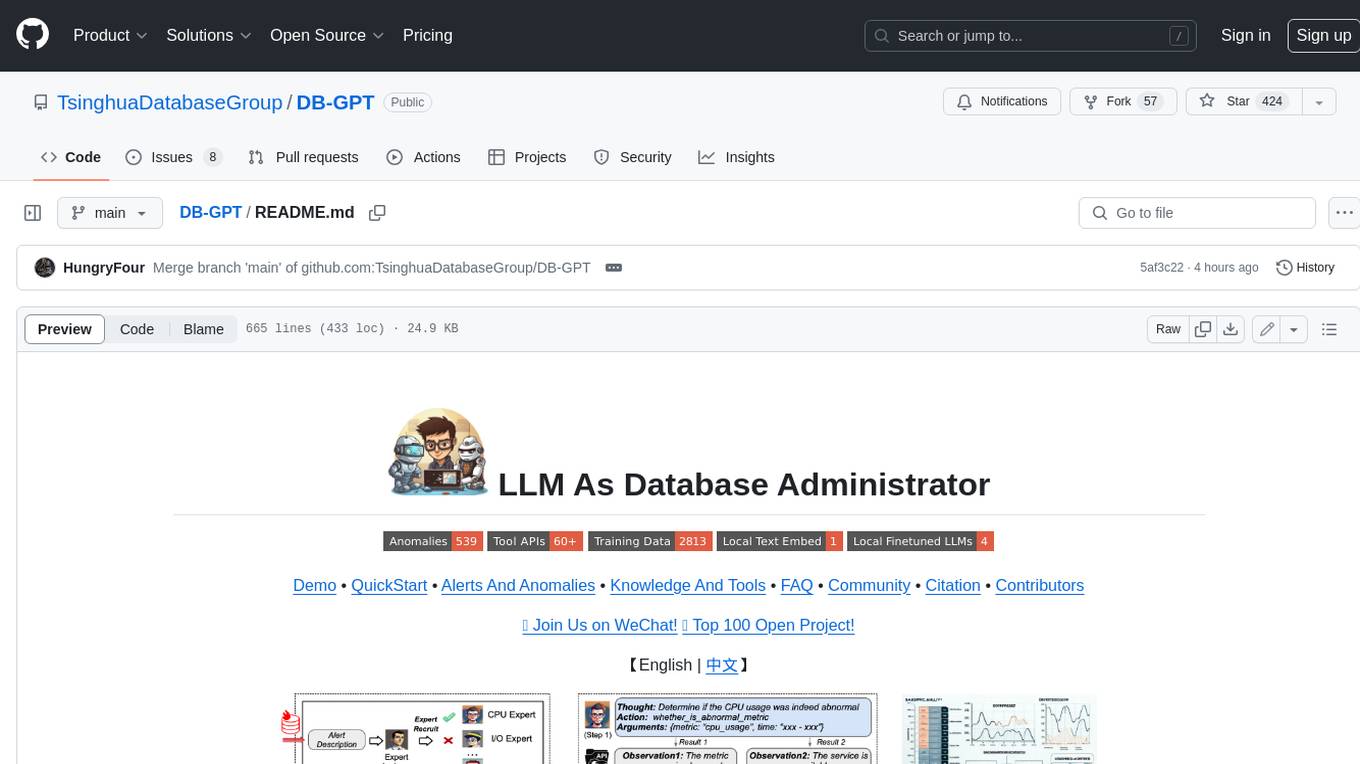

DB-GPT is a personal database administrator that can solve database problems by reading documents, using various tools, and writing analysis reports. It is currently undergoing an upgrade.

**Features:**

* **Online Demo:**
  * Import documents into the knowledge base
  * Use the knowledge base for well-founded Q&A and diagnosis analysis of abnormal alarms
  * Send feedback to refine the intermediate diagnosis results
  * Edit the diagnosis result
  * Browse all historical diagnosis results, used metrics, and detailed diagnosis processes
* **Language Support:**
  * English (default)
  * Chinese (add "language: zh" in config.yaml)
* **New Frontend:**
  * Knowledgebase + Chat Q&A + Diagnosis + Report Replay
* **Extreme Speed Version for localized LLMs:**
  * 4-bit quantized LLM (reducing inference time by 1/3)
  * vllm for fast inference (qwen)
  * Tiny LLM
* **Multi-path extraction of document knowledge:**
  * Vector database (ChromaDB)
  * RESTful Search Engine (Elasticsearch)
* **Expert prompt generation using document knowledge**
* **Upgrade the LLM-based diagnosis mechanism:**
  * Task Dispatching -> Concurrent Diagnosis -> Cross Review -> Report Generation
  * Synchronous Concurrency Mechanism during LLM inference
* **Support monitoring and optimization tools in multiple levels:**
  * Monitoring metrics (Prometheus)
  * Flame graph in code level
  * Diagnosis knowledge retrieval (dbmind)
  * Logical query transformations (Calcite)
  * Index optimization algorithms (for PostgreSQL)
  * Physical operator hints (for PostgreSQL)
  * Backup and Point-in-time Recovery (Pigsty)
* **Continuously updated papers and experimental reports**

This project is constantly evolving with new features. Don't forget to star ⭐ and watch 👀 to stay up to date.

README:


👫 Join Us on WeChat! 🏆 Top 100 Open Project! 🌟 VLDB 2024!


🦾 Build your personal database administrator (D-Bot)🧑‍💻, which is good at solving database problems by reading documents, using various tools, and writing analysis reports! Undergoing an upgrade!

- After launching the local service (adopting frontend and configs from Chatchat), you can easily import documents into the knowledge base, utilize the knowledge base for well-founded Q&A and diagnosis analysis of abnormal alarms.

- With the user feedback function 🔗, you can (1) send feedback to make D-Bot follow and refine the intermediate diagnosis results, and (2) edit the diagnosis result by clicking the “Edit” button. D-Bot can accumulate refinement patterns from user feedback (stored in a vector database) and adaptively align with each user's diagnosis preferences.

- On the online website (http://dbgpt.dbmind.cn), you can browse all historical diagnosis results, used metrics, and detailed diagnosis processes.
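The feedback loop described above stores refinement patterns in a vector database and reuses the closest ones when a new diagnosis is refined. The following is a minimal sketch of that nearest-pattern lookup, assuming each pattern is stored with a precomputed embedding. The in-memory list, the toy 3-dimensional vectors, and the helper names `cosine` and `top_patterns` are hypothetical stand-ins, not D-Bot's storage layer or embedding model.

```python
import math

# Hypothetical stand-in for the vector database of refinement patterns.
# Embeddings here are toy 3-dimensional vectors, not real model output.

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def top_patterns(query_vec: list[float], patterns: list[dict], k: int = 1) -> list[str]:
    """Return the texts of the k stored patterns most similar to query_vec."""
    ranked = sorted(patterns, key=lambda p: cosine(query_vec, p["embedding"]), reverse=True)
    return [p["text"] for p in ranked[:k]]

patterns = [
    {"text": "Check lock waits before blaming CPU", "embedding": [0.9, 0.1, 0.0]},
    {"text": "Inspect missing indexes on hot tables", "embedding": [0.0, 0.2, 0.9]},
]

# A new diagnosis whose (toy) embedding points mostly along the third axis.
print(top_patterns([0.1, 0.1, 0.8], patterns))  # ['Inspect missing indexes on hot tables']
```

Cosine similarity compares direction rather than magnitude, a common choice for sentence embeddings; a real vector database performs this nearest-neighbour ranking at scale and may use a different distance metric.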

Old Version 1: [Gradio for Diag Game] (no langchain)

Old Version 2: [Vue for Report Replay] (no langchain)

- [ ] Docker for a quick and safe use of D-Bot
  - [x] Metric Monitoring (prometheus), Database (postgres_db), Alert (alertmanager) and Alert Recording (python_app).
  - [ ] D-bot (still too large, with over 12GB)
- [ ] Human Feedback 🔥🔥🔥
  - [x] Test-based Diagnosis Refinement with User Feedback
  - [x] Refinement Patterns Extraction & Management
- [ ] Language Support (english / chinese)
  - [x] english : default
  - [x] chinese : add "language: zh" in config.yaml
- [ ] New Frontend
  - [x] Knowledgebase + Chat Q&A + Diagnosis + Report Replay
- [x] Result Report with reference
- [ ] Extreme Speed Version for localized llms
  - [x] 4-bit quantized LLM (reducing inference time by 1/3)
  - [x] vllm for fast inference (qwen)
  - [ ] Tiny LLM
- [x] Multi-path extraction of document knowledge
  - [x] Vector database (ChromaDB)
  - [x] RESTful Search Engine (Elasticsearch)
- [x] Expert prompt generation using document knowledge
- [ ] Upgrade the LLM-based diagnosis mechanism:
  - [x] Task Dispatching -> Concurrent Diagnosis -> Cross Review -> Report Generation
  - [ ] Synchronous Concurrency Mechanism during LLM inference
- [ ] Support monitoring and optimization tools in multiple levels
  - [x] Monitoring metrics (Prometheus)
  - [ ] Flame graph in code level
  - [x] Diagnosis knowledge retrieval (dbmind)
  - [x] Logical query transformations (Calcite)
  - [x] Index optimization algorithms (for PostgreSQL)
  - [x] Physical operator hints (for PostgreSQL)
  - [ ] Backup and Point-in-time Recovery (Pigsty)
- [x] Papers and experimental reports are continuously updated
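The upgraded diagnosis mechanism in the list above chains Task Dispatching, Concurrent Diagnosis, Cross Review, and Report Generation. The skeleton below is a hypothetical sketch of how such a flow can be wired with `asyncio`; the expert names, the routing table `ROUTES`, and the stub review logic are invented for illustration, and real agents would call LLMs and tools instead of returning fixed strings.

```python
import asyncio

# Hypothetical skeleton of: Task Dispatching -> Concurrent Diagnosis
# -> Cross Review -> Report Generation. Experts and logic are stubs.

ROUTES = {"cpu": ["CpuExpert", "WorkloadExpert"], "io": ["IoExpert", "IndexExpert"]}

def dispatch(alert: dict) -> list[str]:
    """Task dispatching: choose expert roles from the alert's metric (stub routing table)."""
    return ROUTES.get(alert["metric"], ["WorkloadExpert"])

async def diagnose(expert: str, alert: dict) -> str:
    """Concurrent diagnosis: one expert; a real agent would await LLM and tool calls here."""
    await asyncio.sleep(0)  # yield to the event loop like an awaited network call
    return f"{expert}: candidate root cause for {alert['metric']} alert"

def cross_review(findings: list[str]) -> list[str]:
    """Cross review (stub): annotate each finding with how many peers examined it."""
    peers = len(findings) - 1
    return [f"{finding} [reviewed by {peers} peer(s)]" for finding in findings]

def generate_report(reviewed: list[str]) -> str:
    """Report generation: join reviewed findings into a plain-text report."""
    return "\n".join(["Diagnosis report:"] + [f"- {line}" for line in reviewed])

async def run_pipeline(alert: dict) -> str:
    experts = dispatch(alert)
    # gather() runs all expert coroutines concurrently and keeps their order.
    findings = await asyncio.gather(*(diagnose(e, alert) for e in experts))
    return generate_report(cross_review(list(findings)))

print(asyncio.run(run_pipeline({"metric": "cpu"})))
```

Because `asyncio.gather` returns results in the order the coroutines were passed, the report stays deterministic even though the diagnoses run concurrently.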

This project is evolving with new features 👫👫

Don't forget to star ⭐ and watch 👀 to stay up to date :)

- First, ensure that your machine has Python (>= 3.10) installed.

$ python --version

Python 3.10.12

- Next, create a virtual environment and install the dependencies for the project within it.

# Clone the repository

$ git clone https://github.com/TsinghuaDatabaseGroup/DB-GPT.git

# Enter the directory

$ cd DB-GPT

# Install all dependencies

$ pip3 install -r requirements.txt

$ pip3 install -r requirements_api.txt # If you only run the API, installing the API dependencies in requirements_api.txt is sufficient

# Default dependencies include the basic runtime environment (Chroma-DB vector library). If you want to use other vector libraries, uncomment the respective dependencies in requirements.txt before installation. If installing google-colab fails, try: conda install -c conda-forge google-colab

- PostgreSQL v12 (we developed and tested against PostgreSQL v12 and do not guarantee compatibility with other versions of PostgreSQL)

Ensure your database supports remote connections.

Moreover, install extensions like pg_stat_statements (track frequent queries), pg_hint_plan (optimize physical operators), and hypopg (create hypothetical indexes).

Note that pg_stat_statements accumulates query statistics over time, so you need to clear the statistics regularly: 1) to discard all statistics, execute "SELECT pg_stat_statements_reset();"; 2) to discard statistics for a specific query, execute "SELECT pg_stat_statements_reset(userid, dbid, queryid);".
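When the reset is scripted (for example, before each diagnosis run), the two statements above can be wrapped in a small helper. This is a minimal sketch, assuming a DB-API driver with `%s` placeholders such as psycopg2; `reset_stats_sql` is a hypothetical name. It relies on the PostgreSQL 12+ behaviour in which an argument of 0 matches any value, so an omitted identifier widens the reset rather than failing.

```python
# Hypothetical helper (name and shape are illustrative) that builds the
# pg_stat_statements reset call for a DB-API driver using %s placeholders,
# such as psycopg2. In PostgreSQL 12+, an argument of 0 matches any value,
# so leaving an identifier at 0 widens the reset to all values of it.

def reset_stats_sql(userid: int = 0, dbid: int = 0, queryid: int = 0) -> tuple[str, tuple]:
    """Return (sql, params) for discarding all statistics or a filtered subset."""
    if (userid, dbid, queryid) == (0, 0, 0):
        # Discard all statistics.
        return "SELECT pg_stat_statements_reset();", ()
    # Explicit casts match the function's (oid, oid, bigint) signature.
    return (
        "SELECT pg_stat_statements_reset(%s::oid, %s::oid, %s::bigint);",
        (userid, dbid, queryid),
    )

# With a live psycopg2 connection `conn` (not created here):
#   sql, params = reset_stats_sql(queryid=1234567890)
#   with conn.cursor() as cur:
#       cur.execute(sql, params)
#   conn.commit()
print(reset_stats_sql())
print(reset_stats_sql(10, 16384, 42))
```

By default, only superusers may call `pg_stat_statements_reset`, so the connecting role may need an explicit grant.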

- (optional) If you need to run this project locally or in an offline environment, first download the required models to your local machine and then adapt the configuration accordingly.

- Download the model parameters of Sentence Transformer

Create a new directory ./multiagents/localized_llms/sentence_embedding/

Place the downloaded sentence-transformer.zip in the ./multiagents/localized_llms/sentence_embedding/ directory and unzip the archive.

- Download LLM and embedding models from HuggingFace.

To download models, first install Git LFS, then run:

$ git lfs install
$ git clone https://huggingface.co/moka-ai/m3e-base
$ git clone https://huggingface.co/Qwen/Qwen-1_8B-Chat

- Adapt the model configuration to the downloaded model paths, e.g.,

EMBEDDING_MODEL = "m3e-base"
LLM_MODELS = ["Qwen-1_8B-Chat"]
MODEL_PATH = {
    "embed_model": {
        "m3e-base": "m3e-base",  # Download path of the embedding model.
    },
    "llm_model": {
        "Qwen-1_8B-Chat": "Qwen-1_8B-Chat",  # Download path of the LLM.
    },
}

- Download and configure localized LLMs.
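Before launching the services, a quick pre-flight check can confirm that every entry in `MODEL_PATH` points at a downloaded model directory. The script below is a hypothetical helper, not part of DB-GPT; it assumes each path is either relative to the project root or absolute, as in the example configuration.

```python
from pathlib import Path

# Hypothetical pre-flight check (not part of DB-GPT). MODEL_PATH mirrors the
# example configuration; replace it with the values from model_config.py.
MODEL_PATH = {
    "embed_model": {"m3e-base": "m3e-base"},
    "llm_model": {"Qwen-1_8B-Chat": "Qwen-1_8B-Chat"},
}

def missing_models(model_path: dict, root: str = ".") -> list[str]:
    """List 'group/name -> path' entries whose model directory does not exist."""
    missing = []
    for group, models in model_path.items():
        for name, location in models.items():
            # Joining an absolute `location` onto root yields `location` itself.
            path = Path(root) / location
            if not path.is_dir():
                missing.append(f"{group}/{name} -> {path}")
    return missing

if __name__ == "__main__":
    problems = missing_models(MODEL_PATH)
    for entry in problems:
        print("not found:", entry)
    print("all model paths found" if not problems else f"{len(problems)} model path(s) missing")
```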

- Ensure that your machine has Node (>= 18.15.0)

$ node -v

v18.15.0

Install pnpm and dependencies

cd webui

# pnpm website: https://pnpm.io/zh/motivation

# install dependencies (pnpm is recommended)

# you can use "npm -g i pnpm" to install pnpm

pnpm install

- Copy the configuration files

$ python copy_config_example.py

# The generated configuration files are in the configs/ directory

# basic_config.py is the basic configuration file; no modification is needed.

# diagnose_config.py is the diagnostic configuration file; modify it according to your environment.

# kb_config.py is the knowledge base configuration file; you can modify DEFAULT_VS_TYPE to specify the vector store of the knowledge base, or modify related paths.

# model_config.py is the model configuration file; you can modify LLM_MODELS to specify the models used. The current model configuration mainly serves knowledge base search; diagnosis-related models are still hardcoded and will be unified here later.

# prompt_config.py is the prompt configuration file, mainly for LLM dialogue and knowledge base prompts.

# server_config.py is the server configuration file, mainly for server port numbers, etc.

!!! Attention: modify the following configurations before initializing the knowledge base; otherwise, the database initialization may fail.

- model_config.py

# EMBEDDING_MODEL: the vectorization (embedding) model. If you choose a local model, download it to the root directory as required.

# LLM_MODELS: the LLMs. If you choose a local model, download it to the root directory as required.

# ONLINE_LLM_MODEL: if you use an online model, modify this configuration.

- server_config.py

# WEBUI_SERVER.api_base_url: pay attention to this parameter; if you deploy the project on a server, you need to modify it.

- In diagnose_config.py, we set config.yaml as the default LLM expert configuration file.

DIAGNOSTIC_CONFIG_FILE = "config.yaml"

- To enable interactive diagnosis refinement with user feedback, you can set

DIAGNOSTIC_CONFIG_FILE = "config_feedback.yaml"

- To enable diagnosis in Chinese with Qwen, you can set

DIAGNOSTIC_CONFIG_FILE = "config_qwen.yaml"

- Initialize the knowledge base

$ python init_database.py --recreate-vs

- Start the project with the following command

$ python startup.py -a

If started correctly, you will see the following interfaces:

- FastAPI Docs Interface

- Web UI Launch Interface Examples:

- Web UI Knowledge Base Management Page:

- Web UI Conversation Interface:

- Web UI Diagnostic Page:

Save time by trying out the docker deployment.

- (optional) Enable the slow query log in PostgreSQL.

(1) For "systemctl restart postgresql", the service name may differ (e.g., postgresql-12.service);

(2) Use an absolute log path, like "log_directory = '/var/lib/pgsql/12/data/log'";

(3) Set "log_line_prefix = '%m [%p] [%d]'" in postgresql.conf (to record the database name of each query).
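With `log_line_prefix = '%m [%p] [%d]'`, each slow-query line carries a timestamp, a process ID, and a database name, so queries from different databases can be told apart. The parser below is a minimal sketch, assuming slow statements are logged through `log_min_duration_statement`, which writes `duration: ... ms  statement: ...`. The sample line is made up, and `parse_slow_line` is a hypothetical helper. Because the prefix as written ends without a space, the pattern accepts both `[db]LOG:` and `[db] LOG:`.

```python
import re
from typing import Optional

# Assumes log_line_prefix = '%m [%p] [%d]' and slow statements logged via
# log_min_duration_statement ("LOG:  duration: <ms> ms  statement: <sql>").
SLOW_LINE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3} \S+) "  # %m (ms timestamp + zone)
    r"\[(?P<pid>\d+)\] "                                         # [%p]
    r"\[(?P<db>[^\]]*)\]\s*"                                     # [%d], space optional
    r"LOG:\s+duration: (?P<ms>\d+(?:\.\d+)?) ms\s+statement: (?P<sql>.*)$"
)

def parse_slow_line(line: str) -> Optional[dict]:
    """Return timestamp, pid, database, duration (ms) and SQL, or None for other lines."""
    m = SLOW_LINE.match(line)
    if not m:
        return None
    return {
        "ts": m["ts"],
        "pid": int(m["pid"]),
        "db": m["db"],
        "ms": float(m["ms"]),
        "sql": m["sql"],
    }

# Made-up sample line for illustration.
sample = "2024-03-05 10:15:42.123 UTC [12345] [tpcc] LOG:  duration: 1532.118 ms  statement: SELECT * FROM orders"
print(parse_slow_line(sample))
```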

- (optional) Prometheus

Check prometheus.md for detailed installation guides.

We put multiple test cases under the test_cases folder. You can select a case file on the front-end page for diagnosis or use the command line:

python3 run_diagnose.py --anomaly_file ./test_cases/testing_cases_5.json --config_file config.yaml

Check out how to deploy prometheus and alertmanager in prometheus_service_docker.

- You can also get started quickly by using our Docker setup (docker deployment)

We provide scripts that trigger typical anomalies (anomalies directory) using highly concurrent operations (e.g., inserts, deletes, updates) in combination with specific test benches.

Single Root Cause Anomalies:

Execute the following command to trigger a single type of anomaly with customized parameters:

python anomaly_trigger/main.py --anomaly MISSING_INDEXES --threads 100 --ncolumn 20 --colsize 100 --nrow 20000

Parameters:

- --anomaly: Specifies the type of anomaly to trigger.
- --threads: Sets the number of concurrent clients.
- --ncolumn: Defines the number of columns.
- --colsize: Determines the size of each column (in bytes).
- --nrow: Indicates the number of rows.
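To make the parameters concrete, the sketch below mirrors the documented flags with `argparse` and estimates how much raw column data a run creates. It is a hypothetical reconstruction for illustration, not the project's `anomaly_trigger/main.py`, and the helper `raw_payload_bytes` ignores PostgreSQL's per-row headers, alignment padding, and TOAST.

```python
import argparse

# Hypothetical mirror of the documented flags (not the real anomaly_trigger/main.py).
def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(description="Illustrative anomaly trigger CLI")
    parser.add_argument("--anomaly", required=True, help="anomaly type, e.g. MISSING_INDEXES")
    parser.add_argument("--threads", type=int, required=True, help="number of concurrent clients")
    parser.add_argument("--ncolumn", type=int, required=True, help="number of columns")
    parser.add_argument("--colsize", type=int, required=True, help="size of each column in bytes")
    parser.add_argument("--nrow", type=int, required=True, help="number of rows")
    return parser

def raw_payload_bytes(args: argparse.Namespace) -> int:
    """Column data only: ncolumn * colsize * nrow (no row headers, padding, or TOAST)."""
    return args.ncolumn * args.colsize * args.nrow

args = build_parser().parse_args(
    "--anomaly MISSING_INDEXES --threads 100 --ncolumn 20 --colsize 100 --nrow 20000".split()
)
print(args.anomaly, args.threads, raw_payload_bytes(args))  # MISSING_INDEXES 100 40000000
```

With the example values, the payload is 20 × 100 × 20,000 = 40,000,000 bytes (about 40 MB) before storage overhead, which can help when scaling `--nrow` or `--colsize` up or down.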

Multiple Root Cause Anomalies:

To trigger anomalies caused by multiple factors, use the following command:

python anomaly_trigger/multi_anomalies.py

Modify the script as needed to simulate different types of anomalies.

Check detailed use cases at http://dbgpt.dbmind.cn.

Click to check 29 typical anomalies together with expert analysis (supported by the DBMind team)

(Basic version by Zui Chen)

(1) If you only need simple document splitting, you can directly use the document import function in the "Knowledge Base Management Page".

(2) We require the document itself to have chapter format information, and currently only support the docx format.

Step 1. Configure the ROOT_DIR_NAME path in ./doc2knowledge/doc_to_section.py and store all docx format documents in ROOT_DIR_NAME.

Step 2. Configure OPENAI_API_KEY.

export OPENAI_API_KEY=XXXXX

Step 3. Split the documents into separate chapter files by chapter index.

cd doc2knowledge/

python doc_to_section.py

Step 4. Modify the parameters in the doc2knowledge.py script and run it:

python doc2knowledge.py

Step 5. With the extracted knowledge, you can visualize the clustering results:

python knowledge_clustering.py
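Step 3 depends on the chapter structure of each docx file. The function below is a hypothetical sketch of that idea, not the code in `doc_to_section.py`: it groups paragraphs under the most recent top-level heading, assuming headings use Word's built-in "Heading 1" style.

```python
# Hypothetical sketch of chapter splitting (not the code in doc_to_section.py).
# Input: (style_name, text) pairs for each paragraph of a docx file.

def split_by_heading(paragraphs: list[tuple[str, str]], level: int = 1) -> dict[str, list[str]]:
    """Group non-empty paragraphs under the most recent 'Heading <level>' paragraph.

    Paragraphs that appear before the first such heading are dropped.
    """
    heading_style = f"Heading {level}"
    sections: dict[str, list[str]] = {}
    current = None
    for style, text in paragraphs:
        text = text.strip()
        if style == heading_style:
            current = text
            sections.setdefault(current, [])
        elif current is not None and text:
            sections[current].append(text)
    return sections

doc = [
    ("Normal", "Preface text"),
    ("Heading 1", "1 Lock Contention"),
    ("Normal", "Long transactions hold row locks."),
    ("Heading 2", "1.1 Detection"),
    ("Normal", "Look for sessions waiting on locks."),
    ("Heading 1", "2 Missing Indexes"),
    ("Normal", "Sequential scans on large tables."),
]
for title, body in split_by_heading(doc).items():
    print(title, "->", body)
```

With python-docx installed, input of this shape can be produced with `[(p.style.name, p.text) for p in docx.Document(path).paragraphs]`. Text before the first heading is dropped, which is one reason documents without chapter formatting split poorly.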

- Tool APIs (for optimization)

| Module | Functions |
| --- | --- |
| index_selection (equipped) | heuristic algorithm |
| query_rewrite (equipped) | 45 rules |
| physical_hint (equipped) | 15 parameters |

For functions within [query_rewrite, physical_hint], you can use the api_test.py script to verify their effectiveness.

If a function actually works, append it to the api.py of the corresponding module.

We use the db2advis heuristic algorithm to recommend indexes for given workloads. The function API is optimize_index_selection.
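The db2advis approach, from the DB2 Index Advisor, ranks candidate indexes by estimated benefit per unit of storage and adds them greedily until a storage budget is used up. The sketch below shows only that greedy core under stated assumptions. The candidate names, benefits, and sizes are made-up numbers; in practice, benefits would come from optimizer cost estimates (for example, hypothetical indexes created via hypopg). This is not the project's `optimize_index_selection`.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str       # index definition, e.g. "orders(customer_id)"
    benefit: float  # estimated workload cost reduction (illustrative units)
    size: int       # estimated index size in MB; must be > 0

def greedy_select(candidates: list[Candidate], budget: int) -> list[str]:
    """Take candidates in descending benefit/size order while they fit in the budget."""
    chosen, used = [], 0
    for c in sorted(candidates, key=lambda c: c.benefit / c.size, reverse=True):
        if c.benefit > 0 and used + c.size <= budget:
            chosen.append(c.name)
            used += c.size
    return chosen

# Made-up estimates for three candidate indexes.
cands = [
    Candidate("orders(customer_id)", benefit=900.0, size=30),            # ratio 30
    Candidate("orders(created_at)", benefit=400.0, size=40),             # ratio 10
    Candidate("lineitem(order_id, part_id)", benefit=1080.0, size=120),  # ratio 9
]
print(greedy_select(cands, budget=100))  # ['orders(customer_id)', 'orders(created_at)']
```

Ranking by benefit/size instead of raw benefit favours small, high-impact indexes. Here, the large `lineitem` index has the highest raw benefit but is skipped at a budget of 100 because it no longer fits after the two cheaper ones.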

You can use docker for a quick and safe use of the monitoring platform and database.

Refer to tutorials (e.g., on CentOS) for installing Docker and Docker-Compose.

We use docker-compose to build and manage multiple containers for metric monitoring (prometheus), alert (alertmanager), database (postgres_db), and alert recording (python_app).

cd prometheus_service_docker

docker-compose -p prometheus_service -f docker-compose.yml up --build

Next time you start the prometheus_service, you can directly execute "docker-compose -p prometheus_service -f docker-compose.yml up" without rebuilding the containers.

Configure the settings in anomaly_trigger/utils/database.py (e.g., replace "host" with the IP address of the server) and execute an anomaly generation command, like:

cd anomaly_trigger

python3 main.py --anomaly MISSING_INDEXES --threads 100 --ncolumn 20 --colsize 100 --nrow 20000

You may need to modify argument values like "--threads 100" if no alert is recorded after execution.

After receiving a request sent to http://127.0.0.1:8023/alert from prometheus_service, the alert summary will be recorded in prometheus_and_db_docker/alert_history.txt.

This way, you can use an alert marked as "resolved" as a new anomaly (under the ./diagnostic_files directory) for diagnosis by D-Bot.
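Alertmanager delivers alerts to a webhook receiver (such as the one at http://127.0.0.1:8023/alert) as JSON with an `alerts` list, where each alert has a `status` of `firing` or `resolved`. The helper below is a hypothetical sketch of selecting resolved alerts for diagnosis. It is not the project's `python_app`; the sample payload, the alert names, and the chosen output fields are illustrative.

```python
import json

# Hypothetical helper (not the project's python_app): pick resolved alerts out of an
# Alertmanager webhook payload so they can be saved as diagnosis inputs.
def resolved_alerts(payload: dict) -> list[dict]:
    """Keep alerts whose status is 'resolved', with fields useful for a diagnosis."""
    out = []
    for alert in payload.get("alerts", []):
        if alert.get("status") == "resolved":
            labels = alert.get("labels", {})
            out.append({
                "alertname": labels.get("alertname"),
                "instance": labels.get("instance"),
                "startsAt": alert.get("startsAt"),
                "endsAt": alert.get("endsAt"),
                "summary": alert.get("annotations", {}).get("summary"),
            })
    return out

# Made-up payload in Alertmanager's webhook shape.
body = json.loads("""{
  "status": "resolved",
  "alerts": [
    {"status": "resolved", "labels": {"alertname": "NodeLoadHigh", "instance": "db:9100"},
     "annotations": {"summary": "load high"}, "startsAt": "2024-03-05T10:00:00Z",
     "endsAt": "2024-03-05T10:05:00Z"},
    {"status": "firing", "labels": {"alertname": "DiskFull"}, "annotations": {}}
  ]
}""")
print(resolved_alerts(body))
```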

🤨 The '.sh' script command cannot be executed on Windows.

Switch the shell to *git bash* or use *git bash* to execute the '.sh' script.

🤨 "No module named 'xxx'" on Windows.

This error is caused by issues with the Python runtime environment path. Perform the following steps:

Step 1: Check Environment Variables.

You must add the Python "Scripts" directory to the environment variables.

Step 2: Check IDE Settings.

For VS Code, install the Python extension. For PyCharm, specify the Python version for the current project.

- Project cleaning
- Support more anomalies
- Support more knowledge sources
- Query log option (it may take up disk space, so we need to consider it carefully)
- Add more communication mechanisms
- Prometheus-as-a-Service
- Localized model that reaches D-bot(gpt4)'s capability
- Support other databases (e.g., mysql/redis)

https://github.com/OpenBMB/AgentVerse

https://github.com/Vonng/pigsty

https://github.com/UKPLab/sentence-transformers

https://github.com/chatchat-space/Langchain-Chatchat

https://github.com/shreyashankar/spade-experiments

Feel free to cite us if you like this project.

@misc{zhou2023llm4diag,
  title={D-Bot: Database Diagnosis System using Large Language Models},
  author={Xuanhe Zhou and Guoliang Li and Zhaoyan Sun and Zhiyuan Liu and Weize Chen and Jianming Wu and Jiesi Liu and Ruohang Feng and Guoyang Zeng},
  year={2023},
  eprint={2312.01454},
  archivePrefix={arXiv},
  primaryClass={cs.DB}
}

@article{zhou2023dbgpt,
  title={DB-GPT: Large Language Model Meets Database},
  author={Xuanhe Zhou and Zhaoyan Sun and Guoliang Li},
  year={2023},
  journal={Data Science and Engineering}
}

Other Collaborators: Wei Zhou, Kunyi Li.

We thank all the contributors to this project. Do not hesitate if you would like to get involved or contribute!

👏🏻 Welcome to our WeChat group!


Alternative AI tools for DB-GPT

Similar Open Source Tools


rl

TorchRL is an open-source Reinforcement Learning (RL) library for PyTorch. It provides PyTorch and **python-first**, low- and high-level abstractions for RL that are intended to be **efficient**, **modular**, **documented** and properly **tested**. The code is aimed at supporting research in RL. Most of it is written in Python in a highly modular way, so that researchers can easily swap components, transform them, or write new ones with little effort.

HuixiangDou

HuixiangDou is a **group chat** assistant based on LLM (Large Language Model). Advantages:
1. It uses a two-stage pipeline of rejection and response to cope with group chat scenarios, answering user questions without message flooding (see arxiv2401.08772).
2. Low cost, requiring only 1.5GB memory and no training.
3. It offers a complete suite of Web, Android, and pipeline source code, which is industrial-grade and commercially viable.
Check out the scenes in which HuixiangDou is running and join the WeChat group to try the AI assistant inside. If this helps you, please give it a star ⭐

ragflow

RAGFlow is an open-source Retrieval-Augmented Generation (RAG) engine that combines deep document understanding with Large Language Models (LLMs) to provide accurate question-answering capabilities. It offers a streamlined RAG workflow for businesses of all sizes, enabling them to extract knowledge from unstructured data in various formats, including Word documents, slides, Excel files, images, and more. RAGFlow's key features include deep document understanding, template-based chunking, grounded citations with reduced hallucinations, compatibility with heterogeneous data sources, and an automated and effortless RAG workflow. It supports multiple recall paired with fused re-ranking, configurable LLMs and embedding models, and intuitive APIs for seamless integration with business applications.

superlinked

Superlinked is a compute framework for information retrieval and feature engineering systems, focusing on converting complex data into vector embeddings for RAG, Search, RecSys, and Analytics stack integration. It enables custom model performance in machine learning with pre-trained model convenience. The tool allows users to build multimodal vectors, define weights at query time, and avoid postprocessing & rerank requirements. Users can explore the computational model through simple scripts and python notebooks, with a future release planned for production usage with built-in data infra and vector database integrations.

mcp-agent

mcp-agent is a simple, composable framework designed to build agents using the Model Context Protocol. It handles the lifecycle of MCP server connections and implements patterns for building production-ready AI agents in a composable way. The framework also includes OpenAI's Swarm pattern for multi-agent orchestration in a model-agnostic manner, making it the simplest way to build robust agent applications. It is purpose-built for the shared protocol MCP, lightweight, and closer to an agent pattern library than a framework. mcp-agent allows developers to focus on the core business logic of their AI applications by handling mechanics such as server connections, working with LLMs, and supporting external signals like human input.

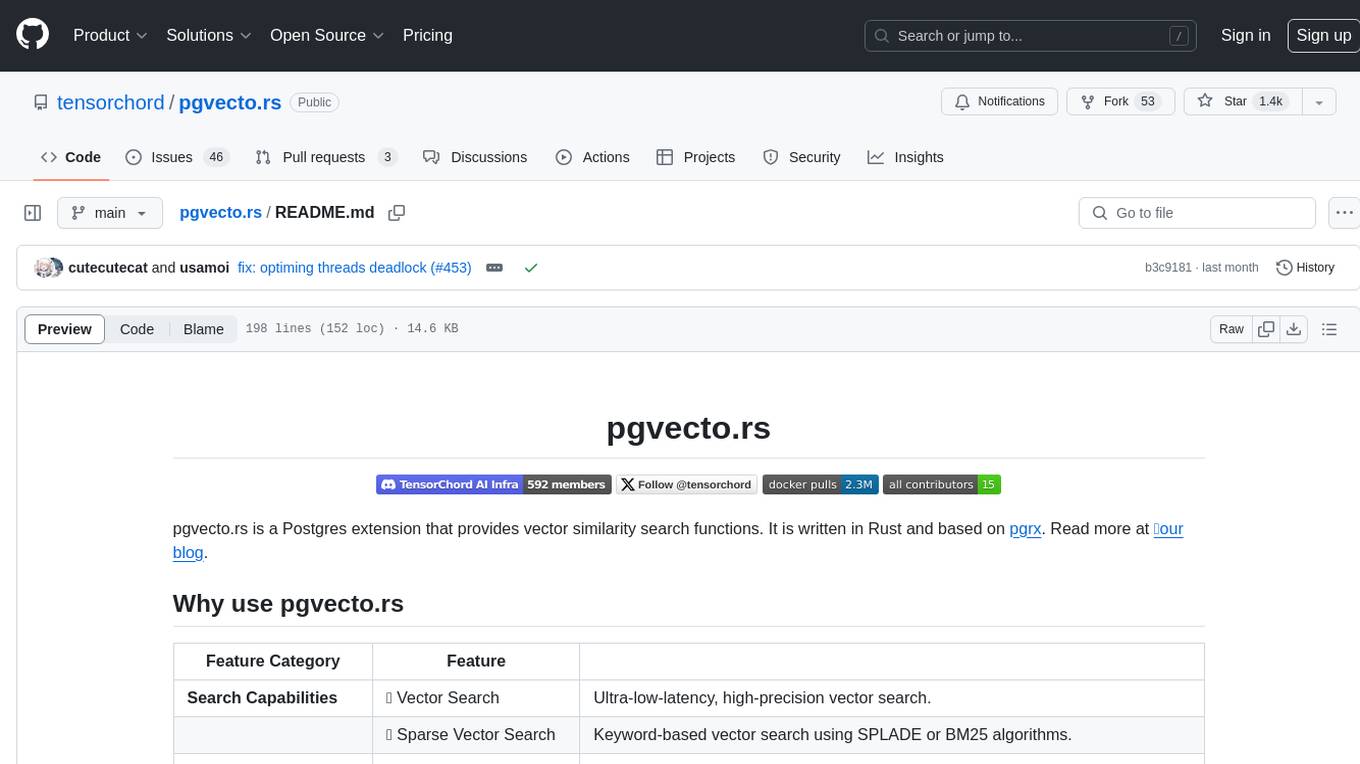

pgvecto.rs

pgvecto.rs is a Postgres extension written in Rust that provides vector similarity search functions. It offers ultra-low-latency, high-precision vector search capabilities, including sparse vector search and full-text search. With complete SQL support, async indexing, and easy data management, it simplifies data handling. The extension supports various data types like FP16/INT8, binary vectors, and Matryoshka embeddings. It ensures system performance with production-ready features, high availability, and resource efficiency. Security and permissions are managed through easy access control. The tool allows users to create tables with vector columns, insert vector data, and calculate distances between vectors using different operators. It also supports half-precision floating-point numbers for better performance and memory usage optimization.

DeepResearch

Tongyi DeepResearch is an agentic large language model with 30.5 billion total parameters, designed for long-horizon, deep information-seeking tasks. It demonstrates state-of-the-art performance across various search benchmarks. The model features a fully automated synthetic data generation pipeline, large-scale continual pre-training on agentic data, end-to-end reinforcement learning, and compatibility with two inference paradigms. Users can download the model directly from HuggingFace or ModelScope. The repository also provides benchmark evaluation scripts and information on the Deep Research Agent Family.

catai

CatAI is a tool that allows users to run GGUF models on their computer with a chat UI. It serves as a local AI assistant inspired by Node-Llama-Cpp and Llama.cpp. The tool provides features such as auto-detecting programming language, showing original messages by clicking on user icons, real-time text streaming, and fast model downloads. Users can interact with the tool through a CLI that supports commands for installing, listing, setting, serving, updating, and removing models. CatAI is cross-platform and supports Windows, Linux, and Mac. It utilizes node-llama-cpp and offers a simple API for asking model questions. Additionally, developers can integrate the tool with node-llama-cpp@beta for model management and chatting. The configuration can be edited via the web UI, and contributions to the project are welcome. The tool is licensed under Llama.cpp's license.

EasySteer

EasySteer is a unified framework built on vLLM for high-performance LLM steering. It offers fast, flexible, and easy-to-use steering capabilities with features like high performance, modular design, fine-grained control, pre-computed steering vectors, and an interactive demo. Users can interactively configure models, adjust steering parameters, and test interventions without writing code. The tool supports OpenAI-compatible APIs and provides modules for hidden states extraction, analysis-based steering, learning-based steering, and a frontend web interface for interactive steering and ReFT interventions.

refact-lsp

Refact Agent is a small executable written in Rust as part of the Refact Agent project. It lives inside your IDE to keep AST and VecDB indexes up to date, supporting connection graphs between definitions and usages in popular programming languages. It functions as an LSP server, offering code completion, chat functionality, and integration with various tools like browsers, databases, and debuggers. Users can interact with it through a Text UI in the command line.

kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

xFasterTransformer

xFasterTransformer is an optimized solution for Large Language Models (LLMs) on the X86 platform, providing high performance and scalability for inference on mainstream LLM models. It offers C++ and Python APIs for easy integration, along with example codes and benchmark scripts. Users can prepare models in a different format, convert them, and use the APIs for tasks like encoding input prompts, generating token ids, and serving inference requests. The tool supports various data types and models, and can run in single or multi-rank modes using MPI. A web demo based on Gradio is available for popular LLM models like ChatGLM and Llama2. Benchmark scripts help evaluate model inference performance quickly, and MLServer enables serving with REST and gRPC interfaces.

NExT-GPT

NExT-GPT is an end-to-end multimodal large language model that can process input and generate output in various combinations of text, image, video, and audio. It leverages existing pre-trained models and diffusion models with end-to-end instruction tuning. The repository contains code, data, and model weights for NExT-GPT, allowing users to work with different modalities and perform tasks like encoding, understanding, reasoning, and generating multimodal content.

dom-to-semantic-markdown

DOM to Semantic Markdown is a tool that converts HTML DOM to Semantic Markdown for use in Large Language Models (LLMs). It maximizes semantic information, token efficiency, and preserves metadata to enhance LLMs' processing capabilities. The tool captures rich web content structure, including semantic tags, image metadata, table structures, and link destinations. It offers customizable conversion options and supports both browser and Node.js environments.

mLoRA

mLoRA (Multi-LoRA Fine-Tune) is an open-source framework for efficient fine-tuning of multiple Large Language Models (LLMs) using LoRA and its variants. It allows concurrent fine-tuning of multiple LoRA adapters with a shared base model, efficient pipeline parallelism algorithm, support for various LoRA variant algorithms, and reinforcement learning preference alignment algorithms. mLoRA helps save computational and memory resources when training multiple adapters simultaneously, achieving high performance on consumer hardware.

For similar tasks


dbeaver

DBeaver is a free multi-platform database tool designed for developers, SQL programmers, database administrators, and analysts. It offers a wide range of features including schema editor, SQL editor, data editor, AI integration, ER diagrams, data export/import/migration, SQL execution plans, database administration tools, database dashboards, Spatial data viewer, proxy and SSH tunnelling, custom database drivers editor, etc. It supports over 100 database drivers out of the box and is compatible with any database that has a JDBC or ODBC driver. DBeaver also supports smart AI completion and code generation with OpenAI or Copilot.

spiceai

Spice is a portable runtime written in Rust that offers developers a unified SQL interface to materialize, accelerate, and query data from any database, data warehouse, or data lake. It connects, fuses, and delivers data to applications, machine-learning models, and AI backends, functioning as an application-specific, tier-optimized Database CDN. It is built with industry-leading technologies such as Apache DataFusion, Apache Arrow, Apache Arrow Flight, SQLite, and DuckDB. Spice makes it fast and easy to query data from one or more sources using SQL, co-locating a managed dataset with applications or machine learning models, and accelerating it with Arrow in-memory, SQLite/DuckDB, or attached PostgreSQL for fast, high-concurrency, low-latency queries.

For similar jobs

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer's subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.