lean-spec

Lightweight, flexible Spec-Driven Development (SDD) for modern AI-powered development

Stars: 184

LeanSpec is a tool for Spec-Driven Development that aims to help users ship faster with higher quality by creating small, focused documents that both humans and AI can understand. It provides a Kanban board, smart search, dependency tracking, a web UI, project stats, and AI integration. The tool works with various AI coding assistants and offers agent skills that teach AI the Spec-Driven Development methodology. LeanSpec is compatible with tools like VS Code Copilot, Claude Code, GitHub Copilot, and more. Development requires Node.js, pnpm, and Rust. The desktop app is developed in a separate repository, and the project provides scripts for common development tasks such as testing, building, and documentation.

README:

Documentation • Chinese Documentation (中文文档) • Live Examples • Tutorials

Ship faster with higher quality. Lean specs that both humans and AI understand.

LeanSpec brings agile principles to SDD (Spec-Driven Development)—small, focused documents (<2,000 tokens) that keep you and your AI aligned.

# Try with a tutorial project

npx lean-spec init --example dark-theme

cd dark-theme && npm install && npm start

# Or add to your existing project

npm install -g lean-spec && lean-spec init

Visualize your project:

lean-spec board # Kanban view

lean-spec stats # Project metrics

lean-spec ui # Web UI at localhost:3000

Next: Your First Spec with AI (10 min tutorial)

High velocity + High quality. Other SDD frameworks add process overhead (multi-step workflows, rigid templates). Vibe coding is fast but chaotic (no shared understanding). LeanSpec hits the sweet spot:

- Fast iteration - Living documents that grow with your code

- AI performance - Small specs = better AI output (context rot is real)

- Always current - Lightweight enough that you actually update them

📖 Compare with Spec Kit, OpenSpec, Kiro →

Works with any AI coding assistant via MCP or CLI:

{
  "mcpServers": {
    "lean-spec": { "command": "npx", "args": ["@leanspec/mcp"] }
  }
}

Compatible with: VS Code Copilot, Claude Code, Gemini CLI, Cursor, Windsurf, Kiro CLI, Kimi CLI, Qodo CLI, Amp, Trae Agent, Qwen Code, Droid, and more.
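For clients that keep their MCP servers in a JSON config file, the `lean-spec` entry from the MCP configuration above can be merged in without disturbing servers that are already registered. The following is a minimal sketch, assuming the client config is an object with an `mcpServers` map shaped like that configuration. The names `McpServerEntry`, `McpConfig`, `addLeanSpecServer`, and the `other` server are hypothetical and not part of LeanSpec.

```typescript
// Shape of one MCP server entry, matching the configuration shown above.
interface McpServerEntry {
  command: string;
  args: string[];
}

// Assumed shape of a client's MCP config; unrelated keys are preserved as-is.
interface McpConfig {
  mcpServers?: Record<string, McpServerEntry>;
  [key: string]: unknown;
}

// Hypothetical helper: return a copy of the config with the lean-spec
// server added (or overwritten), leaving all other servers untouched.
function addLeanSpecServer(config: McpConfig): McpConfig {
  return {
    ...config,
    mcpServers: {
      ...(config.mcpServers ?? {}),
      "lean-spec": { command: "npx", args: ["@leanspec/mcp"] },
    },
  };
}

// Example: a config that already registers another (made-up) server.
const existingConfig: McpConfig = {
  mcpServers: { other: { command: "node", args: ["server.js"] } },
};
console.log(JSON.stringify(addLeanSpecServer(existingConfig), null, 2));
```

Returning a new object instead of mutating the input keeps the helper safe to call on a config that is shared elsewhere in a script.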

Teach your AI assistant the Spec-Driven Development methodology:

# Recommended (uses skills.sh)

lean-spec skill install

# Or directly via skills.sh

npx skills add codervisor/lean-spec -y

This installs the leanspec-sdd skill which teaches AI agents:

- When to create specs vs. implement directly

- How to discover existing specs before creating new ones

- Best practices for context economy and progressive disclosure

- Complete SDD workflow (Discover → Design → Implement → Validate)

Compatible with: Claude Code, Cursor, Windsurf, GitHub Copilot, and other Agent Skills compatible tools.

| Feature | Description |
|---|---|
| 📊 Kanban Board | lean-spec board - visual project tracking |
| 🔍 Smart Search | lean-spec search - find specs by content or metadata |
| 🔗 Dependencies | Track spec relationships with depends_on and related |
| 🎨 Web UI | lean-spec ui - browser-based dashboard |
| 📈 Project Stats | lean-spec stats - health metrics and bottleneck detection |
| 🤖 AI-Native | MCP server + CLI for AI assistants |
| 🖥️ Desktop App | Desktop app repo: codervisor/lean-spec-desktop |
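To illustrate what tracking depends_on relationships makes possible, here is a sketch that orders specs so that each one appears after the specs it depends on (a depth-first topological sort). This is not LeanSpec's implementation. The `Spec` shape, the `implementationOrder` function, and the spec names are all hypothetical and chosen only for the example.

```typescript
// Hypothetical in-memory view of a spec: a name plus the names it depends on.
interface Spec {
  name: string;
  dependsOn: string[];
}

// Return spec names ordered so every spec comes after its dependencies.
// Throws on dependency cycles and on references to unknown specs.
function implementationOrder(specs: Spec[]): string[] {
  const byName = new Map<string, Spec>();
  for (const spec of specs) byName.set(spec.name, spec);

  const ordered: string[] = [];
  const state = new Map<string, "visiting" | "done">();

  const visit = (name: string, path: string[]): void => {
    if (state.get(name) === "done") return;
    if (state.get(name) === "visiting") {
      throw new Error(`Dependency cycle: ${[...path, name].join(" -> ")}`);
    }
    const spec = byName.get(name);
    if (!spec) throw new Error(`Unknown spec: ${name}`);
    state.set(name, "visiting");
    for (const dep of spec.dependsOn) visit(dep, [...path, name]);
    state.set(name, "done");
    ordered.push(name); // all dependencies are already in the list
  };

  for (const spec of specs) visit(spec.name, []);
  return ordered;
}

// Example with made-up spec names.
const exampleOrder = implementationOrder([
  { name: "dark-theme-toggle", dependsOn: ["theme-tokens"] },
  { name: "theme-tokens", dependsOn: [] },
  { name: "settings-page", dependsOn: ["dark-theme-toggle"] },
]);
console.log(exampleOrder.join(", "));
// theme-tokens, dark-theme-toggle, settings-page
```

An ordering like this is one way a tool could surface which specs are blocked and which are ready to implement.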

Requirements:

- Node.js: >= 20.0.0
- pnpm: >= 10.0.0 (preferred package manager)

For development:

- Node.js: >= 20.0.0
- Rust: >= 1.70 (for building CLI/MCP/HTTP binaries)
- pnpm: >= 10.0.0
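The minimum versions listed above can also be checked in a script. The sketch below compares a version string, such as the output of `node --version`, against those minimums. The names `MINIMUMS`, `parseVersion`, and `meetsMinimum` are hypothetical helpers, and treating ">= 1.70" as "1.70.0" is an assumption made for the comparison.

```typescript
// Minimum versions taken from the requirements above.
const MINIMUMS = {
  node: "20.0.0",
  pnpm: "10.0.0",
  rustc: "1.70.0", // listed as ">= 1.70"; only needed for development
} as const;

// Extract [major, minor, patch] from text such as "v20.11.1" or "rustc 1.75.0".
// A missing patch component is treated as 0.
function parseVersion(text: string): [number, number, number] {
  const match = text.match(/(\d+)\.(\d+)(?:\.(\d+))?/);
  if (!match) throw new Error(`No version found in "${text}"`);
  return [Number(match[1]), Number(match[2]), Number(match[3] ?? "0")];
}

// True when `actual` is greater than or equal to `minimum`.
function meetsMinimum(actual: string, minimum: string): boolean {
  const [aMajor, aMinor, aPatch] = parseVersion(actual);
  const [mMajor, mMinor, mPatch] = parseVersion(minimum);
  if (aMajor !== mMajor) return aMajor > mMajor;
  if (aMinor !== mMinor) return aMinor > mMinor;
  return aPatch >= mPatch;
}

console.log(meetsMinimum("v20.11.1", MINIMUMS.node)); // true
console.log(meetsMinimum("9.15.4", MINIMUMS.pnpm)); // false
```

Comparing numeric components avoids the pitfall of plain string comparison, where "9.15.4" would sort after "10.0.0".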

Quick Check:

node --version # Should be v20.0.0 or higher

pnpm --version # Should be 10.0.0 or higher

rustc --version # Should be 1.70 or higher (dev only)

The desktop application has moved to a dedicated repository: codervisor/lean-spec-desktop

Use that repository for desktop development, CI, and release workflows.

Common development tasks using pnpm:

# Development

pnpm install # Install dependencies

pnpm build # Build all packages

pnpm dev # Start dev mode (UI + Core)

pnpm dev:web # UI only

pnpm dev:cli # CLI only

# Testing

pnpm test # Run all tests

pnpm test:ui # Tests with UI

pnpm test:coverage # Coverage report

pnpm typecheck # Type check all packages

# Rust

pnpm rust:build # Build Rust packages (release)

pnpm rust:build:dev # Build Rust (dev, faster)

pnpm rust:test # Run Rust tests

pnpm rust:check # Quick Rust check

pnpm rust:clippy # Rust linting

pnpm rust:fmt # Format Rust code

# CLI (run locally)

pnpm cli board # Show spec board

pnpm cli list # List specs

pnpm cli create my-feat # Create new spec

pnpm cli validate # Validate specs

# Documentation

pnpm docs:dev # Start docs site

pnpm docs:build # Build docs

# Release

pnpm pre-release # Run all pre-release checks

pnpm prepare-publish # Prepare for npm publish

pnpm restore-packages # Restore after publish

See package.json for all available scripts.

📖 Full Documentation · CLI Reference · First Principles · FAQ · Chinese Documentation (中文文档)

💬 Discussions · 🐛 Issues · 🤝 Contributing · 📋 Changelog · 📄 LICENSE

If you find LeanSpec helpful, feel free to add me on WeChat (note "LeanSpec") to join the discussion group.



Similar Open Source Tools


local-cocoa

Local Cocoa is a privacy-focused tool that runs entirely on your device, turning files into memory to spark insights and power actions. It offers features like fully local privacy, multimodal memory, vector-powered retrieval, intelligent indexing, vision understanding, hardware acceleration, focused user experience, integrated notes, and auto-sync. The tool combines file ingestion, intelligent chunking, and local retrieval to build a private on-device knowledge system. The ultimate goal includes more connectors like Google Drive integration, voice mode for local speech-to-text interaction, and a plugin ecosystem for community tools and agents. Local Cocoa is built using Electron, React, TypeScript, FastAPI, llama.cpp, and Qdrant.

ClaudeBar

ClaudeBar is a macOS menu bar application that monitors AI coding assistant usage quotas. It allows users to keep track of their usage of Claude, Codex, Gemini, GitHub Copilot, Antigravity, and Z.ai at a glance. The application offers multi-provider support, real-time quota tracking, multiple themes, visual status indicators, system notifications, auto-refresh feature, and keyboard shortcuts for quick access. Users can customize monitoring by toggling individual providers on/off and receive alerts when quota status changes. The tool requires macOS 15+, Swift 6.2+, and CLI tools installed for the providers to be monitored.

Lumina-Note

Lumina Note is a local-first AI note-taking app designed to help users write, connect, and evolve knowledge with AI capabilities while ensuring data ownership. It offers a knowledge-centered workflow with features like Markdown editor, WikiLinks, and graph view. The app includes AI workspace modes such as Chat, Agent, Deep Research, and Codex, along with support for multiple model providers. Users can benefit from bidirectional links, LaTeX support, graph visualization, PDF reader with annotations, real-time voice input, and plugin ecosystem for extended functionalities. Lumina Note is built on Tauri v2 framework with a tech stack including React 18, TypeScript, Tailwind CSS, and SQLite for vector storage.

mistral.rs

Mistral.rs is a fast LLM inference platform written in Rust. It supports inference on a variety of devices, quantization, and easy application integration through an OpenAI-API-compatible HTTP server and Python bindings.

antigravity-awesome-skills

Antigravity Awesome Skills is a curated library of 946+ high-performance agentic skills for AI coding assistants like Claude Code, Gemini CLI, Codex CLI, and more. It provides essential skills to transform your AI assistant into a full-stack digital agency, including capabilities from major companies like Anthropic, OpenAI, Google, and more. The repository offers a complete operating system for your AI agent, with skills organized into categories like Architecture, Business, Data & AI, Development, Security, Testing, and more. Users can easily install and use these skills to enhance their AI assistant's capabilities.

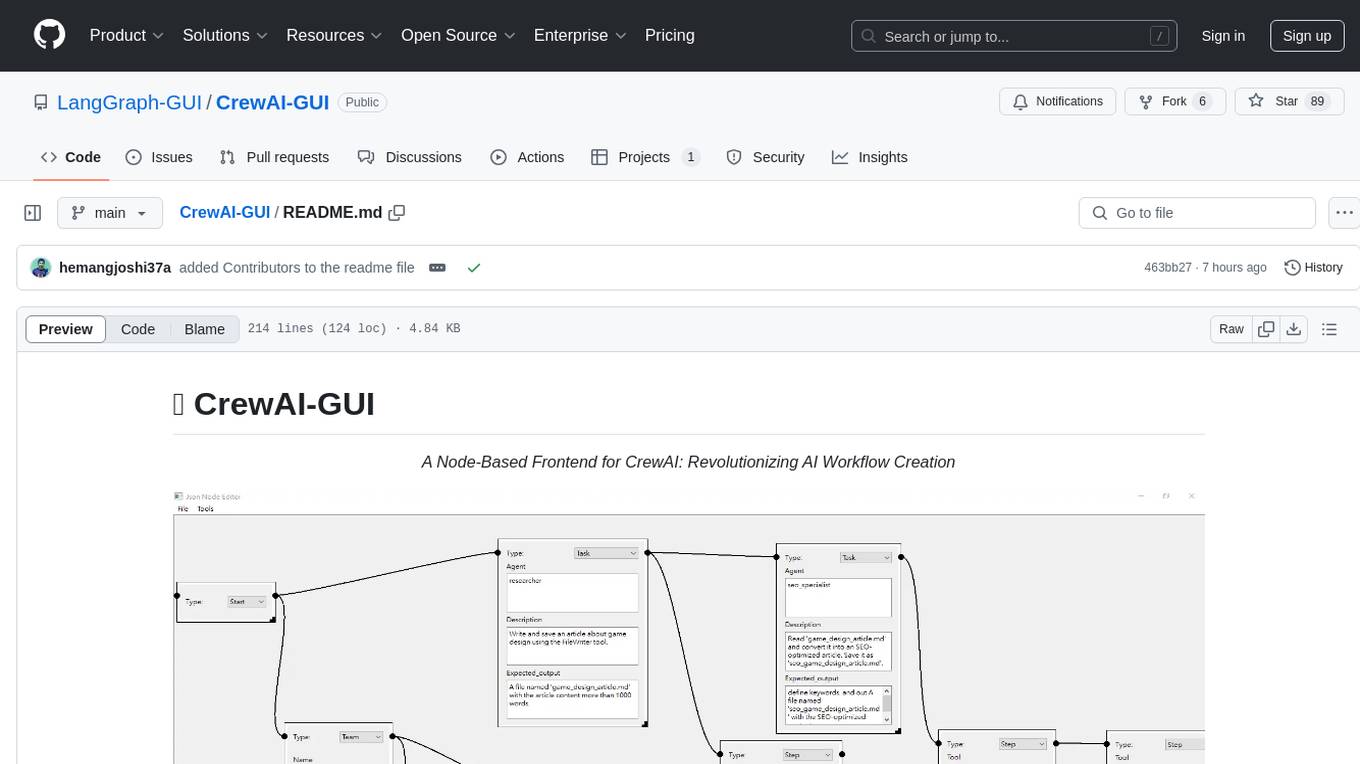

CrewAI-GUI

CrewAI-GUI is a Node-Based Frontend tool designed to revolutionize AI workflow creation. It empowers users to design complex AI agent interactions through an intuitive drag-and-drop interface, export designs to JSON for modularity and reusability, and supports both GPT-4 API and Ollama for flexible AI backend. The tool ensures cross-platform compatibility, allowing users to create AI workflows on Windows, Linux, or macOS efficiently.

orchestkit

OrchestKit is a powerful and flexible orchestration tool designed to streamline and automate complex workflows. It provides a user-friendly interface for defining and managing orchestration tasks, allowing users to easily create, schedule, and monitor workflows. With support for various integrations and plugins, OrchestKit enables seamless automation of tasks across different systems and applications. Whether you are a developer looking to automate deployment processes or a system administrator managing complex IT operations, OrchestKit offers a comprehensive solution to simplify and optimize your workflow management.

tingly-box

Tingly Box is a tool that helps in deciding which model to call, compressing context, and routing requests efficiently. It offers secure, reliable, and customizable functional extensions. With features like unified API, smart routing, context compression, auto API translation, blazing fast performance, flexible authentication, visual control panel, and client-side usage stats, Tingly Box provides a comprehensive solution for managing AI models and tokens. It supports integration with various IDEs, CLI tools, SDKs, and AI applications, making it versatile and easy to use. The tool also allows seamless integration with OAuth providers like Claude Code, enabling users to utilize existing quotas in OpenAI-compatible tools. Tingly Box aims to simplify AI model management and usage by providing a single endpoint for multiple providers with minimal configuration, promoting seamless integration with SDKs and CLI tools.

handit.ai

Handit.ai is an autonomous engineer tool designed to fix AI failures 24/7. It monitors AI applications, detects failures, generates fixes, tests them against real data, and ships them as pull requests, all automatically. It works with JavaScript, TypeScript, Python, and more, automating what used to require manual debugging and firefighting.

R2R

R2R (RAG to Riches) is a fast and efficient framework for serving high-quality Retrieval-Augmented Generation (RAG) to end users. The framework is designed with customizable pipelines and a feature-rich FastAPI implementation, enabling developers to quickly deploy and scale RAG-based applications. R2R was conceived to bridge the gap between local LLM experimentation and scalable production solutions. **R2R is to LangChain/LlamaIndex what NextJS is to React**. A JavaScript client for R2R deployments is also available.

Key features:

- **🚀 Deploy**: Instantly launch production-ready RAG pipelines with streaming capabilities.
- **🧩 Customize**: Tailor your pipeline with intuitive configuration files.
- **🔌 Extend**: Enhance your pipeline with custom code integrations.
- **⚖️ Autoscale**: Scale your pipeline effortlessly in the cloud using SciPhi.
- **🤖 OSS**: Benefit from a framework developed by the open-source community, designed to simplify RAG deployment.

ai-real-estate-assistant

AI Real Estate Assistant is a modern platform that uses AI to assist real estate agencies in helping buyers and renters find their ideal properties. It features multiple AI model providers, intelligent query processing, advanced search and retrieval capabilities, and enhanced user experience. The tool is built with a FastAPI backend and Next.js frontend, offering semantic search, hybrid agent routing, and real-time analytics.

MassGen

MassGen is a cutting-edge multi-agent system that leverages the power of collaborative AI to solve complex tasks. It assigns a task to multiple AI agents who work in parallel, observe each other's progress, and refine their approaches to converge on the best solution to deliver a comprehensive and high-quality result. The system operates through an architecture designed for seamless multi-agent collaboration, with key features including cross-model/agent synergy, parallel processing, intelligence sharing, consensus building, and live visualization. Users can install the system, configure API settings, and run MassGen for various tasks such as question answering, creative writing, research, development & coding tasks, and web automation & browser tasks. The roadmap includes plans for advanced agent collaboration, expanded model, tool & agent integration, improved performance & scalability, enhanced developer experience, and a web interface.

local-deep-research

Local Deep Research is a powerful AI-powered research assistant that performs deep, iterative analysis using multiple LLMs and web searches. It can be run locally for privacy or configured to use cloud-based LLMs for enhanced capabilities. The tool offers advanced research capabilities, flexible LLM support, rich output options, privacy-focused operation, enhanced search integration, and academic & scientific integration. It also provides a web interface, command line interface, and supports multiple LLM providers and search engines. Users can configure AI models, search engines, and research parameters for customized research experiences.

inspector

A developer tool for testing and debugging Model Context Protocol (MCP) servers. It allows users to test the compliance of their MCP servers with the latest MCP specs, supports various transports like STDIO, SSE, and Streamable HTTP, features an LLM Playground for testing server behavior against different models, provides comprehensive logging and error reporting for MCP server development, and offers a modern developer experience with multiple server connections and saved configurations. The tool is built using Next.js and integrates MCP capabilities, AI SDKs from OpenAI, Anthropic, and Ollama, and various technologies like Node.js, TypeScript, and Next.js.

aegra

Aegra is a self-hosted AI agent backend platform that provides LangGraph power without vendor lock-in. Built with FastAPI + PostgreSQL, it offers complete control over agent orchestration for teams looking to escape vendor lock-in, meet data sovereignty requirements, enable custom deployments, and optimize costs. Aegra is Agent Protocol compliant and perfect for teams seeking a free, self-hosted alternative to LangGraph Platform with zero lock-in, full control, and compatibility with existing LangGraph Client SDK.

For similar tasks

metaflow

Metaflow is a user-friendly library designed to assist scientists and engineers in developing and managing real-world data science projects. Initially created at Netflix, Metaflow aimed to enhance the productivity of data scientists working on diverse projects ranging from traditional statistics to cutting-edge deep learning. For further information, refer to Metaflow's website and documentation.

ck

Collective Mind (CM) is a collection of portable, extensible, technology-agnostic and ready-to-use automation recipes with a human-friendly interface (aka CM scripts) to unify and automate all the manual steps required to compose, run, benchmark and optimize complex ML/AI applications on any platform with any software and hardware (see the online catalog and source code). CM scripts require Python 3.7+ with minimal dependencies and are continuously extended by the community and MLCommons members to run natively on Ubuntu, macOS, Windows, RHEL, Debian, Amazon Linux and any other operating system, in a cloud or inside automatically generated containers, while keeping backward compatibility. Users are encouraged to report any issues they encounter and to reach the developers via the public Discord server to help this collaborative engineering effort.

CM scripts were originally developed based on the following requirements from MLCommons members, to help them automatically compose and optimize complex MLPerf benchmarks, applications and systems across diverse and continuously changing models, data sets, software and hardware from Nvidia, Intel, AMD, Google, Qualcomm, Amazon and other vendors:

- must work out of the box with the default options and without the need to edit paths, environment variables or configuration files;
- must be non-intrusive, easy to debug and must reuse existing user scripts and automation tools (such as cmake, make, ML workflows, python poetry and containers) rather than substituting them;
- must have a very simple and human-friendly command line with a Python API and minimal dependencies;
- must require minimal or zero learning curve by using plain Python, native scripts, environment variables and simple JSON/YAML descriptions instead of inventing new workflow languages;
- must have the same interface to run all automations natively, in a cloud or inside containers.

CM scripts were successfully validated by MLCommons to modularize MLPerf inference benchmarks and help the community automate more than 95% of all performance and power submissions in the v3.1 round across more than 120 system configurations (models, frameworks, hardware) while reducing development and maintenance costs.

client-python

The Mistral Python Client is a tool inspired by cohere-python that allows users to interact with the Mistral AI API. It provides functionalities to access and utilize the AI capabilities offered by Mistral. Users can easily install the client using pip and manage dependencies using poetry. The client includes examples demonstrating how to use the API for various tasks, such as chat interactions. To get started, users need to obtain a Mistral API Key and set it as an environment variable. Overall, the Mistral Python Client simplifies the integration of Mistral AI services into Python applications.

ComposeAI

ComposeAI is an Android & iOS application similar to ChatGPT, built using Compose Multiplatform. It utilizes various technologies such as Compose Multiplatform, Material 3, OpenAI Kotlin, Voyager, Koin, SQLDelight, Multiplatform Settings, Coil3, Napier, BuildKonfig, Firebase Analytics & Crashlytics, and AdMob. The app architecture follows Google's latest guidelines. Users need to set up their own OpenAI API key before using the app.

aiohttp-devtools

aiohttp-devtools provides dev tools for developing applications with aiohttp and associated libraries. It includes CLI commands for running a local server with live reloading and serving static files. The tools aim to simplify the development process by automating tasks such as setting up a new application and managing dependencies. Developers can easily create and run aiohttp applications, manage static files, and utilize live reloading for efficient development.

minefield

BitBom Minefield is a tool that uses roaring bit maps to graph Software Bill of Materials (SBOMs) with a focus on speed, air-gapped operation, scalability, and customizability. It is optimized for rapid data processing, operates securely in isolated environments, supports millions of nodes effortlessly, and allows users to extend the project without relying on upstream changes. The tool enables users to manage and explore software dependencies within isolated environments by offline processing and analyzing SBOMs.

gez

Gez is a high-performance micro frontend framework based on ESM. It uses Rspack compilation and maps modules to URLs with strong caching and content-based hashing. Gez embraces modern micro frontend architecture by leveraging ESM and importmap for dependency management, providing reliable isolation with module scope, seamless integration with any modern frontend framework, intuitive development experience, and optimal performance with zero runtime overhead and reliable caching strategies.

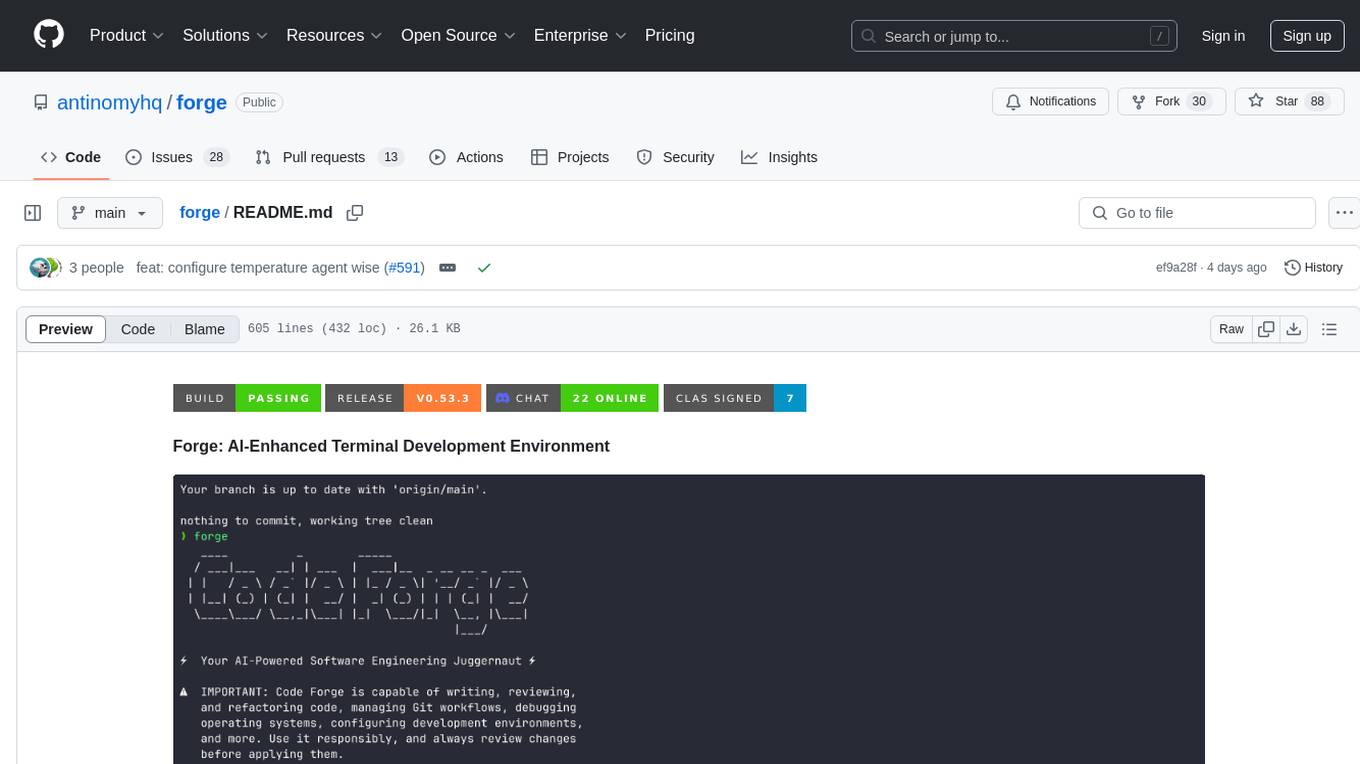

forge

Forge is a powerful open-source tool for building modern web applications. It provides a simple and intuitive interface for developers to quickly scaffold and deploy projects. With Forge, you can easily create custom components, manage dependencies, and streamline your development workflow. Whether you are a beginner or an experienced developer, Forge offers a flexible and efficient solution for your web development needs.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.