call-center-ai

Send a phone call from an AI agent with a single API call. Or call the bot directly on the configured phone number!

Stars: 119

Call Center AI is an AI-powered call center solution that leverages Azure and OpenAI GPT. It is a proof of concept demonstrating the integration of Azure Communication Services, Azure Cognitive Services, and Azure OpenAI to build an automated call center solution. The project showcases features like accessing claims on a public website, customer conversation history, language change during conversation, bot interaction via phone number, multiple voice tones, lexicon understanding, todo list creation, customizable prompts, content filtering, GPT-4 Turbo for customer requests, specific data schema for claims, documentation database access, SMS report sending, conversation resumption, and more. The system architecture includes components like RAG AI Search, SMS gateway, call gateway, moderation, Cosmos DB, event broker, GPT-4 Turbo, Redis cache, translation service, and more. The tool can be deployed remotely using GitHub Actions and locally with prerequisites like Azure environment setup, configuration file creation, and resource hosting. Advanced usage includes custom training data with AI Search, prompt customization, language customization, moderation level customization, claim data schema customization, OpenAI compatible model usage for the LLM, and Twilio integration for SMS.

README:

AI-powered call center solution with Azure and OpenAI GPT.


Insurance, IT support, customer service, and more. The bot can be customized in a few seconds (really) to fit your needs.

# Ask the bot to call a phone number
data='{
  "bot_company": "Contoso",
  "bot_name": "Amélie",
  "phone_number": "+11234567890",
  "task": "Help the customer with their digital workplace. Assistant is working for the IT support department. The objective is to help the customer with their issue and gather information in the claim.",
  "agent_phone_number": "+33612345678",
  "claim": [
    {
      "name": "hardware_info",
      "type": "text"
    },
    {
      "name": "first_seen",
      "type": "datetime"
    },
    {
      "name": "building_location",
      "type": "text"
    }
  ]
}'

# Quote "$data" so the shell passes the JSON as a single argument
curl \
  --header 'Content-Type: application/json' \
  --request POST \
  --url https://xxx/call \
  --data "$data"

[!NOTE] This project is a proof of concept. It is not intended to be used in production. It demonstrates how Azure Communication Services, Azure Cognitive Services, and Azure OpenAI can be combined to build an automated call center solution.
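The call request shown above can also be prepared from Python. This is a minimal sketch using only the standard library; the helper name `build_call_request` is hypothetical, `https://xxx` is the same placeholder host as in the curl example, and the request is built but not sent.

```python
import json
import urllib.request


def build_call_request(base_url: str, payload: dict) -> urllib.request.Request:
    """Prepare (but do not send) a POST /call request with a JSON body."""
    body = json.dumps(payload, ensure_ascii=False).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/call",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


payload = {
    "bot_company": "Contoso",
    "bot_name": "Amélie",
    "phone_number": "+11234567890",
    "task": "Help the customer with their digital workplace.",
    "agent_phone_number": "+33612345678",
    "claim": [
        {"name": "hardware_info", "type": "text"},
        {"name": "first_seen", "type": "datetime"},
        {"name": "building_location", "type": "text"},
    ],
}

request = build_call_request("https://xxx", payload)
# To actually place the call, pass the request to urllib.request.urlopen(request).
```

Keeping request construction separate from sending makes the payload easy to inspect before a real phone call is triggered.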

- [x] Access the claim on a public website

- [x] Access to customer conversation history

- [x] Allow user to change the language of the conversation

- [x] Assistant can send SMS to the user for further information

- [x] Bot can be called from a phone number

- [x] Bot uses multiple voice tones (e.g. happy, sad, neutral) to keep the conversation engaging

- [x] Company products (= lexicon) can be understood by the bot (e.g. a name of a specific insurance product)

- [x] Create by itself a todo list of tasks to complete the claim

- [x] Customizable prompts

- [x] Disengaging from a human agent when needed

- [x] Filter out inappropriate content from the LLM, like profanity or competitor company names

- [x] Fine understanding of the customer request with GPT-4 Turbo

- [x] Follow a specific data schema for the claim

- [x] Has access to a documentation database (few-shot training / RAG)

- [x] Help the user to find the information needed to complete the claim

- [x] Jailbreak detection

- [x] Lower AI Search cost by using a Redis cache

- [x] Monitoring and tracing with Application Insights

- [x] Receive SMS during a conversation for explicit wordings

- [x] Responses are streamed from the LLM to the user, to avoid long pauses

- [x] Send a SMS report after the call

- [x] Take back a conversation after a disengagement

- [ ] Call back the user when needed

- [ ] Simulate an IVR workflow

A French demo is available on YouTube. Do not hesitate to watch the demo at 1.5x speed to get a quick overview of the project.

Main interactions shown in the demo:

- User calls the call center

- The bot answers and the conversation starts

- The bot stores conversation, claim and todo list in the database

Extract of the data stored during the call:

{
  "claim": {
    "incident_date_time": "2024-01-11T19:33:41",
    "incident_description": "The vehicle began to travel with a burning smell and the driver pulled over to the side of the freeway.",
    "policy_number": "B01371946",
    "policyholder_phone": "[number masked for the demo]",
    "policyholder_name": "Clémence Lesne",
    "vehicle_info": "Ford Fiesta 2003"
  },
  "reminders": [
    {
      "description": "Check that all the information in Clémence Lesne's file is correct and complete.",
      "due_date_time": "2024-01-18T16:00:00",
      "title": "Check Clémence file"
    }
  ]
}

A report is available at https://[your_domain]/report/[phone_number] (like http://localhost:8080/report/%2B133658471534). It shows the conversation history, claim data and reminders.
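The `+` of the phone number has to be percent-encoded in the report URL, which is why the example above contains `%2B`. A minimal sketch of building such a URL follows; the helper name `report_url` is hypothetical and not part of the project.

```python
from urllib.parse import quote


def report_url(domain: str, phone_number: str) -> str:
    """Build the report URL, percent-encoding the phone number ("+" becomes "%2B")."""
    return f"{domain.rstrip('/')}/report/{quote(phone_number, safe='')}"


print(report_url("http://localhost:8080", "+133658471534"))
# prints: http://localhost:8080/report/%2B133658471534
```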

---

title: System diagram (C4 model)

---

graph

user(["User"])

agent(["Agent"])

app["Call Center AI"]

app -- Transfer to --> agent

app -. Send voice .-> user

user -- Call --> app

---

title: Claim AI component diagram (C4 model)

---

graph LR

agent(["Agent"])

user(["User"])

subgraph "Claim AI"

ada["Embedding\n(ADA)"]

app["App\n(Functions App)"]

communication_services["Call & SMS gateway\n(Communication Services)"]

db[("Conversations and claims\n(Cosmos DB / SQLite)")]

eventgrid["Broker\n(Event Grid)"]

gpt["LLM\n(GPT-4o)"]

queues[("Queues\n(Azure Storage)")]

redis[("Cache\n(Redis)")]

search[("RAG\n(AI Search)")]

sounds[("Sounds\n(Azure Storage)")]

sst["Speech-to-Text\n(Cognitive Services)"]

translation["Translation\n(Cognitive Services)"]

tts["Text-to-Speech\n(Cognitive Services)"]

end

app -- Respond with text --> communication_services

app -- Ask for translation --> translation

app -- Ask to transfer --> communication_services

app -- Few-shot training --> search

app -- Generate completion --> gpt

app -- Get cached data --> redis

app -- Save conversation --> db

app -- Send SMS report --> communication_services

app -. Watch .-> queues

communication_services -- Generate voice --> tts

communication_services -- Load sound --> sounds

communication_services -- Notifies --> eventgrid

communication_services -- Send SMS --> user

communication_services -- Transfer to --> agent

communication_services -- Transform voice --> sst

communication_services -. Send voice .-> user

eventgrid -- Push to --> queues

search -- Generate embeddings --> ada

user -- Call --> communication_services

sequenceDiagram

autonumber

actor Customer

participant PSTN

participant Text to Speech

participant Speech to Text

actor Human agent

participant Event Grid

participant Communication Services

participant App

participant Cosmos DB

participant OpenAI GPT

participant AI Search

App->>Event Grid: Subscribe to events

Customer->>PSTN: Initiate a call

PSTN->>Communication Services: Forward call

Communication Services->>Event Grid: New call event

Event Grid->>App: Send event to event URL (HTTP webhook)

activate App

App->>Communication Services: Accept the call and give inbound URL

deactivate App

Communication Services->>Speech to Text: Transform speech to text

Communication Services->>App: Send text to the inbound URL

activate App

alt First call

App->>Communication Services: Send static SSML text

else Callback

App->>AI Search: Gather training data

App->>OpenAI GPT: Ask for a completion

OpenAI GPT-->>App: Respond (HTTP/2 SSE)

loop Over buffer

loop Over multiple tools

alt Is this a claim data update?

App->>Cosmos DB: Update claim data

else Does the user want the human agent?

App->>Communication Services: Send static SSML text

App->>Communication Services: Transfer to a human

Communication Services->>Human agent: Call the phone number

else Should we end the call?

App->>Communication Services: Send static SSML text

App->>Communication Services: End the call

end

end

end

App->>Cosmos DB: Persist conversation

end

deactivate App

Communication Services->>PSTN: Send voice

PSTN->>Customer: Forward voice

The application is hosted on Azure Functions. Code is pushed automatically by make deploy, after the deployment.

Steps to deploy:

- Create a new resource group
  - Prefer to use lowercase and no special characters other than dashes (e.g. ccai-customer-a)
- Create a Communication Services resource
  - Same name as the resource group
  - Enable system managed identity
- Buy a phone number
  - From the Communication Services resource
  - Allow inbound and outbound communication
  - Enable voice (required) and SMS (optional) capabilities
- Create a local config.yaml file

      # config.yaml
      conversation:
        initiate:
          # Phone number the bot will transfer the call to if customer asks for a human agent
          agent_phone_number: "+33612345678"
          bot_company: Contoso
          bot_name: Amélie
          lang: {}

      communication_services:
        # Phone number purchased from Communication Services
        phone_number: "+33612345678"

      sms: {}

      prompts:
        llm: {}
        tts: {}

- Connect to your Azure environment (e.g. az login)
- Run deployment automation with make deploy name=my-rg-name
  - Wait for the deployment to finish
- Create an AI Search resource
  - An index named trainings
  - A semantic search configuration on the index named default

Get the logs with make logs name=my-rg-name.

Place a file called config.yaml in the root of the project with the following content:

# config.yaml
resources:
  public_url: "https://xxx.blob.core.windows.net/public"

conversation:
  initiate:
    agent_phone_number: "+33612345678"
    bot_company: Contoso
    bot_name: Robert

communication_services:
  access_key: xxx
  call_queue_name: call-33612345678
  endpoint: https://xxx.france.communication.azure.com
  phone_number: "+33612345678"
  post_queue_name: post-33612345678
  sms_queue_name: sms-33612345678

cognitive_service:
  # Must be of type "AI services multi-service account"
  endpoint: https://xxx.cognitiveservices.azure.com

llm:
  fast:
    mode: azure_openai
    azure_openai:
      api_key: xxx
      context: 16385
      deployment: gpt-35-turbo-0125
      endpoint: https://xxx.openai.azure.com
      model: gpt-35-turbo
      streaming: true
  slow:
    mode: azure_openai
    azure_openai:
      api_key: xxx
      context: 128000
      deployment: gpt-4o-2024-05-13
      endpoint: https://xxx.openai.azure.com
      model: gpt-4o
      streaming: true

ai_search:
  access_key: xxx
  endpoint: https://xxx.search.windows.net
  index: trainings

ai_translation:
  access_key: xxx

  endpoint: https://xxx.cognitiveservices.azure.com

To use a Service Principal to authenticate to Azure, you can also add the following in a .env file:

AZURE_CLIENT_ID=xxx
AZURE_CLIENT_SECRET=xxx
AZURE_TENANT_ID=xxx

To override a specific configuration value, you can also use environment variables. For example, to override the llm.fast.endpoint value, you can use the LLM__FAST__ENDPOINT variable:

LLM__FAST__ENDPOINT=https://xxx.openai.azure.com

Then run:

# Install dependencies
make install

Also, a public file server is needed to host the audio files. Upload the files with make copy-resources name=my-rg-name (my-rg-name is the storage account name), or manually.

For reference, the resources folder contains:

- Audio files (xxx.wav) to be played during the call
- Lexicon file (lexicon.xml) to be used by the bot to understand the company products (note: any change takes up to 15 minutes to be taken into account)
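The environment-variable override described earlier (LLM__FAST__ENDPOINT for llm.fast.endpoint) follows a double-underscore nesting convention. The sketch below illustrates that convention only; `apply_env_overrides` is a hypothetical helper, and the project's own configuration loader may behave differently.

```python
import copy


def apply_env_overrides(config: dict, environ: dict) -> dict:
    """Return a copy of config where A__B__C variables override config["a"]["b"]["c"]."""
    result = copy.deepcopy(config)
    for name, value in environ.items():
        path = name.lower().split("__")
        # Ignore variables that do not target an existing top-level section.
        if len(path) < 2 or path[0] not in result:
            continue
        node = result
        for key in path[:-1]:
            child = node.setdefault(key, {})
            if not isinstance(child, dict):
                break  # The path crosses a scalar value: skip this variable.
            node = child
        else:
            node[path[-1]] = value
    return result


config = {"llm": {"fast": {"endpoint": "https://old.openai.azure.com"}}}
environ = {"LLM__FAST__ENDPOINT": "https://xxx.openai.azure.com", "PATH": "/usr/bin"}
print(apply_env_overrides(config, environ)["llm"]["fast"]["endpoint"])
# prints: https://xxx.openai.azure.com
```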

Finally, run:

# Start the local API server
make dev

Breakpoints can be added in the code to debug the application with your favorite IDE.

Also, the local.py script is available to test the application without the need of a phone call (= without Communication Services). Run the script with:

python3 -m tests.local

Training data is stored on AI Search to be retrieved by the bot, on demand.

Required index schema:

| Field Name | Type | Retrievable | Searchable | Dimensions | Vectorizer |
|---|---|---|---|---|---|
| answer | Edm.String | Yes | Yes | | |
| context | Edm.String | Yes | Yes | | |
| created_at | Edm.String | Yes | No | | |
| document_synthesis | Edm.String | Yes | Yes | | |
| file_path | Edm.String | Yes | No | | |
| id | Edm.String | Yes | No | | |
| question | Edm.String | Yes | Yes | | |
| vectors | Collection(Edm.Single) | No | Yes | 1536 | OpenAI ADA |

Software to fill the index is included in the Synthetic RAG Index repository.
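Before uploading documents to the index, it can help to check them against the schema above. This is a minimal sketch, not the project's code: the function name `validate_training_document` is hypothetical, and treating every field as required is an assumption of this example.

```python
# Field names and the vector size come from the index schema above.
STRING_FIELDS = (
    "answer",
    "context",
    "created_at",
    "document_synthesis",
    "file_path",
    "id",
    "question",
)
VECTOR_DIMENSIONS = 1536


def validate_training_document(doc: dict) -> list[str]:
    """Return a list of problems found in doc (empty when the document matches)."""
    errors = []
    for field in STRING_FIELDS:
        if not isinstance(doc.get(field), str):
            errors.append(f"{field}: expected a string")
    vectors = doc.get("vectors")
    if not isinstance(vectors, list) or len(vectors) != VECTOR_DIMENSIONS:
        errors.append(f"vectors: expected a list of {VECTOR_DIMENSIONS} numbers")
    elif not all(isinstance(value, (int, float)) for value in vectors):
        errors.append("vectors: every item must be a number")
    return errors


document = {field: "xxx" for field in STRING_FIELDS}
document["vectors"] = [0.0] * VECTOR_DIMENSIONS
print(validate_training_document(document))
# prints: []
```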

The bot can be used in multiple languages. It can understand the language the user chose.

See the list of supported languages for the Text-to-Speech service.

# config.yaml
[...]

conversation:
  initiate:
    lang:
      default_short_code: "fr-FR"
      availables:
        - pronunciations_en: ["French", "FR", "France"]
          short_code: "fr-FR"
          voice: "fr-FR-DeniseNeural"
        - pronunciations_en: ["Chinese", "ZH", "China"]
          short_code: "zh-CN"
          voice: "zh-CN-XiaoqiuNeural"

Levels are defined for each category of Content Safety. The higher the score, the more strict the moderation is, from 0 to 7. Moderation is applied on all bot data, including the web page and the conversation. Configure them in Azure OpenAI Content Filters.
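For the language configuration shown earlier, one plausible use of pronunciations_en is to map what the user says to a configured language, falling back to default_short_code. The sketch below is an assumption about that behavior, not the project's implementation; `resolve_language` is a hypothetical name.

```python
# Values copied from the language configuration example.
DEFAULT_SHORT_CODE = "fr-FR"
AVAILABLE_LANGUAGES = [
    {
        "pronunciations_en": ["French", "FR", "France"],
        "short_code": "fr-FR",
        "voice": "fr-FR-DeniseNeural",
    },
    {
        "pronunciations_en": ["Chinese", "ZH", "China"],
        "short_code": "zh-CN",
        "voice": "zh-CN-XiaoqiuNeural",
    },
]


def resolve_language(spoken: str) -> dict:
    """Match a spoken language name against the configured pronunciations."""
    needle = spoken.strip().casefold()
    for language in AVAILABLE_LANGUAGES:
        names = [name.casefold() for name in language["pronunciations_en"]]
        if needle == language["short_code"].casefold() or needle in names:
            return language
    # Unknown language: fall back to the configured default.
    return next(
        language
        for language in AVAILABLE_LANGUAGES
        if language["short_code"] == DEFAULT_SHORT_CODE
    )


print(resolve_language("chinese")["voice"])
# prints: zh-CN-XiaoqiuNeural
```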

Customization of the data schema is fully supported. You can add or remove fields as needed, depending on the requirements.

By default, the schema is composed of:

- caller_email (email)
- caller_name (text)
- caller_phone (phone_number)

Values are validated to ensure the data format conforms to your schema. They can be either:

- datetime
- email
- phone_number (E164 format)
- text

Finally, an optional description can be provided. The description must be short and meaningful, because it is passed to the LLM.
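The four field types listed above could be validated along these lines. This is a sketch under stated assumptions: the function name `is_valid` is hypothetical, the email check is deliberately loose, datetimes are assumed to be ISO 8601 strings like the ones in the stored data example, and the project's own validators may be stricter.

```python
import re
from datetime import datetime

# E.164: a "+" followed by up to 15 digits, the first one non-zero.
E164_PATTERN = re.compile(r"\+[1-9]\d{1,14}")
# Loose email shape check (something@something.tld); an assumption of this sketch.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def is_valid(field_type: str, value: str) -> bool:
    """Check a claim value against one of the supported field types."""
    if field_type == "text":
        return bool(value.strip())
    if field_type == "email":
        return EMAIL_PATTERN.fullmatch(value) is not None
    if field_type == "phone_number":
        return E164_PATTERN.fullmatch(value) is not None
    if field_type == "datetime":
        try:
            datetime.fromisoformat(value)
        except ValueError:
            return False
        return True
    raise ValueError(f"Unknown field type: {field_type}")


print(is_valid("phone_number", "+33612345678"))
# prints: True
```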

Default schema, for inbound calls, is defined in the configuration:

# config.yaml
[...]

conversation:
  default_initiate:
    claim:
      - name: additional_notes
        type: text
        # description: xxx
      - name: device_info
        type: text
        # description: xxx
      - name: incident_datetime
        type: datetime
        # description: xxx

Claim schema can be customized for each call, by adding the claim field in the POST /call API call.

The objective is a description of what the bot will do during the call. It is used to give a context to the LLM. It should be short, meaningful, and written in English.

This approach is preferred over overriding the LLM prompt.

Default task, for inbound calls, is defined in the configuration:

# config.yaml
[...]

conversation:
  initiate:
    task: "Help the customer with their insurance claim. Assistant requires data from the customer to fill the claim. The latest claim data will be given. Assistant role is not over until all the relevant data is gathered."

Task can be customized for each call, by adding the task field in the POST /call API call.

Conversation options are documented in conversation.py. The options can all be overridden in the config.yaml file:

# config.yaml
[...]

conversation:
  answer_hard_timeout_sec: 180
  answer_soft_timeout_sec: 30
  callback_timeout_hour: 72
  phone_silence_timeout_sec: 1
  slow_llm_for_chat: true
  voice_recognition_retry_max: 2

To use a model compatible with the OpenAI completion API, you need to create an account and get the following information:

- API key

- Context window size

- Endpoint URL

- Model name

- Streaming capability

Then, add the following in the config.yaml file:

# config.yaml
[...]

llm:
  fast:
    mode: openai
    openai:
      api_key: xxx
      context: 16385
      endpoint: https://api.openai.com
      model: gpt-35-turbo
      streaming: true
  slow:
    mode: openai
    openai:
      api_key: xxx
      context: 128000
      endpoint: https://api.openai.com
      model: gpt-4
      streaming: true

To use Twilio for SMS, you need to create an account and get the following information:

- Account SID

- Auth Token

- Phone number

Then, add the following in the config.yaml file:

# config.yaml
[...]

sms:
  mode: twilio
  twilio:
    account_sid: xxx
    auth_token: xxx
    phone_number: "+33612345678"

Note that prompt examples contain {xxx} placeholders. These placeholders are replaced by the bot with the corresponding data. For example, {bot_name} is internally replaced by the bot name.

Be sure to write all the TTS prompts in English. This language is used as a pivot language for the conversation translation.
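The placeholder replacement described above behaves much like Python's `str.format`. The sketch below illustrates the idea with that built-in; `render_prompt` is a hypothetical helper and the bot's actual templating code may differ.

```python
def render_prompt(template: str, **values: str) -> str:
    """Replace {name} placeholders in a prompt template with the given values."""
    return template.format(**values)


hello_tpl = "Hello, I'm {bot_name}, from {bot_company}! I'm an IT support specialist."
print(render_prompt(hello_tpl, bot_name="Amélie", bot_company="Contoso"))
# prints: Hello, I'm Amélie, from Contoso! I'm an IT support specialist.
```

With `str.format`, a template that contains literal braces needs them doubled (`{{` and `}}`), and a missing value raises `KeyError`.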

# config.yaml
[...]

prompts:
  tts:
    hello_tpl: |
      Hello, I'm {bot_name}, from {bot_company}! I'm an IT support specialist.

      Here's how I work: when I'm working, you'll hear a little music; then, at the beep, it's your turn to speak. You can speak to me naturally, I'll understand.

      Examples:
      - "I've got a problem with my computer, it won't turn on".
      - "The external screen is flashing, I don't know why".

      What's your problem?
  llm:
    default_system_tpl: |
      Assistant is called {bot_name} and is in a call center for the company {bot_company} as an expert with 20 years of experience in IT service.

      # Context
      Today is {date}. Customer is calling from {phone_number}. Call center number is {bot_phone_number}.
    chat_system_tpl: |
      # Objective
      Provide internal IT support to employees. Assistant requires data from the employee to provide IT support. The assistant's role is not over until the issue is resolved or the request is fulfilled.

      # Rules
      - Answers in {default_lang}, even if the customer speaks another language
      - Cannot talk about any topic other than IT support
      - Is polite, helpful, and professional
      - Rephrase the employee's questions as statements and answer them
      - Use additional context to enhance the conversation with useful details
      - When the employee says a word and then spells out letters, this means that the word is written in the way the employee spelled it (e.g. "I work in Paris PARIS", "My name is John JOHN", "My email is Clemence CLEMENCE at gmail GMAIL dot com COM")
      - You work for {bot_company}, not someone else

      # Required employee data to be gathered by the assistant
      - Department
      - Description of the IT issue or request
      - Employee name
      - Location

      # General process to follow
      1. Gather information to know the employee's identity (e.g. name, department)
      2. Gather details about the IT issue or request to understand the situation (e.g. description, location)
      3. Provide initial troubleshooting steps or solutions
      4. Gather additional information if needed (e.g. error messages, screenshots)
      5. Be proactive and create reminders for follow-up or further assistance

      # Support status
      {claim}

      # Reminders
      {reminders}

At the time of development, no LLM framework was available to handle all of these features: streaming capability with multi-tools, backup models on availability issues, and callback mechanisms in the triggered tools. So, the OpenAI SDK is used directly and some algorithms are implemented to handle reliability.
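As an illustration of the "backup models on availability issues" idea mentioned above, here is a generic sketch of streaming with a fallback. It is not the project's algorithm: `stream_with_backup` and the two fake models are hypothetical, and using `ConnectionError` to signal unavailability is an assumption of this example.

```python
from typing import Callable, Iterable, Iterator

# A model is any callable that takes a prompt and yields response chunks.
Model = Callable[[str], Iterator[str]]


def stream_with_backup(models: Iterable[Model], prompt: str) -> Iterator[str]:
    """Stream from the first available model, trying backups in order."""
    last_error = None
    for model in models:
        emitted = False
        try:
            for chunk in model(prompt):
                emitted = True
                yield chunk
            return
        except ConnectionError as error:
            if emitted:
                # Part of the answer was already streamed to the user,
                # so switching models now would produce a mixed response.
                raise
            last_error = error
    raise RuntimeError("All models are unavailable") from last_error


def unavailable_model(prompt: str) -> Iterator[str]:
    """Fake model that always fails before producing output."""
    raise ConnectionError("model is down")
    yield ""  # Unreachable; makes this function a generator.


def backup_model(prompt: str) -> Iterator[str]:
    """Fake model that streams a fixed answer."""
    yield "Hello"
    yield " world"


print("".join(stream_with_backup([unavailable_model, backup_model], "Hi")))
# prints: Hello world
```

Falling back only before the first chunk is emitted is a design choice of this sketch: once text has been spoken to the caller, restarting on another model would repeat or contradict it.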


Similar Open Source Tools


claim-ai-phone-bot

AI-powered call center solution with Azure and OpenAI GPT. The bot can answer calls, understand the customer's request, and provide relevant information or assistance. It can also create a todo list of tasks to complete the claim, and send a report after the call. The bot is customizable, and can be used in multiple languages.

Lumos

Lumos is a Chrome extension powered by a local LLM co-pilot for browsing the web. It allows users to summarize long threads, news articles, and technical documentation. Users can ask questions about reviews and product pages. The tool requires a local Ollama server for LLM inference and embedding database. Lumos supports multimodal models and file attachments for processing text and image content. It also provides options to customize models, hosts, and content parsers. The extension can be easily accessed through keyboard shortcuts and offers tools for automatic invocation based on prompts.

tuui

TUUI is a desktop MCP client designed for accelerating AI adoption through the Model Context Protocol (MCP) and enabling cross-vendor LLM API orchestration. It is an LLM chat desktop application based on MCP, created using AI-generated components with strict syntax checks and naming conventions. The tool integrates AI tools via MCP, orchestrates LLM APIs, supports automated application testing, TypeScript, multilingual, layout management, global state management, and offers quick support through the GitHub community and official documentation.

mcp-redis

The Redis MCP Server is a natural language interface designed for agentic applications to efficiently manage and search data in Redis. It integrates seamlessly with MCP (Model Content Protocol) clients, enabling AI-driven workflows to interact with structured and unstructured data in Redis. The server supports natural language queries, seamless MCP integration, full Redis support for various data types, search and filtering capabilities, scalability, and lightweight design. It provides tools for managing data stored in Redis, such as string, hash, list, set, sorted set, pub/sub, streams, JSON, query engine, and server management. Installation can be done from PyPI or GitHub, with options for testing, development, and Docker deployment. Configuration can be via command line arguments or environment variables. Integrations include OpenAI Agents SDK, Augment, Claude Desktop, and VS Code with GitHub Copilot. Use cases include AI assistants, chatbots, data search & analytics, and event processing. Contributions are welcome under the MIT License.

sdfx

SDFX is the ultimate no-code platform for building and sharing AI apps with beautiful UI. It enables the creation of user-friendly interfaces for complex workflows by combining Comfy workflow with a UI. The tool is designed to merge the benefits of form-based UI and graph-node based UI, allowing users to create intricate graphs with a high-level UI overlay. SDFX is fully compatible with ComfyUI, abstracting the need for installing ComfyUI. It offers features like animated graph navigation, node bookmarks, UI debugger, custom nodes manager, app and template export, image and mask editor, and more. The tool compiles as a native app or web app, making it easy to maintain and add new features.

memobase

Memobase is a user profile-based memory system designed to enhance Generative AI applications by enabling them to remember, understand, and evolve with users. It provides structured user profiles, scalable profiling, easy integration with existing LLM stacks, batch processing for speed, and is production-ready. Users can manage users, insert data, get memory profiles, and track user preferences and behaviors. Memobase is ideal for applications that require user analysis, tracking, and personalized interactions.

leva

Leva is a Ruby on Rails framework designed for evaluating Language Models (LLMs) using ActiveRecord datasets on production models. It offers a flexible structure for creating experiments, managing datasets, and implementing various evaluation logic on production data with security in mind. Users can set up datasets, implement runs and evals, run experiments with different configurations, use prompts, and analyze results. Leva's components include classes like Leva, Leva::BaseRun, and Leva::BaseEval, as well as models like Leva::Dataset, Leva::DatasetRecord, Leva::Experiment, Leva::RunnerResult, Leva::EvaluationResult, and Leva::Prompt. The tool aims to provide a comprehensive solution for evaluating language models efficiently and securely.

odoo-expert

RAG-Powered Odoo Documentation Assistant is a comprehensive documentation processing and chat system that converts Odoo's documentation to a searchable knowledge base with an AI-powered chat interface. It supports multiple Odoo versions (16.0, 17.0, 18.0) and provides semantic search capabilities powered by OpenAI embeddings. The tool automates the conversion of RST to Markdown, offers real-time semantic search, context-aware AI-powered chat responses, and multi-version support. It includes a Streamlit-based web UI, REST API for programmatic access, and a CLI for document processing and chat. The system operates through a pipeline of data processing steps and an interface layer for UI and API access to the knowledge base.

basic-memory

Basic Memory is a tool that enables users to build persistent knowledge through natural conversations with Large Language Models (LLMs) like Claude. It uses the Model Context Protocol (MCP) to allow compatible LLMs to read and write to a local knowledge base stored in simple Markdown files on the user's computer. The tool facilitates creating structured notes during conversations, maintaining a semantic knowledge graph, and keeping all data local and under user control. Basic Memory aims to address the limitations of ephemeral LLM interactions by providing a structured, bi-directional, and locally stored knowledge management solution.

invariant

Invariant Analyzer is an open-source scanner designed for LLM-based AI agents to find bugs, vulnerabilities, and security threats. It scans agent execution traces to identify issues like looping behavior, data leaks, prompt injections, and unsafe code execution. The tool offers a library of built-in checkers, an expressive policy language, data flow analysis, real-time monitoring, and extensible architecture for custom checkers. It helps developers debug AI agents, scan for security violations, and prevent security issues and data breaches during runtime. The analyzer leverages deep contextual understanding and a purpose-built rule matching engine for security policy enforcement.

bot-on-anything

The 'bot-on-anything' repository allows developers to integrate various AI models into messaging applications, enabling the creation of intelligent chatbots. By configuring the connections between models and applications, developers can easily switch between multiple channels within a project. The architecture is highly scalable, allowing the reuse of algorithmic capabilities for each new application and model integration. Supported models include ChatGPT, GPT-3.0, New Bing, and Google Bard, while supported applications range from terminals and web platforms to messaging apps like WeChat, Telegram, QQ, and more. The repository provides detailed instructions for setting up the environment, configuring the models and channels, and running the chatbot for various tasks across different messaging platforms.

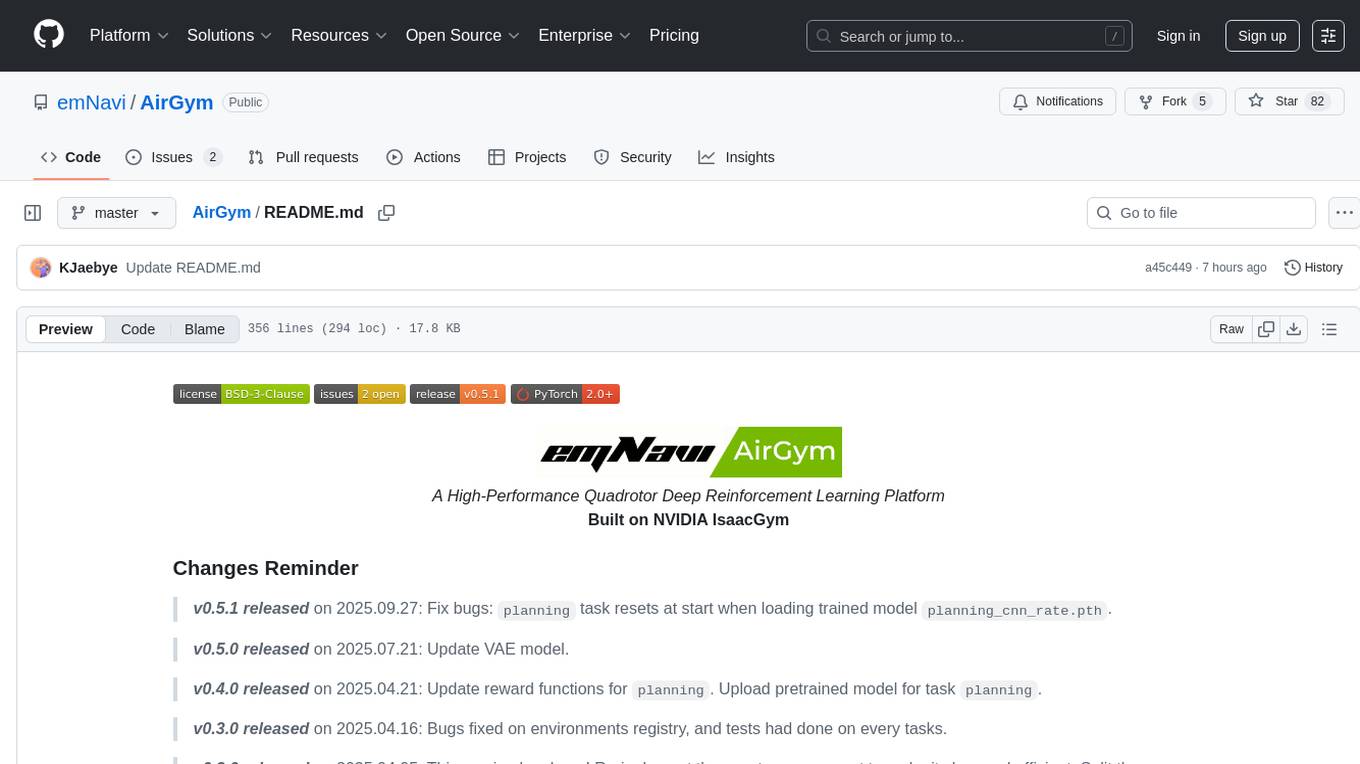

AirGym

AirGym is an open source Python quadrotor simulator based on IsaacGym, providing a high-fidelity dynamics and Deep Reinforcement Learning (DRL) framework for quadrotor robot learning research. It offers a lightweight and customizable platform with strict alignment with PX4 logic, multiple control modes, and Sim-to-Real toolkits. Users can perform tasks such as Hovering, Balloon, Tracking, Avoid, and Planning, with the ability to create customized environments and tasks. The tool also supports training from scratch, visual encoding approaches, playing and testing of trained models, and customization of new tasks and assets.

promptwright

Promptwright is a Python library designed for generating large synthetic datasets using local LLM and various LLM service providers. It offers flexible interfaces for generating prompt-led synthetic datasets. The library supports multiple providers, configurable instructions and prompts, YAML configuration, command line interface, push to Hugging Face Hub, and system message control. Users can define generation tasks using YAML configuration files or programmatically using Python code. Promptwright integrates with LiteLLM for LLM providers and supports automatic dataset upload to Hugging Face Hub. The library is not responsible for the content generated by models and advises users to review the data before using it in production environments.

promptwright

Promptwright is a Python library designed for generating large synthetic datasets using a local LLM and various LLM service providers. It offers flexible interfaces for generating prompt-led synthetic datasets. The library supports multiple providers, configurable instructions and prompts, YAML configuration for tasks, command line interface for running tasks, push to Hugging Face Hub for dataset upload, and system message control. Users can define generation tasks using YAML configuration or Python code. Promptwright integrates with LiteLLM to interface with LLM providers and supports automatic dataset upload to Hugging Face Hub.

oramacore

OramaCore is a database designed for AI projects, answer engines, copilots, and search functionalities. It offers features such as a full-text search engine, vector database, LLM interface, and various utilities. The tool is currently under active development and not recommended for production use due to potential API changes. OramaCore aims to provide a comprehensive solution for managing data and enabling advanced search capabilities in AI applications.

For similar tasks


PurpleLlama

Purple Llama is an umbrella project that aims to provide tools and evaluations to support responsible development and usage of generative AI models. It encompasses components for cybersecurity and input/output safeguards, with plans to expand in the future. The project emphasizes a collaborative approach, borrowing the concept of purple teaming from cybersecurity, to address potential risks and challenges posed by generative AI. Components within Purple Llama are licensed permissively to foster community collaboration and standardize the development of trust and safety tools for generative AI.

samurai

Samurai Telegram Bot is a simple yet effective moderator bot for Telegram. It provides features such as reporting functionality, profanity filtering in English and Russian, logging system via private channel, spam detection AI, and easy extensibility of bot code and functions. Please note that the code is not polished and is provided 'as is', with room for improvements.

chatgpt-cli

ChatGPT CLI provides a powerful command-line interface for seamless interaction with ChatGPT models via OpenAI and Azure. It features streaming capabilities, extensive configuration options, and supports various modes like streaming, query, and interactive mode. Users can manage thread-based context, sliding window history, and provide custom context from any source. The CLI also offers model and thread listing, advanced configuration options, and supports GPT-4, GPT-3.5-turbo, and Perplexity's models. Installation is available via Homebrew or direct download, and users can configure settings through default values, a config.yaml file, or environment variables.

jaison-core

J.A.I.son is a Python project designed for generating responses using various components and applications. It requires specific plugins like STT, T2T, TTSG, and TTSC to function properly. Users can customize responses, voice, and configurations. The project provides a Discord bot, Twitch events and chat integration, and VTube Studio Animation Hotkeyer. It also offers features for managing conversation history, training AI models, and monitoring conversations.

swift-chat

SwiftChat is a fast and responsive AI chat application developed with React Native and powered by Amazon Bedrock. It offers real-time streaming conversations, AI image generation, multimodal support, conversation history management, and cross-platform compatibility across Android, iOS, and macOS. The app supports multiple AI providers, including Amazon Bedrock, Ollama, DeepSeek, and OpenAI, and features a customizable system prompt assistant. With a minimalist design philosophy and robust privacy protection, SwiftChat delivers a seamless chat experience with features like rich Markdown support, comprehensive multimodal analysis, a creative image suite, and quick access tools. The app prioritizes speed in launch, request, render, and storage. SwiftChat also emphasizes privacy and security through encrypted API key storage, minimal permission requirements, local-only data storage, and a privacy-first approach.

chatless

Chatless is a modern AI chat desktop application built on Tauri and Next.js. It supports multiple AI providers, can connect to local Ollama models, and offers document parsing and knowledge base functions. All data is stored locally to protect user privacy. The application is lightweight, simple, starts quickly, and consumes minimal resources.

For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aim to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our overview of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped, resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base Python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobufs and Python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, an established benchmark, evaluation and analysis of trustworthiness for mainstream LLMs, and discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm Python package to help you assess the trustworthiness of your LLM more quickly. For more details about TrustLLM, please refer to the project website.

AI-YinMei

AI-YinMei is a development tool for AI virtual anchors (VTubers), in a version for NVIDIA GPUs. It supports knowledge base chat through fastgpt, with a complete LLM stack of [fastgpt] + [one-api] + [Xinference]. It connects to bilibili live streams to reply to bullet comments and to greet viewers entering the stream. Speech synthesis is available through Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS, and expression control through Vtuber Studio. Images are generated with stable-diffusion-webui and output to the OBS live stream, with NSFW image detection via public-NSFW-y-distinguish. Search and image search use duckduckgo (requires a VPN), and image search can also use Baidu image search (no VPN required). Other features include an AI reply chat box [html plug-in], AI singing via Auto-Convert-Music, a playlist [html plug-in], dancing, expression video playback, head-patting and gift-smashing actions, automatic dancing when singing starts, an automatically looping sway action during chat and singing, multi-scene switching, background music switching, automatic day and night scene switching, and open-ended singing and painting, where the AI automatically judges the content.