langserve

LangServe 🦜️🏓

Stars: 1874

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

README:

LangServe helps developers

deploy LangChain runnables and chains

as a REST API.

This library is integrated with FastAPI and uses pydantic for data validation.

In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

- Input and Output schemas automatically inferred from your LangChain object, and enforced on every API call, with rich error messages

- API docs page with JSONSchema and Swagger (insert example link)

- Efficient

/invoke,/batchand/streamendpoints with support for many concurrent requests on a single server -

/stream_logendpoint for streaming all (or some) intermediate steps from your chain/agent -

new as of 0.0.40, supports

/stream_eventsto make it easier to stream without needing to parse the output of/stream_log. - Playground page at

/playground/with streaming output and intermediate steps - Built-in (optional) tracing to LangSmith, just add your API key (see Instructions)

- All built with battle-tested open-source Python libraries like FastAPI, Pydantic, uvloop and asyncio.

- Use the client SDK to call a LangServe server as if it was a Runnable running locally (or call the HTTP API directly)

- LangServe Hub

LangServe is designed to primarily deploy simple Runnables and wok with well-known primitives in langchain-core.

If you need a deployment option for LangGraph, you should instead be looking at LangGraph Cloud (beta) which will be better suited for deploying LangGraph applications.

- Client callbacks are not yet supported for events that originate on the server

- Versions of LangServe <= 0.2.0, will not generate OpenAPI docs properly when using Pydantic V2 as Fast API does not support mixing pydantic v1 and v2 namespaces. See section below for more details. Either upgrade to LangServe>=0.3.0 or downgrade Pydantic to pydantic 1.

- Vulnerability in Versions 0.0.13 - 0.0.15 -- playground endpoint allows accessing arbitrary files on server. Resolved in 0.0.16.

For both client and server:

pip install "langserve[all]"or pip install "langserve[client]" for client code,

and pip install "langserve[server]" for server code.

Use the LangChain CLI to bootstrap a LangServe project quickly.

To use the langchain CLI make sure that you have a recent version of langchain-cli

installed. You can install it with pip install -U langchain-cli.

Note: We use poetry for dependency management. Please follow poetry doc to learn more about it.

langchain app new my-appadd_routes(app. NotImplemented)3. Use poetry to add 3rd party packages (e.g., langchain-openai, langchain-anthropic, langchain-mistral etc).

poetry add [package-name] // e.g `poetry add langchain-openai`export OPENAI_API_KEY="sk-..."poetry run langchain serve --port=8100Get your LangServe instance started quickly with LangChain Templates.

For more examples, see the templates index or the examples directory.

| Description | Links |

|---|---|

| LLMs Minimal example that reserves OpenAI and Anthropic chat models. Uses async, supports batching and streaming. | server, client |

| Retriever Simple server that exposes a retriever as a runnable. | server, client |

| Conversational Retriever A Conversational Retriever exposed via LangServe | server, client |

| Agent without conversation history based on OpenAI tools | server, client |

| Agent with conversation history based on OpenAI tools | server, client |

RunnableWithMessageHistory to implement chat persisted on backend, keyed off a session_id supplied by client. |

server, client |

RunnableWithMessageHistory to implement chat persisted on backend, keyed off a conversation_id supplied by client, and user_id (see Auth for implementing user_id properly). |

server, client |

| Configurable Runnable to create a retriever that supports run time configuration of the index name. | server, client |

| Configurable Runnable that shows configurable fields and configurable alternatives. | server, client |

APIHandler Shows how to use APIHandler instead of add_routes. This provides more flexibility for developers to define endpoints. Works well with all FastAPI patterns, but takes a bit more effort. |

server |

| LCEL Example Example that uses LCEL to manipulate a dictionary input. | server, client |

Auth with add_routes: Simple authentication that can be applied across all endpoints associated with app. (Not useful on its own for implementing per user logic.) |

server |

Auth with add_routes: Simple authentication mechanism based on path dependencies. (No useful on its own for implementing per user logic.) |

server |

Auth with add_routes: Implement per user logic and auth for endpoints that use per request config modifier. (Note: At the moment, does not integrate with OpenAPI docs.) |

server, client |

Auth with APIHandler: Implement per user logic and auth that shows how to search only within user owned documents. |

server, client |

| Widgets Different widgets that can be used with playground (file upload and chat) | server |

| Widgets File upload widget used for LangServe playground. | server, client |

Here's a server that deploys an OpenAI chat model, an Anthropic chat model, and a chain that uses the Anthropic model to tell a joke about a topic.

#!/usr/bin/env python

from fastapi import FastAPI

from langchain.prompts import ChatPromptTemplate

from langchain.chat_models import ChatAnthropic, ChatOpenAI

from langserve import add_routes

app = FastAPI(

title="LangChain Server",

version="1.0",

description="A simple api server using Langchain's Runnable interfaces",

)

add_routes(

app,

ChatOpenAI(model="gpt-3.5-turbo-0125"),

path="/openai",

)

add_routes(

app,

ChatAnthropic(model="claude-3-haiku-20240307"),

path="/anthropic",

)

model = ChatAnthropic(model="claude-3-haiku-20240307")

prompt = ChatPromptTemplate.from_template("tell me a joke about {topic}")

add_routes(

app,

prompt | model,

path="/joke",

)

if __name__ == "__main__":

import uvicorn

uvicorn.run(app, host="localhost", port=8000)If you intend to call your endpoint from the browser, you will also need to set CORS headers. You can use FastAPI's built-in middleware for that:

from fastapi.middleware.cors import CORSMiddleware

# Set all CORS enabled origins

app.add_middleware(

CORSMiddleware,

allow_origins=["*"],

allow_credentials=True,

allow_methods=["*"],

allow_headers=["*"],

expose_headers=["*"],

)If you've deployed the server above, you can view the generated OpenAPI docs using:

⚠️ If using LangServe <= 0.2.0 and pydantic v2, docs will not be generated for invoke, batch, stream, stream_log. See Pydantic section below for more details. To resolve please upgrade to LangServe 0.3.0.

curl localhost:8000/docsmake sure to add the /docs suffix.

⚠️ Index page/is not defined by design, socurl localhost:8000or visiting the URL will return a 404. If you want content at/define an endpoint@app.get("/").

Python SDK

from langchain.schema import SystemMessage, HumanMessage

from langchain.prompts import ChatPromptTemplate

from langchain.schema.runnable import RunnableMap

from langserve import RemoteRunnable

openai = RemoteRunnable("http://localhost:8000/openai/")

anthropic = RemoteRunnable("http://localhost:8000/anthropic/")

joke_chain = RemoteRunnable("http://localhost:8000/joke/")

joke_chain.invoke({"topic": "parrots"})

# or async

await joke_chain.ainvoke({"topic": "parrots"})

prompt = [

SystemMessage(content='Act like either a cat or a parrot.'),

HumanMessage(content='Hello!')

]

# Supports astream

async for msg in anthropic.astream(prompt):

print(msg, end="", flush=True)

prompt = ChatPromptTemplate.from_messages(

[("system", "Tell me a long story about {topic}")]

)

# Can define custom chains

chain = prompt | RunnableMap({

"openai": openai,

"anthropic": anthropic,

})

chain.batch([{"topic": "parrots"}, {"topic": "cats"}])In TypeScript (requires LangChain.js version 0.0.166 or later):

import { RemoteRunnable } from "@langchain/core/runnables/remote";

const chain = new RemoteRunnable({

url: `http://localhost:8000/joke/`,

});

const result = await chain.invoke({

topic: "cats",

});Python using requests:

import requests

response = requests.post(

"http://localhost:8000/joke/invoke",

json={'input': {'topic': 'cats'}}

)

response.json()You can also use curl:

curl --location --request POST 'http://localhost:8000/joke/invoke' \

--header 'Content-Type: application/json' \

--data-raw '{

"input": {

"topic": "cats"

}

}'The following code:

...

add_routes(

app,

runnable,

path="/my_runnable",

)adds of these endpoints to the server:

-

POST /my_runnable/invoke- invoke the runnable on a single input -

POST /my_runnable/batch- invoke the runnable on a batch of inputs -

POST /my_runnable/stream- invoke on a single input and stream the output -

POST /my_runnable/stream_log- invoke on a single input and stream the output, including output of intermediate steps as it's generated -

POST /my_runnable/astream_events- invoke on a single input and stream events as they are generated, including from intermediate steps. -

GET /my_runnable/input_schema- json schema for input to the runnable -

GET /my_runnable/output_schema- json schema for output of the runnable -

GET /my_runnable/config_schema- json schema for config of the runnable

These endpoints match the LangChain Expression Language interface -- please reference this documentation for more details.

You can find a playground page for your runnable at /my_runnable/playground/. This

exposes a simple UI

to configure

and invoke your runnable with streaming output and intermediate steps.

The playground supports widgets and can be used to test your runnable with different inputs. See the widgets section below for more details.

In addition, for configurable runnables, the playground will allow you to configure the runnable and share a link with the configuration:

LangServe also supports a chat-focused playground that opt into and use under /my_runnable/playground/.

Unlike the general playground, only certain types of runnables are supported - the runnable's input schema must

be a dict with either:

- a single key, and that key's value must be a list of chat messages.

- two keys, one whose value is a list of messages, and the other representing the most recent message.

We recommend you use the first format.

The runnable must also return either an AIMessage or a string.

To enable it, you must set playground_type="chat", when adding your route. Here's an example:

# Declare a chain

prompt = ChatPromptTemplate.from_messages(

[

("system", "You are a helpful, professional assistant named Cob."),

MessagesPlaceholder(variable_name="messages"),

]

)

chain = prompt | ChatAnthropic(model="claude-2.1")

class InputChat(BaseModel):

"""Input for the chat endpoint."""

messages: List[Union[HumanMessage, AIMessage, SystemMessage]] = Field(

...,

description="The chat messages representing the current conversation.",

)

add_routes(

app,

chain.with_types(input_type=InputChat),

enable_feedback_endpoint=True,

enable_public_trace_link_endpoint=True,

playground_type="chat",

)If you are using LangSmith, you can also set enable_feedback_endpoint=True on your route to enable thumbs-up/thumbs-down buttons

after each message, and enable_public_trace_link_endpoint=True to add a button that creates a public traces for runs.

Note that you will also need to set the following environment variables:

export LANGCHAIN_TRACING_V2="true"

export LANGCHAIN_PROJECT="YOUR_PROJECT_NAME"

export LANGCHAIN_API_KEY="YOUR_API_KEY"Here's an example with the above two options turned on:

Note: If you enable public trace links, the internals of your chain will be exposed. We recommend only using this setting for demos or testing.

LangServe works with both Runnables (constructed

via LangChain Expression Language)

and legacy chains (inheriting from Chain).

However, some of the input schemas for legacy chains may be incomplete/incorrect,

leading to errors.

This can be fixed by updating the input_schema property of those chains in LangChain.

If you encounter any errors, please open an issue on THIS repo, and we will work to

address it.

You can deploy to AWS using the AWS Copilot CLI

copilot init --app [application-name] --name [service-name] --type 'Load Balanced Web Service' --dockerfile './Dockerfile' --deployClick here to learn more.

You can deploy to Azure using Azure Container Apps (Serverless):

az containerapp up --name [container-app-name] --source . --resource-group [resource-group-name] --environment [environment-name] --ingress external --target-port 8001 --env-vars=OPENAI_API_KEY=your_key

You can find more info here

You can deploy to GCP Cloud Run using the following command:

gcloud run deploy [your-service-name] --source . --port 8001 --allow-unauthenticated --region us-central1 --set-env-vars=OPENAI_API_KEY=your_key

LangServe provides support for Pydantic 2 with some limitations.

- OpenAPI docs will not be generated for invoke/batch/stream/stream_log when using

Pydantic V2. Fast API does not support [mixing pydantic v1 and v2 namespaces]. To fix this, use

pip install pydantic==1.10.17. - LangChain uses the v1 namespace in Pydantic v2. Please read the following guidelines to ensure compatibility with LangChain

Except for these limitations, we expect the API endpoints, the playground and any other features to work as expected.

If you need to add authentication to your server, please read Fast API's documentation about dependencies and security.

The below examples show how to wire up authentication logic LangServe endpoints using FastAPI primitives.

You are responsible for providing the actual authentication logic, the users table etc.

If you're not sure what you're doing, you could try using an existing solution Auth0.

If you're using add_routes, see

examples here.

| Description | Links |

|---|---|

Auth with add_routes: Simple authentication that can be applied across all endpoints associated with app. (Not useful on its own for implementing per user logic.) |

server |

Auth with add_routes: Simple authentication mechanism based on path dependencies. (No useful on its own for implementing per user logic.) |

server |

Auth with add_routes: Implement per user logic and auth for endpoints that use per request config modifier. (Note: At the moment, does not integrate with OpenAPI docs.) |

server, client |

Alternatively, you can use FastAPI's middleware.

Using global dependencies and path dependencies has the advantage that auth will be properly supported in the OpenAPI docs page, but these are not sufficient for implement per user logic (e.g., making an application that can search only within user owned documents).

If you need to implement per user logic, you can use the per_req_config_modifier or APIHandler (below) to implement this logic.

Per User

If you need authorization or logic that is user dependent,

specify per_req_config_modifier when using add_routes. Use a callable receives the

raw Request object and can extract relevant information from it for authentication and

authorization purposes.

If you feel comfortable with FastAPI and python, you can use LangServe's APIHandler.

| Description | Links |

|---|---|

Auth with APIHandler: Implement per user logic and auth that shows how to search only within user owned documents. |

server, client |

APIHandler Shows how to use APIHandler instead of add_routes. This provides more flexibility for developers to define endpoints. Works well with all FastAPI patterns, but takes a bit more effort. |

server, client |

It's a bit more work, but gives you complete control over the endpoint definitions, so you can do whatever custom logic you need for auth.

LLM applications often deal with files. There are different architectures that can be made to implement file processing; at a high level:

- The file may be uploaded to the server via a dedicated endpoint and processed using a separate endpoint

- The file may be uploaded by either value (bytes of file) or reference (e.g., s3 url to file content)

- The processing endpoint may be blocking or non-blocking

- If significant processing is required, the processing may be offloaded to a dedicated process pool

You should determine what is the appropriate architecture for your application.

Currently, to upload files by value to a runnable, use base64 encoding for the

file (multipart/form-data is not supported yet).

Here's an example that shows how to use base64 encoding to send a file to a remote runnable.

Remember, you can always upload files by reference (e.g., s3 url) or upload them as multipart/form-data to a dedicated endpoint.

Input and Output types are defined on all runnables.

You can access them via the input_schema and output_schema properties.

LangServe uses these types for validation and documentation.

If you want to override the default inferred types, you can use the with_types method.

Here's a toy example to illustrate the idea:

from typing import Any

from fastapi import FastAPI

from langchain.schema.runnable import RunnableLambda

app = FastAPI()

def func(x: Any) -> int:

"""Mistyped function that should accept an int but accepts anything."""

return x + 1

runnable = RunnableLambda(func).with_types(

input_type=int,

)

add_routes(app, runnable)Inherit from CustomUserType if you want the data to de-serialize into a

pydantic model rather than the equivalent dict representation.

At the moment, this type only works server side and is used to specify desired decoding behavior. If inheriting from this type the server will keep the decoded type as a pydantic model instead of converting it into a dict.

from fastapi import FastAPI

from langchain.schema.runnable import RunnableLambda

from langserve import add_routes

from langserve.schema import CustomUserType

app = FastAPI()

class Foo(CustomUserType):

bar: int

def func(foo: Foo) -> int:

"""Sample function that expects a Foo type which is a pydantic model"""

assert isinstance(foo, Foo)

return foo.bar

# Note that the input and output type are automatically inferred!

# You do not need to specify them.

# runnable = RunnableLambda(func).with_types( # <-- Not needed in this case

# input_type=Foo,

# output_type=int,

#

add_routes(app, RunnableLambda(func), path="/foo")The playground allows you to define custom widgets for your runnable from the backend.

Here are a few examples:

| Description | Links |

|---|---|

| Widgets Different widgets that can be used with playground (file upload and chat) | server, client |

| Widgets File upload widget used for LangServe playground. | server, client |

- A widget is specified at the field level and shipped as part of the JSON schema of the input type

- A widget must contain a key called

typewith the value being one of a well known list of widgets - Other widget keys will be associated with values that describe paths in a JSON object

type JsonPath = number | string | (number | string)[];

type NameSpacedPath = { title: string; path: JsonPath }; // Using title to mimick json schema, but can use namespace

type OneOfPath = { oneOf: JsonPath[] };

type Widget = {

type: string; // Some well known type (e.g., base64file, chat etc.)

[key: string]: JsonPath | NameSpacedPath | OneOfPath;

};There are only two widgets that the user can specify manually right now:

- File Upload Widget

- Chat History Widget

See below more information about these widgets.

All other widgets on the playground UI are created and managed automatically by the UI based on the config schema of the Runnable. When you create Configurable Runnables, the playground should create appropriate widgets for you to control the behavior.

Allows creation of a file upload input in the UI playground for files that are uploaded as base64 encoded strings. Here's the full example.

Snippet:

try:

from pydantic.v1 import Field

except ImportError:

from pydantic import Field

from langserve import CustomUserType

# ATTENTION: Inherit from CustomUserType instead of BaseModel otherwise

# the server will decode it into a dict instead of a pydantic model.

class FileProcessingRequest(CustomUserType):

"""Request including a base64 encoded file."""

# The extra field is used to specify a widget for the playground UI.

file: str = Field(..., extra={"widget": {"type": "base64file"}})

num_chars: int = 100Example widget:

Look at the widget example.

To define a chat widget, make sure that you pass "type": "chat".

- "input" is JSONPath to the field in the Request that has the new input message.

- "output" is JSONPath to the field in the Response that has new output message(s).

- Don't specify these fields if the entire input or output should be used as they are ( e.g., if the output is a list of chat messages.)

Here's a snippet:

class ChatHistory(CustomUserType):

chat_history: List[Tuple[str, str]] = Field(

...,

examples=[[("human input", "ai response")]],

extra={"widget": {"type": "chat", "input": "question", "output": "answer"}},

)

question: str

def _format_to_messages(input: ChatHistory) -> List[BaseMessage]:

"""Format the input to a list of messages."""

history = input.chat_history

user_input = input.question

messages = []

for human, ai in history:

messages.append(HumanMessage(content=human))

messages.append(AIMessage(content=ai))

messages.append(HumanMessage(content=user_input))

return messages

model = ChatOpenAI()

chat_model = RunnableParallel({"answer": (RunnableLambda(_format_to_messages) | model)})

add_routes(

app,

chat_model.with_types(input_type=ChatHistory),

config_keys=["configurable"],

path="/chat",

)Example widget:

You can also specify a list of messages as your a parameter directly, as shown in this snippet:

prompt = ChatPromptTemplate.from_messages(

[

("system", "You are a helpful assisstant named Cob."),

MessagesPlaceholder(variable_name="messages"),

]

)

chain = prompt | ChatAnthropic(model="claude-2.1")

class MessageListInput(BaseModel):

"""Input for the chat endpoint."""

messages: List[Union[HumanMessage, AIMessage]] = Field(

...,

description="The chat messages representing the current conversation.",

extra={"widget": {"type": "chat", "input": "messages"}},

)

add_routes(

app,

chain.with_types(input_type=MessageListInput),

path="/chat",

)See this sample file for an example.

You can enable / disable which endpoints are exposed when adding routes for a given chain.

Use enabled_endpoints if you want to make sure to never get a new endpoint when upgrading langserve to a newer

verison.

Enable: The code below will only enable invoke, batch and the

corresponding config_hash endpoint variants.

add_routes(app, chain, enabled_endpoints=["invoke", "batch", "config_hashes"], path="/mychain")Disable: The code below will disable the playground for the chain

add_routes(app, chain, disabled_endpoints=["playground"], path="/mychain")For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for langserve

Similar Open Source Tools

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

bedrock-claude-chat

This repository is a sample chatbot using the Anthropic company's LLM Claude, one of the foundational models provided by Amazon Bedrock for generative AI. It allows users to have basic conversations with the chatbot, personalize it with their own instructions and external knowledge, and analyze usage for each user/bot on the administrator dashboard. The chatbot supports various languages, including English, Japanese, Korean, Chinese, French, German, and Spanish. Deployment is straightforward and can be done via the command line or by using AWS CDK. The architecture is built on AWS managed services, eliminating the need for infrastructure management and ensuring scalability, reliability, and security.

suno-api

Suno AI API is an open-source project that allows developers to integrate the music generation capabilities of Suno.ai into their own applications. The API provides a simple and convenient way to generate music, lyrics, and other audio content using Suno.ai's powerful AI models. With Suno AI API, developers can easily add music generation functionality to their apps, websites, and other projects.

june

june-va is a local voice chatbot that combines Ollama for language model capabilities, Hugging Face Transformers for speech recognition, and the Coqui TTS Toolkit for text-to-speech synthesis. It provides a flexible, privacy-focused solution for voice-assisted interactions on your local machine, ensuring that no data is sent to external servers. The tool supports various interaction modes including text input/output, voice input/text output, text input/audio output, and voice input/audio output. Users can customize the tool's behavior with a JSON configuration file and utilize voice conversion features for voice cloning. The application can be further customized using a configuration file with attributes for language model, speech-to-text model, and text-to-speech model configurations.

simpleAI

SimpleAI is a self-hosted alternative to the not-so-open AI API, focused on replicating main endpoints for LLM such as text completion, chat, edits, and embeddings. It allows quick experimentation with different models, creating benchmarks, and handling specific use cases without relying on external services. Users can integrate and declare models through gRPC, query endpoints using Swagger UI or API, and resolve common issues like CORS with FastAPI middleware. The project is open for contributions and welcomes PRs, issues, documentation, and more.

minja

Minja is a minimalistic C++ Jinja templating engine designed specifically for integration with C++ LLM projects, such as llama.cpp or gemma.cpp. It is not a general-purpose tool but focuses on providing a limited set of filters, tests, and language features tailored for chat templates. The library is header-only, requires C++17, and depends only on nlohmann::json. Minja aims to keep the codebase small, easy to understand, and offers decent performance compared to Python. Users should be cautious when using Minja due to potential security risks, and it is not intended for producing HTML or JavaScript output.

memobase

Memobase is a user profile-based memory system designed to enhance Generative AI applications by enabling them to remember, understand, and evolve with users. It provides structured user profiles, scalable profiling, easy integration with existing LLM stacks, batch processing for speed, and is production-ready. Users can manage users, insert data, get memory profiles, and track user preferences and behaviors. Memobase is ideal for applications that require user analysis, tracking, and personalized interactions.

hydraai

Generate React components on-the-fly at runtime using AI. Register your components, and let Hydra choose when to show them in your App. Hydra development is still early, and patterns for different types of components and apps are still being developed. Join the discord to chat with the developers. Expects to be used in a NextJS project. Components that have function props do not work.

req_llm

ReqLLM is a Req-based library for LLM interactions, offering a unified interface to AI providers through a plugin-based architecture. It brings composability and middleware advantages to LLM interactions, with features like auto-synced providers/models, typed data structures, ergonomic helpers, streaming capabilities, usage & cost extraction, and a plugin-based provider system. Users can easily generate text, structured data, embeddings, and track usage costs. The tool supports various AI providers like Anthropic, OpenAI, Groq, Google, and xAI, and allows for easy addition of new providers. ReqLLM also provides API key management, detailed documentation, and a roadmap for future enhancements.

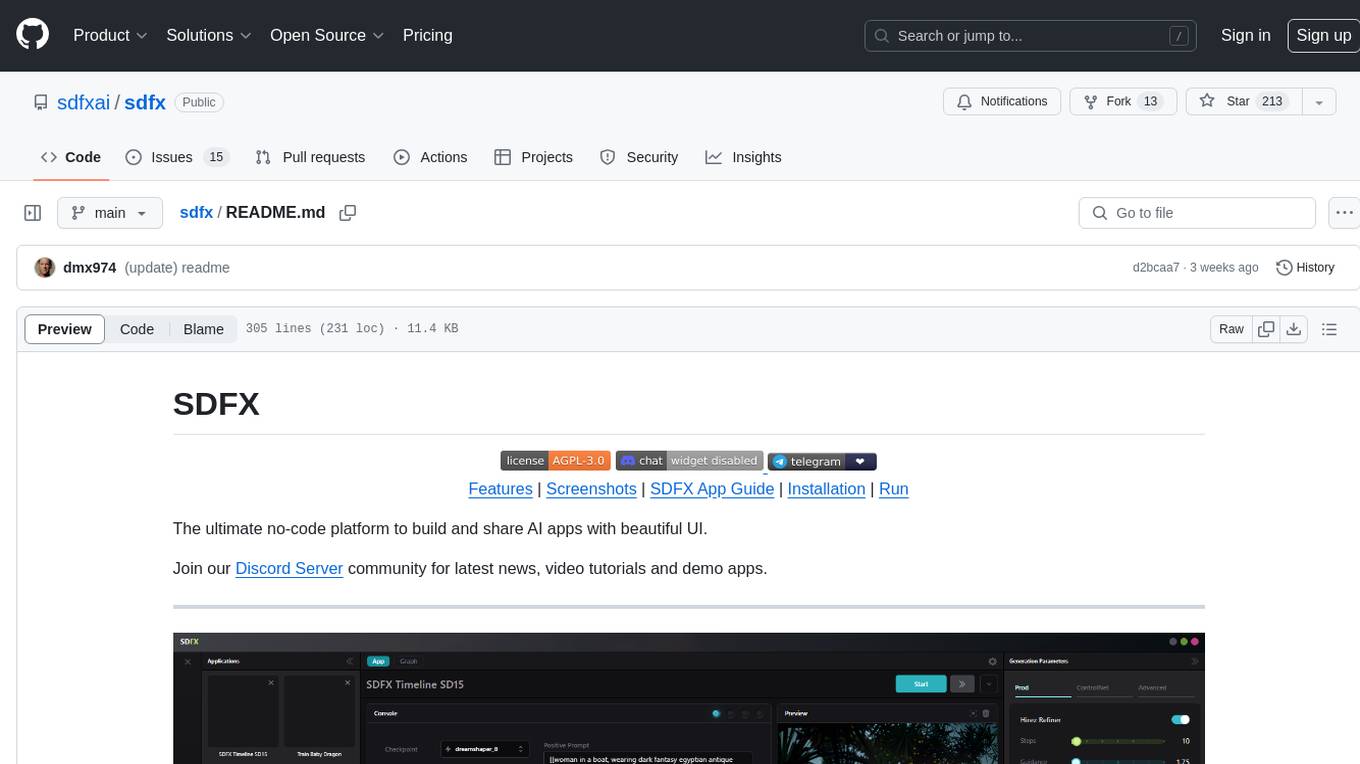

sdfx

SDFX is the ultimate no-code platform for building and sharing AI apps with beautiful UI. It enables the creation of user-friendly interfaces for complex workflows by combining Comfy workflow with a UI. The tool is designed to merge the benefits of form-based UI and graph-node based UI, allowing users to create intricate graphs with a high-level UI overlay. SDFX is fully compatible with ComfyUI, abstracting the need for installing ComfyUI. It offers features like animated graph navigation, node bookmarks, UI debugger, custom nodes manager, app and template export, image and mask editor, and more. The tool compiles as a native app or web app, making it easy to maintain and add new features.

openai

An open-source client package that allows developers to easily integrate the power of OpenAI's state-of-the-art AI models into their Dart/Flutter applications. The library provides simple and intuitive methods for making requests to OpenAI's various APIs, including the GPT-3 language model, DALL-E image generation, and more. It is designed to be lightweight and easy to use, enabling developers to focus on building their applications without worrying about the complexities of dealing with HTTP requests. Note that this is an unofficial library as OpenAI does not have an official Dart library.

HippoRAG

HippoRAG is a novel retrieval augmented generation (RAG) framework inspired by the neurobiology of human long-term memory that enables Large Language Models (LLMs) to continuously integrate knowledge across external documents. It provides RAG systems with capabilities that usually require a costly and high-latency iterative LLM pipeline for only a fraction of the computational cost. The tool facilitates setting up retrieval corpus, indexing, and retrieval processes for LLMs, offering flexibility in choosing different online LLM APIs or offline LLM deployments through LangChain integration. Users can run retrieval on pre-defined queries or integrate directly with the HippoRAG API. The tool also supports reproducibility of experiments and provides data, baselines, and hyperparameter tuning scripts for research purposes.

sieves

sieves is a library for zero- and few-shot NLP tasks with structured generation, enabling rapid prototyping of NLP applications without the need for training. It simplifies NLP prototyping by bundling capabilities into a single library, providing zero- and few-shot model support, a unified interface for structured generation, built-in tasks for common NLP operations, easy extendability, document-based pipeline architecture, caching to prevent redundant model calls, and more. The tool draws inspiration from spaCy and spacy-llm, offering features like immediate inference, observable pipelines, integrated tools for document parsing and text chunking, ready-to-use tasks such as classification, summarization, translation, and more, persistence for saving and loading pipelines, distillation for specialized model creation, and caching to optimize performance.

Gemini-API

Gemini-API is a reverse-engineered asynchronous Python wrapper for Google Gemini web app (formerly Bard). It provides features like persistent cookies, ImageFx support, extension support, classified outputs, official flavor, and asynchronous operation. The tool allows users to generate contents from text or images, have conversations across multiple turns, retrieve images in response, generate images with ImageFx, save images to local files, use Gemini extensions, check and switch reply candidates, and control log level.

gateway

Adaline Gateway is a fully local production-grade Super SDK that offers a unified interface for calling over 200+ LLMs. It is production-ready, supports batching, retries, caching, callbacks, and OpenTelemetry. Users can create custom plugins and providers for seamless integration with their infrastructure.

lollms_legacy

Lord of Large Language Models (LoLLMs) Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications. The tool supports multiple personalities for generating text with different styles and tones, real-time text generation with WebSocket-based communication, RESTful API for listing personalities and adding new personalities, easy integration with various applications and frameworks, sending files to personalities, running on multiple nodes to provide a generation service to many outputs at once, and keeping data local even in the remote version.

For similar tasks

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

For similar jobs

h2ogpt

h2oGPT is an Apache V2 open-source project that allows users to query and summarize documents or chat with local private GPT LLMs. It features a private offline database of any documents (PDFs, Excel, Word, Images, Video Frames, Youtube, Audio, Code, Text, MarkDown, etc.), a persistent database (Chroma, Weaviate, or in-memory FAISS) using accurate embeddings (instructor-large, all-MiniLM-L6-v2, etc.), and efficient use of context using instruct-tuned LLMs (no need for LangChain's few-shot approach). h2oGPT also offers parallel summarization and extraction, reaching an output of 80 tokens per second with the 13B LLaMa2 model, HYDE (Hypothetical Document Embeddings) for enhanced retrieval based upon LLM responses, a variety of models supported (LLaMa2, Mistral, Falcon, Vicuna, WizardLM. With AutoGPTQ, 4-bit/8-bit, LORA, etc.), GPU support from HF and LLaMa.cpp GGML models, and CPU support using HF, LLaMa.cpp, and GPT4ALL models. Additionally, h2oGPT provides Attention Sinks for arbitrarily long generation (LLaMa-2, Mistral, MPT, Pythia, Falcon, etc.), a UI or CLI with streaming of all models, the ability to upload and view documents through the UI (control multiple collaborative or personal collections), Vision Models LLaVa, Claude-3, Gemini-Pro-Vision, GPT-4-Vision, Image Generation Stable Diffusion (sdxl-turbo, sdxl) and PlaygroundAI (playv2), Voice STT using Whisper with streaming audio conversion, Voice TTS using MIT-Licensed Microsoft Speech T5 with multiple voices and Streaming audio conversion, Voice TTS using MPL2-Licensed TTS including Voice Cloning and Streaming audio conversion, AI Assistant Voice Control Mode for hands-free control of h2oGPT chat, Bake-off UI mode against many models at the same time, Easy Download of model artifacts and control over models like LLaMa.cpp through the UI, Authentication in the UI by user/password via Native or Google OAuth, State Preservation in the UI by user/password, Linux, Docker, macOS, and Windows support, Easy Windows Installer for Windows 10 64-bit (CPU/CUDA), Easy macOS Installer for macOS (CPU/M1/M2), Inference Servers support (oLLaMa, HF TGI server, vLLM, Gradio, ExLLaMa, Replicate, OpenAI, Azure OpenAI, Anthropic), OpenAI-compliant, Server Proxy API (h2oGPT acts as drop-in-replacement to OpenAI server), Python client API (to talk to Gradio server), JSON Mode with any model via code block extraction. Also supports MistralAI JSON mode, Claude-3 via function calling with strict Schema, OpenAI via JSON mode, and vLLM via guided_json with strict Schema, Web-Search integration with Chat and Document Q/A, Agents for Search, Document Q/A, Python Code, CSV frames (Experimental, best with OpenAI currently), Evaluate performance using reward models, and Quality maintained with over 1000 unit and integration tests taking over 4 GPU-hours.

mistral.rs

Mistral.rs is a fast LLM inference platform written in Rust. We support inference on a variety of devices, quantization, and easy-to-use application with an Open-AI API compatible HTTP server and Python bindings.

ollama

Ollama is a lightweight, extensible framework for building and running language models on the local machine. It provides a simple API for creating, running, and managing models, as well as a library of pre-built models that can be easily used in a variety of applications. Ollama is designed to be easy to use and accessible to developers of all levels. It is open source and available for free on GitHub.

llama-cpp-agent

The llama-cpp-agent framework is a tool designed for easy interaction with Large Language Models (LLMs). Allowing users to chat with LLM models, execute structured function calls and get structured output (objects). It provides a simple yet robust interface and supports llama-cpp-python and OpenAI endpoints with GBNF grammar support (like the llama-cpp-python server) and the llama.cpp backend server. It works by generating a formal GGML-BNF grammar of the user defined structures and functions, which is then used by llama.cpp to generate text valid to that grammar. In contrast to most GBNF grammar generators it also supports nested objects, dictionaries, enums and lists of them.

llama_ros

This repository provides a set of ROS 2 packages to integrate llama.cpp into ROS 2. By using the llama_ros packages, you can easily incorporate the powerful optimization capabilities of llama.cpp into your ROS 2 projects by running GGUF-based LLMs and VLMs.

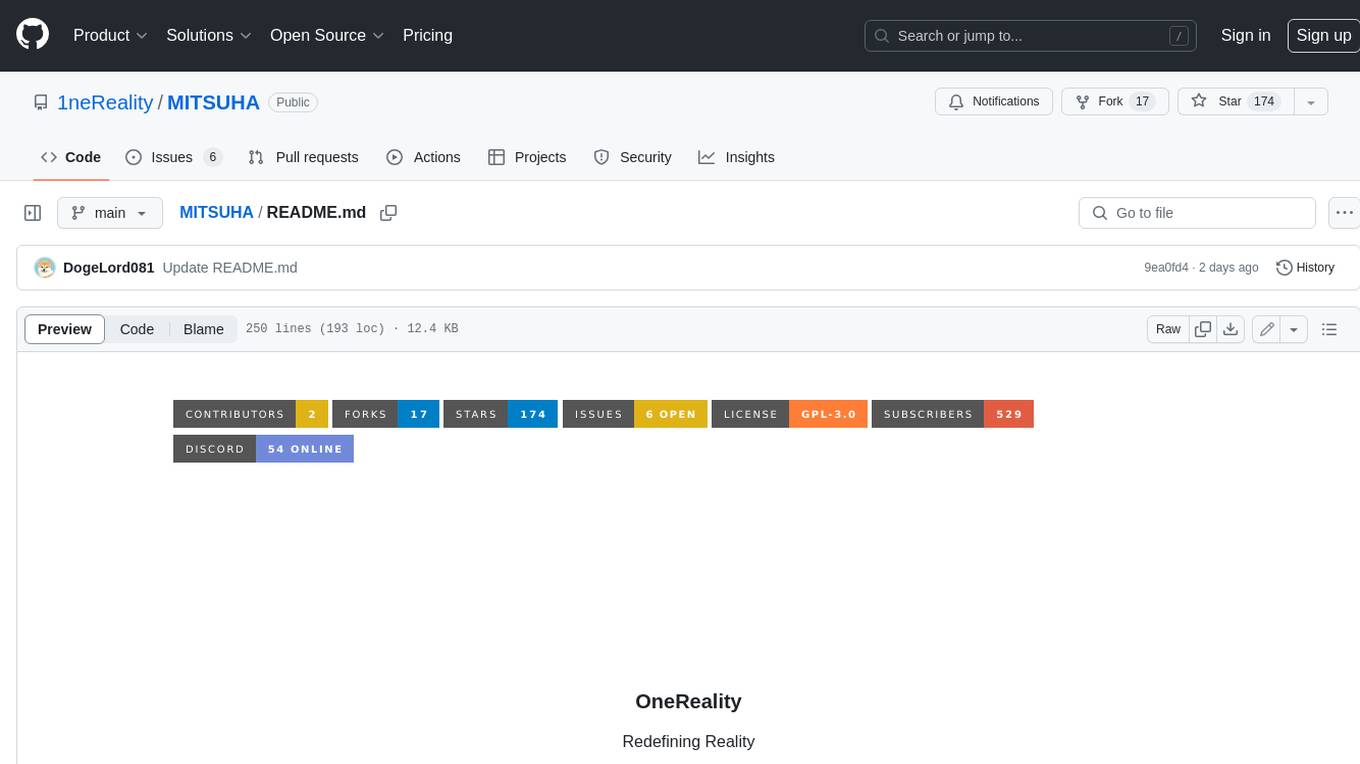

MITSUHA

OneReality is a virtual waifu/assistant that you can speak to through your mic and it'll speak back to you! It has many features such as: * You can speak to her with a mic * It can speak back to you * Has short-term memory and long-term memory * Can open apps * Smarter than you * Fluent in English, Japanese, Korean, and Chinese * Can control your smart home like Alexa if you set up Tuya (more info in Prerequisites) It is built with Python, Llama-cpp-python, Whisper, SpeechRecognition, PocketSphinx, VITS-fast-fine-tuning, VITS-simple-api, HyperDB, Sentence Transformers, and Tuya Cloud IoT.

wenxin-starter

WenXin-Starter is a spring-boot-starter for Baidu's "Wenxin Qianfan WENXINWORKSHOP" large model, which can help you quickly access Baidu's AI capabilities. It fully integrates the official API documentation of Wenxin Qianfan. Supports text-to-image generation, built-in dialogue memory, and supports streaming return of dialogue. Supports QPS control of a single model and supports queuing mechanism. Plugins will be added soon.

FlexFlow

FlexFlow Serve is an open-source compiler and distributed system for **low latency**, **high performance** LLM serving. FlexFlow Serve outperforms existing systems by 1.3-2.0x for single-node, multi-GPU inference and by 1.4-2.4x for multi-node, multi-GPU inference.