suno-api

Use an API to call the music generation AI of Suno.ai and easily integrate it into agents like GPTs.

Stars: 1689

Suno AI API is an open-source project that allows developers to integrate the music generation capabilities of Suno.ai into their own applications. The API provides a simple and convenient way to generate music, lyrics, and other audio content using Suno.ai's powerful AI models. With Suno AI API, developers can easily add music generation functionality to their apps, websites, and other projects.

README:

👉 We update quickly, please star.

🔥 Check out my new project: ReadPo - 10x Speed Up Your Reading and Writing

Suno is an amazing AI music service. Although the official API is not yet available, we couldn't wait to integrate its capabilities somewhere.

We discovered that some users have similar needs, so we decided to open-source this project, hoping you'll like it.

This implementation uses the paid 2Captcha service (a.k.a. ruCaptcha) to solve hCaptcha challenges automatically. It does not rely on any existing closed-source, paid Suno API implementations.

We have deployed an example bound to a free Suno account, so it has daily usage limits, but you can see how it runs: suno.gcui.ai

- Perfectly implements the creation API from suno.ai.

- Automatically keeps the account active.

- Solves CAPTCHAs automatically using 2Captcha and Playwright with rebrowser-patches.

- Compatible with the format of OpenAI’s `/v1/chat/completions` API.

- Supports Custom Mode.

- One-click deployment to Vercel & Docker.

- In addition to the standard API, it also adapts to the API Schema of Agent platforms like GPTs and Coze, so you can use it as a tool/plugin/Action for LLMs and integrate it into any AI Agent.

- Permissive open-source license, allowing you to freely integrate and modify.

- Head over to suno.com/create using your browser.

- Open up the browser console: hit `F12` or access the `Developer Tools`.

- Navigate to the `Network` tab.

- Give the page a quick refresh.

- Identify the latest request that includes the keyword `?__clerk_api_version`.

- Click on it and switch over to the `Header` tab.

- Locate the `Cookie` section, hover your mouse over it, and copy the value of the Cookie.

2Captcha is a paid CAPTCHA-solving service that uses real workers to solve CAPTCHAs and has high accuracy. It is needed because Suno constantly requests hCaptcha solving, which currently cannot be done for free by any means.

Create a new 2Captcha account, top up your balance and get your API key.

[!NOTE] If you are located in Russia or Belarus, use the ruCaptcha interface instead of 2Captcha. It's the same service, but it supports payments from those countries.

[!TIP] If you want as few CAPTCHAs as possible, it is recommended to use a macOS system. macOS systems usually get fewer CAPTCHAs than Linux and Windows, because macOS is rarely used in the web scraping industry. Running suno-api on Windows and Linux will work, but in some cases you could get a pretty large number of CAPTCHAs.

You can choose your preferred deployment method:

git clone https://github.com/gcui-art/suno-api.git
cd suno-api
npm install

[!IMPORTANT] GPU acceleration will be disabled in Docker. If you have a slow CPU, it is recommended to deploy locally.

Alternatively, you can use Docker Compose. However, follow the step below before running.

docker compose build && docker compose up

- If deployed to Vercel, please add the environment variables in the Vercel dashboard.

- If you’re running this locally, be sure to add the following to your `.env` file:

- `SUNO_COOKIE`: the `Cookie` header you obtained in the first step.

- `TWOCAPTCHA_KEY`: your 2Captcha API key from the second step.

- `BROWSER`: the name of the browser that is going to be used to solve the CAPTCHA. Only `chromium` and `firefox` are supported.

- `BROWSER_GHOST_CURSOR`: use ghost-cursor-playwright to simulate smooth mouse movements. Please note that it doesn't seem to make any difference in the rate of CAPTCHAs, so you can set it to `false`. Retained for future testing.

- `BROWSER_LOCALE`: the language of the browser. Using either `en` or `ru` is recommended, since those have the most workers on 2Captcha. See the list of supported languages.

- `BROWSER_HEADLESS`: run the browser without the window. You probably want to set this to `true`.
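As a quick sanity check before starting the server, the variables above can be validated with a short script. This is a minimal sketch and not part of suno-api: the helper name `check_env` and the validation rules (which keys are required, which strings count as booleans) are assumptions drawn only from the descriptions in this list.

```python
import os

# Variable names come from the list above. Treating the first two as
# required is an assumption made for this sketch.
REQUIRED = ("SUNO_COOKIE", "TWOCAPTCHA_KEY")
ALLOWED_BROWSERS = ("chromium", "firefox")
BOOLEAN_VARS = ("BROWSER_GHOST_CURSOR", "BROWSER_HEADLESS")


def check_env(env):
    """Return a list of human-readable problems found in `env` (a mapping)."""
    problems = []
    for name in REQUIRED:
        if not env.get(name):
            problems.append(f"{name} is missing or empty")
    browser = env.get("BROWSER")
    if browser is not None and browser not in ALLOWED_BROWSERS:
        problems.append(f"BROWSER must be one of {ALLOWED_BROWSERS}, got {browser!r}")
    for name in BOOLEAN_VARS:
        value = env.get(name)
        if value is not None and value not in ("true", "false"):
            problems.append(f"{name} should be 'true' or 'false', got {value!r}")
    return problems


if __name__ == "__main__":
    # Check the current process environment and report anything suspicious.
    for problem in check_env(os.environ):
        print("warning:", problem)
```

An empty list means nothing obviously wrong was found; it does not prove that the cookie or the API key is still valid.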

SUNO_COOKIE=<…>
TWOCAPTCHA_KEY=<…>
BROWSER=chromium
BROWSER_GHOST_CURSOR=false
BROWSER_LOCALE=en
BROWSER_HEADLESS=true

- If you’ve deployed to Vercel:

  - Please click on Deploy in the Vercel dashboard and wait for the deployment to be successful.

  - Visit the `https://<vercel-assigned-domain>/api/get_limit` API for testing.

- If running locally:

  - Run `npm run dev`.

  - Visit the `http://localhost:3000/api/get_limit` API for testing.

- If the following result is returned:

{
  "credits_left": 50,
  "period": "day",
  "monthly_limit": 50,
  "monthly_usage": 50
}

it means the program is running normally.
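The same check can be scripted. The sketch below uses only the Python standard library; the function names `fetch_limit` and `has_credits` are illustrative, the `needed` threshold is an assumption, and the field names are taken from the sample response above.

```python
import json
import urllib.request


def fetch_limit(base_url="http://localhost:3000", timeout=10):
    """GET /api/get_limit and return the decoded JSON body as a dict."""
    with urllib.request.urlopen(f"{base_url}/api/get_limit", timeout=timeout) as resp:
        return json.load(resp)


def has_credits(limit_info, needed=1):
    """Return True when the response reports at least `needed` credits left."""
    return limit_info.get("credits_left", 0) >= needed


if __name__ == "__main__":
    try:
        info = fetch_limit()
    except (OSError, ValueError) as exc:
        # OSError covers connection failures (URLError); ValueError covers bad JSON.
        print(f"suno-api is not reachable or returned an unexpected body: {exc}")
    else:
        print("credits left:", info.get("credits_left"))
        print("ready" if has_credits(info) else "no credits left")
```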

You can check out the detailed API documentation at: suno.gcui.ai/docs

Suno API currently implements the following main APIs:

- `/api/generate`: Generate music

- `/v1/chat/completions`: Generate music - Call the generate API in a format that works with OpenAI’s API.

- `/api/custom_generate`: Generate music (Custom Mode, support setting lyrics, music style, title, etc.)

- `/api/generate_lyrics`: Generate lyrics based on prompt

- `/api/get`: Get music information based on the id. Use “,” to separate multiple ids.

If no IDs are provided, all music will be returned.

- `/api/get_limit`: Get quota Info

- `/api/extend_audio`: Extend audio length

- `/api/generate_stems`: Make stem tracks (separate audio and music track)

- `/api/get_aligned_lyrics`: Get list of timestamps for each word in the lyrics

- `/api/clip`: Get clip information based on ID passed as query parameter `id`

- `/api/concat`: Generate the whole song from extensions

You can also specify the cookies in the Cookie header of your request, overriding the default cookies in the SUNO_COOKIE environment variable. This comes in handy when, for example, you want to use multiple free accounts at the same time.
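A minimal sketch of that per-request override, assuming you hold several cookie strings. The helper names and the round-robin policy are illustrative choices and not part of suno-api; only the `Cookie` header behaviour comes from the text above.

```python
import itertools
import json
import urllib.request


def cookie_cycle(cookies):
    """Return an iterator that repeats the given cookie strings in round-robin order."""
    if not cookies:
        raise ValueError("at least one cookie string is required")
    return itertools.cycle(cookies)


def build_generate_request(base_url, payload, cookie):
    """Build (but do not send) a POST to /api/generate that carries its own Cookie header."""
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json", "Cookie": cookie},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder values; real cookies come from the browser steps described earlier.
    accounts = cookie_cycle(["<cookie-of-account-1>", "<cookie-of-account-2>"])
    for prompt in ("a calm piano piece", "an upbeat synth track", "a folk ballad"):
        req = build_generate_request(
            "http://localhost:3000",
            {"prompt": prompt, "make_instrumental": False, "wait_audio": False},
            next(accounts),
        )
        # urllib.request.urlopen(req) would send it; here we only show the routing.
        print(req.get_header("Cookie"), "<-", prompt)
```

Building the request separately from sending it keeps the account-selection logic easy to inspect before any credits are spent.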

For more detailed documentation, please check out the demo site: suno.gcui.ai/docs
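As a small illustration of the `/api/get` behaviour listed above (comma-separated ids, or everything when no ids are given), the query string can be built like this. The function name `build_get_url` is hypothetical; the `ids` parameter name matches the examples that follow.

```python
from urllib.parse import urlencode


def build_get_url(base_url, ids=None):
    """Return the /api/get URL for the given ids, or for all music when ids is empty."""
    if not ids:
        return f"{base_url}/api/get"
    # Multiple ids are joined with "," as described in the endpoint list;
    # safe="," keeps the comma unescaped in the query string.
    return f"{base_url}/api/get?" + urlencode({"ids": ",".join(ids)}, safe=",")


if __name__ == "__main__":
    print(build_get_url("http://localhost:3000"))
    print(build_get_url("http://localhost:3000", ["id-one", "id-two"]))
```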

import time
import requests

# replace with your suno-api URL
base_url = 'http://localhost:3000'


def custom_generate_audio(payload):
    url = f"{base_url}/api/custom_generate"
    response = requests.post(url, json=payload, headers={'Content-Type': 'application/json'})
    return response.json()


def extend_audio(payload):
    url = f"{base_url}/api/extend_audio"
    response = requests.post(url, json=payload, headers={'Content-Type': 'application/json'})
    return response.json()


def generate_audio_by_prompt(payload):
    url = f"{base_url}/api/generate"
    response = requests.post(url, json=payload, headers={'Content-Type': 'application/json'})
    return response.json()


def get_audio_information(audio_ids):
    url = f"{base_url}/api/get?ids={audio_ids}"
    response = requests.get(url)
    return response.json()


def get_quota_information():
    url = f"{base_url}/api/get_limit"
    response = requests.get(url)
    return response.json()


def get_clip(clip_id):
    url = f"{base_url}/api/clip?id={clip_id}"
    response = requests.get(url)
    return response.json()


def generate_whole_song(clip_id):
    payload = {"clip_id": clip_id}
    url = f"{base_url}/api/concat"
    response = requests.post(url, json=payload)
    return response.json()


if __name__ == '__main__':
    data = generate_audio_by_prompt({
        "prompt": "A popular heavy metal song about war, sung by a deep-voiced male singer, slowly and melodiously. The lyrics depict the sorrow of people after the war.",
        "make_instrumental": False,
        "wait_audio": False
    })

    ids = f"{data[0]['id']},{data[1]['id']}"
    print(f"ids: {ids}")

    for _ in range(60):
        data = get_audio_information(ids)
        if data[0]["status"] == 'streaming':
            print(f"{data[0]['id']} ==> {data[0]['audio_url']}")
            print(f"{data[1]['id']} ==> {data[1]['audio_url']}")
            break
        # sleep 5s
        time.sleep(5)

const axios = require("axios");

// replace with your Vercel domain
const baseUrl = "http://localhost:3000";

async function customGenerateAudio(payload) {
  const url = `${baseUrl}/api/custom_generate`;
  const response = await axios.post(url, payload, {
    headers: { "Content-Type": "application/json" },
  });
  return response.data;
}

async function generateAudioByPrompt(payload) {
  const url = `${baseUrl}/api/generate`;
  const response = await axios.post(url, payload, {
    headers: { "Content-Type": "application/json" },
  });
  return response.data;
}

async function extendAudio(payload) {
  const url = `${baseUrl}/api/extend_audio`;
  const response = await axios.post(url, payload, {
    headers: { "Content-Type": "application/json" },
  });
  return response.data;
}

async function getAudioInformation(audioIds) {
  const url = `${baseUrl}/api/get?ids=${audioIds}`;
  const response = await axios.get(url);
  return response.data;
}

async function getQuotaInformation() {
  const url = `${baseUrl}/api/get_limit`;
  const response = await axios.get(url);
  return response.data;
}

async function getClipInformation(clipId) {
  const url = `${baseUrl}/api/clip?id=${clipId}`;
  const response = await axios.get(url);
  return response.data;
}

async function main() {
  const data = await generateAudioByPrompt({
    prompt:
      "A popular heavy metal song about war, sung by a deep-voiced male singer, slowly and melodiously. The lyrics depict the sorrow of people after the war.",
    make_instrumental: false,
    wait_audio: false,
  });

  const ids = `${data[0].id},${data[1].id}`;
  console.log(`ids: ${ids}`);

  for (let i = 0; i < 60; i++) {
    const data = await getAudioInformation(ids);
    if (data[0].status === "streaming") {
      console.log(`${data[0].id} ==> ${data[0].audio_url}`);
      console.log(`${data[1].id} ==> ${data[1].audio_url}`);
      break;
    }
    // sleep 5s
    await new Promise((resolve) => setTimeout(resolve, 5000));
  }
}

main();

You can integrate Suno AI as a tool/plugin/action into your AI agent.
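One route an agent can take is the OpenAI-compatible `/v1/chat/completions` endpoint listed earlier. The sketch below only builds a request body in the Chat Completions shape (a `messages` list of role/content pairs). The `model` value is a placeholder, the function name is hypothetical, and which fields suno-api actually reads is an assumption here, so check the project docs before relying on it.

```python
import json
import urllib.request


def build_chat_request(base_url, prompt, model="placeholder-model"):
    """Build (but do not send) a Chat Completions style POST for a music prompt."""
    body = {
        "model": model,  # placeholder value, assumed for illustration only
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("http://localhost:3000", "A gentle lullaby with soft piano")
    # urllib.request.urlopen(req) would send it to a running suno-api instance.
    print(req.full_url)
    print(req.data.decode("utf-8"))
```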

[coming soon...]

There are four ways you can support this project:

- Fork and Submit Pull Requests: We welcome any PRs that enhance the functionality, APIs, response time and availability. You can also help us just by translating this README into your language—any help for this project is welcome!

- Open Issues: We appreciate reasonable suggestions and bug reports.

- Donate: If this project has helped you, consider buying us a coffee using the Sponsor button at the top of the project. Cheers! ☕

- Spread the Word: Recommend this project to others, star the repo, or add a backlink after using the project.

We use GitHub Issues to manage feedback. Feel free to open an issue, and we'll address it promptly.

The license of this project is LGPL-3.0 or later. See LICENSE for more information.

- Project repository: github.com/gcui-art/suno-api

- Suno.ai official website: suno.ai

- Demo: suno.gcui.ai

- Readpo: ReadPo is an AI-powered reading and writing assistant. Collect, curate, and create content at lightning speed.

- Album AI: Auto generate image metadata and chat with the album. RAG + Album.

suno-api is an unofficial open source project, intended for learning and research purposes only.

Similar Open Source Tools

hydraai

Generate React components on-the-fly at runtime using AI. Register your components, and let Hydra choose when to show them in your App. Hydra development is still early, and patterns for different types of components and apps are still being developed. Join the discord to chat with the developers. Expects to be used in a NextJS project. Components that have function props do not work.

openai

An open-source client package that allows developers to easily integrate the power of OpenAI's state-of-the-art AI models into their Dart/Flutter applications. The library provides simple and intuitive methods for making requests to OpenAI's various APIs, including the GPT-3 language model, DALL-E image generation, and more. It is designed to be lightweight and easy to use, enabling developers to focus on building their applications without worrying about the complexities of dealing with HTTP requests. Note that this is an unofficial library as OpenAI does not have an official Dart library.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

generative-ai

The 'Generative AI' repository provides a C# library for interacting with Google's Generative AI models, specifically the Gemini models. It allows users to access and integrate the Gemini API into .NET applications, supporting functionalities such as listing available models, generating content, creating tuned models, working with large files, starting chat sessions, and more. The repository also includes helper classes and enums for Gemini API aspects. Authentication methods include API key, OAuth, and various authentication modes for Google AI and Vertex AI. The package offers features for both Google AI Studio and Google Cloud Vertex AI, with detailed instructions on installation, usage, and troubleshooting.

APIMyLlama

APIMyLlama is a server application that provides an interface to interact with the Ollama API, a powerful AI tool to run LLMs. It allows users to easily distribute API keys to create amazing things. The tool offers commands to generate, list, remove, add, change, activate, deactivate, and manage API keys, as well as functionalities to work with webhooks, set rate limits, and get detailed information about API keys. Users can install APIMyLlama packages with NPM, PIP, Jitpack Repo+Gradle or Maven, or from the Crates Repository. The tool supports Node.JS, Python, Java, and Rust for generating responses from the API. Additionally, it provides built-in health checking commands for monitoring API health status.

simpleAI

SimpleAI is a self-hosted alternative to the not-so-open AI API, focused on replicating main endpoints for LLM such as text completion, chat, edits, and embeddings. It allows quick experimentation with different models, creating benchmarks, and handling specific use cases without relying on external services. Users can integrate and declare models through gRPC, query endpoints using Swagger UI or API, and resolve common issues like CORS with FastAPI middleware. The project is open for contributions and welcomes PRs, issues, documentation, and more.

instructor

Instructor is a popular Python library for managing structured outputs from large language models (LLMs). It offers a user-friendly API for validation, retries, and streaming responses. With support for various LLM providers and multiple languages, Instructor simplifies working with LLM outputs. The library includes features like response models, retry management, validation, streaming support, and flexible backends. It also provides hooks for logging and monitoring LLM interactions, and supports integration with Anthropic, Cohere, Gemini, Litellm, and Google AI models. Instructor facilitates tasks such as extracting user data from natural language, creating fine-tuned models, managing uploaded files, and monitoring usage of OpenAI models.

banks

Banks is a linguist professor tool that helps generate meaningful LLM prompts using a template language. It provides a user-friendly way to create prompts for various tasks such as blog writing, summarizing documents, lemmatizing text, and generating text using a LLM. The tool supports async operations and comes with predefined filters for data processing. Banks leverages Jinja's macro system to create prompts and interact with OpenAI API for text generation. It also offers a cache mechanism to avoid regenerating text for the same template and context.

lollms

LoLLMs Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications.

memobase

Memobase is a user profile-based memory system designed to enhance Generative AI applications by enabling them to remember, understand, and evolve with users. It provides structured user profiles, scalable profiling, easy integration with existing LLM stacks, batch processing for speed, and is production-ready. Users can manage users, insert data, get memory profiles, and track user preferences and behaviors. Memobase is ideal for applications that require user analysis, tracking, and personalized interactions.

instructor

Instructor is a Python library that makes it a breeze to work with structured outputs from large language models (LLMs). Built on top of Pydantic, it provides a simple, transparent, and user-friendly API to manage validation, retries, and streaming responses. Get ready to supercharge your LLM workflows!

hugging-chat-api

Unofficial HuggingChat Python API for creating chatbots, supporting features like image generation, web search, memorizing context, and changing LLMs. Users can log in, chat with the ChatBot, perform web searches, create new conversations, manage conversations, switch models, get conversation info, use assistants, and delete conversations. The API also includes a CLI mode with various commands for interacting with the tool. Users are advised not to use the application for high-stakes decisions or advice and to avoid high-frequency requests to preserve server resources.

python-tgpt

Python-tgpt is a Python package that enables seamless interaction with over 45 free LLM providers without requiring an API key. It also provides image generation capabilities. The name _python-tgpt_ draws inspiration from its parent project tgpt, which operates on Golang. Through this Python adaptation, users can effortlessly engage with a number of free LLMs available, fostering a smoother AI interaction experience.

llm-client

LLMClient is a JavaScript/TypeScript library that simplifies working with large language models (LLMs) by providing an easy-to-use interface for building and composing efficient prompts using prompt signatures. These signatures enable the automatic generation of typed prompts, allowing developers to leverage advanced capabilities like reasoning, function calling, RAG, ReAcT, and Chain of Thought. The library supports various LLMs and vector databases, making it a versatile tool for a wide range of applications.

parea-sdk-py

Parea AI provides a SDK to evaluate & monitor AI applications. It allows users to test, evaluate, and monitor their AI models by defining and running experiments. The SDK also enables logging and observability for AI applications, as well as deploying prompts to facilitate collaboration between engineers and subject-matter experts. Users can automatically log calls to OpenAI and Anthropic, create hierarchical traces of their applications, and deploy prompts for integration into their applications.

For similar tasks

musegpt

Run local Large Language Models (LLMs) in your Digital Audio Workstation (DAW) to provide inspiration, instructions, and analysis for your music creation. Currently supported features include LLM chat, VST3 plugin, MIDI input, and Audio input. Requires C++17 compatible compiler, CMake, and Python 3.10 or later. Licensed under AGPL v3. Built by Grey Newell.

MOSS-TTS

MOSS-TTS Family is an open-source speech and sound generation model family designed for high-fidelity, high-expressiveness, and complex real-world scenarios. It includes five production-ready models: MOSS-TTS, MOSS-TTSD, MOSS-VoiceGenerator, MOSS-TTS-Realtime, and MOSS-SoundEffect, each serving specific purposes in speech generation, dialogue, voice design, real-time interactions, and sound effect generation. The models offer features like long-speech generation, fine-grained control over phonemes and duration, multilingual synthesis, voice cloning, and real-time voice agents.

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

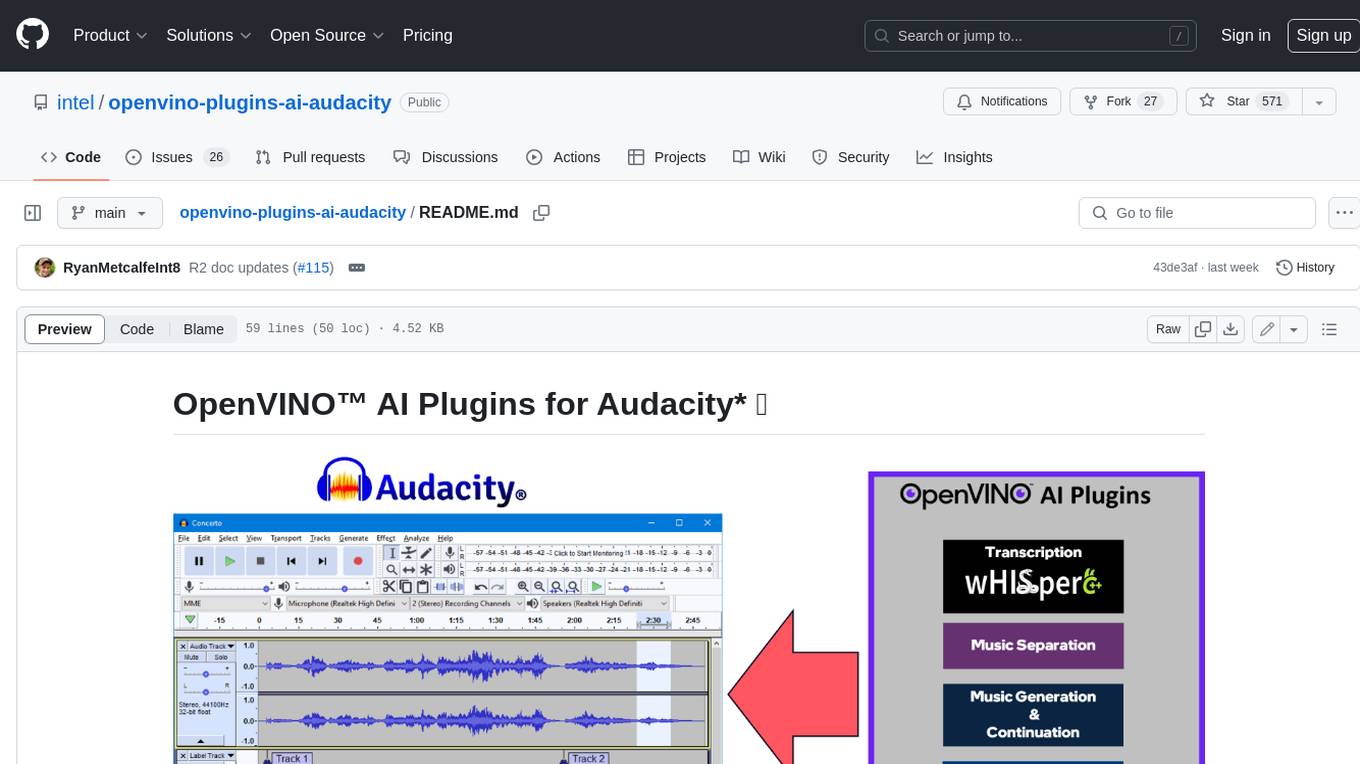

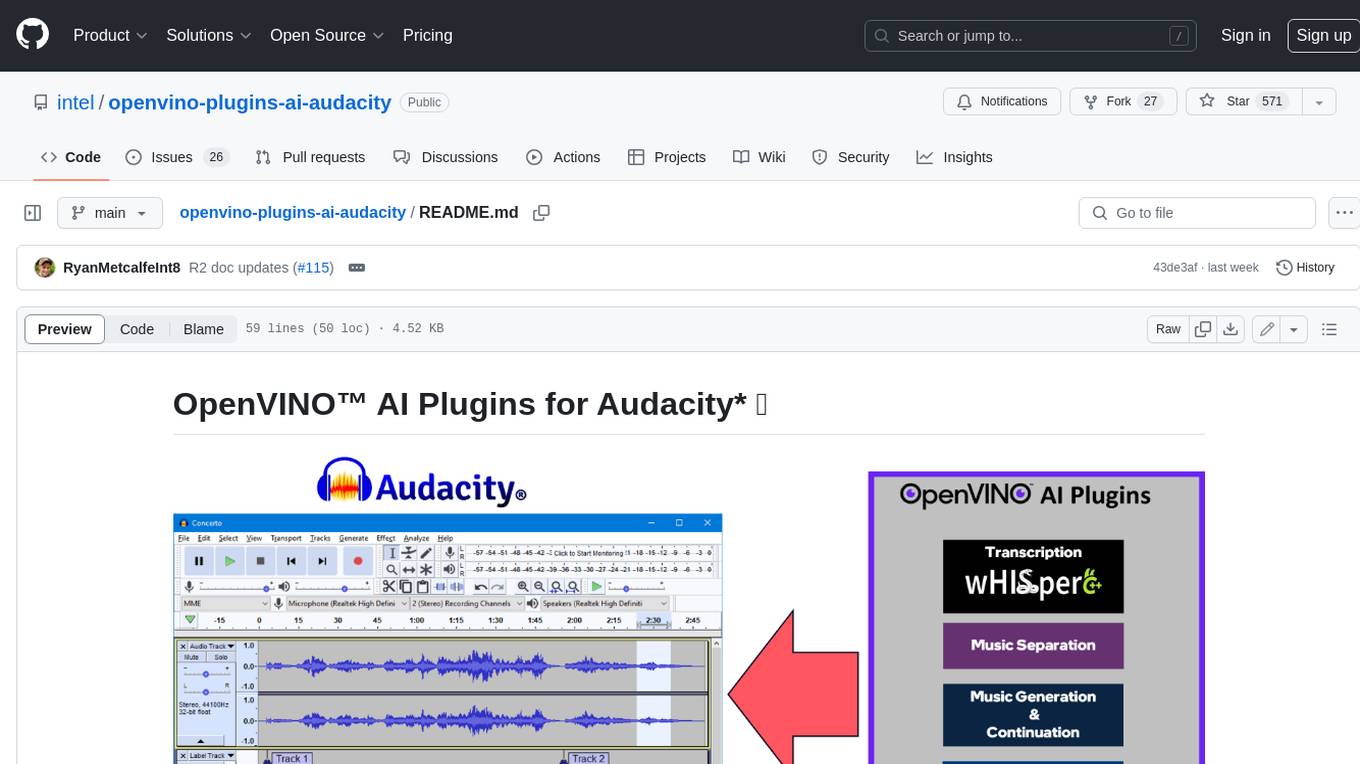

openvino-plugins-ai-audacity

OpenVINO™ AI Plugins for Audacity* are a set of AI-enabled effects, generators, and analyzers for Audacity®. These AI features run 100% locally on your PC -- no internet connection necessary! OpenVINO™ is used to run AI models on supported accelerators found on the user's system such as CPU, GPU, and NPU.

- **Music Separation**: Separate a mono or stereo track into individual stems -- Drums, Bass, Vocals, & Other Instruments.

- **Noise Suppression**: Removes background noise from an audio sample.

- **Music Generation & Continuation**: Uses MusicGen LLM to generate snippets of music, or to generate a continuation of an existing snippet of music.

- **Whisper Transcription**: Uses whisper.cpp to generate a label track containing the transcription or translation for a given selection of spoken audio or vocals.

SunoApi

SunoAPI is an unofficial client for Suno AI, built on Python and Streamlit. It supports functions like generating music and obtaining music information. Users can set up multiple account information to be saved for use. The tool also features built-in maintenance and activation functions for tokens, eliminating concerns about token expiration. It supports multiple languages and allows users to upload pictures for generating songs based on image content analysis.

awesome-generative-ai

Awesome Generative AI is a curated list of modern Generative Artificial Intelligence projects and services. Generative AI technology creates original content like images, sounds, and texts using machine learning algorithms trained on large data sets. It can produce unique and realistic outputs such as photorealistic images, digital art, music, and writing. The repo covers a wide range of applications in art, entertainment, marketing, academia, and computer science.

ai-audio-datasets

AI Audio Datasets List (AI-ADL) is a comprehensive collection of datasets consisting of speech, music, and sound effects, used for Generative AI, AIGC, AI model training, and audio applications. It includes datasets for speech recognition, speech synthesis, music information retrieval, music generation, audio processing, sound synthesis, and more. The repository provides a curated list of diverse datasets suitable for various AI audio tasks.

For similar jobs

metavoice-src

MetaVoice-1B is a 1.2B parameter base model trained on 100K hours of speech for TTS (text-to-speech). It has been built with the following priorities:

- Emotional speech rhythm and tone in English.

- Zero-shot cloning for American & British voices, with 30s reference audio.

- Support for (cross-lingual) voice cloning with finetuning.

  - We have had success with as little as 1 minute training data for Indian speakers.

- Synthesis of arbitrary length text

bark.cpp

Bark.cpp is a C/C++ implementation of the Bark model, a real-time, multilingual text-to-speech generation model. It supports AVX, AVX2, and AVX512 for x86 architectures, and is compatible with both CPU and GPU backends. Bark.cpp also supports mixed F16/F32 precision and 4-bit, 5-bit, and 8-bit integer quantization. It can be used to generate realistic-sounding audio from text prompts.

NSMusicS

NSMusicS is a local music application that is expected to support multiple platforms, with AI capabilities and multimodal features. Its goal is to integrate various functions (such as artificial intelligence, streaming, music library management, and cross-platform support); it can be understood as similar to Navidrome, but with more features. It aims to become a plugin-integrated application that covers almost all music functions.

ai-voice-cloning

This repository provides a tool for AI voice cloning, allowing users to generate synthetic speech that closely resembles a target speaker's voice. The tool is designed to be user-friendly and accessible, with a graphical user interface that guides users through the process of training a voice model and generating synthetic speech. The tool also includes a variety of features that allow users to customize the generated speech, such as the pitch, volume, and speaking rate. Overall, this tool is a valuable resource for anyone interested in creating realistic and engaging synthetic speech.

RVC_CLI

**RVC_CLI: Retrieval-based Voice Conversion Command Line Interface**

This command-line interface (CLI) provides a comprehensive set of tools for voice conversion, enabling you to modify the pitch, timbre, and other characteristics of audio recordings. It leverages advanced machine learning models to achieve realistic and high-quality voice conversions.

**Key Features:**

- **Inference:** Convert the pitch and timbre of audio in real-time or process audio files in batch mode.

- **TTS Inference:** Synthesize speech from text using a variety of voices and apply voice conversion techniques.

- **Training:** Train custom voice conversion models to meet specific requirements.

- **Model Management:** Extract, blend, and analyze models to fine-tune and optimize performance.

- **Audio Analysis:** Inspect audio files to gain insights into their characteristics.

- **API:** Integrate the CLI's functionality into your own applications or workflows.

**Applications:**

The RVC_CLI finds applications in various domains, including:

- **Music Production:** Create unique vocal effects, harmonies, and backing vocals.

- **Voiceovers:** Generate voiceovers with different accents, emotions, and styles.

- **Audio Editing:** Enhance or modify audio recordings for podcasts, audiobooks, and other content.

- **Research and Development:** Explore and advance the field of voice conversion technology.

**For Jobs:**

- Audio Engineer

- Music Producer

- Voiceover Artist

- Audio Editor

- Machine Learning Engineer

**AI Keywords:**

- Voice Conversion

- Pitch Shifting

- Timbre Modification

- Machine Learning

- Audio Processing

**For Tasks:**

- Convert Pitch

- Change Timbre

- Synthesize Speech

- Train Model

- Analyze Audio

WavCraft

WavCraft is an LLM-driven agent for audio content creation and editing. It applies LLM to connect various audio expert models and DSP function together. With WavCraft, users can edit the content of given audio clip(s) conditioned on text input, create an audio clip given text input, get more inspiration from WavCraft by prompting a script setting and let the model do the scriptwriting and create the sound, and check if your audio file is synthesized by WavCraft.