Gemini-API

✨ Elegant async Python API for Google Gemini web app

Stars: 160

Gemini-API is a reverse-engineered asynchronous Python wrapper for Google Gemini web app (formerly Bard). It provides features like persistent cookies, ImageFx support, extension support, classified outputs, official flavor, and asynchronous operation. The tool allows users to generate contents from text or images, have conversations across multiple turns, retrieve images in response, generate images with ImageFx, save images to local files, use Gemini extensions, check and switch reply candidates, and control log level.

README:

A reverse-engineered asynchronous Python wrapper for the Google Gemini web app (formerly Bard).

- Persistent Cookies - Automatically refreshes cookies in background. Optimized for always-on services.

- ImageFx Support - Supports retrieving images generated by ImageFx, Google's latest AI image generator.

- Extension Support - Supports generating contents with Gemini extensions on, like YouTube and Gmail.

- Classified Outputs - Automatically categorizes texts, web images and AI generated images in the response.

- Official Flavor - Provides a simple and elegant interface inspired by Google Generative AI's official API.

- Asynchronous - Utilizes `asyncio` to run generating tasks and return outputs efficiently.

- Features

- Table of Contents

- Installation

- Authentication

- Usage

- Initialization

- Generate contents from text

- Generate contents from image

- Conversations across multiple turns

- Continue previous conversations

- Retrieve images in response

- Generate images with ImageFx

- Save images to local files

- Generate contents with Gemini extensions

- Check and switch to other reply candidates

- Control log level

- References

- Stargazers

[!NOTE]

This package requires Python 3.10 or higher.

Install/update the package with pip.

    pip install -U gemini_webapi

Optionally, the package can automatically import cookies from your local browser. To enable this feature, install `browser-cookie3` as well. Supported platforms and browsers can be found here.

    pip install -U browser-cookie3

[!TIP]

If `browser-cookie3` is installed, you can skip this step and go directly to the usage section. Just make sure you have logged in to https://gemini.google.com in your browser.

- Go to https://gemini.google.com and log in with your Google account

- Press F12 to open the web inspector, go to the `Network` tab and refresh the page

- Click any request and copy the cookie values of `__Secure-1PSID` and `__Secure-1PSIDTS`
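If you copy the whole `Cookie` request header from the inspector instead of the individual values, the two cookies can be picked out programmatically. The following is a minimal sketch, assuming the header is a standard `name=value; name=value` string. `extract_gemini_cookies` and the sample values are hypothetical and not part of the package.

```python
def extract_gemini_cookies(cookie_header: str) -> dict:
    """Return the two cookies named above from a raw Cookie header string."""
    wanted = ("__Secure-1PSID", "__Secure-1PSIDTS")
    found = {}
    for pair in cookie_header.split(";"):
        # Split on the first "=" only, since cookie values may contain "=".
        name, sep, value = pair.strip().partition("=")
        if sep and name in wanted:
            found[name] = value
    return found


# Made-up header for illustration; real values come from your own browser session.
sample_header = "NID=abc123; __Secure-1PSID=example-psid; __Secure-1PSIDTS=example-psidts; OTHER=1"
cookies = extract_gemini_cookies(sample_header)
print(cookies["__Secure-1PSID"], cookies["__Secure-1PSIDTS"])
```

The extracted values can then be used wherever the cookie values are expected.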

[!NOTE]

If your application is deployed in a containerized environment (e.g. Docker), you may want to persist the cookies with a volume to avoid re-authentication every time the container rebuilds.

Here's part of a sample `docker-compose.yml` file:

    services:
      main:
        volumes:
          - ./gemini_cookies:/usr/local/lib/python3.12/site-packages/gemini_webapi/utils/temp

[!NOTE]

The API's auto cookie refreshing feature doesn't require `browser-cookie3` and is enabled by default. It allows you to keep the API service running without worrying about cookie expiration.

This feature may force you to log in to your Google account again in the browser. This is expected behavior and won't affect the API's functionality.

To avoid this, it's recommended to get cookies from a separate browser session and close it as soon as possible for best utilization (e.g. a fresh login in the browser's private mode). More details can be found here.

Import required packages and initialize a client with your cookies obtained from the previous step. After a successful initialization, the API will automatically refresh __Secure-1PSIDTS in background as long as the process is alive.

    import asyncio
    from gemini_webapi import GeminiClient

    # Replace "COOKIE VALUE HERE" with your actual cookie values.
    # Leave Secure_1PSIDTS empty if it's not available for your account.
    Secure_1PSID = "COOKIE VALUE HERE"
    Secure_1PSIDTS = "COOKIE VALUE HERE"

    async def main():
        # If browser-cookie3 is installed, simply use `client = GeminiClient()`
        client = GeminiClient(Secure_1PSID, Secure_1PSIDTS, proxies=None)
        await client.init(timeout=30, auto_close=False, close_delay=300, auto_refresh=True)

    asyncio.run(main())

[!TIP]

`auto_close` and `close_delay` are optional arguments for automatically closing the client after a certain period of inactivity. This feature is disabled by default. In an always-on service like a chatbot, it's recommended to set `auto_close` to `True` together with a reasonable `close_delay` (in seconds) for better resource management.
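The idle-timeout idea behind `auto_close` and `close_delay` can be pictured as a countdown that restarts on every request. The sketch below is a generic asyncio illustration under that assumption, not the package's implementation. `IdleCloser` and `touch` are hypothetical names, and the delays are shortened so the demo finishes quickly.

```python
import asyncio
from typing import Optional


class IdleCloser:
    """Hypothetical helper: marks itself closed after `close_delay` idle seconds."""

    def __init__(self, close_delay: float) -> None:
        self.close_delay = close_delay
        self.closed = False
        self._timer: Optional[asyncio.Task] = None

    def touch(self) -> None:
        """Record activity: cancel the pending countdown and start a new one."""
        if self._timer is not None:
            self._timer.cancel()
        self.closed = False
        self._timer = asyncio.create_task(self._close_later())

    async def _close_later(self) -> None:
        await asyncio.sleep(self.close_delay)
        self.closed = True  # a real client would release its connections here


async def demo() -> tuple:
    closer = IdleCloser(close_delay=0.3)
    closer.touch()                    # first request
    await asyncio.sleep(0.1)
    closer.touch()                    # second request restarts the countdown
    await asyncio.sleep(0.1)
    open_while_active = not closer.closed
    await asyncio.sleep(0.4)          # no activity for longer than close_delay
    return open_while_active, closer.closed


open_while_active, closed_after_idle = asyncio.run(demo())
print(open_while_active, closed_after_idle)
```

Restarting the timer on each request means a busy service never closes, while an idle one is cleaned up after one full `close_delay`.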

Ask a one-turn quick question by calling GeminiClient.generate_content.

    async def main():
        response = await client.generate_content("Hello World!")
        print(response.text)

    asyncio.run(main())

[!TIP]

Simply use `print(response)` to get the same output if you just want to see the response text.
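Because `generate_content` is awaited, several independent one-turn prompts can in principle be issued concurrently with `asyncio.gather`. The sketch below uses a stand-in client so that it runs without cookies or network access. `FakeClient` and `FakeResponse` are hypothetical and only mimic the awaitable call and the `text` attribute. How the real service behaves under concurrent requests is not covered here.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class FakeResponse:
    """Hypothetical stand-in exposing only a `text` attribute."""
    text: str


class FakeClient:
    """Hypothetical stand-in for a client; echoes the prompt after a short delay."""

    async def generate_content(self, prompt: str) -> FakeResponse:
        await asyncio.sleep(0.01)  # simulate network latency
        return FakeResponse(text=f"echo: {prompt}")


async def ask_all(client, prompts):
    # gather() runs the requests concurrently and returns results in input order.
    responses = await asyncio.gather(*(client.generate_content(p) for p in prompts))
    return [r.text for r in responses]


answers = asyncio.run(ask_all(FakeClient(), ["Hello", "World"]))
print(answers)
```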

Gemini supports image recognition and generating content from images. Optionally, you can pass images to `GeminiClient.generate_content` together with a text prompt, as a list of file data in bytes or of file paths given as `str` or `pathlib.Path`.

    async def main():
        response = await client.generate_content(
            "Describe each of these images",
            images=["assets/banner.png", "assets/favicon.png"],
        )
        print(response.text)

    asyncio.run(main())

If you want to keep the conversation continuous, use `GeminiClient.start_chat` to create a `ChatSession` object and send messages through it. The conversation history is handled automatically and updated after each turn.

    async def main():
        chat = client.start_chat()
        response1 = await chat.send_message("Briefly introduce Europe")
        response2 = await chat.send_message("What's the population there?")
        print(response1.text, response2.text, sep="\n\n----------------------------------\n\n")

    asyncio.run(main())

[!TIP]

Same as `GeminiClient.generate_content`, `ChatSession.send_message` also accepts `images` as an optional argument.

To manually retrieve previous conversations, you can pass previous ChatSession's metadata to GeminiClient.start_chat when creating a new ChatSession. Alternatively, you can persist previous metadata to a file or db if you need to access them after the current Python process has exited.
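For the file-based option, a minimal sketch could look like the following. It assumes the metadata is JSON-serializable (for example a list of strings), which this document does not state. `save_metadata`, `load_metadata`, the file name and the placeholder values are all hypothetical.

```python
import json
import tempfile
from pathlib import Path


def save_metadata(metadata, path) -> None:
    """Write chat metadata to a JSON file (assumes it is JSON-serializable)."""
    Path(path).write_text(json.dumps(metadata), encoding="utf-8")


def load_metadata(path):
    """Read chat metadata back; return None if nothing was saved yet."""
    file = Path(path)
    if not file.exists():
        return None
    return json.loads(file.read_text(encoding="utf-8"))


# Round trip with placeholder values standing in for real chat metadata.
with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "chat_metadata.json"
    save_metadata(["placeholder-1", "placeholder-2"], target)
    restored = load_metadata(target)

print(restored)
```

The loaded value would then be passed as `metadata` when starting a chat in a later process, in the same way the in-memory example does.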

    async def main():
        # Start a new chat session
        chat = client.start_chat()
        response = await chat.send_message("Fine weather today")

        # Save chat's metadata
        previous_session = chat.metadata

        # Load the previous conversation
        previous_chat = client.start_chat(metadata=previous_session)
        response = await previous_chat.send_message("What was my previous message?")
        print(response)

    asyncio.run(main())

Images in the API's output are stored as a list of `Image` objects. You can access the image title, URL, and description via `image.title`, `image.url` and `image.alt` respectively.

    async def main():
        response = await client.generate_content("Send me some pictures of cats")
        for image in response.images:
            print(image, "\n\n----------------------------------\n")

    asyncio.run(main())

In February 2024, Google introduced a new AI image generator called ImageFx and integrated it into Gemini. You can ask Gemini to generate images with ImageFx simply by using natural language.

[!IMPORTANT]

Google has some limitations on the image generation feature in Gemini, so its availability could be different per region/account. Here's a summary copied from official documentation (as of February 15th, 2024):

Image generation in Gemini Apps is available in most countries, except in the European Economic Area (EEA), Switzerland, and the UK. It’s only available for English prompts.

This feature’s availability in any specific Gemini app is also limited to the supported languages and countries of that app.

For now, this feature isn’t available to users under 18.

    async def main():
        response = await client.generate_content("Generate some pictures of cats")
        for image in response.images:
            print(image, "\n\n----------------------------------\n")

    asyncio.run(main())

[!NOTE]

By default, when asked to send images (like the previous example), Gemini will send images fetched from the web instead of generating images with an AI model, unless you specifically ask it to "generate" images in your prompt. In this package, web images and generated images are treated differently as `WebImage` and `GeneratedImage`, and will be automatically categorized in the output.
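Since the two kinds of images are distinct types, a mixed list can be separated with `isinstance` checks. The classes below are local stand-ins that only borrow the names and the `url`, `title` and `alt` attributes mentioned in this document. They are not imported from the package, and `split_images` is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class Image:
    """Stand-in base class with the attributes named in this document."""
    url: str
    title: str = ""
    alt: str = ""


class WebImage(Image):
    """Stand-in for an image fetched from the web."""


class GeneratedImage(Image):
    """Stand-in for an AI-generated image."""


def split_images(images):
    """Partition a mixed list into (web images, generated images)."""
    web = [img for img in images if isinstance(img, WebImage)]
    generated = [img for img in images if isinstance(img, GeneratedImage)]
    return web, generated


mixed = [WebImage("https://example.com/a.png"),
         GeneratedImage("https://example.com/b.png"),
         WebImage("https://example.com/c.png")]
web, generated = split_images(mixed)
print(len(web), len(generated))
```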

You can save images returned from Gemini to local files under /temp by calling Image.save(). Optionally, you can specify the file path and file name by passing path and filename arguments to the function and skip images with invalid file names by passing skip_invalid_filename=True. Works for both WebImage and GeneratedImage.

    async def main():
        response = await client.generate_content("Generate some pictures of cats")
        for i, image in enumerate(response.images):
            await image.save(path="temp/", filename=f"cat_{i}.png", verbose=True)

    asyncio.run(main())

[!IMPORTANT]

To access Gemini extensions in API, you must activate them on the Gemini website first. Same as image generation, Google also has limitations on the availability of Gemini extensions. Here's a summary copied from official documentation (as of February 18th, 2024):

To use extensions in Gemini Apps:

Sign in with your personal Google Account that you manage on your own. Extensions, including the Google Workspace extension, are currently not available to Google Workspace accounts for school, business, or other organizations.

Have Gemini Apps Activity on. Extensions are only available when Gemini Apps Activity is turned on.

Important: For now, extensions are available in English, Japanese, and Korean only.

After activating extensions for your account, you can access them in your prompts either by natural language or by starting your prompt with "@" followed by the extension keyword.

    async def main():
        response1 = await client.generate_content("@Gmail What's the latest message in my mailbox?")
        print(response1, "\n\n----------------------------------\n")

        response2 = await client.generate_content("@Youtube What's the latest activity of Taylor Swift?")
        print(response2, "\n\n----------------------------------\n")

    asyncio.run(main())

[!NOTE]

The region limitation actually only requires your Google account's preferred language to be set to one of the three supported languages listed above. You can change your language settings here.
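If prompts are built in code, the "@" prefix convention described above can be applied with a small helper. This is only a string-formatting sketch. `with_extension` is a hypothetical name, and the set of valid extension keywords depends on what is enabled for your account.

```python
def with_extension(keyword: str, prompt: str) -> str:
    """Prefix a prompt with "@<keyword>" to address a specific extension."""
    # lstrip("@") avoids a doubled "@" if the caller already included one.
    return f"@{keyword.lstrip('@')} {prompt.strip()}"


gmail_prompt = with_extension("Gmail", "What's the latest message in my mailbox?")
print(gmail_prompt)
```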

A response from Gemini usually contains multiple reply candidates with different generated contents. You can check all candidates and choose one to continue the conversation. By default, the first candidate will be chosen automatically.

    async def main():
        # Start a conversation and list all reply candidates
        chat = client.start_chat()
        response = await chat.send_message("Recommend a science fiction book for me.")
        for candidate in response.candidates:
            print(candidate, "\n\n----------------------------------\n")

        if len(response.candidates) > 1:
            # Control the ongoing conversation flow by choosing candidate manually
            new_candidate = chat.choose_candidate(index=1)  # Choose the second candidate here
            followup_response = await chat.send_message("Tell me more about it.")  # Will generate contents based on the chosen candidate
            print(new_candidate, followup_response, sep="\n\n----------------------------------\n\n")
        else:
            print("Only one candidate available.")

    asyncio.run(main())

You can set the log level of the package to one of the following values: `DEBUG`, `INFO`, `WARNING`, `ERROR` and `CRITICAL`. The default value is `INFO`.

    from gemini_webapi import set_log_level

    set_log_level("DEBUG")
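The five level names follow the usual severity ordering, where a lower level lets more messages through. As an illustration only, the sketch below validates a level name and maps it to the numeric value used by Python's standard `logging` module. Whether the package uses `logging` internally is not stated here, and `resolve_log_level` is a hypothetical helper.

```python
import logging

VALID_LEVELS = ("DEBUG", "INFO", "WARNING", "ERROR", "CRITICAL")


def resolve_log_level(name: str) -> int:
    """Validate a level name and return its numeric severity from `logging`."""
    level = name.upper()
    if level not in VALID_LEVELS:
        raise ValueError(f"Unknown log level {name!r}; expected one of {VALID_LEVELS}")
    return getattr(logging, level)


# Sorting by numeric severity reproduces the order in which the levels are listed.
ordered = sorted(VALID_LEVELS, key=resolve_log_level)
print(ordered)
```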

Alternative AI tools for Gemini-API

Similar Open Source Tools

DeepPavlov

DeepPavlov is an open-source conversational AI library built on PyTorch. It is designed for the development of production-ready chatbots and complex conversational systems, as well as for research in the area of NLP and dialog systems. The library offers a wide range of models for tasks such as Named Entity Recognition, Intent/Sentence Classification, Question Answering, Sentence Similarity/Ranking, Syntactic Parsing, and more. DeepPavlov also provides embeddings like BERT, ELMo, and FastText for various languages, along with AutoML capabilities and integrations with REST API, Socket API, and Amazon AWS.

minja

Minja is a minimalistic C++ Jinja templating engine designed specifically for integration with C++ LLM projects, such as llama.cpp or gemma.cpp. It is not a general-purpose tool but focuses on providing a limited set of filters, tests, and language features tailored for chat templates. The library is header-only, requires C++17, and depends only on nlohmann::json. Minja aims to keep the codebase small, easy to understand, and offers decent performance compared to Python. Users should be cautious when using Minja due to potential security risks, and it is not intended for producing HTML or JavaScript output.

aiogram_dialog

Aiogram Dialog is a framework for developing interactive messages and menus in Telegram bots, inspired by Android SDK. It allows splitting data retrieval, rendering, and action processing, creating reusable widgets, and designing bots with a focus on user experience. The tool supports rich text rendering, automatic message updating, multiple dialog stacks, inline keyboard widgets, stateful widgets, various button layouts, media handling, transitions between windows, and offline HTML-preview for messages and transitions diagram.

lantern

Lantern is an open-source PostgreSQL database extension designed to store vector data, generate embeddings, and handle vector search operations efficiently. It introduces a new index type called 'lantern_hnsw' for vector columns, which speeds up 'ORDER BY ... LIMIT' queries. Lantern utilizes the state-of-the-art HNSW implementation called usearch. Users can easily install Lantern using Docker, Homebrew, or precompiled binaries. The tool supports various distance functions, index construction parameters, and operator classes for efficient querying. Lantern offers features like embedding generation, interoperability with pgvector, parallel index creation, and external index graph generation. It aims to provide superior performance metrics compared to other similar tools and has a roadmap for future enhancements such as cloud-hosted version, hardware-accelerated distance metrics, industry-specific application templates, and support for version control and A/B testing of embeddings.

HuggingFaceGuidedTourForMac

HuggingFaceGuidedTourForMac is a guided tour on how to install optimized pytorch and optionally Apple's new MLX, JAX, and TensorFlow on Apple Silicon Macs. The repository provides steps to install homebrew, pytorch with MPS support, MLX, JAX, TensorFlow, and Jupyter lab. It also includes instructions on running large language models using HuggingFace transformers. The repository aims to help users set up their Macs for deep learning experiments with optimized performance.

videodb-python

VideoDB Python SDK allows you to interact with the VideoDB serverless database. Manage videos as intelligent data, not files. It's scalable, cost-efficient & optimized for AI applications and LLM integration. The SDK provides functionalities for uploading videos, viewing videos, streaming specific sections of videos, searching inside a video, searching inside multiple videos in a collection, adding subtitles to a video, generating thumbnails, and more. It also offers features like indexing videos by spoken words, semantic indexing, and future indexing options for scenes, faces, and specific domains like sports. The SDK aims to simplify video management and enhance AI applications with video data.

bedrock-claude-chat

This repository is a sample chatbot using the Anthropic company's LLM Claude, one of the foundational models provided by Amazon Bedrock for generative AI. It allows users to have basic conversations with the chatbot, personalize it with their own instructions and external knowledge, and analyze usage for each user/bot on the administrator dashboard. The chatbot supports various languages, including English, Japanese, Korean, Chinese, French, German, and Spanish. Deployment is straightforward and can be done via the command line or by using AWS CDK. The architecture is built on AWS managed services, eliminating the need for infrastructure management and ensuring scalability, reliability, and security.

jina

Jina is a tool that allows users to build multimodal AI services and pipelines using cloud-native technologies. It provides a Pythonic experience for serving ML models and transitioning from local deployment to advanced orchestration frameworks like Docker-Compose, Kubernetes, or Jina AI Cloud. Users can build and serve models for any data type and deep learning framework, design high-performance services with easy scaling, serve LLM models while streaming their output, integrate with Docker containers via Executor Hub, and host on CPU/GPU using Jina AI Cloud. Jina also offers advanced orchestration and scaling capabilities, a smooth transition to the cloud, and easy scalability and concurrency features for applications. Users can deploy to their own cloud or system with Kubernetes and Docker Compose integration, and even deploy to JCloud for autoscaling and monitoring.

ChatDBG

ChatDBG is an AI-based debugging assistant for C/C++/Python/Rust code that integrates large language models into a standard debugger (`pdb`, `lldb`, `gdb`, and `windbg`) to help debug your code. With ChatDBG, you can engage in a dialog with your debugger, asking open-ended questions about your program, like `why is x null?`. ChatDBG will _take the wheel_ and steer the debugger to answer your queries. ChatDBG can provide error diagnoses and suggest fixes. As far as we are aware, ChatDBG is the _first_ debugger to automatically perform root cause analysis and to provide suggested fixes.

ellmer

ellmer is a tool that facilitates the use of large language models (LLM) from R. It supports various LLM providers and offers features such as streaming outputs, tool/function calling, and structured data extraction. Users can interact with ellmer in different ways, including interactive chat console, interactive method call, and programmatic chat. The tool provides support for multiple model providers and offers recommendations for different use cases, such as exploration or organizational use.

openai

An open-source client package that allows developers to easily integrate the power of OpenAI's state-of-the-art AI models into their Dart/Flutter applications. The library provides simple and intuitive methods for making requests to OpenAI's various APIs, including the GPT-3 language model, DALL-E image generation, and more. It is designed to be lightweight and easy to use, enabling developers to focus on building their applications without worrying about the complexities of dealing with HTTP requests. Note that this is an unofficial library as OpenAI does not have an official Dart library.

ShortcutsBench

ShortcutsBench is a project focused on collecting and analyzing workflows created in the Shortcuts app, providing a dataset of shortcut metadata, source files, and API information. It aims to study the integration of large language models with Apple devices, particularly focusing on the role of shortcuts in enhancing user experience. The project offers insights for Shortcuts users, enthusiasts, and researchers to explore, customize workflows, and study automated workflows, low-code programming, and API-based agents.

sieves

sieves is a library for zero- and few-shot NLP tasks with structured generation, enabling rapid prototyping of NLP applications without the need for training. It simplifies NLP prototyping by bundling capabilities into a single library, providing zero- and few-shot model support, a unified interface for structured generation, built-in tasks for common NLP operations, easy extendability, document-based pipeline architecture, caching to prevent redundant model calls, and more. The tool draws inspiration from spaCy and spacy-llm, offering features like immediate inference, observable pipelines, integrated tools for document parsing and text chunking, ready-to-use tasks such as classification, summarization, translation, and more, persistence for saving and loading pipelines, distillation for specialized model creation, and caching to optimize performance.

magic-cli

Magic CLI is a command line utility that leverages Large Language Models (LLMs) to enhance command line efficiency. It is inspired by projects like Amazon Q and GitHub Copilot for CLI. The tool allows users to suggest commands, search across command history, and generate commands for specific tasks using local or remote LLM providers. Magic CLI also provides configuration options for LLM selection and response generation. The project is still in early development, so users should expect breaking changes and bugs.

SpeziLLM

The Spezi LLM Swift Package includes modules that help integrate LLM-related functionality in applications. It provides tools for local LLM execution, usage of remote OpenAI-based LLMs, and LLMs running on Fog node resources within the local network. The package contains targets like SpeziLLM, SpeziLLMLocal, SpeziLLMLocalDownload, SpeziLLMOpenAI, and SpeziLLMFog for different LLM functionalities. Users can configure and interact with local LLMs, OpenAI LLMs, and Fog LLMs using the provided APIs and platforms within the Spezi ecosystem.

For similar tasks

floneum

Floneum is a graph editor that makes it easy to develop your own AI workflows. It uses large language models (LLMs) to run AI models locally, without any external dependencies or even a GPU. This makes it easy to use LLMs with your own data, without worrying about privacy. Floneum also has a plugin system that allows you to improve the performance of LLMs and make them work better for your specific use case. Plugins can be used in any language that supports web assembly, and they can control the output of LLMs with a process similar to JSONformer or guidance.

llm-answer-engine

This repository contains the code and instructions needed to build a sophisticated answer engine that leverages the capabilities of Groq, Mistral AI's Mixtral, Langchain.JS, Brave Search, Serper API, and OpenAI. Designed to efficiently return sources, answers, images, videos, and follow-up questions based on user queries, this project is an ideal starting point for developers interested in natural language processing and search technologies.

discourse-ai

Discourse AI is a plugin for the Discourse forum software that uses artificial intelligence to improve the user experience. It can automatically generate content, moderate posts, and answer questions. This can free up moderators and administrators to focus on other tasks, and it can help to create a more engaging and informative community.

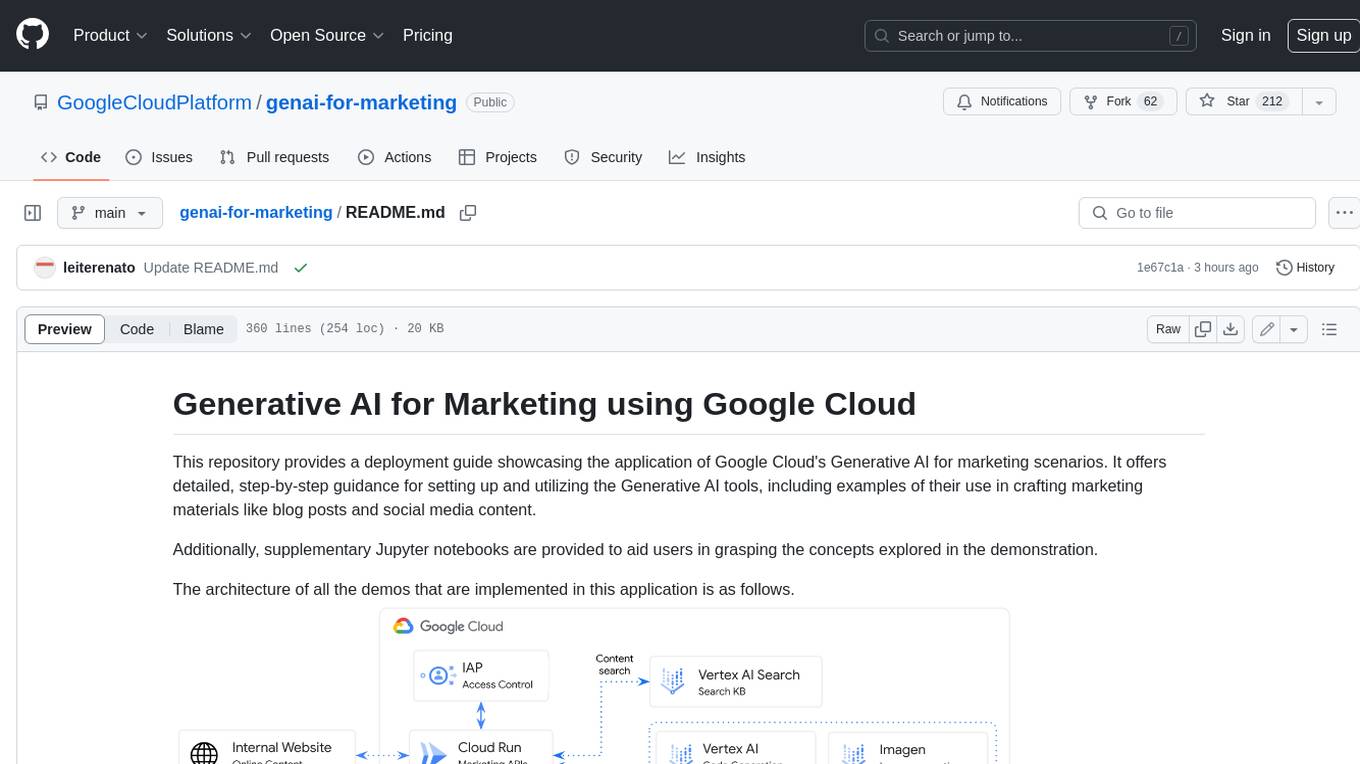

genai-for-marketing

This repository provides a deployment guide for utilizing Google Cloud's Generative AI tools in marketing scenarios. It includes step-by-step instructions, examples of crafting marketing materials, and supplementary Jupyter notebooks. The demos cover marketing insights, audience analysis, trendspotting, content search, content generation, and workspace integration. Users can access and visualize marketing data, analyze trends, improve search experience, and generate compelling content. The repository structure includes backend APIs, frontend code, sample notebooks, templates, and installation scripts.

generative-ai-dart

The Google Generative AI SDK for Dart enables developers to utilize cutting-edge Large Language Models (LLMs) for creating language applications. It provides access to the Gemini API for generating content using state-of-the-art models. Developers can integrate the SDK into their Dart or Flutter applications to leverage powerful AI capabilities. It is recommended to use the SDK for server-side API calls to ensure the security of API keys and protect against potential key exposure in mobile or web apps.

Dough

Dough is a tool for crafting videos with AI, allowing users to guide video generations with precision using images and example videos. Users can create guidance frames, assemble shots, and animate them by defining parameters and selecting guidance videos. The tool aims to help users make beautiful and unique video creations, providing control over the generation process. Setup instructions are available for Linux and Windows platforms, with detailed steps for installation and running the app.

ChaKt-KMP

ChaKt is a multiplatform app built using Kotlin and Compose Multiplatform to demonstrate the use of Generative AI SDK for Kotlin Multiplatform to generate content using Google's Generative AI models. It features a simple chat based user interface and experience to interact with AI. The app supports mobile, desktop, and web platforms, and is built with Kotlin Multiplatform, Kotlin Coroutines, Compose Multiplatform, Generative AI SDK, Calf - File picker, and BuildKonfig. Users can contribute to the project by following the guidelines in CONTRIBUTING.md. The app is licensed under the MIT License.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.