shortest

QA via natural language AI tests

Stars: 4425

Shortest is an AI-powered natural language end-to-end testing framework built on Playwright. Users write tests in natural language, and the framework executes them using the Anthropic Claude API. It also offers GitHub integration with 2FA support, making it suitable for testing web applications with complex authentication flows. Tests can be run locally or in CI/CD pipelines.

README:

AI-powered natural language end-to-end testing framework.

- Natural language E2E testing framework

- AI-powered test execution using Anthropic Claude API

- Built on Playwright

- GitHub integration with 2FA support

- Email validation with Mailosaur

If helpful, here's a short video!

Use the shortest init command to streamline the setup process in a new or existing project.

npx @antiwork/shortest init

This will:

- Automatically install the @antiwork/shortest package as a dev dependency if it is not already installed

- Create a default shortest.config.ts file with boilerplate configuration

- Generate a .env.local file (unless present) with placeholders for required environment variables, such as ANTHROPIC_API_KEY

- Add .env.local and .shortest/ to .gitignore
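If you script a similar setup step yourself, the .gitignore update is worth making idempotent. The sketch below is not Shortest's actual implementation; `addIgnoreEntries` is a hypothetical helper that appends only the entries missing from an existing .gitignore body.

```typescript
// Hypothetical helper (not part of @antiwork/shortest): append entries to a
// .gitignore body only when they are not already listed.
function addIgnoreEntries(content: string, entries: string[]): string {
  const existing = new Set(
    content.split(/\r?\n/).map((line) => line.trim()),
  );
  const missing = entries.filter((entry) => !existing.has(entry));
  if (missing.length === 0) return content;
  // Make sure the appended block starts on its own line.
  const separator = content === "" || content.endsWith("\n") ? "" : "\n";
  return content + separator + missing.join("\n") + "\n";
}

// .env.local is already present, so only .shortest/ is appended.
const updated = addIgnoreEntries("node_modules\n.env.local\n", [
  ".env.local",
  ".shortest/",
]);
console.log(updated);
```

Running the helper a second time on its own output returns the content unchanged, which is the property you want from a setup command that may be run repeatedly.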

- Determine your test entry and add your Anthropic API key in the config file:

shortest.config.ts

import type { ShortestConfig } from "@antiwork/shortest";

export default {

headless: false,

baseUrl: "http://localhost:3000",

testPattern: "**/*.test.ts",

ai: {

provider: "anthropic",

},

} satisfies ShortestConfig;

The Anthropic API key defaults to the SHORTEST_ANTHROPIC_API_KEY / ANTHROPIC_API_KEY environment variables. It can be overridden via ai.config.apiKey.
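The lookup described above can be pictured as a simple fallback chain. This is a minimal sketch, not the framework's code: `resolveApiKey` is a hypothetical name, and the order in which the two environment variables are checked is an assumption (the text only says that both are consulted and that the config value overrides them).

```typescript
// Illustrative only: resolveApiKey is a hypothetical name, and the precedence
// between the two environment variables is an assumption.
type Env = Record<string, string | undefined>;

function resolveApiKey(env: Env, configApiKey?: string): string {
  const key =
    configApiKey ??
    env.SHORTEST_ANTHROPIC_API_KEY ??
    env.ANTHROPIC_API_KEY;
  if (!key) {
    throw new Error(
      "No Anthropic API key found: set ai.config.apiKey, " +
        "SHORTEST_ANTHROPIC_API_KEY, or ANTHROPIC_API_KEY",
    );
  }
  return key;
}

// An explicit config value wins over the environment.
console.log(resolveApiKey({ ANTHROPIC_API_KEY: "env-key" }, "config-key"));
// Without one, the environment variables are used.
console.log(resolveApiKey({ ANTHROPIC_API_KEY: "env-key" }));
```

Failing early with a clear message when no key is present tends to be easier to debug than a rejected API call in the middle of a test run.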

- Create test files using the pattern specified in the config:

app/login.test.ts

import { shortest } from "@antiwork/shortest";

shortest("Login to the app using email and password", {

username: process.env.GITHUB_USERNAME,

password: process.env.GITHUB_PASSWORD,

});

You can also use callback functions to add assertions and other logic. The AI executes the callback after the test execution in the browser is complete.

import { shortest } from "@antiwork/shortest";

import { db } from "@/lib/db/drizzle";

import { users } from "@/lib/db/schema";

import { eq } from "drizzle-orm";

shortest("Login to the app using username and password", {

username: process.env.USERNAME,

password: process.env.PASSWORD,

}).after(async ({ page }) => {

// Get current user's clerk ID from the page

const clerkId = await page.evaluate(() => {

return window.localStorage.getItem("clerk-user");

});

if (!clerkId) {

throw new Error("User not found in database");

}

// Query the database

const [user] = await db

.select()

.from(users)

.where(eq(users.clerkId, clerkId))

.limit(1);

expect(user).toBeDefined();

});

You can use lifecycle hooks to run code before and after the test.

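import { shortest } from "@antiwork/shortest";

// Clerk's Playwright testing helpers used by the hooks below
import { clerk, clerkSetup } from "@clerk/testing/playwright";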

shortest.beforeAll(async ({ page }) => {

await clerkSetup({

frontendApiUrl:

process.env.PLAYWRIGHT_TEST_BASE_URL ?? "http://localhost:3000",

});

});

shortest.beforeEach(async ({ page }) => {

await clerk.signIn({

page,

signInParams: {

strategy: "email_code",

identifier: "[email protected]",

},

});

});

shortest.afterEach(async ({ page }) => {

await page.close();

});

shortest.afterAll(async ({ page }) => {

await clerk.signOut({ page });

});

Shortest supports flexible test chaining patterns:

// Sequential test chain

shortest([

"user can login with email and password",

"user can modify their account-level refund policy",

]);

// Reusable test flows

const loginAsLawyer = "login as lawyer with valid credentials";

const loginAsContractor = "login as contractor with valid credentials";

const allAppActions = ["send invoice to company", "view invoices"];

// Combine flows with spread operator

shortest([loginAsLawyer, ...allAppActions]);

shortest([loginAsContractor, ...allAppActions]);

Test API endpoints using natural language:

const req = new APIRequest({

baseURL: API_BASE_URI,

});

shortest(

"Ensure the response contains only active users",

req.fetch({

url: "/users",

method: "GET",

params: new URLSearchParams({

active: "true", // URLSearchParams expects string values

}),

}),

);

Or simply:

shortest(`

Test the API GET endpoint ${API_BASE_URI}/users with query parameter { "active": true }

Expect the response to contain only active users

`);

pnpm shortest # Run all tests

pnpm shortest __tests__/login.test.ts # Run specific test

pnpm shortest --headless # Run in headless mode using CLI

You can find example tests in the examples directory.

You can run Shortest in your CI/CD pipeline by running tests in headless mode. Make sure to add your Anthropic API key to your CI/CD pipeline secrets.
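One way to keep a single config for local and CI runs is to derive the headless setting from the environment instead of passing the flag by hand. The sketch below assumes your CI provider sets a `CI` environment variable (GitHub Actions and many others do); `buildConfig` is a hypothetical helper, and the returned object mirrors the shortest.config.ts fields shown earlier.

```typescript
type Env = Record<string, string | undefined>;

// Hypothetical helper: returns the config fields shown earlier, running
// headless whenever a CI environment variable is present and not "false".
function buildConfig(env: Env) {
  const isCI = env.CI !== undefined && env.CI !== "" && env.CI !== "false";
  return {
    headless: isCI,
    baseUrl: "http://localhost:3000",
    testPattern: "**/*.test.ts",
    ai: { provider: "anthropic" as const },
  };
}

// In shortest.config.ts you could export the result of buildConfig(process.env).
console.log(buildConfig({ CI: "true" }).headless);
console.log(buildConfig({}).headless);
```

The API key itself stays out of the config file in this setup and is supplied through the pipeline's secrets as an environment variable.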

Shortest supports login using GitHub 2FA. For GitHub authentication tests:

- Go to your repository settings

- Navigate to "Password and Authentication"

- Click on "Authenticator App"

- Select "Use your authenticator app"

- Click "Setup key" to obtain the OTP secret

- Add the OTP secret to your .env.local file or use the Shortest CLI to add it

- Enter the 2FA code displayed in your terminal into GitHub's Authenticator setup page to complete the process
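For background, the 2FA code in the steps above is a time-based one-time password (TOTP, RFC 6238) derived from the OTP secret. The sketch below shows the standard algorithm using Node's built-in crypto module; it is not taken from Shortest's source, the function names are illustrative, and the parameters (HMAC-SHA1, 30-second step, 6 digits) are the common authenticator-app defaults, assumed here rather than confirmed by this README.

```typescript
import { createHmac } from "node:crypto";

const BASE32_ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZ234567";

// Decode an RFC 4648 base32 string (the usual format of a "setup key").
function base32Decode(input: string): Uint8Array {
  const clean = input.toUpperCase().replace(/[\s=-]/g, "");
  const bytes: number[] = [];
  let buffer = 0;
  let bits = 0;
  for (let i = 0; i < clean.length; i++) {
    const value = BASE32_ALPHABET.indexOf(clean.charAt(i));
    if (value === -1) throw new Error("Invalid base32 character: " + clean.charAt(i));
    buffer = (buffer << 5) | value;
    bits += 5;
    if (bits >= 8) {
      bits -= 8;
      bytes.push((buffer >> bits) & 0xff);
      buffer &= (1 << bits) - 1; // keep only the bits not yet emitted
    }
  }
  return Uint8Array.from(bytes);
}

// RFC 6238 TOTP: HMAC the big-endian time-step counter, then apply the
// dynamic truncation from RFC 4226 and keep the last `digits` digits.
function totp(secret: string, unixSeconds: number, digits = 6, step = 30): string {
  const counterValue = Math.floor(unixSeconds / step);
  const counter = new Uint8Array(8);
  const view = new DataView(counter.buffer);
  view.setUint32(0, Math.floor(counterValue / 0x100000000)); // high 32 bits
  view.setUint32(4, counterValue % 0x100000000); // low 32 bits
  const hmac = createHmac("sha1", base32Decode(secret)).update(counter).digest();
  const offset = hmac.readUInt8(hmac.length - 1) & 0x0f;
  const binary = hmac.readUInt32BE(offset) & 0x7fffffff;
  return (binary % Math.pow(10, digits)).toString().padStart(digits, "0");
}

// RFC 6238 test secret ("12345678901234567890" in base32) at t = 59 seconds.
console.log(totp("GEZDGNBVGY3TQOJQGEZDGNBVGY3TQOJQ", 59)); // prints "287082"
```

To get a current code you would pass `Date.now() / 1000` as the time. Because the code depends only on the secret and the clock, the secret should be treated like a password and kept out of version control.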

shortest --github-code --secret=<OTP_SECRET>

Required in .env.local:

ANTHROPIC_API_KEY=your_api_key

GITHUB_TOTP_SECRET=your_secret # Only for GitHub auth tests

The NPM package is located in packages/shortest/. See the CONTRIBUTING guide.

This guide will help you set up the Shortest web app for local development.

- React >=19.0.0 (if using with Next.js 14+ or Server Actions)

- Next.js >=14.0.0 (if using Server Components/Actions)

[!WARNING] Using this package with React 18 in Next.js 14+ projects may cause type conflicts with Server Actions and useFormStatus. If you encounter type errors with form actions or React hooks, ensure you're using React 19.

- Clone the repository:

git clone https://github.com/anti-work/shortest.git

cd shortest

- Install dependencies:

npm install -g pnpm

pnpm install

Pull Vercel env vars:

pnpm i -g vercel

vercel link

vercel env pull

- Run pnpm run setup to configure the environment variables.

- The setup wizard will ask you for information. Refer to the "Services Configuration" section below for more details.

pnpm drizzle-kit generate

pnpm db:migrate

pnpm db:seed # creates Stripe products, currently unused

You'll need to set up the following services for local development. If you're not an Anti-Work Vercel team member, you'll need to either run the setup wizard (pnpm run setup) or manually configure each of these services and add the corresponding environment variables to your .env.local file:

Clerk

- Go to clerk.com and create a new app.

- Name it whatever you like and disable all login methods except GitHub.

- Once created, copy the environment variables to your .env.local file.

- In the Clerk dashboard, disable the "Require the same device and browser" setting to ensure tests with Mailosaur work properly.

Vercel Postgres

- Go to your dashboard at vercel.com.

- Navigate to the Storage tab and click the Create Database button.

- Choose Postgres from the Browse Storage menu.

- Copy your environment variables from the Quickstart .env.local tab.

Anthropic

- Go to your dashboard at anthropic.com and grab your API Key.

Stripe

- Go to your Developers dashboard at stripe.com.

- Turn on Test mode.

- Go to the API Keys tab and copy your Secret key.

- Go to the terminal of your project and type pnpm run stripe:webhooks. It will prompt you to log in with a code, then give you your STRIPE_WEBHOOK_SECRET.

GitHub OAuth

- Create a GitHub OAuth App:

  - Go to your GitHub account settings.

  - Navigate to Developer settings > OAuth Apps > New OAuth App.

  - Fill in the application details:

- Configure Clerk with GitHub OAuth:

Mailosaur

- Sign up for an account with Mailosaur.

- Create a new Inbox/Server.

- Go to API Keys and create a standard key.

- Update the environment variables:

  - MAILOSAUR_API_KEY: Your API key

  - MAILOSAUR_SERVER_ID: Your server ID

- The email used to test the login flow will have the format shortest@<MAILOSAUR_SERVER_ID>.mailosaur.net, where MAILOSAUR_SERVER_ID is your server ID.

Make sure to add the email as a new user under the Clerk app.
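If you need that address in test code, it can be derived from the server ID rather than hard-coded. A minimal sketch, assuming the ID is read from the MAILOSAUR_SERVER_ID environment variable; `mailosaurTestEmail` is a hypothetical helper, not part of Shortest or the Mailosaur SDK.

```typescript
// Hypothetical helper: builds the test login address from the server ID,
// following the shortest@<MAILOSAUR_SERVER_ID>.mailosaur.net format above.
function mailosaurTestEmail(serverId: string | undefined): string {
  const id = (serverId ?? "").trim();
  if (id === "") {
    throw new Error("MAILOSAUR_SERVER_ID is not set");
  }
  return `shortest@${id}.mailosaur.net`;
}

// "abc123" is a made-up server ID used only for illustration.
console.log(mailosaurTestEmail("abc123")); // prints "shortest@abc123.mailosaur.net"
```

In a real project you would pass the value of the MAILOSAUR_SERVER_ID environment variable, so a missing variable fails immediately instead of producing a malformed address.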

Run the development server:

pnpm dev

Open http://localhost:3000 in your browser to see the app in action.


Alternative AI tools for shortest

Similar Open Source Tools


llm-vscode

llm-vscode is an extension designed for all things LLM, utilizing llm-ls as its backend. It offers features such as code completion with 'ghost-text' suggestions, the ability to choose models for code generation via HTTP requests, ensuring prompt size fits within the context window, and code attribution checks. Users can configure the backend, suggestion behavior, keybindings, llm-ls settings, and tokenization options. Additionally, the extension supports testing models like Code Llama 13B, Phind/Phind-CodeLlama-34B-v2, and WizardLM/WizardCoder-Python-34B-V1.0. Development involves cloning llm-ls, building it, and setting up the llm-vscode extension for use.

lollms

LoLLMs Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

lollms_legacy

Lord of Large Language Models (LoLLMs) Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications. The tool supports multiple personalities for generating text with different styles and tones, real-time text generation with WebSocket-based communication, RESTful API for listing personalities and adding new personalities, easy integration with various applications and frameworks, sending files to personalities, running on multiple nodes to provide a generation service to many outputs at once, and keeping data local even in the remote version.

APIMyLlama

APIMyLlama is a server application that provides an interface to interact with the Ollama API, a powerful AI tool to run LLMs. It allows users to easily distribute API keys to create amazing things. The tool offers commands to generate, list, remove, add, change, activate, deactivate, and manage API keys, as well as functionalities to work with webhooks, set rate limits, and get detailed information about API keys. Users can install APIMyLlama packages with NPM, PIP, Jitpack Repo+Gradle or Maven, or from the Crates Repository. The tool supports Node.JS, Python, Java, and Rust for generating responses from the API. Additionally, it provides built-in health checking commands for monitoring API health status.

suno-api

Suno AI API is an open-source project that allows developers to integrate the music generation capabilities of Suno.ai into their own applications. The API provides a simple and convenient way to generate music, lyrics, and other audio content using Suno.ai's powerful AI models. With Suno AI API, developers can easily add music generation functionality to their apps, websites, and other projects.

instructor

Instructor is a popular Python library for managing structured outputs from large language models (LLMs). It offers a user-friendly API for validation, retries, and streaming responses. With support for various LLM providers and multiple languages, Instructor simplifies working with LLM outputs. The library includes features like response models, retry management, validation, streaming support, and flexible backends. It also provides hooks for logging and monitoring LLM interactions, and supports integration with Anthropic, Cohere, Gemini, Litellm, and Google AI models. Instructor facilitates tasks such as extracting user data from natural language, creating fine-tuned models, managing uploaded files, and monitoring usage of OpenAI models.

agent-mimir

Agent Mimir is a command line and Discord chat client 'agent' manager for LLMs like ChatGPT that provides the models with access to tooling and a framework with which to accomplish multi-step tasks. It is easy to configure your own agent with a custom personality or profession, and to enable access to all tools that are compatible with LangchainJS. Because Agent Mimir is based on LangchainJS, every tool or LLM that works on Langchain should also work with Mimir. The tasking system is based on Auto-GPT and BabyAGI, where the agent needs to come up with a plan, iterate over its steps, and review as it completes the task.

consult-llm-mcp

Consult LLM MCP is an MCP server that enables users to consult powerful AI models like GPT-5.2, Gemini 3.0 Pro, and DeepSeek Reasoner for complex problem-solving. It supports multi-turn conversations, direct queries with optional file context, git changes inclusion for code review, comprehensive logging with cost estimation, and various CLI modes for Gemini and Codex. The tool is designed to simplify the process of querying AI models for assistance in resolving coding issues and improving code quality.

flapi

flAPI is a powerful service that automatically generates read-only APIs for datasets by utilizing SQL templates. Built on top of DuckDB, it offers features like automatic API generation, support for Model Context Protocol (MCP), connecting to multiple data sources, caching, security implementation, and easy deployment. The tool allows users to create APIs without coding and enables the creation of AI tools alongside REST endpoints using SQL templates. It supports unified configuration for REST endpoints and MCP tools/resources, concurrent servers for REST API and MCP server, and automatic tool discovery. The tool also provides DuckLake-backed caching for modern, snapshot-based caching with features like full refresh, incremental sync, retention, compaction, and audit logs.

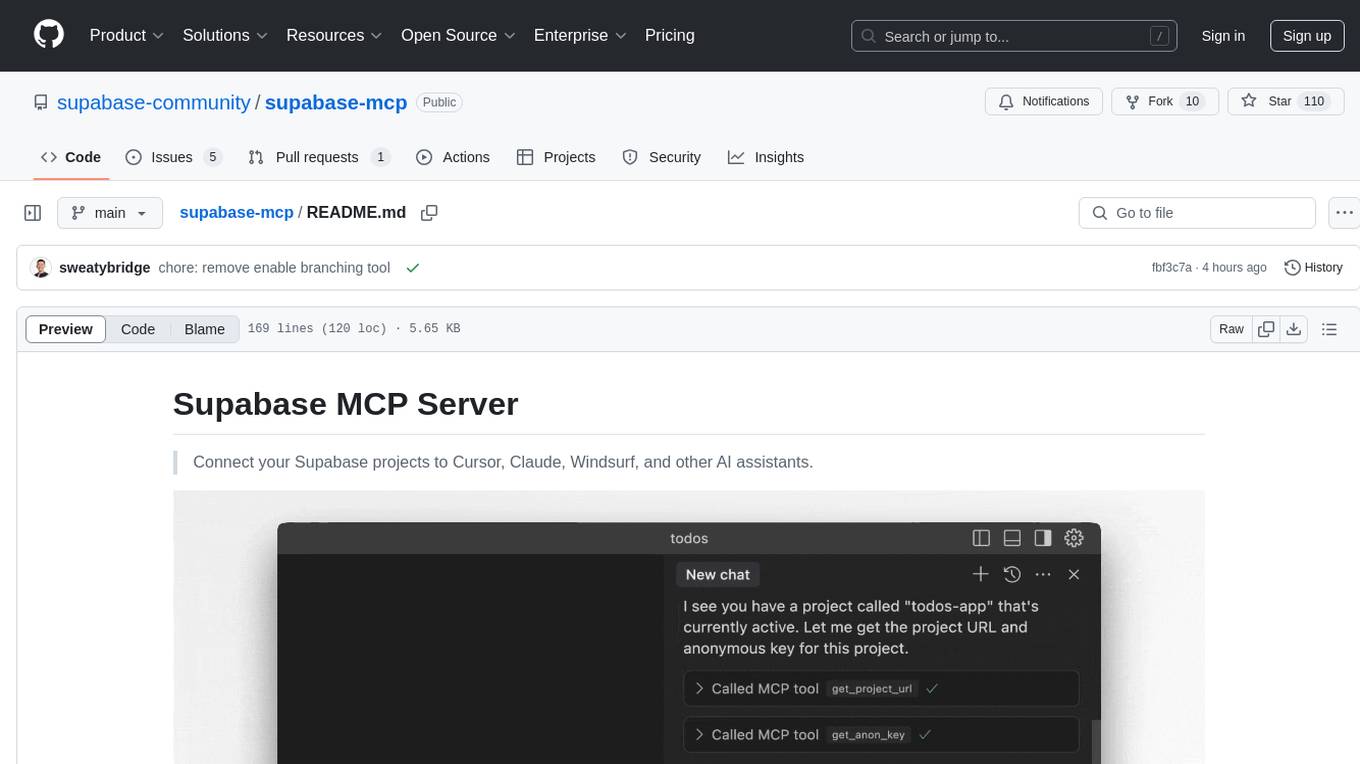

supabase-mcp

Supabase MCP Server standardizes how Large Language Models (LLMs) interact with Supabase, enabling AI assistants to manage tables, fetch config, and query data. It provides tools for project management, database operations, project configuration, branching (experimental), and development tools. The server is pre-1.0, so expect some breaking changes between versions.

python-tgpt

Python-tgpt is a Python package that enables seamless interaction with over 45 free LLM providers without requiring an API key. It also provides image generation capabilities. The name _python-tgpt_ draws inspiration from its parent project tgpt, which operates on Golang. Through this Python adaptation, users can effortlessly engage with a number of free LLMs available, fostering a smoother AI interaction experience.

Lumos

Lumos is a Chrome extension powered by a local LLM co-pilot for browsing the web. It allows users to summarize long threads, news articles, and technical documentation. Users can ask questions about reviews and product pages. The tool requires a local Ollama server for LLM inference and embedding database. Lumos supports multimodal models and file attachments for processing text and image content. It also provides options to customize models, hosts, and content parsers. The extension can be easily accessed through keyboard shortcuts and offers tools for automatic invocation based on prompts.

swarmzero

SwarmZero SDK is a library that simplifies the creation and execution of AI Agents and Swarms of Agents. It supports various LLM Providers such as OpenAI, Azure OpenAI, Anthropic, MistralAI, Gemini, Nebius, and Ollama. Users can easily install the library using pip or poetry, set up the environment and configuration, create and run Agents, collaborate with Swarms, add tools for complex tasks, and utilize retriever tools for semantic information retrieval. Sample prompts are provided to help users explore the capabilities of the agents and swarms. The SDK also includes detailed examples and documentation for reference.

raglite

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with PostgreSQL or SQLite. It offers configurable options for choosing LLM providers, database types, and rerankers. The toolkit is fast and permissive, utilizing lightweight dependencies and hardware acceleration. RAGLite provides features like PDF to Markdown conversion, multi-vector chunk embedding, optimal semantic chunking, hybrid search capabilities, adaptive retrieval, and improved output quality. It is extensible with a built-in Model Context Protocol server, customizable ChatGPT-like frontend, document conversion to Markdown, and evaluation tools. Users can configure RAGLite for various tasks like configuring, inserting documents, running RAG pipelines, computing query adapters, evaluating performance, running MCP servers, and serving frontends.

For similar tasks

LLMstudio

LLMstudio by TensorOps is a platform that offers prompt engineering tools for accessing models from providers like OpenAI, VertexAI, and Bedrock. It provides features such as Python Client Gateway, Prompt Editing UI, History Management, and Context Limit Adaptability. Users can track past runs, log costs and latency, and export history to CSV. The tool also supports automatic switching to larger-context models when needed. Coming soon features include side-by-side comparison of LLMs, automated testing, API key administration, project organization, and resilience against rate limits. LLMstudio aims to streamline prompt engineering, provide execution history tracking, and enable effortless data export, offering an evolving environment for teams to experiment with advanced language models.

kaizen

Kaizen is an open-source project that helps teams ensure quality in their software delivery by providing a suite of tools for code review, test generation, and end-to-end testing. It integrates with your existing code repositories and workflows, allowing you to streamline your software development process. Kaizen generates comprehensive end-to-end tests, provides UI testing and review, and automates code review with insightful feedback. The file structure includes components for API server, logic, actors, generators, LLM integrations, documentation, and sample code. Getting started involves installing the Kaizen package, generating tests for websites, and executing tests. The tool also runs an API server for GitHub App actions. Contributions are welcome under the AGPL License.

flux-fine-tuner

This is a Cog training model that creates LoRA-based fine-tunes for the FLUX.1 family of image generation models. It includes features such as automatic image captioning during training, image generation using LoRA, uploading fine-tuned weights to Hugging Face, automated test suite for continuous deployment, and Weights and biases integration. The tool is designed for users to fine-tune Flux models on Replicate for image generation tasks.


lmstudio-python

LM Studio Python SDK provides a convenient API for interacting with LM Studio instance, including text completion and chat response functionalities. The SDK allows users to manage websocket connections and chat history easily. It also offers tools for code consistency checks, automated testing, and expanding the API.

mastering-github-copilot-for-dotnet-csharp-developers

Enhance coding efficiency with expert-led GitHub Copilot course for C#/.NET developers. Learn to integrate AI-powered coding assistance, automate testing, and boost collaboration using Visual Studio Code and Copilot Chat. From autocompletion to unit testing, cover essential techniques for cleaner, faster, smarter code.

agentql

AgentQL is a suite of tools for extracting data and automating workflows on live web sites featuring an AI-powered query language, Python and JavaScript SDKs, a browser-based debugger, and a REST API endpoint. It uses natural language queries to pinpoint data and elements on any web page, including authenticated and dynamically generated content. Users can define structured data output and apply transforms within queries. AgentQL's natural language selectors find elements intuitively based on the content of the web page and work across similar web sites, self-healing as UI changes over time.

c4-genai-suite

C4-GenAI-Suite is a comprehensive AI tool for generating code snippets and automating software development tasks. It leverages advanced machine learning models to assist developers in writing efficient and error-free code. The suite includes features such as code completion, refactoring suggestions, and automated testing, making it a valuable asset for enhancing productivity and code quality in software development projects.

For similar jobs

aiscript

AiScript is a lightweight scripting language that runs on JavaScript. It supports arrays, objects, and functions as first-class citizens, and is easy to write without the need for semicolons or commas. AiScript runs in a secure sandbox environment, preventing infinite loops from freezing the host. It also allows for easy provision of variables and functions from the host.

askui

AskUI is a reliable, automated end-to-end automation tool that only depends on what is shown on your screen instead of the technology or platform you are running on.

bots

The 'bots' repository is a collection of guides, tools, and example bots for programming bots to play video games. It provides resources on running bots live, installing the BotLab client, debugging bots, testing bots in simulated environments, and more. The repository also includes example bots for games like EVE Online, Tribal Wars 2, and Elvenar. Users can learn about developing bots for specific games, syntax of the Elm programming language, and tools for memory reading development. Additionally, there are guides on bot programming, contributing to BotLab, and exploring Elm syntax and core library.

ain

Ain is a terminal HTTP API client designed for scripting input and processing output via pipes. It allows flexible organization of APIs using files and folders, supports shell-scripts and executables for common tasks, handles url-encoding, and enables sharing the resulting curl, wget, or httpie command-line. Users can put things that change in environment variables or .env-files, and pipe the API output for further processing. Ain targets users who work with many APIs using a simple file format and uses curl, wget, or httpie to make the actual calls.

LaVague

LaVague is an open-source Large Action Model framework that uses advanced AI techniques to compile natural language instructions into browser automation code. It leverages Selenium or Playwright for browser actions. Users can interact with LaVague through an interactive Gradio interface to automate web interactions. The tool requires an OpenAI API key for default examples and offers a Playwright integration guide. Contributors can help by working on outlined tasks, submitting PRs, and engaging with the community on Discord. The project roadmap is available to track progress, but users should exercise caution when executing LLM-generated code using 'exec'.

robocorp

Robocorp is a platform that allows users to create, deploy, and operate Python automations and AI actions. It provides an easy way to extend the capabilities of AI agents, assistants, and copilots with custom actions written in Python. Users can create and deploy tools, skills, loaders, and plugins that securely connect any AI Assistant platform to their data and applications. The Robocorp Action Server makes Python scripts compatible with ChatGPT and LangChain by automatically creating and exposing an API based on function declaration, type hints, and docstrings. It simplifies the process of developing and deploying AI actions, enabling users to interact with AI frameworks effortlessly.

Open-Interface

Open Interface is a self-driving software that automates computer tasks by sending user requests to a language model backend (e.g., GPT-4V) and simulating keyboard and mouse inputs to execute the steps. It course-corrects by sending current screenshots to the language models. The tool supports MacOS, Linux, and Windows, and requires setting up the OpenAI API key for access to GPT-4V. It can automate tasks like creating meal plans, setting up custom language model backends, and more. Open Interface is currently not efficient in accurate spatial reasoning, tracking itself in tabular contexts, and navigating complex GUI-rich applications. Future improvements aim to enhance the tool's capabilities with better models trained on video walkthroughs. The tool is cost-effective, with user requests priced between $0.05 - $0.20, and offers features like interrupting the app and primary display visibility in multi-monitor setups.

AI-Case-Sorter-CS7.1

AI-Case-Sorter-CS7.1 is a project focused on building a case sorter using machine vision and machine learning AI to sort cases by headstamp. The repository includes Arduino code and 3D models necessary for the project.