cambrian

Cambrian-1 is a family of multimodal LLMs with a vision-centric design.

Stars: 1438

Cambrian-1 is a fully open project focused on exploring multimodal Large Language Models (LLMs) with a vision-centric approach. It offers competitive performance across various benchmarks with models at different parameter levels. The project includes training configurations, model weights, instruction tuning data, and evaluation details. Users can interact with Cambrian-1 through a Gradio web interface for inference. The project is inspired by LLaVA and incorporates contributions from Vicuna, LLaMA, and Yi. Cambrian-1 is licensed under Apache 2.0 and utilizes datasets and checkpoints subject to their respective original licenses.

README:

Fun fact: vision emerged in animals during the Cambrian period! This was the inspiration for the name of our project, Cambrian.

- [07/03/24] 🚂 We have released our targeted data engine! See the subfolder dataengine/ for more details.

- [07/02/24] 🤗 CV-Bench is live on Hugging Face! Please see here for more: https://huggingface.co/datasets/nyu-visionx/CV-Bench

- [06/24/24] 🔥 We released Cambrian-1! We have also released three model sizes (8B, 13B, and 34B), the training data, and TPU training scripts. We will release the GPU training script and evaluation code very soon.

Currently, we support training on TPU using TorchXLA.

- Clone this repository and navigate into the codebase

git clone https://github.com/cambrian-mllm/cambrian

cd cambrian

- Install packages

conda create -n cambrian python=3.10 -y

conda activate cambrian

pip install --upgrade pip # enable PEP 660 support

pip install -e ".[tpu]"

- Install TPU-specific packages for training

pip install torch~=2.2.0 torch_xla[tpu]~=2.2.0 -f https://storage.googleapis.com/libtpu-releases/index.html

To install with the GPU extras instead:

- Clone this repository and navigate into the codebase

git clone https://github.com/cambrian-mllm/cambrian

cd cambrian

- Install packages

conda create -n cambrian python=3.10 -y

conda activate cambrian

pip install --upgrade pip # enable PEP 660 support

pip install ".[gpu]"

Here are our Cambrian checkpoints along with instructions on how to use the weights. Our models excel across various dimensions at the 8B, 13B, and 34B parameter levels. They demonstrate competitive performance compared to closed-source proprietary models such as GPT-4V, Gemini-Pro, and Grok-1.5 on several benchmarks.

| Model | # Vis. Tok. | MMB | SQA-I | MathVistaM | ChartQA | MMVP |
|---|---|---|---|---|---|---|
| GPT-4V | UNK | 75.8 | - | 49.9 | 78.5 | 50.0 |
| Gemini-1.0 Pro | UNK | 73.6 | - | 45.2 | - | - |
| Gemini-1.5 Pro | UNK | - | - | 52.1 | 81.3 | - |
| Grok-1.5 | UNK | - | - | 52.8 | 76.1 | - |
| MM-1-8B | 144 | 72.3 | 72.6 | 35.9 | - | - |
| MM-1-30B | 144 | 75.1 | 81.0 | 39.4 | - | - |
| Base LLM: Phi-3-3.8B | | | | | | |
| Cambrian-1-8B | 576 | 74.6 | 79.2 | 48.4 | 66.8 | 40.0 |
| Base LLM: LLaMA3-8B-Instruct | | | | | | |
| Mini-Gemini-HD-8B | 2880 | 72.7 | 75.1 | 37.0 | 59.1 | 18.7 |
| LLaVA-NeXT-8B | 2880 | 72.1 | 72.8 | 36.3 | 69.5 | 38.7 |
| Cambrian-1-8B | 576 | 75.9 | 80.4 | 49.0 | 73.3 | 51.3 |
| Base LLM: Vicuna1.5-13B | | | | | | |
| Mini-Gemini-HD-13B | 2880 | 68.6 | 71.9 | 37.0 | 56.6 | 19.3 |
| LLaVA-NeXT-13B | 2880 | 70.0 | 73.5 | 35.1 | 62.2 | 36.0 |
| Cambrian-1-13B | 576 | 75.7 | 79.3 | 48.0 | 73.8 | 41.3 |
| Base LLM: Hermes2-Yi-34B | | | | | | |
| Mini-Gemini-HD-34B | 2880 | 80.6 | 77.7 | 43.4 | 67.6 | 37.3 |
| LLaVA-NeXT-34B | 2880 | 79.3 | 81.8 | 46.5 | 68.7 | 47.3 |
| Cambrian-1-34B | 576 | 81.4 | 85.6 | 53.2 | 75.6 | 52.7 |

For the full table, please refer to our Cambrian-1 paper.

Our models offer highly competitive performance while using a smaller fixed number of visual tokens.

To use the model weights, download them from Hugging Face; for example, the 8B checkpoint is published as nyu-visionx/cambrian-8b.

We provide a sample model loading and generation script in inference.py.

In this work, we collect a very large pool of instruction tuning data, Cambrian-10M, for us and future work to study data in training MLLMs. In our preliminary study, we filter the data down to a high quality set of 7M curated data points, which we call Cambrian-7M. Both of these datasets are available in the following Hugging Face Dataset: Cambrian-10M.

We collected a diverse range of visual instruction tuning data from various sources, including VQA, visual conversation, and embodied visual interaction. To ensure high-quality, reliable, and large-scale knowledge data, we designed an Internet Data Engine.

Additionally, we observed that VQA data tends to contain very short answers, creating a distribution shift from the training data. To address this issue, we leveraged GPT-4V and GPT-4o to create extended responses and more creative data.

To resolve the inadequacy of science-related data, we designed an Internet Data Engine to collect reliable science-related VQA data. This engine can be applied to collect data on any topic. Using this engine, we collected an additional 161k science-related visual instruction tuning data points, increasing the total data in this domain by 400%! If you want to use this part of data, please use this jsonl.

We used GPT-4V to create an additional 77k data points. This data either uses GPT-4V to rewrite the original answer-only VQA into longer, more detailed responses, or generates visual instruction tuning data based on the given image. If you want to use this part of data, please use this jsonl.

We used GPT-4o to create an additional 60k creative data points. This data encourages the model to generate very long responses and often contains highly creative questions, such as writing a poem, composing a song, and more. If you want to use this part of data, please use this jsonl.

We conducted an initial study on data curation by:

- Setting a threshold $t$ to filter the number of samples from a single data source.

- Studying the data ratio.

Empirically, we found that setting $t$ to 350k yields the best results. Additionally, we conducted data ratio experiments and determined the following optimal data ratio:

| Category | Data Ratio |
|---|---|
| Language | 21.00% |
| General | 34.52% |
| OCR | 27.22% |
| Counting | 8.71% |
| Math | 7.20% |
| Code | 0.87% |
| Science | 0.88% |
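The per-source threshold $t$ described above can be sketched as a simple cap on how many samples any one data source contributes. This is only an illustration: the `(source, record)` pair layout, the function name `cap_per_source`, and the use of uniform random subsampling are assumptions for this sketch, not details confirmed by the project.

```python
import random
from collections import defaultdict


def cap_per_source(samples, t, seed=0):
    """Keep at most t samples from each data source.

    `samples` is an iterable of (source_name, record) pairs; this layout is
    an assumption made for the sketch.
    """
    by_source = defaultdict(list)
    for source, record in samples:
        by_source[source].append(record)

    rng = random.Random(seed)  # fixed seed so the subsample is reproducible
    kept = []
    for source in sorted(by_source):
        records = by_source[source]
        if len(records) > t:
            # Oversized source: draw t records uniformly without replacement.
            records = rng.sample(records, t)
        kept.extend((source, r) for r in records)
    return kept


# Toy pool: one large source and one small source.
pool = [("big_vqa", i) for i in range(10)] + [("small_ocr", i) for i in range(3)]
filtered = cap_per_source(pool, t=4)
```

With `t=4`, the large toy source is cut down to four records while the small one is kept whole; in the setting above, `t` would be 350k.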

Compared to training on the earlier LLaVA-665K data, scaling up the data and improving its curation significantly enhance model performance, as shown in the table below:

| Model | Average | General Knowledge | OCR | Chart | Vision-Centric |
|---|---|---|---|---|---|
| LLaVA-665K | 40.4 | 64.7 | 45.2 | 20.8 | 31.0 |
| Cambrian-10M | 53.8 | 68.7 | 51.6 | 47.1 | 47.6 |
| Cambrian-7M | 54.8 | 69.6 | 52.6 | 47.3 | 49.5 |

While training with Cambrian-7M provides competitive benchmark results, we observed that the model tends to output shorter responses and act like a question-answer machine. This behavior, which we refer to as the "Answer Machine" phenomenon, can limit the model's usefulness in more complex interactions.

We found that adding a system prompt such as "Answer the question using a single word or phrase." can help mitigate the issue. This approach encourages the model to provide such concise answers only when it is contextually appropriate. For more details, please refer to our paper.

We have also curated a dataset, Cambrian-7M with system prompt, which includes the system prompt to enhance the model's creativity and chat ability.

Below is the latest training configuration for Cambrian-1.

In the Cambrian-1 paper, we conduct extensive studies to demonstrate the necessity of two-stage training. Cambrian-1 training consists of two stages:

- Visual Connector Training: We use a mixed 2.5M Cambrian Alignment Data to train a Spatial Vision Aggregator (SVA) that connects frozen pretrained vision encoders to a frozen LLM.

- Instruction Tuning: We use curated Cambrian-7M instruction tuning data to train both the visual connector and LLM.

Cambrian-1 is trained on TPU-V4-512 but can also be trained on TPUs starting at TPU-V4-64. GPU training code will be released soon. For GPU training on fewer GPUs, reduce the per_device_train_batch_size and increase the gradient_accumulation_steps accordingly, ensuring the global batch size remains the same: per_device_train_batch_size x gradient_accumulation_steps x num_gpus.
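As a small worked example of the batch-size relation above, the helper below solves for gradient_accumulation_steps given a target global batch size. The function name is hypothetical and the numbers in the example are illustrative, not a recommended configuration.

```python
def gradient_accumulation_steps(global_batch_size, per_device_train_batch_size, num_gpus):
    """Solve global = per_device x accumulation x num_gpus for accumulation."""
    if min(global_batch_size, per_device_train_batch_size, num_gpus) <= 0:
        raise ValueError("all arguments must be positive integers")
    samples_per_step = per_device_train_batch_size * num_gpus
    if global_batch_size % samples_per_step != 0:
        raise ValueError(
            f"global batch size {global_batch_size} is not divisible by "
            f"per-device batch size x num_gpus = {samples_per_step}"
        )
    return global_batch_size // samples_per_step


# Example: keep a global batch size of 512 on 8 GPUs with 4 samples per device.
steps = gradient_accumulation_steps(512, per_device_train_batch_size=4, num_gpus=8)
```

Here 4 x 16 x 8 = 512, so 16 accumulation steps preserve the global batch size.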

The hyperparameters used in both pretraining and finetuning are provided below.

Pretraining (visual connector training):

| Base LLM | Global Batch Size | Learning rate | SVA Learning Rate | Epochs | Max length |
|---|---|---|---|---|---|
| LLaMA-3 8B | 512 | 1e-3 | 1e-4 | 1 | 2048 |
| Vicuna-1.5 13B | 512 | 1e-3 | 1e-4 | 1 | 2048 |
| Hermes Yi-34B | 1024 | 1e-3 | 1e-4 | 1 | 2048 |

Instruction finetuning:

| Base LLM | Global Batch Size | Learning rate | Epochs | Max length |
|---|---|---|---|---|
| LLaMA-3 8B | 512 | 4e-5 | 1 | 2048 |
| Vicuna-1.5 13B | 512 | 4e-5 | 1 | 2048 |
| Hermes Yi-34B | 1024 | 2e-5 | 1 | 2048 |

For instruction finetuning, we conducted experiments to determine the optimal learning rate for our model training. Based on our findings, if your hardware requires a different batch size, we recommend adjusting the learning rate with the following formula:

optimal lr = base_lr * sqrt(bs / base_bs)
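The square-root rule above translates directly into code. In this sketch the function name `scaled_learning_rate` is hypothetical, and the example takes the 8B finetuning row (batch size 512, learning rate 4e-5) as the base setting purely for illustration.

```python
import math


def scaled_learning_rate(base_lr, base_bs, bs):
    """Apply optimal lr = base_lr * sqrt(bs / base_bs)."""
    if base_bs <= 0 or bs <= 0:
        raise ValueError("batch sizes must be positive")
    return base_lr * math.sqrt(bs / base_bs)


# Halving the global batch size from 512 to 256 scales the rate by sqrt(0.5).
lr_256 = scaled_learning_rate(base_lr=4e-5, base_bs=512, bs=256)
```

Because the scaling is a square root, quartering the batch size halves the learning rate rather than quartering it.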

To get the base LLM and train the 8B, 13B, and 34B models:

- LLaMA 8B Model: Download the model weights from Hugging Face and specify the model directory in the training script.

- Vicuna-1.5-13B: The Vicuna-1.5-13B model is automatically handled when you run the provided training script.

- Yi-34B: The Yi-34B model is also automatically handled when you run the provided training script.

We use a combination of LLaVA, ShareGPT4V, Mini-Gemini, and ALLaVA alignment data to pretrain our visual connector (SVA). In Cambrian-1, we conduct extensive studies to demonstrate the necessity and benefits of using additional alignment data.

To begin, please visit our Hugging Face alignment data page for more details. You can download the alignment data from the following links:

We provide sample training scripts in:

- scripts/cambrian/pretrain_cambrian_8b.sh

- scripts/cambrian/pretrain_cambrian_13b.sh

- scripts/cambrian/pretrain_cambrian_34b.sh

If you wish to train with other data sources or custom data, we support the commonly used LLaVA data format. For handling very large files, we use JSONL format instead of JSON format for lazy data loading to optimize memory usage.
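To illustrate why JSONL helps with very large files, the sketch below indexes a file by byte offset and parses a record only when it is requested. It is not the repository's loader: the class name `LazyJsonlDataset` and the toy records are made up for this example, and the record fields (`id`, `image`, `conversations` with `from`/`value` turns) follow the commonly used LLaVA layout as an assumption.

```python
import json
import os
import tempfile


class LazyJsonlDataset:
    """Index a JSONL file by byte offset and parse records only on access."""

    def __init__(self, path):
        self.path = path
        self.offsets = []
        # One pass over the file stores where each non-empty line starts,
        # so memory use grows with the number of records, not their size.
        with open(path, "rb") as f:
            while True:
                pos = f.tell()
                line = f.readline()
                if not line:
                    break
                if line.strip():
                    self.offsets.append(pos)

    def __len__(self):
        return len(self.offsets)

    def __getitem__(self, idx):
        # Seek straight to the record and decode just that one line.
        with open(self.path, "rb") as f:
            f.seek(self.offsets[idx])
            return json.loads(f.readline().decode("utf-8"))


# Two toy records in the assumed LLaVA-style layout (one with an image, one text-only).
records = [
    {"id": "0", "image": "images/0.jpg", "conversations": [
        {"from": "human", "value": "<image>\nWhat is shown in the picture?"},
        {"from": "gpt", "value": "A trilobite fossil."}]},
    {"id": "1", "conversations": [
        {"from": "human", "value": "Name a geological period."},
        {"from": "gpt", "value": "The Cambrian."}]},
]

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "toy.jsonl")
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")
    dataset = LazyJsonlDataset(path)
    num_records = len(dataset)
    second = dataset[1]
```

A single JSON array would have to be parsed in full before the first sample could be read, whereas one record per line allows this kind of random access.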

Similar to Training SVA, please visit our Cambrian-10M data for more details on the instruction tuning data.

We provide sample training scripts in:

- scripts/cambrian/finetune_cambrian_8b.sh

- scripts/cambrian/finetune_cambrian_13b.sh

- scripts/cambrian/finetune_cambrian_34b.sh

Key arguments in the training scripts:

- --mm_projector_type: To use our SVA module, set this value to sva. To use the LLaVA style 2-layer MLP projector, set this value to mlp2x_gelu.

- --vision_tower_aux_list: The list of vision models to use (e.g. '["siglip/CLIP-ViT-SO400M-14-384", "openai/clip-vit-large-patch14-336", "facebook/dinov2-giant-res378", "clip-convnext-XXL-multi-stage"]').

- --vision_tower_aux_token_len_list: The list of number of vision tokens for each vision tower; each number should be a square number (e.g. '[576, 576, 576, 9216]'). The feature map of each vision tower will be interpolated to meet this requirement.

- --image_token_len: The final number of vision tokens that will be provided to the LLM; the number should be a square number (e.g. 576). Note that if the mm_projector_type is mlp, each number in vision_tower_aux_token_len_list must be the same as image_token_len.

The arguments below are only meaningful for the SVA projector:

- --num_query_group: The G value for the SVA module.

- --query_num_list: A list of query numbers for each group of queries in SVA (e.g. '[576]'). The length of the list should equal num_query_group.

- --connector_depth: The D value for the SVA module.

- --vision_hidden_size: The hidden size for the SVA module.

- --connector_only: If true, the SVA module will only appear before the LLM; otherwise it will be inserted multiple times inside the LLM. The following three arguments are only meaningful when this is set to False.

- --num_of_vision_sampler_layers: The total number of SVA modules inserted inside the LLM.

- --start_of_vision_sampler_layers: The LLM layer index after which the insertion of SVA begins.

- --stride_of_vision_sampler_layers: The stride of the SVA module insertion inside the LLM.
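The constraints stated in the argument descriptions above can be checked before launching a run. The following is a minimal sketch, not part of the codebase: `is_square` and `check_connector_args` are hypothetical helpers, and treating every projector type whose name starts with "mlp" as the MLP case is an assumption.

```python
import math


def is_square(n):
    """True if n is a positive perfect square, e.g. 576 = 24 * 24."""
    root = math.isqrt(n) if n > 0 else 0
    return n > 0 and root * root == n


def check_connector_args(mm_projector_type, vision_tower_aux_list,
                         vision_tower_aux_token_len_list, image_token_len,
                         num_query_group=None, query_num_list=None):
    """Return a list of problems; an empty list means all checks passed."""
    problems = []
    if len(vision_tower_aux_list) != len(vision_tower_aux_token_len_list):
        problems.append("need exactly one token length per vision tower")
    for n in vision_tower_aux_token_len_list:
        if not is_square(n):
            problems.append(f"vision token length {n} is not a square number")
    if not is_square(image_token_len):
        problems.append(f"image_token_len {image_token_len} is not a square number")

    if mm_projector_type == "sva":
        # SVA only: one query count per query group.
        if query_num_list is None or len(query_num_list) != num_query_group:
            problems.append("len(query_num_list) must equal num_query_group")
    elif mm_projector_type.startswith("mlp"):
        # MLP projector (assumed name prefix): every tower must already
        # produce image_token_len tokens.
        if any(n != image_token_len for n in vision_tower_aux_token_len_list):
            problems.append("with an MLP projector, every tower length must equal image_token_len")
    return problems


towers = ["siglip/CLIP-ViT-SO400M-14-384", "openai/clip-vit-large-patch14-336",
          "facebook/dinov2-giant-res378", "clip-convnext-XXL-multi-stage"]
sva_problems = check_connector_args("sva", towers, [576, 576, 576, 9216], 576,
                                    num_query_group=1, query_num_list=[576])
mlp_problems = check_connector_args("mlp2x_gelu", towers, [576, 576, 576, 9216], 576)
```

The example values from the argument list pass for the SVA projector, while the same token lengths are flagged for the MLP projector because 9216 differs from image_token_len.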

We will release this part of code very soon.

The following instructions will guide you through launching a local Gradio demo with Cambrian. We provide a simple web interface for you to interact with the model. You can also use the CLI for inference. This setup is heavily inspired by LLaVA.

Please follow the steps below to launch a local Gradio demo. A diagram of the local serving code is below [1].

%%{init: {"theme": "base"}}%%

flowchart BT

%% Declare Nodes

style gws fill:#f9f,stroke:#333,stroke-width:2px

style c fill:#bbf,stroke:#333,stroke-width:2px

style mw8b fill:#aff,stroke:#333,stroke-width:2px

style mw13b fill:#aff,stroke:#333,stroke-width:2px

%% style sglw13b fill:#ffa,stroke:#333,stroke-width:2px

%% style lsglw13b fill:#ffa,stroke:#333,stroke-width:2px

gws["Gradio (UI Server)"]

c["Controller (API Server):<br/>PORT: 10000"]

mw8b["Model Worker:<br/><b>Cambrian-1-8B</b><br/>PORT: 40000"]

mw13b["Model Worker:<br/><b>Cambrian-1-13B</b><br/>PORT: 40001"]

%% sglw13b["SGLang Backend:<br/><b>Cambrian-1-34B</b><br/>http://localhost:30000"]

%% lsglw13b["SGLang Worker:<br/><b>Cambrian-1-34B<b><br/>PORT: 40002"]

subgraph "Demo Architecture"

direction BT

c <--> gws

mw8b <--> c

mw13b <--> c

%% lsglw13b <--> c

%% sglw13b <--> lsglw13b

end

First, launch the controller:

python -m cambrian.serve.controller --host 0.0.0.0 --port 10000

Then launch the Gradio web server:

python -m cambrian.serve.gradio_web_server --controller http://localhost:10000 --model-list-mode reload

You just launched the Gradio web interface. Now, you can open the web interface with the URL printed on the screen. You may notice that there is no model in the model list. Do not worry, as we have not launched any model worker yet. It will be automatically updated when you launch a model worker.

Coming soon.

This is the actual worker that performs the inference on the GPU. Each worker is responsible for a single model specified in --model-path.

python -m cambrian.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path nyu-visionx/cambrian-8b

Wait until the process finishes loading the model and you see "Uvicorn running on ...". Now, refresh your Gradio web UI, and you will see the model you just launched in the model list.

You can launch as many workers as you want, and compare between different model checkpoints in the same Gradio interface. Please keep the --controller the same, and modify the --port and --worker to a different port number for each worker.

python -m cambrian.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port <different from 40000, say 40001> --worker http://localhost:<change accordingly, i.e. 40001> --model-path <ckpt2>

If you are using an Apple device with an M1 or M2 chip, you can specify the mps device by using the --device flag: --device mps.
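When launching several workers, the rule above (same --controller, a distinct --port and matching --worker URL per worker) can be scripted. This sketch only builds the command strings from the flags shown above; the helper names are hypothetical, and the second checkpoint path is a placeholder rather than a real model.

```python
import shlex


def worker_command(model_path, port, controller="http://localhost:10000", host="0.0.0.0"):
    """Build one model-worker launch command using the flags shown above."""
    args = [
        "python", "-m", "cambrian.serve.model_worker",
        "--host", host,
        "--controller", controller,              # shared by every worker
        "--port", str(port),                     # unique per worker
        "--worker", f"http://localhost:{port}",  # must match --port
        "--model-path", model_path,
    ]
    return shlex.join(args)


def worker_commands(model_paths, first_port=40000):
    """Assign consecutive ports, starting at first_port, to the given checkpoints."""
    return [worker_command(path, first_port + i) for i, path in enumerate(model_paths)]


# "./checkpoints/ckpt2" is a placeholder path for a second checkpoint.
commands = worker_commands(["nyu-visionx/cambrian-8b", "./checkpoints/ckpt2"])
```

Each resulting string can be pasted into its own terminal; the first one reproduces the single-worker command shown earlier.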

If your GPU has 24GB of VRAM or less (e.g., RTX 3090, RTX 4090), you may try running it with multiple GPUs. Our latest code base will automatically try to use multiple GPUs if you have more than one GPU. You can specify which GPUs to use with CUDA_VISIBLE_DEVICES. Below is an example of running with the first two GPUs.

CUDA_VISIBLE_DEVICES=0,1 python -m cambrian.serve.model_worker --host 0.0.0.0 --controller http://localhost:10000 --port 40000 --worker http://localhost:40000 --model-path nyu-visionx/cambrian-8b

CLI inference: TODO

If you find Cambrian useful for your research and applications, please cite using this BibTeX:

@misc{tong2024cambrian1,

title={Cambrian-1: A Fully Open, Vision-Centric Exploration of Multimodal LLMs},

author={Shengbang Tong and Ellis Brown and Penghao Wu and Sanghyun Woo and Manoj Middepogu and Sai Charitha Akula and Jihan Yang and Shusheng Yang and Adithya Iyer and Xichen Pan and Austin Wang and Rob Fergus and Yann LeCun and Saining Xie},

year={2024},

eprint={2406.16860},

}

- LLaVA: We started from the codebase of the amazing LLaVA.

- Vicuna: We thank Vicuna for the initial codebase in LLM and the open-source LLM checkpoints.

- LLaMA: We thank LLaMA for its continuing contributions to the open-source community and for providing the LLaMA-3 checkpoints.

- Yi: We thank Yi for open-sourcing a very powerful 34B model.

- Eyes Wide Shut? Exploring the Visual Shortcomings of Multimodal LLMs

- V*: Guided Visual Search as a Core Mechanism in Multimodal LLMs

- V-IRL: Grounding Virtual Intelligence in Real Life

Usage and License Notices: This project utilizes certain datasets and checkpoints that are subject to their respective original licenses. Users must comply with all terms and conditions of these original licenses, including but not limited to the OpenAI Terms of Use for the dataset and the specific licenses for base language models for checkpoints trained using the dataset (e.g. Llama community license for LLaMA-3, and Vicuna-1.5). This project does not impose any additional constraints beyond those stipulated in the original licenses. Furthermore, users are reminded to ensure that their use of the dataset and checkpoints is in compliance with all applicable laws and regulations.

[1] Copied from LLaVA's diagram.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for cambrian

Similar Open Source Tools

cambrian

Cambrian-1 is a fully open project focused on exploring multimodal Large Language Models (LLMs) with a vision-centric approach. It offers competitive performance across various benchmarks with models at different parameter levels. The project includes training configurations, model weights, instruction tuning data, and evaluation details. Users can interact with Cambrian-1 through a Gradio web interface for inference. The project is inspired by LLaVA and incorporates contributions from Vicuna, LLaMA, and Yi. Cambrian-1 is licensed under Apache 2.0 and utilizes datasets and checkpoints subject to their respective original licenses.

Cherry_LLM

Cherry Data Selection project introduces a self-guided methodology for LLMs to autonomously discern and select cherry samples from open-source datasets, minimizing manual curation and cost for instruction tuning. The project focuses on selecting impactful training samples ('cherry data') to enhance LLM instruction tuning by estimating instruction-following difficulty. The method involves phases like 'Learning from Brief Experience', 'Evaluating Based on Experience', and 'Retraining from Self-Guided Experience' to improve LLM performance.

TableLLM

TableLLM is a large language model designed for efficient tabular data manipulation tasks in real office scenarios. It can generate code solutions or direct text answers for tasks like insert, delete, update, query, merge, and chart operations on tables embedded in spreadsheets or documents. The model has been fine-tuned based on CodeLlama-7B and 13B, offering two scales: TableLLM-7B and TableLLM-13B. Evaluation results show its performance on benchmarks like WikiSQL, Spider, and self-created table operation benchmark. Users can use TableLLM for code and text generation tasks on tabular data.

DB-GPT-Hub

DB-GPT-Hub is an experimental project leveraging Large Language Models (LLMs) for Text-to-SQL parsing. It includes stages like data collection, preprocessing, model selection, construction, and fine-tuning of model weights. The project aims to enhance Text-to-SQL capabilities, reduce model training costs, and enable developers to contribute to improving Text-to-SQL accuracy. The ultimate goal is to achieve automated question-answering based on databases, allowing users to execute complex database queries using natural language descriptions. The project has successfully integrated multiple large models and established a comprehensive workflow for data processing, SFT model training, prediction output, and evaluation.

CodeGeeX4

CodeGeeX4-ALL-9B is an open-source multilingual code generation model based on GLM-4-9B, offering enhanced code generation capabilities. It supports functions like code completion, code interpreter, web search, function call, and repository-level code Q&A. The model has competitive performance on benchmarks like BigCodeBench and NaturalCodeBench, outperforming larger models in terms of speed and performance.

Qwen

Qwen is a series of large language models developed by Alibaba DAMO Academy. It outperforms the baseline models of similar model sizes on a series of benchmark datasets, e.g., MMLU, C-Eval, GSM8K, MATH, HumanEval, MBPP, BBH, etc., which evaluate the models’ capabilities on natural language understanding, mathematic problem solving, coding, etc. Qwen models outperform the baseline models of similar model sizes on a series of benchmark datasets, e.g., MMLU, C-Eval, GSM8K, MATH, HumanEval, MBPP, BBH, etc., which evaluate the models’ capabilities on natural language understanding, mathematic problem solving, coding, etc. Qwen-72B achieves better performance than LLaMA2-70B on all tasks and outperforms GPT-3.5 on 7 out of 10 tasks.

AQLM

AQLM is the official PyTorch implementation for Extreme Compression of Large Language Models via Additive Quantization. It includes prequantized AQLM models without PV-Tuning and PV-Tuned models for LLaMA, Mistral, and Mixtral families. The repository provides inference examples, model details, and quantization setups. Users can run prequantized models using Google Colab examples, work with different model families, and install the necessary inference library. The repository also offers detailed instructions for quantization, fine-tuning, and model evaluation. AQLM quantization involves calibrating models for compression, and users can improve model accuracy through finetuning. Additionally, the repository includes information on preparing models for inference and contributing guidelines.

PostTrainBench

PostTrainBench is a benchmark designed to measure the ability of command-line interface (CLI) agents to post-train pre-trained large language models (LLMs). The agents are tasked with improving the performance of a base LLM on a given benchmark using an evaluation script and 10 hours on an H100 GPU. The benchmark scores are computed after post-training, and the setup evaluates an agent's capability to conduct AI research and development. The repository provides a platform for collaborative contributions to expand tasks and agent scaffolds, with the potential for co-authorship on research papers.

sqlcoder

Defog's SQLCoder is a family of state-of-the-art large language models (LLMs) designed for converting natural language questions into SQL queries. It outperforms popular open-source models like gpt-4 and gpt-4-turbo on SQL generation tasks. SQLCoder has been trained on more than 20,000 human-curated questions based on 10 different schemas, and the model weights are licensed under CC BY-SA 4.0. Users can interact with SQLCoder through the 'transformers' library and run queries using the 'sqlcoder launch' command in the terminal. The tool has been tested on NVIDIA GPUs with more than 16GB VRAM and Apple Silicon devices with some limitations. SQLCoder offers a demo on their website and supports quantized versions of the model for consumer GPUs with sufficient memory.

AI-Toolbox

AI-Toolbox is a C++ library aimed at representing and solving common AI problems, with a focus on MDPs, POMDPs, and related algorithms. It provides an easy-to-use interface that is extensible to many problems while maintaining readable code. The toolbox includes tutorials for beginners in reinforcement learning and offers Python bindings for seamless integration. It features utilities for combinatorics, polytopes, linear programming, sampling, distributions, statistics, belief updating, data structures, logging, seeding, and more. Additionally, it supports bandit/normal games, single agent MDP/stochastic games, single agent POMDP, and factored/joint multi-agent scenarios.

PURE

PURE (Process-sUpervised Reinforcement lEarning) is a framework that trains a Process Reward Model (PRM) on a dataset and fine-tunes a language model to achieve state-of-the-art mathematical reasoning capabilities. It uses a novel credit assignment method to calculate return and supports multiple reward types. The final model outperforms existing methods with minimal RL data or compute resources, achieving high accuracy on various benchmarks. The tool addresses reward hacking issues and aims to enhance long-range decision-making and reasoning tasks using large language models.

AgentGym

AgentGym is a framework designed to help the AI community evaluate and develop generally-capable Large Language Model-based agents. It features diverse interactive environments and tasks with real-time feedback and concurrency. The platform supports 14 environments across various domains like web navigating, text games, house-holding tasks, digital games, and more. AgentGym includes a trajectory set (AgentTraj) and a benchmark suite (AgentEval) to facilitate agent exploration and evaluation. The framework allows for agent self-evolution beyond existing data, showcasing comparable results to state-of-the-art models.

superduperdb

SuperDuperDB is a Python framework for integrating AI models, APIs, and vector search engines directly with your existing databases, including hosting of your own models, streaming inference and scalable model training/fine-tuning. Build, deploy and manage any AI application without the need for complex pipelines, infrastructure as well as specialized vector databases, and moving our data there, by integrating AI at your data's source: - Generative AI, LLMs, RAG, vector search - Standard machine learning use-cases (classification, segmentation, regression, forecasting recommendation etc.) - Custom AI use-cases involving specialized models - Even the most complex applications/workflows in which different models work together SuperDuperDB is **not** a database. Think `db = superduper(db)`: SuperDuperDB transforms your databases into an intelligent platform that allows you to leverage the full AI and Python ecosystem. A single development and deployment environment for all your AI applications in one place, fully scalable and easy to manage.

pytorch-grad-cam

This repository provides advanced AI explainability for PyTorch, offering state-of-the-art methods for Explainable AI in computer vision. It includes a comprehensive collection of Pixel Attribution methods for various tasks like Classification, Object Detection, Semantic Segmentation, and more. The package supports high performance with full batch image support and includes metrics for evaluating and tuning explanations. Users can visualize and interpret model predictions, making it suitable for both production and model development scenarios.

LongLoRA

LongLoRA is a tool for efficient fine-tuning of long-context large language models. It includes LongAlpaca data with long QA data collected and short QA sampled, models from 7B to 70B with context length from 8k to 100k, and support for GPTNeoX models. The tool supports supervised fine-tuning, context extension, and improved LoRA fine-tuning. It provides pre-trained weights, fine-tuning instructions, evaluation methods, local and online demos, streaming inference, and data generation via Pdf2text. LongLoRA is licensed under Apache License 2.0, while data and weights are under CC-BY-NC 4.0 License for research use only.

floneum

Floneum is a graph editor that makes it easy to develop your own AI workflows. It uses large language models (LLMs) to run AI models locally, without any external dependencies or even a GPU. This makes it easy to use LLMs with your own data, without worrying about privacy. Floneum also has a plugin system that allows you to improve the performance of LLMs and make them work better for your specific use case. Plugins can be used in any language that supports web assembly, and they can control the output of LLMs with a process similar to JSONformer or guidance.

For similar tasks

lighteval

LightEval is a lightweight LLM evaluation suite that Hugging Face has been using internally with the recently released LLM data processing library datatrove and LLM training library nanotron. We're releasing it with the community in the spirit of building in the open. Note that it is still very much early so don't expect 100% stability ^^' In case of problems or question, feel free to open an issue!

Firefly

Firefly is an open-source large model training project that supports pre-training, fine-tuning, and DPO of mainstream large models. It includes models like Llama3, Gemma, Qwen1.5, MiniCPM, Llama, InternLM, Baichuan, ChatGLM, Yi, Deepseek, Qwen, Orion, Ziya, Xverse, Mistral, Mixtral-8x7B, Zephyr, Vicuna, Bloom, etc. The project supports full-parameter training, LoRA, QLoRA efficient training, and various tasks such as pre-training, SFT, and DPO. Suitable for users with limited training resources, QLoRA is recommended for fine-tuning instructions. The project has achieved good results on the Open LLM Leaderboard with QLoRA training process validation. The latest version has significant updates and adaptations for different chat model templates.

Awesome-Text2SQL

Awesome Text2SQL is a curated repository containing tutorials and resources for Large Language Models, Text2SQL, Text2DSL, Text2API, Text2Vis, and more. It provides guidelines on converting natural language questions into structured SQL queries, with a focus on NL2SQL. The repository includes information on various models, datasets, evaluation metrics, fine-tuning methods, libraries, and practice projects related to Text2SQL. It serves as a comprehensive resource for individuals interested in working with Text2SQL and related technologies.

create-million-parameter-llm-from-scratch

The 'create-million-parameter-llm-from-scratch' repository provides a detailed guide on creating a Large Language Model (LLM) with 2.3 million parameters from scratch. The blog replicates the LLaMA approach, incorporating concepts like RMSNorm for pre-normalization, SwiGLU activation function, and Rotary Embeddings. The model is trained on a basic dataset to demonstrate the ease of creating a million-parameter LLM without the need for a high-end GPU.

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features such as a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or using a prebuilt Docker image. Users can test the server using provided scripts and evaluate models with Solvable Pass Rate and Solvable Win Rate metrics. The tool also includes model experiments results comparing different models' performance.

BetaML.jl

The Beta Machine Learning Toolkit is a package containing various algorithms and utilities for implementing machine learning workflows in multiple languages, including Julia, Python, and R. It offers a range of supervised and unsupervised models, data transformers, and assessment tools. The models are implemented entirely in Julia and are not wrappers for third-party models. Users can easily contribute new models or request implementations. The focus is on user-friendliness rather than computational efficiency, making it suitable for educational and research purposes.

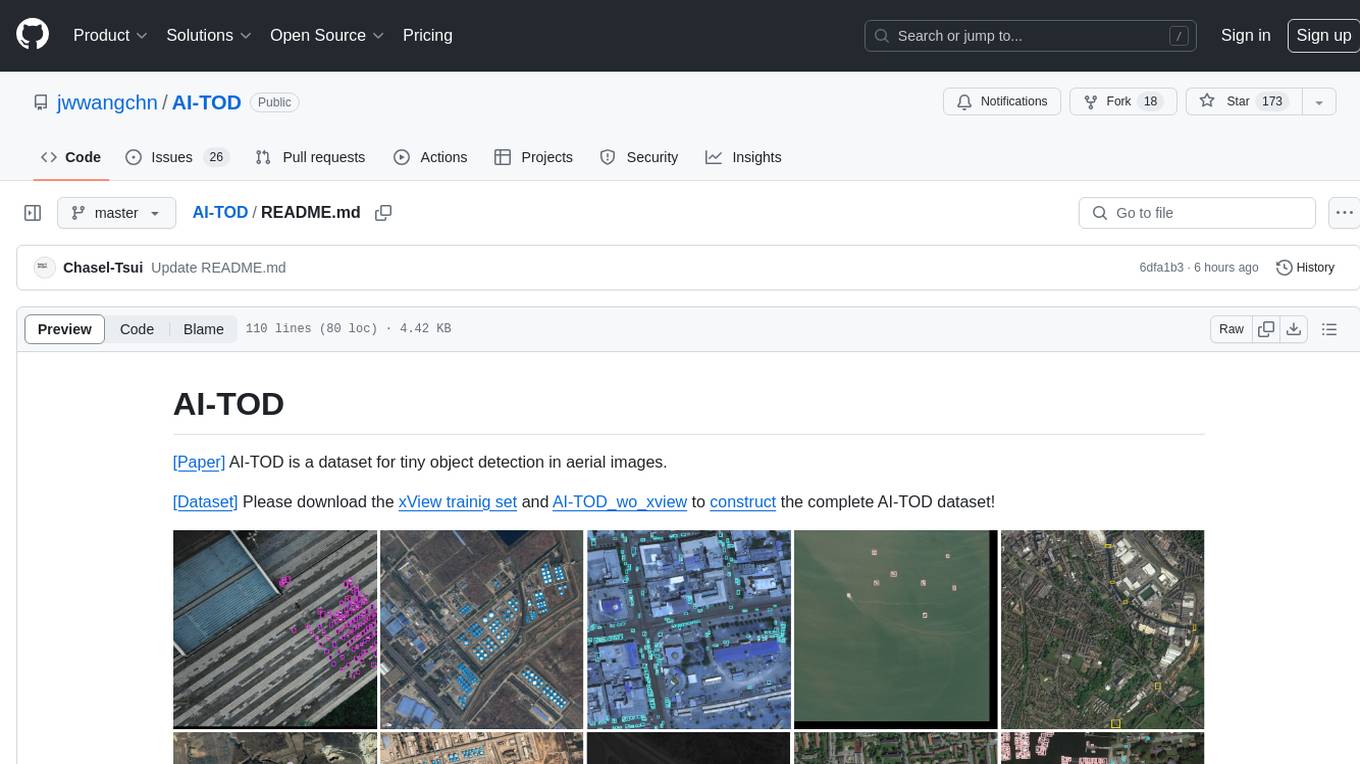

AI-TOD

AI-TOD is a dataset for tiny object detection in aerial images, containing 700,621 object instances across 28,036 images. Objects in AI-TOD are smaller with a mean size of 12.8 pixels compared to other aerial image datasets. To use AI-TOD, download xView training set and AI-TOD_wo_xview, then generate the complete dataset using the provided synthesis tool. The dataset is publicly available for academic and research purposes under CC BY-NC-SA 4.0 license.

UMOE-Scaling-Unified-Multimodal-LLMs

Uni-MoE is a MoE-based unified multimodal model that can handle diverse modalities including audio, speech, image, text, and video. The project focuses on scaling Unified Multimodal LLMs with a Mixture of Experts framework. It supports training across multiple nodes and GPUs, as well as parallel processing at both the expert and modality levels. Training proceeds in three stages: building connectors for multimodal understanding, developing modality-specific experts, and incorporating multiple trained experts into LLMs using the LoRA technique on mixed multimodal data. The repository provides instructions for installation, weights organization, inference, training, and evaluation on various datasets.
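For readers unfamiliar with Mixture of Experts, the sketch below shows generic top-k routing: a gate scores every expert, only the k best are run, and their outputs are combined with softmax weights. It is a textbook illustration under stated assumptions, not Uni-MoE's routing code; the function names, the scalar toy experts, and the choice of k=2 are all hypothetical.

```python
import math

def top_k_route(logits, k=2):
    """Return (expert_index, weight) pairs for the k highest gate logits.
    Weights are a softmax over the selected logits only."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    m = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - m) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]

def moe_output(x, experts, logits, k=2):
    """Weighted sum of the selected experts' outputs for input x."""
    return sum(w * experts[i](x) for i, w in top_k_route(logits, k))

# Toy scalar experts and gate logits, chosen only for illustration.
experts = [lambda x: x + 1.0, lambda x: 2.0 * x, lambda x: -x, lambda x: x * x]
gate_logits = [0.1, 2.0, -1.0, 2.0]
routes = top_k_route(gate_logits, k=2)
y = moe_output(3.0, experts, gate_logits, k=2)
```

Only the selected experts are evaluated, which is why MoE models can grow their parameter count without a proportional increase in per-token compute.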

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks so that operators can focus on more complicated and time-consuming work, and it can also identify security harms such as misuse (e.g., malware generation, jailbreaking) and privacy harms (e.g., identity theft). The goal is to give researchers a baseline of how well their model and entire inference pipeline perform against different harm categories, and to let them compare that baseline to future iterations of the model. This provides empirical data on how well the model is doing today and makes it possible to detect any degradation in performance in later versions.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It is self-contained, with no need for a DBMS or cloud service; exposes an OpenAPI interface that is easy to integrate with existing infrastructure (e.g., a Cloud IDE); and supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.