StableToolBench

A new tool learning benchmark aiming at well-balanced stability and reality, based on ToolBench.

Stars: 59

StableToolBench is a new benchmark developed to address the instability of tool learning benchmarks. It aims to balance stability and reality by introducing a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or by using a prebuilt Docker image. Users can test the server with the provided scripts and evaluate models with the Solvable Pass Rate and Solvable Win Rate metrics. The repository also includes experiment results comparing the performance of different models.

README:

Welcome to StableToolBench. To address the instability of tool learning benchmarks, we developed this new benchmark, based on ToolBench (Qin et al., 2023), which aims to balance stability and reality.

Based on the large scale of ToolBench, we introduce the following features to ensure the stability and reality of the benchmark:

- Virtual API System, which comprises a caching system and API simulators. The caching system stores API call responses to ensure consistency, while the API simulators, powered by LLMs, are used for unavailable APIs. Note that we keep the large-scale diverse APIs environment from ToolBench.

- A New Set of Solvable Queries. Query solvability is hard to determine on the fly, causing significant randomness and instability. In StableToolBench, we use state-of-the-art LLMs to determine task solvability to filter queries beforehand. We maintain the same query and answer format as ToolBench for seamless transition from it.

- Stable Evaluation System: Implements a two-phase evaluation process using GPT-4 as an automatic evaluator. It involves judging the solvability of tasks and employing metrics like Solvable Pass Rate (SoPR) and Solvable Win Rate (SoWR).
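The interplay between the caching system and the API simulators described above can be sketched as follows. This is a minimal illustration, not the project's server code: the class name `VirtualAPISketch`, the callables `call_real_api` and `simulate_api`, and the lookup order (cache, then real API, then simulator) are all assumptions made for the example.

```python
import json
from typing import Callable, Dict, List, Optional


class VirtualAPISketch:
    """Hypothetical cache-first front end for API calls (illustrative only)."""

    def __init__(
        self,
        call_real_api: Callable[[str, dict], Optional[str]],
        simulate_api: Callable[[str, dict], str],
    ) -> None:
        self.cache: Dict[str, str] = {}
        self.call_real_api = call_real_api  # returns None when the API is unavailable
        self.simulate_api = simulate_api    # stand-in for an LLM-powered simulator

    @staticmethod
    def cache_key(api_name: str, tool_input: dict) -> str:
        # Sort keys so logically equal inputs map to the same cache entry.
        return api_name + "::" + json.dumps(tool_input, sort_keys=True)

    def call(self, api_name: str, tool_input: dict) -> str:
        key = self.cache_key(api_name, tool_input)
        if key in self.cache:
            return self.cache[key]  # a cached response keeps results consistent
        response = self.call_real_api(api_name, tool_input)
        if response is None:
            response = self.simulate_api(api_name, tool_input)
        self.cache[key] = response
        return response


# Demo with stub callables: the real API is always unavailable here.
simulator_calls: List[str] = []


def unavailable_api(api_name: str, tool_input: dict) -> Optional[str]:
    return None


def fake_simulator(api_name: str, tool_input: dict) -> str:
    simulator_calls.append(api_name)
    return json.dumps({"error": "", "response": "simulated"})


api = VirtualAPISketch(unavailable_api, fake_simulator)
first = api.call("url", {"url": "https://example.com"})
second = api.call("url", {"url": "https://example.com"})
print(first == second, len(simulator_calls))
```

In this sketch the second identical call is answered from the cache, so the simulator runs only once.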

Our Virtual API server features two components: the API simulation system, powered by GPT-4 Turbo, and the caching system. We provide two ways to use the virtual API system: building from source and using our prebuilt Docker image.

Before you run any code, please first set up the environment by running pip install -r requirements.txt.

To start the server, you need to provide a cache directory and an OpenAI key.

We provide a cache that can be downloaded from HuggingFace or Tsinghua Cloud. After downloading the cache, unzip it into the server folder and make sure the server folder contains the tool_response_cache and tools folders. The resulting server folder looks like:

├── /server/

│ ├── /tools/

│ │ └── ...

│ ├── /tool_response_cache/

│ │ └── ...

│ ├── config.yml

│ ├── main.py

│ ├── utils.py

You need to first specify your configurations in server/config.yml before running the server. Parameters needed are:

- api_key: The API key for OpenAI models.

- api_base: The API base for OpenAI models if you are using Azure.

- model: The OpenAI model to use. The default value is gpt-4-turbo-preview.

- temperature: The temperature for LLM simulation. The default value is 0.

- toolbench_url: The real ToolBench server URL. The default value is http://8.218.239.54:8080/rapidapi.

- tools_folder: The tools environment folder path. Defaults to ./tools.

- cache_folder: The cache folder path. Defaults to ./tool_response_cache.

- is_save: A flag indicating whether to save real and simulated responses into the cache. The new cache is saved at ./tool_response_new_cache.

- port: The server port to run on. Defaults to 8080.
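The parameters above can be pictured as a small typed structure with the documented defaults. The following is a sketch only: `ServerConfig` and `load_config` are hypothetical names, the input is assumed to be a dictionary already parsed from server/config.yml, and the default for `is_save` is a guess because the text does not state one.

```python
from dataclasses import dataclass, fields
from typing import Any, Dict


@dataclass
class ServerConfig:
    """Hypothetical mirror of the documented config.yml parameters."""

    api_key: str = ""
    api_base: str = ""
    model: str = "gpt-4-turbo-preview"
    temperature: float = 0.0
    toolbench_url: str = "http://8.218.239.54:8080/rapidapi"
    tools_folder: str = "./tools"
    cache_folder: str = "./tool_response_cache"
    is_save: bool = True  # assumption: no default is documented for this flag
    port: int = 8080


def load_config(raw: Dict[str, Any]) -> ServerConfig:
    """Build a ServerConfig from a parsed mapping, rejecting unknown keys."""
    known = {f.name for f in fields(ServerConfig)}
    unknown = set(raw) - known
    if unknown:
        raise ValueError(f"Unknown config keys: {sorted(unknown)}")
    return ServerConfig(**raw)


config = load_config({"api_key": "sk-placeholder", "port": 9000})
print(config.model, config.port)
```

Rejecting unknown keys is a design choice for the sketch: it surfaces typos such as `cache_dir` early instead of silently falling back to a default.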

Now you can run the server by running:

cd server

python main.py

The server will run at http://localhost:{port}/virtual.

To use the server, you will also need a ToolBench key. You can apply for one using this form.

We provide a Dockerfile for easy deployment and consistent server environment. This allows you to run the server on various platforms that support Docker.

Prerequisites:

- Docker installed: https://docs.docker.com/engine/install/

Building the Docker Image:

- Navigate to your project directory in the terminal.

- Build the Docker image using the following command:

docker build -t my-fastapi-server . # Replace 'my-fastapi-server' with your desired image name

docker run -p {port}:8080 my-fastapi-server # Replace 'my-fastapi-server' with your image name

You can also use our prebuilt Docker image from Docker Hub, hosted at https://hub.docker.com/repository/docker/zhichengg/stb-docker/general. Before running the container, you will need to install Docker and download the cache files as described in Building from Source. Then you can run the server using the following commands:

docker pull zhichengg/stb-docker:latest

docker run -p {port}:8080 -v {tool_response_cache_path}:/app/tool_response_cache -v {tools_path}:/app/tools -e OPENAI_API_KEY= -e OPENAI_API_BASE= zhichengg/stb-docker

Remember to fill in port, tool_response_cache_path, and tools_path with your own values. OPENAI_API_KEY is your OpenAI API key, and OPENAI_API_BASE is the API base if you are using Azure. The server will run at http://localhost:{port}/virtual.

You can test the server with the following script:

import requests

import json

import os

url = 'http://0.0.0.0:8080/virtual'

data = {

"category": "Media",

"tool_name": "newapi_for_media",

"api_name": "url",

"tool_input": {'url': 'https://api.socialmedia.com/friend/photos'},

"strip": "",

"toolbench_key": ""

}

headers = {

'accept': 'application/json',

'Content-Type': 'application/json',

}

# Make the POST request

response = requests.post(url, headers=headers, data=json.dumps(data))

print(response.text)

The original queries were curated without considering solvability, and judging solvability with ChatGPT on the fly causes significant instability. We therefore judge the solvability of the original queries by a majority vote of gpt-4-turbo, gemini-pro, and claude-2. The filtered queries are saved in solvable_queries.
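A majority vote over three judges can be sketched as below. The function names and the shape of the `judgments` dictionary are assumptions made for illustration, and the sample votes are invented. How each model is prompted, and how its answer is parsed into a boolean, is not covered here.

```python
from typing import Dict, List

# Judge names taken from the text; everything else in this sketch is hypothetical.
JUDGES = ("gpt-4-turbo", "gemini-pro", "claude-2")


def is_solvable(votes: Dict[str, bool]) -> bool:
    """Return True when strictly more than half of the judges vote 'solvable'."""
    yes_votes = sum(1 for judge in JUDGES if votes[judge])
    return 2 * yes_votes > len(JUDGES)


def filter_solvable(judgments: Dict[str, Dict[str, bool]]) -> List[str]:
    """Keep the IDs of queries that the majority of judges consider solvable."""
    return [query_id for query_id, votes in judgments.items() if is_solvable(votes)]


# Made-up votes for three made-up query IDs.
judgments = {
    "q1": {"gpt-4-turbo": True, "gemini-pro": True, "claude-2": False},
    "q2": {"gpt-4-turbo": False, "gemini-pro": True, "claude-2": False},
    "q3": {"gpt-4-turbo": True, "gemini-pro": True, "claude-2": True},
}
kept = filter_solvable(judgments)
print(kept)
```

With an odd number of judges and a binary vote, ties cannot occur, which is one reason three judges is a convenient choice.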

If you have not set up the environment, please first do so by running pip install -r requirements.txt.

We currently implement all models and algorithms supported by ToolBench. We show ChatGPT (gpt-3.5-turbo-16k) with CoT as an example here. The script is also shown in inference_chatgpt_pipeline_virtual.sh. An example of the results is shown in data_example/answer.

To use ChatGPT, run:

export TOOLBENCH_KEY=""

export OPENAI_KEY=""

export OPENAI_API_BASE=""

export PYTHONPATH=./

export GPT_MODEL="gpt-3.5-turbo-16k"

export SERVICE_URL="http://localhost:8080/virtual"

export OUTPUT_DIR="data/answer/virtual_chatgpt_cot"

group=G1_instruction

mkdir -p $OUTPUT_DIR; mkdir -p $OUTPUT_DIR/$group

python toolbench/inference/qa_pipeline_multithread.py \

--tool_root_dir toolenv/tools \

--backbone_model chatgpt_function \

--openai_key $OPENAI_KEY \

--max_observation_length 1024 \

--method CoT@1 \

--input_query_file solvable_queries/test_instruction/${group}.json \

--output_answer_file $OUTPUT_DIR/$group \

--toolbench_key $TOOLBENCH_KEY \

--num_thread 1

We follow the evaluation process of ToolBench. The difference is that we update the evaluation logic of the Pass Rate and Win Rate, resulting in the Solvable Pass Rate and Solvable Win Rate.

The first step is to prepare data. This step is the same as ToolEval in ToolBench.

The following paragraph is adapted from ToolBench.

To evaluate your model and method using ToolEval, you first need to prepare all the model predictions for the six test subsets. Create a directory named after your model and method, e.g. chatgpt_cot, then put each test set's predictions under that directory. The file structure of the directory should be:

├── /chatgpt_cot/

│ ├── /G1_instruction/

│ │ ├── /<query_id>_CoT@1.json

│ │ └── ...

│ ├── /G1_tool/

│ │ ├── /<query_id>_CoT@1.json

│ │ └── ...

│ ├── ...

│ ├── /G3_instruction/

│ │ ├── /<query_id>_CoT@1.json

│ │ └── ...
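Before converting, it can help to confirm that each test-subset directory actually contains prediction files. The helper below is a hypothetical convenience, not part of ToolEval. It assumes only that predictions are stored as .json files one level below the model directory, and the demo file names are made up.

```python
import tempfile
from pathlib import Path
from typing import Dict


def count_predictions(model_dir: Path) -> Dict[str, int]:
    """Map each test-subset subdirectory to the number of .json files in it."""
    return {
        sub.name: sum(1 for _ in sub.glob("*.json"))
        for sub in sorted(model_dir.iterdir())
        if sub.is_dir()
    }


# Demo on a throwaway directory tree.
with tempfile.TemporaryDirectory() as tmp:
    model_dir = Path(tmp) / "chatgpt_cot"
    for subset, n_files in [("G1_instruction", 2), ("G1_tool", 1), ("G3_instruction", 0)]:
        (model_dir / subset).mkdir(parents=True)
        for i in range(n_files):
            (model_dir / subset / f"example_{i}.json").write_text("{}")
    counts = count_predictions(model_dir)

print(counts)
```

A subset that reports zero files, as G3_instruction does in this demo, is a sign that inference for that subset did not write any answers.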

Then preprocess the predictions by running the following commands:

cd toolbench/tooleval

export RAW_ANSWER_PATH=../../data_example/answer

export CONVERTED_ANSWER_PATH=../../data_example/model_predictions_converted

export MODEL_NAME=virtual_chatgpt_cot

export test_set=G1_instruction

mkdir -p ${CONVERTED_ANSWER_PATH}/${MODEL_NAME}

answer_dir=${RAW_ANSWER_PATH}/${MODEL_NAME}/${test_set}

output_file=${CONVERTED_ANSWER_PATH}/${MODEL_NAME}/${test_set}.json

# Use --method DFS_woFilter_w2 instead of CoT@1 for DFS runs.
python convert_to_answer_format.py \
--answer_dir ${answer_dir} \
--method CoT@1 \
--output ${output_file}

Next, you can calculate the Solvable Pass Rate. Before running the process, you need to specify your evaluation OpenAI key in openai_key.json as follows:

[

{

"api_key": "your_openai_key",

"api_base": "your_organization"

},

...

]

Then calculate SoPR with:

cd toolbench/tooleval

export API_POOL_FILE=../../openai_key.json

export CONVERTED_ANSWER_PATH=../../data_example/model_predictions_converted

export SAVE_PATH=../../data_example/pass_rate_results

mkdir -p ${SAVE_PATH}

export CANDIDATE_MODEL=virtual_chatgpt_cot

export EVAL_MODEL=gpt-4-turbo-preview

mkdir -p ${SAVE_PATH}/${CANDIDATE_MODEL}

python eval_pass_rate.py \

--converted_answer_path ${CONVERTED_ANSWER_PATH} \

--save_path ${SAVE_PATH}/${CANDIDATE_MODEL} \

--reference_model ${CANDIDATE_MODEL} \

--test_ids ../../solvable_queries_example/test_query_ids \

--max_eval_threads 35 \

--evaluate_times 3 \

--test_set G1_instruction

Note that we use gpt-4-turbo-preview as the standard evaluation model, which provides much better stability than the gpt-3.5 series models.

The result files will be stored under the ${SAVE_PATH}.

Then you can calculate the SoWR. The example below takes ChatGPT-CoT as the reference model and ChatGPT-DFS as the candidate model. Note that you need to obtain the pass rate results of both models first.

cd toolbench/tooleval

export API_POOL_FILE=../../openai_key.json

export CONVERTED_ANSWER_PATH=../../data_example/model_predictions_converted

export SAVE_PATH=../../data_example/preference_results

export PASS_RATE_PATH=../../data_example/pass_rate_results

export REFERENCE_MODEL=virtual_chatgpt_cot

export CANDIDATE_MODEL=virtual_chatgpt_dfs

export EVAL_MODEL=gpt-4-turbo-preview

mkdir -p ${SAVE_PATH}

python eval_preference.py \

--converted_answer_path ${CONVERTED_ANSWER_PATH} \

--reference_model ${REFERENCE_MODEL} \

--output_model ${CANDIDATE_MODEL} \

--test_ids ../../solvable_queries_example/test_query_ids/ \

--save_path ${SAVE_PATH} \

--pass_rate_result_path ${PASS_RATE_PATH} \

--max_eval_threads 10 \

--use_pass_rate true \

--evaluate_times 3 \

--test_set G1_instruction

The result files will be stored under the ${SAVE_PATH}.

In our main experiments, ToolLLaMA (v2) demonstrates a compelling capability to handle both single-tool and complex multi-tool instructions, which is on par with ChatGPT. Below are the main results. The win rate of each model is computed against ChatGPT-ReACT, i.e. GPT-3.5-Turbo-0613 with CoT.

Solvable Pass Rate:

| Method | I1 Instruction | I1 Category | I1 Tool | I2 Category | I2 Instruction | I3 Instruction | Average |
|---|---|---|---|---|---|---|---|
| GPT-3.5-Turbo-0613 (CoT) | 55.9±1.0 | 50.8±0.8 | 55.9±1.0 | 44.1±0.8 | 36.2±0.4 | 51.4±1.5 | 49.1±1.0 |
| GPT-3.5-Turbo-0613 (DFS) | 66.4±1.5 | 64.3±1.0 | 67.2±2.4 | 67.7±0.8 | 61.5±1.0 | 81.4±1.5 | 68.1±1.4 |
| GPT-4-0613 (CoT) | 50.7±0.4 | 57.1±0.3 | 51.9±0.3 | 55.0±1.1 | 61.6±0.8 | 56.3±0.8 | 55.4±0.6 |
| GPT-4-0613 (DFS) | 65.5±1.1 | 62.0±1.7 | 72.1±1.6 | 70.8±1.3 | 73.1±1.4 | 74.9±1.5 | 69.7±1.4 |
| ToolLLaMA v2 (CoT) | 37.2±0.1 | 42.3±0.4 | 43.0±0.5 | 37.4±0.4 | 33.6±1.2 | 39.6±1.0 | 38.9±0.6 |
| ToolLLaMA v2 (DFS) | 59.8±1.5 | 59.5±1.4 | 65.7±1.1 | 56.5±0.3 | 47.6±0.4 | 62.8±1.9 | 58.7±1.1 |
| GPT-3.5-Turbo-1106 (CoT) | 51.3±0.6 | 48.8±0.3 | 59.9±0.8 | 50.8±0.7 | 43.2±0.8 | 58.5±0.8 | 52.1±0.7 |
| GPT-3.5-Turbo-1106 (DFS) | 67.8±0.9 | 67.2±0.3 | 72.9±0.7 | 63.2±1.0 | 70.9±0.4 | 77.6±0.8 | 69.9±0.7 |
| GPT-4-Turbo-Preview (CoT) | 63.1±1.0 | 64.5±0.5 | 55.3±0.3 | 63.0±0.8 | 57.3±0.8 | 61.7±0.8 | 60.8±0.7 |
| GPT-4-Turbo-Preview (DFS) | 70.8±1.0 | 71.1±0.7 | 70.4±1.2 | 70.4±1.3 | 71.7±0.4 | 84.7±1.7 | 73.2±1.1 |

In this experiment, we run all models once, evaluate them three times, and take the average results.
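The "mean±spread" entries in the table can be illustrated with a short helper. This sketch assumes the value after ± is a population standard deviation over the three evaluation runs, which the text does not state, and the scores in the demo are invented.

```python
from statistics import mean, pstdev
from typing import List


def summarize_runs(run_scores: List[float]) -> str:
    """Format repeated evaluation scores as 'mean±std' with one decimal place."""
    # Assumption: spread is the population standard deviation across runs.
    return f"{mean(run_scores):.1f}±{pstdev(run_scores):.1f}"


# Three made-up pass rates from evaluating the same model outputs three times.
summary = summarize_runs([55.0, 56.0, 57.0])
print(summary)  # prints 56.0±0.8
```

Because the model is run only once, the spread here reflects evaluator variability, not variability in the model's own behaviour.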

Solvable Win Rate: (Reference model: ChatGPT-CoT)

| Method | I1 Instruction | I1 Category | I1 Tool | I2 Category | I2 Instruction | I3 Instruction | Average |
|---|---|---|---|---|---|---|---|
| GPT-3.5-Turbo-0613 (DFS) | 57.7 | 60.8 | 61.4 | 66.1 | 63.2 | 70.5 | 63.3 |
| GPT-4-0613 (CoT) | 50.3 | 54.2 | 50.6 | 50.0 | 64.2 | 55.7 | 54.2 |
| GPT-4-0613 (DFS) | 57.1 | 60.1 | 57.0 | 64.5 | 74.5 | 72.1 | 64.2 |
| ToolLLaMA v2 (CoT) | 35.0 | 30.7 | 37.3 | 31.5 | 36.8 | 23.0 | 32.4 |
| ToolLLaMA v2 (DFS) | 43.6 | 45.1 | 38.6 | 42.7 | 53.8 | 45.9 | 44.9 |
| GPT-3.5-Turbo-1106 (CoT) | 46.6 | 45.1 | 48.1 | 44.4 | 37.7 | 52.5 | 45.7 |
| GPT-3.5-Turbo-1106 (DFS) | 56.4 | 54.2 | 51.9 | 54.0 | 62.3 | 72.1 | 58.5 |
| GPT-4-Turbo-Preview (CoT) | 68.7 | 71.9 | 58.2 | 71.0 | 76.4 | 73.8 | 70.0 |
| GPT-4-Turbo-Preview (DFS) | 66.9 | 73.9 | 68.4 | 72.6 | 78.3 | 77.0 | 72.9 |

We run all models once against GPT-3.5-Turbo-0613 + CoT and evaluate them three times. We follow the ToolBench implementation to take the most frequent result for each query during evaluation.
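Taking the most frequent result per query and then aggregating into a win rate can be sketched as follows. The labels "win" and "lose", the function names, and the data layout are hypothetical, and the sketch does not reproduce how ToolBench handles ties or queries excluded by pass-rate checks.

```python
from collections import Counter
from typing import Dict, List


def most_frequent(labels: List[str]) -> str:
    """Return the label that occurs most often among repeated evaluations."""
    return Counter(labels).most_common(1)[0][0]


def win_rate(per_query_labels: Dict[str, List[str]]) -> float:
    """Percentage of queries whose most frequent evaluation label is 'win'."""
    final_labels = [most_frequent(labels) for labels in per_query_labels.values()]
    wins = sum(1 for label in final_labels if label == "win")
    return 100.0 * wins / len(final_labels)


# Made-up results: each query is evaluated three times against the reference model.
evaluations = {
    "q1": ["win", "win", "lose"],
    "q2": ["lose", "lose", "win"],
    "q3": ["win", "win", "win"],
    "q4": ["lose", "win", "lose"],
}
rate = win_rate(evaluations)
print(rate)  # prints 50.0
```

With three evaluations and two possible labels, one label always has a strict majority, so the per-query result is unambiguous in this setup.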

We thank Jingwen Wu and Yao Li for their contributions to experiments and result presentation. We also appreciate Yile Wang and Jitao Xu for their valuable suggestions during discussions.

@misc{guo2024stabletoolbench,

title={StableToolBench: Towards Stable Large-Scale Benchmarking on Tool Learning of Large Language Models},

author={Zhicheng Guo and Sijie Cheng and Hao Wang and Shihao Liang and Yujia Qin and Peng Li and Zhiyuan Liu and Maosong Sun and Yang Liu},

year={2024},

eprint={2403.07714},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for StableToolBench

Similar Open Source Tools

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features such as a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or using a prebuilt Docker image. Users can test the server using provided scripts and evaluate models with Solvable Pass Rate and Solvable Win Rate metrics. The tool also includes model experiments results comparing different models' performance.

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features like Virtual API System, Solvable Queries, and Stable Evaluation System. The benchmark ensures consistency through a caching system and API simulators, filters queries based on solvability using LLMs, and evaluates model performance using GPT-4 with metrics like Solvable Pass Rate and Solvable Win Rate.

optillm

optillm is an OpenAI API compatible optimizing inference proxy implementing state-of-the-art techniques to enhance accuracy and performance of LLMs, focusing on reasoning over coding, logical, and mathematical queries. By leveraging additional compute at inference time, it surpasses frontier models across diverse tasks.

MooER

MooER (摩耳) is an LLM-based speech recognition and translation model developed by Moore Threads. It allows users to transcribe speech into text (ASR) and translate speech into other languages (AST) in an end-to-end manner. The model was trained using 5K hours of data and is now also available with an 80K hours version. MooER is the first LLM-based speech model trained and inferred using domestic GPUs. The repository includes pretrained models, inference code, and a Gradio demo for a better user experience.

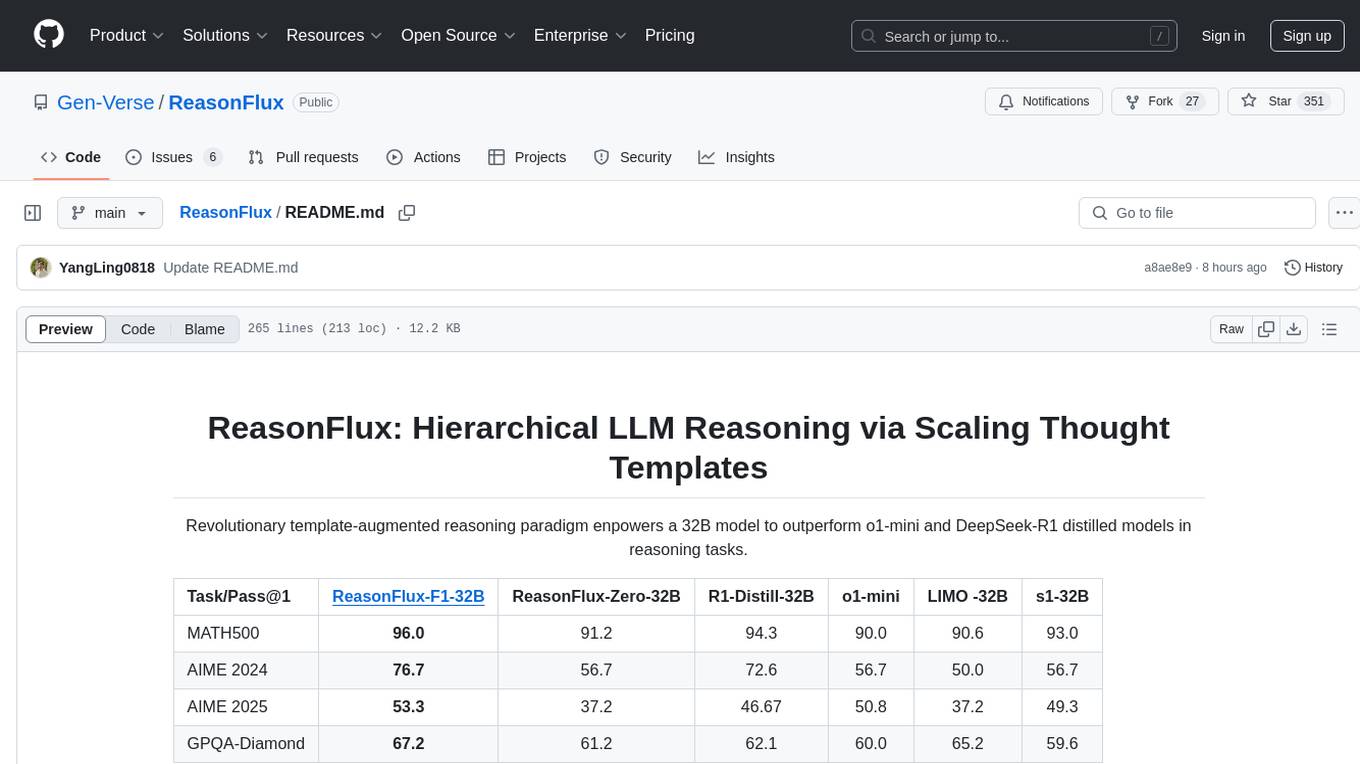

ReasonFlux

ReasonFlux is a revolutionary template-augmented reasoning paradigm that empowers a 32B model to outperform other models in reasoning tasks. The repository provides official resources for the paper 'ReasonFlux: Hierarchical LLM Reasoning via Scaling Thought Templates', including the latest released model ReasonFlux-F1-32B. It includes updates, dataset links, model zoo, getting started guide, training instructions, evaluation details, inference examples, performance comparisons, reasoning examples, preliminary work references, and citation information.

EasyEdit

EasyEdit is a Python package for edit Large Language Models (LLM) like `GPT-J`, `Llama`, `GPT-NEO`, `GPT2`, `T5`(support models from **1B** to **65B**), the objective of which is to alter the behavior of LLMs efficiently within a specific domain without negatively impacting performance across other inputs. It is designed to be easy to use and easy to extend.

KwaiAgents

KwaiAgents is a series of Agent-related works open-sourced by the [KwaiKEG](https://github.com/KwaiKEG) from [Kuaishou Technology](https://www.kuaishou.com/en). The open-sourced content includes: 1. **KAgentSys-Lite**: a lite version of the KAgentSys in the paper. While retaining some of the original system's functionality, KAgentSys-Lite has certain differences and limitations when compared to its full-featured counterpart, such as: (1) a more limited set of tools; (2) a lack of memory mechanisms; (3) slightly reduced performance capabilities; and (4) a different codebase, as it evolves from open-source projects like BabyAGI and Auto-GPT. Despite these modifications, KAgentSys-Lite still delivers comparable performance among numerous open-source Agent systems available. 2. **KAgentLMs**: a series of large language models with agent capabilities such as planning, reflection, and tool-use, acquired through the Meta-agent tuning proposed in the paper. 3. **KAgentInstruct**: over 200k Agent-related instructions finetuning data (partially human-edited) proposed in the paper. 4. **KAgentBench**: over 3,000 human-edited, automated evaluation data for testing Agent capabilities, with evaluation dimensions including planning, tool-use, reflection, concluding, and profiling.

llm-checker

LLM Checker is an AI-powered CLI tool that analyzes your hardware to recommend optimal LLM models. It features deterministic scoring across 35+ curated models with hardware-calibrated memory estimation. The tool helps users understand memory bandwidth, VRAM limits, and performance characteristics to choose the right LLM for their hardware. It provides actionable recommendations in seconds by scoring compatible models across four dimensions: Quality, Speed, Fit, and Context. LLM Checker is designed to work on any Node.js 16+ system, with optional SQLite search features for advanced functionality.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

roam-code

Roam is a tool that builds a semantic graph of your codebase and allows AI agents to query it with one shell command. It pre-indexes your codebase into a semantic graph stored in a local SQLite DB, providing architecture-level graph queries offline, cross-language, and compact. Roam understands functions, modules, tests coverage, and overall architecture structure. It is best suited for agent-assisted coding, large codebases, architecture governance, safe refactoring, and multi-repo projects. Roam is not suitable for real-time type checking, dynamic/runtime analysis, small scripts, or pure text search. It offers speed, dependency-awareness, LLM-optimized output, fully local operation, and CI readiness.

qserve

QServe is a serving system designed for efficient and accurate Large Language Models (LLM) on GPUs with W4A8KV4 quantization. It achieves higher throughput compared to leading industry solutions, allowing users to achieve A100-level throughput on cheaper L40S GPUs. The system introduces the QoQ quantization algorithm with 4-bit weight, 8-bit activation, and 4-bit KV cache, addressing runtime overhead challenges. QServe improves serving throughput for various LLM models by implementing compute-aware weight reordering, register-level parallelism, and fused attention memory-bound techniques.

ai-coders-context

The @ai-coders/context repository provides the Ultimate MCP for AI Agent Orchestration, Context Engineering, and Spec-Driven Development. It simplifies context engineering for AI by offering a universal process called PREVC, which consists of Planning, Review, Execution, Validation, and Confirmation steps. The tool aims to address the problem of context fragmentation by introducing a single `.context/` directory that works universally across different tools. It enables users to create structured documentation, generate agent playbooks, manage workflows, provide on-demand expertise, and sync across various AI tools. The tool follows a structured, spec-driven development approach to improve AI output quality and ensure reproducible results across projects.

doc-scraper

A configurable, concurrent, and resumable web crawler written in Go, specifically designed to scrape technical documentation websites, extract core content, convert it cleanly to Markdown format suitable for ingestion by Large Language Models (LLMs), and save the results locally. The tool is built for LLM training and RAG systems, preserving documentation structure, offering production-ready features like resumable crawls and rate limiting, and using Go's concurrency model for efficient parallel processing. It automates the process of gathering and cleaning web-based documentation for use with Large Language Models, providing a dataset that is text-focused, structured, cleaned, and locally accessible.

gpt-load

GPT-Load is a high-performance, enterprise-grade AI API transparent proxy service designed for enterprises and developers needing to integrate multiple AI services. Built with Go, it features intelligent key management, load balancing, and comprehensive monitoring capabilities for high-concurrency production environments. The tool serves as a transparent proxy service, preserving native API formats of various AI service providers like OpenAI, Google Gemini, and Anthropic Claude. It supports dynamic configuration, distributed leader-follower deployment, and a Vue 3-based web management interface. GPT-Load is production-ready with features like dual authentication, graceful shutdown, and error recovery.

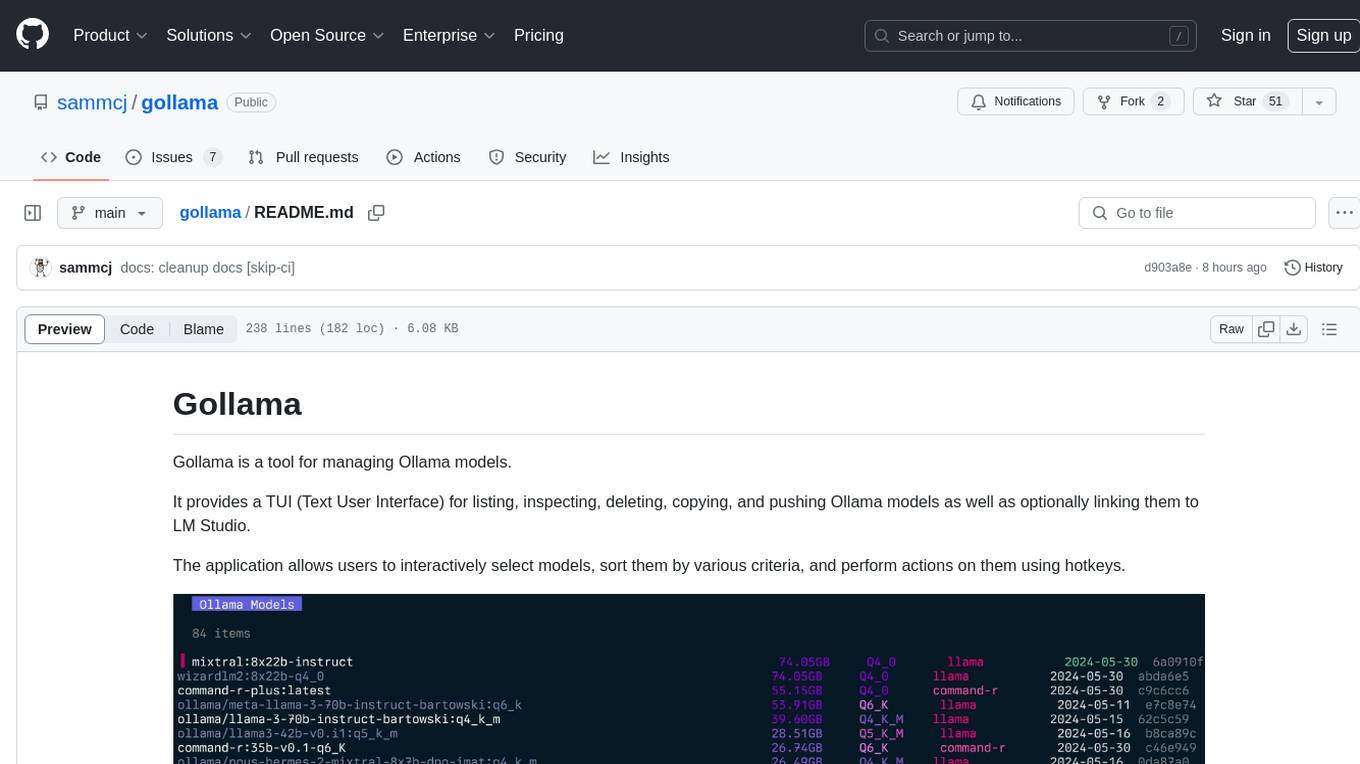

gollama

Gollama is a tool designed for managing Ollama models through a Text User Interface (TUI). Users can list, inspect, delete, copy, and push Ollama models, as well as link them to LM Studio. The application offers interactive model selection, sorting by various criteria, and actions using hotkeys. It provides features like sorting and filtering capabilities, displaying model metadata, model linking, copying, pushing, and more. Gollama aims to be user-friendly and useful for managing models, especially for cleaning up old models.

EVE

EVE is an official PyTorch implementation of Unveiling Encoder-Free Vision-Language Models. The project aims to explore the removal of vision encoders from Vision-Language Models (VLMs) and transfer LLMs to encoder-free VLMs efficiently. It also focuses on bridging the performance gap between encoder-free and encoder-based VLMs. EVE offers a superior capability with arbitrary image aspect ratio, data efficiency by utilizing publicly available data for pre-training, and training efficiency with a transparent and practical strategy for developing a pure decoder-only architecture across modalities.

For similar tasks

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features such as a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or using a prebuilt Docker image. Users can test the server using provided scripts and evaluate models with Solvable Pass Rate and Solvable Win Rate metrics. The tool also includes model experiments results comparing different models' performance.

lighteval

LightEval is a lightweight LLM evaluation suite that Hugging Face has been using internally with the recently released LLM data processing library datatrove and LLM training library nanotron. We're releasing it with the community in the spirit of building in the open. Note that it is still very much early so don't expect 100% stability ^^' In case of problems or question, feel free to open an issue!

Firefly

Firefly is an open-source large model training project that supports pre-training, fine-tuning, and DPO of mainstream large models. It includes models like Llama3, Gemma, Qwen1.5, MiniCPM, Llama, InternLM, Baichuan, ChatGLM, Yi, Deepseek, Qwen, Orion, Ziya, Xverse, Mistral, Mixtral-8x7B, Zephyr, Vicuna, Bloom, etc. The project supports full-parameter training, LoRA, QLoRA efficient training, and various tasks such as pre-training, SFT, and DPO. Suitable for users with limited training resources, QLoRA is recommended for fine-tuning instructions. The project has achieved good results on the Open LLM Leaderboard with QLoRA training process validation. The latest version has significant updates and adaptations for different chat model templates.

Awesome-Text2SQL

Awesome Text2SQL is a curated repository containing tutorials and resources for Large Language Models, Text2SQL, Text2DSL, Text2API, Text2Vis, and more. It provides guidelines on converting natural language questions into structured SQL queries, with a focus on NL2SQL. The repository includes information on various models, datasets, evaluation metrics, fine-tuning methods, libraries, and practice projects related to Text2SQL. It serves as a comprehensive resource for individuals interested in working with Text2SQL and related technologies.

create-million-parameter-llm-from-scratch

The 'create-million-parameter-llm-from-scratch' repository provides a detailed guide on creating a Large Language Model (LLM) with 2.3 million parameters from scratch. The blog replicates the LLaMA approach, incorporating concepts like RMSNorm for pre-normalization, SwiGLU activation function, and Rotary Embeddings. The model is trained on a basic dataset to demonstrate the ease of creating a million-parameter LLM without the need for a high-end GPU.

BetaML.jl

The Beta Machine Learning Toolkit is a package containing various algorithms and utilities for implementing machine learning workflows in multiple languages, including Julia, Python, and R. It offers a range of supervised and unsupervised models, data transformers, and assessment tools. The models are implemented entirely in Julia and are not wrappers for third-party models. Users can easily contribute new models or request implementations. The focus is on user-friendliness rather than computational efficiency, making it suitable for educational and research purposes.

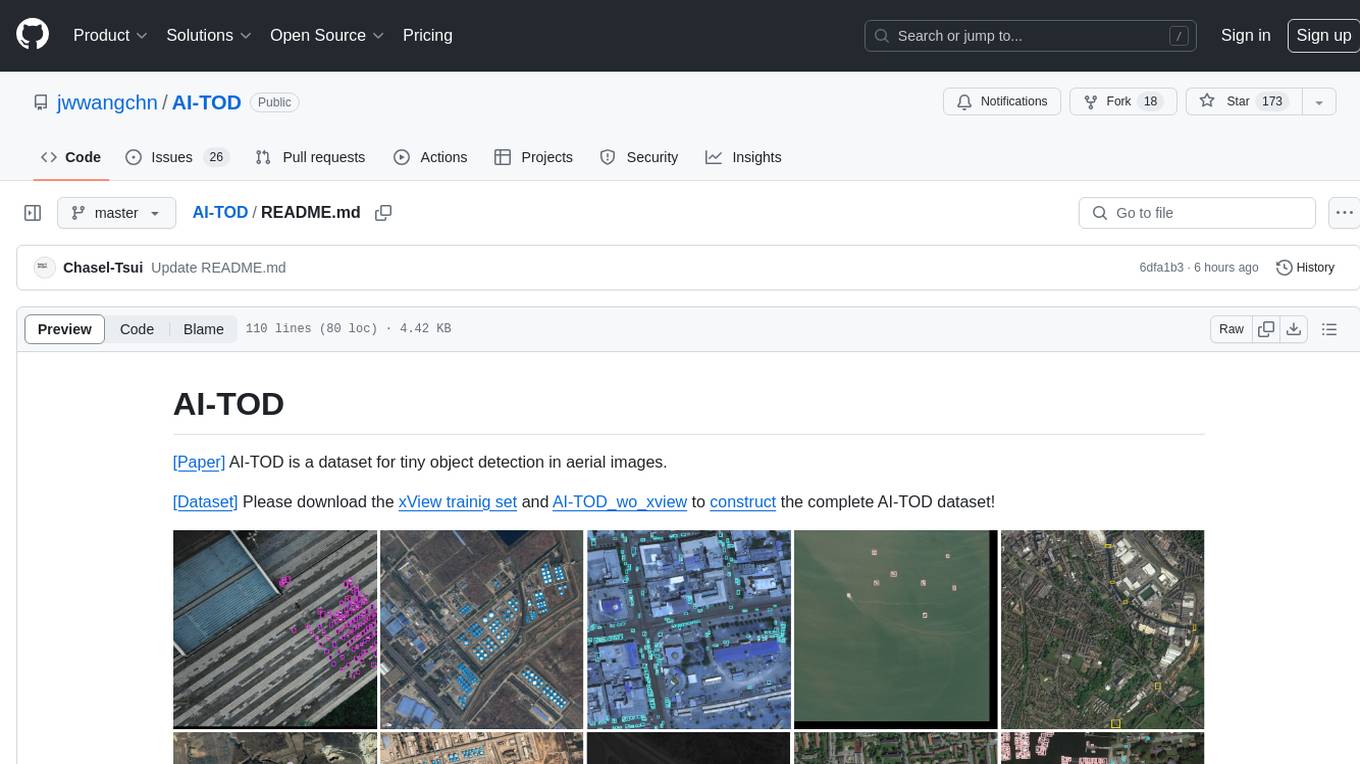

AI-TOD

AI-TOD is a dataset for tiny object detection in aerial images, containing 700,621 object instances across 28,036 images. Objects in AI-TOD are smaller with a mean size of 12.8 pixels compared to other aerial image datasets. To use AI-TOD, download xView training set and AI-TOD_wo_xview, then generate the complete dataset using the provided synthesis tool. The dataset is publicly available for academic and research purposes under CC BY-NC-SA 4.0 license.

UMOE-Scaling-Unified-Multimodal-LLMs

Uni-MoE is a MoE-based unified multimodal model that can handle diverse modalities including audio, speech, image, text, and video. The project focuses on scaling Unified Multimodal LLMs with a Mixture of Experts framework. It offers enhanced functionality for training across multiple nodes and GPUs, as well as parallel processing at both the expert and modality levels. The model architecture involves three training stages: building connectors for multimodal understanding, developing modality-specific experts, and incorporating multiple trained experts into LLMs using the LoRA technique on mixed multimodal data. The tool provides instructions for installation, weights organization, inference, training, and evaluation on various datasets.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.