RLinf

RLinf: Reinforcement Learning Infrastructure for Embodied and Agentic AI

Stars: 2418

RLinf is a flexible and scalable open-source infrastructure designed for post-training foundation models via reinforcement learning. It provides a robust backbone for next-generation training, supporting open-ended learning, continuous generalization, and limitless possibilities in intelligence development. The tool offers unique features like Macro-to-Micro Flow, flexible execution modes, auto-scheduling strategy, embodied agent support, and fast adaptation for mainstream VLA models. RLinf is fast with hybrid mode and automatic online scaling strategy, achieving significant throughput improvement and efficiency. It is also flexible and easy to use with multiple backend integrations, adaptive communication, and built-in support for popular RL methods. The roadmap includes system-level enhancements and application-level extensions to support various training scenarios and models. Users can get started with complete documentation, quickstart guides, key design principles, example gallery, advanced features, and guidelines for extending the framework. Contributions are welcome, and users are encouraged to cite the GitHub repository and acknowledge the broader open-source community.

README:

RLinf is a flexible and scalable open-source RL infrastructure designed for Embodied and Agentic AI. The 'inf' in RLinf stands for Infrastructure, highlighting its role as a robust backbone for next-generation training. It also stands for Infinite, symbolizing the system’s support for open-ended learning, continuous generalization, and limitless possibilities in intelligence development.

- [2026/02] 🔥 RLinf supports reinforcement learning with GSEnv for Real2Sim2Real. Doc: RL with GSEnv.

- [2026/01] 🔥 RLinf supports reinforcement learning fine-tuning for OpenSora World Model. Doc: RL on OpenSora World Model.

- [2026/01] 🔥 RLinf supports reinforcement learning fine-tuning for RoboTwin. Doc: RL on RoboTwin.

- [2026/01] 🔥 RLinf supports SAC training for flow matching policy. Doc: SAC-Flow, paper: SAC Flow: Sample-Efficient Reinforcement Learning of Flow-Based Policies via Velocity-Reparameterized Sequential Modeling.

- [2025/12] 🔥 RLinf supports agentic reinforcement learning on Search-R1. Doc: Search-R1.

- [2025/12] 🔥 RLinf v0.2-pre is open-sourced. We support real-world RL with Franka. Doc: RL on Franka in the RealWorld.

- [2025/12] 🔥 RLinf supports reinforcement learning fine-tuning for RoboCasa. Doc: RL on Robocasa.

- [2025/12] 🎉 RLinf official release of v0.1.

- [2025/11] 🔥 RLinf supports reinforcement learning fine-tuning for CALVIN. Doc: RL on CALVIN.

- [2025/11] 🔥 RLinf supports reinforcement learning fine-tuning for IsaacLab. Doc: RL on IsaacLab.

- [2025/11] 🔥 RLinf supports reinforcement learning fine-tuning for GR00T-N1.5. Doc: RL on GR00T-N1.5.

- [2025/11] 🔥 RLinf supports reinforcement learning fine-tuning for Metaworld. Doc: RL on Metaworld.

- [2025/11] 🔥 RLinf supports reinforcement learning fine-tuning for Behavior 1k. Doc: RL on Behavior 1k.

- [2025/11] Added LoRA support for π₀ and π₀.₅.

- [2025/10] 🔥 RLinf supports reinforcement learning fine-tuning for π₀ and π₀.₅! Doc: RL on π₀ and π₀.₅ Models. For more technical details, refer to the RL fine-tuning for π₀ and π₀.₅ technical report. Reports on πRL by Machine Heart and RoboTech have also been released.

- [2025/10] 🔥 RLinf now officially supports online reinforcement learning! Doc: coding_online_rl, Blog post: The first open-source agent online RL framework RLinf-Online.

- [2025/10] 🔥 The RLinf Algorithm Technical Report RLinf-VLA: A Unified and Efficient Framework for VLA+RL Training is released.

- [2025/09] 🔥 The Example Gallery is updated; users can find various off-the-shelf examples there.

- [2025/09] The paper RLinf: Flexible and Efficient Large-scale Reinforcement Learning via Macro-to-Micro Flow Transformation is released.

- [2025/09] The report on RLinf by Machine Heart is released.

- [2025/08] RLinf is open-sourced. The formal v0.1 will be released soon.

| Simulators | Real-world Robotics | Models | Algorithms |
|---|---|---|---|

We support RL training for improving reasoning ability, such as Math Reasoning, and RL training for improving coding ability, such as Online Coder. We believe that embodied AI will eventually integrate agentic abilities to complete complex tasks.

Beyond the features introduced above, RLinf flexibly supports diverse RL training workflows (PPO, GRPO, SAC, and others) while hiding the complexity of distributed programming. Users can scale RL training to a large number of GPU nodes without modifying code, meeting the growing computational demands of RL training.
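To illustrate one of the workflows named above, the sketch below shows the group-relative advantage computation commonly used in GRPO: the rewards of several rollouts sampled for the same prompt are normalized against that group's own mean and standard deviation. This is a generic, minimal sketch and not RLinf's implementation; the function name `group_relative_advantages`, the `eps` value, and the use of the population standard deviation are assumptions made for illustration.

```python
from statistics import fmean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each reward against the mean and std of its own group.

    Hypothetical helper: `eps` guards against division by zero when every
    rollout in the group receives the same reward.
    """
    mean = fmean(rewards)
    std = pstdev(rewards)  # population std; some implementations use sample std
    return [(r - mean) / (std + eps) for r in rewards]


# Four rollouts for the same prompt with binary success rewards.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

Successful rollouts receive positive advantages and failed ones negative advantages, and a group whose rewards are all equal yields zero advantages, so it contributes no policy gradient signal.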

This flexibility lets RLinf explore more efficient scheduling and execution strategies. The hybrid execution mode for embodied RL achieves up to 2.434× the throughput of existing frameworks.

Multiple Backend Integrations

- FSDP + HuggingFace/SGLang/vLLM: rapid adaptation to new models and algorithms, ideal for beginners and fast prototyping.

- Megatron + SGLang/vLLM: optimized for large-scale training, delivering maximum efficiency for expert users with demanding workloads.
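The backend pairings listed above can be captured in a small lookup table. The sketch below is only an illustration of that list: `SUPPORTED_ROLLOUT_BACKENDS` and `is_listed_pairing` are hypothetical names, not part of RLinf's API or configuration schema, and a pairing missing from the table only means it is not listed in this README.

```python
# Hypothetical lookup table; the pairings are copied from the list above.
SUPPORTED_ROLLOUT_BACKENDS = {
    "fsdp": {"huggingface", "sglang", "vllm"},
    "megatron": {"sglang", "vllm"},
}


def is_listed_pairing(training_backend: str, rollout_backend: str) -> bool:
    """Return True if the (training, rollout) pairing appears in the list above."""
    rollouts = SUPPORTED_ROLLOUT_BACKENDS.get(training_backend.lower(), set())
    return rollout_backend.lower() in rollouts


print(is_listed_pairing("FSDP", "HuggingFace"))
print(is_listed_pairing("Megatron", "HuggingFace"))
```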

Installation: Refer to our installation guide to install RLinf. We recommend using the provided Docker image (i.e., Installation Method 1), because the environment and dependencies of embodied RL are complex.

Run a simple example: After setting up the environment, users can run a simple embodied RL example with the ManiSkill3 simulator by following this document.

For more RLinf tutorials and application examples, check out our documentation and example gallery.

- RLinf supports both PPO and GRPO algorithms, enabling state-of-the-art training for Vision-Language-Action models.

- The framework provides seamless integration with mainstream embodied intelligence benchmarks, and achieves strong performance across diverse evaluation metrics.

- Training curves on ManiSkill “PutOnPlateInScene25Mani-v3” with OpenVLA and OpenVLA-OFT models, using PPO and GRPO algorithms. PPO consistently outperforms GRPO and exhibits greater stability.
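For readers comparing the two algorithms, the following is a minimal sketch of the standard PPO clipped surrogate objective for a single action. It is the textbook formulation, not code taken from RLinf; the function name `ppo_clipped_objective` and the default `clip_eps=0.2` are assumptions chosen for illustration.

```python
import math


def ppo_clipped_objective(logp_new, logp_old, advantage, clip_eps=0.2):
    """Per-sample PPO surrogate: min(r * A, clip(r, 1 - eps, 1 + eps) * A).

    `r` is the probability ratio between the new and old policies, computed
    from log-probabilities for numerical stability.
    """
    ratio = math.exp(logp_new - logp_old)
    clipped_ratio = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
    return min(ratio * advantage, clipped_ratio * advantage)


# The new policy makes a good action 1.5x more likely, but the objective
# only credits the change up to the clipping bound of 1.2x.
value = ppo_clipped_objective(math.log(1.5), 0.0, advantage=1.0)
print(value)
```

Clipping removes the incentive to move the policy far from the one that collected the data within a single update, which is one commonly cited reason for PPO's training stability.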

Evaluation results on ManiSkill. Values denote success rates.

| Model | In-Distribution | Out-of-Distribution (Vision) | Out-of-Distribution (Semantic) | Out-of-Distribution (Execution) | Out-of-Distribution (Avg.) |
|---|---|---|---|---|---|
| OpenVLA (Base) | 53.91% | 38.75% | 35.94% | 42.11% | 39.10% |
| | 93.75% | 80.47% | 75.00% | 81.77% | 79.15% |
| | 84.38% | 74.69% | 72.99% | 77.86% | 75.15% |
| | 96.09% | 82.03% | 78.35% | 85.42% | 81.93% |
| OpenVLA-OFT (Base) | 28.13% | 27.73% | 12.95% | 11.72% | 18.29% |
| | 94.14% | 84.69% | 45.54% | 44.66% | 60.64% |
| | 97.66% | 92.11% | 64.84% | 73.57% | 77.05% |

Evaluation results of the unified model on the five LIBERO task groups.

| Model | Spatial | Object | Goal | Long | 90 | Avg. |
|---|---|---|---|---|---|---|
| | 72.18% | 71.48% | 64.06% | 48.44% | 70.97% | 65.43% |
| | 99.40% | 99.80% | 98.79% | 93.95% | 98.59% | 98.11% |
| Δ Improvement | +27.22 | +28.32 | +34.73 | +45.51 | +27.62 | +32.68 |
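The Δ Improvement row can be reproduced as a difference in percentage points between the two model rows. The short check below assumes the first row is the model before RL and the second is the model after RL (the row labels are not shown here); the variable names are illustrative.

```python
# Success rates (%) copied from the two model rows of the LIBERO table above.
COLUMNS = ["Spatial", "Object", "Goal", "Long", "90", "Avg."]
baseline = [72.18, 71.48, 64.06, 48.44, 70.97, 65.43]  # assumed: before RL
improved = [99.40, 99.80, 98.79, 93.95, 98.59, 98.11]  # assumed: after RL

# Improvement is a difference in percentage points, not a relative change.
improvement = {
    name: round(after - before, 2)
    for name, before, after in zip(COLUMNS, baseline, improved)
}

for name, delta in improvement.items():
    print(f"{name}: +{delta:.2f}")
```

The largest gain is on the Long task group, which also has the lowest starting success rate.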

Evaluation results on the four LIBERO task groups.

| Model | Method | Spatial | Object | Goal | Long | Avg. | Δ Avg. |
|---|---|---|---|---|---|---|---|
| **Full Dataset SFT** | | | | | | | |
| Octo | | 78.9% | 85.7% | 84.6% | 51.1% | 75.1% | — |
| OpenVLA | | 84.7% | 88.4% | 79.2% | 53.7% | 76.5% | — |
| πfast | | 96.4% | 96.8% | 88.6% | 60.2% | 85.5% | — |
| OpenVLA-OFT | | 91.6% | 95.3% | 90.6% | 86.5% | 91.0% | — |
| π₀ | | 96.8% | 98.8% | 95.8% | 85.2% | 94.2% | — |
| π₀.₅ | | 98.8% | 98.2% | 98.0% | 92.4% | 96.9% | — |
| **Few-shot Dataset SFT + RL** | | | | | | | |
| π₀ | | 65.3% | 64.4% | 49.8% | 51.2% | 57.6% | — |
| | Flow-SDE | 98.4% | 99.4% | 96.2% | 90.2% | 96.1% | +38.5 |
| | Flow-Noise | 99.0% | 99.2% | 98.2% | 93.8% | 97.6% | +40.0 |
| **Few-shot Dataset SFT + RL** | | | | | | | |
| π₀.₅ | | 84.6% | 95.4% | 84.6% | 43.9% | 77.1% | — |
| | Flow-SDE | 99.6% | 100% | 98.8% | 93.0% | 97.9% | +20.8 |
| | Flow-Noise | 99.6% | 100% | 99.6% | 94.0% | 98.3% | +21.2 |

1.5B model results

| Model | AIME 24 | AIME 25 | GPQA-diamond | Average |
|---|---|---|---|---|
| | 28.33 | 24.90 | 27.45 | 26.89 |
| | 37.80 | 30.42 | 32.11 | 33.44 |
| | 40.41 | 30.93 | 27.54 | 32.96 |
| | 40.73 | 31.56 | 28.10 | 33.46 |
| AReaL-1.5B-retrain* | 44.42 | 34.27 | 33.81 | 37.50 |
| | 43.65 | 32.49 | 35.00 | 37.05 |
| | 48.44 | 35.63 | 38.46 | 40.84 |

* We retrain the model using the default settings for 600 steps.
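The Average column of the 1.5B table is consistent with an unweighted mean of the three benchmark scores. The check below recomputes it from the values in the table; the variable names are illustrative, and the equal weighting is inferred from the numbers rather than stated in the text.

```python
from statistics import fmean

# (AIME 24, AIME 25, GPQA-diamond, reported Average) for each row of the 1.5B table.
rows = [
    (28.33, 24.90, 27.45, 26.89),
    (37.80, 30.42, 32.11, 33.44),
    (40.41, 30.93, 27.54, 32.96),
    (40.73, 31.56, 28.10, 33.46),
    (44.42, 34.27, 33.81, 37.50),
    (43.65, 32.49, 35.00, 37.05),
    (48.44, 35.63, 38.46, 40.84),
]

# Unweighted mean of the three benchmarks, rounded like the table.
recomputed = [round(fmean(row[:3]), 2) for row in rows]

for row, mean in zip(rows, recomputed):
    print(f"reported={row[3]:.2f} recomputed={mean:.2f}")
```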

7B model results

| Model | AIME 24 | AIME 25 | GPQA-diamond | Average |
|---|---|---|---|---|
| | 54.90 | 40.20 | 45.48 | 46.86 |
| | 61.66 | 49.38 | 46.93 | 52.66 |
| | 66.87 | 52.49 | 44.43 | 54.60 |
| | 68.55 | 51.24 | 43.88 | 54.56 |
| | 67.30 | 55.00 | 45.57 | 55.96 |
| | 68.33 | 52.19 | 48.18 | 56.23 |

- RLinf achieves state-of-the-art performance on math reasoning tasks, consistently outperforming existing models across multiple benchmarks (AIME 24, AIME 25, GPQA-diamond) for both 1.5B and 7B model sizes.

- [X] Support for heterogeneous GPUs

- [ ] Support for asynchronous pipeline execution

- [X] Support for Mixture of Experts (MoE)

- [X] Support for Vision-Language Models (VLMs) training

- [ ] Support for deep searcher agent training

- [ ] Support for multi-agent training

- [ ] Support for integration with more embodied simulators (e.g., GENESIS)

- [ ] Support for more Vision Language Action models (VLAs) (e.g., WALL-OSS)

- [X] Support for world model

- [X] Support for real-world RL

RLinf has comprehensive CI tests for both the core components (via unit tests) and end-to-end RL training workflows of embodied, agent, and reasoning scenarios. Below is the summary of the CI test status of the main branch:

| Test Name | Status |
|---|---|
| unit-tests | |
| agent-reason-e2e-tests | |
| embodied-e2e-tests | |
| scheduler-tests | |

We welcome contributions to RLinf. Please read the contribution guide before getting started. We thank the following contributors and welcome more developers to join this open-source project.

If you find RLinf helpful, please cite the paper:

@article{yu2025rlinf,
  title={RLinf: Flexible and Efficient Large-scale Reinforcement Learning via Macro-to-Micro Flow Transformation},
  author={Yu, Chao and Wang, Yuanqing and Guo, Zhen and Lin, Hao and Xu, Si and Zang, Hongzhi and Zhang, Quanlu and Wu, Yongji and Zhu, Chunyang and Hu, Junhao and others},
  journal={arXiv preprint arXiv:2509.15965},
  year={2025}
}

If you use RL+VLA in RLinf, you can also cite our technical report and empirical study paper:

@article{zang2025rlinf,
  title={RLinf-VLA: A Unified and Efficient Framework for VLA+RL Training},
  author={Zang, Hongzhi and Wei, Mingjie and Xu, Si and Wu, Yongji and Guo, Zhen and Wang, Yuanqing and Lin, Hao and Shi, Liangzhi and Xie, Yuqing and Xu, Zhexuan and others},
  journal={arXiv preprint arXiv:2510.06710},
  year={2025}
}

@article{liu2025can,
  title={What Can {RL} Bring to {VLA} Generalization? An Empirical Study},
  author={Liu, Jijia and Gao, Feng and Wei, Bingwen and Chen, Xinlei and Liao, Qingmin and Wu, Yi and Yu, Chao and Wang, Yu},
  journal={arXiv preprint arXiv:2505.19789},
  year={2025}
}

@article{chen2025pi_,
  title={$\pi_\texttt{RL}$: Online RL Fine-tuning for Flow-based Vision-Language-Action Models},
  author={Chen, Kang and Liu, Zhihao and Zhang, Tonghe and Guo, Zhen and Xu, Si and Lin, Hao and Zang, Hongzhi and Zhang, Quanlu and Yu, Zhaofei and Fan, Guoliang and others},
  journal={arXiv preprint arXiv:2510.25889},
  year={2025}
}

Acknowledgements

RLinf has been inspired by, and benefits from, the ideas and tooling of the broader open-source community. In particular, we would like to thank the teams and contributors behind VeRL, AReaL, Megatron-LM, SGLang, and PyTorch Fully Sharded Data Parallel (FSDP). If we have inadvertently missed your project or contribution, please open an issue or a pull request so we can properly credit you.

Contact: We welcome applications from Postdocs, PhD/Master's students, and interns. Join us in shaping the future of RL infrastructure and embodied AI!

- Chao Yu: [email protected]

- Yu Wang: [email protected]

Alternative AI tools for RLinf

Similar Open Source Tools

UniCoT

Uni-CoT is a unified reasoning framework that extends Chain-of-Thought (CoT) principles to the multimodal domain, enabling Multimodal Large Language Models (MLLMs) to perform interpretable, step-by-step reasoning across both text and vision. It decomposes complex multimodal tasks into structured, manageable steps that can be executed sequentially or in parallel, allowing for more scalable and systematic reasoning.

Xwin-LM

Xwin-LM is a powerful and stable open-source tool for aligning large language models, offering various alignment technologies like supervised fine-tuning, reward models, reject sampling, and reinforcement learning from human feedback. It has achieved top rankings in benchmarks like AlpacaEval and surpassed GPT-4. The tool is continuously updated with new models and features.

claude-code-ultimate-guide

The Claude Code Ultimate Guide is an exhaustive documentation resource that takes users from beginner to power user in using Claude Code. It includes production-ready templates, workflow guides, a quiz, and a cheatsheet for daily use. The guide covers educational depth, methodologies, and practical examples to help users understand concepts and workflows. It also provides interactive onboarding, a repository structure overview, and learning paths for different user levels. The guide is regularly updated and offers a unique 257-question quiz for comprehensive assessment. Users can also find information on agent teams coverage, methodologies, annotated templates, resource evaluations, and learning paths for different roles like junior developer, senior developer, power user, and product manager/devops/designer.

LlamaV-o1

LlamaV-o1 is a Large Multimodal Model designed for spontaneous reasoning tasks. It outperforms various existing models on multimodal reasoning benchmarks. The project includes a Step-by-Step Visual Reasoning Benchmark, a novel evaluation metric, and a combined Multi-Step Curriculum Learning and Beam Search Approach. The model achieves superior performance in complex multi-step visual reasoning tasks in terms of accuracy and efficiency.

AReaL

AReaL (Ant Reasoning RL) is an open-source reinforcement learning system developed at the RL Lab, Ant Research. It is designed for training Large Reasoning Models (LRMs) in a fully open and inclusive manner. AReaL provides reproducible experiments for 1.5B and 7B LRMs, showcasing its scalability and performance across diverse computational budgets. The system follows an iterative training process to enhance model performance, with a focus on mathematical reasoning tasks. AReaL is equipped to adapt to different computational resource settings, enabling users to easily configure and launch training trials. Future plans include support for advanced models, optimizations for distributed training, and exploring research topics to enhance LRMs' reasoning capabilities.

IDvs.MoRec

This repository contains the source code for the SIGIR 2023 paper 'Where to Go Next for Recommender Systems? ID- vs. Modality-based Recommender Models Revisited'. It provides resources for evaluating foundation, transferable, multi-modal, and LLM recommendation models, along with datasets, pre-trained models, and training strategies for IDRec and MoRec using in-batch debiased cross-entropy loss. The repository also offers large-scale datasets, code for SASRec with in-batch debias cross-entropy loss, and information on joining the lab for research opportunities.

mindnlp

MindNLP is an open-source NLP library based on MindSpore. It provides a platform for solving natural language processing tasks and contains many common NLP approaches. It helps researchers and developers construct and train models more conveniently and rapidly. Key features of MindNLP include:

- Comprehensive data processing: Several classical NLP datasets, such as Multi30k, SQuAD, and CoNLL, are packaged into a friendly module for easy use.
- Friendly NLP model toolset: MindNLP provides various configurable components, which makes it easy to customize models.
- Easy-to-use engine: MindNLP simplifies the complicated training process in MindSpore. It supports Trainer and Evaluator interfaces to train and evaluate models easily.

MindNLP supports a wide range of NLP tasks, including language modeling, machine translation, question answering, sentiment analysis, sequence labeling, and summarization. It also supports industry-leading Large Language Models (LLMs), including Llama, GLM, and RWKV. Support related to large language models, including pre-training, fine-tuning, and inference demo examples, can be found in the "llm" directory.

To install MindNLP, you can install it from PyPI, download the daily build wheel, or install it from source. The installation instructions are provided in the documentation. MindNLP is released under the Apache 2.0 license. If you find this project useful in your research, please consider citing the following: @misc{mindnlp2022, title={{MindNLP}: a MindSpore NLP library}, author={MindNLP Contributors}, howpublished = {\url{https://github.com/mindlab-ai/mindnlp}}, year={2022} }

EverMemOS

EverMemOS is an AI memory system that enables AI to not only remember past events but also understand the meaning behind memories and use them to guide decisions. It achieves 93% reasoning accuracy on the LoCoMo benchmark by providing long-term memory capabilities for conversational AI agents through structured extraction, intelligent retrieval, and progressive profile building. The tool is production-ready with support for Milvus vector DB, Elasticsearch, MongoDB, and Redis, and offers easy integration via a simple REST API. Users can store and retrieve memories using Python code and benefit from features like multi-modal memory storage, smart retrieval mechanisms, and advanced techniques for memory management.

sktime

sktime is a Python library for time series analysis that provides a unified interface for various time series learning tasks such as classification, regression, clustering, annotation, and forecasting. It offers time series algorithms and tools compatible with scikit-learn for building, tuning, and validating time series models. sktime aims to enhance the interoperability and usability of the time series analysis ecosystem by empowering users to apply algorithms across different tasks and providing interfaces to related libraries like scikit-learn, statsmodels, tsfresh, PyOD, and fbprophet.

InternLM

InternLM is a language model series featuring a 200K context window for long-context tasks; strong overall performance in reasoning, math, code, chat experience, instruction following, and creative writing; code interpreter and data analysis capabilities; and stronger tool utilization. It offers models in 7B and 20B sizes, suitable for research and complex scenarios. The models are recommended for various applications and perform better than previous generations. InternLM models may match or surpass ChatGPT. The models have been evaluated on various datasets and have shown superior performance on multiple tasks. Usage requires Python >= 3.8, PyTorch >= 1.12.0, and Transformers >= 4.34. InternLM can be used for tasks such as chat, agent applications, fine-tuning, deployment, and long-context inference.

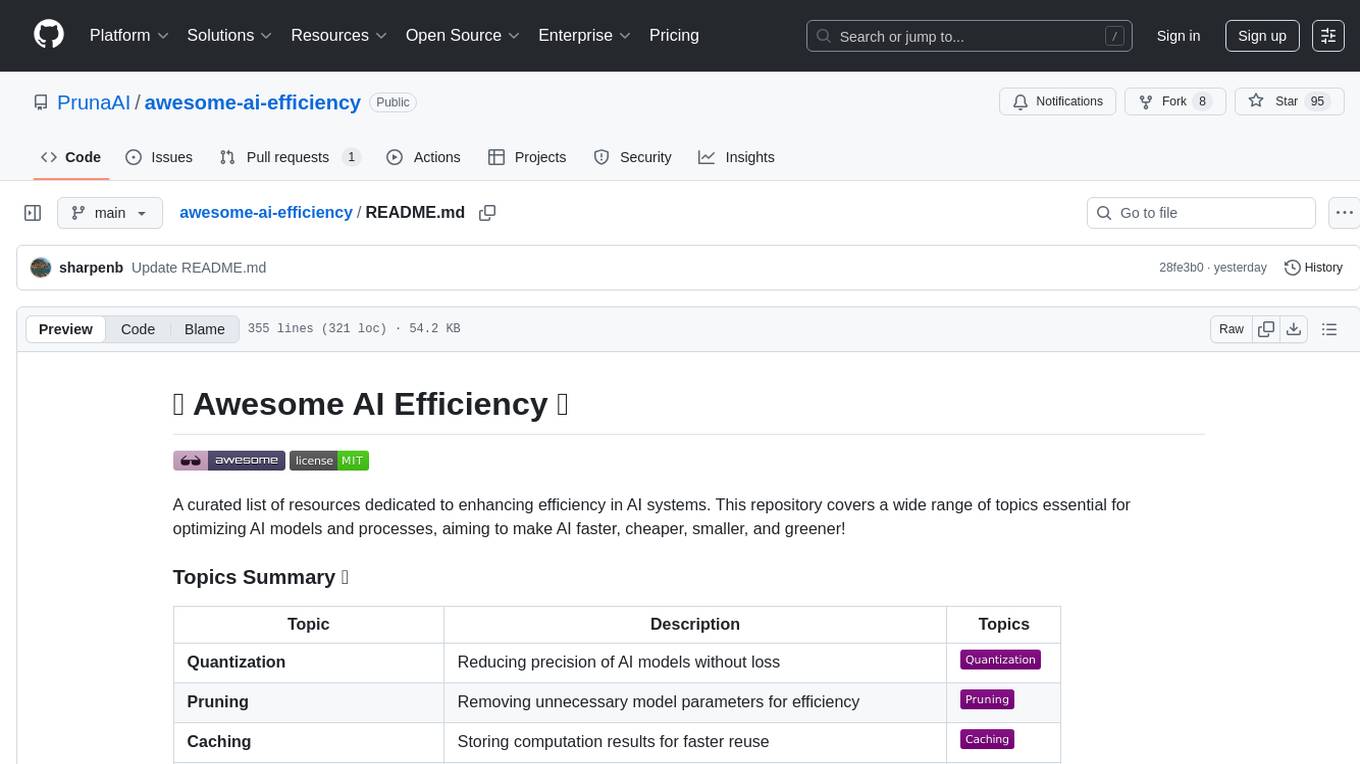

awesome-ai-efficiency

Awesome AI Efficiency is a curated list of resources dedicated to enhancing efficiency in AI systems. The repository covers various topics essential for optimizing AI models and processes, aiming to make AI faster, cheaper, smaller, and greener. It includes topics like quantization, pruning, caching, distillation, factorization, compilation, parameter-efficient fine-tuning, speculative decoding, hardware optimization, training techniques, inference optimization, sustainability strategies, and scalability approaches.

monoscope

Monoscope is an open-source monitoring and observability platform that uses artificial intelligence to understand and monitor systems automatically. It allows users to ingest and explore logs, traces, and metrics in S3 buckets, query in natural language via LLMs, and create AI agents to detect anomalies. Key capabilities include universal data ingestion, AI-powered understanding, natural language interface, cost-effective storage, and zero configuration. Monoscope is designed to reduce alert fatigue, catch issues before they impact users, and provide visibility across complex systems.

motia

Motia is an AI agent framework designed for software engineers to create, test, and deploy production-ready AI agents quickly. It provides a code-first approach, allowing developers to write agent logic in familiar languages and visualize execution in real-time. With Motia, developers can focus on business logic rather than infrastructure, offering zero infrastructure headaches, multi-language support, composable steps, built-in observability, instant APIs, and full control over AI logic. Ideal for building sophisticated agents and intelligent automations, Motia's event-driven architecture and modular steps enable the creation of GenAI-powered workflows, decision-making systems, and data processing pipelines.

EasyEdit

EasyEdit is a Python package for editing Large Language Models (LLMs) such as `GPT-J`, `Llama`, `GPT-NEO`, `GPT2`, and `T5` (supporting models from **1B** to **65B**). Its objective is to alter the behavior of LLMs efficiently within a specific domain without negatively impacting performance on other inputs. It is designed to be easy to use and easy to extend.

For similar tasks

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Awesome-LLM-3D

This repository is a curated list of papers related to 3D tasks empowered by Large Language Models (LLMs). It covers tasks such as 3D understanding, reasoning, generation, and embodied agents. The repository also includes other Foundation Models like CLIP and SAM to provide a comprehensive view of the area. It is actively maintained and updated to showcase the latest advances in the field. Users can find a variety of research papers and projects related to 3D tasks and LLMs in this repository.

For similar jobs

FlagPerf

FlagPerf is an integrated AI hardware evaluation engine jointly built by the Institute of Intelligence and AI hardware manufacturers. It aims to establish an industry-oriented metric system to evaluate the actual capabilities of AI hardware under software stack combinations (model + framework + compiler). FlagPerf features a multidimensional evaluation metric system that goes beyond just measuring 'whether the chip can support specific model training.' It covers various scenarios and tasks, including computer vision, natural language processing, speech, multimodal, with support for multiple training frameworks and inference engines to connect AI hardware with software ecosystems. It also supports various testing environments to comprehensively assess the performance of domestic AI chips in different scenarios.

AI-System-School

AI System School is a curated list of research in machine learning systems, focusing on ML/DL infra, LLM infra, domain-specific infra, ML/LLM conferences, and general resources. It provides resources such as data processing, training systems, video systems, autoML systems, and more. The repository aims to help users navigate the landscape of AI systems and machine learning infrastructure, offering insights into conferences, surveys, books, videos, courses, and blogs related to the field.

multi-agent-orchestrator

Multi-Agent Orchestrator is a flexible and powerful framework for managing multiple AI agents and handling complex conversations. It intelligently routes queries to the most suitable agent based on context and content, supports dual language implementation in Python and TypeScript, offers flexible agent responses, context management across agents, extensible architecture for customization, universal deployment options, and pre-built agents and classifiers. It is suitable for various applications, from simple chatbots to sophisticated AI systems, accommodating diverse requirements and scaling efficiently.

AIXP

The AI-Exchange Protocol (AIXP) is a communication standard designed to facilitate information and result exchange between artificial intelligence agents. It aims to enhance interoperability and collaboration among various AI systems by establishing a common framework for communication. AIXP includes components for communication, loop prevention, and task finalization, ensuring secure and efficient collaboration while avoiding infinite communication loops. The protocol defines access points, data formats, authentication, authorization, versioning, loop detection, status codes, error messages, and task completion verification. AIXP enables AI agents to collaborate seamlessly and complete tasks effectively, contributing to the overall efficiency and reliability of AI systems.

AIInfra

AIInfra is an open-source project focused on AI infrastructure, specifically targeting large models in distributed clusters, distributed architecture, distributed training, and algorithms related to large models. The project aims to explore and study system design in artificial intelligence and deep learning, with a focus on the hardware and software stack for building AI large model systems. It provides a comprehensive curriculum covering topics such as AI chip principles, communication and storage, AI clusters, large model training, and inference, as well as algorithms for large models. The course is designed for undergraduate and graduate students, as well as professionals working with AI large model systems, to gain a deep understanding of AI computer system architecture and design.

eino

Eino is an ultimate LLM application development framework in Golang, emphasizing simplicity, scalability, reliability, and effectiveness. It provides a curated list of component abstractions, a powerful composition framework, meticulously designed APIs, best practices, and tools covering the entire development cycle. Eino standardizes and improves efficiency in AI application development by offering rich components, powerful orchestration, complete stream processing, highly extensible aspects, and a comprehensive framework structure.

tt-xla

TT-XLA is a repository that enables running PyTorch and JAX models on Tenstorrent's AI hardware. It serves as a backend integration between the JAX ecosystem and Tenstorrent's ML accelerators using the PJRT (Portable JAX Runtime) interface. It supports ingestion of PyTorch models through PyTorch/XLA and JAX models via jit compile, providing a StableHLO (SHLO) graph to TT-MLIR compiler.