llm-memorization

Give your local LLM a real memory with a lightweight, fully local memory system. 100% offline and under your control.

Stars: 56

The 'llm-memorization' project is a tool designed to index, archive, and search conversations with a local LLM using a SQLite database enriched with automatically extracted keywords. It aims to provide personalized context at the start of a conversation by adding memory information to the initial prompt. The tool automates queries from local LLM conversational management libraries, offers a hybrid search function, enhances prompts based on posed questions, and provides an all-in-one graphical user interface for data visualization. It supports both French and English conversations and prompts for bilingual use.

README:

A project to index, archive, and search conversations with a local LLM (accessed via a library like LM Studio) using a SQLite database enriched with automatically extracted keywords.

The project is designed to work without calling any external APIs, ensuring data privacy.

The idea is to provide substantial and personalized context at the start of a conversation with an LLM by adding memory information to the initial prompt.

The collected data (database and generated prompts) can be analyzed directly within the script.

- Automate queries from local LLM conversational management libraries (LM Studio, Transformer Labs, Ollama, ...) to build a SQLite database.

- Hybrid search function to find the most relevant contexts in the database:

  - Filter conversations using keywords extracted from the question,

  - Use a vector index to measure semantic similarity.

- Enhance prompts with context tailored to the posed question and drawn from previous exchanges.

- All-in-one graphical user interface:

  - Adjustable number of extracted keywords and contexts via sliders.

  - Data visualization:

    - Information on the generated prompt based on keywords.

    - Insights on the conversation database.

- Supports both French and English conversations and prompts, for bilingual use.
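
The two-stage hybrid search listed above can be sketched as follows. This is a minimal illustration, not the project's actual code: the record layout (`keywords`, `vector`, `text`), the function names, and the plain-Python cosine similarity are all assumptions made for the example. The default threshold of 0.2 mirrors the similarity_threshold default mentioned later in the README.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors (0.0 if either is null)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_search(question_keywords, question_vector, records, threshold=0.2, top_k=3):
    """Return the texts of the most relevant records.

    `records` is a hypothetical in-memory stand-in for database rows:
    dicts with 'keywords' (iterable of str), 'vector' (list of float), 'text'.
    """
    # Stage 1: cheap keyword filter, keeps records sharing at least one keyword.
    wanted = {k.lower() for k in question_keywords}
    candidates = [r for r in records if wanted & {k.lower() for k in r["keywords"]}]

    # Stage 2: rank the survivors by semantic similarity and apply the threshold.
    scored = [(cosine(question_vector, r["vector"]), r) for r in candidates]
    scored = [(score, r) for score, r in scored if score >= threshold]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [r["text"] for _, r in scored[:top_k]]
```

Filtering on keywords first keeps the more expensive vector comparison limited to a small candidate set.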

The sync_lmstudio.py script scans the LM Studio conversations folder, reads all .json files, and extracts the input, output, and model of each exchange.

Each exchange is:

- Stored in the conversations table.

- Hashed with MD5 to avoid duplicates.

- Timestamped to retrieve conversations by date/time.

- Analysed using KeyBERT to extract 15 keywords, which are stored in the keywords table (as text and as vectors).
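
The hash-based deduplication described above can be sketched like this. The column names, the UNIQUE constraint, and the helper functions are assumptions for illustration; the real schema in the repository may differ. Only the table name conversations and the use of MD5 come from the README.

```python
import hashlib
import sqlite3
from datetime import datetime, timezone


def exchange_hash(user_input: str, llm_output: str) -> str:
    """Return an MD5 hex digest identifying one question/answer pair."""
    payload = (user_input + "\x00" + llm_output).encode("utf-8")
    return hashlib.md5(payload).hexdigest()


def init_db(conn: sqlite3.Connection) -> None:
    # Hypothetical schema: the UNIQUE constraint on `hash` rejects duplicates.
    conn.execute(
        """CREATE TABLE IF NOT EXISTS conversations (
               id INTEGER PRIMARY KEY,
               user_input TEXT NOT NULL,
               llm_output TEXT NOT NULL,
               model TEXT,
               timestamp TEXT NOT NULL,
               hash TEXT NOT NULL UNIQUE
           )"""
    )


def store_exchange(conn, user_input, llm_output, model) -> bool:
    """Insert one exchange; return False if an identical one is already stored."""
    cur = conn.execute(
        "INSERT OR IGNORE INTO conversations "
        "(user_input, llm_output, model, timestamp, hash) VALUES (?, ?, ?, ?, ?)",
        (
            user_input,
            llm_output,
            model,
            datetime.now(timezone.utc).isoformat(),  # timestamp for date queries
            exchange_hash(user_input, llm_output),
        ),
    )
    conn.commit()
    return cur.rowcount == 1
```

MD5 is used here only as a fast fingerprint for detecting repeated exchanges, not for any security purpose.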

The llm_cortexpander.py script, executable via llm_memorization.command:

- Takes the initial question,

- Extracts the corresponding keywords,

- Retrieves similar question/answer pairs from the SQLite database, combining keyword filtering for fast targeting of relevant conversations and vector search for refinement,

- Summarizes the answers using a local model (plguillou/t5-base-fr-sum-cnndm),

- Copies a complete prompt to the clipboard, containing summarized previous exchanges and ending with the original question,

- Provides a graphical interface including:

  - Help window,

  - Data analysis window.
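
As an illustration of the final prompt-building step above, here is a minimal sketch. The function name and the exact wording of the prompt are hypothetical. The only detail taken from the README is that the prompt contains the summarized previous exchanges and ends with the original question. Copying the result to the clipboard is left out.

```python
def build_prompt(question, summaries):
    """Assemble a prompt from summarized past exchanges, ending with the question."""
    if not summaries:
        # No relevant memory found: fall back to the bare question.
        return question
    lines = ["Context from previous conversations:"]
    lines += [f"- {summary}" for summary in summaries]
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)
```

For example, `build_prompt("What is green chemistry?", ["Earlier answer about catalysis."])` yields a short context block followed by the question on the last line.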

To add the script to your Dock on a Mac, see the guide in the mac_shortcut directory.

- Enhance the existing database visualization to provide clearer insights into user data (e.g. interactive topic maps, conversation heatmaps).

- Transform the script into an LM Studio plugin, to enable seamless prompt enhancement and real-time analytics directly within the interface.

- Clone the repository

git clone https://github.com/victorcarre6/llm-memorization

cd llm-memorization

- Create a virtual environment

python3 -m venv venv

source venv/bin/activate # macOS/Linux

venv\Scripts\activate # Windows

- Install dependencies

pip install -r requirements.txt

- Then install the NLP models fr_core_news_lg and en_core_web_lg.

python -m spacy download fr_core_news_lg

python -m spacy download en_core_web_lg

- Download the local model

  - Either with the dedicated script:

python scripts/model_download.py

  - Or via Git LFS (see Notes below)

- Directory structure

- The config.json file at the root contains the paths required for the scripts to function properly.

Run ./llm_memorization.command, or read the README.md in /mac_shortcut to install a shortcut that launches the script directly from your dock.

- These scripts work with LM Studio but can be adapted to any software providing conversations in .json format.

- A French stop-word dictionary is used to eliminate irrelevant keywords (coordinating conjunctions, prepositions, etc.). The file resources/stopwords_fr.json can be modified to keep or remove specific keywords. This dictionary can be replaced with a custom file via the stopwords_file_path key in config.json.

- datas/database_example.db: ~100 Q&A pairs with OpenChat-3.5, Mistral-7B and DeepSeek-Coder-6.7B on topics including:

  - Green Chemistry & Catalysis (FR)

  - Pharmaceutical Applications & AI (FR)

  - Plant Science & Biostimulants (FR)

  - Cross-disciplinary Tools (FR)

  - OLED Materials (EN)

  - Machine Learning in Agrochemistry (EN)

  It is highly advised to build your own database in order to have prompts generated in a single language only.

- To exclude conversations from syncing, move them to ~/.lmstudio/conversations/unsync.

- The script applies a multiplier (default 2) to the requested number of keywords to obtain more raw keywords, then filters out irrelevant ones to ensure a sufficient, high-quality final set. This multiplier is configurable in config.json under keyword_multiplier.

- A similarity threshold is used to fine-tune the selection of the most relevant contexts. You can change its value in config.json under the key similarity_threshold (0.2 by default). Based on my testing, values above 0.35 tend to exclude relevant contexts and are therefore not recommended.
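
The keyword multiplier can be pictured with the sketch below. It assumes the keyword extractor returns candidates already sorted by decreasing relevance. The function name and arguments are hypothetical and only illustrate the over-fetch-then-filter idea, not the project's actual implementation.

```python
def select_keywords(ranked_candidates, requested, stopwords, multiplier=2):
    """Over-fetch raw keywords, drop stop-words and duplicates, keep `requested`.

    `ranked_candidates` is assumed to be sorted by decreasing relevance,
    as a keyword extractor would typically return them.
    """
    # Take more raw candidates than needed so filtering still leaves enough.
    raw = ranked_candidates[: requested * multiplier]
    kept = []
    for keyword in raw:
        normalized = keyword.strip().lower()
        if normalized and normalized not in stopwords and normalized not in kept:
            kept.append(normalized)
    return kept[:requested]
```

With a multiplier of 1, any stop-word among the raw candidates directly shrinks the final set, which is the situation the default of 2 is meant to avoid.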

The script uses the model plguillou/t5-base-fr-sum-cnndm, selected for its good balance between performance and hardware requirements (4 GB of free RAM). This multilingual model allows summarization of both French and English conversations, keeping response times under 30 seconds.

You can configure the summarization model in config.json via the summarizing_model key, using either:

"summarizing_model": "plguillou/t5-base-fr-sum-cnndm" // Hugging Face model (loaded online)

"summarizing_model": "resources/models/t5-base-fr-sum-cnndm" // Local model (directory path)

If the Hugging Face model is unreachable (e.g. offline usage), the script will automatically fall back to the local model if the directory exists.

➤ Local model setup with Git LFS

- Initialize Git LFS (the git-lfs package must already be installed on your system):

git lfs install

- Pull the model from the repository:

git lfs pull

The model file will appear here: resources/models/t5-base-fr-sum-cnndm/model.safetensors

Make sure your config.json points to this local folder: "summarizing_model": "resources/models/t5-base-fr-sum-cnndm"
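
The online-to-local fallback described in this section could look roughly like the sketch below. Everything here is an assumption made for illustration: `resolve_summarizer` is a hypothetical helper, and `load` is a placeholder for whatever function actually loads the model (for instance a wrapper around a Hugging Face `from_pretrained` call), assumed to raise `OSError` when the model cannot be fetched.

```python
from pathlib import Path


def resolve_summarizer(configured, local_dir, load):
    """Load the configured model, falling back to a local directory if needed.

    `load` is a caller-supplied callable (hypothetical) that takes a model
    name or path and returns the loaded model, raising OSError on failure.
    """
    try:
        return load(configured)
    except OSError:
        # Only fall back when the local copy is actually present on disk.
        if Path(local_dir).is_dir():
            return load(str(local_dir))
        raise
```

Passing the loader in as an argument keeps the sketch independent of any particular library and makes the fallback path easy to exercise offline.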

Alternative AI tools for llm-memorization

Similar Open Source Tools

bedrock-claude-chatbot

Bedrock Claude ChatBot is a Streamlit application that provides a conversational interface for users to interact with various Large Language Models (LLMs) on Amazon Bedrock. Users can ask questions, upload documents, and receive responses from the AI assistant. The app features conversational UI, document upload, caching, chat history storage, session management, model selection, cost tracking, logging, and advanced data analytics tool integration. It can be customized using a config file and is extensible for implementing specialized tools using Docker containers and AWS Lambda. The app requires access to Amazon Bedrock Anthropic Claude Model, S3 bucket, Amazon DynamoDB, Amazon Textract, and optionally Amazon Elastic Container Registry and Amazon Athena for advanced analytics features.

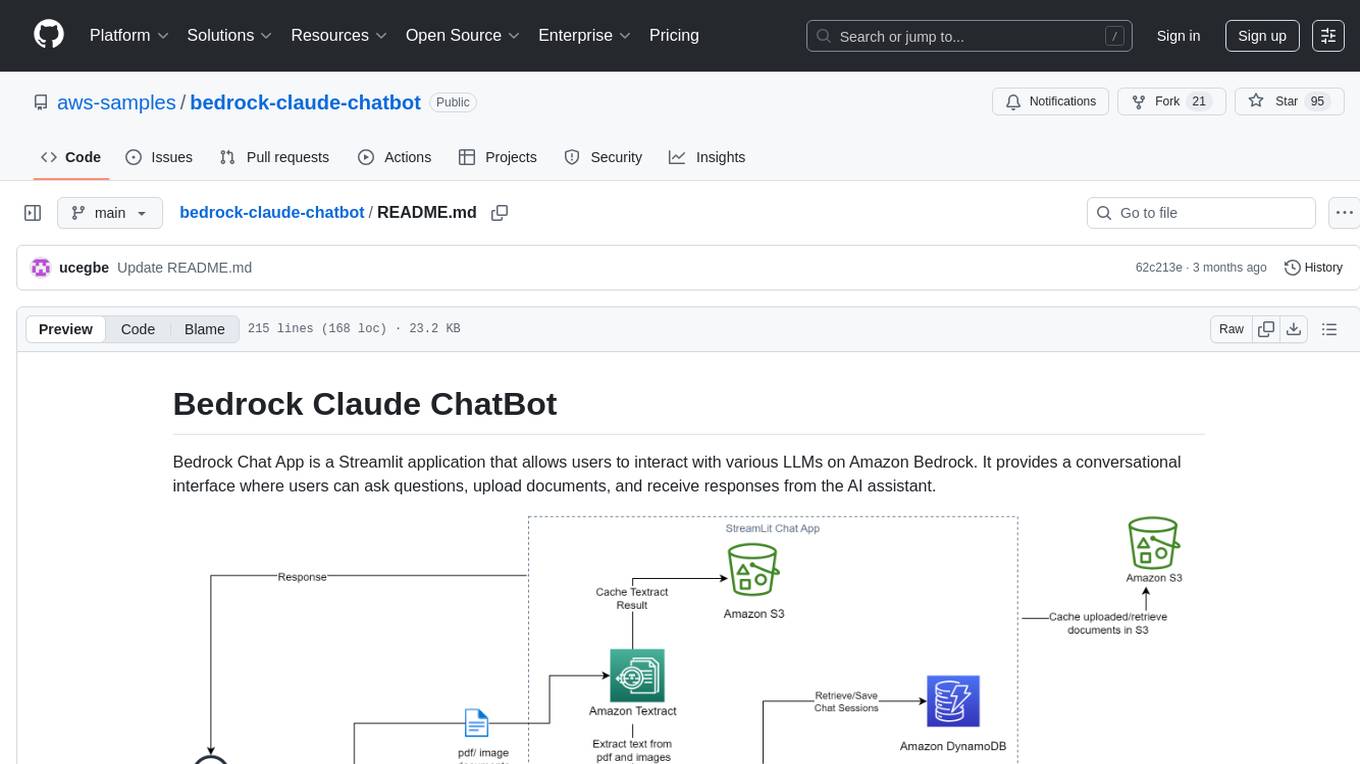

premsql

PremSQL is an open-source library designed to help developers create secure, fully local Text-to-SQL solutions using small language models. It provides essential tools for building and deploying end-to-end Text-to-SQL pipelines with customizable components, ideal for secure, autonomous AI-powered data analysis. The library offers features like Local-First approach, Customizable Datasets, Robust Executors and Evaluators, Advanced Generators, Error Handling and Self-Correction, Fine-Tuning Support, and End-to-End Pipelines. Users can fine-tune models, generate SQL queries from natural language inputs, handle errors, and evaluate model performance against predefined metrics. PremSQL is extendible for customization and private data usage.

datalore-localgen-cli

Datalore is a terminal tool for generating structured datasets from local files like PDFs, Word docs, images, and text. It extracts content, uses semantic search to understand context, applies instructions through a generated schema, and outputs clean, structured data. Perfect for converting raw or unstructured local documents into ready-to-use datasets for training, analysis, or experimentation, all without manual formatting.

honcho

Honcho is a platform for creating personalized AI agents and LLM powered applications for end users. The repository is a monorepo containing the server/API for managing database interactions and storing application state, along with a Python SDK. It utilizes FastAPI for user context management and Poetry for dependency management. The API can be run using Docker or manually by setting environment variables. The client SDK can be installed using pip or Poetry. The project is open source and welcomes contributions, following a fork and PR workflow. Honcho is licensed under the AGPL-3.0 License.

deep-research

Deep Research is a lightning-fast tool that uses powerful AI models to generate comprehensive research reports in just a few minutes. It leverages advanced 'Thinking' and 'Task' models, combined with an internet connection, to provide fast and insightful analysis on various topics. The tool ensures privacy by processing and storing all data locally. It supports multi-platform deployment, offers support for various large language models, web search functionality, knowledge graph generation, research history preservation, local and server API support, PWA technology, multi-key payload support, multi-language support, and is built with modern technologies like Next.js and Shadcn UI. Deep Research is open-source under the MIT License.

open-deep-research

Open Deep Research is an open-source project that serves as a clone of OpenAI's Deep Research experiment. It utilizes Firecrawl's extract and search method along with a reasoning model to conduct in-depth research on the web. The project features Firecrawl Search + Extract, real-time data feeding to AI via search, structured data extraction from multiple websites, Next.js App Router for advanced routing, React Server Components and Server Actions for server-side rendering, AI SDK for generating text and structured objects, support for various model providers, styling with Tailwind CSS, data persistence with Vercel Postgres and Blob, and simple and secure authentication with NextAuth.js.

lmql

LMQL is a programming language designed for large language models (LLMs) that offers a unique way of integrating traditional programming with LLM interaction. It allows users to write programs that combine algorithmic logic with LLM calls, enabling model reasoning capabilities within the context of the program. LMQL provides features such as Python syntax integration, rich control-flow options, advanced decoding techniques, powerful constraints via logit masking, runtime optimization, sync and async API support, multi-model compatibility, and extensive applications like JSON decoding and interactive chat interfaces. The tool also offers library integration, flexible tooling, and output streaming options for easy model output handling.

log10

Log10 is a one-line Python integration to manage your LLM data. It helps you log both closed and open-source LLM calls, compare and identify the best models and prompts, store feedback for fine-tuning, collect performance metrics such as latency and usage, and perform analytics and monitor compliance for LLM powered applications. Log10 offers various integration methods, including a python LLM library wrapper, the Log10 LLM abstraction, and callbacks, to facilitate its use in both existing production environments and new projects. Pick the one that works best for you. Log10 also provides a copilot that can help you with suggestions on how to optimize your prompt, and a feedback feature that allows you to add feedback to your completions. Additionally, Log10 provides prompt provenance, session tracking and call stack functionality to help debug prompt chains. With Log10, you can use your data and feedback from users to fine-tune custom models with RLHF, and build and deploy more reliable, accurate and efficient self-hosted models. Log10 also supports collaboration, allowing you to create flexible groups to share and collaborate over all of the above features.

VoiceStreamAI

VoiceStreamAI is a Python 3-based server and JavaScript client solution for near-realtime audio streaming and transcription using WebSocket. It employs Huggingface's Voice Activity Detection (VAD) and OpenAI's Whisper model for accurate speech recognition. The system features real-time audio streaming, modular design for easy integration of VAD and ASR technologies, customizable audio chunk processing strategies, support for multilingual transcription, and secure sockets support. It uses a factory and strategy pattern implementation for flexible component management and provides a unit testing framework for robust development.

RepoAgent

RepoAgent is an LLM-powered framework designed for repository-level code documentation generation. It automates the process of detecting changes in Git repositories, analyzing code structure through AST, identifying inter-object relationships, replacing Markdown content, and executing multi-threaded operations. The tool aims to assist developers in understanding and maintaining codebases by providing comprehensive documentation, ultimately improving efficiency and saving time.

CoolCline

CoolCline is a proactive programming assistant that combines the best features of Cline, Roo Code, and Bao Cline. It seamlessly collaborates with your command line interface and editor, providing the most powerful AI development experience. It optimizes queries, allows quick switching of LLM Providers, and offers auto-approve options for actions. Users can configure LLM Providers, select different chat modes, perform file and editor operations, integrate with the command line, automate browser tasks, and extend capabilities through the Model Context Protocol (MCP). Context mentions help provide explicit context, and installation is easy through the editor's extension panel or by dragging and dropping the `.vsix` file. Local setup and development instructions are available for contributors.

langmanus

LangManus is a community-driven AI automation framework that combines language models with specialized tools for tasks like web search, crawling, and Python code execution. It implements a hierarchical multi-agent system with agents like Coordinator, Planner, Supervisor, Researcher, Coder, Browser, and Reporter. The framework supports LLM integration, search and retrieval tools, Python integration, workflow management, and visualization. LangManus aims to give back to the open-source community and welcomes contributions in various forms.

ShortcutsBench

ShortcutsBench is a project focused on collecting and analyzing workflows created in the Shortcuts app, providing a dataset of shortcut metadata, source files, and API information. It aims to study the integration of large language models with Apple devices, particularly focusing on the role of shortcuts in enhancing user experience. The project offers insights for Shortcuts users, enthusiasts, and researchers to explore, customize workflows, and study automated workflows, low-code programming, and API-based agents.

GraphRAG-Local-UI

GraphRAG Local with Interactive UI is an adaptation of Microsoft's GraphRAG, tailored to support local models and featuring a comprehensive interactive user interface. It allows users to leverage local models for LLM and embeddings, visualize knowledge graphs in 2D or 3D, manage files, settings, and queries, and explore indexing outputs. The tool aims to be cost-effective by eliminating dependency on costly cloud-based models and offers flexible querying options for global, local, and direct chat queries.

agentok

Agentok Studio is a tool built upon AG2, a powerful agent framework from Microsoft, offering intuitive visual tools to streamline the creation and management of complex agent-based workflows. It simplifies the process for creators and developers by generating native Python code with minimal dependencies, enabling users to create self-contained code that can be executed anywhere. The tool is currently under development and not recommended for production use, but contributions are welcome from the community to enhance its capabilities and functionalities.

For similar tasks

Callytics

Callytics is an advanced call analytics solution that leverages speech recognition and large language models (LLMs) technologies to analyze phone conversations from customer service and call centers. By processing both the audio and text of each call, it provides insights such as sentiment analysis, topic detection, conflict detection, profanity word detection, and summary. These cutting-edge techniques help businesses optimize customer interactions, identify areas for improvement, and enhance overall service quality. When an audio file is placed in the .data/input directory, the entire pipeline automatically starts running, and the resulting data is inserted into the database. This is only a v1.1.0 version; many new features will be added, models will be fine-tuned or trained from scratch, and various optimization efforts will be applied.

genassist

GenAssist is an AI-powered platform for managing and leveraging various AI workflows, focusing on conversation management, analytics, and agent-based interactions. It provides user management, AI agents configuration, knowledge base management, analytics, conversation management, and audit logging features. The platform is built with React, TypeScript, Vite, Tailwind CSS, FastAPI, SQLAlchemy ORM, and PostgreSQL database. GenAssist offers integration options for React, JavaScript Widget, and iOS, along with UI test automation and backend testing capabilities.

Advanced-Prompt-Generator

This project is an LLM-based Advanced Prompt Generator designed to automate the process of prompt engineering by enhancing given input prompts using large language models (LLMs). The tool can generate advanced prompts with minimal user input, leveraging LLM agents for optimized prompt generation. It supports gpt-4o or gpt-4o-mini, offers FastAPI & Docker deployment for efficiency, provides a Gradio interface for easy testing, and is hosted on Hugging Face Spaces for quick demos. Users can expand model support to offer more variety and flexibility.

opencode.nvim

Opencode.nvim is a neovim frontend for Opencode, a terminal-based AI coding agent. It provides a chat interface between neovim and the Opencode AI agent, capturing editor context to enhance prompts. The plugin maintains persistent sessions for continuous conversations with the AI assistant, similar to Cursor AI.

off-grid-mobile

Off Grid is a complete offline AI suite that allows users to perform various tasks such as text generation, image generation, vision AI, voice transcription, and document analysis on their mobile devices without sending any data out. The tool offers high performance on flagship devices and supports a wide range of models for different tasks. Users can easily install the tool on Android by downloading the APK from GitHub Releases or build it from source with Node.js and JDK. The documentation provides detailed information on the system architecture, codebase, design system, visual hierarchy, test flows, and more. Contributions are welcome, and the tool is built with a focus on user privacy and data security, ensuring no cloud, subscription, or data harvesting.

De-AI-Prompt-Enhancer-Writer-Booster-SKILL

De-AI-Prompt-Enhancer-Writer-Booster-SKILL is a tool designed to enhance AI-generated prompts for writing tasks. It provides advanced features to improve the quality and creativity of the generated content. The tool offers a user-friendly interface and various customization options to tailor the prompts according to specific requirements. With De-AI-Prompt-Enhancer-Writer-Booster-SKILL, users can boost their writing skills and productivity by generating high-quality prompts for various purposes.

ComfyUI_VLM_nodes

ComfyUI_VLM_nodes is a repository containing various nodes for utilizing Vision Language Models (VLMs) and Language Models (LLMs). The repository provides nodes for tasks such as structured output generation, image to music conversion, LLM prompt generation, automatic prompt generation, and more. Users can integrate different models like InternLM-XComposer2-VL, UForm-Gen2, Kosmos-2, moondream1, moondream2, JoyTag, and Chat Musician. The nodes support features like extracting keywords, generating prompts, suggesting prompts, and obtaining structured outputs. The repository includes examples and instructions for using the nodes effectively.

For similar jobs

Muice-Chatbot

Muice-Chatbot is an AI chatbot designed to proactively engage in conversations with users. It is based on the ChatGLM2-6B and Qwen-7B models, with a training dataset of 1.8K+ dialogues. The chatbot has a speaking style similar to a 2D girl, being somewhat tsundere but willing to share daily life details and greet users differently every day. It provides various functionalities, including initiating chats and offering 5 available commands. The project supports model loading through different methods and provides onebot service support for QQ users. Users can interact with the chatbot by running the main.py file in the project directory.

mahilo

Mahilo is a flexible framework for creating multi-agent systems that can interact with humans while sharing context internally. It allows developers to set up complex agent networks for various applications, from customer service to emergency response simulations. Agents can communicate with each other and with humans, making the system efficient by handling context from multiple agents and helping humans stay focused on specific problems. The system supports Realtime API for voice interactions, WebSocket-based communication, flexible communication patterns, session management, and easy agent definition.

pipecat-flows

Pipecat Flows is a framework designed for building structured conversations in AI applications. It allows users to create both predefined conversation paths and dynamically generated flows, handling state management and LLM interactions. The framework includes a Python module for building conversation flows and a visual editor for designing and exporting flow configurations. Pipecat Flows is suitable for scenarios such as customer service scripts, intake forms, personalized experiences, and complex decision trees.

YesImBot

YesImBot, also known as Athena, is a Koishi plugin designed to allow large AI models to participate in group chat discussions. It offers easy customization of the bot's name, personality, emotions, and other messages. The plugin supports load balancing multiple API interfaces for large models, provides immersive context awareness, blocks potentially harmful messages, and automatically fetches high-quality prompts. Users can adjust various settings for the bot and customize system prompt words. The ultimate goal is to seamlessly integrate the bot into group chats without detection, with ongoing improvements and features like message recognition, emoji sending, multimodal image support, and more.

tiledesk-chatbot

Tiledesk Chatbot Engine is a Node.js-based framework for creating and managing interactive chatbots. It is designed to work seamlessly with the Tiledesk Design Studio, allowing easy design and customization of chatbot behavior. The engine is scalable, performant, and encourages collaboration and innovation through its open-source nature under the MIT license.

nonebot-plugin-marshoai

nonebot-plugin-marshoai is a chatbot plugin that utilizes the OpenAI standard format API, such as the GitHub Models API, to enable chat functionalities. The plugin features the character Marsho, a cute cat girl, for engaging conversations. It supports OneBot adapters and GitHub Models API, with limited validation for other adapters. Developed by Melobot.

WeClone

WeClone is an all-in-one solution for creating your digital twin from chat records. It allows users to fine-tune large language models using their chat history, capturing their unique style and personality to integrate into a chatbot, effectively creating a digital avatar. The tool offers digital cloning, chatbot integration, user-friendly interface for managing chat records, fine-tuning with LoRA, and cross-platform compatibility.
