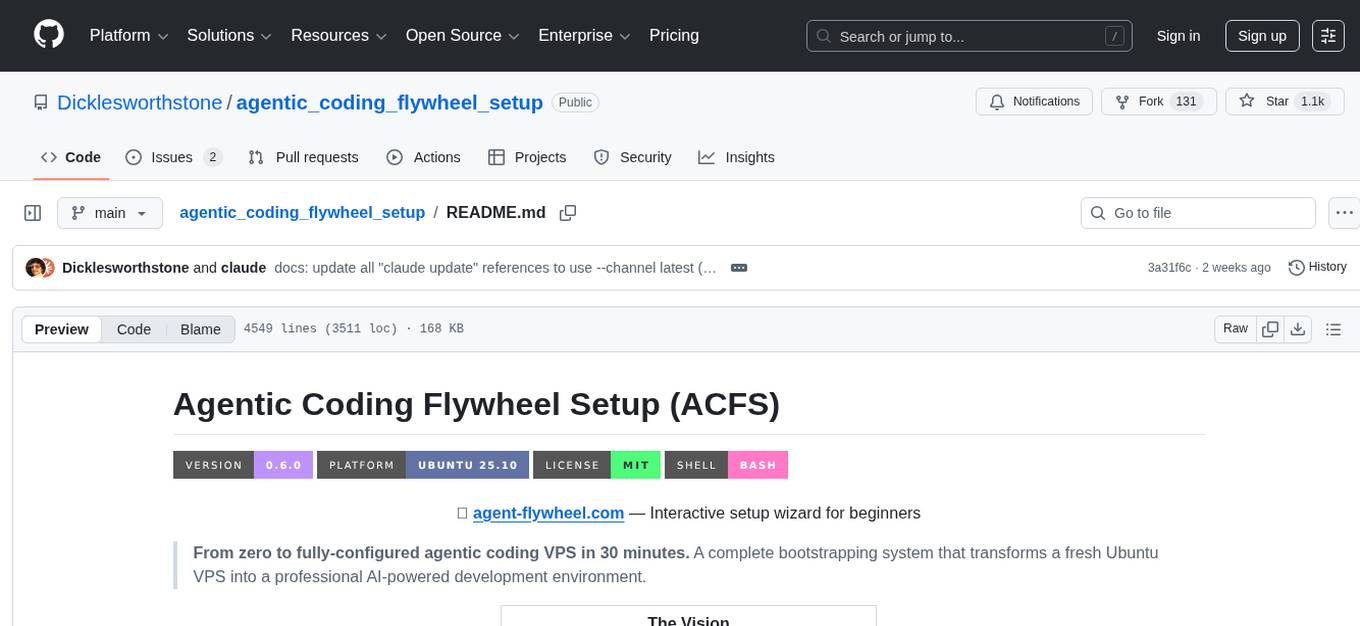

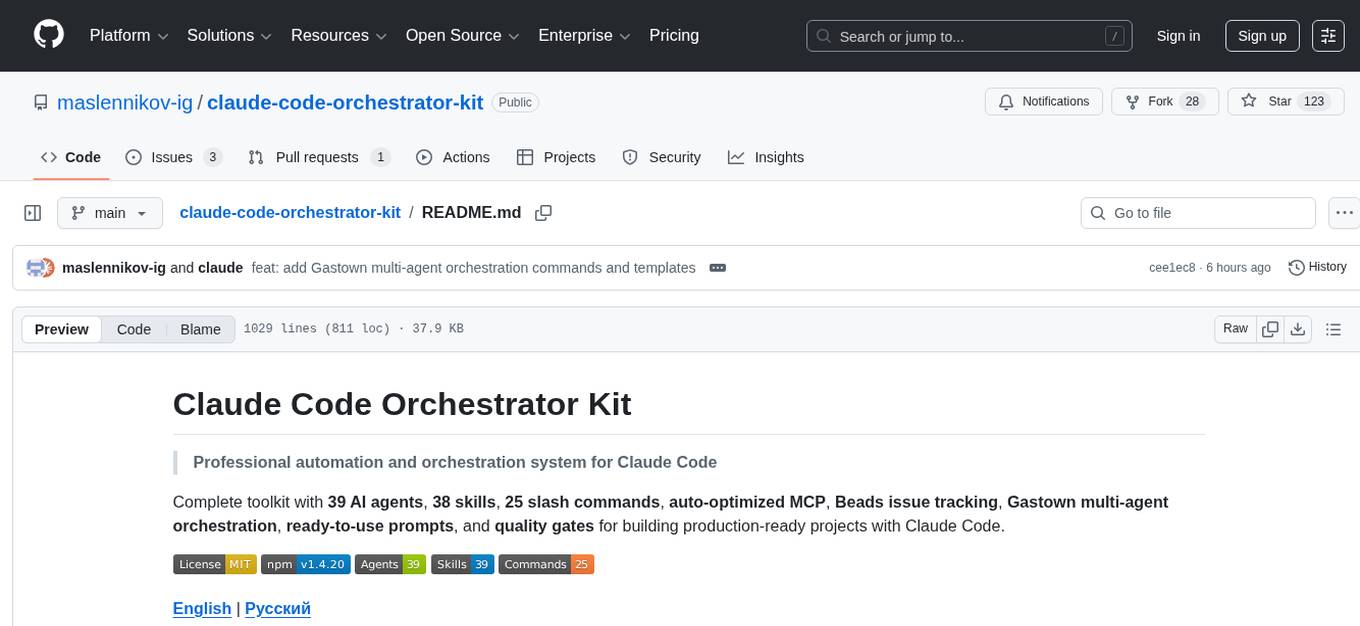

claude-code-orchestrator-kit

🎼 Turn Claude Code into a production powerhouse. 33+ AI agents automate bug fixing, security scanning, and dependency management. 19 slash commands, 6 MCP configs (600-5000 tokens), quality gates, and health monitoring. Ship faster, ship safer, ship smarter.

Stars: 121

The Claude Code Orchestrator Kit is a professional automation and orchestration system for Claude Code, featuring 39 AI agents, 39 skills, 25 slash commands, auto-optimized MCP, Beads issue tracking, Gastown multi-agent orchestration, ready-to-use prompts, and quality gates. It transforms Claude Code into an intelligent orchestration system by delegating complex tasks to specialized sub-agents, preserving context and enabling indefinite work sessions.

README:

Professional automation and orchestration system for Claude Code

Complete toolkit with 39 AI agents, 39 skills, 25 slash commands, auto-optimized MCP, Beads issue tracking, Gastown multi-agent orchestration, ready-to-use prompts, and quality gates for building production-ready projects with Claude Code.

- Overview

- Key Innovations

- Quick Start

- Installation

- Architecture

- Agents Ecosystem

- Skills Library

- Slash Commands

- MCP Configuration

- Claude Code Settings

- Prompts

- Project Structure

- Usage Examples

- Best Practices

- Gastown Setup

- Contributing

- License

Claude Code Orchestrator Kit transforms Claude Code from a simple assistant into an intelligent orchestration system. Instead of doing everything directly, Claude Code acts as an orchestrator that delegates complex tasks to specialized sub-agents, preserving context and enabling indefinite work sessions.

| Category | Count | Description |
|---|---|---|
| AI Agents | 39 | Specialized workers for bugs, security, testing, database, frontend, DevOps |
| Skills | 39 | Reusable utilities for validation, reporting, automation, senior expertise |
| Commands | 25 | Health checks, SpecKit, Beads, Gastown, process-logs, worktree, releases |
| MCP Servers | 6 | Auto-optimized: Context7, Sequential Thinking, Supabase, Playwright, shadcn, Serena |

- Context Preservation: Main session stays lean (~10-15K tokens vs 50K+ in standard usage)

- Specialization: Each agent is expert in its domain

- Indefinite Work: Can work on project indefinitely without context exhaustion

- Quality Assurance: Mandatory verification after every delegation

- Senior Expertise: Skills like `code-reviewer`, `senior-devops`, `senior-prompt-engineer`

The Core Paradigm: Claude Code acts as orchestrator, delegating to specialized sub-agents.

┌─────────────────────────────────────────────────────────────────┐

│ MAIN CLAUDE CODE │

│ (Orchestrator Role) │

├─────────────────────────────────────────────────────────────────┤

│ 1. GATHER CONTEXT │ 2. DELEGATE │ 3. VERIFY │

│ - Read existing code │ - Invoke agent │ - Read results │

│ - Search patterns │ - Provide context │ - Run type-check │

│ - Check recent commits│ - Set criteria │ - Accept/reject │

└─────────────────────────────────────────────────────────────────┘

↓

┌─────────────────────────────────────────────────────────────────┐

│ SPECIALIZED AGENTS │

├──────────────┬──────────────┬──────────────┬───────────────────┤

│ bug-hunter │ security- │ database- │ performance- │

│ bug-fixer │ scanner │ architect │ optimizer │

│ dead-code- │ vuln-fixer │ api-builder │ accessibility- │

│ hunter │ │ supabase- │ tester │

│ │ │ auditor │ │

└──────────────┴──────────────┴──────────────┴───────────────────┘

Evolution from Orchestrators: We replaced heavy orchestrator agents with lightweight inline skills.

| Old Approach | New Approach |
|---|---|
| Separate orchestrator agent per workflow | Inline skill executed directly |
| ~1400 lines per workflow | ~150 lines per skill |
| 9+ orchestrator calls | 0 orchestrator calls |
| ~10,000+ tokens overhead | ~500 tokens |
| Context reload each call | Single session context |
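To put the table's figures in perspective, the short calculation below derives the relative savings from the approximate numbers quoted above. It is only arithmetic on those rounded figures, not a measurement, and the helper name `reduction_pct` is made up for this illustration.

```python
def reduction_pct(before: float, after: float) -> float:
    """Return the percentage saved when going from `before` to `after`."""
    return (before - after) / before * 100


# Approximate figures taken from the comparison table above.
token_saving = reduction_pct(10_000, 500)  # token overhead per workflow
line_saving = reduction_pct(1_400, 150)    # lines per workflow definition

print(f"token overhead reduced by ~{token_saving:.0f}%")
print(f"workflow size reduced by ~{line_saving:.0f}%")
```

On these numbers the inline approach saves roughly 95% of the token overhead and about 89% of the workflow definition size.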

Example: /health-bugs now uses bug-health-inline skill:

Detection → Validate → Fix by Priority → Verify → Repeat if needed
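The fix-by-priority part of this loop can be sketched roughly as follows. This is a minimal illustration, not the skill's actual implementation: the priority labels `high` and `medium`, the bug dictionary shape, and the `fix` and `quality_gate` callables are all assumptions made for the example.

```python
from collections import defaultdict
from typing import Callable

# Assumed priority labels; the README only names "critical" and "low".
PRIORITY_ORDER = ["critical", "high", "medium", "low"]


def fix_by_priority(
    bugs: list[dict],
    fix: Callable[[dict], bool],
    quality_gate: Callable[[], bool],
) -> dict[str, list[str]]:
    """Fix bugs from highest to lowest priority, gating after each level."""
    grouped: dict[str, list[dict]] = defaultdict(list)
    for bug in bugs:
        grouped[bug["priority"]].append(bug)

    report: dict[str, list[str]] = {"fixed": [], "failed": []}
    for priority in PRIORITY_ORDER:
        level = grouped.get(priority, [])
        for bug in level:
            outcome = "fixed" if fix(bug) else "failed"
            report[outcome].append(bug["id"])
        # Stop early if fixes at this level broke the build or tests.
        if level and not quality_gate():
            break
    return report


bugs = [
    {"id": "B-2", "priority": "low"},
    {"id": "B-1", "priority": "critical"},
]
report = fix_by_priority(bugs, fix=lambda b: True, quality_gate=lambda: True)
print(report)  # {'fixed': ['B-1', 'B-2'], 'failed': []}
```

Running the gate after each priority level keeps a regression from a low-priority fix from hiding behind earlier, more important fixes.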

Professional-grade skills for complex tasks:

| Skill | Expertise |
|---|---|
| `code-reviewer` | TypeScript, Python, Go, Swift, Kotlin code review |
| `senior-devops` | CI/CD, Docker, Kubernetes, Terraform, Cloud |
| `senior-prompt-engineer` | LLM optimization, RAG, agent design |
| `ux-researcher-designer` | User research, personas, journey mapping |
| `systematic-debugging` | Root cause analysis, debugging workflows |

No manual switching required! Claude Code automatically optimizes context usage:

- Single `.mcp.json` with all servers, no manual switching needed
- Automatic deferred loading via `ENABLE_TOOL_SEARCH=auto:5`
- 85% context reduction for MCP tools (loaded on-demand via ToolSearch)
- Transparent to user: just works without configuration

Specification-driven development workflow with Phase 0 Planning:

- Executor assignment (MAIN vs specialized agent)

- Parallel agent creation via meta-agent

- Atomicity: 1 Task = 1 Agent Invocation

Beads by Steve Yegge — git-backed issue tracker for AI agents:

- Persistent tasks: Survives session restarts, tracked in git

- Dependency graph: `blocks`, `blocked-by`, `discovered-from`
- Multi-session: Work across multiple Claude sessions without losing context
- 8 workflow formulas: `bigfeature`, `bugfix`, `hotfix`, `healthcheck`, etc.
- Initialize: Run `/beads-init` in your project
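To illustrate what a blocks/blocked-by dependency graph buys you, here is a minimal sketch of picking the tasks that are ready to work on. It is not how Beads is implemented; the task dictionary shape and the `status` and `blocked_by` field names are hypothetical.

```python
def ready_tasks(tasks: dict[str, dict]) -> list[str]:
    """Return IDs of open tasks whose blockers are all closed."""
    ready = []
    for task_id, task in tasks.items():
        if task["status"] != "open":
            continue
        blockers = task.get("blocked_by", [])
        if all(tasks[b]["status"] == "closed" for b in blockers):
            ready.append(task_id)
    return sorted(ready)


tasks = {
    "T-1": {"status": "closed"},
    "T-2": {"status": "open", "blocked_by": ["T-1"]},
    "T-3": {"status": "open", "blocked_by": ["T-2"]},
}
print(ready_tasks(tasks))  # ['T-2']
```

Because the graph is stored alongside the code, a fresh session can recompute the ready set without relying on conversation history.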

Gastown by Steve Yegge — multi-agent workspace manager that dispatches tasks to AI worker processes (polecats):

You (human)

│

├─ /work "Fix login bug" ← Give task via Claude Code

│ │

│ ├─ bd create (bead) ← Creates task in Beads

│ └─ gt sling PREFIX-xxx RIG ← Dispatches to Gastown

│ │

│ └─ Daemon (automatic)

│ ├─ Spawns Polecat (AI worker in isolated git worktree)

│ ├─ Polecat: branch → implement → test → commit

│ ├─ Refinery: merge queue → develop

│ └─ Witness: health monitoring

│

├─ /status ← Check progress

└─ git push ← Ship changes

Key Features:

- Parallel AI workers: Multiple polecats work simultaneously in isolated git worktrees

- Multi-runtime: `claude` (default), `codex`, `gemini`; all subscription-based, no API billing
- A/B testing: `/work --ab "task"` sends the same task to 2 runtimes so you can compare results
- Self-healing: Daemon manages Dolt DB, restarts crashed agents, monitors health
- Auto-provisioning: `/onboard` connects any project with a single command

Included commands:

| Command | Purpose |
|---|---|
| `/onboard` | Connect project to Gastown (one-time setup) |
| `/work "task"` | Dispatch task to AI polecat |
| `/status` | Show convoys, agents, pending tasks |
| `/upgrade` | Safely upgrade gt/bd binaries |

Agent instruction templates for all runtimes are provided in .claude/templates/:

- `CLAUDE.md`: Claude Code instructions with Gastown workflow
- `AGENTS.md`: Codex-compatible instructions
- `GEMINI.md`: Gemini-compatible instructions

Initialize: Run /onboard in your project directory. See Gastown Setup Guide below.

npm install -g claude-code-orchestrator-kit

cd your-project

claude-orchestrator # Interactive setup

git clone https://github.com/maslennikov-ig/claude-code-orchestrator-kit.git

cd claude-code-orchestrator-kit

# Configure environment (optional, for Supabase)

cp .env.example .env.local

# Edit .env.local with your credentials

# Restart Claude Code - ready!

# Copy orchestration system to your project

cp -r claude-code-orchestrator-kit/.claude /path/to/your/project/

cp claude-code-orchestrator-kit/.mcp.json /path/to/your/project/

cp claude-code-orchestrator-kit/CLAUDE.md /path/to/your/project/

- Claude Code CLI installed

- Node.js 18+ (for MCP servers)

- Git (for version control features)

Create .env.local (git-ignored) with your credentials:

# Supabase (optional)

SUPABASE_PROJECT_REF=your-project-ref

SUPABASE_ACCESS_TOKEN=your-token

# Sequential Thinking (optional)

SEQUENTIAL_THINKING_KEY=your-smithery-key

SEQUENTIAL_THINKING_PROFILE=your-profile

# Check that .mcp.json and .claude/settings.json exist

ls -la .mcp.json .claude/settings.json

# Try a health command in Claude Code

/health-bugs

┌────────────────────────────────────────────────────────────────┐

│ CLAUDE.md │

│ (Behavioral Operating System) │

│ │

│ Defines: Orchestration rules, delegation patterns, verification│

└────────────────────────────────────────────────────────────────┘

↓

┌────────────────────────────────────────────────────────────────┐

│ AGENTS │

│ (39 specialized workers) │

├────────────────────────────────────────────────────────────────┤

│ health/ development/ testing/ database/ │

│ ├─bug-hunter ├─llm-service ├─integration ├─database-arch │

│ ├─bug-fixer ├─typescript ├─performance ├─api-builder │

│ ├─security- ├─code-review ├─mobile ├─supabase-audit │

│ ├─dead-code ├─utility- ├─access- │ │

│ └─reuse- └─skill-build └─ibility │ │

│ │

│ infrastructure/ frontend/ meta/ research/ │

│ ├─deployment ├─nextjs-ui ├─meta-agent ├─problem-invest │

│ ├─qdrant ├─fullstack └─skill-v2 └─research-spec │

│ └─orchestration └─visual-fx │

└────────────────────────────────────────────────────────────────┘

↓

┌────────────────────────────────────────────────────────────────┐

│ SKILLS │

│ (39 reusable utilities) │

├────────────────────────────────────────────────────────────────┤

│ Inline Orchestration: Senior Expertise: │

│ ├─bug-health-inline ├─code-reviewer │

│ ├─security-health-inline ├─senior-devops │

│ ├─deps-health-inline ├─senior-prompt-engineer │

│ ├─cleanup-health-inline ├─ux-researcher-designer │

│ └─reuse-health-inline └─systematic-debugging │

│ │

│ Utilities: Creative: │

│ ├─validate-plan-file ├─algorithmic-art │

│ ├─run-quality-gate ├─canvas-design │

│ ├─rollback-changes ├─theme-factory │

│ ├─parse-git-status └─artifacts-builder │

│ └─generate-report-header │

└────────────────────────────────────────────────────────────────┘

↓

┌────────────────────────────────────────────────────────────────┐

│ COMMANDS │

│ (25 slash commands) │

├────────────────────────────────────────────────────────────────┤

│ /health-bugs /speckit.specify /worktree │

│ /health-security /speckit.plan /push │

│ /health-deps /speckit.implement /translate-doc │

│ /health-cleanup /speckit.clarify /process-logs │

│ /health-reuse /speckit.constitution │

│ /health-metrics /speckit.taskstoissues │

└────────────────────────────────────────────────────────────────┘

| Agent | Purpose |
|---|---|
| `bug-hunter` | Detect bugs, categorize by priority |
| `bug-fixer` | Fix bugs from reports |
| `security-scanner` | Find security vulnerabilities |
| `vulnerability-fixer` | Fix security issues |
| `dead-code-hunter` | Detect unused code |
| `dead-code-remover` | Remove dead code safely |
| `dependency-auditor` | Audit package dependencies |
| `dependency-updater` | Update dependencies safely |
| `reuse-hunter` | Find code duplication |
| `reuse-fixer` | Consolidate duplicated code |

| Agent | Purpose |
|---|---|
| `llm-service-specialist` | LLM integration, prompts |
| `typescript-types-specialist` | Type definitions, generics |
| `cost-calculator-specialist` | Token/API cost estimation |
| `utility-builder` | Build utility services |
| `skill-builder-v2` | Create new skills |
| `code-reviewer` | Comprehensive code review |

| Agent | Purpose |
|---|---|
| `integration-tester` | Database, API, async tests |
| `test-writer` | Write unit/contract tests |
| `performance-optimizer` | Core Web Vitals, PageSpeed |
| `mobile-responsiveness-tester` | Mobile viewport testing |
| `mobile-fixes-implementer` | Fix mobile issues |
| `accessibility-tester` | WCAG compliance |

| Agent | Purpose |
|---|---|
| `database-architect` | PostgreSQL schema design |
| `api-builder` | tRPC routers, auth middleware |
| `supabase-auditor` | RLS policies, security |

| Agent | Purpose |
|---|---|
| `infrastructure-specialist` | Supabase, Qdrant, Redis |
| `qdrant-specialist` | Vector database operations |
| `quality-validator-specialist` | Quality gate validation |
| `orchestration-logic-specialist` | Workflow state machines |
| `deployment-engineer` | CI/CD, Docker, DevOps |

| Agent | Purpose |
|---|---|
| `nextjs-ui-designer` | Modern UI/UX design |
| `fullstack-nextjs-specialist` | Full-stack Next.js |
| `visual-effects-creator` | Animations, visual effects |

| Agent | Purpose |
|---|---|
| `meta-agent-v3` | Create new agents |
| `technical-writer` | Documentation |
| `problem-investigator` | Deep problem analysis |
| `research-specialist` | Technical research |
| `article-writer-multi-platform` | Multi-platform content |
| `lead-research-assistant` | Lead qualification |

Execute health workflows directly without spawning orchestrator agents. All skills include Beads integration for issue tracking.

| Skill | Invocation | Purpose | Version |
|---|---|---|---|
| `health-bugs` | `/health-bugs` | Bug detection & fixing with history enrichment | 3.1.0 |
| `security-health-inline` | `/health-security` | Security vulnerability scanning & fixing | 3.0.0 |
| `deps-health-inline` | `/health-deps` | Dependency audit & update | 3.0.0 |
| `cleanup-health-inline` | `/health-cleanup` | Dead code detection & removal | 3.0.0 |
| `reuse-health-inline` | `/health-reuse` | Code duplication consolidation | 3.0.0 |

Professional-grade domain expertise:

| Skill | Expertise |
|---|---|
| `code-reviewer` | TypeScript, Python, Go, Swift, Kotlin review |
| `senior-devops` | CI/CD, containers, cloud, infrastructure |
| `senior-prompt-engineer` | LLM optimization, RAG, agents |
| `ux-researcher-designer` | User research, personas |
| `ui-design-system` | Design tokens, components |
| `systematic-debugging` | Root cause analysis |

| Skill | Purpose |
|---|---|
| `validate-plan-file` | JSON schema validation |
| `validate-report-file` | Report completeness |
| `run-quality-gate` | Type-check/build/tests |
| `calculate-priority-score` | Bug/task prioritization |
| `setup-knip` | Configure dead code detection |
| `rollback-changes` | Restore from changes log |

| Skill | Purpose |
|---|---|
| `generate-report-header` | Standardized report headers |
| `generate-changelog` | Changelog from commits |
| `format-markdown-table` | Well-formatted tables |
| `format-commit-message` | Conventional commits |
| `format-todo-list` | TodoWrite-compatible lists |
| `render-template` | Variable substitution |

| Skill | Purpose |
|---|---|
| `parse-git-status` | Parse git status output |
| `parse-package-json` | Extract version, deps |
| `parse-error-logs` | Parse build/test errors |
| `extract-version` | Semantic version parsing |

| Skill | Purpose |
|---|---|
| `algorithmic-art` | Generative art with p5.js |
| `canvas-design` | Visual art in PNG/PDF |
| `theme-factory` | Theme styling for artifacts |
| `artifacts-builder` | Multi-component HTML artifacts |
| `webapp-testing` | Playwright testing |
| `frontend-aesthetics` | Distinctive UI design |

| Skill | Purpose | Version |
|---|---|---|
| `process-logs` | Automated error log processing with Beads integration | 1.8.0 |
| `process-issues` | GitHub Issues processing with similar issue search | 1.1.0 |

| Skill | Purpose |
|---|---|
| `git-commit-helper` | Commit message from diff |
| `changelog-generator` | User-facing changelogs |
| `content-research-writer` | Research-driven content |
| `lead-research-assistant` | Lead identification |

| Command | Purpose |
|---|---|
| `/health-bugs` | Bug detection and fixing workflow |
| `/health-security` | Security vulnerability scanning |
| `/health-deps` | Dependency audit and updates |
| `/health-cleanup` | Dead code detection and removal |
| `/health-reuse` | Code duplication elimination |
| `/health-metrics` | Monthly ecosystem health report |

Example:

/health-bugs

# Scans → Categorizes → Fixes by priority → Validates → Reports

| Command | Purpose |
|---|---|
| `/speckit.analyze` | Analyze requirements |
| `/speckit.specify` | Generate specifications |
| `/speckit.clarify` | Ask clarifying questions |
| `/speckit.plan` | Create implementation plan |
| `/speckit.implement` | Execute implementation |
| `/speckit.checklist` | Generate QA checklist |
| `/speckit.tasks` | Break into tasks |
| `/speckit.constitution` | Define project constitution |
| `/speckit.taskstoissues` | Convert tasks to GitHub issues |

| Command | Purpose |
|---|---|
| `/beads-init` | Initialize Beads in project |
| `/speckit.tobeads` | Import tasks.md to Beads |

| Command | Purpose |
|---|---|
| `/onboard [rig-name]` | Connect project to Gastown (one-time setup) |
| `/work [--agent\|--ab\|--all] "task"` | Dispatch task to AI polecat |
| `/status` | Show convoys, agents, pending tasks |
| `/upgrade [gt\|bd\|all]` | Safely upgrade Gastown/Beads binaries |

| Command | Purpose |
|---|---|
| `/process-logs` | Automated error log processing and fixing |
| `/push [patch\|minor\|major]` | Automated release with changelog |
| `/worktree` | Git worktree management |
| `/translate-doc` | Translate documentation (EN↔RU) |
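The `patch`, `minor` and `major` arguments to `/push` read like semantic-versioning bump types. Assuming that is the intent, the bump itself can be sketched as below; the `bump` function is a hypothetical helper for illustration, and how `/push` actually computes the next version is not specified here.

```python
def bump(version: str, part: str) -> str:
    """Bump a MAJOR.MINOR.PATCH version string by the given part."""
    major, minor, patch = (int(x) for x in version.split("."))
    if part == "major":
        return f"{major + 1}.0.0"
    if part == "minor":
        return f"{major}.{minor + 1}.0"
    if part == "patch":
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown bump type: {part!r}")


print(bump("1.4.20", "patch"))  # 1.4.21
print(bump("1.4.20", "minor"))  # 1.5.0
print(bump("1.4.20", "major"))  # 2.0.0
```

Note that lower-order parts reset to zero when a higher-order part is bumped.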

No more manual switching! The kit uses a single .mcp.json with automatic optimization.

- Single config file (`.mcp.json`) contains all MCP servers
- Auto-deferred loading via `.claude/settings.json`: `{ "env": { "ENABLE_TOOL_SEARCH": "auto:5" } }`
- On-demand loading: Claude loads tools only when needed via ToolSearch
- 85% context savings compared to loading all tools upfront

| Server | Purpose | Tools |
|---|---|---|
| Context7 | Up-to-date library documentation | `resolve-library-id`, `query-docs` |
| Sequential Thinking | Structured reasoning for complex tasks | `sequentialthinking` |
| Supabase | Database operations, migrations, RLS | Tables, SQL, migrations, edge functions |
| Playwright | Browser automation & testing | Screenshots, navigation, form filling |
| shadcn/ui | UI component library integration | Registry search, component examples |
| Serena | Semantic code analysis (LSP) | Symbol search, references, refactoring |

Set in .env.local for Supabase integration:

SUPABASE_PROJECT_REF=your-project-ref

SUPABASE_ACCESS_TOKEN=your-token

Project-level Claude Code configuration for enhanced workflow:

{

"plansDirectory": "./docs/plans",

"env": {

"ENABLE_TOOL_SEARCH": "auto:5"

}

}

| Setting | Value | Purpose |
|---|---|---|
| `plansDirectory` | `./docs/plans` | Where Claude saves implementation plans when using Plan Mode |
| `ENABLE_TOOL_SEARCH` | `auto:5` | Auto-enables deferred MCP tool loading once MCP tool definitions would take up more than 5% of the context window |

- Plan Mode Integration: Plans are saved to `docs/plans/` for version control and review
- Automatic Context Optimization: MCP tools load on-demand instead of upfront
- No Manual Configuration: Works transparently, just install and use

Ready-to-use prompts for setting up various features in your project. Copy, paste, and let Claude Code do the work.

| Prompt | Description |
|---|---|
| `setup-health-workflows.md` | Health workflows with Beads integration (`/health-bugs`, `/health-security`, etc.) |
| `setup-error-logging.md` | Complete error logging system with DB table, logger service, auto-mute rules |

# 1. Install Beads CLI

go install github.com/steveyegge/beads/cmd/bd@latest

# 2. Initialize in your project

bd init

# 3. Run in Claude Code

/health-bugs

How to Use:

- Copy the prompt content to your chat with Claude Code

- Answer any questions Claude asks about your project specifics

- Review the generated code before committing

See prompts/README.md for full documentation.

claude-code-orchestrator-kit/

├── .claude/

│ ├── agents/ # 39 AI agents

│ │ ├── health/ # Bug, security, deps, cleanup

│ │ ├── development/ # LLM, TypeScript, utilities

│ │ ├── testing/ # Integration, performance, mobile

│ │ ├── database/ # Supabase, API, architecture

│ │ ├── infrastructure/ # Qdrant, deployment, orchestration

│ │ ├── frontend/ # Next.js, visual effects

│ │ ├── meta/ # Agent/skill creators

│ │ ├── research/ # Problem investigation

│ │ ├── documentation/ # Technical writing

│ │ ├── content/ # Article writing

│ │ └── business/ # Lead research

│ │

│ ├── skills/ # 39 reusable skills

│ │ ├── bug-health-inline/ # Inline orchestration

│ │ ├── code-reviewer/ # Senior expertise

│ │ ├── validate-plan-file/ # Validation utilities

│ │ └── ...

│ │

│ ├── commands/ # 25 slash commands

│ │ ├── health-*.md # Health monitoring

│ │ ├── speckit.*.md # SpecKit workflow

│ │ ├── work.md # Gastown: dispatch tasks

│ │ ├── status.md # Gastown: show status

│ │ ├── upgrade.md # Gastown: safe upgrade

│ │ ├── onboard.md # Gastown: connect project

│ │ └── ...

│ │

│ ├── templates/ # Instruction file templates

│ │ ├── CLAUDE.md # Claude Code template

│ │ ├── AGENTS.md # Codex template

│ │ └── GEMINI.md # Gemini template

│ │

│ ├── schemas/ # JSON schemas

│ └── scripts/ # Quality gate scripts

│

├── mcp/ # Legacy MCP configs (reference only)

│ └── ...

│

├── prompts/ # Ready-to-use setup prompts

│ ├── README.md

│ └── setup-error-logging.md

│

├── docs/ # Documentation

│ ├── FAQ.md

│ ├── ARCHITECTURE.md

│ ├── TUTORIAL-CUSTOM-AGENTS.md

│ └── ...

│

├── .mcp.json # Unified MCP configuration

├── CLAUDE.md # Behavioral Operating System

└── package.json # npm package config

# Run complete bug detection and fixing

/health-bugs

# What happens:

# 1. Pre-flight validation

# 2. Bug detection (bug-hunter agent)

# 3. Quality gate validation

# 4. Priority-based fixing (critical → low)

# 5. Quality gates after each priority

# 6. Verification scan

# 7. Final report

# Invoke code-reviewer skill

/code-reviewer

# Provides:

# - Automated code analysis

# - Best practices checking

# - Security scanning

# - Review checklist

# Auto-detect version bump

/push

# Or specify type

/push minor

# Actions:

# 1. Analyze commits since last release

# 2. Bump version in package.json

# 3. Generate changelog entry

# 4. Create git commit + tag

# 5. Push to remote

# Create worktrees

/worktree create feature/new-auth

/worktree create feature/new-ui

# Work in parallel

cd .worktrees/feature-new-auth

# ... changes ...

# Cleanup when done

/worktree cleanup

The kit automatically optimizes context usage, with no configuration needed:

- MCP tools load on-demand via ToolSearch

- ~85% context reduction compared to loading all tools upfront

/health-bugs # Monday

/health-security # Tuesday

/health-deps # Wednesday

/health-cleanup # Thursday

/health-metrics # Monthly

Before writing code >20 lines, search for existing libraries:

- Check npm/PyPI for packages with >1k weekly downloads

- Evaluate maintenance status and types support

- Use library if it covers >70% of functionality
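The thresholds in the list above can be read as a simple decision rule. The sketch below is one possible encoding, not part of the kit: the `Candidate` fields are hypothetical, and treating maintenance and type support as hard requirements is an assumption, since the list only says to evaluate them.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    weekly_downloads: int
    maintained: bool
    has_types: bool
    coverage: float  # fraction of the needed functionality, 0.0 to 1.0


def should_use_library(planned_lines: int, lib: Candidate) -> bool:
    """Apply the library-first thresholds from the list above."""
    if planned_lines <= 20:
        return False  # small enough to write directly
    return (
        lib.weekly_downloads > 1_000
        and lib.maintained
        and lib.has_types
        and lib.coverage > 0.70
    )


lib = Candidate("example-date-lib", 250_000, True, True, 0.9)
print(should_use_library(planned_lines=60, lib=lib))  # True
print(should_use_library(planned_lines=10, lib=lib))  # False
```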

- GATHER CONTEXT FIRST - Read code, search patterns

- DELEGATE TO SUBAGENTS - Provide complete context

- VERIFY RESULTS - Never skip verification

- ACCEPT/REJECT LOOP - Re-delegate if needed
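The accept/reject loop described by these rules might look like the following sketch. The `run_agent` and `verify` callables, the retry limit of 3, and the feedback wording are all assumptions for illustration; the kit expresses these rules as instructions in `CLAUDE.md`, not as Python.

```python
from typing import Callable, Optional


def delegate_with_verification(
    task: str,
    run_agent: Callable[[str], str],
    verify: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Delegate a task, verify the result, and re-delegate on rejection."""
    prompt = task
    for attempt in range(1, max_attempts + 1):
        result = run_agent(prompt)
        if verify(result):
            return result  # accepted
        # Rejected: re-delegate with feedback about the failed attempt.
        prompt = f"{task}\n(attempt {attempt} was rejected; fix and retry)"
    return None  # give up and escalate to the human


attempts: list[str] = []


def flaky_agent(prompt: str) -> str:
    """Stand-in agent that only succeeds on its second call."""
    attempts.append(prompt)
    return "ok" if len(attempts) >= 2 else "broken"


result = delegate_with_verification("Fix login bug", flaky_agent, lambda r: r == "ok")
print(result, len(attempts))  # ok 2
```

Bounding the number of attempts keeps a failing delegation from consuming the main session's context indefinitely.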

# Never commit .env.local

echo ".env.local" >> .gitignore

- Go 1.21+ installed (`go version`)
- Gastown and Beads CLI installed: `go install github.com/steveyegge/gastown/cmd/gt@latest` and `go install github.com/steveyegge/beads/cmd/bd@latest`
- Gastown town initialized: `gt init ~/gt`
- Daemon running as systemd user service: `gt daemon enable-supervisor`, then `systemctl --user start gastown-daemon`, then `loginctl enable-linger $USER` (auto-start on boot)

The default gt daemon enable-supervisor template does NOT include PATH. You must add Environment lines manually:

# Edit the service file

nano ~/.local/share/systemd/user/gastown-daemon.service

Add under [Service]:

Environment="GT_TOWN_ROOT=/home/YOUR_USER/gt"

Environment="GT_ROOT=/home/YOUR_USER/gt"

Environment="PATH=/home/YOUR_USER/go/bin:/home/YOUR_USER/.local/bin:/usr/local/bin:/usr/bin:/bin"

Environment="HOME=/home/YOUR_USER"

Then reload and restart:

systemctl --user daemon-reload

systemctl --user restart gastown-daemon

Configure Dolt management in ~/gt/mayor/daemon.json:

{

"heartbeat": { "enabled": true, "interval": "3m" },

"patrols": {

"dolt_server": {

"enabled": true,

"port": 3307,

"host": "127.0.0.1",

"user": "root",

"data_dir": "/home/YOUR_USER/gt/.dolt-data",

"log_file": "/home/YOUR_USER/gt/daemon/dolt-server.log",

"auto_restart": true

},

"deacon": { "enabled": true, "interval": "5m", "agent": "deacon" },

"refinery": { "agent": "refinery", "enabled": true, "interval": "5m", "rigs": [] },

"witness": { "agent": "witness", "enabled": true, "interval": "5m", "rigs": [] }

},

"type": "daemon-patrol-config",

"version": 1

}

Important: time.Duration fields like health_check_interval must be integers (nanoseconds) in Go, NOT strings like "30s". Omit them to use defaults (30 seconds).
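If you do need to set such a field, the value has to be written as a plain integer count of nanoseconds. The helper below is a small convenience sketch for working out that number; `to_nanoseconds` is a hypothetical name and it handles only single-unit values such as `30s` or `5m`.

```python
import re

# Nanoseconds per unit, matching the base unit of Go's time.Duration.
_UNIT_NS = {
    "ms": 1_000_000,
    "s": 1_000_000_000,
    "m": 60 * 1_000_000_000,
    "h": 3_600 * 1_000_000_000,
}


def to_nanoseconds(text: str) -> int:
    """Convert a simple duration such as '30s' or '5m' to integer nanoseconds."""
    match = re.fullmatch(r"(\d+)(ms|s|m|h)", text)
    if match is None:
        raise ValueError(f"unsupported duration: {text!r}")
    value, unit = match.groups()
    return int(value) * _UNIT_NS[unit]


print(to_nanoseconds("30s"))  # 30000000000
print(to_nanoseconds("5m"))   # 300000000000
```

So a 30-second interval would be written as `30000000000` in the JSON file rather than `"30s"`.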

Run /onboard from your project directory in Claude Code:

/onboard

This will:

- Run `gt rig add <name> <path>`: auto-provisions 30+ components
- Update `daemon.json`: adds project to witness/refinery patrols
- Restart daemon: picks up new configuration
- Run `gt doctor --fix`: diagnoses and auto-fixes issues
- Copy slash commands: `/work`, `/status`, `/upgrade`, `/onboard`
- Copy instruction templates: `CLAUDE.md`, `AGENTS.md`, `GEMINI.md`

# Give task to AI agent

/work "Fix the login validation bug"

# Check progress

/status

# Use specific runtime

/work --agent codex "Refactor auth module"

# A/B test: same task to 2 agents

/work --ab "Optimize database queries"

# Find available tasks

bd ready

# Visual dashboard

gt dashboard --open

| Problem | Diagnosis | Solution |
|---|---|---|
| Daemon not starting | `systemctl --user status gastown-daemon` | Check PATH in service file |
| Dolt unreachable | `gt dolt status` | Restart daemon, check `daemon.json` |
| Doctor failures | `gt doctor --fix --rig <name>` | Auto-fixes most issues |
| Polecat stuck | `gt convoy list` | `gt convoy cancel <id>` |

/upgrade # Upgrade both gt and bd

/upgrade gt # Upgrade Gastown only

/upgrade bd # Upgrade Beads only

The /upgrade command handles the full cycle: stop daemon, upgrade binaries, verify service file, check daemon.json, restart, run doctor.

| Document | Description |
|---|---|
| FAQ | Frequently asked questions |
| Architecture | System design diagrams |
| Tutorial: Custom Agents | Create your own agents |
| Use Cases | Real-world examples |
| Performance | Token optimization |
| Migration Guide | Add to existing projects |
| Commands Guide | Detailed command reference |

- Create file in `.claude/agents/{category}/workers/`
- Follow agent template structure
- Add to this README

- Create directory `.claude/skills/{skill-name}/`
- Add `SKILL.md` following format
- Add to this README

- Add server to `.mcp.json`
- Document in README under MCP Configuration section

Commands /speckit.* adapted from GitHub's SpecKit.

- License: MIT License

- Copyright: GitHub, Inc.

Beads issue tracking integration adapted from Steve Yegge's Beads.

- Description: Distributed, git-backed graph issue tracker for AI agents

- License: MIT License

- Copyright: Steve Yegge

- Commands: `/beads-init`, `/speckit.tobeads`
- Templates: `.beads-templates/` directory with 8 workflow formulas

Multi-agent orchestration integration from Steve Yegge's Gastown.

- Description: Multi-agent workspace manager with isolated git worktrees

- License: MIT License

- Copyright: Steve Yegge

- Commands: `/onboard`, `/work`, `/status`, `/upgrade`
- Templates: `.claude/templates/` directory with instruction files for all runtimes

Built with:

- Claude Code by Anthropic

- Context7 by Upstash

- Supabase MCP

- Smithery Sequential Thinking

- Playwright

- shadcn/ui

- 39 AI Agents

- 39 Reusable Skills

- 25 Slash Commands

- 6 MCP Servers (auto-optimized)

- 3 Runtime templates (Claude, Codex, Gemini)

- v1.4.20 Current Version

Igor Maslennikov

- GitHub: @maslennikov-ig

- Website: aidevteam.ru

MIT License - see LICENSE file.

Star this repo if you find it useful!


Similar Open Source Tools

claude-code-orchestrator-kit

The Claude Code Orchestrator Kit is a professional automation and orchestration system for Claude Code, featuring 39 AI agents, 38 skills, 25 slash commands, auto-optimized MCP, Beads issue tracking, Gastown multi-agent orchestration, ready-to-use prompts, and quality gates. It transforms Claude Code into an intelligent orchestration system by delegating complex tasks to specialized sub-agents, preserving context and enabling indefinite work sessions.

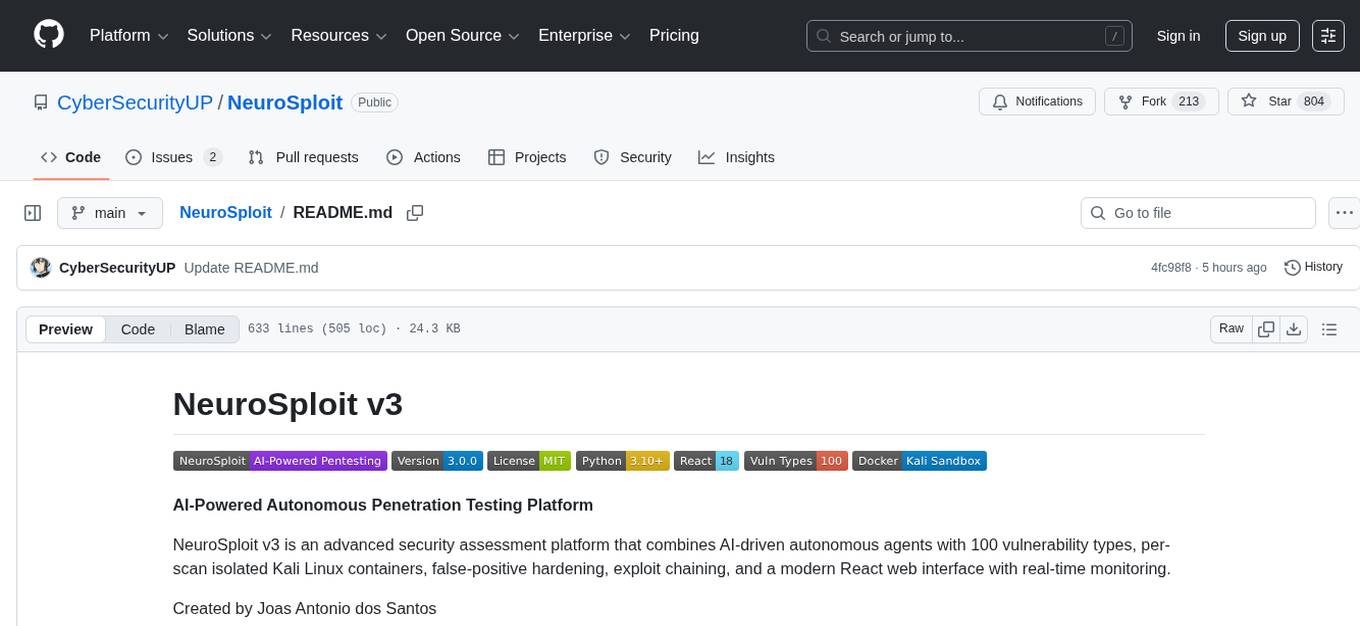

NeuroSploit

NeuroSploit v3 is an advanced security assessment platform that combines AI-driven autonomous agents with 100 vulnerability types, per-scan isolated Kali Linux containers, false-positive hardening, exploit chaining, and a modern React web interface with real-time monitoring. It offers features like 100 Vulnerability Types, Autonomous Agent with 3-stream parallel pentest, Per-Scan Kali Containers, Anti-Hallucination Pipeline, Exploit Chain Engine, WAF Detection & Bypass, Smart Strategy Adaptation, Multi-Provider LLM, Real-Time Dashboard, and Sandbox Dashboard. The tool is designed for authorized security testing purposes only, ensuring compliance with laws and regulations.

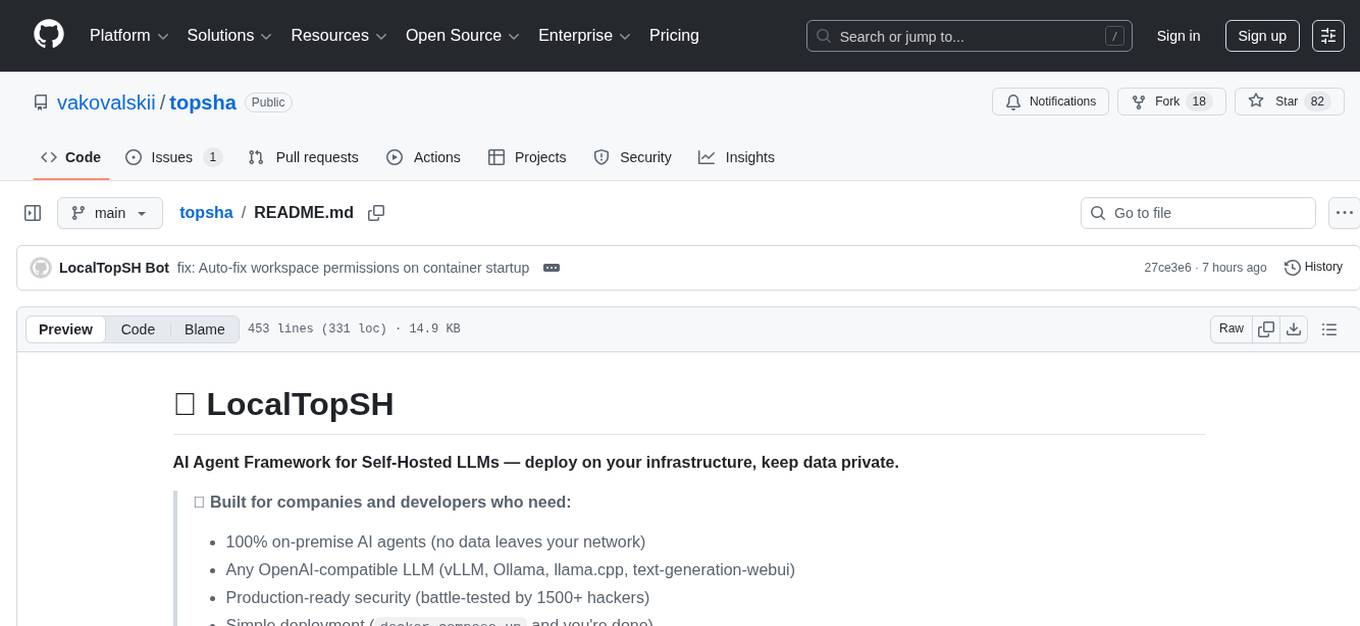

topsha

LocalTopSH is an AI Agent Framework designed for companies and developers who require 100% on-premise AI agents with data privacy. It supports various OpenAI-compatible LLM backends and offers production-ready security features. The framework allows simple deployment using Docker compose and ensures that data stays within the user's network, providing full control and compliance. With cost-effective scaling options and compatibility in regions with restrictions, LocalTopSH is a versatile solution for deploying AI agents on self-hosted infrastructure.

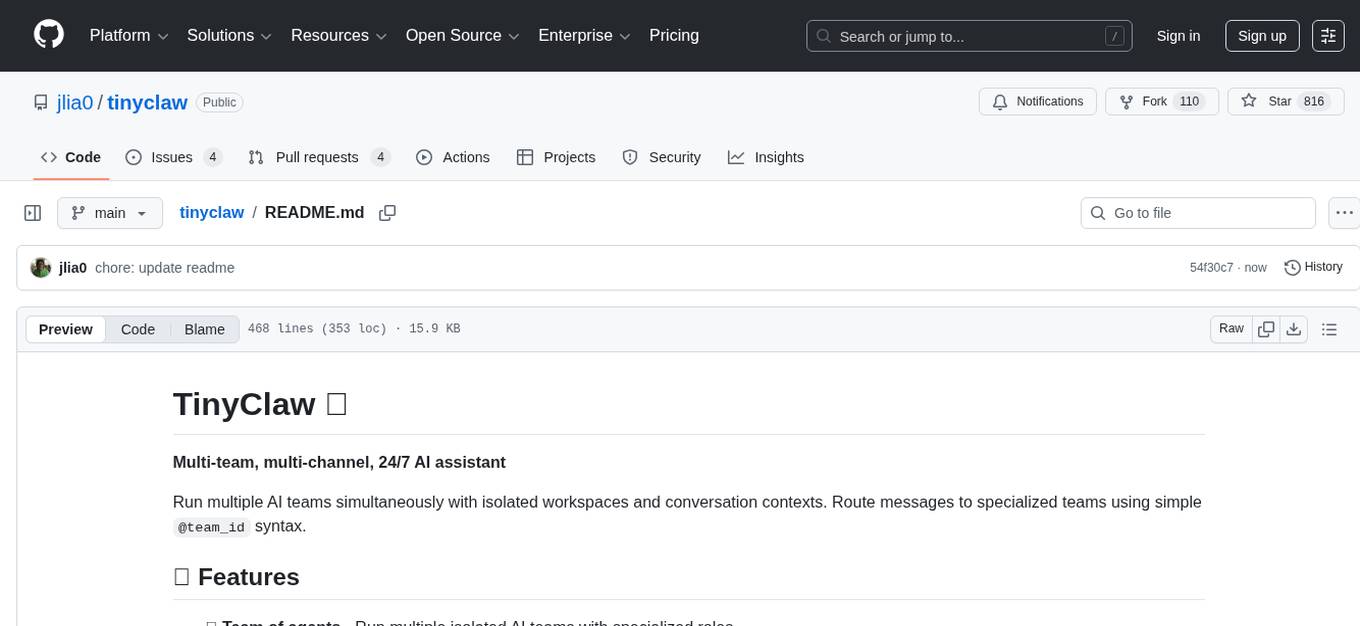

tinyclaw

TinyClaw is a lightweight wrapper around Claude Code that connects WhatsApp via QR code, processes messages sequentially, maintains conversation context, runs 24/7 in tmux, and is ready for multi-channel support. Its key innovation is the file-based queue system that prevents race conditions and enables multi-channel support. TinyClaw consists of components like whatsapp-client.js for WhatsApp I/O, queue-processor.js for message processing, heartbeat-cron.sh for health checks, and tinyclaw.sh as the main orchestrator with a CLI interface. It ensures no race conditions, is multi-channel ready, provides clean responses using claude -c -p, and supports persistent sessions. Security measures include local storage of WhatsApp session and queue files, channel-specific authentication, and running Claude with user permissions.

room

Quoroom is an open research project focused on autonomous agent collectives. It allows users to run a swarm of AI agents that pursue goals autonomously, with a Queen strategizing, Workers executing tasks, and Quorum voting on decisions. The tool enables agents to learn new skills, modify their behavior, manage a crypto wallet, and rent cloud stations for additional compute power. The architecture is inspired by swarm intelligence research, emphasizing decentralized decision-making and emergent behavior from local interactions.

starknet-agentic

Open-source stack for giving AI agents wallets, identity, reputation, and execution rails on Starknet. `starknet-agentic` is a monorepo with Cairo smart contracts for agent wallets, identity, reputation, and validation, TypeScript packages for MCP tools, A2A integration, and payment signing, reusable skills for common Starknet agent capabilities, and examples and docs for integration. It provides contract primitives + runtime tooling in one place for integrating agents. The repo includes various layers such as Agent Frameworks / Apps, Integration + Runtime Layer, Packages / Tooling Layer, Cairo Contract Layer, and Starknet L2. It aims for portability of agent integrations without giving up Starknet strengths, with a cross-chain interop strategy and skills marketplace. The repository layout consists of directories for contracts, packages, skills, examples, docs, and website.

myclaw

myclaw is a personal AI assistant built on agentsdk-go that offers a CLI agent for single message or interactive REPL mode, full orchestration with channels, cron, and heartbeat, support for various messaging channels like Telegram, Feishu, WeCom, WhatsApp, and a web UI, multi-provider support for Anthropic and OpenAI models, image recognition and document processing, scheduled tasks with JSON persistence, long-term and daily memory storage, custom skill loading, and more. It provides a comprehensive solution for interacting with AI models and managing tasks efficiently.

Shannon

Shannon is a battle-tested infrastructure for AI agents that solves problems at scale, such as runaway costs, non-deterministic failures, and security concerns. It offers features like intelligent caching, deterministic replay of workflows, time-travel debugging, WASI sandboxing, and hot-swapping between LLM providers. Shannon allows users to ship faster with zero configuration multi-agent setup, multiple AI patterns, time-travel debugging, and hot configuration changes. It is production-ready with features like WASI sandbox, token budget control, policy engine (OPA), and multi-tenancy. Shannon helps scale without breaking by reducing costs, being provider agnostic, observable by default, and designed for horizontal scaling with Temporal workflow orchestration.

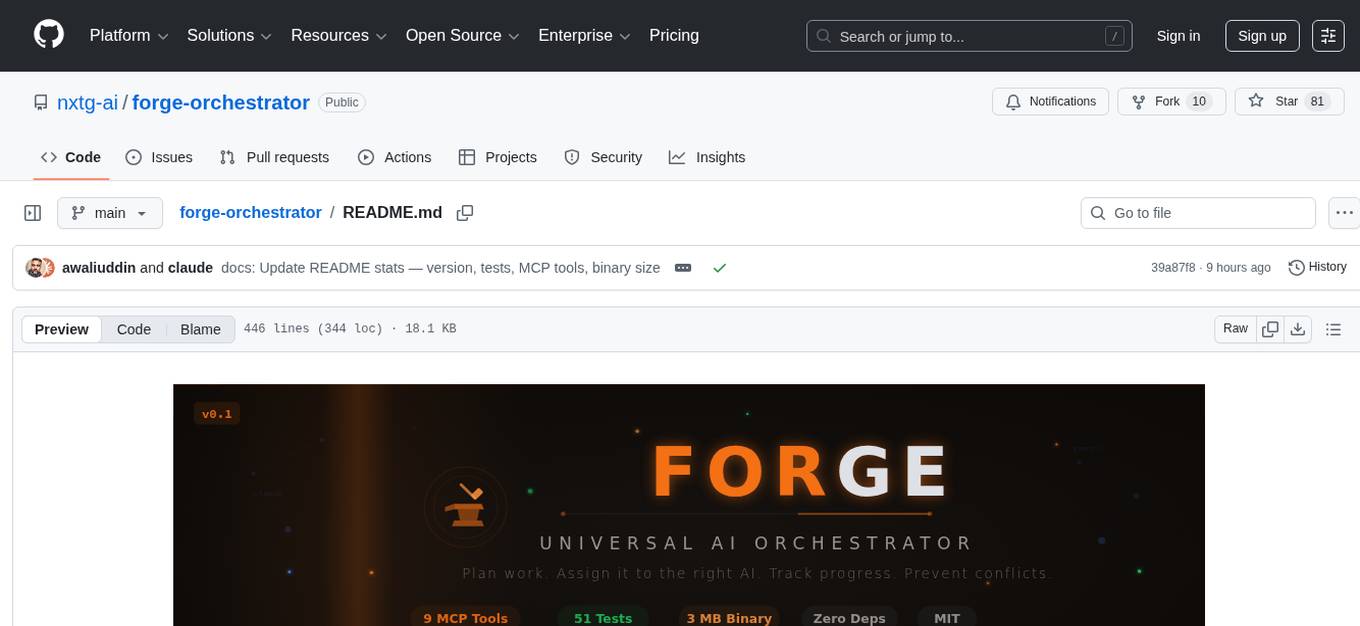

forge-orchestrator

Forge Orchestrator is a Rust CLI tool designed to coordinate and manage multiple AI tools seamlessly. It acts as a senior tech lead, preventing conflicts, capturing knowledge, and ensuring work aligns with specifications. With features like file locking, knowledge capture, and unified state management, Forge enhances collaboration and efficiency among AI tools. The tool offers a pluggable brain for intelligent decision-making and includes a Model Context Protocol server for real-time integration with AI tools. Forge is not a replacement for AI tools but a facilitator for making them work together effectively.

fluid.sh

fluid.sh is a tool designed to manage and debug VMs using AI agents in isolated environments before applying changes to production. It provides a workflow where AI agents work autonomously in sandbox VMs, and human approval is required before any changes are made to production. The tool offers features like autonomous execution, full VM isolation, human-in-the-loop approval workflow, Ansible export, and a Python SDK for building autonomous agents.

OneRAG

OneRAG is a production-ready RAG backend tool that allows users to replace components with a single line of configuration. It addresses common issues in RAG development by simplifying tasks such as changing Vector DB, replacing LLM, and adding functionalities like caching and reranking. Users can easily switch between different components using configuration files, making it suitable for both PoC and production environments.
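
The idea of swapping a component by changing one configuration value can be pictured with a small registry pattern. The sketch below is only an illustration of that idea and is not OneRAG's actual API: the registries `RETRIEVERS` and `GENERATORS`, the `Pipeline` class, `build_pipeline`, and the config keys are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical registries: each key is a value a config file might contain.
RETRIEVERS: Dict[str, Callable[[str], List[str]]] = {
    "memory": lambda q: [f"memory-doc for {q}"],
    "keyword": lambda q: [f"keyword-doc for {q}"],
}
GENERATORS: Dict[str, Callable[[str, List[str]], str]] = {
    "echo": lambda q, docs: f"{q} -> {docs[0]}",
    "upper": lambda q, docs: f"{q} -> {docs[0]}".upper(),
}


@dataclass
class Pipeline:
    """A toy RAG pipeline: retrieve documents, then generate an answer."""
    retrieve: Callable[[str], List[str]]
    generate: Callable[[str, List[str]], str]

    def answer(self, question: str) -> str:
        return self.generate(question, self.retrieve(question))


def build_pipeline(config: Dict[str, str]) -> Pipeline:
    # Look up each component by the name given in the config (assumed keys).
    return Pipeline(
        retrieve=RETRIEVERS[config["retriever"]],
        generate=GENERATORS[config["generator"]],
    )


config = {"retriever": "memory", "generator": "echo"}
print(build_pipeline(config).answer("hi"))

# Changing one config value swaps the retriever; the calling code is unchanged.
config["retriever"] = "keyword"
print(build_pipeline(config).answer("hi"))
```

Keeping component selection in data rather than in code is what lets the same pipeline serve both a PoC and a production setup in a design like this.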

MediCareAI

MediCareAI is an intelligent disease management system powered by AI, designed for patient follow-up and disease tracking. It integrates medical guidelines, AI-powered diagnosis, and document processing to provide comprehensive healthcare support. The system includes features like user authentication, patient management, AI diagnosis, document processing, medical records management, knowledge base system, doctor collaboration platform, and admin system. It ensures privacy protection through automatic PII detection and cleaning for document sharing.

vllm-mlx

vLLM-MLX is a tool that brings native Apple Silicon GPU acceleration to vLLM by integrating Apple's ML framework with unified memory and Metal kernels. It offers optimized LLM inference with KV cache and quantization, vision-language models for multimodal inference, speech-to-text and text-to-speech with native voices, text embeddings for semantic search and RAG, and more. Users can benefit from features like multimodal support for text, image, video, and audio, native GPU acceleration on Apple Silicon, compatibility with OpenAI API, Anthropic Messages API, reasoning models extraction, integration with external tools via Model Context Protocol, memory-efficient caching, and high throughput for multiple concurrent users.

hub

Hub is an open-source, high-performance LLM gateway written in Rust. It serves as a smart proxy for LLM applications, centralizing control and tracing of all LLM calls. Built for efficiency, it provides a single API to connect to any LLM provider. The tool is designed to be fast and efficient, and it is completely open-source under the Apache 2.0 license.

For similar tasks

claude-code-orchestrator-kit

The Claude Code Orchestrator Kit is a professional automation and orchestration system for Claude Code, featuring 39 AI agents, 38 skills, 25 slash commands, auto-optimized MCP, Beads issue tracking, Gastown multi-agent orchestration, ready-to-use prompts, and quality gates. It transforms Claude Code into an intelligent orchestration system by delegating complex tasks to specialized sub-agents, preserving context and enabling indefinite work sessions.

tt-metal

TT-NN is a Python & C++ Neural Network OP library. It provides a low-level programming model, TT-Metalium, enabling kernel development for Tenstorrent hardware.

mscclpp

MSCCL++ is a GPU-driven communication stack for scalable AI applications. It redefines inter-GPU communication interfaces, delivering a highly efficient and customizable communication stack for distributed GPU applications. Its design is specifically tailored to accommodate the diverse performance optimization scenarios often encountered in state-of-the-art AI applications. MSCCL++ provides communication abstractions both at the lowest level, close to hardware, and at the highest level, close to the application API. The lowest level of abstraction is ultra-lightweight, which lets a user implement the data-movement logic of a collective operation such as AllReduce inside a GPU kernel extremely efficiently, without worrying about the memory ordering of different ops. The modularity of MSCCL++ lets a user construct its building blocks through a high-level abstraction in Python and feed them to a CUDA kernel, which improves productivity. MSCCL++ provides fine-grained synchronous and asynchronous 0-copy 1-sided abstractions for communication primitives such as `put()`, `get()`, `signal()`, `flush()`, and `wait()`. The 1-sided abstractions allow a user to asynchronously `put()` their data on the remote GPU as soon as it is ready, without requiring the remote side to issue any receive instruction. This enables users to easily implement flexible communication logic, such as overlapping communication with computation, or customized collective communication algorithms, without worrying about potential deadlocks. Additionally, the 0-copy capability enables MSCCL++ to transfer data directly between users' buffers without using intermediate internal buffers, which saves GPU bandwidth and memory capacity.
MSCCL++ provides consistent abstractions regardless of the location of the remote GPU (either on the local node or on a remote node) or the underlying link (either NVLink/xGMI or InfiniBand). This simplifies the code for inter-GPU communication, which is often complex because of the memory ordering of GPU/CPU reads and writes and is therefore error-prone.
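
The one-sided `put()` / `signal()` / `wait()` pattern described above can be pictured with a toy model. The sketch below is not MSCCL++ code and does not use its API: it uses Python threads in place of GPUs, a `bytearray` in place of a remote GPU buffer, and a hypothetical `ToyChannel` class whose method names merely mirror the primitives named in the text.

```python
import threading


class ToyChannel:
    """Toy stand-in for a one-sided channel; threads play the role of GPUs."""

    def __init__(self, remote_buffer: bytearray):
        # The receiver-owned buffer is written directly, with no staging copy.
        self.remote_buffer = remote_buffer
        self._ready = threading.Semaphore(0)

    def put(self, offset: int, data: bytes) -> None:
        # Sender writes into the remote buffer; the receiver issues no receive.
        self.remote_buffer[offset:offset + len(data)] = data

    def signal(self) -> None:
        # Sender announces that all previously put data is ready.
        self._ready.release()

    def wait(self) -> None:
        # Receiver blocks until the sender has signaled.
        self._ready.acquire()


def run_demo() -> bytes:
    remote = bytearray(8)
    channel = ToyChannel(remote)
    received = {}

    def receiver() -> None:
        channel.wait()                      # only synchronization, no recv call
        received["data"] = bytes(remote)

    worker = threading.Thread(target=receiver)
    worker.start()
    channel.put(0, b"abcd")                 # data is pushed as soon as it is ready
    channel.put(4, b"efgh")
    channel.signal()
    worker.join()
    return received["data"]


print(run_demo())
```

In this toy model the receiver never posts a matching receive; it only waits on the signal, which is the property that lets a sender push data as soon as it is ready.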

mlir-air

This repository contains tools and libraries for building AIR platforms, runtimes and compilers.

free-for-life

A massive list of products and services that are completely free. Its categories include APIs, Data & ML; Artificial Intelligence; BaaS; Code Editors; Code Generation; DNS; Databases; Design & UI; Domains; Email; Font; For Students; Forms; Linux Distributions; Messaging & Streaming; PaaS; Payments & Billing; and SSL.

AIMr

AIMr is an AI aimbot tool written in Python that leverages modern technologies to achieve an undetected system with a pleasing appearance. It works on any game that uses human-shaped models. To optimize its performance, users should build OpenCV with CUDA. For Valorant, additional perks in the Discord and an Arduino Leonardo R3 are required.

aika

AIKA (Artificial Intelligence for Knowledge Acquisition) is a new type of artificial neural network designed to mimic the behavior of a biological brain more closely and bridge the gap to classical AI. The network conceptually separates activations from neurons, creating two separate graphs to represent acquired knowledge and inferred information. It uses different types of neurons and synapses to propagate activation values, binding signals, causal relations, and training gradients. The network structure allows for flexible topology and supports the gradual population of neurons and synapses during training.

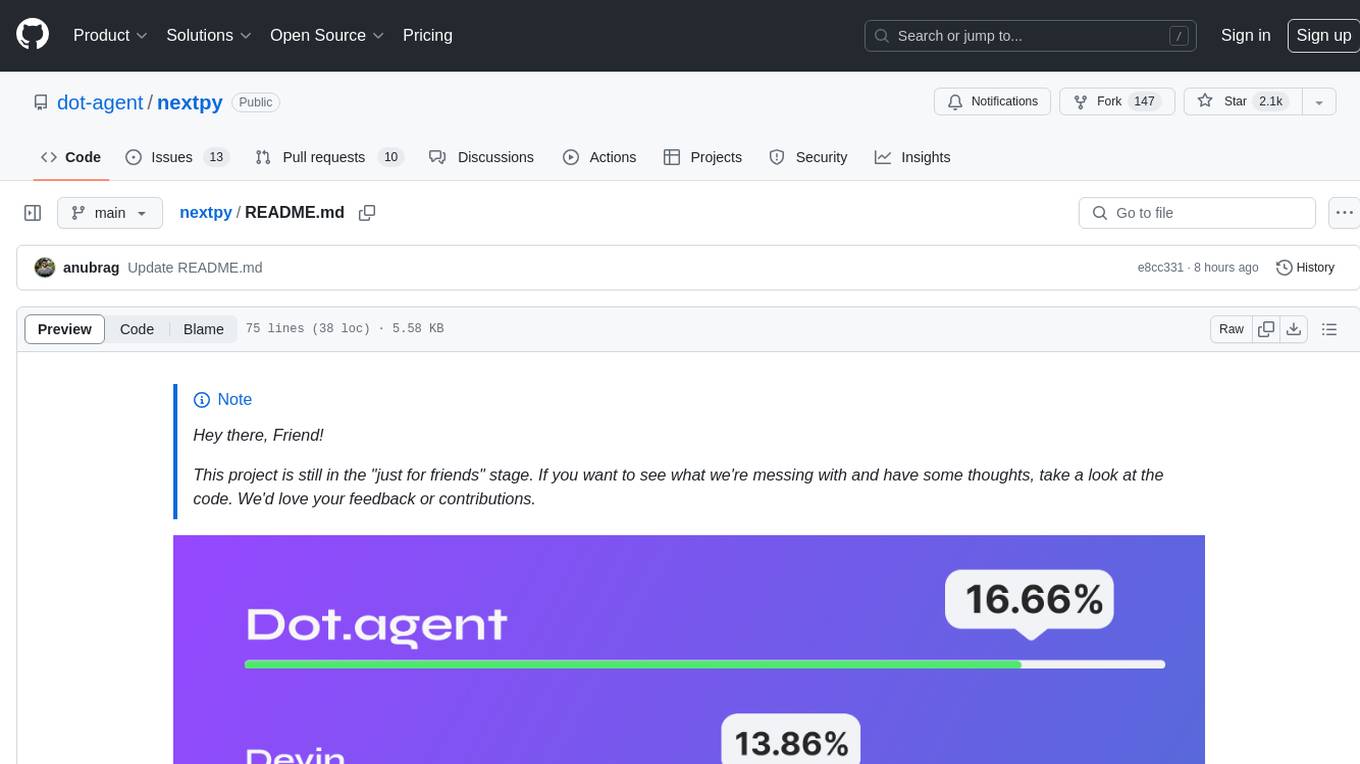

nextpy

Nextpy is a cutting-edge software development framework optimized for AI-based code generation. It provides guardrails for defining AI system boundaries, structured outputs for prompt engineering, a powerful prompt engine for efficient processing, better AI generations with precise output control, modularity for multiplatform and extensible usage, developer-first approach for transferable knowledge, and containerized & scalable deployment options. It offers 4-10x faster performance compared to Streamlit apps, with a focus on cooperation within the open-source community and integration of key components from various projects.

For similar jobs

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

MegaDetector

MegaDetector is an AI model that identifies animals, people, and vehicles in camera trap images (which also makes it useful for eliminating blank images). This model is trained on several million images from a variety of ecosystems. MegaDetector is just one of many tools that aim to make conservation biologists more efficient with AI. If you want to learn about other ways to use AI to accelerate camera trap workflows, check out our overview of the field, affectionately titled "Everything I know about machine learning and camera traps".

leapfrogai

LeapfrogAI is a self-hosted AI platform designed to be deployed in air-gapped, resource-constrained environments. It brings sophisticated AI solutions to these environments by hosting all the necessary components of an AI stack, including vector databases, model backends, API, and UI. LeapfrogAI's API closely matches that of OpenAI, allowing tools built for OpenAI/ChatGPT to function seamlessly with a LeapfrogAI backend. It provides several backends for various use cases, including llama-cpp-python, whisper, text-embeddings, and vllm. LeapfrogAI leverages Chainguard's apko to harden base Python images, ensuring the latest supported Python versions are used by the other components of the stack. The LeapfrogAI SDK provides a standard set of protobufs and Python utilities for implementing backends and gRPC. LeapfrogAI offers UI options for common use cases like chat, summarization, and transcription. It can be deployed and run locally via UDS and Kubernetes, built out using Zarf packages. LeapfrogAI is supported by a community of users and contributors, including Defense Unicorns, Beast Code, Chainguard, Exovera, Hypergiant, Pulze, SOSi, United States Navy, United States Air Force, and United States Space Force.

llava-docker

This Docker image for LLaVA (Large Language and Vision Assistant) provides a convenient way to run LLaVA locally or on RunPod. LLaVA is a powerful AI tool that combines natural language processing and computer vision capabilities. With this Docker image, you can easily access LLaVA's functionalities for various tasks, including image captioning, visual question answering, text summarization, and more. The image comes pre-installed with LLaVA v1.2.0, Torch 2.1.2, xformers 0.0.23.post1, and other necessary dependencies. You can customize the model used by setting the MODEL environment variable. The image also includes a Jupyter Lab environment for interactive development and exploration. Overall, this Docker image offers a comprehensive and user-friendly platform for leveraging LLaVA's capabilities.

carrot

The 'carrot' repository on GitHub provides a list of free and user-friendly ChatGPT mirror sites for easy access. The repository includes sponsored sites offering various GPT models and services. Users can find and share sites, report errors, and access stable and recommended sites for ChatGPT usage. The repository also includes a detailed list of ChatGPT sites, their features, and accessibility options, making it a valuable resource for ChatGPT users seeking free and unlimited GPT services.

TrustLLM

TrustLLM is a comprehensive study of trustworthiness in LLMs, including principles for different dimensions of trustworthiness, an established benchmark, evaluation and analysis of trustworthiness for mainstream LLMs, and a discussion of open challenges and future directions. Specifically, we first propose a set of principles for trustworthy LLMs that span eight different dimensions. Based on these principles, we further establish a benchmark across six dimensions including truthfulness, safety, fairness, robustness, privacy, and machine ethics. We then present a study evaluating 16 mainstream LLMs in TrustLLM, consisting of over 30 datasets. The document explains how to use the trustllm Python package to help you assess the performance of your LLM in trustworthiness more quickly. For more details about TrustLLM, please refer to the project website.

AI-YinMei

AI-YinMei is a development tool for AI virtual anchors (VTubers), in a version for NVIDIA GPUs. It supports knowledge-base chat through fastgpt with a complete LLM stack ([fastgpt] + [one-api] + [Xinference]); replying to bilibili live-stream bullet comments and welcoming viewers who enter the stream; speech synthesis through Microsoft edge-tts, Bert-VITS2, and GPT-SoVITS; expression control through Vtuber Studio; image generation with stable-diffusion-webui, output to an OBS live room; NSFW image detection with public-NSFW-y-distinguish; web and image search through duckduckgo (requires a proxy to get around network restrictions) and image search through Baidu (no proxy needed); an AI reply chat box and a playlist, both as HTML plug-ins; AI singing with Auto-Convert-Music; dancing, expression video playback, and head-touching and gift-smashing actions; automatic dancing when singing starts and an automatic swaying loop during chat and singing; multi-scene switching, background music switching, and automatic day and night scene switching; and open-ended singing and painting requests, where the AI judges the content automatically.