tt-metal

TT-NN operator library and TT-Metalium low-level kernel programming model.

Stars: 1366

TT-NN is a Python & C++ Neural Network OP library. It provides a low-level programming model, TT-Metalium, enabling kernel development for Tenstorrent hardware.

README:


TT-NN is a Python & C++ Neural Network OP library.

The Models team is focused on developing the following models, optimizing them for performance, accuracy, and compatibility. Follow each model link for more details.

[!IMPORTANT] For a full model list see the Model Matrix, or visit the Developer Hub.

[!NOTE] Performance Metrics:

- Time to First Token (TTFT) measures the time (in milliseconds) it takes to generate the first output token after input is received.

- T/S/U (Tokens per Second per User): Represents per-user token generation throughput during decode, measured from the first token produced after prefill. It is calculated as 1 / inter-token latency.

- T/S (Tokens per Second): Represents total token throughput, calculated as T/S = T/S/U x batch size.

- TP (Tensor Parallel) and DP (Data Parallel): Indicate the parallelization factors across multiple devices.

- Reported LLM Performance: Based on an input sequence length of 128 tokens for all models.

- Performance Data Source: Metrics were collected using the tt-metal model demos (linked above). Results may vary when using other runtimes such as the vLLM inference server.
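The relationships among these metrics can be sketched in a few lines of Python. This is a minimal illustration, not code from the tt-metal model demos: the function names and the timestamp-based inputs are hypothetical, and it assumes every user in a batch decodes at the same rate.

```python
def ttft_ms(request_time_s: float, first_token_time_s: float) -> float:
    """Time to first token, in milliseconds, from two timestamps in seconds."""
    return (first_token_time_s - request_time_s) * 1000.0


def tokens_per_second_per_user(inter_token_latency_s: float) -> float:
    """T/S/U = 1 / inter-token latency (latency given in seconds)."""
    if inter_token_latency_s <= 0:
        raise ValueError("inter-token latency must be positive")
    return 1.0 / inter_token_latency_s


def tokens_per_second(tsu: float, batch_size: int) -> float:
    """T/S = T/S/U x batch size."""
    return tsu * batch_size


if __name__ == "__main__":
    # Hypothetical measurement: 40 ms between consecutive tokens, batch of 32.
    tsu = tokens_per_second_per_user(0.040)
    print(f"T/S/U: {tsu:.1f}")
    print(f"T/S:   {tokens_per_second(tsu, 32):.1f}")
```

As a check against the reported data, a row with 22.1 T/S/U at batch 32 gives `tokens_per_second(22.1, 32)`, which evaluates to 707.2 up to floating-point rounding.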

| Batch | Hardware | TTFT (ms) | T/S/U | Target T/S/U | T/S | TT-Metalium Release | vLLM Tenstorrent Repo Release |
|---|---|---|---|---|---|---|---|
| 32 | Galaxy (Wormhole) | 53 | 72.5 | 80 | 2268.8 | v0.65.0-rc7 | 59be953 |

| Batch | Hardware | TTFT (ms) | T/S/U | Target T/S/U | T/S | TT-Metalium Release | vLLM Tenstorrent Repo Release |
|---|---|---|---|---|---|---|---|
| 32 | n300 (Wormhole) | 109 | 22.1 | 30 | 707.2 | v0.62.0-rc35 | ced0161 |

| Batch | Hardware | TTFT (ms) | T/S/U | Target T/S/U | T/S | TT-Metalium Release | vLLM Tenstorrent Repo Release |
|---|---|---|---|---|---|---|---|
| 32 | QuietBox (Wormhole) | 223 | 15.4 | 20 | 492.8 | v0.62.0-rc25 | e7c329b |

| Batch | Hardware | TTFT (ms) | T/S/U | Target T/S/U | T/S | TT-Metalium Release |
|---|---|---|---|---|---|---|
| 1 | n150 (Wormhole) | 163 | 105.0 | 45 | 105.0 | v0.65.0-dev20251208 |
| 1 | p150 (Blackhole) | 63 | 263.4 | | 263.4 | v0.65.0-dev20251208 |

| Batch | Hardware | TTFT (ms) | T/S/U | Target T/S/U | T/S | TT-Metalium Release |
|---|---|---|---|---|---|---|
| 32 | QuietBox (Wormhole) | 122 | 24.9 | 33 | 796.8 | v0.62.0-dev20251015 |

Blackhole software optimization is under active development. Please join us in shaping the future of open source AI!

[Discord] [Developer Hub]

For more information regarding vLLM installation and environment creation, visit the Tenstorrent vLLM repository.

For the latest model updates and features, please see MODEL_UPDATES.md.

For information on initial model procedures, please see Model Bring-Up and Testing.

- Advanced Performance Optimizations for Models (updated March 4th, 2025)

- ViT Implementation in TT-NN on GS (updated Sept 22nd, 2024)

- LLMs Bring up in TT-NN (updated Oct 29th, 2024)

- CNN Bring up & Optimization in TT-NN (updated Jan 22nd, 2025)

- Matrix Multiply FLOPS on Wormhole and Blackhole (updated June 17th, 2025)

TT-Metalium is our low-level programming model, enabling kernel development for Tenstorrent hardware.

Get started with simple kernels.

- Matrix Engine (updated Sept 6th, 2024)

- Data Formats (updated Sept 7th, 2024)

- Reconfiguring Data Formats (updated Oct 17th, 2024)

- Handling special floating-point numbers (updated Oct 5th, 2024)

- Allocator (updated Dec 19th, 2024)

- Tensor Layouts (updated Sept 6th, 2024)

- Saturating DRAM Bandwidth (updated Sept 6th, 2024)

- Flash Attention on Wormhole (updated Sept 6th, 2024)

- CNNs on TT Architectures (updated Sept 6th, 2024)

- Ethernet and Multichip Basics (updated Sept 20th, 2024)

- Blackhole Bring-Up Programming Guide (updated Dec 18th, 2024)

- Sub-Devices (updated Jan 7th, 2025)

- Programming Mesh of Devices (Scale-Up) (updated Jan 6th, 2026)

- Programming Multiple Meshes (Scale-Out) (updated Jan 19th, 2026)

- TT-Fabric Architecture (updated Dec 1st, 2025)

- TT-Distributed Architecture (updated Oct 20th, 2025)

- Matmul OP on a Single_core

- Matmul OP on Multi_core (Basic)

- Matmul Multi_core Reuse (Optimized)

- Matmul Multi_core Multi-Cast (Optimized)

TT-NN Visualizer is a comprehensive tool for visualizing and analyzing model execution, offering interactive graphs, memory plots, tensor details, buffer overviews, operation flow graphs, and multi-instance support with file or SSH-based report loading.

The TT-Exalens repository describes TT-Lensium, a low-level debugging tool for Tenstorrent hardware. It allows developers to access and communicate with Wormhole and Blackhole devices.

The TT-SMI repository describes the Tenstorrent System Management Interface. This command-line utility interacts with Tenstorrent devices on the host. TT-SMI provides an easy-to-use interface that displays device, telemetry, and firmware information.

The Model Explorer is an intuitive and hierarchical visualization tool using model graphs. It organizes model operations into nested layers and provides features for model exploration and debugging.

The Tracy Profiler is a real-time, nanosecond-resolution, remote-telemetry, hybrid frame and sampling profiler. Tracy supports profiling CPU, GPU, memory allocation, locks, context switches, and more.

DPRINT can print variables, addresses, and circular buffer data from kernels to the host terminal or log file. This feature is useful for debugging issues with kernels.

Watcher monitors firmware and kernels for common programming errors, and overall device status. If an error or hang occurs, Watcher displays log data of that occurrence.

Inspector provides insights into host runtime. It logs necessary data for investigation and allows queries to host runtime data.

| Release | Release Date | FW Version | KMD Version | SMI Version |
|---|---|---|---|---|
| 0.66.0 | ETA Jan 30, 2026 | 19.2.0 | 2.5.0 | 3.0.38 |
| 0.65.0 | Dec 15, 2025 | 19.2.0 | 2.5.0 | 3.0.38 |
| 0.64.5 | Dec 1, 2025 | 18.12.0 | 2.4.1 | 3.0.32 |
| 0.64.4 | Nov 24, 2025 | 18.12.0 | 2.4.1 | 3.0.32 |
| 0.64.3 | Nov 14, 2025 | 18.12.0 | 2.4.1 | 3.0.32 |
| 0.64.0 | Oct 29, 2025 | 18.12.0 | 2.4.1 | 3.0.32 |
| 0.63.0 | Sep 22, 2025 | 18.8.0 | 2.3.0 | 3.0.28 |
| 0.62.2 | Aug 20, 2025 | 18.6.0 | 2.0.0 | 3.0.20 |
| 0.61.0 | Skipped | - | - | - |
| 0.60.1 | Jul 22, 2025 | 18.6.0 | 2.0.0 | 3.0.20 |
| 0.59.0 | Jun 18, 2025 | - | - | - |
| 0.58.0 | May 13, 2025 | - | - | - |
| 0.57.0 | Apr 15, 2025 | - | - | - |
| 0.56.0 | Mar 7, 2025 | - | - | - |

Visit the releases folder for details on releases, release notes, and estimated release dates.

This repo is part of Tenstorrent’s bounty program. If you are interested in helping to improve tt-metal, please read the Tenstorrent Bounty Program Terms and Conditions before heading to the issues tab. Look for issues that are tagged with both “bounty” and a difficulty level!

TT-Metalium and TTNN are licensed under the Apache 2.0 License, as detailed in LICENSE and LICENSE_understanding.txt.

Some distributable forms of this project—such as manylinux-compliant wheels—may need to bundle additional libraries beyond the standard Linux system libraries. For example:

- libnuma

- libhwloc

- openmpi (when built with multihost support)

- libevent (when built with multihost support)

These libraries are bound by their own license terms.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for tt-metal

Similar Open Source Tools

tt-metal

TT-NN is a python & C++ Neural Network OP library. It provides a low-level programming model, TT-Metalium, enabling kernel development for Tenstorrent hardware.

awesome-mobile-llm

Awesome Mobile LLMs is a curated list of Large Language Models (LLMs) and related studies focused on mobile and embedded hardware. The repository includes information on various LLM models, deployment frameworks, benchmarking efforts, applications, multimodal LLMs, surveys on efficient LLMs, training LLMs on device, mobile-related use-cases, industry announcements, and related repositories. It aims to be a valuable resource for researchers, engineers, and practitioners interested in mobile LLMs.

LocalAI

LocalAI is a free and open-source OpenAI alternative that acts as a drop-in replacement REST API compatible with OpenAI (Elevenlabs, Anthropic, etc.) API specifications for local AI inferencing. It allows users to run LLMs, generate images, audio, and more locally or on-premises with consumer-grade hardware, supporting multiple model families and not requiring a GPU. LocalAI offers features such as text generation with GPTs, text-to-audio, audio-to-text transcription, image generation with stable diffusion, OpenAI functions, embeddings generation for vector databases, constrained grammars, downloading models directly from Huggingface, and a Vision API. It provides a detailed step-by-step introduction in its Getting Started guide and supports community integrations such as custom containers, WebUIs, model galleries, and various bots for Discord, Slack, and Telegram. LocalAI also offers resources like an LLM fine-tuning guide, instructions for local building and Kubernetes installation, projects integrating LocalAI, and a how-tos section curated by the community. It encourages users to cite the repository when utilizing it in downstream projects and acknowledges the contributions of various software from the community.

llumen

Llumen is a self-hosted interface optimized for modest hardware like Raspberry Pi, old laptops, and minimal VPS. It offers privacy without complexity, providing essential features with minimal resource demands. Users can enjoy sub-second cold starts, real-time token streaming, various chat modes, rich media support, and a universal API for OpenAI-compatible providers. The tool has a small footprint with a binary size of around 17MB and RAM usage under 128MB. Llumen aims to simplify the setup process and offer a user-friendly experience for individuals seeking a privacy-focused solution.

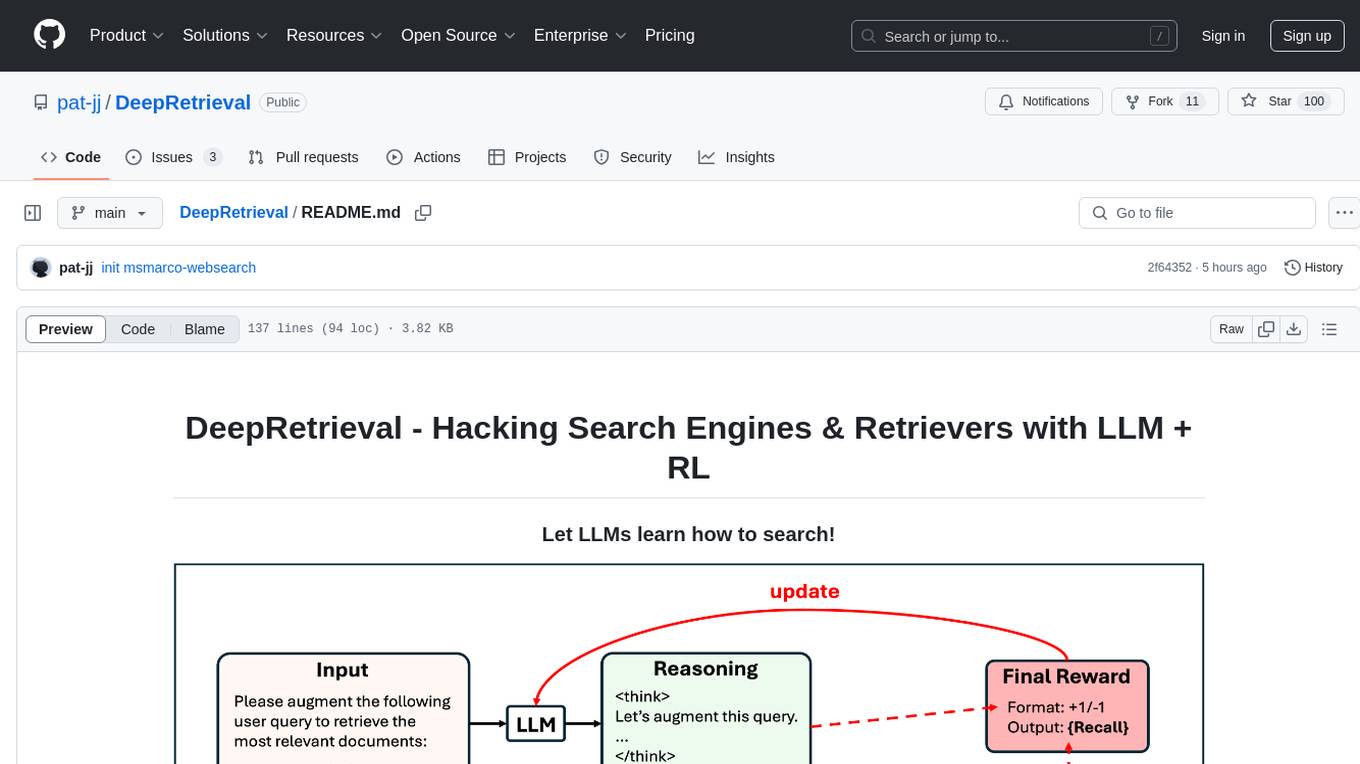

DeepRetrieval

DeepRetrieval is a tool designed to enhance search engines and retrievers using Large Language Models (LLMs) and Reinforcement Learning (RL). It allows LLMs to learn how to search effectively by integrating with search engine APIs and customizing reward functions. The tool provides functionalities for data preparation, training, evaluation, and monitoring search performance. DeepRetrieval aims to improve information retrieval tasks by leveraging advanced AI techniques.

TokenPacker

TokenPacker is a novel visual projector that compresses visual tokens by 75%∼89% with high efficiency. It adopts a 'coarse-to-fine' scheme to generate condensed visual tokens, achieving comparable or better performance across diverse benchmarks. The tool includes TokenPacker for general use and TokenPacker-HD for high-resolution image understanding. It provides training scripts, checkpoints, and supports various compression ratios and patch numbers.

rag-web-ui

RAG Web UI is an intelligent dialogue system based on RAG (Retrieval-Augmented Generation) technology. It helps enterprises and individuals build intelligent Q&A systems based on their own knowledge bases. By combining document retrieval and large language models, it delivers accurate and reliable knowledge-based question-answering services. The system is designed with features like intelligent document management, advanced dialogue engine, and a robust architecture. It supports multiple document formats, async document processing, multi-turn contextual dialogue, and reference citations in conversations. The architecture includes a backend stack with Python FastAPI, MySQL + ChromaDB, MinIO, Langchain, JWT + OAuth2 for authentication, and a frontend stack with Next.js, TypeScript, Tailwind CSS, Shadcn/UI, and Vercel AI SDK for AI integration. Performance optimization includes incremental document processing, streaming responses, vector database performance tuning, and distributed task processing. The project is licensed under the Apache-2.0 License and is intended for learning and sharing RAG knowledge only, not for commercial purposes.

ColossalAI

Colossal-AI is a deep learning system for large-scale parallel training. It provides a unified interface to scale sequential code of model training to distributed environments. Colossal-AI supports parallel training methods such as data, pipeline, tensor, and sequence parallelism and is integrated with heterogeneous training and zero redundancy optimizer.

IDvs.MoRec

This repository contains the source code for the SIGIR 2023 paper 'Where to Go Next for Recommender Systems? ID- vs. Modality-based Recommender Models Revisited'. It provides resources for evaluating foundation, transferable, multi-modal, and LLM recommendation models, along with datasets, pre-trained models, and training strategies for IDRec and MoRec using in-batch debiased cross-entropy loss. The repository also offers large-scale datasets, code for SASRec with in-batch debias cross-entropy loss, and information on joining the lab for research opportunities.

dl_model_infer

This project is a c++ version of the AI reasoning library that supports the reasoning of tensorrt models. It provides accelerated deployment cases of deep learning CV popular models and supports dynamic-batch image processing, inference, decode, and NMS. The project has been updated with various models and provides tutorials for model exports. It also includes a producer-consumer inference model for specific tasks. The project directory includes implementations for model inference applications, backend reasoning classes, post-processing, pre-processing, and target detection and tracking. Speed tests have been conducted on various models, and onnx downloads are available for different models.

langfuse

Langfuse is a powerful tool that helps you develop, monitor, and test your LLM applications. With Langfuse, you can: * **Develop:** Instrument your app and start ingesting traces to Langfuse, inspect and debug complex logs, and manage, version, and deploy prompts from within Langfuse. * **Monitor:** Track metrics (cost, latency, quality) and gain insights from dashboards & data exports, collect and calculate scores for your LLM completions, run model-based evaluations, collect user feedback, and manually score observations in Langfuse. * **Test:** Track and test app behaviour before deploying a new version, test expected in and output pairs and benchmark performance before deploying, and track versions and releases in your application. Langfuse is easy to get started with and offers a generous free tier. You can sign up for Langfuse Cloud or deploy Langfuse locally or on your own infrastructure. Langfuse also offers a variety of integrations to make it easy to connect to your LLM applications.

EVE

EVE is an official PyTorch implementation of Unveiling Encoder-Free Vision-Language Models. The project aims to explore the removal of vision encoders from Vision-Language Models (VLMs) and transfer LLMs to encoder-free VLMs efficiently. It also focuses on bridging the performance gap between encoder-free and encoder-based VLMs. EVE offers a superior capability with arbitrary image aspect ratio, data efficiency by utilizing publicly available data for pre-training, and training efficiency with a transparent and practical strategy for developing a pure decoder-only architecture across modalities.

MooER

MooER (摩耳) is an LLM-based speech recognition and translation model developed by Moore Threads. It allows users to transcribe speech into text (ASR) and translate speech into other languages (AST) in an end-to-end manner. The model was trained using 5K hours of data and is now also available with an 80K hours version. MooER is the first LLM-based speech model trained and inferred using domestic GPUs. The repository includes pretrained models, inference code, and a Gradio demo for a better user experience.

Athena-Public

Project Athena is a Linux OS designed for AI Agents, providing memory, persistence, scheduling, and governance for AI models. It offers a comprehensive memory layer that survives across sessions, models, and IDEs, allowing users to own their data and port it anywhere. The system is built bottom-up through 1,079+ sessions, focusing on depth and compounding knowledge. Athena features a trilateral feedback loop for cross-model validation, a Model Context Protocol server with 9 tools, and a robust security model with data residency options. The repository structure includes an SDK package, examples for quickstart, scripts, protocols, workflows, and deep documentation. Key concepts cover architecture, knowledge graph, semantic memory, and adaptive latency. Workflows include booting, reasoning modes, planning, research, and iteration. The project has seen significant content expansion, viral validation, and metrics improvements.

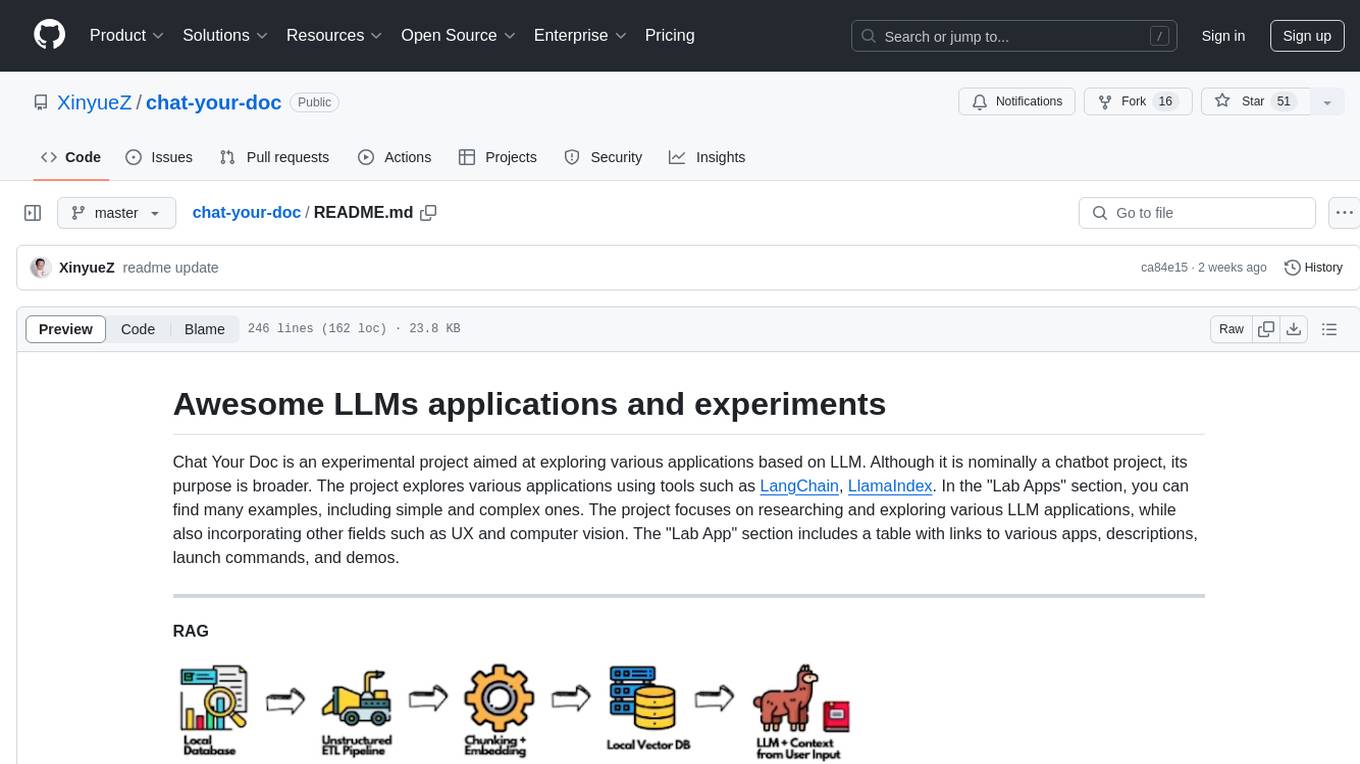

chat-your-doc

Chat Your Doc is an experimental project exploring various applications based on LLM technology. It goes beyond being just a chatbot project, focusing on researching LLM applications using tools like LangChain and LlamaIndex. The project delves into UX, computer vision, and offers a range of examples in the 'Lab Apps' section. It includes links to different apps, descriptions, launch commands, and demos, aiming to showcase the versatility and potential of LLM applications.

For similar tasks

ai-on-gke

This repository contains assets related to AI/ML workloads on Google Kubernetes Engine (GKE). Run optimized AI/ML workloads with Google Kubernetes Engine (GKE) platform orchestration capabilities. A robust AI/ML platform considers the following layers: Infrastructure orchestration that support GPUs and TPUs for training and serving workloads at scale Flexible integration with distributed computing and data processing frameworks Support for multiple teams on the same infrastructure to maximize utilization of resources

ray

Ray is a unified framework for scaling AI and Python applications. It consists of a core distributed runtime and a set of AI libraries for simplifying ML compute, including Data, Train, Tune, RLlib, and Serve. Ray runs on any machine, cluster, cloud provider, and Kubernetes, and features a growing ecosystem of community integrations. With Ray, you can seamlessly scale the same code from a laptop to a cluster, making it easy to meet the compute-intensive demands of modern ML workloads.

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.

djl

Deep Java Library (DJL) is an open-source, high-level, engine-agnostic Java framework for deep learning. It is designed to be easy to get started with and simple to use for Java developers. DJL provides a native Java development experience and allows users to integrate machine learning and deep learning models with their Java applications. The framework is deep learning engine agnostic, enabling users to switch engines at any point for optimal performance. DJL's ergonomic API interface guides users with best practices to accomplish deep learning tasks, such as running inference and training neural networks.

mlflow

MLflow is a platform to streamline machine learning development, including tracking experiments, packaging code into reproducible runs, and sharing and deploying models. MLflow offers a set of lightweight APIs that can be used with any existing machine learning application or library (TensorFlow, PyTorch, XGBoost, etc), wherever you currently run ML code (e.g. in notebooks, standalone applications or the cloud). MLflow's current components are:

* `MLflow Tracking

tt-metal

TT-NN is a python & C++ Neural Network OP library. It provides a low-level programming model, TT-Metalium, enabling kernel development for Tenstorrent hardware.

burn

Burn is a new comprehensive dynamic Deep Learning Framework built using Rust with extreme flexibility, compute efficiency and portability as its primary goals.

awsome-distributed-training

This repository contains reference architectures and test cases for distributed model training with Amazon SageMaker Hyperpod, AWS ParallelCluster, AWS Batch, and Amazon EKS. The test cases cover different types and sizes of models as well as different frameworks and parallel optimizations (Pytorch DDP/FSDP, MegatronLM, NemoMegatron...).

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.