OneRAG

Production-ready RAG backend. Start in 5 min, swap Vector DB/LLM/Reranker with 1 line config. 6 DBs, 4 LLMs, GraphRAG included.

Stars: 109

OneRAG is a production-ready RAG backend tool that allows users to replace components with a single line of configuration. It addresses common issues in RAG development by simplifying tasks such as changing Vector DB, replacing LLM, and adding functionalities like caching and reranking. Users can easily switch between different components using configuration files, making it suitable for both PoC and production environments.

README:

A production-ready RAG backend: start in 5 minutes and swap components with a single line of configuration.


    git clone https://github.com/youngouk/OneRAG.git && cd OneRAG && uv sync

    # 🐳 With Docker → Full API server (Weaviate + FastAPI + Swagger UI)
    cp quickstart/.env.quickstart .env   # only GOOGLE_API_KEY needs to be set
    make start                           # → http://localhost:8000/docs

    # 💻 Without Docker → local CLI chatbot (runs right after installation)
    make easy-start                      # → chat directly in the terminal

Want to change the Vector DB? Change one line in .env: VECTOR_DB_PROVIDER=pinecone.

Want to change the LLM? Change one line: LLM_PROVIDER=openai. That's it.
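One common way to get this kind of one-line swap is a provider registry keyed by an environment variable. The sketch below illustrates the idea only; it is not OneRAG's actual code. The registry, the `WeaviateStore` and `PineconeStore` classes, `build_vector_store`, and the `weaviate` default are all hypothetical. Only the `VECTOR_DB_PROVIDER` variable and its values come from the README.

```python
import os
from typing import Callable, Dict, Mapping, Optional

# Hypothetical registry: provider name -> factory that builds a store.
_VECTOR_DB_FACTORIES: Dict[str, Callable[[], object]] = {}


def register(name: str):
    """Decorator that records a factory under a provider name."""
    def decorator(factory: Callable[[], object]) -> Callable[[], object]:
        _VECTOR_DB_FACTORIES[name] = factory
        return factory
    return decorator


class WeaviateStore:  # hypothetical stand-in for a real client wrapper
    name = "weaviate"


class PineconeStore:  # hypothetical stand-in for a real client wrapper
    name = "pinecone"


@register("weaviate")
def _make_weaviate() -> WeaviateStore:
    return WeaviateStore()


@register("pinecone")
def _make_pinecone() -> PineconeStore:
    return PineconeStore()


def build_vector_store(env: Optional[Mapping[str, str]] = None) -> object:
    """Pick the store named by VECTOR_DB_PROVIDER (default is an assumption)."""
    env = os.environ if env is None else env
    provider = env.get("VECTOR_DB_PROVIDER", "weaviate").strip().lower()
    if provider not in _VECTOR_DB_FACTORIES:
        known = ", ".join(sorted(_VECTOR_DB_FACTORIES))
        raise ValueError(f"Unknown VECTOR_DB_PROVIDER {provider!r}; expected one of: {known}")
    return _VECTOR_DB_FACTORIES[provider]()


store = build_vector_store({"VECTOR_DB_PROVIDER": "pinecone"})
print(type(store).__name__)
```

Because callers only ever use `build_vector_store`, adding a backend means registering one more factory, and the calling code stays unchanged.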

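| Situation | Conventional approach | OneRAG |
|---|---|---|
| Changing the Vector DB | Modify code throughout + repeated testing | Change 1 line in .env |
| Replacing the LLM | Rewrite the API integration code | Change 1 line in .env |
| Adding features (caching, reranking, etc.) | Implement them yourself | Toggle on/off in YAML config |
| PoC → Production | Rebuild from scratch | Scale with the same codebase |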

    ┌─────────────────────────────────────────────────────────────────┐
    │                             OneRAG                              │
    ├─────────────┬─────────────┬─────────────┬─────────────┬─────────┤
    │ Vector DB   │ LLM         │ Reranker    │ Cache       │ Extra   │
    ├─────────────┼─────────────┼─────────────┼─────────────┼─────────┤
    │ • Weaviate  │ • Gemini    │ • Jina      │ • Memory    │ • Graph │
    │ • Chroma    │ • OpenAI    │ • Cohere    │ • Redis     │   RAG   │
    │ • Pinecone  │ • Claude    │ • Google    │ • Semantic  │ • PII   │
    │ • Qdrant    │ • OpenRouter│ • OpenAI    │             │   Mask  │
    │ • pgvector  │             │ • Local     │             │ • Agent │
    │ • MongoDB   │             │             │             │         │
    └─────────────┴─────────────┴─────────────┴─────────────┴─────────┘
                 ↑ All swappable via configuration files

Choose whichever of the two options fits your environment.

| | Full API server (make start) | CLI chatbot (make easy-start) |
|---|---|---|
| Docker | Required | Not required |
| Vector DB | Weaviate (hybrid search) | ChromaDB (local file) |
| Interface | REST API + Swagger UI | Terminal CLI |
| LLM | 4 options (Gemini, OpenAI, Claude, OpenRouter) | Gemini / OpenRouter |
| Use case | Production, API integration, team development | Learning, trying it out, quick PoC |
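Once the Full API server is running, its routes can also be inspected from a script rather than the Swagger UI. The sketch below relies on FastAPI's default of serving the schema at `/openapi.json`. Whether OneRAG keeps that default is an assumption, as are the helper names `fetch_openapi` and `list_routes`. The base URL is taken from the quick start.

```python
import json
from typing import List
from urllib.request import urlopen

HTTP_METHODS = {"get", "post", "put", "patch", "delete", "head", "options"}


def fetch_openapi(base_url: str = "http://localhost:8000") -> dict:
    """Download the OpenAPI schema (assumes FastAPI's default /openapi.json path)."""
    with urlopen(f"{base_url}/openapi.json", timeout=5) as resp:
        return json.load(resp)


def list_routes(spec: dict) -> List[str]:
    """Return 'METHOD /path' strings for every operation in the schema."""
    routes = []
    for path, operations in sorted(spec.get("paths", {}).items()):
        for method in sorted(operations):
            if method in HTTP_METHODS:  # skip non-operation keys such as "parameters"
                routes.append(f"{method.upper()} {path}")
    return routes


# Usage, with the server started via `make start`:
#     for route in list_routes(fetch_openapi()):
#         print(route)
```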

    git clone https://github.com/youngouk/OneRAG.git
    cd OneRAG && uv sync
    cp quickstart/.env.quickstart .env
    # Set GOOGLE_API_KEY in the .env file
    # (free: https://aistudio.google.com/apikey)
    make start

Done! You can test it right away at http://localhost:8000/docs.

    make start-down # shut down

You can try RAG search + AI answers directly in the terminal, without installing Docker.

    git clone https://github.com/youngouk/OneRAG.git
    cd OneRAG && uv sync
    make easy-start

25 sample documents are loaded automatically, and hybrid search (Dense + BM25) works out of the box. To use AI answer generation, set one API key:

    # Only one of the two is needed
    export GOOGLE_API_KEY="your-key" # free: https://aistudio.google.com/apikey
    export OPENROUTER_API_KEY="your-key" # https://openrouter.ai/keys

New to OneRAG?

Start with make easy-start and ask the chatbot directly: "What is hybrid search?" or "How does the RAG pipeline work?" The answers are in the sample data.
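Hybrid search has to merge two rankings: one from dense vector similarity and one from BM25. The README does not say how OneRAG fuses them. The sketch below shows reciprocal rank fusion (RRF), one widely used option, and is not the project's confirmed method. The function name, the document IDs, and `k = 60` are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(rankings: Sequence[Sequence[str]], k: int = 60) -> List[str]:
    """Fuse ranked lists: each document scores sum(1 / (k + rank)) over the lists."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


dense_ranking = ["doc_a", "doc_b", "doc_c"]  # hypothetical dense-vector results
bm25_ranking = ["doc_b", "doc_d", "doc_a"]   # hypothetical BM25 results

fused = reciprocal_rank_fusion([dense_ranking, bm25_ranking])
print(fused)  # ['doc_b', 'doc_a', 'doc_d', 'doc_c']
```

Documents that appear in both lists (`doc_a`, `doc_b`) rise above those found by only one retriever, which is the usual motivation for combining dense and keyword search.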

    # Change just one line in the .env file
    VECTOR_DB_PROVIDER=weaviate # or chroma, pinecone, qdrant, pgvector, mongodb

    # Change just one line in the .env file
    LLM_PROVIDER=google # or openai, anthropic, openrouter

    # app/config/features/reranking.yaml
    reranking:
      approach: "cross-encoder" # or late-interaction, llm, local
      provider: "jina" # or cohere, google, openai, sentence-transformers

    # Enable caching
    cache:
      enabled: true
      type: "redis" # or memory, semantic

    # Enable GraphRAG
    graph_rag:
      enabled: true

    # Enable PII masking
    pii:
      enabled: true

| Category | Options | How to change |
|---|---|---|
| Vector DB | Weaviate, Chroma, Pinecone, Qdrant, pgvector, MongoDB | 1 environment variable |
| LLM | Google Gemini, OpenAI, Anthropic Claude, OpenRouter | 1 environment variable |
| Reranker | Jina, Cohere, Google, OpenAI, OpenRouter, Local | 2 lines of YAML |
| Cache | Memory, Redis, Semantic | 1 line of YAML |
| Query routing | LLM-based, rule-based | 1 line of YAML |
| Korean search | Synonyms, stopwords, user dictionary | YAML config |
| Security | PII detection, masking, audit logging | YAML config |
| GraphRAG | Knowledge-graph-based relational reasoning | 1 line of YAML |
| Agent | Tool execution, MCP protocol | YAML config |
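As a rough illustration of how YAML on/off switches can drive behavior, the sketch below reads toggles from a plain dict shaped like the `cache`, `graph_rag`, and `pii` sections shown earlier, which is what a YAML loader would typically return. The helper names and the rule that a missing `enabled` key counts as off are assumptions made for this sketch, not OneRAG's documented behavior.

```python
from typing import Any, Dict, List, Optional

# Cache types listed in the README.
CACHE_TYPES = {"memory", "redis", "semantic"}

# Dict form of the YAML sections above (PII switched off here for contrast).
config: Dict[str, Dict[str, Any]] = {
    "cache": {"enabled": True, "type": "redis"},
    "graph_rag": {"enabled": True},
    "pii": {"enabled": False},
}


def enabled_features(cfg: Dict[str, Dict[str, Any]]) -> List[str]:
    """Names of sections whose `enabled` flag is true (missing flag = off, by assumption)."""
    return sorted(name for name, section in cfg.items() if section.get("enabled", False))


def cache_backend(cfg: Dict[str, Dict[str, Any]]) -> Optional[str]:
    """Return the cache type if caching is on, validating it against known types."""
    cache = cfg.get("cache", {})
    if not cache.get("enabled", False):
        return None
    kind = cache.get("type", "memory")  # default type is an assumption
    if kind not in CACHE_TYPES:
        raise ValueError(f"Unsupported cache type {kind!r}; expected one of {sorted(CACHE_TYPES)}")
    return kind


print(enabled_features(config))  # ['cache', 'graph_rag']
print(cache_backend(config))     # redis
```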

Query → Router → Expansion → Retriever → Cache → Reranker → Generator → PII Masking → Response

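| Stage | Function | Swappable |
|---|---|---|
| Query routing | Classify the query type | Choose LLM or rule-based |
| Query expansion | Synonym and stopword handling | Custom dictionaries |
| Retrieval | Vector/hybrid search | 6 DBs |
| Caching | Response cache | 3 cache types |
| Reranking | Reorder search results | 6 rerankers |
| Answer generation | LLM response generation | 4 LLMs |
| Post-processing | PII masking | Custom policies |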
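The stage list above can be pictured as a chain of functions that each take the current state and return an updated one, so any single stage can be replaced without touching the rest. This is a toy sketch under that assumption, not OneRAG's implementation. The synonym table, corpus, keyword-overlap scoring, and email-only masking are hypothetical stand-ins, and the routing and caching stages are left out for brevity.

```python
import re
from typing import Any, Callable, Dict, List

State = Dict[str, Any]
Stage = Callable[[State], State]

SYNONYMS = {"db": "database"}  # hypothetical synonym dictionary
CORPUS = [                     # hypothetical documents
    "Hybrid search combines dense vectors with BM25 keyword scores.",
    "Contact the maintainer at admin@example.com for database access.",
]
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def expand(state: State) -> State:
    """Query expansion: map each query word through the synonym table."""
    terms = [SYNONYMS.get(w, w) for w in state["query"].lower().split()]
    return {**state, "terms": terms}


def retrieve(state: State) -> State:
    """Retrieval stand-in: score documents by keyword overlap with the query."""
    terms = set(state["terms"])
    hits = []
    for doc in CORPUS:
        overlap = len(terms & set(re.findall(r"\w+", doc.lower())))
        if overlap:
            hits.append((overlap, doc))
    return {**state, "hits": hits}


def rerank(state: State) -> State:
    """Reranking stand-in: highest overlap first."""
    return {**state, "hits": sorted(state["hits"], key=lambda hit: -hit[0])}


def generate(state: State) -> State:
    """Generation stand-in: echo the top document instead of calling an LLM."""
    hits = state["hits"]
    return {**state, "answer": hits[0][1] if hits else "No relevant document found."}


def mask_pii(state: State) -> State:
    """Post-processing: mask email addresses in the answer."""
    return {**state, "answer": EMAIL.sub("[EMAIL]", state["answer"])}


def run_pipeline(query: str, stages: List[Stage]) -> State:
    state: State = {"query": query}
    for stage in stages:
        state = stage(state)
    return state


result = run_pipeline("db access", [expand, retrieve, rerank, generate, mask_pii])
print(result["answer"])  # Contact the maintainer at [EMAIL] for database access.
```

Swapping a component then amounts to passing a different function in the `stages` list, which mirrors the "Swappable" column of the table.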

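| Tier | Composition | Use case |
|---|---|---|
| Basic | Vector search + LLM | Simple document Q&A |
| Standard | + Hybrid search + Reranker | Services where search quality matters (recommended) |
| Advanced | + GraphRAG + Agent | Complex relational reasoning, tool execution |

Start with Basic and add blocks as you need them.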
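The tiers are cumulative: each one adds blocks on top of the previous tier. A minimal way to express that is shown below. The block identifiers and the `blocks_for` helper are hypothetical names for this sketch; only the tier contents come from the table.

```python
from typing import Dict, List

TIER_ORDER = ["basic", "standard", "advanced"]

# Blocks each tier adds on top of the one before it (identifiers are hypothetical).
TIER_ADDITIONS: Dict[str, List[str]] = {
    "basic": ["vector_search", "llm"],
    "standard": ["hybrid_search", "reranker"],
    "advanced": ["graph_rag", "agent"],
}


def blocks_for(tier: str) -> List[str]:
    """Return every block active at the given tier, including inherited ones."""
    if tier not in TIER_ORDER:
        raise ValueError(f"Unknown tier {tier!r}; expected one of {TIER_ORDER}")
    blocks: List[str] = []
    for name in TIER_ORDER[: TIER_ORDER.index(tier) + 1]:
        blocks.extend(TIER_ADDITIONS[name])
    return blocks


print(blocks_for("standard"))  # ['vector_search', 'llm', 'hybrid_search', 'reranker']
```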

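    make dev-reload # dev server (auto-reload)
    make test # run tests
    make lint # lint check
    make type-check # type check

MIT License

This project collects features that the PM of a RAG Chat Service wanted to implement while working on several projects.

It is designed so that people new to RAG can easily run a PoC and then scale it to production.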


Similar Open Source Tools

OneRAG

OneRAG is a production-ready RAG backend tool that allows users to replace components with a single line of configuration. It addresses common issues in RAG development by simplifying tasks such as changing Vector DB, replacing LLM, and adding functionalities like caching and reranking. Users can easily switch between different components using configuration files, making it suitable for both PoC and production environments.

z.ai2api_python

Z.AI2API Python is a lightweight OpenAI API proxy service that integrates seamlessly with existing applications. It supports the full functionality of GLM-4.5 series models and features high-performance streaming responses, enhanced tool invocation, support for thinking mode, integration with search models, Docker deployment, session isolation for privacy protection, flexible configuration via environment variables, and intelligent upstream model routing.

AIxVuln

AIxVuln is an automated vulnerability discovery and verification system based on large models (LLM) + function calling + Docker sandbox. The system manages 'projects' through a web UI/desktop client, automatically organizing multiple 'digital humans' for environment setup, code auditing, vulnerability verification, and report generation. It utilizes an isolated Docker environment for dependency installation, service startup, PoC verification, and evidence collection, ultimately producing downloadable vulnerability reports. The system has already discovered dozens of vulnerabilities in real open-source projects.

NeuroSploit

NeuroSploit v3 is an advanced security assessment platform that combines AI-driven autonomous agents with 100 vulnerability types, per-scan isolated Kali Linux containers, false-positive hardening, exploit chaining, and a modern React web interface with real-time monitoring. It offers features like 100 Vulnerability Types, Autonomous Agent with 3-stream parallel pentest, Per-Scan Kali Containers, Anti-Hallucination Pipeline, Exploit Chain Engine, WAF Detection & Bypass, Smart Strategy Adaptation, Multi-Provider LLM, Real-Time Dashboard, and Sandbox Dashboard. The tool is designed for authorized security testing purposes only, ensuring compliance with laws and regulations.

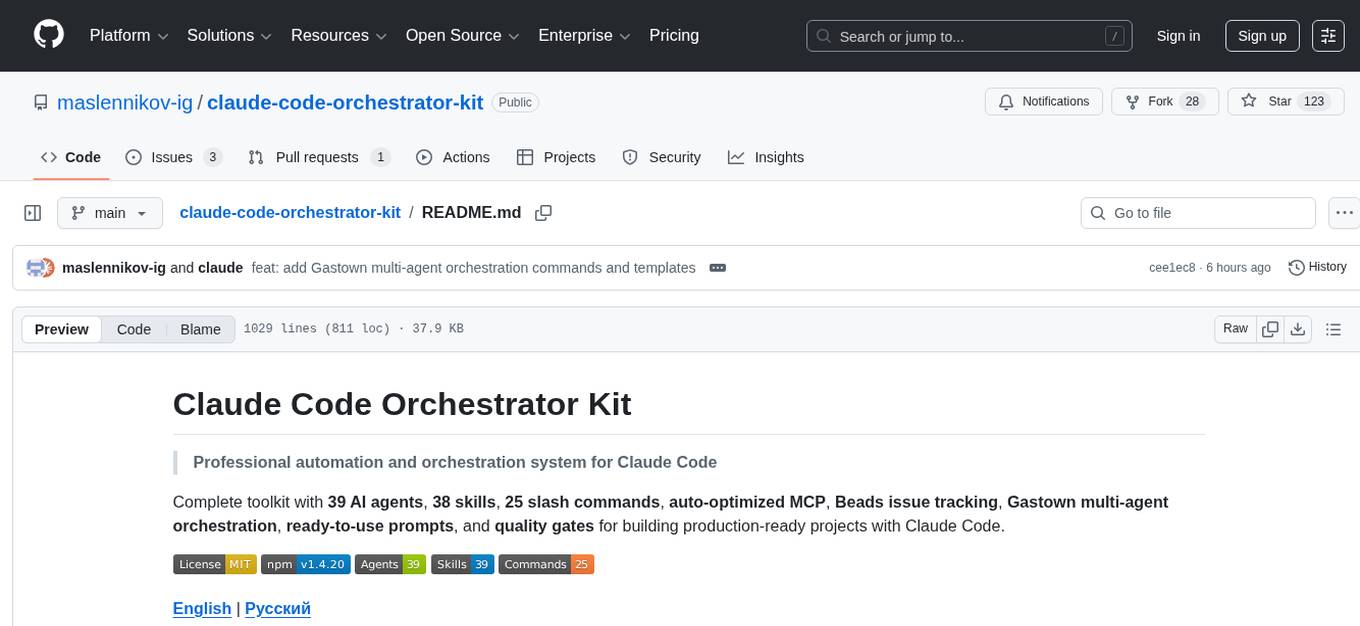

claude-code-orchestrator-kit

The Claude Code Orchestrator Kit is a professional automation and orchestration system for Claude Code, featuring 39 AI agents, 38 skills, 25 slash commands, auto-optimized MCP, Beads issue tracking, Gastown multi-agent orchestration, ready-to-use prompts, and quality gates. It transforms Claude Code into an intelligent orchestration system by delegating complex tasks to specialized sub-agents, preserving context and enabling indefinite work sessions.

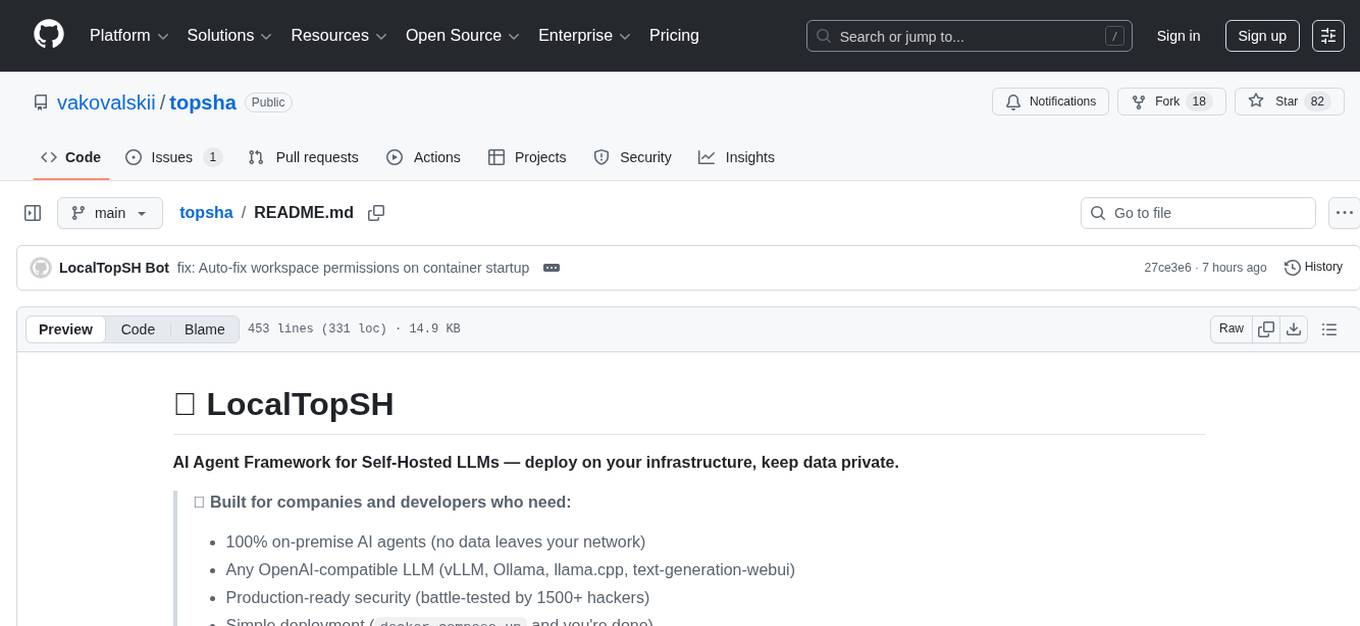

topsha

LocalTopSH is an AI Agent Framework designed for companies and developers who require 100% on-premise AI agents with data privacy. It supports various OpenAI-compatible LLM backends and offers production-ready security features. The framework allows simple deployment using Docker compose and ensures that data stays within the user's network, providing full control and compliance. With cost-effective scaling options and compatibility in regions with restrictions, LocalTopSH is a versatile solution for deploying AI agents on self-hosted infrastructure.

openclaw-mini

OpenClaw Mini is a simplified reproduction of the core architecture of OpenClaw, designed for learning system-level design of AI agents. It focuses on understanding the Agent Loop, session persistence, context management, long-term memory, skill systems, and active awakening. The project provides a minimal implementation to help users grasp the core design concepts of a production-level AI agent system.

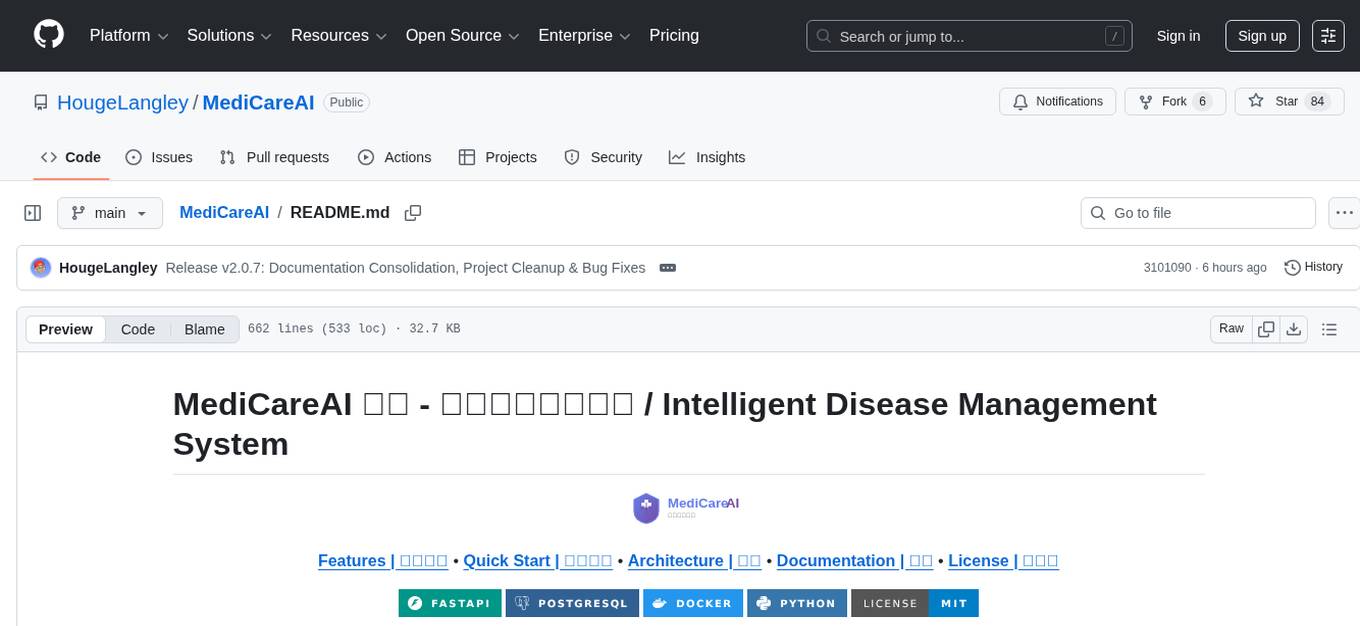

MediCareAI

MediCareAI is an intelligent disease management system powered by AI, designed for patient follow-up and disease tracking. It integrates medical guidelines, AI-powered diagnosis, and document processing to provide comprehensive healthcare support. The system includes features like user authentication, patient management, AI diagnosis, document processing, medical records management, knowledge base system, doctor collaboration platform, and admin system. It ensures privacy protection through automatic PII detection and cleaning for document sharing.

bizclaw

BizClaw is a fast AI Agent platform written entirely in Rust. It is a trait-driven architecture that can run anywhere from Raspberry Pi to cloud servers. It supports multiple LLM providers, communication channels, and tools through a unified and interchangeable architecture.

vllm-mlx

vLLM-MLX is a tool that brings native Apple Silicon GPU acceleration to vLLM by integrating Apple's ML framework with unified memory and Metal kernels. It offers optimized LLM inference with KV cache and quantization, vision-language models for multimodal inference, speech-to-text and text-to-speech with native voices, text embeddings for semantic search and RAG, and more. Users can benefit from features like multimodal support for text, image, video, and audio, native GPU acceleration on Apple Silicon, compatibility with OpenAI API, Anthropic Messages API, reasoning models extraction, integration with external tools via Model Context Protocol, memory-efficient caching, and high throughput for multiple concurrent users.

gin-vue-admin

Gin-vue-admin is a full-stack development platform based on Vue and Gin, integrating features like JWT authentication, dynamic routing, dynamic menus, Casbin authorization, form generator, code generator, etc. It provides various example files to help users focus more on business development. The project offers detailed documentation, video tutorials for setup and deployment, and a community for support and contributions. Users need a certain level of knowledge in Golang and Vue to work with this project. It is recommended to follow the Apache2.0 license if using the project for commercial purposes.

WenShape

WenShape is a context engineering system for creating long novels. It addresses the challenge of narrative consistency over thousands of words by using an orchestrated writing process, dynamic fact tracking, and precise token budget management. All project data is stored in YAML/Markdown/JSONL text format, naturally supporting Git version control.

vibium

Vibium is a browser automation infrastructure designed for AI agents, providing a single binary that manages browser lifecycle, WebDriver BiDi protocol, and an MCP server. It offers zero configuration, AI-native capabilities, and is lightweight with no runtime dependencies. It is suitable for AI agents, test automation, and any tasks requiring browser interaction.

tinyclaw

TinyClaw is a lightweight wrapper around Claude Code that connects WhatsApp via QR code, processes messages sequentially, maintains conversation context, runs 24/7 in tmux, and is ready for multi-channel support. Its key innovation is the file-based queue system that prevents race conditions and enables multi-channel support. TinyClaw consists of components like whatsapp-client.js for WhatsApp I/O, queue-processor.js for message processing, heartbeat-cron.sh for health checks, and tinyclaw.sh as the main orchestrator with a CLI interface. It ensures no race conditions, is multi-channel ready, provides clean responses using claude -c -p, and supports persistent sessions. Security measures include local storage of WhatsApp session and queue files, channel-specific authentication, and running Claude with user permissions.

ai-toolbox

AI Toolbox is a cross-platform desktop application designed to efficiently manage various AI programming assistant configurations. It supports Windows, macOS, and Linux. The tool provides visual management of OpenCode, Oh-My-OpenCode, Slim plugin configurations, Claude Code API supplier configurations, Codex CLI configurations, MCP server management, Skills management, WSL synchronization, AI supplier management, system tray for quick configuration switching, data backup, theme switching, multilingual support, and automatic update checks.

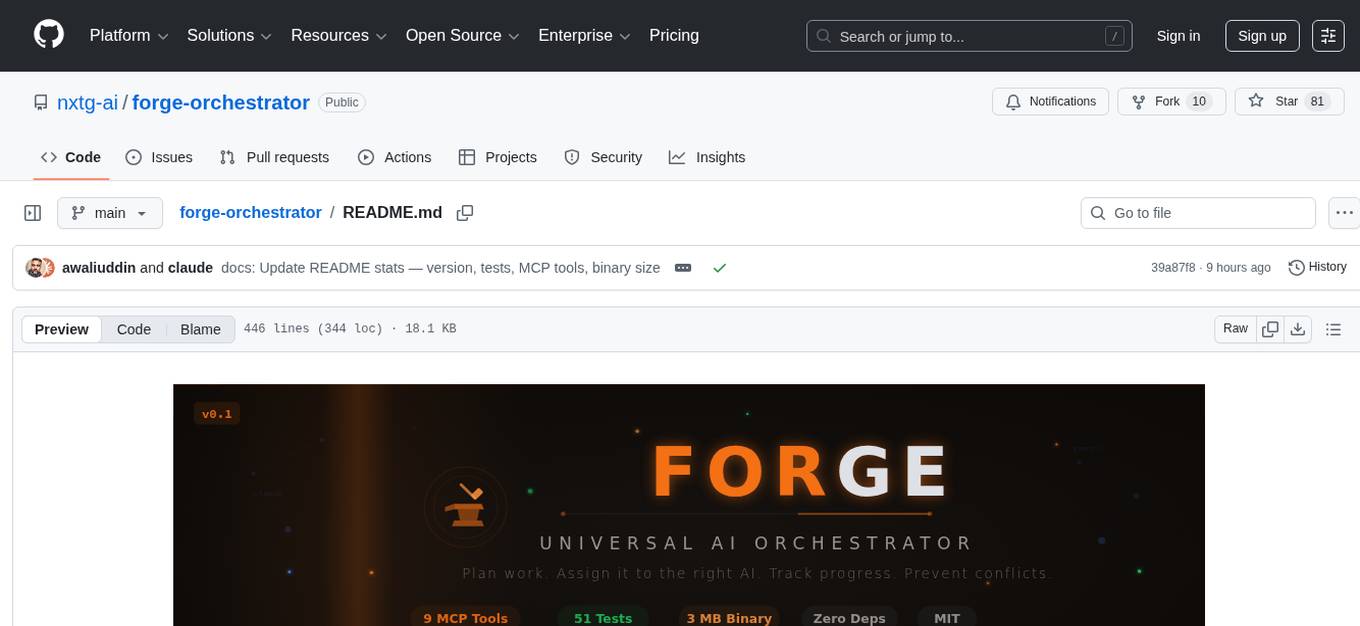

forge-orchestrator

Forge Orchestrator is a Rust CLI tool designed to coordinate and manage multiple AI tools seamlessly. It acts as a senior tech lead, preventing conflicts, capturing knowledge, and ensuring work aligns with specifications. With features like file locking, knowledge capture, and unified state management, Forge enhances collaboration and efficiency among AI tools. The tool offers a pluggable brain for intelligent decision-making and includes a Model Context Protocol server for real-time integration with AI tools. Forge is not a replacement for AI tools but a facilitator for making them work together effectively.
