kestra

Orchestrate everything — from scripts to data, infra, AI, and business — as code, with UI and AI Copilot. Simple. Fast. Scalable.

Stars: 21308

Kestra is an open-source event-driven orchestration platform that simplifies building scheduled and event-driven workflows. It offers Infrastructure as Code best practices for data, process, and microservice orchestration, allowing users to create reliable workflows using YAML configuration. Key features include everything as code with Git integration, event-driven and scheduled workflows, rich plugin ecosystem for data extraction and script running, intuitive UI with syntax highlighting, scalability for millions of workflows, version control friendly, and various features for structure and resilience. Kestra ensures declarative orchestration logic management even when workflows are modified via UI, API calls, or other methods.

README:

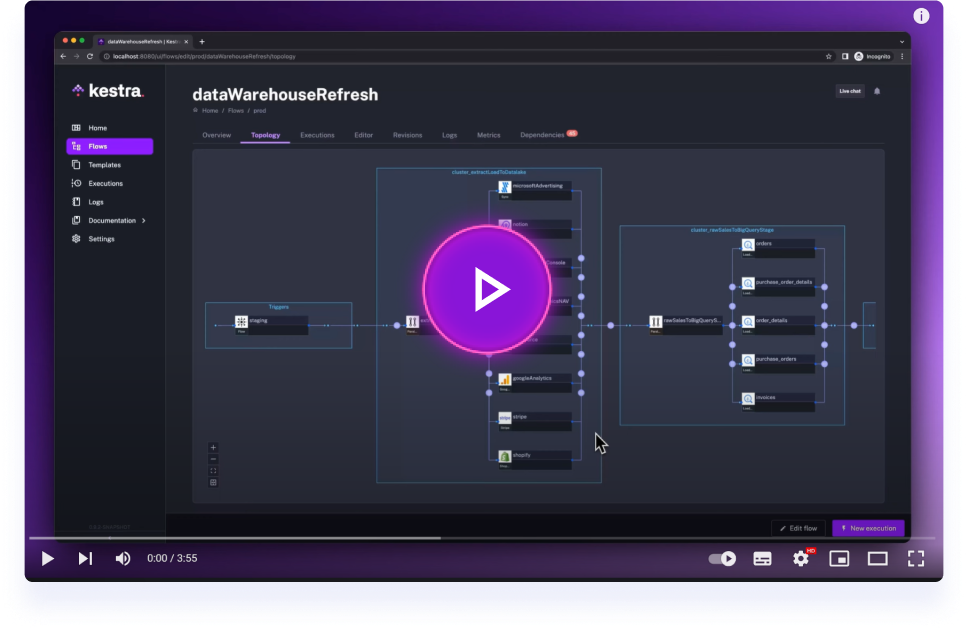

Click on the image to learn how to get started with Kestra in 3 minutes.

Kestra is an open-source, event-driven orchestration platform that makes both scheduled and event-driven workflows easy. By bringing Infrastructure as Code best practices to data, process, and microservice orchestration, you can build reliable workflows directly from the UI in just a few lines of YAML.

Key Features:

- Everything as Code and from the UI: keep workflows as code with a Git Version Control integration, even when building them from the UI.

-

Event-Driven & Scheduled Workflows: automate both scheduled and real-time event-driven workflows via a simple

triggerdefinition. - Declarative YAML Interface: define workflows using a simple configuration in the built-in code editor.

- Rich Plugin Ecosystem: hundreds of plugins built in to extract data from any database, cloud storage, or API, and run scripts in any language.

- Intuitive UI & Code Editor: build and visualize workflows directly from the UI with syntax highlighting, auto-completion and real-time syntax validation.

- Scalable: designed to handle millions of workflows, with high availability and fault tolerance.

- Version Control Friendly: write your workflows from the built-in code Editor and push them to your preferred Git branch directly from Kestra, enabling best practices with CI/CD pipelines and version control systems.

-

Structure & Resilience: tame chaos and bring resilience to your workflows with namespaces, labels, subflows, retries, timeout, error handling, inputs, outputs that generate artifacts in the UI, variables, conditional branching, advanced scheduling, event triggers, backfills, dynamic tasks, sequential and parallel tasks, and skip tasks or triggers when needed by setting the flag

disabledtotrue.

🧑💻 The YAML definition gets automatically adjusted any time you make changes to a workflow from the UI or via an API call. Therefore, the orchestration logic is always managed declaratively in code, even if you modify your workflows in other ways (UI, CI/CD, Terraform, API calls).

Make sure that Docker is running. Then, start Kestra in a single command:

docker run --pull=always --rm -it -p 8080:8080 --user=root \

-v /var/run/docker.sock:/var/run/docker.sock \

-v /tmp:/tmp kestra/kestra:latest server localIf you're on Windows and use PowerShell:

docker run --pull=always --rm -it -p 8080:8080 --user=root `

-v "/var/run/docker.sock:/var/run/docker.sock" `

-v "C:/Temp:/tmp" kestra/kestra:latest server localIf you're on Windows and use Command Prompt (CMD):

docker run --pull=always --rm -it -p 8080:8080 --user=root ^

-v "/var/run/docker.sock:/var/run/docker.sock" ^

-v "C:/Temp:/tmp" kestra/kestra:latest server localIf you're on Windows and use WSL (Linux-based environment in Windows):

docker run --pull=always --rm -it -p 8080:8080 --user=root \

-v "/var/run/docker.sock:/var/run/docker.sock" \

-v "C:/Temp:/tmp" kestra/kestra:latest server localCheck our Installation Guide for other deployment options (Docker Compose, Podman, Kubernetes, AWS, GCP, Azure, and more).

Access the Kestra UI at http://localhost:8080 and start building your first flow!

Create a new flow with the following content:

id: hello_world

namespace: dev

tasks:

- id: say_hello

type: io.kestra.plugin.core.log.Log

message: "Hello, World!"Run the flow and see the output in the UI!

Kestra's functionality is extended through a rich ecosystem of plugins that empower you to run tasks anywhere and code in any language, including Python, Node.js, R, Go, Shell, and more. Here's how Kestra plugins enhance your workflows:

-

Run Anywhere:

- Local or Remote Execution: Execute tasks on your local machine, remote servers via SSH, or scale out to serverless containers using Task Runners.

- Docker and Kubernetes Support: Seamlessly run Docker containers within your workflows or launch Kubernetes jobs to handle compute-intensive workloads.

-

Code in Any Language:

- Scripting Support: Write scripts in your preferred programming language. Kestra supports Python, Node.js, R, Go, Shell, and others, allowing you to integrate existing codebases and deployment patterns.

- Flexible Automation: Execute shell commands, run SQL queries against various databases, and make HTTP requests to interact with APIs.

-

Event-Driven and Real-Time Processing:

- Real-Time Triggers: React to events from external systems in real-time, such as file arrivals, new messages in message buses (Kafka, Redis, Pulsar, AMQP, MQTT, NATS, AWS SQS, Google Pub/Sub, Azure Event Hubs), and more.

- Custom Events: Define custom events to trigger flows based on specific conditions or external signals, enabling highly responsive workflows.

-

Cloud Integrations:

- AWS, Google Cloud, Azure: Integrate with a variety of cloud services to interact with storage solutions, messaging systems, compute resources, and more.

- Big Data Processing: Run big data processing tasks using tools like Apache Spark or interact with analytics platforms like Google BigQuery.

-

Monitoring and Notifications:

- Stay Informed: Send messages to Slack channels, email notifications, or trigger alerts in PagerDuty to keep your team updated on workflow statuses.

Kestra's plugin ecosystem is continually expanding, allowing you to tailor the platform to your specific needs. Whether you're orchestrating complex data pipelines, automating scripts across multiple environments, or integrating with cloud services, there's likely a plugin to assist. And if not, you can always build your own plugins to extend Kestra's capabilities.

🧑💻 Note: This is just a glimpse of what Kestra plugins can do. Explore the full list on our Plugins Page.

- Flows: the core unit in Kestra, representing a workflow composed of tasks.

- Tasks: individual units of work, such as running a script, moving data, or calling an API.

- Namespaces: logical grouping of flows for organization and isolation.

- Triggers: schedule or events that initiate the execution of flows.

- Inputs & Variables: parameters and dynamic data passed into flows and tasks.

Kestra provides an intuitive UI that allows you to interactively build and visualize your workflows:

- Drag-and-Drop Interface: add and rearrange tasks from the Topology Editor.

- Real-Time Validation: instant feedback on your workflow's syntax and structure to catch errors early.

- Auto-Completion: smart suggestions as you type to write flow code quickly and without syntax errors.

- Live Topology View: see your workflow as a Directed Acyclic Graph (DAG) that updates in real-time.

Create custom plugins to extend Kestra's capabilities. Check out our Plugin Developer Guide to get started.

- Version Control: store your flows in Git repositories.

- CI/CD Integration: automate deployment of flows using CI/CD pipelines.

- Terraform Provider: manage Kestra resources with the official Terraform provider.

Stay connected and get support:

- Slack: Join our Slack community to ask questions and share ideas.

- LinkedIn: Follow us on LinkedIn — next to Slack and GitHub, this is our main channel to share updates and product announcements.

- YouTube: Subscribe to our YouTube channel for educational video content. We publish new videos every week!

- X: Follow us on X if you're still active there.

We welcome contributions of all kinds!

- Report Issues: Found a bug or have a feature request? Open an issue on GitHub.

- Contribute Code: Check out our Contributor Guide for initial guidelines, and explore our good first issues for beginner-friendly tasks to tackle first.

- Develop Plugins: Build and share plugins using our Plugin Developer Guide.

- Contribute to our Docs: Contribute edits or updates to keep our documentation top-notch.

Kestra is licensed under the Apache 2.0 License © Kestra Technologies.

Give our repository a star to stay informed about the latest features and updates!

Thank you for considering Kestra for your workflow orchestration needs. We can't wait to see what you'll build!

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for kestra

Similar Open Source Tools

kestra

Kestra is an open-source event-driven orchestration platform that simplifies building scheduled and event-driven workflows. It offers Infrastructure as Code best practices for data, process, and microservice orchestration, allowing users to create reliable workflows using YAML configuration. Key features include everything as code with Git integration, event-driven and scheduled workflows, rich plugin ecosystem for data extraction and script running, intuitive UI with syntax highlighting, scalability for millions of workflows, version control friendly, and various features for structure and resilience. Kestra ensures declarative orchestration logic management even when workflows are modified via UI, API calls, or other methods.

Riona-AI-Agent

Riona-AI-Agent is a versatile AI chatbot designed to assist users in various tasks. It utilizes natural language processing and machine learning algorithms to understand user queries and provide accurate responses. The chatbot can be integrated into websites, applications, and messaging platforms to enhance user experience and streamline communication. With its customizable features and easy deployment, Riona-AI-Agent is suitable for businesses, developers, and individuals looking to automate customer support, provide information, and engage with users in a conversational manner.

ai-flow

AI Flow is an open-source, user-friendly UI application that empowers you to seamlessly connect multiple AI models together, specifically leveraging the capabilities of multiples AI APIs such as OpenAI, StabilityAI and Replicate. In a nutshell, AI Flow provides a visual platform for crafting and managing AI-driven workflows, thereby facilitating diverse and dynamic AI interactions.

TaskingAI

TaskingAI brings Firebase's simplicity to **AI-native app development**. The platform enables the creation of GPTs-like multi-tenant applications using a wide range of LLMs from various providers. It features distinct, modular functions such as Inference, Retrieval, Assistant, and Tool, seamlessly integrated to enhance the development process. TaskingAI’s cohesive design ensures an efficient, intelligent, and user-friendly experience in AI application development.

refact

This repository contains Refact WebUI for fine-tuning and self-hosting of code models, which can be used inside Refact plugins for code completion and chat. Users can fine-tune open-source code models, self-host them, download and upload Lloras, use models for code completion and chat inside Refact plugins, shard models, host multiple small models on one GPU, and connect GPT-models for chat using OpenAI and Anthropic keys. The repository provides a Docker container for running the self-hosted server and supports various models for completion, chat, and fine-tuning. Refact is free for individuals and small teams under the BSD-3-Clause license, with custom installation options available for GPU support. The community and support include contributing guidelines, GitHub issues for bugs, a community forum, Discord for chatting, and Twitter for product news and updates.

Zentara-Code

Zentara Code is an AI coding assistant for VS Code that turns chat instructions into precise, auditable changes in the codebase. It is optimized for speed, safety, and correctness through parallel execution, LSP semantics, and integrated runtime debugging. It offers features like parallel subagents, integrated LSP tools, and runtime debugging for efficient code modification and analysis.

orbit

ORBIT (Open Retrieval-Based Inference Toolkit) is a middleware platform that provides a unified API for AI inference. It acts as a central gateway, allowing you to connect various local and remote AI models with your private data sources like SQL databases, vector stores, and local files. ORBIT uses a flexible adapter architecture to connect your data to AI models, creating specialized 'agents' for specific tasks. It supports scenarios like Knowledge Base Q&A and Chat with Your SQL Database, enabling users to interact with AI models seamlessly. The tool offers a RESTful API for programmatic access and includes features like authentication, API key management, system prompts, health monitoring, and file management. ORBIT is designed to streamline AI inference tasks and facilitate interactions between users and AI models.

intellij-aicoder

AI Coding Assistant is a free and open-source IntelliJ plugin that leverages cutting-edge Language Model APIs to enhance developers' coding experience. It seamlessly integrates with various leading LLM APIs, offers an intuitive toolbar UI, and allows granular control over API requests. With features like Code & Patch Chat, Planning with AI Agents, Markdown visualization, and versatile text processing capabilities, this tool aims to streamline coding workflows and boost productivity.

open-webui

Open WebUI is an extensible, feature-rich, and user-friendly self-hosted WebUI designed to operate entirely offline. It supports various LLM runners, including Ollama and OpenAI-compatible APIs. For more information, be sure to check out our Open WebUI Documentation.

omniscient

Omniscient is an advanced AI Platform offered as a SaaS, empowering projects with cutting-edge artificial intelligence capabilities. Seamlessly integrating with Next.js 14, React, Typescript, and APIs like OpenAI and Replicate, it provides solutions for code generation, conversation simulation, image creation, music composition, and video generation.

curiso

Curiso AI is an infinite canvas platform that connects nodes and AI services to explore ideas without repetition. It empowers advanced users to unlock richer AI interactions. Features include multi OS support, infinite canvas, multiple AI provider integration, local AI inference provider integration, custom model support, model metrics, RAG support, local Transformers.js embedding models, inference parameters customization, multiple boards, vision model support, customizable interface, node-based conversations, and secure local encrypted storage. Curiso also offers a Solana token for exclusive access to premium features and enhanced AI capabilities.

chatnio

Chat Nio is a next-generation AIGC one-stop business solution that combines the advantages of frontend-oriented lightweight deployment projects with powerful API distribution systems. It offers rich model support, beautiful UI design, complete Markdown support, multi-theme support, internationalization support, text-to-image support, powerful conversation sync, model market & preset system, rich file parsing, full model internet search, Progressive Web App (PWA) support, comprehensive backend management, multiple billing methods, innovative model caching, and additional features. The project aims to address limitations in conversation synchronization, billing, file parsing, conversation URL sharing, channel management, and API call support found in existing AIGC commercial sites, while also providing a user-friendly interface design and C-end features.

Simplifine

Simplifine is an open-source library designed for easy LLM finetuning, enabling users to perform tasks such as supervised fine tuning, question-answer finetuning, contrastive loss for embedding tasks, multi-label classification finetuning, and more. It provides features like WandB logging, in-built evaluation tools, automated finetuning parameters, and state-of-the-art optimization techniques. The library offers bug fixes, new features, and documentation updates in its latest version. Users can install Simplifine via pip or directly from GitHub. The project welcomes contributors and provides comprehensive documentation and support for users.

weam

Weam is an open source platform designed to help teams systematically adopt AI. It provides a production-ready stack with Next.js frontend and Node.js/Python backend, allowing for immediate deployment and use. Weam connects to major LLM providers, enabling easy access to the latest AI models. The platform organizes AI interactions into 'Brains' for different departments, offering customization and expansion options. Features include chat system, productivity tools, sharing & access controls, prompt library, AI agents, RAG, MCP, enterprise features, pre-built automations, and upcoming AI app solutions. Weam is free, open source, and scalable to meet growing needs.

Open-WebUI-Functions

Open-WebUI-Functions is a collection of Python-based functions that extend Open WebUI with custom pipelines, filters, and integrations. Users can interact with AI models, process data efficiently, and customize the Open WebUI experience. It includes features like custom pipelines, data processing filters, Azure AI support, N8N workflow integration, flexible configuration, secure API key management, and support for both streaming and non-streaming processing. The functions require an active Open WebUI instance, may need external AI services like Azure AI, and admin access for installation. Security features include automatic encryption of sensitive information like API keys. Pipelines include Azure AI Foundry, N8N, Infomaniak, and Google Gemini. Filters like Time Token Tracker measure response time and token usage. Integrations with Azure AI, N8N, Infomaniak, and Google are supported. Contributions are welcome, and the project is licensed under Apache License 2.0.

deepnote

Deepnote is a data notebook tool designed for the AI era, used by over 500,000 data professionals at companies like Estée Lauder, SoundCloud, Statsig, and Gusto. It offers a human-readable format, block-based architecture, reactive notebook execution, and effortless conversion between .ipynb and .deepnote formats. Deepnote extends Jupyter with features like native AI agent, Git integration, cloud compute, and native database & API connections. The repository contains reusable packages and libraries for Deepnote's notebook, runtime, and collaboration features.

For similar tasks

aiida-core

AiiDA (www.aiida.net) is a workflow manager for computational science with a strong focus on provenance, performance and extensibility. **Features** * **Workflows:** Write complex, auto-documenting workflows in python, linked to arbitrary executables on local and remote computers. The event-based workflow engine supports tens of thousands of processes per hour with full checkpointing. * **Data provenance:** Automatically track inputs, outputs & metadata of all calculations in a provenance graph for full reproducibility. Perform fast queries on graphs containing millions of nodes. * **HPC interface:** Move your calculations to a different computer by changing one line of code. AiiDA is compatible with schedulers like SLURM, PBS Pro, torque, SGE or LSF out of the box. * **Plugin interface:** Extend AiiDA with plugins for new simulation codes (input generation & parsing), data types, schedulers, transport modes and more. * **Open Science:** Export subsets of your provenance graph and share them with peers or make them available online for everyone on the Materials Cloud. * **Open source:** AiiDA is released under the MIT open source license

domino

Domino is an open source workflow management platform that provides an intuitive GUI for creating, editing, and monitoring workflows. It also offers a standard way of writing and publishing functional pieces that can be reused in multiple workflows. Domino is powered by Apache Airflow for top-tier workflows scheduling and monitoring.

anything

Anything is an open automation tool built in Rust that aims to rebuild Zapier, enabling local AI to perform a wide range of tasks beyond chat functionalities. The tool focuses on extensibility without sacrificing understandability, allowing users to create custom extensions in Rust or other interpreted languages like Python or Typescript. It features an embedded SQLite DB, a WYSIWYG editor, event system, cron trigger, HTTP and CLI extensions, with plans for additional extensions like Deno, Python, and Local AI. The tool is designed to be user-friendly, with a file-first state approach, portable triggers, actions, and flows, and a human-centric file and folder naming convention. It does not require Docker, making it easy to run on low-powered devices for 24/7 self-hosting. The event processing is focused on simplicity and visibility, with extensibility through custom extensions and a marketplace for templates, actions, and triggers.

FlowTest

FlowTestAI is the world’s first GenAI powered OpenSource Integrated Development Environment (IDE) designed for crafting, visualizing, and managing API-first workflows. It operates as a desktop app, interacting with the local file system, ensuring privacy and enabling collaboration via version control systems. The platform offers platform-specific binaries for macOS, with versions for Windows and Linux in development. It also features a CLI for running API workflows from the command line interface, facilitating automation and CI/CD processes.

Geoweaver

Geoweaver is an in-browser software that enables users to easily compose and execute full-stack data processing workflows using online spatial data facilities, high-performance computation platforms, and open-source deep learning libraries. It provides server management, code repository, workflow orchestration software, and history recording capabilities. Users can run it from both local and remote machines. Geoweaver aims to make data processing workflows manageable for non-coder scientists and preserve model run history. It offers features like progress storage, organization, SSH connection to external servers, and a web UI with Python support.

AIBotPublic

AIBotPublic is an open-source version of AIBotPro, a comprehensive AI tool that provides various features such as knowledge base construction, AI drawing, API hosting, and more. It supports custom plugins and parallel processing of multiple files. The tool is built using bootstrap4 for the frontend, .NET6.0 for the backend, and utilizes technologies like SqlServer, Redis, and Milvus for database and vector database functionalities. It integrates third-party dependencies like Baidu AI OCR, Milvus C# SDK, Google Search, and more to enhance its capabilities.

agentok

Agentok Studio is a visual tool built for AutoGen, a cutting-edge agent framework from Microsoft and various contributors. It offers intuitive visual tools to simplify the construction and management of complex agent-based workflows. Users can create workflows visually as graphs, chat with agents, and share flow templates. The tool is designed to streamline the development process for creators and developers working on next-generation Multi-Agent Applications.

gradient-cli

Gradient CLI is a tool designed to facilitate the end-to-end MLOps process, allowing individuals and organizations to develop, train, and deploy Deep Learning models efficiently. It supports various ML/DL frameworks and provides features such as 1-click Jupyter Notebooks, scalable model training workflows, and model deployment as API endpoints. The tool can run on different infrastructures like AWS, GCP, on-premise, and Paperspace GPUs, offering automatic versioning, distributed training, hyperparameter search, and more.

For similar jobs

serverless-pdf-chat

The serverless-pdf-chat repository contains a sample application that allows users to ask natural language questions of any PDF document they upload. It leverages serverless services like Amazon Bedrock, AWS Lambda, and Amazon DynamoDB to provide text generation and analysis capabilities. The application architecture involves uploading a PDF document to an S3 bucket, extracting metadata, converting text to vectors, and using a LangChain to search for information related to user prompts. The application is not intended for production use and serves as a demonstration and educational tool.

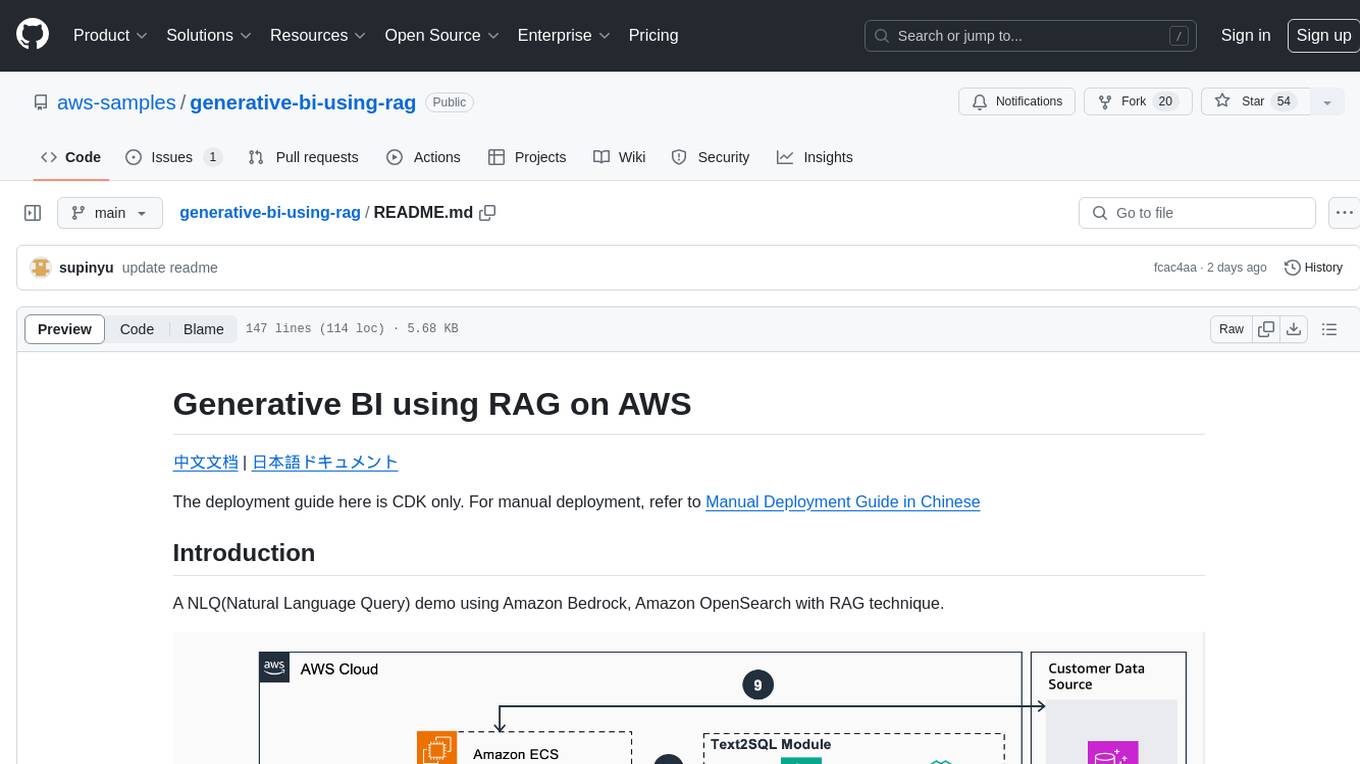

generative-bi-using-rag

Generative BI using RAG on AWS is a comprehensive framework designed to enable Generative BI capabilities on customized data sources hosted on AWS. It offers features such as Text-to-SQL functionality for querying data sources using natural language, user-friendly interface for managing data sources, performance enhancement through historical question-answer ranking, and entity recognition. It also allows customization of business information, handling complex attribution analysis problems, and provides an intuitive question-answering UI with a conversational approach for complex queries.

azure-functions-openai-extension

Azure Functions OpenAI Extension is a project that adds support for OpenAI LLM (GPT-3.5-turbo, GPT-4) bindings in Azure Functions. It provides NuGet packages for various functionalities like text completions, chat completions, assistants, embeddings generators, and semantic search. The project requires .NET 6 SDK or greater, Azure Functions Core Tools v4.x, and specific settings in Azure Function or local settings for development. It offers features like text completions, chat completion, assistants with custom skills, embeddings generators for text relatedness, and semantic search using vector databases. The project also includes examples in C# and Python for different functionalities.

edge2ai-workshop

The edge2ai-workshop repository provides a hands-on workshop for building an IoT Predictive Maintenance workflow. It includes lab exercises for setting up components like NiFi, Streams Processing, Data Visualization, and more on a single host. The repository also covers use cases such as credit card fraud detection. Users can follow detailed instructions, prerequisites, and connectivity guidelines to connect to their cluster and explore various services. Additionally, troubleshooting tips are provided for common issues like MiNiFi not sending messages or CEM not picking up new NARs.

cb-tumblebug

CB-Tumblebug (CB-TB) is a system for managing multi-cloud infrastructure consisting of resources from multiple cloud service providers. It provides an overview, features, and architecture. The tool supports various cloud providers and resource types, with ongoing development and localization efforts. Users can deploy a multi-cloud infra with GPUs, enjoy multiple LLMs in parallel, and utilize LLM-related scripts. The tool requires Linux, Docker, Docker Compose, and Golang for building the source. Users can run CB-TB with Docker Compose or from the Makefile, set up prerequisites, contribute to the project, and view a list of contributors. The tool is licensed under an open-source license.

yu-picture

The 'yu-picture' project is an educational project that provides complete video tutorials, text tutorials, resume writing, interview question solutions, and Q&A services to help you improve your project skills and enhance your resume. It is an enterprise-level intelligent collaborative cloud image library platform based on Vue 3 + Spring Boot + COS + WebSocket. The platform has a wide range of applications, including public image uploading and retrieval, image analysis for administrators, private image management for individual users, and real-time collaborative image editing for enterprises. The project covers file management, content retrieval, permission control, and real-time collaboration, using various programming concepts, architectural design methods, and optimization strategies to ensure high-speed iteration and stable operation.

AmazonSageMakerCourse

Amazon SageMaker Course is a comprehensive guide for AWS Certified Machine Learning Specialty (MLS-C01) that covers training, optimizing, deploying, and integrating machine learning models in the AWS cloud. The course includes hands-on experience with AWS built-in algorithms, Bring Your Own models, and ready-to-use AI capabilities. It also provides a complete guide to AWS Certified Machine Learning – Specialty certification, along with a high-quality timed practice test. Participants will learn how to integrate trained models into their applications and receive prompt support through the course Q&A forum and private messaging.

aimeos-headless

Aimeos headless distribution is an ultra-fast, cloud-native, and API-first headless ecommerce solution for Laravel. It offers a full-featured e-commerce package with features like JSON REST API, GraphQL API, multi-vendor support, subscriptions, block/tier pricing, admin backend, and more. The distribution is highly customizable, extensible, and suitable for multi-tenant e-commerce SaaS solutions. It supports multiple languages, AI-based text translation, and provides secure and high-quality source code. Aimeos is designed for AWS, Google, Azure, and Kubernetes based clouds, and can handle a wide range of products efficiently.