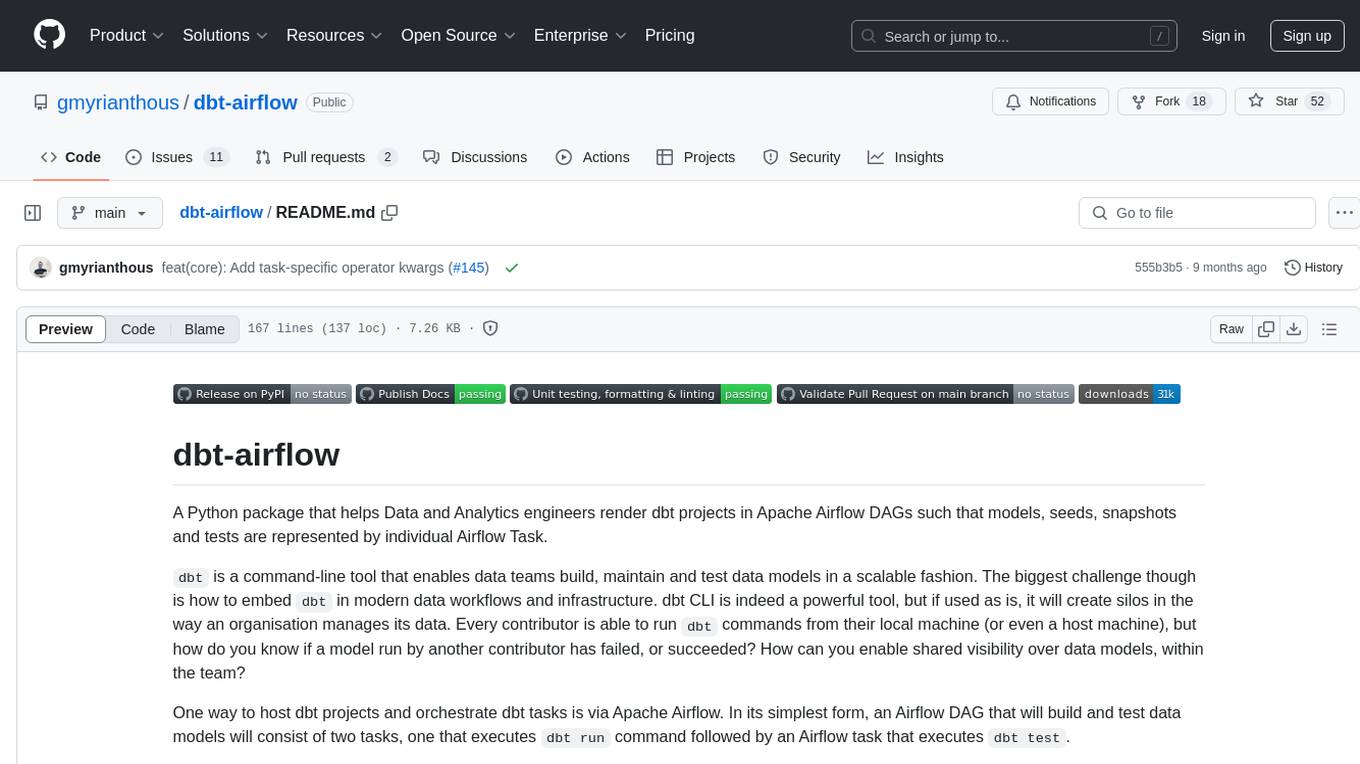

dbt-airflow

A Python package that creates fine-grained dbt tasks on Apache Airflow

Stars: 52

A Python package that helps Data and Analytics engineers render dbt projects in Apache Airflow DAGs. It enables teams to automatically render their dbt projects in a granular level, creating individual Airflow tasks for every model, seed, snapshot, and test within the dbt project. This allows for full control at the task-level, improving visibility and management of data models within the team.

README:

A Python package that helps Data and Analytics engineers render dbt projects in Apache Airflow DAGs such that models, seeds, snapshots and tests are represented by individual Airflow Task.

dbt is a command-line tool that enables data teams build, maintain and test data models in a scalable fashion. The

biggest challenge though is how to embed dbt in modern data workflows and infrastructure. dbt CLI is indeed a powerful

tool, but if used as is, it will create silos in the way an organisation manages its data. Every contributor is able to

run dbt commands from their local machine (or even a host machine), but how do you know if a model run by another

contributor has failed, or succeeded? How can you enable shared visibility over data models, within the team?

One way to host dbt projects and orchestrate dbt tasks is via Apache Airflow. In its simplest form, an Airflow DAG

that will build and test data models will consist of two tasks, one that executes dbt run command followed by an

Airflow task that executes dbt test.

But what happens when model builds or tests fail? Should we re-run the whole dbt project (that could involve hundreds of different models and/or tests) just to run a single model we've just fixed? This doesn't seem to be a good practice since re-running the whole project will be time-consuming and expensive.

A potential solution to this problem is to create individual Airflow tasks for every model, seed, snapshot and test within the dbt project. If we were about to do this work manually, we would have to put huge effort that would also be prone to errors. Additionally, it would beat the purpose of dbt, that among other features, it also automates model dependency management.

dbt-airflow is a package that builds a layer in-between Apache Airflow and dbt, and enables teams to automatically

render their dbt projects in a granular level such that they have full control to individual dbt resource types. Every

dbt model, seed, snapshot or test will have its own Airflow Task so that you can perform any action at a task-level.

Here's how the popular Jaffle Shop dbt project will be rendered on Apache Airflow via dbt-airflow:

- Render a

dbtproject as aTaskGroupconsisting of Airflow Tasks that correspond to dbt models, seeds, snapshots and tests - Every

model,seedandsnapshotresource that has at least a single test, will also have a corresponding test task as a downstream task - Add tasks before or after the whole dbt project

- Introduce extra tasks within the dbt project tasks and specify any downstream or upstream dependencies

- Create sub-

TaskGroups of dbt Airflow tasks based on your project's folder structure

The library essentially builds on top of the metadata generated by dbt-core and are stored in

the target/manifest.json file in your dbt project directory. This means that you first need to compile (or run

any other dbt command that creates the manifest file) before creating your Airflow DAG. This means the dbt-airflow

package expects that you have already compiled your dbt project so that an up to date manifest file can then be used

to render the individual tasks.

The package is available on PyPI and can be installed through pip:

pip install dbt-airflowdbt needs to connect to your target environment (database, warehouse etc.) and in order to do so, it makes use of

different adapters, each dedicated to a different technology (such as Postgres or BigQuery). Therefore, before running

dbt-airflow you also need to ensure that the required adapter(s) are installed in your environment.

For the full list of available adapters please refer to the official dbt documentation.

from datetime import datetime, timedelta

from pathlib import Path

from airflow import DAG

from airflow.operators.python import PythonOperator

from airflow.operators.empty import EmptyOperator

from dbt_airflow.core.config import DbtAirflowConfig, DbtProjectConfig, DbtProfileConfig

from dbt_airflow.core.task_group import DbtTaskGroup

from dbt_airflow.core.task import ExtraTask

from dbt_airflow.operators.execution import ExecutionOperator

with DAG(

dag_id='test_dag',

start_date=datetime(2021, 1, 1),

catchup=False,

tags=['example'],

default_args={

'owner': 'airflow',

'retries': 1,

'retry_delay': timedelta(minutes=2),

},

) as dag:

extra_tasks = [

ExtraTask(

task_id='test_task',

operator=PythonOperator,

operator_args={

'python_callable': lambda: print('Hello world'),

},

upstream_task_ids={

'model.example_dbt_project.int_customers_per_store',

'model.example_dbt_project.int_revenue_by_date',

},

),

ExtraTask(

task_id='another_test_task',

operator=PythonOperator,

operator_args={

'python_callable': lambda: print('Hello world 2!'),

},

upstream_task_ids={

'test.example_dbt_project.int_customers_per_store',

},

downstream_task_ids={

'snapshot.example_dbt_project.int_customers_per_store_snapshot',

},

),

ExtraTask(

task_id='test_task_3',

operator=PythonOperator,

operator_args={

'python_callable': lambda: print('Hello world 3!'),

},

downstream_task_ids={

'snapshot.example_dbt_project.int_customers_per_store_snapshot',

},

upstream_task_ids={

'model.example_dbt_project.int_revenue_by_date',

},

),

]

t1 = EmptyOperator(task_id='dummy_1')

t2 = EmptyOperator(task_id='dummy_2')

tg = DbtTaskGroup(

group_id='dbt-company',

dbt_project_config=DbtProjectConfig(

project_path=Path('/opt/airflow/example_dbt_project/'),

manifest_path=Path('/opt/airflow/example_dbt_project/target/manifest.json'),

),

dbt_profile_config=DbtProfileConfig(

profiles_path=Path('/opt/airflow/example_dbt_project/profiles'),

target='dev',

),

dbt_airflow_config=DbtAirflowConfig(

extra_tasks=extra_tasks,

execution_operator=ExecutionOperator.BASH,

test_tasks_operator_kwargs={'retries': 0},

),

)

t1 >> tg >> t2For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for dbt-airflow

Similar Open Source Tools

dbt-airflow

A Python package that helps Data and Analytics engineers render dbt projects in Apache Airflow DAGs. It enables teams to automatically render their dbt projects in a granular level, creating individual Airflow tasks for every model, seed, snapshot, and test within the dbt project. This allows for full control at the task-level, improving visibility and management of data models within the team.

neuron-ai

Neuron AI is a PHP framework that provides an Agent class for creating fully functional agents to perform tasks like analyzing text for SEO optimization. The framework manages advanced mechanisms such as memory, tools, and function calls. Users can extend the Agent class to create custom agents and interact with them to get responses based on the underlying LLM. Neuron AI aims to simplify the development of AI-powered applications by offering a structured framework with documentation and guidelines for contributions under the MIT license.

neuron-ai

Neuron is a PHP framework for creating and orchestrating AI Agents, providing tools for the entire agentic application development lifecycle. It allows integration of AI entities in existing PHP applications with a powerful and flexible architecture. Neuron offers tutorials and educational content to help users get started using AI Agents in their projects. The framework supports various LLM providers, tools, and toolkits, enabling users to create fully functional agents for tasks like data analysis, chatbots, and structured output. Neuron also facilitates monitoring and debugging of AI applications, ensuring control over agent behavior and decision-making processes.

mosec

Mosec is a high-performance and flexible model serving framework for building ML model-enabled backend and microservices. It bridges the gap between any machine learning models you just trained and the efficient online service API. * **Highly performant** : web layer and task coordination built with Rust 🦀, which offers blazing speed in addition to efficient CPU utilization powered by async I/O * **Ease of use** : user interface purely in Python 🐍, by which users can serve their models in an ML framework-agnostic manner using the same code as they do for offline testing * **Dynamic batching** : aggregate requests from different users for batched inference and distribute results back * **Pipelined stages** : spawn multiple processes for pipelined stages to handle CPU/GPU/IO mixed workloads * **Cloud friendly** : designed to run in the cloud, with the model warmup, graceful shutdown, and Prometheus monitoring metrics, easily managed by Kubernetes or any container orchestration systems * **Do one thing well** : focus on the online serving part, users can pay attention to the model optimization and business logic

matsciml

The Open MatSci ML Toolkit is a flexible framework for machine learning in materials science. It provides a unified interface to a variety of materials science datasets, as well as a set of tools for data preprocessing, model training, and evaluation. The toolkit is designed to be easy to use for both beginners and experienced researchers, and it can be used to train models for a wide range of tasks, including property prediction, materials discovery, and materials design.

kafka-ml

Kafka-ML is a framework designed to manage the pipeline of Tensorflow/Keras and PyTorch machine learning models on Kubernetes. It enables the design, training, and inference of ML models with datasets fed through Apache Kafka, connecting them directly to data streams like those from IoT devices. The Web UI allows easy definition of ML models without external libraries, catering to both experts and non-experts in ML/AI.

airflow-ai-sdk

This repository contains an SDK for working with LLMs from Apache Airflow, based on Pydantic AI. It allows users to call LLMs and orchestrate agent calls directly within their Airflow pipelines using decorator-based tasks. The SDK leverages the familiar Airflow `@task` syntax with extensions like `@task.llm`, `@task.llm_branch`, and `@task.agent`. Users can define tasks that call language models, orchestrate multi-step AI reasoning, change the control flow of a DAG based on LLM output, and support various models in the Pydantic AI library. The SDK is designed to integrate LLM workflows into Airflow pipelines, from simple LLM calls to complex agentic workflows.

lhotse

Lhotse is a Python library designed to make speech and audio data preparation flexible and accessible. It aims to attract a wider community to speech processing tasks by providing a Python-centric design and an expressive command-line interface. Lhotse offers standard data preparation recipes, PyTorch Dataset classes for speech tasks, and efficient data preparation for model training with audio cuts. It supports data augmentation, feature extraction, and feature-space cut mixing. The tool extends Kaldi's data preparation recipes with seamless PyTorch integration, human-readable text manifests, and convenient Python classes.

web-llm

WebLLM is a modular and customizable javascript package that directly brings language model chats directly onto web browsers with hardware acceleration. Everything runs inside the browser with no server support and is accelerated with WebGPU. WebLLM is fully compatible with OpenAI API. That is, you can use the same OpenAI API on any open source models locally, with functionalities including json-mode, function-calling, streaming, etc. We can bring a lot of fun opportunities to build AI assistants for everyone and enable privacy while enjoying GPU acceleration.

agentscript

AgentScript is an open-source framework for building AI agents that think in code. It prompts a language model to generate JavaScript code, which is then executed in a dedicated runtime with resumability, state persistence, and interactivity. The framework allows for abstract task execution without needing to know all the data beforehand, making it flexible and efficient. AgentScript supports tools, deterministic functions, and LLM-enabled functions, enabling dynamic data processing and decision-making. It also provides state management and human-in-the-loop capabilities, allowing for pausing, serialization, and resumption of execution.

Trace

Trace is a new AutoDiff-like tool for training AI systems end-to-end with general feedback. It generalizes the back-propagation algorithm by capturing and propagating an AI system's execution trace. Implemented as a PyTorch-like Python library, users can write Python code directly and use Trace primitives to optimize certain parts, similar to training neural networks.

verifiers

Verifiers is a library of modular components for creating RL environments and training LLM agents. It includes an async GRPO implementation built around the `transformers` Trainer, is supported by `prime-rl` for large-scale FSDP training, and can easily be integrated into any RL framework which exposes an OpenAI-compatible inference client. The library provides tools for creating and evaluating RL environments, training LLM agents, and leveraging OpenAI-compatible models for various tasks. Verifiers aims to be a reliable toolkit for building on top of, minimizing fork proliferation in the RL infrastructure ecosystem.

axar

AXAR AI is a lightweight framework designed for building production-ready agentic applications using TypeScript. It aims to simplify the process of creating robust, production-grade LLM-powered apps by focusing on familiar coding practices without unnecessary abstractions or steep learning curves. The framework provides structured, typed inputs and outputs, familiar and intuitive patterns like dependency injection and decorators, explicit control over agent behavior, real-time logging and monitoring tools, minimalistic design with little overhead, model agnostic compatibility with various AI models, and streamed outputs for fast and accurate results. AXAR AI is ideal for developers working on real-world AI applications who want a tool that gets out of the way and allows them to focus on shipping reliable software.

POPPER

Popper is an agentic framework for automated validation of free-form hypotheses using Large Language Models (LLMs). It follows Karl Popper's principle of falsification and designs falsification experiments to validate hypotheses. Popper ensures strict Type-I error control and actively gathers evidence from diverse observations. It delivers robust error control, high power, and scalability across various domains like biology, economics, and sociology. Compared to human scientists, Popper achieves comparable performance in validating complex biological hypotheses while reducing time by 10 folds, providing a scalable, rigorous solution for hypothesis validation.

artkit

ARTKIT is a Python framework developed by BCG X for automating prompt-based testing and evaluation of Gen AI applications. It allows users to develop automated end-to-end testing and evaluation pipelines for Gen AI systems, supporting multi-turn conversations and various testing scenarios like Q&A accuracy, brand values, equitability, safety, and security. The framework provides a simple API, asynchronous processing, caching, model agnostic support, end-to-end pipelines, multi-turn conversations, robust data flows, and visualizations. ARTKIT is designed for customization by data scientists and engineers to enhance human-in-the-loop testing and evaluation, emphasizing the importance of tailored testing for each Gen AI use case.

sieves

sieves is a library for zero- and few-shot NLP tasks with structured generation, enabling rapid prototyping of NLP applications without the need for training. It simplifies NLP prototyping by bundling capabilities into a single library, providing zero- and few-shot model support, a unified interface for structured generation, built-in tasks for common NLP operations, easy extendability, document-based pipeline architecture, caching to prevent redundant model calls, and more. The tool draws inspiration from spaCy and spacy-llm, offering features like immediate inference, observable pipelines, integrated tools for document parsing and text chunking, ready-to-use tasks such as classification, summarization, translation, and more, persistence for saving and loading pipelines, distillation for specialized model creation, and caching to optimize performance.

For similar tasks

dbt-airflow

A Python package that helps Data and Analytics engineers render dbt projects in Apache Airflow DAGs. It enables teams to automatically render their dbt projects in a granular level, creating individual Airflow tasks for every model, seed, snapshot, and test within the dbt project. This allows for full control at the task-level, improving visibility and management of data models within the team.

airflow-provider-great-expectations

The 'airflow-provider-great-expectations' repository contains a set of Airflow operators for Great Expectations, a Python library used for testing and validating data. The operators enable users to run Great Expectations validations and checks within Apache Airflow workflows. The package requires Airflow 2.1.0+ and Great Expectations >=v0.13.9. It provides functionalities to work with Great Expectations V3 Batch Request API, Checkpoints, and allows passing kwargs to Checkpoints at runtime. The repository includes modules for a base operator and examples of DAGs with sample tasks demonstrating the operator's functionality.

blades

Blades is a multimodal AI Agent framework in Go, supporting custom models, tools, memory, middleware, and more. It is well-suited for multi-turn conversations, chain reasoning, and structured output. The framework provides core components like Agent, Prompt, Chain, ModelProvider, Tool, Memory, and Middleware, enabling developers to build intelligent applications with flexible configuration and high extensibility. Blades leverages the characteristics of Go to achieve high decoupling and efficiency, making it easy to integrate different language model services and external tools. The project is in its early stages, inviting Go developers and AI enthusiasts to contribute and explore the possibilities of building AI applications in Go.

flyte-sdk

Flyte 2 SDK is a pure Python tool for type-safe, distributed orchestration of agents, ML pipelines, and more. It allows users to write data pipelines, ML training jobs, and distributed compute in Python without any DSL constraints. With features like async-first parallelism and fine-grained observability, Flyte 2 offers a seamless workflow experience. Users can leverage core concepts like TaskEnvironments for container configuration, pure Python workflows for flexibility, and async parallelism for distributed execution. Advanced features include sub-task observability with tracing and remote task execution. The tool also provides native Jupyter integration for running and monitoring workflows directly from notebooks. Configuration and deployment are made easy with configuration files and commands for deploying and running workflows. Flyte 2 is licensed under the Apache 2.0 License.

open-computer-use

Open Computer Use is an open-source platform that enables AI agents to control computers through browser automation, terminal access, and desktop interaction. It is designed for developers to create autonomous AI workflows. The platform allows agents to browse the web, run terminal commands, control desktop applications, orchestrate multi-agents, stream execution, and is 100% open-source and self-hostable. It provides capabilities similar to Anthropic's Claude Computer Use but is fully open-source and extensible.

For similar jobs

dbt-airflow

A Python package that helps Data and Analytics engineers render dbt projects in Apache Airflow DAGs. It enables teams to automatically render their dbt projects in a granular level, creating individual Airflow tasks for every model, seed, snapshot, and test within the dbt project. This allows for full control at the task-level, improving visibility and management of data models within the team.

lollms-webui

LoLLMs WebUI (Lord of Large Language Multimodal Systems: One tool to rule them all) is a user-friendly interface to access and utilize various LLM (Large Language Models) and other AI models for a wide range of tasks. With over 500 AI expert conditionings across diverse domains and more than 2500 fine tuned models over multiple domains, LoLLMs WebUI provides an immediate resource for any problem, from car repair to coding assistance, legal matters, medical diagnosis, entertainment, and more. The easy-to-use UI with light and dark mode options, integration with GitHub repository, support for different personalities, and features like thumb up/down rating, copy, edit, and remove messages, local database storage, search, export, and delete multiple discussions, make LoLLMs WebUI a powerful and versatile tool.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

minio

MinIO is a High Performance Object Storage released under GNU Affero General Public License v3.0. It is API compatible with Amazon S3 cloud storage service. Use MinIO to build high performance infrastructure for machine learning, analytics and application data workloads.

mage-ai

Mage is an open-source data pipeline tool for transforming and integrating data. It offers an easy developer experience, engineering best practices built-in, and data as a first-class citizen. Mage makes it easy to build, preview, and launch data pipelines, and provides observability and scaling capabilities. It supports data integrations, streaming pipelines, and dbt integration.

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

airbyte

Airbyte is an open-source data integration platform that makes it easy to move data from any source to any destination. With Airbyte, you can build and manage data pipelines without writing any code. Airbyte provides a library of pre-built connectors that make it easy to connect to popular data sources and destinations. You can also create your own connectors using Airbyte's no-code Connector Builder or low-code CDK. Airbyte is used by data engineers and analysts at companies of all sizes to build and manage their data pipelines.