ML-Bench

The Official Repo of ML-Bench: Evaluating Large Language Models and Agents for Machine Learning Tasks on Repository-Level Code (https://arxiv.org/abs/2311.09835)

Stars: 344

ML-Bench is a tool designed to evaluate large language models and agents for machine learning tasks on repository-level code. It provides functionalities for data preparation, environment setup, usage, API calling, open source model fine-tuning, and inference. Users can clone the repository, load datasets, run ML-LLM-Bench, prepare data, fine-tune models, and perform inference tasks. The tool aims to facilitate the evaluation of language models and agents in the context of machine learning tasks on code repositories.

README:

ML-Bench: Evaluating Large Language Models and Agents for Machine Learning Tasks on Repository-Level Code

📖 Paper • 🚀 Github Page • 📊 Data

- 📋 Prerequisites

- 📊 Data Preparation

- 🦙 ML-LLM-Bench

- 🤖 ML-Agent-Bench

- 🛠️ Utils for Data Curations

- 📝 Cite Us

- 📜 License

To clone this repository with all its submodules, use the --recurse-submodules flag:

git clone --recurse-submodules https://github.com/gersteinlab/ML-Bench.git

cd ML-BenchIf you have already cloned the repository without the --recurse-submodules flag, you can run the following commands to fetch the submodules:

git submodule update --init --recursiveThen run

pip install -r requeirments.txtYou can load the dataset using the following code:

from datasets import load_dataset

ml_bench = load_dataset("super-dainiu/ml-bench") # splits: ['full', 'quarter']The dataset contains the following columns:

-

github_id: The ID of the GitHub repository. -

github: The URL of the GitHub repository. -

repo_id: The ID of the sample within each repository. -

id: The unique ID of the sample in the entire dataset. -

path: The path to the corresponding folder in LLM-Bench. -

arguments: The arguments specified in the user requirements. -

instruction: The user instructions for the task. -

oracle: The oracle contents relevant to the task. -

type: The expected output type based on the oracle contents. -

output: The ground truth output generated based on the oracle contents. -

prefix_code: The code snippet for preparing the execution environment

If you want to run ML-LLM-Bench, you need to do post-processing on the dataset. You can use the following code to post-process the dataset:

bash scripts/post_process/prepare.shSee post_process for more details.

After clone submodules, you can run

cd scripts/post_process

bash prepare.sh to generate full and quarter benchmark into merged_full_benchmark.jsonl and merged_quarter_benchmark.jsonl

You can change readme_content = fr.read() in merge.py, line 50 to readme_content = fr.read()[:100000] to get 32k length README contents or to readme_content = fr.read()[:400000] to get 128k length README contents.

Under the 128k setting, users can prepare trainset and testset in 10 mins with 10 workers. Without token limitation, users may need 2 hours to prepare the whole dataset and get a huge dataset.

To run the ML-LLM-Bench Docker container, you can use the following command:

docker pull public.ecr.aws/i5g0m1f6/ml-bench

docker run -it -v ML_Bench:/deep_data public.ecr.aws/i5g0m1f6/ml-bench /bin/bashTo download model weights and prepare files, you can use the following command:

cd utils

bash download_model_weight_pics.shIt may take 2 hours to automatically prepare them.

Place your results in utils/results directory, and update the --result_path in exec.sh with your path. Also, modify the log address.

Then run bash exec.sh. And you can check the run logs in your log file, view the overall results in eval_total_user.jsonl, and see the results for each repository in eval_result_user.jsonl.

Both JSONL files starting with eval_result and eval_total contain partial execution results in our paper.

The `utils/results` folder includes the model-generated outputs we used for testing.

The `utils/exec_logs` folder saves our the execute log.

The `temp.py` file is not for users, it is used to store the code written by models.

Additionally, the execution process may generate new unnecessary files.

To reproduce OpenAI's performance on this task, use the following script:

bash script/openai/run.shYou need to change the parameter settings in script/openai/run.sh:

-

type: Choose fromquarterorfull. -

model: Model name. -

input_file: File path of the dataset. -

answer_file: Original answer in JSON format from GPT. -

parsing_file: Post-process the output of GPT in JSONL format to obtain executable code segments. -

readme_type: Choose fromoracle_segmentandreadme.-

oracle_segment: The code paragraph in the README that is most relevant to the task. -

readme: The entire text of the README in the repository where the task is located.

-

-

engine_name: Choose fromgpt-35-turbo-16kandgpt-4-32. -

n_turn: Number of executable codes GPT returns (5 times in the paper experiment). -

openai_key: Your OpenAI API key.

Please refer to openai for details.

Llama-recipes provides a pip distribution for easy installation and usage in other projects. Alternatively, it can be installed from the source.

- Install with pip

pip install --extra-index-url https://download.pytorch.org/whl/test/cu118 llama-recipes

- Install from source To install from source e.g. for development use this command. We're using hatchling as our build backend which requires an up-to-date pip as well as setuptools package.

git clone https://github.com/facebookresearch/llama-recipes

cd llama-recipes

pip install -U pip setuptools

pip install --extra-index-url https://download.pytorch.org/whl/test/cu118 -e .

By definition, we have three tasks in the paper.

- Task 1: Given a task description + Code, generate a code snippet.

- Task 2: Given a task description + Retrieval, generate a code snippet.

- Task 3: Given a task description + Oracle, generate a code snippet.

You can use the following script to reproduce CodeLlama-7b's fine-tuning performance on this task:

torchrun --nproc_per_node 2 finetuning.py \

--use_peft \

--peft_method lora \

--enable_fsdp \

--model_name codellama/CodeLlama-7b-Instruct-hf \

--context_length 8192 \

--dataset mlbench_dataset \

--output_dir OUTPUT_PATH \

--task TASK \

--data_path DATA_PATH \You need to change the parameter settings of OUTPUT_PATH, TASK, and DATA_PATH correspondingly.

-

OUTPUT_DIR: The directory to save the model. -

TASK: Choose from1,2and3. -

DATA_PATH: The directory of the dataset.

You can use the following script to reproduce CodeLlama-7b's inference performance on this task:

python chat_completion.py \

--model_name 'codellama/CodeLlama-7b-Instruct-hf' \

--peft_model PEFT_MODEL \

--prompt_file PROMPT_FILE \

--task TASK \You need to change the parameter settings of PEFT_MODEL, PROMPT_FILE, and TASK correspondingly.

-

PEFT_MODEL: The path of the PEFT model. -

PROMPT_FILE: The path of the prompt file. -

TASK: Choose from1,2and3.

Please refer to finetune for details.

To run the ML-Agent-Bench Docker container, you can use the following command:

docker pull public.ecr.aws/i5g0m1f6/ml-bench

docker run -it public.ecr.aws/i5g0m1f6/ml-bench /bin/bashThis will pull the latest ML-Agent-Bench Docker image and run it in an interactive shell. The container includes all the necessary dependencies to run the ML-Agent-Bench codebase.

For ML-Agent-Bench in OpenDevin, please refer to the OpenDevin setup guide.

Please refer to envs for details.

This project is inspired by some related projects. We would like to thank the authors for their contributions. If you find this project or dataset useful, please cite it:

@misc{tang2024mlbench,

title={ML-Bench: Evaluating Large Language Models and Agents for Machine Learning Tasks on Repository-Level Code},

author={Xiangru Tang and Yuliang Liu and Zefan Cai and Yanjun Shao and Junjie Lu and Yichi Zhang and Zexuan Deng and Helan Hu and Kaikai An and Ruijun Huang and Shuzheng Si and Sheng Chen and Haozhe Zhao and Liang Chen and Yan Wang and Tianyu Liu and Zhiwei Jiang and Baobao Chang and Yin Fang and Yujia Qin and Wangchunshu Zhou and Yilun Zhao and Arman Cohan and Mark Gerstein},

year={2024},

eprint={2311.09835},

archivePrefix={arXiv},

primaryClass={'cs.CL'}

}

Distributed under the MIT License. See LICENSE for more information.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for ML-Bench

Similar Open Source Tools

ML-Bench

ML-Bench is a tool designed to evaluate large language models and agents for machine learning tasks on repository-level code. It provides functionalities for data preparation, environment setup, usage, API calling, open source model fine-tuning, and inference. Users can clone the repository, load datasets, run ML-LLM-Bench, prepare data, fine-tune models, and perform inference tasks. The tool aims to facilitate the evaluation of language models and agents in the context of machine learning tasks on code repositories.

mods

AI for the command line, built for pipelines. LLM based AI is really good at interpreting the output of commands and returning the results in CLI friendly text formats like Markdown. Mods is a simple tool that makes it super easy to use AI on the command line and in your pipelines. Mods works with OpenAI, Groq, Azure OpenAI, and LocalAI To get started, install Mods and check out some of the examples below. Since Mods has built-in Markdown formatting, you may also want to grab Glow to give the output some _pizzazz_.

hash

HASH is a self-building, open-source database which grows, structures and checks itself. With it, we're creating a platform for decision-making, which helps you integrate, understand and use data in a variety of different ways.

garak

Garak is a free tool that checks if a Large Language Model (LLM) can be made to fail in a way that is undesirable. It probes for hallucination, data leakage, prompt injection, misinformation, toxicity generation, jailbreaks, and many other weaknesses. Garak's a free tool. We love developing it and are always interested in adding functionality to support applications.

cli-agent

Pieces CLI for Developers is a comprehensive command-line interface (CLI) tool designed to interact seamlessly with Pieces OS. It provides functionalities such as asset management, application interaction, and integration with various Pieces OS features. The tool is compatible with Windows 10 or greater, Mac, and Windows operating systems. Users can install the tool by running 'pip install pieces-cli' or 'brew install pieces-cli'. After installation, users can access the tool's functionalities through the terminal by using the 'pieces' command followed by subcommands and options. The tool supports various commands, which can be found in the documentation. Developers can contribute to the project by forking and cloning the repository, setting up a virtual environment, installing dependencies with poetry, and running test cases with pytest and coverage.

cheating-based-prompt-engine

This is a vulnerability mining engine purely based on GPT, requiring no prior knowledge base, no fine-tuning, yet its effectiveness can overwhelmingly surpass most of the current related research. The core idea revolves around being task-driven, not question-driven, driven by prompts, not by code, and focused on prompt design, not model design. The essence is encapsulated in one word: deception. It is a type of code understanding logic vulnerability mining that fully stimulates the capabilities of GPT, suitable for real actual projects.

codespin

CodeSpin.AI is a set of open-source code generation tools that leverage large language models (LLMs) to automate coding tasks. With CodeSpin, you can generate code in various programming languages, including Python, JavaScript, Java, and C++, by providing natural language prompts. CodeSpin offers a range of features to enhance code generation, such as custom templates, inline prompting, and the ability to use ChatGPT as an alternative to API keys. Additionally, CodeSpin provides options for regenerating code, executing code in prompt files, and piping data into the LLM for processing. By utilizing CodeSpin, developers can save time and effort in coding tasks, improve code quality, and explore new possibilities in code generation.

tiledesk-dashboard

Tiledesk is an open-source live chat platform with integrated chatbots written in Node.js and Express. It is designed to be a multi-channel platform for web, Android, and iOS, and it can be used to increase sales or provide post-sales customer service. Tiledesk's chatbot technology allows for automation of conversations, and it also provides APIs and webhooks for connecting external applications. Additionally, it offers a marketplace for apps and features such as CRM, ticketing, and data export.

trickPrompt-engine

This repository contains a vulnerability mining engine based on GPT technology. The engine is designed to identify logic vulnerabilities in code by utilizing task-driven prompts. It does not require prior knowledge or fine-tuning and focuses on prompt design rather than model design. The tool is effective in real-world projects and should not be used for academic vulnerability testing. It supports scanning projects in various languages, with current support for Solidity. The engine is configured through prompts and environment settings, enabling users to scan for vulnerabilities in their codebase. Future updates aim to optimize code structure, add more language support, and enhance usability through command line mode. The tool has received a significant audit bounty of $50,000+ as of May 2024.

log10

Log10 is a one-line Python integration to manage your LLM data. It helps you log both closed and open-source LLM calls, compare and identify the best models and prompts, store feedback for fine-tuning, collect performance metrics such as latency and usage, and perform analytics and monitor compliance for LLM powered applications. Log10 offers various integration methods, including a python LLM library wrapper, the Log10 LLM abstraction, and callbacks, to facilitate its use in both existing production environments and new projects. Pick the one that works best for you. Log10 also provides a copilot that can help you with suggestions on how to optimize your prompt, and a feedback feature that allows you to add feedback to your completions. Additionally, Log10 provides prompt provenance, session tracking and call stack functionality to help debug prompt chains. With Log10, you can use your data and feedback from users to fine-tune custom models with RLHF, and build and deploy more reliable, accurate and efficient self-hosted models. Log10 also supports collaboration, allowing you to create flexible groups to share and collaborate over all of the above features.

ice-score

ICE-Score is a tool designed to instruct large language models to evaluate code. It provides a minimum viable product (MVP) for evaluating generated code snippets using inputs such as problem, output, task, aspect, and model. Users can also evaluate with reference code and enable zero-shot chain-of-thought evaluation. The tool is built on codegen-metrics and code-bert-score repositories and includes datasets like CoNaLa and HumanEval. ICE-Score has been accepted to EACL 2024.

desktop

ComfyUI Desktop is a packaged desktop application that allows users to easily use ComfyUI with bundled features like ComfyUI source code, ComfyUI-Manager, and uv. It automatically installs necessary Python dependencies and updates with stable releases. The app comes with Electron, Chromium binaries, and node modules. Users can store ComfyUI files in a specified location and manage model paths. The tool requires Python 3.12+ and Visual Studio with Desktop C++ workload for Windows. It uses nvm to manage node versions and yarn as the package manager. Users can install ComfyUI and dependencies using comfy-cli, download uv, and build/launch the code. Troubleshooting steps include rebuilding modules and installing missing libraries. The tool supports debugging in VSCode and provides utility scripts for cleanup. Crash reports can be sent to help debug issues, but no personal data is included.

garak

Garak is a vulnerability scanner designed for LLMs (Large Language Models) that checks for various weaknesses such as hallucination, data leakage, prompt injection, misinformation, toxicity generation, and jailbreaks. It combines static, dynamic, and adaptive probes to explore vulnerabilities in LLMs. Garak is a free tool developed for red-teaming and assessment purposes, focusing on making LLMs or dialog systems fail. It supports various LLM models and can be used to assess their security and robustness.

ai-starter-kit

SambaNova AI Starter Kits is a collection of open-source examples and guides designed to facilitate the deployment of AI-driven use cases for developers and enterprises. The kits cover various categories such as Data Ingestion & Preparation, Model Development & Optimization, Intelligent Information Retrieval, and Advanced AI Capabilities. Users can obtain a free API key using SambaNova Cloud or deploy models using SambaStudio. Most examples are written in Python but can be applied to any programming language. The kits provide resources for tasks like text extraction, fine-tuning embeddings, prompt engineering, question-answering, image search, post-call analysis, and more.

screeps-starter-rust

screeps-starter-rust is a Rust AI starter kit for Screeps: World, a JavaScript-based MMO game. It utilizes the screeps-game-api bindings from the rustyscreeps organization and wasm-pack for building Rust code to WebAssembly. The example includes Rollup for bundling javascript, Babel for transpiling code, and screeps-api Node.js package for deployment. Users can refer to the Rust version of game APIs documentation at https://docs.rs/screeps-game-api/. The tool supports most crates on crates.io, except those interacting with OS APIs.

For similar tasks

labelbox-python

Labelbox is a data-centric AI platform for enterprises to develop, optimize, and use AI to solve problems and power new products and services. Enterprises use Labelbox to curate data, generate high-quality human feedback data for computer vision and LLMs, evaluate model performance, and automate tasks by combining AI and human-centric workflows. The academic & research community uses Labelbox for cutting-edge AI research.

promptfoo

Promptfoo is a tool for testing and evaluating LLM output quality. With promptfoo, you can build reliable prompts, models, and RAGs with benchmarks specific to your use-case, speed up evaluations with caching, concurrency, and live reloading, score outputs automatically by defining metrics, use as a CLI, library, or in CI/CD, and use OpenAI, Anthropic, Azure, Google, HuggingFace, open-source models like Llama, or integrate custom API providers for any LLM API.

vespa

Vespa is a platform that performs operations such as selecting a subset of data in a large corpus, evaluating machine-learned models over the selected data, organizing and aggregating it, and returning it, typically in less than 100 milliseconds, all while the data corpus is continuously changing. It has been in development for many years and is used on a number of large internet services and apps which serve hundreds of thousands of queries from Vespa per second.

python-aiplatform

The Vertex AI SDK for Python is a library that provides a convenient way to use the Vertex AI API. It offers a high-level interface for creating and managing Vertex AI resources, such as datasets, models, and endpoints. The SDK also provides support for training and deploying custom models, as well as using AutoML models. With the Vertex AI SDK for Python, you can quickly and easily build and deploy machine learning models on Vertex AI.

ScandEval

ScandEval is a framework for evaluating pretrained language models on mono- or multilingual language tasks. It provides a unified interface for benchmarking models on a variety of tasks, including sentiment analysis, question answering, and machine translation. ScandEval is designed to be easy to use and extensible, making it a valuable tool for researchers and practitioners alike.

opencompass

OpenCompass is a one-stop platform for large model evaluation, aiming to provide a fair, open, and reproducible benchmark for large model evaluation. Its main features include: * Comprehensive support for models and datasets: Pre-support for 20+ HuggingFace and API models, a model evaluation scheme of 70+ datasets with about 400,000 questions, comprehensively evaluating the capabilities of the models in five dimensions. * Efficient distributed evaluation: One line command to implement task division and distributed evaluation, completing the full evaluation of billion-scale models in just a few hours. * Diversified evaluation paradigms: Support for zero-shot, few-shot, and chain-of-thought evaluations, combined with standard or dialogue-type prompt templates, to easily stimulate the maximum performance of various models. * Modular design with high extensibility: Want to add new models or datasets, customize an advanced task division strategy, or even support a new cluster management system? Everything about OpenCompass can be easily expanded! * Experiment management and reporting mechanism: Use config files to fully record each experiment, and support real-time reporting of results.

flower

Flower is a framework for building federated learning systems. It is designed to be customizable, extensible, framework-agnostic, and understandable. Flower can be used with any machine learning framework, for example, PyTorch, TensorFlow, Hugging Face Transformers, PyTorch Lightning, scikit-learn, JAX, TFLite, MONAI, fastai, MLX, XGBoost, Pandas for federated analytics, or even raw NumPy for users who enjoy computing gradients by hand.

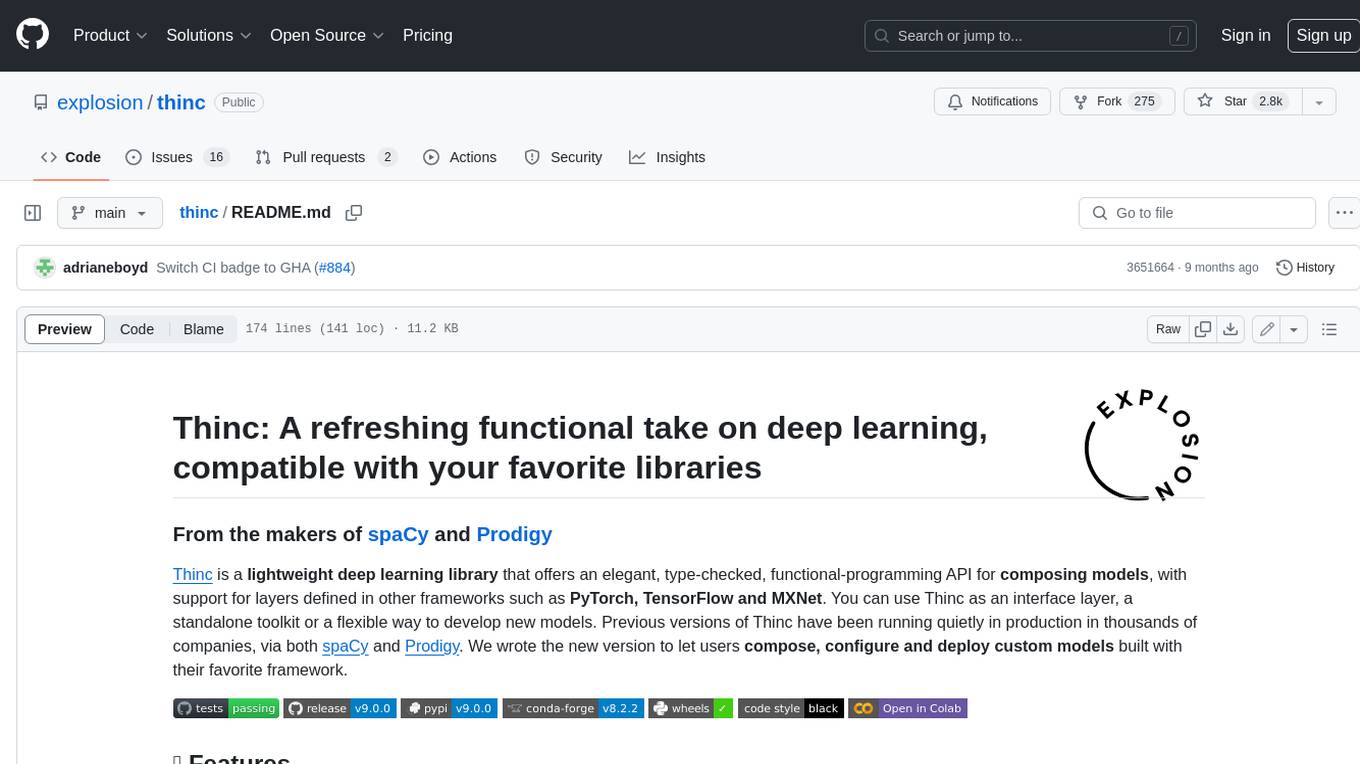

thinc

Thinc is a lightweight deep learning library that offers an elegant, type-checked, functional-programming API for composing models, with support for layers defined in other frameworks such as PyTorch, TensorFlow and MXNet. You can use Thinc as an interface layer, a standalone toolkit or a flexible way to develop new models.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.