ollama-ai

A Ruby gem for interacting with Ollama's API that allows you to run open source AI LLMs (Large Language Models) locally.

Stars: 133

Ollama AI is a Ruby gem designed to interact with Ollama's API, allowing users to run open source AI LLMs (Large Language Models) locally. The gem provides low-level access to Ollama, enabling users to build abstractions on top of it. It offers methods for generating completions, chat interactions, embeddings, creating and managing models, and more. Users can also work with text and image data, utilize Server-Sent Events for streaming capabilities, and handle errors effectively. Ollama AI is not an official Ollama project and is distributed under the MIT License.

README:

This Gem is designed to provide low-level access to Ollama, enabling people to build abstractions on top of it. If you are interested in more high-level abstractions or more user-friendly tools, you may want to consider Nano Bots 💎 🤖.

gem 'ollama-ai', '~> 1.3.0'

require 'ollama-ai'

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: { server_sent_events: true }

)

result = client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

)

Result:

[{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:34:02.088810408Z',

'response' => 'Hello',

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:34:02.419045606Z',

'response' => '!',

'done' => false },

# ..

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:34:07.680049831Z',

'response' => '?',

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:34:07.872170352Z',

'response' => '',

'done' => true,

'context' =>

[518, 25_580,

# ...

13_563, 29_973],

'total_duration' => 11_653_781_127,

'load_duration' => 1_186_200_439,

'prompt_eval_count' => 22,

'prompt_eval_duration' => 5_006_751_000,

'eval_count' => 25,

'eval_duration' => 5_453_058_000 }]

- TL;DR and Quick Start

- Index

- Setup

- Usage

- Development

- Resources and References

- Disclaimer

gem install ollama-ai -v 1.3.0

gem 'ollama-ai', '~> 1.3.0'

Create a new client:

require 'ollama-ai'

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: { server_sent_events: true }

)

require 'ollama-ai'

client = Ollama.new(

credentials: {

address: 'http://localhost:11434',

bearer_token: 'eyJhbG...Qssw5c'

},

options: { server_sent_events: true }

)

Remember that hardcoding your credentials in code is unsafe. It's preferable to use environment variables:

require 'ollama-ai'

client = Ollama.new(

credentials: {

address: 'http://localhost:11434',

bearer_token: ENV['OLLAMA_BEARER_TOKEN']

},

options: { server_sent_events: true }

)

client.generate

client.chat

client.embeddings

client.create

client.tags

client.show

client.copy

client.delete

client.pull

client.push

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#generate-a-completion

result = client.generate(

{ model: 'llama2',

prompt: 'Hi!',

stream: false }

)

Result:

[{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:35:41.951371247Z',

'response' => "Hi there! It's nice to meet you. How are you today?",

'done' => true,

'context' =>

[518, 25_580,

# ...

9826, 29_973],

'total_duration' => 6_981_097_576,

'load_duration' => 625_053,

'prompt_eval_count' => 22,

'prompt_eval_duration' => 4_075_171_000,

'eval_count' => 16,

'eval_duration' => 2_900_325_000 }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#generate-a-completion

Ensure that you have enabled Server-Sent Events before using blocks for streaming. stream: true is not necessary, as true is the default:

client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

) do |event, raw|

puts event

end

Event:

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:36:30.665245712Z',

'response' => 'Hello',

'done' => false }

You can get all the received events at once as an array:

result = client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

)

Result:

[{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:36:30.665245712Z',

'response' => 'Hello',

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:36:30.927337136Z',

'response' => '!',

'done' => false },

# ...

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:36:37.249416767Z',

'response' => '?',

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:36:37.44041283Z',

'response' => '',

'done' => true,

'context' =>

[518, 25_580,

# ...

13_563, 29_973],

'total_duration' => 10_551_395_645,

'load_duration' => 966_631,

'prompt_eval_count' => 22,

'prompt_eval_duration' => 4_034_990_000,

'eval_count' => 25,

'eval_duration' => 6_512_954_000 }]

You can mix both as well:

result = client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

) do |event, raw|

puts event

end

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#generate-a-chat-completion

result = client.chat(

{ model: 'llama2',

messages: [

{ role: 'user', content: 'Hi! My name is Purple.' }

] }

) do |event, raw|

puts event

end

Event:

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:38:01.729897311Z',

'message' => { 'role' => 'assistant', 'content' => "\n" },

'done' => false }

Result:

[{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:38:01.729897311Z',

'message' => { 'role' => 'assistant', 'content' => "\n" },

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:38:02.081494506Z',

'message' => { 'role' => 'assistant', 'content' => '*' },

'done' => false },

# ...

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:38:17.855905499Z',

'message' => { 'role' => 'assistant', 'content' => '?' },

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:38:18.07331245Z',

'message' => { 'role' => 'assistant', 'content' => '' },

'done' => true,

'total_duration' => 22_494_544_502,

'load_duration' => 4_224_600,

'prompt_eval_count' => 28,

'prompt_eval_duration' => 6_496_583_000,

'eval_count' => 61,

'eval_duration' => 15_991_728_000 }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#generate-a-chat-completion

To maintain a back-and-forth conversation, you need to append the received responses and build a history for your requests:

result = client.chat(

{ model: 'llama2',

messages: [

{ role: 'user', content: 'Hi! My name is Purple.' },

{ role: 'assistant',

content: 'Hi, Purple!' },

{ role: 'user', content: "What's my name?" }

] }

) do |event, raw|

puts event

end

Event:

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:07.352998498Z',

'message' => { 'role' => 'assistant', 'content' => ' Pur' },

'done' => false }

Result:

[{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:06.562939469Z',

'message' => { 'role' => 'assistant', 'content' => 'Your' },

'done' => false },

# ...

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:07.352998498Z',

'message' => { 'role' => 'assistant', 'content' => ' Pur' },

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:07.545323584Z',

'message' => { 'role' => 'assistant', 'content' => 'ple' },

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:07.77769408Z',

'message' => { 'role' => 'assistant', 'content' => '!' },

'done' => false },

{ 'model' => 'llama2',

'created_at' => '2024-01-07T01:40:07.974165849Z',

'message' => { 'role' => 'assistant', 'content' => '' },

'done' => true,

'total_duration' => 11_482_012_681,

'load_duration' => 4_246_882,

'prompt_eval_count' => 57,

'prompt_eval_duration' => 10_387_150_000,

'eval_count' => 6,

'eval_duration' => 1_089_249_000 }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#generate-embeddings

result = client.embeddings(

{ model: 'llama2',

prompt: 'Hi!' }

)

Result:

[{ 'embedding' =>

[0.6970467567443848, -2.248202085494995,

# ...

-1.5994540452957153, -0.3464218080043793] }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#create-a-model

result = client.create(

{ name: 'mario',

modelfile: "FROM llama2\nSYSTEM You are mario from Super Mario Bros." }

) do |event, raw|

puts event

end

Event:

{ 'status' => 'reading model metadata' }

Result:

[{ 'status' => 'reading model metadata' },

{ 'status' => 'creating system layer' },

{ 'status' =>

'using already created layer sha256:4eca7304a07a42c48887f159ef5ad82ed5a5bd30fe52db4aadae1dd938e26f70' },

{ 'status' =>

'using already created layer sha256:876a8d805b60882d53fed3ded3123aede6a996bdde4a253de422cacd236e33d3' },

{ 'status' =>

'using already created layer sha256:a47b02e00552cd7022ea700b1abf8c572bb26c9bc8c1a37e01b566f2344df5dc' },

{ 'status' =>

'using already created layer sha256:f02dd72bb2423204352eabc5637b44d79d17f109fdb510a7c51455892aa2d216' },

{ 'status' =>

'writing layer sha256:1741cf59ce26ff01ac614d31efc700e21e44dd96aed60a7c91ab3f47e440ef94' },

{ 'status' =>

'writing layer sha256:e8bcbb2eebad88c2fa64bc32939162c064be96e70ff36aff566718fc9186b427' },

{ 'status' => 'writing manifest' },

{ 'status' => 'success' }]

After creation, you can use it:

client.generate(

{ model: 'mario',

prompt: 'Hi! Who are you?' }

) do |event, raw|

print event['response']

end

Woah! adjusts sunglasses It's-a me, Mario! winks You must be a new friend I've-a met here in the Mushroom Kingdom. tips top hat What brings you to this neck of the woods? Maybe you're looking for-a some help on your adventure? nods Just let me know, and I'll do my best to-a assist ya! 😃
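The block above prints each fragment as it arrives. When only the returned array of events is at hand, the same text can be rebuilt by joining the 'response' values. A minimal sketch follows, using sample events shaped like the streaming results shown earlier; the helper name full_response is hypothetical and not part of the gem.

```ruby
# Sample events shaped like the streaming result shown earlier
# (trimmed to the keys used here).
STREAM_EVENTS = [
  { 'model' => 'llama2', 'response' => 'Hello', 'done' => false },
  { 'model' => 'llama2', 'response' => '!', 'done' => false },
  { 'model' => 'llama2', 'response' => '', 'done' => true }
].freeze

# Hypothetical helper: concatenate the 'response' fragment of every
# event, in arrival order. Missing fragments count as empty strings.
def full_response(events)
  events.map { |event| event['response'].to_s }.join
end

puts full_response(STREAM_EVENTS) # prints "Hello!"
```

The final event carries an empty 'response', so including it in the join does not change the text.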

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#list-local-models

result = client.tags

Result:

[{ 'models' =>

[{ 'name' => 'llama2:latest',

'modified_at' => '2024-01-06T15:06:23.6349195-03:00',

'size' => 3_826_793_677,

'digest' =>

'78e26419b4469263f75331927a00a0284ef6544c1975b826b15abdaef17bb962',

'details' =>

{ 'format' => 'gguf',

'family' => 'llama',

'families' => ['llama'],

'parameter_size' => '7B',

'quantization_level' => 'Q4_0' } },

{ 'name' => 'mario:latest',

'modified_at' => '2024-01-06T22:41:59.495298101-03:00',

'size' => 3_826_793_787,

'digest' =>

'291f46d2fa687dfaff45de96a8cb6e32707bc16ec1e1dfe8d65e9634c34c660c',

'details' =>

{ 'format' => 'gguf',

'family' => 'llama',

'families' => ['llama'],

'parameter_size' => '7B',

'quantization_level' => 'Q4_0' } }] }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#show-model-information

result = client.show(

{ name: 'llama2' }

)

Result:

[{ 'license' =>

"LLAMA 2 COMMUNITY LICENSE AGREEMENT\t\n" \

# ...

"* Reporting violations of the Acceptable Use Policy or unlicensed uses of Llama..." \

"\n",

'modelfile' =>

"# Modelfile generated by \"ollama show\"\n" \

# ...

'PARAMETER stop "<</SYS>>"',

'parameters' =>

"stop [INST]\n" \

"stop [/INST]\n" \

"stop <<SYS>>\n" \

'stop <</SYS>>',

'template' =>

"[INST] <<SYS>>{{ .System }}<</SYS>>\n\n{{ .Prompt }} [/INST]\n",

'details' =>

{ 'format' => 'gguf',

'family' => 'llama',

'families' => ['llama'],

'parameter_size' => '7B',

'quantization_level' => 'Q4_0' } }]

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#copy-a-model

result = client.copy(

{ source: 'llama2',

destination: 'llama2-backup' }

)

Result:

true

If the source model does not exist:

begin

result = client.copy(

{ source: 'purple',

destination: 'purple-backup' }

)

rescue Ollama::Errors::OllamaError => error

puts error.class # Ollama::Errors::RequestError

puts error.message # 'the server responded with status 404'

puts error.payload

# { source: 'purple',

# destination: 'purple-backup',

# ...

# }

puts error.request.inspect

# #<Faraday::ResourceNotFound response={:status=>404, :headers...

end

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#delete-a-model

result = client.delete(

{ name: 'llama2' }

)

Result:

true

If the model does not exist:

begin

result = client.delete(

{ name: 'llama2' }

)

rescue Ollama::Errors::OllamaError => error

puts error.class # Ollama::Errors::RequestError

puts error.message # 'the server responded with status 404'

puts error.payload

# { name: 'llama2',

# ...

# }

puts error.request.inspect

# #<Faraday::ResourceNotFound response={:status=>404, :headers...

end

API Documentation: https://github.com/jmorganca/ollama/blob/main/docs/api.md#pull-a-model

result = client.pull(

{ name: 'llama2' }

) do |event, raw|

puts event

end

Event:

{ 'status' => 'pulling manifest' }

Result:

[{ 'status' => 'pulling manifest' },

{ 'status' => 'pulling 4eca7304a07a',

'digest' =>

'sha256:4eca7304a07a42c48887f159ef5ad82ed5a5bd30fe52db4aadae1dd938e26f70',

'total' => 1_602_463_008,

'completed' => 1_602_463_008 },

# ...

{ 'status' => 'verifying sha256 digest' },

{ 'status' => 'writing manifest' },

{ 'status' => 'removing any unused layers' },

{ 'status' => 'success' }]

Documentation: API and Publishing Your Model.

You need to create an account at https://ollama.ai and add your Public Key at https://ollama.ai/settings/keys.

Your keys are located in /usr/share/ollama/.ollama/. You may need to copy them to your user directory:

sudo cp /usr/share/ollama/.ollama/id_ed25519 ~/.ollama/

sudo cp /usr/share/ollama/.ollama/id_ed25519.pub ~/.ollama/

Copy your model to your user namespace:

client.copy(

{ source: 'mario',

destination: 'your-user/mario' }

)

And push it:

result = client.push(

{ name: 'your-user/mario' }

) do |event, raw|

puts event

end

Event:

{ 'status' => 'retrieving manifest' }

Result:

[{ 'status' => 'retrieving manifest' },

{ 'status' => 'pushing 4eca7304a07a',

'digest' =>

'sha256:4eca7304a07a42c48887f159ef5ad82ed5a5bd30fe52db4aadae1dd938e26f70',

'total' => 1_602_463_008,

'completed' => 1_602_463_008 },

# ...

{ 'status' => 'pushing e8bcbb2eebad',

'digest' =>

'sha256:e8bcbb2eebad88c2fa64bc32939162c064be96e70ff36aff566718fc9186b427',

'total' => 555,

'completed' => 555 },

{ 'status' => 'pushing manifest' },

{ 'status' => 'success' }]

You can use the generate or chat methods for text.

You need to choose a model that supports images, like LLaVA or bakllava, and encode the image as Base64.
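The Base64 step mentioned above can be sketched on its own, without a running server. This example assumes the image is a local file; the helper name encode_image is hypothetical, and File.binread is used here so the bytes are read unchanged on every platform. The temporary file holds only the 8-byte PNG signature as placeholder data, not a real image.

```ruby
require 'base64'
require 'tempfile'

# Hypothetical helper: read a file as raw bytes and return its Base64
# form without line breaks, the shape passed in the images array.
def encode_image(path)
  Base64.strict_encode64(File.binread(path))
end

# Demonstration with a throwaway file containing the PNG signature bytes.
Tempfile.create(['sample', '.png']) do |file|
  file.binmode
  file.write("\x89PNG\r\n\x1A\n".b)
  file.flush
  puts encode_image(file.path) # prints "iVBORw0KGgo="
end
```

strict_encode64 is chosen over encode64 because the latter inserts newlines every 60 characters, which would end up inside the request payload.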

Depending on your hardware, some models that support images can be slow, so you may want to increase the client timeout:

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: {

server_sent_events: true,

connection: { request: { timeout: 120, read_timeout: 120 } } }

)

Using the generate method:

require 'base64'

client.generate(

{ model: 'llava',

prompt: 'Please describe this image.',

images: [Base64.strict_encode64(File.read('piano.jpg'))] }

) do |event, raw|

print event['response']

end

Output:

The image is a black and white photo of an old piano, which appears to be in need of maintenance. A chair is situated right next to the piano. Apart from that, there are no other objects or people visible in the scene.

Using the chat method:

require 'base64'

result = client.chat(

{ model: 'llava',

messages: [

{ role: 'user',

content: 'Please describe this image.',

images: [Base64.strict_encode64(File.read('piano.jpg'))] }

] }

) do |event, raw|

puts event

end

Output:

The image displays an old piano, sitting on a wooden floor with black keys. Next to the piano, there is another keyboard in the scene, possibly used for playing music.

On top of the piano, there are two mice placed in different locations within its frame. These mice might be meant for controlling the music being played or simply as decorative items. The overall atmosphere seems to be focused on artistic expression through this unique instrument.
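The chat examples above hand each event to puts, which prints the whole hash, while the output shown is plain prose. A minimal sketch of collecting the text from chat events and appending it to the message history for the next turn follows. The sample events are the visible tail of the conversation result shown earlier, and the helper names assistant_reply and append_reply are hypothetical, not part of the gem.

```ruby
# Hypothetical helper: join the 'content' of every streamed chat event
# into the assistant's reply.
def assistant_reply(events)
  events.map { |event| event.dig('message', 'content').to_s }.join
end

# Hypothetical helper: return a new history with the assistant's reply
# appended, ready to be sent as messages in the next request.
def append_reply(messages, events)
  messages + [{ role: 'assistant', content: assistant_reply(events) }]
end

# Visible tail of the streamed conversation result (earlier events elided there).
CHAT_EVENTS = [
  { 'message' => { 'role' => 'assistant', 'content' => ' Pur' }, 'done' => false },
  { 'message' => { 'role' => 'assistant', 'content' => 'ple' }, 'done' => false },
  { 'message' => { 'role' => 'assistant', 'content' => '!' }, 'done' => false },
  { 'message' => { 'role' => 'assistant', 'content' => '' }, 'done' => true }
].freeze

history = [{ role: 'user', content: "What's my name?" }]
history = append_reply(history, CHAT_EVENTS)
puts history.last[:content] # prints " Purple!" (with the leading space)
```

append_reply returns a new array rather than mutating its argument, so the history passed in stays usable if the request has to be retried.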

Server-Sent Events (SSE) is a technology that allows certain endpoints to offer streaming capabilities, such as creating the impression that "the model is typing along with you," rather than delivering the entire answer all at once.

You can set up the client to use Server-Sent Events (SSE) for all supported endpoints:

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: { server_sent_events: true }

)

Or, you can decide on a request basis:

result = client.generate(

{ model: 'llama2',

prompt: 'Hi!' },

server_sent_events: true

) do |event, raw|

puts event

end

With Server-Sent Events (SSE) enabled, you can use a block to receive partial results via events. This feature is particularly useful for methods that offer streaming capabilities, such as generate (see Receiving Stream Events above).

Method calls do not return until the server-sent events finish, so even without providing a block, you can obtain the final results of the received events (see Receiving Stream Events above).
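Because the call returns every received event, the last one (flagged 'done' => true) is available for inspection once the call finishes. A minimal sketch of reading its counters follows, assuming durations are expressed in nanoseconds as described in Ollama's API documentation. The helper names final_event and tokens_per_second are hypothetical, and the sample values are taken from the streaming result shown earlier.

```ruby
# Ollama reports durations in nanoseconds.
NANOSECONDS_PER_SECOND = 1_000_000_000.0

# Hypothetical helper: the event flagged 'done' => true holds the counters.
def final_event(events)
  events.find { |event| event['done'] }
end

# Hypothetical helper: generated tokens per second, or nil when the
# counters are missing or the duration is zero.
def tokens_per_second(event)
  count = event && event['eval_count']
  duration = event && event['eval_duration']
  return nil if count.nil? || duration.nil? || duration.zero?

  count / (duration / NANOSECONDS_PER_SECOND)
end

# Counters copied from the final event of the streaming result shown earlier.
SAMPLE_EVENTS = [
  { 'response' => 'Hello', 'done' => false },
  { 'response' => '', 'done' => true,
    'eval_count' => 25, 'eval_duration' => 6_512_954_000 }
].freeze

rate = tokens_per_second(final_event(SAMPLE_EVENTS))
puts rate.round(2) # prints 3.84
```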

Ollama may launch a new endpoint that we haven't covered in the Gem yet. If that's the case, you may still be able to use it through the request method. For example, generate is just a wrapper for api/generate, which you can call directly like this:

result = client.request(

'api/generate',

{ model: 'llama2',

prompt: 'Hi!' },

request_method: 'POST', server_sent_events: true

)

The gem uses Faraday with the Typhoeus adapter by default.

You can use a different adapter if you want:

require 'faraday/net_http'

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: { connection: { adapter: :net_http } }

)

You can set the maximum number of seconds to wait for the request to complete with the timeout option:

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: { connection: { request: { timeout: 5 } } }

)

You can also have more fine-grained control over Faraday's Request Options if you prefer:

client = Ollama.new(

credentials: { address: 'http://localhost:11434' },

options: {

connection: {

request: {

timeout: 5,

open_timeout: 5,

read_timeout: 5,

write_timeout: 5

}

}

}

)

require 'ollama-ai'

begin

client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

)

rescue Ollama::Errors::OllamaError => error

puts error.class # Ollama::Errors::RequestError

puts error.message # 'the server responded with status 500'

puts error.payload

# { model: 'llama2',

# prompt: 'Hi!',

# ...

# }

puts error.request.inspect

# #<Faraday::ServerError response={:status=>500, :headers...

end

require 'ollama-ai/errors'

begin

client.generate(

{ model: 'llama2',

prompt: 'Hi!' }

)

rescue OllamaError => error

puts error.class # Ollama::Errors::RequestError

end

OllamaError

BlockWithoutServerSentEventsError

RequestError

bundle

rubocop -A

bundle exec ruby spec/tasks/run-client.rb

bundle exec ruby spec/tasks/test-encoding.rb

gem build ollama-ai.gemspec

gem signin

gem push ollama-ai-1.3.0.gem

Install Babashka:

curl -s https://raw.githubusercontent.com/babashka/babashka/master/install | sudo bash

Update the template.md file and then:

bb tasks/generate-readme.clj

Trick for automatically updating the README.md when template.md changes:

sudo pacman -S inotify-tools # Arch / Manjaro

sudo apt-get install inotify-tools # Debian / Ubuntu / Raspberry Pi OS

sudo dnf install inotify-tools # Fedora / CentOS / RHEL

while inotifywait -e modify template.md; do bb tasks/generate-readme.clj; done

Trick for Markdown Live Preview:

pip install -U markdown_live_preview

mlp README.md -p 8076

These resources and references may be useful throughout your learning process:

This is not an official Ollama project, nor is it affiliated with Ollama in any way.

This software is distributed under the MIT License. This license includes a disclaimer of warranty. Moreover, the authors assume no responsibility for any damage or costs that may result from using this project. Use the Ollama AI Ruby Gem at your own risk.

Alternative AI tools for ollama-ai

Similar Open Source Tools

gemini-ai

Gemini AI is a Ruby Gem designed to provide low-level access to Google's generative AI services through Vertex AI, Generative Language API, or AI Studio. It allows users to interact with Gemini to build abstractions on top of it. The Gem provides functionalities for tasks such as generating content, embeddings, predictions, and more. It supports streaming capabilities, server-sent events, safety settings, system instructions, JSON format responses, and tools (functions) calling. The Gem also includes error handling, development setup, publishing to RubyGems, updating the README, and references to resources for further learning.

dingllm.nvim

dingllm.nvim is a lightweight configuration for Neovim that provides scripts for invoking various AI models for text generation. It offers functionalities to interact with APIs from OpenAI, Groq, and Anthropic for generating text completions. The configuration is designed to be simple and easy to understand, allowing users to quickly set up and use the provided AI models for text generation tasks.

opencode.nvim

Opencode.nvim is a Neovim plugin that provides a simple and efficient way to browse, search, and open files in a project. It enhances the file navigation experience by offering features like fuzzy finding, file preview, and quick access to frequently used files. With Opencode.nvim, users can easily navigate through their project files, jump to specific locations, and manage their workflow more effectively. The plugin is designed to improve productivity and streamline the development process by simplifying file handling tasks within Neovim.

instruct-ner

Instruct NER is a solution for complex Named Entity Recognition tasks, including Nested NER, based on modern Large Language Models (LLMs). It provides tools for dataset creation, training, automatic metric calculation, inference, error analysis, and model implementation. Users can create instructions for LLM, build dictionaries with labels, and generate model input templates. The tool supports various entity types and datasets, such as RuDReC, NEREL-BIO, CoNLL-2003, and MultiCoNER II. It offers training scripts for LLMs and metric calculation functions. Instruct NER models like Llama, Mistral, T5, and RWKV are implemented, with HuggingFace models available for adaptation and merging.

nextlint

Nextlint is a rich text editor (WYSIWYG) written in Svelte, using MeltUI headless UI and tailwindcss CSS framework. It is built on top of tiptap editor (headless editor) and prosemirror. Nextlint is easy to use, develop, and maintain. It has a prompt engine that helps to integrate with any AI API and enhance the writing experience. Dark/Light theme is supported and customizable.

MCP-Chinese-Getting-Started-Guide

The Model Context Protocol (MCP) is an innovative open-source protocol that redefines the interaction between large language models (LLMs) and the external world. MCP provides a standardized approach for any large language model to easily connect to various data sources and tools, enabling seamless access and processing of information. MCP acts as a USB-C interface for AI applications, offering a standardized way for AI models to connect to different data sources and tools. The core functionalities of MCP include Resources, Prompts, Tools, Sampling, Roots, and Transports. This guide focuses on developing an MCP server for network search using Python and uv management. It covers initializing the project, installing dependencies, creating a server, implementing tool execution methods, and running the server. Additionally, it explains how to debug the MCP server using the Inspector tool, how to call tools from the server, and how to connect multiple MCP servers. The guide also introduces the Sampling feature, which allows pre- and post-tool execution operations, and demonstrates how to integrate MCP servers into LangChain for AI applications.

opencode.nvim

Opencode.nvim is a neovim frontend for Opencode, a terminal-based AI coding agent. It provides a chat interface between neovim and the Opencode AI agent, capturing editor context to enhance prompts. The plugin maintains persistent sessions for continuous conversations with the AI assistant, similar to Cursor AI.

hezar

Hezar is an all-in-one AI library designed specifically for the Persian community. It brings together various AI models and tools, making it easy to use AI with just a few lines of code. The library seamlessly integrates with Hugging Face Hub, offering a developer-friendly interface and task-based model interface. In addition to models, Hezar provides tools like word embeddings, tokenizers, feature extractors, and more. It also includes supplementary ML tools for deployment, benchmarking, and optimization.

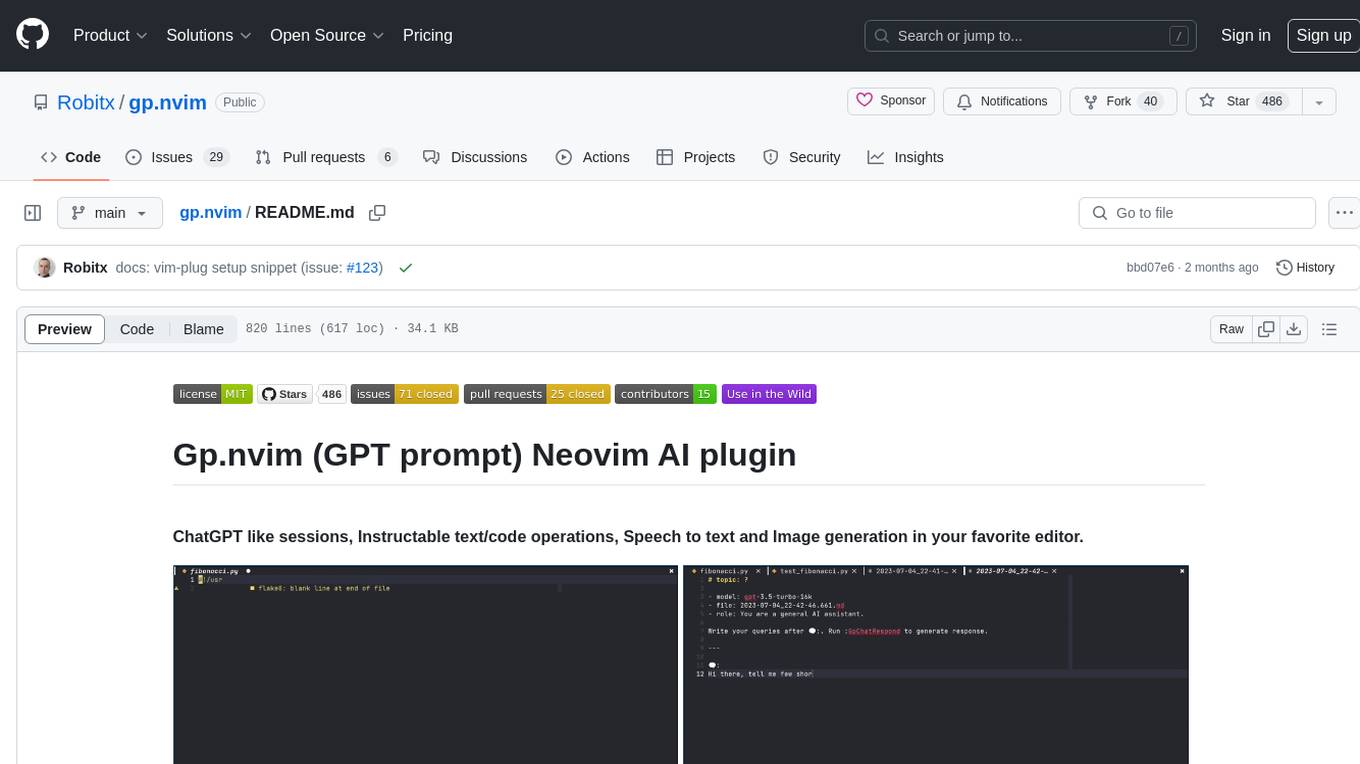

gp.nvim

Gp.nvim (GPT prompt) Neovim AI plugin provides a seamless integration of GPT models into Neovim, offering features like streaming responses, extensibility via hook functions, minimal dependencies, ChatGPT-like sessions, instructable text/code operations, speech-to-text support, and image generation directly within Neovim. The plugin aims to enhance the Neovim experience by leveraging the power of AI models in a user-friendly and native way.

python-genai

The Google Gen AI SDK is a Python library that provides access to Google AI and Vertex AI services. It allows users to create clients for different services, work with parameter types, models, generate content, call functions, handle JSON response schemas, stream text and image content, perform async operations, count and compute tokens, embed content, generate and upscale images, edit images, work with files, create and get cached content, tune models, distill models, perform batch predictions, and more. The SDK supports various features like automatic function support, manual function declaration, JSON response schema support, streaming for text and image content, async methods, tuning job APIs, distillation, batch prediction, and more.

lagent

Lagent is a lightweight open-source framework that allows users to efficiently build large language model (LLM)-based agents. It also provides some typical tools to augment LLMs.

Webscout

Webscout is an all-in-one Python toolkit for web search, AI interaction, digital utilities, and more. It provides access to diverse search engines, cutting-edge AI models, temporary communication tools, media utilities, developer helpers, and powerful CLI interfaces through a unified library. With features like comprehensive search leveraging Google and DuckDuckGo, AI powerhouse for accessing various AI models, YouTube toolkit for video and transcript management, GitAPI for GitHub data extraction, Tempmail & Temp Number for privacy, Text-to-Speech conversion, GGUF conversion & quantization, SwiftCLI for CLI interfaces, LitPrinter for styled console output, LitLogger for logging, LitAgent for user agent generation, Text-to-Image generation, Scout for web parsing and crawling, Awesome Prompts for specialized tasks, Weather Toolkit, and AI Search Providers.

Webscout

WebScout is a versatile tool that allows users to search for anything using Google, DuckDuckGo, and phind.com. It contains AI models, can transcribe YouTube videos, generate temporary email and phone numbers, has TTS support, webai (terminal GPT and open interpreter), and offline LLMs. It also supports features like weather forecasting, YT video downloading, temp mail and number generation, text-to-speech, advanced web searches, and more.

Scrapegraph-ai

ScrapeGraphAI is a Python library that uses Large Language Models (LLMs) and direct graph logic to create web scraping pipelines for websites, documents, and XML files. It allows users to extract specific information from web pages by providing a prompt describing the desired data. ScrapeGraphAI supports various LLMs, including Ollama, OpenAI, Gemini, and Docker, enabling users to choose the most suitable model for their needs. The library provides a user-friendly interface through its `SmartScraper` class, which simplifies the process of building and executing scraping pipelines. ScrapeGraphAI is open-source and available on GitHub, with extensive documentation and examples to guide users. It is particularly useful for researchers and data scientists who need to extract structured data from web pages for analysis and exploration.

For similar tasks

dashscope-sdk

DashScope SDK for .NET is an unofficial SDK maintained by Cnblogs, providing various APIs for text embedding, generation, multimodal generation, image synthesis, and more. Users can interact with the SDK to perform tasks such as text completion, chat generation, function calls, file operations, and more. The project is under active development, and users are advised to check the Release Notes before upgrading.

ollama-ex

Ollama is a powerful tool for running large language models locally or on your own infrastructure. It provides a full implementation of the Ollama API, support for streaming requests, and tool use capability. Users can interact with Ollama in Elixir to generate completions, chat messages, and perform streaming requests. The tool also supports function calling on compatible models, allowing users to define tools with clear descriptions and arguments. Ollama is designed to facilitate natural language processing tasks and enhance user interactions with language models.

llm4s

llm4s is an experimental Scala 3 bindings tool for llama.cpp using Slinc. It provides version compatibility with Scala 3.3.0 and JDK 17, 19 for llama.cpp. Users can utilize llm4s to work with llama.cpp shared library and model, enabling completion and embeddings functionalities in Scala.

blockoli

Blockoli is a high-performance tool for code indexing, embedding generation, and semantic search, for use with LLMs. It is built in Rust and uses the ASTerisk crate for semantic code parsing. Blockoli allows you to efficiently index, store, and search code blocks and their embeddings using vector similarity. Key features include indexing code blocks from a codebase, generating vector embeddings for code blocks using a pre-trained model, storing code blocks and their embeddings in a SQLite database, performing efficient similarity search on code blocks using vector embeddings, providing a REST API for easy integration with other tools and platforms, and being fast and memory-efficient due to its implementation in Rust.

client-js

The Mistral JavaScript client is a library for interacting with the Mistral AI API. It supports listing models, chatting with or without streaming, and generating embeddings. To use it, install the client in your project with npm and configure it with your API key. You can then chat with a model by providing a list of messages, and the client returns the model's response. You can also generate embeddings for a given input and use them for downstream tasks such as clustering or classification.

fastllm

A collection of LLM services you can self-host via Docker or Modal Labs to support your application development. The goal is to provide Docker containers or Modal Labs deployments of common LLM usage patterns, with endpoints that integrate easily with existing codebases using the OpenAI API. It supports GPT4All's embedding API, the JSONFormer API for chat completion, and Cross Encoders based on sentence transformers, and its documentation is built with MkDocs.

openai-kotlin

OpenAI Kotlin API client is a Kotlin client for OpenAI's API with multiplatform and coroutines capabilities. It supports models, chat, images, embeddings, files, fine-tuning, moderations, audio, assistants, threads, messages, and runs. It also provides guides on getting started, chat and function calls, file sources, and assistants. Sample apps are available for reference, and troubleshooting guides cover common issues. The project is open source and licensed under the MIT license, and it accepts contributions from the community.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:
* Set LLM usage limits for users on different pricing tiers
* Track LLM usage on a per user and per organization basis
* Block or redact requests containing PII
* Improve LLM reliability with failovers, retries and caching
* Distribute API keys with rate limits and cost limits for internal development/production use cases
* Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.